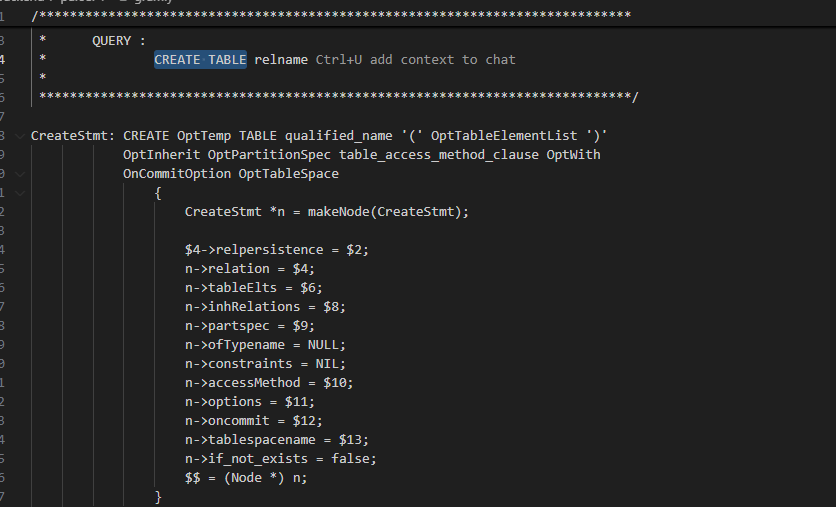

Grammar

OptTableElementList->TableElementList->TypedTableElement->columnDef->ColQualList->ColConstraint->ColConstraintElem

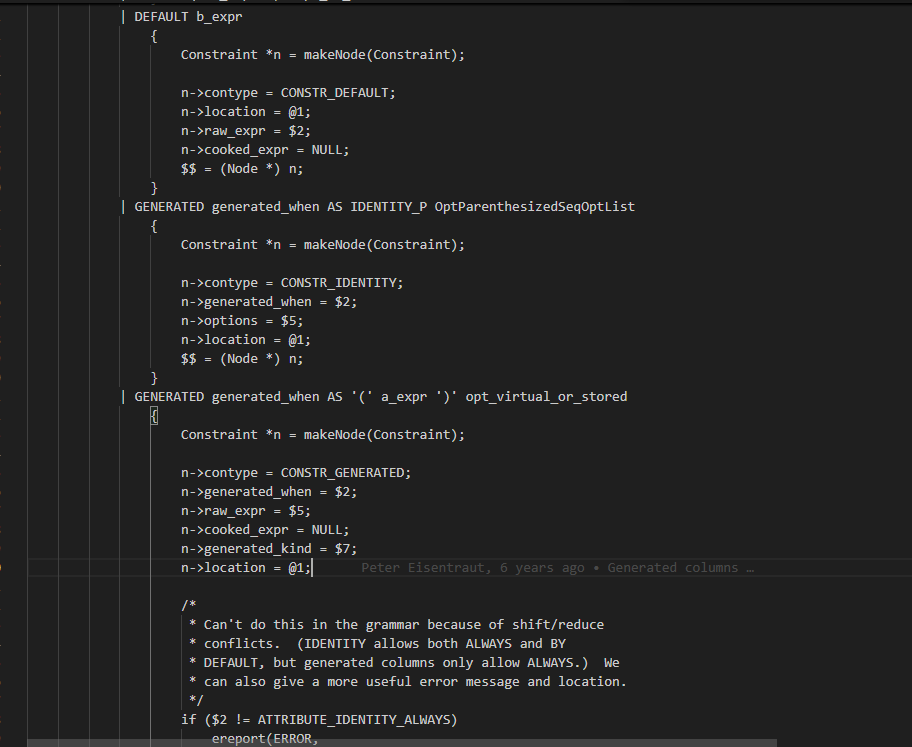

The DEFAULT grammar rule lives under ColConstraintElem. The parser turns it into a Constraint node with contype set to CONSTR_DEFAULT, and that node is stored in ColumnDef->constraints.
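The sketch below roughly illustrates what the grammar action produces. It models the DEFAULT branch of ColConstraintElem using simplified stand-in structs. The struct layouts, the fixed-size array used in place of a List, and the helper names make_default_constraint and column_add_constraint are hypothetical. Only contype = CONSTR_DEFAULT, the untransformed raw_expr, and the still-NULL cooked_expr mirror the real parse node.

```c
#include <assert.h>
#include <stdlib.h>

/* Simplified stand-ins for PostgreSQL parse nodes (hypothetical layouts,
 * not the real parsenodes.h definitions). */
typedef enum ConstrType { CONSTR_NOTNULL, CONSTR_DEFAULT, CONSTR_CHECK } ConstrType;

typedef struct Node { int tag; } Node;	/* placeholder for any raw expression */

typedef struct Constraint {
	ConstrType	contype;
	Node	   *raw_expr;		/* untransformed DEFAULT expression */
	char	   *cooked_expr;	/* always NULL at parse time */
	int			location;		/* offset of the DEFAULT keyword */
} Constraint;

#define MAX_CONSTRAINTS 8

typedef struct ColumnDef {
	const char *colname;
	Constraint *constraints[MAX_CONSTRAINTS];	/* the real code uses a List */
	int			nconstraints;
	Node	   *raw_default;	/* filled in later by transformColumnDefinition */
} ColumnDef;

/* Hypothetical analogue of the grammar action for "DEFAULT b_expr". */
Constraint *make_default_constraint(Node *expr, int location)
{
	Constraint *n = calloc(1, sizeof(Constraint));

	if (n == NULL)
		abort();
	n->contype = CONSTR_DEFAULT;
	n->raw_expr = expr;
	n->cooked_expr = NULL;
	n->location = location;
	return n;
}

/* ColQualList collects each ColConstraint into the column's constraint list. */
void column_add_constraint(ColumnDef *col, Constraint *c)
{
	assert(col->nconstraints < MAX_CONSTRAINTS);
	col->constraints[col->nconstraints++] = c;
}
```

At this point nothing has been analyzed yet. The expression is still a raw parse tree, and raw_default on the column is still empty.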

Creating a table (DDL)

transformCreateStmt

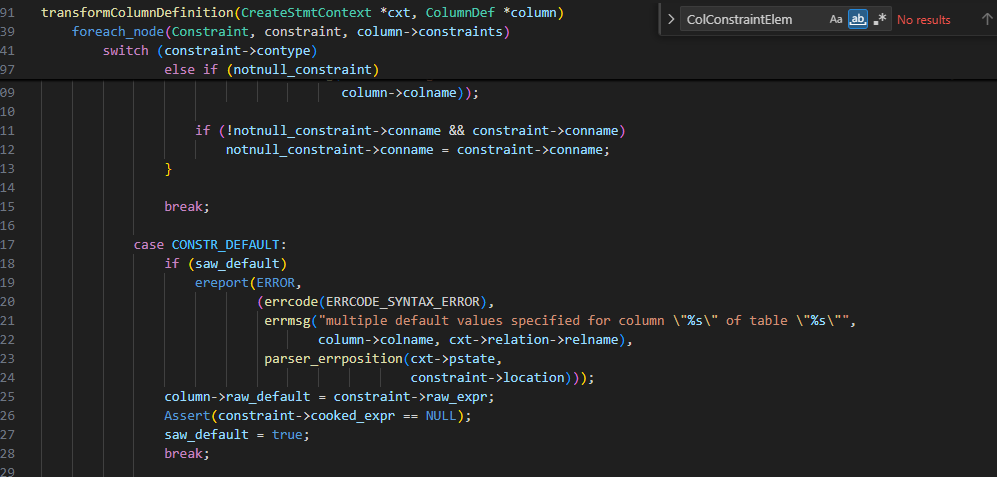

transformColumnDefinition

The DEFAULT expression produced by the grammar (Constraint->raw_expr) is assigned to ColumnDef->raw_default.
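Here is a minimal sketch of the CONSTR_DEFAULT case in transformColumnDefinition, using simplified types. It scans the column's constraints, copies the raw expression into raw_default, and rejects a second DEFAULT on the same column. The function name transform_column_defaults and the -1 error return are hypothetical. PostgreSQL reports the duplicate through ereport with a "multiple default values specified for column ..." error.

```c
#include <assert.h>
#include <stdio.h>

/* Simplified stand-ins (hypothetical layouts, not PostgreSQL's). */
typedef enum ConstrType { CONSTR_NOTNULL, CONSTR_DEFAULT, CONSTR_CHECK } ConstrType;
typedef struct Node { int tag; } Node;

typedef struct Constraint {
	ConstrType	contype;
	Node	   *raw_expr;
	char	   *cooked_expr;
} Constraint;

typedef struct ColumnDef {
	const char *colname;
	Constraint **constraints;
	int			nconstraints;
	Node	   *raw_default;
} ColumnDef;

/* Returns 0 on success, -1 if the column has more than one DEFAULT. */
int transform_column_defaults(ColumnDef *column)
{
	int			saw_default = 0;

	for (int i = 0; i < column->nconstraints; i++)
	{
		Constraint *constraint = column->constraints[i];

		switch (constraint->contype)
		{
			case CONSTR_DEFAULT:
				if (saw_default)
				{
					fprintf(stderr, "multiple default values specified for column \"%s\"\n",
							column->colname);
					return -1;
				}
				column->raw_default = constraint->raw_expr;
				assert(constraint->cooked_expr == NULL);
				saw_default = 1;
				break;
			default:
				/* NOT NULL, CHECK, etc. are handled by other branches */
				break;
		}
	}
	return 0;
}
```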

DefineRelation

AddRelationNewConstraints

make_parsestate

addRangeTableEntryForRelation

addNSItemToQuery

cookDefault

StoreAttrDefault

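Together these steps perform semantic analysis of each column default (cookDefault). Each resulting expression is then serialized and inserted into the pg_attrdef catalog (StoreAttrDefault).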
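The cookDefault plus StoreAttrDefault step can be pictured with a toy model. In this model an "expression" is either a constant or a column reference, and pg_attrdef is an in-memory array. Everything here is hypothetical: the names cook_default, store_column_default, Catalog and AttrDefRow, and the "const:N" text encoding that stands in for nodeToString(). The model shows three behaviors: a column reference in a DEFAULT is rejected, a bare NULL constant is skipped without storing a row, and any other default is stored with its table and column number. Type coercion, generated columns, domain defaults and dependency recording are left out.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Toy model (hypothetical types and names, not PostgreSQL's). */
typedef enum ExprKind { EXPR_CONST, EXPR_COLUMNREF } ExprKind;

typedef struct Expr {
	ExprKind	kind;
	int			isnull;		/* EXPR_CONST only: is this SQL NULL? */
	long		value;		/* EXPR_CONST only: the constant value */
} Expr;

/* Stand-in for one pg_attrdef tuple. */
typedef struct AttrDefRow {
	unsigned	adrelid;	/* owning table */
	int			adnum;		/* column number */
	char		adbin[32];	/* serialized expression */
} AttrDefRow;

#define MAX_ATTRDEF_ROWS 16

typedef struct Catalog {
	AttrDefRow	rows[MAX_ATTRDEF_ROWS];
	int			nrows;
} Catalog;

/* cookDefault analogue: a DEFAULT may not reference columns. Returns NULL on
 * error. The real cookDefault also coerces the result to the column type. */
static const Expr *cook_default(const Expr *raw, const char *attname)
{
	if (raw->kind == EXPR_COLUMNREF)
	{
		fprintf(stderr, "cannot use column reference in DEFAULT expression (column \"%s\")\n",
				attname);
		return NULL;
	}
	return raw;
}

/* One iteration of the default loop plus StoreAttrDefault.
 * Returns 1 if a row was stored, 0 if the default was skipped, -1 on error. */
int store_column_default(Catalog *cat, unsigned relid, int attnum,
						 const char *attname, const Expr *raw, int *atthasdef)
{
	const Expr *expr = cook_default(raw, attname);

	if (expr == NULL)
		return -1;

	/* A bare NULL constant behaves like "no default", so no row is stored. */
	if (expr->kind == EXPR_CONST && expr->isnull)
		return 0;

	assert(cat->nrows < MAX_ATTRDEF_ROWS);
	AttrDefRow *row = &cat->rows[cat->nrows++];

	row->adrelid = relid;
	row->adnum = attnum;
	/* Toy text encoding; PostgreSQL stores nodeToString() output in adbin. */
	snprintf(row->adbin, sizeof row->adbin, "const:%ld", expr->value);
	*atthasdef = 1;		/* StoreAttrDefault also marks pg_attribute.atthasdef */
	return 1;
}
```

The NULL-constant shortcut corresponds to the `IsA(expr, Const) && constisnull` test in the excerpt below.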

```c
List *
AddRelationNewConstraints(Relation rel,
						  List *newColDefaults,
						  List *newConstraints,
						  bool allow_merge,
						  bool is_local,
						  bool is_internal,
						  const char *queryString)
{
	List	   *cookedConstraints = NIL;
	TupleDesc	tupleDesc;
	TupleConstr *oldconstr;
	int			numoldchecks;
	ParseState *pstate;
	ParseNamespaceItem *nsitem;
	int			numchecks;
	List	   *checknames;
	List	   *nnnames;
	Node	   *expr;
	CookedConstraint *cooked;

	/*
	 * Get info about existing constraints.
	 */
	tupleDesc = RelationGetDescr(rel);
	oldconstr = tupleDesc->constr;
	if (oldconstr)
		numoldchecks = oldconstr->num_check;
	else
		numoldchecks = 0;

	/*
	 * Create a dummy ParseState and insert the target relation as its sole
	 * rangetable entry.  We need a ParseState for transformExpr.
	 */
	pstate = make_parsestate(NULL);
	pstate->p_sourcetext = queryString;
	nsitem = addRangeTableEntryForRelation(pstate,
										   rel,
										   AccessShareLock,
										   NULL,
										   false,
										   true);
	addNSItemToQuery(pstate, nsitem, true, true, true);

	/*
	 * Process column default expressions.
	 */
	foreach_ptr(RawColumnDefault, colDef, newColDefaults)
	{
		Form_pg_attribute atp = TupleDescAttr(rel->rd_att, colDef->attnum - 1);
		Oid			defOid;

		expr = cookDefault(pstate, colDef->raw_default,
						   atp->atttypid, atp->atttypmod,
						   NameStr(atp->attname),
						   atp->attgenerated);

		/*
		 * If the expression is just a NULL constant, we do not bother to make
		 * an explicit pg_attrdef entry, since the default behavior is
		 * equivalent.  This applies to column defaults, but not for
		 * generation expressions.
		 *
		 * Note a nonobvious property of this test: if the column is of a
		 * domain type, what we'll get is not a bare null Const but a
		 * CoerceToDomain expr, so we will not discard the default.  This is
		 * critical because the column default needs to be retained to
		 * override any default that the domain might have.
		 */
		if (expr == NULL ||
			(!colDef->generated &&
			 IsA(expr, Const) &&
			 castNode(Const, expr)->constisnull))
			continue;

		defOid = StoreAttrDefault(rel, colDef->attnum, expr, is_internal);

		cooked = (CookedConstraint *) palloc(sizeof(CookedConstraint));
		cooked->contype = CONSTR_DEFAULT;
		cooked->conoid = defOid;
		cooked->name = NULL;
		cooked->attnum = colDef->attnum;
		cooked->expr = expr;
		cooked->is_enforced = true;
		cooked->skip_validation = false;
		cooked->is_local = is_local;
		cooked->inhcount = is_local ? 0 : 1;
		cooked->is_no_inherit = false;
		cookedConstraints = lappend(cookedConstraints, cooked);
	}

	...

	/*
	 * Update the count of constraints in the relation's pg_class tuple. We do
	 * this even if there was no change, in order to ensure that an SI update
	 * message is sent out for the pg_class tuple, which will force other
	 * backends to rebuild their relcache entries for the rel. (This is
	 * critical if we added defaults but not constraints.)
	 */
	SetRelationNumChecks(rel, numchecks);

	return cookedConstraints;
}
```

Inserting data

In the rewrite phase, the stored default expression is assigned as the value of the corresponding target column.

rewriteTargetListIU
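The INSERT side of rewriteTargetListIU can be sketched as a toy model. A column that is missing from the target list, or that was written as the DEFAULT keyword (a SetToDefault node in PostgreSQL), gets the column's default. A column with no default at all ends up NULL. Everything below is hypothetical: the Attr and TargetEntry layouts, rewrite_target_list, and toy_build_column_default, which stands in for build_column_default. For simplicity the default is a precomputed value. In PostgreSQL it is an expression placed into the target list and evaluated at execution time, so a default such as now() differs per statement. The real function also falls back to a domain type's default, and multi-row VALUES lists are handled separately by rewriteValuesRTE.

```c
#include <assert.h>

/* Toy model of INSERT default filling (hypothetical types and names). */
typedef struct Attr {
	const char *name;
	int			atthasdef;	/* column has a pg_attrdef entry */
	long		defval;		/* stored default, precomputed here for simplicity */
} Attr;

typedef struct TargetEntry {
	int			present;	/* column appears in the INSERT target list */
	int			is_default;	/* value was written as the DEFAULT keyword */
	int			isnull;
	long		value;
} TargetEntry;

/* Stand-in for build_column_default(): only the column default is modeled. */
static int toy_build_column_default(const Attr *att, long *out)
{
	if (!att->atthasdef)
		return 0;
	*out = att->defval;
	return 1;
}

/* tl[i] corresponds to atts[i]; missing or DEFAULT entries get the default. */
void rewrite_target_list(const Attr *atts, TargetEntry *tl, int natts)
{
	assert(natts >= 0);
	for (int i = 0; i < natts; i++)
	{
		TargetEntry *tle = &tl[i];
		long		v;

		if (tle->present && !tle->is_default)
			continue;			/* user supplied an explicit value */

		tle->present = 1;
		tle->is_default = 0;
		if (toy_build_column_default(&atts[i], &v))
		{
			tle->isnull = 0;
			tle->value = v;
		}
		else
		{
			/* no default anywhere: the column ends up NULL */
			tle->isnull = 1;
			tle->value = 0;
		}
	}
}
```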