Conclusion: a window's close time must also account for the watermark delay

I want to send JSON data to Kafka through the kafkaUI interface. The JSON looks like this:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303530000,"uid":0}

I generated this data with a small program:

    package com.bigdata.day04;

    import com.alibaba.fastjson.JSON;
    import org.apache.flink.api.common.functions.MapFunction;
    import org.apache.flink.streaming.api.datastream.DataStreamSource;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

    /**
     * Purpose: generate order records and print each one as a JSON string.
     * @program: FlinkDemo
     * @author: 闫哥
     * @create: 2025-11-28 09:30:26
     **/
    public class CreateOrderInfoJson {

        public static void main(String[] args) throws Exception {
            // 1. env: set up the execution environment
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            env.setParallelism(1);

            // MySource and OrderInfo live in the same package and are not shown in this post.
            DataStreamSource<OrderInfo> streamSource = env.addSource(new MySource(), "auto-generated orders");

            // Serialize every order to JSON and print it to the console.
            streamSource.map(new MapFunction<OrderInfo, String>() {
                @Override
                public String map(OrderInfo orderInfo) throws Exception {
                    return JSON.toJSONString(orderInfo);
                }
            }).print();

            env.execute();
        }
    }

Write a Flink job that reads the Kafka data

This job reads the data from Kafka. It must use event-time semantics, and it also needs a watermark plus allowedLateness.
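Both the generator above and the job that follows depend on an `OrderInfo` class that this post does not show. The following is only a hypothetical sketch of what it might look like, with field names taken from the JSON keys and types guessed from how the job uses the getters (`getMoney()` is summed into an `int`, `getTimeStamp()` is returned as a `long`, `getUid()` is used as an `Integer` key). The `getOrderId`/setter names are assumptions.

```java
// Hypothetical sketch of the OrderInfo POJO. In the real project it would be
// declared public in its own OrderInfo.java inside com.bigdata.day04.
class OrderInfo {
    private int money;        // order amount
    private String orderId;   // unique order id
    private long timeStamp;   // event time in epoch milliseconds
    private int uid;          // user id, used as the keyBy key

    // A no-arg constructor plus getters/setters lets JSON libraries and Flink treat this as a POJO.
    OrderInfo() {
    }

    public int getMoney() { return money; }
    public void setMoney(int money) { this.money = money; }

    public String getOrderId() { return orderId; }
    public void setOrderId(String orderId) { this.orderId = orderId; }

    public long getTimeStamp() { return timeStamp; }
    public void setTimeStamp(long timeStamp) { this.timeStamp = timeStamp; }

    public int getUid() { return uid; }
    public void setUid(int uid) { this.uid = uid; }
}
```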

    package com.bigdata.day04;

    import com.alibaba.fastjson.JSON;
    import org.apache.commons.lang3.time.DateFormatUtils;
    import org.apache.flink.api.common.eventtime.SerializableTimestampAssigner;
    import org.apache.flink.api.common.eventtime.WatermarkStrategy;
    import org.apache.flink.api.common.functions.MapFunction;
    import org.apache.flink.api.common.serialization.SimpleStringSchema;
    import org.apache.flink.connector.kafka.source.KafkaSource;
    import org.apache.flink.connector.kafka.source.enumerator.initializer.OffsetsInitializer;
    import org.apache.flink.streaming.api.datastream.DataStreamSource;
    import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.api.functions.windowing.WindowFunction;
    import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;
    import org.apache.flink.streaming.api.windowing.time.Time;
    import org.apache.flink.streaming.api.windowing.windows.TimeWindow;
    import org.apache.flink.util.Collector;

    import java.time.Duration;

    /**
     * Purpose: test when an event-time window with allowedLateness actually closes.
     * @program: FlinkDemo
     * @author: 闫哥
     * @create: 2025-11-28 09:30:26
     **/
    public class TestAllowedLateness {

        public static void main(String[] args) throws Exception {
            // 1. env: set up the execution environment
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            env.setParallelism(1);

            // 2. source: read JSON strings from the Kafka topic "first", starting at the latest offset
            KafkaSource<String> streamSource = KafkaSource.<String>builder()
                    .setBootstrapServers("bigdata01:9092")
                    .setTopics("first")
                    .setStartingOffsets(OffsetsInitializer.latest())
                    .setValueOnlyDeserializer(new SimpleStringSchema())
                    .build();
            DataStreamSource<String> dataStreamSource =
                    env.fromSource(streamSource, WatermarkStrategy.noWatermarks(), "kafkaSource");
            //dataStreamSource.print();

            // 3. transformation: parse each JSON string into an OrderInfo
            SingleOutputStreamOperator<OrderInfo> streamOperator = dataStreamSource.map(new MapFunction<String, OrderInfo>() {
                @Override
                public OrderInfo map(String json) throws Exception {
                    return JSON.parseObject(json, OrderInfo.class);
                }
            });

            // Assign timestamps and watermarks here (3 s bounded out-of-orderness)
            SingleOutputStreamOperator<String> result = streamOperator
                    .assignTimestampsAndWatermarks(
                            WatermarkStrategy.<OrderInfo>forBoundedOutOfOrderness(Duration.ofSeconds(3))
                                    .withTimestampAssigner(new SerializableTimestampAssigner<OrderInfo>() {
                                        @Override
                                        public long extractTimestamp(OrderInfo orderInfo, long recordTimestamp) {
                                            // The return value must be epoch milliseconds. A field holding seconds
                                            // or a "yyyy-MM-dd HH:mm:ss" string has to be converted to milliseconds first.
                                            return orderInfo.getTimeStamp();
                                        }
                                    }))
                    .keyBy(order -> order.getUid())
                    .window(TumblingEventTimeWindows.of(Time.seconds(5)))
                    .allowedLateness(Time.seconds(10))
                    .apply(new WindowFunction<OrderInfo, String, Integer, TimeWindow>() {
                        @Override
                        public void apply(Integer key, TimeWindow window, Iterable<OrderInfo> input, Collector<String> out) throws Exception {
                            // Emit: window start -> window end -> user id --> total amount
                            long start = window.getStart();
                            long end = window.getEnd();
                            // Formatted in the JVM's default time zone
                            String startTime = DateFormatUtils.format(start, "yyyy-MM-dd HH:mm:ss");
                            String endTime = DateFormatUtils.format(end, "yyyy-MM-dd HH:mm:ss");
                            int sumMoney = 0;
                            for (OrderInfo orderInfo : input) {
                                sumMoney += orderInfo.getMoney();
                            }
                            out.collect(startTime + "->" + endTime + "->" + key + "-->" + sumMoney);
                        }
                    });

            // 4. sink: print the window results
            result.print();

            env.execute();
        }
    }

Start testing:

使用 kafkaUI 界面发送第一条数据:

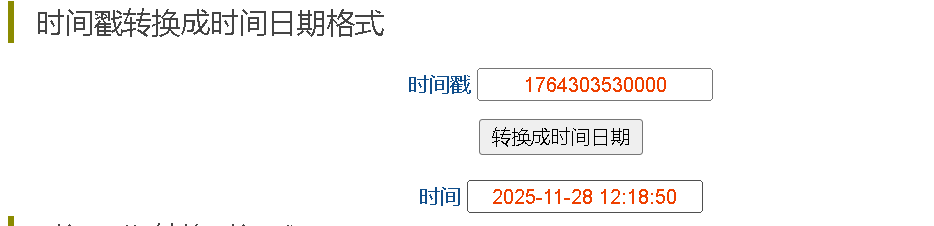

{"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303530000,"uid":0}我使用时间戳转换器看一下是什么时候:

https://www.beijing-time.org/shijianchuo/

1764303530000 = 12:18:50 也就意味着 第一个区间是 [12:18:50,12:18:55)
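The same conversion can be checked without the website. This is a minimal sketch in plain Java, assuming the 5 s tumbling window has no offset and timestamps are non-negative; the class and method names are made up for illustration.

```java
import java.time.Instant;
import java.time.LocalDateTime;
import java.time.ZoneId;

class WindowBounds {
    // Start of the tumbling window that contains timestampMs
    // (zero offset, non-negative timestamps assumed).
    static long windowStart(long timestampMs, long windowSizeMs) {
        return timestampMs - (timestampMs % windowSizeMs);
    }

    // Render epoch milliseconds as Beijing local time.
    static LocalDateTime toBeijing(long epochMs) {
        return LocalDateTime.ofInstant(Instant.ofEpochMilli(epochMs), ZoneId.of("Asia/Shanghai"));
    }

    public static void main(String[] args) {
        long ts = 1764303530000L;   // timeStamp of the first record
        long size = 5_000L;         // 5 s tumbling window
        long start = windowStart(ts, size);
        long end = start + size;
        // prints: 2025-11-28T12:18:50 falls in [2025-11-28T12:18:50, 2025-11-28T12:18:55)
        System.out.println(toBeijing(ts) + " falls in [" + toBeijing(start) + ", " + toBeijing(end) + ")");
    }
}
```

Because the window start is aligned to a multiple of the window size, every record stamped 12:18:50 through 12:18:54.999 lands in this same window.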

Next, create a few more records inside this window:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303531000,"uid":0}
    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303532000,"uid":0}

The console prints nothing, because the window has not been triggered yet.

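The following record, with event time 12:18:58, triggers it:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303538000,"uid":0}

The console prints:

    2025-11-28 12:18:50->2025-11-28 12:18:55->0-->141

This gives the first conclusion: once the watermark (event time 12:18:58 minus the 3 s bound, i.e. 12:18:55) reaches the window end, the window [2025-11-28 12:18:50, 2025-11-28 12:18:55) is computed.

At this point the window [2025-11-28 12:18:50, 2025-11-28 12:18:55) has fired, but it has not closed.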
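The trigger condition can be written down as arithmetic. This is a simplified sketch, not Flink code: it assumes the watermark is updated immediately after every record (Flink actually emits watermarks periodically), and the class and method names are hypothetical. The two `- 1` terms reflect that the bounded-out-of-orderness watermark is 1 ms behind `maxTimestamp - bound` and that a window's last valid timestamp is `end - 1`.

```java
class WatermarkTrigger {
    static final long BOUND_MS = 3_000L; // forBoundedOutOfOrderness(Duration.ofSeconds(3))

    // Watermark after the largest event time seen so far is maxEventTimeMs.
    static long watermark(long maxEventTimeMs) {
        return maxEventTimeMs - BOUND_MS - 1;
    }

    // An event-time window fires once the watermark reaches its last timestamp (end - 1).
    static boolean fires(long watermarkMs, long windowEndMs) {
        return watermarkMs >= windowEndMs - 1;
    }

    public static void main(String[] args) {
        long windowEnd = 1764303535000L; // 12:18:55
        // Largest event time 12:18:52: watermark is about 12:18:49, window stays silent.
        System.out.println(fires(watermark(1764303532000L), windowEnd)); // prints false
        // Largest event time 12:18:58: watermark is about 12:18:55, window fires.
        System.out.println(fires(watermark(1764303538000L), windowEnd)); // prints true
    }
}
```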

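Next, keep sending records that belong to the window [2025-11-28 12:18:50, 2025-11-28 12:18:55):

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303533000,"uid":0}
    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303534000,"uid":0}

These two records carry event times 12:18:53 and 12:18:54. Each one triggers a recomputation as soon as it arrives. Why?

The watermark is not the current record's time minus 3 s, it is the largest event time seen so far minus 3 s, and the largest event time sent so far is 12:18:58. The watermark therefore stays at 12:18:55: the window has already fired but is still within its allowed lateness, so every late record fires it again.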
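To make the "largest event time so far" rule concrete, here is a small replay of the records sent up to this point. It is a simplified model under the same assumptions as the experiment (3 s bound, 5 s windows, watermark updated after every record), and the class and method names are invented for illustration.

```java
import java.util.ArrayList;
import java.util.List;

class LateFiring {
    static final long BOUND_MS = 3_000L;   // watermark bound
    static final long WINDOW_MS = 5_000L;  // tumbling window size

    // For each record, report whether its window had already fired when it arrived.
    static List<Boolean> arrivesAfterFirstFiring(long[] eventTimesMs) {
        List<Boolean> late = new ArrayList<>();
        long maxTs = Long.MIN_VALUE;
        long watermark = Long.MIN_VALUE;
        for (long ts : eventTimesMs) {
            long windowEnd = ts - (ts % WINDOW_MS) + WINDOW_MS;
            // Compare against the watermark as it was before this record arrived.
            late.add(watermark >= windowEnd - 1);
            // The watermark follows the maximum timestamp, so it never moves backwards.
            maxTs = Math.max(maxTs, ts);
            watermark = maxTs - BOUND_MS - 1;
        }
        return late;
    }

    public static void main(String[] args) {
        long[] sent = {
            1764303530000L, 1764303531000L, 1764303532000L, // 12:18:50, :51, :52
            1764303538000L,                                 // 12:18:58 fires the first window
            1764303533000L, 1764303534000L                  // 12:18:53, :54 arrive late
        };
        // prints: [false, false, false, false, true, true]
        System.out.println(arrivesAfterFirstFiring(sent));
    }
}
```

The last two entries are `true` because the watermark was already pushed to about 12:18:55 by the 12:18:58 record and does not drop back when older records arrive.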

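Next, create a record at window end + 10 s (12:19:05):

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303545000,"uid":0}

This fires the window [2025-11-28 12:18:55, 2025-11-28 12:19:00), but that is not what we care about. What we care about is whether [2025-11-28 12:18:50, 2025-11-28 12:18:55) has closed.

My earlier understanding was that a window closes once event time >= window end + allowedLateness. To test that, send another record that belongs to the first window:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303533000,"uid":0}

A result is still printed. So with allowedLateness in place, the close condition is not

event time >= window end + allowedLateness

So when does the window actually close?

event time >= window end (12:18:55) + watermark delay (3 s) + allowedLateness (10 s) = 12:19:08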
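The arithmetic behind this close condition can be sketched as follows. It assumes the same simplified model as the experiment (3 s bound, 10 s allowedLateness, watermark updated after every record); the class and method names are hypothetical. The window is closed once the watermark reaches `(end - 1) + allowedLateness`, and since the watermark trails the largest event time by the bound plus 1 ms, that translates to an event time of `end + bound + allowedLateness`.

```java
class WindowCleanup {
    static final long BOUND_MS = 3_000L;      // forBoundedOutOfOrderness(3 s)
    static final long LATENESS_MS = 10_000L;  // allowedLateness(10 s)

    // Watermark after the largest event time seen so far is maxEventTimeMs.
    static long watermark(long maxEventTimeMs) {
        return maxEventTimeMs - BOUND_MS - 1;
    }

    // The window's state is dropped once the watermark reaches (end - 1) + allowedLateness.
    static boolean isClosed(long watermarkMs, long windowEndMs) {
        return watermarkMs >= (windowEndMs - 1) + LATENESS_MS;
    }

    // Smallest event time whose watermark closes the window.
    static long closingEventTime(long windowEndMs) {
        return windowEndMs + BOUND_MS + LATENESS_MS;
    }

    public static void main(String[] args) {
        long windowEnd = 1764303535000L; // 12:18:55
        System.out.println(isClosed(watermark(1764303545000L), windowEnd)); // 12:19:05, prints false
        System.out.println(isClosed(watermark(1764303548000L), windowEnd)); // 12:19:08, prints true
        System.out.println(closingEventTime(windowEnd));                    // prints 1764303548000
    }
}
```

This is why the 12:19:05 record leaves the first window open: its watermark is only about 12:19:02, three seconds short of the 12:19:05 cleanup point.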

Next, create a record at 12:19:08:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303548000,"uid":0}

Nothing is printed. Then test again whether [2025-11-28 12:18:50, 2025-11-28 12:18:55) has closed:

    {"money":47,"orderId":"6ce94dcefaac4106bb7b66302bb9e785","timeStamp":1764303533000,"uid":0}

This time the window turns out to be closed.

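So the conclusion is: if a project uses event time, it must add a watermark. If the watermark delay is 3 s and you also use allowedLateness with 10 s, then, measured in the event time of incoming records, the window closes at window end + watermark delay + allowedLateness. Put differently, Flink closes the window once the watermark passes window end + allowedLateness, and the watermark itself trails the largest event time by the 3 s delay.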