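Introduction

As large language models grow more capable, plain conversation no longer meets real engineering needs. More and more scenarios require the model to call external tools, access system capabilities, and carry out complex tasks.

MCP (Model Context Protocol) provides a unified, standardized way for a model to call external tools through a protocol.

LangGraph is used to build controllable, traceable agent reasoning workflows.

Chainlit provides a lightweight yet full-featured web chat UI that suits agent scenarios well.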

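This article shows how to build, from scratch, a complete MCP Chat Agent that supports:

- MCP multi-tool calling

- Two model backends: Ollama and an OpenAI-compatible API

- Streaming output

- A web UI (Chainlit)

- Multi-turn conversation with saved state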

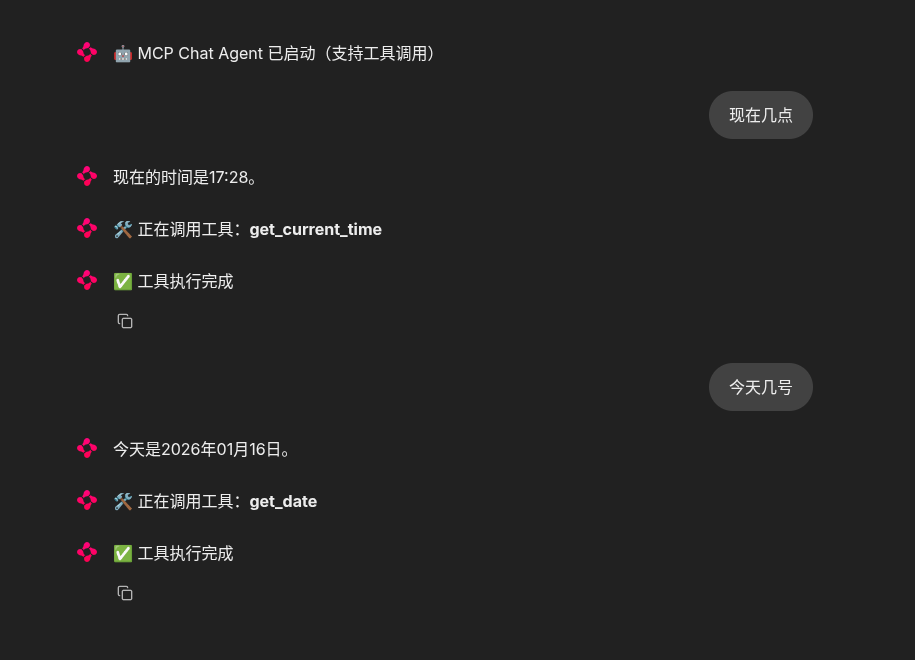

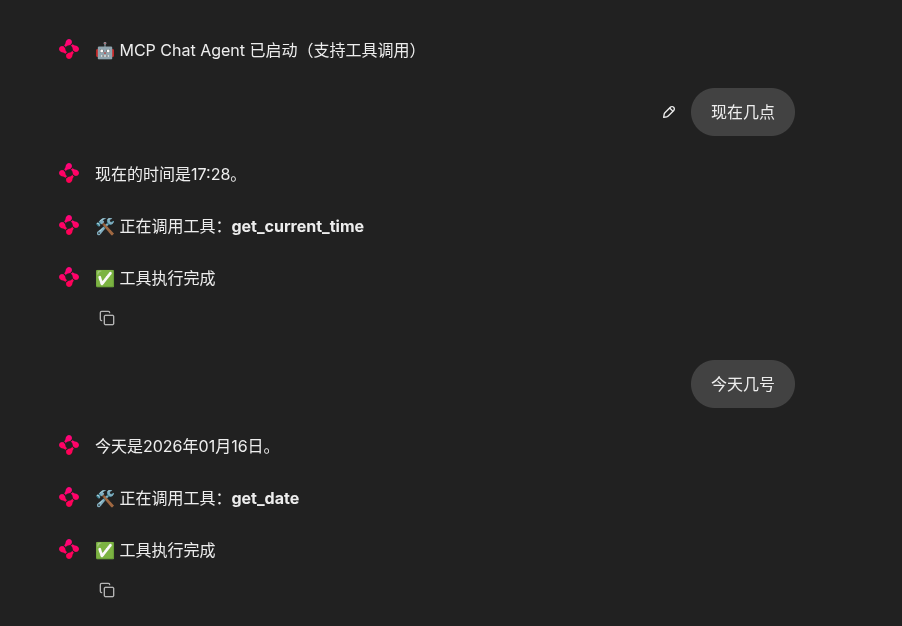

Screenshot of the result:

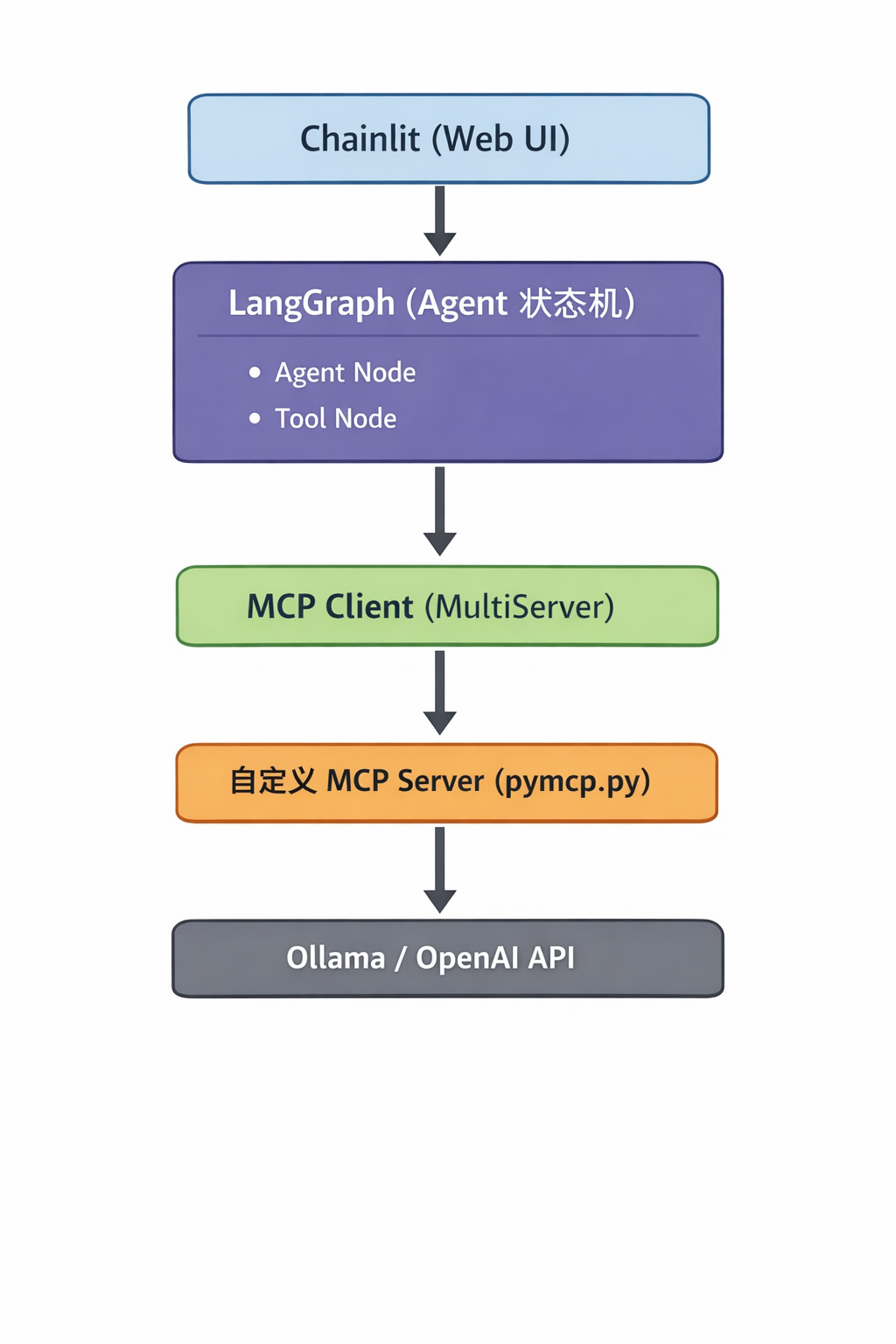

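I. Overall Architecture

The system is organized as follows:

- Chainlit handles the UI and user interaction.

- LangGraph handles the agent's execution flow and tool scheduling.

- MCP handles the tool protocol and process management.

- Ollama / OpenAI provides model inference.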
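
To make this division of labor concrete, here is a minimal, library-free sketch of the loop that runs between the model and the tools. It is only an illustration: `fake_model`, `TOOLS`, and `run_agent` are hypothetical stand-ins, whereas the real system uses a chat model, MCP tools, and a LangGraph graph.

```python
from typing import Callable

# Hypothetical tool registry; in the real system the tools come from MCP servers.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for the LLM: request a tool once, then answer with its result."""
    last = messages[-1]
    if last["role"] == "tool":
        return {
            "role": "assistant",
            "content": f"The result is {last['content']}.",
            "tool_calls": [],
        }
    return {
        "role": "assistant",
        "content": "",
        "tool_calls": [{"name": "add", "args": {"a": 2, "b": 3}}],
    }


def run_agent(user_text: str, max_steps: int = 5) -> list[dict]:
    """Alternate between the model and the tools until no tool is requested."""
    messages = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        messages.append(reply)
        if not reply["tool_calls"]:  # no tool requested: this is the final answer
            break
        for call in reply["tool_calls"]:
            result = TOOLS[call["name"]](**call["args"])
            messages.append({"role": "tool", "content": result})
    return messages


history = run_agent("What is 2 + 3?")
print(history[-1]["content"])
```

The `max_steps` cap is a design choice of this sketch: it keeps a misbehaving model from requesting tools forever.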

II. Environment Setup

1. Install Ollama and pull the model

```bash
ollama pull qwen2.5:7b
```

Confirm that the model is available locally:

```bash
ollama list
```

2. Install the Python dependencies

```bash
pip install \
  chainlit \
  langchain-ollama \
  langchain-openai \
  langgraph \
  langchain-mcp-adapters
```

III. MCP Configuration (mcp.json)

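Example mcp.json:

```json
{
  "mcpServers": {
    "my_tools": {
      "command": "/usr/bin/python3",
      "args": [
        "/home/user/langchain-mcp-demo/pymcp.py"
      ]
    }
  }
}
```

Notes:

- The MCP Server is launched over `stdio`

- LangChain (through langchain-mcp-adapters) launches and manages the server subprocess automatically

- There is no need to start the MCP Server manually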
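
The agent code later in this article turns each `mcpServers` entry into a stdio connection spec. The sketch below isolates that step using only the standard library and adds a basic check for a missing `command`. The helper name `load_stdio_servers` and the sample paths are assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path


def load_stdio_servers(path: str) -> dict[str, dict]:
    """Read an mcp.json-style file and return one stdio connection spec per server."""
    with open(path, "r", encoding="utf-8") as f:
        cfg = json.load(f)

    servers = {}
    for name, spec in cfg.get("mcpServers", {}).items():
        if "command" not in spec:
            raise ValueError(f"server {name!r} has no 'command'")
        servers[name] = {
            "transport": "stdio",
            "command": spec["command"],
            "args": list(spec.get("args", [])),
        }
    return servers


# Demo with a throwaway config file (the command and args are placeholders).
sample = {
    "mcpServers": {
        "my_tools": {"command": "/usr/bin/python3", "args": ["pymcp.py"]}
    }
}
with tempfile.TemporaryDirectory() as tmp:
    cfg_path = Path(tmp) / "mcp.json"
    cfg_path.write_text(json.dumps(sample), encoding="utf-8")
    servers = load_stdio_servers(str(cfg_path))

print(servers)
```

Failing early on a malformed entry tends to give a clearer error than letting the subprocess launch fail later.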

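IV. Core Agent Implementation (LangGraph + MCP)

1. Agent State definition

```python
from typing import Annotated, TypedDict

from langgraph.graph.message import add_messages


class State(TypedDict):
    # add_messages is a reducer: messages returned by a node are merged
    # into the existing history instead of overwriting it.
    messages: Annotated[list, add_messages]
```

This State maintains the conversation message history inside LangGraph.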
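
As a rough mental model of what the `add_messages` reducer does, the sketch below appends new messages and lets a message with an already-known id replace the old one. It is a simplified approximation written for this article, not LangGraph's actual implementation; `merge_messages` and the dict-based message format are hypothetical.

```python
def merge_messages(existing: list[dict], new: list[dict]) -> list[dict]:
    """Append new messages; a message whose id already exists replaces the old one."""
    merged = list(existing)
    index_by_id = {
        m["id"]: i for i, m in enumerate(merged) if m.get("id") is not None
    }
    for msg in new:
        msg_id = msg.get("id")
        if msg_id is not None and msg_id in index_by_id:
            merged[index_by_id[msg_id]] = msg  # update in place
        else:
            if msg_id is not None:
                index_by_id[msg_id] = len(merged)
            merged.append(msg)  # plain append
    return merged


state = {"messages": [{"id": "1", "role": "user", "content": "hi"}]}

# A node returns only its new message; the reducer folds it into the history.
update = [{"id": "2", "role": "assistant", "content": "hello"}]
state["messages"] = merge_messages(state["messages"], update)

# Re-sending id "2" replaces the earlier assistant message instead of adding one.
correction = [{"id": "2", "role": "assistant", "content": "hello there"}]
state["messages"] = merge_messages(state["messages"], correction)

print([m["content"] for m in state["messages"]])
```

This is why a node can return just `{"messages": [reply]}` and still end up with the full history in the state.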

2. MCP Agent implementation

```python
import asyncio
import json

from langchain_ollama import ChatOllama
from langchain_openai import ChatOpenAI
from langchain_mcp_adapters.client import MultiServerMCPClient
from langgraph.graph import StateGraph, START
from langgraph.prebuilt import ToolNode, tools_condition
from langgraph.checkpoint.memory import MemorySaver
```

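```python
class MCPAgent:
    def __init__(self, backend: str = "ollama"):
        # Pick the model backend: local Ollama or an OpenAI-compatible endpoint.
        if backend == "ollama":
            self.llm = ChatOllama(
                model="qwen2.5:7b",
                temperature=0.7,
                streaming=True,
            )
        else:
            self.llm = ChatOpenAI(
                model="qwen3-30b-a3b-instruct-2507",
                temperature=0.7,
                api_key="sk-xxxxx",  # placeholder; use your own key
                base_url="https://dashscope.aliyuncs.com/compatible-mode/v1",
                streaming=True,
            )

        # Turn every entry in mcp.json into a stdio connection spec.
        with open("mcp.json", "r", encoding="utf-8") as f:
            cfg = json.load(f)

        client = MultiServerMCPClient({
            name: {
                "transport": "stdio",
                "command": s["command"],
                "args": s["args"]
            }
            for name, s in cfg["mcpServers"].items()
        })

        # Note: asyncio.run() raises RuntimeError if an event loop is already
        # running in this thread, unless nested event loops have been enabled.
        self.tools = asyncio.run(client.get_tools())
        self.llm = self.llm.bind_tools(self.tools)
        self.graph = self._build_graph()

    def _agent(self, state: State):
        # Call the tool-aware model on the full history; the reply is appended.
        return {"messages": [self.llm.invoke(state["messages"])]}

    def _build_graph(self):
        g = StateGraph(State)
        g.add_node("agent", self._agent)
        g.add_node("tools", ToolNode(self.tools))
        g.add_edge(START, "agent")
        # Route to "tools" when the model requested a tool call, otherwise end.
        g.add_conditional_edges("agent", tools_condition)
        g.add_edge("tools", "agent")
        return g.compile(checkpointer=MemorySaver())
```

Notes:

- MCP tools are attached to the model via `bind_tools`

- LangGraph uses `tools_condition` to decide automatically whether a tool should be called

- MemorySaver stores the multi-turn conversation state (in memory only, so it does not survive a process restart)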
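
The constructor above calls `asyncio.run(...)`, which standard Python refuses to do from inside an event loop that is already running. If you run into that `RuntimeError` when creating the agent from an async callback, one common alternative is an async factory method. The sketch below shows the pattern with the standard library only; `FakeMCPClient`, `AsyncAgent`, and `handler` are hypothetical stand-ins, not part of any of the libraries used here.

```python
import asyncio


class FakeMCPClient:
    """Hypothetical stand-in for an MCP client whose tool discovery is async."""

    async def get_tools(self) -> list[str]:
        await asyncio.sleep(0)  # simulate I/O
        return ["add", "search"]


class AsyncAgent:
    def __init__(self, tools: list[str]):
        # The constructor only stores ready-made state; it performs no I/O.
        self.tools = tools

    @classmethod
    async def create(cls, client: FakeMCPClient) -> "AsyncAgent":
        """Async factory: await tool discovery on the loop that is already running."""
        tools = await client.get_tools()
        return cls(tools)


async def handler() -> list[str]:
    # Plays the role of an async callback such as a chat-start handler.
    agent = await AsyncAgent.create(FakeMCPClient())
    return agent.tools


# asyncio.run() is used once, at the top level, where no loop is running yet.
loaded = asyncio.run(handler())
print(loaded)
```

Applied to the agent in this article, the same idea would mean moving the tool loading out of `__init__` and awaiting it inside the async handler.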

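V. Chainlit Web UI Integration

1. Chat start event

```python
import chainlit as cl


@cl.on_chat_start
async def on_start():
    # Each chat session gets its own agent, and with it its own MemorySaver.
    cl.user_session.set("agent", MCPAgent("ollama"))
    cl.user_session.set("thread_id", "default")

    await cl.Message(
        content="MCP Chat Agent started"
    ).send()
```

2. Message handling (streaming output + tool notifications)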

```python
@cl.on_message
async def on_message(message: cl.Message):
    agent = cl.user_session.get("agent")
    thread_id = cl.user_session.get("thread_id")

    # Send an empty message first, then fill it in as tokens arrive.
    msg = cl.Message(content="")
    await msg.send()

    async for event in agent.graph.astream_events(
        {"messages": [("user", message.content)]},
        config={"configurable": {"thread_id": thread_id}},
        version="v2",
    ):
        etype = event["event"]

        if etype == "on_chat_model_stream":
            # Incremental model output: append it and refresh the UI message.
            chunk = event["data"]["chunk"]
            if chunk.content:
                msg.content += chunk.content
                await msg.update()

        elif etype == "on_tool_start":
            await cl.Message(
                content=f"Calling tool: {event['name']}",
                author="tool"
            ).send()

        elif etype == "on_tool_end":
            await cl.Message(
                content="Tool finished",
                author="tool"
            ).send()
```

3. Complete code

python

import chainlit as cl

import asyncio

import json

import os

from typing import Annotated, TypedDict

from langchain_ollama import ChatOllama

from langchain_openai import ChatOpenAI

from langchain_mcp_adapters.client import MultiServerMCPClient

from langgraph.graph import StateGraph, START

from langgraph.prebuilt import ToolNode, tools_condition

from langgraph.checkpoint.memory import MemorySaver

from langgraph.graph.message import add_messages

# ================== 配置 ==================

OPENAI_API_KEY = "sk-xxxxxxxxxxxxxxxxxxxxx"

OPENAI_API_BASE = "https://dashscope.aliyuncs.com/compatible-mode/v1"

MCP_JSON_PATH = "/home/langchain-mcp-demo/mcp.json"

class State(TypedDict):

messages: Annotated[list, add_messages]

class MCPAgent:

def __init__(self, backend: str = "ollama"):

# -------- 模型选择 --------

if backend == "ollama":

self.llm = ChatOllama(

model="qwen2.5:7b",

temperature=0.7,

streaming=True,

)

else:

self.llm = ChatOpenAI(

model="qwen3-30b-a3b-instruct-2507",

temperature=0.7,

api_key=OPENAI_API_KEY,

base_url=OPENAI_API_BASE,

streaming=True,

)

# -------- 加载 MCP --------

with open(MCP_JSON_PATH, "r", encoding="utf-8") as f:

mcp_config = json.load(f)

client_config = {

name: {

"transport": "stdio",

"command": s["command"],

"args": s["args"],

}

for name, s in mcp_config["mcpServers"].items()

}

client = MultiServerMCPClient(client_config)

self.tools = asyncio.run(client.get_tools())

self.llm = self.llm.bind_tools(self.tools)

self.graph = self._build_graph()

def _agent(self, state: State):

return {"messages": [self.llm.invoke(state["messages"])]}

def _build_graph(self):

g = StateGraph(State)

g.add_node("agent", self._agent)

g.add_node("tools", ToolNode(self.tools))

g.add_edge(START, "agent")

g.add_conditional_edges("agent", tools_condition)

g.add_edge("tools", "agent")

return g.compile(checkpointer=MemorySaver())

# ================== Chainlit 生命周期 ==================

@cl.on_chat_start

async def on_start():

# 默认用 Ollama,你也可以加 UI 选择

cl.user_session.set("agent", MCPAgent(backend="ollama"))

cl.user_session.set("thread_id", "default")

await cl.Message(

content="🤖 MCP Chat Agent 已启动(支持工具调用)"

).send()

@cl.on_message

async def on_message(message: cl.Message):

agent: MCPAgent = cl.user_session.get("agent")

thread_id = cl.user_session.get("thread_id")

msg = cl.Message(content="")

await msg.send()

async for event in agent.graph.astream_events(

{"messages": [("user", message.content)]},

config={"configurable": {"thread_id": thread_id}},

version="v2",

):

etype = event["event"]

if etype == "on_chat_model_stream":

chunk = event["data"]["chunk"]

if chunk.content:

msg.content += chunk.content

await msg.update()

elif etype == "on_tool_start":

await cl.Message(

content=f"🛠️ 正在调用工具:**{event['name']}**",

author="tool"

).send()

elif etype == "on_tool_end":

await cl.Message(

content="✅ 工具执行完成",

author="tool"

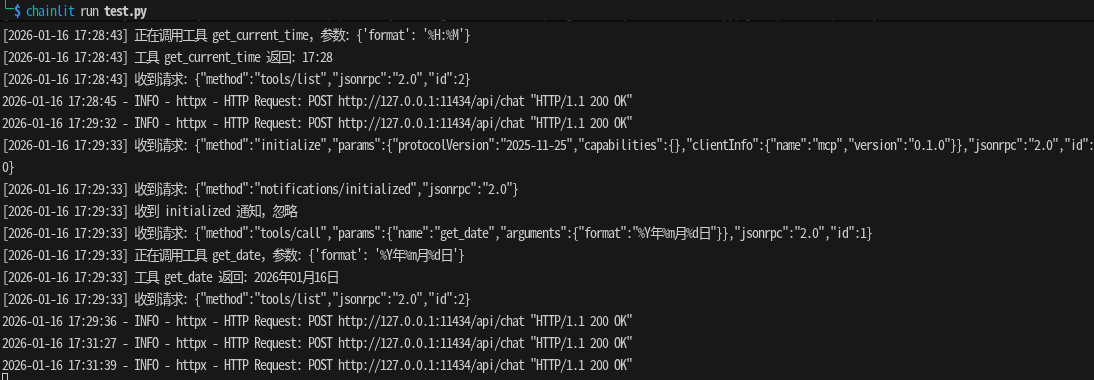

).send()六、运行方式

```bash
chainlit run test.py
```

Then open the following address in a browser:

http://localhost:8000

Supported features:

- Streaming model output

- Automatic MCP tool calls

- Visible tool-call progress

- Multi-turn conversation context
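
Multi-turn context depends on the checkpointer storing history under the `thread_id` passed in the config. The toy sketch below illustrates that idea with a plain dictionary. `InMemoryCheckpointer` and `chat_turn` are hypothetical names invented for this example and only loosely mirror how an in-memory checkpointer behaves.

```python
from collections import defaultdict


class InMemoryCheckpointer:
    """Toy checkpointer: one message list per thread_id, kept in process memory."""

    def __init__(self) -> None:
        self._threads: dict[str, list[str]] = defaultdict(list)

    def load(self, thread_id: str) -> list[str]:
        return list(self._threads[thread_id])

    def save(self, thread_id: str, messages: list[str]) -> None:
        self._threads[thread_id] = list(messages)


def chat_turn(store: InMemoryCheckpointer, thread_id: str, user_text: str) -> list[str]:
    """Load the thread's history, add the new turn, and persist the result."""
    history = store.load(thread_id)
    history.append(f"user: {user_text}")
    history.append(f"assistant: (reply #{len(history) // 2 + 1})")
    store.save(thread_id, history)
    return history


store = InMemoryCheckpointer()
chat_turn(store, "default", "My name is Ada.")
second = chat_turn(store, "default", "What is my name?")  # sees the first turn
other = chat_turn(store, "another-thread", "Hello")       # starts from scratch

print(len(second), len(other))
```

Because the store lives in process memory, restarting the application clears every thread. A persistent backend would be needed to keep conversations across restarts.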

Related article

From Zero to One: Building an MCP Server in Python, with LangChain-Based Agents and Tool Calling in Practice
