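Today's preview:

Main topics today

- Deploying Prometheus with helm

- Monitoring the k8s cluster with Prometheus

- Monitoring k8s Pod services with Prometheus through ServiceMonitor resources

- The ExternalName Service type

- Mapping services outside the K8S cluster with Endpoints resources

- Collecting K8S cluster logs with the Elastic Stack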

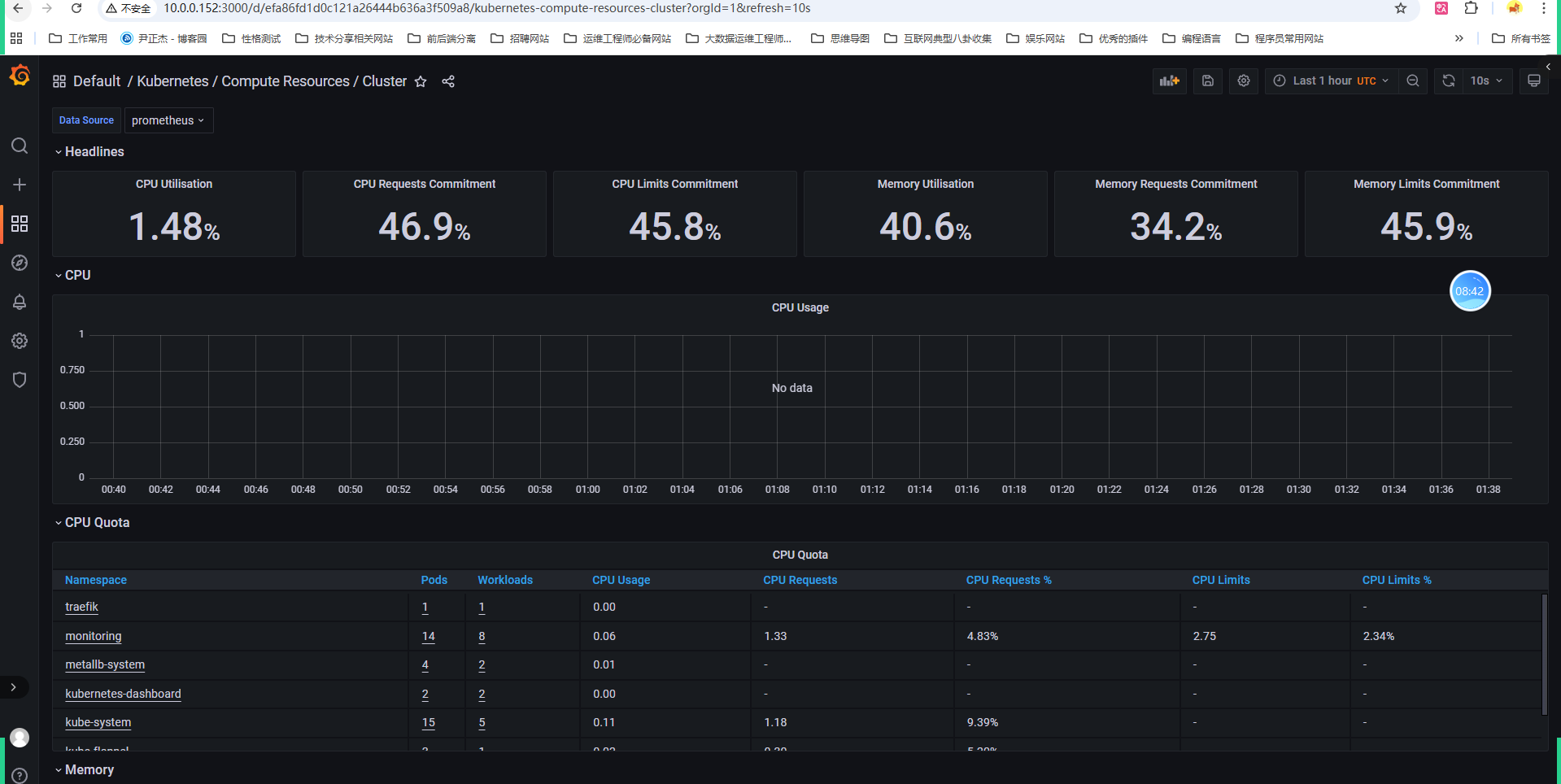

Deploying prometheus-operator to monitor the K8S cluster

1. Download the source code

```bash
wget -O kube-prometheus-0.11.0.tar.gz https://github.com/prometheus-operator/kube-prometheus/archive/refs/tags/v0.11.0.tar.gz
```

SVIP download address:

```bash
[root@master231 ~]# wget http://192.168.21.253/Resources/Kubernetes/Project/Prometheus/manifests/kube-prometheus-0.11.0.tar.gz
```

2. Extract the archive

```bash
[root@master231 ~]# tar xf kube-prometheus-0.11.0.tar.gz -C /oldboyedu/manifests/add-ons/
[root@master231 ~]# cd /oldboyedu/manifests/add-ons/kube-prometheus-0.11.0/
[root@master231 kube-prometheus-0.11.0]# ll
total 220
drwxrwxr-x 11 root root 4096 Jun 17 2022 ./
drwxr-xr-x 9 root root 4096 Oct 3 09:32 ../
-rwxrwxr-x 1 root root 679 Jun 17 2022 build.sh*
-rw-rw-r-- 1 root root 11421 Jun 17 2022 CHANGELOG.md
-rw-rw-r-- 1 root root 2020 Jun 17 2022 code-of-conduct.md
-rw-rw-r-- 1 root root 3782 Jun 17 2022 CONTRIBUTING.md
drwxrwxr-x 5 root root 4096 Jun 17 2022 developer-workspace/
drwxrwxr-x 4 root root 4096 Jun 17 2022 docs/
-rw-rw-r-- 1 root root 2273 Jun 17 2022 example.jsonnet
drwxrwxr-x 7 root root 4096 Jun 17 2022 examples/
drwxrwxr-x 3 root root 4096 Jun 17 2022 experimental/
drwxrwxr-x 4 root root 4096 Jun 17 2022 .github/
-rw-rw-r-- 1 root root 129 Jun 17 2022 .gitignore
-rw-rw-r-- 1 root root 1474 Jun 17 2022 .gitpod.yml
-rw-rw-r-- 1 root root 2302 Jun 17 2022 go.mod
-rw-rw-r-- 1 root root 71172 Jun 17 2022 go.sum
drwxrwxr-x 3 root root 4096 Jun 17 2022 jsonnet/
-rw-rw-r-- 1 root root 206 Jun 17 2022 jsonnetfile.json
-rw-rw-r-- 1 root root 5130 Jun 17 2022 jsonnetfile.lock.json
-rw-rw-r-- 1 root root 1644 Jun 17 2022 kubescape-exceptions.json
-rw-rw-r-- 1 root root 4773 Jun 17 2022 kustomization.yaml
-rw-rw-r-- 1 root root 11325 Jun 17 2022 LICENSE
-rw-rw-r-- 1 root root 3379 Jun 17 2022 Makefile
drwxrwxr-x 3 root root 4096 Jun 17 2022 manifests/
-rw-rw-r-- 1 root root 221 Jun 17 2022 .mdox.validate.yaml
-rw-rw-r-- 1 root root 8253 Jun 17 2022 README.md
-rw-rw-r-- 1 root root 4463 Jun 17 2022 RELEASE.md
drwxrwxr-x 2 root root 4096 Jun 17 2022 scripts/
drwxrwxr-x 3 root root 4096 Jun 17 2022 tests/
```

3. Import the images (in-person students only; online students can skip this step and use the image bundle from the Baidu Netdisk materials)

3.1 Download the images

```bash
[root@master231 ~]# mkdir prometheus && cd prometheus
[root@master231 ~]# wget http://192.168.21.253/Resources/Kubernetes/Project/Prometheus/batch-load-prometheus-v0.11.0-images.sh
[root@master231 ~]# bash batch-load-prometheus-v0.11.0-images.sh 21
mv oldboyedu-alertmanager-v0.24.0.tar.gz oldboyedu-blackbox-exporter-v0.21.0.tar.gz oldboyedu-configmap-reload-v0.5.0.tar.gz oldboyedu-grafana-v8.5.5.tar.gz oldboyedu-kube-rbac-proxy-v0.12.0.tar.gz oldboyedu-kube-state-metrics-v2.5.0.tar.gz oldboyedu-node-exporter-v1.3.1.tar.gz oldboyedu-prometheus-adapter-v0.9.1.tar.gz oldboyedu-prometheus-config-reloader-v0.57.0.tar.gz oldboyedu-prometheus-operator-v0.57.0.tar.gz oldboyedu-prometheus-v2.36.1.tar.gz prometheus/
docker load -i oldboyedu-alertmanager-v0.24.0.tar.gz
docker load -i oldboyedu-blackbox-exporter-v0.21.0.tar.gz
docker load -i oldboyedu-configmap-reload-v0.5.0.tar.gz
docker load -i oldboyedu-grafana-v8.5.5.tar.gz
docker load -i oldboyedu-kube-rbac-proxy-v0.12.0.tar.gz
docker load -i oldboyedu-kube-state-metrics-v2.5.0.tar.gz
docker load -i oldboyedu-node-exporter-v1.3.1.tar.gz
docker load -i oldboyedu-prometheus-adapter-v0.9.1.tar.gz
docker load -i oldboyedu-prometheus-config-reloader-v0.57.0.tar.gz
docker load -i oldboyedu-prometheus-operator-v0.57.0.tar.gz
docker load -i oldboyedu-prometheus-v2.36.1.tar.gz
```

3.2 Copy the images to the other nodes

```bash
[root@master231 ~]# scp -r prometheus/ 10.0.0.232:~
[root@master231 ~]# scp -r prometheus/ 10.0.0.233:~
```

3.3 Import the images on the other nodes

```bash
[root@worker232 ~]# cd prometheus/ && for i in *.tar.gz; do docker load -i "$i"; done
[root@worker233 ~]# cd prometheus/ && for i in *.tar.gz; do docker load -i "$i"; done
```

4. Install Prometheus-Operator

```bash
[root@master231 kube-prometheus-0.11.0]# kubectl apply --server-side -f manifests/setup
kubectl wait \
  --for condition=Established \
  --all CustomResourceDefinition \
  --namespace=monitoring
[root@master231 kube-prometheus-0.11.0]# kubectl apply -f manifests/
```

5. Check whether Prometheus was deployed successfully

```bash
[root@master231 kube-prometheus-0.11.0]# kubectl get pods -n monitoring -o wide
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
alertmanager-main-0 2/2 Running 0 35s 10.100.203.159 worker232 <none> <none>
alertmanager-main-1 2/2 Running 0 35s 10.100.140.77 worker233 <none> <none>
alertmanager-main-2 2/2 Running 0 35s 10.100.160.140 master231 <none> <none>
blackbox-exporter-746c64fd88-66ph5 3/3 Running 0 42s 10.100.203.158 worker232 <none> <none>
grafana-5fc7f9f55d-qnfwj 1/1 Running 0 41s 10.100.140.86 worker233 <none> <none>
kube-state-metrics-6c8846558c-pp5hf 3/3 Running 0 41s 10.100.203.173 worker232 <none> <none>
node-exporter-6z9kb 2/2 Running 0 40s 10.0.0.231 master231 <none> <none>
node-exporter-gx5dr 2/2 Running 0 40s 10.0.0.233 worker233 <none> <none>
node-exporter-rq8mn 2/2 Running 0 40s 10.0.0.232 worker232 <none> <none>
prometheus-adapter-6455646bdc-4fqcq 1/1 Running 0 39s 10.100.203.162 worker232 <none> <none>
prometheus-adapter-6455646bdc-n8flt 1/1 Running 0 39s 10.100.140.91 worker233 <none> <none>
prometheus-k8s-0 2/2 Running 0 35s 10.100.203.189 worker232 <none> <none>
prometheus-k8s-1 2/2 Running 0 35s 10.100.140.68 worker233 <none> <none>
prometheus-operator-f59c8b954-gm5ww 2/2 Running 0 38s 10.100.203.152 worker232 <none> <none>
```

6. Modify the Grafana svc

Set `type: LoadBalancer` in the Service spec, then apply it:

```bash
[root@master231 kube-prometheus-0.11.0]# cat manifests/grafana-service.yaml
apiVersion: v1
kind: Service
metadata:
  ...
  name: grafana
  namespace: monitoring
spec:
  type: LoadBalancer
  ...
[root@master231 kube-prometheus-0.11.0]# kubectl apply -f manifests/grafana-service.yaml
service/grafana configured
[root@master231 kube-prometheus-0.11.0]# kubectl get svc -n monitoring grafana
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
grafana LoadBalancer 10.200.48.10 10.0.0.155 3000:49955/TCP 19m
```

7. Access the Grafana WebUI

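Use the EXTERNAL-IP of the grafana Service (10.0.0.155 in the output above):

```bash
http://10.0.0.155:3000/
```

Default username and password: admin / admin

In Grafana, go to Dashboards → Default and open one of the cluster status dashboards.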

Classroom exercise walkthrough: exposing the Prometheus WebUI outside the K8S cluster with traefik

1. Check whether traefik is deployed

```bash
[root@master231 ingressroutes]# helm list -n traefik
NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION
traefik-server traefik 1 2025-10-02 16:51:25.389992634 +0800 CST deployed traefik-36.3.0 v3.4.3
[root@master231 ingressroutes]# kubectl get svc,pods -n traefik
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/jiege-traefik-dashboard ClusterIP 10.200.20.87 <none> 8080/TCP 22h
service/traefik-server LoadBalancer 10.200.129.130 10.0.0.151 80:45942/TCP,443:27126/TCP 16h
NAME READY STATUS RESTARTS AGE
pod/traefik-server-fd98df655-k4llh 1/1 Running 0 16h
```

2. Write the resource manifest

```yaml
# 19-ingressRoute-prometheus.yaml
apiVersion: traefik.io/v1alpha1
kind: IngressRoute
metadata:
  name: ingressroute-prometheus
  namespace: monitoring
spec:
  entryPoints:
  - web
  routes:
  - match: Host(`prom.oldboyedu.com`) && PathPrefix(`/`)
    kind: Rule
    services:
    - name: prometheus-k8s
      port: 9090
```

Client request flow:

Client request → Traefik (web entryPoint) → IngressRoute routing rule → prometheus-k8s Service:9090 → Prometheus Pod
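To make the routing step concrete, here is a minimal bash sketch of how the rule `Host(`prom.oldboyedu.com`) && PathPrefix(`/`)` decides whether a request reaches prometheus-k8s:9090. `route_matches` is a hypothetical helper that illustrates the rule's logic. It is not Traefik's implementation, and it assumes host matching is case-insensitive and ignores an optional port in the Host header.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of the IngressRoute rule
#   Host(`prom.oldboyedu.com`) && PathPrefix(`/`)
# Returns 0 when a request would be routed to prometheus-k8s:9090.
# Simplified illustration only; this is not Traefik's real matcher.
RULE_HOST="prom.oldboyedu.com"
RULE_PREFIX="/"

route_matches() {
  local host="${1,,}"   # lowercase: host names compare case-insensitively
  local path="$2"
  host="${host%%:*}"    # drop an optional ":port" suffix from the Host header
  [[ "$host" == "${RULE_HOST,,}" && "$path" == "$RULE_PREFIX"* ]]
}
```

For example, `route_matches prom.oldboyedu.com /targets` succeeds. A request sent to http://10.0.0.151/ carries the Host header `10.0.0.151`, so no router matches it and Traefik answers 404. This is why a hosts entry for prom.oldboyedu.com is needed.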

```bash
[root@master231 ingressroutes]# kubectl apply -f 19-ingressRoute-prometheus.yaml
ingressroute.traefik.io/ingressroute-prometheus created
```

3. Add a hosts entry on Windows

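Append this line to C:\Windows\System32\drivers\etc\hosts (10.0.0.151 is the EXTERNAL-IP of the traefik-server Service):

```bash
10.0.0.151 prom.oldboyedu.com
```

4. Test access in a browser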

```bash
http://prom.oldboyedu.com/targets?search=
```

5. Other options

- NodePort

- port-forward

- LoadBalancer

- Ingress

Case study: Prometheus monitoring a cloud-native application (etcd)

1. Test the etcd metrics endpoint

1.1 Find where the etcd certificates are stored

```bash
[root@master231 ~]# egrep "\--key-file|--cert-file|--trusted-ca-file" /etc/kubernetes/manifests/etcd.yaml
    - --cert-file=/etc/kubernetes/pki/etcd/server.crt
    - --key-file=/etc/kubernetes/pki/etcd/server.key
    - --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
```

1.2 Query the metrics endpoint with the etcd certificates

```bash
[root@master231 ~]# curl -s --cert /etc/kubernetes/pki/etcd/server.crt --key /etc/kubernetes/pki/etcd/server.key https://10.0.0.231:2379/metrics -k | tail
# TYPE process_virtual_memory_max_bytes gauge
process_virtual_memory_max_bytes 1.8446744073709552e+19
# HELP promhttp_metric_handler_requests_in_flight Current number of scrapes being served.
# TYPE promhttp_metric_handler_requests_in_flight gauge
promhttp_metric_handler_requests_in_flight 1
# HELP promhttp_metric_handler_requests_total Total number of scrapes by HTTP status code.
# TYPE promhttp_metric_handler_requests_total counter
promhttp_metric_handler_requests_total{code="200"} 0
promhttp_metric_handler_requests_total{code="500"} 0
promhttp_metric_handler_requests_total{code="503"} 0
```

2. Create a Secret for the etcd certificates and mount it into the Prometheus server

2.1 Find the paths of the etcd certificate files to mount

```bash
[root@master231 ~]# egrep "\--key-file|--cert-file|--trusted-ca-file" /etc/kubernetes/manifests/etcd.yaml
    - --cert-file=/etc/kubernetes/pki/etcd/server.crt
    - --key-file=/etc/kubernetes/pki/etcd/server.key
    - --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
```

2.2 Create the Secret from the actual etcd certificate paths

```bash
[root@master231 ~]# kubectl create secret generic etcd-tls --from-file=/etc/kubernetes/pki/etcd/server.crt --from-file=/etc/kubernetes/pki/etcd/server.key --from-file=/etc/kubernetes/pki/etcd/ca.crt -n monitoring
secret/etcd-tls created
[root@master231 ~]# kubectl -n monitoring get secrets etcd-tls
NAME TYPE DATA AGE
etcd-tls Opaque 3 12s
```

2.3 Edit the Prometheus resource (its Pods restart automatically after the change)

```bash
[root@master231 ~]# kubectl -n monitoring edit prometheus k8s
...
spec:
  secrets:
  - etcd-tls
...
[root@master231 yinzhengjie]# kubectl -n monitoring get pods -l app.kubernetes.io/component=prometheus -o wide
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
prometheus-k8s-0 2/2 Running 0 74s 10.100.1.57 worker232 <none> <none>
prometheus-k8s-1 2/2 Running 0 92s 10.100.2.28 worker233 <none> <none>
```

2.4 Check whether the certificates are mounted

```bash
[root@master231 yinzhengjie]# kubectl -n monitoring exec prometheus-k8s-0 -c prometheus -- ls -l /etc/prometheus/secrets/etcd-tls
total 0
lrwxrwxrwx 1 root 2000 13 Jan 24 14:07 ca.crt -> ..data/ca.crt
lrwxrwxrwx 1 root 2000 17 Jan 24 14:07 server.crt -> ..data/server.crt
lrwxrwxrwx 1 root 2000 17 Jan 24 14:07 server.key -> ..data/server.key
[root@master231 yinzhengjie]# kubectl -n monitoring exec prometheus-k8s-1 -c prometheus -- ls -l /etc/prometheus/secrets/etcd-tls
total 0
lrwxrwxrwx 1 root 2000 13 Jan 24 14:07 ca.crt -> ..data/ca.crt
lrwxrwxrwx 1 root 2000 17 Jan 24 14:07 server.crt -> ..data/server.crt
lrwxrwxrwx 1 root 2000 17 Jan 24 14:07 server.key -> ..data/server.key
```

3. Write the resource manifests

```yaml
# 01-smon-svc-etcd.yaml
apiVersion: v1
kind: Service
metadata:
  name: etcd-k8s
  namespace: kube-system
  labels:
    apps: etcd
spec:
  selector:
    # Pod selector: matches the etcd static Pod, which kubeadm labels component=etcd by default.
    # (It does not need to match this Service's own metadata.labels.)
    component: etcd
  ports:
  - name: https-metrics
    port: 2379
    targetPort: 2379
  type: ClusterIP
---
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: smon-etcd-oldboyedu
  namespace: monitoring
spec:
  # Name of a label on the target Service whose value becomes the job name. Optional:
  # etcd-k8s has no label with this key, so the job name falls back to the Service name.
  jobLabel: kubeadm-etcd-k8s-yinzhengjie
  # How to scrape the backend targets
  endpoints:
  # Scrape interval
  - interval: 3s
    # Metrics port; refers to the Service's spec.ports[].name
    port: https-metrics
    # Metrics endpoint path
    path: /metrics
    # Metrics endpoint scheme
    scheme: https
    # Certificates used to connect to etcd
    tlsConfig:
      # etcd CA certificate
      caFile: /etc/prometheus/secrets/etcd-tls/ca.crt
      # etcd certificate
      certFile: /etc/prometheus/secrets/etcd-tls/server.crt
      # etcd private key
      keyFile: /etc/prometheus/secrets/etcd-tls/server.key
      # Skip server certificate verification: these certificates come from the
      # cluster's self-built CA rather than a public CA.
      insecureSkipVerify: true
  # Namespaces in which to look for the target Service (cross-namespace lookup is supported)
  namespaceSelector:
    matchNames:
    - kube-system
  # Labels of the target Service
  selector:
    # Must match the labels (metadata.labels) of the etcd Service above
    matchLabels:
      apps: etcd
```

ServiceMonitor scrape flow:

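The ServiceMonitor selects Services by label. Each Service selects its Pods through its own selector, and the resulting Pod addresses are recorded in the Service's Endpoints. Prometheus discovers the endpoints of every Service matched by the ServiceMonitor and scrapes each Pod behind them, using the port, path, and scheme configured in the ServiceMonitor.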
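Both selection hops above rely on label selectors. The following is a minimal bash sketch of `matchLabels` semantics, provided for illustration. `labels_match` is a hypothetical helper that takes the selector and the object's labels as comma-separated `key=value` lists. A selector matches when every one of its pairs is present in the labels. Extra labels are ignored, and an empty selector matches everything.

```bash
#!/usr/bin/env bash
# labels_match "SELECTOR" "LABELS"
# Both arguments are comma-separated key=value lists. Returns 0 when every
# selector pair appears in LABELS (extra labels are ignored), like matchLabels.
labels_match() {
  local selector="$1" labels="$2" pair
  local IFS=','
  for pair in $selector; do
    # Surround both sides with commas so "apps=etcd" cannot match "apps=etcd2".
    [[ ",$labels," == *",$pair,"* ]] || return 1
  done
  return 0
}
```

With the etcd example, the ServiceMonitor selector `apps=etcd` matches the Service labels `apps=etcd`. The Service selector `component=etcd` matches the static Pod labels `component=etcd,tier=control-plane`. In contrast, `labels_match "apps=etcd" "component=etcd,tier=control-plane"` fails, which is why the ServiceMonitor must target the Service and not the Pod.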

```bash
[root@master231 servicemonitors]# kubectl apply -f 01-smon-svc-etcd.yaml
service/etcd-k8s created
servicemonitor.monitoring.coreos.com/smon-etcd-oldboyedu created
```

4. Query the data in Prometheus

bash

etcd_cluster_version5. Grafana导入模板

bash

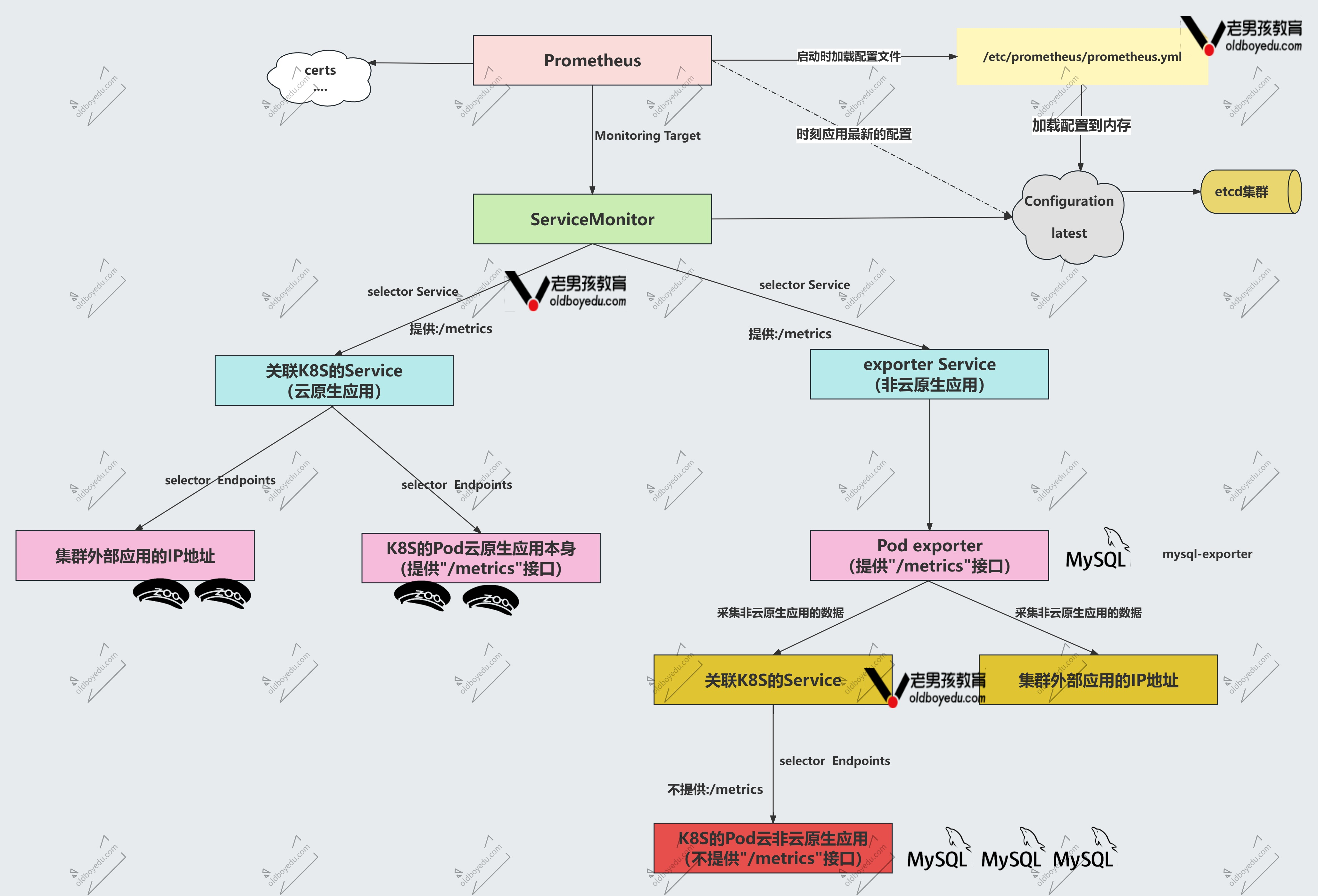

3070smon架构图解

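Cloud-native applications:

A ServiceMonitor cannot be attached to Pods directly. Instead, it selects the Service of the cloud-native application, which exposes /metrics itself.

Non-cloud-native applications:

The ServiceMonitor selects the exporter's Service, and that Service selects the exporter Pod (for example, mysqld_exporter). The exporter then connects to MySQL through MySQL's own K8S Service, which selects the MySQL Pod.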

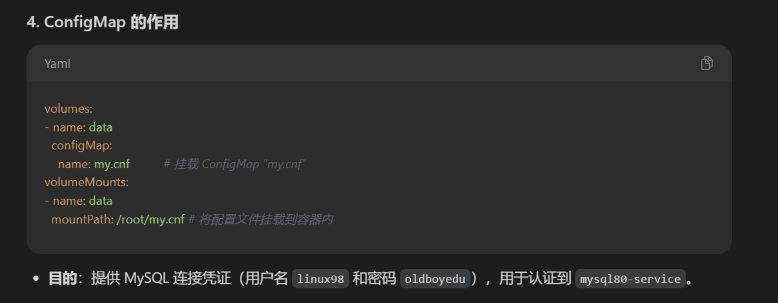
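In the non-cloud-native chain, the exporter reaches the application through the application's in-cluster DNS name `<service>.<namespace>.svc.<cluster-domain>`. The manifest below uses this form in `--mysqld.address`. The following minimal bash sketch builds that address. `svc_addr` is a hypothetical helper. Its default domain, `cluster.local`, is the kubeadm default, whereas this cluster uses `oldboyedu.com`.

```bash
#!/usr/bin/env bash
# svc_addr NAME [NAMESPACE] [CLUSTER_DOMAIN] [PORT]
# Prints the in-cluster DNS address <name>.<namespace>.svc.<cluster-domain>[:port].
svc_addr() {
  local name="$1" namespace="${2:-default}" domain="${3:-cluster.local}" port="${4:-}"
  local addr="${name}.${namespace}.svc.${domain}"
  if [[ -n "$port" ]]; then
    addr="${addr}:${port}"   # append the port only when one is given
  fi
  printf '%s\n' "$addr"
}
```

For example, `svc_addr mysql80-service default oldboyedu.com 3306` prints `mysql80-service.default.svc.oldboyedu.com:3306`.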

Case study: Prometheus monitoring a non-cloud-native application (MySQL)

1. Write the resource manifests

yaml

# 02-smon-mysqld.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: mysql80-deployment

spec:

replicas: 1

selector:

matchLabels:

apps: mysql80

template:

metadata:

labels:

apps: mysql80

spec:

containers:

- name: mysql

image: harbor250.oldboyedu.com/oldboyedu-db/mysql:8.0.36-oracle

ports:

- containerPort: 3306

env:

- name: MYSQL_ROOT_PASSWORD

value: yinzhengjie

- name: MYSQL_USER

value: linux98

- name: MYSQL_PASSWORD

value: "oldboyedu"

---

apiVersion: v1

kind: Service

metadata:

name: mysql80-service

spec:

selector:

apps: mysql80

ports:

- protocol: TCP

port: 3306

targetPort: 3306

---

apiVersion: v1

kind: ConfigMap

metadata:

name: my.cnf

data:

.my.cnf: |-

[client]

user = linux98

password = oldboyedu

[client.servers]

user = linux98

password = oldboyedu

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: mysql-exporter-deployment

spec:

replicas: 1

selector:

matchLabels:

apps: mysql-exporter

template:

metadata:

labels:

apps: mysql-exporter

spec:

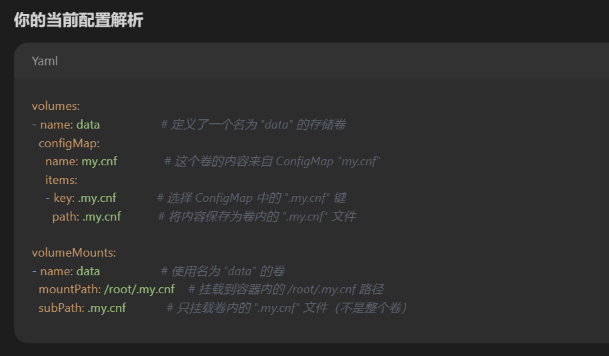

volumes:

- name: data # 卷名称

configMap:

name: my.cnf # 引用的 ConfigMap 名称

items:

- key: .my.cnf # ConfigMap 中的键名

path: .my.cnf #挂载到容器的文件名

containers:

- name: mysql-exporter

image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/mysqld-exporter:v0.15.1

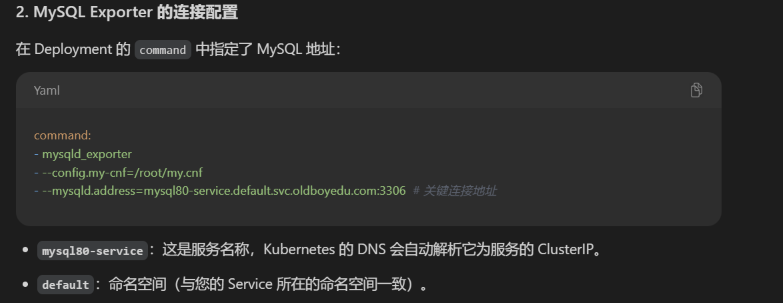

command:

- mysqld_exporter

- --config.my-cnf=/root/my.cnf

- --mysqld.address=mysql80-service.default.svc.oldboyedu.com:3306

securityContext:

runAsUser: 0

ports:

- containerPort: 9104

#env:

#- name: DATA_SOURCE_NAME

# value: mysql_exporter:yinzhengjie@(mysql80-service.default.svc.yinzhengjie.com:3306)

volumeMounts:

- name: data

mountPath: /root/my.cnf #将卷挂载到容器的 /root/my.cnf 文件

subPath: .my.cnf #仅挂载 data 卷中的 .my.cnf 文件(而不是整个卷)

---

apiVersion: v1

kind: Service

metadata:

name: mysql-exporter-service

labels:

apps: mysqld

spec:

selector:

apps: mysql-exporter

ports:

- protocol: TCP

port: 9104

targetPort: 9104 #是exporter的端口

name: mysql80

---

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: oldboyedu-mysql-smon

spec:

jobLabel: kubeadm-mysql-k8s-yinzhengjie

endpoints:

- interval: 3s

# 这里的端口可以写svc的端口号,也可以写svc的名称。

# 但我推荐写svc端口名称,这样svc就算修改了端口号,只要不修改svc端口的名称,那么我们此处就不用再次修改哟。

# port: 9104

port: mysql80

path: /metrics

scheme: http

namespaceSelector:

matchNames:

- default

selector:

matchLabels:

apps: mysqld

```bash
[root@master231 servicemonitors]# kubectl apply -f 02-smon-mysqld.yaml
deployment.apps/mysql80-deployment created
service/mysql80-service created
configmap/my.cnf created
deployment.apps/mysql-exporter-deployment created
service/mysql-exporter-service created
servicemonitor.monitoring.coreos.com/oldboyedu-mysql-smon created
[root@master231 servicemonitors]# kubectl get pods -o wide -l "apps in (mysql80,mysql-exporter)"
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
mysql-exporter-deployment-557cbcb6df-lb5s6 1/1 Running 0 29s 10.100.2.152 worker233 <none> <none>
mysql80-deployment-858d489dc-xt2hh 1/1 Running 0 29s 10.100.2.151 worker233 <none> <none>
```

2. Test access in Prometheus

```bash
mysql_up[30s]
```

3. Import Grafana dashboards

```bash
7362
14057
17320
```

Classroom exercise walkthrough: monitoring Redis with smon (ServiceMonitor)

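Classroom exercise:

- Deploy a Redis service in the k8s cluster

- Monitor the Redis service with an smon resource

- Visualize it in Grafana

1. Import the redis-exporter image and push it to the harbor registry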

```bash
[root@worker233 ~]# wget http://192.168.21.253/Resources/Prometheus/images/Redis-exporter/oldboyedu-redis_exporter-v1.74.0-alpine.tar.gz
[root@worker233 ~]# docker load -i oldboyedu-redis_exporter-v1.74.0-alpine.tar.gz
[root@worker233 ~]# docker tag oliver006/redis_exporter:v1.74.0-alpine harbor250.oldboyedu.com/oldboyedu-db/redis_exporter:v1.74.0-alpine
[root@worker233 ~]# docker push harbor250.oldboyedu.com/oldboyedu-db/redis_exporter:v1.74.0-alpine
```

2. Monitor the Redis service with smon

```yaml
# 03-smon-redis.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-redis
spec:
  replicas: 1
  selector:
    matchLabels:
      apps: redis
  template:
    metadata:
      labels:
        apps: redis
    spec:
      containers:
      - image: harbor250.oldboyedu.com/oldboyedu-db/redis:6.0.5
        name: db
        ports:
        - containerPort: 6379
---
apiVersion: v1
kind: Service
metadata:
  name: svc-redis
spec:
  ports:
  - port: 6379
  selector:
    apps: redis
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis-exporter-deployment
spec:
  replicas: 1
  selector:
    matchLabels:
      apps: redis-exporter
  template:
    metadata:
      labels:
        apps: redis-exporter
    spec:
      containers:
      - name: redis-exporter
        image: harbor250.oldboyedu.com/oldboyedu-db/redis_exporter:v1.74.0-alpine
        env:
        - name: REDIS_ADDR
          value: redis://svc-redis.default.svc:6379
        - name: REDIS_EXPORTER_WEB_TELEMETRY_PATH
          value: /metrics
        - name: REDIS_EXPORTER_WEB_LISTEN_ADDRESS
          value: ":9121"
        # Equivalent command-line form (each flag and its value form ONE argument):
        #command:
        #- redis_exporter
        #args:
        #- -redis.addr=redis://svc-redis.default.svc:6379
        #- -web.telemetry-path=/metrics
        #- -web.listen-address=:9121
        ports:
        - containerPort: 9121
---
apiVersion: v1
kind: Service
metadata:
  name: redis-exporter-service
  labels:
    apps: redis
spec:
  selector:
    apps: redis-exporter
  ports:
  - protocol: TCP
    port: 9121
    targetPort: 9121
    name: redis-exporter
---
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: oldboyedu-redis-smon
spec:
  endpoints:
  - interval: 3s
    port: redis-exporter
    path: /metrics
    scheme: http
  namespaceSelector:
    matchNames:
    - default
  selector:
    matchLabels:
      apps: redis
```

```bash
[root@master231 servicemonitors]# kubectl apply -f 03-smon-redis.yaml
deployment.apps/deploy-redis created
service/svc-redis created
deployment.apps/redis-exporter-deployment created
service/redis-exporter-service created
servicemonitor.monitoring.coreos.com/oldboyedu-redis-smon created
[root@master231 servicemonitors]# kubectl get pods -l "apps in (redis,redis-exporter)" -o wide
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
deploy-redis-5dd745fbb9-s4gs8 1/1 Running 0 19s 10.100.2.154 worker233 <none> <none>
redis-exporter-deployment-688cd77669-pr8nm 1/1 Running 0 19s 10.100.1.17 worker232 <none> <none>
```

3. Access the Prometheus WebUI

```bash
http://prom.oldboyedu.com/targets?search=
```

Test metric: redis_db_keys
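To check a metric from the command line before it reaches Prometheus, you can filter the exporter's `/metrics` text output. The following is a minimal bash/awk sketch. `metric_values` is a hypothetical helper that prints the label set and value of every sample of one metric. It skips `# HELP`/`# TYPE` lines and assumes label values contain no spaces, so it is not a full exposition-format parser.

```bash
#!/usr/bin/env bash
# metric_values NAME
# Reads Prometheus text exposition format on stdin and prints "<labels> <value>"
# for every sample of metric NAME ("{}" when the sample has no labels).
# Simplified sketch: assumes label values contain no spaces.
metric_values() {
  awk -v name="$1" '
    /^#/ { next }                         # skip "# HELP" and "# TYPE" lines
    NF >= 2 {
      metric = $1
      labels = "{}"
      i = index(metric, "{")
      if (i > 0) {                        # split "name{labels}" into its parts
        labels = substr(metric, i)
        metric = substr(metric, 1, i - 1)
      }
      if (metric == name) print labels, $2
    }'
}
```

For example, on the master you could run `curl -s 10.100.1.17:9121/metrics | metric_values redis_db_keys`, using the redis-exporter Pod IP from the output above. The same filter works on the etcd `curl ... /metrics` output shown earlier, e.g. with `etcd_cluster_version`.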

4. Import Grafana dashboards by ID

```bash
11835
14091
```

5. Verify the accuracy of the data

```bash
[root@master231 servicemonitors]# kubectl exec -it deploy/deploy-redis -- redis-cli -n 5 --raw
127.0.0.1:6379[5]> KEYS *
127.0.0.1:6379[5]> set school 老男孩教育
OK
127.0.0.1:6379[5]> set class Linux99
OK
127.0.0.1:6379[5]> KEYS *
school
class
127.0.0.1:6379[5]> get school
老男孩教育
127.0.0.1:6379[5]> get class
Linux99
```

After writing the keys, check Grafana again to confirm its data is accurate. Database 5 should now report 2 keys.
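To get a ground truth for that comparison, read the key counts directly from Redis with `INFO keyspace`, whose lines look like `db5:keys=2,expires=0,avg_ttl=0`. The following minimal bash sketch extracts the counts. `keyspace_keys` is a hypothetical helper. It strips the `\r` that redis-cli output carries, because INFO replies use CRLF line endings.

```bash
#!/usr/bin/env bash
# keyspace_keys
# Reads `redis-cli INFO keyspace` output on stdin and prints "dbN <keys>" per database.
keyspace_keys() {
  tr -d '\r' | awk -F'[:,=]' '/^db[0-9]+:/ { print $1, $3 }'   # $1=dbN, $3=keys value
}
```

For example, run `kubectl exec deploy/deploy-redis -- redis-cli INFO keyspace | keyspace_keys`. The count printed for `db5` should equal the `redis_db_keys` value Grafana shows for that database.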

Using a custom template and configuration file for Alertmanager

1. Reference directory

```bash
[root@master231 kube-prometheus-0.11.0]# pwd
/oldboyedu/manifests/add-ons/kube-prometheus-0.11.0
```

2. Reference file

```bash
[root@master231 kube-prometheus-0.11.0]# ll manifests/alertmanager-secret.yaml
-rw-rw-r-- 1 root root 1443 Jun 17 2022 manifests/alertmanager-secret.yaml
```

3. Referencing a ConfigMap from the Alertmanager resource (partial excerpt)

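```yaml
# kube-prometheus-0.11.0/manifests/alertmanager-alertmanager.yaml
apiVersion: monitoring.coreos.com/v1
kind: Alertmanager
metadata:
  ...
  name: main
  namespace: monitoring
spec:
  volumes:
  - name: data
    configMap:
      name: cm-alertmanager
      items:
      - key: oldboyedu.tmpl
        path: oldboyedu.tmpl
  volumeMounts:
  - name: data
    mountPath: /oldboyedu/softwares/alertmanager/tmpl
  image: quay.io/prometheus/alertmanager:v0.24.0
  ...
```

Approach: write the custom template into a ConfigMap and mount it into the Alertmanager container as a volume. For Alertmanager to load the template, its configuration (`alertmanager.yaml` in manifests/alertmanager-secret.yaml) must also list the mounted file under `templates:`, e.g. `/oldboyedu/softwares/alertmanager/tmpl/*.tmpl`.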

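Bonus:

The Prometheus resource definition

```bash
[root@master231 kube-prometheus-0.11.0]# ll manifests/prometheus-prometheus.yaml
-rw-rw-r-- 1 root root 1238 Jun 17 2022 manifests/prometheus-prometheus.yaml
```

Prometheus monitoring a custom application

1. Write the resource manifests (this is a cloud-native application: it exposes its own /metrics endpoint)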

```yaml
# 04-smon-golang-login.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-login
spec:
  replicas: 1
  selector:
    matchLabels:
      apps: login
  template:
    metadata:
      labels:
        apps: login
    spec:
      containers:
      - name: c1
        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:login
        resources:
          requests:
            memory: 100Mi
            cpu: 100m
          limits:
            cpu: 200m
            memory: 200Mi
        ports:
        - containerPort: 8080
          name: login-api
---
apiVersion: v1
kind: Service
metadata:
  name: svc-login
  labels:
    apps: login
spec:
  ports:
  - port: 8080
    targetPort: login-api
    name: login
  selector:
    apps: login
  type: ClusterIP
---
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: yinzhengjie-smon-login
spec:
  endpoints:
  - interval: 3s
    port: "login"
  namespaceSelector:
    matchNames:
    - default
  selector:
    matchLabels:
      apps: login
```

2. Test and verify

```bash
[root@master231 servicemonitors]# kubectl apply -f 04-smon-golang-login.yaml
deployment.apps/deploy-login created
service/svc-login created
servicemonitor.monitoring.coreos.com/yinzhengjie-smon-login created
[root@master231 servicemonitors]# kubectl get svc svc-login
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
svc-login ClusterIP 10.200.42.196 <none> 8080/TCP 6m41s
[root@master231 servicemonitors]# curl 10.200.42.196:8080/login
https://www.cnblogs.com/yinzhengjie
[root@master231 servicemonitors]# for i in `seq 10`; do curl 10.200.42.196:8080/login;done
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
https://www.cnblogs.com/yinzhengjie
[root@master231 servicemonitors]# curl -s 10.200.42.196:8080/metrics | grep yinzhengjie_application_login_api
# HELP yinzhengjie_application_login_api Count the number of visits to the /login interface
# TYPE yinzhengjie_application_login_api counter
yinzhengjie_application_login_api 11
```

3. Visualize in Grafana

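Related queries:

- `yinzhengjie_application_login_api`: total number of requests to the oldboyedu app.

- `increase(yinzhengjie_application_login_api[1m])`: number of requests during the last minute, plotted as a requests-per-minute curve. For per-second QPS, use `rate()` or divide by 60.

- `irate(yinzhengjie_application_login_api[1m])`: per-second request rate computed from the last two samples in the 1-minute window, plotted as an instantaneous rate curve.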
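The difference between the two functions is easiest to see on raw counter samples. Below is a simplified bash/awk sketch working on `timestamp value` lines, such as the login counter scraped every 3s. `counter_increase` sums the growth across the window and treats a drop in value as a counter reset. `counter_irate` uses only the last two samples. Both helpers are hypothetical. Prometheus's real `increase()` also extrapolates to the window boundaries, so its result can differ slightly and need not be an integer.

```bash
#!/usr/bin/env bash
# Input on stdin: one "timestamp_seconds counter_value" pair per line, oldest first.

# counter_increase: total growth over all samples; a drop means the counter
# was reset, so the new value itself counts as growth since the reset.
counter_increase() {
  awk 'NF < 2 { next }
       { v = $2 + 0 }
       NR > 1 { total += (v >= prev) ? v - prev : v }
       { prev = v }
       END { print total + 0 }'
}

# counter_irate: per-second rate from the last two samples only, like irate().
counter_irate() {
  awk 'NF < 2 { next }
       { pt = t; pv = v; t = $1 + 0; v = $2 + 0; n++ }
       END {
         if (n < 2) exit 1                   # need at least two samples
         d = (v >= pv) ? v - pv : v          # handle a reset between the two samples
         print d / (t - pt)
       }'
}
```

With the samples `0 1`, `3 1`, `6 5`, and `9 11`, `counter_increase` prints 10 and `counter_irate` prints 2 (6 requests in the last 3 seconds). The increase reflects the whole window, while the irate reacts only to the most recent scrape interval.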

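ExternalName Service example

1. ExternalName overview: mapping an external service into the cluster

An ExternalName Service maps a service outside the K8S cluster to a name inside the K8S cluster. The cluster DNS (CoreDNS) answers queries for the Service name with a CNAME record pointing to the value of `externalName`.

An ExternalName Service has no CLUSTER-IP and involves no proxying; it works purely at the DNS level.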

2. Hands-on example

2.1 Create the svc

```yaml
# 04-svc-ExternalName.yaml
apiVersion: v1
kind: Service
metadata:
  name: svc-blog
spec:
  type: ExternalName
  externalName: baidu.com
```

This means DNS lookups of svc-blog inside the cluster resolve, through a CNAME, to baidu.com.

```bash
[root@master231 services]# kubectl apply -f 04-svc-ExternalName.yaml
service/svc-blog created
[root@master231 services]# kubectl get svc svc-blog
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
svc-blog ExternalName <none> baidu.com <none> 7s
```

2.2 Test and verify

```bash
[root@master231 services]# kubectl get svc -n kube-system kube-dns
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
kube-dns ClusterIP 10.200.0.10 <none> 53/UDP,53/TCP,9153/TCP 14d
[root@master231 services]# kubectl run test-dns -it --rm --image=registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v1 -- sh
If you don't see a command prompt, try pressing enter.
/ # cat /etc/resolv.conf
nameserver 10.200.0.10
search default.svc.oldboyedu.com svc.oldboyedu.com oldboyedu.com
options ndots:5
/ # nslookup -type=a svc-blog
Server: 10.200.0.10
Address: 10.200.0.10:53

** server can't find svc-blog.oldboyedu.com: NXDOMAIN
** server can't find svc-blog.svc.oldboyedu.com: NXDOMAIN
svc-blog.default.svc.oldboyedu.com canonical name = baidu.com
Name: baidu.com
Address: 39.156.70.37
Name: baidu.com
Address: 220.181.7.203

/ # ping svc-blog -c 3
PING svc-blog (220.181.7.203): 56 data bytes
64 bytes from 220.181.7.203: seq=0 ttl=127 time=9.161 ms
64 bytes from 220.181.7.203: seq=1 ttl=127 time=8.774 ms
64 bytes from 220.181.7.203: seq=2 ttl=127 time=6.383 ms

--- svc-blog ping statistics ---
3 packets transmitted, 3 packets received, 0% packet loss
round-trip min/avg/max = 6.383/8.106/9.161 ms
Session ended, resume using 'kubectl attach test-dns -c test-dns -i -t' command when the pod is running
pod "test-dns" deleted
```

Tip:

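If the external service is not on the public internet but only on the company's internal network, public DNS cannot resolve its name. In that case, add an A record for it to CoreDNS (for example with CoreDNS's hosts plugin), or map it by IP with an Endpoints resource as shown in the next section.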
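The NXDOMAIN lines in the nslookup output above come from the resolver's search-list expansion. With `options ndots:5`, a name with fewer than 5 dots is tried with each `search` domain appended first, and only then as-is. The following minimal bash sketch lists the candidate names in that order. `resolv_candidates` is a hypothetical helper modelled on the standard resolver rule; it does not query DNS.

```bash
#!/usr/bin/env bash
# resolv_candidates NAME NDOTS SEARCH_DOMAIN...
# Prints the names a resolver would try for NAME, in order.
resolv_candidates() {
  local name="$1" ndots="$2" d
  shift 2
  if [[ "$name" == *. ]]; then          # trailing dot: fully qualified, no search list
    printf '%s\n' "${name%.}"
    return
  fi
  local dots="${name//[^.]/}"           # keep only the dots to count them
  if (( ${#dots} >= ndots )); then      # enough dots: try the name as-is first
    printf '%s\n' "$name"
    for d in "$@"; do printf '%s\n' "$name.$d"; done
  else                                  # too few dots: search domains first
    for d in "$@"; do printf '%s\n' "$name.$d"; done
    printf '%s\n' "$name"
  fi
}
```

`resolv_candidates svc-blog 5 default.svc.oldboyedu.com svc.oldboyedu.com oldboyedu.com` prints `svc-blog.default.svc.oldboyedu.com` first. That first name is the one CoreDNS answers with the CNAME to baidu.com.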

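Endpoints mapping for MySQL (important): the service runs outside K8S and is reached from inside the cluster

1. What are Endpoints

Endpoints (short name: ep): every Service type except ExternalName is associated with an Endpoints resource of the same name. For a Service with a selector, Kubernetes creates and updates this Endpoints resource automatically.

When a Service is deleted, the Endpoints resource with the same name is deleted automatically.

To map a service outside the K8S cluster, first create an Endpoints resource yourself, then create a Service with the same name and no selector.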
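Because the Endpoints/Service pair always follows the same shape, it can be generated. The following minimal bash sketch prints both objects for a given name, port, and list of external IPs. `ext_service` is a hypothetical helper, not a kubectl feature. It deliberately omits a selector so that Kubernetes leaves the hand-written Endpoints alone, and it leaves the port unnamed on both sides, so the ports match.

```bash
#!/usr/bin/env bash
# ext_service NAME PORT IP [IP...]
# Prints an Endpoints object plus a selector-less ClusterIP Service of the
# same name, mapping NAME:PORT to hosts outside the cluster.
ext_service() {
  local name="$1" port="$2" ip
  shift 2
  cat <<EOF
apiVersion: v1
kind: Endpoints
metadata:
  name: ${name}
subsets:
- addresses:
EOF
  for ip in "$@"; do
    printf '  - ip: %s\n' "$ip"          # one address entry per external host
  done
  cat <<EOF
  ports:
  - port: ${port}
---
apiVersion: v1
kind: Service
metadata:
  name: ${name}
spec:
  type: ClusterIP
  ports:
  - protocol: TCP
    port: ${port}
EOF
}
```

For example, `ext_service svc-db 3306 10.0.0.250 | kubectl apply -f -` would create the same svc-db mapping used in the WordPress example. Passing several IPs (for example `ext_service svc-kafka 9092 10.0.0.91 10.0.0.92 10.0.0.93`) lists them all under one subset.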

2. Verify that each svc is associated with an ep resource

2.1 Inspect the relationship between svc and ep

```bash
[root@master231 endpoints]# kubectl get svc
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
kubernetes ClusterIP 10.200.0.1 <none> 443/TCP 6d3h
svc-apple ClusterIP 10.200.178.42 <none> 80/TCP 27h
svc-blog ExternalName <none> cnblog.com <none> 13m
svc-login ClusterIP 10.200.205.119 <none> 8080/TCP 151m
svc-web01 ClusterIP 10.200.48.59 <none> 80/TCP 21h
svc-web02 ClusterIP 10.200.61.188 <none> 80/TCP 21h
svc-xiuxian ClusterIP 10.200.143.91 <none> 80/TCP 21h
svc-xiuxian-v1 ClusterIP 10.200.228.253 <none> 80/TCP 28h
svc-xiuxian-v2 ClusterIP 10.200.4.52 <none> 80/TCP 28h
svc-xiuxian-v3 ClusterIP 10.200.226.101 <none> 80/TCP 28h
zk-cs ClusterIP 10.200.230.57 <none> 2181/TCP 46h
zk-hs ClusterIP None <none> 2888/TCP,3888/TCP 46h
[root@master231 endpoints]# kubectl get ep
NAME ENDPOINTS AGE
kubernetes 10.0.0.231:6443 6d3h
svc-apple 10.100.1.2:80,10.100.1.254:80,10.100.2.134:80 27h
svc-login 10.100.2.156:8080 151m
svc-web01 10.100.2.143:81 21h
svc-web02 10.100.1.9:82 21h
svc-xiuxian 10.100.1.7:80 21h
svc-xiuxian-v1 10.100.2.135:80 28h
svc-xiuxian-v2 10.100.1.5:80 28h
svc-xiuxian-v3 10.100.2.138:80 28h
zk-cs <none> 46h
zk-hs <none> 46h
[root@master231 endpoints]# kubectl get svc | wc -l
13
[root@master231 endpoints]# kubectl get ep | wc -l
12
```

The Service list is one line longer than the Endpoints list (13 vs 12, header included) because svc-blog is an ExternalName Service and has no Endpoints.

2.2 Deleting a svc automatically deletes the ep resource with the same name

```bash
[root@master231 endpoints]# kubectl describe svc svc-login | grep Endpoints
Endpoints: 10.100.2.156:8080
[root@master231 endpoints]# kubectl describe ep svc-login
Name: svc-login
Namespace: default
Labels: apps=login
Annotations: endpoints.kubernetes.io/last-change-trigger-time: 2025-10-03T04:21:37Z
Subsets:
  Addresses: 10.100.2.156
  NotReadyAddresses: <none>
  Ports:
    Name Port Protocol
    ---- ---- --------
    login 8080 TCP

Events: <none>
[root@master231 endpoints]# kubectl get svc,ep svc-login
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/svc-login ClusterIP 10.200.205.119 <none> 8080/TCP 153m

NAME ENDPOINTS AGE
endpoints/svc-login 10.100.2.156:8080 153m
[root@master231 endpoints]# kubectl delete ep svc-login
endpoints "svc-login" deleted
[root@master231 endpoints]# kubectl get svc,ep svc-login
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/svc-login ClusterIP 10.200.205.119 <none> 8080/TCP 153m

NAME ENDPOINTS AGE
endpoints/svc-login 10.100.2.156:8080 5s
[root@master231 endpoints]# kubectl delete svc svc-login
service "svc-login" deleted
[root@master231 endpoints]# kubectl get svc,ep svc-login
Error from server (NotFound): services "svc-login" not found
Error from server (NotFound): endpoints "svc-login" not found
```

Deleting only the Endpoints has no lasting effect. Because svc-login has a selector, the endpoints controller recreates it immediately (note the AGE of 5s). Deleting the Service removes both.

3. Endpoints hands-on example

3.1 Deploy a MySQL database outside the K8S cluster

```bash
[root@harbor250 ~]# scp -r 10.0.0.231:/etc/docker/certs.d /etc/docker/
# Docker's certs.d directory holds the TLS certificates of private registries (Harbor, Nexus, etc.) so the Docker client can access them securely
[root@harbor250 ~]# echo 10.0.0.250 harbor250.oldboyedu.com >> /etc/hosts
[root@harbor250 ~]# docker run --network host -d --name mysql-server -e MYSQL_ALLOW_EMPTY_PASSWORD="yes" -e MYSQL_DATABASE=wordpress -e MYSQL_USER=linux99 -e MYSQL_PASSWORD=oldboyedu harbor250.oldboyedu.com/oldboyedu-db/mysql:8.0.36-oracle
[root@harbor250 ~]# docker ps -l
CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES
d0099ebc484e harbor250.oldboyedu.com/oldboyedu-db/mysql:8.0.36-oracle "docker-entrypoint.s..." About a minute ago Up About a minute mysql-server
[root@harbor250 ~]# ss -ntl | grep 3306
LISTEN 0 70 *:33060 *:*
LISTEN 0 151 *:3306 *:*
[root@harbor250 ~]# docker exec -it mysql-server mysql wordpress
Welcome to the MySQL monitor. Commands end with ; or \g.
Your MySQL connection id is 9
Server version: 8.0.36 MySQL Community Server - GPL

Copyright (c) 2000, 2024, Oracle and/or its affiliates.

Oracle is a registered trademark of Oracle Corporation and/or its
affiliates. Other names may be trademarks of their respective
owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> SHOW TABLES;
Empty set (0.00 sec)

mysql> SELECT DATABASE();
+------------+
| DATABASE() |
+------------+
| wordpress  |
+------------+
1 row in set (0.00 sec)

mysql>
```

3.2 Deploy WordPress inside the k8s cluster

```yaml
# 01-deploy-svc-ep-wordpress.yaml
apiVersion: v1
kind: Endpoints
metadata:
  name: svc-db
subsets:
- addresses:
  - ip: 10.0.0.250
  ports:
  - port: 3306
---
apiVersion: v1
kind: Service
metadata:
  name: svc-db
spec:
  type: ClusterIP
  ports:
  - protocol: TCP
    port: 3306
  # No selector: a selector matches Pods, and the backend here is not a Pod in K8S.
  # Without a selector, Kubernetes keeps the manually created svc-db Endpoints above.
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-wp
spec:
  replicas: 1
  selector:
    matchLabels:
      apps: wp
  template:
    metadata:
      labels:
        apps: wp
    spec:
      volumes:
      - name: data
        nfs:
          server: 10.0.0.231
          path: /yinzhengjie/data/nfs-server/casedemo/wordpress/wp
      containers:
      - name: wp
        image: harbor250.oldboyedu.com/oldboyedu-wp/wordpress:6.7.1-php8.1-apache
        env:
        - name: WORDPRESS_DB_HOST
          value: "svc-db"
        - name: WORDPRESS_DB_NAME
          value: "wordpress"
        - name: WORDPRESS_DB_USER
          value: linux99
        - name: WORDPRESS_DB_PASSWORD
          value: oldboyedu
        volumeMounts:
        - name: data
          mountPath: /var/www/html
---
apiVersion: v1
kind: Service
metadata:
  name: svc-wp
spec:
  type: LoadBalancer
  selector:
    apps: wp
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
    nodePort: 30090
```

```bash
[root@master231 endpoints]# kubectl apply -f 01-deploy-svc-ep-wordpress.yaml
endpoints/svc-db created
service/svc-db created
deployment.apps/deploy-wp created
service/svc-wp created
[root@master231 endpoints]# kubectl get svc svc-db svc-wp -o wide
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
svc-db ClusterIP 10.200.54.170 <none> 3306/TCP 26s <none>
svc-wp LoadBalancer 10.200.65.2 10.0.0.153 80:30090/TCP 26s apps=wp
[root@master231 endpoints]# kubectl get pods -o wide -l apps=wp
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
deploy-wp-67bf79f8df-ktxl2 1/1 Running 0 22s 10.100.2.159 worker233 <none> <none>
```

3.3 Access test

```bash
http://10.0.0.231:30090/
http://10.0.0.153/
```

3.4 Initialize WordPress

Omitted; see the video.

3.5 Check whether the database now contains data

```bash
[root@harbor250 ~]# docker exec -it mysql-server mysql -e "SHOW TABLES FROM wordpress"
+-----------------------+
| Tables_in_wordpress   |
+-----------------------+
| wp_commentmeta        |
| wp_comments           |
| wp_links              |
| wp_options            |
| wp_postmeta           |
| wp_posts              |
| wp_term_relationships |
| wp_term_taxonomy      |
| wp_termmeta           |
| wp_terms              |
| wp_usermeta           |
| wp_users              |
+-----------------------+
```

Project: analyzing K8S cluster logs with an EFK architecture


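1. Log collection approaches in K8S

Sidecar mode:

An additional container, called the sidecar, is added next to the existing container in the Pod. The sidecar can take on log collection, monitoring, traffic proxying, and similar functions.

Pros:

- Adds new functionality without changing the existing architecture.

Cons:

- Relatively resource-hungry, since every Pod gets an extra container.

- Getting K8S Pod metadata is relatively cumbersome and requires extra development.

DaemonSet (ds) mode:

Exactly one collector Pod runs on each worker node.

Pros:

- Uses fewer resources than the sidecar mode.

Cons:

- Requires understanding the K8S RBAC authorization system, because the collector needs API permissions to read Pod metadata.

Built into the product:

Simply put, the developers implement whatever is missing inside the application itself.

Pros:

- Easy for the operations team; no extra components need to be installed.

Cons:

- Slow to push through, since most developers work on business features. Otherwise, a dedicated DevOps developer is needed.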

Integrating the Elastic Stack with the K8S cluster

1. Prepare the ES cluster environment

```bash
[root@elk91 ~]# curl -k -u elastic:123456 https://10.0.0.91:9200/_cat/nodes
10.0.0.92 81 50 1 0.19 0.14 0.12 cdfhilmrstw - elk92
10.0.0.91 83 66 4 0.04 0.13 0.17 cdfhilmrstw - elk91
10.0.0.93 78 53 1 0.34 0.18 0.15 cdfhilmrstw * elk93
```

2. Verify the Kibana environment

```bash
http://10.0.0.91:5601/
```

3. Start the ZooKeeper cluster

```bash
[root@elk91 ~]# zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
[root@elk91 ~]# zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Client port found: 2181. Client address: localhost. Client SSL: false.
Mode: leader

[root@elk92 ~]# zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
[root@elk92 ~]# zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Client port found: 2181. Client address: localhost. Client SSL: false.
Mode: follower

[root@elk93 ~]# zkServer.sh start
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Starting zookeeper ... STARTED
[root@elk93 ~]# zkServer.sh status
ZooKeeper JMX enabled by default
Using config: /usr/local/apache-zookeeper-3.8.4-bin/bin/../conf/zoo.cfg
Client port found: 2181. Client address: localhost. Client SSL: false.
Mode: follower
```

4. Start the Kafka cluster
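Each broker below is checked with `ss -ntl | grep 9092`, which would also match a port such as 19092. The sketch below is slightly stricter: it matches the port exactly at the end of the local-address column, which is the 4th field of `ss -ntl` output. `port_listening` is a hypothetical helper.

```bash
# Succeed if `ss -ntl` output (stdin) contains a LISTEN socket whose local
# address (4th field) ends in exactly ":PORT".
port_listening() {
  awk -v p=":$1" '
    $1 == "LISTEN" && substr($4, length($4) - length(p) + 1) == p { found = 1 }
    END { exit !found }'
}

# Usage on each broker host:
# ss -ntl | port_listening 9092 && echo "kafka is listening on 9092"
```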

```bash
[root@elk91 ~]# kafka-server-start.sh -daemon $KAFKA_HOME/config/server.properties
[root@elk91 ~]# ss -ntl | grep 9092
LISTEN 0 50 [::ffff:10.0.0.91]:9092 *:*

[root@elk92 ~]# kafka-server-start.sh -daemon $KAFKA_HOME/config/server.properties
[root@elk92 ~]# ss -ntl | grep 9092
LISTEN 0 50 [::ffff:10.0.0.92]:9092 *:*

[root@elk93 ~]# kafka-server-start.sh -daemon $KAFKA_HOME/config/server.properties
[root@elk93 ~]# ss -ntl | grep 9092
LISTEN 0 50 [::ffff:10.0.0.93]:9092 *:*
```

5. Check the ZooKeeper information
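Each broker registers an ephemeral znode under `<chroot>/brokers/ids`. The `[91, 92, 93]` result below therefore means that all three brokers (IDs 91, 92 and 93) are online. The sketch below turns that list into one ID per line and compares it with the expected set. The helper names are hypothetical.

```bash
# Turn a ZooKeeper `ls` result such as "[91, 92, 93]" into one ID per line.
zk_ls_ids() {
  sed 's/[][ ]//g' | tr ',' '\n' | sed '/^$/d'
}

# Succeed if the IDs on stdin equal the expected IDs given as arguments
# (order-insensitive).
brokers_match() {
  [ "$(zk_ls_ids | sort | tr '\n' ' ')" = "$(printf '%s\n' "$@" | sort | tr '\n' ' ')" ]
}

# Usage sketch (assumes zkCli.sh runs a trailing command non-interactively,
# and that the result is the only output line starting with "["):
# zkCli.sh -server 10.0.0.91:2181 ls /oldboyedu-linux99-kafka39/brokers/ids 2>/dev/null \
#   | grep '^\[' | brokers_match 91 92 93 && echo "all brokers registered"
```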

```bash
[root@elk93 ~]# zkCli.sh -server 10.0.0.91:2181,10.0.0.92:2181,10.0.0.93:2181
Connecting to 10.0.0.91:2181,10.0.0.92:2181,10.0.0.93:2181
...
WATCHER::

WatchedEvent state:SyncConnected type:None path:null
[zk: 10.0.0.91:2181,10.0.0.92:2181,10.0.0.93:2181(CONNECTED) 0] ls /oldboyedu-linux99-kafka39/brokers/ids
[91, 92, 93]
```

Sidecar mode (rarely used)

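6. Write the resource manifest: Filebeat ships the Pod logs to the Kafka cluster (sidecar mode)

The Kafka cluster runs outside K8S, so an Endpoints object is used to point the `svc-kafka` Service at the brokers.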

```yaml
# 01-sidecar-cm-ep-filebeat.yaml (difficult; study it carefully)
# Endpoints: the real addresses of the Kafka brokers outside the cluster
apiVersion: v1
kind: Endpoints
metadata:
  name: svc-kafka
subsets:
- addresses:
  - ip: 10.0.0.91
  - ip: 10.0.0.92
  - ip: 10.0.0.93
  ports:
  - port: 9092
---
# Selector-less Service with the same name as the Endpoints object,
# so Pods can reach the brokers as svc-kafka:9092
apiVersion: v1
kind: Service
metadata:
  name: svc-kafka
spec:
  type: ClusterIP
  ports:
  - protocol: TCP
    port: 9092
---
# Filebeat configuration: "main" is the main config file, "nginx.yml" enables the nginx module
apiVersion: v1
kind: ConfigMap
metadata:
  name: cm-filebeat
data:
  main: |
    filebeat.config.modules:
      path: ${path.config}/modules.d/*.yml
      reload.enabled: true
    output.kafka:
      # svc-kafka is only used to bootstrap; Kafka then returns the brokers'
      # advertised addresses, which the Pods must also be able to reach
      hosts:
      - svc-kafka:9092
      topic: "oldboyedu-linux99-k8s-external-kafka"
  nginx.yml: |
    - module: nginx
      access:
        enabled: true
        var.paths: ["/data/access.log"]
      error:
        enabled: false
        var.paths: ["/data/error.log"]
      ingress_controller:
        enabled: false
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-xiuxian
spec:
  replicas: 3
  selector:
    matchLabels:
      apps: elasticstack
      version: v3
  template:
    metadata:
      labels:
        apps: elasticstack
        version: v3
    spec:
      volumes:
      - name: dt                  # the node's time zone
        hostPath:
          path: /etc/localtime
      - name: data                # shared by nginx (writes logs) and filebeat (reads them)
        emptyDir: {}
      - name: main                # the two ConfigMap keys, exposed as files
        configMap:
          name: cm-filebeat
          items:
          - key: main
            path: modules-to-es.yaml
          - key: nginx.yml
            path: nginx.yml
      containers:
      - name: c1                  # business container: nginx logs go into the shared volume
        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v1
        volumeMounts:
        - name: data
          mountPath: /var/log/nginx
        - name: dt
          mountPath: /etc/localtime
      - name: c2                  # sidecar: filebeat reads /data/access.log
        image: harbor250.oldboyedu.com/oldboyedu-elasticstack/filebeat:7.17.25
        volumeMounts:
        - name: dt
          mountPath: /etc/localtime
        - name: data
          mountPath: /data
        - name: main
          mountPath: /config/modules-to-es.yaml
          subPath: modules-to-es.yaml
        - name: main
          mountPath: /usr/share/filebeat/modules.d/nginx.yml
          subPath: nginx.yml
        command:
        - /bin/bash
        - -c
        - "filebeat -e -c /config/modules-to-es.yaml --path.data /tmp/xixi"
```
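The Endpoints + Service pair at the top of this manifest is the general way to give an out-of-cluster service a stable in-cluster name. It consists of a Service without a selector plus an Endpoints object with the same name that lists the real addresses. The sketch below renders that pair for any name, port and IP list, so the pattern can be reused for other external services. `external_svc` is a hypothetical helper, and it uses plain shell globals rather than `local`.

```bash
# Render a selector-less Service plus a same-named Endpoints object that
# points at addresses outside the cluster.
# Usage: external_svc NAME PORT IP [IP...]
external_svc() {
  name=$1 port=$2
  shift 2
  printf 'apiVersion: v1\nkind: Endpoints\nmetadata:\n  name: %s\nsubsets:\n- addresses:\n' "$name"
  for ip in "$@"; do
    printf '  - ip: %s\n' "$ip"
  done
  printf '  ports:\n  - port: %s\n---\n' "$port"
  printf 'apiVersion: v1\nkind: Service\nmetadata:\n  name: %s\nspec:\n  type: ClusterIP\n  ports:\n  - protocol: TCP\n    port: %s\n' "$name" "$port"
}

# Example (pipe straight into kubectl):
# external_svc svc-kafka 9092 10.0.0.91 10.0.0.92 10.0.0.93 | kubectl apply -f -
```

Because the Service has no selector, Kubernetes does not manage its endpoints. The hand-written Endpoints object is linked to it only by sharing its name, with a matching port (unnamed on both sides here).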

```bash
[root@master231 elasticstack]# kubectl apply -f 01-sidecar-cm-ep-filebeat.yaml
endpoints/svc-kafka created
service/svc-kafka created
configmap/cm-filebeat created
deployment.apps/deploy-xiuxian created
[root@master231 elasticstack]# kubectl get pods -o wide -l version=v3
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
deploy-xiuxian-59c585c878-hd9lm 2/2 Running 0 8s 10.100.2.163 worker233 <none> <none>
deploy-xiuxian-59c585c878-pdnc6 2/2 Running 0 8s 10.100.2.162 worker233 <none> <none>
deploy-xiuxian-59c585c878-wp9r7 2/2 Running 0 8s 10.100.1.19 worker232 <none> <none>
```

7. Access the business Pod to generate logs
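Before generating traffic, confirm that every Pod listed above reports `2/2` in the READY column. That means both the nginx container (c1) and the filebeat sidecar (c2) are up. The sketch below checks this from `kubectl get pods` output. The helper name is hypothetical. It assumes READY and STATUS are the 2nd and 3rd columns, as above.

```bash
# Succeed only if every Pod row has all containers ready ("n/n") and is Running.
# The header row (first field "NAME") is skipped.
all_pods_ready() {
  awk '$1 == "NAME" { next }
       { split($2, r, "/"); if (r[1] != r[2] || $3 != "Running") bad = 1 }
       END { exit bad + 0 }'
}

# Usage:
# kubectl get pods -l version=v3 | all_pods_ready && echo "all sidecars up"
```

`kubectl wait --for=condition=Ready pod -l version=v3 --timeout=60s` is the built-in way to block until the same Pods are ready.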

```bash
[root@master231 services]# curl 10.100.2.163
<!DOCTYPE html>
<html>
<head>
<meta charset="utf-8"/>
<title>yinzhengjie apps v1</title>
<style>
div img {
width: 900px;
height: 600px;
margin: 0;
}
</style>
</head>
<body>
<h1 style="color: green">凡人修仙传 v1 </h1>
<div>
<img src="1.jpg">
<div>
</body>
</html>
[root@master231 services]# curl 10.100.2.163/oldboyedu.html
<html>
<head><title>404 Not Found</title></head>
<body>
<center><h1>404 Not Found</h1></center>
<hr><center>nginx/1.20.1</center>
</body>
</html>
```

8. Verify the data in Kafka
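The `--list | grep kafka` check below matches any topic whose name merely contains "kafka". The sketch below succeeds only if the exact topic that Filebeat writes to exists. `topic_exists` is a hypothetical helper.

```bash
# Succeed if the exact topic name $1 appears in `kafka-topics.sh --list` output (stdin).
topic_exists() {
  grep -qxF -- "$1"
}

# Usage:
# kafka-topics.sh --bootstrap-server 10.0.0.93:9092 --list \
#   | topic_exists oldboyedu-linux99-k8s-external-kafka && echo "topic present"
```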

```bash
[root@elk92 ~]# kafka-topics.sh --bootstrap-server 10.0.0.93:9092 --list | grep kafka
oldboyedu-linux99-k8s-external-kafka
[root@elk92 ~]# kafka-console-consumer.sh --bootstrap-server 10.0.0.93:9092 --topic oldboyedu-linux99-k8s-external-kafka --from-beginning
```

9. Logstash consumes and parses the data, then writes it to the ES cluster
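The pipeline below parses each nginx line with grok's `%{HTTPD_COMMONLOG}` pattern. With the legacy (non-ECS) field names, which match the `clientip` and `timestamp` references in the config, that pattern yields clientip, timestamp, verb, request, response and bytes, among others. The awk sketch below approximates those fields so you can see what grok should extract from a line. It is not a grok implementation: it assumes a well-formed, space-separated access-log line. `parse_common_log` is a hypothetical helper.

```bash
# Rough awk approximation of the fields grok's %{HTTPD_COMMONLOG} extracts
# (legacy names): clientip, timestamp, verb, request, response, bytes.
# Assumes the standard space-separated layout and a well-formed request line.
parse_common_log() {
  awk '{
    ts = $4 " " $5                       # "[11/Mar/2025:10:00:00" "+0800]"
    sub(/^\[/, "", ts); sub(/\]$/, "", ts)
    verb = $6; sub(/^"/, "", verb)       # strip the opening quote of the request
    printf "clientip=%s timestamp=%s verb=%s request=%s response=%s bytes=%s\n", $1, ts, verb, $7, $9, $10
  }'
}

# Usage against the sidecar's shared log file:
# kubectl exec deploy/deploy-xiuxian -c c2 -- tail -n 5 /data/access.log | parse_common_log
```

The `date` filter pattern `dd/MMM/yyyy:HH:mm:ss Z` in the pipeline corresponds to the `timestamp` value printed here, for example `11/Mar/2025:10:00:00 +0800`.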

```bash
[root@elk93 ~]# cat /etc/logstash/conf.d/11-kafka_k8s-to-es.conf
input {
  kafka {
    bootstrap_servers => "10.0.0.91:9092,10.0.0.92:9092,10.0.0.93:9092"
    group_id => "k8s-006"
    topics => ["oldboyedu-linux99-k8s-external-kafka"]
    auto_offset_reset => "earliest"
  }
}

filter {
  # The Kafka message is Filebeat's JSON event; this restores its fields,
  # so "message" becomes the raw nginx log line
  json {
    source => "message"
  }

  mutate {
    remove_field => [ "tags","input","agent","@version","ecs" , "log", "host"]
  }

  grok {
    match => {
      "message" => "%{HTTPD_COMMONLOG}"
    }
  }

  geoip {
    source => "clientip"
    database => "/root/GeoLite2-City_20250311/GeoLite2-City.mmdb"
    default_database_type => "City"
  }

  # Use the access-log time as the event's @timestamp
  date {
    match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]
  }

  useragent {
    source => "message"
    target => "oldboyedu-linux99-useragent"
  }
}

output {
  # stdout {
  #   codec => rubydebug
  # }

  elasticsearch {
    hosts => ["https://10.0.0.91:9200","https://10.0.0.92:9200","https://10.0.0.93:9200"]
    index => "oldboyedu-logstash-kafka-k8s-%{+YYYY.MM.dd}"
    api_key => "a-g-qZkB4BpGEtwMU0Mu:Wy9ivXwfQgKbUSPLY-YUhg"
    ssl => true
    ssl_certificate_verification => false
  }
}
[root@elk93 ~]# logstash -rf /etc/logstash/conf.d/11-kafka_k8s-to-es.conf
```

10. Visualize the data in Kibana

Collecting K8S Pod logs in DaemonSet (ds) mode (extremely important)

1. Delete the environment from the previous step

```bash
[root@master231 elasticstack]# kubectl delete -f 01-sidecar-cm-ep-filebeat.yaml
endpoints "svc-kafka" deleted
service "svc-kafka" deleted
configmap "cm-filebeat" deleted
deployment.apps "deploy-xiuxian" deleted
```

2. Write the resource manifest
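The DaemonSet in the manifest below never touches the application Pods. With Docker's default json-file log driver, each container's stdout/stderr lands on the node at `/var/lib/docker/containers/<id>/<id>-json.log`. That is why the manifest mounts `/var/lib/docker/containers` read-only from the host. This only applies to nodes running Docker; CRI runtimes such as containerd have their logs written under `/var/log/pods` instead. The sketch below maps the `containerID` reported by Kubernetes (`docker://<id>`) to that file. `docker_log_path` and `POD_NAME` are hypothetical, and the sketch assumes Docker's default data root.

```bash
# Map a Kubernetes containerID (e.g. "docker://<id>") to the json-file log
# that Docker writes on the node. Assumes the default data root /var/lib/docker
# and the json-file log driver.
docker_log_path() {
  id=${1#docker://}
  printf '/var/lib/docker/containers/%s/%s-json.log\n' "$id" "$id"
}

# Usage (POD_NAME is a placeholder; inspect the file on the Pod's node):
# cid=$(kubectl get pod POD_NAME -o jsonpath='{.status.containerStatuses[0].containerID}')
# docker_log_path "$cid"
```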

```yaml
# 02-ds-cm-ep-filebeat.yaml
# 1. Define the access endpoint for the external Kafka service (Endpoints + Service)
apiVersion: v1
kind: Endpoints
metadata:
  name: svc-kafka  # Kafka runs outside the cluster, hence a manually defined Endpoints object
subsets:
- addresses:
  - ip: 10.0.0.91
  - ip: 10.0.0.92
  - ip: 10.0.0.93
  ports:
  - port: 9092
---
apiVersion: v1
kind: Service
metadata:
  name: svc-kafka
spec:
  type: ClusterIP
  ports:
  - protocol: TCP
    port: 9092
---
# Deploy the sample application
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-xiuxian
spec:
  replicas: 3
  selector:
    matchLabels:
      apps: elasticstack-xiuxian
  template:
    metadata:
      labels:
        apps: elasticstack-xiuxian
    spec:
      volumes:
      - name: dt
        hostPath:
          path: /etc/localtime
      containers:
      - name: c1
        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v1
        volumeMounts:
        - name: dt
          mountPath: /etc/localtime
---
# Configure RBAC permissions for Filebeat
apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
- kind: ServiceAccount
  name: filebeat
  namespace: default
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: filebeat
  labels:
    k8s-app: filebeat
rules:
- apiGroups: [""]
  resources:
  - namespaces
  - pods
  - nodes
  verbs:
  - get
  - watch
  - list
---
# Core Filebeat settings (ConfigMap)
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
data:
  filebeat.yml: |-
    filebeat.config:
      inputs:
        path: ${path.config}/inputs.d/*.yml
        reload.enabled: true
      modules:
        path: ${path.config}/modules.d/*.yml
        reload.enabled: true
    output.kafka:
      hosts:
      - svc-kafka:9092
      topic: "oldboyedu-linux99-k8s-external-kafka-ds"
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-inputs
data:
  kubernetes.yml: |-
    - type: docker
      containers.ids:
      - "*"
      processors:  # collect the logs of all Docker containers and add Kubernetes metadata (Pod name, namespace, etc.)
      - add_kubernetes_metadata:
          in_cluster: true
---
# Purpose: run one Filebeat Pod on every node to collect the logs of all containers on that node
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat
spec:
  selector:
    matchLabels:
      k8s-app: filebeat
  template:
    metadata:
      labels:
        k8s-app: filebeat
    spec:
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule
        operator: Exists
      serviceAccountName: filebeat
      terminationGracePeriodSeconds: 30
      containers:
      - name: filebeat
        image: harbor250.oldboyedu.com/oldboyedu-elasticstack/filebeat:7.17.25
        args: [
          "-c", "/etc/filebeat.yml",
          "-e",
        ]
        securityContext:
          runAsUser: 0
        resources:
          limits:
            memory: 200Mi
          requests:
            cpu: 100m
            memory: 100Mi
        volumeMounts:
        - name: config
          mountPath: /etc/filebeat.yml  # path inside the container
          readOnly: true
          subPath: filebeat.yml  # mount only the filebeat.yml key of the ConfigMap
        - name: inputs
          mountPath: /usr/share/filebeat/inputs.d  # every key of ConfigMap filebeat-inputs is mounted into this directory
          readOnly: true
        - name: varlibdockercontainers
          mountPath: /var/lib/docker/containers  # the host's Docker container log directory, mounted directly
          readOnly: true
      volumes:
      - name: config  # referenced by the volumeMount of the same name
        configMap:
          defaultMode: 0600
          name: filebeat-config  # the ConfigMap that holds filebeat.yml
      - name: varlibdockercontainers
        hostPath:
          path: /var/lib/docker/containers
      - name: inputs
        configMap:
          defaultMode: 0600
          name: filebeat-inputs
```

3. Create the resources

Apply the manifest with `kubectl apply -f 02-ds-cm-ep-filebeat.yaml`, then check that the filebeat and application Pods are running.
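A DaemonSet should end up with exactly one filebeat Pod per node. Here that means three nodes and three Pods, including master231, because of the toleration. The Deployment's replicas, by contrast, may share a node: two of them land on worker233 in the listing below. The sketch below performs that check and assumes node names are fed one per line. `duplicate_nodes` and `one_per_node` are hypothetical helpers.

```bash
# Print every node that hosts more than one of the given Pods.
# Input: one node name per line. Empty output = at most one Pod per node.
duplicate_nodes() {
  sort | uniq -d
}

# Succeed if the Pods' node list (stdin) covers every node named in "$@" exactly once.
one_per_node() {
  [ "$(sort | tr '\n' ' ')" = "$(printf '%s\n' "$@" | sort | tr '\n' ' ')" ]
}

# Usage (standard kubectl flags):
# kubectl get pods -l k8s-app=filebeat --no-headers -o custom-columns=NODE:.spec.nodeName \
#   | one_per_node $(kubectl get nodes --no-headers -o custom-columns=NAME:.metadata.name)
```

`kubectl get ds filebeat` gives the same answer in summary form: DESIRED, CURRENT and READY should all equal the number of schedulable nodes.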

```bash
[root@master231 elasticstack]# kubectl get pods -o wide -l k8s-app=filebeat
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
filebeat-8dnxs 1/1 Running 0 56s 10.100.0.4 master231 <none> <none>
filebeat-mdcd2 1/1 Running 0 56s 10.100.2.166 worker233 <none> <none>
filebeat-z4jdr 1/1 Running 0 56s 10.100.1.21 worker232 <none> <none>
[root@master231 elasticstack]# kubectl get pods -o wide -l apps=elasticstack-xiuxian
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
deploy-xiuxian-74d9748b99-2rp8v 1/1 Running 0 83s 10.100.1.20 worker232 <none> <none>
deploy-xiuxian-74d9748b99-mdpnf 1/1 Running 0 83s 10.100.2.165 worker233 <none> <none>
deploy-xiuxian-74d9748b99-psnk7 1/1 Running 0 83s 10.100.2.164 worker233 <none> <none>
```

4. Verify in Kafka

```bash
[root@elk92 ~]# kafka-topics.sh --bootstrap-server 10.0.0.93:9092 --list | grep ds
oldboyedu-linux99-k8s-external-kafka-ds
[root@elk92 ~]# kafka-console-consumer.sh --bootstrap-server 10.0.0.93:9092 --topic oldboyedu-linux99-k8s-external-kafka-ds --from-beginning
```

5. Logstash writes the data to the ES cluster
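The pipeline below reuses the filter chain from the sidecar case. This topic, however, receives the stdout/stderr of every container on every node, because the input uses `containers.ids: "*"`. Many messages are therefore not nginx access lines, and grok tags those events `_grokparsefailure` by default. The sketch below estimates how many lines in a sample look like Common Log Format. Its extended regex only approximates the format, and `count_common_log` is a hypothetical helper.

```bash
# Count lines (stdin) that look like Common Log Format:
# IP ident auth [timestamp] "request" status bytes
count_common_log() {
  grep -cE '^[0-9.]+ [^ ]+ [^ ]+ \[[^]]+\] "[^"]*" [0-9]{3} ([0-9]+|-)'
}

# Usage sketch (assumes jq is installed; each Kafka message is Filebeat's JSON
# event, whose "message" field holds the original log line):
# kafka-console-consumer.sh --bootstrap-server 10.0.0.93:9092 \
#   --topic oldboyedu-linux99-k8s-external-kafka-ds --from-beginning --max-messages 100 \
#   | jq -r .message | count_common_log
```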

```bash
[root@elk93 ~]# cat /etc/logstash/conf.d/12-kafka_k8s_ds-to-es.conf
input {
  kafka {
    bootstrap_servers => "10.0.0.91:9092,10.0.0.92:9092,10.0.0.93:9092"
    group_id => "k8s-001"
    topics => ["oldboyedu-linux99-k8s-external-kafka-ds"]
    auto_offset_reset => "earliest"
  }
}

filter {
  json {
    source => "message"
  }

  mutate {
    remove_field => [ "tags","input","agent","@version","ecs" , "log", "host"]
  }

  grok {
    match => {
      "message" => "%{HTTPD_COMMONLOG}"
    }
  }

  geoip {
    source => "clientip"
    database => "/root/GeoLite2-City_20250311/GeoLite2-City.mmdb"
    default_database_type => "City"
  }

  date {
    match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]
  }

  useragent {
    source => "message"
    target => "oldboyedu-linux99-useragent"
  }
}

output {
  # stdout {
  #   codec => rubydebug
  # }

  elasticsearch {
    hosts => ["https://10.0.0.91:9200","https://10.0.0.92:9200","https://10.0.0.93:9200"]
    index => "oldboyedu-logstash-kafka-k8s-ds-%{+YYYY.MM.dd}"
    api_key => "a-g-qZkB4BpGEtwMU0Mu:Wy9ivXwfQgKbUSPLY-YUhg"
    ssl => true
    ssl_certificate_verification => false
  }
}
[root@elk93 ~]# logstash -rf /etc/logstash/conf.d/12-kafka_k8s_ds-to-es.conf
```

6. Query the data in Kibana and verify

6.1 Access test

```bash
[root@master231 elasticstack]# curl 10.100.1.20
<!DOCTYPE html>
<html>
<head>
<meta charset="utf-8"/>
<title>yinzhengjie apps v1</title>
<style>
div img {
width: 900px;
height: 600px;
margin: 0;
}
</style>
</head>
<body>
<h1 style="color: green">凡人修仙传 v1 </h1>
<div>
<img src="1.jpg">
<div>
</body>
</html>
[root@master231 elasticstack]# curl 10.100.1.20/oldboyedu.html
<html>
<head><title>404 Not Found</title></head>
<body>
<center><h1>404 Not Found</h1></center>
<hr><center>nginx/1.20.1</center>
</body>
</html>
```

6.2 Query in Kibana with KQL

```
kubernetes.pod.name : "deploy-xiuxian-74d9748b99-2rp8v"
```

Today's review:

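- Monitoring the K8S cluster with Prometheus *****
- The ServiceMonitor (smon) architecture *****
- Prometheus scraping etcd (case study) ***
- Prometheus scraping MySQL (case study) ****
- Prometheus scraping Redis (case study) ****
- Prometheus scraping an in-house product (case study) **
- ExternalName *
- Mapping services outside the K8S cluster with endpoints ****
- Collecting K8S cluster logs with the ElasticStack architecture *****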