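Introduction

If you have your own PyTorch model and it is a large language model (LLM), this document shows how to manually export it and adapt it to ExecuTorch, applying many of the same optimizations covered in the earlier export_llm guide.

It walks through a practical example of onboarding a custom LLM with ExecuTorch. The main goal is to provide a guide for integrating ExecuTorch with your own LLM.

The approach also applies to other language models, because ExecuTorch is model-agnostic.

Reference: PyTorch - Exporting custom LLMs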

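Environment setup

First, download the ExecuTorch repository and install its dependencies. ExecuTorch recommends Python 3.10 and using Conda to manage the environment.

See also another article in this series: ExecuTorch Series 1. Building ExecuTorch from Source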

```bash
# Create a directory for this example.
mkdir et-nanogpt
cd et-nanogpt

# Clone the ExecuTorch repository and submodules.
mkdir third-party && cd third-party
git clone -b release/0.7 https://github.com/pytorch/executorch.git
cd executorch
git submodule update --init

# Create a conda environment and install requirements.
conda create -yn executorch python=3.10.0
conda activate executorch
./install_requirements.sh

cd ../..
```

Note that in my own environment the installed Python version is 3.12.12, not the 3.10 used in the command above.

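Running an LLM locally

The example in the documentation uses Karpathy's nanoGPT, but the tutorial applies equally to other large language models, because ExecuTorch is model-agnostic.

Running a model with ExecuTorch takes two steps:

- Export the model: preprocess the model into the .pte format, which is suitable for execution by the ExecuTorch Runtime.
- Run: load the model file and run it with the ExecuTorch Runtime.

Exporting to ExecuTorch (basic)

First, download the nanoGPT model and the corresponding tokenizer vocabulary: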

```bash
# Option 1: curl
curl https://raw.githubusercontent.com/karpathy/nanoGPT/master/model.py -O
curl -L "https://huggingface.co/openai-community/gpt2/resolve/main/vocab.json?download=true" -o vocab.json

# Option 2: wget
wget https://raw.githubusercontent.com/karpathy/nanoGPT/master/model.py
wget https://huggingface.co/openai-community/gpt2/resolve/main/vocab.json
```

Then create a file named export_nanogpt.py with the following contents:

python

import torch

from executorch.exir import EdgeCompileConfig, to_edge

from torch.nn.attention import sdpa_kernel, SDPBackend

from torch.export import export, export_for_training

from model import GPT

# Load the model.

model = GPT.from_pretrained('gpt2')

# Create example inputs. This is used in the export process to provide

# hints on the expected shape of the model input.

example_inputs = (torch.randint(0, 100, (1, model.config.block_size), dtype=torch.long), )

# Set up dynamic shape configuration. This allows the sizes of the input tensors

# to differ from the sizes of the tensors in `example_inputs` during runtime, as

# long as they adhere to the rules specified in the dynamic shape configuration.

# Here we set the range of 0th model input's 1st dimension as

# [0, model.config.block_size].

# See https://pytorch.org/executorch/main/concepts.html#dynamic-shapes

# for details about creating dynamic shapes.

dynamic_shape = (

{1: torch.export.Dim("token_dim", max=model.config.block_size)},

)

# Trace the model, converting it to a portable intermediate representation.

# The torch.no_grad() call tells PyTorch to exclude training-specific logic.

with torch.nn.attention.sdpa_kernel([SDPBackend.MATH]), torch.no_grad():

m = export_for_training(model, example_inputs, dynamic_shapes=dynamic_shape).module()

traced_model = export(m, example_inputs, dynamic_shapes=dynamic_shape)

# Convert the model into a runnable ExecuTorch program.

edge_config = EdgeCompileConfig(_check_ir_validity=False)

edge_manager = to_edge(traced_model, compile_config=edge_config)

et_program = edge_manager.to_executorch()

# Save the ExecuTorch program to a file.

with open("nanogpt.pte", "wb") as file:

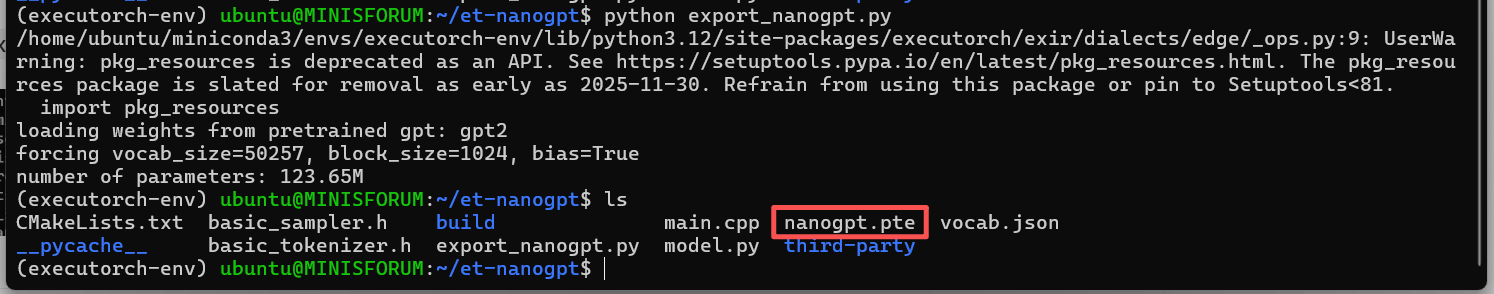

file.write(et_program.buffer)然后,通过python export_nanogpt.py执行该文件,在当前目录下得到导出后的模型:

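Backend Delegation

ExecuTorch provides specialized backends for a number of targets, including but not limited to x86 and ARM CPU acceleration via the XNNPACK backend, Apple acceleration via the Core ML and Metal Performance Shader (MPS) backends, and GPU acceleration via the Vulkan backend.

To delegate a model to a specific backend during export, ExecuTorch uses the to_edge_transform_and_lower() function. It takes the exported program from torch.export together with a backend-specific partitioner object. The partitioner identifies the parts of the computation graph that the target backend can optimize. Inside to_edge_transform_and_lower(), the exported program is converted to an Edge dialect program. The partitioner then delegates the compatible parts of the graph to the backend for acceleration and optimization. Any part of the graph that is not delegated is executed by ExecuTorch's default operator implementations.

In other words, to delegate the exported model to a specific backend, we first import that backend's partitioner and edge compile configuration from the ExecuTorch codebase, and then call to_edge_transform_and_lower.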
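To make the partitioning idea concrete, here is a toy sketch in plain Python. It is not ExecuTorch's implementation: the op names, the `SUPPORTED_OPS` set, and the `partition` helper are all hypothetical, and a real graph is a DAG rather than a flat list. It only illustrates how runs of backend-supported nodes can be grouped into delegated segments while everything else falls back to the default operators.

```python
from itertools import groupby

# Hypothetical set of ops a backend can accelerate (illustrative only).
SUPPORTED_OPS = {"linear", "matmul", "add", "relu"}


def partition(ops, supported=SUPPORTED_OPS):
    """Group consecutive ops into ("delegate" | "fallback", [ops]) segments."""
    segments = []
    for is_supported, run in groupby(ops, key=lambda op: op in supported):
        target = "delegate" if is_supported else "fallback"
        segments.append((target, list(run)))
    return segments


# A made-up linear sequence of ops standing in for a real graph.
graph = ["embedding", "linear", "add", "softmax", "matmul", "linear"]
for target, run in partition(graph):
    print(f"{target:8s} -> {run}")
```

In this toy model, fewer and longer "delegate" segments mean fewer hand-offs between the backend and the default operators.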

The following example shows how to delegate nanoGPT to XNNPACK:

```python
# export_nanogpt.py

# Load partitioner for Xnnpack backend
from executorch.backends.xnnpack.partition.xnnpack_partitioner import XnnpackPartitioner

# Model to be delegated to specific backend should use specific edge compile config
from executorch.backends.xnnpack.utils.configs import get_xnnpack_edge_compile_config
from executorch.exir import EdgeCompileConfig, to_edge_transform_and_lower

import torch
from torch.export import export
from torch.nn.attention import sdpa_kernel, SDPBackend

from model import GPT

# Load the nanoGPT model.
model = GPT.from_pretrained('gpt2')

# Create example inputs. This is used in the export process to provide
# hints on the expected shape of the model input.
example_inputs = (
    torch.randint(0, 100, (1, model.config.block_size - 1), dtype=torch.long),
)

# Set up dynamic shape configuration. This allows the sizes of the input tensors
# to differ from the sizes of the tensors in `example_inputs` during runtime, as
# long as they adhere to the rules specified in the dynamic shape configuration.
# Here we set the range of 0th model input's 1st dimension as
# [0, model.config.block_size - 1].
# See https://pytorch.org/executorch/main/concepts.html#dynamic-shapes
# for details about creating dynamic shapes.
dynamic_shape = (
    {1: torch.export.Dim("token_dim", max=model.config.block_size - 1)},
)

# Trace the model, converting it to a portable intermediate representation.
# The torch.no_grad() call tells PyTorch to exclude training-specific logic.
with torch.nn.attention.sdpa_kernel([SDPBackend.MATH]), torch.no_grad():
    m = export(model, example_inputs, dynamic_shapes=dynamic_shape).module()
    traced_model = export(m, example_inputs, dynamic_shapes=dynamic_shape)

# Convert the model into a runnable ExecuTorch program.
# To be further lowered to Xnnpack backend, `traced_model` needs xnnpack-specific edge compile config
edge_config = get_xnnpack_edge_compile_config()

# Convert to an edge program and delegate the exported model to the Xnnpack
# backend by calling `to_edge_transform_and_lower` with the Xnnpack partitioner.
edge_manager = to_edge_transform_and_lower(
    traced_model,
    partitioner=[XnnpackPartitioner()],
    compile_config=edge_config,
)
et_program = edge_manager.to_executorch()

# Save the Xnnpack-delegated ExecuTorch program to a file.
with open("nanogpt.pte", "wb") as file:
    file.write(et_program.buffer)
```

Quantization

For details, see: Quantization

For more, see: Quantization in ExecuTorch

Invoking the Runtime

ExecuTorch provides a set of Runtime APIs and types for loading and running models.
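The runner built in this section is essentially an autoregressive loop: run the model on the current tokens, pick the next token, append it, and repeat within a bounded context window. The sketch below shows that control flow in plain Python. `fake_model` is a hypothetical stand-in for the loaded ExecuTorch module, and greedy argmax sampling is an assumption, so read it as an illustration of the loop rather than the actual runner.

```python
ENDOFTEXT_TOKEN = 50256  # gpt2 `<|endoftext|>` token id


def greedy_sample(logits):
    """Return the index of the largest logit (greedy decoding)."""
    return max(range(len(logits)), key=logits.__getitem__)


def generate(model, input_tokens, max_input_length, max_output_length):
    """Autoregressive loop with a sliding context window."""
    input_tokens = list(input_tokens)
    output_tokens = []
    for _ in range(max_output_length):
        logits = model(input_tokens)
        next_token = greedy_sample(logits)
        # Stop as soon as the model emits the end-of-text token.
        if next_token == ENDOFTEXT_TOKEN:
            break
        output_tokens.append(next_token)
        input_tokens.append(next_token)
        # Drop the oldest token once the context window is full.
        if len(input_tokens) > max_input_length:
            input_tokens.pop(0)
    return output_tokens


def fake_model(tokens, vocab_size=8):
    """Hypothetical stand-in: always favors (last token + 1) mod vocab_size."""
    logits = [0.0] * vocab_size
    logits[(tokens[-1] + 1) % vocab_size] = 1.0
    return logits


print(generate(fake_model, [3], max_input_length=4, max_output_length=6))
```

Because no state is carried between iterations here, every step re-runs the model on the whole window.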

Create a file named main.cpp with the following contents:

```cpp
#include <cstdint>
#include <iostream>
#include <string>
#include <vector>

#include "basic_sampler.h"
#include "basic_tokenizer.h"

#include <executorch/extension/module/module.h>
#include <executorch/extension/tensor/tensor.h>
#include <executorch/runtime/core/evalue.h>
#include <executorch/runtime/core/exec_aten/exec_aten.h>
#include <executorch/runtime/core/result.h>

using executorch::aten::ScalarType;
using executorch::aten::Tensor;
using executorch::extension::from_blob;
using executorch::extension::Module;
using executorch::runtime::EValue;
using executorch::runtime::Result;

// The value of the gpt2 `<|endoftext|>` token.
#define ENDOFTEXT_TOKEN 50256

std::string generate(
    Module& llm_model,
    std::string& prompt,
    BasicTokenizer& tokenizer,
    BasicSampler& sampler,
    size_t max_input_length,
    size_t max_output_length) {
  // Convert the input text into a list of integers (tokens) that represents it,
  // using the string-to-token mapping that the model was trained on. Each token
  // is an integer that represents a word or part of a word.
  std::vector<int64_t> input_tokens = tokenizer.encode(prompt);
  std::vector<int64_t> output_tokens;

  for (auto i = 0u; i < max_output_length; i++) {
    // Wrap input_tokens in a tensor without copying, so it can be passed to
    // the model as an EValue. EValue is a unified data type in the ExecuTorch
    // runtime.
    auto inputs = from_blob(
        input_tokens.data(),
        {1, static_cast<int>(input_tokens.size())},
        ScalarType::Long);

    // Run the model. It will return a tensor of logits (unnormalized scores).
    auto logits_evalue = llm_model.forward(inputs);

    // Convert the output logits from EValue to std::vector, which is what the
    // sampler expects.
    Tensor logits_tensor = logits_evalue.get()[0].toTensor();
    std::vector<float> logits(
        logits_tensor.data_ptr<float>(),
        logits_tensor.data_ptr<float>() + logits_tensor.numel());

    // Sample the next token from the logits.
    int64_t next_token = sampler.sample(logits);

    // Break if we reached the end of the text.
    if (next_token == ENDOFTEXT_TOKEN) {
      break;
    }

    // Add the next token to the output.
    output_tokens.push_back(next_token);

    std::cout << tokenizer.decode({next_token});
    std::cout.flush();

    // Update next input.
    input_tokens.push_back(next_token);
    if (input_tokens.size() > max_input_length) {
      input_tokens.erase(input_tokens.begin());
    }
  }

  std::cout << std::endl;

  // Convert the output tokens into a human-readable string.
  std::string output_string = tokenizer.decode(output_tokens);
  return output_string;
}

int main() {
  // Set up the prompt. This provides the seed text for the model to elaborate.
  std::cout << "Enter model prompt: ";
  std::string prompt;
  std::getline(std::cin, prompt);

  // The tokenizer is used to convert between tokens (used by the model) and
  // human-readable strings.
  BasicTokenizer tokenizer("vocab.json");

  // The sampler is used to sample the next token from the logits.
  BasicSampler sampler = BasicSampler();

  // Load the exported nanoGPT program, which was generated via the previous
  // steps.
  Module model("nanogpt.pte", Module::LoadMode::MmapUseMlockIgnoreErrors);

  const auto max_input_tokens = 1024;
  const auto max_output_tokens = 30;
  std::cout << prompt;
  generate(
      model, prompt, tokenizer, sampler, max_input_tokens, max_output_tokens);
}
```

Download the following files into the same directory as main.cpp:

```bash
curl -O https://raw.githubusercontent.com/pytorch/executorch/main/examples/llm_manual/basic_sampler.h
curl -O https://raw.githubusercontent.com/pytorch/executorch/main/examples/llm_manual/basic_tokenizer.h
```

Building ExecuTorch

To run the LLM with the ExecuTorch Runtime, the runtime itself also has to be built. For details, see: ExecuTorch Series 1. Building ExecuTorch from Source. ExecuTorch uses the CMake build system.

```bash
cd ~/et-nanogpt/third-party/executorch
rm -rf cmake-out && mkdir cmake-out && cd cmake-out
# cmake -DCMAKE_BUILD_TYPE=Release ..
cmake ..
cd ..
cmake --build cmake-out -j$(nproc)
```

Building the example code

Create a file named CMakeLists.txt with the following contents:

cmake

cmake_minimum_required(VERSION 3.19)

project(nanogpt_runner)

set(CMAKE_CXX_STANDARD 17)

set(CMAKE_CXX_STANDARD_REQUIRED True)

# Set options for executorch build.

option(EXECUTORCH_ENABLE_LOGGING "" ON)

option(EXECUTORCH_BUILD_EXTENSION_DATA_LOADER "" ON)

option(EXECUTORCH_BUILD_EXTENSION_MODULE "" ON)

option(EXECUTORCH_BUILD_EXTENSION_TENSOR "" ON)

option(EXECUTORCH_BUILD_KERNELS_OPTIMIZED "" ON)

option(EXECUTORCH_BUILD_EXTENSION_FLAT_TENSOR "" ON)

# Include the executorch subdirectory.

add_subdirectory(

${CMAKE_CURRENT_SOURCE_DIR}/third-party/executorch

${CMAKE_BINARY_DIR}/executorch

)

add_executable(nanogpt_runner main.cpp)

target_link_libraries(

nanogpt_runner

PRIVATE executorch

extension_module_static # Provides the Module class

extension_tensor # Provides the TensorPtr class

optimized_native_cpu_ops_lib # Provides baseline cross-platform

# kernels

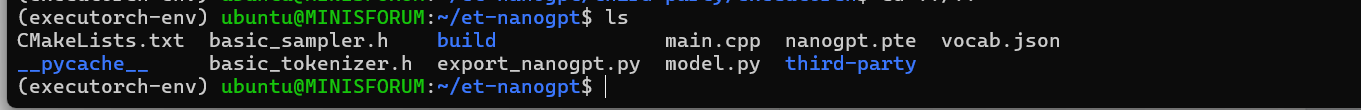

)完成上述准备工作后,完整目录应包含以下文件:

```plain
et-nanogpt/
├── CMakeLists.txt
├── main.cpp
├── basic_tokenizer.h
├── basic_sampler.h
├── export_nanogpt.py
├── model.py
├── vocab.json
├── nanogpt.pte
└── third-party
    └── executorch
```

Finally, we only need to build the example project:

```bash
cd et-nanogpt
mkdir -p build && cd build
cmake ..
make -j$(nproc)
```

Running

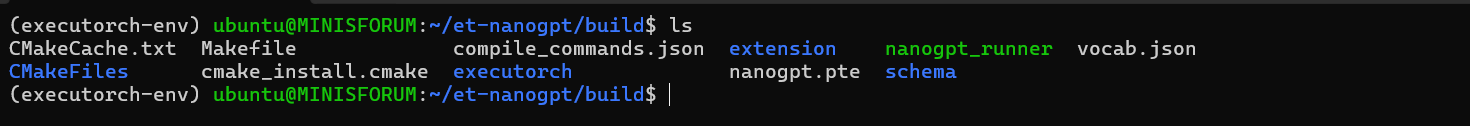

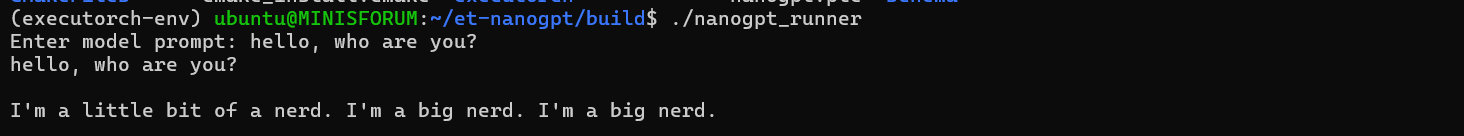

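After a successful build, the executable nanogpt_runner is generated in the et-nanogpt/build directory. We then copy the exported model (nanogpt.pte) and the vocabulary file (vocab.json) into the build directory.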
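Since the runner opens vocab.json and nanogpt.pte by relative path, a quick sanity check on the vocabulary file can save a confusing failure later. The following is a small sketch, assuming the file is a flat JSON object mapping token strings to integer ids (as GPT-2's vocab.json is). The helper name `check_vocab` is hypothetical, and the demo runs on a tiny stand-in file rather than the real vocabulary.

```python
import json
import os
import tempfile


def check_vocab(path, token="<|endoftext|>", expected_id=50256):
    """Load a token->id JSON vocabulary and verify one known entry."""
    with open(path, encoding="utf-8") as f:
        vocab = json.load(f)
    actual = vocab.get(token)
    if actual != expected_id:
        raise ValueError(f"{token!r} maps to {actual}, expected {expected_id}")
    return len(vocab)


# Demo on a tiny stand-in file; point check_vocab at the real vocab.json instead.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "vocab.json")
    with open(demo_path, "w", encoding="utf-8") as f:
        json.dump({"hello": 0, "<|endoftext|>": 50256}, f)
    print("vocabulary entries:", check_vocab(demo_path))
```

The default `expected_id` mirrors the ENDOFTEXT_TOKEN value hard-coded in main.cpp, so a mismatch would mean the runner never detects the end of the text.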

Run the test program:

```bash
./nanogpt_runner
```

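On my machine the results were not great: responses were generated very slowly and the answers were inaccurate.

According to the documentation, the model may run very slowly at this point. This is because ExecuTorch has not been told to optimize for a specific hardware backend (delegation), and because it performs all computations in 32-bit floating point (no quantization).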
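To give a feel for what "no quantization" leaves on the table, the sketch below applies a generic symmetric 8-bit quantization to a handful of float values in plain Python. It only illustrates the general idea and is not ExecuTorch's quantization flow. The function names and sample weights are made up.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the int8 range."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 values back to approximate floats."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]  # made-up sample values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print("int8 values:", q)
print("max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
# Each value now needs 1 byte instead of the 4 bytes of a 32-bit float.
```

The trade-off is a small rounding error per value in exchange for roughly a quarter of the storage.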