1. Istio Architecture and Installation

1.1 Architecture and Core Components

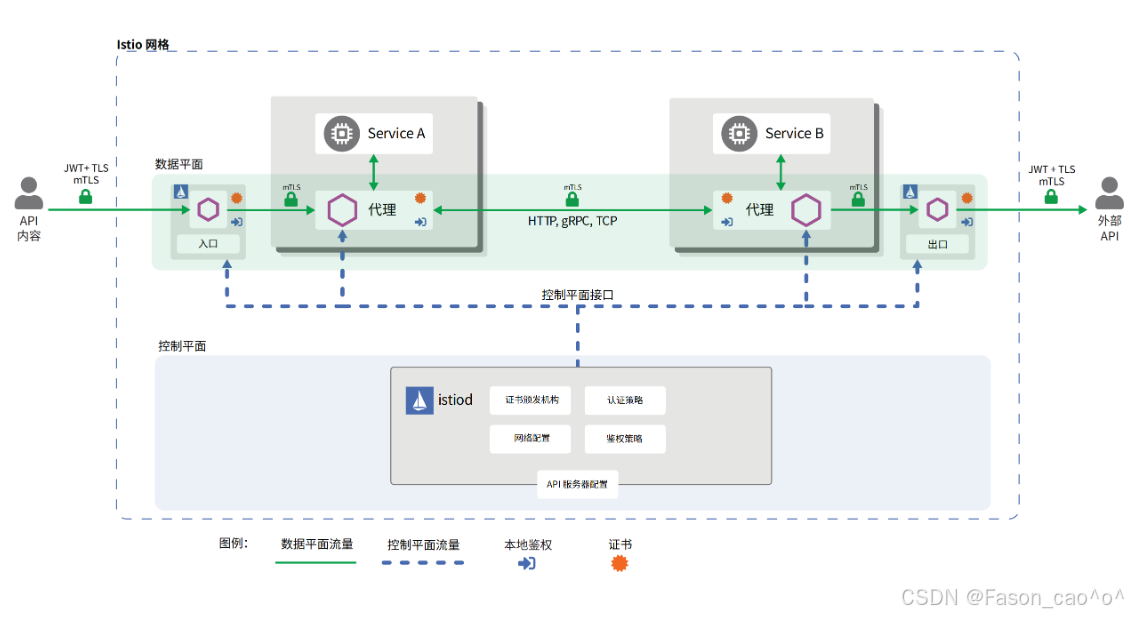

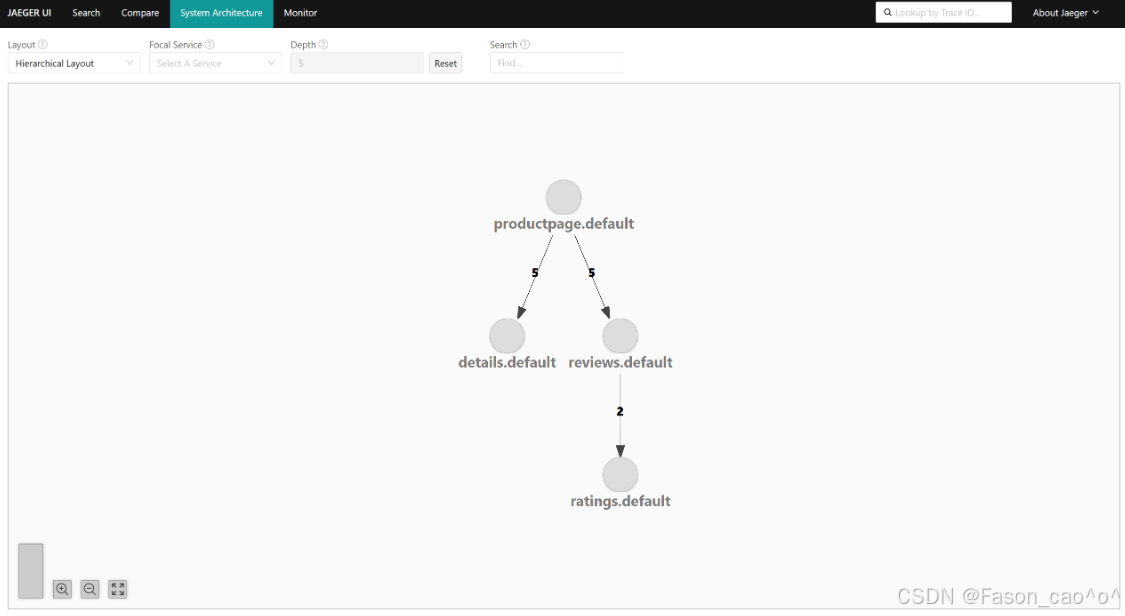

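Istio is a service mesh for cloud-native environments. It takes over the network traffic of microservices through a sidecar proxy (Envoy), so that network management concerns are handled uniformly without modifying application code. Its main capabilities are: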

-

精细化流量治理

可实现智能路由(如版本分流、请求头匹配路由)、权重分配(灰度发布)、负载均衡、熔断 / 限流、故障注入等能力,比如bookinfo配置的服务多版本流量分配,就是通过 Istio 完成的。

-

服务间安全增强

自动实现服务间通信的 mTLS 加密(无需业务代码适配),同时提供统一的身份认证、权限控制(比如限制特定服务调用某接口),保障微服务通信的安全。

-

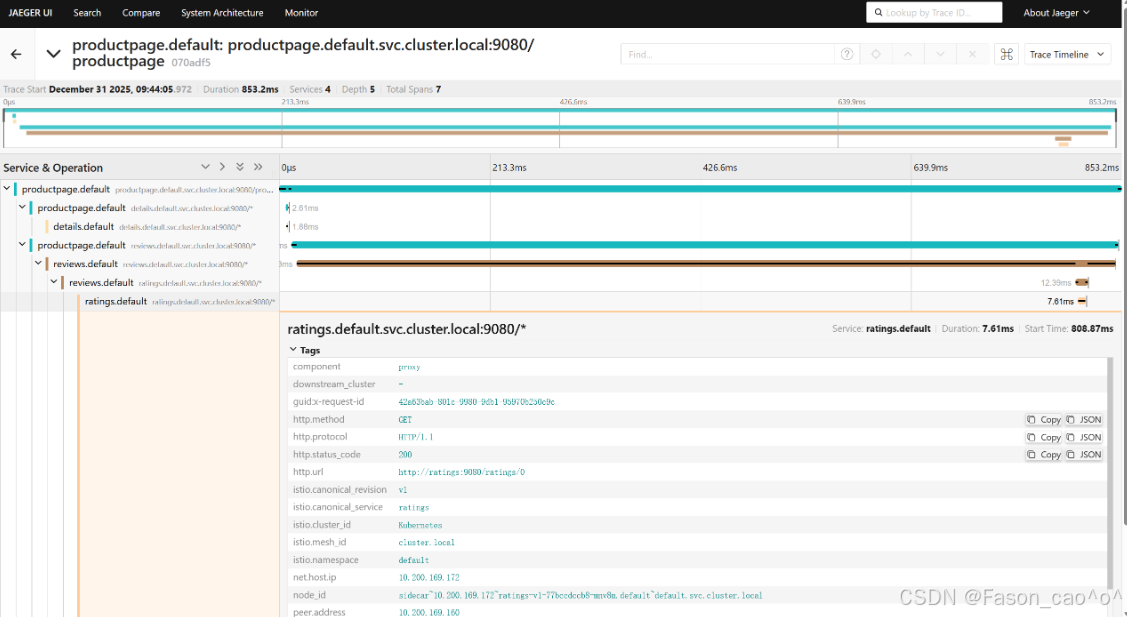

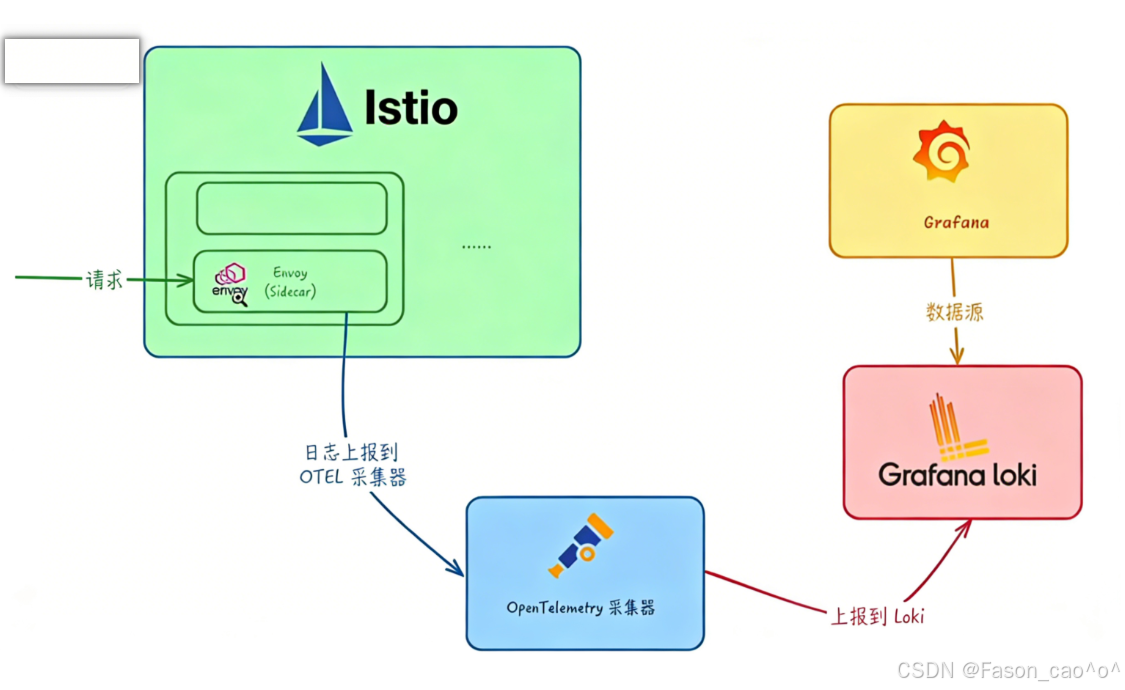

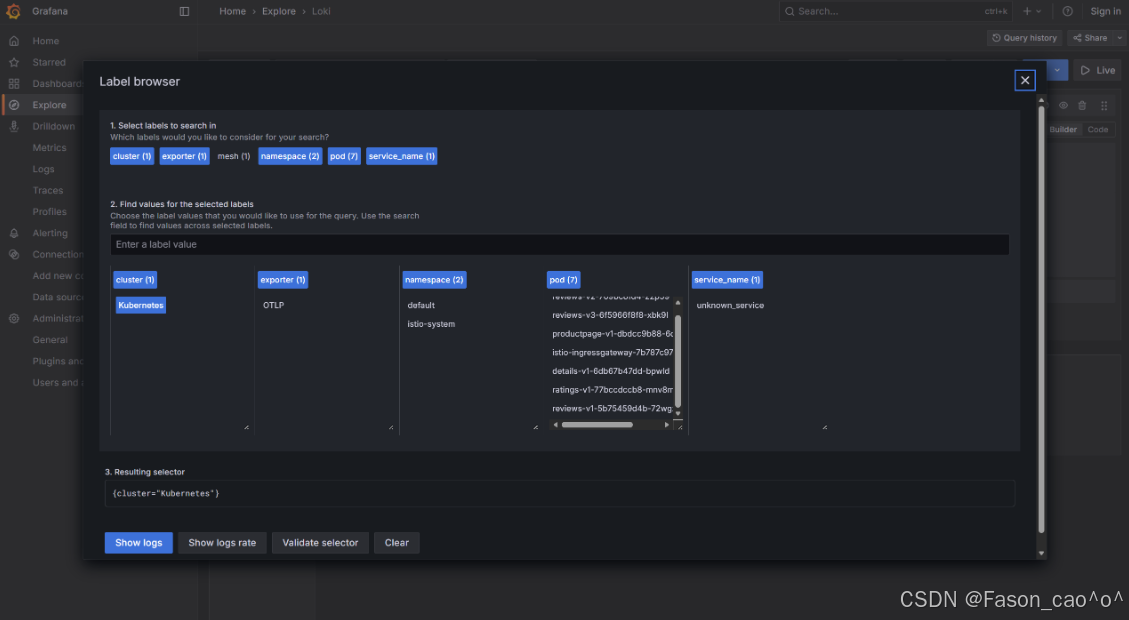

全链路可观测性

统一采集服务调用的监控指标(如延迟、错误率)、分布式追踪(调用链)、访问日志,不用业务服务单独集成监控组件,就能快速排查跨服务的问题。

-

无侵入式接入

以 Sidecar 代理的形式与业务服务部署在同一 Pod 中,业务代码无需任何改造,仅需通过配置(如 VirtualService、DestinationRule)即可启用所有能力。
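The security capability described in the list above can be sketched with two resources. This is a minimal illustration rather than configuration taken from this document: the namespace, the policy names and the `bookinfo-productpage` service account are assumptions chosen to match the Bookinfo sample used later.

```yaml
# Sketch only: names, namespace and the principal are illustrative assumptions.
# Require mTLS for all workloads in the "default" namespace.
apiVersion: security.istio.io/v1
kind: PeerAuthentication
metadata:
  name: default
  namespace: default
spec:
  mtls:
    mode: STRICT
---
# Allow only the productpage service account to send GET requests to reviews.
apiVersion: security.istio.io/v1
kind: AuthorizationPolicy
metadata:
  name: reviews-viewer
  namespace: default
spec:
  selector:
    matchLabels:
      app: reviews
  action: ALLOW
  rules:
  - from:
    - source:
        principals: ["cluster.local/ns/default/sa/bookinfo-productpage"]
    to:
    - operation:
        methods: ["GET"]
```

Neither resource touches application code; both are enforced by the sidecars.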

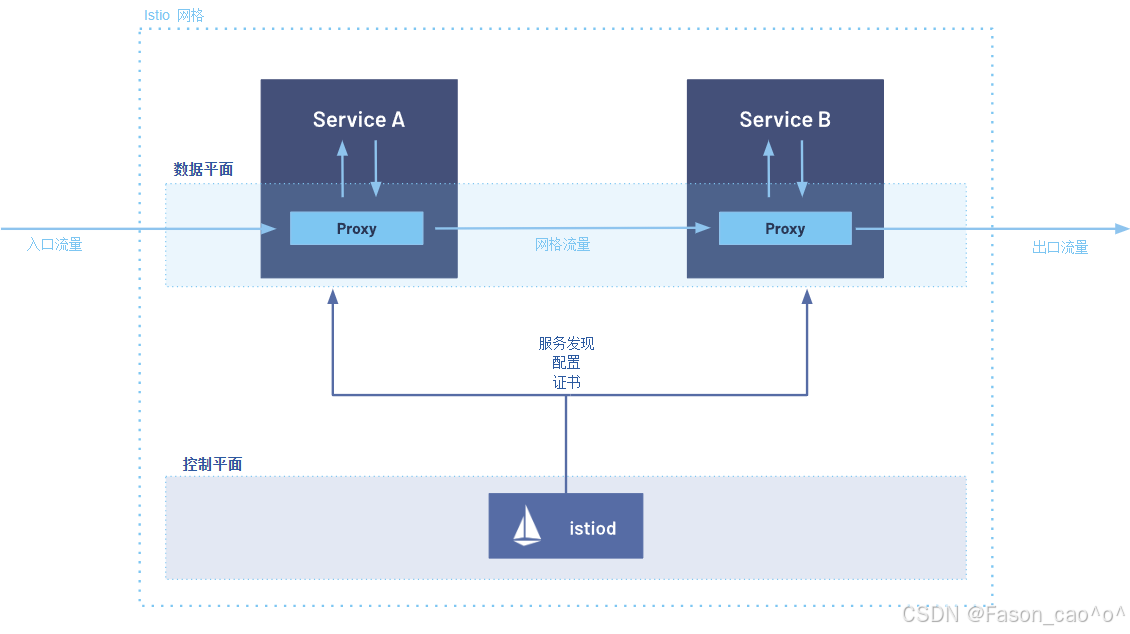

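Following the architecture in the figure (control plane + data plane), a request passes through three stages: ingress traffic → mesh traffic → egress traffic. The steps are as follows:

External user → istio-ingressgateway (ingress gateway) → Service A's sidecar → Service A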

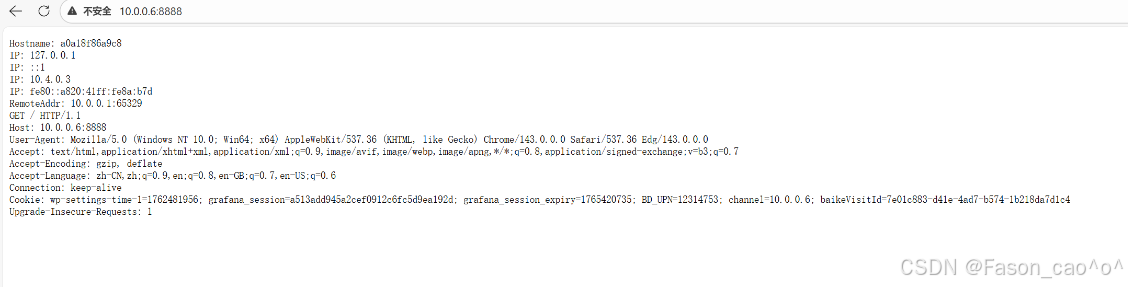

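**Ingress traffic arrives.** After passing the ingress gateway, the external request reaches the Sidecar Proxy of Service A (the data-plane component, i.e. Envoy). The sidecar runs in the same Pod as Service A and is the point where traffic enters the service.

**The proxy processes the request using control-plane configuration.** The Sidecar Proxy applies the configuration that istiod has pushed to it in advance (routing rules, security policies, service discovery information) and performs protocol parsing, authentication and authorization, and routing on the inbound request.

**Traffic is handed to the application.** The proxy forwards the processed request to Service A in the same Pod, and Service A runs its business logic.

**Mesh call (Service A → Service B).** When Service A needs to call Service B, the request does not go directly to Service B; it is first intercepted by Service A's own Sidecar Proxy.

**Mesh traffic forwarding.** Using the service discovery information provided by istiod, Service A's proxy selects an instance of Service B and forwards the request to that instance's Sidecar Proxy (this is "mesh traffic"). Load balancing and circuit-breaking checks are applied at this point, on the caller's side.

**Service B receives the request.** Service B's Sidecar Proxy accepts the request, applies the inbound policies configured through istiod, and forwards it to Service B in the same Pod.

**Egress traffic.** If Service B needs to call something outside the mesh, the request first goes to its own Sidecar Proxy, which applies the egress policies (for example allow and deny lists) before sending the traffic to the external destination.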

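Core components

| Component type | Component | Role (based on version 1.28.1) |
|---|---|---|
| Control plane | istiod | 1. Merges the former Pilot, Citadel and other components into a single control-plane component; 2. Handles service discovery and distribution of traffic rules and policies; 3. Manages certificates in the mesh (the basis of mTLS); 4. Pushes dynamic configuration to the Sidecar Proxies. |
| Data plane | Sidecar Proxy (Envoy) | 1. Runs in the same Pod as the application and intercepts all of its inbound and outbound traffic; 2. Enforces the traffic rules pushed by istiod (routing, load balancing, circuit breaking); 3. Provides secure communication in the mesh (mTLS) and collects metrics. |
| Application layer | Service A/B | Application services in the mesh that focus on business logic; all network communication goes through the Sidecar Proxy, with no code changes. |
| Gateway | Gateway | Single entry and exit point of the mesh. It moves cross-cutting concerns (traffic, security, monitoring) out of individual services so they are managed centrally. |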

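1.2 Installing Istio 1.28.1

Download the release package

https://github.com/istio/istio/releases/download/1.28.1/istio-1.28.1-linux-amd64.tar.gz

Extract and install

```bash
# Extract the archive (it unpacks to istio-1.28.1/) and move istioctl from bin/ into /usr/local/bin/
tar -xzf istio-1.28.1-linux-amd64.tar.gz
mv istio-1.28.1/bin/istioctl /usr/local/bin/

# Install Istio. The demo profile is recommended for personal testing;
# it installs istiod (which also serves the sidecar injection webhook) plus both gateways.
istioctl install --set profile=demo -y

[root@k8s-master ~/istio]# kubectl get po -n istio-system
NAME                                    READY   STATUS    RESTARTS        AGE
istio-egressgateway-6f6bb8f7f9-t77rc    1/1     Running   1 (5h24m ago)   26h
istio-ingressgateway-7b787c97fc-c2dk6   1/1     Running   1 (5h24m ago)   26h
istiod-cd86994b8-2t4x2                  1/1     Running   1 (5h24m ago)   26h
kiali-7b58697666-l5j89                  1/1     Running   0               130m
```

The kiali Pod in this listing comes from a separately installed addon, not from the demo profile.

An Istio profile is an official "deployment bundle" tailored to a scenario, which avoids setting hundreds of parameters by hand. Profiles differ in the components they install. Sidecar injection is a webhook served by istiod rather than a separate sidecar-injector deployment, so any profile that installs istiod supports automatic Envoy injection.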

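| Profile | Use case | Components | Notes |
|---|---|---|---|
| demo | Testing, learning, demos (recommended here) | istiod, ingressgateway, egressgateway | Broadest feature set with modest resource requests; good for verifying injection as a beginner |
| default | Baseline for production | istiod, ingressgateway | Leaner than demo; the usual starting point for production |
| minimal | Control plane only | istiod only (no gateways) | Automatic injection still works because the webhook is part of istiod; gateways must be deployed separately |
| openshift | Red Hat OpenShift | istiod and gateways adapted to OpenShift | Compatible with OpenShift security policies and network rules |

There is no built-in profile named production. For a highly available production deployment, start from default and raise replica counts, resources and monitoring through your own overrides.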

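Enabling automatic injection

```bash
# Label the namespace; every Pod created in it afterwards gets a proxy (Envoy) injected automatically.
[root@k8s-master ~/istio]# kubectl label ns default istio-injection=enabled
```

From then on every new Pod in the namespace contains a proxy container, which is what marks it as part of the service mesh. Pods that already existed must be recreated to receive the sidecar.

Uninstall (extension)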

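```bash
# Uninstall the existing Istio installation (if any)
istioctl uninstall -y --purge

# Delete the istio-system namespace (clean up leftovers)
kubectl delete namespace istio-system
```

2. Istio Traffic Management

2.1 Envoy Proxy Architecture and Core Components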

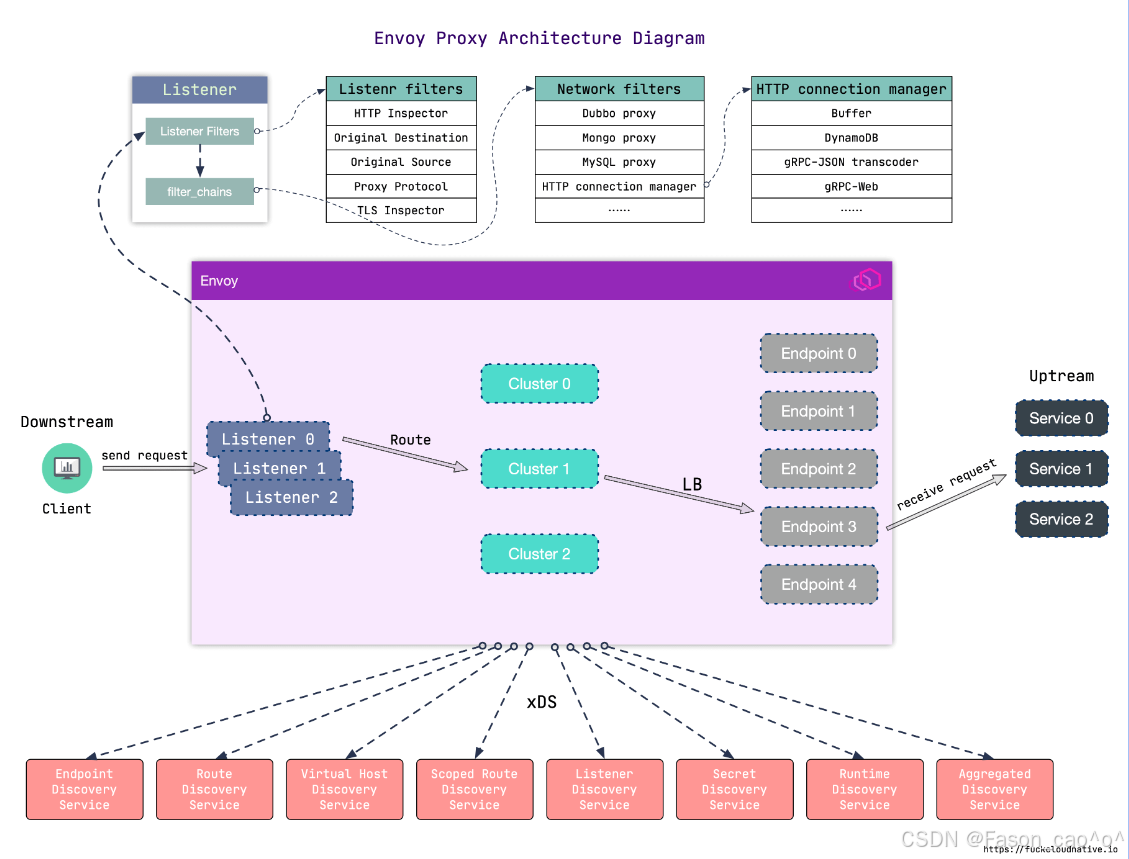

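As a proxy, Envoy moves traffic from downstream (client) → Envoy → upstream (backend service). The steps are:

**Downstream request enters Envoy.** The client (downstream) sends a request, which first arrives at an Envoy Listener (Listener 0/1/2 in the figure). A Listener is Envoy's entry point and listens on a given port; one Envoy can be configured with several Listeners for different ports or protocols.

**Listener-level preprocessing.** Inside the Listener, the request first passes the Listener Filters (HTTP Inspector and TLS Inspector in the figure). These filters inspect the connection (for example, detecting the protocol and recovering the original destination address) to prepare for later stages; `filter_chains` then selects the matching network processing chain.

**Protocol and network-layer processing.** The request enters the Network Filters (HTTP connection manager and Dubbo proxy in the figure). This is the protocol adaptation layer: each protocol (HTTP, RPC, MySQL and so on) gets its own handling. HTTP, for example, is handed to the `HTTP connection manager`, which parses headers and manages connections.

**Route matching and cluster selection.** After protocol processing, the request is matched against the Route rules. Based on the domain, path, headers and similar attributes, it is mapped to a Cluster (Cluster 0/1/2 in the figure). A Cluster is a logical group of upstream hosts; for example, all instances of one backend service form one Cluster.

**Load balancing and forwarding.** Once the target Cluster is known, Envoy applies an LB (load balancing) algorithm such as round robin or weighted random to pick one concrete backend instance from the Cluster's Endpoints (Endpoint 0/1/2 in the figure) and forwards the request to it.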
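The Listener → filter chain → Route → Cluster → Endpoint path described above can be sketched as a hand-written Envoy static configuration. In a mesh, istiod generates the equivalent through xDS, so this is only an illustration; the port, the names `listener_0` and `cluster_0`, and the two endpoint addresses are assumptions.

```yaml
# Sketch only: port, names and endpoint IPs are illustrative assumptions.
static_resources:
  listeners:
  - name: listener_0                      # Listener: entry point on one port
    address:
      socket_address: { address: 0.0.0.0, port_value: 10000 }
    listener_filters:                     # Listener Filters: inspect the connection first
    - name: envoy.filters.listener.tls_inspector
      typed_config:
        "@type": type.googleapis.com/envoy.extensions.filters.listener.tls_inspector.v3.TlsInspector
    filter_chains:
    - filters:                            # Network Filters: protocol-specific handling
      - name: envoy.filters.network.http_connection_manager
        typed_config:
          "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
          stat_prefix: ingress_http
          route_config:                   # Route: map the request to a Cluster
            name: local_route
            virtual_hosts:
            - name: backend
              domains: ["*"]
              routes:
              - match: { prefix: "/" }
                route: { cluster: cluster_0 }
          http_filters:
          - name: envoy.filters.http.router
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.http.router.v3.Router
  clusters:
  - name: cluster_0                       # Cluster: logical group of upstream hosts
    type: STATIC
    connect_timeout: 1s
    lb_policy: ROUND_ROBIN                # LB: pick one Endpoint per request
    load_assignment:
      cluster_name: cluster_0
      endpoints:
      - lb_endpoints:                     # Endpoints: concrete IP + port
        - endpoint:
            address:
              socket_address: { address: 10.0.0.1, port_value: 9080 }
        - endpoint:
            address:
              socket_address: { address: 10.0.0.2, port_value: 9080 }
```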

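Core components

| Component | Role |
|---|---|
| Listener | Envoy's traffic entry point; listens on a given port. Multiple Listeners can serve different ports or protocols |
| Listener Filters | Inspect connections entering a Listener (protocol detection, original destination) as preprocessing for later stages |
| Network Filters | Protocol-specific handling (HTTP, Dubbo, MySQL and so on); the core of protocol adaptation |
| HTTP Connection Manager | One of the Network Filters, dedicated to HTTP (header parsing, connection management, route matching) |
| Route | Matches a request to an upstream Cluster based on domain, path and similar attributes |
| Cluster | Logical group of backend hosts (for example, all instances of a service); the unit that load balancing acts on |
| Endpoint | A concrete upstream instance (IP + port); the final forwarding target |
| LB (load balancing) | A Cluster-level function that picks an Endpoint inside the Cluster by algorithm (round robin, weighted and so on) |
| xDS services | Envoy's dynamic configuration source (endpoint discovery, route updates and so on), which lets Envoy change configuration at runtime |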

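2.2 Request Routing

Envoy is a high-performance cloud-native proxy, commonly used as the sidecar in a service mesh. Its main functions are:

- Full traffic interception: deployed as a sidecar alongside the application, it takes over all inbound and outbound traffic and becomes the mandatory hop for service communication, so the application does not handle connection logic itself.

- Multi-protocol handling: native support for HTTP/1.1, HTTP/2, gRPC, TCP and WebSocket, with automatic parsing and protocol conversion (for example HTTP/1.1 to HTTP/2), which suits complex microservice communication.

- Fine-grained traffic control: built-in load balancing (round robin, weighted and so on), circuit breaking (limiting requests to unhealthy services), rate limiting (controlling request rate) and fault injection (simulating delays and errors) to keep communication stable.

- Observability data collection: sitting on the traffic path, it naturally collects metrics (latency, error rate), access logs (request headers, response codes) and tracing data (propagating the trace ID), and is the main data source for mesh observability.

- Secure communication: supports mTLS (encrypted traffic between Envoys) and request identity verification, and works with the control plane (istiod) to enforce access control between services.

- Dynamic configuration updates: receives routes, endpoints and other configuration from the control plane in real time over the xDS protocol; changes take effect without restarting the proxy, which fits dynamic scaling and configuration changes.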

DestinationRule

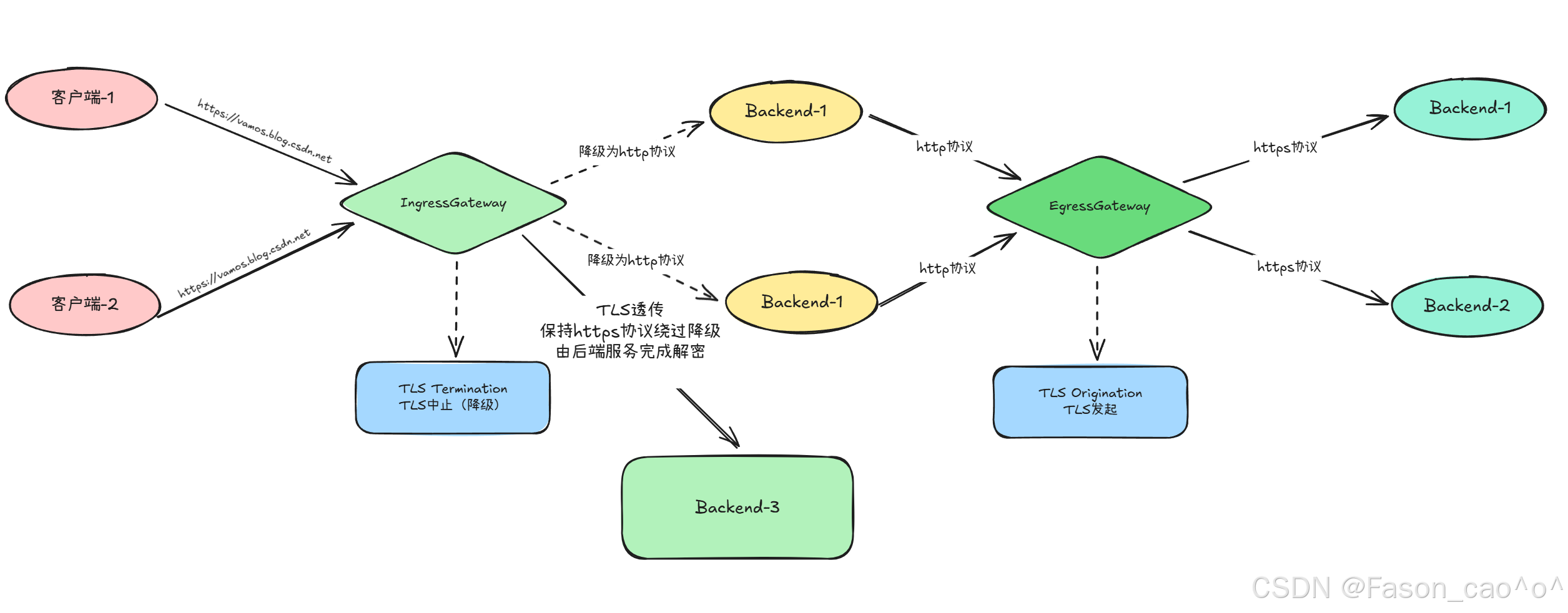

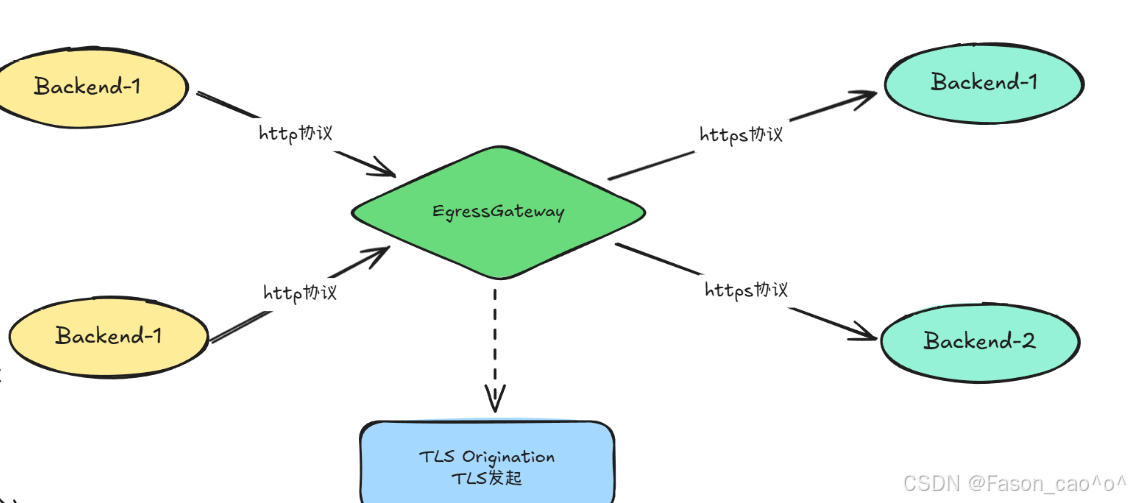

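DestinationRule → configures the clusters in Envoy.

A DestinationRule is Istio's traffic policy (the "execution standard"). It only governs how traffic is handled once its destination has been chosen: for a given target service (such as an Egress Gateway or Nginx) it defines the encryption mode (mTLS), load balancing, SNI, certificate association and similar settings. It is the core of controlling how traffic is transmitted.

1️⃣ Subsets: group the instances of a service. For example, split the reviews service by version into v1, v2 and v3, so traffic can be directed precisely to one version.

2️⃣ Traffic Policy: defines how traffic is distributed within those subsets, for example round robin (ROUND_ROBIN), random (RANDOM) or least requests (LEAST_REQUEST, which replaces the deprecated LEAST_CONN).

3️⃣ TLS settings: configure secure communication, for example ISTIO_MUTUAL to enable mutual TLS, which is the recommended practice for traffic inside the mesh.

DestinationRule and VirtualService work together to provide powerful traffic management. VirtualService is the "city-wide traffic plan"; DestinationRule is the "local traffic management" at the destination.

VirtualService

VirtualService → controls the route table under Envoy's route configuration.

A VirtualService is Istio's routing rule (the "navigator"). It only governs where traffic should go: it matches specific traffic (for example, mesh traffic to my-nginx on ports 80/443) and defines the forwarding path (for example, first to the Egress Gateway, then to Nginx). It is the core of controlling traffic direction.

Its key role is to decouple the client's request from the workload that finally serves it, which makes traffic management very flexible:

1️⃣ Route on request attributes: for example, "if the request carries the header X-User-Type: VIP, route it to the VIP service".

2️⃣ Fine-grained traffic control: 90% of traffic to v1 and 10% to v2, which is how canary releases are done.

3️⃣ Timeouts and retries: for example, "time out after 5 seconds, retry 3 times".

4️⃣ Redirects: for example, "redirect requests for the old API to the new one".

A VirtualService requires no changes to application code. It is like adding a smart dispatch system to a city's traffic: the buses and drivers (your services) do not change at all.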
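The division of labour between the two resources can be sketched as a pair. This is an illustrative combination of the points listed above, not a manifest used later in this document, and it is not meant to be applied alongside the `reviews` rules that follow. The header name `x-user-type`, the 90/10 split and the timeout and retry values are assumptions taken from the examples in the text.

```yaml
# Sketch only: header name, weights, timeout and retry values are illustrative assumptions.
# DestinationRule: what happens at the destination (subsets, load balancing, TLS).
apiVersion: networking.istio.io/v1
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  trafficPolicy:
    loadBalancer:
      simple: LEAST_REQUEST
    tls:
      mode: ISTIO_MUTUAL
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
---
# VirtualService: where the traffic goes (match, weights, timeout, retries).
apiVersion: networking.istio.io/v1
kind: VirtualService
metadata:
  name: reviews
spec:
  hosts:
  - reviews
  http:
  # Requests carrying the VIP header always go to v2.
  - match:
    - headers:
        x-user-type:
          exact: VIP
    route:
    - destination:
        host: reviews
        subset: v2
  # Everything else: 90/10 canary split, 5s overall timeout, up to 3 retries.
  - route:
    - destination:
        host: reviews
        subset: v1
      weight: 90
    - destination:
        host: reviews
        subset: v2
      weight: 10
    timeout: 5s
    retries:
      attempts: 3
      perTryTimeout: 2s
      retryOn: 5xx,connect-failure
```

Rules in `http` are evaluated top to bottom and the first match wins, so the header rule has to come before the catch-all route. The overall `timeout` caps the total time spent, including retries.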

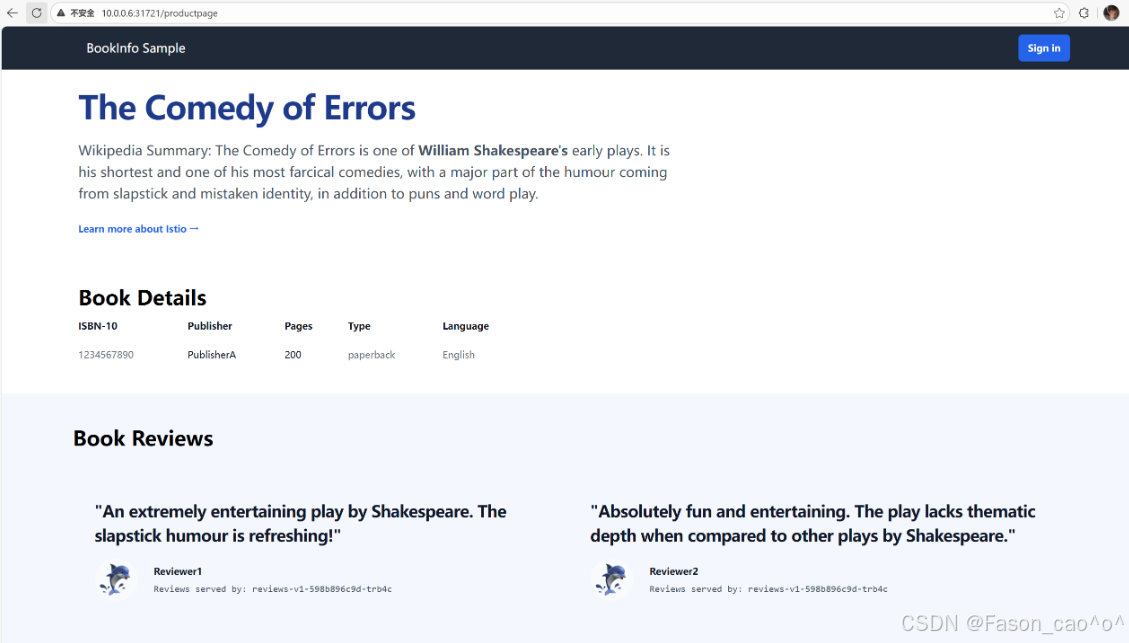

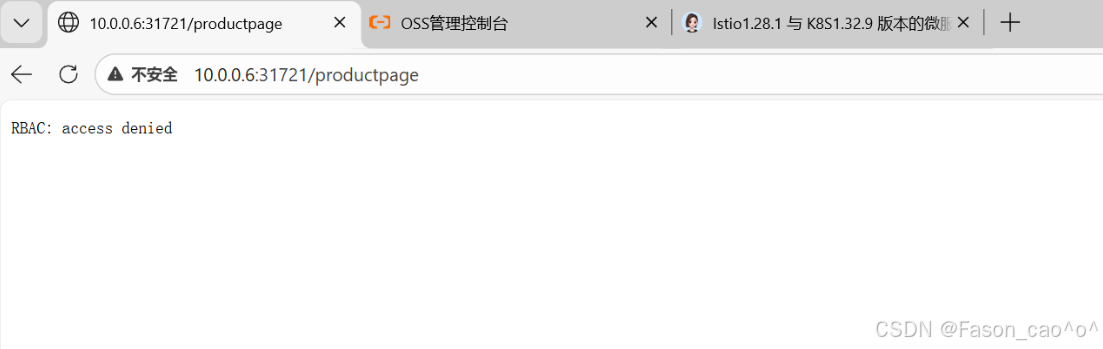

Traffic management: pinning traffic to one version

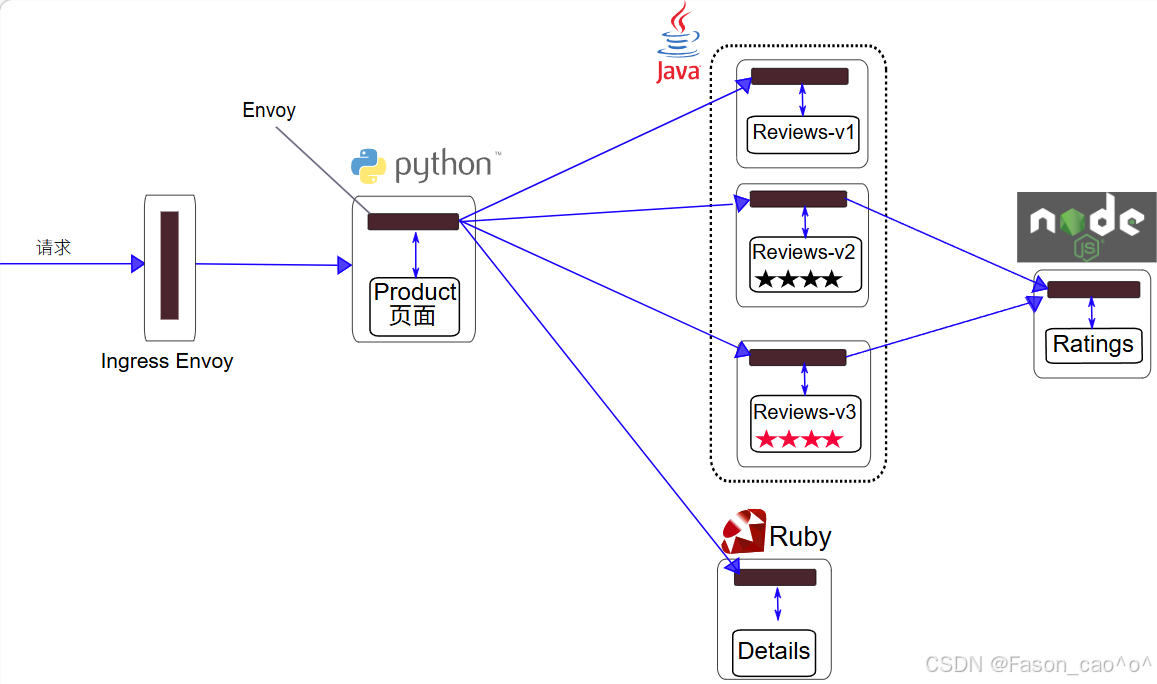

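Using Bookinfo as the example

Change to the Istio installation directory.

Sidecar injection is enabled per namespace. Label the default namespace with istio-injection=enabled:

$ kubectl label namespace default istio-injection=enabled

Deploy the application with kubectl:

$ kubectl apply -f samples/bookinfo/platform/kube/bookinfo.yaml

This command starts all four services shown in the Bookinfo architecture diagram, including three versions of the reviews service: v1, v2 and v3.

To pin traffic to one version, a DestinationRule is needed to define the per-version clusters (subsets).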

```bash
# Write the Istio DestinationRule manifest (the format is similar to Kubernetes resources)
[root@k8s-master ~/istio]# cat dr+vs.yaml
apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  # Define the cluster subsets
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
  - name: v3
    labels:
      version: v3
---
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: reviews
spec:
  hosts:
  - reviews
  http:
  - route:
    - destination:
        host: reviews
        subset: v1
```

bash

[root@k8s-master ~/istio]# kubectl apply -f dr+vs.yaml

destinationrule.networking.istio.io/reviews unchanged

virtualservice.networking.istio.io/reviews created

[root@k8s-master ~/istio]# kubectl get dr

NAME HOST AGE

reviews reviews 2m23s

[root@k8s-master ~/istio]# kubectl get vs

NAME GATEWAYS HOSTS AGE

bookinfo ["bookinfo-gateway"] ["*"] 25h

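reviews ["reviews"] 92s

Once these rules are applied, the Envoy data plane is updated dynamically.

Before the DestinationRule existed, each service had a single cluster with no subset, in the form outbound|9080||productpage.default.svc.cluster.local.

After the DestinationRule is created, three subset clusters are added for reviews, because the rule's host is reviews:

outbound|9080|v1|reviews.default.svc.cluster.local

outbound|9080|v2|reviews.default.svc.cluster.local

outbound|9080|v3|reviews.default.svc.cluster.local

Together with the original subset-less cluster, reviews now has 4 clusters.

The endpoints of each subset cluster correspond to the IPs of the backend Pods: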

bash

[root@k8s-master ~/istio]# istioctl proxy-config endpoint productpage-v1-54bb874995-zp9w2 -n default --cluster "outbound|9080|v1|reviews.default.svc.cluster.local" -o yaml

hostStatuses:

- address:

socketAddress:

address: 10.200.36.90

portValue: 9080

[root@k8s-master ~/istio]# istioctl proxy-config endpoint productpage-v1-54bb874995-zp9w2 -n default --cluster "outbound|9080|v2|reviews.default.svc.cluster.local" -o yaml

hostStatuses:

- address:

socketAddress:

address: 10.200.169.157

portValue: 9080

[root@k8s-master ~/istio]# istioctl proxy-config endpoint productpage-v1-54bb874995-zp9w2 -n default --cluster "outbound|9080|v3|reviews.default.svc.cluster.local" -o yaml

edsServiceName: outbound|9080|v3|reviews.default.svc.cluster.local

hostStatuses:

- address:

socketAddress:

address: 10.200.36.96

portValue: 9080

bash

[root@k8s-master ~/istio]# kubectl get po -o wide -l app=reviews

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

reviews-v1-598b896c9d-trb4c 2/2 Running 2 (6h16m ago) 26h 10.200.36.90 k8s-node1 <none> <none>

reviews-v2-556d6457d-69lx4 2/2 Running 2 (6h16m ago) 26h 10.200.169.157 k8s-node2 <none> <none>

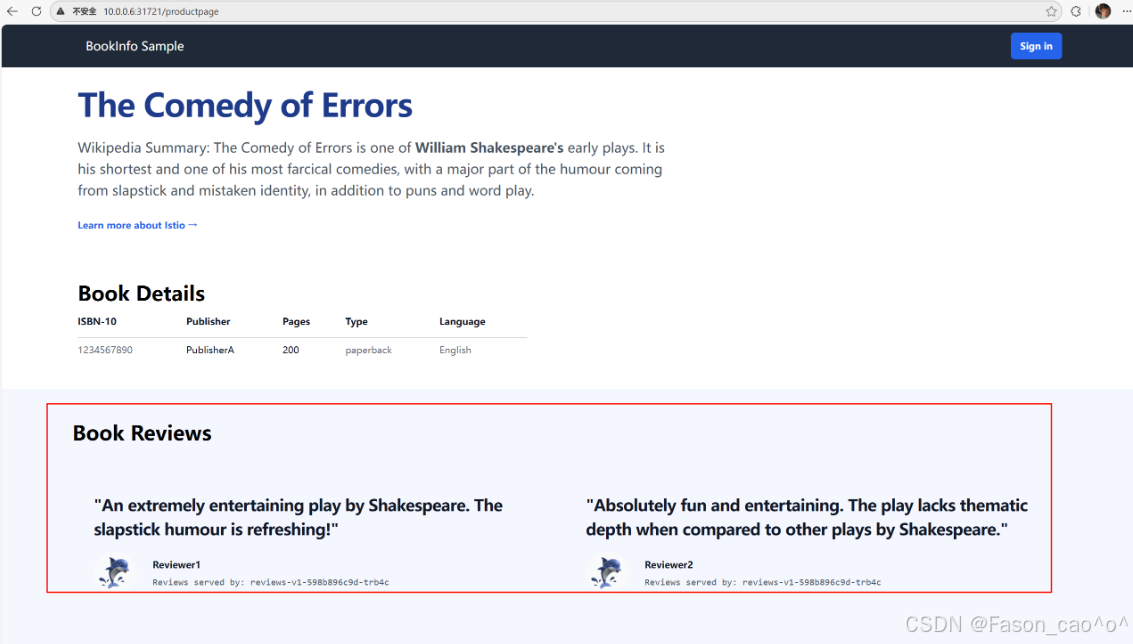

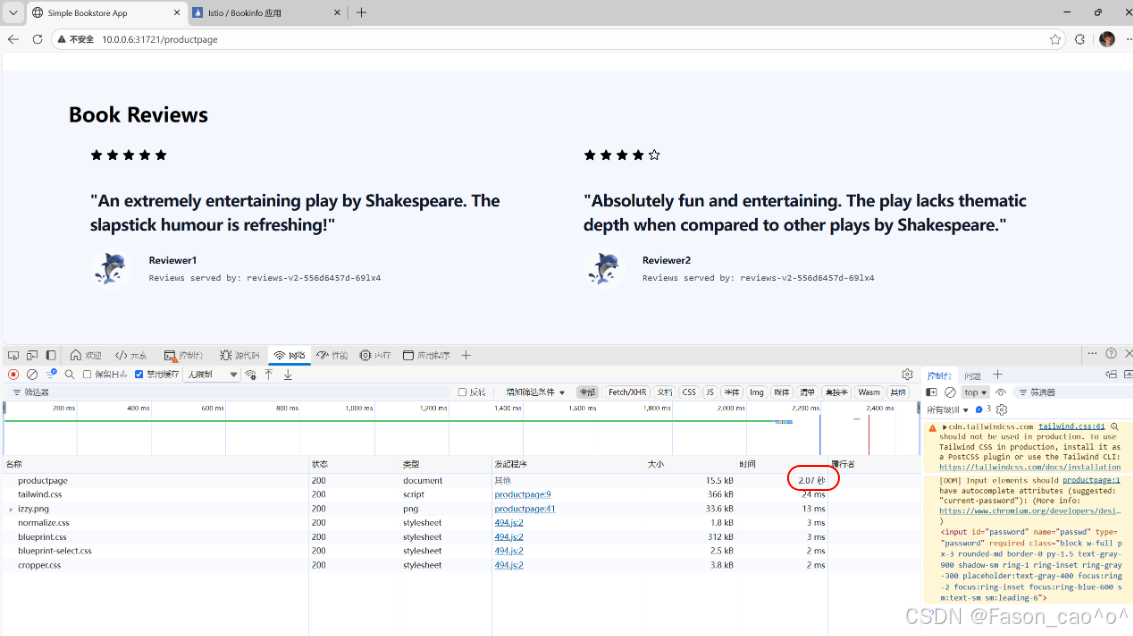

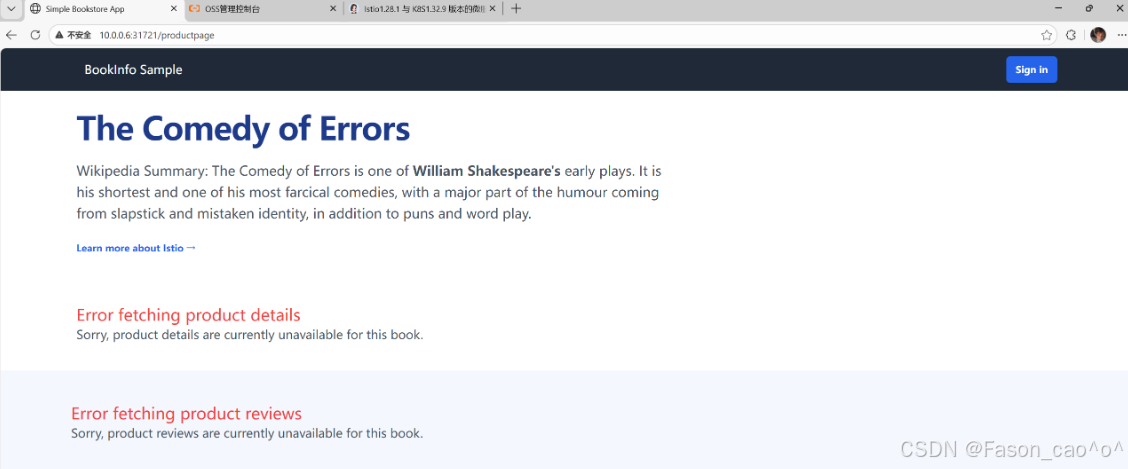

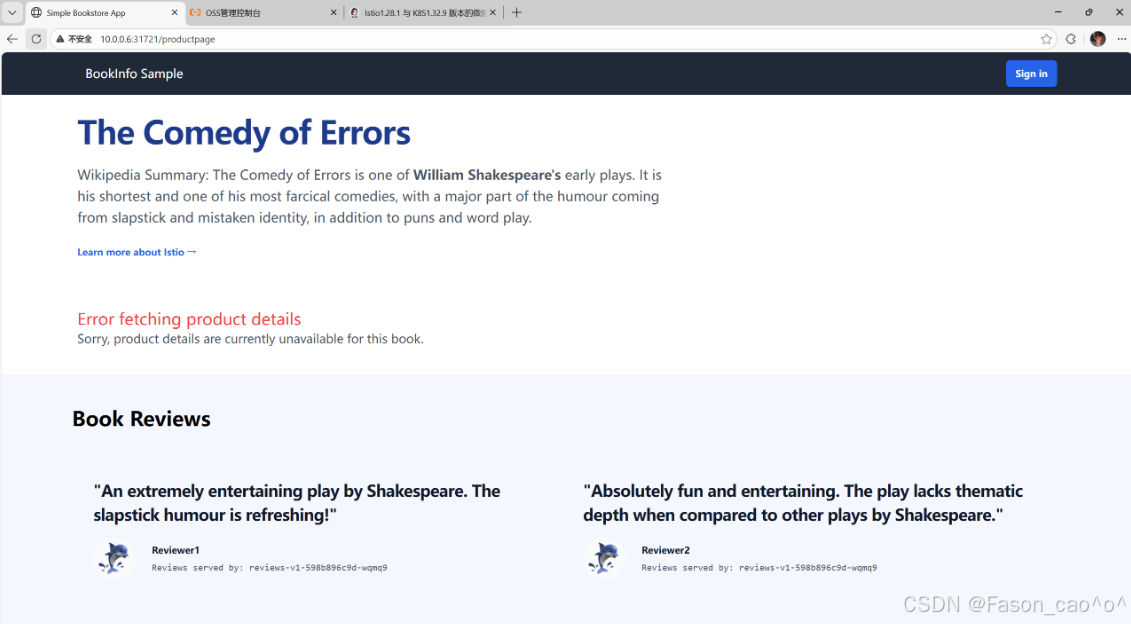

reviews-v3-564544b4d6-cbcf2 2/2 Running 2 (6h16m ago) 26h 10.200.36.96 k8s-node1 <none> <none>做完这个VirtualService规则创建之后我们再访问Simple Bookstore Aphttp://10.0.0.6:31721/productpageSimple Bookstore Ap页面就一直为v1版本了。

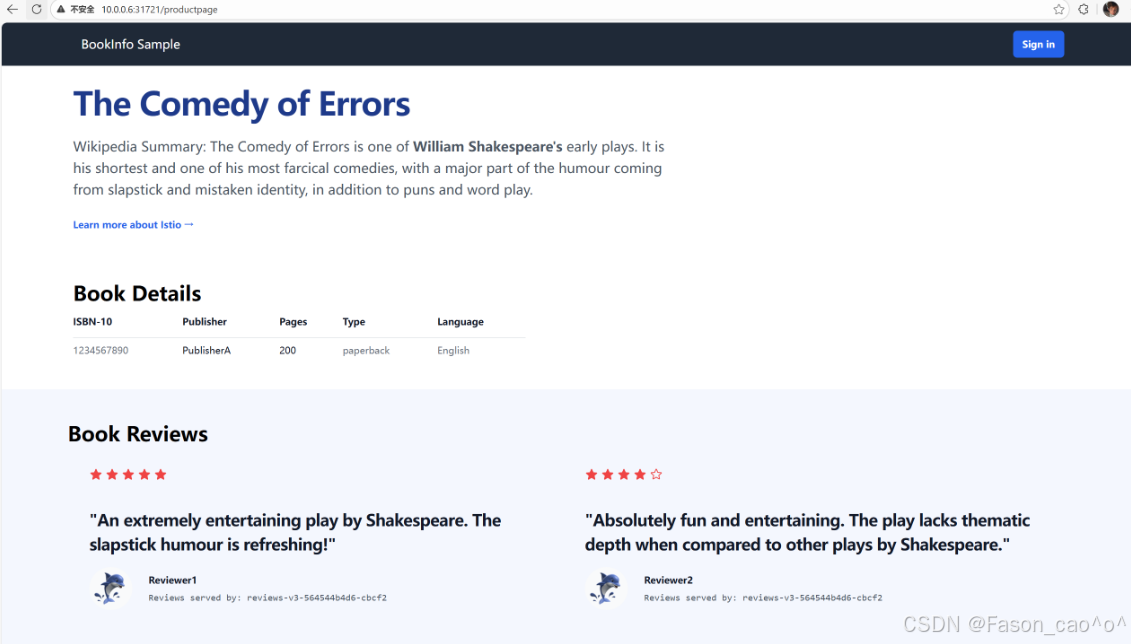

Traffic management: weighted routing

bash

apiVersion: networking.istio.io/v1alpha3

kind: DestinationRule

metadata:

name: reviews

spec:

host: reviews

subsets:

- name: v1

labels:

version: v1

- name: v2

labels:

version: v2

- name: v3

labels:

version: v3

---

apiVersion: networking.istio.io/v1alpha3

kind: VirtualService

metadata:

name: reviews

spec:

hosts:

- reviews

http:

- route:

# Send 50% of the traffic to v1

- destination:

host: reviews

subset: v1

weight: 50

# Send 50% of the traffic to v3

- destination:

host: reviews

subset: v3

weight: 50

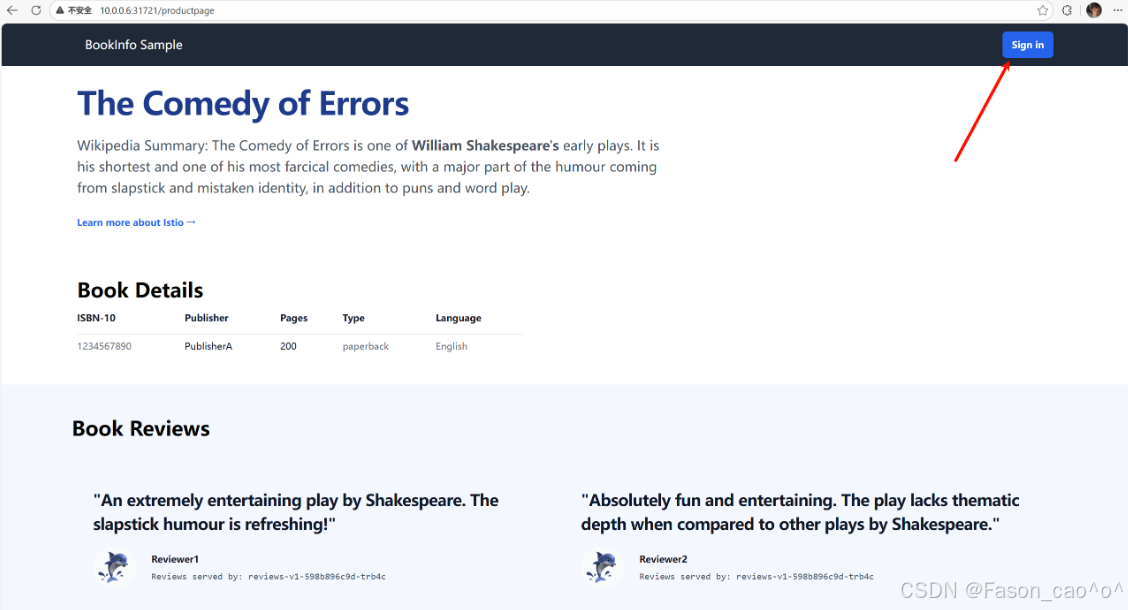

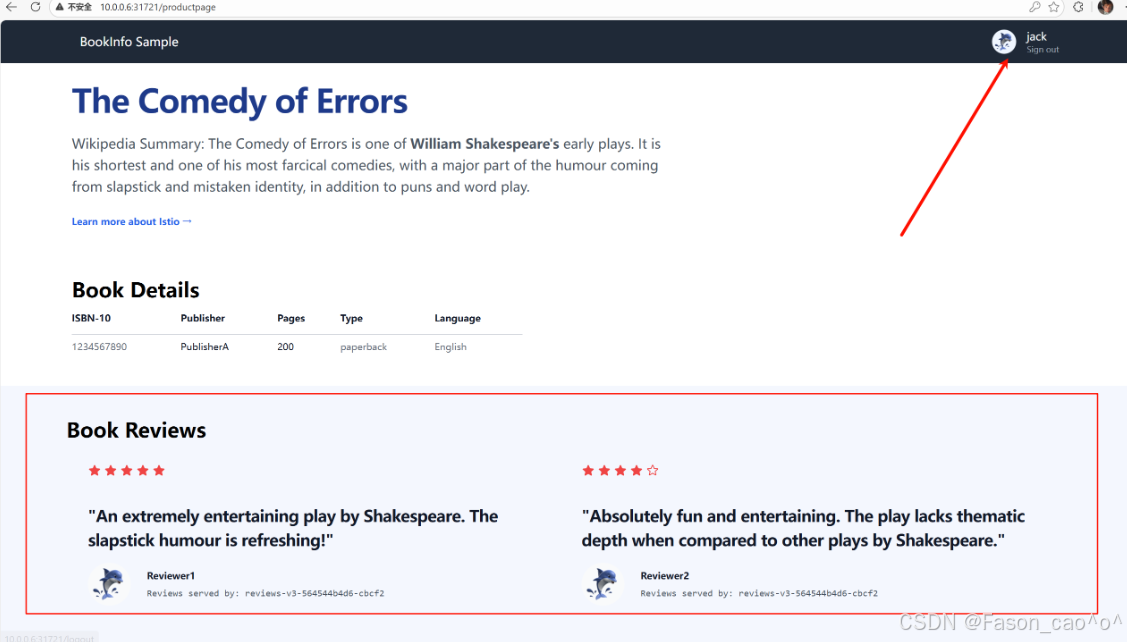

Traffic management: header-based routing

```bash
[root@k8s-master ~/istio]# cat dr+vs_user_agent.yaml
apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
  - name: v3
    labels:
      version: v3
---
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: reviews
spec:
  hosts:
  - reviews
  http:
  # Rule 1: requests with the header end-user: Jason go to v2
  - match:
    - headers:
        end-user:
          exact: Jason # exact is a case-sensitive string match
        # To accept either capitalisation of the value, use a regex instead:
        # end-user:
        #   regex: ^[Jj]ason$ # matches Jason or jason
    route:
    - destination:
        host: reviews
        subset: v2
  # Rule 2: requests with the header end-user: jack go to v3
  - match:
    - headers:
        end-user:
          exact: jack
    route:
    - destination:
        host: reviews
        subset: v3
  # Rule 3: default route (no match condition); all other requests go to v1
  - route:
    - destination:
        host: reviews
        subset: v1
```

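At this point it is clear that traffic management in Istio relies on three CRDs: Gateway, VirtualService and DestinationRule. Each of them ends up as a piece of Envoy configuration and manages one part of it. Understanding that mapping makes Istio much easier to work with.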
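Gateway is the one CRD of the three that has not been shown so far. The sketch below is modeled on the `bookinfo-gateway` that appears in the `kubectl get vs` output earlier; the port number and the single URI match are assumptions and may differ from the manifest actually deployed.

```yaml
# Sketch only: port and match rule are illustrative assumptions.
# Gateway: configures the listener on the ingress gateway's Envoy.
apiVersion: networking.istio.io/v1
kind: Gateway
metadata:
  name: bookinfo-gateway
spec:
  selector:
    istio: ingressgateway      # bind to the istio-ingressgateway Pods
  servers:
  - port:
      number: 8080
      name: http
      protocol: HTTP
    hosts:
    - "*"
---
# VirtualService bound to the Gateway: configures the routes behind that listener.
apiVersion: networking.istio.io/v1
kind: VirtualService
metadata:
  name: bookinfo
spec:
  hosts:
  - "*"
  gateways:
  - bookinfo-gateway
  http:
  - match:
    - uri:
        exact: /productpage
    route:
    - destination:
        host: productpage
        port:
          number: 9080
```

In Envoy terms: Gateway maps to a listener, VirtualService to routes, and DestinationRule to clusters.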

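2.3 Fault Injection

Fault injection lets Istio simulate network or service failures on service-to-service traffic without changing application code, in order to verify how well the microservices tolerate failure. The goal is to find weak points before a real production incident does. In short:

- Non-intrusive: no code changes; the Istio sidecar intercepts the traffic and applies the fault.

- Simulated faults: delays (slow network or slow service) and aborts (service unavailable, returning a chosen status such as 5xx). These are the two fault types a VirtualService offers; rate limiting and connection limits are configured through other mechanisms.

- Precise targeting: faults can be scoped by service, route, percentage, source and other conditions, so normal traffic is unaffected.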
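The percentage-based targeting mentioned in the list above can be sketched as follows. The percentages and the 503 status are assumptions for illustration; the subset `v1` assumes a matching DestinationRule for ratings exists.

```yaml
# Sketch only: percentages and status code are illustrative assumptions.
apiVersion: networking.istio.io/v1
kind: VirtualService
metadata:
  name: ratings
spec:
  hosts:
  - ratings
  http:
  - fault:
      # Delay 10% of requests by 2 seconds.
      delay:
        percentage:
          value: 10.0
        fixedDelay: 2s
      # Fail 5% of requests with HTTP 503 without reaching the service.
      abort:
        percentage:
          value: 5.0
        httpStatus: 503
    route:
    - destination:
        host: ratings
        subset: v1
```

Adding a `match` block, as the examples that follow do, narrows the fault to specific users or headers.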

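Injecting an HTTP delay fault

Adding a delay to requests tests whether a service can cope with slow responses and whether timeouts and circuit breaking work, so that one slow dependency does not cascade into an outage.

bash

# First create a VirtualService routing rule: requests whose end-user header is jason are routed to reviews v2; everyone else gets v1.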

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-reviews-test-v2.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: reviews

spec:

hosts:

- reviews

http:

- match:

- headers:

end-user:

exact: jason

route:

- destination:

host: reviews

subset: v2

- route:

- destination:

host: reviews

subset: v1

Next, inject a fixed 2-second delay into requests to the ratings service:

bash

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-ratings-test-delay.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: ratings

spec:

hosts:

- ratings

http:

- match:

- headers:

end-user:

exact: jason

fault:

delay:

percentage:

value: 100.0

fixedDelay: 2s

route:

- destination:

host: ratings

subset: v1

- route:

- destination:

host: ratings

subset: v1

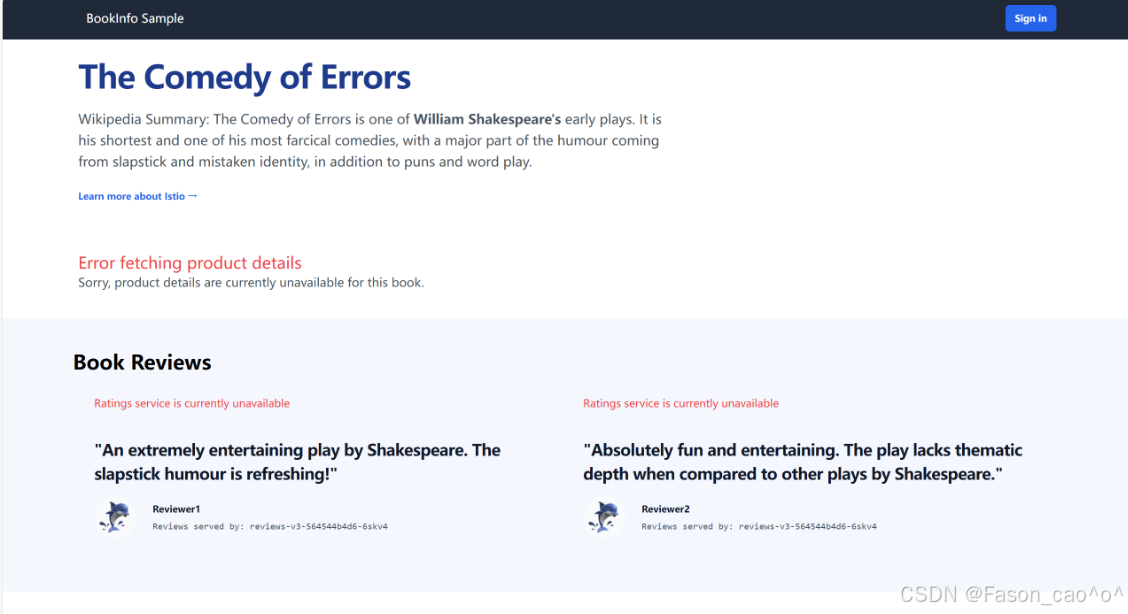

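The delay has taken effect, again without touching application code. Requests that do not carry the end-user: jason header do not match the fault rule and are not delayed.

bash

# Check Envoy's route configuration again; as noted earlier, a VirtualService ultimately changes Envoy's route rules.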

[root@k8s-master ~/istio]# istioctl proxy-config route reviews-v2-556d6457d-69lx4 --name 9080 -oyaml

routes:

- decorator:

operation: ratings.default.svc.cluster.local:9080/*

match:

caseSensitive: true

headers:

- name: end-user

stringMatch:

exact: jason

prefix: /

metadata:

filterMetadata:

istio:

config: /apis/networking.istio.io/v1/namespaces/default/virtual-service/ratings

route:

cluster: outbound|9080|v1|ratings.default.svc.cluster.local

maxGrpcTimeout: 0s

retryPolicy:

hostSelectionRetryMaxAttempts: "5"

numRetries: 2

retryHostPredicate:

- name: envoy.retry_host_predicates.previous_hosts

typedConfig:

'@type': type.googleapis.com/envoy.extensions.retry.host.previous_hosts.v3.PreviousHostsPredicate

retryOn: connect-failure,refused-stream,unavailable,cancelled,retriable-status-codes

timeout: 0s

typedPerFilterConfig:

envoy.filters.http.fault:

'@type': type.googleapis.com/envoy.extensions.filters.http.fault.v3.HTTPFault

delay:

fixedDelay: 2s

percentage:

denominator: MILLION

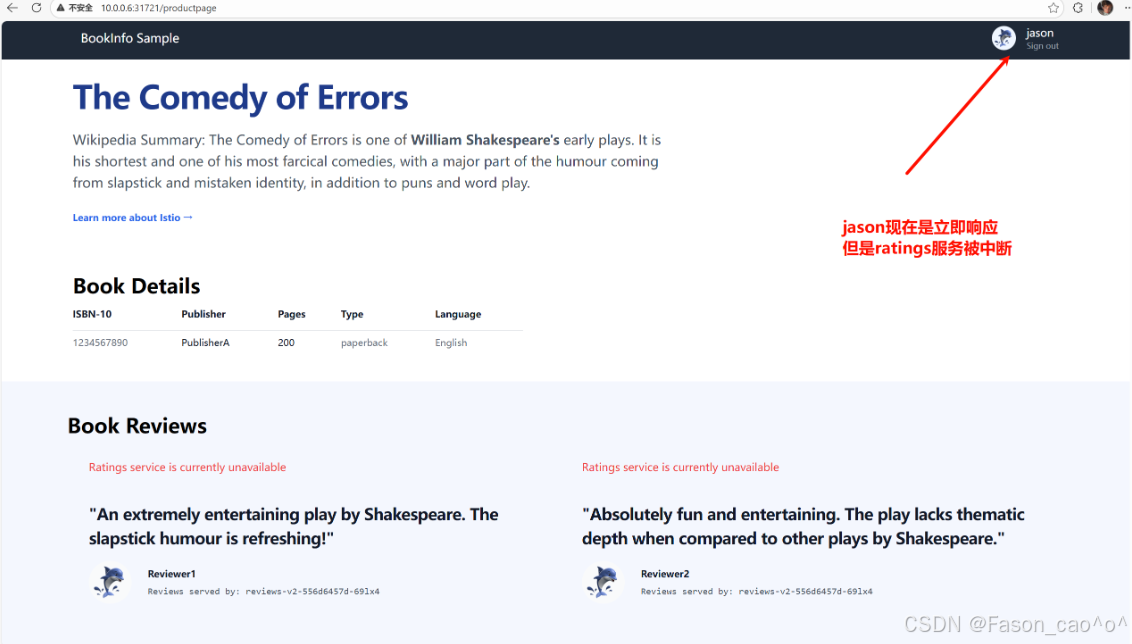

numerator: 1000000注入HTTP abort故障

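An abort simulates a service error directly by returning a chosen HTTP status code. It tests whether error handling and fallback work, so that one failed service does not take others down with it.

- Scenario: the service is simply unavailable (for example 503, 404 or 500), which is more extreme than a delay.

- What to verify: whether the caller catches the error, whether it retries or degrades gracefully, whether circuit breaking kicks in, and that nothing hangs.

- Non-intrusive: Istio intercepts the request and returns the error itself; application code is untouched.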

bash

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-ratings-test-abort.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: ratings

spec:

hosts:

- ratings

http:

- match:

- headers:

end-user:

exact: jason

fault:

abort:

percentage:

value: 100.0

httpStatus: 500

route:

- destination:

host: ratings

subset: v1

- route:

- destination:

host: ratings

subset: v1

[root@k8s-master ~/istio]# kubectl apply -f samples/bookinfo/networking/virtual-service-ratings-test-abort.yaml

virtualservice.networking.istio.io/ratings configured

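All other requests, whose end-user header is not jason, are served normally.

✅ Delay vs abort in one line each

- Delay: slow response → tests timeouts and slow-call circuit breaking

- Abort: immediate error → tests error handling, fallback and fault isolation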

2.4 HTTP Traffic Splitting

Weighted traffic splitting

bash

# First create routes that send all traffic to v1

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-all-v1.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: productpage

spec:

hosts:

- productpage

http:

- route:

- destination:

host: productpage

subset: v1

---

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: reviews

spec:

hosts:

- reviews

http:

- route:

- destination:

host: reviews

subset: v1

---

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: ratings

spec:

hosts:

- ratings

http:

- route:

- destination:

host: ratings

subset: v1

---

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: details

spec:

hosts:

- details

http:

- route:

- destination:

host: details

subset: v1

---

bash

# Then create the subsets used in the following tests

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/destination-rule-all.yaml

apiVersion: networking.istio.io/v1

kind: DestinationRule

metadata:

name: productpage

spec:

host: productpage

subsets:

- name: v1

labels:

version: v1

---

apiVersion: networking.istio.io/v1

kind: DestinationRule

metadata:

name: reviews

spec:

host: reviews

subsets:

- name: v1

labels:

version: v1

- name: v2

labels:

version: v2

- name: v3

labels:

version: v3

---

apiVersion: networking.istio.io/v1

kind: DestinationRule

metadata:

name: ratings

spec:

host: ratings

subsets:

- name: v1

labels:

version: v1

- name: v2

labels:

version: v2

- name: v2-mysql

labels:

version: v2-mysql

- name: v2-mysql-vm

labels:

version: v2-mysql-vm

---

apiVersion: networking.istio.io/v1

kind: DestinationRule

metadata:

name: details

spec:

host: details

subsets:

- name: v1

labels:

version: v1

- name: v2

labels:

version: v2

---

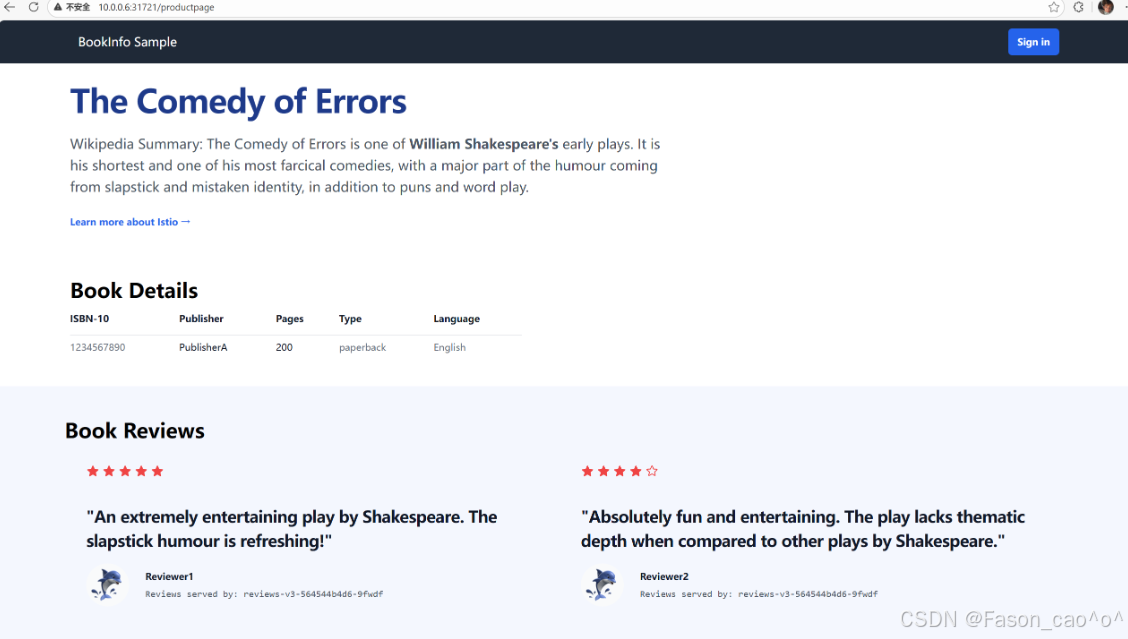

bash

# 再做流量拆分

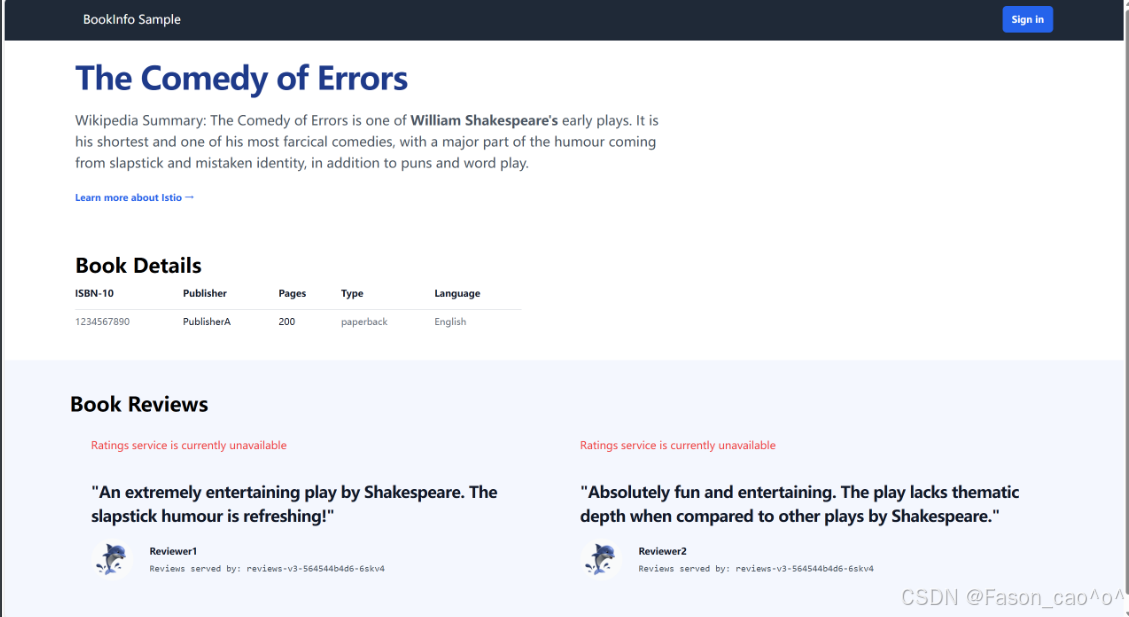

# 将50%的流量路由到v1版本,再将50%的流量路由到v3版本,这样就可以实现我们的灰度发布,金丝雀发布,以及A/B测试。

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-reviews-50-v3.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: reviews

spec:

hosts:

- reviews

http:

- route:

- destination:

host: reviews

subset: v1

weight: 50

- destination:

host: reviews

subset: v3

weight: 50

bash

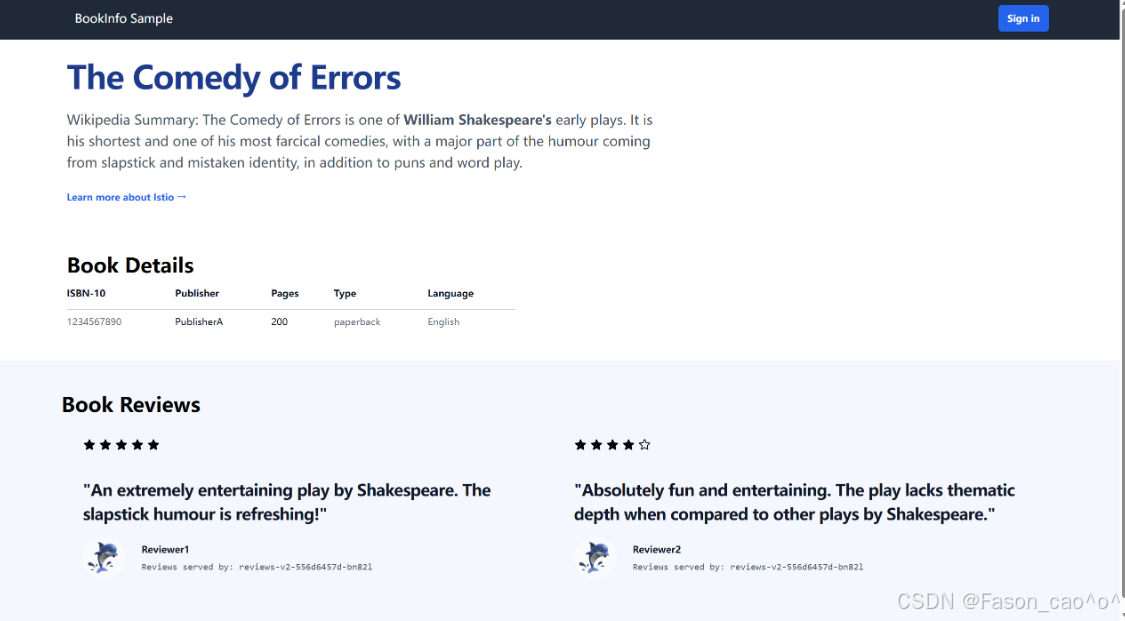

# 测试差不多之后就可以完全将流量路由到v3的新版本即可

[root@k8s-master ~/istio]# cat samples/bookinfo/networking/virtual-service-reviews-v3.yaml

apiVersion: networking.istio.io/v1

kind: VirtualService

metadata:

name: reviews

spec:

hosts:

- reviews

http:

- route:

- destination:

host: reviews

subset: v3

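Traffic mirroring

Also called shadow traffic: a copy of real production traffic is forwarded to a test or new-version service. Users' normal requests are unaffected, while the new service is validated for availability and compatibility against real traffic.

Put simply, requests served by v1 are also copied to the new v2, so the new version is exercised with real traffic.

bash

# Create a v1 Pod to simulate the stable version.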

[root@k8s-master ~/istio]# cat httpbin-v1.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: http-v1

spec:

selector:

matchLabels:

app: httpbin

version: v1

template:

metadata:

labels:

app: httpbin

version: v1

spec:

containers:

- image: docker.io/kennethreitz/httpbin:latest

imagePullPolicy: IfNotPresent

name: httpbinv1

command: ["gunicorn","--access-logfile","-","-b","0.0.0.0","httpbin:app"]

ports:

- containerPort: 8000 # gunicorn binds to its default port 8000 because -b gives no port (see the logs below)

bash

# Create a v2 Pod to simulate the new version

[root@k8s-master ~/istio]# cat httpbin-v2.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: http-v2

spec:

selector:

matchLabels:

app: httpbin

version: v2

template:

metadata:

labels:

app: httpbin

version: v2

spec:

containers:

- image: docker.io/kennethreitz/httpbin:latest

imagePullPolicy: IfNotPresent

name: httpbinv2

command: ["gunicorn","--access-logfile","-","-b","0.0.0.0","httpbin:app"]

ports:

- containerPort: 8000 # gunicorn binds to its default port 8000 because -b gives no port (see the logs below)

bash

# Use a DestinationRule to split the old and new workloads into two subsets by Pod labels, then route all traffic to v1 first.

[root@k8s-master ~/istio]# cat httpbin-dr-vs-v1.yaml

apiVersion: networking.istio.io/v1alpha3

kind: VirtualService

metadata:

name: httpbin

spec:

hosts:

- httpbin

http:

- route:

- destination:

host: httpbin

subset: v1

weight: 100

---

apiVersion: networking.istio.io/v1alpha3

kind: DestinationRule

metadata:

name: httpbin

spec:

host: httpbin

subsets:

- name: v1

labels:

version: v1

- name: v2

labels:

version: v2

bash

# Create a curl client Pod for testing

[root@k8s-master ~/istio]# cat curl.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: sleep

spec:

selector:

matchLabels:

app: sleep

template:

metadata:

labels:

app: sleep

spec:

containers:

- name: sleep

image: docker.io/curlimages/curl:8.9.1

imagePullPolicy: IfNotPresent

command: ["/bin/sleep", "3650d"]


bash

# Create the Service that fronts the httpbin Pods.

[root@k8s-master ~/istio]# cat http-svc.yaml

apiVersion: v1

kind: Service

metadata:

name: httpbin

labels:

app: httpbin

spec:

ports:

- name: http

port: 8000

targetPort: 8000

selector:

app: httpbin

bash

[root@k8s-master ~/istio]# kubectl get po -l 'app in (httpbin,sleep)'

NAME READY STATUS RESTARTS AGE

http-v1-8ff584bb6-g4ft9 2/2 Running 0 28m

http-v2-56bddbb874-dbjbp 2/2 Running 0 27m

sleep-7f8d77f79b-4ddsv 2/2 Running 0 22m

[root@k8s-master ~/istio]# kubectl get dr,vs

NAME HOST AGE

...

destinationrule.networking.istio.io/httpbin httpbin 14m

NAME GATEWAYS HOSTS AGE

...

virtualservice.networking.istio.io/httpbin ["httpbin"] 14m

[root@k8s-master ~/istio]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

...

httpbin ClusterIP 10.98.234.250 <none> 8000/TCP 24m

[root@k8s-master ~/istio]#

bash

# Test

[root@k8s-master ~/istio]# kubectl exec sleep-7f8d77f79b-4ddsv -c sleep -- curl -sS http://httpbin:8000/headers

{

"headers": {

"Accept": "*/*",

"Host": "httpbin:8000",

"User-Agent": "curl/8.9.1",

"X-Envoy-Attempt-Count": "1",

"X-Forwarded-Client-Cert": "By=spiffe://cluster.local/ns/default/sa/default;Hash=3f6be10c16848e6447c5a6685411e35923ad9f3f548b8ba3e1ac95a99ab2d417;Subject=\"\";URI=spiffe://cluster.local/ns/default/sa/default"

}

}

[root@k8s-master ~/istio]# kubectl exec sleep-7f8d77f79b-4ddsv -c sleep -- curl -sS http://httpbin:8000/headers

{

"headers": {

"Accept": "*/*",

"Host": "httpbin:8000",

"User-Agent": "curl/8.9.1",

"X-Envoy-Attempt-Count": "1",

"X-Forwarded-Client-Cert": "By=spiffe://cluster.local/ns/default/sa/default;Hash=3f6be10c16848e6447c5a6685411e35923ad9f3f548b8ba3e1ac95a99ab2d417;Subject=\"\";URI=spiffe://cluster.local/ns/default/sa/default"

}

}

[root@k8s-master ~/istio]# kubectl exec sleep-7f8d77f79b-4ddsv -c sleep -- curl -sS http://httpbin:8000/headers

{

"headers": {

"Accept": "*/*",

"Host": "httpbin:8000",

"User-Agent": "curl/8.9.1",

"X-Envoy-Attempt-Count": "1",

"X-Forwarded-Client-Cert": "By=spiffe://cluster.local/ns/default/sa/default;Hash=3f6be10c16848e6447c5a6685411e35923ad9f3f548b8ba3e1ac95a99ab2d417;Subject=\"\";URI=spiffe://cluster.local/ns/default/sa/default"

}

}

# The v1 Pod's log shows the requests arriving

[root@k8s-master ~/istio]# kubectl logs http-v1-8ff584bb6-g4ft9

[2025-12-24 03:11:30 +0000] [1] [INFO] Starting gunicorn 19.9.0

[2025-12-24 03:11:30 +0000] [1] [INFO] Listening at: http://0.0.0.0:8000 (1)

[2025-12-24 03:11:30 +0000] [1] [INFO] Using worker: sync

[2025-12-24 03:11:30 +0000] [9] [INFO] Booting worker with pid: 9

127.0.0.6 - - [24/Dec/2025:03:20:06 +0000] "GET / HTTP/1.1" 200 9593 "-" "curl/8.14.1"

127.0.0.6 - - [24/Dec/2025:03:25:35 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:32 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:33 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:34 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

# The v2 Pod's log shows none of them

[root@k8s-master ~/istio]# kubectl logs http-v2-56bddbb874-dbjbp

[2025-12-24 03:12:24 +0000] [1] [INFO] Starting gunicorn 19.9.0

[2025-12-24 03:12:24 +0000] [1] [INFO] Listening at: http://0.0.0.0:8000 (1)

[2025-12-24 03:12:24 +0000] [1] [INFO] Using worker: sync

[2025-12-24 03:12:24 +0000] [10] [INFO] Booting worker with pid: 10

```bash
# Enable traffic mirroring: requests served by v1 are also copied to the new v2, here at 100%.
# Lower mirrorPercentage to mirror only a fraction of the traffic.
[root@k8s-master ~/istio]# cat v1-v2mirror.yaml
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: httpbin
spec:
  hosts:
  - httpbin
  http:
  - route:
    - destination:
        host: httpbin
        subset: v1
      weight: 100
    mirror:
      host: httpbin
      subset: v2
    mirrorPercentage:
      value: 100.0
```

bash

[root@k8s-master ~/istio]# kubectl exec sleep-7f8d77f79b-4ddsv -c sleep -- curl -sS http://httpbin:8000/headers

{

"headers": {

"Accept": "*/*",

"Host": "httpbin:8000",

"User-Agent": "curl/8.9.1",

"X-Envoy-Attempt-Count": "1",

"X-Forwarded-Client-Cert": "By=spiffe://cluster.local/ns/default/sa/default;Hash=3f6be10c16848e6447c5a6685411e35923ad9f3f548b8ba3e1ac95a99ab2d417;Subject=\"\";URI=spiffe://cluster.local/ns/default/sa/default"

}

}

[root@k8s-master ~/istio]# kubectl exec sleep-7f8d77f79b-4ddsv -c sleep -- curl -sS http://httpbin:8000/headers

{

"headers": {

"Accept": "*/*",

"Host": "httpbin:8000",

"User-Agent": "curl/8.9.1",

"X-Envoy-Attempt-Count": "1",

"X-Forwarded-Client-Cert": "By=spiffe://cluster.local/ns/default/sa/default;Hash=3f6be10c16848e6447c5a6685411e35923ad9f3f548b8ba3e1ac95a99ab2d417;Subject=\"\";URI=spiffe://cluster.local/ns/default/sa/default"

}

}

# The requests now reach the new version as well.

[root@k8s-master ~/istio]# kubectl logs http-v1-8ff584bb6-g4ft9

[2025-12-24 03:11:30 +0000] [1] [INFO] Starting gunicorn 19.9.0

[2025-12-24 03:11:30 +0000] [1] [INFO] Listening at: http://0.0.0.0:8000 (1)

[2025-12-24 03:11:30 +0000] [1] [INFO] Using worker: sync

[2025-12-24 03:11:30 +0000] [9] [INFO] Booting worker with pid: 9

127.0.0.6 - - [24/Dec/2025:03:20:06 +0000] "GET / HTTP/1.1" 200 9593 "-" "curl/8.14.1"

127.0.0.6 - - [24/Dec/2025:03:25:35 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:32 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:33 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:40:34 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:43:19 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:43:20 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

[root@k8s-master ~/istio]# kubectl logs http-v2-56bddbb874-dbjbp

[2025-12-24 03:12:24 +0000] [1] [INFO] Starting gunicorn 19.9.0

[2025-12-24 03:12:24 +0000] [1] [INFO] Listening at: http://0.0.0.0:8000 (1)

[2025-12-24 03:12:24 +0000] [1] [INFO] Using worker: sync

[2025-12-24 03:12:24 +0000] [10] [INFO] Booting worker with pid: 10

127.0.0.6 - - [24/Dec/2025:03:43:19 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

127.0.0.6 - - [24/Dec/2025:03:43:20 +0000] "GET /headers HTTP/1.1" 200 355 "-" "curl/8.9.1"

bash

# requestMirrorPolicies in the Envoy proxy's route configuration

[root@k8s-master ~/istio]# istioctl proxy-config route http-v2-56bddbb874-dbjbp --name 8000 -oyaml

- ignorePortInHostMatching: true

maxDirectResponseBodySizeBytes: 1048576

name: "8000"

...

requestMirrorPolicies:

- cluster: outbound|8000|v2|httpbin.default.svc.cluster.local

disableShadowHostSuffixAppend: true

runtimeFraction:

defaultValue:

denominator: MILLION

numerator: 1000000

traceSampled: false

retryPolicy:

hostSelectionRetryMaxAttempts: "5"

numRetries: 2

retryHostPredicate:

- name: envoy.retry_host_predicates.previous_hosts

typedConfig:

'@type': type.googleapis.com/envoy.extensions.retry.host.previous_hosts.v3.PreviousHostsPredicate

retryOn: connect-failure,refused-stream,unavailable,cancelled,retriable-status-codes

timeout: 0s

- domains:

- '*'

includeRequestAttemptCount: true

name: allow_any

routes:

- match:

prefix: /

name: allow_any

route:

cluster: PassthroughCluster

maxGrpcTimeout: 0s

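timeout: 0s

2.5 Circuit Breaking

Istio circuit breaking is a non-intrusive fault-isolation mechanism implemented by the sidecar proxy, comparable to an electrical breaker tripping: when failures of a destination reach a threshold, the proxy temporarily stops sending the caller's requests to it. This keeps the failure from spreading and protects overall availability, with no changes to application code.

✅ In Istio, circuit breaking is configured only in a DestinationRule, and it is enforced by the caller's sidecar, not by the destination itself. For example, if service B calls service A, the rule goes in A's DestinationRule, but it is B's sidecar that decides whether the breaker trips and rejects the request.

Whoever sends the request is the caller; whoever receives it is the destination.

Core purpose

- Prevent cascading failure: a failing (slow or erroring) destination does not drag down its caller, and the failure does not travel up the call chain. For C→B→A, if A fails, circuit breaking keeps B alive, and therefore C as well.

- Protect resources: the caller does not exhaust threads, CPU or other resources by waiting on or retrying a failing service, so it can keep serving its own requests.

Core characteristics

- Fail fast: once the breaker trips, the caller's requests are not queued or kept waiting; they fail immediately and waste no caller resources.

- Automatic recovery: ejection is not permanent. An ejected instance returns to the load-balancing pool after its ejection time (baseEjectionTime multiplied by the number of times it has been ejected) has elapsed. If it fails again it is ejected again, for longer. No manual intervention is needed.

- Circuit breaking protects the caller, not the destination: when the destination fails, keeping the caller available comes first.

- Circuit breaking applies only to traffic that passes through an Istio sidecar; Pods without an injected sidecar are not covered.

- When the breaker trips, the caller receives an error generated by the sidecar (such as 503), not an error from the destination.

In summary: the sidecar watches the destination on the caller's behalf, temporarily cuts it off when failures reach the threshold, fails fast instead of blocking, lets the instance back in after the ejection time, and keeps the system as a whole from collapsing, all without the application noticing.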

```bash
[root@k8s-master ~/istio]# cat rd_dr.yaml
apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: httpbin
spec:
  # Target service: the rules below apply to all traffic sent to httpbin
  host: httpbin
  # Traffic policy: connection pool limits and outlier detection
  trafficPolicy:
    # Connection pool: limits connections and requests from each caller to httpbin
    connectionPool:
      tcp:
        # At most 1 concurrent connection to httpbin (applies to HTTP/1.1 as well as TCP).
        # Requests beyond that wait in the pending queue.
        maxConnections: 1
      http:
        # At most 1 request may wait for a connection; further requests fail fast with 503.
        http1MaxPendingRequests: 1
        # Each connection carries at most 1 request and is then closed,
        # which disables connection reuse (keep-alive).
        maxRequestsPerConnection: 1
    # Outlier detection: ejects unhealthy httpbin instances from the load-balancing pool
    outlierDetection:
      # Eject an instance after 1 consecutive 5xx error (500/503/504 and so on).
      consecutive5xxErrors: 1
      # Run the ejection analysis every second. Very sensitive; the default is 10s.
      interval: 1s
      # Base ejection time: 3 minutes for the first ejection, multiplied by the
      # number of ejections afterwards (6 minutes the second time, and so on).
      # The instance receives no traffic until this time has elapsed, so recovery is slow.
      baseEjectionTime: 3m
      # Up to 100% of the instances may be ejected. In the extreme case every
      # httpbin instance is ejected (production commonly uses 30-50%).
      maxEjectionPercent: 100
```

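This DestinationRule gives httpbin deliberately strict circuit-breaking rules. They suit testing and demonstrating the effect; production would not use thresholds this aggressive. Connections and requests are limited to the minimum, a single 5xx error ejects an instance, the base ejection time is 3 minutes, and up to 100% of instances can be ejected.

connectionPool parameters (TCP and HTTP levels, configure as needed)

| Level | Parameter | Purpose | Example | Notes |
|---|---|---|---|---|
| TCP | tcp.maxConnections | Maximum number of concurrent connections to the destination | tcp.maxConnections: 50 | The basic safety limit. It applies to HTTP/1.1 connections as well as plain TCP (databases, Redis and so on); there is no separate http.maxConnections field |
| TCP | tcp.connectTimeout | Timeout for establishing a TCP connection | tcp.connectTimeout: 2s | Keeps callers from waiting on dead connections; the default is 10s, and latency-sensitive setups often shorten it to 1-3s |
| TCP | tcp.tcpKeepalive | TCP keepalive settings | | |
| HTTP | http.http1MaxPendingRequests | Maximum number of requests queued while waiting for a connection; when the queue is full, requests fail fast | http.http1MaxPendingRequests: 20 | Prevents requests from blocking once connections are exhausted, so caller threads are not tied up |
| HTTP | http.http2MaxRequests | Maximum number of active requests to the destination | http.http2MaxRequests: 200 | Despite the name it applies to both HTTP/1.1 and HTTP/2; use it to cap total in-flight requests under high concurrency |
| HTTP | http.maxRequestsPerConnection | Maximum number of requests per connection; the connection is closed and re-created afterwards | http.maxRequestsPerConnection: 100 | Avoids one long-lived connection skewing load distribution; a value of 1 disables keep-alive |
| HTTP | http.idleTimeout | Idle timeout after which an unused connection is closed | http.idleTimeout: 30s | Frees resources held by idle connections; the default is 1 hour |
| HTTP | http.maxRetries | Maximum number of retries that may be outstanding to all hosts of the destination at one time | http.maxRetries: 2 | A retry budget for the whole cluster, not a per-request count; per-request retries and retry conditions are set with retries.attempts and retries.retryOn in the VirtualService |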

outlierDetection parameters (what actually triggers ejection)

| Parameter | Purpose | Example | Notes |
|---|---|---|---|
| consecutive5xxErrors | Number of consecutive 5xx errors after which an instance is ejected | consecutive5xxErrors: 5 | The main trigger; connection timeouts, connection failures and similar errors count as 5xx for HTTP. It replaces the deprecated consecutiveErrors |
| consecutiveGatewayErrors | Number of consecutive gateway errors (502/503/504) after which an instance is ejected | consecutiveGatewayErrors: 3 | Narrower than consecutive5xxErrors; useful when only gateway-type errors should eject |
| interval | Time between ejection analysis sweeps | interval: 10s | Controls sensitivity: too short risks false positives, too long misses failures; 10-30s is common |
| baseEjectionTime | Base ejection time; the actual time grows with the number of ejections | baseEjectionTime: 15s | Escalating penalty (15s the first time, 30s the second, and so on), which avoids an instance flapping in and out of the pool. The instance returns automatically when the time has elapsed |
| maxEjectionPercent | Maximum share of the destination's instances that may be ejected | maxEjectionPercent: 50 | Key safety limit. Prevents a network blip from ejecting every instance, so part of the pool always stays available; 30%-50% is common |

sleepWindow, successRateMinimumHosts, successRateRequestVolume, successRateThreshold and enforcingConsecutiveErrors are not fields of the DestinationRule outlierDetection API. The recovery delay is governed by baseEjectionTime; success-rate based ejection and enforcement percentages exist only in Envoy's native outlier detection configuration.

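- connectionPool is the foundation: it limits connections and requests first to prevent overload, and outlierDetection then detects failing instances and ejects them. The two complement each other.

- Order of execution: connectionPool limits are hit first (fast failure), then accumulated errors trigger outlierDetection and the instance is ejected.

- A sensible baseline: tcp.maxConnections + http.http1MaxPendingRequests + consecutive5xxErrors + baseEjectionTime + maxEjectionPercent covers most production scenarios.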
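For contrast with the deliberately strict test rule earlier, a more moderate baseline built from the parameters listed above might look like the sketch below. All numeric values are assumptions for illustration and need tuning against real traffic.

```yaml
# Sketch only: every threshold below is an illustrative assumption, not a recommendation.
apiVersion: networking.istio.io/v1
kind: DestinationRule
metadata:
  name: httpbin
spec:
  host: httpbin
  trafficPolicy:
    connectionPool:
      tcp:
        maxConnections: 100          # cap on concurrent connections
        connectTimeout: 2s           # give up quickly on unreachable instances
      http:
        http1MaxPendingRequests: 50  # queue length before fast failure
        http2MaxRequests: 200        # cap on in-flight requests
        maxRequestsPerConnection: 100
    outlierDetection:
      consecutive5xxErrors: 5        # eject after 5 consecutive 5xx errors
      interval: 10s                  # analysis sweep interval
      baseEjectionTime: 30s          # first ejection lasts 30s, then grows
      maxEjectionPercent: 50         # never eject more than half the pool
```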

```bash
[root@k8s-master ~/istio]# cat samples/httpbin/httpbin.yaml
# Copyright Istio Authors
#
#   Licensed under the Apache License, Version 2.0 (the "License");
#   you may not use this file except in compliance with the License.
#   You may obtain a copy of the License at
#
#       http://www.apache.org/licenses/LICENSE-2.0
#
#   Unless required by applicable law or agreed to in writing, software
#   distributed under the License is distributed on an "AS IS" BASIS,
#   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
#   See the License for the specific language governing permissions and
#   limitations under the License.

##################################################################################################
# httpbin service
##################################################################################################
apiVersion: v1
kind: ServiceAccount
metadata:
  name: httpbin
---
apiVersion: v1
kind: Service
metadata:
  name: httpbin
  labels:
    app: httpbin
    service: httpbin
spec:
  ports:
  - name: http
    port: 8000
    targetPort: 8080
  selector:
    app: httpbin
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: httpbin
spec:
  replicas: 1
  selector:
    matchLabels:
      app: httpbin
      version: v1
  template:
    metadata:
      labels:
        app: httpbin
        version: v1
    spec:
      serviceAccountName: httpbin
      containers:
      - image: docker.io/mccutchen/go-httpbin:v2.15.0
        imagePullPolicy: IfNotPresent
        name: httpbin
        ports:
        - containerPort: 8080
```

Deploy a client for testing

```bash
[root@k8s-master ~/istio]# cat samples/httpbin/sample-client/fortio-deploy.yaml
apiVersion: v1
kind: Service
metadata:
  name: fortio
  labels:
    app: fortio
    service: fortio
spec:
  ports:
  - port: 8080
    name: http
  selector:
    app: fortio
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: fortio-deploy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: fortio
  template:
    metadata:
      annotations:
        # This annotation causes Envoy to serve cluster.outbound statistics via 15000/stats
        # in addition to the stats normally served by Istio. The Circuit Breaking example task
        # gives an example of inspecting Envoy stats via proxy config.
        proxy.istio.io/config: |-
          proxyStatsMatcher:
            inclusionPrefixes:
            - "cluster.outbound"
            - "cluster_manager"
            - "listener_manager"
            - "server"
            - "cluster.xds-grpc"
      labels:
        app: fortio
    spec:
      containers:
      - name: fortio
        image: docker.io/fortio/fortio:1.71.2
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 8080
          name: http-fortio
        - containerPort: 8079
          name: grpc-ping
```

bash

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c fortio -- /usr/bin/fortio load -c 1 -qps 0 -n 20 -loglevel Warning http://httpbin:8000/get

{"ts":1766560907.994363,"level":"info","r":1,"file":"logger.go","line":298,"msg":"Log level is now 3 Warning (was 2 Info)"}

Fortio 1.71.2 running at 0 queries per second, 4->4 procs, for 20 calls: http://httpbin:8000/get

Starting at max qps with 1 thread(s) [gomax 4] for exactly 20 calls (20 per thread + 0)

Ended after 64.585669ms : 20 calls. qps=309.67

Aggregated Function Time : count 20 avg 0.0032283574 +/- 0.003243 min 0.001711826 max 0.017105884 sum 0.064567147

# range, mid point, percentile, count

>= 0.00171183 <= 0.002 , 0.00185591 , 20.00, 4

> 0.002 <= 0.003 , 0.0025 , 80.00, 12

> 0.003 <= 0.004 , 0.0035 , 90.00, 2

> 0.004 <= 0.005 , 0.0045 , 95.00, 1

> 0.016 <= 0.0171059 , 0.0165529 , 100.00, 1

# target 50% 0.0025

# target 75% 0.00291667

# target 90% 0.004

# target 99% 0.0168847

# target 99.9% 0.0170838

Error cases : no data

# Socket and IP used for each connection:

[0] 1 socket used, resolved to 10.107.121.13:8000, connection timing : count 1 avg 0.000531627 +/- 0 min 0.000531627 max 0.000531627 sum 0.000531627

Connection time (s) : count 1 avg 0.000531627 +/- 0 min 0.000531627 max 0.000531627 sum 0.000531627

Sockets used: 1 (for perfect keepalive, would be 1)

Uniform: false, Jitter: false, Catchup allowed: true

IP addresses distribution:

10.107.121.13:8000: 1

Code 200 : 20 (100.0 %) # <-- note: with a single concurrent connection the circuit breaker is not triggered

Response Header Sizes : count 20 avg 245 +/- 0 min 245 max 245 sum 4900

Response Body/Total Sizes : count 20 avg 866 +/- 0 min 866 max 866 sum 17320

All done 20 calls (plus 0 warmup) 3.228 ms avg, 309.7 qps

Testing the connection-pool limit

bash

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c fortio -- /usr/bin/fortio load -c 2 -qps 0 -n 20 -loglevel Warning http://httpbin:8000/get

{"ts":1766561002.874524,"level":"info","r":1,"file":"logger.go","line":298,"msg":"Log level is now 3 Warning (was 2 Info)"}

Fortio 1.71.2 running at 0 queries per second, 4->4 procs, for 20 calls: http://httpbin:8000/get

Starting at max qps with 2 thread(s) [gomax 4] for exactly 20 calls (10 per thread + 0)

{"ts":1766561002.881833,"level":"warn","r":24,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561002.884690,"level":"warn","r":25,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561002.889991,"level":"warn","r":24,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561002.892426,"level":"warn","r":25,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561002.894924,"level":"warn","r":24,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561002.896708,"level":"warn","r":25,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561002.899812,"level":"warn","r":24,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561002.901889,"level":"warn","r":25,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

Ended after 31.907574ms : 20 calls. qps=626.81

Aggregated Function Time : count 20 avg 0.0029840042 +/- 0.002155 min 0.000266309 max 0.007260489 sum 0.059680084

# range, mid point, percentile, count

>= 0.000266309 <= 0.001 , 0.000633154 , 35.00, 7

> 0.002 <= 0.003 , 0.0025 , 45.00, 2

> 0.003 <= 0.004 , 0.0035 , 60.00, 3

> 0.004 <= 0.005 , 0.0045 , 85.00, 5

> 0.005 <= 0.006 , 0.0055 , 90.00, 1

> 0.006 <= 0.007 , 0.0065 , 95.00, 1

> 0.007 <= 0.00726049 , 0.00713024 , 100.00, 1

# target 50% 0.00333333

# target 75% 0.0046

# target 90% 0.006

# target 99% 0.00720839

# target 99.9% 0.00725528

Error cases : count 8 avg 0.0007740775 +/- 0.0008211 min 0.000266309 max 0.002928705 sum 0.00619262

# range, mid point, percentile, count

>= 0.000266309 <= 0.001 , 0.000633154 , 87.50, 7

> 0.002 <= 0.00292871 , 0.00246435 , 100.00, 1

# target 50% 0.000633155

# target 75% 0.000877718

# target 90% 0.00218574

# target 99% 0.00285441

# target 99.9% 0.00292128

# Socket and IP used for each connection:

[0] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0002575416 +/- 0.0001675 min 0.00012531 max 0.000580808 sum 0.001287708

[1] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0003968148 +/- 0.0003817 min 6.1415e-05 max 0.000864916 sum 0.001984074

Connection time (s) : count 10 avg 0.0003271782 +/- 0.0003029 min 6.1415e-05 max 0.000864916 sum 0.003271782

Sockets used: 10 (for perfect keepalive, would be 2)

Uniform: false, Jitter: false, Catchup allowed: true

IP addresses distribution:

10.107.121.13:8000: 10

Code 200 : 12 (60.0 %) # <-- two concurrent connections are enough to trip the connection-pool limit

Code 503 : 8 (40.0 %)

Response Header Sizes : count 20 avg 147 +/- 120 min 0 max 245 sum 2940

Response Body/Total Sizes : count 20 avg 616 +/- 306.2 min 241 max 866 sum 12320

All done 20 calls (plus 0 warmup) 2.984 ms avg, 626.8 qps
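When comparing several runs, it can help to reduce fortio's summary to one number. The sketch below reads the `Code NNN : count` lines from standard input and prints the share of non-2xx responses; the function name `non_2xx_percent` is hypothetical and is not part of fortio.

```shell
#!/usr/bin/env bash
# Reads fortio output on stdin and prints the percentage of non-2xx responses.
# Only lines of the form "Code <status> : <count> ..." are considered.
non_2xx_percent() {
  awk '
    $1 == "Code" && $3 == ":" {
      total += $4                      # every response counts toward the total
      if ($2 !~ /^2/) failed += $4     # anything that is not 2xx counts as failed
    }
    END {
      if (total > 0) printf "%.1f\n", 100 * failed / total
    }
  '
}

# The two summary lines from the run above
printf '%s\n' 'Code 200 : 12 (60.0 %)' 'Code 503 : 8 (40.0 %)' | non_2xx_percent
```

In practice the whole `kubectl exec ... fortio load ...` output can be piped into the function, since all other lines are ignored.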

bash

# Inspect the circuit-breaker statistics

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c istio-proxy -- pilot-agent request GET stats | grep httpbin | grep pending

cluster.outbound|8000||httpbin.default.svc.cluster.local;.circuit_breakers.default.remaining_pending: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.circuit_breakers.default.rq_pending_open: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.circuit_breakers.high.rq_pending_open: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_active: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_failure_eject: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_overflow: 54

cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_total: 106

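This command shows the pending-request (queueing) circuit-breaker statistics that the Istio sidecar keeps for the httpbin service (port 8000). They show directly whether the connectionPool limits configured earlier are taking effect.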
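For repeated checks, the two counters that matter most can be pulled out of the stats dump with a small filter. This is a sketch under the assumption that each stats line has the `name: value` shape shown above; the function name `pending_summary` is hypothetical.

```shell
#!/usr/bin/env bash
# Reads Envoy stats lines ("<name>: <value>") on stdin and prints the
# pending-overflow and pending-total counters on one line.
pending_summary() {
  awk -F': ' '
    /\.upstream_rq_pending_overflow: / { overflow = $2 }
    /\.upstream_rq_pending_total: /    { total = $2 }
    END { printf "pending_overflow=%d pending_total=%d\n", overflow, total }
  '
}

# Example with two lines copied from the output above
printf '%s\n' \
  'cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_overflow: 54' \
  'cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_total: 106' \
  | pending_summary
```

A rising pending_overflow between two runs indicates that the connection-pool limit rejected requests during that interval.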

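The output shows that the http1MaxPendingRequests: 1 connection-pool rule for httpbin is in effect: 54 requests in total were rejected because the pending queue was full (upstream_rq_pending_overflow: 54), no request is queued right now (upstream_rq_pending_active: 0), one pending slot is free (remaining_pending: 1), and 106 requests in total have entered the pending queue. The pending breaker is not open at this moment (rq_pending_open: 0).

Testing outlier detection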

bash

# Send 10 requests over 2 concurrent connections to httpbin's /status/500 endpoint to provoke 5xx responses

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c fortio -- /usr/bin/fortio load -c 2 -qps 0 -n 10 -loglevel Warning http://httpbin:8000/status/500

{"ts":1766561354.695825,"level":"info","r":1,"file":"logger.go","line":298,"msg":"Log level is now 3 Warning (was 2 Info)"}

Fortio 1.71.2 running at 0 queries per second, 4->4 procs, for 10 calls: http://httpbin:8000/status/500

Starting at max qps with 2 thread(s) [gomax 4] for exactly 10 calls (5 per thread + 0)

{"ts":1766561354.703040,"level":"warn","r":8,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561354.711022,"level":"warn","r":7,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":500,"status":"HTTP/1.1 500","thread":0,"run":0}

{"ts":1766561354.713069,"level":"warn","r":8,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":500,"status":"HTTP/1.1 500","thread":1,"run":0}

{"ts":1766561354.715562,"level":"warn","r":7,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561354.715824,"level":"warn","r":8,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561354.717383,"level":"warn","r":7,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561354.717470,"level":"warn","r":8,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561354.719267,"level":"warn","r":8,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561354.721007,"level":"warn","r":7,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561354.722475,"level":"warn","r":7,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

Ended after 21.056314ms : 10 calls. qps=474.92

Aggregated Function Time : count 10 avg 0.0037848261 +/- 0.003153 min 0.000741265 max 0.01003455 sum 0.037848261

# range, mid point, percentile, count

>= 0.000741265 <= 0.001 , 0.000870633 , 10.00, 1

> 0.001 <= 0.002 , 0.0015 , 50.00, 4

> 0.002 <= 0.003 , 0.0025 , 60.00, 1

> 0.003 <= 0.004 , 0.0035 , 70.00, 1

> 0.004 <= 0.005 , 0.0045 , 80.00, 1

> 0.009 <= 0.01 , 0.0095 , 90.00, 1

> 0.01 <= 0.0100345 , 0.0100173 , 100.00, 1

# target 50% 0.002

# target 75% 0.0045

# target 90% 0.01

# target 99% 0.0100311

# target 99.9% 0.0100342

Error cases : count 10 avg 0.0037848261 +/- 0.003153 min 0.000741265 max 0.01003455 sum 0.037848261

# range, mid point, percentile, count

>= 0.000741265 <= 0.001 , 0.000870633 , 10.00, 1

> 0.001 <= 0.002 , 0.0015 , 50.00, 4

> 0.002 <= 0.003 , 0.0025 , 60.00, 1

> 0.003 <= 0.004 , 0.0035 , 70.00, 1

> 0.004 <= 0.005 , 0.0045 , 80.00, 1

> 0.009 <= 0.01 , 0.0095 , 90.00, 1

> 0.01 <= 0.0100345 , 0.0100173 , 100.00, 1

# target 50% 0.002

# target 75% 0.0045

# target 90% 0.01

# target 99% 0.0100311

# target 99.9% 0.0100342

# Socket and IP used for each connection:

[0] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0001565162 +/- 5.371e-05 min 7.977e-05 max 0.000241436 sum 0.000782581

[1] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0001619598 +/- 8.053e-05 min 6.5422e-05 max 0.000294337 sum 0.000809799

Connection time (s) : count 10 avg 0.000159238 +/- 6.85e-05 min 6.5422e-05 max 0.000294337 sum 0.00159238

Sockets used: 10 (for perfect keepalive, would be 2)

Uniform: false, Jitter: false, Catchup allowed: true

IP addresses distribution:

10.107.121.13:8000: 10

Code 500 : 2 (20.0 %)

Code 503 : 8 (80.0 %)

Response Header Sizes : count 10 avg 0 +/- 0 min 0 max 0 sum 0

Response Body/Total Sizes : count 10 avg 182.4 +/- 45.08 min 153 max 256 sum 1824

All done 10 calls (plus 0 warmup) 3.785 ms avg, 474.9 qps

bash

# Check the result

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c istio-proxy -- pilot-agent request GET stats | grep httpbin | egrep "5xx|eject"

cluster.outbound|8000||httpbin.default.svc.cluster.local;.external.upstream_rq_5xx: 64

cluster.outbound|8000||httpbin.default.svc.cluster.local;.http1.requests_rejected_with_underscores_in_headers: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_active: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_consecutive_5xx: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_consecutive_5xx: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_consecutive_gateway_failure: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_consecutive_local_origin_failure: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_failure_percentage: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_local_origin_failure_percentage: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_local_origin_success_rate: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_success_rate: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_consecutive_5xx: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_consecutive_gateway_failure: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_consecutive_local_origin_failure: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_failure_percentage: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_local_origin_failure_percentage: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_local_origin_success_rate: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_success_rate: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_enforced_total: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_overflow: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_success_rate: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_total: 1

cluster.outbound|8000||httpbin.default.svc.cluster.local;.update_rejected: 0

cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_5xx: 64

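cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_pending_failure_eject: 0

At this point Istio has ejected the httpbin instance as a whole, not an individual request. Outlier-detection state is kept by each calling sidecar, so the ejection recorded here applies to requests leaving the fortio Pod's sidecar; other clients eject the instance only after their own sidecars observe the errors. During the 3-minute (first) ejection window, every request this client sends to httpbin:8000 is stopped by its own sidecar and fails fast, because the only httpbin instance is out of the load-balancing pool. Forwarding resumes once the ejection window ends.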
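Most of the outlier_detection counters above are zero, which makes the interesting ones easy to miss. A minimal sketch of a filter that keeps only the non-zero ejection counters follows; it assumes the `name: value` line shape shown in the output, and `nonzero_ejections` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Reads Envoy stats lines on stdin and prints only the outlier_detection
# ejection counters whose value is greater than zero, as "<counter>=<value>".
nonzero_ejections() {
  awk -F': ' '
    /\.outlier_detection\.ejections_/ && $2 + 0 > 0 {
      name = $1
      sub(/^.*\./, "", name)   # keep only the part after the last dot
      print name "=" $2
    }
  '
}

# Example with three lines copied from the output above
printf '%s\n' \
  'cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_active: 1' \
  'cluster.outbound|8000||httpbin.default.svc.cluster.local;.outlier_detection.ejections_detected_success_rate: 0' \
  'cluster.outbound|8000||httpbin.default.svc.cluster.local;.upstream_rq_5xx: 64' \
  | nonzero_ejections
```

The same `pilot-agent request GET stats` output used above can be piped into the function unchanged.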

bash

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c fortio -- /usr/bin/fortio load -c 2 -qps 0 -n 10 -loglevel Warning http://httpbin:8000/status/500

{"ts":1766561544.581381,"level":"info","r":1,"file":"logger.go","line":298,"msg":"Log level is now 3 Warning (was 2 Info)"}

Fortio 1.71.2 running at 0 queries per second, 4->4 procs, for 10 calls: http://httpbin:8000/status/500

Starting at max qps with 2 thread(s) [gomax 4] for exactly 10 calls (5 per thread + 0)

{"ts":1766561544.588499,"level":"warn","r":38,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561544.591050,"level":"warn","r":37,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":500,"status":"HTTP/1.1 500","thread":0,"run":0}

{"ts":1766561544.592075,"level":"warn","r":38,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561544.593288,"level":"warn","r":38,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561544.593408,"level":"warn","r":37,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561544.594983,"level":"warn","r":37,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561544.595347,"level":"warn","r":38,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561544.597415,"level":"warn","r":38,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561544.597536,"level":"warn","r":37,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561544.599721,"level":"warn","r":37,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

Ended after 15.559261ms : 10 calls. qps=642.7

Aggregated Function Time : count 10 avg 0.0024307444 +/- 0.001034 min 0.001126912 max 0.004995371 sum 0.024307444

# range, mid point, percentile, count

>= 0.00112691 <= 0.002 , 0.00156346 , 30.00, 3

> 0.002 <= 0.003 , 0.0025 , 80.00, 5

> 0.003 <= 0.004 , 0.0035 , 90.00, 1

> 0.004 <= 0.00499537 , 0.00449769 , 100.00, 1

# target 50% 0.0024

# target 75% 0.0029

# target 90% 0.004

# target 99% 0.00489583

# target 99.9% 0.00498542

Error cases : count 10 avg 0.0024307444 +/- 0.001034 min 0.001126912 max 0.004995371 sum 0.024307444

# range, mid point, percentile, count

>= 0.00112691 <= 0.002 , 0.00156346 , 30.00, 3

> 0.002 <= 0.003 , 0.0025 , 80.00, 5

> 0.003 <= 0.004 , 0.0035 , 90.00, 1

> 0.004 <= 0.00499537 , 0.00449769 , 100.00, 1

# target 50% 0.0024

# target 75% 0.0029

# target 90% 0.004

# target 99% 0.00489583

# target 99.9% 0.00498542

# Socket and IP used for each connection:

[0] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0001646394 +/- 0.0001064 min 8.9768e-05 max 0.000375805 sum 0.000823197

[1] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0002437228 +/- 0.0001446 min 0.000101941 max 0.000465151 sum 0.001218614

Connection time (s) : count 10 avg 0.0002041811 +/- 0.000133 min 8.9768e-05 max 0.000465151 sum 0.002041811

Sockets used: 10 (for perfect keepalive, would be 2)

Uniform: false, Jitter: false, Catchup allowed: true

IP addresses distribution:

10.107.121.13:8000: 10

Code 500 : 1 (10.0 %)

Code 503 : 9 (90.0 %)

Response Header Sizes : count 10 avg 0 +/- 0 min 0 max 0 sum 0

Response Body/Total Sizes : count 10 avg 172.1 +/- 38.35 min 153 max 256 sum 1721

All done 10 calls (plus 0 warmup) 2.431 ms avg, 642.7 qps

[root@k8s-master ~/istio]# kubectl exec fortio-deploy-55f886bd9f-pj8fg -c fortio -- /usr/bin/fortio load -c 2 -qps 0 -n 10 -loglevel Warning http://httpbin:8000/status/500

{"ts":1766561546.207284,"level":"info","r":1,"file":"logger.go","line":298,"msg":"Log level is now 3 Warning (was 2 Info)"}

Fortio 1.71.2 running at 0 queries per second, 4->4 procs, for 10 calls: http://httpbin:8000/status/500

Starting at max qps with 2 thread(s) [gomax 4] for exactly 10 calls (5 per thread + 0)

{"ts":1766561546.211749,"level":"warn","r":15,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561546.212834,"level":"warn","r":14,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561546.215536,"level":"warn","r":14,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561546.215919,"level":"warn","r":15,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561546.217935,"level":"warn","r":14,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561546.218161,"level":"warn","r":15,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561546.219894,"level":"warn","r":14,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

{"ts":1766561546.220025,"level":"warn","r":15,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561546.221592,"level":"warn","r":15,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":1,"run":0}

{"ts":1766561546.221812,"level":"warn","r":14,"file":"http_client.go","line":1155,"msg":"Non ok http code","code":503,"status":"HTTP/1.1 503","thread":0,"run":0}

Ended after 11.028864ms : 10 calls. qps=906.71

Aggregated Function Time : count 10 avg 0.0021678682 +/- 0.0006841 min 0.001253226 max 0.003853526 sum 0.021678682

# range, mid point, percentile, count

>= 0.00125323 <= 0.002 , 0.00162661 , 60.00, 6

> 0.002 <= 0.003 , 0.0025 , 90.00, 3

> 0.003 <= 0.00385353 , 0.00342676 , 100.00, 1

# target 50% 0.00185065

# target 75% 0.0025

# target 90% 0.003

# target 99% 0.00376817

# target 99.9% 0.00384499

Error cases : count 10 avg 0.0021678682 +/- 0.0006841 min 0.001253226 max 0.003853526 sum 0.021678682

# range, mid point, percentile, count

>= 0.00125323 <= 0.002 , 0.00162661 , 60.00, 6

> 0.002 <= 0.003 , 0.0025 , 90.00, 3

> 0.003 <= 0.00385353 , 0.00342676 , 100.00, 1

# target 50% 0.00185065

# target 75% 0.0025

# target 90% 0.003

# target 99% 0.00376817

# target 99.9% 0.00384499

# Socket and IP used for each connection:

[0] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0003478014 +/- 0.0003191 min 6.8659e-05 max 0.000943249 sum 0.001739007

[1] 5 socket used, resolved to 10.107.121.13:8000, connection timing : count 5 avg 0.0001834538 +/- 4.33e-05 min 0.000106059 max 0.000235412 sum 0.000917269

Connection time (s) : count 10 avg 0.0002656276 +/- 0.0002421 min 6.8659e-05 max 0.000943249 sum 0.002656276

Sockets used: 10 (for perfect keepalive, would be 2)

Uniform: false, Jitter: false, Catchup allowed: true

IP addresses distribution:

10.107.121.13:8000: 10

Code 503 : 10 (100.0 %)

Response Header Sizes : count 10 avg 0 +/- 0 min 0 max 0 sum 0

Response Body/Total Sizes : count 10 avg 153 +/- 0 min 153 max 153 sum 1530

All done 10 calls (plus 0 warmup) 2.168 ms avg, 906.7 qps

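If the same request is sent again during the ejection window, every call fails fast:

- The httpbin instance is still inside its 3-minute ejection window, so the client sidecar has no healthy endpoint to forward to. The request never reaches httpbin; the sidecar itself answers with a 503, which is why the last run above shows Code 503 for all 10 calls and no 500.

- Ejection time follows an escalating penalty: it is baseEjectionTime multiplied by the number of times the instance has been ejected. Sending requests during the window does not extend it. If the instance is ejected again after returning to the pool, the second ejection lasts up to 6 minutes instead of 3, subject to Envoy's upper limit on ejection time.

- Put simply, once outlier detection trips for a target instance, the calling proxy stops sending it requests for a fixed period.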
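The escalating penalty can be worked through with a few lines of arithmetic. The sketch below is an approximation, not Envoy's implementation: it multiplies the base time by the ejection count and applies an upper bound. The 300-second default for that bound reflects Envoy's documented max_ejection_time default as best understood here; whether a given Istio version overrides it is an assumption worth verifying. The function name `ejection_seconds` is hypothetical.

```shell
#!/usr/bin/env bash
# ejection_seconds BASE COUNT [MAX]
#   BASE  - baseEjectionTime in seconds
#   COUNT - how many times the instance has been ejected so far (1 = first)
#   MAX   - assumed upper bound in seconds (default 300, see the note above)
ejection_seconds() {
  local base="$1" count="$2" max="${3:-300}"
  # The bound is never smaller than the base time itself
  if [ "$max" -lt "$base" ]; then max="$base"; fi
  local t=$(( base * count ))
  if [ "$t" -gt "$max" ]; then t="$max"; fi
  echo "$t"
}

ejection_seconds 15 1        # first ejection with baseEjectionTime: 15s
ejection_seconds 15 2        # second ejection
ejection_seconds 180 2 3600  # baseEjectionTime: 3m, second ejection, bound raised to 1h
```

With a 3-minute base the uncapped second ejection is 360 seconds (6 minutes); under the assumed 300-second default bound the function returns 300 instead, which is why the bound is exposed as a parameter.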

2.6 TCP traffic shifting

Create the test workloads

```bash
[root@k8s-master ~/istio]# cat samples/tcp-echo/tcp-echo-services.yaml
# Copyright 2018 Istio Authors
#
#   Licensed under the Apache License, Version 2.0 (the "License");
#   you may not use this file except in compliance with the License.
#   You may obtain a copy of the License at
#
#       http://www.apache.org/licenses/LICENSE-2.0
#
#   Unless required by applicable law or agreed to in writing, software
#   distributed under the License is distributed on an "AS IS" BASIS,
#   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
#   See the License for the specific language governing permissions and
#   limitations under the License.

apiVersion: v1
kind: Service
metadata:
  name: tcp-echo
  labels:
    app: tcp-echo
    service: tcp-echo
spec:
  ports:
  - name: tcp
    port: 9000
  - name: tcp-other
    port: 9001
  # Port 9002 is omitted intentionally for testing the pass through filter chain.
  selector:
    app: tcp-echo
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tcp-echo-v1
  labels:
    app: tcp-echo
    version: v1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: tcp-echo
      version: v1
  template:
    metadata:
      labels:
        app: tcp-echo
        version: v1
    spec:
      containers:
      - name: tcp-echo
        image: docker.io/istio/tcp-echo-server:1.3
        imagePullPolicy: IfNotPresent
        args: [ "9000,9001,9002", "one" ]
        ports:
        - containerPort: 9000
        - containerPort: 9001
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tcp-echo-v2
  labels:
    app: tcp-echo
    version: v2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: tcp-echo
      version: v2
  template:
    metadata:
      labels:
        app: tcp-echo
        version: v2
    spec:
      containers:
      - name: tcp-echo
        image: docker.io/istio/tcp-echo-server:1.3
        imagePullPolicy: IfNotPresent
        args: [ "9000,9001,9002", "two" ]
        ports:
        - containerPort: 9000
        - containerPort: 9001
```

bash

[root@k8s-master ~/istio]# kubectl get po -l app=tcp-echo

NAME READY STATUS RESTARTS AGE

tcp-echo-v1-7bbd599b4d-8mvkn 2/2 Running 0 38m

tcp-echo-v2-5454955849-scnd5 2/2 Running 0 38m

Create the Gateway, DestinationRule, and VirtualService resources

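istio-ingressgateway is a cluster-wide gateway deployment, while a Gateway resource is a set of listener rules for it. By default, as long as the Gateway's selector matches the labels on the ingressgateway Pods, istiod pushes the rules to the ingressgateway regardless of which namespace the Gateway resource lives in, and the ingressgateway then applies them to external traffic.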

```bash
[root@k8s-master ~/istio]# cat samples/tcp-echo/tcp-echo-all-v1.yaml
# Copyright 2018 Istio Authors
#
#   Licensed under the Apache License, Version 2.0 (the "License");
#   you may not use this file except in compliance with the License.
#   You may obtain a copy of the License at
#
#       http://www.apache.org/licenses/LICENSE-2.0
#
#   Unless required by applicable law or agreed to in writing, software
#   distributed under the License is distributed on an "AS IS" BASIS,
#   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
#   See the License for the specific language governing permissions and
#   limitations under the License.

apiVersion: networking.istio.io/v1
kind: Gateway
metadata:
  name: tcp-echo-gateway
spec:
  selector:
    istio: ingressgateway
  servers:
  - port:
      # 31400 is the TCP port already exposed by the istio-ingressgateway in this
      # installation; confirm it by inspecting the ingress gateway Pod or Service.
      number: 31400
      name: tcp
      protocol: TCP
    hosts:
    - "*"
---
apiVersion: networking.istio.io/v1
kind: DestinationRule
metadata:
  name: tcp-echo-destination
spec:
  host: tcp-echo
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
---
apiVersion: networking.istio.io/v1
kind: VirtualService
metadata:
  name: tcp-echo
spec:
  hosts:
  - "*"
  gateways:
  - tcp-echo-gateway
  tcp:
  - match:
    - port: 31400
    route:
    - destination:
        host: tcp-echo
        port:
          number: 9000
        subset: v1
```

bash

# This example creates a TCP Gateway entry point that accepts requests for all hosts, defines the subsets with a DestinationRule, and defines the routing rule with a VirtualService.

[root@k8s-master ~/istio]# kubectl get gateway tcp-echo-gateway

NAME AGE

tcp-echo-gateway 28m

[root@k8s-master ~/istio]# kubectl get vs tcp-echo

NAME GATEWAYS HOSTS AGE

tcp-echo ["tcp-echo-gateway"] ["*"] 28m

Create the test client

```bash
[root@k8s-master ~/istio]# cat samples/sleep/sleep.yaml
# Copyright Istio Authors
#
#   Licensed under the Apache License, Version 2.0 (the "License");
#   you may not use this file except in compliance with the License.
#   You may obtain a copy of the License at
#
#       http://www.apache.org/licenses/LICENSE-2.0
#
#   Unless required by applicable law or agreed to in writing, software
#   distributed under the License is distributed on an "AS IS" BASIS,
#   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
#   See the License for the specific language governing permissions and
#   limitations under the License.

##################################################################################################
# Sleep service
##################################################################################################
apiVersion: v1
kind: ServiceAccount
metadata:
  name: sleep
---
apiVersion: v1
kind: Service
metadata:
  name: sleep
  labels:
    app: sleep
    service: sleep
spec:
  ports:
  - port: 80
    name: http
  selector:
    app: sleep
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sleep
spec:
  replicas: 1
  selector:
    matchLabels:
      app: sleep
  template:
    metadata:
      labels:
        app: sleep
    spec:
      terminationGracePeriodSeconds: 0
      serviceAccountName: sleep
      containers:
      - name: sleep
        image: docker.io/curlimages/curl:8.9.1
        command: ["/bin/sleep", "infinity"]
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - mountPath: /etc/sleep/tls
          name: secret-volume
      volumes:
      - name: secret-volume
        secret:
          secretName: sleep-secret
          optional: true
---
[root@k8s-master ~/istio]# kubectl get po -l app=sleep
NAME                     READY   STATUS    RESTARTS   AGE
sleep-65f688f8f5-jxrzc   2/2     Running   0          21m
```

Test access

bash

# At this point all TCP connections are routed to v1.

[root@k8s-master ~/istio]# for i in `seq 10`; do kubectl exec sleep-65f688f8f5-jxrzc -- sh -c "(date; sleep 1) | nc 10.98.106.118 31400"; done

one Wed Dec 24 08:51:58 UTC 2025

one Wed Dec 24 08:51:59 UTC 2025

one Wed Dec 24 08:52:00 UTC 2025

one Wed Dec 24 08:52:01 UTC 2025

one Wed Dec 24 08:52:02 UTC 2025

one Wed Dec 24 08:52:03 UTC 2025

one Wed Dec 24 08:52:04 UTC 2025

one Wed Dec 24 08:52:06 UTC 2025

one Wed Dec 24 08:52:07 UTC 2025

one Wed Dec 24 08:52:08 UTC 2025

Test traffic distribution

Route 80% of connections to v1 and 20% to v2.
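One way to check a split like this empirically is to tally the first word of each reply, since the tcp-echo v1 instance prefixes its replies with `one` and v2 with `two`. The sketch below does only that counting; `tally_versions` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Reads tcp-echo replies on stdin (one per line) and prints how many replies
# came from each version, keyed by the first word of the line.
tally_versions() {
  awk '{ count[$1]++ } END { for (v in count) print v, count[v] }' | sort
}

# Example with a few reply lines in the format tcp-echo returns
printf '%s\n' \
  'one Wed Dec 24 08:54:05 UTC 2025' \
  'one Wed Dec 24 08:54:06 UTC 2025' \
  'two Wed Dec 24 08:54:08 UTC 2025' \
  | tally_versions
```

The output of the `for i in ... nc ...` loop used in this section can be piped into the function. With only 10 connections the observed ratio is a rough approximation of the configured weights; a larger sample gives a closer match.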

```bash
[root@k8s-master ~/istio]# cat samples/tcp-echo/tcp-echo-20-v2.yaml
# Copyright 2018 Istio Authors
#
#   Licensed under the Apache License, Version 2.0 (the "License");
#   you may not use this file except in compliance with the License.
#   You may obtain a copy of the License at
#
#       http://www.apache.org/licenses/LICENSE-2.0
#
#   Unless required by applicable law or agreed to in writing, software
#   distributed under the License is distributed on an "AS IS" BASIS,
#   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
#   See the License for the specific language governing permissions and
#   limitations under the License.

apiVersion: networking.istio.io/v1
kind: VirtualService
metadata:
  name: tcp-echo
spec:
  hosts:
  - "*"
  gateways:
  - tcp-echo-gateway
  tcp:
  - match:
    - port: 31400
    route:
    - destination:
        host: tcp-echo
        port:
          number: 9000
        subset: v1
      weight: 80
    - destination:
        host: tcp-echo
        port:
          number: 9000
        subset: v2
      weight: 20
```

bash

[root@k8s-master ~/istio]# for i in `seq 10`; do kubectl exec sleep-65f688f8f5-jxrzc -- sh -c "(date; sleep 1) | nc 10.98.106.118 31400"; done

one Wed Dec 24 08:54:05 UTC 2025

one Wed Dec 24 08:54:06 UTC 2025

one Wed Dec 24 08:54:07 UTC 2025

two Wed Dec 24 08:54:08 UTC 2025

one Wed Dec 24 08:54:09 UTC 2025

one Wed Dec 24 08:54:10 UTC 2025

one Wed Dec 24 08:54:11 UTC 2025

two Wed Dec 24 08:54:12 UTC 2025

one Wed Dec 24 08:54:14 UTC 2025

one Wed Dec 24 08:54:15 UTC 2025

Inspect the Envoy proxy configuration

bash

[root@k8s-master ~/istio]# istioctl -n istio-system proxy-config listener istio-ingressgateway-7b787c97fc-h9ww9 --port 31400 -oyaml

- accessLog:
  - filter:
      responseFlagFilter:
        flags:
        - NR
    name: envoy.access_loggers.file
    typedConfig:
      ...
      path: /dev/stdout
  address:
    socketAddress:
      address: 0.0.0.0
      portValue: 31400
  filterChains:
  - filters:
      ...
          path: /dev/stdout
        statPrefix: tcp-echo.default
        weightedClusters:
          clusters:
          - name: outbound|9000|v1|tcp-echo.default.svc.cluster.local
            weight: 80
          - name: outbound|9000|v2|tcp-echo.default.svc.cluster.local
            weight: 20
    transportSocketConnectTimeout: 15s
  maxConnectionsToAcceptPerSocketEvent: 1
  name: 0.0.0.0_31400
  trafficDirection: OUTBOUND

2.7 Implementing Kubernetes Ingress with Istio

Create the test httpbin service

bash

[root@k8s-master ~/istio]# cat samples/httpbin/httpbin.yaml

# Copyright Istio Authors

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

##################################################################################################

# httpbin service

##################################################################################################

apiVersion: v1
kind: ServiceAccount
metadata:
  name: httpbin
---
apiVersion: v1
kind: Service
metadata:
  name: httpbin
  labels:
    app: httpbin
    service: httpbin
spec:
  ports:
  - name: http
    port: 8000
    targetPort: 8080
  selector:
    app: httpbin
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: httpbin
spec:
  replicas: 1
  selector:
    matchLabels:
      app: httpbin
      version: v1
  template:
    metadata:
      labels:
        app: httpbin
        version: v1
    spec:
      serviceAccountName: httpbin
      containers:
      - image: docker.io/mccutchen/go-httpbin:v2.15.0
        imagePullPolicy: IfNotPresent
        name: httpbin
        ports:
        - containerPort: 8080

Create the IngressClass and Ingress

bash

[root@k8s-master ~/istio]# cat istio-ingress.yaml

# 创建istio-ingressclass.yaml

apiVersion: networking.k8s.io/v1

kind: IngressClass

metadata:

name: istio # 名称要和Ingress里的ingressClassName一致

spec:

controller: istio.io/ingress-controller # 指定由Istio控制器处理

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: httpbin

spec:

ingressClassName: istio # 指定使用Istio的Ingress Controller处理该Ingress

rules:

- host: httpbin.k8s.local

http:

paths:

- path: /status

pathType: Prefix

backend:

service:

name: httpbin

port:

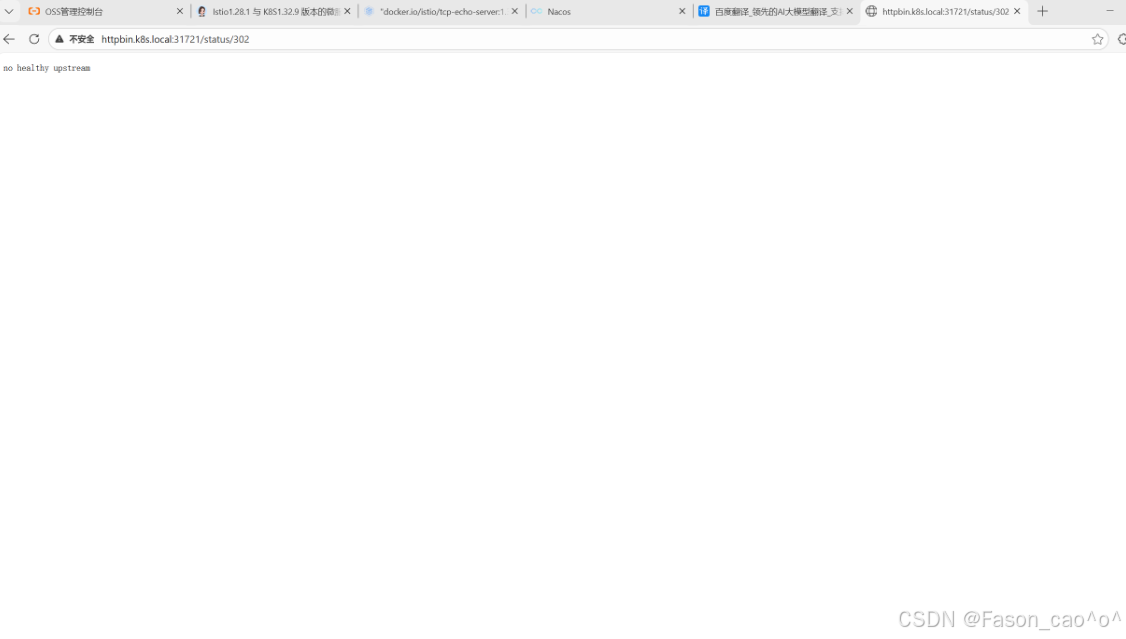

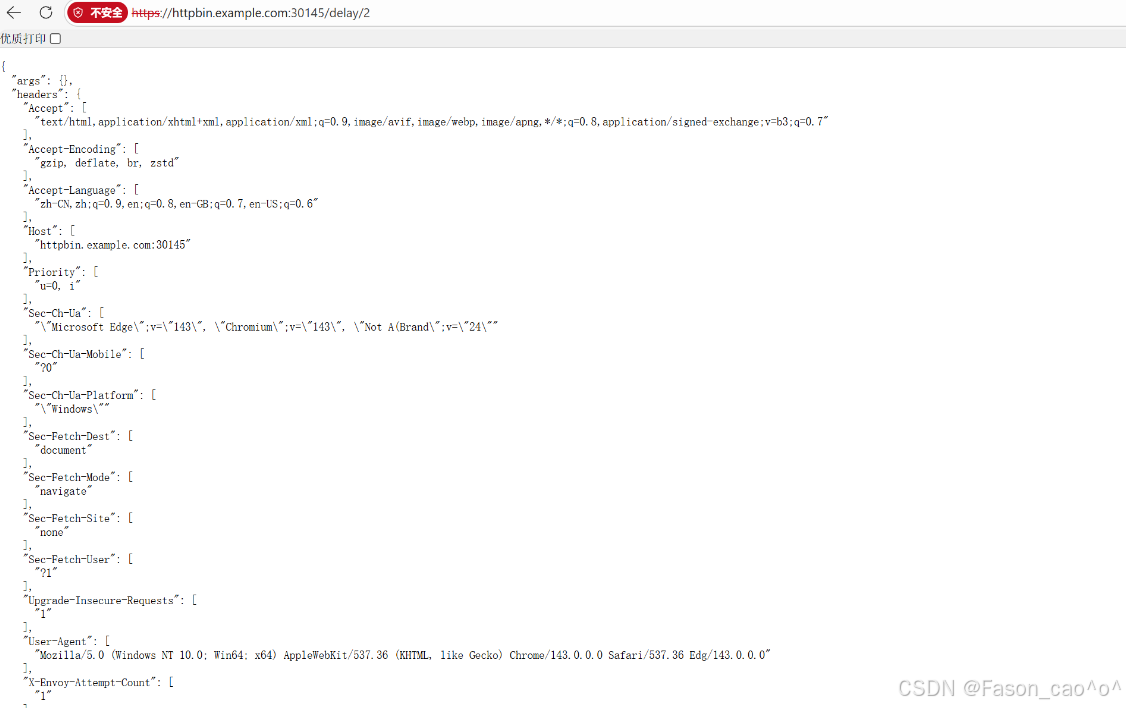

number: 8000测试访问

httpbin.k8s.local:31721/status/302

为什么要加31721端口?

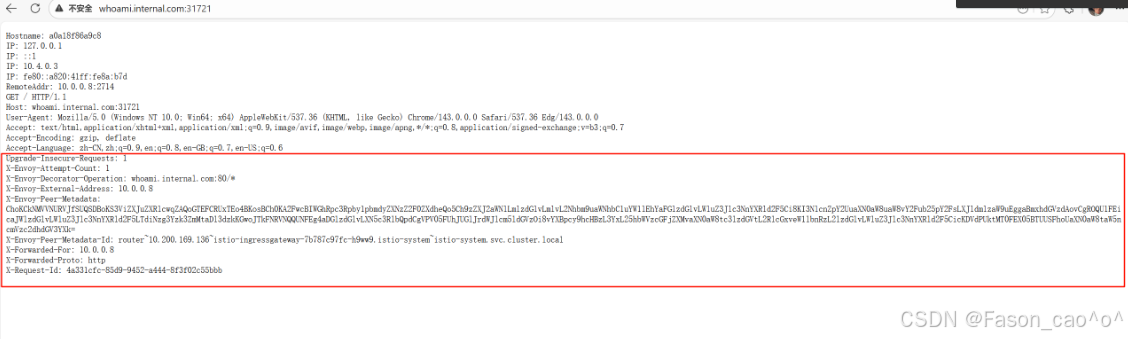

我的 Istio IngressGateway 是LoadBalancer类型,但自建 K8s 集群无法分配外部 IP(EXTERNAL-IP 显示<pending>),因此只能通过 NodePort 端口(31721)访问 IngressGateway 的 80 端口。
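The NodePort can be read from the PORT(S) column of `kubectl get svc`, where each entry has the form `port:nodePort/protocol`. The sketch below shows one way to extract it; the helper name `node_port_for` is hypothetical, and the sample string is the PORT(S) value of istio-ingressgateway in this cluster.

```bash
#!/usr/bin/env bash
# Sketch: find the NodePort mapped to a given service port in a PORT(S) string.

# node_port_for PORT "PORTS_COLUMN"
# Prints the nodePort for PORT, or nothing if the entry has no nodePort
# (for example "80/TCP" on a ClusterIP service).
node_port_for() {
  local want="$1" ports="$2"
  printf '%s\n' "$ports" |
    tr ',' '\n' |
    awk -F'[:/]' -v want="$want" 'NF == 3 && $1 == want { print $2 }'
}

ports='15021:30228/TCP,80:31721/TCP,443:30145/TCP,31400:30778/TCP,15443:31494/TCP'
node_port_for 80 "$ports"     # prints 31721
node_port_for 31400 "$ports"  # prints 30778
```

With the port known, a request along the lines of `curl -H "Host: httpbin.k8s.local" http://<node-ip>:31721/status/302` should reach the Ingress rule, where `<node-ip>` stands for the address of any cluster node. Setting the Host header avoids having to add httpbin.k8s.local to the local hosts file.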

bash

[root@k8s-master ~/istio]# kubectl get svc -n istio-system

NAME                   TYPE           CLUSTER-IP       EXTERNAL-IP   PORT(S)                                                                      AGE
istio-egressgateway    ClusterIP      10.110.249.147   <none>        80/TCP,443/TCP                                                               2d3h
istio-ingressgateway   LoadBalancer   10.98.106.118    <pending>     15021:30228/TCP,80:31721/TCP,443:30145/TCP,31400:30778/TCP,15443:31494/TCP   2d3h

2.8 Istio HTTPS secure gateway

Create self-signed certificates

bash

# 1. OpenSSL commands for generating the certificates, CSRs, and private keys

# (0) Create the output directories; the commands below write into them and fail if they do not exist
mkdir -p example_certs1 example_certs2

# (1) Generate the root CA certificate and private key in example_certs1
openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 -subj '/O=example Inc./CN=example.com' -keyout example_certs1/example.com.key -out example_certs1/example.com.crt

# (2) Generate the CSR and private key for example_certs1/httpbin.example.com
openssl req -out example_certs1/httpbin.example.com.csr -newkey rsa:2048 -nodes -keyout example_certs1/httpbin.example.com.key -subj "/CN=httpbin.example.com/O=httpbin organization"

# (3) Sign the example_certs1/httpbin.example.com certificate with the CA
openssl x509 -req -sha256 -days 365 -CA example_certs1/example.com.crt -CAkey example_certs1/example.com.key -set_serial 0 -in example_certs1/httpbin.example.com.csr -out example_certs1/httpbin.example.com.crt

# (4) Generate the CSR and private key for example_certs1/helloworld.example.com
openssl req -out example_certs1/helloworld.example.com.csr -newkey rsa:2048 -nodes -keyout example_certs1/helloworld.example.com.key -subj "/CN=helloworld.example.com/O=helloworld organization"

# (5) Sign the example_certs1/helloworld.example.com certificate with the CA
openssl x509 -req -sha256 -days 365 -CA example_certs1/example.com.crt -CAkey example_certs1/example.com.key -set_serial 1 -in example_certs1/helloworld.example.com.csr -out example_certs1/helloworld.example.com.crt

# (6) Generate the CSR and private key for example_certs1/client.example.com
openssl req -out example_certs1/client.example.com.csr -newkey rsa:2048 -nodes -keyout example_certs1/client.example.com.key -subj "/CN=client.example.com/O=client organization"

# (7) Sign the example_certs1/client.example.com certificate with the CA
openssl x509 -req -sha256 -days 365 -CA example_certs1/example.com.crt -CAkey example_certs1/example.com.key -set_serial 1 -in example_certs1/client.example.com.csr -out example_certs1/client.example.com.crt

# (8) Generate the root CA certificate and private key in example_certs2
openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 -subj '/O=example Inc./CN=example.com' -keyout example_certs2/example.com.key -out example_certs2/example.com.crt

# (9) Generate the CSR and private key for example_certs2/httpbin.example.com
openssl req -out example_certs2/httpbin.example.com.csr -newkey rsa:2048 -nodes -keyout example_certs2/httpbin.example.com.key -subj "/CN=httpbin.example.com/O=httpbin organization"

# (10) Sign the example_certs2/httpbin.example.com certificate with the CA
openssl x509 -req -sha256 -days 365 -CA example_certs2/example.com.crt -CAkey example_certs2/example.com.key -se

example_certs1 directory (core service certificate set)

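| File type | File name | Purpose |
|---|---|---|
| Self-signed CA certificate | example.com.crt | Root CA certificate; the issuer of every service certificate (passed as -CA when signing) |
| Self-signed CA private key | example.com.key | Root CA private key; used to sign the service certificates |
| httpbin CSR | httpbin.example.com.csr | Certificate signing request for httpbin.example.com |
| httpbin private key | httpbin.example.com.key | Private key for httpbin.example.com |
| httpbin certificate (CA-signed) | httpbin.example.com.crt | httpbin certificate signed by the root CA |
| helloworld CSR | helloworld.example.com.csr | Certificate signing request for helloworld.example.com (O= typo corrected) |
| helloworld private key | helloworld.example.com.key | Private key for helloworld.example.com |
| helloworld certificate (CA-signed) | helloworld.example.com.crt | helloworld certificate signed by the root CA |
| client CSR | client.example.com.csr | Certificate signing request for client.example.com (O= typo corrected) |
| client private key | client.example.com.key | Private key for client.example.com |
| client certificate (CA-signed) | client.example.com.crt | client certificate signed by the root CA |

example_certs2 directory (backup / regenerated certificate set)

| File type | File name | Purpose |
|---|---|---|
| Self-signed CA certificate | example.com.crt | Second root CA certificate (same subject as in example_certs1, but generated separately, so the key pair differs) |
| Self-signed CA private key | example.com.key | Second root CA private key (generated separately from the one in example_certs1) |
| httpbin CSR | httpbin.example.com.csr | Second certificate signing request for httpbin.example.com |
| httpbin private key | httpbin.example.com.key | Second private key for httpbin.example.com |
| httpbin certificate (CA-signed) | httpbin.example.com.crt | Second httpbin certificate, signed by the second root CA |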
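Before loading the certificates into a gateway, it can help to confirm that each leaf certificate chains to the CA in its own directory. The sketch below rebuilds a throwaway CA pair in a temporary directory and checks the chain with `openssl verify`. The helper names `make_ca`, `make_leaf`, and `chain_ok`, the `certs1`/`certs2` directory names, and the simplified `O=` value are assumptions made for illustration.

```bash
#!/usr/bin/env bash
# Sketch: create two CAs with the same subject, sign a leaf with the first,
# and check which CA the leaf verifies against.
set -euo pipefail

# make_ca DIR: self-signed root CA with the subject used in this section.
make_ca() {
  mkdir -p "$1"
  openssl req -x509 -sha256 -nodes -days 365 -newkey rsa:2048 \
    -subj '/O=example Inc./CN=example.com' \
    -keyout "$1/example.com.key" -out "$1/example.com.crt" 2>/dev/null
}

# make_leaf DIR HOST SERIAL: CSR and key for HOST, signed by the CA in DIR.
make_leaf() {
  openssl req -out "$1/$2.csr" -newkey rsa:2048 -nodes \
    -keyout "$1/$2.key" -subj "/CN=$2/O=$2 organization" 2>/dev/null
  openssl x509 -req -sha256 -days 365 \
    -CA "$1/example.com.crt" -CAkey "$1/example.com.key" -set_serial "$3" \
    -in "$1/$2.csr" -out "$1/$2.crt" 2>/dev/null
}

# chain_ok CA_CERT LEAF_CERT: succeeds only if LEAF_CERT verifies against CA_CERT.
chain_ok() {
  openssl verify -CAfile "$1" "$2" >/dev/null 2>&1
}

workdir="$(mktemp -d)"
trap 'rm -rf "$workdir"' EXIT

make_ca "$workdir/certs1"
make_ca "$workdir/certs2"
make_leaf "$workdir/certs1" httpbin.example.com 0

for ca in certs1 certs2; do
  if chain_ok "$workdir/$ca/example.com.crt" "$workdir/certs1/httpbin.example.com.crt"; then
    echo "certs1 leaf verifies against the $ca CA"
  else
    echo "certs1 leaf does NOT verify against the $ca CA"
  fi
done
```

Because each CA is created with `-newkey`, the two CAs hold different key pairs even though their subjects match, so a leaf signed by one is expected to fail verification against the other. The same `openssl verify -CAfile` check can be pointed at the real example_certs1 and example_certs2 files.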

Create the TLS Gateway and VirtualService

bash