Contents

Hadoop fundamentals

Single node (pseudo-distributed)

Fully distributed

High-availability environment

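1. Basic concepts of distributed systems

**Cluster:** a group of computers with the same function that together form a larger computing unit.

**Distributed:** separate computers or other hardware each provide a different function and take care of their own job; together they form a distributed system. In short: deployed separately, each with its own responsibility.

For example:

Distributed storage

Distributed search (indexing)

Distributed computing

Distributed crawlers

...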

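Pain points

Heartbeat checks:

A worker node keeps sending its current status to a designated host at a fixed interval, which also tells that host "I am still online".

The master node generally does not broadcast; instead, it responds immediately after a worker node reports its heartbeat.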
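
The heartbeat idea above can be sketched as a simple timeout check on the receiving side. This is only an illustration: the helper name `is_alive` and the 10-second timeout are assumptions, not values taken from Hadoop.

```shell
# Hypothetical helper: decide whether a worker still counts as online.
# Arguments: time of last heartbeat, current time, timeout (all in seconds).
is_alive() {
    hb_last_seen=$1
    hb_now=$2
    hb_timeout=$3
    # The worker is online while its silence does not exceed the timeout.
    [ $((hb_now - hb_last_seen)) -le "$hb_timeout" ]
}

# Assumed values: last heartbeat at t=100, timeout of 10 seconds.
if is_alive 100 105 10; then echo "online"; else echo "offline"; fi   # prints: online
if is_alive 100 120 10; then echo "online"; else echo "offline"; fi   # prints: offline
```

In practice the timeout is chosen as a multiple of the heartbeat interval, so that one delayed report does not mark a healthy node as dead.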

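Guarding against node failure

To guard against the failure of any one node, every node needs a sensible number of backups, typically three.

Single point of failure: if the master node goes down, the whole cluster may be unable to work.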

Solving the single point of failure

Election

Backup

Pain point

Split-brain
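
One common way to keep an election from producing two masters (split-brain) is to require a strict majority of votes, as majority-quorum systems such as ZooKeeper do. The sketch below only illustrates that rule; the function name `has_quorum` is hypothetical and the notes do not say which mechanism this course uses.

```shell
# Hypothetical helper: succeed only if VOTES is a strict majority of TOTAL nodes.
has_quorum() {
    q_votes=$1
    q_total=$2
    [ "$q_votes" -gt $((q_total / 2)) ]
}

# A 5-node cluster partitioned 3/2: only the side with 3 nodes may elect a master.
if has_quorum 3 5; then echo "3 of 5: quorum"; else echo "3 of 5: no quorum"; fi   # prints: 3 of 5: quorum
if has_quorum 2 5; then echo "2 of 5: quorum"; else echo "2 of 5: no quorum"; fi   # prints: 2 of 5: no quorum
```

With an even number of nodes split in half (for example 2/2), neither side reaches a majority, which is one reason odd cluster sizes are usually preferred for election.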

Data synchronization and distributed locks are perennial topics in distributed environments.

CAP theorem

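2. Pseudo-distributed environment

2.1 Turn off the firewall

Skip this step if the firewall is already off.

bash

# Stop the firewall for the current boot

systemctl stop firewalld

# Disable it permanently (it will no longer start at boot)

systemctl disable firewalld

# Check the firewall status

systemctl status firewalld

2.2 Generate the public/private key pair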

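Generate the key pair (this creates both the private key and the public key)

bash

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Authorize the public key for passwordless login

bash

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys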
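
Running the `cat ... >>` command more than once appends the same key repeatedly. A minimal sketch of an idempotent variant follows; the function name `authorize_key` is hypothetical, and the `chmod` calls reflect the usual OpenSSH expectation that `~/.ssh` and `authorized_keys` are not writable by others.

```shell
# Hypothetical helper: append a public key to an authorized_keys file only once.
# Usage: authorize_key PUBKEY_FILE AUTHORIZED_KEYS_FILE
authorize_key() {
    ak_pub=$1
    ak_auth=$2
    ak_dir=$(dirname "$ak_auth")
    mkdir -p "$ak_dir"
    touch "$ak_auth"
    # -F: fixed strings, -x: whole-line match, -f: read the pattern from the key file
    if ! grep -qxF -f "$ak_pub" "$ak_auth"; then
        cat "$ak_pub" >> "$ak_auth"
    fi
    # Restrictive permissions, which sshd commonly requires for key login
    chmod 700 "$ak_dir"
    chmod 600 "$ak_auth"
}

# Example call (not executed here):
# authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
```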

bash

[root@c001 ~]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Generating public/private rsa key pair.

Created directory '/root/.ssh'.

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

06:64:6a:58:7f:28:62:37:7a:00:c2:f6:a2:5f:5d:36 root@c001

The key's randomart image is:

+--[ RSA 2048]----+

|+ . o |

|.+ o = . |

|. * * + . |

| o B o oE |

|. o .. oS. |

|. .. .. |

| . . |

| . |

| |

+-----------------+

[root@c001 ~]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[root@c001 ~]# ssh c001

The authenticity of host 'c001 (192.168.100.101)' can't be established.

ECDSA key fingerprint is 62:d1:b1:ce:d2:cb:f6:fb:71:7c:4d:2f:ae:7a:87:bd.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c001,192.168.100.101' (ECDSA) to the list of known hosts.

Last login: Mon Mar 23 10:43:25 2026 from 192.168.100.1

2.3 Upload and extract Hadoop

bash

[root@c001 download]# rz

rz waiting to receive.

Starting zmodem transfer. Press Ctrl+C to cancel.

Transferring hadoop-2.7.1.tar.gz...

100% 192755 KB 13768 KB/sec 00:00:14 0 Errors

[root@c001 download]# ll

总用量 685336

-rw-r--r--. 1 root root 197381551 7月 10 2024 hadoop-2.7.1.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

[root@c001 download]# tar -zxf hadoop-2.7.1.tar.gz

[root@c001 download]# ll

总用量 685340

drwxr-xr-x. 9 root root 4096 12月 9 2015 hadoop-2.7.1

-rw-r--r--. 1 root root 197381551 7月 10 2024 hadoop-2.7.1.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

[root@c001 download]# cd ..

[root@c001 opt]# mkdir bigdata

[root@c001 opt]# ll

总用量 4

drwxr-xr-x. 2 root root 6 3月 23 10:53 bigdata

drwxr-xr-x. 3 root root 4096 3月 23 10:52 download

[root@c001 opt]# mv download/hadoop-2.7.1 bigdata/

[root@c001 opt]# ll bigdata/

总用量 4

drwxr-xr-x. 9 root root 4096 12月 9 2015 hadoop-2.7.1

2.4 Create directories

bash

[root@c001 opt]# cd bigdata/hadoop-2.7.1/

[root@c001 hadoop-2.7.1]# pwd

/opt/bigdata/hadoop-2.7.1

[root@c001 hadoop-2.7.1]# mkdir temp

[root@c001 hadoop-2.7.1]# mkdir -p hdfs/name

[root@c001 hadoop-2.7.1]# mkdir -p hdfs/data

2.5 Modify the configuration files

Configuration file directory

bash

[root@c001 hadoop-2.7.1]# pwd

/opt/bigdata/hadoop-2.7.1

[root@c001 hadoop-2.7.1]# ll

总用量 36

drwxr-xr-x. 2 root root 4096 12月 9 2015 bin

drwxr-xr-x. 3 root root 19 12月 9 2015 etc

drwxr-xr-x. 4 root root 28 3月 23 10:55 hdfs

drwxr-xr-x. 2 root root 101 12月 9 2015 include

drwxr-xr-x. 3 root root 19 12月 9 2015 lib

drwxr-xr-x. 2 root root 4096 12月 9 2015 libexec

-rw-r--r--. 1 root root 15429 12月 9 2015 LICENSE.txt

-rw-r--r--. 1 root root 101 12月 9 2015 NOTICE.txt

-rw-r--r--. 1 root root 1366 12月 9 2015 README.txt

drwxr-xr-x. 2 root root 4096 12月 9 2015 sbin

drwxr-xr-x. 4 root root 29 12月 9 2015 share

drwxr-xr-x. 2 root root 6 3月 23 10:54 temp

[root@c001 hadoop-2.7.1]# cd etc/

[root@c001 etc]# ll

总用量 4

drwxr-xr-x. 2 root root 4096 12月 9 2015 hadoop

[root@c001 etc]# cd hadoop/

[root@c001 hadoop]# ll

总用量 152

-rw-r--r--. 1 root root 4436 12月 9 2015 capacity-scheduler.xml

-rw-r--r--. 1 root root 1335 12月 9 2015 configuration.xsl

-rw-r--r--. 1 root root 318 12月 9 2015 container-executor.cfg

-rw-r--r--. 1 root root 774 12月 9 2015 core-site.xml

-rw-r--r--. 1 root root 3670 12月 9 2015 hadoop-env.cmd

-rw-r--r--. 1 root root 4224 12月 9 2015 hadoop-env.sh

-rw-r--r--. 1 root root 2598 12月 9 2015 hadoop-metrics2.properties

-rw-r--r--. 1 root root 2490 12月 9 2015 hadoop-metrics.properties

-rw-r--r--. 1 root root 9683 12月 9 2015 hadoop-policy.xml

-rw-r--r--. 1 root root 775 12月 9 2015 hdfs-site.xml

-rw-r--r--. 1 root root 1449 12月 9 2015 httpfs-env.sh

-rw-r--r--. 1 root root 1657 12月 9 2015 httpfs-log4j.properties

-rw-r--r--. 1 root root 21 12月 9 2015 httpfs-signature.secret

-rw-r--r--. 1 root root 620 12月 9 2015 httpfs-site.xml

-rw-r--r--. 1 root root 3518 12月 9 2015 kms-acls.xml

-rw-r--r--. 1 root root 1527 12月 9 2015 kms-env.sh

-rw-r--r--. 1 root root 1631 12月 9 2015 kms-log4j.properties

-rw-r--r--. 1 root root 5511 12月 9 2015 kms-site.xml

-rw-r--r--. 1 root root 11237 12月 9 2015 log4j.properties

-rw-r--r--. 1 root root 951 12月 9 2015 mapred-env.cmd

-rw-r--r--. 1 root root 1383 12月 9 2015 mapred-env.sh

-rw-r--r--. 1 root root 4113 12月 9 2015 mapred-queues.xml.template

-rw-r--r--. 1 root root 758 12月 9 2015 mapred-site.xml.template

-rw-r--r--. 1 root root 10 12月 9 2015 slaves

-rw-r--r--. 1 root root 2316 12月 9 2015 ssl-client.xml.example

-rw-r--r--. 1 root root 2268 12月 9 2015 ssl-server.xml.example

-rw-r--r--. 1 root root 2250 12月 9 2015 yarn-env.cmd

-rw-r--r--. 1 root root 4567 12月 9 2015 yarn-env.sh

-rw-r--r--. 1 root root 690 12月 9 2015 yarn-site.xml

[root@c001 hadoop]# cp mapred-site.xml.template mapred-site.xml

[root@c001 hadoop]# ll

总用量 156

-rw-r--r--. 1 root root 4436 12月 9 2015 capacity-scheduler.xml

-rw-r--r--. 1 root root 1335 12月 9 2015 configuration.xsl

-rw-r--r--. 1 root root 318 12月 9 2015 container-executor.cfg

-rw-r--r--. 1 root root 774 12月 9 2015 core-site.xml

-rw-r--r--. 1 root root 3670 12月 9 2015 hadoop-env.cmd

-rw-r--r--. 1 root root 4224 12月 9 2015 hadoop-env.sh

-rw-r--r--. 1 root root 2598 12月 9 2015 hadoop-metrics2.properties

-rw-r--r--. 1 root root 2490 12月 9 2015 hadoop-metrics.properties

-rw-r--r--. 1 root root 9683 12月 9 2015 hadoop-policy.xml

-rw-r--r--. 1 root root 775 12月 9 2015 hdfs-site.xml

-rw-r--r--. 1 root root 1449 12月 9 2015 httpfs-env.sh

-rw-r--r--. 1 root root 1657 12月 9 2015 httpfs-log4j.properties

-rw-r--r--. 1 root root 21 12月 9 2015 httpfs-signature.secret

-rw-r--r--. 1 root root 620 12月 9 2015 httpfs-site.xml

-rw-r--r--. 1 root root 3518 12月 9 2015 kms-acls.xml

-rw-r--r--. 1 root root 1527 12月 9 2015 kms-env.sh

-rw-r--r--. 1 root root 1631 12月 9 2015 kms-log4j.properties

-rw-r--r--. 1 root root 5511 12月 9 2015 kms-site.xml

-rw-r--r--. 1 root root 11237 12月 9 2015 log4j.properties

-rw-r--r--. 1 root root 951 12月 9 2015 mapred-env.cmd

-rw-r--r--. 1 root root 1383 12月 9 2015 mapred-env.sh

-rw-r--r--. 1 root root 4113 12月 9 2015 mapred-queues.xml.template

-rw-r--r--. 1 root root 758 3月 23 10:58 mapred-site.xml

-rw-r--r--. 1 root root 758 12月 9 2015 mapred-site.xml.template

-rw-r--r--. 1 root root 10 12月 9 2015 slaves

-rw-r--r--. 1 root root 2316 12月 9 2015 ssl-client.xml.example

-rw-r--r--. 1 root root 2268 12月 9 2015 ssl-server.xml.example

-rw-r--r--. 1 root root 2250 12月 9 2015 yarn-env.cmd

-rw-r--r--. 1 root root 4567 12月 9 2015 yarn-env.sh

-rw-r--r--. 1 root root 690 12月 9 2015 yarn-site.xml

Modify the configuration

Around line 25 of hadoop-env.sh

bash

# The only required environment variable is JAVA_HOME. All others are

# optional. When running a distributed configuration it is best to

# set JAVA_HOME in this file, so that it is correctly defined on

# remote nodes.

# The java implementation to use.

export JAVA_HOME=/usr/local/java

Around line 23 (presumably in yarn-env.sh); note that the leading comment marker must be removed

bash

# some Java parameters

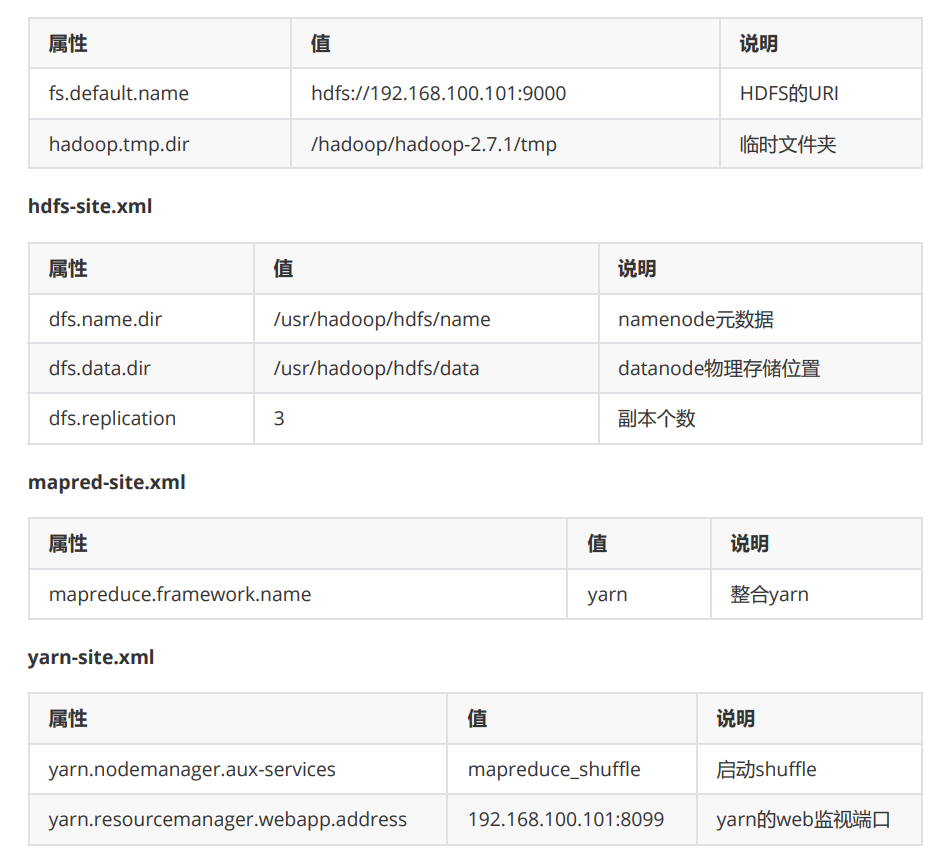

export JAVA_HOME=/usr/local/javacore-site.xml

所有xml的配置格式

xml

<property>

<name>property name</name>

<value>value</value>

<description>optional note (free text)</description>

</property>
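
As a rough illustration of that format, the sketch below prints one `<property>` element from shell arguments. The helper name `emit_property` is hypothetical, and pairing the `hadoop.tmp.dir` key with the `temp` directory created in 2.4 is an assumption, since the actual values are not shown at this point. The helper does not escape XML special characters.

```shell
# Hypothetical helper: print one Hadoop-style <property> element.
# Usage: emit_property NAME VALUE [DESCRIPTION]
emit_property() {
    printf '<property>\n'
    printf '  <name>%s</name>\n' "$1"
    printf '  <value>%s</value>\n' "$2"
    # The description is optional and purely informational
    if [ -n "${3:-}" ]; then
        printf '  <description>%s</description>\n' "$3"
    fi
    printf '</property>\n'
}

# Assumed example value: the temp directory created in section 2.4
emit_property hadoop.tmp.dir /opt/bigdata/hadoop-2.7.1/temp "scratch directory"
```

Each such element goes between the `<configuration>` and `</configuration>` tags of the file being edited.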

Configure environment variables


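bash

# Hadoop environment

export HADOOP_HOME=/opt/bigdata/hadoop-2.7.1

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Remember to reload the profile afterwards (for example, by running source on the file you edited) so that the new variables take effect.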
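
A quick way to confirm that the variables took effect is to check whether the two Hadoop directories are on `PATH`. This is a minimal sketch; the helper name `path_contains` is hypothetical, and the fallback value of `HADOOP_HOME` simply repeats the path used above.

```shell
# Hypothetical helper: succeed if DIR is one of the colon-separated PATH entries.
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Fall back to the install path from this guide if HADOOP_HOME is not set yet
HADOOP_HOME=${HADOOP_HOME:-/opt/bigdata/hadoop-2.7.1}

for pc_dir in "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin"; do
    if path_contains "$pc_dir"; then
        echo "ok: $pc_dir is on PATH"
    else
        echo "missing: $pc_dir is not on PATH"
    fi
done
```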

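Format the NameNode

The hadoop namenode form used here still works in 2.7.1 but prints a deprecation notice; hdfs namenode -format is the current equivalent.

bash

[root@c001 hadoop-2.7.1]# hadoop namenode -format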
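
Formatting initializes the NameNode metadata directory, so repeating it on a directory that already holds metadata would discard the existing namespace. A small guard like the one below can be run before the command above. It is a sketch under two assumptions: the name directory is the `hdfs/name` folder created in 2.4, and a formatted directory contains `current/VERSION`. The helper name `is_formatted` is hypothetical.

```shell
# Hypothetical helper: succeed if the NameNode directory already holds metadata.
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Assumed location: the hdfs/name directory created in section 2.4
NAME_DIR=${NAME_DIR:-/opt/bigdata/hadoop-2.7.1/hdfs/name}

if is_formatted "$NAME_DIR"; then
    echo "already formatted: $NAME_DIR (skipping)"
else
    echo "not formatted yet: $NAME_DIR"
    # hdfs namenode -format    # uncomment to actually format
fi
```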

bash

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

26/03/23 11:17:35 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = c001/192.168.100.101

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.1

STARTUP_MSG: classpath = /opt/bigdata/hadoop-2.7.1/etc/hadoop:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/asm-3.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/hadoop-annotations-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jersey-core-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-configuration-1.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-compress-1.4.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/xz-1.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/hamcrest-core-1.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-lang-2.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/guava-11.0.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jersey-json-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-cli-1.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jetty-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/curator-client-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/zookeeper-3.4.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/mockito-all-1.8.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/httpcore-4.2.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-collections-3.2.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jsch-0.1.42.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/gson-2.2.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jets3t-0.9.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-math3-3.1.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-codec-1.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/junit-4.11.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jettison-1.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/netty-3.6.2.Final.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/hadoop-auth-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-digester-1.8.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jetty-util-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jersey-server-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jsp-api-2.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-net-3.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/paranamer-2.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/servlet-api-2.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-io-2.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-logging-1.1.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/jsr305-3.0.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/xmlenc-0.52.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/stax-api-1.0-2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/commons-httpclient-3.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/avro-1.7.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/log4j-1.2.17.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/activation-1.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/curator-framework-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/httpclient-4.2.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/hadoop-common-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/hadoop-nfs-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/hadoop-common-2.7.1-tests.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/asm-3.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/guava-11.0.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-io-2.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-2.7.1-tests.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/asm-3.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-core-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/guice-3.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/javax.inject-1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/xz-1.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-lang-2.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/guava-11.0.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-json-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-cli-1.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jetty-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-collections-3.2.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-codec-1.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jettison-1.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-server-1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/servlet-api-2.5.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/commons-io-2.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/lib/leveld
bjni-all-1.8.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/jersey-client-1.9.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/log4j-1.2.17.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/activation-1.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/lib/aopalliance-1.0.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/hadoop-yarn-applications-distributedshell2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-tests2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-webproxy-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-client2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-api2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-serversharedcachemanager-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoopyarn-server-applicationhistoryservice-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/hadoop-yarn-server-resourcemanager2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-registry2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-applicationsunmanaged-am-launcher-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/hadoop-yarn-common-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/hadoop-yarn-server-common-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/yarn/hadoop-yarn-server-nodemanager2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/asm3.2.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/hadoop-annotations2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-core1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/guice3.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/javax.inject1.jar:/opt/bigda
ta/hadoop-2.7.1/share/hadoop/mapreduce/lib/commons-compress1.4.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/xz1.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/hamcrest-core1.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/jackson-mapper-asl1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/guice-servlet3.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-guice1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/junit4.11.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/netty3.6.2.Final.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/jackson-coreasl-1.9.13.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-server1.9.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/paranamer2.3.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/commons-io2.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/snappy-java1.0.4.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/protobuf-java2.5.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/avro1.7.4.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/log4j1.2.17.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/lib/aopalliance1.0.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduceexamples-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoopmapreduce-client-jobclient-2.7.1-tests.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-common2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduceclient-hs-plugins-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduceclient-hs-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoopmapreduce-client-jobclient-2.7.1.jar:/opt/bigdata/hadoop2.7.1/share/hadoop/mapreduce/hadoop-mapreduce
-client-core2.7.1.jar:/opt/bigdata/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduceclient-app-2.7.1.jar:/opt/bigdata/hadoop-2.7.1/contrib/capacityscheduler/*.jar:/opt/bigdata/hadoop-2.7.1/contrib/capacity-scheduler/*.jar

STARTUP_MSG:   build = Unknown -r Unknown; compiled by 'root' on 2015-12-09T10:56Z

STARTUP_MSG: java = 1.8.0_162

************************************************************/

26/03/23 11:17:35 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

26/03/23 11:17:35 INFO namenode.NameNode: createNameNode [-format]

26/03/23 11:17:37 WARN common.Util: Path /opt/bigdata/hadoop-2.7.1/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

26/03/23 11:17:37 WARN common.Util: Path /opt/bigdata/hadoop-2.7.1/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

Formatting using clusterid: CID-4f33d3d7-fcae-493b-becb-7a2e9111b6bd

26/03/23 11:17:37 INFO namenode.FSNamesystem: No KeyProvider found.

26/03/23 11:17:37 INFO namenode.FSNamesystem: fsLock is fair:true

26/03/23 11:17:37 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000

26/03/23 11:17:37 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

26/03/23 11:17:37 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000

26/03/23 11:17:37 INFO blockmanagement.BlockManager: The block deletion will start around 2026 三月 23 11:17:37

26/03/23 11:17:37 INFO util.GSet: Computing capacity for map BlocksMap

26/03/23 11:17:37 INFO util.GSet: VM type = 64-bit

26/03/23 11:17:37 INFO util.GSet: 2.0% max memory 966.7 MB = 19.3 MB

26/03/23 11:17:37 INFO util.GSet: capacity = 2^21 = 2097152 entries

26/03/23 11:17:37 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

26/03/23 11:17:37 INFO blockmanagement.BlockManager: defaultReplication = 1

26/03/23 11:17:37 INFO blockmanagement.BlockManager: maxReplication = 512

26/03/23 11:17:37 INFO blockmanagement.BlockManager: minReplication = 1

26/03/23 11:17:37 INFO blockmanagement.BlockManager: maxReplicationStreams = 2

26/03/23 11:17:37 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false

26/03/23 11:17:37 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000

26/03/23 11:17:37 INFO blockmanagement.BlockManager: encryptDataTransfer = false

26/03/23 11:17:37 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000

26/03/23 11:17:37 INFO namenode.FSNamesystem: fsOwner = root (auth:SIMPLE)

26/03/23 11:17:37 INFO namenode.FSNamesystem: supergroup = supergroup

26/03/23 11:17:37 INFO namenode.FSNamesystem: isPermissionEnabled = true

26/03/23 11:17:37 INFO namenode.FSNamesystem: HA Enabled: false

26/03/23 11:17:37 INFO namenode.FSNamesystem: Append Enabled: true

26/03/23 11:17:38 INFO util.GSet: Computing capacity for map INodeMap

26/03/23 11:17:38 INFO util.GSet: VM type = 64-bit

26/03/23 11:17:38 INFO util.GSet: 1.0% max memory 966.7 MB = 9.7 MB

26/03/23 11:17:38 INFO util.GSet: capacity = 2^20 = 1048576 entries

26/03/23 11:17:38 INFO namenode.FSDirectory: ACLs enabled? false

26/03/23 11:17:38 INFO namenode.FSDirectory: XAttrs enabled? true

26/03/23 11:17:38 INFO namenode.FSDirectory: Maximum size of an xattr: 16384

26/03/23 11:17:38 INFO namenode.NameNode: Caching file names occuring more than 10 times

26/03/23 11:17:38 INFO util.GSet: Computing capacity for map cachedBlocks

26/03/23 11:17:38 INFO util.GSet: VM type = 64-bit

26/03/23 11:17:38 INFO util.GSet: 0.25% max memory 966.7 MB = 2.4 MB

26/03/23 11:17:38 INFO util.GSet: capacity = 2^18 = 262144 entries

26/03/23 11:17:38 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

26/03/23 11:17:38 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0

26/03/23 11:17:38 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000

26/03/23 11:17:38 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10

26/03/23 11:17:38 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10

26/03/23 11:17:38 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25

26/03/23 11:17:38 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

26/03/23 11:17:38 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

26/03/23 11:17:38 INFO util.GSet: Computing capacity for map NameNodeRetryCache

26/03/23 11:17:38 INFO util.GSet: VM type = 64-bit

26/03/23 11:17:38 INFO util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB

26/03/23 11:17:38 INFO util.GSet: capacity = 2^15 = 32768 entries

26/03/23 11:17:38 INFO namenode.FSImage: Allocated new BlockPoolId: BP-1350162693-192.168.100.101-1774235858681

26/03/23 11:17:38 INFO common.Storage: Storage directory /opt/bigdata/hadoop-2.7.1/hdfs/name has been successfully formatted.

26/03/23 11:17:38 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

26/03/23 11:17:39 INFO util.ExitUtil: Exiting with status 0

26/03/23 11:17:39 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at c001/192.168.100.101

************************************************************/

Starting and stopping

bash

[root@c001 hadoop-2.7.1]# start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [c001]

c001: starting namenode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-namenode-c001.out

The authenticity of host 'localhost (::1)' can't be established.

ECDSA key fingerprint is 62:d1:b1:ce:d2:cb:f6:fb:71:7c:4d:2f:ae:7a:87:bd.

Are you sure you want to continue connecting (yes/no)? yes

localhost: Warning: Permanently added 'localhost' (ECDSA) to the list of known hosts.

localhost: starting datanode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-datanode-c001.out

Starting secondary namenodes [0.0.0.0]

The authenticity of host '0.0.0.0 (0.0.0.0)' can't be established.

ECDSA key fingerprint is 62:d1:b1:ce:d2:cb:f6:fb:71:7c:4d:2f:ae:7a:87:bd.

Are you sure you want to continue connecting (yes/no)? yes

0.0.0.0: Warning: Permanently added '0.0.0.0' (ECDSA) to the list of known hosts.

0.0.0.0: starting secondarynamenode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-secondarynamenode-c001.out

starting yarn daemons

starting resourcemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-resourcemanager-c001.out

localhost: starting nodemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-nodemanager-c001.out

[root@c001 hadoop-2.7.1]# jps

2880 ResourceManager

2467 NameNode

2583 DataNode

3258 Jps

2974 NodeManager

2735 SecondaryNameNode

To stop the cluster: stop-all.sh
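The deprecation notice printed by start-all.sh above names start-dfs.sh and start-yarn.sh as the replacements. A minimal sketch of wrappers around those scripts might look like the following. The function names `start_cluster` and `stop_cluster` are invented for this example, it assumes `$HADOOP_HOME/sbin` is on `PATH`, and stopping YARN before HDFS is simply a choice made for this sketch.

```shell
#!/usr/bin/env bash
# Sketch only: wrappers around the scripts named in the deprecation notice.
# Assumes $HADOOP_HOME/sbin is on PATH. start_cluster and stop_cluster are
# hypothetical names, not Hadoop commands.

start_cluster() {
  # start-yarn.sh runs only if start-dfs.sh exits successfully.
  start-dfs.sh && start-yarn.sh
}

stop_cluster() {
  # Reverse of the start order: YARN first, then HDFS.
  stop-yarn.sh && stop-dfs.sh
}
```

After sourcing the file, `start_cluster` followed by `jps` should list the same daemons as the transcript above.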

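2.6 HDFS Basic Concepts

NameNode: the name node, which manages the file system metadata

metadata: data that describes the stored files, including

data paths

data (block) order

**Rack:** a rack; this is a logical concept

**DataNode:** a data node, usually a single data server; this is a physical concept

Block: the unit of storage

128 MB by default

A file (or the tail of a file) smaller than 128 MB still counts as one block, although it only occupies its actual size on disk

Replica placement is commonly described as follows: one copy stays on the DataNode that receives the upload, a second copy goes to another DataNode in the same rack, and a third copy goes to a DataNode in a different rack

ResourceManager: the resource manager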

NodeManager

SecondaryNameNode
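To make the 128 MB block size concrete, the sketch below estimates how many blocks a file of a given size is split into. `hdfs_block_count` is a hypothetical helper written for this note, not a Hadoop command, and the block size is passed as an optional second argument that defaults to 128 MB.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Hadoop): estimate how many HDFS blocks
# a file of SIZE bytes occupies, assuming a 128 MB block size by default.
hdfs_block_count() {
  local size_bytes=$1
  local block_bytes=${2:-$((128 * 1024 * 1024))}
  if [ "$size_bytes" -le 0 ]; then
    echo 0
    return
  fi
  # Ceiling division: a partial last block still counts as one block.
  echo $(( (size_bytes + block_bytes - 1) / block_bytes ))
}

hdfs_block_count 40                      # a 40-byte file
hdfs_block_count $((300 * 1024 * 1024))  # a 300 MB file
```

With three replicas per block, the number of stored block copies is three times the value printed here.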

bash

[root@c001 tmp]# hadoop fs -mkdir /input

[root@c001 tmp]# hadoop fs -mkdir /output

[root@c001 tmp]# hadoop fs -put wordcount /input

[root@c001 tmp]# hadoop fs -chmod -R 777 /input

[root@c001 tmp]# hadoop fs -ls /

Found 2 items

drwxrwxrwx - root supergroup 0 2026-03-23 16:14 /input

drwxr-xr-x - root supergroup 0 2026-03-23 16:13 /output

[root@c001 tmp]# hadoop fs -ls /input

Found 1 items

-rwxrwxrwx 1 root supergroup 40 2026-03-23 16:14 /input/wordcount

[root@c001 tmp]# hadoop fs -cat /input/wordcount

hello a

hello b

hello c

hello d

hello f

[root@c001 tmp]# rm -rf *

[root@c001 tmp]# ll

总用量 0

[root@c001 tmp]# hadoop fs -get /input/wordcount ./

26/03/23 16:17:12 WARN hdfs.DFSClient: DFSInputStream has been closed already

[root@c001 tmp]# ll

总用量 4

drwxr-xr-x. 2 root root 6 3月 23 16:17 hsperfdata_root

-rw-r--r--. 1 root root 40 3月 23 16:17 wordcount

[root@c001 tmp]# cat wordcount

hello a

hello b

hello c

hello d

hello f

2.7 MapReduce

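**Purpose:** a distributed framework for offline (batch) computation

**Map phase:** splitting the data

The input data is split up and processed piece by piece

**Shuffle phase:** pre-processing and routing the data

**Reduce phase:** aggregating the data

The data is consolidated into the final result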
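The three phases can be imitated locally with standard Unix tools, which may help build intuition before reading the Java version. This is only a single-machine analogy and does not use Hadoop. The function names `map_phase`, `shuffle_phase`, and `reduce_phase` are invented for the example.

```shell
#!/usr/bin/env bash
# Local word-count analogy of Map / Shuffle / Reduce (no Hadoop involved).

# Map: emit "<word><TAB>1" for every word on every input line.
map_phase() {
  awk '{ for (i = 1; i <= NF; i++) print $i "\t" 1 }'
}

# Shuffle: sort so that identical keys become adjacent.
shuffle_phase() {
  LC_ALL=C sort
}

# Reduce: sum the counts of each run of identical keys.
reduce_phase() {
  awk -F '\t' '
    NR > 1 && $1 != key { print key "\t" sum; sum = 0 }
    { key = $1; sum += $2 }
    END { if (NR > 0) print key "\t" sum }
  '
}

# Same sample text as the wordcount file used in section 2.6.
printf 'hello a\nhello b\nhello c\nhello d\nhello f\n' \
  | map_phase | shuffle_phase | reduce_phase
```

In a real job the shuffle also partitions keys across reducers on different machines; the sort here only models the grouping.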

java

package org.myorg;

import java.io.IOException;
import java.util.*;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.conf.*;
import org.apache.hadoop.io.*;
import org.apache.hadoop.mapred.*;
import org.apache.hadoop.util.*;

// Word count written against the classic org.apache.hadoop.mapred API.
public class WordCount {

    // Map phase: emit (word, 1) for every token in the input line.
    public static class Map extends MapReduceBase
            implements Mapper<LongWritable, Text, Text, IntWritable> {
        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        public void map(LongWritable key, Text value,
                        OutputCollector<Text, IntWritable> output,
                        Reporter reporter) throws IOException {
            String line = value.toString();
            StringTokenizer tokenizer = new StringTokenizer(line);
            while (tokenizer.hasMoreTokens()) {
                word.set(tokenizer.nextToken());
                output.collect(word, one);
            }
        }
    }

    // Reduce phase: sum the counts collected for each word.
    public static class Reduce extends MapReduceBase
            implements Reducer<Text, IntWritable, Text, IntWritable> {
        public void reduce(Text key, Iterator<IntWritable> values,
                           OutputCollector<Text, IntWritable> output,
                           Reporter reporter) throws IOException {
            int sum = 0;
            while (values.hasNext()) {
                sum += values.next().get();
            }
            output.collect(key, new IntWritable(sum));
        }
    }

    public static void main(String[] args) throws Exception {
        JobConf conf = new JobConf(WordCount.class);
        conf.setJobName("wordcount");

        // Types of the final output key/value pairs.
        conf.setOutputKeyClass(Text.class);
        conf.setOutputValueClass(IntWritable.class);

        conf.setMapperClass(Map.class);
        conf.setCombinerClass(Reduce.class); // pre-aggregate on the map side
        conf.setReducerClass(Reduce.class);

        conf.setInputFormat(TextInputFormat.class);
        conf.setOutputFormat(TextOutputFormat.class);

        // args[0] = input path, args[1] = output path (must not exist yet)
        FileInputFormat.setInputPaths(conf, new Path(args[0]));
        FileOutputFormat.setOutputPath(conf, new Path(args[1]));

        JobClient.runJob(conf);
    }
}

sql

select count(1) from xxxx

3. Fully Distributed Hadoop Setup

3.1 First delete the single-node Hadoop data files, temporary directories, and logs

bash

[root@c001 ~]# cd /opt/bigdata/hadoop-2.7.1/

[root@c001 hadoop-2.7.1]# ll

总用量 40

drwxr-xr-x. 2 root root 4096 12月 9 2015 bin

drwxr-xr-x. 3 root root 19 12月 9 2015 etc

drwxr-xr-x. 4 root root 28 3月 23 10:55 hdfs

drwxr-xr-x. 2 root root 101 12月 9 2015 include

drwxr-xr-x. 3 root root 19 12月 9 2015 lib

drwxr-xr-x. 2 root root 4096 12月 9 2015 libexec

-rw-r--r--. 1 root root 15429 12月 9 2015 LICENSE.txt

drwxr-xr-x. 3 root root 4096 3月 23 11:19 logs

-rw-r--r--. 1 root root 101 12月 9 2015 NOTICE.txt

-rw-r--r--. 1 root root 1366 12月 9 2015 README.txt

drwxr-xr-x. 2 root root 4096 12月 9 2015 sbin

drwxr-xr-x. 4 root root 29 12月 9 2015 share

drwxr-xr-x. 4 root root 35 3月 23 11:19 temp

[root@c001 hadoop-2.7.1]# cd hdfs/name/

[root@c001 name]# rm -rf *

[root@c001 name]# cd ../data/

[root@c001 data]# rm -rf *

[root@c001 data]# cd ..

[root@c001 hdfs]# cd ..

[root@c001 hadoop-2.7.1]# cd temp/

[root@c001 temp]# rm -rf *

[root@c001 temp]# cd ../logs/

[root@c001 logs]# rm -rf *

[root@c001 logs]# cd /tmp/

[root@c001 tmp]# rm -rf *

3.2 Configure hosts

bash

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.100.101 c001

192.168.100.102 c002

192.168.100.103 c003

3.3 Switch the yum repository source on the other two hosts

bash

# CentOS-Base.repo

#

# The mirror system uses the connecting IP address of the client and the

# update status of each mirror to pick mirrors that are updated to and

# geographically close to the client. You should use this for CentOS updates

# unless you are manually picking other mirrors.

#

# If the mirrorlist= does not work for you, as a fall back you can try the

# remarked out baseurl= line instead.

#

#

[base]

name=CentOS-$releasever - Base - repo.huaweicloud.com

baseurl=https://repo.huaweicloud.com/centos/$releasever/os/$basearch/

#mirrorlist=https://mirrorlist.centos.org/?release=$releasever&arch=$basearch&repo=os

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/centos/RPM-GPG-KEY-CentOS-7

#released updates

[updates]

name=CentOS-$releasever - Updates - repo.huaweicloud.com

baseurl=https://repo.huaweicloud.com/centos/$releasever/updates/$basearch/

#mirrorlist=https://mirrorlist.centos.org/?release=$releasever&arch=$basearch&repo=updates

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/centos/RPM-GPG-KEY-CentOS-7

#additional packages that may be useful

[extras]

name=CentOS-$releasever - Extras - repo.huaweicloud.com

baseurl=https://repo.huaweicloud.com/centos/$releasever/extras/$basearch/

#mirrorlist=https://mirrorlist.centos.org/?release=$releasever&arch=$basearch&repo=extras

gpgcheck=1

gpgkey=https://repo.huaweicloud.com/centos/RPM-GPG-KEY-CentOS-7

#additional packages that extend functionality of existing packages

[centosplus]

name=CentOS-$releasever - Plus - repo.huaweicloud.com

baseurl=https://repo.huaweicloud.com/centos/$releasever/centosplus/$basearch/

#mirrorlist=https://mirrorlist.centos.org/?release=$releasever&arch=$basearch&repo=centosplus

gpgcheck=1

enabled=0

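gpgkey=https://repo.huaweicloud.com/centos/RPM-GPG-KEY-CentOS-7

3.4 Install base dependencies

bash

yum install gcc perl autoconf libaio lrzsz -y

3.5 Disable the firewall

bash

Stop for the current session: systemctl stop firewalld

Disable permanently (do not start at boot): systemctl disable firewalld

Check the firewall status: systemctl status firewalld

3.6 Install the JDK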

bash

[root@c001 ~]# scp -r /usr/local/java root@c002:/usr/local/

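[root@c001 ~]# scp -r /usr/local/java root@c003:/usr/local/

Configure the environment variables on the other two hosts

bash

# JDK environment variables

export JAVA_HOME=/usr/local/java

export PATH=$PATH:$JAVA_HOME/bin

# MySQL environment variables

export MYSQL_HOME=/usr/local/mysql

export PATH=$PATH:$MYSQL_HOME/bin

# Hadoop environment variables

export HADOOP_HOME=/opt/bigdata/hadoop-2.7.1

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Remember to reload the environment afterwards (for example by running source on the file you edited)!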
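One way to confirm that the variables took effect after reloading is to check that none of them is empty. The helper below is a small sketch for that purpose. `unset_vars` is a name made up for this example and relies on bash indirect expansion.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the names of any variables that are unset or empty.
unset_vars() {
  local name missing=""
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named by $name.
    [ -n "${!name:-}" ] || missing="$missing $name"
  done
  echo "${missing# }"
}

# Example: after reloading the environment, this should print an empty line.
#   unset_vars JAVA_HOME HADOOP_HOME
```

Any name that is printed points at an export line that was missed or a profile that was not reloaded in the current shell.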

3.7 Create the Hadoop installation path

bash

[root@c002 ~]# mkdir -p /opt/bigdata

[root@c003 ~]# mkdir -p /opt/bigdata

3.8 Configure passwordless SSH login among the three hosts ⭐

Remove the existing keys on host 1 (c001)

bash

[root@c001 ~]# pwd

/root

[root@c001 ~]# ls -al

总用量 44

dr-xr-x---. 5 root root 4096 3月 24 09:39 .

drwxr-xr-x. 17 root root 4096 3月 24 10:21 ..

-rw-------. 1 root root 887 3月 20 11:20 anaconda-ks.cfg

-rw-------. 1 root root 3180 3月 24 10:21 .bash_history

-rw-r--r--. 1 root root 18 12月 29 2013 .bash_logout

-rw-r--r--. 1 root root 176 12月 29 2013 .bash_profile

-rw-r--r--. 1 root root 176 12月 29 2013 .bashrc

-rw-r--r--. 1 root root 100 12月 29 2013 .cshrc

-rw-------. 1 root root 163 3月 20 15:35 .mysql_history

drwxr-xr-x. 2 root root 38 3月 20 14:47 .oracle_jre_usage

drwxr-----. 3 root root 18 3月 20 14:33 .pki

drwx------. 2 root root 76 3月 23 10:46 .ssh

-rw-r--r--. 1 root root 129 12月 29 2013 .tcshrc

-rw-------. 1 root root 2659 3月 24 09:39 .viminfo

[root@c001 ~]# cd .ssh/

[root@c001 .ssh]# ll

总用量 16

-rw-r--r--. 1 root root 391 3月 23 10:46 authorized_keys

-rw-------. 1 root root 1675 3月 23 10:46 id_rsa

-rw-r--r--. 1 root root 391 3月 23 10:46 id_rsa.pub

-rw-r--r--. 1 root root 886 3月 24 09:54 known_hosts

[root@c001 .ssh]# rm -rf *

1. Generate a key pair on each of the three hosts

bash

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

View the public key on each host

bash

[root@c001 .ssh]# cat id_rsa.pub

bash

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDYnKf1mcNITJqn/OVdGcVMdCkUYYv6up/B6aRh1XtrNaaJoK/NlCWi1/8qgYY398dXtCwrBMH3oHWYo818TnMI/IWiNcr8/nZSA90QtSfFp6brp8YhdAp9ot36n83pp7q0IINrJn+iy22MymVbgPUkv2N2NfWZmH4sDzZlkdaBUYQtR3hcfawTUit6H1puCgnks1sCaDKXkaqmhhCATog7A2EFDqbSEctFjA37+CIU/UnP8R0xj2CaeNljW9JWkWIOd0ARl9w8MFI/9nAMTLJiHGGdvS0ir9+A3nl93m3EU6bN9ffmd9hEDj5wbr2kwIfLU7hsCkFU5SiBm2eUVLAd root@c001

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDvXe409IfsXDmRuVaHacM43xZEa/UKdQMkIvvx8TFBw/QWkMnKjeLYalxuZi7TJjKmGs1IhnqgQjfiV1TC1F2oYzn5OMGhnbL/hytTOAAtT8Z2dzsLd/R5A/UXC2sxxFwmc31IGyedcc6T3FBQMcLBNHCpVZvGEnOsnqmeUui3zCoargDFl1aL7rminE0h8jevb34qkq3taBAmLlHxhXnzIL5rDM6iIDO3zFyAkv6E3WVa4cbRha/49FGqcNpbjJXn8NoKIXubnP6QyDF2tnNrkwi2NRHqW2hmhSkRxrqfVxuItsVo6Ty8/urf4Pmc/hyt2PI0xOW/uP8a3pwVdnYj root@c002

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDHzp02SJMCxhfl3Q4nePJW16GWqrZCXpSH76qT+hLwZpOeW8BbmSywi5Bg8fMdL1Bs32Jui+J+CiMgtOxo+koL8rvdo5wvvlE3BR6YB1Oh0sCyEB0vk7gdDjTjy+H2oBXfX8djV7fM/CGWTLXPjeFOsB10OcnW3ZCdb/KmJ9yggpGj9eB8iQMu+rbPvoSHSh+dPqKYr0HmoAtsbnZ47gOYPniV15a5oB0lab/KRyl7LJYqgsgsZm7ynfaMoIfyoz7GRgZU811G+82tY/+4VxG7rUcu+k4ilRrCv6/FxkouoGmzzZcnJWmbSaDebGp7U50Rrh9vXJzBjhx6hB4K/213 root@c003

2. Create the authorized_keys (public key) file

bash

[root@c001 .ssh]# touch authorized_keys

Add the three public keys above to authorized_keys, one key per line
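Pasting keys by hand is error-prone, so the merge step can also be scripted. The sketch below assumes the three id_rsa.pub files have already been collected onto one host under names of your choosing. `merge_pubkeys` is a hypothetical helper, not an OpenSSH tool.

```shell
#!/usr/bin/env bash
# Hypothetical helper: merge public key files into one authorized_keys file.
# Usage: merge_pubkeys TARGET_FILE PUBKEY_FILE...
merge_pubkeys() {
  local target=$1
  shift
  mkdir -p "$(dirname "$target")"
  touch "$target"
  local key_file line
  for key_file in "$@"; do
    while IFS= read -r line || [ -n "$line" ]; do
      [ -z "$line" ] && continue
      # Append only keys that are not already present (exact line match).
      grep -qxF -- "$line" "$target" || printf '%s\n' "$line" >> "$target"
    done < "$key_file"
  done
  # Keep the file readable and writable by its owner only.
  chmod 600 "$target"
}

# Example (file names are placeholders):
#   merge_pubkeys ~/.ssh/authorized_keys c001.pub c002.pub c003.pub
```

Because duplicates are skipped, the helper can be re-run safely when another host is added later.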

3. Distribute the authorized_keys file to the other hosts

bash

[root@c001 .ssh]# scp authorized_keys root@c002:/root/.ssh/

The authenticity of host 'c002 (192.168.100.102)' can't be established.

ECDSA key fingerprint is 40:5f:ac:1a:e4:f4:a7:20:c2:20:2e:9f:0b:84:12:fb.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c002,192.168.100.102' (ECDSA) to the list of known hosts.

root@c002's password:

authorized_keys 100% 1174 1.2KB/s 00:00

[root@c001 .ssh]# scp authorized_keys root@c003:/root/.ssh/

The authenticity of host 'c003 (192.168.100.103)' can't be established.

ECDSA key fingerprint is d9:4e:2a:dd:64:cc:92:80:3b:64:c7:23:3d:96:1a:38.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c003,192.168.100.103' (ECDSA) to the list of known hosts.

root@c003's password:

authorized_keys 100% 1174 1.2KB/s 00:00

3.9 Sync the hosts file to the other hosts

bash

[root@c001 /]# scp /etc/hosts root@c002:/etc/

hosts 100% 223 0.2KB/s 00:00

[root@c001 /]# scp /etc/hosts root@c003:/etc/

hosts

3.10 Verify that passwordless SSH login works

bash

Last login: Tue Mar 24 10:32:12 2026 from 192.168.100.1

[root@c001 ~]# ssh c002

Last failed login: Tue Mar 24 10:34:39 CST 2026 from 192.168.100.101 on ssh:notty

There was 1 failed login attempt since the last successful login.

Last login: Tue Mar 24 09:34:44 2026 from 192.168.100.1

[root@c002 ~]# ssh c003

The authenticity of host 'c003 (192.168.100.103)' can't be established.

ECDSA key fingerprint is d9:4e:2a:dd:64:cc:92:80:3b:64:c7:23:3d:96:1a:38.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c003,192.168.100.103' (ECDSA) to the list of known hosts.

Last login: Tue Mar 24 10:22:10 2026 from 192.168.100.1

[root@c003 ~]# ssh c002

The authenticity of host 'c002 (192.168.100.102)' can't be established.

ECDSA key fingerprint is 40:5f:ac:1a:e4:f4:a7:20:c2:20:2e:9f:0b:84:12:fb.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c002,192.168.100.102' (ECDSA) to the list of known hosts.

Last login: Tue Mar 24 10:38:12 2026 from c001

[root@c002 ~]# ssh c001

The authenticity of host 'c001 (192.168.100.101)' can't be established.

ECDSA key fingerprint is 62:d1:b1:ce:d2:cb:f6:fb:71:7c:4d:2f:ae:7a:87:bd.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c001,192.168.100.101' (ECDSA) to the list of known hosts.

Last login: Tue Mar 24 10:38:01 2026 from 192.168.100.1

3.11 Configure slaves

bash

[root@c001 ~]# cat /opt/bigdata/hadoop-2.7.1/etc/hadoop/slaves

c001

c002

c003

3.12 Distribute Hadoop to the other hosts

bash

[root@c001 ~]# scp -r /opt/bigdata/hadoop-2.7.1 root@c002:/opt/bigdata/

[root@c001 ~]# scp -r /opt/bigdata/hadoop-2.7.1 root@c003:/opt/bigdata/3.13 再次格式化hadoop

bash

hadoop namenode -format只要在namenode上格式化就行

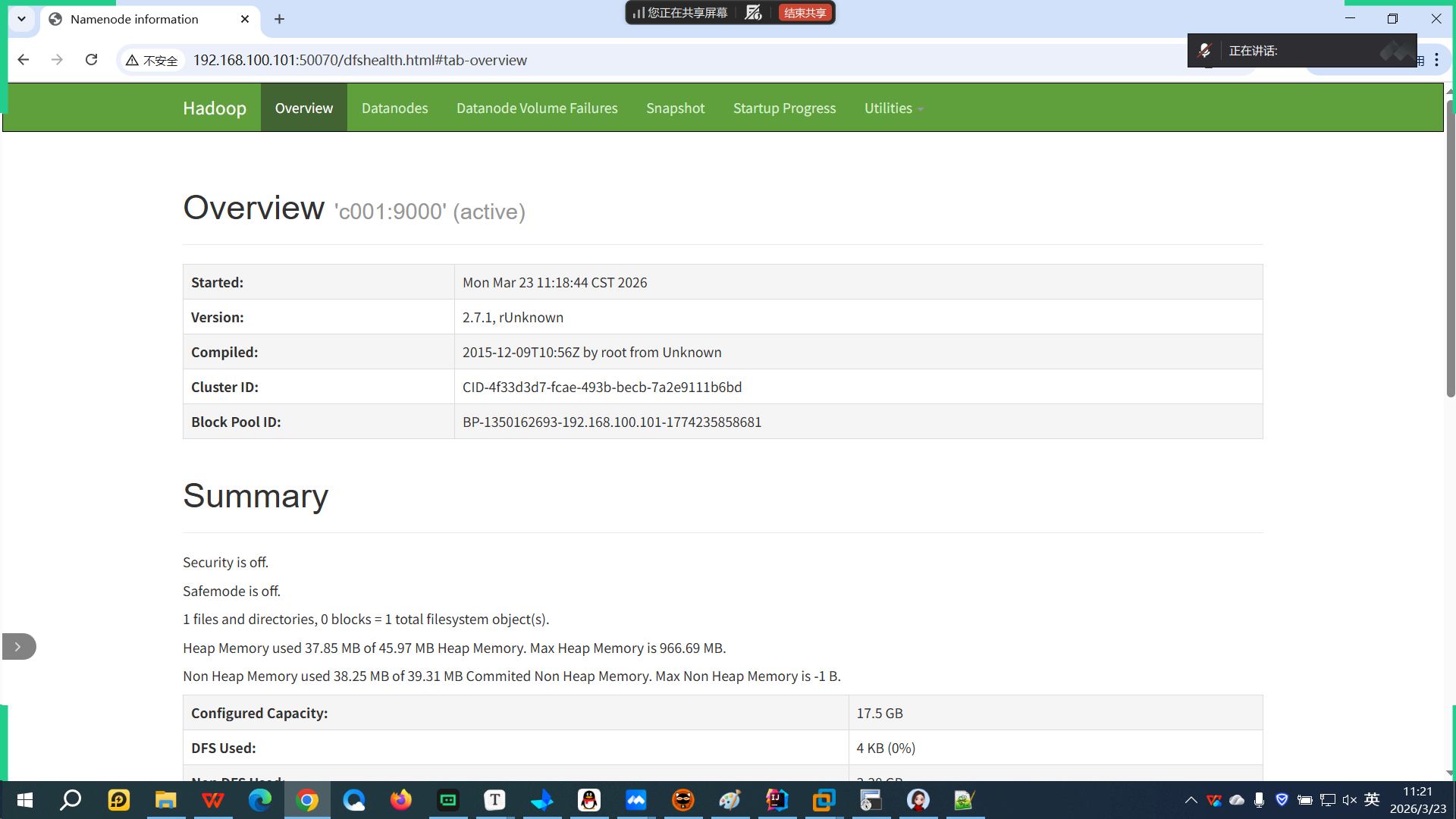

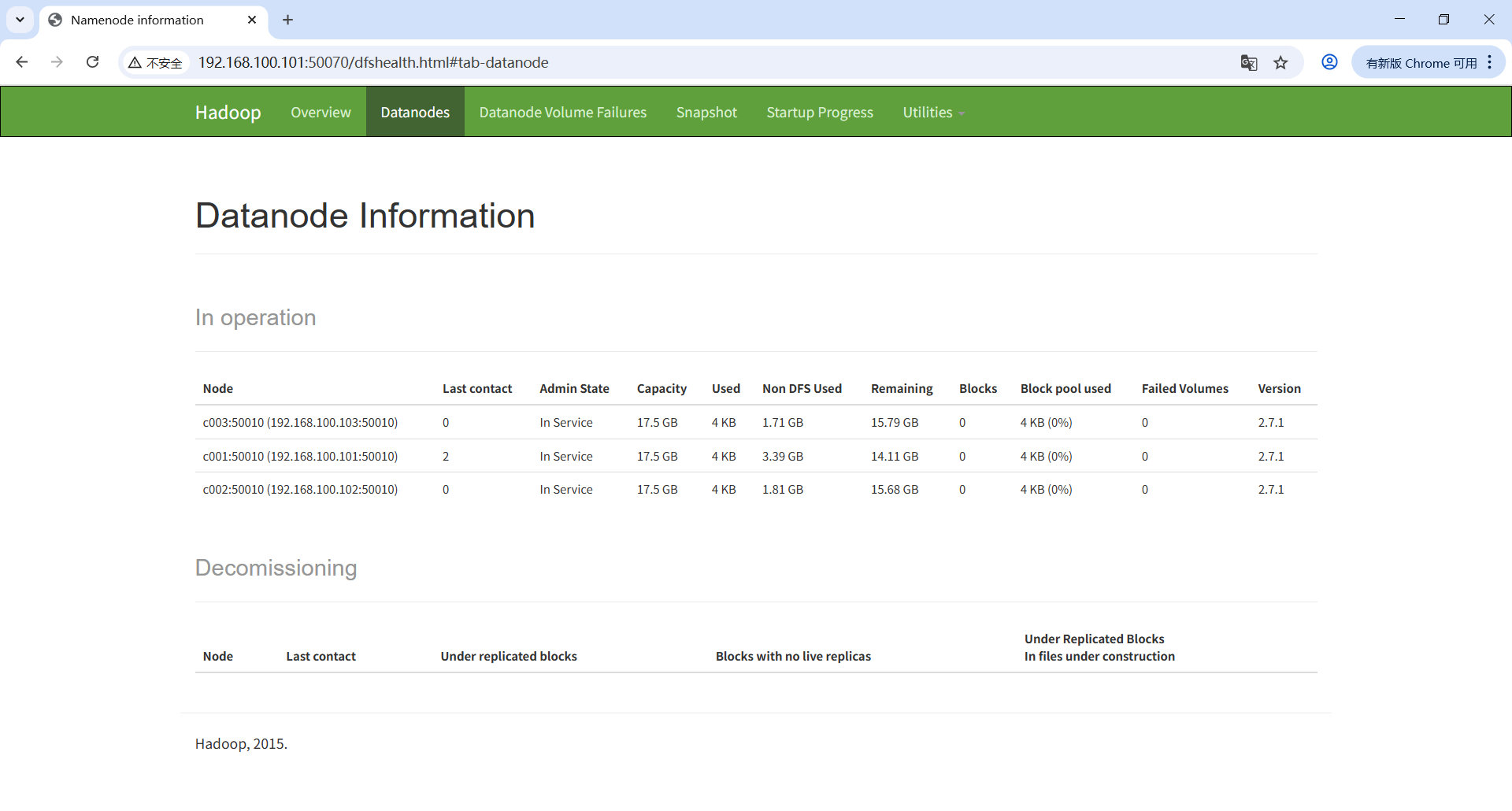

3.14 验证

bash

[root@c001 ~]# jps

2320 NameNode

2592 SecondaryNameNode

2832 NodeManager

2737 ResourceManager

2440 DataNode

3119 Jps

[root@c002 ~]# jps

19412 NodeManager

19508 Jps

19317 DataNode

[root@c003 ~]# jps

2272 NodeManager

2177 DataNode

2369 Jps
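Comparing jps listings by eye gets tedious as the cluster grows. The following sketch reads jps output on standard input and reports which expected daemons are absent. `missing_daemons` is a hypothetical helper name, and the expected daemon lists are taken from the listings above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `jps` output on stdin and print the expected
# daemon names (given as arguments) that do not appear in it.
missing_daemons() {
  local jps_output
  jps_output=$(cat)
  local daemon missing=""
  for daemon in "$@"; do
    # jps prints "<pid> <MainClass>"; compare the class name exactly so that
    # SecondaryNameNode is not mistaken for NameNode.
    if ! printf '%s\n' "$jps_output" | awk '{ print $2 }' | grep -qx -- "$daemon"; then
      missing="$missing $daemon"
    fi
  done
  echo "${missing# }"
}

# Example on a worker such as c002:
#   jps | missing_daemons DataNode NodeManager
# Example on c001:
#   jps | missing_daemons NameNode SecondaryNameNode ResourceManager DataNode NodeManager
```

An empty line means every expected daemon is running; otherwise the logs under the Hadoop logs directory are the next place to look.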

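4. Hive

Contents

Setting up the environment

Using Hive

4.1 Basic Concepts

Hive is a data warehouse platform built on top of Hadoop. Its design goal is to combine data operations on Hadoop with traditional SQL, so that developers who are familiar with SQL can move to the Hadoop platform easily.

Writing a MapReduce program requires implementing both the Mapper and Reducer interfaces just to perform a simple word count. With Hive, you only need to create the corresponding warehouse tables and run an HQL statement to get the same result.

4.2 Setting Up Hive

Prerequisites

Hadoop

MySQL (it does not need to be installed on all three hosts; one is enough, namely the main server that will run Hive)

1. Start the database and the Hadoop cluster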

bash

Last login: Tue Mar 24 15:21:18 2026 from 192.168.100.1

[root@c001 ~]# service mysqld start

Starting MySQL SUCCESS!

[root@c001 ~]# start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [c001]

c001: starting namenode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-namenode-c001.out

c001: starting datanode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-datanode-c001.out

c003: starting datanode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-datanode-c003.out

c002: starting datanode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-datanode-c002.out

Starting secondary namenodes [0.0.0.0]

0.0.0.0: starting secondarynamenode, logging to /opt/bigdata/hadoop-2.7.1/logs/hadoop-root-secondarynamenode-c001.out

starting yarn daemons

starting resourcemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-resourcemanager-c001.out

c002: starting nodemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-nodemanager-c002.out

c003: starting nodemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-nodemanager-c003.out

c001: starting nodemanager, logging to /opt/bigdata/hadoop-2.7.1/logs/yarn-root-nodemanager-c001.out

[root@c001 ~]# jps

2416 NameNode

2544 DataNode

2707 SecondaryNameNode

2968 NodeManager

3226 Jps

2863 ResourceManager

[root@c001 ~]# ssh c002

Last login: Tue Mar 24 15:21:23 2026 from 192.168.100.1

[root@c002 ~]# jps

2112 DataNode

2214 NodeManager

2350 Jps

[root@c002 ~]# ssh c003

Last login: Tue Mar 24 15:21:27 2026 from 192.168.100.1

[root@c003 ~]# jps

2113 DataNode

2215 NodeManager

2351 Jps

[root@c003 ~]# ssh c001

The authenticity of host 'c001 (192.168.100.101)' can't be established.

ECDSA key fingerprint is 62:d1:b1:ce:d2:cb:f6:fb:71:7c:4d:2f:ae:7a:87:bd.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'c001,192.168.100.101' (ECDSA) to the list of known hosts.

Last login: Wed Mar 25 10:18:28 2026 from 192.168.100.1

[root@c001 ~]#

2. Upload and extract Hive

bash

[root@c001 ~]# cd /opt/download/

[root@c001 download]# rz

rz waiting to receive.

Starting zmodem transfer. Press Ctrl+C to cancel.

Transferring apache-hive-2.1.1-bin.tar.gz...

100% 146246 KB 14624 KB/sec 00:00:10 0 Errors

[root@c001 download]# ll

总用量 638828

-rw-r--r--. 1 root root 149756462 7月 10 2024 apache-hive-2.1.1-bin.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

[root@c001 download]# tar -zxf apache-hive-2.1.1-bin.tar.gz

[root@c001 download]# ll

总用量 638832

drwxr-xr-x. 9 root root 4096 3月 25 10:23 apache-hive-2.1.1-bin

-rw-r--r--. 1 root root 149756462 7月 10 2024 apache-hive-2.1.1-bin.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

3. Upload the database driver and copy the driver JAR into Hive's lib directory

bash

[root@c001 download]# rz

rz waiting to receive.

Starting zmodem transfer. Press Ctrl+C to cancel.

Transferring mysql-connector-java-8.0.11.tar.gz...

100% 4916 KB 4916 KB/sec 00:00:01 0 Errors

[root@c001 download]# ll

总用量 643752

drwxr-xr-x. 9 root root 4096 3月 25 10:23 apache-hive-2.1.1-bin

-rw-r--r--. 1 root root 149756462 7月 10 2024 apache-hive-2.1.1-bin.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

-rw-r--r--. 1 root root 5034718 11月 11 2024 mysql-connector-java-8.0.11.tar.gz

[root@c001 download]# tar -zxf mysql-connector-java-8.0.11.tar.gz

[root@c001 download]# ll

总用量 643756

drwxr-xr-x. 9 root root 4096 3月 25 10:23 apache-hive-2.1.1-bin

-rw-r--r--. 1 root root 149756462 7月 10 2024 apache-hive-2.1.1-bin.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

drwxr-xr-x. 4 root root 4096 3月 25 2018 mysql-connector-java-8.0.11

-rw-r--r--. 1 root root 5034718 11月 11 2024 mysql-connector-java-8.0.11.tar.gz

[root@c001 download]# cd mysql-connector-java-8.0.11

[root@c001 mysql-connector-java-8.0.11]# ll

总用量 2396

-rw-r--r--. 1 root root 81591 3月 25 2018 build.xml

-rw-r--r--. 1 root root 254260 3月 25 2018 CHANGES

drwxr-xr-x. 2 root root 4096 3月 25 10:24 lib

-rw-r--r--. 1 root root 64997 3月 25 2018 LICENSE

-rw-r--r--. 1 root root 2036609 3月 25 2018 mysql-connector-java-8.0.11.jar

-rw-r--r--. 1 root root 632 3月 25 2018 README

drwxr-xr-x. 8 root root 80 3月 25 2018 src

[root@c001 mysql-connector-java-8.0.11]# cp mysql-connector-java-8.0.11.jar ../apache-hive-2.1.1-bin/lib/

[root@c001 mysql-connector-java-8.0.11]# cd ../apache-hive-2.1.1-bin/lib/

[root@c001 lib]# ll | grep mysql

-rw-r--r--. 1 root root 2036609 3月 25 10:25 mysql-connector-java-8.0.11.jar

4. Move Hive to the installation path and configure the environment variables

bash

[root@c001 download]# pwd

/opt/download

[root@c001 download]# ll

总用量 643756

drwxr-xr-x. 9 root root 4096 3月 25 10:23 apache-hive-2.1.1-bin

-rw-r--r--. 1 root root 149756462 7月 10 2024 apache-hive-2.1.1-bin.tar.gz

-rw-r--r--. 1 root root 189815615 3月 22 2024 jdk-8u162-linux-x64.tar.gz

-rw-r--r--. 1 root root 314581668 4月 24 2024 mysql-5.6.35-linux-glibc2.5-x86_64.tar.gz

drwxr-xr-x. 4 root root 4096 3月 25 2018 mysql-connector-java-8.0.11

-rw-r--r--. 1 root root 5034718 11月 11 2024 mysql-connector-java-8.0.11.tar.gz

[root@c001 download]# mv apache-hive-2.1.1-bin /opt/bigdata/hive-2.1.1

[root@c001 download]# cd /opt/bigdata/hive-2.1.1/

[root@c001 hive-2.1.1]# pwd

/opt/bigdata/hive-2.1.1

[root@c001 hive-2.1.1]# ll

总用量 84

drwxr-xr-x. 3 root root 4096 3月 25 10:23 bin

drwxr-xr-x. 2 root root 4096 3月 25 10:23 conf

drwxr-xr-x. 4 root root 32 3月 25 10:23 examples

drwxr-xr-x. 7 root root 63 3月 25 10:23 hcatalog

drwxr-xr-x. 2 root root 43 3月 25 10:23 jdbc

drwxr-xr-x. 4 root root 8192 3月 25 10:25 lib

-rw-r--r--. 1 root root 29003 11月 29 2016 LICENSE

-rw-r--r--. 1 root root 578 11月 29 2016 NOTICE

-rw-r--r--. 1 root root 4122 11月 29 2016 README.txt

-rw-r--r--. 1 root root 18501 11月 30 2016 RELEASE_NOTES.txt

drwxr-xr-x. 4 root root 33 3月 25 10:23 scripts

bash

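# Hive environment variables

export HIVE_HOME=/opt/bigdata/hive-2.1.1

export PATH=$PATH:$HIVE_HOME/bin

Remember to reload the profile you edited so that the new variables take effect in the current shell.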
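The two exports can be added and reloaded in one pass. The following is a minimal sketch, not the author's exact procedure: the helper name `add_hive_env` is hypothetical, and the demo writes to a scratch file because the notes do not say which profile file was edited.

```shell
#!/usr/bin/env bash
set -euo pipefail

# add_hive_env PROFILE  (hypothetical helper)
# Appends the Hive exports to PROFILE unless HIVE_HOME is already exported there.
add_hive_env() {
  local profile="$1"
  if grep -q '^export HIVE_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi
  cat >> "$profile" <<'EOF'
# Hive environment variables
export HIVE_HOME=/opt/bigdata/hive-2.1.1
export PATH=$PATH:$HIVE_HOME/bin
EOF
}

# Demo against a scratch file; point it at your real profile once reviewed.
profile="$(mktemp)"
add_hive_env "$profile"
add_hive_env "$profile"   # second call is a no-op
source "$profile"         # reload so the current shell sees the variables
echo "$HIVE_HOME"
```

Checking for an existing `export HIVE_HOME=` line keeps the profile from accumulating duplicate entries when the step is repeated.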

5. Prepare the configuration files

bash

[root@c001 conf]# pwd

/opt/bigdata/hive-2.1.1/conf

[root@c001 conf]# ll

总用量 260

-rw-r--r--. 1 root root 1596 11月 29 2016 beeline-log4j2.properties.template

-rw-r--r--. 1 root root 229198 11月 30 2016 hive-default.xml.template

-rw-r--r--. 1 root root 2378 11月 29 2016 hive-env.sh.template

-rw-r--r--. 1 root root 2274 11月 29 2016 hive-exec-log4j2.properties.template

-rw-r--r--. 1 root root 2925 11月 29 2016 hive-log4j2.properties.template

-rw-r--r--. 1 root root 2060 11月 29 2016 ivysettings.xml

-rw-r--r--. 1 root root 2719 11月 29 2016 llap-cli-log4j2.properties.template

-rw-r--r--. 1 root root 4353 11月 29 2016 llap-daemon-log4j2.properties.template

-rw-r--r--. 1 root root 2662 11月 29 2016 parquet-logging.properties

[root@c001 conf]# cp hive-default.xml.template hive-site.xml

[root@c001 conf]# cp hive-env.sh.template hive-env.sh

[root@c001 conf]# ll

总用量 488

-rw-r--r--. 1 root root 1596 11月 29 2016 beeline-log4j2.properties.template

-rw-r--r--. 1 root root 229198 11月 30 2016 hive-default.xml.template

-rw-r--r--. 1 root root 2378 3月 25 10:35 hive-env.sh

-rw-r--r--. 1 root root 2378 11月 29 2016 hive-env.sh.template

-rw-r--r--. 1 root root 2274 11月 29 2016 hive-exec-log4j2.properties.template

-rw-r--r--. 1 root root 2925 11月 29 2016 hive-log4j2.properties.template

-rw-r--r--. 1 root root 229198 3月 25 10:35 hive-site.xml

-rw-r--r--. 1 root root 2060 11月 29 2016 ivysettings.xml

-rw-r--r--. 1 root root 2719 11月 29 2016 llap-cli-log4j2.properties.template

-rw-r--r--. 1 root root 4353 11月 29 2016 llap-daemon-log4j2.properties.template

-rw-r--r--. 1 root root 2662 11月 29 2016 parquet-logging.properties

6. Create a temporary directory

bash

[root@c001 hive-2.1.1]# pwd

/opt/bigdata/hive-2.1.1

[root@c001 hive-2.1.1]# ll

总用量 84

drwxr-xr-x. 3 root root 4096 3月 25 10:23 bin

drwxr-xr-x. 2 root root 4096 3月 25 10:35 conf

drwxr-xr-x. 4 root root 32 3月 25 10:23 examples

drwxr-xr-x. 7 root root 63 3月 25 10:23 hcatalog

drwxr-xr-x. 2 root root 43 3月 25 10:23 jdbc

drwxr-xr-x. 4 root root 8192 3月 25 10:25 lib

-rw-r--r--. 1 root root 29003 11月 29 2016 LICENSE

-rw-r--r--. 1 root root 578 11月 29 2016 NOTICE

-rw-r--r--. 1 root root 4122 11月 29 2016 README.txt

-rw-r--r--. 1 root root 18501 11月 30 2016 RELEASE_NOTES.txt

drwxr-xr-x. 4 root root 33 3月 25 10:23 scripts

[root@c001 hive-2.1.1]# mkdir temp

[root@c001 hive-2.1.1]# chmod 777 temp/

[root@c001 hive-2.1.1]# ll

总用量 84

drwxr-xr-x. 3 root root 4096 3月 25 10:23 bin

drwxr-xr-x. 2 root root 4096 3月 25 10:35 conf

drwxr-xr-x. 4 root root 32 3月 25 10:23 examples

drwxr-xr-x. 7 root root 63 3月 25 10:23 hcatalog

drwxr-xr-x. 2 root root 43 3月 25 10:23 jdbc

drwxr-xr-x. 4 root root 8192 3月 25 10:25 lib

-rw-r--r--. 1 root root 29003 11月 29 2016 LICENSE

-rw-r--r--. 1 root root 578 11月 29 2016 NOTICE

-rw-r--r--. 1 root root 4122 11月 29 2016 README.txt

-rw-r--r--. 1 root root 18501 11月 30 2016 RELEASE_NOTES.txt

drwxr-xr-x. 4 root root 33 3月 25 10:23 scripts

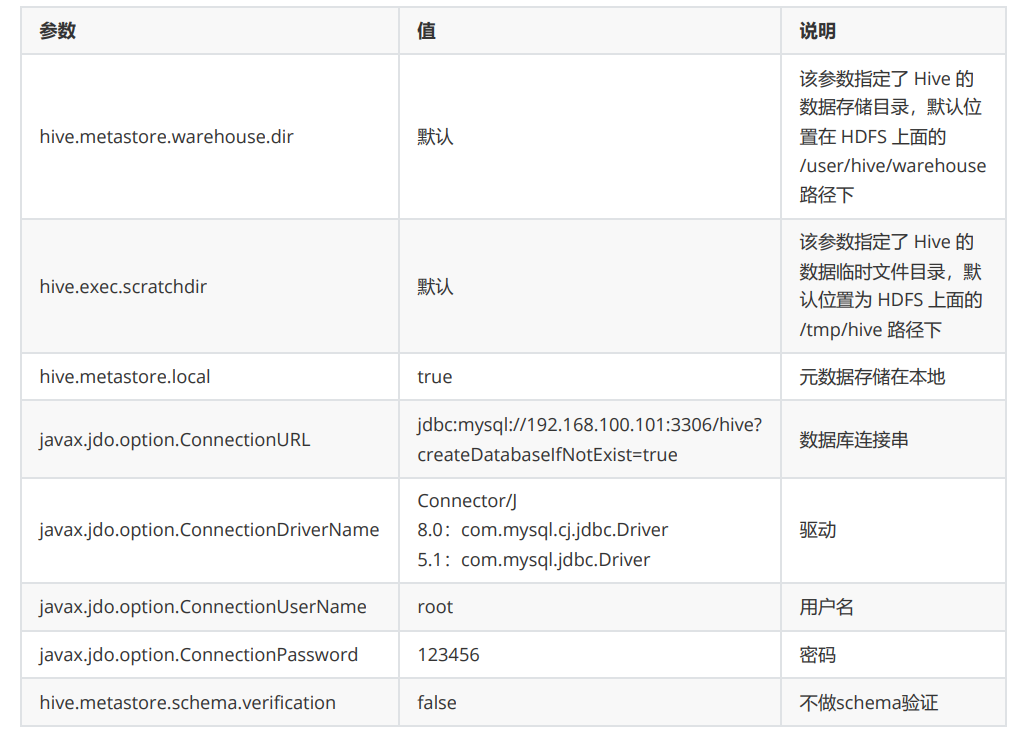

drwxrwxrwx. 2 root root 6 3月 25 10:37 temp7、修改hive-site.xml

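In hive-site.xml, change every value that contains ${system:java.io.tmpdir} to a concrete path, for example the temp directory created in step 6 (/opt/bigdata/hive-2.1.1/temp).

In hive-site.xml, replace every ${system:user.name} with ${user.name}.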
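Editing every occurrence by hand is error-prone, so the two substitutions can be scripted. This is a sketch under assumptions: the function name `rewrite_placeholders` is hypothetical, the temp path is the directory created in step 6, and the demo runs on a throwaway file rather than the real hive-site.xml.

```shell
#!/usr/bin/env bash
set -euo pipefail

# rewrite_placeholders FILE TMPDIR  (hypothetical helper)
# Replaces ${system:java.io.tmpdir} with TMPDIR and
# ${system:user.name} with ${user.name}, keeping FILE.bak as a backup.
# TMPDIR must not contain '#' or '&', which are special in the sed expression.
rewrite_placeholders() {
  local file="$1" tmpdir="$2"
  cp "$file" "$file.bak"
  sed \
    -e 's#\${system:java\.io\.tmpdir}#'"$tmpdir"'#g' \
    -e 's#\${system:user\.name}#${user.name}#g' \
    "$file.bak" > "$file"
}

# Demo on a scratch file with one line in the style of hive-site.xml.
demo="$(mktemp)"
cat > "$demo" <<'EOF'
<value>${system:java.io.tmpdir}/${system:user.name}</value>
EOF
rewrite_placeholders "$demo" /opt/bigdata/hive-2.1.1/temp
cat "$demo"
```

For the real file the call would be `rewrite_placeholders /opt/bigdata/hive-2.1.1/conf/hive-site.xml /opt/bigdata/hive-2.1.1/temp`. The `.bak` copy makes it easy to diff the result before starting Hive.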

Create the data directories on HDFS

bash

[root@c001 hive-2.1.1]# cd temp/

[root@c001 temp]# pwd

/opt/bigdata/hive-2.1.1/temp

[root@c001 temp]# hadoop fs -ls /

[root@c001 temp]# hadoop fs -mkdir -p /user/hive/warehouse

[root@c001 temp]# hadoop fs -ls /

Found 1 items

drwxr-xr-x - root supergroup 0 2026-03-25 10:45 /user

[root@c001 temp]# hadoop fs -chmod -r 777 /user

chmod: `777': No such file or directory

[root@c001 temp]# hadoop fs -chmod -R 777 /user

[root@c001 temp]# hadoop fs -ls /

Found 1 items

drwxrwxrwx - root supergroup 0 2026-03-25 10:45 /user

[root@c001 temp]# hadoop fs -mkdir -p /tmp/hive

[root@c001 temp]# hadoop fs -chmod -R 777 /tmp

[root@c001 temp]# hadoop fs -ls /

Found 2 items

drwxrwxrwx - root supergroup 0 2026-03-25 10:46 /tmp

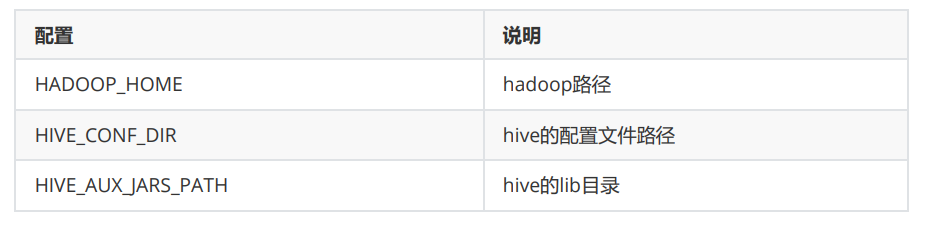

drwxrwxrwx - root supergroup 0 2026-03-25 10:45 /user8、修改hive-env.sh
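The notes give this step only as a heading, so the exact edits are not recorded here. A typical hive-env.sh sets the variables that ship commented out in hive-env.sh.template. The values below are assumptions derived from the paths used elsewhere in this walkthrough, not the author's confirmed settings.

```shell
# Fragment for /opt/bigdata/hive-2.1.1/conf/hive-env.sh (assumed values)

# Hadoop installation that Hive should use.
export HADOOP_HOME=/opt/bigdata/hadoop-2.7.1

# Directory containing hive-site.xml and the other Hive config files.
export HIVE_CONF_DIR=/opt/bigdata/hive-2.1.1/conf

# Extra jars for Hive; here the lib directory that holds the MySQL connector.
export HIVE_AUX_JARS_PATH=/opt/bigdata/hive-2.1.1/lib
```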

9. Initialize the metastore schema

bash

schematool -initSchema -dbType mysql

Check the initialization result

bash

[root@c001 /]# schematool -initSchema -dbType mysql

which: no hbase in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/usr/local/java/bin:/usr/local/mysql/bin:/opt/bigdata/hadoop-2.7.1/bin:/opt/bigdata/hadoop-2.7.1/sbin:/root/bin:/usr/local/java/bin:/usr/local/mysql/bin:/opt/bigdata/hadoop-2.7.1/bin:/opt/bigdata/hadoop-2.7.1/sbin:/opt/bigdata/hive-2.1.1/bin)

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/bigdata/hive-2.1.1/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Metastore connection URL: jdbc:mysql://192.168.100.101:3306/hive?createDatabaseIfNotExist=true

Metastore Connection Driver : com.mysql.cj.jdbc.Driver

Metastore connection User: root

Starting metastore schema initialization to 2.1.0

Initialization script hive-schema-2.1.0.mysql.sql

Initialization script completed

schemaTool completed

bash

[root@c001 /]# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 10

Server version: 5.6.35 MySQL Community Server (GPL)

Copyright (c) 2000, 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> use hive;

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Database changed

mysql> show tables;

+---------------------------+

| Tables_in_hive |

+---------------------------+

| AUX_TABLE |

| BUCKETING_COLS |

| CDS |

| COLUMNS_V2 |

| COMPACTION_QUEUE |

| COMPLETED_COMPACTIONS |

| COMPLETED_TXN_COMPONENTS |

| DATABASE_PARAMS |

| DBS |

| DB_PRIVS |

| DELEGATION_TOKENS |

| FUNCS |

| FUNC_RU |

| GLOBAL_PRIVS |

| HIVE_LOCKS |

| IDXS |

| INDEX_PARAMS |

| KEY_CONSTRAINTS |

| MASTER_KEYS |

| NEXT_COMPACTION_QUEUE_ID |

| NEXT_LOCK_ID |

| NEXT_TXN_ID |

| NOTIFICATION_LOG |

| NOTIFICATION_SEQUENCE |

| NUCLEUS_TABLES |

| PARTITIONS |

| PARTITION_EVENTS |

| PARTITION_KEYS |

| PARTITION_KEY_VALS |

| PARTITION_PARAMS |

| PART_COL_PRIVS |

| PART_COL_STATS |

| PART_PRIVS |

| ROLES |

| ROLE_MAP |

| SDS |

| SD_PARAMS |

| SEQUENCE_TABLE |

| SERDES |

| SERDE_PARAMS |

| SKEWED_COL_NAMES |

| SKEWED_COL_VALUE_LOC_MAP |

| SKEWED_STRING_LIST |

| SKEWED_STRING_LIST_VALUES |

| SKEWED_VALUES |

| SORT_COLS |

| TABLE_PARAMS |

| TAB_COL_STATS |

| TBLS |

| TBL_COL_PRIVS |

| TBL_PRIVS |

| TXNS |

| TXN_COMPONENTS |

| TYPES |

| TYPE_FIELDS |

| VERSION |

| WRITE_SET |

+---------------------------+

57 rows in set (0.00 sec)
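Besides listing the tables in MySQL, the schema version can be read back through Hive's own tool. The sketch below wraps `schematool -dbType mysql -info` in a small guard; the function name `check_metastore_schema` is hypothetical, and the call assumes the same hive-site.xml connection settings used for `-initSchema`.

```shell
#!/usr/bin/env bash

# check_metastore_schema  (hypothetical helper)
# Prints the metastore connection details and schema version,
# or returns 127 if schematool cannot be found on PATH.
check_metastore_schema() {
  if ! command -v schematool >/dev/null 2>&1; then
    echo "schematool not found on PATH; is \$HIVE_HOME/bin exported?" >&2
    return 127
  fi
  schematool -dbType mysql -info
}
```

Run `check_metastore_schema` after initialization. A missing `schematool` usually means the environment variables from step 4 were not reloaded.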

bash

[root@c001 /]# hive

which: no hbase in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/usr/local/java/bin:/usr/local/mysql/bin:/opt/bigdata/hadoop-2.7.1/bin:/opt/bigdata/hadoop-2.7.1/sbin:/root/bin:/usr/local/java/bin:/usr/local/mysql/bin:/opt/bigdata/hadoop-2.7.1/bin:/opt/bigdata/hadoop-2.7.1/sbin:/opt/bigdata/hive-2.1.1/bin)

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/bigdata/hive-2.1.1/lib/log4j-slf4j-impl-2.4.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/bigdata/hadoop-2.7.1/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: