The video doesn't show the configuration it used, so here is what I picked:

Embodied robotics / IsaacSim 5.1 + IsaacLab 2.3.2 (pip-installed)

1× RTX 4090 (24 GB)

I strongly recommend following the written tutorial rather than the video. The video doesn't match the tutorial.

Tutorial:

every-embodied/07-机器人操作、运动控制/Locomotion/01春晚舞蹈机器人复刻.md on the main branch of datawhalechina/every-embodied (the Spring Festival Gala dancing-robot replication guide)

List the installed pip packages:

pip list

Route GitHub through a proxy (this is a git setting, not a pip one):

git config --global url."https://gh-proxy.org/https://github.com/".insteadOf "https://github.com/"
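The sketch below shows what this insteadOf rule actually does. Git replaces the configured prefix of any matching URL with the proxy base, and the git-config documentation says the longest matching prefix wins when several rules apply. This is only an illustration of that rule. `rewrite_url` and `RULES` are hypothetical names, and git performs the real rewrite itself.

```python
def rewrite_url(url: str, rules: dict[str, str]) -> str:
    """Apply git-style url.<base>.insteadOf rules to a single URL.

    `rules` maps an insteadOf prefix to the base that replaces it.
    If several prefixes match, the longest one is used, as git does.
    """
    matches = [prefix for prefix in rules if url.startswith(prefix)]
    if not matches:
        return url  # no rule applies, URL is used unchanged
    best = max(matches, key=len)
    return rules[best] + url[len(best):]


# The single rule created by the git config command above.
RULES = {"https://github.com/": "https://gh-proxy.org/https://github.com/"}

print(rewrite_url("https://github.com/taeyoun811/GMR.git", RULES))
# https://gh-proxy.org/https://github.com/taeyoun811/GMR.git
```

Only URLs that start with https://github.com/ are affected. Hugging Face or hf-mirror URLs pass through untouched.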

Show the current pip configuration:

pip config list

cd

cd /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot

Activate the environment:

conda activate phmr

git clone https://github.com/datawhalechina/every-embodied.git

cd every-embodied/07-机器人操作、运动控制/Locomotion/video2robot

cd third_party

3. Clone GMR

git clone --depth 1 https://github.com/taeyoun811/GMR.git GMR

4. Clone PromptHMR

git clone --depth 1 https://github.com/taeyoun811/PromptHMR.git PromptHMR

cd ..

git apply patches/main.patch

git -C third_party/PromptHMR apply ../../patches/prompthmr.patch

git -C third_party/GMR apply ../../patches/gmr.patch

conda env create -f envs/gmr.yml

This fails with the error below. I ignored it and kept going:

ERROR: No matching distribution found for video2robot==0.1.0

In phmr.yml, change the prefix to:

prefix: /root/gpufree-data/conda_envs/phmr
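The prefix edit can also be scripted. This is a minimal sketch. It assumes envs/phmr.yml is an ordinary conda environment file, with prefix: (if present) at the top level. It edits lines instead of parsing YAML, so comments and key order are preserved. `set_conda_prefix` and `update_env_file` are hypothetical helper names.

```python
import re
from pathlib import Path

# Matches a top-level "prefix:" line (no indentation) in a conda env file.
PREFIX_LINE = re.compile(r"^prefix:.*$", flags=re.MULTILINE)


def set_conda_prefix(yml_text: str, new_prefix: str) -> str:
    """Return yml_text with its top-level prefix: line set to new_prefix.

    Appends the key if the file has none. Line-based on purpose: no YAML
    library needed, and comments and key order are left untouched.
    """
    new_line = f"prefix: {new_prefix}"
    if PREFIX_LINE.search(yml_text):
        # A function as the replacement keeps backslashes in new_prefix literal.
        return PREFIX_LINE.sub(lambda _match: new_line, yml_text, count=1)
    return yml_text.rstrip("\n") + "\n" + new_line + "\n"


def update_env_file(path: str, new_prefix: str) -> None:
    """Rewrite an environment file in place, e.g. envs/phmr.yml."""
    file = Path(path)
    text = file.read_text(encoding="utf-8")
    file.write_text(set_conda_prefix(text, new_prefix), encoding="utf-8")
```

To reproduce the manual edit, call update_env_file("envs/phmr.yml", "/root/gpufree-data/conda_envs/phmr").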

conda config --add envs_dirs /root/gpufree-data/conda_envs

conda env create -f envs/phmr.yml

conda activate gmr

cd /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot

pip install -e .

pip install loop-rate-limiters

pip install smplx

pip install imageio

pip install mink

pip install rich

pip install imageio-ffmpeg
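These packages were installed one at a time, so a quick import check saves running a script just to find out what is still missing. This is a sketch meant to run inside the gmr environment. The import names in `EXPECTED_MODULES` are my assumption of how each pip package is imported.

```python
import importlib.util

# Assumed import names for the pip packages above; the pip name and the
# import name differ for some of them (e.g. imageio-ffmpeg -> imageio_ffmpeg).
EXPECTED_MODULES = [
    "loop_rate_limiters",
    "smplx",
    "imageio",
    "mink",
    "rich",
    "imageio_ffmpeg",
]


def missing_modules(names: list[str]) -> list[str]:
    """Return the top-level module names that cannot be found on sys.path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(EXPECTED_MODULES)
    print("all present" if not missing else "missing: " + ", ".join(missing))
```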

From the original video, at around the 9-minute mark:

(base) root@gpufree-container:~/gpufree-data#

conda create -p /root/gpufree-data/conda_envs/phmr --clone /root/gpu-freedata/conda_envs/phmr

conda env remove -p /root/gpu-freedata/conda_envs/phmr

conda env list

conda config --add envs_dirs /root/gpufree-data/conda_envs

conda env list

With my own paths:

conda create -p /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/envs/phmr --clone /root/gpu-freedata/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/envs/phmr

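Result: EnvironmentLocationNotFound: Not a conda environment: /root/gpu-freedata/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/envs/phmr (note that the clone source is spelled gpu-freedata, while everything else here lives under gpufree-data)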
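Before retrying a --clone, it helps to check whether the source directory is a conda environment at all. This is a heuristic sketch. It assumes conda recognizes an environment by its conda-meta/history file, which I believe is the marker recent conda versions look for. `looks_like_conda_env` is a hypothetical helper.

```python
from pathlib import Path


def looks_like_conda_env(prefix: str) -> bool:
    """Heuristic: treat prefix as a conda environment if conda-meta/history exists.

    Assumption: this matches the marker file conda checks; a plain directory
    or a mistyped path fails the check.
    """
    return (Path(prefix) / "conda-meta" / "history").is_file()


if __name__ == "__main__":
    for candidate in (
        "/root/gpu-freedata/conda_envs/phmr",  # clone source used above
        "/root/gpufree-data/conda_envs/phmr",  # prefix set in phmr.yml
    ):
        print(candidate, looks_like_conda_env(candidate))
```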

conda env remove -p /root/gpu-freedata/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/envs/phmr

conda env list

conda config --add envs_dirs /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/envs

conda env list

I couldn't be bothered to get the clone working, so I skipped it.

mkdir -p python_libs

git clone https://github.com/Arthur151/chumpy python_libs/chumpy

python -m pip install -e python_libs/chumpy --no-build-isolation

echo 'export PYTHONPATH=$PYTHONPATH:/root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/third_party/PromptHMR' >> ~/.bashrc

source ~/.bashrc
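Two details of the echo >> ~/.bashrc approach are easy to miss. First, only shells started (or re-sourced) afterwards see the new PYTHONPATH. Second, every run of the echo appends another copy of the line. This sketch checks both. `on_pythonpath` and `count_bashrc_exports` are hypothetical helpers, and `PROMPTHMR_DIR` is the path used above.

```python
import os
import sys
from typing import Optional

PROMPTHMR_DIR = (
    "/root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/"
    "video2robot/third_party/PromptHMR"
)


def pythonpath_entries(value: str) -> list[str]:
    """Split a PYTHONPATH-style string into normalized, non-empty entries."""
    return [os.path.normpath(p) for p in value.split(os.pathsep) if p]


def on_pythonpath(directory: str, value: Optional[str] = None) -> bool:
    """Check whether directory is listed in PYTHONPATH (the live variable by default)."""
    if value is None:
        value = os.environ.get("PYTHONPATH", "")
    return os.path.normpath(directory) in pythonpath_entries(value)


def count_bashrc_exports(bashrc_text: str, directory: str) -> int:
    """Count lines that add directory to PYTHONPATH (one per echo run)."""
    return sum(
        1 for line in bashrc_text.splitlines()
        if "PYTHONPATH" in line and directory in line
    )


if __name__ == "__main__":
    print("in PYTHONPATH:", on_pythonpath(PROMPTHMR_DIR))
    live = {os.path.normpath(p) for p in sys.path}
    print("in sys.path:", os.path.normpath(PROMPTHMR_DIR) in live)
```

If count_bashrc_exports returns more than 1 for the contents of ~/.bashrc, the duplicates are harmless but can be deleted.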

conda activate phmr

conda install -c conda-forge eigen -y

Build lietorch:

mkdir -p python_libs

cd python_libs

git clone https://github.com/princeton-vl/lietorch.git

cd lietorch

git submodule update --init --recursive

python setup.py install

cd ../..

git-lfs install

Skipped for now:

git lfs clone https://huggingface.co/Datawhale/spring-festival-wushu-robot-replication-model

Or, through the mirror for mainland China:

git lfs clone https://hf-mirror.com/Datawhale/spring-festival-wushu-robot-replication-model

Install detectron2:

git clone https://github.com/facebookresearch/detectron2.git

cd /root/gpufree-data/detectron2

pip install -e . --no-build-isolation

git clone https://github.com/facebookresearch/segment-anything-2.git

cd segment-anything-2

pip install -e . --no-build-isolation

sed -i 's/load_video_frames, load_video_frames_from_np/load_video_frames/g' /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/third_party/PromptHMR/pipeline/detector/sam2_video_predictor.py
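The sed line removes load_video_frames_from_np from PromptHMR's sam2_video_predictor.py. This is presumably an import list, and the installed SAM 2 presumably doesn't provide that name. sed gives no feedback on whether anything changed, so here is a Python equivalent that reports the number of replacements. `patch_source` and `patch_file` are hypothetical names. The replacement is a literal string swap, the same as the sed expression, because the pattern contains no regex metacharacters.

```python
from pathlib import Path

OLD = "load_video_frames, load_video_frames_from_np"
NEW = "load_video_frames"


def patch_source(text: str) -> tuple[str, int]:
    """Equivalent of s/OLD/NEW/g: return (patched_text, number_of_replacements)."""
    return text.replace(OLD, NEW), text.count(OLD)


def patch_file(path: str) -> int:
    """Apply the patch in place. A second run finds nothing and returns 0."""
    file = Path(path)
    new_text, count = patch_source(file.read_text(encoding="utf-8"))
    if count:
        file.write_text(new_text, encoding="utf-8")
    return count
```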

mkdir -p /root/.cache/torch/hub/checkpoints && rm -f /root/.cache/torch/hub/v0.10.0.zip.*.partial && wget -O /root/.cache/torch/hub/v0.10.0.zip https://github.com/pytorch/vision/zipball/v0.10.0 && wget -O /root/.cache/torch/hub/checkpoints/deeplabv3_resnet50_coco-cd0a2569.pth https://download.pytorch.org/models/deeplabv3_resnet50_coco-cd0a2569.pth && ls -lh /root/.cache/torch/hub/v0.10.0.zip /root/.cache/torch/hub/checkpoints/deeplabv3_resnet50_coco-cd0a2569.pth
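Downloading with wget skips the integrity check that torch.hub can perform. According to the torch.hub documentation, with check_hash=True the file name must follow the filename-&lt;sha256&gt;.ext convention, where &lt;sha256&gt; is the first eight or more hex digits of the file's SHA256. The cd0a2569 in deeplabv3_resnet50_coco-cd0a2569.pth is such a prefix. This sketch performs the same comparison by hand, which helps catch a truncated download. `checkpoint_matches_name` is a hypothetical helper.

```python
import hashlib
import re
from pathlib import Path

# torch.hub's naming convention: <name>-<leading hex digits of SHA256>.<ext>
HASH_IN_NAME = re.compile(r"-([a-f0-9]+)\.")


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large checkpoints need little memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def checkpoint_matches_name(path: Path) -> bool:
    """True if the hash prefix embedded in the file name matches the file's SHA256."""
    match = HASH_IN_NAME.search(path.name)
    if match is None:
        raise ValueError(f"no hash prefix in {path.name}")
    return sha256_of(path).startswith(match.group(1))


if __name__ == "__main__":
    ckpt = Path("/root/.cache/torch/hub/checkpoints/deeplabv3_resnet50_coco-cd0a2569.pth")
    if ckpt.is_file():
        print(ckpt.name, "OK" if checkpoint_matches_name(ckpt) else "hash mismatch, re-download")
```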

conda activate phmr

python -m pip install -U fastapi "uvicorn[standard]" jinja2 python-multipart

pkill -f "video2robot/visualization/robot_viser.py"

cd /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/

export VISER_FIXED_PORT=8789
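The pkill above clears out any robot_viser.py left over from an earlier run. If something still holds a port, the server fails to start. This sketch checks whether ports 8000 (the uvicorn --port) and 8789 are free before launching. I am assuming VISER_FIXED_PORT is the port the viser server binds to, which its name suggests. `port_is_free` is a hypothetical helper.

```python
import socket

# 8000: uvicorn --port below; 8789: VISER_FIXED_PORT (assumed to be viser's port).
PORTS = [8000, 8789]


def port_is_free(port: int, host: str = "0.0.0.0") -> bool:
    """Return True if host:port can be bound, i.e. no other socket holds it."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False
    return True


if __name__ == "__main__":
    for port in PORTS:
        print(port, "free" if port_is_free(port) else "in use (old server still running?)")
```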

Launch the GUI:

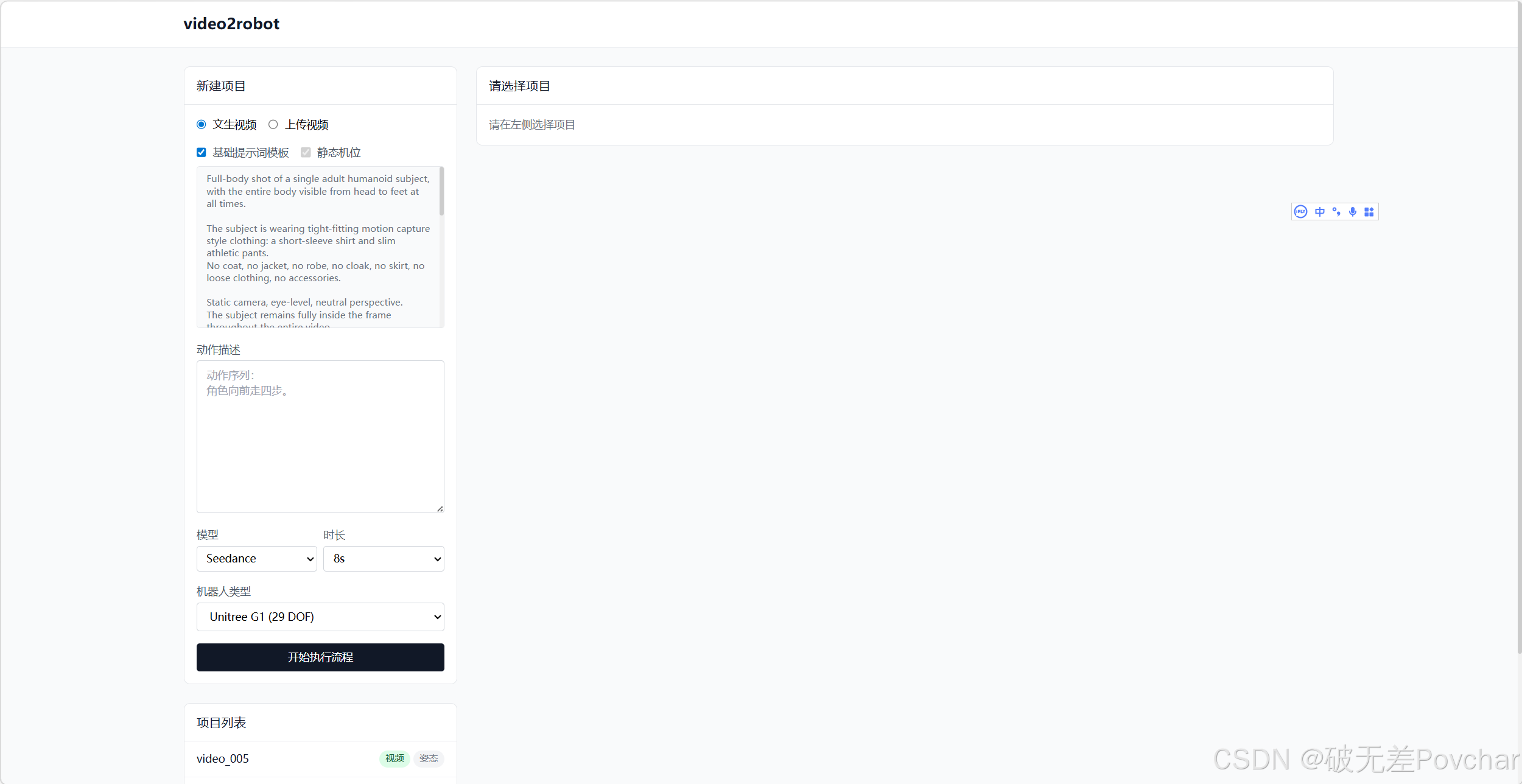

python -m uvicorn web.app:app --host 0.0.0.0 --port 8000

Copy this URL and open it in a browser.

After uploading a video, try:

python scripts/extract_pose.py --project data/video_008

and check whether it reports any errors.

# ============================================

# Annotated video generation and processing pipeline

# ============================================

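# 1. Generate a video

# Use the seedance model to generate a video from a text description

# --model: the model to use (seedance is a video-generation model)

# --action: text prompt describing the action; here, a character walking forward four steps

python scripts/generate_video.py --model seedance --action "Action sequence: the character walks forward four steps"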

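# 2. Extract pose information

# Extract human/object pose keypoints from the generated video

# --project: the project directory; the video file usually lives inside it

# Analyzes every frame of the video and extracts skeleton points, joint positions and other pose data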

python scripts/extract_pose.py --project data/video_001

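# 3. Convert to robot commands

# Turn the extracted pose data into commands the robot can execute

# --project: the project directory to read pose data from

# --all-tracks: process every track (trajectory), converting the pose sequences into robot motion trajectories

# Output: robot command files (e.g. joint angles, end-effector positions)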

python scripts/convert_to_robot.py --project data/video_001 --all-tracks

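# 4. Visualize the robot motion

# Visualize the robot's motion trajectories and poses in a 3D scene

# --project: the project directory to read robot command data from

# --robot-viser: display the robot model with Viser (a 3D visualization library)

# --robot-all: show all robot-related information (trajectories, poses, joint angles, etc.)

# Opens an interactive 3D window that animates the robot performing the motion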

python scripts/visualize.py --project data/video_001 --robot-viser --robot-all

When I built lietorch, it failed with: RuntimeError: The detected CUDA version (12.8) mismatches the version that was used to compile PyTorch (13.0)
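Here is why this error appears, and why moving to a cu121 wheel gets past it. As far as I can tell from torch.utils.cpp_extension, a different CUDA major version between the local toolkit (nvcc) and the one PyTorch was built with is a hard error. A different minor version only produces a warning. This sketch encodes that rule. It is my reading of the check, not PyTorch's code, and the nvcc line in `SAMPLE` is illustrative. In a real check, the inputs would be the `nvcc --version` output and `torch.version.cuda`.

```python
import re


def nvcc_release(nvcc_output: str) -> tuple[int, int]:
    """Parse 'release X.Y' from `nvcc --version` output."""
    match = re.search(r"release (\d+)\.(\d+)", nvcc_output)
    if match is None:
        raise ValueError("no 'release X.Y' found in nvcc output")
    return int(match.group(1)), int(match.group(2))


def compare_cuda(system: tuple[int, int], torch_cuda: str) -> str:
    """Classify a toolkit/PyTorch CUDA pairing for building C++/CUDA extensions.

    Assumed rule: different major -> "error", different minor -> "warning".
    """
    major, minor = (int(x) for x in torch_cuda.split(".")[:2])
    if system[0] != major:
        return "error"
    if system[1] != minor:
        return "warning"
    return "ok"


SAMPLE = "Cuda compilation tools, release 12.8, V12.8.61"  # illustrative nvcc line
print(compare_cuda(nvcc_release(SAMPLE), "13.0"))  # error: the failure above
print(compare_cuda(nvcc_release(SAMPLE), "12.1"))  # warning: after switching to cu121
```

With nvcc 12.8 and a cu121 wheel, the majors match, so the build should proceed with at most a warning.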

The AI assistant's suggestion:

conda activate phmr

Uninstall the incompatible PyTorch:

pip uninstall torch torchvision -y

Install a build compatible with CUDA 12.x:

pip install torch torchvision --index-url https://download.pytorch.org/whl/cu121

cd /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/python_libs/lietorch

python setup.py install

Then it failed with ModuleNotFoundError: No module named 'droid_backends_intr'. What now?

That's another module that has to be compiled, droidcalib. Following the docs, run:

conda activate phmr

# Set environment variables

export CPATH="$CONDA_PREFIX/include/eigen3:${CPATH:-}"

# Build droidcalib

cd /root/gpufree-data/every-embodied/07-机器人操作、运动控制/Locomotion/video2robot/third_party/PromptHMR/pipeline/droidcalib

python setup.py install

python -m pip install -U torch-scatter --no-build-isolation
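There is a small detail in the CPATH line used for the droidcalib build, which needs Eigen's headers (hence the conda-forge eigen install earlier). When CPATH is initially empty, "$CONDA_PREFIX/include/eigen3:${CPATH:-}" ends in a trailing colon. GCC's documentation says an empty element in these path variables means "search the current directory". That is usually harmless, but this sketch builds the value without it and also checks that the headers exist. `with_eigen_include` is a hypothetical helper.

```python
import os


def with_eigen_include(conda_prefix: str, cpath: str = "") -> str:
    """Prepend <conda_prefix>/include/eigen3 to a CPATH value.

    Unlike "$CONDA_PREFIX/include/eigen3:${CPATH:-}", empty elements are
    dropped, because GCC reads an empty CPATH element as the current directory.
    """
    eigen_dir = os.path.join(conda_prefix, "include", "eigen3")
    rest = [p for p in cpath.split(os.pathsep) if p and p != eigen_dir]
    return os.pathsep.join([eigen_dir, *rest])


if __name__ == "__main__":
    prefix = os.environ.get("CONDA_PREFIX")
    if prefix is None:
        print("CONDA_PREFIX is not set; activate the phmr environment first")
    else:
        # conda-forge's eigen package puts its headers under include/eigen3.
        core_header = os.path.join(prefix, "include", "eigen3", "Eigen", "Core")
        print("Eigen headers present:", os.path.isfile(core_header))
        print("export CPATH=" + with_eigen_include(prefix, os.environ.get("CPATH", "")))
```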

python -m pip install -U xformers

ImportError: /opt/conda/envs/phmr/lib/python3.10/site-packages/torch/lib/libtorch_cuda.so: undefined symbol: ncclCommWindowDeregister

pip uninstall torch-scatter -y

# Reinstall for the current torch (--no-build-isolation makes sure the build finds the right torch)

pip install torch-scatter -f https://data.pyg.org/whl/torch-2.11.0+cu121.html --no-index --no-build-isolation

# Uninstall everything torch-related

pip uninstall torch torchvision torch-scatter torch-sparse torch-geometric -y

# Clear leftover caches

pip cache purge

# Install torch 2.4.0 + CUDA 12.1 (a stable version)

pip install torch==2.4.0 torchvision==0.19.0 --index-url https://download.pytorch.org/whl/cu121

# Install torch-scatter (prebuilt wheels exist for 2.4.0)

pip install torch-scatter -f https://data.pyg.org/whl/torch-2.4.0+cu121.html --no-index

# Verify

python -c "import torch; print(torch.__version__, torch.version.cuda)"
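The torch-scatter find-links URL has to match the torch that is actually installed. The first suggestion hard-coded torch-2.11.0+cu121. This sketch derives both index URLs from torch's own version info instead, following the URL patterns used in the commands above. It does not check whether PyG actually publishes wheels for a given combination; that still needs a look at data.pyg.org. All function names are hypothetical.

```python
from typing import Optional


def cuda_tag(cuda: Optional[str]) -> str:
    """'12.1' -> 'cu121'; None (CPU-only torch build) -> 'cpu'."""
    if cuda is None:
        return "cpu"
    major, minor = cuda.split(".")[:2]
    return f"cu{major}{minor}"


def pytorch_index_url(cuda: Optional[str]) -> str:
    """Same form as the --index-url used above."""
    return f"https://download.pytorch.org/whl/{cuda_tag(cuda)}"


def pyg_wheel_url(torch_version: str, cuda: Optional[str]) -> str:
    """PyG find-links page, built from torch.__version__ and torch.version.cuda.

    torch.__version__ may carry a local suffix such as '+cu121'; it is
    dropped and replaced by the tag derived from the CUDA version.
    """
    base = torch_version.split("+")[0]
    return f"https://data.pyg.org/whl/torch-{base}+{cuda_tag(cuda)}.html"


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("torch is not installed in this environment")
    else:
        print(pyg_wheel_url(torch.__version__, torch.version.cuda))
```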

After that I re-downloaded the models,

but every attempt failed.

The original URL couldn't be reached at all.

Mirror:

Failed to fetch some objects from 'https://hf-mirror.com/Datawhale/spring-festival-wushu-robot-replication-model.git/info/lfs'
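When git lfs clone fails partway, the checkout can still contain small pointer text files in place of the real model files, and anything that tries to load them will fail. Per the Git LFS pointer spec, such files begin with version https://git-lfs.github.com/spec/v1. This sketch lists the files that were never actually downloaded. `unfetched_files` is a hypothetical helper, and the repository directory name is taken from the clone command above.

```python
from pathlib import Path

# First line of a Git LFS pointer file (pointer spec v1).
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def is_lfs_pointer(path: Path) -> bool:
    """True if path holds an LFS pointer instead of the real file content."""
    with path.open("rb") as handle:
        return handle.read(len(LFS_POINTER_PREFIX)) == LFS_POINTER_PREFIX


def unfetched_files(repo_dir: str) -> list[Path]:
    """List files under repo_dir (skipping .git) that are still LFS pointers."""
    root = Path(repo_dir)
    return [
        p for p in root.rglob("*")
        if p.is_file() and ".git" not in p.relative_to(root).parts and is_lfs_pointer(p)
    ]


if __name__ == "__main__":
    repo = Path("spring-festival-wushu-robot-replication-model")
    if repo.is_dir():
        for pointer in unfetched_files(str(repo)):
            print("not downloaded:", pointer)
```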

Fallback:

Usage: aria2c OPTIONS URI \| MAGNET \| TORRENT_FILE \| METALINK_FILE...

See 'aria2c -h'.

unzip: cannot find or open data/body_models/smpl/smpl.zip, data/body_models/smpl/smpl.zip.zip or data/body_models/smpl/smpl.zip.ZIP.

mv: cannot stat 'data/body_models/smpl/smpl/SMPL_python_v.1.1.0/smpl/models/basicmodel_neutral_lbs_10_207_0_v1.1.0.pkl': No such file or directory

mv: cannot stat 'data/body_models/smpl/smpl/SMPL_python_v.1.1.0/smpl/models/basicmodel_f_lbs_10_207_0_v1.1.0.pkl': No such file or directory

mv: cannot stat 'data/body_models/smpl/smpl/SMPL_python_v.1.1.0/smpl/models/basicmodel_m_lbs_10_207_0_v1.1.0.pkl': No such file or directory
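The unzip and mv errors are downstream symptoms: smpl.zip never arrived, so nothing could be extracted or moved. This sketch reports which stage is broken, using the paths from the failing commands above. It distinguishes a missing zip from an invalid one and lists the missing model files. `diagnose` is a hypothetical helper.

```python
import zipfile
from pathlib import Path

# Paths taken from the failing unzip/mv commands above.
SMPL_ZIP = Path("data/body_models/smpl/smpl.zip")
SMPL_MODELS = Path("data/body_models/smpl/smpl/SMPL_python_v.1.1.0/smpl/models")
EXPECTED = [
    "basicmodel_neutral_lbs_10_207_0_v1.1.0.pkl",
    "basicmodel_f_lbs_10_207_0_v1.1.0.pkl",
    "basicmodel_m_lbs_10_207_0_v1.1.0.pkl",
]


def diagnose(zip_path: Path, models_dir: Path, expected: list[str]) -> list[str]:
    """Return human-readable problems; an empty list means everything is in place."""
    problems = []
    if not zip_path.is_file():
        problems.append(f"{zip_path} is missing, so the download step never produced it")
    elif not zipfile.is_zipfile(zip_path):
        problems.append(f"{zip_path} exists but is not a valid zip (possibly incomplete)")
    problems += [
        f"{models_dir / name} not found"
        for name in expected
        if not (models_dir / name).is_file()
    ]
    return problems


if __name__ == "__main__":
    for problem in diagnose(SMPL_ZIP, SMPL_MODELS, EXPECTED):
        print(problem)
```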

Retrieving folder contents

Error:

HTTPSConnectionPool(host='drive.google.com', port=443): Max retries exceeded with url: /drive/folders/1JU7CuU2rKkwD7WWjvSZJKpQFFk_Z6NL7?usp=share_link&hl=en (Caused by NewConnectionError("HTTPSConnection(host='drive.google.com', port=443): Failed to establish a new connection: [Errno 101] Network is unreachable"))

To report issues, please visit https://github.com/wkentaro/gdown/issues.
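Every download failure above comes down to connectivity: the original URL, the hf-mirror LFS fetch, and drive.google.com ([Errno 101] Network is unreachable). A quick TCP check tells network problems apart from tool problems before another round of retries. This sketch only tests whether port 443 accepts a connection, not whether a download will succeed. The host list is my selection from the URLs in this post, and `can_connect` and `report` are hypothetical helpers.

```python
import socket

# Hosts the download steps above depend on.
HOSTS = ["huggingface.co", "hf-mirror.com", "drive.google.com", "github.com"]


def can_connect(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Try a plain TCP connection; DNS errors, refusals and timeouts all return False."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def report(hosts: list[str]) -> None:
    """Print one reachability line per host."""
    for host in hosts:
        status = "reachable" if can_connect(host) else "UNREACHABLE"
        print(f"{host:20} {status}")
```

Run report(HOSTS) inside the container. If drive.google.com is unreachable while hf-mirror.com is reachable, the gdown step cannot work from this machine at all.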

Anyway, after two days of fiddling I still haven't gotten it to work.

Sharing this for everyone to check out: https://kungfuathletebot.github.io/ (a robot martial-arts dataset with single-policy tracking).