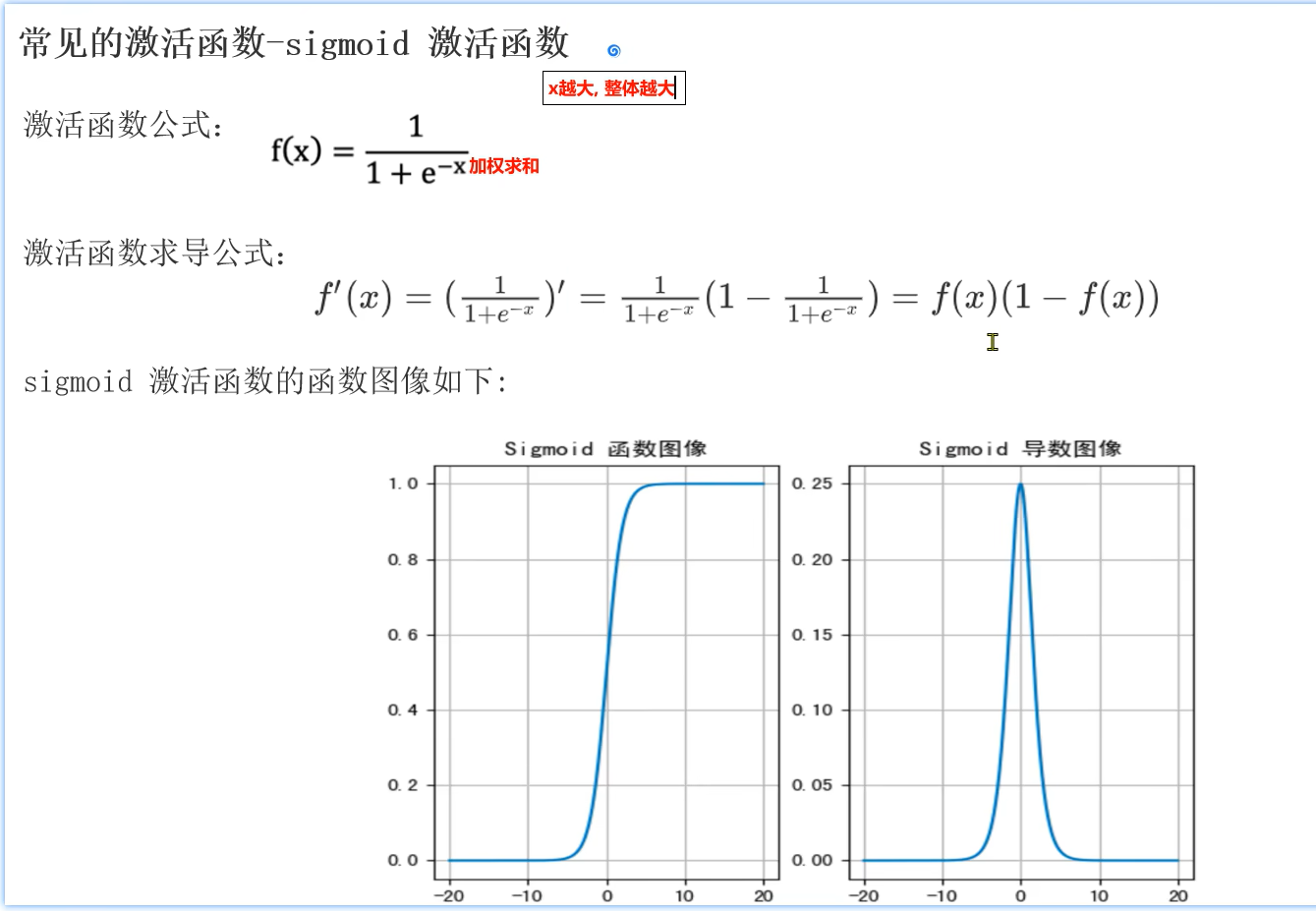

Activation Functions

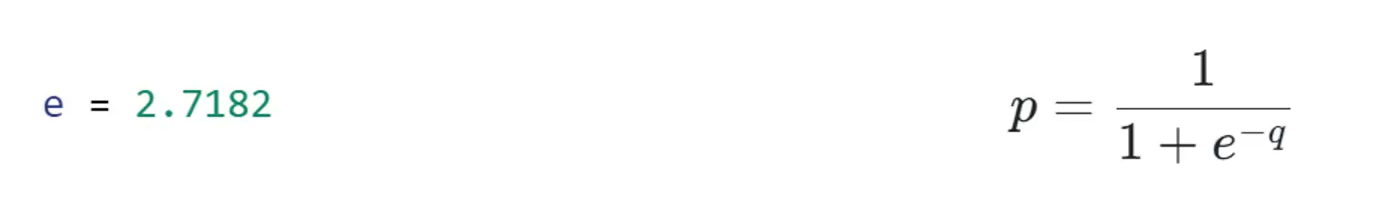

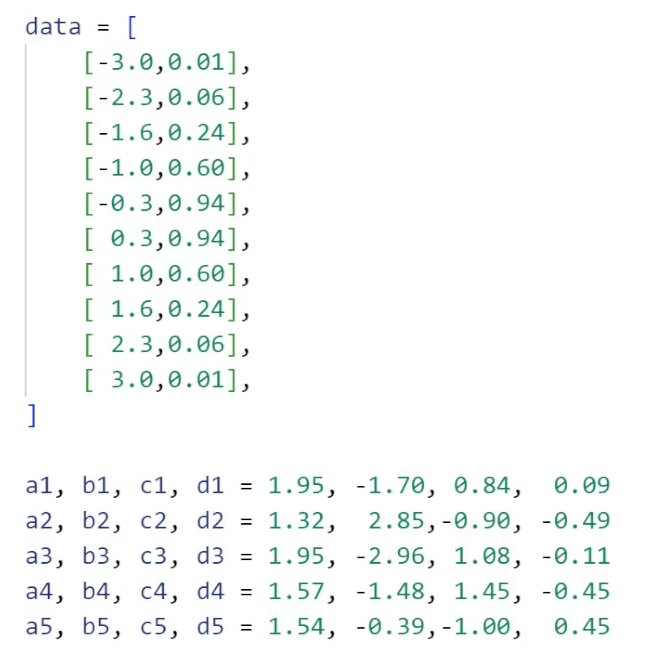

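There are 10 sample points in `data` and 20 parameters that linearly transform the Sigmoid functions: 5 Sigmoid functions with 4 parameters each. `a` and `b` transform the input, and `c` and `d` transform the output.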
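Here is the model above written out, together with the chain-rule gradients that the training code implements. The index $k = 1,\dots,5$ labels the Sigmoid units. The factor $p_k(1-p_k)$ comes from the standard Sigmoid derivative $\sigma'(q) = \sigma(q)(1-\sigma(q))$.

$$
\begin{aligned}
q_k &= a_k x + b_k, \qquad p_k = \sigma(q_k) = \frac{1}{1 + e^{-q_k}}, \qquad g_k = c_k p_k + d_k,\\
\hat{y} &= \sum_{k=1}^{5} g_k, \qquad L = (y - \hat{y})^2, \qquad \frac{\partial L}{\partial \hat{y}} = -2(y - \hat{y}), \qquad \frac{\partial \hat{y}}{\partial g_k} = 1,\\
\frac{\partial L}{\partial a_k} &= -2(y-\hat{y})\, c_k\, p_k(1-p_k)\, x, \qquad
\frac{\partial L}{\partial b_k} = -2(y-\hat{y})\, c_k\, p_k(1-p_k),\\
\frac{\partial L}{\partial c_k} &= -2(y-\hat{y})\, p_k, \qquad
\frac{\partial L}{\partial d_k} = -2(y-\hat{y}).
\end{aligned}
$$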

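- `learning_rate = 0.05`: the learning rate. It scales the derivative of the loss with respect to each parameter, so every update is a small step. This lets the sum of the 5 linearly transformed Sigmoid outputs move gradually toward the true values.

- Train for 1000 epochs, where each epoch is one full pass over `data`.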
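The sketch below isolates what the learning rate does. It uses a hypothetical one-parameter loss $L(w) = (w-3)^2$, which is not from the original notes. Each step moves `w` against the derivative, scaled by `learning_rate`, which is the same update rule the training loop applies to every parameter. The function name `gradient_descent` is made up for this illustration.

```python
def gradient_descent(learning_rate, steps, w=0.0):
    """Minimize the hypothetical loss L(w) = (w - 3)**2 with plain gradient descent."""
    for _ in range(steps):
        grad = 2 * (w - 3)            # dL/dw
        w = w - learning_rate * grad  # same form as a1 = a1 - learning_rate * a1_grad
    return w


print(gradient_descent(0.05, 1000))  # close to 3: small steps, enough iterations
print(gradient_descent(0.05, 10))    # about 1.95: small steps, too few iterations
print(gradient_descent(1.05, 10))    # learning rate too large: |w - 3| grows every step
```

For this loss, each step multiplies the distance to the minimum by $(1 - 2\cdot\text{learning\_rate})$. That is 0.9 for 0.05, which converges slowly but safely. It is -1.1 for 1.05, which overshoots further on every step.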

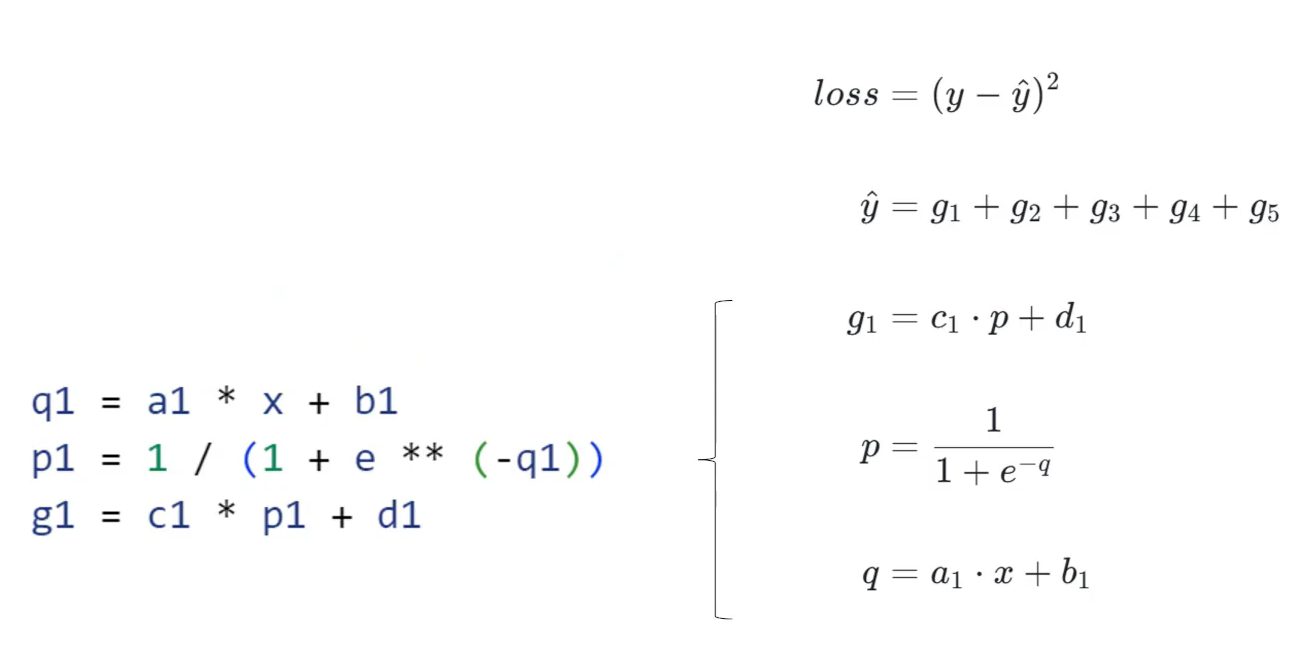

The remaining units follow the same pattern. The full computation:

```python
import math
import random

# Hypothetical placeholder data: the original 10 (x, y) samples are not reproduced in the text
data = [(i / 3, math.sin(i / 3)) for i in range(10)]

# Assumed initialization: small random values for the 20 parameters (4 per Sigmoid unit)
random.seed(0)
a1, b1, c1, d1 = (random.uniform(-1, 1) for _ in range(4))
a2, b2, c2, d2 = (random.uniform(-1, 1) for _ in range(4))
a3, b3, c3, d3 = (random.uniform(-1, 1) for _ in range(4))
a4, b4, c4, d4 = (random.uniform(-1, 1) for _ in range(4))
a5, b5, c5, d5 = (random.uniform(-1, 1) for _ in range(4))

learning_rate = 0.05

for i in range(1000):
    for x, y in data:
        # Forward pass: linear transform of x -> Sigmoid -> linear transform of the output
        q1 = a1 * x + b1
        p1 = 1 / (1 + math.exp(-q1))
        g1 = c1 * p1 + d1
        q2 = a2 * x + b2
        p2 = 1 / (1 + math.exp(-q2))
        g2 = c2 * p2 + d2
        q3 = a3 * x + b3
        p3 = 1 / (1 + math.exp(-q3))
        g3 = c3 * p3 + d3
        q4 = a4 * x + b4
        p4 = 1 / (1 + math.exp(-q4))
        g4 = c4 * p4 + d4
        q5 = a5 * x + b5
        p5 = 1 / (1 + math.exp(-q5))
        g5 = c5 * p5 + d5

        y_hat = g1 + g2 + g3 + g4 + g5  # prediction
        loss = (y - y_hat) ** 2

        # Chain rule: dLoss/dy_hat = -2 * (y - y_hat), dy_hat/dg_k = 1, dp/dq = p * (1 - p)
        a1_grad = (-2 * (y - y_hat)) * 1 * c1 * p1 * (1 - p1) * x
        b1_grad = (-2 * (y - y_hat)) * 1 * c1 * p1 * (1 - p1) * 1
        c1_grad = (-2 * (y - y_hat)) * 1 * p1
        d1_grad = (-2 * (y - y_hat)) * 1
        a2_grad = (-2 * (y - y_hat)) * 1 * c2 * p2 * (1 - p2) * x
        b2_grad = (-2 * (y - y_hat)) * 1 * c2 * p2 * (1 - p2) * 1
        c2_grad = (-2 * (y - y_hat)) * 1 * p2
        d2_grad = (-2 * (y - y_hat)) * 1
        a3_grad = (-2 * (y - y_hat)) * 1 * c3 * p3 * (1 - p3) * x
        b3_grad = (-2 * (y - y_hat)) * 1 * c3 * p3 * (1 - p3) * 1
        c3_grad = (-2 * (y - y_hat)) * 1 * p3
        d3_grad = (-2 * (y - y_hat)) * 1
        a4_grad = (-2 * (y - y_hat)) * 1 * c4 * p4 * (1 - p4) * x
        b4_grad = (-2 * (y - y_hat)) * 1 * c4 * p4 * (1 - p4) * 1
        c4_grad = (-2 * (y - y_hat)) * 1 * p4
        d4_grad = (-2 * (y - y_hat)) * 1
        a5_grad = (-2 * (y - y_hat)) * 1 * c5 * p5 * (1 - p5) * x
        b5_grad = (-2 * (y - y_hat)) * 1 * c5 * p5 * (1 - p5) * 1
        c5_grad = (-2 * (y - y_hat)) * 1 * p5
        d5_grad = (-2 * (y - y_hat)) * 1

        # Gradient descent: move each parameter against its gradient, scaled by the learning rate
        a1 = a1 - learning_rate * a1_grad
        b1 = b1 - learning_rate * b1_grad
        c1 = c1 - learning_rate * c1_grad
        d1 = d1 - learning_rate * d1_grad
        a2 = a2 - learning_rate * a2_grad
        b2 = b2 - learning_rate * b2_grad
        c2 = c2 - learning_rate * c2_grad
        d2 = d2 - learning_rate * d2_grad
        a3 = a3 - learning_rate * a3_grad
        b3 = b3 - learning_rate * b3_grad
        c3 = c3 - learning_rate * c3_grad
        d3 = d3 - learning_rate * d3_grad
        a4 = a4 - learning_rate * a4_grad
        b4 = b4 - learning_rate * b4_grad
        c4 = c4 - learning_rate * c4_grad
        d4 = d4 - learning_rate * d4_grad
        a5 = a5 - learning_rate * a5_grad
        b5 = b5 - learning_rate * b5_grad
        c5 = c5 - learning_rate * c5_grad
        d5 = d5 - learning_rate * d5_grad

    # Loss of the last sample seen in this epoch
    print(f"Epoch {i}, Loss: {loss:.8f}")
```
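The five units differ only in their index, so the same forward pass, chain-rule gradients and update can be written with lists instead of 20 named variables. The sketch below is an alternative formulation, not the original code. It also checks the hand-derived gradients against a central finite difference. The helper names (`forward`, `gradients`, `train`, `mean_loss`, `numeric_grad`) and the sample data are hypothetical.

```python
import math
import random


def sigmoid(q):
    return 1 / (1 + math.exp(-q))


def forward(params, x):
    """params: 5 units of (a, b, c, d). Returns y_hat and the Sigmoid outputs p_k."""
    ps = [sigmoid(a * x + b) for a, b, _, _ in params]
    y_hat = sum(c * p + d for (_, _, c, d), p in zip(params, ps))
    return y_hat, ps


def gradients(params, x, y):
    """Chain-rule gradients of loss = (y - y_hat)**2 for every unit's (a, b, c, d)."""
    y_hat, ps = forward(params, x)
    dl = -2 * (y - y_hat)  # dLoss/dy_hat; dy_hat/dg_k = 1
    return [
        (dl * c * p * (1 - p) * x, dl * c * p * (1 - p), dl * p, dl)
        for (_, _, c, _), p in zip(params, ps)
    ]


def train(params, data, learning_rate=0.05, epochs=1000):
    """Per-sample gradient descent, same schedule as the loop above."""
    for _ in range(epochs):
        for x, y in data:
            grads = gradients(params, x, y)
            params = [
                tuple(w - learning_rate * g for w, g in zip(unit, unit_grad))
                for unit, unit_grad in zip(params, grads)
            ]
    return params


def mean_loss(params, data):
    return sum((y - forward(params, x)[0]) ** 2 for x, y in data) / len(data)


def numeric_grad(params, x, y, k, j, eps=1e-6):
    """Central finite difference of the loss w.r.t. parameter j of unit k."""
    def loss_with(delta):
        shifted = [list(unit) for unit in params]
        shifted[k][j] += delta
        return (y - forward(shifted, x)[0]) ** 2
    return (loss_with(eps) - loss_with(-eps)) / (2 * eps)


random.seed(0)
params = [tuple(random.uniform(-1, 1) for _ in range(4)) for _ in range(5)]
data = [(i / 3, math.sin(i / 3)) for i in range(10)]  # hypothetical sample data

# Analytic vs. numeric gradient for unit 0, parameter a, at one sample point
print(gradients(params, 1.5, 0.5)[0][0], numeric_grad(params, 1.5, 0.5, 0, 0))

trained = train(params, data)
print(mean_loss(params, data), mean_loss(trained, data))
```

The finite-difference check is a cheap way to catch copy-paste slips in hand-written gradients. In the original listing, for example, `a5_grad` through `d5_grad` had been assigned to the unit-1 names. A mismatch between the two printed gradient values would expose an error of that kind.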

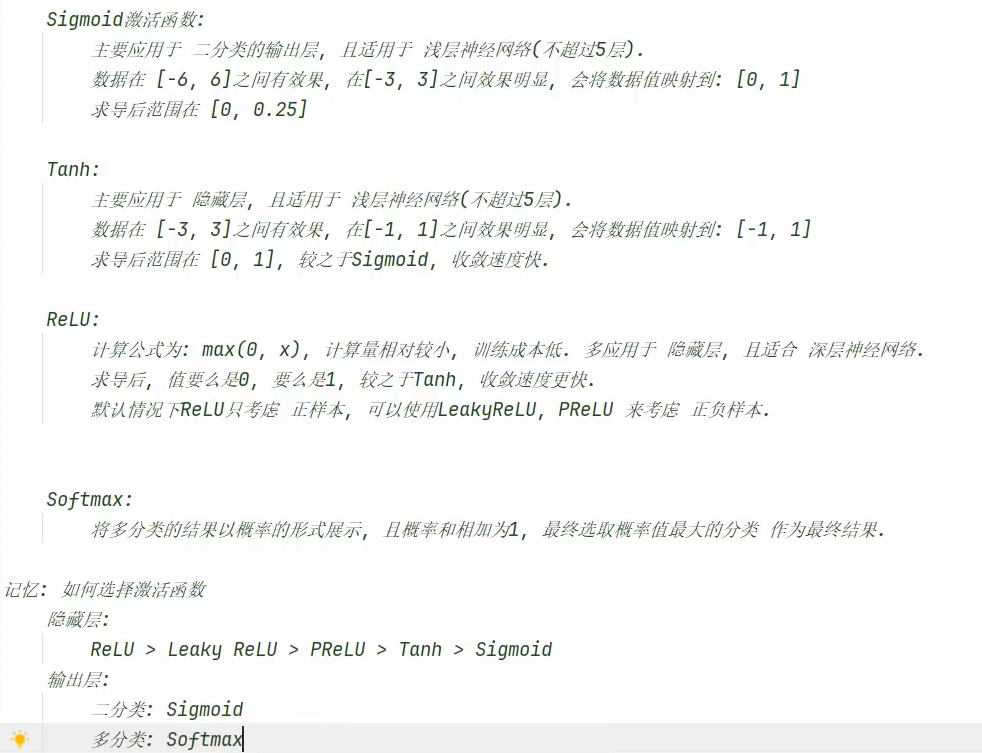

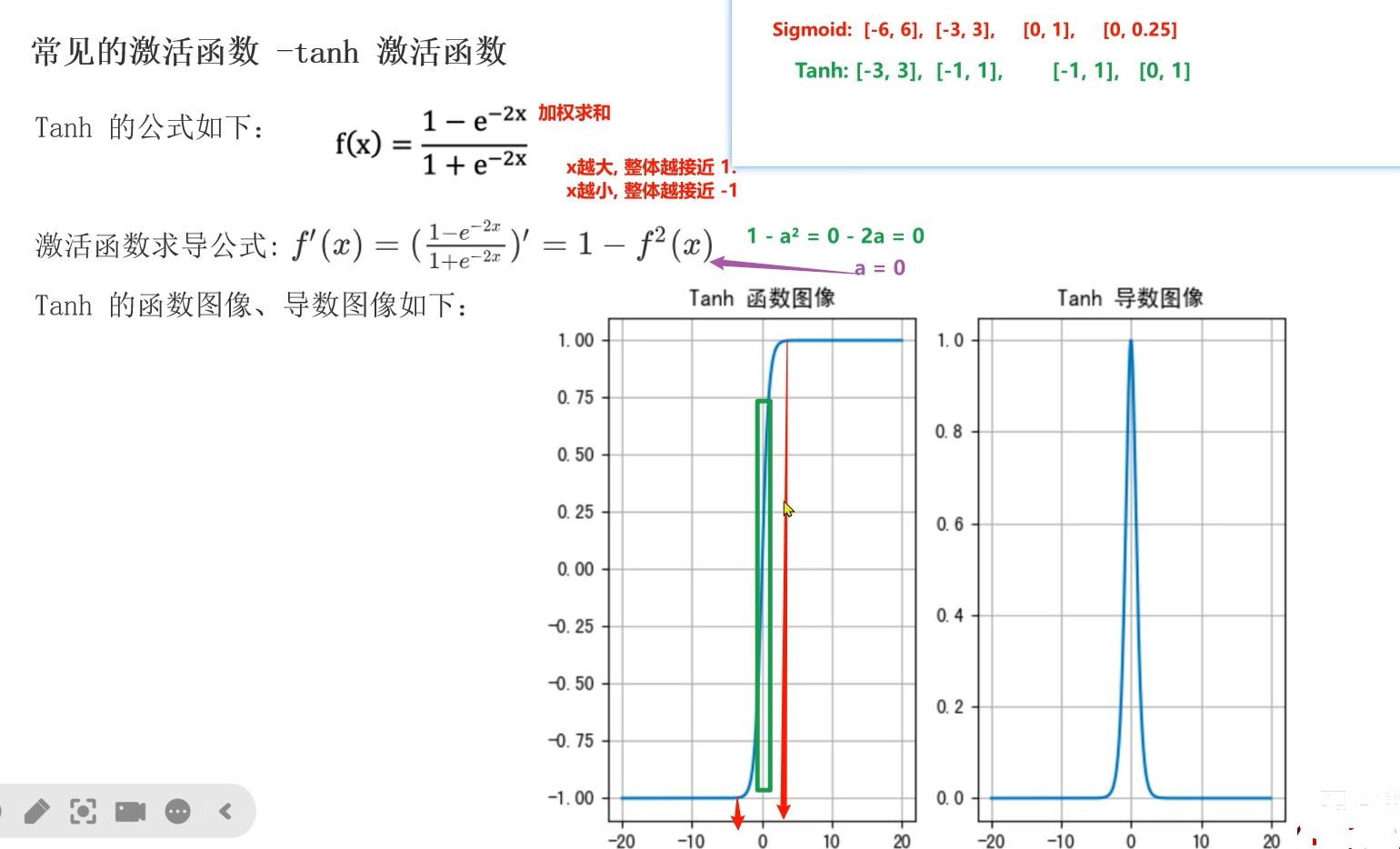

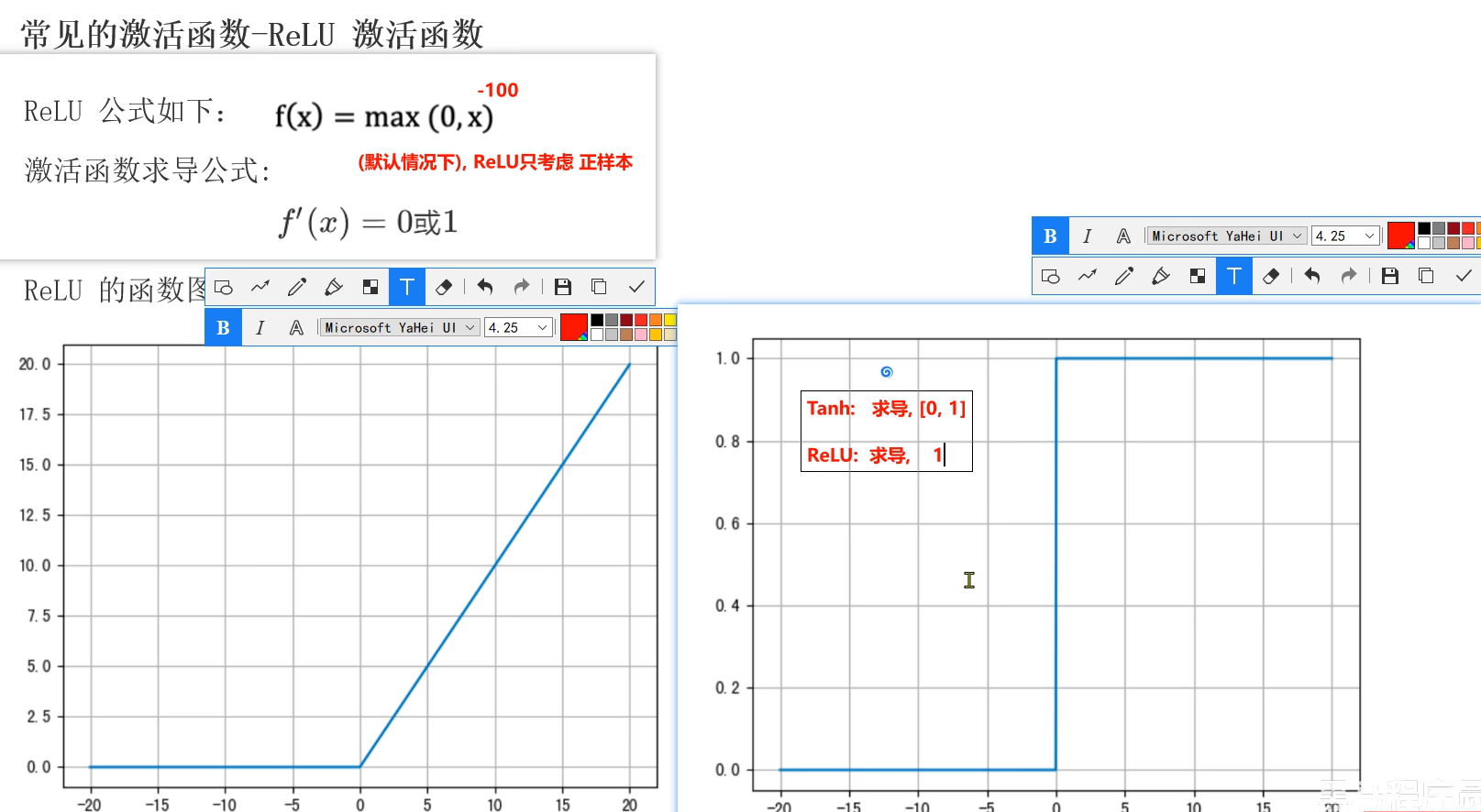

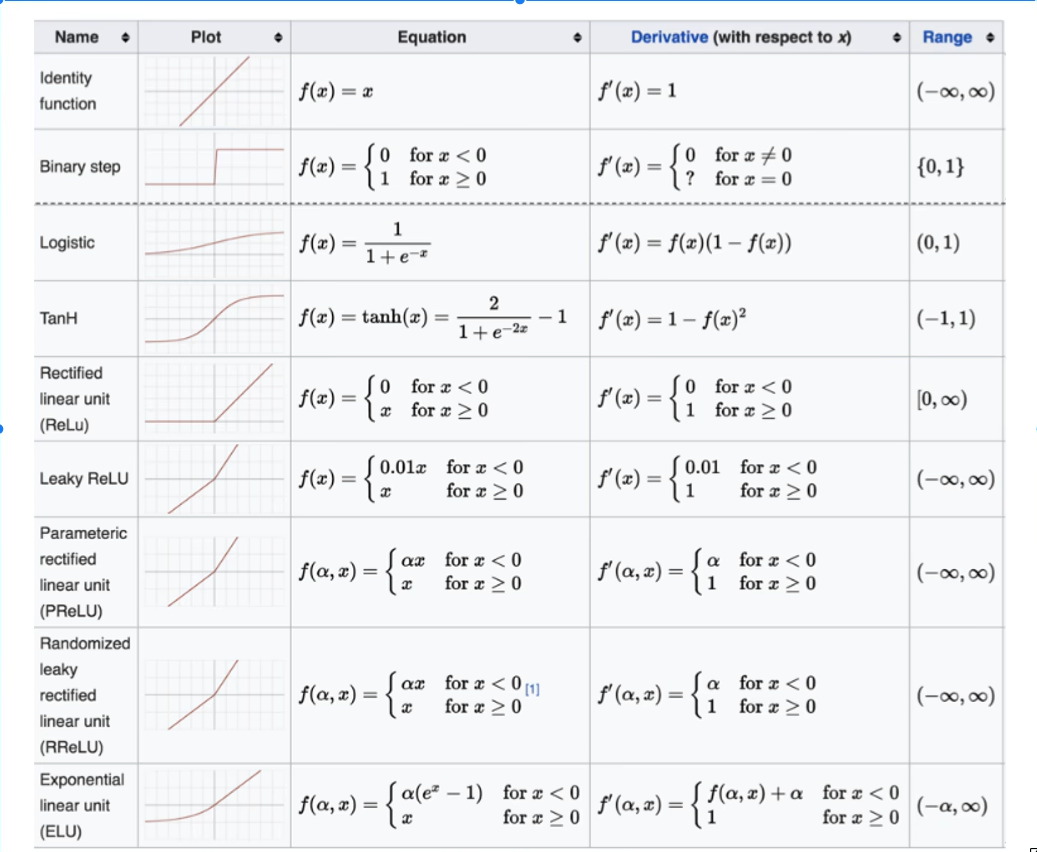

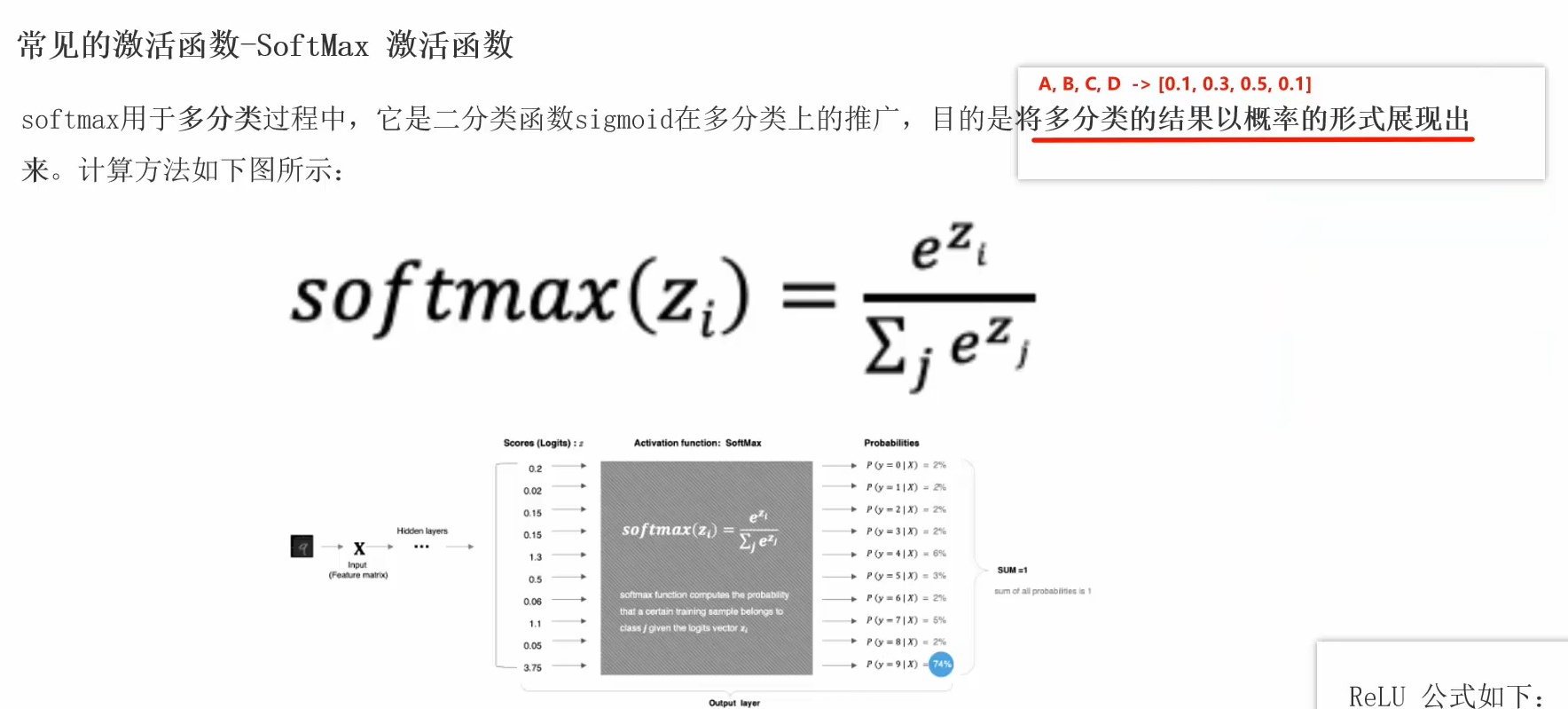

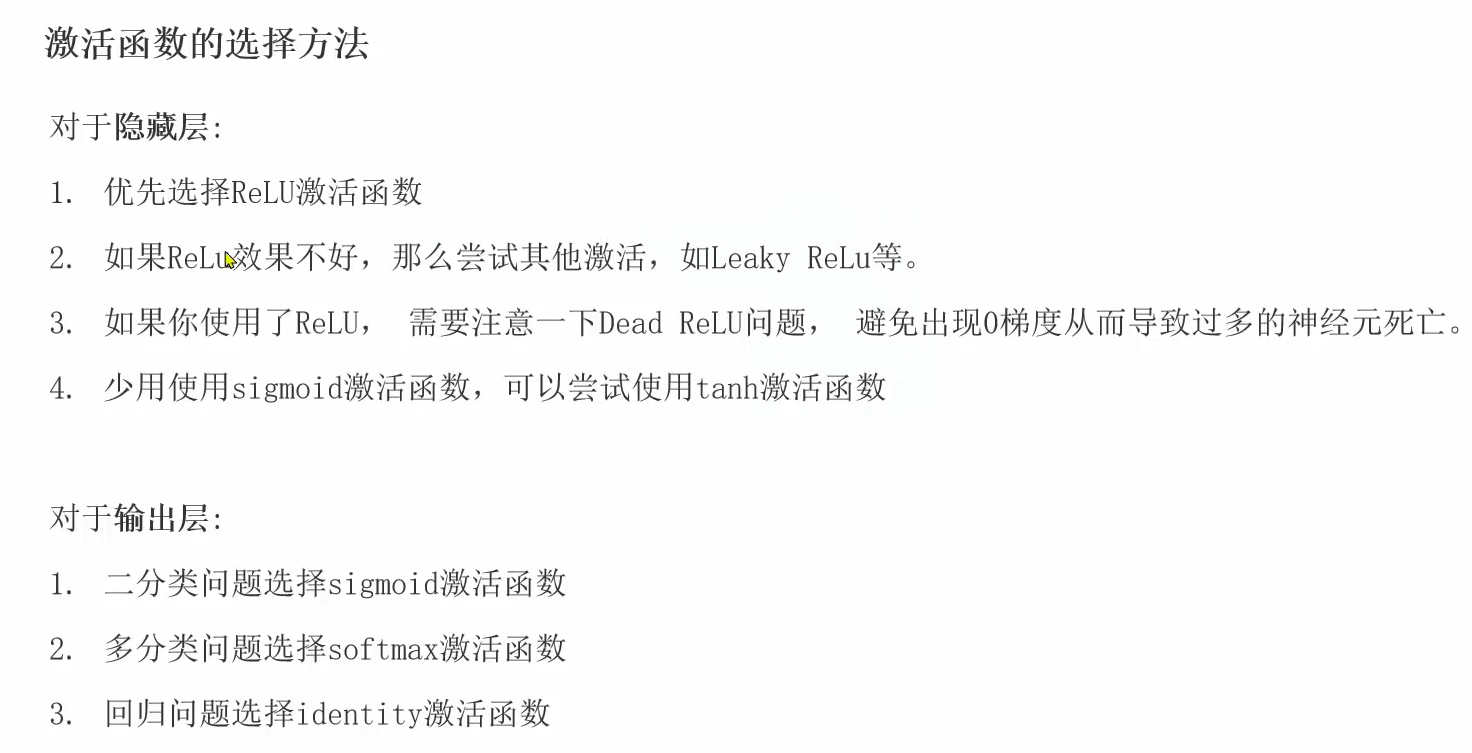

Other activation functions
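The figure below is not reproduced in the text. As an illustration, here are a few widely used alternatives to Sigmoid; the figure may show a different set. The function names are chosen for this sketch, and `alpha = 0.01` for Leaky ReLU is an assumed, commonly used default.

```python
import math


def sigmoid(x):
    return 1 / (1 + math.exp(-x))


def tanh(x):
    # Output in (-1, 1); equivalent to 2 * sigmoid(2 * x) - 1
    return math.tanh(x)


def relu(x):
    # Zero for negative inputs, identity for positive inputs
    return max(0.0, x)


def leaky_relu(x, alpha=0.01):
    # Like ReLU, but keeps a small slope alpha for negative inputs
    return x if x > 0 else alpha * x


for x in (-2.0, 0.0, 2.0):
    print(x, sigmoid(x), tanh(x), relu(x), leaky_relu(x))
```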

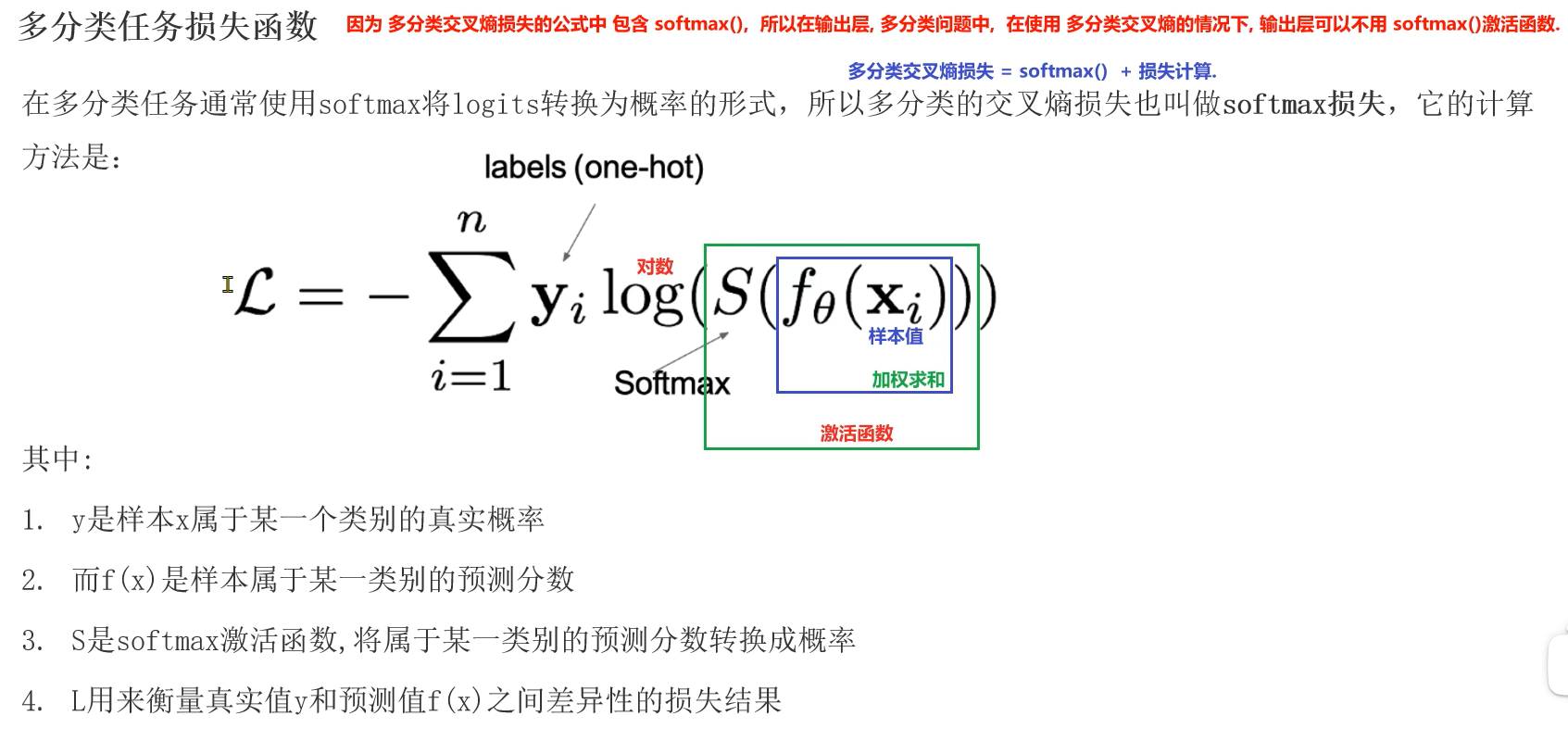

Loss functions