LVS + Keepalived Dual-Master Architecture: Complete Plan (LVS → HAProxy → Web)

1. Planned Architecture and Resources

| Role / IP type | IP address | Notes |
|---|---|---|
| LVS node 1 | 172.25.254.11 | Dual-master node A |
| LVS node 2 | 172.25.254.12 | Dual-master node B |
| VIP1 (Web, port 80) | 172.25.254.100 | Node 1 is master, node 2 is backup |
| VIP2 (Web, port 80) | 172.25.254.200 | Node 2 is master, node 1 is backup |
| HAProxy node 1 | 172.25.254.21 | Layer 7 load balancing |
| HAProxy node 2 | 172.25.254.22 | Layer 7 load balancing |
| Web node 1 (Docker) | 172.25.254.31 | Runs an Nginx container |
| Web node 2 (Docker) | 172.25.254.32 | Runs an Nginx container |
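The address plan above can be turned into name-resolution entries so that later commands can refer to hosts by name. The sketch below only prints /etc/hosts-style lines; the short names (KA1, KA2, HA1, HA2, web1, web2) are assumptions taken from the shell prompts used later in this guide, and the function name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print /etc/hosts-style lines for the planned nodes.
# The host names are assumptions based on the prompts shown in this guide.
print_hosts() {
    cat <<'EOF'
172.25.254.11 KA1
172.25.254.12 KA2
172.25.254.21 HA1
172.25.254.22 HA2
172.25.254.31 web1
172.25.254.32 web2
EOF
}

# Print the inventory; nothing is written to /etc/hosts here.
print_hosts
```

After reviewing the output, it could be appended on each host with `print_hosts >> /etc/hosts`.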

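2. Prerequisites

Run the following on all hosts.

2.1 Disable the firewall

    systemctl stop firewalld && systemctl disable firewalld && systemctl mask firewalld

2.2 Disable SELinux

    sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

The change to /etc/selinux/config takes effect after the next reboot.

3. LVS + Keepalived

Apply the following on both KA1 and KA2.

    dnf install keepalived ipvsadm wget net-tools -y

(1) Kernel parameters

These settings enable IP forwarding, turn off reverse-path filtering, and allow services to bind to a VIP that is not currently assigned to the node.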

    cat > /etc/sysctl.conf << EOF
    net.ipv4.ip_forward = 1
    net.ipv4.conf.all.rp_filter = 0
    net.ipv4.conf.default.rp_filter = 0
    net.ipv4.conf.eth0.rp_filter = 0
    net.ipv4.ip_nonlocal_bind = 1
    EOF
    sysctl -p

Parameter details

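| Parameter | Effect | Why it is required |
|---|---|---|
| ip_forward = 1 | Enables kernel IP forwarding | As a layer 4 load balancer, LVS forwards client requests to the back-end HAProxy nodes; with forwarding disabled, the packets are not forwarded |
| rp_filter = 0 | Disables reverse-path filtering | In DR mode the source and destination IPs of a packet can span interfaces; with filtering enabled, the kernel treats such packets as spoofed and drops them |
| ip_nonlocal_bind = 1 | Allows binding to non-local IPs | Keepalived/IPVS must listen on the VIP, which is not one of the node's own addresses; with this disabled, the bind fails and the service does not start |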
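To confirm that the parameters in the table are actually live after `sysctl -p`, the values can be read back from /proc/sys. The following is a minimal sketch; the function names and the PROC_SYS override variable are hypothetical and exist only so the check can be pointed at a test directory.

```shell
#!/bin/sh
# PROC_SYS is a hypothetical override; by default the live kernel values are read.
PROC_SYS="${PROC_SYS:-/proc/sys}"

# check_sysctl KEY EXPECTED
# Translate a dotted key such as net.ipv4.ip_forward into a /proc/sys path
# and compare the current value with the expected one.
check_sysctl() {
    key="$1"
    expected="$2"
    path="$PROC_SYS/$(printf '%s' "$key" | tr '.' '/')"
    if [ ! -r "$path" ]; then
        echo "MISSING $key"
        return 1
    fi
    actual="$(cat "$path")"
    if [ "$actual" = "$expected" ]; then
        echo "OK $key = $actual"
    else
        echo "MISMATCH $key = $actual (expected $expected)"
        return 1
    fi
}

# check_all: verify every parameter set in /etc/sysctl.conf above.
check_all() {
    rc=0
    check_sysctl net.ipv4.ip_forward 1 || rc=1
    check_sysctl net.ipv4.conf.all.rp_filter 0 || rc=1
    check_sysctl net.ipv4.conf.default.rp_filter 0 || rc=1
    check_sysctl net.ipv4.conf.eth0.rp_filter 0 || rc=1
    check_sysctl net.ipv4.ip_nonlocal_bind 1 || rc=1
    return "$rc"
}
```

Running `check_all` on KA1 and KA2 prints one line per parameter and returns non-zero if any value differs. The dotted-key translation assumes interface names without dots, which holds for eth0.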

(2) LVS forwarding rules

    # On KA1: forward port 80 of both VIPs to the HAProxy nodes (round robin, DR mode)
    ipvsadm -A -t 172.25.254.100:80 -s rr
    ipvsadm -a -t 172.25.254.100:80 -r 172.25.254.21:80 -g
    ipvsadm -a -t 172.25.254.100:80 -r 172.25.254.22:80 -g
    ipvsadm -A -t 172.25.254.200:80 -s rr
    ipvsadm -a -t 172.25.254.200:80 -r 172.25.254.21:80 -g
    ipvsadm -a -t 172.25.254.200:80 -r 172.25.254.22:80 -g

    [root@KA1 ~]# ipvsadm -Ln
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.25.254.100:80 rr
      -> 172.25.254.21:80             Route   1      0          0
      -> 172.25.254.22:80             Route   1      0          0

    # On KA2: add the same rules for both VIPs
    ipvsadm -A -t 172.25.254.100:80 -s rr
    ipvsadm -a -t 172.25.254.100:80 -r 172.25.254.21:80 -g
    ipvsadm -a -t 172.25.254.100:80 -r 172.25.254.22:80 -g
    ipvsadm -A -t 172.25.254.200:80 -s rr
    ipvsadm -a -t 172.25.254.200:80 -r 172.25.254.21:80 -g
    ipvsadm -a -t 172.25.254.200:80 -r 172.25.254.22:80 -g

    [root@KA2 ~]# ipvsadm -Ln
    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
    TCP  172.25.254.100:80 rr
      -> 172.25.254.21:80             Route   1      0          0
      -> 172.25.254.22:80             Route   1      0          0
    TCP  172.25.254.200:80 rr
      -> 172.25.254.21:80             Route   1      0          0
      -> 172.25.254.22:80             Route   1      0          0
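Because both directors carry identical rules for every VIP and real-server pair, the commands above can be generated from two lists instead of typed by hand. This is a minimal sketch; the variable and function names are hypothetical, and the function only prints the commands rather than running them.

```shell
#!/bin/sh
# Addresses taken from the resource table; adjust if the plan changes.
VIPS="172.25.254.100 172.25.254.200"
REAL_SERVERS="172.25.254.21 172.25.254.22"

# gen_ipvs_rules: print one "add service" command per VIP and one
# "add real server" command per VIP/real-server pair (-g = direct routing).
gen_ipvs_rules() {
    for vip in $VIPS; do
        echo "ipvsadm -A -t $vip:80 -s rr"
        for rs in $REAL_SERVERS; do
            echo "ipvsadm -a -t $vip:80 -r $rs:80 -g"
        done
    done
}

# Print the commands for review.
gen_ipvs_rules
```

After checking the output, it can be applied as root on each director with `gen_ipvs_rules | sh`.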

    # Save the rules and enable the service at boot
    ipvsadm -S > /etc/sysconfig/ipvsadm
    systemctl enable --now ipvsadm

    [root@KA1 ~]# ipvsadm -S > /etc/sysconfig/ipvsadm
    [root@KA1 ~]# systemctl enable --now ipvsadm
    Created symlink /etc/systemd/system/multi-user.target.wants/ipvsadm.service → /usr/lib/systemd/system/ipvsadm.service.

    [root@KA2 ~]# ipvsadm -S > /etc/sysconfig/ipvsadm
    [root@KA2 ~]# systemctl enable --now ipvsadm
    Created symlink /etc/systemd/system/multi-user.target.wants/ipvsadm.service → /usr/lib/systemd/system/ipvsadm.service.

Configure Keepalived
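In the configurations in this section, each director is MASTER for one VIP with priority 100 and BACKUP for the other with priority 90, and the tracked check script carries weight -20. With a negative weight, a failing check lowers that node's priority by 20, which is what lets the peer take over. The arithmetic can be sketched as follows; the function names are hypothetical, and only the negative-weight case is modeled.

```shell
#!/bin/sh
# effective_priority BASE WEIGHT CHECK_OK
# Negative WEIGHT: a failing check (CHECK_OK=0) lowers the priority by |WEIGHT|.
effective_priority() {
    base="$1"
    weight="$2"
    check_ok="$3"
    if [ "$check_ok" -eq 1 ]; then
        echo "$base"
    else
        echo $(( $base + $weight ))
    fi
}

# higher_priority_node NAME_A PRIO_A NAME_B PRIO_B
# Print the node with the higher priority. Ties are not modeled here.
higher_priority_node() {
    if [ "$2" -gt "$4" ]; then
        echo "$1"
    else
        echo "$3"
    fi
}

# VIP1: KA1 is the configured master (100), KA2 the backup (90).
ka1=$(effective_priority 100 -20 0)   # KA1's check script fails
ka2=$(effective_priority 90 -20 1)    # KA2's check script succeeds
echo "VIP1 owner after KA1 check failure: $(higher_priority_node KA1 "$ka1" KA2 "$ka2")"
```

With KA1's check failing, its priority for VIP1 drops to 80, below KA2's 90, so the script prints KA2 as the owner.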

KA1 configuration

    [root@KA1 ~]# vim /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived

    global_defs {
        router_id LVS_NODE1
        vrrp_skip_check_adv_addr
        # vrrp_strict is left disabled: strict mode does not support
        # authentication and can block traffic to the VIPs
        # vrrp_strict
        vrrp_garp_interval 0
        vrrp_gna_interval 0
    }

    vrrp_script check_lvs {
        script "/usr/bin/ipvsadm -L -n | grep -q 172.25.254.100"
        interval 2
        weight -20
    }

    vrrp_instance VI_1 {
        state MASTER
        interface eth0
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            172.25.254.100
        }
        track_script { check_lvs }
    }

    vrrp_instance VI_2 {
        state BACKUP
        interface eth0
        virtual_router_id 52
        priority 90
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            172.25.254.200
        }
        track_script { check_lvs }
    }

KA2 configuration

    [root@KA2 ~]# vim /etc/keepalived/keepalived.conf
    ! Configuration File for keepalived

    global_defs {
        router_id LVS_NODE2
        vrrp_skip_check_adv_addr
        # vrrp_strict is left disabled: strict mode does not support
        # authentication and can block traffic to the VIPs
        # vrrp_strict
        vrrp_garp_interval 0
        vrrp_gna_interval 0
    }

    vrrp_script check_lvs {
        script "/usr/bin/ipvsadm -L -n | grep -q 172.25.254.200"
        interval 2
        weight -20
    }

    vrrp_instance VI_1 {
        state BACKUP
        interface eth0
        virtual_router_id 51
        priority 90
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            172.25.254.100
        }
        track_script { check_lvs }
    }

    vrrp_instance VI_2 {
        state MASTER
        interface eth0
        virtual_router_id 52
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            172.25.254.200
        }
        track_script { check_lvs }
    }

    # IPVS virtual servers with TCP health checks.
    # The KA1 file shown earlier does not define these blocks.
    virtual_server 172.25.254.100 80 {
        delay_loop 3
        lb_algo rr
        lb_kind DR
        protocol TCP

        real_server 172.25.254.21 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
            }
        }

        real_server 172.25.254.22 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
            }
        }
    }

    virtual_server 172.25.254.200 80 {
        delay_loop 3
        lb_algo rr
        lb_kind DR
        protocol TCP

        real_server 172.25.254.21 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
            }
        }

        real_server 172.25.254.22 80 {
            weight 1
            TCP_CHECK {
                connect_port 80
                connect_timeout 3
            }
        }
    }

4. HAProxy

Perform the following on both HA1 and HA2.
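The ipvsadm rules in section 3 use -g (direct routing). In that mode the real servers, here the HAProxy nodes, normally have to hold the VIPs on a loopback interface and suppress ARP replies for them, so that only the active director answers ARP for a VIP. This guide does not show that step, so the following is a sketch of one common approach rather than the author's exact setup; the function name is hypothetical and it only prints the commands.

```shell
#!/bin/sh
# VIPs from the resource table.
VIPS="172.25.254.100 172.25.254.200"

# gen_dr_realserver_cmds: print the commands that prepare a real server
# for LVS direct routing.
gen_dr_realserver_cmds() {
    # Hold each VIP on loopback with a /32 mask so the node accepts
    # packets addressed to it.
    for vip in $VIPS; do
        echo "ip addr add $vip/32 dev lo"
    done
    # arp_ignore=1: reply to ARP only for addresses on the receiving interface.
    # arp_announce=2: use the best local source address in ARP requests.
    for scope in all lo; do
        echo "sysctl -w net.ipv4.conf.$scope.arp_ignore=1"
        echo "sysctl -w net.ipv4.conf.$scope.arp_announce=2"
    done
}

# Print the commands for review.
gen_dr_realserver_cmds
```

The printed commands are not persistent across reboots; they can be applied as root with `gen_dr_realserver_cmds | sh` once reviewed.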

    [root@HA1/2 ~]# vim /etc/haproxy/haproxy.cfg
    frontend web_front
        bind *:80
        default_backend web_back

    backend web_back
        balance roundrobin
        server web1 172.25.254.31:8080 check inter 2s fall 3
        server web2 172.25.254.32:8080 check inter 2s fall 3

    # Stats page: http://172.25.254.21:8888/haproxy
    listen stats
        bind *:8888
        stats enable
        stats uri /haproxy
        stats auth admin:vb
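Before restarting, the edited file can be checked with HAProxy's built-in configuration test (`haproxy -c -f`), so that a typo does not take the load balancer down. A minimal sketch, with a hypothetical wrapper name:

```shell
#!/bin/sh
# safe_restart_haproxy [CFG]
# Restart HAProxy only if the configuration file parses cleanly.
safe_restart_haproxy() {
    cfg="${1:-/etc/haproxy/haproxy.cfg}"
    if haproxy -c -f "$cfg"; then
        systemctl restart haproxy.service
    else
        echo "haproxy config check failed: $cfg" >&2
        return 1
    fi
}
```

Calling `safe_restart_haproxy` on HA1 and HA2 leaves the running service untouched when the check fails.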

    [root@HA1/2 ~]# systemctl restart haproxy.service

5. Configure Nginx

    dnf install nginx -y
    systemctl enable --now nginx

The HAProxy back ends above target port 8080 on each web node, so Nginx (or the published container port) needs to listen on 8080.

6. Set up the DNS server and NFS

    yum install -y bind bind-utils
    systemctl enable --now named

    [root@nfs-dns-server ~]# mkdir -p /data/nfs/
    [root@nfs-dns-server ~]# vim /etc/exports
    /data/nfs/ 172.25.254.31(rw,sync,no_subtree_check,no_root_squash)
    /data/nfs/ 172.25.254.32(rw,sync,no_subtree_check,no_root_squash)

    # Check that the directory is exported
    [root@nfs-dns-server nfs]# exportfs -rav
    exporting 172.25.254.31:/data/nfs
    exporting 172.25.254.32:/data/nfs
    [root@nfs-dns-server nfs]# showmount -e localhost
    Export list for localhost:
    /data/nfs 172.25.254.32,172.25.254.31
    [root@nfs-dns-server nfs]# systemctl restart nfs-server.service

Configure named.conf

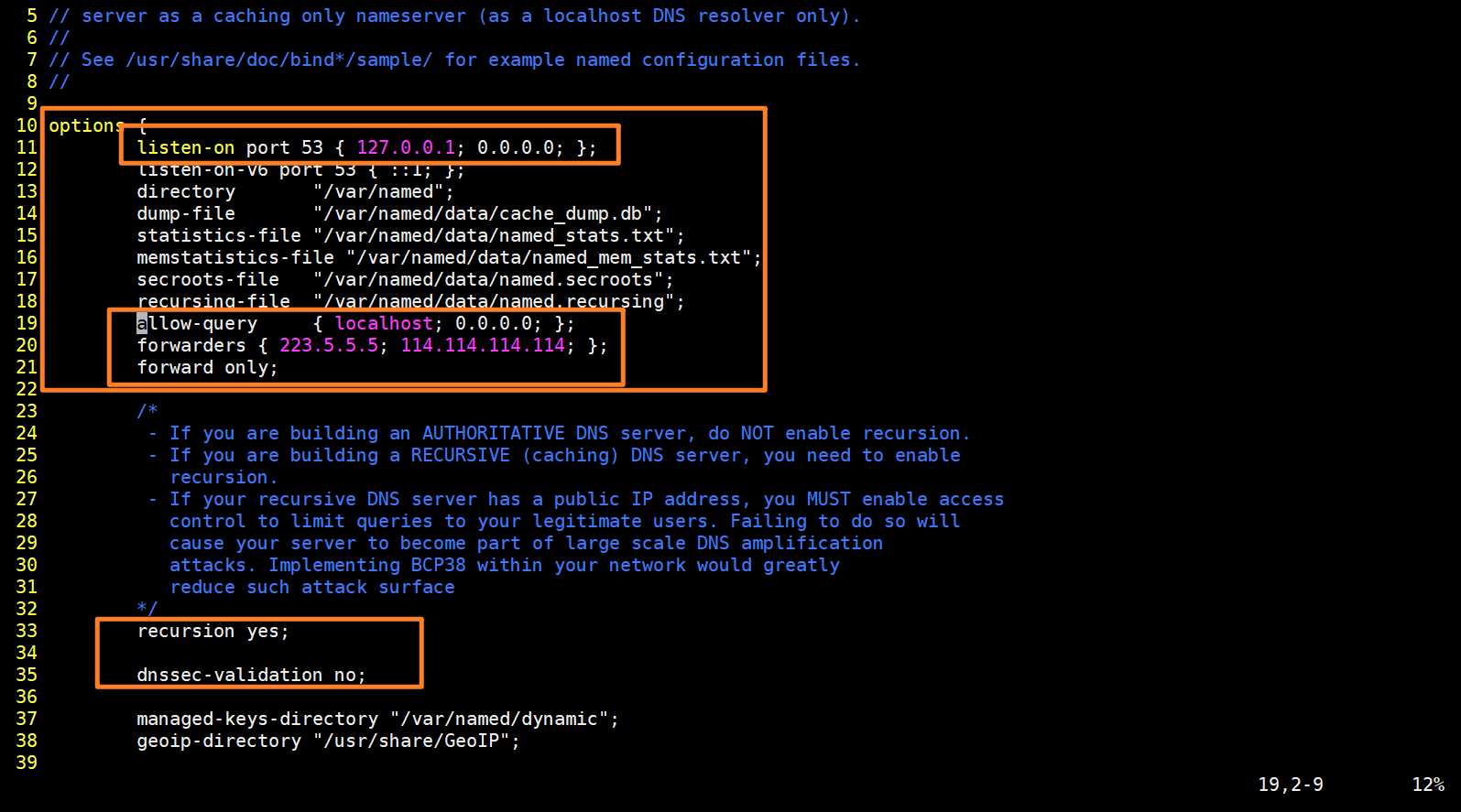

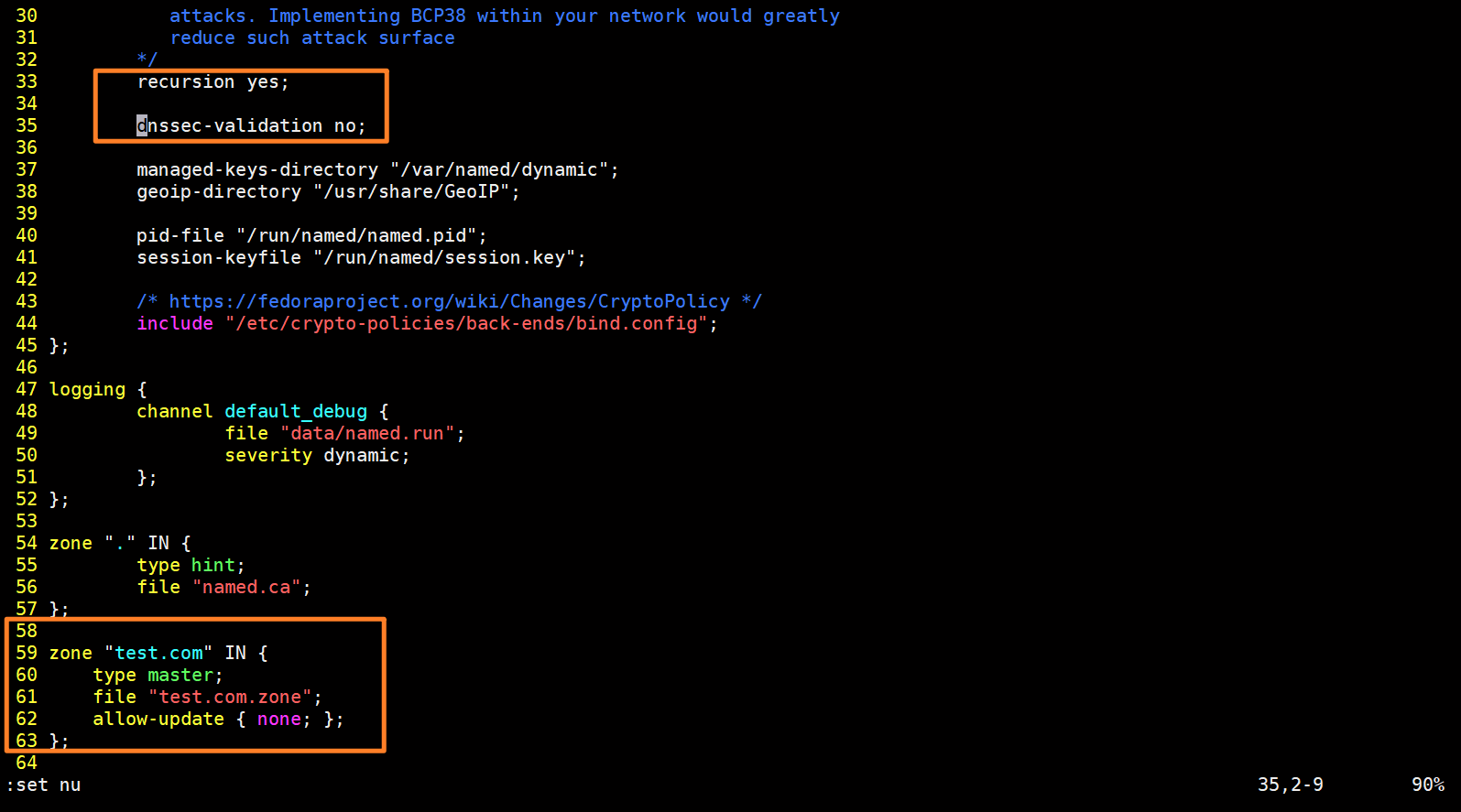

Only the relevant lines of the file are shown. The address lists use any, because 0.0.0.0 matches only that literal address and would not admit other hosts.

    [root@nfs-dns-server ~]# vim /etc/named.conf
    options {
        listen-on port 53 { 127.0.0.1; any; };
        listen-on-v6 port 53 { ::1; };
        directory "/var/named";
        dump-file "/var/named/data/cache_dump.db";
        statistics-file "/var/named/data/named_stats.txt";
        memstatistics-file "/var/named/data/named_mem_stats.txt";
        secroots-file "/var/named/data/named.secroots";
        recursing-file "/var/named/data/named.recursing";
        allow-query { localhost; any; };
        forwarders { 223.5.5.5; 114.114.114.114; };
        forward only;
        // ... (lines not shown)
        recursion yes;

        dnssec-enable no;
        dnssec-validation no;
    // ... (lines not shown)
    zone "test.com" IN {
        type master;
        file "test.com.zone";
        allow-update { none; };
    };

Once the zone file exists (it is created in the next step), make it owned by named:

    [root@nfs-dns-server ~]# chown named:named /var/named/test.com.zone

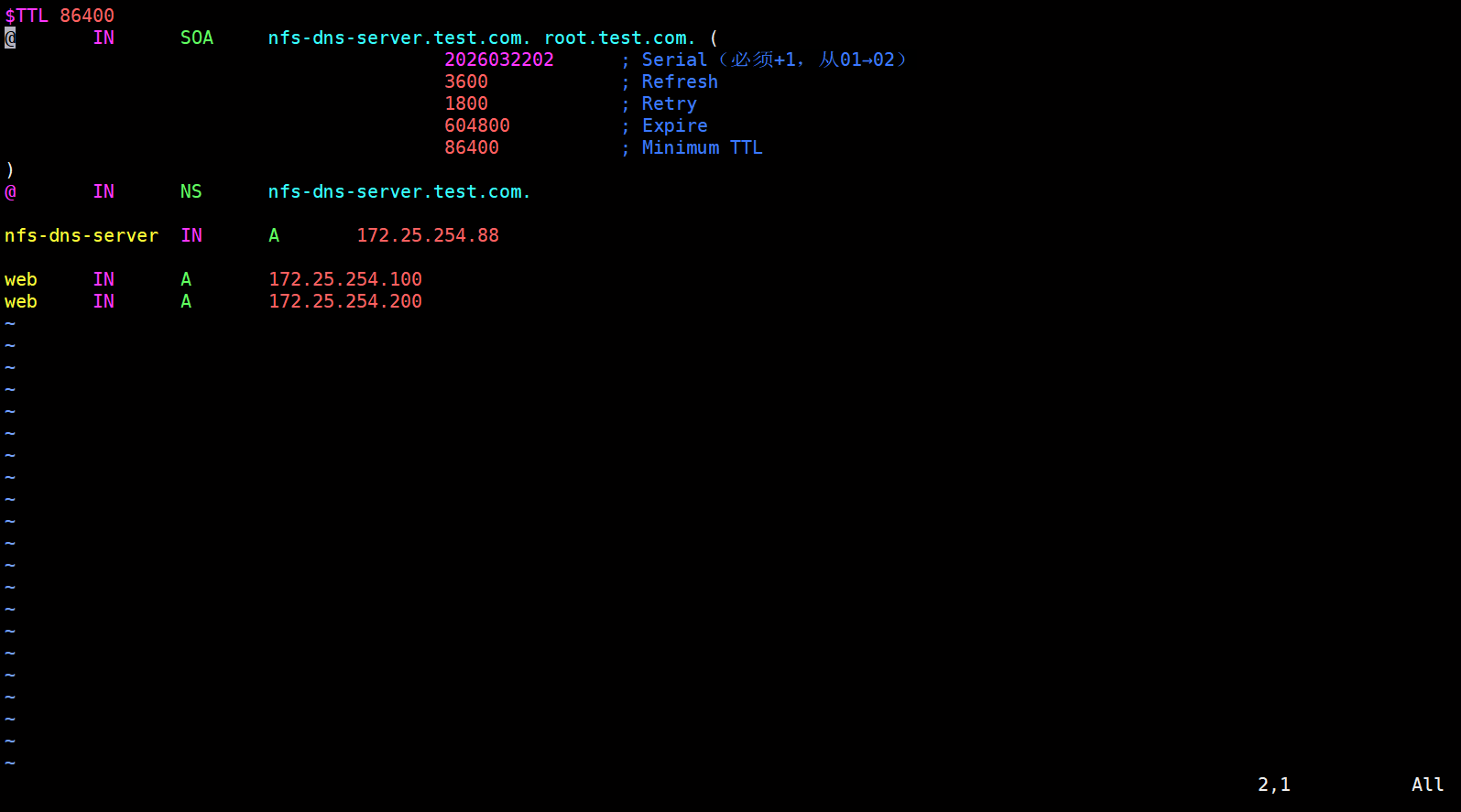

    [root@nfs-dns-server ~]# named-checkconf /etc/named.conf

Create the .zone file and add the A records

Copy the template and edit it. The file name must match the zone defined earlier and end in .zone; for example, the zone "test.com" above uses test.com.zone.

    [root@nfs-dns-server ~]# cp /var/named/named.empty /var/named/test.com.zone
    [root@nfs-dns-server ~]# vim /var/named/test.com.zone
    $TTL 86400
    @   IN  SOA nfs-dns-server.test.com. root.test.com. (
            2026032202  ; Serial
            3600        ; Refresh
            1800        ; Retry
            604800      ; Expire
            86400       ; Minimum TTL
    )
    @               IN  NS  nfs-dns-server.test.com.
    nfs-dns-server  IN  A   172.25.254.88
    web             IN  A   172.25.254.100
    web             IN  A   172.25.254.200

    [root@nfs-dns-server ~]# named-checkzone test.com /var/named/test.com.zone
    zone test.com/IN: loaded serial 2026032202
    OK

named was already started earlier, so restart it to load the new configuration and zone:

    [root@nfs-dns-server ~]# systemctl restart named
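The zone file publishes two A records for web, one per VIP, so clients are spread across both directors by DNS. A quick check from any host with bind-utils installed can confirm that both addresses are returned. This is a minimal sketch; the function names are hypothetical, and the server address 172.25.254.88 is taken from the zone file above.

```shell
#!/bin/sh
# count_a_records: count distinct IPv4 addresses read from stdin, one per line.
count_a_records() {
    grep -E '^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$' | sort -u | wc -l | tr -d ' '
}

# check_web_record [SERVER]
# Query the A records of web.test.com and expect exactly two addresses.
check_web_record() {
    server="${1:-172.25.254.88}"
    n="$(dig +short web.test.com A "@$server" | count_a_records)"
    if [ "$n" -eq 2 ]; then
        echo "OK web.test.com resolves to 2 addresses"
    else
        echo "UNEXPECTED web.test.com resolves to $n addresses"
        return 1
    fi
}
```

Running `check_web_record` returns non-zero when the server answers with fewer or more than two addresses, or does not answer at all.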

On the web hosts:

    [root@web1/2 ~]# yum install -y nfs-utils
    [root@web1/2 ~]# echo "172.25.254.88:/data/nfs/ /usr/share/nginx/html nfs defaults 0 0" >> /etc/fstab
    [root@web1/2 ~]# systemctl daemon-reload
    [root@web1/2 ~]# mount -a
    [root@web1/2 ~]# mount | grep nfs
    sunrpc on /var/lib/nfs/rpc_pipefs type rpc_pipefs (rw,relatime)
    172.25.254.88:/data/nfs on /mnt/nfs type nfs4 (rw,relatime,vers=4.2,rsize=131072,wsize=131072,namlen=255,hard,proto=tcp,timeo=600,retrans=2,sec=sys,clientaddr=172.25.254.31,local_lock=none,addr=172.25.254.88)
    172.25.254.88:/data/nfs on /usr/share/nginx/html type nfs4 (rw,relatime,vers=4.2,rsize=131072,wsize=131072,namlen=255,hard,proto=tcp,timeo=600,retrans=2,sec=sys,clientaddr=172.25.254.31,local_lock=none,addr=172.25.254.88)