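Hands-On Guide to Deploying a Highly Available K8s 1.34.x Cluster from Binaries with kubeasz, Chapter 4: Deploying the K8s Cluster with kubeasz (4-4)

Chapter 4: Configuring the kubeasz Deployment Tool (192.168.44.160)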

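4.1 Install the basic tools

# Log in to the deployment node

ssh root@192.168.44.160

# Install the required tools (unzip is needed to unpack the kubeasz archive in 4.2)

apt update

apt install -y ansible git sshpass python3-pip unzip

# Verify

ansible --version

4.2 Unpack and install kubeasz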

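# Go to root's home directory

cd /root

# Unpack the kubeasz offline package (assuming it has already been uploaded to /root)

unzip kubeasz-20260111-y99.zip -d kubeasz

# Or, if the file is somewhere else

# unzip /home/lv/kubeasz-20260111-y99.zip -d kubeasz

# Enter the kubeasz directory

cd kubeasz

# Inspect the directory layout

ls -la

# Run the install script

chmod +x install.sh

./install.sh

# Or set up the path manually

mkdir -p /etc/kubeasz

cp -r * /etc/kubeasz/

4.3 Configure passwordless SSH login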

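# Generate an SSH key pair (only if one does not exist yet)

[ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -N "" -f ~/.ssh/id_rsa

# Copy the public key to every node (including 160 itself)

for node in 101 102 103 104 105 106 107 108 109 110 111 112 113 160; do

  echo "=== Configuring 192.168.44.$node ==="

  ssh-copy-id root@192.168.44.$node

done

4.4 Create the cluster configuration directory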

# Create the cluster configuration directory

mkdir -p /etc/kubeasz/clusters/k8s-cluster1/{ssl,bin,logs}

# Create the hosts file

cat > /etc/kubeasz/clusters/k8s-cluster1/hosts << 'EOF'

[all]

192.168.44.101 k8s_nodename=k8s-master1

192.168.44.102 k8s_nodename=k8s-master2

192.168.44.103 k8s_nodename=k8s-master3

192.168.44.106 k8s_nodename=k8s-etcd1

192.168.44.107 k8s_nodename=k8s-etcd2

192.168.44.108 k8s_nodename=k8s-etcd3

192.168.44.111 k8s_nodename=k8s-node1

192.168.44.112 k8s_nodename=k8s-node2

192.168.44.113 k8s_nodename=k8s-node3

[etcd]

192.168.44.106

192.168.44.107

192.168.44.108

[kube_master]

192.168.44.101

192.168.44.102

192.168.44.103

[kube_node]

192.168.44.111

192.168.44.112

192.168.44.113

[ex_lb]

192.168.44.109 LB_ROLE=master EX_APISERVER_VIP=192.168.44.188 EX_APISERVER_PORT=6443

192.168.44.110 LB_ROLE=backup EX_APISERVER_VIP=192.168.44.188 EX_APISERVER_PORT=6443

[harbor]

192.168.44.104

[chrony]

[all:vars]

CLUSTER_NAME="k8s-cluster1"

CLUSTER_DNS_DOMAIN="cluster.local"

SERVICE_CIDR="10.100.0.0/16"

CLUSTER_CIDR="10.200.0.0/16"

NODE_PORT_RANGE="30000-32767"

SECURE_PORT="6443"

CONTAINER_RUNTIME="containerd"

CLUSTER_NETWORK="calico"

PROXY_MODE="ipvs"

# Harbor settings

HARBOR_VER="v2.14.1"

HARBOR_DOMAIN="harbor.myarchitect.online"

HARBOR_REGISTRY="harbor.myarchitect.online"

HARBOR_IP="192.168.44.104"

HARBOR_PORT="80"

EOF

4.5 Create the config.yml configuration file

cat > /etc/kubeasz/clusters/k8s-cluster1/config.yml << 'EOF'

# --------------- Global variables (all filled in) ---------------

base_dir: "/etc/kubeasz"

bin_dir: "/usr/local/bin"

ca_dir: "{{ cluster_dir }}/ssl"

cluster_dir: "/etc/kubeasz/clusters/k8s-cluster1"

############################

# prepare

############################

INSTALL_SOURCE: "online"

OS_HARDEN: false

############################

# role:deploy

############################

CA_EXPIRY: "876000h"

CERT_EXPIRY: "438000h"

CHANGE_CA: false

CLUSTER_NAME: "cluster1"

CONTEXT_NAME: "context-{{ CLUSTER_NAME }}"

K8S_VER: "1.34.1"

K8S_NODENAME: "{%- if k8s_nodename != '' -%} \
                    {{ k8s_nodename|replace('_', '-')|lower }} \
               {%- else -%} \
                    k8s-{{ inventory_hostname|replace('.', '-') }} \
               {%- endif -%}"

ENABLE_SETTING_HOSTNAME: true

############################

# role:etcd

############################

ETCD_DATA_DIR: "/var/lib/etcd"

ETCD_WAL_DIR: ""

############################

# role:runtime [containerd,docker]

############################

ENABLE_MIRROR_REGISTRY: true

INSECURE_REG:

- "https://harbor.myarchitect.online"

SANDBOX_IMAGE: "harbor.myarchitect.online/baseimages/pause:3.10"

CONTAINERD_STORAGE_DIR: "/var/lib/containerd"

DOCKER_STORAGE_DIR: "/var/lib/docker"

DOCKER_ENABLE_REMOTE_API: false

############################

# role:kube-master

############################

MASTER_CERT_HOSTS:

- "192.168.44.188"

- "192.168.44.101"

- "192.168.44.102"

- "192.168.44.103"

NODE_CIDR_LEN: 24

ENABLE_CLUSTER_AUDIT: false

############################

# role:kube-node

############################

KUBELET_ROOT_DIR: "/var/lib/kubelet"

MAX_PODS: 110

KUBE_RESERVED_ENABLED: "no"

SYS_RESERVED_ENABLED: "no"

############################

# role:network

############################

CALICO_ENABLE_OVERLAY: "Always"

IP_AUTODETECTION_METHOD: "can-reach={{ groups['kube_master'][0] }}"

CALICO_NETWORKING_BACKEND: "bird"

calico_ver: "v3.28.4"

############################

# role:cluster-addon

############################

dns_install: "no"

metricsserver_install: "no"

dashboard_install: "no"

local_path_provisioner_install: "no"

nfs_provisioner_install: "yes"

nfs_server: "192.168.44.120"

nfs_path: "/data/nfs"

nfs_storage_class: "managed-nfs-storage"

openebs_install: "no"

prom_install: "no"

minio_install: "no"

kubeapps_install: "no"

nacos_install: "no"

rocketmq_install: "no"

ingress_nginx_install: "no"

############################

# role:harbor

############################

HARBOR_VER: "v2.14.1"

HARBOR_DOMAIN: "harbor.myarchitect.online"

# Harbor settings

HARBOR_REGISTRY: "harbor.myarchitect.online"

HARBOR_IP: "192.168.44.104"

HARBOR_PORT: "80"

EOF

4.6 Configure hosts resolution

# Add the Harbor domain name to /etc/hosts on every node

for node in 101 102 103 104 105 106 107 108 109 110 111 112 113 160; do

  echo "=== Configuring 192.168.44.$node ==="

  ssh root@192.168.44.$node "grep -q 'harbor.myarchitect.online' /etc/hosts || echo '192.168.44.104 harbor.myarchitect.online' >> /etc/hosts"

  ssh root@192.168.44.$node "grep harbor /etc/hosts"

done

4.7 Verify the configuration

# View the hosts file

cat /etc/kubeasz/clusters/k8s-cluster1/hosts
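Because the inventory is plain INI, counting the hosts in each group is a cheap way to catch a truncated or mistyped file before Ansible runs. The sketch below is not part of kubeasz: `count_group` is a hypothetical helper that counts the non-empty, non-comment lines under one group header. For this guide the expected counts are 3 for `etcd`, `kube_master` and `kube_node`; etcd is normally run with an odd number of members so that a quorum survives a single failure.

```shell
# Hypothetical helper (not part of kubeasz): count the host lines listed
# under one [group] header of an INI-style Ansible inventory.
count_group() {
  # $1 = inventory file, $2 = group name without brackets
  awk -v group="[$2]" '
    /^\[/ { in_group = ($0 == group); next }   # every header switches state
    in_group && NF && $0 !~ /^#/ { n++ }       # skip blank lines and comments
    END { print n + 0 }
  ' "$1"
}

# Usage on the deployment node (path from section 4.4):
#   count_group /etc/kubeasz/clusters/k8s-cluster1/hosts etcd
```

Group variables such as `[all:vars]` are never counted as hosts because the comparison is against the exact header text.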

# View the Harbor and cluster settings in config.yml

grep -E "(HARBOR|CLUSTER)" /etc/kubeasz/clusters/k8s-cluster1/config.yml
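Before the Ansible ping it can help to confirm that key-based SSH from section 4.3 really works on every node, since a password prompt would stall a playbook. The following is a minimal sketch under stated assumptions: `check_ssh` and the `SSH_BIN` override are hypothetical names introduced here, and `SUBNET` mirrors the address prefix used throughout this chapter.

```shell
# Sketch: report which nodes accept key-based SSH without a password prompt.
# SSH_BIN is a hypothetical override so the function can be dry-run
# without touching the network; by default the real ssh client is used.
SUBNET="192.168.44"

check_ssh() {
  failed=0
  for node in "$@"; do
    # BatchMode=yes makes ssh fail instead of asking for a password.
    if "${SSH_BIN:-ssh}" -o BatchMode=yes -o ConnectTimeout=5 \
        "root@${SUBNET}.${node}" true 2>/dev/null; then
      echo "OK   ${SUBNET}.${node}"
    else
      echo "FAIL ${SUBNET}.${node}"
      failed=1
    fi
  done
  return "$failed"
}

# Usage: check_ssh 101 102 103 104 106 107 108 109 110 111 112 113
```

The function returns non-zero if any node fails, so it can gate the next step with `check_ssh ... && ansible ...`.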

# Verify the Ansible connection

ansible all -i /etc/kubeasz/clusters/k8s-cluster1/hosts -m ping

4.8 Pure-YAML deployment: preparation before deploying Calico (fixing key issues)

# 1. Fix the Harbor IP resolution on all nodes

echo "=== Fixing Harbor IP ==="

for node in 101 102 103 104 105 106 107 108 109 110 111 112 113 160; do

ssh root@192.168.44.$node "sed -i 's/172.31.7.104/192.168.44.104/g' /etc/hosts"

ssh root@192.168.44.$node "grep harbor /etc/hosts"

done
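The `grep -q ... || echo ...` line in 4.6 only adds an entry when the name is missing, so a stale address survives it, which is what the `sed` above repairs. To check the outcome rather than eyeball the `grep` output, a small lookup can be compared against the expected IP. This is a sketch: `hosts_ip_for` is a hypothetical helper, not an existing tool, and it reports the first matching line only.

```shell
# Sketch: print the IP that a hosts-format file maps to a given name.
# hosts_ip_for is a hypothetical helper, not an existing tool.
hosts_ip_for() {
  # $1 = hosts file, $2 = host name to look up
  awk -v name="$2" '
    /^[[:space:]]*#/ { next }                      # ignore comment lines
    { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
  ' "$1"
}

# Usage on one node:
#   [ "$(hosts_ip_for /etc/hosts harbor.myarchitect.online)" = "192.168.44.104" ] \
#     && echo "harbor entry OK" || echo "harbor entry wrong or missing"
```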

# 2. Fix the pause image (the most critical step!)

echo "=== Fixing pause image ==="

for node in 101 102 103 111 112 113; do

echo "Fixing 192.168.44.$node"

ssh root@192.168.44.$node "sed -i 's|harbor.myarchitect.online/baseimages/pause:3.10|registry.aliyuncs.com/google_containers/pause:3.10|g' /etc/containerd/config.toml"

ssh root@192.168.44.$node "systemctl restart containerd"

ssh root@192.168.44.$node "systemctl status containerd | grep Active"

done
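After the substitution it is worth confirming which pause image each containerd config now references, because a typo in the `sed` pattern fails silently. The sketch below assumes only that the image reference contains `/pause:` followed by a tag; `pause_images_in` is a hypothetical helper name.

```shell
# Sketch: list every pause image reference found in a containerd config file.
# pause_images_in is a hypothetical helper; the pattern only assumes that the
# reference looks like <registry path>/pause:<tag>.
pause_images_in() {
  grep -Eo '[A-Za-z0-9._/-]+/pause:[A-Za-z0-9._-]+' "$1" | sort -u
}

# Usage on a node:
#   pause_images_in /etc/containerd/config.toml
```

If the output still shows `harbor.myarchitect.online/baseimages/pause:3.10`, the replacement did not match on that node.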

# 3. Make sure localhost resolves correctly

echo "=== Fixing localhost resolution ==="

for node in 101 102 103 111 112 113; do

  ssh root@192.168.44.$node "grep -q '127.0.0.1 localhost' /etc/hosts || echo '127.0.0.1 localhost' >> /etc/hosts"

done

echo "=== Preparation complete ==="

4.9 Deploy the Calico network (pure-YAML method)

cd /etc/kubeasz

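# 1. Skip the kubeasz network deployment

echo "=== Skipping kubeasz network deployment ==="

sed -i 's/CLUSTER_NETWORK="calico"/CLUSTER_NETWORK="none"/' clusters/k8s-cluster1/hosts

grep CLUSTER_NETWORK clusters/k8s-cluster1/hosts

# 2. Finish the kubeasz deployment (without deploying a network plugin)

echo "=== Finishing the remaining deployment steps ==="

./ezctl setup k8s-cluster1 06 # with CLUSTER_NETWORK="none", this step should complete without installing a network plugin

# 3. Download the Calico YAML

echo "=== Downloading the official Calico YAML ==="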

wget https://raw.githubusercontent.com/projectcalico/calico/v3.28.4/manifests/calico.yaml -O /tmp/calico.yaml
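One thing worth checking before applying the manifest is the Pod address pool. In the upstream calico.yaml the `CALICO_IPV4POOL_CIDR` variable is commented out, and its commented default of 192.168.0.0/16 would overlap this guide's node network (192.168.44.x), whereas the inventory sets `CLUSTER_CIDR="10.200.0.0/16"`. The sketch below assumes the manifest contains the two commented lines exactly as quoted in the code comment; `set_calico_pool_cidr` is a hypothetical helper, and it writes to stdout so the downloaded file stays untouched.

```shell
# Sketch: uncomment CALICO_IPV4POOL_CIDR and set it to the cluster's Pod CIDR.
# Assumption: the manifest contains these two commented lines:
#   # - name: CALICO_IPV4POOL_CIDR
#   #   value: "192.168.0.0/16"
# set_calico_pool_cidr is a hypothetical helper, not part of Calico or kubeasz.
set_calico_pool_cidr() {
  # $1 = manifest path, $2 = Pod CIDR, e.g. 10.200.0.0/16
  sed -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
      -e "s|#   value: \"192.168.0.0/16\"|  value: \"$2\"|" "$1"
}

# Usage:
#   set_calico_pool_cidr /tmp/calico.yaml 10.200.0.0/16 > /tmp/calico-cidr.yaml
#   grep -A 1 CALICO_IPV4POOL_CIDR /tmp/calico-cidr.yaml
```

If the `grep` shows the variable still commented out, the manifest layout differs from the assumption and the file should be edited by hand instead.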

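# 4. Optional: adjust NIC detection in the Calico YAML (if needed)

# If your NIC names do not start with ens, change the pattern

# sed -i 's/interface=ens*/interface=eth*/g' /tmp/calico.yaml

# 5. Apply Calico

echo "=== Deploying the Calico network ==="

kubectl apply -f /tmp/calico.yaml

# 6. Monitor the rollout (the pipeline is quoted so that watch reruns the grep as well)

echo "=== Monitoring Calico Pod startup ==="

echo "Press Ctrl+C to stop monitoring"

sleep 5

watch -n 2 'kubectl get pods -n kube-system | grep -E "(calico|NAME)"'

4.10 Verify the deployment result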

# Wait for all Pods to start (about 1-2 minutes)

echo "Waiting 60 seconds for Pods to start..."

sleep 60

# List all system Pods

echo "=== kube-system Pods ==="

kubectl get pods -n kube-system -o wide

# List the Calico Pods

echo "=== Calico Pod status ==="

kubectl get pods -n kube-system | grep calico

# Check node status

echo "=== Node status ==="

kubectl get nodes

# If a node is not Ready, look at the details

echo "=== Node details ==="

kubectl describe nodes | grep -A 5 "Conditions:"
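With nine nodes, scanning the table by eye is error-prone. The sketch below filters `kubectl get nodes --no-headers` output down to the nodes that are not Ready. `not_ready_nodes` is a hypothetical helper; it treats any status that starts with `Ready` as healthy, so a cordoned master shown as `Ready,SchedulingDisabled` is not reported.

```shell
# Sketch: read `kubectl get nodes --no-headers` output on stdin and print the
# names of nodes whose STATUS column does not start with "Ready".
# not_ready_nodes is a hypothetical helper.
not_ready_nodes() {
  awk '$2 !~ /^Ready/ { print $1 }'
}

# Usage:
#   kubectl get nodes --no-headers | not_ready_nodes
# An alternative to the fixed sleep is to block until every node is Ready:
#   kubectl wait --for=condition=Ready node --all --timeout=180s
```

An empty result means every node reports Ready.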

# 查看 Calico 日志(如果有问题)

# kubectl logs -n kube-system calico-node-xxxxx --tail=50部署环节

可以继续执行部署步骤:

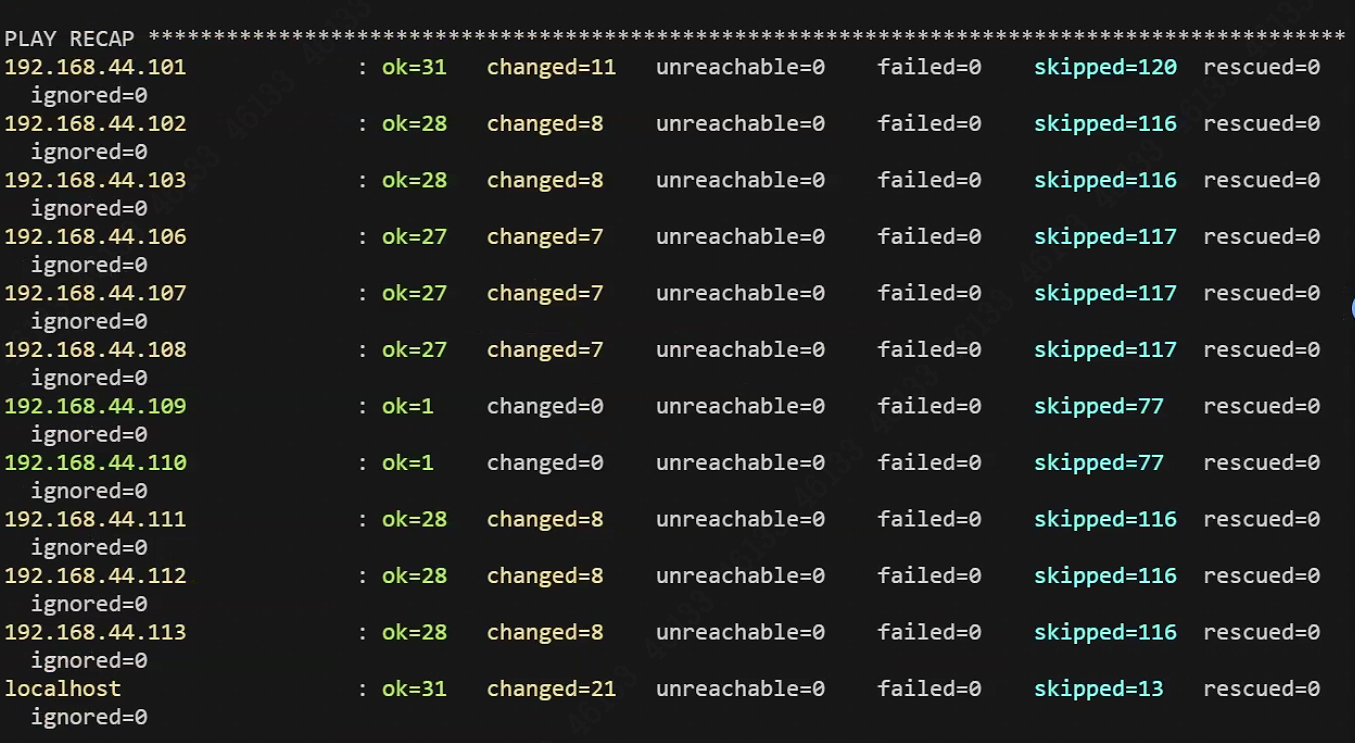

./ezctl setup k8s-cluster1 01 # 环境准备

./ezctl setup k8s-cluster1 02 # 证书和 etcd

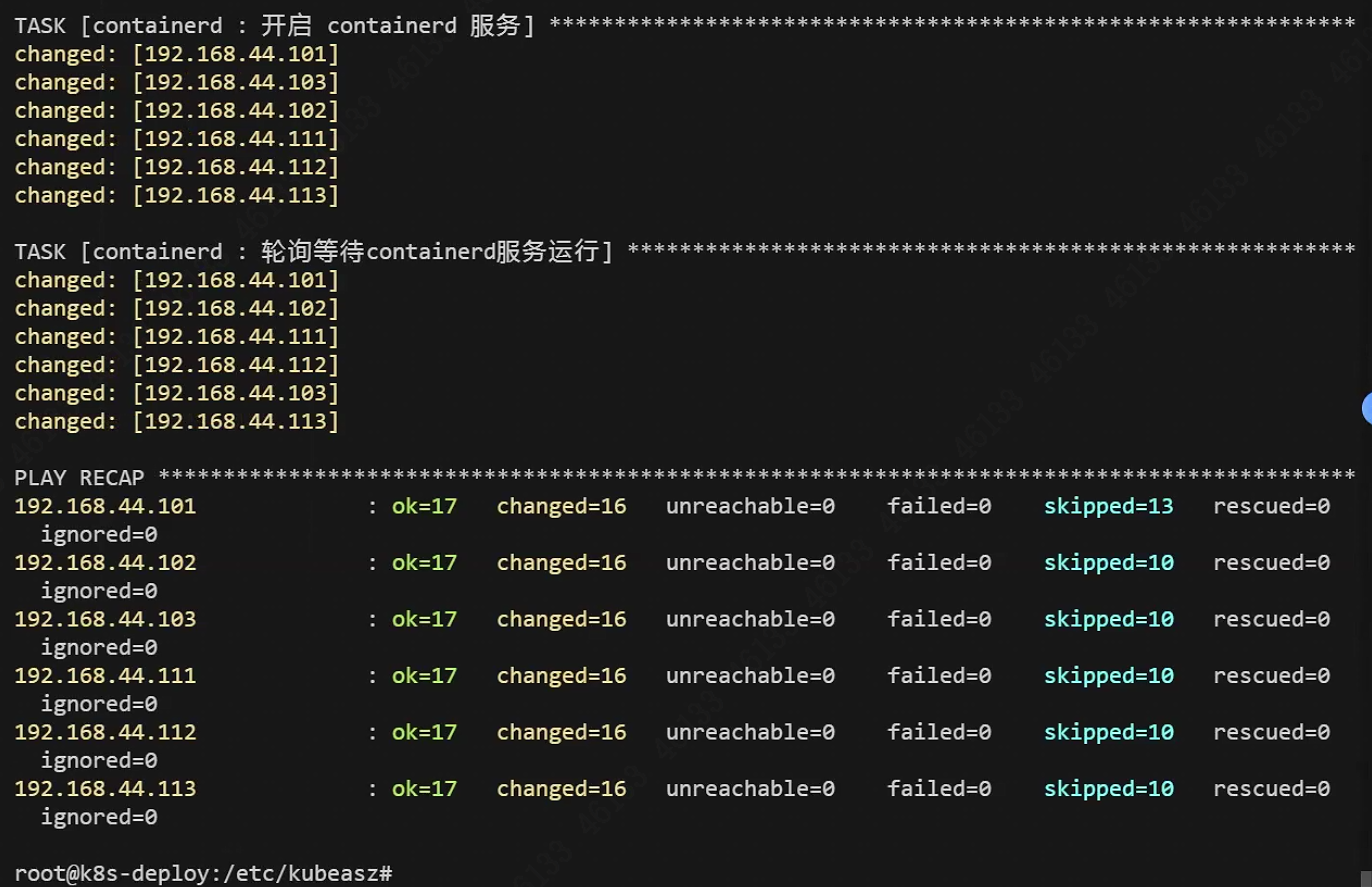

./ezctl setup k8s-cluster1 03 # 容器运行时

./ezctl setup k8s-cluster1 04 # master 节点

./ezctl setup k8s-cluster1 05 # node 节点

./ezctl setup k8s-cluster1 06 # 网络插件作用:

- 关闭防火墙

- 关闭 selinux

- 安装依赖

- 配置时间同步

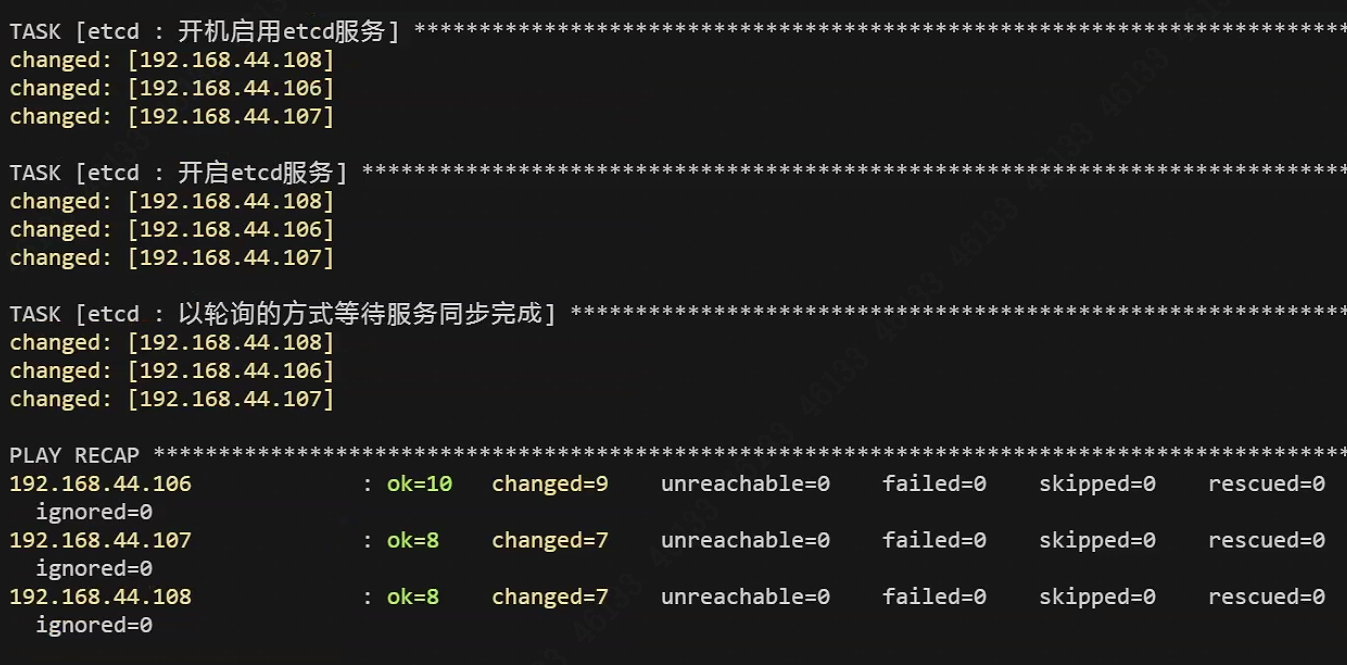
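Since each step in this chapter can fail and need a manual fix, it is useful to run the numbered steps in a loop that stops at the first failure instead of pressing on. The following is a minimal sketch: `run_steps` and the `EZCTL` override are hypothetical names introduced here, and the function assumes it is called from /etc/kubeasz, where `./ezctl` lives.

```shell
# Sketch: run a list of ezctl setup steps in order and stop at the first
# failure. EZCTL is a hypothetical override used for dry runs; by default
# the function calls ./ezctl from the current directory.
run_steps() {
  cluster="$1"
  shift
  for step in "$@"; do
    echo "=== ezctl setup ${cluster} ${step} ==="
    if ! "${EZCTL:-./ezctl}" setup "$cluster" "$step"; then
      echo "step ${step} failed, stopping" >&2
      return 1
    fi
  done
}

# Usage:
#   cd /etc/kubeasz && run_steps k8s-cluster1 01 02 03 04 05 06
```

After fixing whatever broke, the loop can be restarted from the failed step by passing only the remaining step numbers.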

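Step 2: Deploy the etcd cluster

./ezctl setup k8s-cluster1 02

What it does:

- Deploys a 3-node etcd cluster

- Generates the etcd certificates

- Runs a health check

Step 3: Deploy the container runtime

./ezctl setup k8s-cluster1 03

What it does:

- Installs the container runtime (containerd, as set by CONTAINER_RUNTIME in the hosts file)

The load balancer (ex_lb) is not part of step 03, which the step list above gives as the container runtime. In kubeasz the load balancer has its own step:

./ezctl setup k8s-cluster1 10

What it does:

- Deploys haproxy + keepalived

- Provides the VIP: 192.168.44.188

- Forwards traffic to the 3 masters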

这里卡在:

echo "# Harbor 配置

HARBOR_REGISTRY=\"harbor.myarchitect.online\"

HARBOR_IP=\"192.168.44.104\"

HARBOR_PORT=\"80\"" >> /etc/kubeasz/clusters/k8s-cluster1/hosts

```

### 2. 修改 config.yml 文件

```bash

echo "" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "# Harbor 配置" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "HARBOR_REGISTRY: \"harbor.myarchitect.online\"" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "HARBOR_IP: \"192.168.44.104\"" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "HARBOR_PORT: \"80\"" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

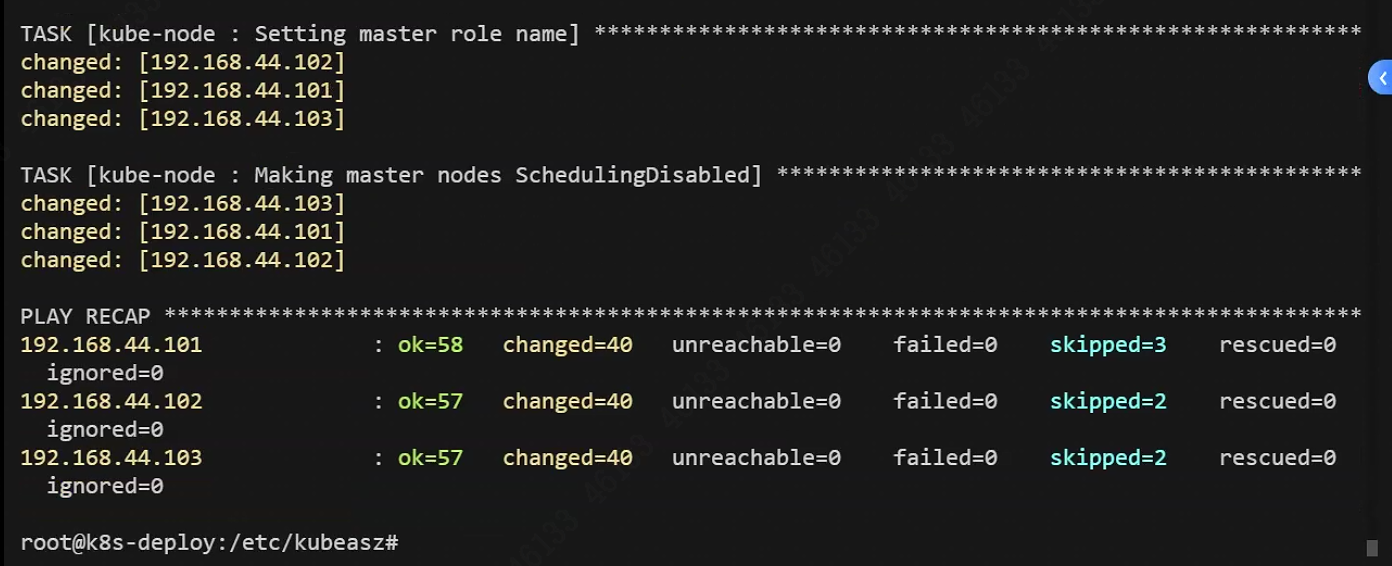

第 4 步:部署 master 组件

./ezctl setup k8s-cluster1 04作用:

- 部署 apiserver、controller-manager、scheduler

- 生成所有证书

- 初始化集群

卡在没有目录

1:TASK [kube-master : 分发controller/scheduler kubeconfig配置文件] **************************************failed: [192.168.44.103] (item=kube-controller-manager.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "13afbad73aaa25ba4740289c192dab6b05a395d7", "item": "kube-controller-manager.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

failed: [192.168.44.102] (item=kube-controller-manager.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "13afbad73aaa25ba4740289c192dab6b05a395d7", "item": "kube-controller-manager.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

failed: [192.168.44.101] (item=kube-controller-manager.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "13afbad73aaa25ba4740289c192dab6b05a395d7", "item": "kube-controller-manager.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

failed: [192.168.44.103] (item=kube-scheduler.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "69ebc123ff01f6a0ad80a9a236ac6b4893a8b7a4", "item": "kube-scheduler.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

failed: [192.168.44.102] (item=kube-scheduler.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "69ebc123ff01f6a0ad80a9a236ac6b4893a8b7a4", "item": "kube-scheduler.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

failed: [192.168.44.101] (item=kube-scheduler.kubeconfig) => {"ansible_loop_var": "item", "changed": false, "checksum": "69ebc123ff01f6a0ad80a9a236ac6b4893a8b7a4", "item": "kube-scheduler.kubeconfig", "msg": "Destination directory /etc/kubernetes does not exist"}

PLAY RECAP ********************************************************************************************192.168.44.101 : ok=9 changed=8 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.44.102 : ok=9 changed=8 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.44.103 : ok=9 changed=8 unreachable=0 failed=1 skipped=0

rescued=0 ignored=0

2:TASK [kube-node : 创建kubelet的配置文件] **************************************************************An exception occurred during task execution. To see the full traceback, use -vvv. The error was: ansible.errors.AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined

fatal: [192.168.44.101]: FAILED! => {"changed": false, "msg": "AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined"}

An exception occurred during task execution. To see the full traceback, use -vvv. The error was: ansible.errors.AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined

fatal: [192.168.44.103]: FAILED! => {"changed": false, "msg": "AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined"}

An exception occurred during task execution. To see the full traceback, use -vvv. The error was: ansible.errors.AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined

fatal: [192.168.44.102]: FAILED! => {"changed": false, "msg": "AnsibleUndefinedVariable: 'ENABLE_LOCAL_DNS_CACHE' is undefined. 'ENABLE_LOCAL_DNS_CACHE' is undefined"}

PLAY RECAP ********************************************************************************************192.168.44.101 : ok=41 changed=31 unreachable=0 failed=1 skipped=2 rescued=0 ignored=0

192.168.44.102 : ok=39 changed=30 unreachable=0 failed=1 skipped=2 rescued=0 ignored=0

192.168.44.103 : ok=39 changed=31 unreachable=0 failed=1 skipped=2 rescued=0 ignored=0

1:手动在 master 节点创建目录

bash

# 在所有 master 节点创建目录

for node in 192.168.44.101 192.168.44.102 192.168.44.103; do

ssh root@$node "mkdir -p /etc/kubernetes"

done

2:解决方案

缺少 `ENABLE_LOCAL_DNS_CACHE` 变量配置。需要在 `config.yml` 文件中添加这个变量。

### 1. 修改 config.yml 文件

vi /etc/kubeasz/clusters/k8s-cluster1/config.yml

在 `[all:vars]` 部分或适当位置添加以下内容:

# DNS 缓存配置

ENABLE_LOCAL_DNS_CACHE: false

### 2. 快速添加命令

echo "" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "# DNS 缓存配置" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

echo "ENABLE_LOCAL_DNS_CACHE: false" >> /etc/kubeasz/clusters/k8s-cluster1/config.yml

```

### 3. 验证配置

grep ENABLE_LOCAL_DNS_CACHE /etc/kubeasz/clusters/k8s-cluster1/config.yml
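The same `grep` generalizes to a pre-flight check for several variables at once, which avoids discovering undefined variables one failed run at a time. This is a sketch: `missing_keys` is a hypothetical helper, and the variable names in the usage line are simply the ones this chapter ran into, not a complete list of what kubeasz requires.

```shell
# Sketch: print every key that is NOT defined at the top level of a YAML file.
# missing_keys is a hypothetical helper, not part of kubeasz.
missing_keys() {
  file="$1"
  shift
  for key in "$@"; do
    # A key counts as present only if it starts a line and is followed by ":".
    grep -q "^${key}:" "$file" || echo "$key"
  done
}

# Usage:
#   missing_keys /etc/kubeasz/clusters/k8s-cluster1/config.yml \
#     ENABLE_LOCAL_DNS_CACHE calico_ver_main HARBOR_REGISTRY
```

No output means every listed key is present.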

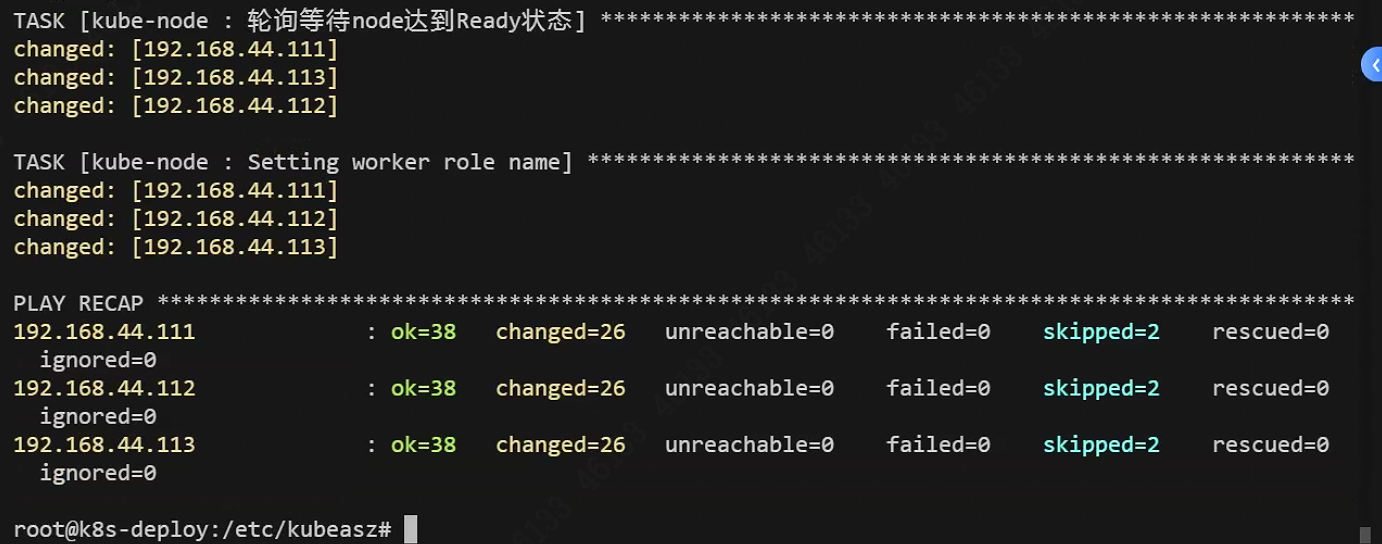

Step 5: Deploy the worker nodes

./ezctl setup k8s-cluster1 05

What it does:

- Deploys kubelet and kube-proxy

- Joins the nodes to the cluster

Sticking point: the directory has to be created manually on the 3 worker nodes (192.168.44.111 to 192.168.44.113):

for node in 111 112 113; do

  ssh root@192.168.44.$node "mkdir -p /etc/kubernetes/ssl"

done

The error seen before the fix (task: distribute kubeconfig):

TASK [kube-node : 分发kubeconfig] *********************************************************************

fatal: [192.168.44.113]: FAILED! => {"changed": false, "checksum": "c0a24a04e92d86cd655e2a11a55bcef47c26ac82", "msg": "Destination directory /etc/kubernetes does not exist"}

fatal: [192.168.44.112]: FAILED! => {"changed": false, "checksum": "425c9836564a61186109453ff9005bb46203fe79", "msg": "Destination directory /etc/kubernetes does not exist"}

fatal: [192.168.44.111]: FAILED! => {"changed": false, "checksum": "2b27e7f5e9db9e2de8177b89db0b20498e077db9", "msg": "Destination directory /etc/kubernetes does not exist"}

PLAY RECAP ********************************************************************************************

192.168.44.111 : ok=20 changed=19 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.44.112 : ok=20 changed=19 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.44.113 : ok=20 changed=19 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

Step 6: Deploy the network plugin (calico)

./ezctl setup k8s-cluster1 06

What it does:

- Deploys calico

- Configures the cluster network

- Connects the nodes to each other

Check the calico version:

kubectl get pods -n kube-system -l k8s-app=calico-node -o yaml | grep "image:" | head -1

Check the calico version in more detail:

kubectl describe pod -n kube-system -l k8s-app=calico-node | grep "Image:" | head -1

Output of the two commands:

image: docker.io/calico/node:v3.28.4

Image: docker.io/calico/cni:v3.28.4

Problem 1: 'calico_ver_main' is undefined

Cause

The kubeasz calico role needs the calico_ver_main variable. It holds the main version number (v3.28) and is used to build image names or configuration file paths.

TASK [calico : 配置 calico DaemonSet yaml文件] ********************************************************

fatal: [192.168.44.101]: FAILED! => {"msg": "The task includes an option with an undefined variable. The error was: 'calico_ver_main' is undefined. 'calico_ver_main' is undefined\n\nThe error appears to be in '/etc/kubeasz/roles/calico/tasks/main.yml': line 22, column 7, but may\nbe elsewhere in the file depending on the exact syntax problem.\n\nThe offending line appears to be:\n\n\n - name: 配置 calico DaemonSet yaml文件\n ^ here\n"}

NO MORE HOSTS LEFT ************************************************************************************

PLAY RECAP ********************************************************************************************

192.168.44.101 : ok=5 changed=4 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.44.102 : ok=1 changed=0 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

192.168.44.103 : ok=1 changed=0 unreachable=0 failed=0 skipped=0

Fix

cat >> /etc/kubeasz/clusters/k8s-cluster1/config.yml << 'EOF'

# Calico settings

calico_ver_main: "v3.28"

EOF
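The main version should stay consistent with `calico_ver` (v3.28.4 in config.yml) and with the tag of the running image. Rather than typing it by hand, it can be derived from either string. The sketch below uses two hypothetical helpers, `image_tag` and `main_version`; `image_tag` assumes the image reference ends in `:tag`.

```shell
# Sketch: derive the Calico main version from a full version or an image name.
# Both helpers are hypothetical and use only POSIX shell features and cut.

# image_tag: strip everything up to the last ":" of an image reference.
# Assumes the reference ends in ":<tag>".
image_tag() {
  printf '%s\n' "${1##*:}"
}

# main_version: keep only the first two dot-separated fields.
main_version() {
  printf '%s\n' "$1" | cut -d. -f1,2
}

# Usage:
#   main_version "$(image_tag docker.io/calico/node:v3.28.4)"
```

With the image reported above, the usage line yields the same `v3.28` that was appended to config.yml.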

Problem 2: the task that polls for calico-node keeps retrying

TASK [calico : 准备 calicoctl配置文件] ****************************************************************

changed: [192.168.44.111]

changed: [192.168.44.103]

changed: [192.168.44.102]

changed: [192.168.44.112]

changed: [192.168.44.101]

changed: [192.168.44.113]

FAILED - RETRYING: [192.168.44.101]: 轮询等待calico-node 运行 (15 retries left).

FAILED - RETRYING: [192.168.44.102]: 轮询等待calico-node 运行 (15 retries left).

FAILED - RETRYING: [192.168.44.112]: 轮询等待calico-node 运行 (15 retries left).

FAILED - RETRYING: [192.168.44.103]: 轮询等待calico-node 运行 (15 retries left).

FAILED - RETRYING: [192.168.44.111]: 轮询等待calico-node 运行 (15 retries left).

FAILED - RETRYING: [192.168.44.101]: 轮询等待calico-node 运行 (14 retries left).

FAILED - RETRYING: [192.168.44.112]: 轮询等待calico-node 运行 (14 retries left).

FAILED - RETRYING: [192.168.44.111]: 轮询等待calico-node 运行 (14 retries left).

Diagnosis on a master node. None of the Calico Pods is running, and two calico-node Pods are in Init:ErrImagePull, which means those nodes cannot pull the image for an init container.

root@k8s-master1:~# kubectl get pods -n kube-system | grep calico

calico-kube-controllers-78dcb7b647-rk2q4 0/1 ContainerCreating 0 113s

calico-node-7hxd5 0/1 Init:0/2 0 113s

calico-node-c9s7k 0/1 Init:ErrImagePull 0 113s

calico-node-dvgwb 0/1 Init:ErrImagePull 0 113s

calico-node-jbs2h 0/1 Init:0/2 0 113s

calico-node-sdwq7 0/1 Init:0/2 0 113s

calico-node-w2fm7 0/1 Init:0/2 0 113s

# View the calico-node logs

kubectl logs -n kube-system -l k8s-app=calico-node --tail=50

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Defaulted container "calico-node" out of: calico-node, install-cni (init), mount-bpffs (init)

Error from server (BadRequest): container "calico-node" in pod "calico-node-7hxd5" is waiting to start: PodInitializing

# View the calico DaemonSet status

kubectl get daemonset -n kube-system calico-node

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

calico-node 6 6 0 6 0 kubernetes.io/os=linux 114s

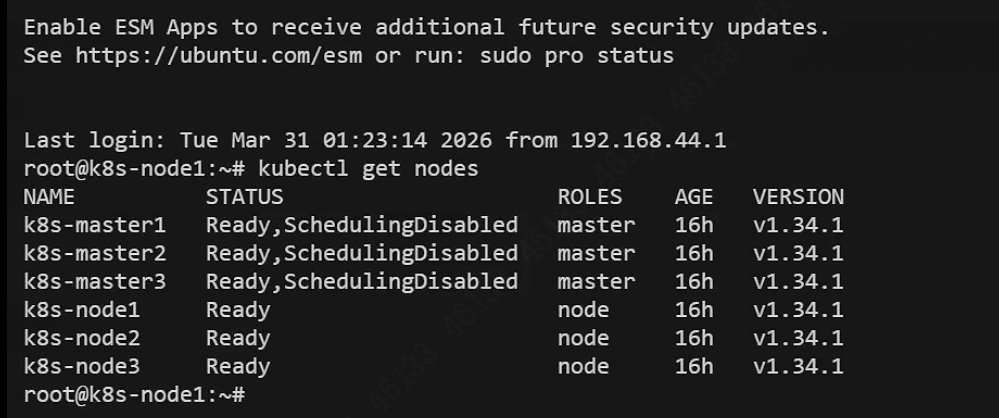
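When many Pods are stuck, a per-status tally shows at a glance whether the problem is image pulls or something else. The sketch below counts the STATUS column of `kubectl get pods --no-headers` output; `pod_status_counts` is a hypothetical helper that reads the listing on stdin.

```shell
# Sketch: tally the STATUS column (third field) of `kubectl get pods` output.
# pod_status_counts is a hypothetical helper; it reads the listing on stdin.
pod_status_counts() {
  awk 'NF >= 3 { count[$3]++ } END { for (s in count) print s, count[s] }' \
    | LC_ALL=C sort
}

# Usage:
#   kubectl get pods -n kube-system --no-headers | grep calico | pod_status_counts
```

For a Pod in ErrImagePull, `kubectl describe pod -n kube-system <pod-name>` lists the failing image and the pull error under Events.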

5. Final check

kubectl get node

All nodes: Ready

The offline package can be downloaded from the files section on CSDN:

kubeasz-20260110.zip