Table of contents

- Introduction

- Claude Code implementation

- Agent SDK implementation

- SDK -> OpenRouter -> DeepSeek

- Cases OpenRouter cannot cover

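Introduction

In theory, Claude Code and OpenAI models are incompatible: if you want to use an OpenAI model you cannot point Claude Code at it directly, because the two use different message formats. One workaround is to relay through a gateway. Messages sent by Claude Code pass through the gateway first, which converts them into a form the OpenAI model accepts, and responses are converted back the same way on the return path.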

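[Sequence diagram: Claude Code sends a message to the gateway; the gateway receives and relays it, forwarding the message to the OpenAI model; the model processes the request and returns a response; the gateway forwards the response back to Claude Code.]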
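To make the format mismatch concrete, the sketch below builds the same single-turn request in both shapes. The field names follow the public Anthropic Messages and OpenAI Chat Completions request formats; the model names and the helper `to_openai_shape` are placeholders invented for illustration, and a real gateway also has to handle tools, images, and streaming.

```python
from typing import Any, Dict

# Anthropic Messages style: the system prompt is a top-level field.
anthropic_request: Dict[str, Any] = {
    "model": "placeholder-claude-model",  # hypothetical name
    "max_tokens": 256,
    "system": "You are a concise assistant.",
    "messages": [{"role": "user", "content": "Say hello."}],
}


def to_openai_shape(req: Dict[str, Any], upstream_model: str) -> Dict[str, Any]:
    """Text-only conversion: move `system` into the messages list."""
    messages = []
    if req.get("system"):
        messages.append({"role": "system", "content": req["system"]})
    messages.extend(req["messages"])
    return {
        "model": upstream_model,
        "max_tokens": req["max_tokens"],
        "messages": messages,
    }


openai_request = to_openai_shape(anthropic_request, "placeholder-openai-model")
print(openai_request["messages"][0])
# {'role': 'system', 'content': 'You are a concise assistant.'}
```

The only structural change in this simple case is where the system prompt lives; the differences grow once tool calls are involved.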

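Claude Code implementation

Claude Code / Agent SDK → the OpenRouter gateway → OpenRouter routes the request to another model

OpenRouter's integration documentation states explicitly that once ANTHROPIC_BASE_URL is set to its API address, Claude Code talks to OpenRouter directly in its native protocol, so you do not need to run a local proxy. The OpenRouter layer is responsible for staying compatible with the Anthropic-style interface, mapping models, and preserving advanced capabilities such as tool use and thinking.

First, a minimal configuration example for Claude Code.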

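    # 1) Your OpenRouter key
    export OPENROUTER_API_KEY="your_openrouter_key"

    # 2) Tell Claude Code to go through the gateway instead of connecting to Anthropic directly
    export ANTHROPIC_BASE_URL="https://openrouter.ai/api"

    # 3) Hand the auth token to the gateway
    export ANTHROPIC_AUTH_TOKEN="$OPENROUTER_API_KEY"

    # 4) Important: explicitly clear the Anthropic API key to avoid conflicts
    export ANTHROPIC_API_KEY=""

This is how OpenRouter's documentation configures it, and it stresses that ANTHROPIC_API_KEY must be explicitly set to empty. If you have previously logged in to Claude Code, it is best to run /logout inside Claude Code first, otherwise the cached login state may remain in effect. The official environment variable documentation also notes that Claude Code reads these environment variables, and that once ANTHROPIC_API_KEY is present it takes precedence over your subscription login.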
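Because a stale or missing variable is the most likely way for this setup to go wrong, a small sanity check can help before launching Claude Code. The sketch below is only an illustration: `check_gateway_env` is a made-up helper, and it simply encodes the three settings described above.

```python
import os
from typing import List, Mapping


def check_gateway_env(env: Mapping[str, str]) -> List[str]:
    """Return a list of problems with the gateway settings (empty if none)."""
    problems: List[str] = []
    if env.get("ANTHROPIC_BASE_URL") != "https://openrouter.ai/api":
        problems.append("ANTHROPIC_BASE_URL is not the OpenRouter API address")
    if not env.get("ANTHROPIC_AUTH_TOKEN"):
        problems.append("ANTHROPIC_AUTH_TOKEN is missing or empty")
    # Following the configuration above: the key should be set, and set to empty.
    if env.get("ANTHROPIC_API_KEY") != "":
        problems.append("ANTHROPIC_API_KEY should be set to an empty string")
    return problems


# Report anything that looks wrong in the current shell environment.
for problem in check_gateway_env(os.environ):
    print("warning:", problem)
```

Run it in the same shell where the variables were exported; no output means the three settings match the configuration above.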

If you also want to specify which model is actually used after the request passes through the gateway, you can add these environment variables:

    export ANTHROPIC_DEFAULT_OPUS_MODEL="anthropic/claude-opus-4.6"
    export ANTHROPIC_DEFAULT_SONNET_MODEL="anthropic/claude-sonnet-4.6"
    export ANTHROPIC_DEFAULT_HAIKU_MODEL="anthropic/claude-haiku-4.5"
    export CLAUDE_CODE_SUBAGENT_MODEL="anthropic/claude-opus-4.6"

Agent SDK implementation

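There is no need to write a separate "OpenRouter client" in your code, because the Agent SDK is itself the Claude Code runtime. Anthropic's Agent SDK documentation explains that it inherits Claude Code's tools, agent loop, and context management, and OpenRouter states explicitly that the Agent SDK connects using the same environment variables. In other words, export the variables above in your shell first, then write your code as usual.

SDK -> OpenRouter -> DeepSeek

First register an OpenRouter account and obtain an OpenRouter API key.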

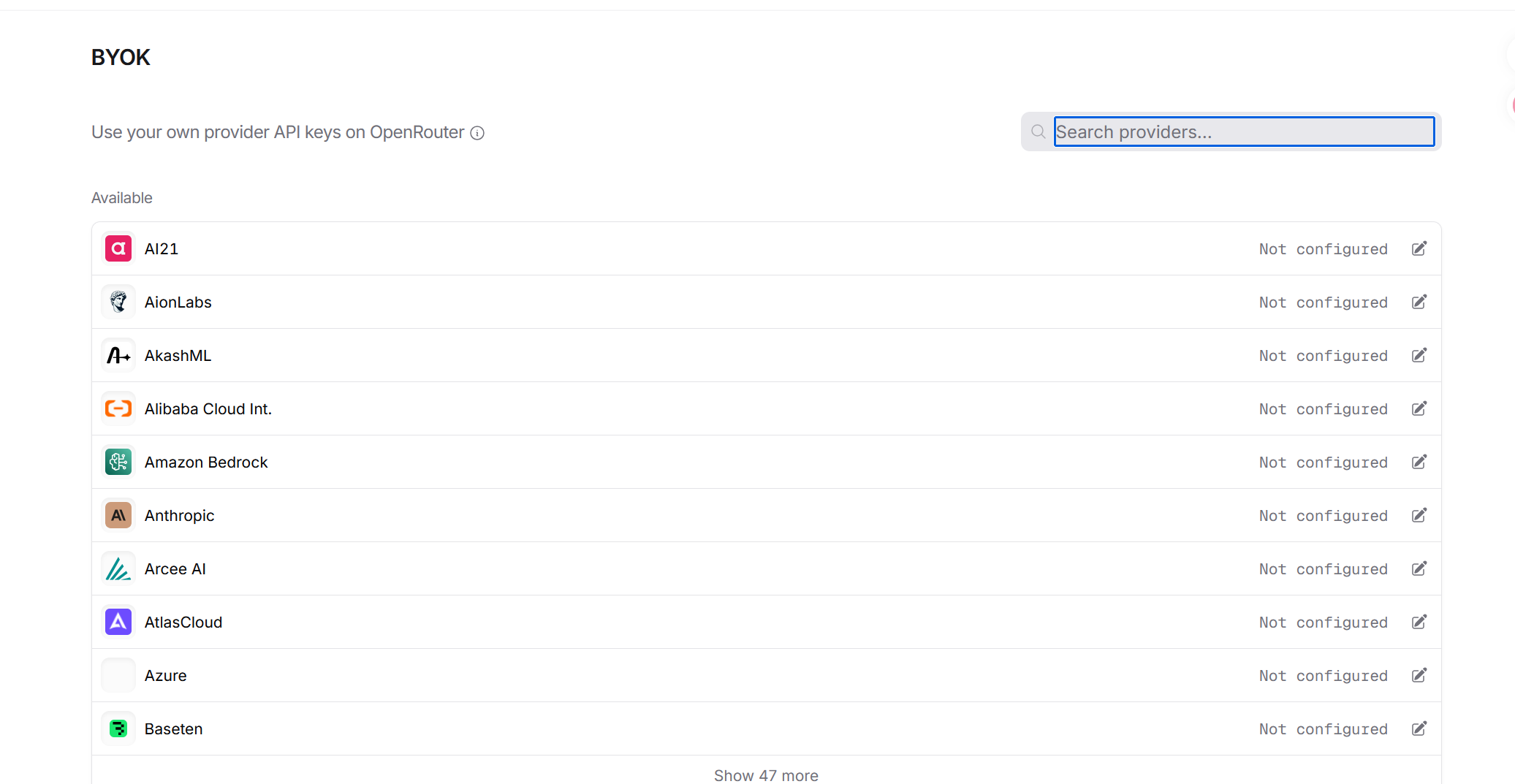

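Next, configure BYOK (bring your own key) mode: requests routed to a specific model use your own API key for that provider, so usage is billed against your own provider quota instead of being consumed through OpenRouter.

After that, you only need to change three things:

    import os

    os.environ["ANTHROPIC_BASE_URL"] = "https://openrouter.ai/api"
    os.environ["ANTHROPIC_AUTH_TOKEN"] = OPENROUTER_API_KEY  # your OpenRouter API key, used for authentication
    os.environ["ANTHROPIC_API_KEY"] = ""  # must be explicitly empty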

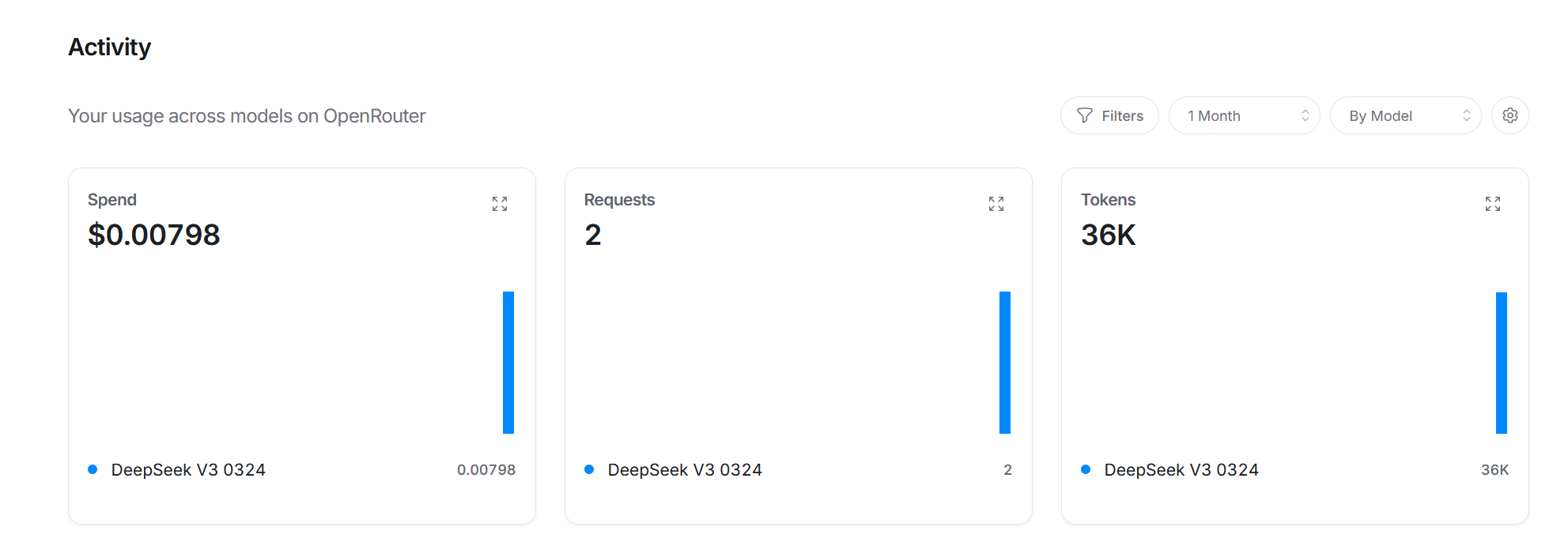

    os.environ["ANTHROPIC_MODEL"] = OPENROUTER_MODEL  # model name

The rest of the code is written as usual. If the call shows up in your OpenRouter call records, the setup is working.
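Putting these settings together, a minimal end-to-end script might look like the sketch below. It assumes the `claude-agent-sdk` Python package is installed and that its `query` function is consumed as an async iterator of messages; `configure_openrouter`, `ask`, and `main` are helper names invented here, and the `OPENROUTER_MODEL` environment variable is an assumption standing in for whichever OpenRouter model you chose.

```python
import asyncio
import os
from typing import MutableMapping, Optional


def configure_openrouter(
    api_key: str,
    model: Optional[str] = None,
    env: MutableMapping[str, str] = os.environ,
) -> None:
    """Point the Claude Code runtime at OpenRouter via environment variables."""
    env["ANTHROPIC_BASE_URL"] = "https://openrouter.ai/api"
    env["ANTHROPIC_AUTH_TOKEN"] = api_key
    env["ANTHROPIC_API_KEY"] = ""  # must be explicitly empty
    if model:
        env["ANTHROPIC_MODEL"] = model


async def ask(prompt: str) -> None:
    # Imported lazily so the configuration helper works without the SDK.
    from claude_agent_sdk import query  # assumes: pip install claude-agent-sdk

    async for message in query(prompt=prompt):
        print(message)


def main() -> None:
    # Assumes OPENROUTER_API_KEY (and optionally OPENROUTER_MODEL) are set.
    configure_openrouter(
        os.environ["OPENROUTER_API_KEY"],
        os.environ.get("OPENROUTER_MODEL"),
    )
    asyncio.run(ask("Reply with one short sentence."))
```

Calling `main()` with `OPENROUTER_API_KEY` set in the environment sends a single prompt; the environment must be configured before the first `query` call, since the settings are read from the process environment.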

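Cases OpenRouter cannot cover

OpenRouter currently routes only to 66 mainstream providers. Keys obtained from smaller reseller sites for testing cannot be used through OpenRouter, so in that case the only option is to run a relay locally.

The proxy code follows.

It is purely a protocol conversion library.

It does only two things:

- Converts the Anthropic / Claude Messages format into the OpenAI Chat Completions format

- Converts an OpenAI Chat Completions response back into the Anthropic / Claude Messages response format

So the library itself:

- Does not listen on a port

- Does not hold any key

- Is not tied to iFlow / OpenRouter / Alibaba Cloud / any other platform

- Does not send HTTP requests

You can think of it as an "intermediate-format translator".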
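As a concrete picture of what this translation involves, the sketch below shows a short tool-calling exchange in Anthropic Messages form next to the OpenAI Chat Completions messages it should map to. The tool name `get_weather`, the ids, and the `tool_results_are_linked` helper are made up for illustration; the helper only checks one invariant, namely that every `tool` message answers an earlier `tool_calls` entry.

```python
import json
from typing import Any, Dict, List

anthropic_messages: List[Dict[str, Any]] = [
    {"role": "user", "content": "What is the weather in Paris?"},
    {
        "role": "assistant",
        "content": [
            {"type": "text", "text": "Let me check."},
            {"type": "tool_use", "id": "toolu_01", "name": "get_weather",
             "input": {"city": "Paris"}},
        ],
    },
    {
        "role": "user",
        "content": [
            {"type": "tool_result", "tool_use_id": "toolu_01",
             "content": "18 C, cloudy"},
        ],
    },
]

# The same exchange in OpenAI form: tool_use becomes tool_calls (arguments as a
# JSON string), and tool_result becomes a separate message with role "tool".
openai_messages: List[Dict[str, Any]] = [
    {"role": "user", "content": "What is the weather in Paris?"},
    {
        "role": "assistant",
        "content": "Let me check.",
        "tool_calls": [
            {"id": "toolu_01", "type": "function",
             "function": {"name": "get_weather",
                          "arguments": json.dumps({"city": "Paris"})}},
        ],
    },
    {"role": "tool", "tool_call_id": "toolu_01", "content": "18 C, cloudy"},
]


def tool_results_are_linked(messages: List[Dict[str, Any]]) -> bool:
    """True if every tool message refers to a tool call seen earlier."""
    seen_ids = set()
    for msg in messages:
        for call in msg.get("tool_calls") or []:
            seen_ids.add(call["id"])
        if msg.get("role") == "tool" and msg.get("tool_call_id") not in seen_ids:
            return False
    return True


print(tool_results_are_linked(openai_messages))  # True
```

Keeping the Anthropic `tool_use` id as the OpenAI tool call id is what lets the two halves of the exchange be matched up again on the way back.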

    import json
    import uuid
    from typing import Any, Dict, List, Optional

    def _text_blocks_to_text(content: Any) -> str:
        """Flatten Anthropic content (a string or a list of blocks) to plain text."""
        if isinstance(content, str):
            return content
        if isinstance(content, list):
            texts: List[str] = []
            for block in content:
                # Only text blocks are kept; other block types are dropped.
                if isinstance(block, dict) and block.get("type") == "text":
                    texts.append(block.get("text", ""))
            return "\n".join(x for x in texts if x)
        return str(content)

    def anthropic_tools_to_openai(
        tools: Optional[List[Dict[str, Any]]],
    ) -> Optional[List[Dict[str, Any]]]:
        """
        Anthropic tools -> OpenAI tools
        """
        if not tools:
            return None

        out: List[Dict[str, Any]] = []
        for tool in tools:
            out.append(
                {
                    "type": "function",
                    "function": {
                        "name": tool["name"],
                        "description": tool.get("description", ""),
                        # Anthropic calls the JSON schema "input_schema".
                        "parameters": tool.get(
                            "input_schema",
                            {"type": "object", "properties": {}},
                        ),
                    },
                }
            )
        return out

    def anthropic_tool_choice_to_openai(tool_choice: Any) -> Any:
        """
        Anthropic:
          {"type":"auto"}
          {"type":"any"}
          {"type":"none"}
          {"type":"tool","name":"xxx"}

        OpenAI:
          "auto"
          "required"
          "none"
          {"type":"function","function":{"name":"xxx"}}
        """
        if not tool_choice:
            return "auto"

        if isinstance(tool_choice, str):
            if tool_choice in {"auto", "required", "none"}:
                return tool_choice
            return "auto"

        if isinstance(tool_choice, dict):
            t = tool_choice.get("type")
            if t == "auto":
                return "auto"
            if t == "any":
                return "required"
            if t == "none":
                return "none"
            if t == "tool" and tool_choice.get("name"):
                return {
                    "type": "function",
                    "function": {"name": tool_choice["name"]},
                }

        # Anything unrecognized falls back to letting the model decide.
        return "auto"

    def anthropic_messages_to_openai_messages(
        system: Any,
        messages: List[Dict[str, Any]],
    ) -> List[Dict[str, Any]]:
        """
        Anthropic Messages API -> OpenAI chat.completions messages

        Supports:
        - system
        - user/assistant text
        - assistant.tool_use -> assistant.tool_calls
        - user.tool_result -> tool
        """
        out: List[Dict[str, Any]] = []

        # Anthropic keeps the system prompt outside the message list.
        if system:
            if isinstance(system, str):
                out.append({"role": "system", "content": system})
            elif isinstance(system, list):
                system_texts: List[str] = []
                for block in system:
                    if isinstance(block, dict) and block.get("type") == "text":
                        system_texts.append(block.get("text", ""))
                if system_texts:
                    out.append({"role": "system", "content": "\n".join(system_texts)})

        for msg in messages:
            role = msg.get("role")
            content = msg.get("content")

            if isinstance(content, str):
                out.append({"role": role, "content": content})
                continue

            if not isinstance(content, list):
                out.append({"role": role, "content": str(content)})
                continue

            if role == "assistant":
                text_parts: List[str] = []
                tool_calls: List[Dict[str, Any]] = []

                for block in content:
                    if not isinstance(block, dict):
                        continue
                    block_type = block.get("type")
                    if block_type == "text":
                        text_parts.append(block.get("text", ""))
                    elif block_type == "tool_use":
                        tool_calls.append(
                            {
                                "id": block["id"],
                                "type": "function",
                                "function": {
                                    "name": block["name"],
                                    # OpenAI expects arguments as a JSON string.
                                    "arguments": json.dumps(
                                        block.get("input", {}),
                                        ensure_ascii=False,
                                    ),
                                },
                            }
                        )

                assistant_msg: Dict[str, Any] = {
                    "role": "assistant",
                    "content": "\n".join(x for x in text_parts if x) or None,
                }
                if tool_calls:
                    assistant_msg["tool_calls"] = tool_calls
                out.append(assistant_msg)
                continue

            if role == "user":
                pending_user_text: List[str] = []

                def flush_user_text() -> None:
                    # Emit accumulated text as one user message, in order.
                    if pending_user_text:
                        out.append(
                            {
                                "role": "user",
                                "content": "\n".join(pending_user_text),
                            }
                        )
                        pending_user_text.clear()

                for block in content:
                    if not isinstance(block, dict):
                        pending_user_text.append(str(block))
                        continue
                    block_type = block.get("type")
                    if block_type == "text":
                        pending_user_text.append(block.get("text", ""))
                    elif block_type == "tool_result":
                        flush_user_text()
                        tool_content = block.get("content", "")
                        if isinstance(tool_content, list):
                            tool_content = _text_blocks_to_text(tool_content)
                        elif not isinstance(tool_content, str):
                            tool_content = json.dumps(tool_content, ensure_ascii=False)
                        # Each tool_result becomes its own "tool" message.
                        out.append(
                            {
                                "role": "tool",
                                "tool_call_id": block["tool_use_id"],
                                "content": tool_content,
                            }
                        )

                flush_user_text()
                continue

            # Any other role: keep it and flatten the content to text.
            out.append({"role": role, "content": _text_blocks_to_text(content)})

        return out

    def anthropic_request_to_openai_chat_completions(
        anthropic_request: Dict[str, Any],
        model: str,
    ) -> Dict[str, Any]:
        """
        Full Anthropic request -> OpenAI chat.completions request body
        """
        system = anthropic_request.get("system")
        messages = anthropic_request.get("messages", [])
        tools = anthropic_request.get("tools")
        tool_choice = anthropic_request.get("tool_choice")
        temperature = anthropic_request.get("temperature")
        max_tokens = anthropic_request.get("max_tokens", 1024)

        payload: Dict[str, Any] = {
            "model": model,
            "messages": anthropic_messages_to_openai_messages(system, messages),
            "max_tokens": max_tokens,
            # Streaming is always disabled, even if the caller asked for it.
            "stream": False,
        }

        if temperature is not None:
            payload["temperature"] = temperature

        openai_tools = anthropic_tools_to_openai(tools)
        if openai_tools:
            payload["tools"] = openai_tools
            payload["tool_choice"] = anthropic_tool_choice_to_openai(tool_choice)

        return payload

    def openai_choice_to_anthropic_message(
        choice: Dict[str, Any],
        model: str,
    ) -> Dict[str, Any]:
        """
        A single OpenAI chat.completions choice -> Anthropic Messages response
        """
        message = choice["message"]
        finish_reason = choice.get("finish_reason")
        content_blocks: List[Dict[str, Any]] = []

        raw_content = message.get("content")
        if isinstance(raw_content, str) and raw_content.strip():
            content_blocks.append({"type": "text", "text": raw_content})
        elif isinstance(raw_content, list):
            texts: List[str] = []
            for item in raw_content:
                if isinstance(item, dict) and item.get("type") == "text":
                    texts.append(item.get("text", ""))
            if texts:
                content_blocks.append({"type": "text", "text": "\n".join(texts)})

        tool_calls = message.get("tool_calls") or []
        for tool_call in tool_calls:
            fn = tool_call.get("function", {})
            raw_args = fn.get("arguments", "{}")
            try:
                parsed_args = json.loads(raw_args) if isinstance(raw_args, str) else raw_args
            except (TypeError, ValueError):
                parsed_args = {"raw_arguments": raw_args}
            # Anthropic requires "input" to be an object; wrap anything else.
            if not isinstance(parsed_args, dict):
                parsed_args = {"raw_arguments": raw_args}

            content_blocks.append(
                {
                    "type": "tool_use",
                    "id": tool_call["id"],
                    "name": fn["name"],
                    "input": parsed_args,
                }
            )

        # Map OpenAI finish reasons onto Anthropic stop reasons.
        if tool_calls:
            stop_reason = "tool_use"
        elif finish_reason == "length":
            stop_reason = "max_tokens"
        else:
            stop_reason = "end_turn"

        return {
            "id": f"msg_{uuid.uuid4().hex}",
            "type": "message",
            "role": "assistant",
            "model": model,
            "content": content_blocks,
            "stop_reason": stop_reason,
            "stop_sequence": None,
        }

    def openai_response_to_anthropic_response(
        openai_response: Dict[str, Any],
        model: str,
        fallback_input_tokens: int = 0,
    ) -> Dict[str, Any]:
        """
        Full OpenAI response -> full Anthropic response
        """
        # Only the first choice is used.
        choice = openai_response["choices"][0]
        # "usage" may be absent or null depending on the upstream.
        usage = openai_response.get("usage") or {}

        result = openai_choice_to_anthropic_message(choice, model=model)
        result["usage"] = {
            "input_tokens": usage.get("prompt_tokens", fallback_input_tokens),
            "output_tokens": usage.get("completion_tokens", 0),
        }
        return result

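    def estimate_anthropic_input_tokens(anthropic_request: Dict[str, Any]) -> int:
        """
        A placeholder estimate so the local bridge can run end to end.
        This is not an exact tokenizer.
        """
        raw = json.dumps(anthropic_request, ensure_ascii=False)
        # Rough heuristic: about 4 characters per token, never less than 1.
        return max(1, len(raw) // 4)

Typical usage in a runtime proxy file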

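    from proxy_core import (
        anthropic_request_to_openai_chat_completions,
        openai_response_to_anthropic_response,
        estimate_anthropic_input_tokens,
    )

    # Placeholders: replace with your upstream provider's values.
    UPSTREAM_MODEL = "your-upstream-model"
    CHAT_COMPLETIONS_URL = "https://your-upstream-host/v1/chat/completions"


    async def handle_messages(request, client, headers):
        # Hypothetical handler name. `request` is assumed to expose an awaitable
        # .json() and `client` an awaitable .post() (for example an async HTTP
        # client); `headers` carries the upstream authentication.

        # 1. Receive the Anthropic-style request
        anthropic_request = await request.json()

        # 2. Convert it to an OpenAI-style request
        openai_payload = anthropic_request_to_openai_chat_completions(
            anthropic_request=anthropic_request,
            model=UPSTREAM_MODEL,
        )

        # 3. Send it upstream
        resp = await client.post(
            CHAT_COMPLETIONS_URL,
            headers=headers,
            json=openai_payload,
        )
        openai_response = resp.json()

        # 4. Convert the result back to an Anthropic-style response
        anthropic_response = openai_response_to_anthropic_response(
            openai_response=openai_response,
            model=UPSTREAM_MODEL,
            fallback_input_tokens=estimate_anthropic_input_tokens(anthropic_request),
        )

        # 5. Return it to Claude / Agent SDK
        return anthropic_response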
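One gap in the flow above is upstream failures: an error body has no `choices`, so the conversion in step 4 would raise. A possible way to handle this, sketched under the assumption that the upstream returns OpenAI-style `{"error": {"message": ...}}` bodies, is to translate the failure into an Anthropic-style error object before returning. The function name and the status-to-type table are illustrative and are not part of `proxy_core`.

```python
from typing import Any, Dict, Optional

# HTTP status -> Anthropic error type (statuses not listed fall back to api_error).
_STATUS_TO_ERROR_TYPE = {
    400: "invalid_request_error",
    401: "authentication_error",
    403: "permission_error",
    404: "not_found_error",
    429: "rate_limit_error",
}


def upstream_error_to_anthropic(
    status: int, body: Optional[Dict[str, Any]]
) -> Dict[str, Any]:
    """Build an Anthropic-style error payload from a failed upstream response."""
    error = (body or {}).get("error")
    if isinstance(error, dict):
        message = str(error.get("message") or error)
    elif error:
        message = str(error)
    else:
        message = f"upstream returned HTTP {status}"
    return {
        "type": "error",
        "error": {
            "type": _STATUS_TO_ERROR_TYPE.get(status, "api_error"),
            "message": message,
        },
    }


print(upstream_error_to_anthropic(429, {"error": {"message": "slow down"}}))
```

In the runtime proxy this would be called when the upstream status is 400 or above, and the resulting payload returned to the caller with the same status code instead of running step 4.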