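CNN source code (LeNet) is available online, and so is the theory! (It is a CPU-only, very bare-bones version, but it is still better than my home-grown CNN!)

So I modified it, and He (Kaiming) initialization, softmax, batching, VGG, and residual connections all worked in my experiments!

Under cuDNN I also implemented BN and residual connections, and naturally got parallel execution as well!

But I still could not let go of my original idea: for MNIST, there should be a version of my own that I can feel at ease with! After all, it is my own original work!

Starting from a BP network (bpnet), how do you arrive at a CNN?

It really comes down to one sentence: don't give up, grind it through to the end, and eventually you realize that weights and convolution kernels are the same thing. Once that clicks, you are basically there!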
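To make the "weights and kernels are the same thing" point concrete, here is a minimal sketch. It assumes row-major images and a "valid" convolution without flipping, which is how the loops in the listing further down are written; the class and method names are hypothetical and not part of the original program.

```csharp
// Hypothetical helper, not part of the original program.
public static class ConvAsWeights
{
    // "Valid" 2-D convolution (no kernel flip, no padding).
    // Every output pixel is an ordinary weighted sum, exactly like one
    // neuron of a BP network, except that all output pixels reuse the
    // same k*k weights on different k*k windows of the input.
    public static double[] Conv2DValid(double[] image, int h, int w, double[] kernel, int k)
    {
        int outH = h - k + 1, outW = w - k + 1;
        double[] output = new double[outH * outW];
        for (int i = 0; i < outH; i++)
            for (int j = 0; j < outW; j++)
            {
                double sum = 0;
                for (int m = 0; m < k; m++)
                    for (int n = 0; n < k; n++)
                        sum += image[(i + m) * w + (j + n)] * kernel[m * k + n];
                output[i * outW + j] = sum;
            }
        return output;
    }
}
```

Seen this way, a 5*5 kernel is just 25 shared weights, and its gradient is collected from every window it was applied to.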

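For this revision I had already worked through the mathematics of CNNs, that is, I had read the original papers!

My earlier posts on the minimal CNN already analyzed the math thoroughly and implemented it!

This time I am re-examining and revising my original idea, which is certainly far more complex than the minimal CNN!

After all, it trains on the MNIST dataset!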

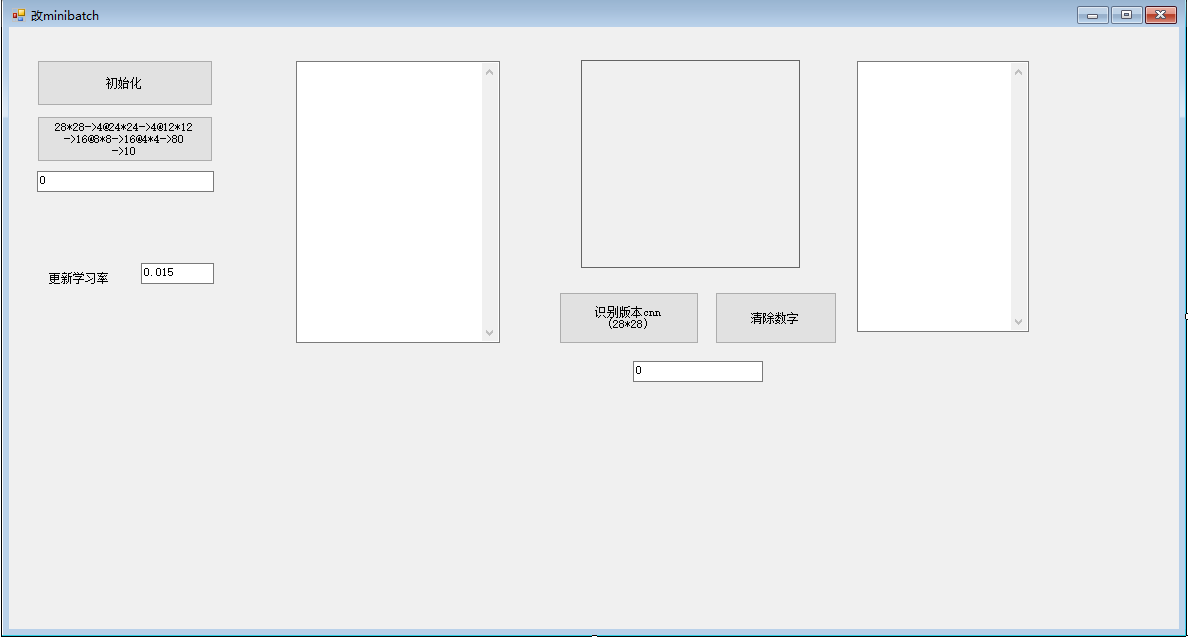

Although batch=10, this is still a CPU version, written in C#! It uses He initialization, softmax, and leaky ReLU!
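For reference, the three ingredients can be written down as follows. This is a sketch under assumptions: $n_{\text{in}}$ is the fan-in of a layer, $z$ are the values fed into softmax, $d$ is the one-hot label, and the loss is taken to be cross-entropy, which is what the `softm[num] - 1` step in the listing suggests. Note that the listing additionally puts `batch_size` under the square root in its initialization, which standard He initialization does not do.

$$
w \sim \mathcal{N}\!\left(0,\ \frac{2}{n_{\text{in}}}\right), \qquad
f(x) = \begin{cases} x, & x > 0 \\ 0.01\,x, & x \le 0 \end{cases}
$$

$$
p_i = \frac{e^{z_i - \max_k z_k}}{\sum_j e^{z_j - \max_k z_k}}, \qquad
L = -\sum_i d_i \log p_i, \qquad
\frac{\partial L}{\partial z_i} = p_i - d_i
$$

Subtracting $\max_k z_k$ does not change $p_i$; it only keeps the exponentials from overflowing.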

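The bias in the convolution layers was awkward to handle, so I removed it and kept bias only in the fully connected layers! (In the listing below, the fully connected bias code is commented out as well.)

One training epoch is 60,000 samples and scores above 90; training time dropped to 1 min 07 s, versus 1 min 37 s without batching! learnRate = 0.015

In the second and third epochs, lowering the learning rate to 0.01 gets it to 96 without trouble, and lowering the learning rate further pushes the score higher still!

With lr=0.005 it exceeds 97! I no longer care much about the score, though. I hand-wrote the ten digits and, as expected, essentially all of them were recognized correctly!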
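In the program the learning rate is changed by hand between epochs (through a text box). Purely as an illustration, the same schedule could be written as a small step function; the class and method names are hypothetical, and the epoch boundaries are just my reading of the numbers above.

```csharp
// Hypothetical helper, not part of the original program.
public static class LrSchedule
{
    // Step schedule mirroring the values reported above (0-based epoch):
    // epoch 0 -> 0.015, epochs 1 and 2 -> 0.01, later epochs -> 0.005.
    public static double LearnRateForEpoch(int epoch)
    {
        if (epoch <= 0) return 0.015;
        if (epoch <= 2) return 0.01;
        return 0.005;
    }
}
```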

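The point is to constrain my original idea with correct CNN theory, make it mathematically sound, and arrive at a version I can feel at ease with!

In fact my version contains some things that are not part of CNN theory, and yet the training score went up rather than down! That is why I am keeping this record: it is one of the puzzling aspects of AI, and it may also be unexplored territory!

OK, first the version of my original idea that stays within CNN theory!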
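Before the listing, a quick sanity check of the layer sizes it uses. This sketch assumes 5*5 "valid" convolutions and 2*2 max pooling, which is what the loops below do; the helper names are hypothetical.

```csharp
// Hypothetical helper, not part of the original program.
public static class ShapeWalk
{
    // Output side length of a "valid" convolution.
    public static int ConvOut(int size, int kernel) => size - kernel + 1;

    // Output side length of non-overlapping pooling.
    public static int PoolOut(int size, int window) => size / window;

    // Number of values fed into the first fully connected layer.
    public static int FlattenedFeatures()
    {
        int s = 28;            // MNIST image side
        s = ConvOut(s, 5);     // 24: four kernels give four 24*24 maps
        s = PoolOut(s, 2);     // 12
        s = ConvOut(s, 5);     // 8: each 12*12 map is convolved with four kernels
        s = PoolOut(s, 2);     // 4
        int maps = 4 * 4;      // 16 feature maps in total
        return maps * s * s;   // 16 * 4 * 4
    }
}
```

The result, 256, matches the `16 * 16` rows of `w12cnn`, which then feeds 80 hidden units and 10 outputs.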

using System;

using System.Collections.Generic;

using System.ComponentModel;

using System.Data;

using System.Drawing;

using System.Linq;

using System.Text;

using System.Windows.Forms;

using System.IO;

using System.Drawing.Imaging;

namespace 自己的20核

{

public partial class Form1 : Form

{

public Form1()

{

InitializeComponent();

}

Random ran = new Random();

public double randomNormalDistribution()

{

double u = 0.0, v = 0.0, w = 0.0, c = 0.0;

do

{

// draw two independent uniform random variables in (-1, 1)

u = ran.NextDouble() * 2 - 1.0;

v = ran.NextDouble() * 2 - 1.0;

w = u * u + v * v;

} while (w == 0.0 || w >= 1.0);

// Marsaglia polar method (the polar variant of the Box-Muller transform)

c = Math.Sqrt((-2 * Math.Log(w)) / w);

return u * c;

}

double\[,\] w576cnn = new double\[25, 4\];

double\[,\] w1cnn = new double\[25, 4\];

double\[,\] w1cnn2 = new double\[25, 4\];

double\[,\] w1cnn3 = new double\[25, 4\];

double\[,\] w1cnn4 = new double\[25, 4\];

double\[,\] delw1cnn = new double\[25, 4\];

double\[,\] delw1cnn2 = new double\[25, 4\];

double\[,\] delw1cnn3 = new double\[25, 4\];

double\[,\] delw1cnn4 = new double\[25, 4\];

double\[,\] w12cnn = new double\[16 \* 16, 80\];

double\[,\] w2cnn = new double\[80, 10\];

//double\[\] bIOcnn = new double\[256\];// B and deltaB are left out to keep things simpler

//double\[\] bI2Ocnn = new double\[80\];

//double\[\] bycnn = new double\[10\];

void init()

{

//lenet-1

for (int i = 0; i < 25; i++)

for (int j = 0; j < 4; j++)

{

w576cnn\[i, j\] = randomNormalDistribution() / Math.Sqrt(25*batch_size / 2f);

}

for (int i = 0; i < 25; i++)

for (int j = 0; j < 4; j++)

{

w1cnn\[i, j\] = randomNormalDistribution() / Math.Sqrt(25 * batch_size / 2f);

w1cnn2\[i, j\] = randomNormalDistribution() / Math.Sqrt(25 * batch_size / 2f);

w1cnn4\[i, j\] = randomNormalDistribution() / Math.Sqrt(25 * batch_size / 2f);

w1cnn3\[i, j\] = randomNormalDistribution() / Math.Sqrt(25 * batch_size / 2f);

}

for (int i = 0; i < 16 * 16; i++)

for (int j = 0; j < 80; j++)

{

w12cnn\[i, j\] = randomNormalDistribution() / Math.Sqrt(256 * batch_size / 2f);

}

for (int i = 0; i < 80; i++)

for (int j = 0; j < 10; j++)

{

w2cnn\[i, j\] = randomNormalDistribution() / Math.Sqrt(80 * batch_size / 2f);

}

//for (int i = 0; i < 256; i++)

//{ bIOcnn\[i\] = randomNormalDistribution() / Math.Sqrt(256 / 2f); }

//for (int i = 0; i < 80; i++)

//{ bI2Ocnn\[i\] = randomNormalDistribution() / Math.Sqrt(80 / 2f); }

//for (int i = 0; i < 10; i++)

//{ bycnn\[i\] = randomNormalDistribution() / Math.Sqrt(10 / 2f); }

}

List<byte\[\]> alltraindataorg = new List<byte\[\]>();

double\[\]\[\] hello28 = new double\[60000\]\[\];

List<int> labels = new List<int>();

void ReadMnistImages(string filePath)

{

alltraindataorg.Clear();

using (FileStream fileStream = new FileStream(filePath, FileMode.Open, FileAccess.Read))

using (BinaryReader reader = new BinaryReader(fileStream))

{

// skip the 16-byte header of the image file

reader.ReadBytes(16);

while (fileStream.Position < fileStream.Length)

{

byte\[\] image = reader.ReadBytes(28 * 28); // each MNIST image is 28x28

alltraindataorg.Add(image);

}

}

}

void ReadMnistLabels(string filePath)

{

labels.Clear();

using (FileStream fileStream = new FileStream(filePath, FileMode.Open, FileAccess.Read))

using (BinaryReader reader = new BinaryReader(fileStream))

{

// skip the 8-byte header of the label file

reader.ReadBytes(8);

while (fileStream.Position < fileStream.Length)

{

byte label = reader.ReadByte(); // read one label

// process the label

// ...

labels.Add(label);

}

}

}

double learnRate = 0.2f; //学习率

private void button29_Click(object sender, EventArgs e)

{

init();

//////////// read the MNIST dataset //2024090111004

string imagesFilePath = "c:\\train-images.idx3-ubyte";

string labelsFilePath = "c:\\train-labels.idx1-ubyte";

ReadMnistImages(imagesFilePath);

ReadMnistLabels(labelsFilePath);

for (int xx = 0; xx < 60000; xx++)

{

hello28\[xx\] = new double\[28 \* 28\];

for (int i = 0; i < 28 * 28; i++)

{

hello28\[xx\]\[i\] = alltraindataorg\[xx\]\[i\] / 255f;

}

}// the data loading above should be moved into its own method

//learnRate = 0.015f;// works well, reached 96 (202502272143)

learnRate = 0.015f;

showbuffer2pictmod4(new Byte\[28 \* 28\], 28, 28, pictureBox20);

}

void showbuffer2pictmod4(byte\[\] buffer, int ww, int hh, PictureBox destImg)

{

int mod = ww % 4;// handle 4-byte row alignment 20150716

int temproiw = ww + (4 - mod) % 4;// this only matters for display; image processing itself does not need it

// display

byte\[\] cutvalues = new byte\[temproiw \* hh \* 3\];

int bytes = temproiw * hh * 3;

Bitmap cutPic24 = new Bitmap(temproiw, hh, System.Drawing.Imaging.PixelFormat.Format24bppRgb);

BitmapData _cutPic = cutPic24.LockBits(new Rectangle(0, 0, temproiw, hh), ImageLockMode.ReadWrite,

cutPic24.PixelFormat);

IntPtr ptr = _cutPic.Scan0;// address of the first pixel row

for (int i = 0; i < hh; i++)

{

for (int j = 0; j < ww; j++)

{

int n = i * ww + j;

//int m = 3 * n;

int m = 3 * (i * temproiw + j);

cutvalues\[m\] = buffer\[n\];

cutvalues\[m + 1\] = buffer\[n\];

cutvalues\[m + 2\] = buffer\[n\];

}

}

System.Runtime.InteropServices.Marshal.Copy(cutvalues, 0, ptr, bytes);

cutPic24.UnlockBits(_cutPic);

destImg.Image = cutPic24;

}

int\[\] last = new int\[60000\];

int batch_size = 10;

public void updatesumresult()

{

//最后更新d

for (int j = 0; j < 10; j++)//10

for (int i = 0; i < 80; i++)//15

{

w2cnn\[i, j\] -= learnRate * 临时dzw2\[i, j\] / batch_size;

}

for (int j = 0; j < 80; j++)//15

for (int i = 0; i < 16 * 16; i++)//256

{

w12cnn\[i, j\] -= learnRate * 临时dzw12\[i, j\] / batch_size;

}

//for (int i = 0; i < 10; i++)

//{

// bycnni -= learnRate * 临时记录dbyi;

//}

//for (int i = 0; i < 80; i++)

//{

// bI2Ocnni -= learnRate * 临时记录dbhI2Oi;

//}

//for (int i = 0; i < 256; i++)

//{

// bIOcnni -= learnRate * 临时记录dbhIOi;

//}

//卷积核\[k \* 5 + z, (tidai) % 4\] -= delta * learnRate;

for (int i = 0; i < 25; i++)

for(int j=0;j<4;j++)

{

w1cnn\[i, j\] -= delw1cnn\[i, j\] * learnRate/batch_size;

w1cnn2\[i, j\] -= delw1cnn2\[i, j\] * learnRate / batch_size;

w1cnn3\[i, j\] -= delw1cnn3\[i, j\] * learnRate / batch_size;

w1cnn4\[i, j\] -= delw1cnn4\[i, j\] * learnRate / batch_size;

}

// the image has changed 10 times by now; how can this be right? (hence the gradients are accumulated per sample and applied here)

for (int i = 0; i < 25; i++)

{

w576cnn\[i, 0\] -= (delta576w03\[i\] + delta576w02\[i\] + delta576w01\[i\] + delta576w00\[i\]) * learnRate / batch_size;

}

for (int i = 0; i < 25; i++)

{

w576cnn\[i, 1\] -= (delta576w13\[i\] + delta576w12\[i\] + delta576w11\[i\] + delta576w10\[i\]) * learnRate / batch_size;

}

for (int i = 0; i < 25; i++)

{

w576cnn\[i, 2\] -= (delta576w23\[i\] + delta576w22\[i\] + delta576w21\[i\] + delta576w20\[i\]) * learnRate / batch_size;

}

for (int i = 0; i < 25; i++)

{

w576cnn\[i, 3\] -= (delta576w33\[i\] + delta576w32\[i\] + delta576w31\[i\] + delta576w30\[i\]) * learnRate / batch_size;

}

}

public void reset_update_all()

{

for (int j = 0; j < 10; j++)//10

{

for (int i = 0; i < 80; i++)//15

{

临时dzw2\[i, j\] = 0;

}

}

for (int j = 0; j < 80; j++)//

{

for (int i = 0; i < 16 * 16; i++)//256

{

临时dzw12\[i, j\] = 0;

}

}

//for (int i = 0; i < 16; i++)

//{

// deltacnni = 0;

// deltacnn1i = 0;

// deltacnn2i = 0;

// deltacnn3i = 0;

// deltacnn5i = 0;

// deltacnn6i = 0;

// deltacnn7i = 0;

// deltacnn8i = 0;

// deltacnn9i = 0;

// deltacnn10i = 0;

// deltacnn11i = 0;

// deltacnn12i = 0;

// deltacnn13i = 0;

// deltacnn14i = 0;

// deltacnn15i = 0;

// deltacnn16i = 0;

//}

for (int i = 0; i < 25; i++)

{

delw1cnn\[i, 0\] = 0; delw1cnn\[i, 1\] = 0; delw1cnn\[i, 2\] = 0; delw1cnn\[i, 3\] = 0;

delw1cnn2\[i, 0\] = 0; delw1cnn2\[i, 1\] = 0; delw1cnn2\[i, 2\] = 0; delw1cnn2\[i, 3\] = 0;

delw1cnn3\[i, 0\] = 0; delw1cnn3\[i, 1\] = 0; delw1cnn3\[i, 2\] = 0; delw1cnn3\[i, 3\] = 0;

delw1cnn4\[i, 0\] = 0; delw1cnn4\[i, 1\] = 0; delw1cnn4\[i, 2\] = 0; delw1cnn4\[i, 3\] = 0;

delta576w00\[i\] = 0;

delta576w01\[i\] = 0;

delta576w02\[i\] = 0;

delta576w03\[i\] = 0;

delta576w10\[i\] = 0;

delta576w11\[i\] = 0;

delta576w12\[i\] = 0;

delta576w13\[i\] = 0;

delta576w20\[i\] = 0;

delta576w21\[i\] = 0;

delta576w22\[i\] = 0;

delta576w23\[i\] = 0;

delta576w30\[i\] = 0;

delta576w31\[i\] = 0;

delta576w32\[i\] = 0;

delta576w33\[i\] = 0;

}

}

private void button30cnn_Click(object sender, EventArgs e)

{

last = new int\[60000\];

DateTime dt = DateTime.Now;

for (int cas = 0; cas < 6000; cas++)

{

reset_update_all();

for (int j = 0;j < 10; j++)

{

int n = cas * batch_size + j;

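traincnn(n);// mini-batch: the weight update is split out; gradients are accumulated over 10 samples and applied once, so the accumulators must be reset first 202507130503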

}

updatesumresult();// one update per 10 samples

}

int P = 100;

int he = 59100;

float junzhi = 0;

for (int n = 0; n < 9; n++)

{

int hh = 0;

for (int i = 0; i < P; i++)

{

hh += last\[he + i\];

}

textBox47.Text += he.ToString() + "," + hh.ToString() + "\r\n";

he += 100;

junzhi += hh;

}

junzhi = junzhi / 9f;

TimeSpan sp = DateTime.Now - dt;

textBox47.Text += "均值:" + junzhi.ToString() + "\r\n";

textBox48.Text = sp.ToString();

}

double\[\] hIcnntemp1 = new double\[576\]; double\[\] hIcnntemp = new double\[576\];

double\[\] hIcnntemp2 = new double\[576\]; double\[\] hIcnntemp3 = new double\[576\];

List<Point> jilumn1na = new List<Point>(); List<Point> jilumnna = new List<Point>();

List<Point> jilumn2na = new List<Point>(); List<Point> jilumn3na = new List<Point>();

double\[\] hIcnn1na = new double\[144\]; double\[\] hIcnnna = new double\[144\];

double\[\] hIcnn2na = new double\[144\]; double\[\] hIcnn3na = new double\[144\];

double\[\] hI遗忘1 = new double\[144\]; double\[\] hI遗忘 = new double\[144\];

double\[\] hI遗忘2 = new double\[144\]; double\[\] hI遗忘3 = new double\[144\];

public double NDrelu(double x)

{

return x > 0 ? x : 0.01f * x;// leaky ReLU, = max(0.01x, x)

}

public double dNDrelu(double x)

{

// return x * (1 - x);

return x > 0 ? 1f : 0.01f;

}

List<double\[\]> hebingbackImg = new List<double\[\]>();

List<List<Point>> hebingpos = new List<List<Point>>();

double\[\] hocnnhebing = new double\[16 \* 16\];

void maxpooling(int p后h, int p后w,int 前图h,int 前图w,double\[\] 前图,double\[\] p后图,ref List<Point> maxP相对位置)// e.g. 24*24->12*12, take the max of each 2*2 window

{

for (int i = 0; i < p后h; i++)

for (int j = 0; j < p后w; j++)//

{

int l = (i) * p后w + j;

double tempb = 0;

Point tempPt = new Point();

for (int m = 0; m < 2; m++)

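for (int n = 0; n < 2; n++)// m, n and the index l must be remembered 202409181018

{

int k = (i * 2 + m) * 前图w+ j * 2 + n;

if (前图\[k\] > tempb)

{

tempb = 前图\[k\];// if only the max is kept, the kernel seems to lose meaning; how do we backpropagate? 202409170727

tempPt = new Point(n, m);// m is the row (h) offset, n is the column (w) offset; easy to mix up

}

}

maxP相对位置.Add(tempPt);// one (m, n) offset is stored per pooled output, in the same index order

p后图\[l\] = tempb;// with this mapping each pooled value can be traced back into the pre-pooling map. 202409181038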

}

}

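public void cnnforward()//202409180943 note carried over from an older, smaller network (14*14->5*5->15->10); this version runs 28*28 -> 4@24*24 -> 4@12*12 -> 16@8*8 -> 16@4*4 -> 80 -> 10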

{

hIcnntemp = new double\[576\];

hIcnntemp1 = new double\[576\]; hIcnntemp2 = new double\[576\]; hIcnntemp3 = new double\[576\];

hIcnn1na = new double\[144\]; hIcnn2na = new double\[144\]; hIcnn3na = new double\[144\];

hIcnnna = new double\[144\];// reset for every image

hI遗忘 = new double\[144\]; hI遗忘2 = new double\[144\]; hI遗忘3 = new double\[144\];

hI遗忘1 = new double\[144\];// reset for every image

jilumnna.Clear();// each call to cnnforward processes a new image

jilumn1na.Clear();

jilumn2na.Clear(); jilumn3na.Clear();

for (int i = 0; i < 24; i++)

for (int j = 0; j < 24; j++)//

{

int l = (i) * 24 + j;

for (int m = 0; m < 5; m++)

for (int n = 0; n < 5; n++)

{

int k = (i + m) * 28 + j + n;

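hIcnntemp\[l\] += xI\[k\] * w576cnn\[m \* 5 + n, 0\];// kernel 0; the other three kernels follow below

// laid out this way for convenience 202409181006

hIcnntemp1\[l\] += xI\[k\] * w576cnn\[m \* 5 + n, 1\];

hIcnntemp2\[l\] += xI\[k\] * w576cnn\[m \* 5 + n, 2\];

hIcnntemp3\[l\] += xI\[k\] * w576cnn\[m \* 5 + n, 3\];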

}

}

//// step 2: 24*24 -> 12*12

maxpooling(12, 12, 24, 24, hIcnntemp, hIcnnna, ref jilumnna);

maxpooling(12, 12, 24, 24, hIcnntemp1, hIcnn1na, ref jilumn1na);

maxpooling(12, 12, 24, 24, hIcnntemp2, hIcnn2na, ref jilumn2na);

maxpooling(12, 12, 24, 24, hIcnntemp3, hIcnn3na, ref jilumn3na);

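//4*12*12 // the first max pooling also returns the max positions

for (int i = 0; i < 144; i++)// the activation is applied only at the 12*12 stage; interesting 202507120618

{

hI遗忘\[i\] = NDrelu(hIcnnna\[i\]);

hI遗忘1\[i\] = NDrelu(hIcnn1na\[i\]);

hI遗忘2\[i\] = NDrelu(hIcnn2na\[i\]);

hI遗忘3\[i\] = NDrelu(hIcnn3na\[i\]);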

}

/////////

// The four 12*12 feature maps are ready (202409211852); each one is now convolved to 8*8 and downsampled, giving four 4*4 maps

hebingbackImg = new List<double\[\]>(); //16 maps of 4*4

hebingpos = new List<List<Point>>();// positions returned by the second max pooling

List<double\[\]> tempbackImg = new List<double\[\]>();

List<List<Point>> temp = chongfu(hI遗忘, w1cnn, ref tempbackImg);// one call produces four 4*4 feature maps plus their position records; four calls give 16 maps

for (int i = 0; i < 4; i++)

{

hebingpos.Add(temp\[i\]);

hebingbackImg.Add(tempbackImg\[i\]);

}

tempbackImg.Clear();

temp.Clear();

temp = chongfu(hI遗忘1, w1cnn2, ref tempbackImg);// same again for the second 12*12 map: four more 4*4 feature maps plus position records

for (int i = 0; i < 4; i++)

{

hebingpos.Add(temp\[i\]);

hebingbackImg.Add(tempbackImg\[i\]);

}

tempbackImg.Clear();

temp.Clear();

temp = chongfu(hI遗忘2, w1cnn3, ref tempbackImg);// same again for the third 12*12 map

for (int i = 0; i < 4; i++)

{

hebingpos.Add(temp\[i\]);

hebingbackImg.Add(tempbackImg\[i\]);

}

tempbackImg.Clear();

temp.Clear();

temp = chongfu(hI遗忘3, w1cnn4, ref tempbackImg);// same again for the fourth 12*12 map

for (int i = 0; i < 4; i++)

{

hebingpos.Add(temp\[i\]);

hebingbackImg.Add(tempbackImg\[i\]);

}

hocnnhebing = new double\[16 \* 16\];

for (int i = 0; i < 16; i++)

for (int j = 0; j < 16; j++)//4*4

{

int n = i * 16 + j;

// hocnnhebing\[n\] = NDrelu(hebingbackImg\[i\]\[j\] + bIOcnn\[n\]);// the activation is applied only at the 4*4 stage; interesting 202507120618

hocnnhebing\[n\] = NDrelu(hebingbackImg\[i\]\[j\]);

}

// fully connected layers start here 202409181044

hI2cnn = new double\[80\];// formerly 15, then 25

for (int i = 0; i < 16 * 16; i++)//256 inputs

for (int j = 0; j < 80; j++)//80 hidden units

hI2cnn\[j\] += (hocnnhebing\[i\]) * w12cnn\[i, j\];

// apply the activation function to the hidden layer

for (int i = 0; i < 80; i++)

// hO2cnn\[i\] = NDrelu(hI2cnn\[i\] + bI2Ocnn\[i\]);

hO2cnn\[i\] = NDrelu(hI2cnn\[i\]);

yicnn = new double\[10\];

// hidden layer -> output layer through w2cnn

for (int i = 0; i < 80; i++)

for (int j = 0; j < 10; j++)

yicnn\[j\] += hO2cnn\[i\] * w2cnn\[i, j\];

// apply the activation function to get yOcnn

for (int i = 0; i < 10; i++)

// yOcnn\[i\] = NDrelu(yicnn\[i\] + bycnn\[i\]);

yOcnn\[i\] = NDrelu(yicnn\[i\]);

////////////////////////////

// softmax below; yOcnn plays the role of the logits z_i

double maxtemp = yOcnn\[0\];

for (int i = 1; i < 10; i++)

{

if (yOcnn\[i\] > maxtemp)

maxtemp = yOcnn\[i\];

}

double sum = 0;

// the maximum of yOcnn found above is subtracted for numerical stability, then softmax is applied

for (int i = 0; i < 10; i++)

{

sum += Math.Exp(yOcnn\[i\] - maxtemp);

}

for (int i = 0; i < 10; i++)

{

softm\[i\] = Math.Exp(yOcnn\[i\] - maxtemp) / sum;// probabilities done

}

}

double\[\] hI2cnn = new double\[80\];

double\[\] hO2cnn = new double\[80\];

double\[\] yicnn = new double\[10\];

double\[\] yOcnn = new double\[10\];

double\[\] ThIcnntemp = new double\[64\];

double\[\] ThIcnntemp1 = new double\[64\]; double\[\] ThIcnntemp2 = new double\[64\]; double\[\] ThIcnntemp3 = new double\[64\];

double\[\] ThIcnn1na = new double\[16\]; double\[\] ThIcnn2na = new double\[16\]; double\[\] ThIcnn3na = new double\[16\];

double\[\] ThIcnnna = new double\[16\];// reset for every image

List<Point> Tjilumn1na = new List<Point>(); List<Point> Tjilumnna = new List<Point>();

List<Point> Tjilumn2na = new List<Point>(); List<Point> Tjilumn3na = new List<Point>();

public List<List<Point>> chongfu(double\[\] 输入图像, double\[,\] 卷积核,ref List<double\[\]> fanhuituxiang)

{

List<List<Point>> templlp = new List<List<Point>>();

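// The 12*12 feature maps are ready (202409211852); this one is convolved into four 8*8 maps, then downsampled to four 4*4 maps

ThIcnntemp = new double\[64\];

ThIcnntemp1 = new double\[64\]; ThIcnntemp2 = new double\[64\]; ThIcnntemp3 = new double\[64\];

ThIcnn1na = new double\[16\]; ThIcnn2na = new double\[16\]; ThIcnn3na = new double\[16\];

ThIcnnna = new double\[16\];// reset on every call

// Allocate fresh position lists on every call. These lists are returned to the caller and stored in hebingpos, so calling Clear() here would also wipe the positions recorded by the previous calls.

Tjilumnna = new List<Point>();

Tjilumn1na = new List<Point>();

Tjilumn2na = new List<Point>(); Tjilumn3na = new List<Point>();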

for (int i = 0; i < 8; i++)

for (int j = 0; j < 8; j++)//

{

int l = (i) * 8 + j;

for (int m = 0; m < 5; m++)

for (int n = 0; n < 5; n++)

{

int k = (i + m) * 12 + j + n;

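ThIcnntemp\[l\] += 输入图像\[k\] * 卷积核\[m \* 5 + n, 0\];// kernel 0; the other three kernels follow below

// laid out this way for convenience 202409181006

ThIcnntemp1\[l\] += 输入图像\[k\] * 卷积核\[m \* 5 + n, 1\];

ThIcnntemp2\[l\] += 输入图像\[k\] * 卷积核\[m \* 5 + n, 2\];

ThIcnntemp3\[l\] += 输入图像\[k\] * 卷积核\[m \* 5 + n, 3\];// one input map and four kernels give four 8*8 feature maps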

}

}

// step 2: 8*8 -> 4*4

maxpooling(4, 4, 8, 8, ThIcnntemp, ThIcnnna, ref Tjilumnna);

templlp.Add(Tjilumnna); fanhuituxiang.Add(ThIcnnna);

maxpooling(4, 4, 8, 8, ThIcnntemp1, ThIcnn1na, ref Tjilumn1na);

templlp.Add(Tjilumn1na); fanhuituxiang.Add(ThIcnn1na);

maxpooling(4, 4, 8, 8, ThIcnntemp2, ThIcnn2na, ref Tjilumn2na);

templlp.Add(Tjilumn2na); fanhuituxiang.Add(ThIcnn2na);

maxpooling(4, 4, 8, 8, ThIcnntemp3, ThIcnn3na, ref Tjilumn3na); // the 0 initial value used inside maxpooling has been checked and is OK 20250712

templlp.Add(Tjilumn3na); fanhuituxiang.Add(ThIcnn3na);

return templlp;

}

double\[\] softm = new double\[10\];

double\[\] xI = new double\[28 \* 28\];

int\[\] d = new int\[10\];

void traincnn(int cas)

{

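// Do deltaB and deltaW need to be reset in mini-batch mode? Yes.

xI = hello28\[cas\];//28*28

int num = labels\[cas\];

d = new int\[10\];

d\[num\] = 1;

cnnforward();

int ans = getAnsSoftmaxcnn();

if (num == ans)

last\[cas\] = 1;

softm\[num\] = softm\[num\] - 1;// softmax output minus the one-hot label: the output error p - d

backcnn();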

backcnn();

}

public double\[\] 更新卷积核(double\[\] 中间值, int 卷积核序号, double\[\] 图像, double\[,\] 卷积核)// 卷积核序号 starts at 1

{

// originally written for the fourth kernel 202409191112

double\[\] deltacnn3 = new double\[16\];// each delta corresponds to a 5*5 window (25 input elements) and one 5*5 kernel

int kk = 0;

int tidai = 卷积核序号 - 1;

int temp = (tidai) * 16;

for (int i = temp; i < temp + 16; i++)

{

for (int j = 0; j < 80; j++)//80 hidden units

{

// deltacnn3\[kk\] = 中间值\[j\] * w12cnn\[i, j\] * dsigmoid(hocnnhebing\[i\]);

// 临时记录dbhIO\[i\] = ? what to do about this one? 202502271112

deltacnn3\[kk\] += 中间值\[j\] * w12cnn\[i, j\] * dNDrelu(hocnnhebing\[i\]);

}

// deltacnn3kk /= 80;

kk++;

}

//for (int i = 0; i < 16; i++)

//{

// Point temppt = 求二维(i, hebingpostidaii.Y, hebingpostidaii.X);//3代表第si波16个

// for (int k = 0; k < 5; k++)

// for (int z = 0; z < 5; z++)

// {

// int newIndex = (temppt.Y + k) * 12 + (temppt.X + z);

// double delta = 图像newIndex * deltacnn3i;//一共25个

// // w1cnn1k \* 5 + z, 卷积核序号 - 1 -= delta * learnRate;

// 卷积核k \* 5 + z, (tidai) % 4 -= delta * learnRate;

// }

// }

return deltacnn3;

}

public void last更新卷积核minibatch(double\[\] 更新卷积核ret, int 卷积核序号, double\[\] 图像, double\[,\] 卷积核)// 卷积核序号 starts at 1

{

int tidai = 卷积核序号 - 1;

for (int i = 0; i < 16; i++)

{

Point temppt = 求二维(i, hebingpos\[tidai\]\[i\].Y, hebingpos\[tidai\]\[i\].X);// position lists come in groups of four per input map

for (int k = 0; k < 5; k++)

for (int z = 0; z < 5; z++)

{

int newIndex = (temppt.Y + k) * 12 + (temppt.X + z);

// 更新卷积核ret\[i\] += 图像\[newIndex\] * 更新卷积核ret\[i\];// 25 terms in total

卷积核\[k \* 5 + z, (tidai) % 4\] += 图像\[newIndex\] * 更新卷积核ret\[i\];// accumulate the kernel gradient over the mini-batch

//// w1cnn1\[k \* 5 + z, 卷积核序号 - 1\] -= delta * learnRate;

//卷积核\[k \* 5 + z, (tidai) % 4\] -= delta * learnRate;

}

}

//// 更新卷积核reti = 更新卷积核reti / 25f;

// for (int k = 0; k < 25; k++)

// { 卷积核k, (tidai) % 4 /= 16; }

}

public void last更新卷积核(double\[\] 更新卷积核ret, int 卷积核序号, double\[\] 图像, double\[,\] 卷积核)// 卷积核序号 starts at 1; per-sample update kept from the version without mini-batches

{

int tidai = 卷积核序号 - 1;

for (int i = 0; i < 16; i++)

{

Point temppt = 求二维(i, hebingpos\[tidai\]\[i\].Y, hebingpos\[tidai\]\[i\].X);

for (int k = 0; k < 5; k++)

for (int z = 0; z < 5; z++)

{

int newIndex = (temppt.Y + k) * 12 + (temppt.X + z);

double delta = 图像\[newIndex\] * 更新卷积核ret\[i\];// 25 terms in total

// w1cnn1\[k \* 5 + z, 卷积核序号 - 1\] -= delta * learnRate;

卷积核\[k \* 5 + z, (tidai) % 4\] -= delta * learnRate;

}

}

}

public Point 求二维(int index, int mm, int nn)

{

Point rePt = new Point();

for (int i = 0; i < 5; i++)

for (int j = 0; j < 5; j++)

{

int n = i * 5 + j;

if (index == n)

{

int h = i * 2 + mm;

int w = j * 2 + nn;

rePt.X = w;

rePt.Y = h;

i = 5;

j = 5;//end 2 for loop;

}

}

return rePt;

}

void 求deltaW576( double\[\] deltacnnx, double\[,\] w1cnnx,int 指定核, List<Point> 记录mn,double\[\] deltaW576, double\[\] hI遗忘图,int pool1W=12,int pool1前原图W=28)

{

double\[\] deltacnnXx = new double\[16\];

for (int i = 0; i < 16; i++)//16

{

for (int j = 0; j < 25; j++)//25

{

deltacnnXx\[i\] += deltacnnx\[i\] * w1cnnx\[j, 指定核\];

}

// deltacnnXx\[i\] /= 25;// because of the 25 positions in the image

}

// double\[\] delta576w0 = new double\[25\];

for (int i = 0; i < 16; i++)

{

Point temppt = 求二维(i, 记录mn\[i\].Y, 记录mn\[i\].X);

for (int k = 0; k < 5; k++)

for (int z = 0; z < 5; z++)

{

int newIndex = (temppt.Y + k) * pool1前原图W + (temppt.X + z);

int biasIndex = (temppt.Y / 2 + k) * pool1W + temppt.X / 2 + z;

//double delta = deltacnnX\[i\] * dNDrelu(hI遗忘\[biasIndex\]) * learnRate;// 25 terms in total; hI遗忘 is 12*12

//w576cnn\[k \* 5 + z, 0\] -= xI\[newIndex\] * delta;// per-sample update on the 28*28 input, disabled in favor of mini-batch

double delta = deltacnnXx\[i\] * dNDrelu(hI遗忘图\[biasIndex\]);// 25 terms in total; hI遗忘图 is 12*12

deltaW576\[k \* 5 + z\] += xI\[newIndex\] * delta;// accumulate only; the four first-layer kernels are updated once per mini-batch of 10

//deltaW576\[k \* 5 + z\] = xI\[newIndex\] * delta;

}

}

//for (int k = 0; k < 25; k++)

//{

// deltaW576k /= 16f;

//}

}

// backpropagation buffers and function

double\[,\] 临时dzw2 = new double\[80, 10\];

double\[,\] 临时dzw12 = new double\[16 \* 4 \* 4, 80\];

double\[\] delta576w00 = new double\[25\];

double\[\] delta576w01 = new double\[25\];

double\[\] delta576w02 = new double\[25\];

double\[\] delta576w03 = new double\[25\];

double\[\] delta576w10 = new double\[25\];

double\[\] delta576w11 = new double\[25\];

double\[\] delta576w12 = new double\[25\];

double\[\] delta576w13 = new double\[25\];

double\[\] delta576w20 = new double\[25\];

double\[\] delta576w21 = new double\[25\];

double\[\] delta576w22 = new double\[25\];

double\[\] delta576w23 = new double\[25\];

double\[\] delta576w30 = new double\[25\];

double\[\] delta576w31 = new double\[25\];

double\[\] delta576w32 = new double\[25\];

double\[\] delta576w33 = new double\[25\];

void backcnn()// bias updates should be brought back eventually; that could speed up convergence 202409140818

{

double\[\] 替代 = new double\[10\];

//double\[,\] 临时dzw2 = new double\[80, 10\];

//double\[,\] 临时dzw12 = new double\[16\*4\*4, 80\];

//// delta for w1cnn \[25,4\] comes from 临时dzw12 rows 0-63, the first 4*4*4

//// delta for w1cnn2 \[25,4\] comes from 临时dzw12 rows 64-127, the second 4*4*4

//// delta for w1cnn3 \[25,4\] comes from 临时dzw12 rows 128-191, the third 4*4*4

//// delta for w1cnn4 \[25,4\] comes from 临时dzw12 rows 192-255, the fourth 4*4*4

//double\[\] 临时记录dby = new double\[10\];

//double\[\] 临时记录dbhI2O = new double\[80\];

//double\[\] 临时记录dbhIO = new double\[256\];

//////double\[\] 临时记录db = new double\[144\];

//////double\[\] 临时记录db1 = new double\[144\];

//////double\[\] 临时记录db2 = new double\[144\];

//////double\[\] 临时记录db3 = new double\[144\];

double\[\] deltacnn = new double\[16\];

double\[\] deltacnn1 = new double\[16\];

double\[\] deltacnn2 = new double\[16\];

double\[\] deltacnn3 = new double\[16\];

double\[\] deltacnn5 = new double\[16\];

double\[\] deltacnn6 = new double\[16\];

double\[\] deltacnn7 = new double\[16\];

double\[\] deltacnn8 = new double\[16\];

double\[\] deltacnn9 = new double\[16\];

double\[\] deltacnn10 = new double\[16\];

double\[\] deltacnn11 = new double\[16\];

double\[\] deltacnn12 = new double\[16\];

double\[\] deltacnn13 = new double\[16\];// kernel w1cnn4\[25, 0\], a 5*5 kernel

double\[\] deltacnn14 = new double\[16\];// kernel w1cnn4\[25, 1\]

double\[\] deltacnn15 = new double\[16\];// kernel w1cnn4\[25, 2\]

double\[\] deltacnn16 = new double\[16\];// kernel w1cnn4\[25, 3\]

for (int j = 0; j < 10; j++)//10

{

// 临时记录dby\[j\] =

替代\[j\] = softm\[j\] * dNDrelu(yOcnn\[j\]);

for (int i = 0; i < 80; i++)//80

{

临时dzw2\[i, j\] += 替代\[j\] * hO2cnn\[i\];// += because gradients are accumulated over the mini-batch 20260401

}

}

double\[\] W3 = new double\[80\];//

for (int j = 0; j < 80; j++)//

for (int k = 0; k < 10; k++)//10

W3\[j\] += 替代\[k\] * w2cnn\[j, k\];

double\[\] 替代2 = new double\[80\];

for (int j = 0; j < 80; j++)//

{

// 临时记录dbhI2O\[j\] =

替代2\[j\] = dNDrelu(hO2cnn\[j\]) * W3\[j\];

for (int i = 0; i < 16 * 16; i++)//256

{

临时dzw12\[i, j\] += 替代2\[j\] * (hocnnhebing\[i\]); // += because gradients are accumulated over the mini-batch 20260401

}

}

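// fully connected layers above

// convolution kernels below, handled differently; the first 4 feature maps belong to w1cnn,

// i.e. the first 4*4*4 = 64 entries update delta w1cnn \[25, 0\] and delta w1cnn \[25, 1\],

// delta w1cnn \[25, 2\] and delta w1cnn \[25, 3\]

// one kernel element touches many positions in the image; how should that be handled? 202507121726

// idea: take the mean (sum over 80 and divide by 80, or sum over 25 and divide by 25) and see; the formulas do not spell this out, but there are more solutions than problems.

for (int i = 0; i < 16; i++)//16

{

for (int j = 0; j < 80; j++)//

{

// 临时记录dbhIO\[i\] =

deltacnn\[i\] += 替代2\[j\] * w12cnn\[i, j\] * dNDrelu(hocnnhebing\[i\]);// belongs to w1cnn

}// the fully connected part is still the easy one 202409200708

// deltacnn\[i\] /= 80;// 80 terms, take the mean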

}

// the next kernel 202409191112

// each deltacnn corresponds to a 5*5 window (25 input elements) and one 5*5 kernel

for (int i = 16; i < 32; i++)//16

{

for (int j = 0; j < 80; j++)//

{

// 临时记录dbhIO\[i\] =

deltacnn1\[i - 16\] += 替代2\[j\] * w12cnn\[i, j\] * dNDrelu(hocnnhebing\[i\]);// belongs to w1cnn

}

// deltacnn1\[i - 16\] /= 80;// 80 terms, take the mean

}

// the third kernel 202409191112

// each deltacnn corresponds to a 5*5 window (25 input elements) and one 5*5 kernel

for (int i = 32; i < 48; i++)//16

{

for (int j = 0; j < 80; j++)//

{

// 临时记录dbhIO\[i\] =

deltacnn2\[i - 32\] += 替代2\[j\] * w12cnn\[i, j\] * dNDrelu(hocnnhebing\[i\]);// belongs to w1cnn

}

// deltacnn2\[i - 32\] /= 80;// 80 terms, take the mean

}

// the fourth kernel 202409191112

// each deltacnn corresponds to a 5*5 window (25 input elements) and one 5*5 kernel

for (int i = 48; i < 64; i++)//16

{

for (int j = 0; j < 80; j++)//80

{

// 临时记录dbhIO\[i\] =

deltacnn3\[i - 48\] += 替代2\[j\] * w12cnn\[i, j\] * dNDrelu(hocnnhebing\[i\]);// belongs to w1cnn

}

// deltacnn3\[i - 48\] /= 80;// 80 terms, take the mean

}

// the deltas for the first 4 kernels are done; kernels 5 to 16 follow

deltacnn5 = 更新卷积核(替代2, 5, hIcnn1na, w1cnn2);// kernels 5..8 map to w1cnn2\[25, (5 - 1) % 4\] ... w1cnn2\[25, (8 - 1) % 4\]

deltacnn6 = 更新卷积核(替代2, 6, hIcnn1na, w1cnn2);

deltacnn7 = 更新卷积核(替代2, 7, hIcnn1na, w1cnn2);

deltacnn8 = 更新卷积核(替代2, 8, hIcnn1na, w1cnn2);

deltacnn9 = 更新卷积核(替代2, 9, hIcnn2na, w1cnn3);// kernels 9..12 map to w1cnn3\[25, (9 - 1) % 4\] ... w1cnn3\[25, (12 - 1) % 4\]

deltacnn10 = 更新卷积核(替代2, 10, hIcnn2na, w1cnn3);

deltacnn11 = 更新卷积核(替代2, 11, hIcnn2na, w1cnn3);

deltacnn12 = 更新卷积核(替代2, 12, hIcnn2na, w1cnn3);

deltacnn13 = 更新卷积核(替代2, 13, hIcnn3na, w1cnn4);// kernel w1cnn4\[25, 0\], a 5*5 kernel

deltacnn14 = 更新卷积核(替代2, 14, hIcnn3na, w1cnn4);// kernel w1cnn4\[25, 1\]

deltacnn15 = 更新卷积核(替代2, 15, hIcnn3na, w1cnn4);// kernel w1cnn4\[25, 2\]

deltacnn16 = 更新卷积核(替代2, 16, hIcnn3na, w1cnn4);// kernel w1cnn4\[25, 3\]; hIcnn3na is the 12*12 map, traced back from 4*4 through 8*8

//for (int i = 0; i < 16; i++)//四个没有更新,发现一个delta|B错误202507130520,为改正,屏蔽

//{

// 临时记录dbhIO64 + i = deltacnn5i;

// 临时记录dbhIO64 + 16 + i = deltacnn6i;

// 临时记录dbhIO64 + 16 \* 2 + i = deltacnn7i;

// 临时记录dbhIO64 + 16 \* 3 + i = deltacnn8i;

// 临时记录dbhIO64 + 16 \* 4 + i = deltacnn9i;

// 临时记录dbhIO64 + 16 \* 5 + i = deltacnn10i;

// 临时记录dbhIO64 + 16 \* 6 + i = deltacnn11i;

// 临时记录dbhIO64 + 16 \* 7 + i = deltacnn12i;

// 临时记录dbhIO64 + 16 \* 8 + i = deltacnn13i;

// 临时记录dbhIO64 + 16 \* 9 + i = deltacnn14i;

// 临时记录dbhIO64 + 16 \* 10 + i = deltacnn15i;

// 临时记录dbhIO64 + 16 \* 11 + i = deltacnn16i;

//}

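//// going further back: each group of four second-layer kernels corresponds to one first-layer kernel on the original image, 28*28 -> 24*24 -> 12*12

//// kernels w1cnn\[25, 4\] correspond to xI\[k\] * w576cnn\[m \* 5 + n, 0\]

//// kernels w1cnn2\[25, 4\] correspond to xI\[k\] * w576cnn\[m \* 5 + n, 1\]

// double\[\] deltacnnX = new double\[16\];// each deltacnnx corresponds to 25 elements of the 28*28 input and one 5*5 kernel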

求deltaW576( deltacnn, w1cnn, 0, jilumnna, delta576w00, hI遗忘);

求deltaW576( deltacnn1, w1cnn, 1, jilumnna, delta576w01, hI遗忘);

求deltaW576( deltacnn2, w1cnn, 2, jilumnna, delta576w02, hI遗忘);

求deltaW576( deltacnn3, w1cnn, 3, jilumnna, delta576w03, hI遗忘);

// second group

求deltaW576( deltacnn5, w1cnn2, 0, jilumn1na, delta576w10, hI遗忘1);

求deltaW576( deltacnn6, w1cnn2, 1, jilumn1na, delta576w11, hI遗忘1);

求deltaW576( deltacnn7, w1cnn2, 2, jilumn1na, delta576w12, hI遗忘1);

求deltaW576( deltacnn8, w1cnn2, 3, jilumn1na, delta576w13, hI遗忘1);

// third group

求deltaW576( deltacnn9, w1cnn3, 0, jilumn2na, delta576w20, hI遗忘2);

求deltaW576( deltacnn10, w1cnn3, 1, jilumn2na, delta576w21, hI遗忘2);

求deltaW576( deltacnn11, w1cnn3, 2, jilumn2na, delta576w22, hI遗忘2);

求deltaW576( deltacnn12, w1cnn3, 3, jilumn2na, delta576w23, hI遗忘2);

//// last group

求deltaW576( deltacnn13, w1cnn4, 0, jilumn3na, delta576w30, hI遗忘3);

求deltaW576( deltacnn14, w1cnn4, 1, jilumn3na, delta576w31, hI遗忘3);

求deltaW576( deltacnn15, w1cnn4, 2, jilumn3na, delta576w32, hI遗忘3);

求deltaW576( deltacnn16, w1cnn4, 3, jilumn3na, delta576w33, hI遗忘3);

////最后更新d

//for (int j = 0; j < 10; j++)//10

// for (int i = 0; i < 80; i++)//15

// {

// w2cnni, j -= learnRate * 临时dzw2i, j;

// }

//for (int j = 0; j < 80; j++)//15

// for (int i = 0; i < 16 * 16; i++)//256

// {

// w12cnni, j -= learnRate * 临时dzw12i, j;

// }

////for (int i = 0; i < 10; i++)

////{

//// bycnni -= learnRate * 临时记录dbyi;

////}

////for (int i = 0; i < 80; i++)

////{

//// bI2Ocnni -= learnRate * 临时记录dbhI2Oi;

////}

////for (int i = 0; i < 256; i++)

////{

//// bIOcnni -= learnRate * 临时记录dbhIOi;

////}

//last更新卷积核(deltacnn, 1, hIcnnna, w1cnn);

//last更新卷积核(deltacnn1, 2, hIcnnna, w1cnn);

//last更新卷积核(deltacnn2, 3, hIcnnna, w1cnn);

//last更新卷积核(deltacnn3, 4, hIcnnna, w1cnn);

//last更新卷积核(deltacnn5, 5, hIcnn1na, w1cnn2);

//last更新卷积核(deltacnn6, 6, hIcnn1na, w1cnn2);

//last更新卷积核(deltacnn7, 7, hIcnn1na, w1cnn2);

//last更新卷积核(deltacnn8, 8, hIcnn1na, w1cnn2);

//last更新卷积核(deltacnn9, 9, hIcnn2na, w1cnn3);

//last更新卷积核(deltacnn10, 10, hIcnn2na, w1cnn3);

//last更新卷积核(deltacnn11, 11, hIcnn2na, w1cnn3);

//last更新卷积核(deltacnn12, 12, hIcnn2na, w1cnn3);

//last更新卷积核(deltacnn13, 13, hIcnn3na, w1cnn4);

//last更新卷积核(deltacnn14, 14, hIcnn3na, w1cnn4);

//last更新卷积核(deltacnn15, 15, hIcnn3na, w1cnn4);

//last更新卷积核(deltacnn16, 16, hIcnn3na, w1cnn4);

//for (int i = 0; i < 25; i++)

//{

// w576cnni, 0 -= (delta576w03i + delta576w02i + delta576w01i + delta576w00i) / 4 * learnRate;

//}

//for (int i = 0; i < 25; i++)

//{

// w576cnni, 1 -= (delta576w13i + delta576w12i + delta576w11i + delta576w10i) / 4 * learnRate;

//}

//for (int i = 0; i < 25; i++)

//{

// w576cnni, 2 -= (delta576w23i + delta576w22i + delta576w21i + delta576w20i) / 4 * learnRate;

//}

//for (int i = 0; i < 25; i++)

//{

// w576cnni, 3 -= (delta576w33i + delta576w32i + delta576w31i + delta576w30i) / 4 * learnRate;

//}

last更新卷积核minibatch(deltacnn, 1, hIcnnna, delw1cnn);

last更新卷积核minibatch(deltacnn1, 2, hIcnnna, delw1cnn);

last更新卷积核minibatch(deltacnn2, 3, hIcnnna, delw1cnn);

last更新卷积核minibatch(deltacnn3, 4, hIcnnna, delw1cnn);

last更新卷积核minibatch(deltacnn5, 5, hIcnn1na, delw1cnn2);

last更新卷积核minibatch(deltacnn6, 6, hIcnn1na, delw1cnn2);

last更新卷积核minibatch(deltacnn7, 7, hIcnn1na, delw1cnn2);

last更新卷积核minibatch(deltacnn8, 8, hIcnn1na, delw1cnn2);

last更新卷积核minibatch(deltacnn9, 9, hIcnn2na, delw1cnn3);

last更新卷积核minibatch(deltacnn10, 10, hIcnn2na, delw1cnn3);

last更新卷积核minibatch(deltacnn11, 11, hIcnn2na, delw1cnn3);

last更新卷积核minibatch(deltacnn12, 12, hIcnn2na, delw1cnn3);

last更新卷积核minibatch(deltacnn13, 13, hIcnn3na, delw1cnn4);

last更新卷积核minibatch(deltacnn14, 14, hIcnn3na, delw1cnn4);

last更新卷积核minibatch(deltacnn15, 15, hIcnn3na, delw1cnn4);

last更新卷积核minibatch(deltacnn16, 16, hIcnn3na, delw1cnn4);

}

int getAnsSoftmaxcnn()

{

int ans = 0;

for (int i = 1; i <10; i++)

if (softm\[i\] > softm\[ans\]) ans = i;

return ans;

}

private void button31_Click(object sender, EventArgs e)

{

Bitmap curBitmap = (Bitmap)pictureBox20.Image;

Rectangle rc = new Rectangle(0, 0, curBitmap.Width, curBitmap.Height);

System.Drawing.Imaging.BitmapData bmpdata = curBitmap.LockBits(rc,

System.Drawing.Imaging.ImageLockMode.ReadWrite,

curBitmap.PixelFormat);

IntPtr imageptr = bmpdata.Scan0;

int ww = curBitmap.Width;

int hh = curBitmap.Height;

int bytes = 0;

if (curBitmap.PixelFormat == PixelFormat.Format24bppRgb)

{

bytes = ww * hh * 3;// this handles 24-bit bitmaps only

}

byte\[\] rgbValues = new byte\[bytes\];

System.Runtime.InteropServices.Marshal.Copy(imageptr, rgbValues, 0, bytes);

curBitmap.UnlockBits(bmpdata);

// showbuffer2pictmod4(showoutput14, 14, 14, pictureBox10);

byte\[\] buffer8 = new byte\[28 \* 28\];

double\[\] buffer8d = new double\[28 \* 28\];

if (curBitmap.PixelFormat == PixelFormat.Format24bppRgb)

{

for (int ii = 0; ii < 28; ii++)

{

for (int j = 0; j < 28; j++)

{

int n = ii * 28 + j;

int k = (ii) * 28 + j;

// int m = 3 * k;

buffer8\[k\] = rgbValues\[n \* 3\];// all images here are grayscale 20221209

// buffer8\[k\] = (byte)(0.3 * rgbValues\[n \* 3 + 2\] + 0.59 * rgbValues\[n \* 3 + 1\] + 0.11 * rgbValues\[n \* 3\]);// this was for 1024*768 images

buffer8d\[k\] = rgbValues\[n \* 3\] / 255f;// all images here are grayscale 20221209

}

}

}

showbuffer2pictmod4(buffer8, 28, 28, pictureBox20);

textBox51.Text = "";

xI = buffer8d;

cnnforward();

// int ans = getAnscnn();

int ans = getAnsSoftmaxcnn();

for (int i = 0; i < 10; i++)

textBox51.Text += ((float)softm\[i\]).ToString() + "\r\n";

textBox49.Text = ans.ToString() + ";" + ((float)yOcnn\[ans\]).ToString();

}

private void button30_Click(object sender, EventArgs e)

{

showbuffer2pictmod4(new byte\[28 \* 28\], 28, 28, pictureBox20);

}

Bitmap weitu28;

float bilix28 = 0; float biliy28 = 0;

private void pictureBox20_MouseMove(object sender, MouseEventArgs e)

{

if (huax28)

{

Graphics g = pictureBox20.CreateGraphics();

g.DrawLine(new Pen(Color.White, 12f), qidianx28, new Point(e.X, e.Y));//huabi gao kuai yidian

// Bitmap curBitmap = (Bitmap)pictureBox9.Image;

//curBitmap.SetPixel(e.X, e.Y, Color.White);

//weitu28.SetPixel((int)(e.X * bilix28), (int)(e.Y * biliy28), Color.White); ;

try

{

weitu28.SetPixel((int)(qidianx28.X * bilix28), (int)(qidianx28.Y * biliy28 - 1), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(qidianx28.X * bilix28), (int)(qidianx28.Y * biliy28 + 1), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(qidianx28.X * bilix28), (int)(qidianx28.Y * biliy28), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(qidianx28.X * bilix28 - 1), (int)(qidianx28.Y * biliy28), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(qidianx28.X * bilix28 + 1), (int)(qidianx28.Y * biliy28), Color.FromArgb(255, 255, 255));

qidianx28 = new Point(e.X, e.Y);

weitu28.SetPixel((int)(e.X * bilix28 - 1), (int)(e.Y * biliy28 - 1), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(e.X * bilix28 + 1), (int)(e.Y * biliy28 + 1), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(e.X * bilix28), (int)(e.Y * biliy28), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(e.X * bilix28 - 1), (int)(e.Y * biliy28 + 1), Color.FromArgb(255, 255, 255));

weitu28.SetPixel((int)(e.X * bilix28 + 1), (int)(e.Y * biliy28 - 1), Color.FromArgb(255, 255, 255));

}

catch (Exception ee)

{ }

}

}

private void pictureBox20_MouseDown(object sender, MouseEventArgs e)

{

weitu28 = (Bitmap)pictureBox20.Image;

bilix28 = weitu28.Width * 1f / pictureBox20.Width;

biliy28 = weitu28.Height * 1f / pictureBox20.Height;

if (MouseButtons.Left == e.Button)

{

qidianx28.X = e.X;

qidianx28.Y = e.Y;

// e.Button

huax28 = true;

}

}

Point qidianx28 = new Point();

bool huax28 = false;

private void pictureBox20_MouseUp(object sender, MouseEventArgs e)

{

huax28 = false;

}

private void textBox1_TextChanged(object sender, EventArgs e)

{

this.learnRate = Convert.ToDouble(textBox1.Text);

}

}

}