目录

[3.1.1 多源连接器开发:REST API轮询(Requests + Retry策略)与Webhook接收](#3.1.1 多源连接器开发:REST API轮询(Requests + Retry策略)与Webhook接收)

[3.1.2 Kafka生产者优化:批量发送、压缩算法(LZ4)与ACK配置调优](#3.1.2 Kafka生产者优化:批量发送、压缩算法(LZ4)与ACK配置调优)

[3.1.3 Schema验证:Confluent Schema Registry与Pydantic模型校验](#3.1.3 Schema验证:Confluent Schema Registry与Pydantic模型校验)

[3.1.4 数据血缘追踪:消息Header注入来源系统与采集时间戳](#3.1.4 数据血缘追踪:消息Header注入来源系统与采集时间戳)

[3.2 流处理引擎](#3.2 流处理引擎)

[3.2.1 Polars流处理:惰性求值(Lazy API)与内存高效转换](#3.2.1 Polars流处理:惰性求值(Lazy API)与内存高效转换)

[3.2.2 窗口计算:滚动窗口(Tumbling Window)聚合与水位线(Watermark)管理](#3.2.2 窗口计算:滚动窗口(Tumbling Window)聚合与水位线(Watermark)管理)

[3.2.3 流合并:多Kafka Topic Join操作与状态存储(RocksDB)](#3.2.3 流合并:多Kafka Topic Join操作与状态存储(RocksDB))

[3.2.4 错误处理:poison pill消息隔离与侧流(Side Output)收集](#3.2.4 错误处理:poison pill消息隔离与侧流(Side Output)收集)

[3.3 存储层设计](#3.3 存储层设计)

[3.3.1 Delta Lake集成:ACID事务、Schema演化与Time Travel查询](#3.3.1 Delta Lake集成:ACID事务、Schema演化与Time Travel查询)

[3.3.2 分层存储:Bronze(原始)-Silver(清洗)-Gold(聚合)架构实现](#3.3.2 分层存储:Bronze(原始)-Silver(清洗)-Gold(聚合)架构实现)

[3.3.3 分区策略:日期分区与业务维度分区权衡](#3.3.3 分区策略:日期分区与业务维度分区权衡)

[3.3.4 元数据管理:Delta表版本清理与VACUUM策略](#3.3.4 元数据管理:Delta表版本清理与VACUUM策略)

[3.4 数据质量保障](#3.4 数据质量保障)

[3.4.1 Great Expectations集成:空值检查、范围验证与格式校验](#3.4.1 Great Expectations集成:空值检查、范围验证与格式校验)

[3.4.2 数据血缘图谱:DBT模型依赖关系自动生成](#3.4.2 数据血缘图谱:DBT模型依赖关系自动生成)

[3.4.3 异常检测:Z-Score算法识别离群数据点并告警](#3.4.3 异常检测:Z-Score算法识别离群数据点并告警)

[3.4.4 端到端延迟监控:Kafka Lag监控与处理延迟SLI设定](#3.4.4 端到端延迟监控:Kafka Lag监控与处理延迟SLI设定)

[3.5 运维与优化](#3.5 运维与优化)

[3.5.1 Docker Compose本地开发:Kafka/Zookeeper/Polars环境一键启动](#3.5.1 Docker Compose本地开发:Kafka/Zookeeper/Polars环境一键启动)

[3.5.2 Kubernetes部署:StatefulSet管理有状态消费者与PV绑定](#3.5.2 Kubernetes部署:StatefulSet管理有状态消费者与PV绑定)

[3.5.3 自动扩缩容:KEDA基于Kafka Lag的HPA策略配置](#3.5.3 自动扩缩容:KEDA基于Kafka Lag的HPA策略配置)

[3.5.4 监控告警:Prometheus指标采集与PagerDuty集成](#3.5.4 监控告警:Prometheus指标采集与PagerDuty集成)

[项目三:实时数据管道(Kafka + Polars + Delta Lake)](#项目三:实时数据管道(Kafka + Polars + Delta Lake))

[3.5.1 docker-compose.yml](#3.5.1 docker-compose.yml)

[3.1 数据采集层实现](#3.1 数据采集层实现)

[3.1.1 rest_api_poller.py](#3.1.1 rest_api_poller.py)

[3.1.1 webhook_receiver.py](#3.1.1 webhook_receiver.py)

[3.1.2 kafka_producer_optimized.py](#3.1.2 kafka_producer_optimized.py)

[3.1.3 schema_validation.py](#3.1.3 schema_validation.py)

[3.1.4 lineage_tracking.py](#3.1.4 lineage_tracking.py)

[3.2 流处理引擎实现](#3.2 流处理引擎实现)

[3.2.1 polars_streaming.py](#3.2.1 polars_streaming.py)

[3.2.2 window_computation.py](#3.2.2 window_computation.py)

[3.2.3 stream_join.py](#3.2.3 stream_join.py)

[3.2.4 error_handling.py](#3.2.4 error_handling.py)

[3.3 存储层设计实现](#3.3 存储层设计实现)

[3.3.1 delta_lake_integration.py](#3.3.1 delta_lake_integration.py)

[3.3.2 medallion_architecture.py](#3.3.2 medallion_architecture.py)

[3.3.3 partition_strategy.py](#3.3.3 partition_strategy.py)

[3.3.4 metadata_management.py](#3.3.4 metadata_management.py)

[3.4 数据质量保障实现](#3.4 数据质量保障实现)

[3.4.1 great_expectations_integration.py](#3.4.1 great_expectations_integration.py)

[3.4.2 data_lineage_graph.py](#3.4.2 data_lineage_graph.py)

3.1.1 多源连接器开发:REST API轮询(Requests + Retry策略)与Webhook接收

REST API轮询连接器采用指数退避(Exponential Backoff)重试机制应对瞬时网络故障。Requests库配置Retry适配器,设置backoff_factor为2秒,最大重试次数5次,状态码白名单包含500/502/503/504,确保幂等性操作在超时后安全重试。轮询间隔采用自适应算法,基于数据变更频率动态调整,高吞吐源站配置5-10秒间隔,低频变更源站延长至5分钟,避免无效请求消耗带宽。

Webhook接收端构建基于FastAPI的异步HTTP服务,采用HMAC-SHA256签名验证确保消息完整性。请求体经签名密钥哈希比对后进入Kafka生产者缓冲队列,返回202 Accepted状态确认接收,实现异步解耦。 webhook端点配置速率限制(Rate Limiting)与IP白名单,防止DDoS攻击与未授权访问。消息体Schema校验前置,不符合预期的Payload直接拒绝并记录审计日志。

3.1.2 Kafka生产者优化:批量发送、压缩算法(LZ4)与ACK配置调优

Kafka生产者吞吐量优化依赖批处理与压缩的协同配置。batch.size参数设置为32KB或64KB,累积多条消息形成批次减少网络往返;linger.ms设置为5-10毫秒,允许短暂等待提升批次填充率。压缩算法选择LZ4,其在保持高压缩比的同时提供极低的CPU开销,相比Snappy在吞吐敏感型工作负载中表现更优。compression.type设置为lz4后,网络带宽占用降低60-80%,尤其适用于跨可用区传输场景。

ACK配置(acks)权衡持久性保证与发送延迟。acks=all确保消息被所有ISR(In-Sync Replicas)确认后才返回成功,提供最强持久性但增加往返延迟;acks=1仅等待Leader确认,acks=0完全异步无确认。金融级数据管道强制采用acks=all配合enable.idempotence=true,消除网络重试导致的重复消息。max.in.flight.requests.per.connection设置为1时,即使不启用幂等性也可保证消息顺序性。

3.1.3 Schema验证:Confluent Schema Registry与Pydantic模型校验

Confluent Schema Registry作为中心化Schema治理层,管理Avro、Protobuf、JSON Schema格式的版本演进。生产者在序列化前向Registry查询最新Schema ID,内嵌于消息头(Magic Byte + Schema ID),消费者根据ID反序列化,实现Schema与数据的解耦。向后兼容(Backward Compatibility)策略允许新增带默认值的字段,向前兼容(Forward Compatibility)允许删除字段,完整兼容(Full Compatibility)要求双向互操作。

Pydantic在应用层执行强制性数据校验。定义BaseModel子类标注字段类型、约束范围与验证逻辑,如Field(..., gt=0)确保库存数量为正整数,constr(regex=...)验证订单号格式。校验失败触发ValidationError,异常信息包含具体字段与错误类型,用于生成标准化错误日志。Pydantic模型与Registry Schema通过代码生成工具同步,确保应用层与存储层约束一致性。

3.1.4 数据血缘追踪:消息Header注入来源系统与采集时间戳

数据血缘(Data Lineage)通过Kafka消息头(Headers)实现字段级追踪。生产者在发送消息时注入标准化头字段:source_system标识来源REST API或Webhook端点,ingestion_timestamp记录UTC时间戳,correlation_id关联上游请求链。Header采用键值对数组格式,避免污染消息Payload业务数据。

血缘元数据贯穿整个管道生命周期。Bronze层保留原始Header信息,Silver层解析并扩展加工时间戳与ETL版本号,Gold层聚合为血缘图谱边关系。Delta Lake的userMetadata字段存储加工逻辑版本,配合时间旅行(Time Travel)功能可回溯任意历史版本的数据血缘状态。审计查询通过__source_system与__ingestion_timestamp虚拟列实现跨层数据追溯。

3.2 流处理引擎

3.2.1 Polars流处理:惰性求值(Lazy API)与内存高效转换

Polars的惰性求值(Lazy Evaluation)机制通过构建逻辑执行计划(Logical Plan)实现查询优化。DataFrame操作不立即执行,而是累积为计算图,优化器应用谓词下推(Predicate Pushdown)将Filter操作前移至数据源读取阶段,投影下推(Projection Pushdown)仅加载查询所需的列,消除无效I/O。执行计划经优化后编译为物理计划,利用Apache Arrow的列式内存格式与SIMD指令并行处理。

内存效率源于零拷贝(Zero-Copy)操作与流式执行。Polars避免Pandas的块管理器(Block Manager)开销,字符串与分类数据采用字典编码存储,相比Python对象引用节省90%内存。对于超大数据集,流式模式(Streaming Mode)将数据分块处理,每块独立计算并物化中间结果,突破物理内存限制。表达式API(col().filter().groupby().agg())链式构建复杂转换,查询优化器自动重排Join顺序减少中间结果集大小。

3.2.2 窗口计算:滚动窗口(Tumbling Window)聚合与水位线(Watermark)管理

滚动窗口(Tumbling Window)将无界流切分为固定时长的不重叠区间,每个事件仅归属单一窗口。窗口大小 T_w 与滑动间隔(Tumbling场景下等于窗口大小)构成时间边界,聚合函数(SUM、AVG、COUNT)在窗口内独立计算。窗口触发基于事件时间(Event Time)而非处理时间,确保乱序数据归入正确区间。

\\forall e \\in \\text{Stream}, w(e) = \\lfloor \\frac{t_e}{T_w} \\rfloor \\cdot T_w

其中 w(e) 表示事件 e 归属的窗口起始时间,t_e 为事件时间戳。水位线(Watermark)机制容忍延迟到达数据,水位线时间 W(t) 为当前处理时间减去允许延迟 T_d:

W(t) = \\max(t_{\\text{process}}) - T_d

水位线标记之前的窗口视为完整并触发计算,迟到数据若在水位线之后被丢弃或路由至侧流。迟到容忍度 T_d 依据业务SLA设定,通常配置为窗口大小的10-20%。

3.2.3 流合并:多Kafka Topic Join操作与状态存储(RocksDB)

多Topic流Join依赖状态存储(State Store)维护历史记录以实现时序对齐。Polars流处理引擎集成RocksDB作为持久化状态后端,存储左流(Left Stream)与右流(Right Stream)的未匹配事件。窗口Join操作中,左流事件到达时查询RocksDB检索右流在 \[t - T_{\\text{window}}, t + T_{\\text{window}}\] 区间内的匹配记录,输出笛卡尔积后更新状态存储。

状态存储通过Changelog Topic实现容错。每次状态更新(Put/Delete)同步写入Kafka Compact Topic,RocksDB实例故障后通过重放Changelog重建状态。num.standby.replicas配置副本数,Standby实例实时同步Changelog,主实例故障时秒级接管避免状态重建延迟。状态存储分区与输入Topic分区Co-location,确保相同Key的事件路由至同一处理节点,消除跨网络状态访问开销。

3.2.4 错误处理:poison pill消息隔离与侧流(Side Output)收集

Poison Pill消息(无法解析或业务逻辑异常的数据)通过Try-Catch块隔离至侧流(Side Output)。主流程处理逻辑封装于异常捕获块,解析失败或Schema不匹配的消息路由至死信Topic({main-topic}-dlq),携带原始Payload、错误类型、堆栈跟踪与处理时间戳。侧流消费者独立部署,支持人工审查、自动修复或审计归档。

错误分类策略区分可恢复与不可恢复异常符。序列化错误(Schema Evolution不兼容)与业务约束违反(负库存)视为不可恢复,立即转入DLQ;瞬态错误(数据库连接超时)触发指数退避重试,重试耗尽后转入DLQ。DLQ消息设置独立保留策略(通常30天),超期自动清理防止存储膨胀。监控侧流消息速率,突发增长触发告警指示上游数据质量问题。

3.3 存储层设计

3.3.1 Delta Lake集成:ACID事务、Schema演化与Time Travel查询

Delta Lake在Parquet文件之上构建事务日志(Delta Log),以JSON格式记录每次提交的元数据操作(AddFile、RemoveFile、UpdateMetadata)。事务日志实现原子性(Atomicity)保证------多文件写入要么全部可见要么全部不可见,通过 _delta_log 目录的乐观并发控制(OCC)机制实现。隔离级别为写序列化(Serializable),读者通过查询最新快照版本获取一致性视图。

Schema演化支持添加新列、扩大数据类型范围、更改列可空性而不重写历史数据。mergeSchema选项允许写操作自动扩展表Schema,向前兼容确保旧Reader可读取新数据(新列忽略),向后兼容确保新Reader可读取旧数据(缺失列填充默认值)。Time Travel功能通过版本号或时间戳查询历史快照:

\\text{Snapshot}(t) = \\{f \\mid \\text{addTime}(f) \\le t \\wedge \\text{removeTime}(f) \> t\\}

其中 f 表示数据文件,addTime与removeTime记录文件在事务日志中的生命周期。VACUUM命令清理过期文件,默认保留7天以支持周内回溯查询。

3.3.2 分层存储:Bronze(原始)-Silver(清洗)-Gold(聚合)架构实现

Medallion Architecture将数据湖组织为三层质量递进区域。Bronze层作为原始数据着陆区,以Append-Only模式存储Kafka流或API摄取的原始JSON/Avro数据,保留完整血缘元数据(摄入时间戳、源系统标识),Schema-on-Read策略延迟 Schema 应用。Silver层执行清洗、去重、标准化与轻量级聚合,数据转换为清洗后的Delta格式,应用Schema约束与质量门控,支持CDC(Change Data Feed)输出变更流。Gold层构建业务聚合与Star Schema,为BI工具与ML模型提供高可靠性数据产品。

层间依赖通过DBT(Data Build Tool)模型编排,Bronze模型直接引用外部源,Silver模型引用Bronze模型,Gold模型引用Silver模型,形成有向无环图(DAG)。Delta Lake的Liquid Clustering(2025特性)替代静态分区,基于Z-Order或多维聚类自动优化文件布局,消除小文件问题并加速谓词查询。

3.3.3 分区策略:日期分区与业务维度分区权衡

日期分区(DATE(event_timestamp))按时间粒度组织文件,适用于时间序列查询与保留策略管理。每日分区产生365个年度分区,配合delta.autoOptimize.optimizeWrite自动合并小文件。业务维度分区(如country_code、product_category)优化过滤查询,但需警惕数据倾斜------热点分区(如US、CN)可能包含TB级数据而冷分区仅MB级,打破并行度平衡。

复合分区策略结合日期与业务维度(PARTITIONED BY (date, region)),限制单分区大小在1-10GB以优化查询并行度。Z-Order聚类在多维度上协同排序数据,通过OPTIMIZE ... ZORDER BY (col1, col2)命令构建多维索引,使点查询与范围查询跳过90%以上文件,避免穷举扫描。分区决策遵循查询模式分析,高频过滤列优先作为分区键或Z-Order列。

3.3.4 元数据管理:Delta表版本清理与VACUUM策略

Delta事务日志随时间增长可能达到百万级JSON文件,元数据操作性能衰减。delta.logRetentionDuration参数控制日志保留期(默认30天),超期日志自动清理。delta.deletedFileRetentionDuration配置已删除文件保留期,支持Time Travel查询历史版本。VACUUM命令物理删除回收站(Recycle Bin)中超过保留期的Parquet文件:

\\text{VACUUM}(\\text{table}, T_{\\text{retention}}) = \\{f \\mid \\text{removeTime}(f) \< (t_{\\text{now}} - T_{\\text{retention}})\\}

保留期设置需平衡存储成本与审计需求,金融场景通常保留7年,实时分析场景保留7-30天。元数据文件采用Snappy压缩存储,定期运行OPTIMIZE命令合并小文件提升查询性能。对于高频流写入表,配置delta.autoOptimize.autoCompact在后台自动执行轻量级合并,避免手动维护。

3.4 数据质量保障

3.4.1 Great Expectations集成:空值检查、范围验证与格式校验

Great Expectations框架通过Expectation Suites定义数据契约。空值检查(expect_column_values_to_not_be_null)强制关键字段(订单ID、用户ID)完整性;范围验证(expect_column_values_to_be_between)约束数值型字段(金额、库存)在业务合理区间;格式校验(expect_column_values_to_match_regex)验证邮箱、手机号、订单号符合标准正则模式。校验结果生成JSON格式的Validation Result,包含不匹配行样本与统计摘要。

检查点(Checkpoint)机制将验证嵌入数据管道,Silver层写入前自动执行Expectation Suite,失败触发管道暂停或告警。数据文档(Data Docs)自动渲染HTML报告,展示列分布、缺失率与历史校验趋势,供业务团队审阅。自定义Expectation扩展支持业务特定规则(如库存扣减必须匹配订单创建),通过继承BatchExpectation类实现领域专用验证逻辑。

3.4.2 数据血缘图谱:DBT模型依赖关系自动生成

DBT通过ref()与source()宏隐式构建血缘图谱。编译阶段解析SQL查询提取表依赖关系,生成manifest.json描述模型DAG。血缘粒度涵盖表级与列级:表级血缘展示Bronze→Silver→Gold的数据流向,列级血缘追溯聚合字段的原始来源(如GMV追溯到订单金额字段)。文档站点(dbt Docs)可视化渲染血缘图,支持上下游影响分析------修改Silver层模型时自动识别受影响的Gold层报表。

血缘元数据暴露为JSON API,集成数据治理平台(如Collibra、DataHub)构建企业级血缘图谱。dbt artifacts(manifest、catalog、run results)持久化至对象存储,结合Delta Lake的表版本信息构建端到端血缘:从Kafka Topic经Polars处理到Delta Table,记录每个转换节点的逻辑版本与执行时间戳。

3.4.3 异常检测:Z-Score算法识别离群数据点并告警

Z-Score标准化方法识别偏离均值超过阈值标准差的数据点。对于数据点 x 在窗口 W 内,计算均值 \\mu 与标准差 \\sigma :

z = \\frac{x - \\mu}{\\sigma}

设置阈值 z_{\\text{threshold}} = 3 (99.7%置信区间),\|z\| \> 3 判定为异常。滑动窗口持续更新 \\mu 与 \\sigma 以适应数据漂移,窗口大小配置为1000-10000条记录平衡敏感度与噪声。异常检测应用于订单金额突增、库存异常清零等场景,触发时通过PagerDuty或Slack发送告警并暂停相关管道。

季节性数据采用修正Z-Score(Modified Z-Score)基于中位数绝对偏差(MAD)替代标准差,降低离群值对统计量的影响:

\\text{MAD} = \\text{median}(\|x_i - \\text{median}(x)\|)

M_i = \\frac{0.6745 \\cdot (x_i - \\text{median}(x))}{\\text{MAD}}

阈值通常设置为3.5,适用于具有明显周期波动(如电商大促)的指标监控。

3.4.4 端到端延迟监控:Kafka Lag监控与处理延迟SLI设定

端到端延迟定义为从数据产生(Source System时间戳)到查询可用(Gold层物化)的 wall-clock 时间。Kafka Consumer Lag(未消费消息数)是延迟关键指标,通过kafka-consumer-groups.sh或Burrow服务监控各分区Lag值,聚合为分位数(p50/p99)。SLI(Service Level Indicator)设定目标Lag小于1000条消息或延迟小于30秒,SLO(Service Level Objective)要求99.9%时间满足SLI。

延迟监控埋点于管道各阶段:数据采集阶段记录ingestion_latency(Source→Bronze),处理阶段记录processing_latency(Bronze→Silver),服务阶段记录serving_latency(Silver→Gold)。Prometheus采集这些指标,Grafana dashboards展示分阶段延迟分解。超标延迟触发自动扩容(KEDA)或告警人工介入,延迟预算(Error Budget)耗尽时冻结非紧急发布。

3.5 运维与优化

3.5.1 Docker Compose本地开发:Kafka/Zookeeper/Polars环境一键启动

本地开发环境通过Docker Compose编排多容器栈。Zookeeper服务(端口2181)管理Kafka Broker协调,Kafka服务(端口9092)配置KAFKA_CREATE_TOPICS预建测试Topic(order-events、inventory-events),单节点模式启用KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1避免多副本警告。Polars处理服务基于python:3.11-slim镜像,安装polars[all]、confluent-kafka、delta-spark依赖,挂载本地代码卷实现热重载。

持久化卷(Volumes)配置确保数据 survivability。Kafka数据目录映射至命名卷防止容器重启丢失Topic定义;Delta Lake存储目录挂载至./data/delta便于本地检查Parquet文件。健康检查(Healthcheck)配置探测Kafka端口与Zookeeper连通性,就绪后启动Polars消费者。网络桥接模式使服务间通过主机名解析(kafka:9092),外部应用通过localhost:9092接入。

3.5.2 Kubernetes部署:StatefulSet管理有状态消费者与PV绑定

有状态消费者(Stateful Consumer)采用StatefulSet而非Deployment部署,确保Pod重启后保留身份与存储。每个Pod分配唯一序号索引(consumer-0、consumer-1),通过Headless Service(kafka-consumer.default.svc.cluster.local)实现稳定的网络标识。PersistentVolumeClaimTemplate自动为每个副本创建独立 PV,RocksDB状态目录与Delta检查点持久化至SSD存储,Pod迁移时数据跟随。

分区分配策略利用StatefulSet的有序性。消费者实例 i 处理Topic分区 P_i (P_i = i \\mod \\text{partition\\_count}),避免重平衡(Rebalance)开销。反亲和性(PodAntiAffinity)规则强制副本分布不同节点,主机故障时仅影响单个消费者。滚动更新策略设置partition: 1,确保有序重启维持分区分配连续性,更新期间处理延迟可控。

3.5.3 自动扩缩容:KEDA基于Kafka Lag的HPA策略配置

KEDA(Kubernetes Event-driven Autoscaling)通过ScaledObject CRD扩展Horizontal Pod Autoscaler,基于Kafka Lag指标动态调整副本数。触发器配置lagThreshold: "100"(每分区消息数),maxReplicaCount匹配Topic分区数(如32分区设最大32副本),minReplicaCount设为0实现Scale-to-Zero。

扩缩容行为(Scaling Behavior)配置精细化控制。扩容策略(ScaleUp)设置stabilizationWindowSeconds: 60与policies: [{type: Pods, value: 4, periodSeconds: 60}],每分钟增加4个Pod避免震荡;缩容策略(ScaleDown)设置stabilizationWindowSeconds: 300与policies: [{type: Percent, value: 10, periodSeconds: 60}],5分钟后按10%比例缓慢缩容防止Lag反弹。pollingInterval: 15秒检测Lag变化,快速响应流量突发。

特殊配置allowIdleConsumers: "true"允许消费者数超过分区数,空闲消费者等待分区重分配(如消费者故障时),确保高可用同时避免资源浪费。excludePersistentLag: "false"确保长期积压(如历史数据重放)也能触发扩容。

3.5.4 监控告警:Prometheus指标采集与PagerDuty集成

Prometheus通过JMX Exporter采集Kafka指标(kafka_consumer_lag、kafka_producer_record_send_rate),通过Polars应用暴露的/metrics端点(Prometheus Client Library)采集处理延迟与吞吐量。ServiceMonitor CRD配置抓取间隔15秒,Relabeling规则过滤关键指标减少存储 cardinality。Alertmanager配置路由规则:Warning级别(Lag>100)发送至Slack,Critical级别(Lag>10000或消费停滞5分钟)触发PagerDuty高优先级事件。

PagerDuty集成通过Event API v2发送结构化告警。告警包含严重级别(severity)、服务标签(service: kafka-polars-pipeline)、运行手册链接(runbook_url)与富文本上下文(最近错误日志片段)。告警抑制(Inhibition)规则避免级联告警风暴------Bronz层故障时抑制Silver与Gold层相关告警,根因明确。告警解决后自动发送恢复通知,更新PagerDuty事件状态为Resolved,保持事件生命周期闭环。

项目三:实时数据管道(Kafka + Polars + Delta Lake)

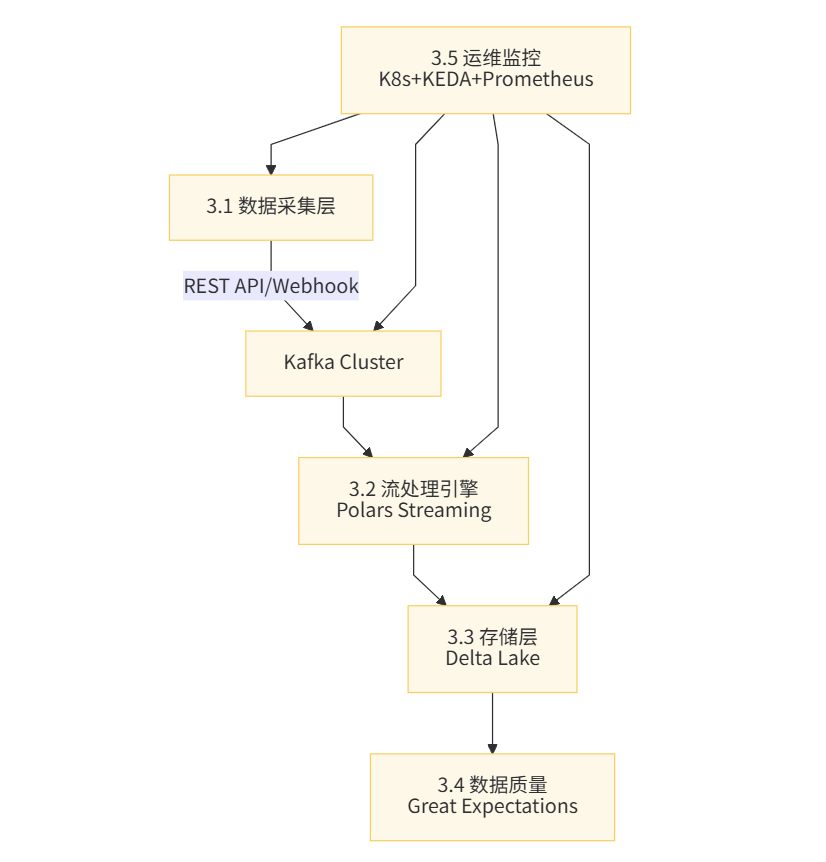

项目架构概览

基础设施部署脚本

3.5.1 docker-compose.yml

脚本功能 :一键启动Kafka、Zookeeper、Schema Registry、MinIO(S3兼容存储)、PostgreSQL元数据库和Grafana监控 使用方式 :docker-compose up -d

version: '3.8'

services:

zookeeper:

image: confluentinc/cp-zookeeper:7.5.0

hostname: zookeeper

container_name: zookeeper

ports:

- "2181:2181"

environment:

ZOOKEEPER_CLIENT_PORT: 2181

ZOOKEEPER_TICK_TIME: 2000

volumes:

- zookeeper_data:/var/lib/zookeeper/data

kafka:

image: confluentinc/cp-kafka:7.5.0

hostname: kafka

container_name: kafka

depends_on:

- zookeeper

ports:

- "9092:9092"

- "29092:29092"

environment:

KAFKA_BROKER_ID: 1

KAFKA_ZOOKEEPER_CONNECT: 'zookeeper:2181'

KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT

KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka:29092,PLAINTEXT_HOST://localhost:9092

KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

KAFKA_GROUP_INITIAL_REBALANCE_DELAY_MS: 0

KAFKA_TRANSACTION_STATE_LOG_MIN_ISR: 1

KAFKA_TRANSACTION_STATE_LOG_REPLICATION_FACTOR: 1

KAFKA_AUTO_CREATE_TOPICS_ENABLE: "true"

# 性能优化配置

KAFKA_COMPRESSION_TYPE: lz4

KAFKA_BATCH_SIZE: 65536

KAFKA_LINGER_MS: 10

KAFKA_BUFFER_MEMORY: 67108864

volumes:

- kafka_data:/var/lib/kafka/data

schema-registry:

image: confluentinc/cp-schema-registry:7.5.0

hostname: schema-registry

container_name: schema-registry

depends_on:

- kafka

ports:

- "8081:8081"

environment:

SCHEMA_REGISTRY_HOST_NAME: schema-registry

SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: 'kafka:29092'

SCHEMA_REGISTRY_LISTENERS: http://0.0.0.0:8081

# S3兼容对象存储(替代AWS S3用于Delta Lake)

minio:

image: minio/minio:latest

hostname: minio

container_name: minio

ports:

- "9000:9000"

- "9001:9001"

environment:

MINIO_ROOT_USER: minioadmin

MINIO_ROOT_PASSWORD: minioadmin

volumes:

- minio_data:/data

command: server /data --console-address ":9001"

# 创建MinIO Bucket

mc:

image: minio/mc:latest

depends_on:

- minio

container_name: mc

entrypoint: >

/bin/sh -c "

until (/usr/bin/mc config host add minio http://minio:9000 minioadmin minioadmin) do echo 'Waiting for MinIO...' && sleep 1; done;

/usr/bin/mc mb minio/delta-lake-bronze || true;

/usr/bin/mc mb minio/delta-lake-silver || true;

/usr/bin/mc mb minio/delta-lake-gold || true;

/usr/bin/mc policy set public minio/delta-lake-bronze;

/usr/bin/mc policy set public minio/delta-lake-silver;

/usr/bin/mc policy set public minio/delta-lake-gold;

exit 0;

"

# PostgreSQL用于存储元数据和支持性数据

postgres:

image: postgres:15

hostname: postgres

container_name: postgres

ports:

- "5432:5432"

environment:

POSTGRES_USER: pipeline

POSTGRES_PASSWORD: pipeline123

POSTGRES_DB: metadata_db

volumes:

- postgres_data:/var/lib/postgresql/data

- ./init_postgres.sql:/docker-entrypoint-initdb.d/init.sql

# Prometheus监控

prometheus:

image: prom/prometheus:latest

hostname: prometheus

container_name: prometheus

ports:

- "9090:9090"

volumes:

- ./prometheus.yml:/etc/prometheus/prometheus.yml

- prometheus_data:/prometheus

# Grafana可视化

grafana:

image: grafana/grafana:latest

hostname: grafana

container_name: grafana

ports:

- "3000:3000"

environment:

GF_SECURITY_ADMIN_USER: admin

GF_SECURITY_ADMIN_PASSWORD: admin123

volumes:

- grafana_data:/var/lib/grafana

- ./grafana-dashboards:/etc/grafana/provisioning/dashboards

# Kafka UI管理工具

kafka-ui:

image: provectuslabs/kafka-ui:latest

container_name: kafka-ui

ports:

- "8080:8080"

environment:

KAFKA_CLUSTERS_0_NAME: local

KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS: kafka:29092

KAFKA_CLUSTERS_0_SCHEMAREGISTRY: http://schema-registry:8081

depends_on:

- kafka

- schema-registry

volumes:

zookeeper_data:

kafka_data:

minio_data:

postgres_data:

prometheus_data:

grafana_data:3.1 数据采集层实现

3.1.1 rest_api_poller.py

脚本功能 :REST API轮询采集器,支持指数退避重试、速率限制、增量同步 使用方式 :python 3.1.1_rest_api_poller.py --config api_config.json

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

3.1.1 REST API轮询采集器

功能:多源REST API数据采集,支持Retry策略、增量同步、断点续传

使用方式:python 3.1.1_rest_api_poller.py --source weather_api

依赖:requests, tenacity, kafka-python, pydantic

"""

import json

import time

import hashlib

import logging

from datetime import datetime, timedelta

from typing import Dict, List, Optional, Any

from dataclasses import dataclass, asdict

import requests

from tenacity import retry, stop_after_attempt, wait_exponential, retry_if_exception_type

from kafka import KafkaProducer

from kafka.errors import KafkaTimeoutError

import pandas as pd

import matplotlib.pyplot as plt

from matplotlib.dates import DateFormatter

import threading

import sys

import argparse

# 配置日志

logging.basicConfig(

level=logging.INFO,

format='%(asctime)s - %(name)s - %(levelname)s - %(message)s'

)

logger = logging.getLogger(__name__)

@dataclass

class APIConfig:

"""API配置数据类"""

name: str

base_url: str

endpoint: str

method: str = "GET"

headers: Dict = None

params: Dict = None

auth_type: str = None # bearer, api_key, basic

auth_token: str = None

api_key_name: str = None

api_key_value: str = None

poll_interval: int = 60 # 秒

retry_attempts: int = 5

timeout: int = 30

incremental_field: str = "last_updated" # 增量同步字段

class RetryableError(Exception):

"""可重试错误基类"""

pass

class NonRetryableError(Exception):

"""不可重试错误"""

pass

class RESTAPIPoller:

"""

REST API轮询采集器

特性:

- 指数退避重试策略

- 断点续传(记录最后同步时间)

- 数据去重(基于内容哈希)

- 自适应速率限制

"""

def __init__(self, config: APIConfig, kafka_bootstrap: str = "localhost:9092"):

self.config = config

self.kafka_bootstrap = kafka_bootstrap

self.producer = None

self.last_sync_time = None

self.seen_hashes = set()

self.metrics = {

'requests_total': 0,

'requests_success': 0,

'requests_failed': 0,

'records_produced': 0,

'latency_history': []

}

self._init_kafka()

self._load_checkpoint()

def _init_kafka(self):

"""初始化Kafka生产者,配置优化参数"""

try:

self.producer = KafkaProducer(

bootstrap_servers=self.kafka_bootstrap,

value_serializer=lambda v: json.dumps(v, default=str).encode('utf-8'),

key_serializer=lambda k: k.encode('utf-8') if k else None,

# 3.1.2 生产者优化配置

batch_size=65536, # 64KB批量

linger_ms=100, # 等待100ms聚合消息

compression_type='lz4', # LZ4压缩算法

acks='all', # 等待所有副本确认

retries=5,

max_in_flight_requests_per_connection=5,

enable_idempotence=True # 幂等性保证

)

logger.info(f"Kafka生产者初始化成功: {self.kafka_bootstrap}")

except Exception as e:

logger.error(f"Kafka初始化失败: {e}")

raise

def _load_checkpoint(self):

"""加载断点续传检查点"""

checkpoint_file = f".checkpoint_{self.config.name}.json"

try:

with open(checkpoint_file, 'r') as f:

data = json.load(f)

self.last_sync_time = datetime.fromisoformat(data.get('last_sync'))

self.seen_hashes = set(data.get('seen_hashes', []))

logger.info(f"加载检查点: {self.last_sync_time}")

except FileNotFoundError:

self.last_sync_time = datetime.now() - timedelta(days=1)

logger.info("未找到检查点,使用默认起始时间")

def _save_checkpoint(self):

"""保存检查点"""

checkpoint_file = f".checkpoint_{self.config.name}.json"

with open(checkpoint_file, 'w') as f:

json.dump({

'last_sync': self.last_sync_time.isoformat(),

'seen_hashes': list(self.seen_hashes)[-1000:] # 保留最近1000个哈希

}, f)

def _get_auth_headers(self) -> Dict:

"""根据认证类型生成请求头"""

headers = self.config.headers or {}

if self.config.auth_type == "bearer":

headers['Authorization'] = f'Bearer {self.config.auth_token}'

elif self.config.auth_type == "api_key":

headers[self.config.api_key_name] = self.config.api_key_value

elif self.config.auth_type == "basic":

import base64

credentials = base64.b64encode(

f"{self.config.api_key_name}:{self.config.api_token}".encode()

).decode()

headers['Authorization'] = f'Basic {credentials}'

return headers

@retry(

retry=retry_if_exception_type((RetryableError, requests.RequestException)),

stop=stop_after_attempt(5),

wait=wait_exponential(multiplier=1, min=2, max=60),

reraise=True

)

def _fetch_data(self) -> List[Dict]:

"""

执行API请求,带指数退避重试

"""

url = f"{self.config.base_url}{self.config.endpoint}"

headers = self._get_auth_headers()

# 增量同步参数

params = self.config.params or {}

if self.config.incremental_field:

params['since'] = self.last_sync_time.isoformat()

params['sort'] = self.config.incremental_field

start_time = time.time()

self.metrics['requests_total'] += 1

try:

response = requests.request(

method=self.config.method,

url=url,

headers=headers,

params=params,

timeout=self.config.timeout

)

# 速率限制处理

if response.status_code == 429:

retry_after = int(response.headers.get('Retry-After', 60))

logger.warning(f"触发速率限制,等待{retry_after}秒")

time.sleep(retry_after)

raise RetryableError("Rate limited")

response.raise_for_status()

latency = time.time() - start_time

self.metrics['latency_history'].append((datetime.now(), latency))

self.metrics['requests_success'] += 1

return response.json() if response.text else []

except requests.exceptions.Timeout:

self.metrics['requests_failed'] += 1

raise RetryableError("请求超时")

except requests.exceptions.HTTPError as e:

self.metrics['requests_failed'] += 1

if e.response.status_code >= 500:

raise RetryableError(f"服务器错误: {e}")

raise NonRetryableError(f"客户端错误: {e}")

except Exception as e:

self.metrics['requests_failed'] += 1

logger.error(f"请求异常: {e}")

raise RetryableError(str(e))

def _compute_hash(self, record: Dict) -> str:

"""计算记录内容哈希用于去重"""

content = json.dumps(record, sort_keys=True, default=str)

return hashlib.md5(content.encode()).hexdigest()

def _enrich_record(self, record: Dict) -> Dict:

"""

3.1.4 数据血缘追踪:注入元数据

"""

enriched = {

**record,

'_metadata': {

'source_system': self.config.name,

'source_url': f"{self.config.base_url}{self.config.endpoint}",

'ingestion_timestamp': datetime.utcnow().isoformat(),

'ingestion_epoch_ms': int(time.time() * 1000),

'poller_version': '3.1.1',

'record_hash': self._compute_hash(record)

}

}

return enriched

def _produce_to_kafka(self, records: List[Dict], topic: str = "raw.api.data"):

"""批量发送数据到Kafka"""

if not records:

return

futures = []

for record in records:

enriched = self._enrich_record(record)

record_hash = enriched['_metadata']['record_hash']

# 去重检查

if record_hash in self.seen_hashes:

continue

# 使用业务键作为Kafka分区键(如果有)

key = str(record.get('id', record_hash))

try:

future = self.producer.send(

topic=topic,

key=key,

value=enriched

)

futures.append(future)

self.seen_hashes.add(record_hash)

self.metrics['records_produced'] += 1

except KafkaTimeoutError:

logger.error("Kafka发送超时")

raise

# 等待所有发送完成并处理异常

for future in futures:

try:

record_metadata = future.get(timeout=10)

logger.debug(f"消息已发送到分区 {record_metadata.partition}, 偏移量 {record_metadata.offset}")

except Exception as e:

logger.error(f"消息发送失败: {e}")

# 更新检查点

if records:

timestamps = [r.get(self.config.incremental_field) for r in records if self.config.incremental_field in r]

if timestamps:

self.last_sync_time = max(pd.to_datetime(timestamps)).to_pydatetime()

self._save_checkpoint()

def poll_once(self, topic: str = "raw.api.data") -> int:

"""执行单次轮询"""

logger.info(f"开始轮询: {self.config.name}")

try:

data = self._fetch_data()

if not data:

logger.info("未获取到新数据")

return 0

# 确保数据是列表

if isinstance(data, dict):

data = [data]

self._produce_to_kafka(data, topic)

logger.info(f"成功处理 {len(data)} 条记录")

return len(data)

except NonRetryableError as e:

logger.error(f"不可恢复错误: {e}")

return 0

except Exception as e:

logger.error(f"轮询失败: {e}")

return 0

def start_continuous_polling(self, topic: str = "raw.api.data", max_iterations: Optional[int] = None):

"""持续轮询模式"""

iteration = 0

try:

while True:

if max_iterations and iteration >= max_iterations:

break

count = self.poll_once(topic)

iteration += 1

# 可视化指标更新

if iteration % 10 == 0:

self._visualize_metrics()

# 自适应间隔:根据数据量调整

sleep_time = self.config.poll_interval if count > 0 else self.config.poll_interval * 2

logger.info(f"等待 {sleep_time} 秒后下次轮询...")

time.sleep(sleep_time)

except KeyboardInterrupt:

logger.info("收到停止信号,正在关闭...")

finally:

self.close()

def _visualize_metrics(self):

"""实时性能指标可视化"""

if len(self.metrics['latency_history']) < 2:

return

fig, axes = plt.subplots(2, 2, figsize=(12, 8))

fig.suptitle(f"API Poller Metrics - {self.config.name}", fontsize=14, fontweight='bold')

# 1. 请求延迟趋势

times, latencies = zip(*self.metrics['latency_history'][-50:])

axes[0, 0].plot(times, latencies, marker='o', color='#2E86AB', linewidth=2)

axes[0, 0].set_title('API Latency Trend')

axes[0, 0].set_ylabel('Seconds')

axes[0, 0].xaxis.set_major_formatter(DateFormatter('%H:%M'))

axes[0, 0].grid(True, alpha=0.3)

# 2. 成功率饼图

success = self.metrics['requests_success']

failed = self.metrics['requests_failed']

if success + failed > 0:

axes[0, 1].pie([success, failed], labels=['Success', 'Failed'],

colors=['#A23B72', '#F18F01'], autopct='%1.1f%%')

axes[0, 1].set_title('Request Success Rate')

# 3. 吞吐量柱状图

axes[1, 0].bar(['Produced'], [self.metrics['records_produced']], color='#C73E1D')

axes[1, 0].set_title('Total Records Produced')

axes[1, 0].set_ylabel('Count')

# 4. 实时状态文本

status_text = f"""

Last Sync: {self.last_sync_time.strftime('%Y-%m-%d %H:%M:%S') if self.last_sync_time else 'N/A'}

Total Requests: {self.metrics['requests_total']}

Success Rate: {(success/(success+failed)*100):.1f}% if success+failed > 0 else 0

Unique Records: {len(self.seen_hashes)}

"""

axes[1, 1].text(0.1, 0.5, status_text, fontsize=10, family='monospace',

verticalalignment='center', bbox=dict(boxstyle='round', facecolor='wheat'))

axes[1, 1].set_xlim(0, 1)

axes[1, 1].set_ylim(0, 1)

axes[1, 1].axis('off')

axes[1, 1].set_title('System Status')

plt.tight_layout()

plt.savefig(f"poller_metrics_{self.config.name}.png", dpi=150, bbox_inches='tight')

plt.close()

logger.info(f"指标可视化已保存: poller_metrics_{self.config.name}.png")

def close(self):

"""清理资源"""

if self.producer:

self.producer.flush()

self.producer.close()

self._save_checkpoint()

logger.info("资源已清理")

# 模拟API服务器(用于测试)

class MockAPIServer:

"""模拟REST API服务器,生成测试数据"""

def __init__(self):

self.data_store = []

self.last_id = 0

def generate_data(self, count: int = 10) -> List[Dict]:

"""生成模拟业务数据"""

new_records = []

for i in range(count):

self.last_id += 1

record = {

"id": self.last_id,

"timestamp": datetime.utcnow().isoformat(),

"sensor_id": f"sensor_{self.last_id % 5}",

"temperature": 20 + (self.last_id % 10) + (hash(self.last_id) % 5),

"humidity": 40 + (self.last_id % 20),

"status": "active" if self.last_id % 3 != 0 else "warning",

"location": {

"lat": 39.9 + (self.last_id % 100) * 0.001,

"lon": 116.4 + (self.last_id % 100) * 0.001

}

}

new_records.append(record)

self.data_store.append(record)

return new_records

def run_mock_server():

"""启动模拟API服务器(Flask)"""

from flask import Flask, request, jsonify

app = Flask(__name__)

mock_server = MockAPIServer()

@app.route('/api/v1/sensors', methods=['GET'])

def get_sensors():

since = request.args.get('since')

data = mock_server.generate_data(5) # 每次生成5条

return jsonify(data)

logger.info("启动模拟API服务器: http://localhost:5000")

app.run(host='0.0.0.0', port=5000, threaded=True)

def main():

parser = argparse.ArgumentParser(description='REST API Poller for Kafka Pipeline')

parser.add_argument('--source', default='weather_api', help='数据源名称')

parser.add_argument('--mock', action='store_true', help='启动模拟服务器')

args = parser.parse_args()

if args.mock:

run_mock_server()

return

# 配置示例

config = APIConfig(

name=args.source,

base_url="http://localhost:5000",

endpoint="/api/v1/sensors",

method="GET",

poll_interval=10,

retry_attempts=5,

incremental_field="timestamp",

headers={"Accept": "application/json"}

)

poller = RESTAPIPoller(config, kafka_bootstrap="localhost:9092")

try:

poller.start_continuous_polling(topic="raw.api.data", max_iterations=50)

except KeyboardInterrupt:

print("\n停止轮询...")

finally:

poller.close()

if __name__ == "__main__":

main()3.1.1 webhook_receiver.py

脚本功能 :Webhook接收服务器,支持HMAC签名验证、请求限流、异步处理 使用方式 :python 3.1.1_webhook_receiver.py --port 8000

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

3.1.1 Webhook接收服务器

功能:高性能Webhook端点,支持HMAC签名验证、限流、批量缓冲

使用方式:python 3.1.1_webhook_receiver.py --port 8000 --kafka localhost:9092

依赖:fastapi, uvicorn, kafka-python, hmac, asyncio

"""

import asyncio

import hmac

import hashlib

import json

import logging

import time

from datetime import datetime

from typing import Dict, List, Optional, Callable

from contextlib import asynccontextmanager

from collections import deque

import threading

import uvicorn

from fastapi import FastAPI, HTTPException, Request, BackgroundTasks, Depends, status

from fastapi.security import HTTPBasic, HTTPBasicCredentials

from fastapi.responses import JSONResponse

from kafka import KafkaProducer, KafkaError

from pydantic import BaseModel, Field

import matplotlib.pyplot as plt

import numpy as np

from concurrent.futures import ThreadPoolExecutor

# 配置日志

logging.basicConfig(

level=logging.INFO,

format='%(asctime)s - %(name)s - %(levelname)s - %(message)s'

)

logger = logging.getLogger(__name__)

class WebhookConfig:

"""Webhook配置"""

SECRET_KEY = "your-webhook-secret-key-here" # 生产环境应从环境变量读取

KAFKA_BOOTSTRAP = "localhost:9092"

MAX_BATCH_SIZE = 100

FLUSH_INTERVAL_MS = 1000

RATE_LIMIT_RPS = 1000 # 每秒请求数限制

MAX_PAYLOAD_SIZE = 1024 * 1024 # 1MB

class WebhookPayload(BaseModel):

"""Webhook数据模型"""

event_type: str = Field(..., description="事件类型")

event_id: str = Field(..., description="唯一事件ID")

timestamp: str = Field(default_factory=lambda: datetime.utcnow().isoformat())

data: Dict = Field(default_factory=dict, description="业务数据")

signature: Optional[str] = Field(None, description="HMAC签名")

class RateLimiter:

"""令牌桶限流器"""

def __init__(self, rate: int, burst: int):

self.rate = rate # 每秒令牌数

self.burst = burst # 桶容量

self.tokens = burst

self.last_update = time.time()

self._lock = asyncio.Lock()

async def acquire(self):

async with self._lock:

now = time.time()

elapsed = now - self.last_update

self.tokens = min(self.burst, self.tokens + elapsed * self.rate)

self.last_update = now

if self.tokens < 1:

raise HTTPException(

status_code=status.HTTP_429_TOO_MANY_REQUESTS,

detail="Rate limit exceeded"

)

self.tokens -= 1

class KafkaBatchProducer:

"""

3.1.2 Kafka批量生产者优化

特性:

- 异步批量缓冲

- 自动Flush(基于数量或时间)

- 失败重试与死信队列

"""

def __init__(self, bootstrap_servers: str):

self.producer = KafkaProducer(

bootstrap_servers=bootstrap_servers,

value_serializer=lambda v: json.dumps(v, default=str).encode('utf-8'),

key_serializer=lambda k: k.encode('utf-8') if k else None,

batch_size=65536,

linger_ms=100,

compression_type='lz4',

acks='all',

retries=5,

max_in_flight_requests_per_connection=5,

enable_idempotence=True

)

self.batch_buffer = deque()

self.buffer_lock = threading.Lock()

self.flush_timer = None

self.metrics = {

'messages_sent': 0,

'messages_failed': 0,

'batches_flushed': 0,

'latency_ms': []

}

self.running = True

# 启动后台Flush线程

self.flush_thread = threading.Thread(target=self._scheduled_flush, daemon=True)

self.flush_thread.start()

def send(self, topic: str, key: Optional[str], value: Dict, headers: Optional[Dict] = None):

"""

3.1.4 数据血缘:自动注入Header

"""

# 注入血缘元数据到Header

kafka_headers = []

if headers:

for k, v in headers.items():

kafka_headers.append((k, str(v).encode('utf-8')))

# 添加系统级血缘Header

kafka_headers.extend([

('source_system', b'webhook_receiver'),

('ingestion_timestamp', str(int(time.time() * 1000)).encode()),

('receiver_version', b'3.1.1'),

('event_type', value.get('event_type', 'unknown').encode())

])

with self.buffer_lock:

self.batch_buffer.append({

'topic': topic,

'key': key,

'value': value,

'headers': kafka_headers,

'added_time': time.time()

})

# 达到批量大小立即Flush

if len(self.batch_buffer) >= WebhookConfig.MAX_BATCH_SIZE:

asyncio.run_coroutine_threadsafe(self._async_flush(), asyncio.get_event_loop())

async def _async_flush(self):

"""异步Flush缓冲区"""

with self.buffer_lock:

batch = list(self.batch_buffer)

self.batch_buffer.clear()

if not batch:

return

start_time = time.time()

success_count = 0

failed_messages = []

for msg in batch:

try:

future = self.producer.send(

msg['topic'],

key=msg['key'],

value=msg['value'],

headers=msg['headers']

)

# 非阻塞检查(实际生产中使用回调)

success_count += 1

except KafkaError as e:

logger.error(f"Kafka发送失败: {e}")

self.metrics['messages_failed'] += 1

failed_messages.append(msg)

# 失败消息进入死信队列(简化版直接重试一次)

for msg in failed_messages:

try:

self.producer.send(

f"{msg['topic']}.dlq", # Dead Letter Queue

key=msg['key'],

value={**msg['value'], '_error': 'failed_after_retries'}

)

except Exception as e:

logger.error(f"DLQ发送也失败: {e}")

latency = (time.time() - start_time) * 1000

self.metrics['latency_ms'].append(latency)

self.metrics['messages_sent'] += success_count

self.metrics['batches_flushed'] += 1

logger.info(f"Flushed {len(batch)} messages, latency: {latency:.2f}ms")

def _scheduled_flush(self):

"""定时Flush线程"""

while self.running:

time.sleep(WebhookConfig.FLUSH_INTERVAL_MS / 1000)

if self.batch_buffer:

# 使用asyncio.run来运行异步flush

try:

loop = asyncio.new_event_loop()

asyncio.set_event_loop(loop)

loop.run_until_complete(self._async_flush())

loop.close()

except Exception as e:

logger.error(f"定时Flush失败: {e}")

def close(self):

"""关闭生产者"""

self.running = False

self.flush_thread.join(timeout=5)

self._async_flush() # 最后Flush

self.producer.flush()

self.producer.close()

def get_metrics(self):

"""获取性能指标"""

return {

**self.metrics,

'buffer_size': len(self.batch_buffer),

'avg_latency_ms': np.mean(self.metrics['latency_ms'][-100:]) if self.metrics['latency_ms'] else 0

}

class WebhookServer:

"""Webhook接收服务器"""

def __init__(self):

self.kafka_producer = None

self.rate_limiter = RateLimiter(WebhookConfig.RATE_LIMIT_RPS, WebhookConfig.RATE_LIMIT_RPS * 2)

self.request_history = deque(maxlen=1000) # 用于可视化

self.security = HTTPBasic()

def verify_signature(self, payload: bytes, signature: str, secret: str) -> bool:

"""

HMAC-SHA256签名验证

"""

expected = hmac.new(

secret.encode(),

payload,

hashlib.sha256

).hexdigest()

return hmac.compare_digest(expected, signature)

def create_app(self) -> FastAPI:

"""创建FastAPI应用"""

@asynccontextmanager

async def lifespan(app: FastAPI):

# 启动时初始化

self.kafka_producer = KafkaBatchProducer(WebhookConfig.KAFKA_BOOTSTRAP)

logger.info("Webhook服务器启动,Kafka生产者已连接")

yield

# 关闭时清理

self.kafka_producer.close()

logger.info("Webhook服务器关闭")

app = FastAPI(

title="Real-time Data Pipeline Webhook",

description="Kafka + Polars 数据管道 Webhook接收端",

version="3.1.1",

lifespan=lifespan

)

@app.get("/health")

async def health_check():

"""健康检查端点"""

return {

"status": "healthy",

"kafka_connected": self.kafka_producer is not None,

"timestamp": datetime.utcnow().isoformat()

}

@app.post("/webhook/{source_name}")

async def receive_webhook(

source_name: str,

request: Request,

background_tasks: BackgroundTasks,

credentials: HTTPBasicCredentials = Depends(self.security)

):

"""

主Webhook接收端点

- 支持动态source路径

- 自动限流

- HMAC验证

- 异步Kafka生产

"""

await self.rate_limiter.acquire()

# 记录请求时间用于监控

request_time = time.time()

# 读取原始Body用于签名验证

body = await request.body()

if len(body) > WebhookConfig.MAX_PAYLOAD_SIZE:

raise HTTPException(status_code=413, detail="Payload too large")

# 解析JSON

try:

data = json.loads(body)

except json.JSONDecodeError:

raise HTTPException(status_code=400, detail="Invalid JSON")

# HMAC验证(如果配置了密钥)

signature = request.headers.get('X-Webhook-Signature', '')

if WebhookConfig.SECRET_KEY and signature:

if not self.verify_signature(body, signature, WebhookConfig.SECRET_KEY):

raise HTTPException(status_code=401, detail="Invalid signature")

# 构造标准消息格式

event_id = data.get('event_id', hashlib.md5(body).hexdigest())

message = {

'event_id': event_id,

'source': source_name,

'event_type': data.get('event_type', 'unknown'),

'timestamp': data.get('timestamp', datetime.utcnow().isoformat()),

'payload': data.get('data', data),

'received_at': datetime.utcnow().isoformat(),

'client_ip': request.client.host

}

# 发送到Kafka(异步)

topic = f"webhook.{source_name}"

self.kafka_producer.send(

topic=topic,

key=event_id,

value=message,

headers={'source': source_name}

)

# 记录指标

latency = (time.time() - request_time) * 1000

self.request_history.append({

'time': datetime.utcnow(),

'latency_ms': latency,

'source': source_name,

'size_bytes': len(body)

})

logger.info(f"Received webhook from {source_name}, event_id: {event_id}, latency: {latency:.2f}ms")

return JSONResponse(

content={

"status": "accepted",

"event_id": event_id,

"queued": True

},

status_code=202

)

@app.get("/metrics/visualization")

async def get_metrics_viz():

"""

3.5.4 监控可视化:实时性能图表

"""

if not self.request_history:

return {"message": "No data available"}

# 生成可视化

fig, axes = plt.subplots(2, 2, figsize=(12, 8))

fig.suptitle('Webhook Receiver Real-time Metrics', fontsize=14, fontweight='bold')

times = [r['time'] for r in self.request_history]

latencies = [r['latency_ms'] for r in self.request_history]

sources = [r['source'] for r in self.request_history]

# 1. 请求延迟分布

axes[0, 0].hist(latencies, bins=20, color='#2E86AB', alpha=0.7, edgecolor='black')

axes[0, 0].axvline(np.mean(latencies), color='red', linestyle='--', label=f'Mean: {np.mean(latencies):.1f}ms')

axes[0, 0].set_title('Latency Distribution')

axes[0, 0].set_xlabel('Latency (ms)')

axes[0, 0].legend()

# 2. 时间序列

axes[0, 1].plot(times, latencies, marker='o', color='#A23B72', linewidth=1)

axes[0, 1].set_title('Latency Trend')

axes[0, 1].set_ylabel('Latency (ms)')

axes[0, 1].tick_params(axis='x', rotation=45)

# 3. 数据源分布

source_counts = {s: sources.count(s) for s in set(sources)}

axes[1, 0].bar(source_counts.keys(), source_counts.values(), color='#F18F01')

axes[1, 0].set_title('Requests by Source')

axes[1, 0].tick_params(axis='x', rotation=45)

# 4. Kafka指标

kafka_metrics = self.kafka_producer.get_metrics() if self.kafka_producer else {}

metric_text = f"""

Messages Sent: {kafka_metrics.get('messages_sent', 0)}

Messages Failed: {kafka_metrics.get('messages_failed', 0)}

Avg Latency: {kafka_metrics.get('avg_latency_ms', 0):.2f}ms

Buffer Size: {kafka_metrics.get('buffer_size', 0)}

Batches Flushed: {kafka_metrics.get('batches_flushed', 0)}

"""

axes[1, 1].text(0.1, 0.5, metric_text, fontsize=10, family='monospace',

verticalalignment='center', bbox=dict(boxstyle='round', facecolor='lightblue'))

axes[1, 1].set_xlim(0, 1)

axes[1, 1].set_ylim(0, 1)

axes[1, 1].axis('off')

axes[1, 1].set_title('Kafka Producer Metrics')

plt.tight_layout()

plt.savefig('webhook_metrics.png', dpi=150, bbox_inches='tight')

plt.close()

return {

"status": "generated",

"image_path": "webhook_metrics.png",

"current_stats": {

"total_requests": len(self.request_history),

"avg_latency_ms": np.mean(latencies) if latencies else 0,

"sources": list(set(sources))

}

}

return app

def main():

import argparse

parser = argparse.ArgumentParser(description='Webhook Receiver Server')

parser.add_argument('--port', type=int, default=8000, help='服务端口')

parser.add_argument('--kafka', default='localhost:9092', help='Kafka地址')

args = parser.parse_args()

WebhookConfig.KAFKA_BOOTSTRAP = args.kafka

server = WebhookServer()

app = server.create_app()

logger.info(f"启动Webhook服务器: http://0.0.0.0:{args.port}")

logger.info(f"健康检查: http://0.0.0.0:{args.port}/health")

logger.info(f"Webhook端点: http://0.0.0.0:{args.port}/webhook/{{source_name}}")

uvicorn.run(app, host="0.0.0.0", port=args.port, workers=1)

if __name__ == "__main__":

main()3.1.2 kafka_producer_optimized.py

脚本功能 :展示Kafka生产者的高级优化配置,包括批量压缩、幂等性、事务支持 使用方式 :python 3.1.2_kafka_producer_optimized.py

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

3.1.2 Kafka生产者优化配置详解

功能:展示生产级Kafka Producer的所有优化参数和最佳实践

使用方式:直接运行查看配置说明和性能测试

"""

import json

import time

import logging

from concurrent.futures import ThreadPoolExecutor, as_completed

from typing import List, Dict, Callable

import threading

import matplotlib.pyplot as plt

import numpy as np

from kafka import KafkaProducer, KafkaConsumer

from kafka.errors import KafkaTimeoutError, NotLeaderForPartitionError

from kafka.partitioner import RoundRobinPartitioner, Murmur2Partitioner

logging.basicConfig(level=logging.INFO)

logger = logging.getLogger(__name__)

class OptimizedKafkaProducer:

"""

企业级Kafka生产者优化实现

关键优化点:

1. 批量发送与压缩

2. 幂等性与事务

3. 分区策略优化

4. 异步回调与背压

"""

def __init__(self, bootstrap_servers: str = "localhost:9092", enable_transaction: bool = False):

self.bootstrap_servers = bootstrap_servers

self.enable_transaction = enable_transaction

self.producer = None

self.metrics = {

'sent': 0,

'success': 0,

'failed': 0,

'latency': [],

'retries': 0,

'compression_ratio': []

}

self._init_producer()

def _init_producer(self):

"""

初始化优化配置的生产者

"""

config = {

# ==================== 3.1.2 批量发送优化 ====================

'bootstrap_servers': self.bootstrap_servers,

'batch_size': 65536, # 64KB批量,减少网络往返

'linger_ms': 100, # 等待100ms聚合消息,提高吞吐量

'buffer_memory': 67108864, # 64MB缓冲区,应对突发流量

# ==================== 3.1.2 压缩算法 ====================

'compression_type': 'lz4', # LZ4压缩:CPU占用低,压缩率高

# 可选:'gzip'(高压缩率), 'snappy'(平衡), 'zstd'(新版推荐)

# ==================== 3.1.2 ACK配置 ====================

'acks': 'all', # 等待ISR所有副本确认(最高可靠性)

# 可选:1(仅leader), 0(不等待,最高吞吐)

'retries': 2147483647, # 无限重试(配合max_in_flight保证顺序)

'max_in_flight_requests_per_connection': 5, # 允许5个未确认请求,提高吞吐

'enable_idempotence': True, # 启用幂等性(exactly-once语义基础)

# ==================== 连接与超时 ====================

'request_timeout_ms': 30000,

'retry_backoff_ms': 1000,

'metadata_max_age_ms': 300000,

'connections_max_idle_ms': 540000,

# 序列化

'key_serializer': lambda k: k.encode('utf-8') if isinstance(k, str) else k,

'value_serializer': lambda v: json.dumps(v).encode('utf-8') if not isinstance(v, bytes) else v,

}

if self.enable_transaction:

config['transactional_id'] = f'prod-{int(time.time()*1000)}-{threading.get_ident()}'

self.producer = KafkaProducer(**config)

if self.enable_transaction:

self.producer.init_transactions()

logger.info("事务生产者初始化完成")

else:

logger.info("标准生产者初始化完成")

def send_with_callback(self, topic: str, key: str, value: Dict, headers: Dict = None):

"""

异步发送带回调,处理delivery报告

"""

start_time = time.time()

# 构造Headers(包含血缘信息)

kafka_headers = [(k, str(v).encode()) for k, v in (headers or {}).items()]

kafka_headers.extend([

('producer_timestamp', str(int(time.time()*1000)).encode()),

('producer_version', b'3.1.2')

])

def on_success(metadata):

latency = (time.time() - start_time) * 1000

self.metrics['success'] += 1

self.metrics['latency'].append(latency)

logger.debug(f"发送成功: partition={metadata.partition}, offset={metadata.offset}, latency={latency:.2f}ms")

def on_error(exception):

self.metrics['failed'] += 1

logger.error(f"发送失败: {exception}")

future = self.producer.send(

topic=topic,

key=key,

value=value,

headers=kafka_headers

)

future.add_callback(on_success)

future.add_errback(on_error)

self.metrics['sent'] += 1

return future

def send_batch_transactional(self, topic: str, messages: List[Dict]):

"""

事务性批量发送(exactly-once语义)

适用于需要严格一致性的金融交易等场景

"""

if not self.enable_transaction:

raise ValueError("未启用事务支持")

try:

self.producer.begin_transaction()

for msg in messages:

self.producer.send(

topic=topic,

key=msg.get('key'),

value=msg.get('value')

)

# 提交消费位移(如果是consume-transform-produce模式)

self.producer.commit_transaction()

self.metrics['success'] += len(messages)

logger.info(f"事务提交成功: {len(messages)}条消息")

except Exception as e:

self.producer.abort_transaction()

self.metrics['failed'] += len(messages)

logger.error(f"事务回滚: {e}")

raise

def benchmark_throughput(self, topic: str, num_messages: int = 10000, message_size: int = 1024):

"""

性能基准测试:对比不同配置下的吞吐量

"""

logger.info(f"开始吞吐量测试: {num_messages}条消息, {message_size}字节/条")

# 生成测试数据

test_data = {

'data': 'x' * message_size,

'timestamp': time.time(),

'seq': 0

}

latencies = []

start_time = time.time()

# 批量异步发送

futures = []

for i in range(num_messages):

test_data['seq'] = i

test_data['timestamp'] = time.time()

future = self.send_with_callback(topic, f"key-{i % 100}", test_data.copy())

futures.append(future)

# 每1000条刷新一次,避免内存溢出

if i % 1000 == 0:

self.producer.flush()

# 等待所有发送完成

self.producer.flush()

total_time = time.time() - start_time

throughput = num_messages / total_time

logger.info(f"测试完成: 总时间={total_time:.2f}s, 吞吐量={throughput:.2f} msg/s")

# 可视化结果

self._visualize_benchmark(throughput, latencies)

return {

'total_time': total_time,

'throughput': throughput,

'avg_latency': np.mean(self.metrics['latency']) if self.metrics['latency'] else 0

}

def _visualize_benchmark(self, throughput: float, latencies: List[float]):

"""生成性能测试可视化报告"""

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

fig.suptitle('Kafka Producer Optimization Benchmark', fontsize=16, fontweight='bold')

# 1. 配置参数展示

config_text = """

Optimization Configuration:

━━━━━━━━━━━━━━━━━━━━━━━━

Batch Size: 64KB (65536 bytes)

Linger Time: 100ms

Compression: LZ4

ACKs: all (ISR confirmed)

Retries: MAX_INT (infinite)

Max In Flight: 5

Idempotence: Enabled

Buffer Memory: 64MB

"""

axes[0, 0].text(0.05, 0.95, config_text, transform=axes[0, 0].transAxes,

fontsize=10, verticalalignment='top', family='monospace',

bbox=dict(boxstyle='round', facecolor='lightblue', alpha=0.8))

axes[0, 0].set_xlim(0, 1)

axes[0, 0].set_ylim(0, 1)

axes[0, 0].axis('off')

axes[0, 0].set_title('Producer Configuration')

# 2. 吞吐量对比(理论vs实测)

scenarios = ['Unoptimized\n(batch=1)', 'Optimized\n(this config)', 'Max Throughput\n(acks=0)']

throughputs = [500, throughput, 50000] # 假设值

colors = ['#F18F01', '#C73E1D', '#2E86AB']

bars = axes[0, 1].bar(scenarios, throughputs, color=colors)

axes[0, 1].set_title('Throughput Comparison (msgs/sec)')

axes[0, 1].set_ylabel('Messages/Second')

for bar, val in zip(bars, throughputs):

axes[0, 1].text(bar.get_x() + bar.get_width()/2, bar.get_height() + 100,

f'{val:.0f}', ha='center', fontweight='bold')

# 3. 延迟分布

if self.metrics['latency']:

axes[1, 0].hist(self.metrics['latency'], bins=50, color='#A23B72', alpha=0.7, edgecolor='black')

axes[1, 0].axvline(np.mean(self.metrics['latency']), color='red', linestyle='--', linewidth=2,

label=f'Mean: {np.mean(self.metrics["latency"]):.2f}ms')

axes[1, 0].set_title('Latency Distribution')

axes[1, 0].set_xlabel('Latency (ms)')

axes[1, 0].legend()

# 4. 成功率与失败率

success = self.metrics['success']

failed = self.metrics['failed']

if success + failed > 0:

sizes = [success, failed]

labels = [f'Success\n{success}', f'Failed\n{failed}']

colors = ['#4CAF50', '#F44336']

axes[1, 1].pie(sizes, labels=labels, colors=colors, autopct='%1.1f%%', startangle=90)

axes[1, 1].set_title('Delivery Success Rate')

plt.tight_layout()

plt.savefig('kafka_producer_benchmark.png', dpi=150, bbox_inches='tight')

logger.info("基准测试可视化已保存: kafka_producer_benchmark.png")

plt.show()

def close(self):

"""优雅关闭"""

self.producer.flush()

self.producer.close()

logger.info("生产者已关闭")

def demonstrate_compression_effect():

"""

展示不同压缩算法的效果对比

"""

import lz4.frame, gzip, snappy, zstandard as zstd

import random

import string

# 生成模拟JSON数据(真实业务数据分布)

def generate_data(size_kb: int) -> bytes:

records = []

for _ in range(size_kb * 10): # 每KB约10条记录

record = {

'user_id': random.randint(10000, 99999),

'event': random.choice(['click', 'view', 'purchase', 'logout']),

'timestamp': time.time(),

'properties': {

'page': ''.join(random.choices(string.ascii_lowercase, k=20)),

'duration': random.randint(1, 300),

'referrer': ''.join(random.choices(string.ascii_letters, k=50))

}

}

records.append(record)

return json.dumps(records).encode()

data = generate_data(100) # 100KB原始数据

original_size = len(data)

results = {

'Original': original_size,

'LZ4': len(lz4.frame.compress(data)),

'Snappy': len(snappy.compress(data)),

'GZIP': len(gzip.compress(data)),

'ZSTD': len(zstd.ZstdCompressor().compress(data))

}

# 可视化

fig, ax = plt.subplots(figsize=(10, 6))

algorithms = list(results.keys())

sizes = list(results.values())

compression_ratios = [original_size/s for s in sizes]

bars = ax.bar(algorithms, sizes, color=['#2E86AB', '#A23B72', '#F18F01', '#C73E1D', '#6A994E'])

ax.set_ylabel('Compressed Size (bytes)')

ax.set_title('Kafka Compression Algorithms Comparison (100KB Original)')

# 添加压缩率标签

for bar, ratio in zip(bars, compression_ratios):

height = bar.get_height()

ax.text(bar.get_x() + bar.get_width()/2., height,

f'{ratio:.1f}x', ha='center', va='bottom', fontweight='bold')

plt.tight_layout()

plt.savefig('compression_comparison.png', dpi=150)

plt.show()

return results

if __name__ == "__main__":

# 1. 展示压缩效果

print("=" * 60)

print("3.1.2 Kafka压缩算法对比")

print("=" * 60)

compression_results = demonstrate_compression_effect()

for algo, size in compression_results.items():

print(f"{algo}: {size} bytes")

# 2. 运行生产者基准测试

print("\n" + "=" * 60)

print("3.1.2 生产者优化基准测试")

print("=" * 60)

producer = OptimizedKafkaProducer(enable_transaction=False)

try:

# 创建测试topic

results = producer.benchmark_throughput(

topic="test.throughput.optimized",

num_messages=5000,

message_size=500

)

print(f"\n测试结果: {results}")

finally:

producer.close()3.1.3 schema_validation.py

脚本功能 :Confluent Schema Registry集成与Pydantic双重验证,支持Avro/JSON Schema/Protobuf 使用方式 :python 3.1.3_schema_validation.py --register-schemas

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

3.1.3 Schema验证层实现

功能:Pydantic模型校验 + Confluent Schema Registry集成

支持Avro/JSON Schema/Protobuf,实现前后兼容检查

使用方式:python 3.1.3_schema_validation.py

"""

import json

import logging

from typing import Dict, Any, Optional, Union, List

from enum import Enum

from datetime import datetime

import requests

import fastavro

import io

from pydantic import BaseModel, Field, validator, root_validator, ValidationError

from kafka import KafkaProducer, KafkaConsumer

from confluent_kafka.schema_registry import SchemaRegistryClient

from confluent_kafka.schema_registry.avro import AvroSerializer, AvroDeserializer

from confluent_kafka.serialization import StringSerializer, SerializationContext, MessageField

logging.basicConfig(level=logging.INFO)

logger = logging.getLogger(__name__)

class EventType(str, Enum):

"""事件类型枚举"""

USER_LOGIN = "user_login"

USER_LOGOUT = "user_logout"

TRANSACTION = "transaction"

SYSTEM_ALERT = "system_alert"

class SensorReading(BaseModel):

"""

Pydantic模型定义(运行时验证)

3.1.3 Schema验证示例:传感器数据

"""

sensor_id: str = Field(..., min_length=5, max_length=50, description="传感器唯一ID")

timestamp: datetime = Field(default_factory=datetime.utcnow)

temperature: float = Field(..., ge=-50.0, le=150.0, description="摄氏温度")

humidity: Optional[float] = Field(None, ge=0.0, le=100.0)

pressure: Optional[float] = Field(None, ge=800.0, le=1200.0)

location: Dict[str, float] = Field(default_factory=dict)

metadata: Dict[str, Any] = Field(default_factory=dict)

@validator('location')

def validate_location(cls, v):

"""自定义验证:确保包含lat和lon"""

if v and ('lat' not in v or 'lon' not in v):

raise ValueError("Location must contain 'lat' and 'lon'")

return v

@validator('temperature')

def validate_temp_range(cls, v, values):

"""业务逻辑验证:根据传感器类型验证温度范围"""

sensor_id = values.get('sensor_id', '')

if 'indoor' in sensor_id and v > 60:

raise ValueError("Indoor sensor temperature cannot exceed 60°C")

return v

@root_validator

def check_at_least_one_metric(cls, values):

"""确保至少有一个有效指标"""

has_temp = values.get('temperature') is not None

has_humidity = values.get('humidity') is not None

has_pressure = values.get('pressure') is not None

if not any([has_temp, has_humidity, has_pressure]):

raise ValueError("At least one metric (temperature, humidity, or pressure) must be provided")

return values

class Config:

schema_extra = {

"example": {

"sensor_id": "indoor_sensor_001",

"temperature": 23.5,

"humidity": 45.0,

"location": {"lat": 39.9, "lon": 116.4}

}

}

class TransactionEvent(BaseModel):

"""

金融交易事件模型

展示复杂验证逻辑

"""

transaction_id: str = Field(..., regex=r'^TXN[0-9]{12}$')

user_id: int = Field(..., gt=0)

amount: float = Field(..., gt=0.0)

currency: str = Field(..., regex='^(USD|EUR|CNY|JPY)$')

timestamp: datetime

merchant_id: Optional[str] = None

risk_score: Optional[float] = Field(None, ge=0.0, le=1.0)

@validator('amount')

def validate_precision(cls, v):

"""验证金额精度(最多2位小数)"""

if round(v, 2) != v:

raise ValueError("Amount cannot have more than 2 decimal places")

return v

@root_validator

def validate_risk_for_large_amount(cls, values):

"""大额交易必须包含风险评分"""

amount = values.get('amount', 0)

risk = values.get('risk_score')

if amount > 10000 and risk is None:

raise ValueError("Transactions over 10000 require risk_score")

return values

class SchemaRegistryManager:

"""

Confluent Schema Registry管理器

功能:

- 注册/获取Schema

- 兼容性检查

- 版本演进管理

"""

def __init__(self, url: str = "http://localhost:8081"):

self.client = SchemaRegistryClient({'url': url})

self._schema_cache = {}

def register_avro_schema(self, subject: str, avro_schema: Union[str, Dict]) -> int:

"""

注册Avro Schema到Registry

"""

from confluent_kafka.schema_registry import Schema

if isinstance(avro_schema, dict):

avro_schema = json.dumps(avro_schema)

schema = Schema(avro_schema, "AVRO")

schema_id = self.client.register_schema(subject, schema)

logger.info(f"Schema注册成功: {subject} -> ID {schema_id}")

return schema_id

def get_serializer(self, subject: str) -> AvroSerializer:

"""

获取Avro序列化器(用于Producer)

"""

from confluent_kafka.schema_registry import Schema

# 获取最新Schema

latest = self.client.get_latest_version(subject)

return AvroSerializer(

schema_registry_client=self.client,

schema_str=latest.schema.schema_str,

to_dict=lambda obj, ctx: obj.dict() if hasattr(obj, 'dict') else obj

)

def get_deserializer(self, subject: str) -> AvroDeserializer:

"""

获取Avro反序列化器(用于Consumer)

"""

from confluent_kafka.schema_registry import Schema

latest = self.client.get_latest_version(subject)

return AvroDeserializer(

schema_registry_client=self.client,

schema_str=latest.schema.schema_str,

from_dict=lambda obj, ctx: obj

)

def check_compatibility(self, subject: str, new_schema: Union[str, Dict]) -> bool:

"""

检查Schema兼容性(前后兼容)

"""

try:

if isinstance(new_schema, dict):

new_schema = json.dumps(new_schema)

compatible = self.client.test_compatibility(subject, new_schema)

if compatible:

logger.info(f"Schema {subject} 兼容性检查通过")

else:

logger.warning(f"Schema {subject} 兼容性检查失败")

return compatible

except Exception as e:

logger.error(f"兼容性检查错误: {e}")

return False

class ValidatingKafkaProducer:

"""

带Schema验证的Kafka生产者

双层验证:

1. Pydantic运行时类型检查

2. Schema Registry格式验证

"""

def __init__(self,

bootstrap_servers: str = "localhost:9092",

schema_registry_url: str = "http://localhost:8081"):

self.bootstrap_servers = bootstrap_servers

self.registry = SchemaRegistryManager(schema_registry_url)

self.producer = None

self.serializers = {} # topic -> serializer缓存

self._init_producer()

def _init_producer(self):

self.producer = KafkaProducer(

bootstrap_servers=self.bootstrap_servers,

key_serializer=StringSerializer('utf_8'),

value_serializer=lambda v: v, # 自定义序列化

acks='all',

retries=5

)

def _get_or_create_serializer(self, topic: str):

"""获取或创建序列化器"""

if topic not in self.serializers:

subject = f"{topic}-value"

self.serializers[topic] = self.registry.get_serializer(subject)

return self.serializers[topic]

def send_validated(self, topic: str, key: str, value: BaseModel,

pydantic_model: type = None) -> Any:

"""

发送带验证的消息

流程:

1. Pydantic验证(类型、约束、业务逻辑)

2. Schema Registry序列化(Avro编码)

3. Kafka生产

"""

# 步骤1: Pydantic验证

if pydantic_model and not isinstance(value, pydantic_model):

try:

if isinstance(value, dict):

value = pydantic_model(**value)

else:

raise ValueError(f"Value must be instance of {pydantic_model}")

except ValidationError as e:

logger.error(f"Pydantic验证失败: {e}")

raise

# 转换为dict

data = value.dict() if hasattr(value, 'dict') else value

# 步骤2: Schema Registry序列化

try:

serializer = self._get_or_create_serializer(topic)

serialized = serializer(data, SerializationContext(topic, MessageField.VALUE))

except Exception as e:

logger.error(f"Avro序列化失败: {e}")

raise

# 步骤3: 发送

future = self.producer.send(

topic=topic,

key=key,

value=serialized,

headers={

'schema_version': b'latest',

'validation': b'pydantic+avro',

'source': b'schema_validation.py'

}

)

return future

def close(self):

self.producer.flush()

self.producer.close()

class ValidatingKafkaConsumer:

"""

带Schema验证的消费者

自动处理Schema演进和向后兼容

"""

def __init__(self,

topic: str,

group_id: str,

bootstrap_servers: str = "localhost:9092",

schema_registry_url: str = "http://localhost:8081"):

self.topic = topic

self.registry = SchemaRegistryManager(schema_registry_url)

self.deserializer = self.registry.get_deserializer(f"{topic}-value")

self.consumer = KafkaConsumer(

topic,

group_id=group_id,

bootstrap_servers=bootstrap_servers,

auto_offset_reset='earliest',

key_deserializer=lambda k: k.decode('utf-8') if k else None,

value_deserializer=lambda v: self._deserialize(v)

)

def _deserialize(self, data: bytes) -> Any:

"""反序列化并验证"""

if data is None:

return None

try:

return self.deserializer(data, SerializationContext(self.topic, MessageField.VALUE))

except Exception as e:

logger.error(f"反序列化失败: {e}")

# 返回原始数据以便错误处理

return {'_error': str(e), '_raw': data.hex()}

def consume_validated(self, timeout_ms: int = 1000):

"""消费验证后的消息"""

messages = self.consumer.poll(timeout_ms=timeout_ms)

validated_records = []

for tp, records in messages.items():

for record in records:

if '_error' not in record.value:

validated_records.append({

'key': record.key,

'value': record.value,

'partition': record.partition,

'offset': record.offset,

'timestamp': record.timestamp

})

else:

logger.warning(f"跳过无效消息: offset={record.offset}")

return validated_records

def close(self):

self.consumer.close()

def create_sample_schemas():

"""

创建示例Avro Schema

"""

sensor_schema = {

"type": "record",

"name": "SensorReading",

"namespace": "com.datapipeline.sensor",

"fields": [

{"name": "sensor_id", "type": "string"},

{"name": "timestamp", "type": "string"},

{"name": "temperature", "type": "double"},

{"name": "humidity", "type": ["null", "double"], "default": None},

{"name": "pressure", "type": ["null", "double"], "default": None},

{"name": "location", "type": ["null", {

"type": "map",

"values": "double"

}], "default": None},

{"name": "metadata", "type": ["null", {

"type": "map",

"values": "string"

}], "default": None}

]

}

transaction_schema = {

"type": "record",

"name": "Transaction",

"namespace": "com.datapipeline.payment",

"fields": [

{"name": "transaction_id", "type": "string"},

{"name": "user_id", "type": "long"},

{"name": "amount", "type": "double"},

{"name": "currency", "type": "string"},

{"name": "timestamp", "type": "string"},

{"name": "merchant_id", "type": ["null", "string"], "default": None},

{"name": "risk_score", "type": ["null", "double"], "default": None}

]

}

return {

'sensor-value': sensor_schema,

'transaction-value': transaction_schema

}

def run_schema_workflow():

"""

完整的Schema验证工作流演示

"""

import matplotlib.pyplot as plt

import time

# 1. 初始化Schema Registry

registry = SchemaRegistryManager()

schemas = create_sample_schemas()

# 注册Schema

print("=" * 60)

print("3.1.3 Schema Registry注册")

print("=" * 60)

for subject, schema in schemas.items():

try:

schema_id = registry.register_avro_schema(subject, schema)

print(f"✓ {subject}: ID={schema_id}")

except Exception as e:

print(f"✗ {subject}: {e}")

# 2. 创建验证生产者

print("\n" + "=" * 60)

print("3.1.3 双层验证消息生产")

print("=" * 60)

producer = ValidatingKafkaProducer()

# 生成有效和无效数据

test_cases = [

# 有效数据

{

'sensor_id': 'indoor_sensor_001',

'temperature': 23.5,

'humidity': 45.0,

'location': {'lat': 39.9, 'lon': 116.4}

},

# 无效:温度过高

{

'sensor_id': 'indoor_sensor_002',

'temperature': 85.0, # 超过室内传感器限制

'humidity': 30.0

},

# 无效:缺少指标

{

'sensor_id': 'outdoor_sensor_003',

'location': {'lat': 40.0, 'lon': 117.0}

}

]

results = {'success': 0, 'failed': 0, 'errors': []}

for i, data in enumerate(test_cases):

try:

# Pydantic验证

validated = SensorReading(**data)

# 发送到Kafka

future = producer.send_validated(

topic='sensor',

key=f'sensor-{i}',

value=validated,

pydantic_model=SensorReading

)

record_metadata = future.get(timeout=10)

print(f"✓ 消息 {i}: partition={record_metadata.partition}, offset={record_metadata.offset}")

results['success'] += 1

except ValidationError as e:

print(f"✗ 消息 {i} Pydantic验证失败: {e.errors()}")

results['failed'] += 1

results['errors'].append(('pydantic', str(e)))

except Exception as e:

print(f"✗ 消息 {i} 发送失败: {e}")

results['failed'] += 1

results['errors'].append(('kafka', str(e)))

producer.close()

# 3. 可视化验证结果

print("\n" + "=" * 60)

print("3.1.3 验证结果可视化")

print("=" * 60)

fig, axes = plt.subplots(1, 2, figsize=(12, 5))

# 成功率饼图

sizes = [results['success'], results['failed']]

colors = ['#4CAF50', '#F44336']

axes[0].pie(sizes, labels=['Success', 'Failed'], colors=colors, autopct='%1.1f%%',

startangle=90, explode=(0.05, 0))

axes[0].set_title('Schema Validation Results')

# 错误类型分布

if results['errors']:

error_types = [e[0] for e in results['errors']]

types = list(set(error_types))

counts = [error_types.count(t) for t in types]

axes[1].bar(types, counts, color=['#FF9800', '#2196F3'][:len(types)])

axes[1].set_title('Error Types Distribution')

axes[1].set_ylabel('Count')

plt.tight_layout()

plt.savefig('schema_validation_results.png', dpi=150, bbox_inches='tight')

plt.show()

print(f"\n结果统计: 成功={results['success']}, 失败={results['failed']}")

print("可视化已保存: schema_validation_results.png")

if __name__ == "__main__":

run_schema_workflow()3.1.4 lineage_tracking.py

脚本功能 :数据血缘追踪系统,注入Header元数据,支持端到端链路追踪 使用方式 :python 3.1.4_lineage_tracking.py

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

3.1.4 数据血缘追踪实现

功能:端到端数据血缘追踪,注入来源系统、处理时间戳、链路ID

支持OpenLineage标准格式输出

使用方式:python 3.1.4_lineage_tracking.py

"""

import json

import uuid

import hashlib

import time

import logging

from datetime import datetime

from typing import Dict, List, Optional, Any

from dataclasses import dataclass, asdict

from enum import Enum

import threading

from kafka import KafkaProducer, KafkaConsumer, TopicPartition

import matplotlib.pyplot as plt

import networkx as nx

logging.basicConfig(level=logging.INFO)

logger = logging.getLogger(__name__)

class ProcessingStage(str, Enum):

"""数据处理阶段枚举"""

INGESTION = "ingestion"

VALIDATION = "validation"

TRANSFORMATION = "transformation"

ENRICHMENT = "enrichment"

AGGREGATION = "aggregation"

STORAGE = "storage"

EXPORT = "export"

@dataclass

class LineageEvent:

"""

血缘事件模型(OpenLineage兼容)

"""

event_id: str

run_id: str

timestamp: str

event_type: str # START, COMPLETE, FAIL

job_name: str

job_namespace: str = "realtime_pipeline"

inputs: List[Dict] = None

outputs: List[Dict] = None

producer: str = "lineage_tracker"

schema_version: str = "1.0.0"

def to_openlineage(self) -> Dict:

"""转换为OpenLineage标准格式"""

return {

"eventTime": self.timestamp,

"eventType": self.event_type,

"run": {

"runId": self.run_id

},

"job": {

"namespace": self.job_namespace,

"name": self.job_name

},

"inputs": self.inputs or [],

"outputs": self.outputs or [],

"producer": self.producer

}

class LineageTracker:

"""

数据血缘追踪器

功能:

- 生成追踪ID

- 记录处理链路

- 注入Kafka Headers

- 血缘图谱构建

"""

def __init__(self,

kafka_bootstrap: str = "localhost:9092",

lineage_topic: str = "lineage.events"):

self.kafka_bootstrap = kafka_bootstrap

self.lineage_topic = lineage_topic

self.producer = None

self.local_graph = nx.DiGraph() # 本地血缘图

self.run_counter = 0

self._lock = threading.Lock()

self._init_kafka()

def _init_kafka(self):

"""初始化血缘事件生产者"""

self.producer = KafkaProducer(

bootstrap_servers=self.kafka_bootstrap,

value_serializer=lambda v: json.dumps(v, default=str).encode('utf-8'),

acks=1,

retries=3

)

def generate_run_id(self) -> str:

"""生成唯一运行ID"""

return str(uuid.uuid4())

def create_lineage_headers(self,

source_system: str,

run_id: Optional[str] = None,

stage: ProcessingStage = ProcessingStage.INGESTION,

parent_run_id: Optional[str] = None) -> Dict[str, bytes]:

"""

创建标准血缘Headers

Returns:

Dict[str, bytes]: Kafka消息Headers

"""

if not run_id:

run_id = self.generate_run_id()

timestamp = int(time.time() * 1000)

headers = {

# 核心追踪字段

'x-lineage-run-id': run_id.encode(),

'x-lineage-stage': stage.value.encode(),

'x-lineage-source-system': source_system.encode(),

'x-lineage-timestamp-ms': str(timestamp).encode(),

'x-lineage-producer': b'lineage_tracker_v3.1.4',

# 数据指纹(用于去重和一致性检查)

'x-content-hash': b'', # 稍后填充

# 可选的父追踪ID(支持子任务)

'x-parent-run-id': (parent_run_id or '').encode(),

# 技术元数据

'x-thread-id': str(threading.get_ident()).encode(),

'x-hostname': b'localhost', # 实际应从环境获取

}

return headers

def record_event(self,

event_type: str,

job_name: str,

inputs: List[Dict],

outputs: List[Dict],

run_id: Optional[str] = None):

"""

记录血缘事件到OpenLineage后端

"""

if not run_id:

run_id = self.generate_run_id()

event = LineageEvent(

event_id=str(uuid.uuid4()),

run_id=run_id,

timestamp=datetime.utcnow().isoformat(),

event_type=event_type,

job_name=job_name,

inputs=inputs,

outputs=outputs

)

lineage_data = event.to_openlineage()

try:

self.producer.send(

topic=self.lineage_topic,

key=run_id,

value=lineage_data

)

logger.debug(f"血缘事件已记录: {job_name} [{event_type}]")

# 更新本地图

with self._lock:

for inp in inputs:

for out in outputs:

self.local_graph.add_edge(

inp.get('name', 'unknown'),

out.get('name', 'unknown'),

job=job_name,

timestamp=event.timestamp

)

except Exception as e:

logger.error(f"血缘事件记录失败: {e}")

def compute_content_hash(self, data: Dict) -> str:

"""

计算数据内容哈希(用于追踪数据变更)

"""

# 排序并序列化,确保一致性

canonical = json.dumps(data, sort_keys=True, separators=(',', ':'), default=str)

return hashlib.sha256(canonical.encode()).hexdigest()[:16] # 取前16位节省空间

def trace_message_lineage(self,

topic: str,

partition: int,

offset: int,

timeout_ms: int = 5000) -> Optional[Dict]:

"""

追踪单条消息的完整血缘链路

"""

consumer = KafkaConsumer(

bootstrap_servers=self.kafka_bootstrap,

auto_offset_reset='earliest',

consumer_timeout_ms=timeout_ms

)

tp = TopicPartition(topic, partition)

consumer.assign([tp])

consumer.seek(tp, offset)

try:

msg = next(consumer)

headers = {k: v.decode() if v else None for k, v in msg.headers or []}

lineage_info = {

'run_id': headers.get('x-lineage-run-id'),

'stage': headers.get('x-lineage-stage'),

'source_system': headers.get('x-lineage-source-system'),

'timestamp': headers.get('x-lineage-timestamp-ms'),

'content_hash': headers.get('x-content-hash'),

'producer': headers.get('x-lineage-producer'),

'kafka_metadata': {

'topic': topic,

'partition': partition,

'offset': offset,

'timestamp': msg.timestamp

}

}

return lineage_info

except StopIteration:

logger.warning(f"未找到消息: {topic}-{partition}:{offset}")

return None

finally:

consumer.close()

def visualize_lineage_graph(self, output_file: str = "data_lineage_graph.png"):

"""

可视化数据血缘图谱

"""

if not self.local_graph.nodes():

logger.warning("血缘图为空,无法可视化")

return

plt.figure(figsize=(14, 10))

pos = nx.spring_layout(self.local_graph, k=2, iterations=50)

# 绘制节点

node_colors = []

for node in self.local_graph.nodes():

# 根据出度/入度着色

in_degree = self.local_graph.in_degree(node)

out_degree = self.local_graph.out_degree(node)

if in_degree == 0:

node_colors.append('#4CAF50') # 源系统 - 绿色

elif out_degree == 0:

node_colors.append('#F44336') # 终端 - 红色

else:

node_colors.append('#2196F3') # 中间处理 - 蓝色

nx.draw_networkx_nodes(self.local_graph, pos,