API setup

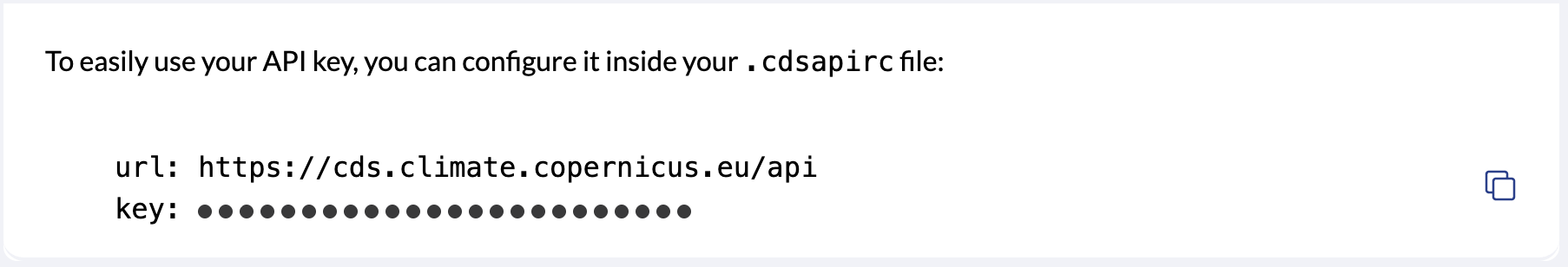

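Register and log in to the Climate Data Store, then copy the API credentials shown on your profile page (see the screenshot above).

Paste what you just copied into the file /Users/nizhuzhu/.cdsapirc (replace the path with `.cdsapirc` in your own home directory). I find vim the most convenient for this.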

```bash
vim /Users/nizhuzhu/.cdsapirc
```

Parallel downloads with python-cdsapi
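Before running the downloader, it can help to confirm that the credentials file from the previous step is readable. The sketch below assumes the file holds plain `url: ...` and `key: ...` lines, as shown on the CDS profile page. The helper names `parse_cdsapirc` and `missing_fields` are made up for illustration and are not part of cdsapi.

```python
from pathlib import Path


def parse_cdsapirc(text):
    """Parse 'name: value' lines into a dict, skipping blank or malformed lines."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or ":" not in line:
            continue
        # Split on the first colon only, because the URL itself contains colons
        name, value = line.split(":", 1)
        config[name.strip()] = value.strip()
    return config


def missing_fields(config, required=("url", "key")):
    """Return the required field names that are absent or empty."""
    return [name for name in required if not config.get(name)]


if __name__ == "__main__":
    rc_path = Path.home() / ".cdsapirc"
    if not rc_path.exists():
        print(f"{rc_path} not found")
    else:
        missing = missing_fields(parse_cdsapirc(rc_path.read_text()))
        print("OK" if not missing else f"Missing fields: {missing}")
```

This only checks that both fields are present. Whether the key is actually valid is something only a real request to the CDS can tell.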

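```bash
pip install cdsapi  # I installed it in my py38 environment
```

Adjust the parameters in the general configuration section of the script below, then just run it.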

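```python
# -*- coding: utf-8 -*-
"""
General-purpose CDS data downloader.

Works with any dataset, splits the download along the request parameters
you choose (e.g. year / month / lead-time month), and downloads in parallel.
"""

# Prerequisites:
#   vim /Users/nizhuzhu/.cdsapirc
#   Paste your API credentials into that file.

import cdsapi
import os
from concurrent.futures import ThreadPoolExecutor, as_completed
from threading import Lock
import time
import random
from itertools import product
```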

```python
# ===================== General configuration =====================
# Select your data on the CDS website, then paste the generated request here.

# Output directory
OUTPUT_DIR = "/Volumes/Elements SE/DOST_MOST/8_predict/0_raw/Meteo"
os.makedirs(OUTPUT_DIR, exist_ok=True)

# CDS dataset name
DATASET = "seasonal-monthly-single-levels"

# Base request parameters (the fixed part, shared by every request)
BASE_REQUEST = {
    "originating_centre": "meteo_france",
    "system": "6",
    "variable": [
        "2m_temperature",
        "total_precipitation"
    ],
    "product_type": [
        "hindcast_climate_mean",
        "monthly_mean"
    ],
    "year": [
        "1993", "1994", "1995",
        "1996", "1997", "1998",
        "1999", "2000", "2001",
        "2002", "2003", "2004",
        "2005", "2006", "2007",
        "2008", "2009", "2010",
        "2011", "2012", "2013",
        "2014", "2015", "2016"
    ],
    "leadtime_month": ["1", "2", "3", "4", "5", "6"],
    "data_format": "netcdf"
}

# Parameters to split on (each combination produces one file).
# With the setting below, you get one file per month, each covering all years.
# Key: parameter name; value: the list of values to iterate over.
ITER_PARAMS = {
    # "year": [str(y) for y in range(1993, 2017)],  # 1993-2016
    "month": ["01", "02", "03", "04", "05", "06",
              "07", "08", "09", "10", "11", "12"]
}

# Filename template (may use the split parameter names, e.g. {year}_{month}).
# Include every split parameter so that each combination gets its own file.
FILENAME_TEMPLATE = "{month}_hindcasts24.nc"

# Number of concurrent downloads (2-3 recommended, to stay within server limits)
MAX_WORKERS = 3

# Retry settings
MAX_RETRIES = 3   # attempts per file
RETRY_DELAY = 5   # base delay between attempts, in seconds
```
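To see how the split parameters turn into files, here is a small standalone sketch of the same `itertools.product` expansion, using two split dimensions instead of one. The values and the names `expand` and `has_unique_filenames` are illustrative only. It also shows why the filename template should mention every split parameter: if it does not, several combinations map to the same filename, and a downloader that skips existing files would silently drop the later ones.

```python
from itertools import product

# Illustrative values only: two years times three months gives six files
iter_params = {"year": ["1993", "1994"], "month": ["01", "02", "03"]}
filename_template = "{year}_{month}_hindcast.nc"


def expand(iter_params, template):
    """Return (values, filename) for every combination of the split parameters."""
    names = list(iter_params)
    planned = []
    for combo in product(*(iter_params[name] for name in names)):
        values = dict(zip(names, combo))
        planned.append((values, template.format(**values)))
    return planned


def has_unique_filenames(planned):
    """True if no two combinations would be written to the same file."""
    filenames = [filename for _, filename in planned]
    return len(filenames) == len(set(filenames))


planned = expand(iter_params, filename_template)
for values, filename in planned:
    print(values, "->", filename)

# A template that leaves out {year} makes different years collide
print(has_unique_filenames(planned))
print(has_unique_filenames(expand(iter_params, "{month}_hindcast.nc")))
```

Running a check like this before starting a long download is cheap, and it catches a template that no longer matches `ITER_PARAMS` after you add a dimension.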

```python
# ===================== Parallel download code =====================

print_lock = Lock()
completed_count = 0
total_count = 0


def log_print(message):
    """Print under a lock so output from different threads does not interleave."""
    with print_lock:
        print(message)


def generate_requests():
    """
    Build every request combination from ITER_PARAMS, with its output path.

    Returns a list of (request_dict, filepath) tuples.
    """
    # All combinations of the split parameters
    param_names = list(ITER_PARAMS.keys())
    param_values_list = [ITER_PARAMS[name] for name in param_names]
    all_combinations = list(product(*param_values_list))

    requests = []
    for combo in all_combinations:
        # Copy the base request, then add the parameters for this iteration
        req = BASE_REQUEST.copy()
        for name, value in zip(param_names, combo):
            # Pass the value as a list, even when there is only one
            req[name] = [value]

        # Build the filename
        format_dict = {name: value for name, value in zip(param_names, combo)}
        filename = FILENAME_TEMPLATE.format(**format_dict)
        filepath = os.path.join(OUTPUT_DIR, filename)
        requests.append((req, filepath))
    return requests


def download_single_file(request, filepath, client=None):
    """Download a single file, retrying on failure."""
    global completed_count
    filename = os.path.basename(filepath)

    # Skip files that already exist
    if os.path.exists(filepath):
        with print_lock:
            completed_count += 1
        return (True, filename, "Already exists")

    if client is None:
        client = cdsapi.Client()

    for attempt in range(MAX_RETRIES):
        try:
            log_print(f"[{completed_count + 1}/{total_count}] Downloading {filename} "
                      f"(attempt {attempt + 1}/{MAX_RETRIES})...")
            client.retrieve(DATASET, request).download(filepath)
            with print_lock:
                completed_count += 1
            log_print(f" ✓ Saved {filename}")
            return (True, filename, None)
        except Exception as e:
            error_msg = str(e)
            log_print(f" ✗ Error downloading {filename}: {error_msg}")
            if attempt < MAX_RETRIES - 1:
                # Fixed delay plus a little jitter so retries do not line up
                sleep_time = RETRY_DELAY + random.uniform(0, 2)
                log_print(f" → Retrying in {sleep_time:.1f}s...")
                time.sleep(sleep_time)
            else:
                # Last attempt failed: record it and give up on this file
                with open("download_errors.log", "a") as f:
                    f.write(f"{time.ctime()}: {filename} - {error_msg}\n")
                with print_lock:
                    completed_count += 1
                return (False, filename, error_msg)

    return (False, filename, "Max retries exceeded")
```
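One thing to watch with the "skip if the file exists" check: if a download is interrupted partway, a truncated file can be left at the final path and will be skipped on the next run. A possible safeguard, not part of the script above, is to download to a temporary name and rename only on success. The sketch below uses a hypothetical helper `download_atomically` and fake fetch functions so that it runs without network access.

```python
import os
import tempfile


def download_atomically(fetch, filepath):
    """Call fetch(tmp_path); move the result to filepath only if fetch succeeds."""
    tmp_path = filepath + ".part"
    try:
        fetch(tmp_path)
        os.replace(tmp_path, filepath)  # rename in one step on the same filesystem
    except BaseException:
        # Clean up the partial file, then let the caller's retry logic see the error
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def fake_fetch(path):
    """Stand-in for a successful download."""
    with open(path, "wb") as f:
        f.write(b"complete payload")


def interrupted_fetch(path):
    """Stand-in for a download that dies after writing part of the file."""
    with open(path, "wb") as f:
        f.write(b"partial")
    raise ConnectionError("simulated network drop")


with tempfile.TemporaryDirectory() as workdir:
    good = os.path.join(workdir, "01_hindcasts24.nc")
    download_atomically(fake_fetch, good)
    print("good file exists:", os.path.exists(good))

    bad = os.path.join(workdir, "02_hindcasts24.nc")
    try:
        download_atomically(interrupted_fetch, bad)
    except ConnectionError as exc:
        print("download failed:", exc)
    print("bad file exists:", os.path.exists(bad))
    print("leftover .part exists:", os.path.exists(bad + ".part"))
```

In the downloader, `fetch` could wrap the existing call, for example `lambda tmp: client.retrieve(DATASET, request).download(tmp)`, so that the existence check only ever sees complete files.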

```python
def parallel_download(requests_list):
    """Download all requests in parallel."""
    global completed_count, total_count
    completed_count = 0
    total_count = len(requests_list)
    results = []

    with ThreadPoolExecutor(max_workers=MAX_WORKERS) as executor:
        # Map each future back to its request so errors can be attributed
        future_to_req = {
            executor.submit(download_single_file, req, fpath): (req, fpath)
            for req, fpath in requests_list
        }
        for future in as_completed(future_to_req):
            try:
                success, filename, error = future.result()
                results.append((filename, success, error))
            except Exception as e:
                req, fpath = future_to_req[future]
                log_print(f" ✗ Unexpected error for {os.path.basename(fpath)}: {str(e)}")
                results.append((os.path.basename(fpath), False, str(e)))
    return results


if __name__ == "__main__":
    # Build all requests
    requests_list = generate_requests()

    print(f"Total files to download: {len(requests_list)}")
    print(f"Output directory: {OUTPUT_DIR}")
    print(f"Parallel workers: {MAX_WORKERS}")
    print(f"Max retries per file: {MAX_RETRIES}")
    print("-" * 50)

    start_time = time.time()
    results = parallel_download(requests_list)

    # Summary statistics
    successful = sum(1 for _, success, err in results if success and err != "Already exists")
    skipped = sum(1 for _, _, err in results if err == "Already exists")
    failed = sum(1 for _, success, _ in results if not success)
    elapsed = time.time() - start_time

    print("\n" + "=" * 50)
    print("Download Summary:")
    print(f" Total: {len(results)}")
    print(f" Newly downloaded: {successful}")
    print(f" Skipped (exists): {skipped}")
    print(f" Failed: {failed}")
    print(f" Time elapsed: {elapsed / 60:.1f} minutes")
    print("=" * 50)

    if failed > 0:
        print("Failed downloads recorded in: download_errors.log")
```
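If you want to try the thread-pool orchestration before sending real requests to the CDS, a dry run with simulated downloads is one option. The sketch below is a simplified stand-in, not the script itself: `fake_download`, `Progress`, and `run_all` are hypothetical names, and names starting with `bad` are made to fail so that the error path is exercised. The counter is incremented and read inside a single locked method, so each progress number is handed out exactly once.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed
from threading import Lock


class Progress:
    """Thread-safe counter: step() increments and returns the new value."""

    def __init__(self, total):
        self.total = total
        self.done = 0
        self._lock = Lock()

    def step(self):
        with self._lock:
            self.done += 1
            return self.done


def fake_download(name, progress):
    """Pretend to download; names starting with 'bad' fail."""
    time.sleep(0.01)
    if name.startswith("bad"):
        raise RuntimeError(f"simulated failure for {name}")
    return name, progress.step()


def run_all(names, max_workers=3):
    """Run the fake downloads in a thread pool and split them into ok / failed."""
    progress = Progress(len(names))
    ok, failed = [], []
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        futures = {executor.submit(fake_download, n, progress): n for n in names}
        for future in as_completed(futures):
            name = futures[future]
            try:
                future.result()
                ok.append(name)
            except RuntimeError as exc:
                failed.append((name, str(exc)))
    # Completion order varies between runs, so sort for a stable report
    return sorted(ok), sorted(failed)


ok, failed = run_all(["01.nc", "02.nc", "bad_03.nc", "04.nc"])
print("ok:", ok)
print("failed:", failed)
```

Swapping `fake_download` for the real download function keeps the same structure: one exception in a worker does not stop the others, and the failure is collected once the future completes.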