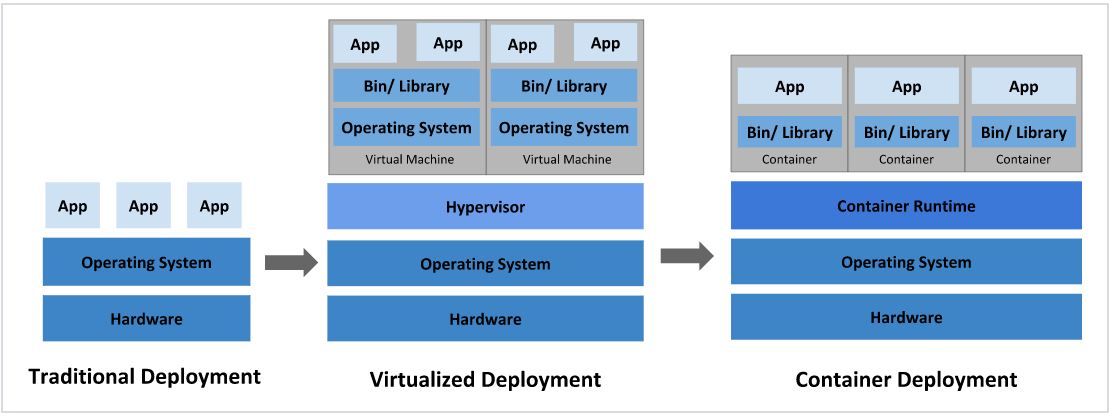

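1 Evolution of Application Deployment

Application deployment has gone through three main stages:

**Traditional deployment:** in the early days of the Internet, applications were deployed directly on physical machines

- Pros: simple; no other technology is required

- Cons: resource boundaries cannot be defined for an application, computing resources are hard to allocate sensibly, and programs easily interfere with one another

**Virtualized deployment:** multiple virtual machines can run on one physical machine, and each virtual machine is an isolated environment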

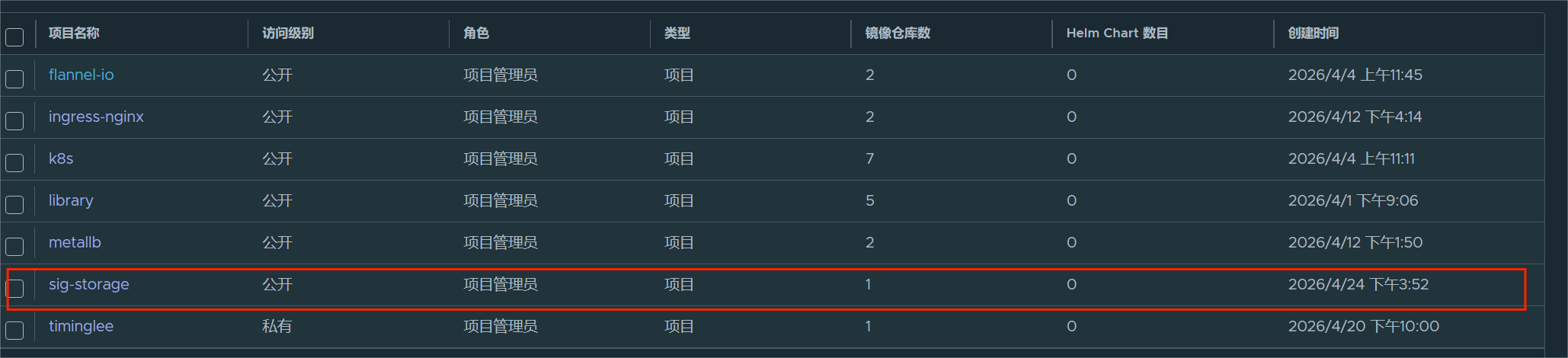

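- Pros: program environments do not affect one another, which provides a degree of security

- Cons: every virtual machine adds its own operating system, which wastes some resources

**Containerized deployment:** similar to virtualization, but the containers share the host operating system

!NOTE

Containerized deployment brings many conveniences, but it also raises some problems, for example:

If a container fails and stops, how can another container be started immediately to replace it?

When concurrent traffic grows, how can the number of containers be scaled out horizontally?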

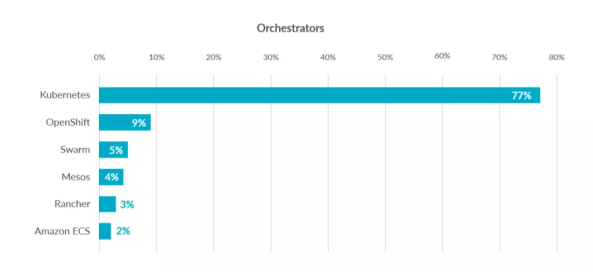

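2 Container Orchestration Tools

To solve these container orchestration problems, several orchestration tools were created:

- Swarm: Docker's own container orchestration tool

- Mesos: an Apache tool for unified resource management; it must be used together with Marathon

- Kubernetes: Google's open-source container orchestration tool

3 Introduction to Kubernetes

- While Docker was growing rapidly as a high-level container engine, container technology had already been in use inside Google for many years

- The Borg system runs and manages thousands upon thousands of containerized applications.

- The Kubernetes project originates from Borg; it distills the essence of Borg's design ideas and absorbs the experience and lessons learned from the Borg system.

- Kubernetes provides a higher-level abstraction over computing resources and, by composing containers in a fine-grained way, delivers the final application service to users.

At its core, Kubernetes is a cluster of servers. It runs specific programs on every node of the cluster to manage the containers on that node. Its goal is to automate resource management, and it provides the following main features:

- Self-healing: once a container crashes, a new container can be started quickly, in about 1 second when the image is already present on the node

- Elastic scaling: the number of running containers in the cluster can be adjusted automatically as needed

- Service discovery: a service can find the services it depends on through automatic discovery

- Load balancing: if a service starts multiple containers, requests are load-balanced across them automatically

- Rollback: if a newly released version has problems, it can be rolled back to the previous version immediately

- Storage orchestration: storage volumes can be created automatically according to the container's own needs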

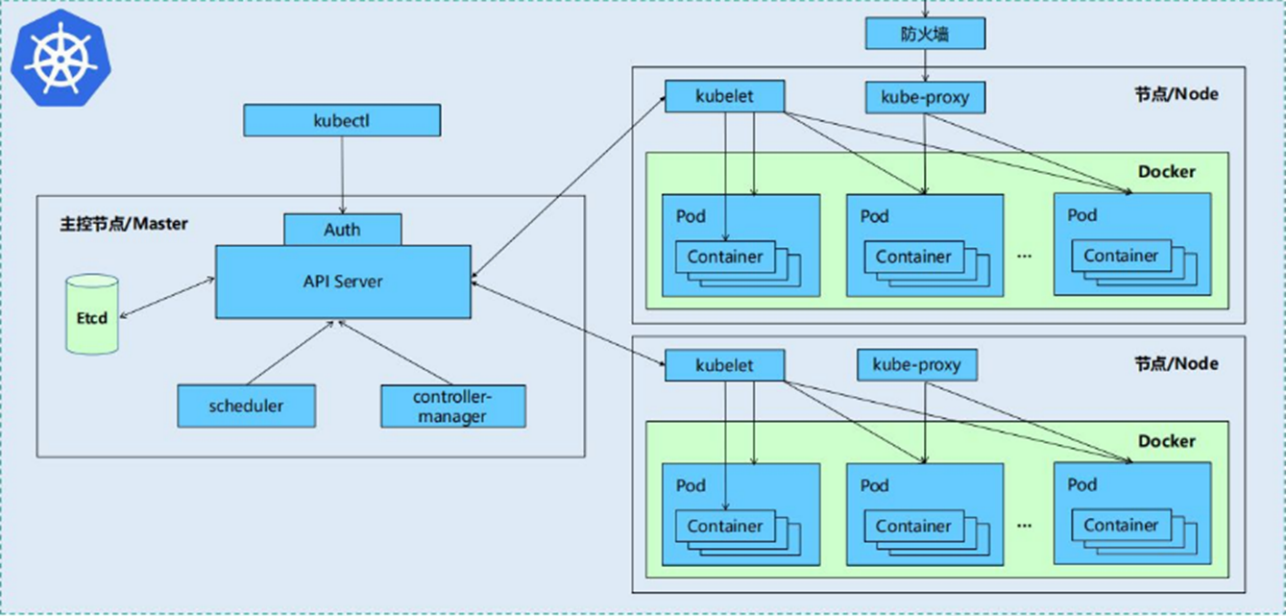

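4 K8S Architecture

1.4.1 Purpose of Each K8S Component

A Kubernetes cluster consists mainly of control nodes (master) and worker nodes (node), and different components are installed on each kind of node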

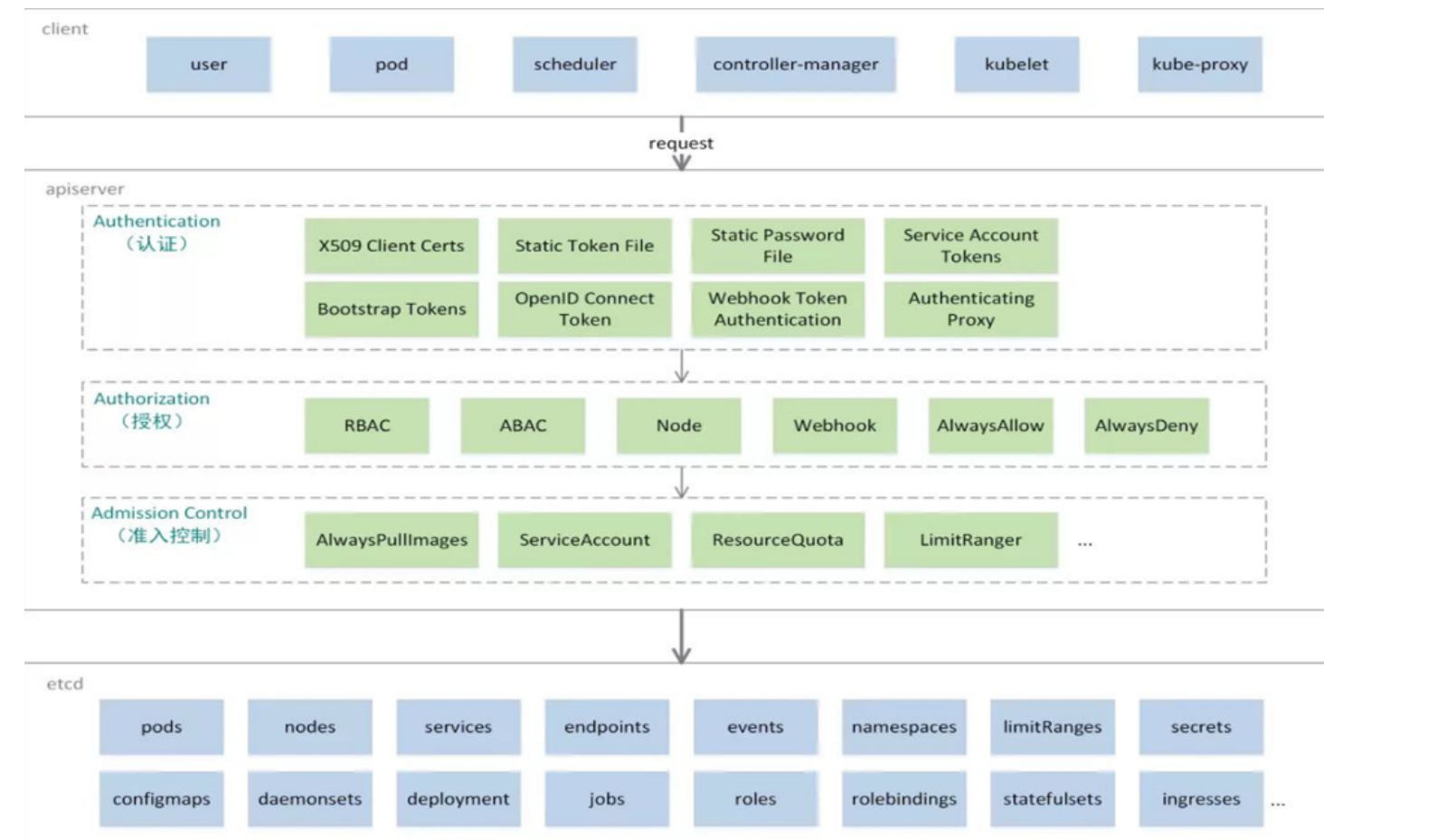

1 master: the control plane of the cluster, responsible for cluster-wide decisions

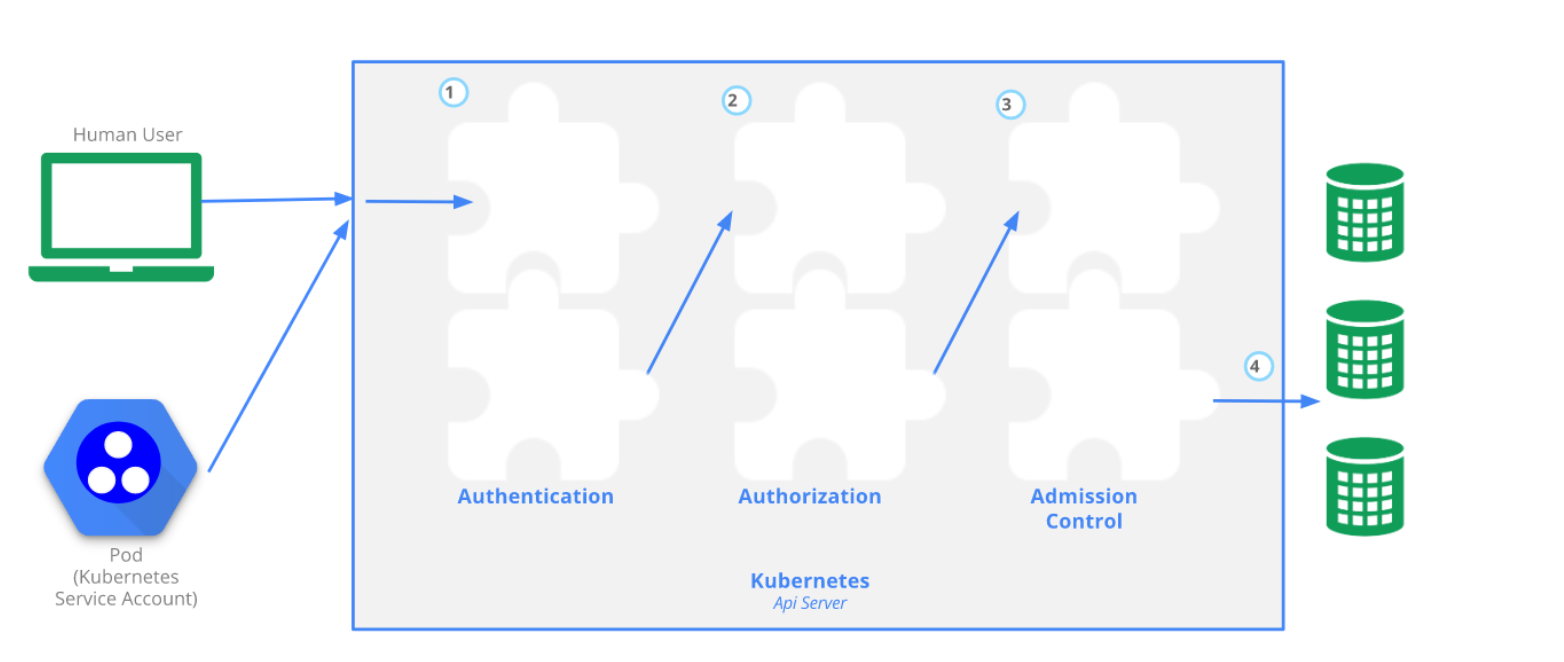

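- ApiServer: the single entry point for resource operations; it receives user commands and provides authentication, authorization, API registration, and discovery

- Scheduler: responsible for cluster resource scheduling; it assigns Pods to the appropriate node according to the configured scheduling policy

- ControllerManager: responsible for maintaining cluster state, such as deployment arrangement, failure detection, automatic scaling, and rolling updates

- Etcd: stores information about the resource objects in the cluster

2 node: the data plane of the cluster, responsible for providing the runtime environment for containers

- kubelet: maintains the container lifecycle and also manages volumes (CSI) and networking (CNI)

- Container runtime: responsible for image management and for actually running Pods and containers (CRI)

- kube-proxy: provides in-cluster service discovery and load balancing for Services

1.4.2 How the K8S Components Call One Another

When we want to run a web service:

- After the Kubernetes environment starts, both master and node store their own information in the etcd database

- The request to install the web service is first sent to the apiServer component on the master node

- The apiServer component calls the scheduler component to decide which node the service should be installed on

- At this point, the scheduler reads the information of each node from etcd, selects one according to its algorithm, and reports the result to the apiServer

- The apiServer calls the controller-manager, which dispatches the chosen node to install the web service

- After receiving the instruction, kubelet notifies docker, and docker then starts a Pod for the web service

- To access the web service, kube-proxy is needed to proxy access to the Pod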

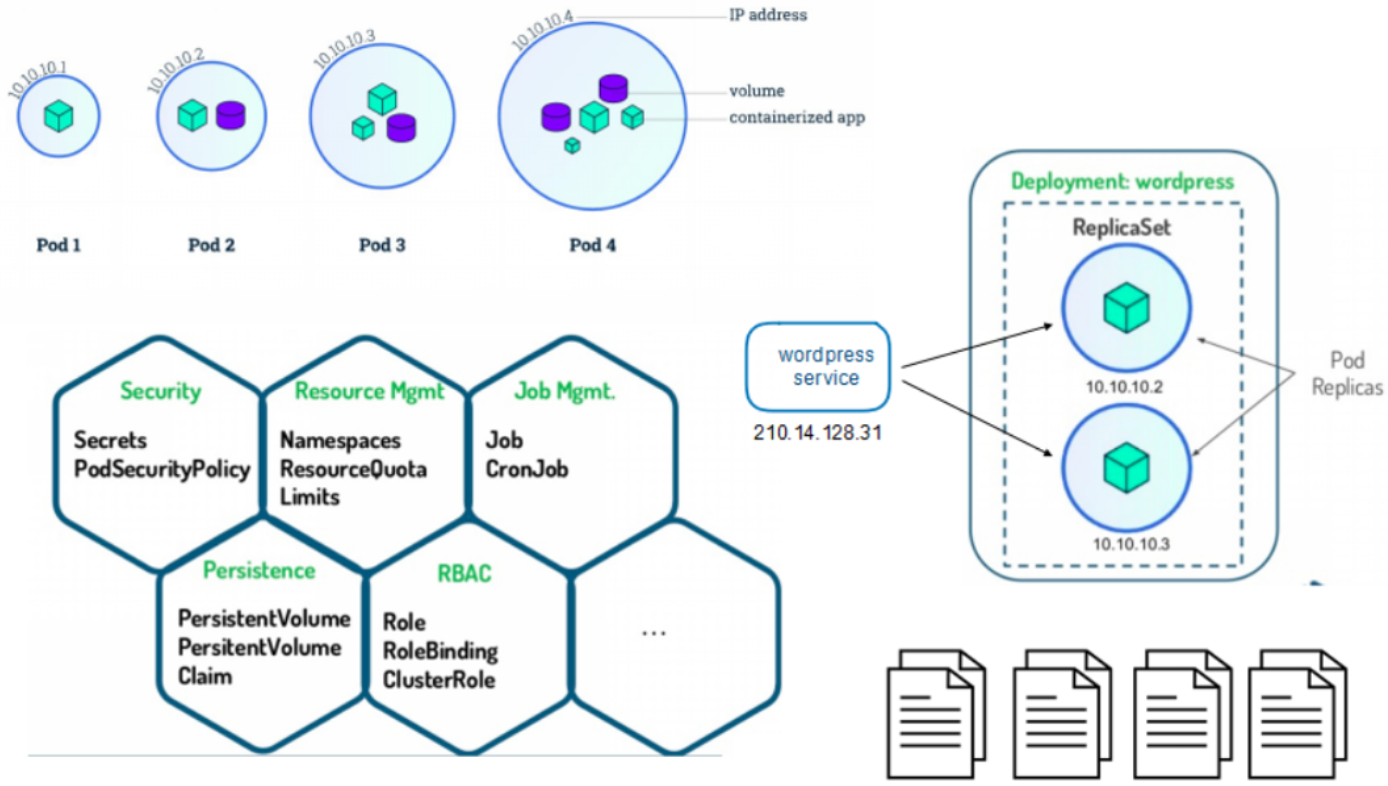

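1.4.3 Common K8S Terms and Concepts

- Master: the cluster control node; every cluster needs at least one master node to manage the cluster

- Node: a workload node; the master assigns containers to these worker nodes, and the container runtime on each node then runs them

- Pod: the smallest unit that Kubernetes manages; containers always run inside Pods, and one Pod can hold one or more containers

- Controller: controllers manage Pods, for example starting Pods, stopping Pods, and scaling the number of Pods

- Service: the unified entry point through which Pods serve traffic; one Service can front multiple Pods of the same kind

- Label: labels classify Pods; Pods of the same kind carry the same labels

- NameSpace: namespaces isolate the environments in which Pods run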
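To make the relationship between these terms concrete, the sketch below writes a small manifest from the shell. The namespace `demo`, the label `app: web`, the object names, and the file name `web-demo.yaml` are all hypothetical, and the image is only a placeholder; none of them come from the cluster built later in these notes.

```shell
# Write an illustrative manifest; every name in it is a made-up example.
manifest="${TMPDIR:-/tmp}/web-demo.yaml"
cat > "$manifest" <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: demo
---
apiVersion: apps/v1
kind: Deployment        # a controller that keeps 2 Pods running
metadata:
  name: web
  namespace: demo
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web        # the label shared by Pods of this kind
    spec:
      containers:
      - name: web
        image: nginx    # placeholder image
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service           # one entry point in front of all Pods labelled app=web
metadata:
  name: web
  namespace: demo
spec:
  selector:
    app: web
  ports:
  - port: 80
    targetPort: 80
EOF
echo "wrote $manifest"
```

On a working cluster, a file like this could be applied with `kubectl apply -f` and the resulting Pods listed with `kubectl get pods -n demo -l app=web`. The Service finds its Pods only through the label selector, which is why the label in the Pod template and the selector must agree.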

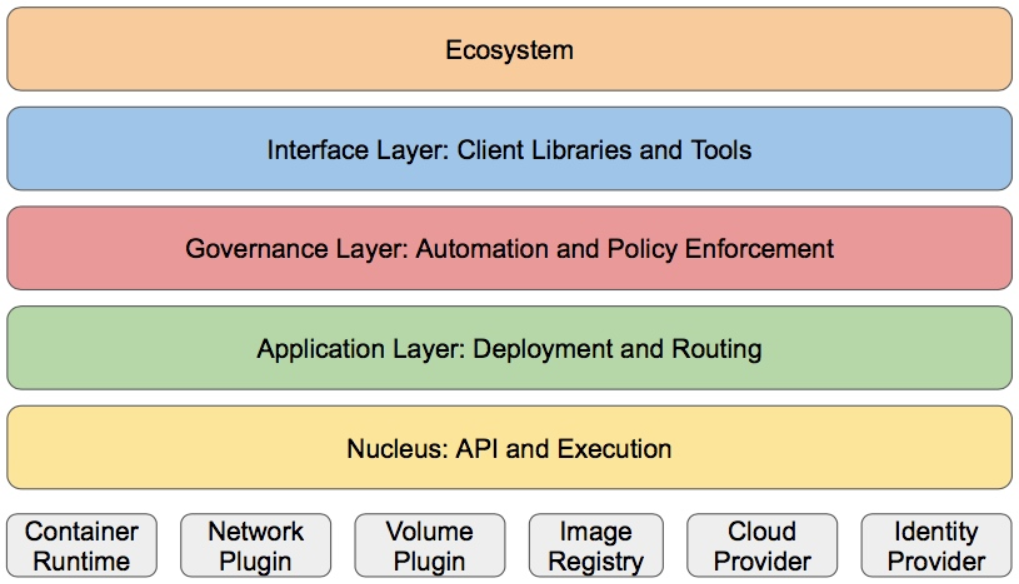

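1.4.4 K8S Layered Architecture

- Core layer: the core functionality of Kubernetes; it exposes APIs outward for building higher-level applications and provides a pluggable application execution environment inward

- Application layer: deployment (stateless applications, stateful applications, batch jobs, clustered applications, etc.) and routing (service discovery, DNS resolution, etc.)

- Management layer: system metrics (for infrastructure, containers, and networks), automation (such as autoscaling and dynamic provisioning), and policy management (RBAC, Quota, PSP, NetworkPolicy, etc.)

- Interface layer: the kubectl command-line tool, client SDKs, and cluster federation

- Ecosystem: the large ecosystem for container cluster management and scheduling above the interface layer, which falls into two categories

  - Outside Kubernetes: logging, monitoring, configuration management, CI, CD, Workflow, FaaS, OTS applications, ChatOps, etc.

  - Inside Kubernetes: CRI, CNI, CSI, image registries, Cloud Provider, configuration and management of the cluster itself, etc.

5 Setting Up a K8S Cluster Environment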

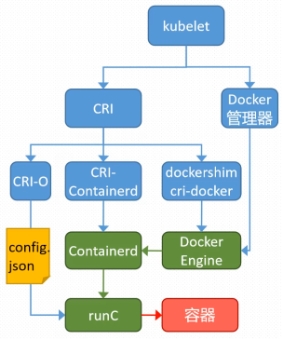

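5.1 How k8s Manages Containers

A K8S cluster can be created on top of one of three container runtimes:

containerd

The runtime K8S uses by default when creating a cluster

docker

Docker has the highest adoption. Although K8S removed kubelet's built-in Docker support (dockershim) in version 1.24, a cluster can still be created with the help of cri-dockerd

cri-o

CRI-O is the most direct way for Kubernetes to create containers; when creating a cluster, the cri-o plugin is needed to build the Kubernetes cluster.

!NOTE

For both the docker and cri-o methods, the startup parameters of the kubelet program must be configured

5.2 k8s Cluster Deployment

Official K8S website (Chinese): https://kubernetes.io/zh-cn/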

| Hostname | IP | Role |
|--------|----------------|------------------|
| harbor | 172.25.254.200 | Harbor registry |
| master | 172.25.254.100 | master, k8s cluster control node |
| node1 | 172.25.254.10 | worker, k8s cluster worker node |
| node2 | 172.25.254.20 | worker, k8s cluster worker node |

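- Disable SELinux and the firewall on all nodes

- Synchronize time and set up name resolution on all nodes

- Install docker-ce on all nodes

- Disable swap on all nodes; remember to comment out the swap entry in /etc/fstab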
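The last prerequisite can be scripted instead of edited by hand. The following is a minimal sketch, assuming GNU sed and a conventional fstab layout in which the third field is the filesystem type; the function name `disable_swap_in_fstab` is made up for illustration.

```shell
# Comment out every active swap entry in an fstab-style file.
# The first argument is the file to edit; it defaults to /etc/fstab.
disable_swap_in_fstab() {
    fstab="${1:-/etc/fstab}"
    # Skip lines that are already comments; prefix '#' to lines whose
    # third whitespace-separated field is "swap".
    sed -i -E '/^[[:space:]]*#/! s/^([^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' "$fstab"
}

# Typical use as root on each node (not executed here):
#   swapoff -a                 # turn swap off for the running system
#   disable_swap_in_fstab      # keep it off after the next reboot
```

Trying the function on a copy of the file first is a cheap way to confirm that only the swap line changes.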

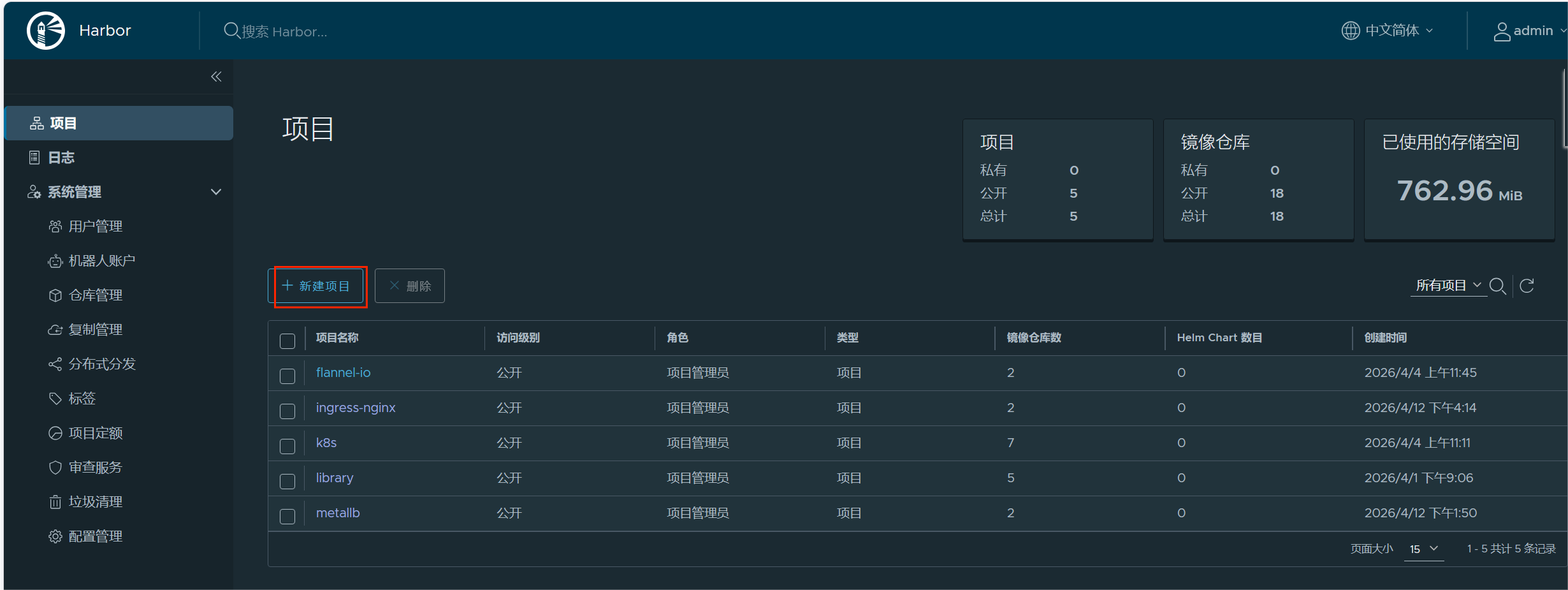

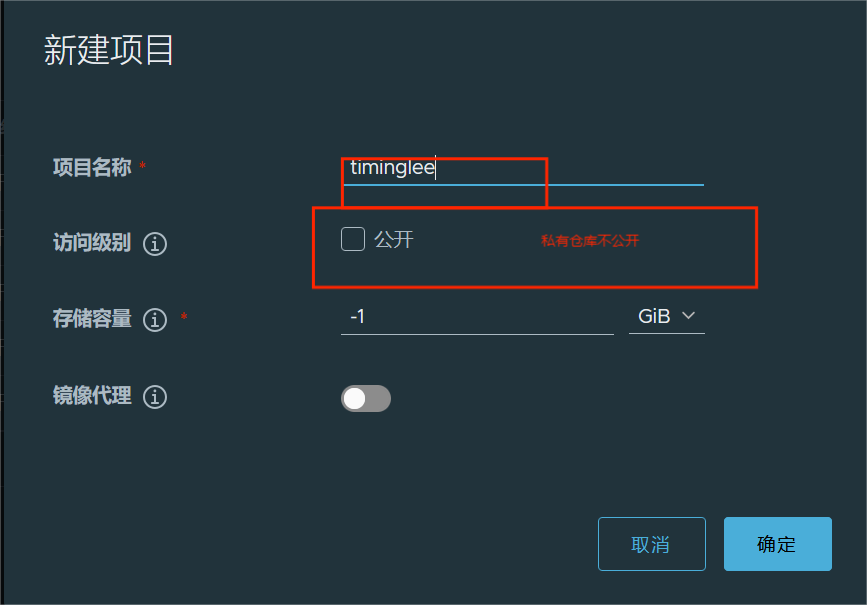

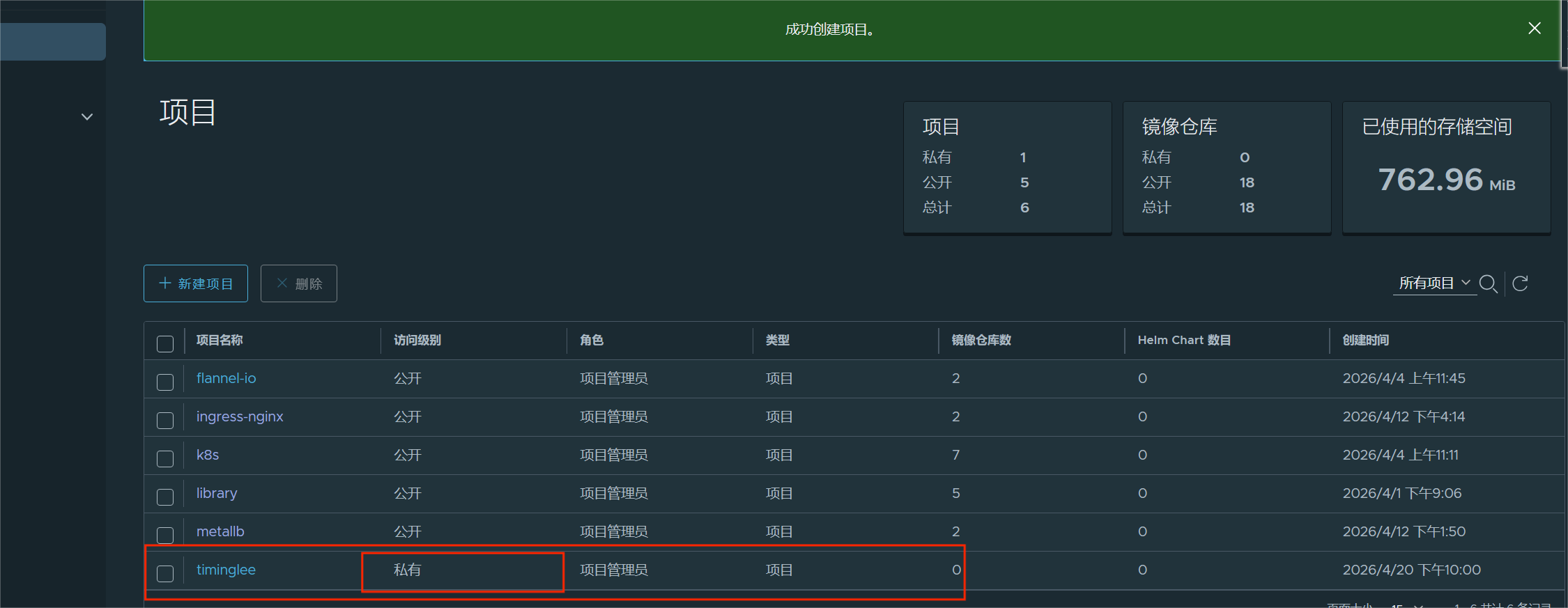

6. Building the Harbor Image Registry

# Install Docker (run on all four machines)

bash

[root@harbor ~]# cat > /etc/yum.repos.d/docker.repo <<EOF

[docker]

name = docker

baseurl = https://mirrors.aliyun.com/docker-ce/linux/rhel/9.6/x86_64/stable/

gpgcheck = 0

EOF

[root@harbor ~]# dnf install docker-ce-3:28.5.2-1.el9 -y

[root@harbor ~]# echo br_netfilter > /etc/modules-load.d/docker_mod.conf

# Load the bridge netfilter module automatically at boot; Docker networking requires it

[root@harbor ~]# modprobe -a br_netfilter   # load the br_netfilter kernel module immediately

[root@harbor ~]# vim /etc/sysctl.d/docker.conf

net.bridge.bridge-nf-call-iptables = 1

# Pass Linux bridge traffic (Docker container networking) through the iptables firewall rules

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

# Enable IP forwarding in the Linux kernel

[root@harbor ~]# sysctl --system

[root@harbor ~]# vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --iptables=true

[root@harbor ~]# systemctl daemon-reload

[root@harbor ~]# systemctl enable --now docker

# Generate the key and certificate (run on the harbor machine)

bash

[root@harbor ~]# mkdir /data/certs -p

[root@harbor ~]# openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout /data/certs/timinglee.org.key \

-addext "subjectAltName = DNS:reg.timinglee.org" \

-x509 -days 365 -out /data/certs/timinglee.org.crt

You are about to be asked to enter information that will be incorporated

into your certificate request.

What you are about to enter is what is called a Distinguished Name or a DN.

There are quite a few fields but you can leave some blank

For some fields there will be a default value,

If you enter '.', the field will be left blank.

-----

Country Name (2 letter code) [XX]:CN

State or Province Name (full name) []:Shannxi

Locality Name (eg, city) [Default City]:Xi'an

Organization Name (eg, company) [Default Company Ltd]:kubernetes

Organizational Unit Name (eg, section) []:harbor

Common Name (eg, your name or your server's hostname) []:reg.timinglee.org

Email Address []:admin@timinglee.org

# Edit the Harbor configuration file

bash

[root@harbor ~]# tar zxf harbor-offline-installer-v2.5.4.tgz -C /opt/

[root@harbor ~]# cd /opt/harbor/

[root@harbor harbor]# ls

common.sh harbor.v2.5.4.tar.gz harbor.yml.tmpl install.sh LICENSE prepare

[root@harbor harbor]# cp harbor.yml.tmpl harbor.yml

[root@harbor harbor]# vim harbor.yml

hostname: reg.timinglee.org

certificate: /data/certs/timinglee.org.crt

private_key: /data/certs/timinglee.org.key

harbor_admin_password: lee

[root@harbor harbor]# ./install.sh --with-chartmuseum

# Start and verify

bash

[root@harbor harbor]# mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

[root@harbor harbor]# cp /data/certs/timinglee.org.crt /etc/docker/certs.d/reg.timinglee.org/ca.crt

[root@harbor harbor]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.200 harbor reg.timinglee.org

[root@harbor harbor]# systemctl restart docker

[root@harbor harbor]# docker compose up -d

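# Pulls the images automatically

# Creates the containers automatically

# Runs them in the background

# Makes the registry reachable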

[root@harbor harbor]# docker login reg.timinglee.org -u admin

Password:

WARNING! Your credentials are stored unencrypted in '/root/.docker/config.json'.

Configure a credential helper to remove this warning. See

https://docs.docker.com/go/credential-store/

Login Succeeded

Note: swap must be disabled on all machines

bash

systemctl disable --now swap.target

systemctl mask swap.target

# Install Docker and configure it to use the Harbor registry

bash

[root@harbor/master/node1/node2]# mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

# From the harbor host, distribute the certificate to all hosts

[root@harbor ~]# for i in 100 10 20

> do

> scp /data/certs/timinglee.org.crt root@172.25.254.$i:/etc/docker/certs.d/reg.timinglee.org/ca.crt

> done

[root@harbor/master/node1/node2]# systemctl enable docker

[root@harbor/master/node1/node2]# systemctl restart docker

# Configure the Docker registry mirror on all hosts

bash

[root@harbor/master/node1/node2]# cat >/etc/docker/daemon.json <<EOF

{

"registry-mirrors":["https://reg.timinglee.org"]

}

EOF

[root@harbor/master/node1/node2]# systemctl restart docker

[root@harbor/master/node1/node2]# docker info

# Near the end of the output you should see:

Registry Mirrors:

https://reg.timinglee.org/

# Set up name resolution between all hosts

bash

[root@harbor/master/node1/node2]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 master

172.25.254.10 node1

172.25.254.20 node2

172.25.254.200 reg.timinglee.org
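Editing `/etc/hosts` in vim on four machines is easy to get wrong, so the same entries could be appended by a small helper that skips addresses already present. This is only a sketch; the function name `add_host_entry` is hypothetical, and the addresses in the usage comment are the ones from the table above.

```shell
# Append "IP NAME..." to a hosts-style file unless that IP is already listed.
# Usage: add_host_entry FILE IP NAME [NAME...]
add_host_entry() {
    file="$1"; ip="$2"; shift 2
    # Compare the first field exactly, so 172.25.254.10 does not match 172.25.254.100.
    if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$*" >> "$file"
}

# Typical use as root on each host (not executed here):
#   add_host_entry /etc/hosts 172.25.254.100 master
#   add_host_entry /etc/hosts 172.25.254.10  node1
#   add_host_entry /etc/hosts 172.25.254.20  node2
#   add_host_entry /etc/hosts 172.25.254.200 reg.timinglee.org
```

Because the check is by IP address, running the helper twice leaves the file unchanged.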

bash

[root@harbor/master/node1/node2]# vim /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name = kubernetes

baseurl = https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.35/rpm/

gpgcheck = 0

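# Verify

[root@harbor/master/node1/node2]# dnf list kubelet

Updating Subscription Management repositories.

Unable to read consumer identity

This system is not registered with an entitlement server. You can use "rhc" or "subscription-manager" to register.

Last metadata expiration check: 0:01:04 ago on Fri Apr  3 18:37:25 2026.

Available Packages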

kubelet.aarch64 1.35.3-150500.1.1 kubernetes

kubelet.ppc64le 1.35.3-150500.1.1 kubernetes

kubelet.s390x 1.35.3-150500.1.1 kubernetes

kubelet.src 1.35.3-150500.1.1 kubernetes

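kubelet.x86_64 1.35.3-150500.1.1 kubernetes

After completing the steps above, reboot the hosts and check the swap partition

bash

[root@harbor/master/node1/node2]# swapon -s   # no output means swap is disabled

7. Deploying Kubernetes

# Install cri-dockerd (on all hosts)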

bash

[root@master ~]# ls

anaconda-ks.cfg cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm

[root@master ~]# scp cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm root@172.25.254.10:/root

root@172.25.254.10's password:

cri-dockerd-0.3.14-3.el8.x86_64.rpm 100% 11MB 178.6MB/s 00:00

libcgroup-0.41-19.el8.x86_64.rpm 100% 69KB 44.4MB/s 00:00

[root@master ~]# scp cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm root@172.25.254.20:/root

root@172.25.254.20's password:

cri-dockerd-0.3.14-3.el8.x86_64.rpm 100% 11MB 164.9MB/s 00:00

libcgroup-0.41-19.el8.x86_64.rpm 100% 69KB 47.1MB/s 00:00

[root@master/node1/node2]# ls

anaconda-ks.cfg cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm

[root@harbor/master/node1/node2]# rpm -ivh *.rpm

warning: libcgroup-0.41-19.el8.x86_64.rpm: Header V4 RSA/SHA256 Signature, key ID 6d745a60: NOKEY

Verifying... ################################# [100%]

Preparing... ################################# [100%]

Updating / installing...

1:libcgroup-0.41-19.el8 ################################# [ 50%]

2:cri-dockerd-3:0.3.14-3.el8 ################################# [100%]

[root@master/node1/node2]# vim /lib/systemd/system/cri-docker.service

10 ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd:// --network-plugin=cni --pod-infra-container-image=reg.timinglee.org/k8s/pause:3.10.1

[root@master/node1/node2 ~]# systemctl daemon-reload

[root@master/node1/node2 ~]# systemctl restart cri-docker.service

[root@master/node1/node2 ~]# ll /var/run/cri-dockerd.sock

srw-rw---- 1 root docker 0 Apr  4 10:42 /var/run/cri-dockerd.sock

# Install the software needed to build the Kubernetes cluster

Master node

bash

[root@master ~]# dnf install kubelet kubeadm kubectl -y

[root@master ~]# systemctl enable --now kubelet.service

Created symlink /etc/systemd/system/multi-user.target.wants/kubelet.service → /usr/lib/systemd/system/kubelet.service.

Worker nodes

bash

[root@node1 ~]# dnf install kubelet kubeadm -y

[root@node1 ~]# systemctl enable --now kubelet.service

Created symlink /etc/systemd/system/multi-user.target.wants/kubelet.service → /usr/lib/systemd/system/kubelet.service.

Shell completion for kubectl and kubeadm on the master node

bash

[root@master ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

[root@master ~]# echo "source <(kubeadm completion bash)" >> ~/.bashrc

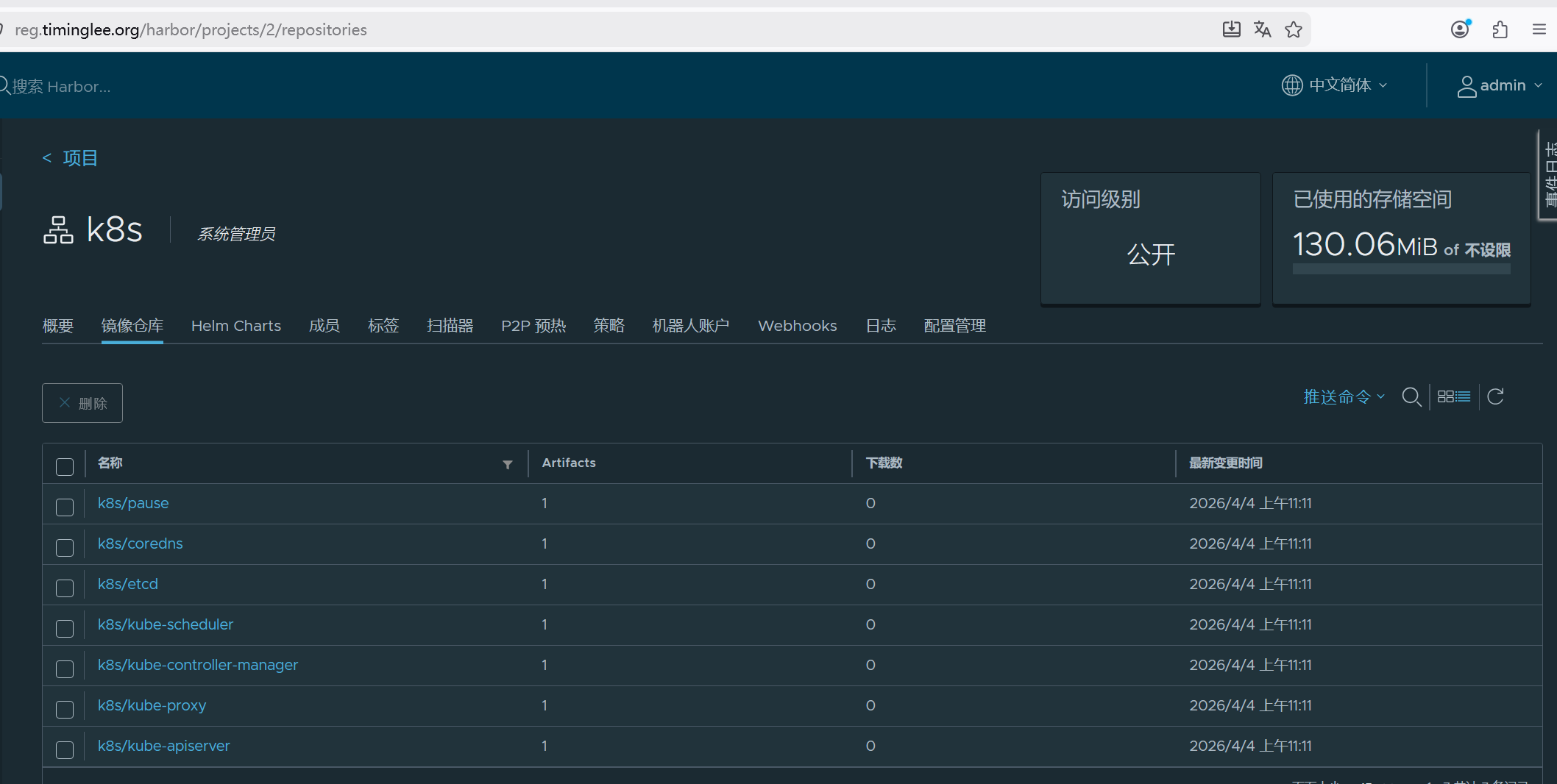

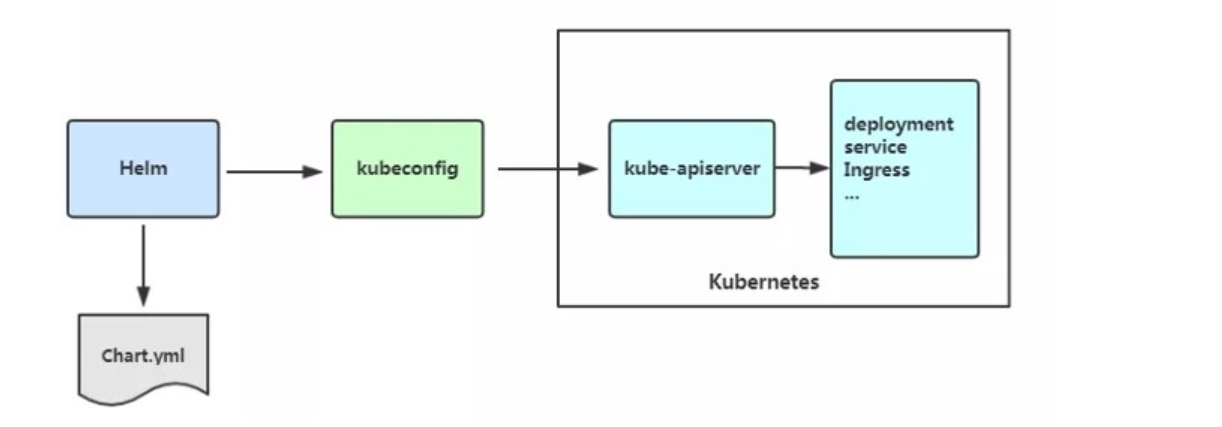

[root@master ~]# source ~/.bashrc

Pull the images required by the Kubernetes cluster

bash

[root@master ~]# kubeadm config images pull \

> --image-repository registry.aliyuncs.com/google_containers \

> --kubernetes-version v1.35.3 \

> --cri-socket=unix:///var/run/cri-dockerd.sock

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.13.1

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.10.1

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.6.6-0

Push the images to the local Harbor registry

bash

[root@master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/google/{system("docker tag "$0" reg.timinglee.org/k8s/"$3)}'

[root@master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/timinglee/{system("docker push "$0)}'

The push refers to repository [reg.timinglee.org/k8s/kube-apiserver]

b26c04e79c49: Pushed

e2e29eb5f5ab: Pushed

33b37ab0b090: Pushed

6e7fbcf090d0: Pushed

1a73b54f556b: Pushed

4cde6b0bb6f5: Pushed

bd3cdfae1d3f: Pushed

6f1cdceb6a31: Pushed

af5aa97ebe6c: Pushed

4d049f83d9cf: Pushed

114dde0fefeb: Pushed

4840c7c54023: Pushed

8fa10c0194df: Pushed

a33ba213ad26: Pushed

v1.35.3: digest: sha256:d84de59faea16b559d4f2e4d05f4a50cd189b6d02ff9805d2c29eb8b64094b0a size: 3233

The push refers to repository [reg.timinglee.org/k8s/kube-proxy]

091c6dc44e2a: Pushed

ff32924af9e3: Pushed

v1.35.3: digest: sha256:3673be50038d0e33dc265311eba1bfb17268c40f52dab8baf79ee8ac125cbd0c size: 740

The push refers to repository [reg.timinglee.org/k8s/kube-controller-manager]

097e54dc40b4: Pushed

e2e29eb5f5ab: Mounted from k8s/kube-apiserver

33b37ab0b090: Mounted from k8s/kube-apiserver

6e7fbcf090d0: Mounted from k8s/kube-apiserver

1a73b54f556b: Mounted from k8s/kube-apiserver

4cde6b0bb6f5: Mounted from k8s/kube-apiserver

bd3cdfae1d3f: Mounted from k8s/kube-apiserver

6f1cdceb6a31: Mounted from k8s/kube-apiserver

af5aa97ebe6c: Mounted from k8s/kube-apiserver

4d049f83d9cf: Mounted from k8s/kube-apiserver

114dde0fefeb: Mounted from k8s/kube-apiserver

4840c7c54023: Mounted from k8s/kube-apiserver

8fa10c0194df: Mounted from k8s/kube-apiserver

a33ba213ad26: Mounted from k8s/kube-apiserver

v1.35.3: digest: sha256:988a0684f0ffdc95a06f0bcac01d7540f623876b855736cb70cc7aaaca12c731 size: 3233

The push refers to repository [reg.timinglee.org/k8s/kube-scheduler]

87448da54384: Pushed

e2e29eb5f5ab: Mounted from k8s/kube-controller-manager

33b37ab0b090: Mounted from k8s/kube-controller-manager

6e7fbcf090d0: Mounted from k8s/kube-controller-manager

1a73b54f556b: Mounted from k8s/kube-controller-manager

4cde6b0bb6f5: Mounted from k8s/kube-controller-manager

bd3cdfae1d3f: Mounted from k8s/kube-controller-manager

6f1cdceb6a31: Mounted from k8s/kube-controller-manager

af5aa97ebe6c: Mounted from k8s/kube-controller-manager

4d049f83d9cf: Mounted from k8s/kube-controller-manager

114dde0fefeb: Mounted from k8s/kube-controller-manager

4840c7c54023: Mounted from k8s/kube-controller-manager

8fa10c0194df: Mounted from k8s/kube-controller-manager

a33ba213ad26: Mounted from k8s/kube-controller-manager

v1.35.3: digest: sha256:212b077fceb2ca7930097d992c20eae7d9574fe058c6d83b6eac83f07bf7b16c size: 3233

The push refers to repository [reg.timinglee.org/k8s/etcd]

37a37e743e2e: Pushed

660aacb22b09: Pushed

81ec00dccb40: Pushed

b336e209998f: Pushed

f4aee9e53c42: Pushed

1a73b54f556b: Mounted from k8s/kube-scheduler

2a92d6ac9e4f: Pushed

bbb6cacb8c82: Pushed

6f1cdceb6a31: Mounted from k8s/kube-scheduler

af5aa97ebe6c: Mounted from k8s/kube-scheduler

4d049f83d9cf: Mounted from k8s/kube-scheduler

a80545a98dcd: Pushed

8fa10c0194df: Mounted from k8s/kube-scheduler

f920c5680b0b: Pushed

3.6.6-0: digest: sha256:f6fd2f574e114f4a229e320b387ddc8c412fe6e2156f94965f07e091a64cec42 size: 3235

The push refers to repository [reg.timinglee.org/k8s/coredns]

098153c557ae: Pushed

f5b520875067: Pushed

bfe9137a1b04: Pushed

f4aee9e53c42: Mounted from k8s/etcd

1a73b54f556b: Mounted from k8s/etcd

2a92d6ac9e4f: Mounted from k8s/etcd

bbb6cacb8c82: Mounted from k8s/etcd

6f1cdceb6a31: Mounted from k8s/etcd

af5aa97ebe6c: Mounted from k8s/etcd

4d049f83d9cf: Mounted from k8s/etcd

114dde0fefeb: Mounted from k8s/kube-scheduler

4840c7c54023: Mounted from k8s/kube-scheduler

8fa10c0194df: Mounted from k8s/etcd

bff7f7a9d443: Pushed

v1.13.1: digest: sha256:246e7333fde10251c693b68f13d21d6d64c7dbad866bbfa11bd49315e3f725a7 size: 3233

The push refers to repository [reg.timinglee.org/k8s/pause]

cfaaf0813a4d: Pushed

3.10.1: digest: sha256:d31e7f29ada8b13c6dd047ec4805720fcf14df5fccbb27903e374dc56225ca49 size: 526
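The two `awk` one-liners above split each image reference on `/` and reuse the third field (`name:tag`) under `reg.timinglee.org/k8s/`. The same mapping can be written as a small dry-run helper that prints the commands before anything is executed. This is a sketch only: the function names and the `HARBOR_PROJECT` variable are hypothetical, and it keeps whatever follows the last `/`, which matches the third field for the image names shown here.

```shell
# Map a source image reference to its name in the private registry.
# Only the last path component (name:tag) is kept.
harbor_name() {
    printf '%s/%s\n' "${HARBOR_PROJECT:-reg.timinglee.org/k8s}" "${1##*/}"
}

# Read image references on stdin and print (not run) the matching
# docker tag / docker push commands.
plan_retag() {
    while IFS= read -r image; do
        [ -n "$image" ] || continue
        target=$(harbor_name "$image")
        printf 'docker tag %s %s\n' "$image" "$target"
        printf 'docker push %s\n' "$target"
    done
}

# Typical use on the master (not executed here):
#   docker images --format '{{.Repository}}:{{.Tag}}' | grep google_containers | plan_retag
# Review the printed commands, then pipe the same output to sh to run them.
```

Printing first makes it easy to spot a wrong project name before fourteen layers are pushed to the wrong place.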

# Initialize the Kubernetes cluster on the master

Run the cluster initialization on the master:

bash

[root@master ~]# kubeadm init --pod-network-cidr=10.244.0.0/16 \

--image-repository reg.timinglee.org/k8s \

--kubernetes-version v1.35.3 \

--cri-socket=unix:///var/run/cri-dockerd.sock

[init] Using Kubernetes version: v1.35.3

[preflight] Running pre-flight checks

[WARNING ContainerRuntimeVersion]: You must update your container runtime to a version that supports the CRI method RuntimeConfig. Falling back to using cgroupDriver from kubelet config will be removed in 1.36. For more information, see https://git.k8s.io/enhancements/keps/sig-node/4033-group-driver-detection-over-cri

[WARNING SystemVerification]: kernel release 5.14.0-570.12.1.el9_6.x86_64 is unsupported. Supported LTS versions from the 5.x series are 5.4, 5.10 and 5.15. Any 6.x version is also supported. For cgroups v2 support, the recommended version is 5.10 or newer

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master] and IPs [10.96.0.1 172.25.254.100]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master] and IPs [172.25.254.100 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master] and IPs [172.25.254.100 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "super-admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/instance-config.yaml"

[patches] Applied patch of type "application/strategic-merge-patch+json" to target "kubeletconfiguration"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests"

[kubelet-check] Waiting for a healthy kubelet at http://127.0.0.1:10248/healthz. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 501.66428ms

[control-plane-check] Waiting for healthy control plane components. This can take up to 4m0s

[control-plane-check] Checking kube-apiserver at https://172.25.254.100:6443/livez

[control-plane-check] Checking kube-controller-manager at https://127.0.0.1:10257/healthz

[control-plane-check] Checking kube-scheduler at https://127.0.0.1:10259/livez

[control-plane-check] kube-controller-manager is healthy after 1.00355242s

[control-plane-check] kube-scheduler is healthy after 2.366129185s

[control-plane-check] kube-apiserver is healthy after 4.003066854s

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: edkm6l.key5alswo3n4kz8r

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.25.254.100:6443 --token edkm6l.key5alswo3n4kz8r \

--discovery-token-ca-cert-hash sha256:1c4d35623b9aab09984bb8a62383a85e38fea77d530b10b69a52151b50efce56

# The token and hash differ for every cluster; they are the credentials other hosts use to join this cluster

# If you lose the join command, generate a new one

bash

[root@master ~]# kubeadm token create --print-join-command

kubeadm join 172.25.254.100:6443 --token b6idt2.wez387fr7930anq0 --discovery-token-ca-cert-hash sha256:1c4d35623b9aab09984bb8a62383a85e38fea77d530b10b69a52151b50efce56

# If initialization fails

bash

[root@master ~]# kubeadm reset --cri-socket=unix:///var/run/cri-dockerd.sock   # resets the cluster configuration

Add the Kubernetes environment variable on this host

bash

[root@master ~]# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

[root@master ~]# source ~/.bash_profile

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane 14m v1.35.3

Add the worker nodes to the cluster

bash

[root@node1/node2 ~]# kubeadm join 172.25.254.100:6443 --token b6idt2.wez387fr7930anq0 --discovery-token-ca-cert-hash sha256:1c4d35623b9aab09984bb8a62383a85e38fea77d530b10b69a52151b50efce56 --cri-socket unix:///var/run/cri-dockerd.sock

[preflight] Running pre-flight checks

[WARNING ContainerRuntimeVersion]: You must update your container runtime to a version that supports the CRI method RuntimeConfig. Falling back to using cgroupDriver from kubelet config will be removed in 1.36. For more information, see https://git.k8s.io/enhancements/keps/sig-node/4033-group-driver-detection-over-cri

[WARNING SystemVerification]: kernel release 5.14.0-570.12.1.el9_6.x86_64 is unsupported. Supported LTS versions from the 5.x series are 5.4, 5.10 and 5.15. Any 6.x version is also supported. For cgroups v2 support, the recommended version is 5.10 or newer

[preflight] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[preflight] Use 'kubeadm init phase upload-config kubeadm --config your-config-file' to re-upload it.

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/instance-config.yaml"

[patches] Applied patch of type "application/strategic-merge-patch+json" to target "kubeletconfiguration"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-check] Waiting for a healthy kubelet at http://127.0.0.1:10248/healthz. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 501.004359ms

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

# Test

[root@master ~]# kubectl get nodes   # the hosts appear in the cluster, but they are NotReady because no network plugin is installed yet

NAME STATUS ROLES AGE VERSION

master NotReady control-plane 21m v1.35.3

node1 NotReady <none> 4m5s v1.35.3

node2 NotReady <none> 4m v1.35.3
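Reading the STATUS column by eye is fine for three nodes, but the same check can be scripted, for example to recheck after the network plugin below is installed. The sketch below parses the plain-text output of `kubectl get nodes` from standard input; the function name `not_ready_nodes` is made up, and it treats anything other than an exact `Ready` status (including `Ready,SchedulingDisabled`) as not ready.

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is not exactly "Ready".
# The exit status is 0 only when every node is Ready.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1; bad = 1 } END { exit bad + 0 }'
}

# Typical use on the master (not executed here):
#   until kubectl get nodes | not_ready_nodes; do sleep 5; done
```

`kubectl wait --for=condition=Ready nodes --all --timeout=120s` is a built-in alternative that should achieve the same thing without parsing text.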

# Install the network plugin

bash

[root@master ~]# ls

anaconda-ks.cfg cri-dockerd-0.3.14-3.el8.x86_64.rpm flannel-0.28.1.tar kube-flannel.yml libcgroup-0.41-19.el8.x86_64.rpm

[root@master ~]# docker load -i flannel-0.28.1.tar

5aa68bbbc67e: Loading layer [==================================================>] 8.724MB/8.724MB

2c8aa52d4746: Loading layer [==================================================>] 3.018MB/3.018MB

Loaded image: ghcr.io/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

256f393e029f: Loading layer [==================================================>] 8.607MB/8.607MB

70dc5e033175: Loading layer [==================================================>] 9.554MB/9.554MB

13a60c10faeb: Loading layer [==================================================>] 17.13MB/17.13MB

9a7d8cf9ff51: Loading layer [==================================================>] 1.547MB/1.547MB

03767449b95b: Loading layer [==================================================>] 51.85MB/51.85MB

b6233bc105d7: Loading layer [==================================================>] 5.632kB/5.632kB

9738bb9596cf: Loading layer [==================================================>] 6.144kB/6.144kB

00012e17b6cc: Loading layer [==================================================>] 2.173MB/2.173MB

5f70bf18a086: Loading layer [==================================================>] 1.024kB/1.024kB

5668da16a30b: Loading layer [==================================================>] 2.178MB/2.178MB

Loaded image: ghcr.io/flannel-io/flannel:v0.28.1

[root@master ~]# docker tag ghcr.io/flannel-io/flannel-cni-plugin:v1.9.0-flannel1 reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

[root@master ~]# docker push reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

The push refers to repository [reg.timinglee.org/flannel-io/flannel-cni-plugin]

2c8aa52d4746: Pushed

5aa68bbbc67e: Pushed

v1.9.0-flannel1: digest: sha256:b3d30c221113b30fea3e8a7fccb145e929b097d0319b9eeb6b5a591b10b5c671 size: 739

[root@master ~]# docker tag ghcr.io/flannel-io/flannel:v0.28.1 reg.timinglee.org/flannel-io/flannel:v0.28.1

[root@master ~]# docker push reg.timinglee.org/flannel-io/flannel:v0.28.1

The push refers to repository [reg.timinglee.org/flannel-io/flannel]

5668da16a30b: Pushed

5f70bf18a086: Pushed

00012e17b6cc: Pushed

9738bb9596cf: Pushed

b6233bc105d7: Pushed

03767449b95b: Pushed

9a7d8cf9ff51: Pushed

13a60c10faeb: Pushed

70dc5e033175: Pushed

256f393e029f: Pushed

v0.28.1: digest: sha256:e671adbc267460164555159210066d3304a43e3b5dd85cc0b5b6ad62e83aab52 size: 2414

[root@master ~]# vim kube-flannel.yml

148 image: flannel-io/flannel:v0.28.1

175 image: flannel-io/flannel-cni-plugin:v1.9.0-flannel1

186 image: flannel-io/flannel:v0.28.1

[root@master ~]# grep "image:" kube-flannel.yml

image: flannel-io/flannel:v0.28.1

image: flannel-io/flannel-cni-plugin:v1.9.0-flannel1

image: flannel-io/flannel:v0.28.1
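The three `image:` lines can also be changed without opening an editor. The sketch below assumes the unedited `kube-flannel.yml` references the images with a `ghcr.io/` prefix, which matches the names printed by `docker load` above but is not shown in these notes; the function name `strip_ghcr_prefix` is hypothetical and GNU sed is assumed.

```shell
# Remove the ghcr.io/ registry prefix from every "image:" line of a manifest.
# Usage: strip_ghcr_prefix FILE   (edits the file in place)
strip_ghcr_prefix() {
    sed -i -E 's#^([[:space:]]*image:[[:space:]]*)ghcr\.io/#\1#' "$1"
}

# Typical use on the master (not executed here):
#   strip_ghcr_prefix kube-flannel.yml
#   grep "image:" kube-flannel.yml
```

With the prefix removed, the image names carry no registry host, so the pull presumably relies on the registry mirror configured in `/etc/docker/daemon.json` earlier to resolve them from the local Harbor instance.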

[root@master ~]# kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

serviceaccount/flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

[root@master ~]# kubectl get nodes   # wait about 10 seconds for NotReady → Ready

NAME STATUS ROLES AGE VERSION

master NotReady control-plane 46m v1.35.3

node1 NotReady <none> 28m v1.35.3

node2 Ready <none> 28m v1.35.3

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane 46m v1.35.3

node1 Ready <none> 28m v1.35.3

node2 Ready <none> 28m v1.35.3

8. Pod

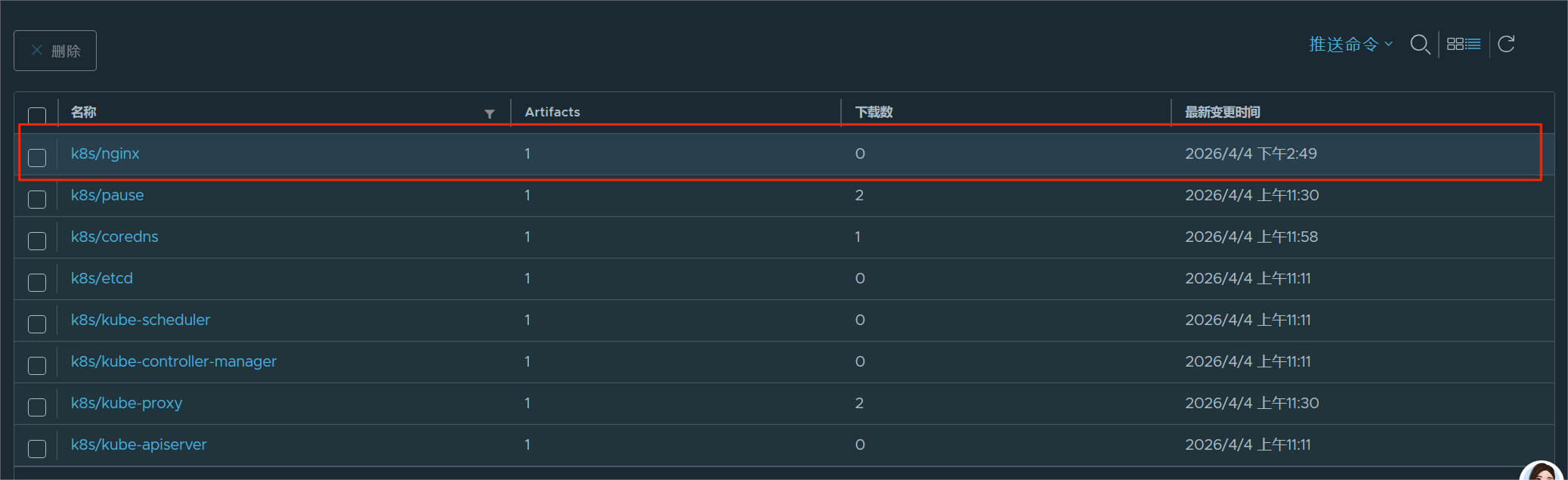

Note: remember to push the nginx image to the Harbor registry

bash

[root@harbor ~]# ls

anaconda-ks.cfg harbor-offline-installer-v2.5.4.tgz nginx-1.23.tar.gz

[root@harbor ~]# docker load -i nginx-1.23.tar.gz

8cbe4b54fa88: Loading layer [==================================================>] 84.01MB/84.01MB

5dd6bfd241b4: Loading layer [==================================================>] 62.51MB/62.51MB

043198f57be0: Loading layer [==================================================>] 3.584kB/3.584kB

2731b5cfb616: Loading layer [==================================================>] 4.608kB/4.608kB

6791458b3942: Loading layer [==================================================>] 3.584kB/3.584kB

4d33db9fdf22: Loading layer [==================================================>] 7.168kB/7.168kB

Loaded image: nginx:1.23

[root@harbor ~]# docker images

i Info → U In Use

IMAGE ID DISK USAGE CONTENT SIZE EXTRA

goharbor/chartmuseum-photon:v2.5.4 e5134e6ca037 231MB 0B U

goharbor/harbor-core:v2.5.4 fb4df7c64e84 208MB 0B U

goharbor/harbor-db:v2.5.4 76e7b3295f2b 225MB 0B U

goharbor/harbor-exporter:v2.5.4 388b5ac2eed4 87.4MB 0B

goharbor/harbor-jobservice:v2.5.4 01ec4f1c5ddd 233MB 0B U

goharbor/harbor-log:v2.5.4 1c30eb78ebc4 161MB 0B U

goharbor/harbor-portal:v2.5.4 bba3d21bc4b9 162MB 0B U

goharbor/harbor-registryctl:v2.5.4 984f0c8cd458 136MB 0B U

goharbor/nginx-photon:v2.5.4 0e682f78c76f 154MB 0B U

goharbor/notary-server-photon:v2.5.4 e542ccac08c2 112MB 0B

goharbor/notary-signer-photon:v2.5.4 65644cf6aaa1 109MB 0B

goharbor/prepare:v2.5.4 5582f3ef9fbe 163MB 0B

goharbor/redis-photon:v2.5.4 c89d59625d5a 155MB 0B U

goharbor/registry-photon:v2.5.4 5e2d95b5227f 78.1MB 0B U

goharbor/trivy-adapter-photon:v2.5.4 1142826e8329 251MB 0B

nginx:1.23 a7be6198544f 142MB 0B

[root@harbor ~]# docker tag nginx:1.23 reg.timinglee.org/k8s/nginx:latest

[root@harbor ~]# docker push reg.timinglee.org/k8s/nginx:latest

The push refers to repository [reg.timinglee.org/k8s/nginx]

4d33db9fdf22: Pushed

6791458b3942: Pushed

2731b5cfb616: Pushed

043198f57be0: Pushed

5dd6bfd241b4: Pushed

8cbe4b54fa88: Pushed

latest: digest: sha256:a97a153152fcd6410bdf4fb64f5622ecf97a753f07dcc89dab14509d059736cf size: 1570

(1) Ways to Work with Resources

Imperative commands

bash

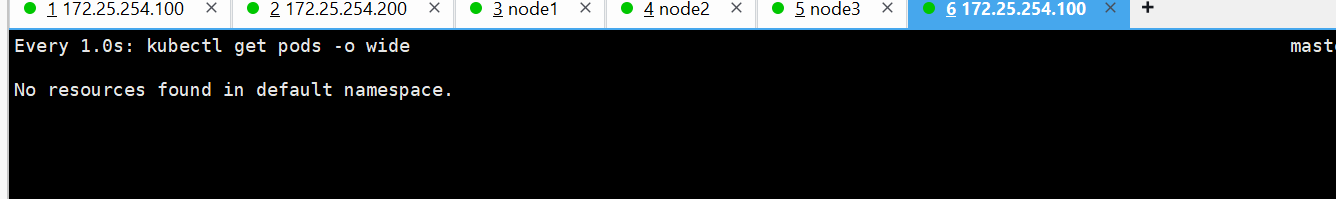

[root@master ~]# kubectl get pods   # the image is large; wait about 5 seconds

NAME READY STATUS RESTARTS AGE

webpod 0/1 ContainerCreating 0 18s

[root@master ~]# kubectl describe pods webpod   # view the details and events

Name: webpod

Namespace: default

Priority: 0

Service Account: default

Node: node1/172.25.254.10

Start Time: Sat, 04 Apr 2026 14:51:20 +0800

Labels: run=webpod

Annotations: <none>

Status: Running

IP: 10.244.1.4

IPs:

IP: 10.244.1.4

Containers:

webpod:

Container ID: docker://a7fb5817d057e407b70715a90da45351fa46dd6f5451b55946395dead209ce6a

Image: reg.timinglee.org/k8s/nginx:latest

Image ID: docker-pullable://reg.timinglee.org/k8s/nginx@sha256:a97a153152fcd6410bdf4fb64f5622ecf97a753f07dcc89dab14509d059736cf

Port: 80/TCP

Host Port: 0/TCP

State: Running

Started: Sat, 04 Apr 2026 14:51:54 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-dxqs4 (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-dxqs4:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

Optional: false

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 46s default-scheduler Successfully assigned default/webpod to node1

Normal Pulling 46s kubelet spec.containers{webpod}: Pulling image "reg.timinglee.org/k8s/nginx:latest"

Normal Pulled 42s kubelet spec.containers{webpod}: Successfully pulled image "reg.timinglee.org/k8s/nginx:latest" in 3.298s (3.298s including waiting). Image size: 142145851 bytes.

Normal Created 12s kubelet spec.containers{webpod}: Container created

Normal Started 12s kubelet spec.containers{webpod}: Container started

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

webpod 1/1 Running 0 52s

bash

[root@master ~]# kubectl get pods -o wide # show which node the Pod runs on

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

webpod 1/1 Running 0 12m 10.244.1.4 node1 <none> <none>

[root@master ~]# kubectl delete pods webpod # delete the Pod

pod "webpod" deleted from default namespace

YAML file approach

bash

[root@master ~]# kubectl create deployment test --image nginx --replicas 1 --dry-run=client -o yaml > test.yml

[root@master ~]# cat test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 1
  selector:
    matchLabels:
      app: test
  # strategy: {}
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      - image: reg.timinglee.org/k8s/nginx:latest
        name: nginx
        #resources: {}
#status: {}

# Imperative creation (kubectl create)

[root@master ~]# kubectl create -f test.yml

deployment.apps/test created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-r2bs8 1/1 Running 0 22s

[root@master ~]# kubectl delete -f test.yml

deployment.apps "test" deleted from default namespace

[root@master ~]# kubectl get pods

No resources found in default namespace.

# Declarative (kubectl apply)

[root@master ~]# kubectl apply -f test.yml

deployment.apps/test created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 10s

# Note: kubectl create can only create a resource, not update it; kubectl apply can do both

[root@master ~]# vim test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 2 # only the replica count is changed
  selector:
    matchLabels:
      app: test
  # strategy: {}
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      - image: reg.timinglee.org/k8s/nginx:latest
        name: nginx
        #resources: {}
#status: {}

[root@master ~]# kubectl create -f test.yml

Error from server (AlreadyExists): error when creating "test.yml": deployments.apps "test" already exists

[root@master ~]# kubectl apply -f test.yml

deployment.apps/test configured

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 59s

test-7676f665c8-vsc69 1/1 Running 0 4s(2).资源类型

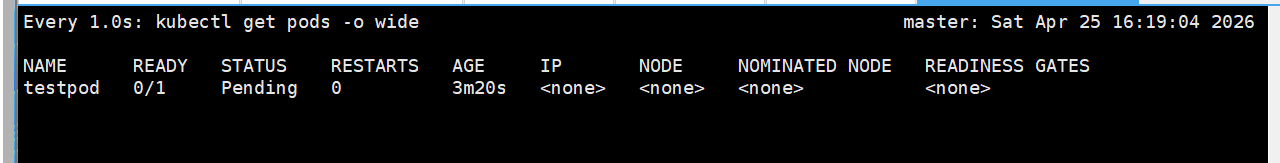

如果出现以下错误:

bash

[root@master ~]# kubectl -n timinglee get pods

NAME READY STATUS RESTARTS AGE

testpod 0/1 ErrImagePull 0 17s

you can investigate it as follows:

bash

kubectl -n timinglee get pods # check the Pod's current status

kubectl -n timinglee describe pod testpod # see the detailed cause of the error

kubectl -n timinglee logs testpod # view the container logs

node

bash

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane 4h37m v1.35.3

node1 Ready <none> 4h20m v1.35.3

node2 Ready <none> 4h19m v1.35.3

[root@master ~]# kubeadm token create --print-join-command # run the printed command on a node to join it to the cluster

kubeadm join 172.25.254.100:6443 --token p680ky.5har9twdw68nu2pp --discovery-token-ca-cert-hash sha256:1c4d35623b9aab09984bb8a62383a85e38fea77d530b10b69a52151b50efce56

namespace

bash

[root@master ~]# kubectl get namespaces # list all namespaces in the cluster

NAME STATUS AGE

default Active 5h45m

kube-flannel Active 4h59m

kube-node-lease Active 5h45m

kube-public Active 5h45m

kube-system Active 5h45m

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 76m

test-7676f665c8-vsc69 1/1 Running 0 75m

[root@master ~]# kubectl -n kube-flannel get pods # check the status of the Flannel network plugin

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-5p8w4 1/1 Running 0 4h59m

kube-flannel-ds-d6545 1/1 Running 0 4h59m

kube-flannel-ds-kmcp5 1/1 Running 0 4h59m

[root@master ~]# kubectl create namespace timinglee

namespace/timinglee created

[root@master ~]# kubectl get namespaces

NAME STATUS AGE

default Active 5h54m

kube-flannel Active 5h8m

kube-node-lease Active 5h54m

kube-public Active 5h54m

kube-system Active 5h54m

timinglee Active 12s

[root@master ~]# kubectl -n timinglee run testpod --image reg.timinglee.org/k8s/nginx:latest

pod/testpod created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 93m

test-7676f665c8-vsc69 1/1 Running 0 92m

[root@master ~]# kubectl -n timinglee get pods

NAME READY STATUS RESTARTS AGE

testpod 1/1 Running 0 28s

(3) kubectl commands

bash

[root@master ~]# cat test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 4
  selector:
    matchLabels:
      app: test
  # strategy: {}
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      # - image: reg.timinglee.org/k8s/nginx:latest
      - image: reg.timinglee.org/k8s/myapp:v1
        #name: nginx
        name: myapp
        #resources: {}
#status: {}

[root@master ~]# kubectl -n timinglee delete pods testpod # remove testpod to free up resources

pod "testpod" deleted from timinglee namespace

[root@master ~]# kubectl apply -f test.yml

[root@master ~]# kubectl get deployments.apps

NAME READY UP-TO-DATE AVAILABLE AGE

test 2/2 2 2 122m

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 123m

test-7676f665c8-vsc69 1/1 Running 0 122m

[root@master ~]# kubectl edit deployments.apps test

replicas: 4

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-hdv67 1/1 Running 0 10s

test-7676f665c8-kvwvt 1/1 Running 0 10s

test-7676f665c8-qvk4m 1/1 Running 0 125m

test-7676f665c8-vsc69 1/1 Running 0 124m

[root@master ~]# kubectl edit deployments.apps test

deployment.apps/test edited

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-qvk4m 1/1 Running 0 150m

[root@master ~]# kubectl edit deployments.apps test

deployment.apps/test edited

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-7676f665c8-4qqkp 1/1 Running 0 2s

test-7676f665c8-fwn2x 1/1 Running 0 2s

test-7676f665c8-mrl85 1/1 Running 0 2s

test-7676f665c8-qvk4m 1/1 Running 0 151m

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

test-7676f665c8-4qqkp 1/1 Running 0 14s 10.244.2.6 node2 <none> <none>

test-7676f665c8-fwn2x 1/1 Running 0 14s 10.244.2.7 node2 <none> <none>

test-7676f665c8-mrl85 1/1 Running 0 14s 10.244.1.13 node1 <none> <none>

test-7676f665c8-qvk4m 1/1 Running 0 151m 10.244.1.8 node1 <none> <none>

[root@master ~]# kubectl delete service test

service "test" deleted from default namespace

[root@master ~]# kubectl expose deployment test --port 80 --target-port 80

service/test exposed

[root@master ~]# kubectl describe service test

Name: test

Namespace: default

Labels: app=test

Annotations: <none>

Selector: app=test

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.102.57.191

IPs: 10.102.57.191

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.1.15:80,10.244.2.8:80,10.244.1.16:80 + 1 more...

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

[root@master ~]# curl 10.102.57.191

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ~]# curl 10.102.57.191/hostname.html

test-664bc5f8f8-7g2d8

[root@master ~]# kubectl logs pods/test-664bc5f8f8-7g2d8

10.244.0.0 - - [04/Apr/2026:10:57:01 +0000] "GET /hostname.html HTTP/1.1" 200 22 "-" "curl/7.76.1" "-"

attach

bash

[root@master ~]# kubectl run testpod -it --image=reg.timinglee.org/k8s/myapp:v1 -- /bin/sh

All commands and output from this session will be recorded in container logs, including credentials and sensitive information passed through the command prompt.

If you don't see a command prompt, try pressing enter.

/ #

/ #

/ # Session ended, resume using 'kubectl attach testpod -c testpod -i -t' command when the pod is running

[root@master ~]# kubectl attach testpod -it

All commands and output from this session will be recorded in container logs, including credentials and sensitive information passed through the command prompt.

If you don't see a command prompt, try pressing enter.

/ #

/ #

/ # Session ended, resume using 'kubectl attach testpod -c testpod -i -t' command when the pod is running

[root@master ~]# kubectl exec -it testpod -c testpod -- /bin/sh

/ #

/ #

/ # exec attach failed: error on attach stdin: read escape sequence

command terminated with exit code 126

[root@master ~]# kubectl exec -it testpod -c testpod -- /bin/sh

/ # ls

bin dev etc home lib media mnt proc root run sbin srv sys tmp usr var

/ # exec attach failed: error on attach stdin: read escape sequence

command terminated with exit code 126

Scaling

Tip: at first you may see the following errors:

bash

[root@master ~]# kubectl get pods

E0405 10:03:59.231503 4255 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

E0405 10:03:59.232155 4255 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

E0405 10:03:59.233963 4255 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

E0405 10:03:59.234521 4255 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

E0405 10:03:59.236080 4255 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

The connection to the server localhost:8080 was refused - did you specify the right host or port?

[root@master ~]# kubectl apply -f test.yml

error: error validating "test.yml": error validating data: failed to download openapi: Get "http://localhost:8080/openapi/v2?timeout=32s": dial tcp [::1]:8080: connect: connection refused; if you choose to ignore these errors, turn validation off with --validate=false

Solution:

bash

[root@master ~]# source ~/.bash_profile
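The `localhost:8080` errors above usually mean that kubectl found no kubeconfig and fell back to its built-in default address. Sourcing `~/.bash_profile` only helps if that file exports `KUBECONFIG`, which this walkthrough does not show. A minimal sketch of an explicit check, assuming the kubeadm default admin kubeconfig at `/etc/kubernetes/admin.conf` (the helper name `use_kubeconfig` is hypothetical):

```shell
# Hypothetical helper: point kubectl at a kubeconfig file if it is readable.
# Assumption: kubeadm's default admin kubeconfig path is used when no argument is given.
use_kubeconfig() {
  path="${1:-/etc/kubernetes/admin.conf}"
  if [ -r "$path" ]; then
    export KUBECONFIG="$path"
    echo "KUBECONFIG=$KUBECONFIG"
  else
    echo "kubeconfig not readable: $path" >&2
    return 1
  fi
}

# To persist the setting for future login shells (run once, as root):
#   echo 'export KUBECONFIG=/etc/kubernetes/admin.conf' >> ~/.bash_profile
```

Calling `use_kubeconfig` with no argument in the current shell and then rerunning `kubectl get pods` is one way to confirm whether a missing kubeconfig was the cause.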

bash

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-664bc5f8f8-5cj42 1/1 Running 1 (35m ago) 15h

testpod 1/1 Running 1 (35m ago) 14h

[root@master ~]# vim test.yml

[root@master ~]# kubectl edit deployments.apps test

deployment.apps/test edited

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-664bc5f8f8-5cj42 1/1 Running 1 (37m ago) 15h

test-664bc5f8f8-lxzt4 1/1 Running 0 2s

test-664bc5f8f8-p46tl 1/1 Running 0 2s

test-664bc5f8f8-v5zfq 1/1 Running 0 2s

testpod 1/1 Running 1 (37m ago) 14h

[root@master ~]# kubectl scale deployment test --replicas 6

deployment.apps/test scaled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-664bc5f8f8-5cj42 1/1 Running 1 (37m ago) 15h

test-664bc5f8f8-6lznm 1/1 Running 0 2s

test-664bc5f8f8-lxzt4 1/1 Running 0 34s

test-664bc5f8f8-p46tl 1/1 Running 0 34s

test-664bc5f8f8-pvt8f 1/1 Running 0 2s

test-664bc5f8f8-v5zfq 1/1 Running 0 34s

testpod 1/1 Running 1 (37m ago) 14h

[root@master ~]# kubectl scale deployment test --replicas 1

deployment.apps/test scaled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-664bc5f8f8-5cj42 1/1 Running 1 (37m ago) 15h

testpod 1/1 Running 1 (37m ago) 14h

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

test-664bc5f8f8-5cj42 1/1 Running 1 (37m ago) 15h app=test,pod-template-hash=664bc5f8f8

testpod 1/1 Running 1 (37m ago) 14h run=testpod

[root@master ~]# kubectl label pods testpod name=lee

pod/testpod labeled

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

test-664bc5f8f8-5cj42 1/1 Running 1 (38m ago) 15h app=test,pod-template-hash=664bc5f8f8

testpod 1/1 Running 1 (38m ago) 14h name=lee,run=testpod

[root@master ~]# kubectl label pods testpod name-

pod/testpod unlabeled

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

test-664bc5f8f8-5cj42 1/1 Running 1 (38m ago) 15h app=test,pod-template-hash=664bc5f8f8

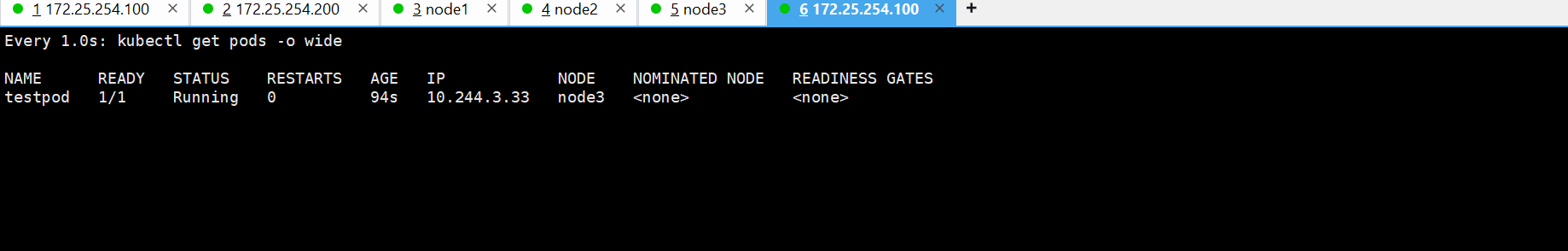

testpod 1/1 Running 1 (38m ago) 14h run=testpod(4).Pod应用

自助式管理pod

bash

[root@master ~]# cat test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 4
  selector:
    matchLabels:
      app: test
  # strategy: {}
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      # - image: reg.timinglee.org/k8s/nginx:latest
      - image: reg.timinglee.org/library/myapp-v2:latest
        #name: nginx
        name: myapp-v2
        #resources: {}
#status: {}

[root@master pod]# kubectl run myappv2 --image myapp:v2 --port 80

pod/myappv2 created

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ContainerCreating 0 8s # being created

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ErrImagePull 0 20s # image pull failed

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ImagePullBackOff 0 3m48s # backing off before retrying the image pull

[root@master ~]# kubectl run myappv2 --image=reg.timinglee.org/library/myapp-v2:latest --port=80

pod/myappv2 created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 1/1 Running 0 55s

[root@master ~]# kubectl delete pods myappv2

pod "myappv2" deleted from default namespace

[root@master ~]# kubectl get pods

No resources found in default namespace.

Tip: remember to push the images to Harbor first

bash

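# Note: this tags the v2 image as myapp-v1, so both repositories hold the same image (identical digests below) and myapp-v1 reports "Version: v2"; tag a v1 image here instead if the two versions should differ

[root@harbor ~]# docker tag timinglee/myapp:v2 reg.timinglee.org/library/myapp-v1:latest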

[root@harbor ~]# docker push reg.timinglee.org/library/myapp-v1:latest

The push refers to repository [reg.timinglee.org/library/myapp-v1]

05a9e65e2d53: Mounted from library/myapp

68695a6cfd7d: Mounted from library/myapp

c1dc81a64903: Mounted from library/myapp

8460a579ab63: Mounted from library/myapp

d39d92664027: Mounted from library/myapp

latest: digest: sha256:5f4afc8302ade316fc47c99ee1d41f8ba94dbe7e3e7747dd87215a15429b9102 size: 1362

[root@harbor ~]# docker tag timinglee/myapp:v2 reg.timinglee.org/library/myapp-v2:latest

[root@harbor ~]# docker push reg.timinglee.org/library/myapp-v2:latest

The push refers to repository [reg.timinglee.org/library/myapp-v2]

05a9e65e2d53: Mounted from library/myapp-v1

68695a6cfd7d: Mounted from library/myapp-v1

c1dc81a64903: Mounted from library/myapp-v1

8460a579ab63: Mounted from library/myapp-v1

d39d92664027: Mounted from library/myapp-v1

latest: digest: sha256:5f4afc8302ade316fc47c99ee1d41f8ba94dbe7e3e7747dd87215a15429b9102 size: 1362

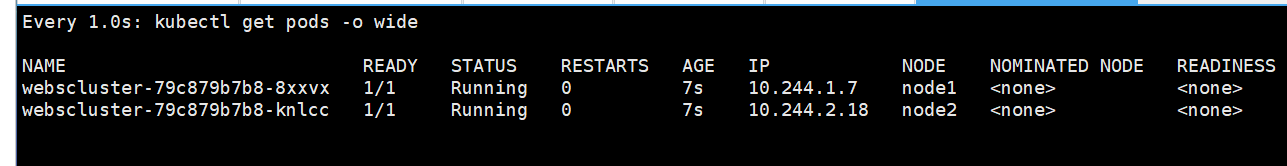

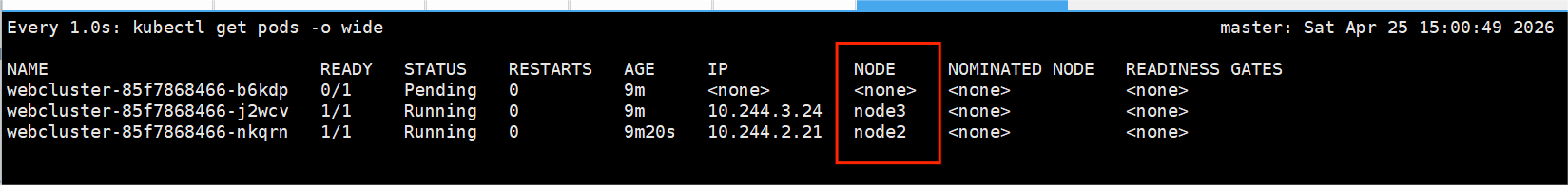

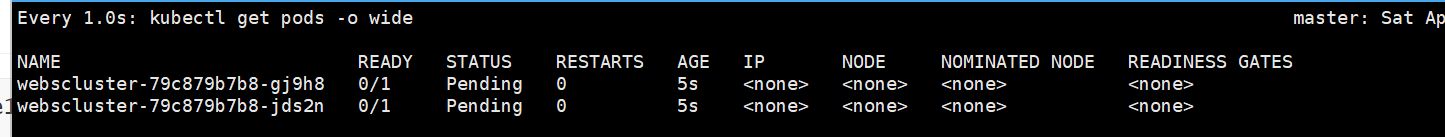

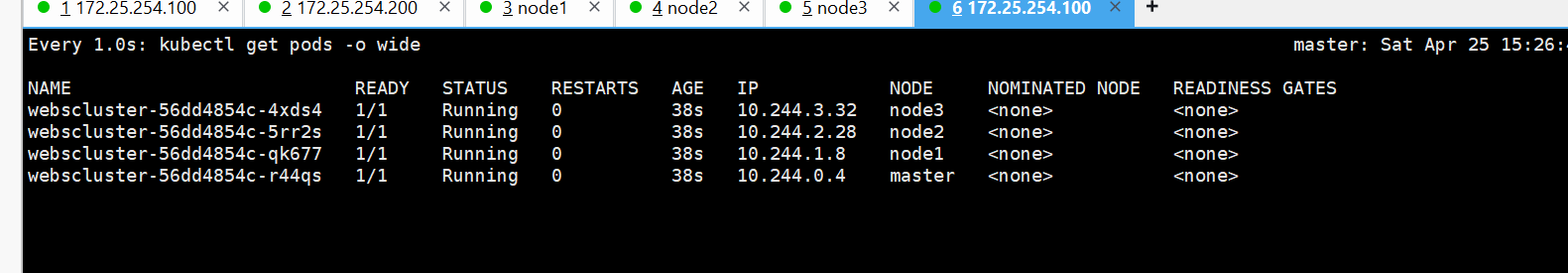

Managing Pods with a controller

bash

# Create a Deployment named webcluster

[root@master ~]# kubectl create deployment webcluster --image reg.timinglee.org/library/myapp-v2:latest --replicas 1

deployment.apps/webcluster created

# List all Deployments with details (images, labels, running status)

[root@master ~]# kubectl get deployment.apps -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

webcluster 1/1 1 1 27s myapp-v2 reg.timinglee.org/library/myapp-v2:latest app=webcluster

[root@master ~]# kubectl scale deployment webcluster --replicas 2

deployment.apps/webcluster scaled

[root@master ~]# kubectl get deployment.apps -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

webcluster 2/2 2 2 68s myapp-v2 reg.timinglee.org/library/myapp-v2:latest app=webcluster

[root@master ~]# kubectl scale deployment webcluster --replicas 1

deployment.apps/webcluster scaled

[root@master ~]# kubectl get deployment.apps -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

webcluster 1/1 1 1 81s myapp-v2 reg.timinglee.org/library/myapp-v2:latest app=webcluster

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

webcluster-585458b5b7-tdfvq 1/1 Running 0 114s

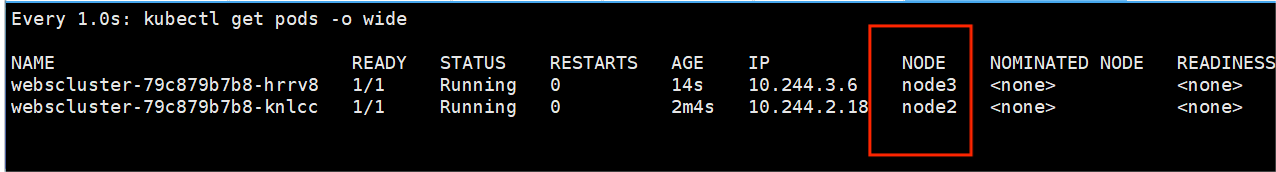

# Remove the app label from this Pod

[root@master ~]# kubectl label pods webcluster-585458b5b7-tdfvq app-

pod/webcluster-585458b5b7-tdfvq unlabeled

[root@master ~]# kubectl get pods

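# Why are there two Pods? Removing the label means the Deployment's selector no longer matches this Pod,
# so the Deployment treats the Pod as missing and immediately creates a new one.
# That is why there are now 2 Pods.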

NAME READY STATUS RESTARTS AGE

webcluster-585458b5b7-kw5z5 1/1 Running 0 10s

webcluster-585458b5b7-tdfvq 1/1 Running 0 5m58s

[root@master ~]# kubectl label pods webcluster-585458b5b7-tdfvq app=webcluster

pod/webcluster-585458b5b7-tdfvq labeled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

webcluster-585458b5b7-tdfvq 1/1 Running 0 6m30s
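The behaviour above follows from how a controller decides which Pods belong to it: it counts the Pods whose labels satisfy its selector and compares that count with the desired replicas. A toy sketch in plain shell, with no cluster involved (`count_matching` is a hypothetical helper, and real selectors support more than a single `key=value` match):

```shell
# Count label sets (comma-separated key=value lists) that contain the selector.
count_matching() {
  selector="$1"; shift
  n=0
  for labels in "$@"; do
    case ",$labels," in
      *",$selector,"*) n=$((n + 1)) ;;
    esac
  done
  echo "$n"
}

desired=1
# Before: the Pod still carries app=webcluster.
before=$(count_matching app=webcluster "app=webcluster,pod-template-hash=585458b5b7")
# After `kubectl label pods ... app-`: the label is gone, so nothing matches.
after=$(count_matching app=webcluster "pod-template-hash=585458b5b7")
echo "matching before: $before, after: $after, pods to create: $((desired - after))"
```

When the label is restored, two Pods match a selector that wants one, so the surplus Pod is removed, which is what the final `kubectl get pods` shows.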

#更新版本

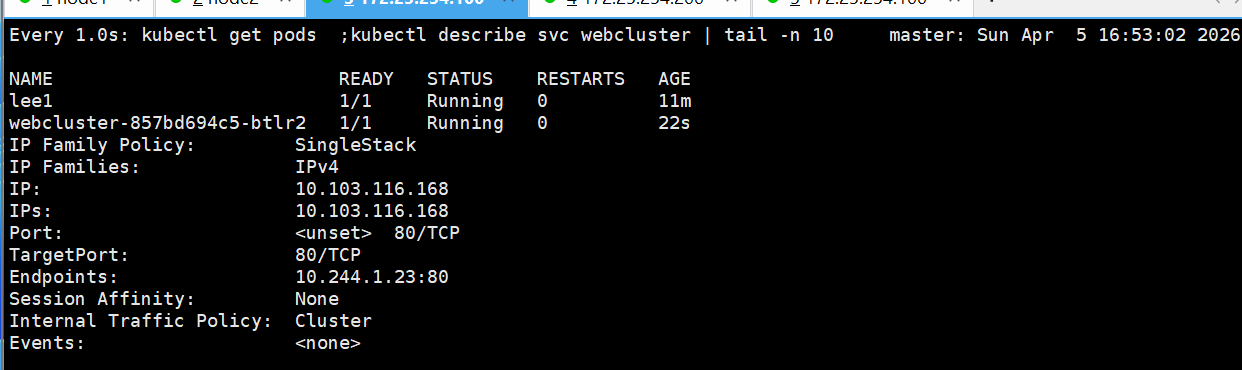

#给 webcluster 这个部署开一个入口(Service)

[root@master ~]# kubectl expose deployment webcluster --port 80 --target-port 80

service/webcluster exposed

[root@master ~]# kubectl describe svc webcluster | tail -n 10

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.103.116.168

IPs: 10.103.116.168

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.1.13:80

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

[root@master ~]# curl 10.103.116.168

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

# Update the image: switch to v1

[root@master ~]# kubectl set image deployments/webcluster myapp-v2=reg.timinglee.org/library/myapp-v1:latest

deployment.apps/webcluster image updated

[root@master ~]# curl 10.103.116.168

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

[root@master ~]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION CHANGE-CAUSE

1 <none>

2 <none>

[root@master ~]# kubectl rollout undo deployment webcluster --to-revision 1

deployment.apps/webcluster rolled back

[root@master ~]# curl 10.103.116.168

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>利用yaml文件部署应用

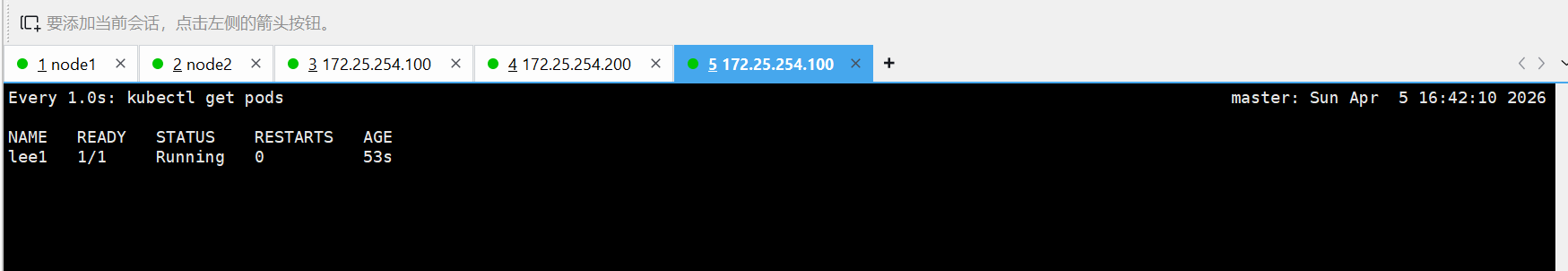

#运行单个容器

bash

[root@master ~]# kubectl run lee1 --image reg.timinglee.org/library/myapp-v1:latest --dry-run=client -o yaml > 1test.yml

[root@master ~]# vim 1test.yml

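# API version; Pods use v1
apiVersion: v1
# Resource type: this creates a Pod
kind: Pod
# Metadata: the Pod's name and labels
metadata:
  # Labels, used for filtering and matching
  labels:
    run: lee1
  # Pod name: lee1
  name: lee1
# Spec: the containers in the Pod
spec:
  # Container list
  containers:
  # First container
  - image: reg.timinglee.org/library/myapp-v1:latest # image path in the private registry
    name: lee1 # container name: lee1
    resources: {} # resource limits (empty when none are configured)
  dnsPolicy: ClusterFirst # DNS policy: cluster DNS first (the default)
  restartPolicy: Always # restart policy: restart the container whenever it exits
# Status field: not written at creation time; Kubernetes fills it in
status: {}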

[root@master ~]# kubectl apply -f 1test.yml

pod/lee1 created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 1/1 Running 0 7s

webcluster-585458b5b7-7v864 1/1 Running 0 3h5m

[root@master ~]# kubectl delete deployments.app webcluster

deployment.apps "webcluster" deleted from default namespace

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 1/1 Running 0 82s

[root@master ~]# kubectl describe pods

Name: lee1

Namespace: default

Priority: 0

Service Account: default

Node: node1/172.25.254.10

Start Time: Sun, 05 Apr 2026 14:56:03 +0800

Labels: run=lee1

Annotations: <none>

Status: Running

IP: 10.244.1.17

IPs:

IP: 10.244.1.17

Containers:

lee1:

Container ID: docker://77f218983d90d2b5a2b2137fa05bd0dee1d8f92b46646d514c4022054c4091b9

Image: reg.timinglee.org/library/myapp-v1:latest

Image ID: docker-pullable://reg.timinglee.org/library/myapp-v1@sha256:5f4afc8302ade316fc47c99ee1d41f8ba94dbe7e3e7747dd87215a15429b9102

Port: <none>

Host Port: <none>

State: Running

Started: Sun, 05 Apr 2026 14:56:04 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-xw4bb (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-xw4bb:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

Optional: false

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 93s default-scheduler Successfully assigned default/lee1 to node1

Normal Pulling 92s kubelet spec.containers{lee1}: Pulling image "reg.timinglee.org/library/myapp-v1:latest"

Normal Pulled 92s kubelet spec.containers{lee1}: Successfully pulled image "reg.timinglee.org/library/myapp-v1:latest" in 66ms (66ms including waiting). Image size: 15504059 bytes.

Normal Created 92s kubelet spec.containers{lee1}: Container created

Normal Started 92s kubelet spec.containers{lee1}: Container started

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 1/1 Running 0 111s

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 2m3s 10.244.1.17 node1 <none> <none>

[root@master ~]# kubectl delete -f 1test.yml

pod "lee1" deleted from default namespace

[root@master ~]# kubectl get pods

No resources found in default namespace.

# Run multiple containers

bash

[root@master ~]# cp 1test.yml 2test.yml

[root@master ~]# vim 2test.yml

[root@master ~]# kubectl apply -f 2test.yml

pod/lee1 created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 2/2 Running 0 6s

[root@master ~]# cat 2test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: reg.timinglee.org/library/myapp-v1:latest
    name: lee1
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

# Understanding the shared network inside a Pod

First push busyboxplus:latest to Harbor from the host 172.25.254.200.

bash

[root@harbor xia]# ls

busyboxplus.tar.gz

[root@harbor xia]# mv busyboxplus.tar.gz /root

[root@harbor xia]# cd

[root@harbor ~]# docker load -i busyboxplus.tar.gz

5f70bf18a086: Loading layer [==================================================>] 1.024kB/1.024kB

075a34aac01b: Loading layer [==================================================>] 13.43MB/13.43MB

774600fa57ae: Loading layer [==================================================>] 6.144kB/6.144kB

Loaded image: busyboxplus:latest

[root@harbor ~]# docker tag busyboxplus:latest reg.timinglee.org/library/busyboxplus:latest

[root@harbor ~]# docker push reg.timinglee.org/library/busyboxplus:latest

The push refers to repository [reg.timinglee.org/library/busyboxplus]

5f70bf18a086: Pushed

774600fa57ae: Pushed

075a34aac01b: Pushed

latest: digest: sha256:9d1c242c1fd588a1b8ec4461d33a9ba08071f0cc5bb2d50d4ca49e430014ab06 size: 1353

bash

[root@master ~]# cp 2test.yml 3test.yml

[root@master ~]# vim 3test.yml

[root@master ~]# cat 3test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: reg.timinglee.org/library/myapp-v1:latest
    name: lee1
  - image: busyboxplus:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

[root@master ~]# kubectl apply -f 3test.yml

pod/lee1 configured

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 2/2 Running 0 11m

[root@master ~]# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

/ # curl localhost

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

/ # exec attach failed: error on attach stdin: read escape sequence

command terminated with exit code 126

# Port mapping

bash

[root@master ~]# cp 1test.yml 4test.yml

[root@master ~]# vim 4test.yml

[root@master ~]# cat 4test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: reg.timinglee.org/library/myapp-v1:latest
    name: myapp-v1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

[root@master ~]# kubectl apply -f 4test.yml

pod/lee1 created

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 17s 10.244.1.20 node1 <none> <none>

[root@master ~]# curl 172.25.254.10

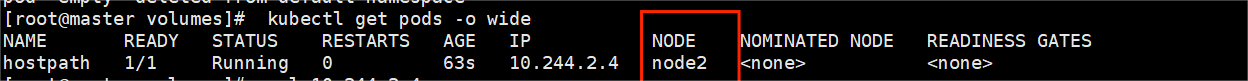

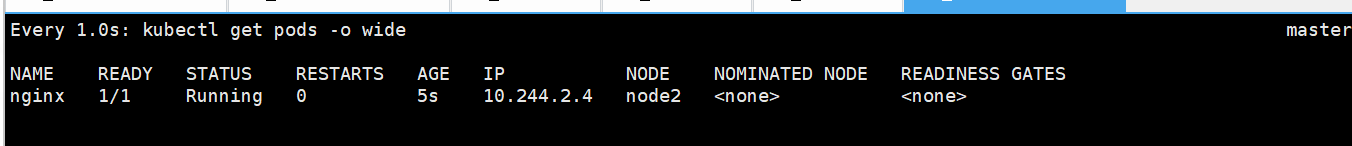

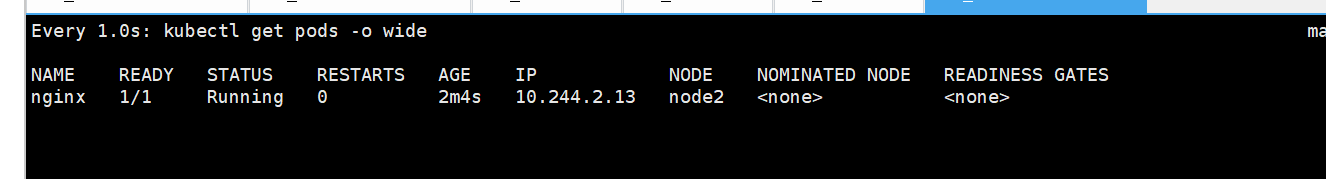

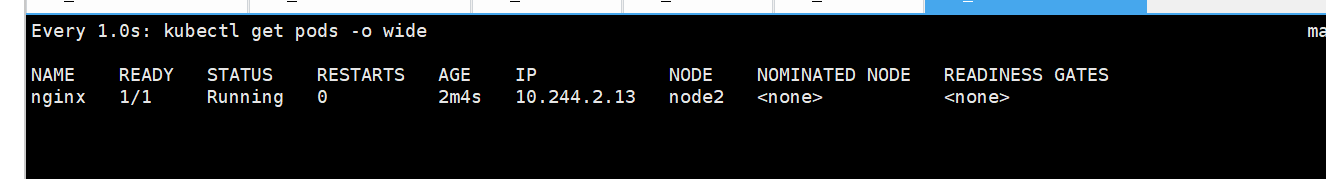

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>#选择运行节点

bash

[root@master ~]# cp 4test.yml 5test.yml

[root@master ~]# vim 5test.yml

[root@master ~]# cat 5test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  nodeSelector:
    kubernetes.io/hostname: node2
  containers:
  - image: reg.timinglee.org/library/myapp-v1:latest
    name: myapp-v1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

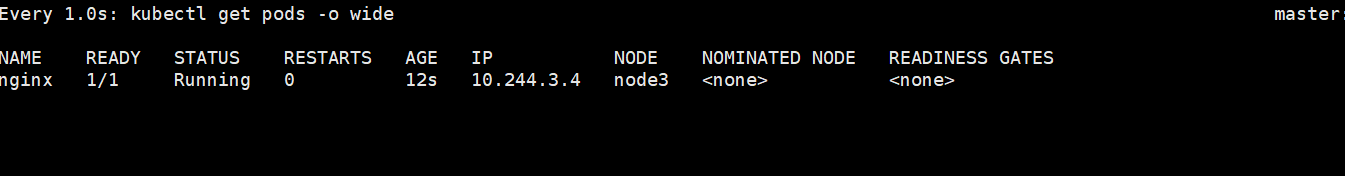

[root@master ~]# kubectl apply -f 5test.yml

pod/lee1 created

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 22s 10.244.2.13 node2 <none> <none>

The Pod now runs on node2; previously it ran on node1.

# Sharing the host network

bash

[root@master ~]# cp 5test.yml 6test.yml

[root@master ~]# vim 6test.yml

[root@master ~]# cat 6test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  hostNetwork: true
  nodeSelector:
    kubernetes.io/hostname: node2
  containers:
  - image: reg.timinglee.org/library/busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 1000

[root@master ~]# kubectl apply -f 6test.yml

pod/lee1 created

[root@master ~]# kubectl exec -it pods/lee1 -c reg.timinglee.org/library/busybox:latest -- /bin/sh

Error from server (BadRequest): container reg.timinglee.org/library/busybox:latest is not valid for pod lee1

[root@master ~]# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq qlen 1000

link/ether 00:0c:29:a7:9f:74 brd ff:ff:ff:ff:ff:ff

inet 172.25.254.20/24 brd 172.25.254.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fea7:9f74/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue

link/ether 36:ef:89:6a:8f:43 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue

link/ether 72:1a:37:ec:de:df brd ff:ff:ff:ff:ff:ff

inet 10.244.2.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::701a:37ff:feec:dedf/64 scope link

valid_lft forever preferred_lft forever

5: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue qlen 1000

link/ether 76:38:51:2a:bc:7c brd ff:ff:ff:ff:ff:ff

inet 10.244.2.1/24 brd 10.244.2.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::7438:51ff:fe2a:bc7c/64 scope link

valid_lft forever preferred_lft forever

6: veth5ca446a6@eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master cni0

link/ether 26:af:20:83:e7:47 brd ff:ff:ff:ff:ff:ff

inet6 fe80::24af:20ff:fe83:e747/64 scope link

valid_lft forever preferred_lft forever

7: veth06041591@eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master cni0

link/ether c2:31:7e:93:cb:56 brd ff:ff:ff:ff:ff:ff

inet6 fe80::c031:7eff:fe93:cb56/64 scope link

valid_lft forever preferred_lft forever

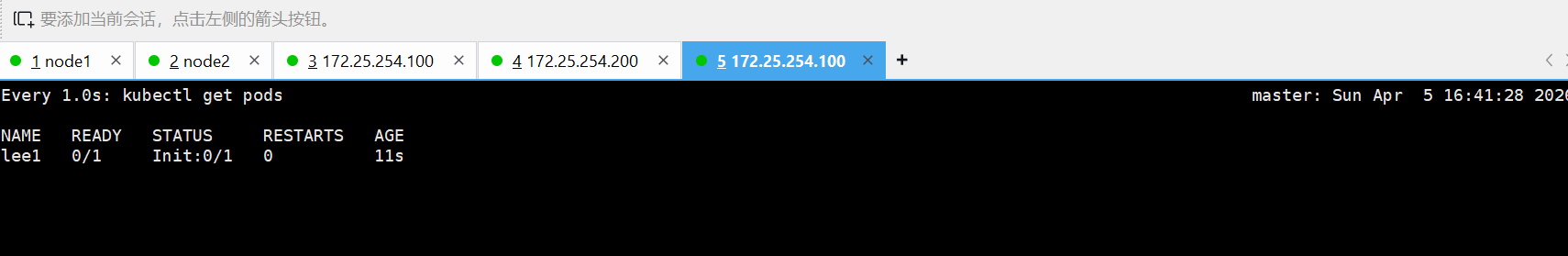

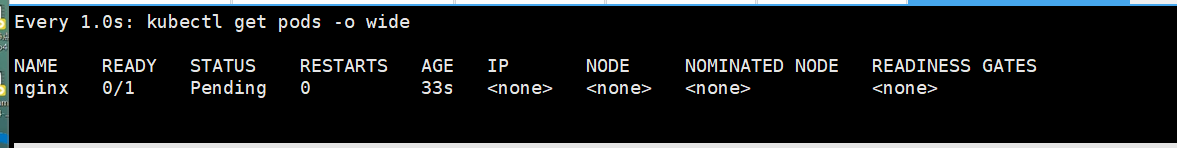

/ # (5).pod的生命周期

init 容器

bash

[root@master ~]# cp 6test.yml init.yml

[root@master ~]# vim init.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  initContainers:
  - name: init-myservice
    image: busybox
    command: ["sh","-c","until test -e /testfile;do echo waiting for myservice; sleep 2;done"]
  containers:
  - image: reg.timinglee.org/library/myapp-v1
    name: myapp-v1

[root@master ~]# kubectl apply -f init.yml

pod/lee1 created

Open a watch in another terminal on the same machine

bash

[root@master ~]# watch -n 1 kubectl get pods

Watch output before the change

bash

[root@master ~]# kubectl exec -it pods/lee1 -c init-myservice -- /bin/sh

/ # touch /testfile

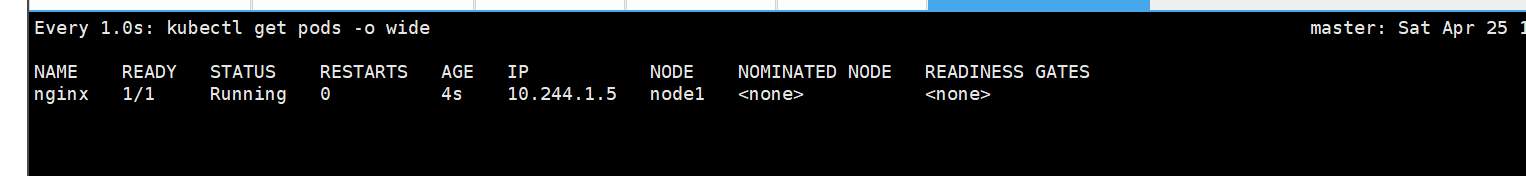

/ # command terminated with exit code 137监控后的变化

livenessprobe(存活探针)

bash

[root@master ~]# kubectl create deployment webcluster --image myapp-v1 --replicas 1 --dry-run=client -o yaml > liveness.yml

[root@master ~]# kubectl apply -f liveness.yml

deployment.apps/webcluster created

[root@master ~]# watch -n 1 "kubectl get pods ;kubectl describe svc webcluster | tail -n 10"

bash

[root@master ~]# kubectl exec -it pods/webcluster-857bd694c5-btlr2 -c myapp-v1 -- /bin/sh

/ #

/ #

/ #

/ #

/ #

/ #

/ # nginx -s stop

2026/04/05 08:57:34 [notice] 13#13: signal process started

/ # command terminated with exit code 137

[root@master ~]#
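The edits made to `liveness.yml` are not shown in the session above. As a sketch only, a liveness probe for this container could look like the following. The `tcpSocket` check on port 80, the timing values, the file name `liveness-sketch.yml`, and the full registry path are assumptions, not the author's manifest:

```shell
# Write an illustrative Deployment with a TCP liveness probe (assumed values).
cat > liveness-sketch.yml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 1
  selector:
    matchLabels:
      app: webcluster
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: reg.timinglee.org/library/myapp-v1:latest
        name: myapp-v1
        livenessProbe:
          tcpSocket:            # probe succeeds if port 80 accepts a connection
            port: 80
          initialDelaySeconds: 3
          periodSeconds: 3
          timeoutSeconds: 1
EOF
echo "wrote liveness-sketch.yml"
```

When a liveness probe keeps failing, kubelet kills the container and restarts it according to the Pod's restart policy, so the RESTARTS column in `kubectl get pods` increases. The file could be applied with `kubectl apply -f liveness-sketch.yml`.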

bash

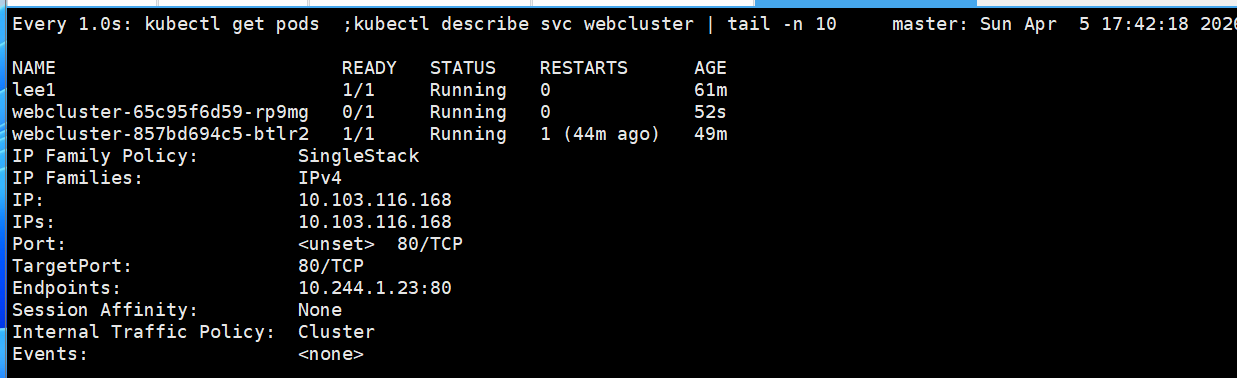

[root@master ~]# cp liveness.yml Readiness.yml

[root@master ~]# vim Readiness.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster # label identifying this application
  name: webcluster # the Deployment is named webcluster
spec:
  replicas: 1 # run 1 Pod replica
  selector: # selector: which Pods this Deployment manages
    matchLabels:
      app: webcluster
  template: # Pod template used to create Pods
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp-v1 # use the myapp-v1 image
        name: myapp-v1 # container name
        readinessProbe: # readiness check (the key part)
          httpGet: # check over HTTP
            path: /test.html # the page that must be reachable
            port: 80 # check port 80
          initialDelaySeconds: 1 # start checking 1 second after startup
          periodSeconds: 3 # check every 3 seconds
          timeoutSeconds: 1 # a check that exceeds 1 second counts as a failure
---
# Service used to reach the Pods
apiVersion: v1
kind: Service
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  ports:
  - port: 80 # the Service's own port
    protocol: TCP
    targetPort: 80 # forward to port 80 on the Pod
  selector:
    app: webcluster # select Pods labelled app=webcluster

[root@master ~]# kubectl apply -f Readiness.yml

deployment.apps/webcluster configured

bash

# Watch

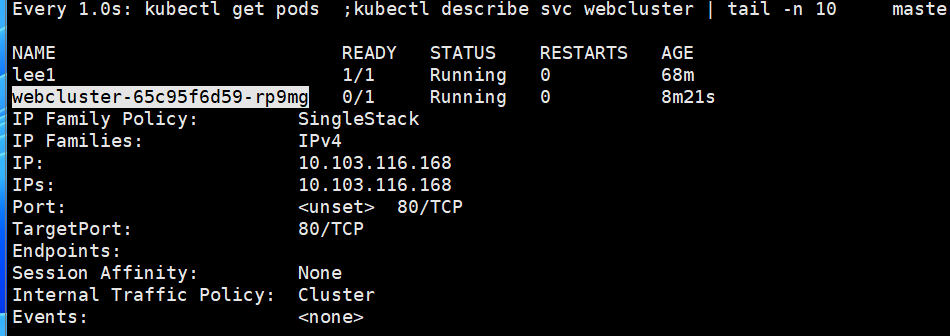

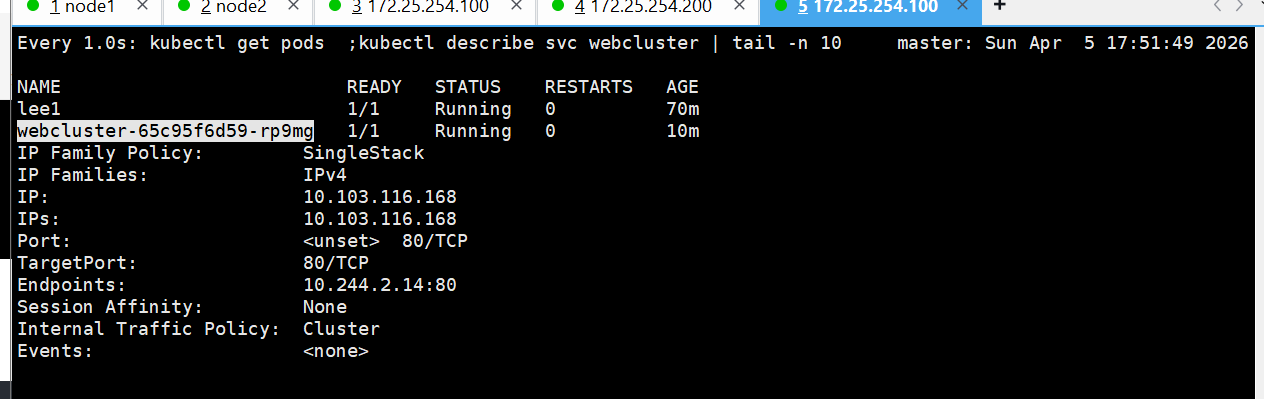

[root@master pod]# watch -n 1 "kubectl get pods ;kubectl describe svc webcluster | tail -n 10"

Watch output before the change

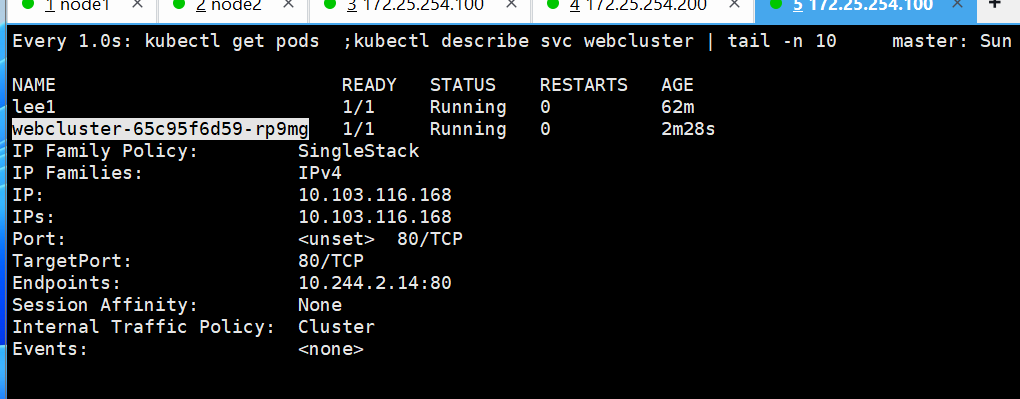

bash

[root@master ~]# kubectl exec -it pods/webcluster-65c95f6d59-rp9mg -c myapp-v1 -- /bin/sh

/ # echo timinglee > /usr/share/nginx/html/test.html

If /usr/share/nginx/html/test.html is deleted:

bash

[root@master ~]# kubectl exec -it pods/webcluster-65c95f6d59-rp9mg -c myapp-v1 -- /bin/sh

/ # echo timinglee > /usr/share/nginx/html/test.html

/ # rm -rf /usr/share/nginx/html/test.html

Restoring it:

bash

[root@master ~]# kubectl exec -it pods/webcluster-65c95f6d59-rp9mg -c myapp-v1 -- /bin/sh

/ # echo timinglee > /usr/share/nginx/html/test.html

/ # rm -rf /usr/share/nginx/html/test.html

/ # echo timinglee > /usr/share/nginx/html/test.html

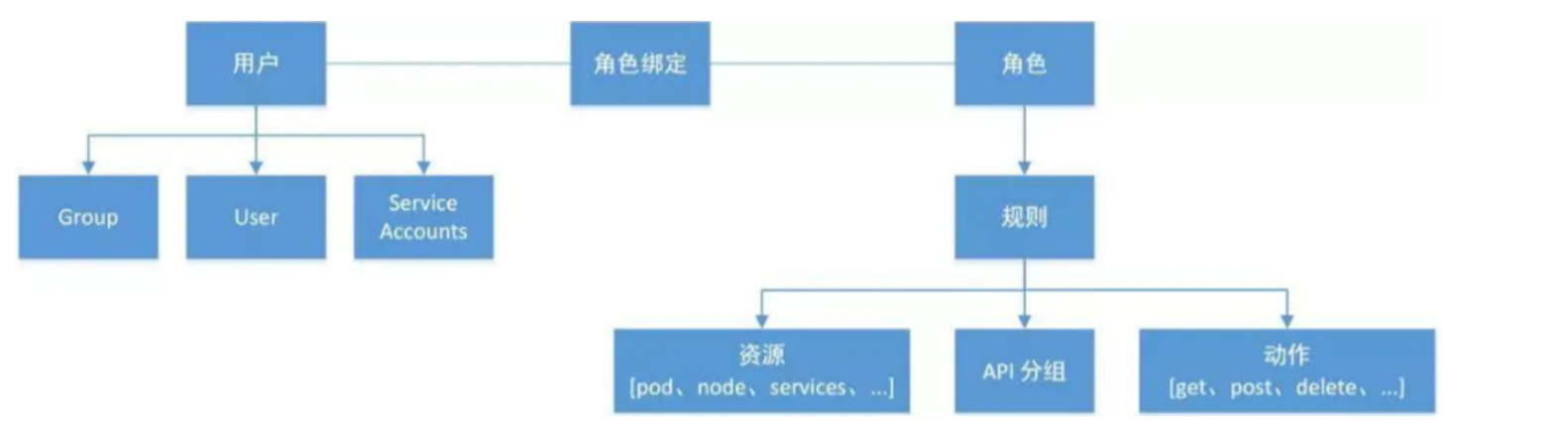
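The readiness behaviour observed above can be approximated with a small simulation. This is a minimal sketch, not kubelet's actual implementation: `simulate_readiness` is a hypothetical helper, and it assumes the Kubernetes defaults `failureThreshold: 3` and `successThreshold: 1`, which apply here because Readiness.yml does not set them. Each argument after the two thresholds is one probe result (1 for a successful fetch of /test.html, 0 for a failure).

```shell
#!/bin/sh
# simulate_readiness FAILURE_THRESHOLD SUCCESS_THRESHOLD RESULT...
# Hypothetical helper: prints the ready state after every probe result.
simulate_readiness() {
    failure_threshold=$1
    success_threshold=$2
    shift 2
    ready=no          # a container with a readiness probe starts as not ready
    fails=0
    successes=0
    for result in "$@"; do
        if [ "$result" -eq 1 ]; then
            successes=$((successes + 1))
            fails=0
            if [ "$successes" -ge "$success_threshold" ]; then
                ready=yes
            fi
        else
            fails=$((fails + 1))
            successes=0
            if [ "$fails" -ge "$failure_threshold" ]; then
                ready=no
            fi
        fi
        echo "probe=$result ready=$ready"
    done
}

# test.html exists for two probes, is deleted for three, then is restored
simulate_readiness 3 1 1 1 0 0 0 1
```

With `periodSeconds: 3`, three consecutive failures take roughly nine seconds, which is why the Pod does not leave the Service endpoints the instant test.html is removed.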

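9. Controllers in k8s

(1) What is a controller

A controller is another way to manage Pods.

- Standalone Pod: it is not recreated after it exits or is shut down unexpectedly

- Controller-managed Pod: the controller maintains the specified number of Pod replicas for as long as the controller exists

A Pod controller is an intermediate layer for managing Pods. You only tell the controller how many Pods of what kind you want; it creates Pods that satisfy the request and keeps every Pod in the state the user expects. If a Pod fails while running, the controller reschedules it according to the specified policy.

When a controller is created, the desired state is written to etcd. The controller watches, through the apiserver, both that desired state and the current state of the Pods; as soon as the two differ, it acts on its own to bring the current state back to the desired one.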
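The comparison of desired and current state can be sketched as a single reconcile step. This is only an illustration under stated assumptions: `reconcile` is a hypothetical function, and a real controller works on objects it watches through the apiserver rather than on two plain numbers.

```shell
#!/bin/sh
# reconcile DESIRED CURRENT
# Hypothetical helper: prints the action that would move CURRENT toward DESIRED.
reconcile() {
    desired=$1
    current=$2
    if [ "$current" -lt "$desired" ]; then
        echo "create $((desired - current))"
    elif [ "$current" -gt "$desired" ]; then
        echo "delete $((current - desired))"
    else
        echo "noop"
    fi
}

reconcile 4 3    # one Pod has crashed, so one must be created
reconcile 4 4    # desired and current state already match
reconcile 4 6    # two surplus Pods must be removed
```

A controller repeats this step continuously, which is why a deleted Pod reappears without any manual action.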

(2)控制器常用类型

| Controller | Purpose |
|---|---|
| Replication Controller | The original Pod controller; now deprecated and replaced by ReplicaSet |
| ReplicaSet | Ensures that the specified number of Pod replicas is running at any time |
| Deployment | Provides declarative updates for Pods and ReplicaSets |
| DaemonSet | Ensures that all (or the selected) nodes each run one copy of a Pod |
| StatefulSet | The workload API object used to manage stateful applications |
| Job | Runs a batch task once and ensures that one or more of its Pods terminate successfully |
| CronJob | Creates Jobs on a time-based schedule |
| HPA (Horizontal Pod Autoscaler) | Automatically adjusts the number of Pods of a workload such as a Deployment based on resource utilization, giving horizontal Pod autoscaling |

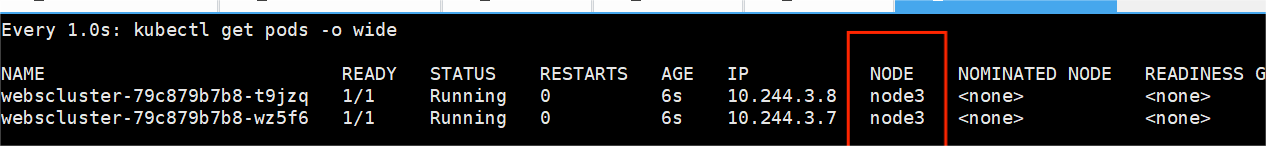
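For the HPA row, the scaling decision follows the documented rule desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The sketch below shows only that arithmetic; `hpa_desired` is a hypothetical helper, and it leaves out the tolerance band and the minReplicas/maxReplicas limits that a real HPA also applies.

```shell
#!/bin/sh
# hpa_desired CURRENT_REPLICAS CURRENT_METRIC TARGET_METRIC
# Hypothetical helper: integer ceiling of replicas * current / target.
hpa_desired() {
    current_replicas=$1
    current_metric=$2
    target_metric=$3
    echo $(( (current_replicas * current_metric + target_metric - 1) / target_metric ))
}

hpa_desired 4 90 60    # average CPU 90% against a 60% target: scale out
hpa_desired 4 30 60    # average CPU 30% against a 60% target: scale in
```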

(3) ReplicaSet controller

bash

[root@master ~]# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > repset.yml

[root@master ~]# vim repset.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 4                  # number of Pods to run
  selector:
    matchLabels:
      app: webcluster
#  strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: reg.timinglee.org/library/myapp:v1
        name: myapp

[root@master ~]# kubectl apply -f repset.yml

# Open a new shell to monitor the result

[root@master ~]# watch -n 1 kubectl get pods --show-labels

Every 1.0s: kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

webcluster-566bdd4bff-6znbd 1/1 Running 0 13m 10.244.1.3 node1 <none> <none>

webcluster-566bdd4bff-9wnx9 1/1 Running 0 13m 10.244.2.5 node2 <none> <none>

webcluster-566bdd4bff-rxj25 1/1 Running 0 5m52s 10.244.2.6 node2 <none> <none>

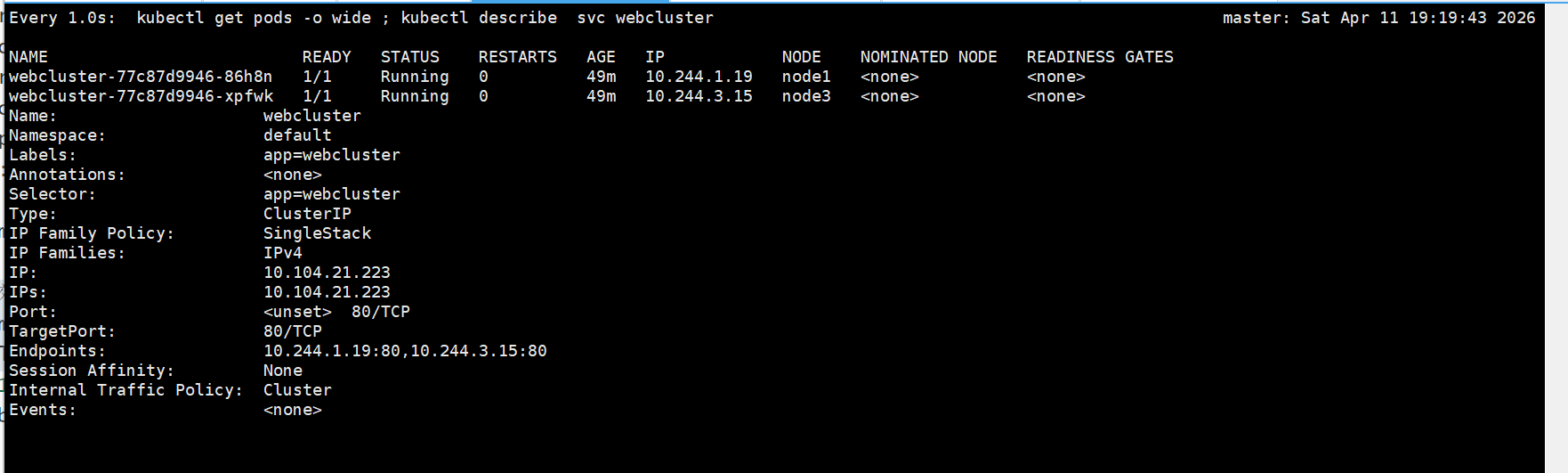

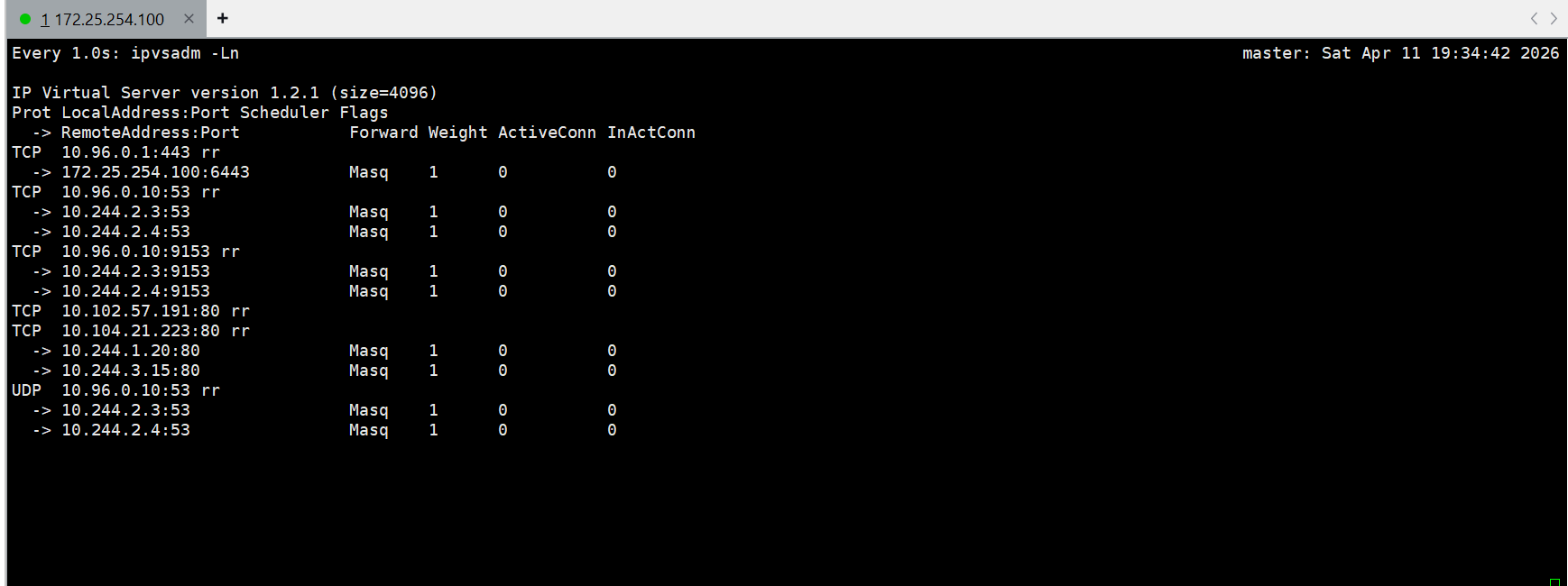

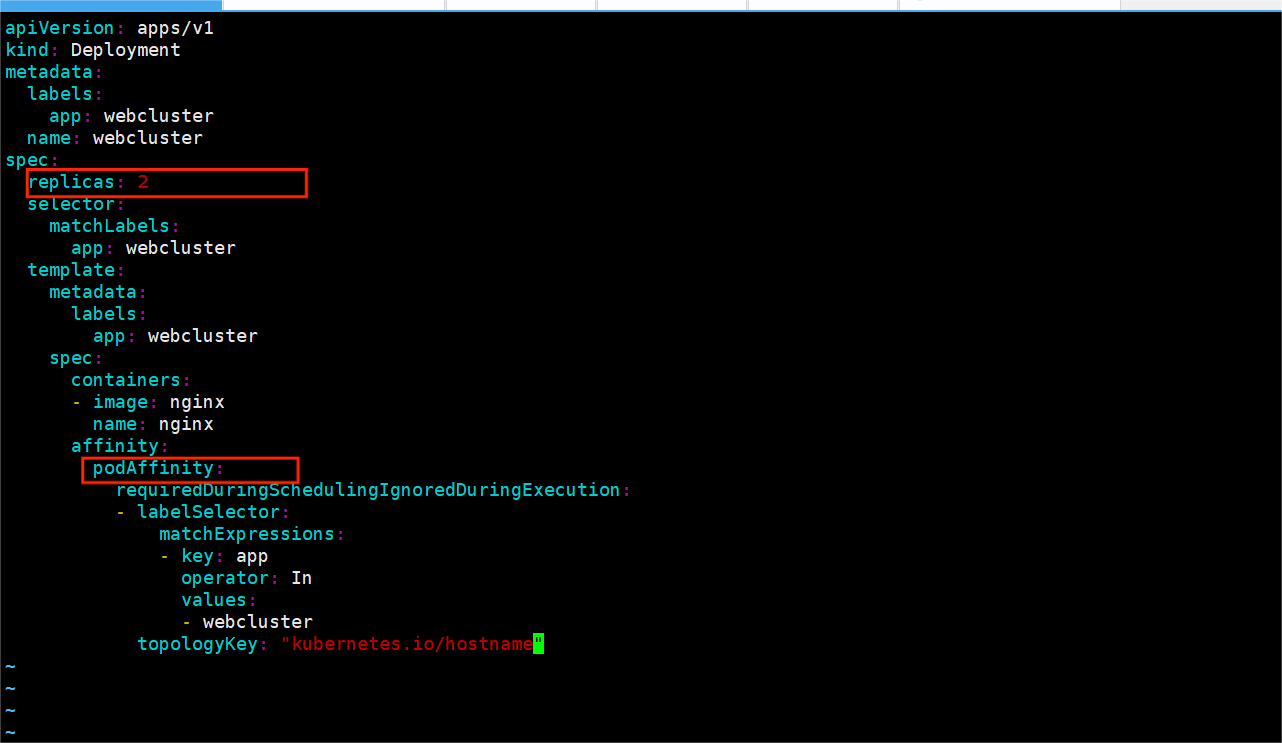

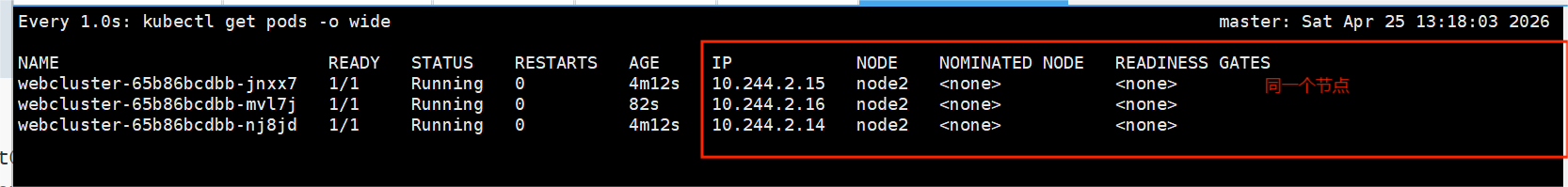

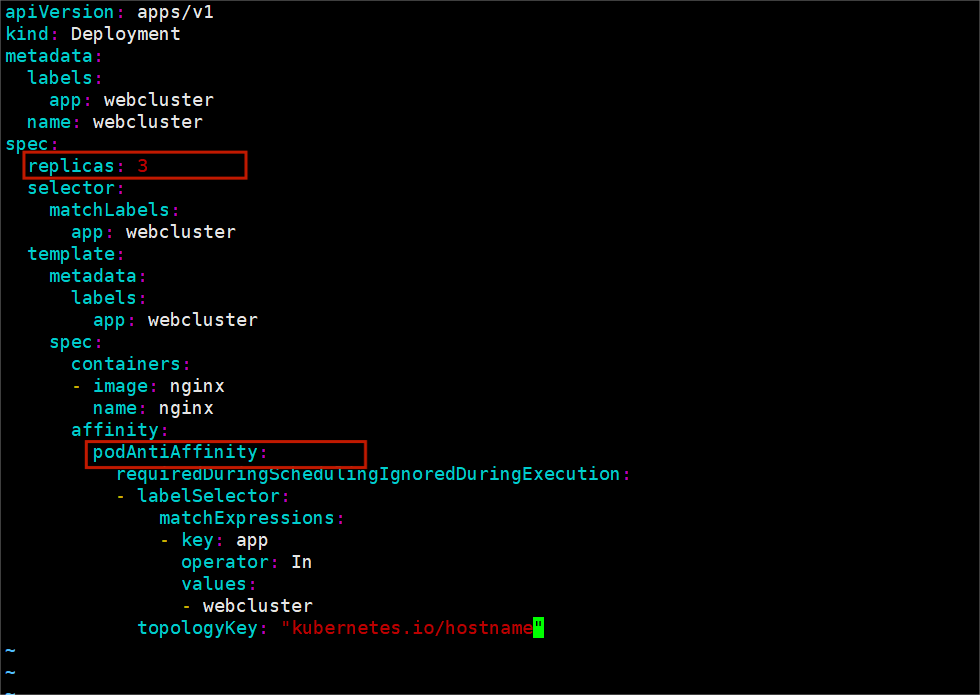

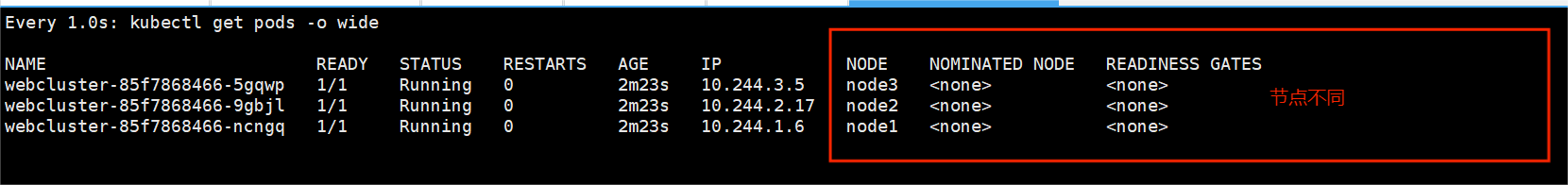

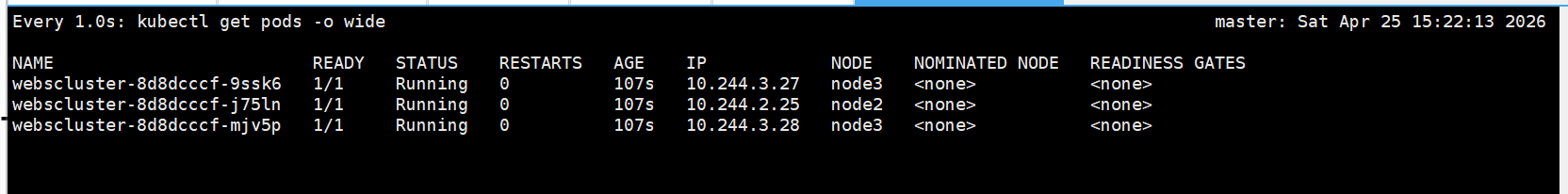

webcluster-566bdd4bff-smpzv 1/1 Running 0 5m52s 10.244.1.5 node1 <none> <none>(3)deployment

监控

bash

[root@master ~]# watch -n 1 "kubectl get pods --show-labels;echo ====;kubectl get replicasets.apps"

Create the Deployment controller

bash

[root@master ~]# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > dep.yml

[root@master controler]# vim dep.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  minReadySeconds: 5           # a new Pod must stay ready for 5 seconds before it counts as available
  replicas: 6
  selector:
    matchLabels:
      app: webcluster
  strategy:
    rollingUpdate:
      maxSurge: 1              # during an update, at most 1 Pod above the desired count
      maxUnavailable: 0        # during an update, at most 0 Pods may be unavailable
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: reg.timinglee.org/library/myapp:v2
        name: myapp

[root@master ~]# kubectl apply -f dep.yml

# The monitoring window shows the effect

# Expose the Deployment as a Service

[root@master ~]# kubectl expose deployment webcluster --port 80 --target-port 80

[root@master ~]# kubectl describe services

Name: kubernetes

Namespace: default

Labels: component=apiserver

provider=kubernetes

Annotations: <none>

Selector: <none>

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.96.0.1

IPs: 10.96.0.1

Port: https 443/TCP

TargetPort: 6443/TCP

Endpoints: 172.25.254.100:6443

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

Name: test

Namespace: default

Labels: app=test

Annotations: <none>

Selector: app=test

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.102.57.191

IPs: 10.102.57.191

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints:

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

Name: webcluster

Namespace: default

Labels: app=webcluster

Annotations: <none>

Selector: app=webcluster

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.104.21.223

IPs: 10.104.21.223

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.1.8:80,10.244.2.5:80

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

#访问:

[root@master ~]# curl 10.104.21.223

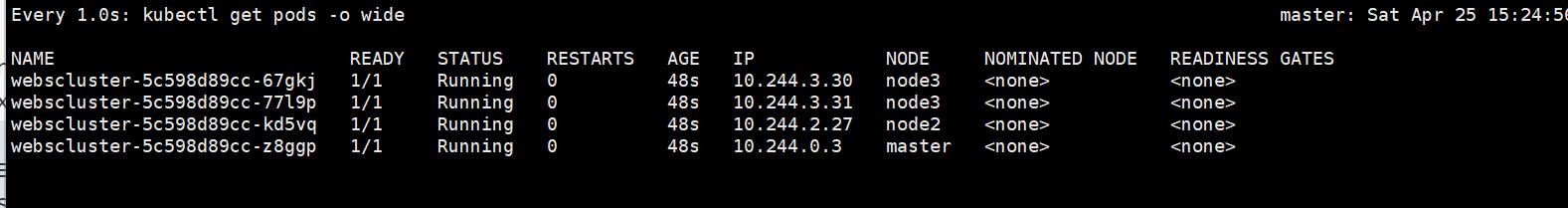

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>升级和回滚

bash

[root@master ~]# vim dep.yml

......
    spec:
      containers:
      - image: myapp:v2        # upgrade to version 2
        name: myapp

[root@master ~]# kubectl apply -f dep.yml

deployment.apps/webcluster configured

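# Note: with a rolling update and minReadySeconds: 5, a request sent right after apply may still be answered by a Pod of the previous version

[root@master ~]# curl 10.104.21.223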

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ~]# vim dep.yml

......
    spec:
      containers:
      - image: myapp:v1        # roll back to version 1
        name: myapp

[root@master ~]# kubectl apply -f dep.yml

deployment.apps/webcluster configured

[root@master ~]# curl 10.104.21.223

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

(4) Managing and tuning version updates

bash

[root@master ~]# vim dep.yml

spec:
  minReadySeconds: 5
  replicas: 6                  # set the Pod count to 6 so the rollout is easier to observe
  selector:

[root@master ~]# kubectl apply -f dep.yml

deployment.apps/webcluster configured

View the update strategy

bash

[root@master deployment]# kubectl describe deployments.apps webcluster

Name: webcluster

Namespace: default

CreationTimestamp: Sun, 05 Apr 2026 16:52:40 +0800

Labels: app=webcluster

Annotations: deployment.kubernetes.io/revision: 10

Selector: app=webcluster

Replicas: 6 desired | 6 updated | 6 total | 6 available | 0 unavailable

StrategyType: RollingUpdate

MinReadySeconds: 5

RollingUpdateStrategy: 25% max unavailable, 25% max surge
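The percentages in RollingUpdateStrategy are turned into absolute Pod counts relative to the replica count: maxSurge is rounded up and maxUnavailable is rounded down. The sketch below works through that arithmetic for the 6 replicas used here. `resolve` and `rollout_bounds` are hypothetical helper names chosen for illustration.

```shell
#!/bin/sh
# resolve VALUE REPLICAS MODE
# VALUE is an absolute number or a percentage such as 25%.
# MODE is "up" (round up, used for maxSurge) or "down" (round down, used for maxUnavailable).
resolve() {
    value=$1
    replicas=$2
    mode=$3
    case $value in
        *%)
            pct=${value%"%"}
            if [ "$mode" = up ]; then
                echo $(( (replicas * pct + 99) / 100 ))
            else
                echo $(( replicas * pct / 100 ))
            fi
            ;;
        *)
            echo "$value"
            ;;
    esac
}

# rollout_bounds REPLICAS MAX_SURGE MAX_UNAVAILABLE
# Prints the largest total and the smallest available Pod count during a rollout.
rollout_bounds() {
    replicas=$1
    surge=$(resolve "$2" "$replicas" up)
    unavailable=$(resolve "$3" "$replicas" down)
    echo "max_total=$((replicas + surge)) min_available=$((replicas - unavailable))"
}

rollout_bounds 6 25% 25%    # the defaults shown in the describe output
rollout_bounds 6 1 0        # maxSurge: 1, maxUnavailable: 0
```

With the defaults, a rollout of 6 replicas may run up to 8 Pods and drop to 5 available; with maxSurge: 1 and maxUnavailable: 0 it replaces one Pod at a time and never drops below 6 available.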

bash

[root@master ~]# vim dep.yml

......
spec:
  minReadySeconds: 5
  replicas: 6
  selector:
    matchLabels:
      app: webcluster
  strategy:
    rollingUpdate:
      maxSurge: 1              # during an update, at most 1 Pod above the desired count
      maxUnavailable: 0        # during an update, at most 0 Pods may be unavailable
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp

[root@master ~]# kubectl apply -f dep.yml

Pausing and resuming updates

bash

[root@master deployment]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION CHANGE-CAUSE

1 <none>

2 <none>

4 <none>

5 <none>

6 <none>

7 <none>

9 <none>

10 <none>

[root@master deployment]# kubectl rollout pause deployment webcluster    # pause the rollout

deployment.apps/webcluster paused

[root@master deployment]# vim dep.yml

...
      containers:
      - image: myapp:v2
        name: myapp

[root@master deployment]# kubectl apply -f dep.yml

deployment.apps/webcluster configured

[root@master deployment]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION CHANGE-CAUSE

1 <none>

2 <none>

4 <none>

5 <none>

6 <none>

7 <none>

10 <none>

11 <none>

[root@master deployment]# kubectl rollout resume deployment webcluster    # resume the rollout

deployment.apps/webcluster resumed

[root@master deployment]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION CHANGE-CAUSE

1 <none>

2 <none>

4 <none>

5 <none>

6 <none>

7 <none>

10 <none>

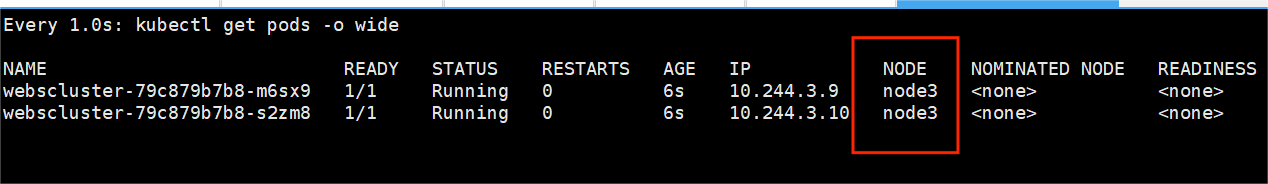

11 <none>(5)DaemonSet

bash

[root@master ~]# kubectl create deployment daemonset --image myapp:v1 --dry-run=client -o yaml > daemonset.yml

[root@master ~]# vim daemonset.yml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  labels:
    app: daemonset
  name: daemonset
spec:
#  replicas: 1
  selector:
    matchLabels:
      app: daemonset
  template:
    metadata:
      labels:
        app: daemonset
    spec:
      containers:
      - image: reg.timinglee.org/library/myapp:v1
        name: myapp

# Bring up another host, node3, apply all the settings used when the cluster was initialized, and make sure all required services are running

# On the master, regenerate the token that hosts need to join the cluster

[root@master ~]# kubeadm token create --print-join-command