智能体(Agent)开发与部署项目

项目简介

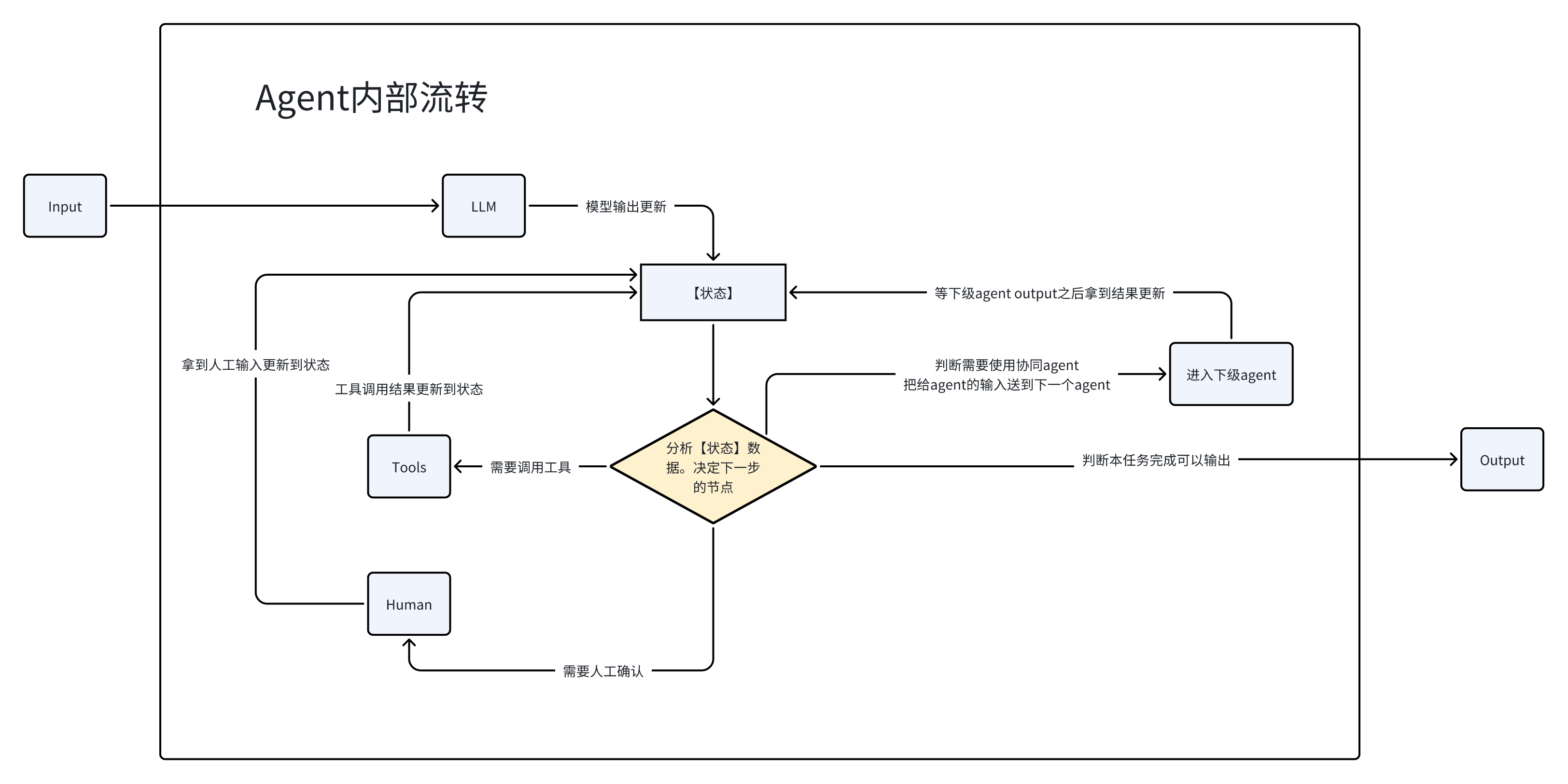

项目描述:基于LangChain框架构建可调度、可扩展的大模型智能体,结合 tool 工具调用、YAML 配置体系、状态机流转、API Key 调用大模型 ,实现多步骤自动化任务执行与复杂逻辑交互。

项目代码

智能体目录如下

markdown

COMMON_RESEARCH_BROWSER

├── agents

│ └── root.yaml

├── tools

│ └── bing_tools.py

├── config.json

├── start_chrome.py

└── meta.yamlmeta.yaml

yaml

schema_version: v1

created_at: 2024-05-15

id: common_research_browser

name: common_research_browser

description: "通用研究browser"

authors: [""]

version: "1.0.0"

tags: [""]

# 入口定义

entry_point:

agent_id: "root"

config:

skip_plan: true

ignore_buildin_tools: ["ls", "write_file", "read_file","glob","ask_about_file","grep","read_file","shell"]root.yaml

yaml

metadata:

schema_version: "1.0.0"

created_at: "2024-12-24"

id: "root"

agent:

name: "通用研究"

description: "通用研究"

role_description: |

你是一位研究员

advanced_instructions: |

- 研究用户的问题并想办法解决问题

- 你不能向用户进行任何确认。遇到不明确的地方只能查资料或者自己思考。

- 多进行尝试

sop:

name: "通用研究"

description: 通用研究

steps:

- 思考用户的问题

- 进行规划

- 按照计划进行开展/合理使用工具

- 使用简短答案回答

tools:

- id: bing_search

name: 检索

description: 搜索工具

- id: fetch_website

name: 获取网页

description: 获取网页内容

config:

human_intervention:

enabled: false

memory_enabled: true

max_iterations: 10start_chrome.py

python

"""

启动带调试端口的 Chrome 浏览器。

运行后 Chrome 会在 9222 端口开启调试模式,可以用 Playwright 连接。

启动是幂等的:如果调试端口已在运行,则直接复用。

"""

import os

import platform

import socket

import subprocess

import tempfile

import sys

import urllib.request

import urllib.error

import json

# Chrome 路径配置,可通过环境变量 CHROME_PATH 覆盖

CHROME_PATH: str | None = os.environ.get("CHROME_PATH")

def get_chrome_path() -> str:

"""获取 Chrome 可执行文件路径,支持 macOS、Windows 和 Linux。"""

if CHROME_PATH:

return CHROME_PATH

system = platform.system()

if system == "Darwin": # macOS

return "/Applications/Google Chrome.app/Contents/MacOS/Google Chrome"

elif system == "Windows":

# Windows 常见安装路径

possible_paths = [

os.path.join(os.environ.get("PROGRAMFILES", ""), "Google", "Chrome", "Application", "chrome.exe"),

os.path.join(os.environ.get("PROGRAMFILES(X86)", ""), "Google", "Chrome", "Application", "chrome.exe"),

os.path.join(os.environ.get("LOCALAPPDATA", ""), "Google", "Chrome", "Application", "chrome.exe"),

]

for path in possible_paths:

if path and os.path.exists(path):

return path

raise FileNotFoundError("未找到 Chrome,请设置环境变量 CHROME_PATH 指定 Chrome 路径")

elif system == "Linux":

# Linux 常见路径

possible_paths = [

"/usr/bin/google-chrome",

"/usr/bin/google-chrome-stable",

"/usr/bin/chromium",

"/usr/bin/chromium-browser",

]

for path in possible_paths:

if os.path.exists(path):

return path

raise FileNotFoundError("未找到 Chrome/Chromium,请设置环境变量 CHROME_PATH 指定路径")

else:

raise OSError(f"不支持的操作系统: {system}")

def is_chrome_debug_running(debug_port: int) -> bool:

"""检查指定端口是否已有 Chrome 调试实例在运行。

通过请求 Chrome DevTools Protocol 的 /json/version 端点来验证。

"""

try:

url = f"http://127.0.0.1:{debug_port}/json/version"

with urllib.request.urlopen(url, timeout=2) as response:

data = json.loads(response.read().decode())

# 确认是 Chrome 的调试端口

if "Browser" in data or "webSocketDebuggerUrl" in data:

return True

except (urllib.error.URLError, socket.timeout, OSError, json.JSONDecodeError):

pass

return False

def start_chrome(

debug_port: int = 9222,

chrome_path: str | None = None,

listen_address: str = "0.0.0.0",

) -> bool:

"""启动带调试端口的 Chrome 浏览器(幂等操作)。

如果指定端口已有 Chrome 调试实例在运行,则直接复用,不会重复启动。

Args:

debug_port: 调试端口,默认 9222

chrome_path: Chrome 可执行文件路径,默认自动检测

listen_address: 监听地址,默认 0.0.0.0 允许远程连接

Returns:

bool: True 表示新启动了 Chrome,False 表示复用已有实例

"""

# 检查是否已有实例在运行

if is_chrome_debug_running(debug_port):

print(f"Chrome 调试实例已在端口 {debug_port} 运行,复用现有实例")

return False

chrome_path = chrome_path or get_chrome_path()

# 使用临时目录作为用户数据目录,避免与已打开的 Chrome 冲突

user_data_dir = tempfile.mkdtemp(prefix="chrome_debug_")

print(f"启动 Chrome,调试端口: {debug_port},监听地址: {listen_address}")

print(f"用户数据目录: {user_data_dir}")

# 启动 Chrome 进程(前台运行,Ctrl+C 可终止)

process = subprocess.Popen([

chrome_path,

f"--remote-debugging-port={debug_port}",

f"--remote-debugging-address={listen_address}",

f"--user-data-dir={user_data_dir}",

"--no-first-run",

"--no-default-browser-check",

])

print("Chrome 已启动,按 Ctrl+C 关闭...")

try:

process.wait()

except KeyboardInterrupt:

print("\n正在关闭 Chrome...")

process.terminate()

return True

if __name__ == "__main__":

port = int(sys.argv[1]) if len(sys.argv) > 1 else 9222

custom_path = sys.argv[2] if len(sys.argv) > 2 else None

start_chrome(port, custom_path)bing_tools.py

python

import json

import base64

import urllib.parse

from langchain_core.tools import tool

from playwright.sync_api import sync_playwright

def extract_real_url(bing_url: str) -> str:

"""

从 Bing 跟踪链接中提取真实 URL

Bing 链接格式: https://www.bing.com/ck/a?...&u=a1aHR0cHM6Ly...&...

其中 u 参数是 base64 编码的真实 URL (前缀 a1 需要去掉)

"""

if not bing_url or "bing.com/ck/a" not in bing_url:

return bing_url

try:

parsed = urllib.parse.urlparse(bing_url)

params = urllib.parse.parse_qs(parsed.query)

if "u" in params:

encoded = params["u"][0]

# 去掉前缀 'a1'

if encoded.startswith("a1"):

encoded = encoded[2:]

# 添加 base64 padding

padding = 4 - len(encoded) % 4

if padding != 4:

encoded += "=" * padding

# base64 解码

decoded = base64.b64decode(encoded).decode("utf-8")

return decoded

except Exception:

pass

return bing_url

@tool

def bing_search(query: str, mkt: str = "zh-cn") -> str:

"""

使用 Bing 搜索进行网页搜索 (基于 Playwright 增强版,包含反爬处理和真实URL提取)

Args:

query: 用户问题

mkt: 市场代码,默认为 "zh-cn"

Returns:

搜索结果列表字符串,包含标题、真实URL和摘要

"""

results = []

# 构造搜索 URL

# 注意:直接构造 URL 有时比先去主页再输入更直接,但在反爬严格时,search_playwright.py 的策略(先主页后搜索)更稳妥

# 这里我们采用 search_playwright.py 的逻辑

try:

with sync_playwright() as p:

# 使用 Firefox,参考 search_playwright.py 建议,避免某些系统依赖问题且反爬表现较好

browser = p.chromium.launch(headless=False)

# 创建上下文,配置 User-Agent 和 Viewport

context = browser.new_context(

viewport={"width": 1920, "height": 1080},

locale=mkt,

)

page = context.new_page()

# 1. 先访问 Bing 主页 (模拟人类行为)

try:

page.goto("https://www.bing.com/", wait_until="domcontentloaded", timeout=10000)

page.wait_for_timeout(1000) # 稍作等待

except Exception as e:

print(f"访问主页警告 (非致命): {e}")

# 2. 设置国际版 cookie (可选,参考 search_playwright.py)

context.add_cookies([{"name": "ENSEARCH", "value": "BENVER=1", "domain": ".bing.com", "path": "/"}])

# 3. 执行搜索

# search_url = f"https://www.bing.com/search?q={urllib.parse.quote(query)}"

# print(query)

query = "Eliud Kipchoge marathon world record pace time"

search_url = f"https://www.bing.com/search?q={urllib.parse.quote(query).replace("%20","+")}"

print(search_url)

page.goto(search_url, wait_until="domcontentloaded")

# 4. 等待结果加载

try:

page.wait_for_selector("li.b_algo", timeout=10000)

page.wait_for_timeout(500) # 等待渲染稳定

except Exception:

browser.close()

return f"未找到关于'{query}'的搜索结果 (请求超时或无结果)"

# 5. 提取结果

items = page.query_selector_all("li.b_algo")

content = page.content()

print(content)

import time

time.sleep(100)

# 限制提取前 10 条

for item in items[:10]:

try:

# 提取标题和链接

title_elem = item.query_selector("h2 a")

title = title_elem.inner_text() if title_elem else "N/A"

raw_url = title_elem.get_attribute("href") if title_elem else "N/A"

# 提取真实 URL

url = extract_real_url(raw_url)

print(url)

# 提取摘要

summary_elem = item.query_selector("p")

summary = summary_elem.inner_text() if summary_elem else "N/A"

results.append({"title": title.strip(), "url": url, "summary": summary.strip()})

except Exception as e:

continue

browser.close()

if not results:

return f"未找到关于'{query}'的搜索结果。"

# 格式化输出供 LLM 阅读

formatted_results = []

for i, result in enumerate(results, 1):

formatted_results.append(

f"{i}. 标题: {result['title']}\n" f" 链接: {result['url']}\n" f" 摘要: {result['summary']}\n"

)

return "\n".join(formatted_results)

except Exception as e:

return f"搜索过程中发生错误: {str(e)}"

@tool

def fetch_website(url: str) -> str:

"""

获取网页内容 (同步 Playwright 实现,带基础反爬配置)

"""

try:

with sync_playwright() as p:

# 同样使用 Firefox 保持一致性,或使用 Chromium

browser = p.firefox.launch(headless=True)

# 注入 User-Agent 防止直接被拒

context = browser.new_context(

user_agent="Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/122.0.0.0 Safari/537.36"

)

page = context.new_page()

# 设置较长的超时时间

page.goto(url, timeout=30000, wait_until="domcontentloaded")

# 移除脚本和样式,净化内容

page.evaluate(

"""

() => {

const elements = document.querySelectorAll('script, style, link[rel="stylesheet"], nav, footer, iframe');

elements.forEach(el => el.remove());

}

"""

)

# 获取 body 文本

text = page.evaluate("document.body.innerText")

browser.close()

# 简单的文本清洗

lines = [line.strip() for line in text.splitlines() if line.strip()]

# 限制返回长度,防止 token 溢出 (这里保留前 500 行作为示例,可根据需要调整)

content = "\n".join(lines[:500])

return content if content else "网页内容为空"

except Exception as e:

return f"获取网页内容失败: {str(e)}"如果想要调用智能体中的某个工具,应该尝试用invoke方法

llm.invoke(question)

python

from tools.bing_tools import bing_search

query = "Eliud Kipchoge marathon world record pace time"

result = bing_search.invoke({"query": query})

print(result)config.json

可以填一些硬编码的数据(比如地理信息、某一确定位置的经纬度坐标),可供智能体使用

{

"lat": 23.968876,

"lon": 122.263931

},

{

"lat": 24.006509,

"lon": 122.403996

},

{

"lat": 24.054162,

"lon": 122.631946

},

{

"lat": 23.986440,

"lon": 122.769265

},

{

"lat": 23.923702,

"lon": 122.892853

},

{

"lat": 23.352655,

"lon": 122.912077

},

{

"lat": 23.254296,

"lon": 122.563287

},

{

"lat": 23.254296,

"lon": 122.563287

},

{

"lat": 23.352655,

"lon": 122.277663

},

{

"lat": 23.591942,

"lon": 122.255692

}制作common_research智能体

·配置体系:通过meta.yaml 定义智能体元信息、能力清单与输入输出规范,使用root.yaml 完成工作流编排、依赖管理与全局参数初始化。

·工具封装:基于 @tool 装饰器 标准化封装数据查询、格式转换、外部接口调用等功能,实现工具自动注册与调度执行。

·状态管理:基于LangChain 状态转移机制 ,实现智能体意图识别 →工具选择 →执行 →结果汇总 →结束的闭环流转,支持多轮对话与异常回退处理。

·模型调用:后端服务通过API Key 配置 对接大模型服务,完成模型选择、请求封装 、流式输出解析与 Token 长度控制。

multi_agent\graph_libs.py 用于构建状态图,状态图展示了程序运转的流程

封装成multi-agent前后端服务

制作好智能体后,将其封装成multi-agent前后端服务

前后端multi-agent项目

-

项目概览: 多智能体框架,后端为 FastAPI(Python),前端在 web-client(React + pnpm)

-

快速安装与运行(Windows 下,推荐在 WSL2 内或 PowerShell):

-

创建并激活 Python 虚拟环境,然后安装依赖(使用项目脚本更简单):

bash

python -m venv .venv

# Windows PowerShell:

.venv\Scripts\Activate.ps1

# 或 WSL/macOS:

source .venv/bin/activate

# 使用项目 helper(推荐)

uv sync

# 或直接

pip install -r requirements.txt- 启动后端(开发模式):

bash

uv run python main.py

# 或

uv run uvicorn server.app:app --reload --port 8000- 启动前端(在新终端):

bash

cd web-client

pnpm install

pnpm dev后端核心有session与chat接口

看每个接口实现什么功能,输入是什么,输出是什么,但是这样看不到递归调用后最终实现的功能,即整个运行流程未知,整个运行流程需要从函数一次次调用过程缕清

比如,session.py能够得到session_id,chat.py能够通过session_id进行chat,但是一直感觉不出来彼此的衔接

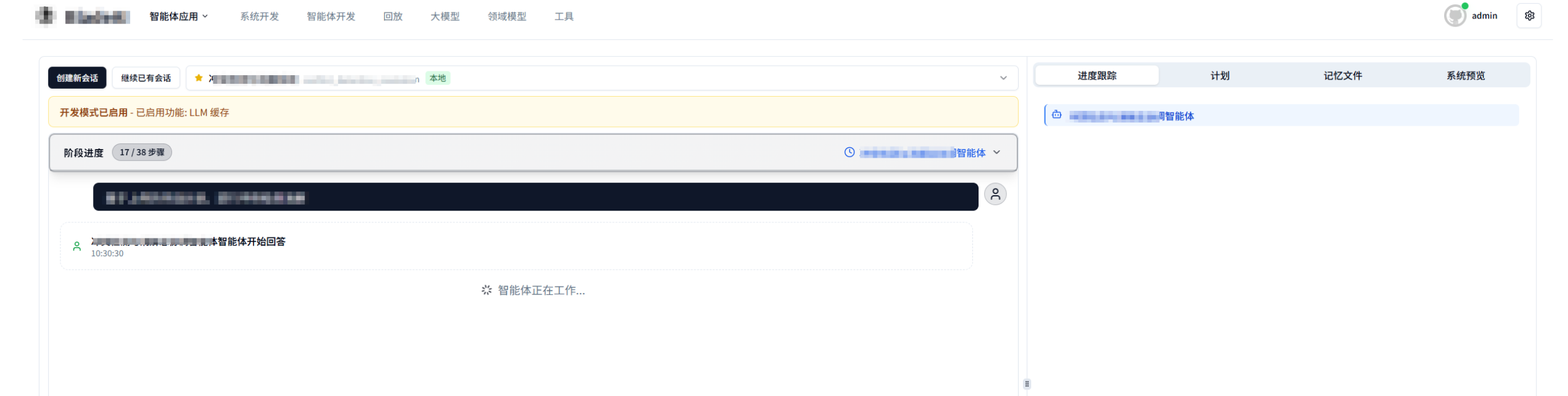

开启对话时,会有两个接口

server/api/v1/endpoints/chat/chat.py /agent/v1/{session_id}/chat 接口

server/api/v1/endpoints/chat/session.py @router.post("/agent/v1/session",

multi_agent/graph_libs.py

我的是联想笔记本,通过按Fn+F12,进入到网页调试窗口

前端页面点击接口对应的某些按钮,能够在网页调试窗口看到请求、响应信息

服务启动之后,界面如下

选择智能体,点击按钮开启对话,有关chat和session的请求与响应如下

bash

创建会话的请求为:

curl 'http://127.0.0.1:8000/agent/v1/session' \

-H 'Accept: */*' \

-H 'Accept-Language: en-GB,en-US;q=0.9,en;q=0.8,zh-CN;q=0.7,zh;q=0.6' \

-H 'Connection: keep-alive' \

-H 'Origin: http://localhost:5173' \

-H 'Referer: http://localhost:5173/' \

-H 'Sec-Fetch-Dest: empty' \

-H 'Sec-Fetch-Mode: cors' \

-H 'Sec-Fetch-Site: cross-site' \

-H 'Sec-Fetch-Storage-Access: active' \

-H 'User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.0.0 Safari/537.36' \

-H 'content-type: application/json' \

-H 'sec-ch-ua: "Google Chrome";v="143", "Chromium";v="143", "Not A(Brand";v="24"' \

-H 'sec-ch-ua-mobile: ?0' \

-H 'sec-ch-ua-platform: "Windows"' \

--data-raw '{"agent_group_id":"common_research","agent_group_type":"local","develop_mode":false}'

response为:

{

"session_id": "sess-0113_095518-c88d6f",

"status": "success",

"created_at": 1768269318.134176,

"message": null,

"title": "untitled conversation"

}

进行会话聊天的请求为:

curl 'http://127.0.0.1:8000/agent/v1/sess-0113_095518-c88d6f/chat' \

-H 'Accept: */*' \

-H 'Accept-Language: en-GB,en-US;q=0.9,en;q=0.8,zh-CN;q=0.7,zh;q=0.6' \

-H 'Connection: keep-alive' \

-H 'Content-Type: application/json' \

-H 'Origin: http://localhost:5173' \

-H 'Referer: http://localhost:5173/' \

-H 'Sec-Fetch-Dest: empty' \

-H 'Sec-Fetch-Mode: cors' \

-H 'Sec-Fetch-Site: cross-site' \

-H 'Sec-Fetch-Storage-Access: active' \

-H 'User-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.0.0 Safari/537.36' \

-H 'sec-ch-ua: "Google Chrome";v="143", "Chromium";v="143", "Not A(Brand";v="24"' \

-H 'sec-ch-ua-mobile: ?0' \

-H 'sec-ch-ua-platform: "Windows"' \

--data-raw '{"input_type":"user_message","input_data":{"id":"usr_mkby1yc4wboiqeh","content":"北京周边旅游,一个人,1.15日出发,预算1000。"}}'

response为:

data: {

"interaction_id": "int-14c95b",

"event_id": "run-fafde74e-656c-4e1a-8d17-5e0e64945402",

"timestamp": 1768269591.7768538,

"ts_str": "2026-01-13 09:59:51",

"stream_id": "stream_response_001",

"stream_start": false,

"stream_end": false,

"is_end": false,

"end_reason": null,

"source": {

"type": "agent",

"agent_id": "root",

"agent_name": "通用研究",

"agent_type": "internal"

},

"event_type": "token",

"response_type": "token",

"data": {

"content": "我来",

"additional_kwargs": { },

"response_metadata": {

"agent_id": "root",

"agent_name": "通用研究"

},

"type": "AIMessageChunk",

"name": null,

"id": "run-fafde74e-656c-4e1a-8d17-5e0e64945402",

"example": false,

"tool_calls": [ ],

"invalid_tool_calls": [ ],

"usage_metadata": null,

"tool_call_chunks": [ ]

}

}

data: {

"interaction_id": "int-bc795d",

"event_id": "run-fafde74e-656c-4e1a-8d17-5e0e64945402",

"timestamp": 1768269591.7925339,

"ts_str": "2026-01-13 09:59:51",

"stream_id": "stream_response_001",

"stream_start": false,

"stream_end": false,

"is_end": false,

"end_reason": null,

"source": {

"type": "agent",

"agent_id": "root",

"agent_name": "通用研究",

"agent_type": "internal"

},

"event_type": "token",

"response_type": "token",

"data": {

"content": "帮您",

"additional_kwargs": { },

"response_metadata": {

"agent_id": "root",

"agent_name": "通用研究"

},

"type": "AIMessageChunk",

"name": null,

"id": "run-fafde74e-656c-4e1a-8d17-5e0e64945402",

"example": false,

"tool_calls": [ ],

"invalid_tool_calls": [ ],

"usage_metadata": null,

"tool_call_chunks": [ ]

}

}Session会话创建(初始化智能体)

session的request playload为

{agent_group_id: "AreaAlert", agent_group_type: "local", develop_mode: false}

agent_group_id:"AreaAlert"

agent_group_type:"local"

develop_mode:false前端发送 payload,payload 中包含 agent_group_id:"AreaAlert"

session的response为

{

"session_id": "sess-1229_094817-e7d5b0",

"status": "success",

"created_at": 1766972897.759765,

"message": null,

"title": "untitled conversation"

}前端选择AreaAlert智能体,会发送一个 agent/v1/session 的 Post 请求(疑问点1)

session.py使用的FastAPI的修饰器定义路由

@router.post( # 明确指定了HTTP方法是POST

"/agent/v1/session", # <--- 这里定义了具体的 URL 路径

response_model=CreateSessionResponse,

operation_id="create_session",

)

async def create_session(request: CreateSessionRequest):函数签名包括函数名、参数列表、返回值类型

api/v1/endpoint/chat/session.py 中的 create_session 函数被调用

python

@router.post(

"/agent/v1/session",

response_model=CreateSessionResponse, #返回值必须是CreateSessionResponse类型

operation_id="create_session", #当 接收到POST /agent/v1/session请求时,调用create_session函数来处理请求

)

async def create_session(request: CreateSessionRequest): # async def声明异步函数,当处理耗时操作时(如读写数据库),释放CPU资源,提高并发性能

# FastAPI会自动读取HTTP请求的Body(JSON格式),将其转换为CreateSessionRequest类型的对象

session_instance = await handle_create_session( #await等待后台真正把会话创建好(包括数据库入库、智能体加载),最终拿到session_instance

# 将 request对象中的各个字段一一解包,传递给handle_create_session函数

agent_group_id=request.agent_group_id, # 能在浏览器的查看到

user_id=request.user_id,

scene_id=request.scene_id,

scene_version_id=request.scene_version_id,

agent_group_type=request.agent_group_type, # 能在浏览器的查看到

title=request.title,

session_id=request.session_id,

agent_team_version_id=request.agent_team_version_id,

injected_headers=request.injected_headers,

develop_mode=request.develop_mode, # 能在浏览器的查看到

develop_config=request.develop_config,

)

return CreateSessionResponse( #返回的CreateSessionResponse包含有session_id、status、created_at、title等字段

session_id=session_instance.session_id,

status="success",

created_at=session_instance.meta.created_at.timestamp(),

title=session_instance.meta.title,

)api/v1/endpoint/chat/session.py 中的 create_session 函数内部调用 api/v1/endpoint/chat/utils.py中的 handle_create_session

api/v1/endpoint/chat/utils.py中的 handle_create_session代码如下,该代码调用 service/session.py 中的 SessionService.create_session

python

async def handle_create_session(

agent_group_id: str,

user_id: str,

agent_group_type: Literal["local", "scene", "agent_team"] = "local",

scene_id: Optional[int] = None,

scene_version_id: Optional[int] = None,

title: str = "untitled conversation",

session_id: Optional[str] = None,

agent_team_version_id: Optional[int] = None,

injected_headers: Optional[dict[str, str]] = None,

develop_mode: bool = False,

develop_config: Optional[DevelopConfig] = None,

):

"""Common session creation logic that can be reused."""

if agent_group_type == "agent_team" and not agent_team_version_id:

raise HTTPException(

status_code=400, detail="agent_team_version_id is required when agent_group_type is 'agent_team'"

)

checkpointer_url = config.get("database", "checkpointer_url", fallback="sqlite://./checkpointer.db")

try:

session_instance = await SessionService.create_session(

agent_group_id=agent_group_id,

checkpointer_url=checkpointer_url,

user_id=user_id,

scene_id=scene_id,

scene_version_id=scene_version_id,

agent_group_type=agent_group_type,

title=title,

session_id=session_id,

agent_team_version_id=agent_team_version_id,

injected_headers=injected_headers,

develop_mode=develop_mode,

develop_config=develop_config,

)

return session_instance

except ValueError as e:

traceback.print_exc()

raise HTTPException(status_code=400, detail=str(e))

except Exception as e:

traceback.print_exc()

raise HTTPException(status_code=500, detail=f"Failed to create session: {str(e)}")services/session.py中实现了真正创建session的代码,在services/session.py中,SessionService类下面的create_session方法

python

@classmethod

async def create_session(

cls,

agent_group_id: str,

checkpointer_url: str,

user_id: str = "placeholder",

scene_id: int | None = None,

scene_version_id: int | None = None,

agent_team_version_id: int | None = None,

agent_group_type: Literal["local", "scene", "agent_team"] = "local",

title: str = "untitled conversation",

session_id: str | None = None,

injected_headers: dict[str, str] | None = None,

develop_mode: bool = False,

develop_config: DevelopConfig | None = None,

) -> SessionInstance:

start_time = time.perf_counter()

log.info(f"========== 开始创建 Session: agent_group={agent_group_id} ==========")

if session_id is not None:

session = await cls.get_session_metadata(session_id)

if session is not None:

raise ValueError(f"Session {session_id} already exists")

if agent_group_type == "agent_team":

assert agent_team_version_id is not None, "agent_team_version_id is required"

session = Session(

agent_group_id=agent_group_id,

agent_group_type=AgentGroupType(agent_group_type),

scene_version_id=None,

checkpointer_url=checkpointer_url,

user_id=user_id,

root_agent_id="",

root_agent_name="",

title=title,

id=session_id, # type: ignore

agent_team_version_id=agent_team_version_id,

injected_headers=json.dumps(injected_headers) if injected_headers else None,

)

session = await cls.create_session_in_db(session)

session_dir = create_session_temp_dir(session.id) #创建会话的临时目录

checkpointer = await CheckpointerService.create_checkpointer(checkpointer_url)

# TODO: 前端适配完agent_team后,删除该代码

scene_version = await cls.get_scene_version(scene_id, scene_version_id)

agent_group = await AgentGroupService.create_agent_group( # 读取智能体配置文件

type=agent_group_type,

agent_group_id=agent_group_id,

scene_version=scene_version,

agent_group_path=session_dir / AGENT_GROUP_DIR_NAME,

first_init=True,

agent_team_version_id=agent_team_version_id,

)

# Configure session from agent group (with develop mode overrides)

await cls.configure_session_from_agent_group(

session=session,

agent_group=agent_group,

session_dir=session_dir,

develop_config=develop_config,

scene_version=scene_version,

)

session = await cls.update_session_in_db(session)

# Parse injected_headers back from JSON string

headers_dict = json.loads(session.injected_headers) if session.injected_headers else None

agent_group_instance = await AgentGroupInstanceService.create_agent_group_instance( # 将静态的配置变成动态的运行实例

id=session.id,

agent_group=agent_group,

checkpointer=checkpointer,

injected_headers=headers_dict,

develop_mode=develop_mode,

develop_config=develop_config,

)

total_time_ms = (time.perf_counter() - start_time) * 1000

log.info(f"========== Session 创建完成: {session.id}, 总耗时: {total_time_ms:.2f}ms ==========")

return SessionInstance(

meta=session,

checkpointer=checkpointer,

agent_group=agent_group,

agent_group_instance=agent_group_instance,

)services/session.py中,SessionService类下面的create_session方法,会将静态的配置变成动态的运行实例,这一过程会调用AgentGroupInstanceService类的create_agent_group_instance方法(在services/agent_group_instance.py中)

python

class AgentGroupInstanceService:

@classmethod

@timing_decorator

async def create_agent_group_instance(

cls,

id: str,

agent_group: AgentGroup,

checkpointer: Checkpointer,

injected_headers: dict[str, str] | None = None,

develop_mode: bool = False,

develop_config: DevelopConfig | None = None,

) -> AgentGroupInstanceCore:

# Check if instance already exists (unlikely with random session IDs)

existing_instance = await agent_group_instance_cache.get(id)

if existing_instance:

return existing_instance

# Create new instance

agent_group_instance = AgentGroupInstanceCore(

id=id,

agent_group=agent_group,

checkpointer=checkpointer,

candidate_model_configs=candidate_model_configs(),

langfuse_settings=langfuse_settings,

tool_library_path=str(file_settings.tool_library_path),

injected_headers=injected_headers,

develop_mode=develop_mode,

develop_config=develop_config,

)

await agent_group_instance.build() #build函数创建了state_graph

# Cache the instance

await agent_group_instance_cache.set(id, agent_group_instance)

return agent_group_instanceservices/agent_group_instance.py中的create_agent_group_instance方法,调用了multi_agent/core/agent_group_instance.py中的build函数,build函数实现如下,最终创建了state_graph

python

@timing_decorator

async def build(self, entry_agent: str | None = None) -> CompiledStateGraph:

if self.graph:

return self.graph

# 加载工具缓存,避免每个 agent 重复加载

load_tools(str(self.agent_group.path))

state_graph = await self._build_agent_group_instance(self.agent_group, self.stop_event) #具体看我们写的代码

if entry_agent:

state_graph = await self._change_entry_agent(state_graph, entry_agent)

graph = state_graph.compile(checkpointer=self.checkpointer)

self.graph = graph

# self.get_graph_pic("png", f"/home/ybz/codes/multi-agent/graph-{self.agent_group.meta.id}.png")

return self.graph进行GAIA-benchMark

1.数据集下载

需要在benchMark项目根目录下,使用modelscope下载GAIA数据集

在下载前,请先通过如下命令安装ModelScope

pip install modelscope命令行下载

下载完整数据集

modelscope download --dataset AI-ModelScope/GAIA下载单个文件到指定本地文件夹(以下载README.md到当前路径下"dir"目录为例)

modelscope download --dataset AI-ModelScope/GAIA README.md --local_dir ./dir更多更丰富的命令行下载选项,可参见具体文档

SDK下载

#数据集下载

from modelscope.msdatasets import MsDataset

ds = MsDataset.load('AI-ModelScope/GAIA')

#您可按需配置 subset_name、split,参照"快速使用"示例代码Git下载

请确保 lfs 已经被正确安装

git lfs install

git clone https://www.modelscope.cn/datasets/AI-ModelScope/GAIA.git2.启动multi_agent服务:进入multi-agent项目根目录,执行以下指令

Plain

uv run main.py3.进入到 multi-agent/agent-templates/common_research_browser智能体路径,修改start_chrome.py。

在start_chrome.py大约21行的位置,将chrome安装路径,修改为自己的chrome安装路径

Python

CHROME_PATH = "/mnt/c/Program Files/Google/Chrome/Application/chrome.exe"4.运行start_chrome.py,运行结果如下:

Plain

warning02@Admin:/mnt/d/workspace/multi-agent/agent_templates/common_research_browser$ python3 start_chrome.py

启动 Chrome,调试端口: 9222,监听地址: 0.0.0.0

用户数据目录: C:\Users\17591\AppData\Local\Temp\chrome_debug

Chrome 已启动,按 Ctrl+C 关闭...运行后,会弹出chrome浏览器。

5.进入到benchMark路径,执行 benchMark_common_research_browser.py,只测试level_1、不包含附件,运行结果如下

Plain

==================================================

BENCHMARK COMPLETED

==================================================

Total: 42

Correct: 25

Timeout: 2

Accuracy: 59.52%

==================================================benchMark日志

{'task_id': 'e1fc63a2-da7a-432f-be78-7c4a95598703', 'Question': 'If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.', 'Level': '1', 'Final answer': '17', 'file_name': '', 'file_path': '', 'Annotator Metadata': {'Steps': '1. Googled Eliud Kipchoge marathon pace to find 4min 37sec/mile\n2. Converted into fractions of hours.\n3. Found moon periapsis in miles (225,623 miles).\n4. Multiplied the two to find the number of hours and rounded to the nearest 100 hours.', 'Number of steps': '4', 'How long did this take?': '20 Minutes', 'Tools': '1. A web browser.\n2. A search engine.\n3. A calculator.', 'Number of tools': '3'}}

正在测试第 5 题...

==================================================

[Question 5]

问题:If Eliud Kipchoge could maintain his record-making marathon pace indefinitely, how many thousand hours would it take him to run the distance between the Earth and the Moon its closest approach? Please use the minimum perigee value on the Wikipedia page for the Moon when carrying out your calculation. Round your result to the nearest 1000 hours and do not use any comma separators if necessary.

回答:Now I have the key information I need:

1. **Eliud Kipchoge's marathon record**: From the search results, I can see that Kipchoge ran the marathon distance (42.195 km) in 1:59:40 (1 hour, 59 minutes, 40 seconds) in Vienna 2019. This is the fastest marathon time ever recorded.

2. **Moon's minimum perigee distance**: From the Wikipedia pages, I can see that the Moon's perigee (closest approach) varies from 356,400 km to 370,400 km. The minimum perigee value is 356,400 km.

Now I need to calculate:

1. Kipchoge's pace in km/h

2. How many hours it would take him to run 356,400 km at that pace

3. Round to the nearest 1000 hours

Let me do the calculations:

**Step 1: Calculate Kipchoge's pace**

- Distance: 42.195 km

- Time: 1 hour, 59 minutes, 40 seconds = 1 + 59/60 + 40/3600 hours = 1 + 0.9833 + 0.0111 = 1.9944 hours

- Pace = Distance / Time = 42.195 km / 1.9944 hours ≈ 21.15 km/h

**Step 2: Calculate time to run Moon distance**

- Moon distance: 356,400 km

- Time = Distance / Pace = 356,400 km / 21.15 km/h ≈ 16,848 hours

**Step 3: Round to nearest 1000 hours**

- 16,848 hours rounds to 17,000 hours

Let me double-check my calculations:

Time conversion: 1:59:40 = 1 + 59/60 + 40/3600 = 1 + 0.983333 + 0.011111 = 1.994444 hours

Pace: 42.195 / 1.994444 ≈ 21.152 km/h

Time for Moon distance: 356,400 / 21.152 ≈ 16,849 hours

Rounding 16,849 to nearest 1000: 17,000 hours<final-answer>

17000

</final-answer>

Ground Truth:17

错误×

原因:答案不匹配

{'task_id': '32102e3e-d12a-4209-9163-7b3a104efe5d', 'Question': 'The attached spreadsheet shows the inventory for a movie and video game rental store in Seattle, Washington. What is the title of the oldest Blu-Ray recorded in this spreadsheet? Return it as appearing in the spreadsheet.', 'Level': '2', 'Final answer': 'Time-Parking 2: Parallel Universe', 'file_name': '32102e3e-d12a-4209-9163-7b3a104efe5d.xlsx', 'file_path': '2023/validation/32102e3e-d12a-4209-9163-7b3a104efe5d.xlsx', 'Annotator Metadata': {'Steps': '1. Open the attached file.\n2. Compare the years given in the Blu-Ray section to find the oldest year, 2009.\n3. Find the title of the Blu-Ray disc that corresponds to the year 2009: Time-Parking 2: Parallel Universe.', 'Number of steps': '3', 'How long did this take?': '1 minute', 'Tools': '1. Microsoft Excel', 'Number of tools': '1'}}

{'task_id': '8e867cd7-cff9-4e6c-867a-ff5ddc2550be', 'Question': 'How many studio albums were published by Mercedes Sosa between 2000 and 2009 (included)? You can use the latest 2022 version of english wikipedia.', 'Level': '1', 'Final answer': '3', 'file_name': '', 'file_path': '', 'Annotator Metadata': {'Steps': '1. I did a search for Mercedes Sosa\n2. I went to the Wikipedia page for her\n3. I scrolled down to "Studio albums"\n4. I counted the ones between 2000 and 2009', 'Number of steps': '4', 'How long did this take?': '5 minutes', 'Tools': '1. web browser\n2. google search', 'Number of tools': '2'}}6.提供给 common_research_browser 智能体以MCP工具,以提交答案到数据库

进入agent-colosseo/envs/003-GAIA-benchMark/system

python3 mcp_server.py| 维度 | MCP 工具 | Agent 工具 |

|---|---|---|

| 目的 | 模拟真实业务环境 | 提供通用能力 |

| 场景 | 测试/评估 Agent | Agent 执行任务 |

| 数据 | 操作环境数据库 | 访问外部资源 |

| 可替换性 | 环境固定,Agent 可换 | Agent 固定,Agent工具可拓展 |

7.碰到的问题:

1.会被反爬机制制裁

2.有些需要登陆账号之后才能观看视频,才能统计视频中人物出现次数

3.看网页文本的能力还行,看视频、看pdf能力不太行

先运行 start_chrome.py,再运行common_research_browser智能体,即,创建一个有头的chrome浏览器,能一定程度应对反爬

尝试让机器模拟人的行为,寻找输入框在哪里(通过class_id匹配),往输入框输入query,然后点击(往输入框输入enter)(三种动作都由机器执行)