I. Linux

Check the disk usage of the current directory

Page through the large file big.log

List all .txt files in the current directory

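```bash
du -sh
less big.log
ls | grep '\.txt$'
```

`du -sh` reports the total size of the current directory in human-readable units, a quick first step when tracking down what is filling the disk.

`less` pages through a file and does not need to read all of it before starting, which makes it a better fit for large logs than vim or cat.

The regular expression `\.txt$` matches names that end in .txt: the backslash makes the dot literal and `$` anchors the match to the end of the name. Without the backslash, the dot matches any character.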
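To see why the dot should be escaped, here is a minimal Python sketch that compares the two patterns using the standard `re` module. The file names are made up for illustration; for patterns this simple, grep's basic regular expressions behave the same way.

```python
import re

# Hypothetical file names, chosen only to illustrate the difference.
names = ["notes.txt", "report.txt", "mytxt", "data.txt.bak", "readme.md"]

loose = re.compile(r".txt$")    # unescaped dot: any character followed by "txt"
strict = re.compile(r"\.txt$")  # escaped dot: a literal ".txt" suffix

loose_hits = [n for n in names if loose.search(n)]
strict_hits = [n for n in names if strict.search(n)]

print(loose_hits)   # also picks up "mytxt"
print(strict_hits)  # only names that really end in ".txt"
```

The unescaped pattern accepts `mytxt` because the dot is free to match the `y`.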

II. SQL

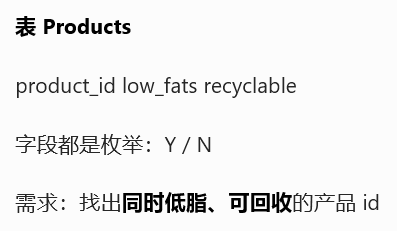

1757. Recyclable and Low Fat Products

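```sql
SELECT product_id
FROM Products
WHERE low_fats = 'Y' AND recyclable = 'Y';
```

Combining conditions with AND returns only the rows that satisfy both.

Filtering on a combination of flag columns is the basic template for user-profile segmentation and product-tag selection.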
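The filter can be tried locally without a database server. The following is a minimal sketch using Python's built-in `sqlite3` module; the sample rows are made up, and the `ORDER BY` is added only to make the printed result deterministic.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Products (product_id INTEGER, low_fats TEXT, recyclable TEXT)"
)
# Made-up sample rows covering all four flag combinations.
conn.executemany(
    "INSERT INTO Products VALUES (?, ?, ?)",
    [(0, "Y", "N"), (1, "Y", "Y"), (2, "N", "Y"), (3, "Y", "Y"), (4, "N", "N")],
)

rows = conn.execute(
    """
    SELECT product_id
    FROM Products
    WHERE low_fats = 'Y' AND recyclable = 'Y'
    ORDER BY product_id
    """
).fetchall()
low_fat_recyclable_ids = [row[0] for row in rows]
print(low_fat_recyclable_ids)

conn.close()
```

Only products 1 and 3 carry both flags, so only they are returned.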

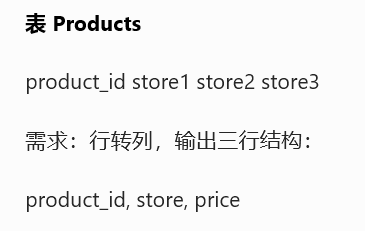

1795. Rearrange Products Table

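```sql
SELECT product_id, 'store1' AS store, store1 AS price FROM Products WHERE store1 IS NOT NULL
UNION ALL
SELECT product_id, 'store2' AS store, store2 AS price FROM Products WHERE store2 IS NOT NULL
UNION ALL
SELECT product_id, 'store3' AS store, store3 AS price FROM Products WHERE store3 IS NOT NULL;
```

UNION ALL is the classic way to turn columns into rows.

Each branch filters out NULL prices, so a product that is not sold in a store produces no row for that store.

Wide-to-long conversion comes up constantly in data warehouse modeling, zipper (history) tables, and wide/long reshaping, and is a staple interview topic.

The store column is built by hand from a string literal, a pattern that extends to splitting by store or by channel.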
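The column-to-row conversion can be checked with a small `sqlite3` sketch. The two sample rows are made up, and the `IS NOT NULL` filter in each branch rests on the assumption that a missing price is stored as NULL and should yield no output row.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Products "
    "(product_id INTEGER, store1 INTEGER, store2 INTEGER, store3 INTEGER)"
)
# Made-up sample rows; product 1 has no price in store2 (NULL).
conn.executemany(
    "INSERT INTO Products VALUES (?, ?, ?, ?)",
    [(0, 95, 100, 105), (1, 70, None, 80)],
)

query = """
SELECT product_id, 'store1' AS store, store1 AS price FROM Products WHERE store1 IS NOT NULL
UNION ALL
SELECT product_id, 'store2' AS store, store2 AS price FROM Products WHERE store2 IS NOT NULL
UNION ALL
SELECT product_id, 'store3' AS store, store3 AS price FROM Products WHERE store3 IS NOT NULL
"""
# Sort in Python so the printed order does not depend on the engine.
long_rows = sorted(conn.execute(query).fetchall())
for row in long_rows:
    print(row)

conn.close()
```

Two wide rows become five long rows: three for product 0 and two for product 1, whose NULL store2 price is skipped.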

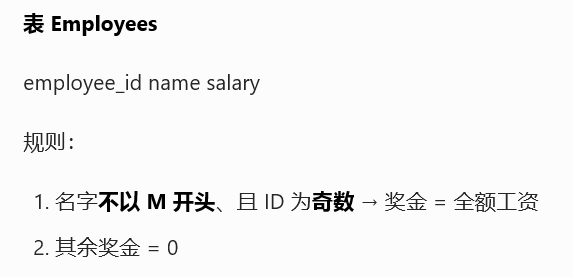

1873. Calculate Special Bonus

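```sql
SELECT
    employee_id,
    CASE
        WHEN employee_id % 2 = 1 AND name NOT LIKE 'M%'
        THEN salary
        ELSE 0
    END AS bonus
FROM Employees
ORDER BY employee_id;
```

The modulo operator `%` tests whether the ID is odd or even.

`LIKE 'M%'` is a prefix match: names that start with M.

CASE evaluates the rule branches and computes the derived bonus column.

This is the general pattern for payroll and allowance-rule queries.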
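The bonus rule can be exercised with the same `sqlite3` approach. This is a sketch with made-up employee rows; note that SQLite's `LIKE` is case-insensitive for ASCII letters by default, so the sample names are all capitalized to keep the behavior unambiguous.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Employees (employee_id INTEGER, name TEXT, salary INTEGER)")
# Made-up sample rows: even IDs, odd IDs, and names starting with "M".
conn.executemany(
    "INSERT INTO Employees VALUES (?, ?, ?)",
    [
        (2, "Meir", 3000),
        (3, "Michael", 3800),
        (7, "Addilyn", 7400),
        (8, "Juan", 6100),
        (9, "Kannon", 7700),
    ],
)

bonuses = conn.execute(
    """
    SELECT
        employee_id,
        CASE
            WHEN employee_id % 2 = 1 AND name NOT LIKE 'M%'
            THEN salary
            ELSE 0
        END AS bonus
    FROM Employees
    ORDER BY employee_id
    """
).fetchall()
for row in bonuses:
    print(row)

conn.close()
```

Only employees 7 and 9 have an odd ID and a name that does not start with M, so only they receive their salary as a bonus.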

III. PySpark

```python
from pyspark.sql import SparkSession
from pyspark.sql.functions import col, lit

spark = SparkSession.builder.master("local[*]").appName("Day32").getOrCreate()

# 1. Products that are both low fat and recyclable
prod = spark.createDataFrame(
    [(1, "Y", "Y"), (2, "Y", "N")],
    ["product_id", "low_fats", "recyclable"],
)
prod.filter((col("low_fats") == "Y") & (col("recyclable") == "Y")).show()

# 2. Wide table to long table (columns to rows)
df = spark.createDataFrame(
    [(1, 100, 200, 150)],
    ["product_id", "store1", "store2", "store3"],
)
df1 = df.select("product_id", lit("store1").alias("store"), col("store1").alias("price"))
df2 = df.select("product_id", lit("store2").alias("store"), col("store2").alias("price"))
df3 = df.select("product_id", lit("store3").alias("store"), col("store3").alias("price"))
df1.unionAll(df2).unionAll(df3).show()

spark.stop()
```

In Spark, combine filter conditions with `&` and wrap each condition in parentheses, because `&` binds more tightly than `==` in Python.

`lit()` builds a constant column, the counterpart of a hand-written string literal in SQL.

`unionAll` (an alias of `union`) stacks the per-store DataFrames into multiple rows, following the same logic as SQL UNION ALL.

IV. Algorithms

125. Valid Palindrome

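```python
def isPalindrome(s: str) -> bool:
    # Keep only letters and digits, lowercased
    s = ''.join(ch.lower() for ch in s if ch.isalnum())
    # Compare the cleaned string with its reverse
    return s == s[::-1]
```

Keep only letters and digits, and convert everything to lowercase.

Reverse the string with a slice and compare it directly with the original.

String preprocessing plus a palindrome check: a basic, frequently asked interview question.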
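The slice-based solution builds a cleaned copy of the string. A common alternative, not part of the notes above, is the two-pointer approach, which checks the characters in place and needs only constant extra space. The function name `is_palindrome_two_pointers` is chosen here for illustration.

```python
def is_palindrome_two_pointers(s: str) -> bool:
    """Check for a palindrome, ignoring case and non-alphanumeric characters."""
    left, right = 0, len(s) - 1
    while left < right:
        if not s[left].isalnum():
            left += 1            # skip punctuation or spaces on the left
        elif not s[right].isalnum():
            right -= 1           # skip punctuation or spaces on the right
        else:
            if s[left].lower() != s[right].lower():
                return False     # mismatch between the two ends
            left += 1
            right -= 1
    return True


print(is_palindrome_two_pointers("A man, a plan, a canal: Panama"))
print(is_palindrome_two_pointers("race a car"))
print(is_palindrome_two_pointers(" "))
```

Both versions run in linear time; the two-pointer version can also return early on the first mismatch.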