Preface

This post shows how to solve a classification problem with logistic regression in PyTorch.

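I. Overview of Classification Problems

In a classification problem, the model outputs the probability that the input belongs to each class, and the class with the highest probability is taken as the prediction. The most commonly used sigmoid function is the logistic function.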
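To make the logistic function concrete, here is a minimal sketch in plain Python of the standard definition σ(x) = 1 / (1 + e^(-x)). The helper name `sigmoid` is chosen for illustration only, and the naive formula is meant for small inputs rather than as a numerically robust implementation.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function: maps a real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


# Negative inputs give values below 0.5, positive inputs give values above 0.5.
for x in (-4.0, 0.0, 4.0):
    print(x, sigmoid(x))
```

Because the output always lies between 0 and 1, it can be read as the probability of the positive class in a binary classification problem.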

II. Example

1. Example steps

1. Build the model: class LogisticRegressionModel(torch.nn.Module):

2. Define the loss function and the optimizer

criterion = torch.nn.BCELoss(reduction='sum')

optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

3. Run the training loop
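The effect of the loss reduction mode in step 2 can be checked with a small sketch. The predictions and labels below are hypothetical values picked for illustration, not results from the model in this post. The sketch computes binary cross-entropy by hand and compares it with `torch.nn.BCELoss` using `reduction='sum'` and `reduction='mean'`.

```python
import torch

# Hypothetical predicted probabilities and labels, for illustration only.
y_pred = torch.tensor([[0.2], [0.4], [0.9]])
y_true = torch.tensor([[0.0], [0.0], [1.0]])

# Per-element binary cross-entropy: -(y * log(p) + (1 - y) * log(1 - p))
manual = -(y_true * torch.log(y_pred) + (1 - y_true) * torch.log(1 - y_pred))

# reduction='sum' adds the per-element losses; reduction='mean' averages them.
loss_sum = torch.nn.BCELoss(reduction='sum')(y_pred, y_true)
loss_mean = torch.nn.BCELoss(reduction='mean')(y_pred, y_true)

print('sum :', manual.sum().item(), loss_sum.item())
print('mean:', manual.mean().item(), loss_mean.item())
```

With three samples the summed loss is three times the mean loss, so the gradients are three times larger too. At a fixed learning rate, that means the choice of reduction also changes the effective step size.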

2. Example code

The code is as follows (example):

python

import torch

import matplotlib.pyplot as plt

import torch.nn.functional as F

# prepare dataset

x_data = torch.Tensor([[1.0], [2.0], [3.0]])

y_data = torch.Tensor([[0], [0], [1]])

# design model using class

class LogisticRegressionModel(torch.nn.Module):

def __init__(self):

super(LogisticRegressionModel, self).__init__()

self.linear = torch.nn.Linear(1, 1)

def forward(self, x):

y_pred = F.sigmoid(self.linear(x))

return y_pred

model = LogisticRegressionModel()

# construct loss and optimizer

# 默认情况下,loss会基于element平均,如果size_average=False的话,loss会被累加。

criterion = torch.nn.BCELoss(size_average=False)

optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

epoch_list = []

loss_list = []

# training cycle forward, backward, update

for epoch in range(10000):

y_pred = model(x_data)

loss = criterion(y_pred, y_data)

print(epoch, loss.item())

optimizer.zero_grad()

loss.backward()

optimizer.step()

epoch_list.append(epoch)

loss_list.append(loss.item())

print('w = ', model.linear.weight.item())

print('b = ', model.linear.bias.item())

x_test = torch.Tensor([[4.0]])

y_test = model(x_test)

print('y_pred = ', y_test.data)

plt.plot(epoch_list, loss_list)

plt.ylabel('loss')

plt.xlabel('epoch')

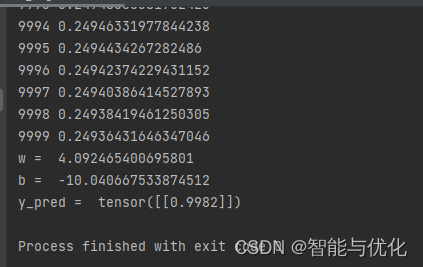

plt.show()得到如下结果:
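One way to read the learned parameters is through the decision boundary. The model outputs sigmoid(w * x + b), which equals 0.5 exactly where w * x + b = 0, so the boundary sits at x = -b / w. The sketch below assumes hypothetical values for `w` and `b` (an actual training run prints its own) and classifies a few inputs by thresholding the probability at 0.5.

```python
import torch

# Hypothetical parameter values for illustration; a real run prints its own w and b.
w, b = 1.2, -3.0

# sigmoid(w * x + b) crosses 0.5 where w * x + b == 0.
boundary = -b / w

x = torch.tensor([[1.0], [2.0], [3.0], [4.0]])
prob = torch.sigmoid(w * x + b)

# Threshold the probability at 0.5 to get a class label.
labels = (prob >= 0.5).int()

for xi, pi, li in zip(x.flatten().tolist(), prob.flatten().tolist(), labels.flatten().tolist()):
    print(f"x={xi:.1f}  p={pi:.3f}  class={li}")
print('decision boundary at x =', boundary)
```

With these assumed values the boundary falls between x = 2 and x = 3, which is consistent with the labels in the training data above.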

Summary

PyTorch Learning 5: Logistic Regression