ICCV-2019

He K, Girshick R, Dollár P. Rethinking imagenet pre-training[C]//Proceedings of the IEEE/CVF international conference on computer vision. 2019: 4918-4927.

Table of Contents

- [1 Background and Motivation](#1 Background and Motivation)

- [2 Related Work](#2 Related Work)

- [3 Advantages / Contributions](#3 Advantages / Contributions)

- [4 Method](#4 Method)

  - [4.1 Normalization](#4.1 Normalization)

  - [4.2 Convergence](#4.2 Convergence)

- [5 Experiments](#5 Experiments)

  - [5.1 Datasets and Metrics](#5.1 Datasets and Metrics)

  - [5.2 Training from scratch to match accuracy](#5.2 Training from scratch to match accuracy)

  - [5.3 Training from scratch with less data](#5.3 Training from scratch with less data)

  - [5.4 Discussions](#5.4 Discussions)

- [6 Conclusion(own) / Future work](#6 Conclusion(own) / Future work)

1 Background and Motivation

ImageNet pre-training followed by fine-tuning on the target domain has become the standard recipe for many computer vision tasks.

Compared with training from scratch, a pre-trained model has already learned low-level features (e.g., edges, textures) that do not need to be re-learned during fine-tuning.

The authors find that ImageNet pre-training:

- speeds up convergence

- does not automatically give better regularization

- shows no benefit when the target tasks/metrics are more sensitive to spatially well-localized predictions

With better normalization techniques and longer training, the authors make training from scratch match pre-training, breaking an ingrained habit of thought in CV work.

2 Related Work

- Pre-training and fine-tuning

  Pre-training has been pushed to datasets 6× (ImageNet-5k), 300× (JFT), and even 3000× (Instagram) larger than ImageNet, but the marginal benefit from the kind of large-scale pre-training data used to date diminishes rapidly.

- Detection from scratch

  The authors' method matches fine-tuning accuracy when training from scratch, even without any architectural specialization.

3 Advantages / Contributions

The paper verifies that, with appropriate normalization and a longer training schedule, training from scratch is no worse than ImageNet pre-training.

4 Method

- model normalization

  - normalized parameter initialization

  - activation normalization layers (BN, IN, GN, etc.)

- training length

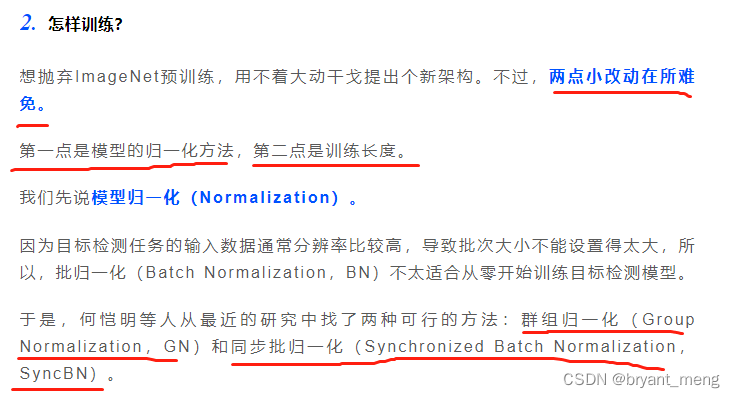

4.1 Normalization

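Training from scratch with BN works fine for classification, but accuracy drops for detection: detection inputs are usually higher-resolution than classification inputs, so GPU memory cannot hold a large batch, and BN degrades with small batches.

With pre-training, if the batch size is too small, the BN parameters can be frozen (the pre-training batch statistics are used as fixed parameters), which reduces the negative impact.

Remedies

- GN (Group Normalization)

  insensitive to batch sizes

- Synchronized Batch Normalization (SyncBN)

  an implementation of BN with batch statistics computed across multiple devices (GPUs), which increases the effective batch size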
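To make the batch-size argument concrete, here is a minimal pure-Python sketch of where each method takes its statistics from. It is an illustration only: the function names and the nested-list tensor layout are assumptions made for this note, not the paper's or any library's implementation, and the learnable scale and shift are left out.

```python
import math

def _mean_var(values):
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    return mean, var

def batch_norm_channel(batch, eps=1e-5):
    """BN for ONE channel: statistics are pooled over every sample in the batch.

    batch: list of N samples, each a flat list of that channel's values.
    """
    mean, var = _mean_var([v for sample in batch for v in sample])
    scale = 1.0 / math.sqrt(var + eps)
    return [[(v - mean) * scale for v in sample] for sample in batch]

def group_norm(sample, num_groups, eps=1e-5):
    """GN for ONE sample: statistics are pooled over each group of channels.

    sample: list of C channels, each a flat list of H*W values.
    """
    channels_per_group, remainder = divmod(len(sample), num_groups)
    assert remainder == 0, "C must be divisible by num_groups"
    out = []
    for g in range(num_groups):
        group = sample[g * channels_per_group:(g + 1) * channels_per_group]
        mean, var = _mean_var([v for channel in group for v in channel])
        scale = 1.0 / math.sqrt(var + eps)
        out.extend([(v - mean) * scale for v in channel] for channel in group)
    return out

def synced_mean_var(per_device_values):
    """SyncBN-style statistics: every device contributes its count, sum and
    sum of squares, so the result equals the statistics of the global batch."""
    n = sum(len(vals) for vals in per_device_values)
    s = sum(sum(vals) for vals in per_device_values)
    ss = sum(sum(v * v for v in vals) for vals in per_device_values)
    mean = s / n
    return mean, ss / n - mean * mean

sample_a = [[1.0, 2.0], [3.0, 4.0]]        # 2 channels, 2 values each
sample_b = [[10.0, 20.0], [30.0, 40.0]]

# BN output for sample_a depends on what else is in the batch ...
bn_alone = batch_norm_channel([sample_a[0]])[0]
bn_paired = batch_norm_channel([sample_a[0], sample_b[0]])[0]
# ... while GN never looks at other samples.
gn_a = group_norm(sample_a, num_groups=1)
```

The BN result for `sample_a` changes with the batch composition, which is why tiny per-GPU batches hurt it. GN sidesteps the batch dimension entirely, and SyncBN keeps BN but enlarges the set of samples the statistics are computed over.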

4.2 Convergence

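Models trained from scratch need to be trained for longer.

Only with a 6× schedule does training from scratch see a number of pixels comparable to pre-training plus a 2× fine-tuning schedule (detection inputs have higher resolution than classification inputs); the numbers of images and instances seen remain far lower.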
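The comparison above can be checked with back-of-the-envelope arithmetic. The sketch below is only a rough estimate under assumptions that are not stated in these notes: about 1.28M ImageNet images trained for 100 epochs at 224×224 with 1 instance per image, about 118k COCO training images at 800×1333 with roughly 7 instances per image, and a 1× schedule taken as roughly 12 COCO epochs.

```python
# All constants below are assumptions for illustration, not values from these notes.
IMAGENET = {"images": 1_280_000, "epochs": 100, "pixels": 224 * 224, "instances": 1}
COCO = {"images": 118_000, "epochs_per_1x": 12, "pixels": 800 * 1333, "instances": 7}

def seen(images_per_epoch, epochs, pixels_per_image, instances_per_image):
    """Totals seen over all training iterations."""
    images = images_per_epoch * epochs
    return {
        "images": images,
        "instances": images * instances_per_image,
        "pixels": images * pixels_per_image,
    }

def coco_seen(schedule_multiplier):
    epochs = COCO["epochs_per_1x"] * schedule_multiplier
    return seen(COCO["images"], epochs, COCO["pixels"], COCO["instances"])

imagenet = seen(IMAGENET["images"], IMAGENET["epochs"],
                IMAGENET["pixels"], IMAGENET["instances"])
fine_tune = coco_seen(2)                      # 2x fine-tuning schedule
pretrain_total = {k: imagenet[k] + fine_tune[k] for k in imagenet}
scratch_total = coco_seen(6)                  # 6x from-scratch schedule

ratios = {k: scratch_total[k] / pretrain_total[k] for k in scratch_total}
for name, ratio in ratios.items():
    print(f"{name}: from scratch sees {ratio:.2f}x of pre-train + fine-tune")
```

Under these assumptions the pixel totals come out close to each other, while the image and instance totals for training from scratch stay well below those of the pre-training pipeline.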

5 Experiments

(1)Architecture

Mask R-CNN with ResNet or ResNeXt plus Feature Pyramid Network (FPN) backbones.

When fine-tuning, the authors also tune the pre-trained models with GN or SyncBN rather than freezing the normalization layers; these models have higher accuracy than the frozen ones.

(2)Learning rate scheduling

Training longer at the first (large) learning rate is useful, but training longer at small learning rates often leads to overfitting.

The authors do not appear to have run a dedicated experiment to verify this claim.
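One way to picture this is a step schedule in which the low-learning-rate phases have a fixed length and only the first, large-learning-rate phase grows with the schedule multiplier. The sketch below assumes a 1× schedule of 90k iterations, a base rate of 0.02, and 10× drops in the last 60k and last 20k iterations. These numbers are my reading of the paper's setup, not something stated in these notes, so treat them as assumptions; warm-up is omitted.

```python
def learning_rate(iteration, schedule_multiplier, base_lr=0.02,
                  iters_per_1x=90_000, first_drop_before_end=60_000,
                  second_drop_before_end=20_000):
    """Step schedule whose two reduced-rate phases always have the same length.

    All default values are assumptions for illustration.
    """
    total = iters_per_1x * schedule_multiplier
    if not 0 <= iteration < total:
        raise ValueError("iteration outside the schedule")
    if iteration >= total - second_drop_before_end:
        return base_lr * 0.01
    if iteration >= total - first_drop_before_end:
        return base_lr * 0.1
    return base_lr

def large_lr_iterations(schedule_multiplier, iters_per_1x=90_000,
                        first_drop_before_end=60_000):
    """Iterations spent at the initial (large) learning rate."""
    return iters_per_1x * schedule_multiplier - first_drop_before_end

for multiplier in (1, 2, 6):
    print(multiplier, large_lr_iterations(multiplier))
```

With this shape, going from a 1× to a 6× schedule adds iterations only to the large-learning-rate phase, which matches the observation that extra time at small learning rates is not where the benefit comes from.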

(3)Hyper-parameters

Hyper-parameters are generally grid-searched in the pre-train + fine-tune setting and then applied unchanged to training from scratch.

5.1 Datasets and Metrics

datasets

- COCO

- PASCAL VOC

metric

- $AP^{bbox}$ and $AP^{mask}$
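Both metrics rest on intersection-over-union (IoU): $AP^{bbox}$ matches predictions to ground truth by box IoU and $AP^{mask}$ by mask IoU, and the standard COCO AP averages over IoU thresholds from 0.50 to 0.95 in steps of 0.05. The sketch below shows only the box IoU and the threshold set; the helper names are hypothetical, and the full AP computation (ranking by score, precision-recall integration) is deliberately left out.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2) with x1 < x2 and y1 < y2."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

# The ten IoU thresholds that COCO AP averages over.
COCO_IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]

def thresholds_passed(iou):
    """How many of the ten thresholds a single match with this IoU satisfies."""
    return sum(iou >= t for t in COCO_IOU_THRESHOLDS)

half_overlap = box_iou((0, 0, 10, 10), (5, 0, 15, 10))
print(half_overlap, thresholds_passed(half_overlap))
```

Because the high thresholds only count tightly localized predictions, the averaged AP rewards localization quality, which is the property the paper's localization-sensitivity argument relies on.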

5.2 Training from scratch to match accuracy

(1)Baselines with GN and SyncBN

When training from scratch, GN comes out somewhat stronger than SyncBN in the authors' results.

Random initialization is no worse than pre-training: as training proceeds, it catches up.

(2)Multiple detection metrics

Across all the detection metrics reported, training from scratch is able to catch up.

(3)Enhanced baselines

Training-time scale augmentation: the shorter side of images is randomly sampled from [640, 800] pixels. Training is extended accordingly, to a 9× schedule from scratch and a 6× schedule with pre-training.

Cascade R-CNN, as a method focusing on improving localization accuracy

Test-time augmentation: combining the predictions from multiple scaling transformations

With these training-time and test-time enhancements added, the final converged results of training from scratch are still no worse than those of ImageNet pre-training.
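The training-time scale augmentation described above can be sketched as follows. Only the [640, 800] range for the shorter side comes from the text; the 1333-pixel cap on the longer side and the function name are assumptions added for illustration.

```python
import random

def sample_train_size(width, height, rng, short_side_range=(640, 800),
                      max_long_side=1333):
    """Pick a random target for the shorter side and keep the aspect ratio.

    max_long_side is an assumed cap, not taken from these notes.
    """
    target_short = rng.randint(*short_side_range)   # both ends inclusive
    scale = target_short / min(width, height)
    if max(width, height) * scale > max_long_side:
        scale = max_long_side / max(width, height)  # cap the longer side
    return round(width * scale), round(height * scale)

rng = random.Random(0)
for _ in range(3):
    print(sample_train_size(640, 480, rng))
```

Each call draws a new shorter-side length, so the same image is seen at different scales across epochs, which is part of why the enhanced baselines are trained on longer schedules.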

(4)Large models trained from scratch

With larger models, the conclusion still holds.

(5)vs. previous from-scratch results

The authors' results are better, and their architecture is not specially designed for training from scratch.

Previous works reported no evidence that models without ImageNet pre-training can be comparably good as their ImageNet pre-training counterparts.

(6)Keypoint detection

Training from scratch catches up with pre-training using only the 2× and 3× schedules.

ImageNet pre-training, which has little explicit localization information, does not help keypoint detection

(7)Models without BN/GN: VGG nets

With pre-training: AP of 35.6 after an extremely long 9× training schedule.

From scratch: AP of 35.2 after an 11× schedule.

This is achieved while making minimal or no changes to the network.

5.3 Training from scratch with less data

(1)35k COCO training images

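The left panel of Figure 7 shows that ImageNet pre-training does not automatically help reduce overfitting.

The middle panel of Figure 7 shows the results with hyper-parameters grid-searched for fine-tuning; when the same hyper-parameters are applied to random initialization, the results are comparable.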

(2)10k COCO training images

Hyper-parameters are grid-searched on the models that use ImageNet pre-training and then applied to the models trained from scratch.

The results are still comparable.

(3)Breakdown regime: 1k COCO training images

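When the dataset is too small, training from scratch overfits easily: the training loss drops to a similar level, but accuracy on the validation set differs considerably.

Even with hyper-parameters grid-searched for training from scratch, the resulting 5.4 AP is still far behind the 9.9 AP obtained with pre-training.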

(4)Breakdown regime: PASCAL VOC

With pre-training: 82.7 mAP at 18k iterations.

From scratch: 77.6 mAP at 144k iterations, and it does not catch up even when trained longer.

When the dataset is small, the advantage of pre-training is clear.

The authors suspect that having fewer instances (and categories) has a similar negative impact as insufficient training data, which can explain why training from scratch on VOC is not able to catch up as observed on COCO.

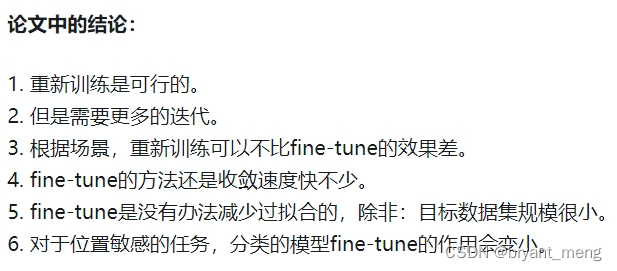

5.4 Discussions

- Training from scratch on target tasks is possible without architectural changes.

- Training from scratch requires more iterations to sufficiently converge.

- Training from scratch can be no worse than its ImageNet pre-training counterparts under many circumstances, down to as few as 10k COCO images.

- ImageNet pre-training speeds up convergence on the target task.

- ImageNet pre-training does not necessarily help reduce overfitting unless we enter a very small data regime.

- ImageNet pre-training helps less if the target task is more sensitive to localization than classification.

(1)Is ImageNet pre-training necessary?

No, unless the target dataset is too small (e.g., <10k COCO images).

(2)Is ImageNet helpful?

Yes, before larger-scale data was available.

(3)Is big data helpful?

Yes, but it would be more effective to collect data in the target domain.

(4)Shall we pursue universal representations?

Yes.

The authors hope that their study of ImageNet pre-training and its role will shed light on potential future directions for the community to move forward.

6 Conclusion(own) / Future work

- Our study suggests that collecting data and training on the target tasks is a solution worth considering, especially when there is a significant gap between the source pre-training task and the target task.

- BN makes training from scratch harder in detection: compared with classification, detection uses a higher input resolution and therefore a smaller batch size, which degrades BN.

- When fine-tuning from a pre-trained model, the BN parameters can be frozen.
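Freezing BN amounts to replacing the batch statistics with stored ones, so the layer becomes a fixed per-channel affine transform that no longer depends on the batch. A minimal sketch, with a hypothetical function name and parameter names chosen for this note:

```python
import math

def frozen_bn_channel(values, running_mean, running_var,
                      gamma=1.0, beta=0.0, eps=1e-5):
    """Frozen BN for one channel: a fixed scale and shift from stored statistics."""
    scale = gamma / math.sqrt(running_var + eps)
    shift = beta - running_mean * scale
    return [v * scale + shift for v in values]

# The output for a value is the same regardless of how many samples share the batch.
print(frozen_bn_channel([1.0, 3.0], running_mean=2.0, running_var=1.0))
```

Since no statistics are estimated during fine-tuning, a small detection batch does no harm here, but the option is unavailable when training from scratch because there are no pre-trained statistics to reuse.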

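Excerpts from other bloggers' commentary

Kaiming He "ends" the era of ImageNet pre-training: training neural networks from scratch matches the COCO champion

- Use Group Normalization.

- Use synchronized Batch Norm (SyncBN).

Both approaches address BN's dependence on batch size.

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - Zhihu

https://www.zhihu.com/question/303234604/answer/539216875

In summary, this is really an advertisement for GN: we simply can do without ImageNet pre-training...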

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - YaqiLYU's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/537226250

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - 波粒二象性's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/538017657

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - 大师兄's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/537484574

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - 柯小波's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/536738647

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - 知乎1345's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/536835723

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - mileistone's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/536820942

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - 放浪者's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/538929756

How do you evaluate the new arXiv paper Rethinking ImageNet Pre-training by Kaiming He et al.? - cstghitpku's answer - Zhihu

https://www.zhihu.com/question/303234604/answer/537421748

Break out of conventional thinking.