- 🍨 This post is a learning-record blog from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

code

python3

from __future__ import unicode_literals, print_function, division
from io import open
import unicodedata
import string
import re
import random

import torch
import torch.nn as nn
from torch import optim
import torch.nn.functional as F

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)

cpu

python3

SOS_token = 0
EOS_token = 1

# Language class: holds the vocabulary and makes the corpus easier to work with
class Lang:
    def __init__(self, name):
        self.name = name
        self.word2index = {}
        self.word2count = {}
        self.index2word = {0: "SOS", 1: "EOS"}
        self.n_words = 2  # Count SOS and EOS

    def addSentence(self, sentence):
        for word in sentence.split(' '):
            self.addWord(word)

    def addWord(self, word):
        if word not in self.word2index:
            self.word2index[word] = self.n_words
            self.word2count[word] = 1
            self.index2word[self.n_words] = word
            self.n_words += 1
        else:
            self.word2count[word] += 1

python3

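# Strip accents: decompose each character (NFD) and drop the combining marks
def unicodeToAscii(s):
    return ''.join(
        c for c in unicodedata.normalize('NFD', s)
        if unicodedata.category(c) != 'Mn'
    )

# Lowercase, put a space before . ! ?, and replace every other non-letter run with a space
def normalizeString(s):
    s = unicodeToAscii(s.lower().strip())
    s = re.sub(r"([.!?])", r" \1", s)
    s = re.sub(r"[^a-zA-Z.!?]+", r" ", s)
    return s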
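To make the effect of these two helpers concrete, here is a small self-contained sketch. It restates both functions so that it runs on its own, and the two sample sentences are made up for illustration rather than taken from the dataset.

```python
import re
import unicodedata


def unicodeToAscii(s):
    # Decompose accented characters (NFD) and drop the combining marks ('Mn')
    return ''.join(
        c for c in unicodedata.normalize('NFD', s)
        if unicodedata.category(c) != 'Mn'
    )


def normalizeString(s):
    s = unicodeToAscii(s.lower().strip())
    s = re.sub(r"([.!?])", r" \1", s)       # put a space before . ! ?
    s = re.sub(r"[^a-zA-Z.!?]+", r" ", s)   # any other non-letter run becomes one space
    return s


# Illustrative inputs, not lines from the corpus
french = normalizeString("Ça va très bien !")
english = normalizeString("I'm here.")
print(french)   # ca va tres bien !
print(english)  # i m here .
```

Note that the apostrophe in "I'm" is replaced by a space. This is why the prefix filter used later in the post matches strings such as "i m " and "he s ".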

python3

def readLangs(lang1, lang2, reverse=False):
    print("Reading lines...")

    # Read the file line by line
    with open('./data/%s-%s.txt' % (lang1, lang2), encoding='utf-8') as f:
        lines = f.read().strip().split('\n')

    # Turn each line into a two-element list:
    # [text in language A, text in language B]
    pairs = [[normalizeString(s) for s in l.split('\t')] for l in lines]

    # Create the Lang instances, swapping the language order if requested
    if reverse:
        pairs = [list(reversed(p)) for p in pairs]
        input_lang = Lang(lang2)
        output_lang = Lang(lang1)
    else:
        input_lang = Lang(lang1)
        output_lang = Lang(lang2)

    return input_lang, output_lang, pairs

python3

MAX_LENGTH = 10  # Maximum sentence length, in words

eng_prefixes = (
    "i am ", "i m ",
    "he is", "he s ",
    "she is", "she s ",
    "you are", "you re ",
    "we are", "we re ",
    "they are", "they re "
)

def filterPair(p):
    return len(p[0].split(' ')) < MAX_LENGTH and \
        len(p[1].split(' ')) < MAX_LENGTH and p[1].startswith(eng_prefixes)

def filterPairs(pairs):
    # Keep only short pairs whose English side starts with one of eng_prefixes
    return [pair for pair in pairs if filterPair(pair)]

python3

def prepareData(lang1, lang2, reverse=False):
    # Read the data from the file
    input_lang, output_lang, pairs = readLangs(lang1, lang2, reverse)
    print("Read %s sentence pairs" % len(pairs))

    # Keep only the pairs that pass the filter
    pairs = filterPairs(pairs[:])
    print("Trimmed to %s sentence pairs" % len(pairs))
    print("Counting words...")

    # Add every sentence to the vocabulary of its language
    for pair in pairs:
        input_lang.addSentence(pair[0])
        output_lang.addSentence(pair[1])

    # Print the vocabulary sizes
    print("Counted words:")
    print(input_lang.name, input_lang.n_words)
    print(output_lang.name, output_lang.n_words)
    return input_lang, output_lang, pairs

input_lang, output_lang, pairs = prepareData('eng', 'fra', True)
print(random.choice(pairs))

Reading lines...
Read 135842 sentence pairs
Trimmed to 10599 sentence pairs
Counting words...
Counted words:
fra 4345
eng 2803
['elles le font correctement .', 'they re doing it right .']

python3

class EncoderRNN(nn.Module):
    def __init__(self, input_size, hidden_size):
        super(EncoderRNN, self).__init__()
        self.hidden_size = hidden_size
        self.embedding = nn.Embedding(input_size, hidden_size)
        self.gru = nn.GRU(hidden_size, hidden_size)

    def forward(self, input, hidden):
        embedded = self.embedding(input).view(1, 1, -1)
        output = embedded
        output, hidden = self.gru(output, hidden)
        return output, hidden

    def initHidden(self):
        return torch.zeros(1, 1, self.hidden_size, device=device)

python3

class DecoderRNN(nn.Module):
    def __init__(self, hidden_size, output_size):
        super(DecoderRNN, self).__init__()
        self.hidden_size = hidden_size
        self.embedding = nn.Embedding(output_size, hidden_size)
        self.gru = nn.GRU(hidden_size, hidden_size)
        self.out = nn.Linear(hidden_size, output_size)
        self.softmax = nn.LogSoftmax(dim=1)

    def forward(self, input, hidden):
        output = self.embedding(input).view(1, 1, -1)
        output = F.relu(output)
        output, hidden = self.gru(output, hidden)
        output = self.softmax(self.out(output[0]))
        return output, hidden

    def initHidden(self):
        return torch.zeros(1, 1, self.hidden_size, device=device)
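The `view(1, 1, -1)` and `output[0]` calls are easier to follow with concrete shapes. The sketch below traces a single decoder step using the same layer types as `DecoderRNN`, but built standalone so it runs by itself. The sizes (`hidden_size = 8`, `vocab_size = 5`) are assumptions chosen for readability and are far smaller than the real model.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

hidden_size, vocab_size = 8, 5               # illustrative sizes only

embedding = nn.Embedding(vocab_size, hidden_size)
gru = nn.GRU(hidden_size, hidden_size)
out = nn.Linear(hidden_size, vocab_size)
log_softmax = nn.LogSoftmax(dim=1)

token = torch.tensor([[0]])                  # a single token index, e.g. SOS_token
hidden = torch.zeros(1, 1, hidden_size)      # same shape that initHidden() returns

embedded = embedding(token).view(1, 1, -1)   # (seq_len=1, batch=1, hidden_size)
embedded = torch.relu(embedded)              # the decoder applies ReLU before the GRU
gru_out, hidden = gru(embedded, hidden)      # both are (1, 1, hidden_size)
log_probs = log_softmax(out(gru_out[0]))     # (1, vocab_size) log-probabilities

print(embedded.shape, gru_out.shape, hidden.shape, log_probs.shape)
print(log_probs.exp().sum().item())          # close to 1.0
```

Because the model processes one token and one sentence at a time, both the sequence and batch dimensions are always 1. The final `(1, vocab_size)` tensor is what the loss function consumes at each decoding step.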

python3

# Convert a sentence into a list of vocabulary indexes
def indexesFromSentence(lang, sentence):
    return [lang.word2index[word] for word in sentence.split(' ')]

# Convert the index list (plus EOS) into a column tensor
def tensorFromSentence(lang, sentence):
    indexes = indexesFromSentence(lang, sentence)
    indexes.append(EOS_token)
    return torch.tensor(indexes, dtype=torch.long, device=device).view(-1, 1)

# Take a sentence pair and return the preprocessed (input, target) tensors
def tensorsFromPair(pair):
    input_tensor = tensorFromSentence(input_lang, pair[0])
    target_tensor = tensorFromSentence(output_lang, pair[1])
    return (input_tensor, target_tensor)

python3

teacher_forcing_ratio = 0.5

def train(input_tensor, target_tensor,
          encoder, decoder,
          encoder_optimizer, decoder_optimizer,
          criterion, max_length=MAX_LENGTH):

    # Initialize the encoder hidden state
    encoder_hidden = encoder.initHidden()

    # Zero the gradients
    encoder_optimizer.zero_grad()
    decoder_optimizer.zero_grad()

    input_length = input_tensor.size(0)
    target_length = target_tensor.size(0)

    # All-zero tensor that stores the encoder output for each input position
    encoder_outputs = torch.zeros(max_length, encoder.hidden_size, device=device)

    loss = 0

    # Feed the input sentence through the encoder, one token at a time
    for ei in range(input_length):
        encoder_output, encoder_hidden = encoder(input_tensor[ei], encoder_hidden)
        encoder_outputs[ei] = encoder_output[0, 0]

    # The decoder starts from the SOS token and the encoder's final hidden state
    decoder_input = torch.tensor([[SOS_token]], device=device)
    decoder_hidden = encoder_hidden

    use_teacher_forcing = random.random() < teacher_forcing_ratio

    # Decode the target sentence step by step
    if use_teacher_forcing:
        # Teacher forcing: feed the target as the next input
        for di in range(target_length):
            decoder_output, decoder_hidden = decoder(decoder_input, decoder_hidden)
            loss += criterion(decoder_output, target_tensor[di])
            decoder_input = target_tensor[di]  # Teacher forcing
    else:
        # Without teacher forcing: use its own predictions as the next input
        for di in range(target_length):
            decoder_output, decoder_hidden = decoder(decoder_input, decoder_hidden)
            topv, topi = decoder_output.topk(1)
            decoder_input = topi.squeeze().detach()  # detach from history as input

            loss += criterion(decoder_output, target_tensor[di])
            if decoder_input.item() == EOS_token:
                break

    loss.backward()

    encoder_optimizer.step()
    decoder_optimizer.step()

    return loss.item() / target_length
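The `train` function above calls `loss.backward()` and then steps both optimizers directly. A common addition, which is not part of the original code, is to clip the gradient norm between those two calls to guard against exploding gradients. The sketch below shows the pattern on a stand-in `nn.Linear` model; the model, the inflated loss, and the `max_norm = 1.0` threshold are all assumptions for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Linear(4, 4)                    # stand-in for the encoder or decoder
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)
max_norm = 1.0                             # assumed threshold, tune per task

inputs = torch.randn(3, 4) * 100           # large inputs to provoke large gradients
loss = model(inputs).pow(2).sum()

optimizer.zero_grad()
loss.backward()
# Rescale all gradients in place so their combined L2 norm is at most max_norm;
# the return value is the norm measured before clipping
norm_before = torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm)
optimizer.step()

norm_after = torch.sqrt(sum(p.grad.pow(2).sum() for p in model.parameters()))
print(float(norm_before), float(norm_after))
```

In the training loop of this post, the same call would be applied to both `encoder.parameters()` and `decoder.parameters()` right after `loss.backward()`.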

python3

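import time
import math

# Format a duration in seconds as "Xm Ys"
def asMinutes(s):
    m = math.floor(s / 60)
    s -= m * 60
    return '%dm %ds' % (m, s)

# Return the elapsed time and the estimated remaining time,
# given the start time and the fraction of work completed
def timeSince(since, percent):
    now = time.time()
    s = now - since
    es = s / (percent)
    rs = es - s
    return '%s (- %s)' % (asMinutes(s), asMinutes(rs))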
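The remaining-time estimate is simple proportional arithmetic: if `s` seconds covered a fraction `percent` of the work, the total is estimated as `s / percent`. The sketch below reproduces that calculation with a hypothetical helper `eta`, which takes the elapsed time as an argument instead of reading the clock so that the result is reproducible.

```python
import math


def asMinutes(s):
    m = math.floor(s / 60)
    s -= m * 60
    return '%dm %ds' % (m, s)


def eta(elapsed, percent):
    # Hypothetical helper: same arithmetic as timeSince, but the elapsed
    # time is passed in rather than measured with time.time()
    total_estimate = elapsed / percent
    remaining = total_estimate - elapsed
    return '%s (- %s)' % (asMinutes(elapsed), asMinutes(remaining))


print(eta(422, 0.25))  # 7m 2s (- 21m 6s)
```

With 422 seconds elapsed at 25% progress, the estimated total is 1688 seconds, leaving 1266 seconds (21m 6s). This matches the first line of the training log shown further down.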

python3

def trainIters(encoder, decoder, n_iters, print_every=1000,
               plot_every=100, learning_rate=0.01):
    start = time.time()
    plot_losses = []
    print_loss_total = 0  # Reset every print_every
    plot_loss_total = 0   # Reset every plot_every

    encoder_optimizer = optim.SGD(encoder.parameters(), lr=learning_rate)
    decoder_optimizer = optim.SGD(decoder.parameters(), lr=learning_rate)

    # Randomly sample n_iters pairs (with replacement) from pairs as the training set
    training_pairs = [tensorsFromPair(random.choice(pairs)) for i in range(n_iters)]
    criterion = nn.NLLLoss()

    for iter in range(1, n_iters + 1):
        training_pair = training_pairs[iter - 1]
        input_tensor = training_pair[0]
        target_tensor = training_pair[1]

        loss = train(input_tensor, target_tensor, encoder,
                     decoder, encoder_optimizer, decoder_optimizer, criterion)
        print_loss_total += loss
        plot_loss_total += loss

        if iter % print_every == 0:
            print_loss_avg = print_loss_total / print_every
            print_loss_total = 0
            print('%s (%d %d%%) %.4f' % (timeSince(start, iter / n_iters),
                                         iter, iter / n_iters * 100, print_loss_avg))

        if iter % plot_every == 0:
            plot_loss_avg = plot_loss_total / plot_every
            plot_losses.append(plot_loss_avg)
            plot_loss_total = 0

    return plot_losses
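The criterion here is `nn.NLLLoss`, applied to the log-probabilities that the decoder's `LogSoftmax` layer produces. That combination computes the same value as cross-entropy on the raw scores, which the minimal sketch below checks numerically. The logits and the target index are random illustrative values, not model output.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

logits = torch.randn(1, 6)     # raw scores over a 6-word vocabulary (illustrative)
target = torch.tensor([2])     # index of the correct word

# What this post does: log-softmax in the decoder, NLLLoss as the criterion
nll = nn.NLLLoss()(F.log_softmax(logits, dim=1), target)
# The equivalent single-step formulation on raw scores
ce = nn.CrossEntropyLoss()(logits, target)

print(nll.item(), ce.item())   # the two values agree
```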

python3

hidden_size = 256

encoder1 = EncoderRNN(input_lang.n_words, hidden_size).to(device)
# Plain GRU decoder (no attention), so it is named decoder1 rather than attn_decoder1
decoder1 = DecoderRNN(hidden_size, output_lang.n_words).to(device)

plot_losses = trainIters(encoder1, decoder1, 20000, print_every=5000)

python3

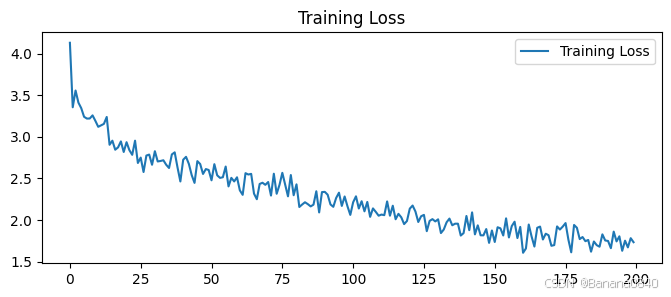

7m 2s (- 21m 6s) (5000 25%) 2.8981

13m 59s (- 13m 59s) (10000 50%) 2.3636

21m 3s (- 7m 1s) (15000 75%) 2.0134

28m 10s (- 0m 0s) (20000 100%) 1.7973

python3

import matplotlib.pyplot as plt

# Suppress warnings
import warnings
warnings.filterwarnings("ignore")

# plt.rcParams['font.sans-serif'] = ['SimHei']  # Needed only to render Chinese labels
plt.rcParams['axes.unicode_minus'] = False      # Render minus signs correctly
plt.rcParams['figure.dpi'] = 100                # Figure resolution

# One point per plot_every iterations
epochs_range = range(len(plot_losses))

plt.figure(figsize=(8, 3))

plt.subplot(1, 1, 1)
plt.plot(epochs_range, plot_losses, label='Training Loss')
plt.legend(loc='upper right')
plt.title('Training Loss')
plt.show()

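Summary

Building a PyTorch-based seq2seq translation system is an end-to-end process. It starts with collecting and preprocessing the data: converting source and target sentence pairs into a format suitable for training, and building a vocabulary for each language. Next comes the seq2seq architecture itself, with an encoder that compresses the source sequence into a context representation and a decoder that generates the target sequence from it. During training, the model iterates over the training pairs and updates its parameters to minimize a translation loss such as cross-entropy; regularization and gradient clipping can be added to guard against overfitting and exploding gradients. Finally, translation quality is evaluated on a held-out test set with metrics such as BLEU, which measure accuracy and fluency. The whole process calls for a solid understanding of seq2seq models and the PyTorch framework, together with careful data processing and model tuning, to arrive at an efficient and accurate translation system.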
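As a rough illustration of the BLEU idea mentioned above, the sketch below scores one candidate sentence against one reference using clipped n-gram precision and a brevity penalty. It is a simplified version under stated assumptions (a single reference, n-grams up to 2, uniform weights, no smoothing), and `simple_bleu` is a hypothetical helper. Standard BLEU is computed over a whole corpus with n-grams up to 4, so an established implementation such as NLTK or sacreBLEU is the better choice for real evaluation.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    # Count every contiguous n-gram in the token list
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate, reference, max_n=2):
    """Simplified single-reference BLEU: uniform weights, no smoothing."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        total = sum(cand_counts.values())
        # Clip each candidate n-gram count by its count in the reference
        overlap = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        if total == 0 or overlap == 0:
            return 0.0
        log_precisions.append(math.log(overlap / total))
    # Brevity penalty: penalize candidates that are shorter than the reference
    bp = 1.0 if len(cand) >= len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * math.exp(sum(log_precisions) / max_n)


reference = "they re doing it right ."
exact = simple_bleu("they re doing it right .", reference)
partial = simple_bleu("they re doing it .", reference)
print(exact)    # 1.0
print(partial)
```

The second candidate drops one word, so it loses one matching bigram and also incurs the brevity penalty, which lowers its score to roughly 0.71.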