from collections import OrderedDict
from typing import Dict, List, Tuple  # names used by the TorchScript type comments below

import torch
import torch.nn.functional as F
from torch import Tensor, nn

class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, in_channel, out_channel, stride=1, downsample=None, norm_layer=None):
        super(Bottleneck, self).__init__()
        if norm_layer is None:
            norm_layer = nn.BatchNorm2d

        self.conv1 = nn.Conv2d(in_channels=in_channel, out_channels=out_channel,
                               kernel_size=(1, 1), stride=(1, 1), bias=False)  # squeeze channels
        self.bn1 = norm_layer(out_channel)
        # -----------------------------------------
        self.conv2 = nn.Conv2d(in_channels=out_channel, out_channels=out_channel,
                               kernel_size=(3, 3), stride=(stride, stride), bias=False, padding=(1, 1))
        self.bn2 = norm_layer(out_channel)
        # -----------------------------------------
        self.conv3 = nn.Conv2d(in_channels=out_channel, out_channels=out_channel * self.expansion,
                               kernel_size=(1, 1), stride=(1, 1), bias=False)  # unsqueeze channels
        self.bn3 = norm_layer(out_channel * self.expansion)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample

    def forward(self, x):
        identity = x
        if self.downsample is not None:
            identity = self.downsample(x)

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        out += identity
        out = self.relu(out)

        return out

class ResNet(nn.Module):

    def __init__(self, block, blocks_num, num_classes=1000, include_top=True, norm_layer=None):
        """
        :param block: residual block class (e.g. Bottleneck)
        :param blocks_num: number of blocks in each of the four stages
        :param num_classes: number of output classes
        :param include_top: whether to build the average-pool and fully connected head
        :param norm_layer: normalization layer (defaults to nn.BatchNorm2d)
        """
        super(ResNet, self).__init__()
        if norm_layer is None:
            norm_layer = nn.BatchNorm2d
        self._norm_layer = norm_layer

        self.include_top = include_top
        self.in_channel = 64

        self.conv1 = nn.Conv2d(in_channels=3, out_channels=self.in_channel, kernel_size=(7, 7), stride=(2, 2),
                               padding=(3, 3), bias=False)
        self.bn1 = norm_layer(self.in_channel)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, blocks_num[0])
        self.layer2 = self._make_layer(block, 128, blocks_num[1], stride=2)
        self.layer3 = self._make_layer(block, 256, blocks_num[2], stride=2)
        self.layer4 = self._make_layer(block, 512, blocks_num[3], stride=2)
        if self.include_top:
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))  # output size = (1, 1)
            self.fc = nn.Linear(512 * block.expansion, num_classes)

        # Initialize the convolution weights.
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

    def _make_layer(self, block, channel, block_num, stride=1):
        norm_layer = self._norm_layer
        downsample = None
        # A projection shortcut is needed whenever the spatial size or channel count changes.
        if stride != 1 or self.in_channel != channel * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.in_channel, channel * block.expansion, kernel_size=(1, 1),
                          stride=(stride, stride), bias=False),
                norm_layer(channel * block.expansion))

        layers = []
        layers.append(block(self.in_channel, channel, downsample=downsample,
                            stride=stride, norm_layer=norm_layer))
        self.in_channel = channel * block.expansion

        for _ in range(1, block_num):
            layers.append(block(self.in_channel, channel, norm_layer=norm_layer))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        if self.include_top:
            x = self.avgpool(x)
            x = torch.flatten(x, 1)
            x = self.fc(x)

        return x

class IntermediateLayerGetter(nn.ModuleDict):
    """
    Module wrapper that returns intermediate layers from a model.

    It has a strong assumption that the modules have been registered
    into the model in the same order as they are used.
    This means that one should **not** reuse the same nn.Module
    twice in the forward if you want this to work.

    Additionally, it is only able to query submodules that are directly
    assigned to the model. So if `model` is passed, `model.feature1` can
    be returned, but not `model.feature1.layer2`.

    Arguments:
        model (nn.Module): model from which we will extract the features
        return_layers (Dict[name, new_name]): a dict containing the names
            of the modules for which the activations will be returned as
            the key of the dict, and the value of the dict is the name
            of the returned activation (which the user can specify).
    """
    __annotations__ = {
        "return_layers": Dict[str, str],
    }

    def __init__(self, model, return_layers):
        if not set(return_layers).issubset([name for name, _ in model.named_children()]):
            raise ValueError("return_layers are not present in model")

        # e.g. {'layer1': '0', 'layer2': '1', 'layer3': '2', 'layer4': '3'}
        orig_return_layers = return_layers
        # Work on a copy so the caller's dict is not modified.
        return_layers = {k: v for k, v in return_layers.items()}
        layers = OrderedDict()

        # Walk the model's children in order and store them in an ordered dict.
        # Keep only the structure up to and including layer4; drop everything after it.
        for name, module in model.named_children():
            layers[name] = module
            if name in return_layers:
                del return_layers[name]
            if not return_layers:
                break

        super(IntermediateLayerGetter, self).__init__(layers)
        self.return_layers = orig_return_layers

    def forward(self, x):
        out = OrderedDict()
        # Run every child module in order and collect
        # the outputs of layer1, layer2, layer3 and layer4.
        for name, module in self.named_children():
            x = module(x)
            if name in self.return_layers:
                out_name = self.return_layers[name]
                out[out_name] = x
        return out
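
The early-exit logic of `IntermediateLayerGetter` can be illustrated without PyTorch. The following is a minimal sketch, not the class itself: plain callables stand in for child modules, and the helper name `collect_intermediate` is a hypothetical name introduced only for this example.

```python
from collections import OrderedDict


def collect_intermediate(stages, return_layers, x):
    """Run `stages` in order and collect the outputs named in `return_layers`.

    `stages` is an ordered mapping name -> callable (a stand-in for child modules).
    Stages after the last requested one are never executed.
    """
    remaining = set(return_layers)
    out = OrderedDict()
    for name, fn in stages.items():
        if not remaining:
            break  # everything requested has been collected
        x = fn(x)
        if name in return_layers:
            out[return_layers[name]] = x
            remaining.discard(name)
    return out


# Toy "model": three stages applied one after another.
stages = OrderedDict([
    ("a", lambda v: v + 1),
    ("b", lambda v: v * 2),
    ("c", lambda v: v - 3),  # never runs, because only "a" and "b" are requested
])
features = collect_intermediate(stages, {"a": "0", "b": "1"}, 1)
print(features)
```

Here the outputs are stored under the new names `"0"` and `"1"`, the same renaming that `return_layers` performs for `layer1` to `layer4`.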

class FeaturePyramidNetwork(nn.Module):
    """
    Module that adds an FPN on top of a set of feature maps. This is based on
    `"Feature Pyramid Networks for Object Detection" <https://arxiv.org/abs/1612.03144>`_.

    The feature maps are currently supposed to be in increasing depth
    order.

    The input to the model is expected to be an OrderedDict[Tensor], containing
    the feature maps on top of which the FPN will be added.

    Arguments:
        in_channels_list (list[int]): number of channels for each feature map that
            is passed to the module
        out_channels (int): number of channels of the FPN representation
        extra_blocks (ExtraFPNBlock or None): if provided, extra operations will
            be performed. It is expected to take the FPN features and the names
            of the original features as input, and to return a new list of
            feature maps and their corresponding names
    """

    def __init__(self, in_channels_list, out_channels, extra_blocks=None):
        super(FeaturePyramidNetwork, self).__init__()
        # 1x1 convs that adjust the channel count of the ResNet feature maps (layer1, 2, 3, 4)
        self.inner_blocks = nn.ModuleList()
        # 3x3 convs applied to the adjusted feature maps to produce the prediction feature maps
        self.layer_blocks = nn.ModuleList()
        for in_channels in in_channels_list:
            if in_channels == 0:
                continue
            inner_block_module = nn.Conv2d(in_channels, out_channels, (1, 1))
            layer_block_module = nn.Conv2d(out_channels, out_channels, (3, 3), padding=(1, 1))
            self.inner_blocks.append(inner_block_module)
            self.layer_blocks.append(layer_block_module)

        # initialize parameters now to avoid modifying the initialization of top_blocks
        # (self.modules() is used because self.children() only yields the two
        # ModuleList containers, so the isinstance check would never match a Conv2d)
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_uniform_(m.weight, a=1)
                nn.init.constant_(m.bias, 0)

        self.extra_blocks = extra_blocks

    def get_result_from_inner_blocks(self, x, idx):
        # type: (Tensor, int) -> Tensor
        """
        This is equivalent to self.inner_blocks[idx](x),
        but torchscript doesn't support this yet
        """
        num_blocks = len(self.inner_blocks)
        if idx < 0:
            idx += num_blocks
        i = 0
        out = x
        for module in self.inner_blocks:
            if i == idx:
                out = module(x)
            i += 1
        return out

    def get_result_from_layer_blocks(self, x, idx):
        # type: (Tensor, int) -> Tensor
        """
        This is equivalent to self.layer_blocks[idx](x),
        but torchscript doesn't support this yet
        """
        num_blocks = len(self.layer_blocks)
        if idx < 0:
            idx += num_blocks
        i = 0
        out = x
        for module in self.layer_blocks:
            if i == idx:
                out = module(x)
            i += 1
        return out

    def forward(self, x):
        # type: (Dict[str, Tensor]) -> Dict[str, Tensor]
        """
        Computes the FPN for a set of feature maps.

        Arguments:
            x (OrderedDict[Tensor]): feature maps for each feature level.

        Returns:
            results (OrderedDict[Tensor]): feature maps after FPN layers.
                They are ordered from highest resolution first.
        """
        # unpack OrderedDict into two lists for easier handling
        names = list(x.keys())
        x = list(x.values())

        # Adjust the channel count of ResNet layer4 to the requested out_channels.
        # last_inner = self.inner_blocks[-1](x[-1])
        last_inner = self.get_result_from_inner_blocks(x[-1], -1)

        # results holds every prediction feature map.
        results = []
        # Pass the channel-adjusted layer4 feature map through its 3x3 conv
        # to get the corresponding prediction feature map.
        # results.append(self.layer_blocks[-1](last_inner))
        results.append(self.get_result_from_layer_blocks(last_inner, -1))

        # Walk the ResNet output feature maps in reverse order, together with
        # their inner_block and layer_block:
        # layer3 -> layer2 -> layer1 (layer4 has already been handled).
        # Non-TorchScript equivalent of the loop below:
        # for feature, inner_block, layer_block in zip(
        #         x[:-1][::-1], self.inner_blocks[:-1][::-1], self.layer_blocks[:-1][::-1]
        # ):
        #     if not inner_block:
        #         continue
        #     inner_lateral = inner_block(feature)
        #     feat_shape = inner_lateral.shape[-2:]
        #     inner_top_down = F.interpolate(last_inner, size=feat_shape, mode="nearest")
        #     last_inner = inner_lateral + inner_top_down
        #     results.insert(0, layer_block(last_inner))
        for idx in range(len(x) - 2, -1, -1):
            inner_lateral = self.get_result_from_inner_blocks(x[idx], idx)
            feat_shape = inner_lateral.shape[-2:]
            inner_top_down = F.interpolate(last_inner, size=feat_shape, mode="nearest")
            last_inner = inner_lateral + inner_top_down
            results.insert(0, self.get_result_from_layer_blocks(last_inner, idx))

        # Build prediction feature map 5 on top of the prediction feature map from layer4.
        if self.extra_blocks is not None:
            results, names = self.extra_blocks(results, names)

        # make it back an OrderedDict
        out = OrderedDict([(k, v) for k, v in zip(names, results)])

        return out
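
The top-down pathway in `FeaturePyramidNetwork.forward` can be sketched in plain Python. This is only an illustration under simplifying assumptions: 1-D lists of numbers stand in for feature maps, the 1x1 and 3x3 convolutions are omitted, and `upsample_nearest` and `top_down_merge` are hypothetical helper names that do not exist in the code above.

```python
def upsample_nearest(row, out_len):
    """Nearest-neighbour upsampling of a 1-D list to length `out_len`."""
    in_len = len(row)
    return [row[(i * in_len) // out_len] for i in range(out_len)]


def top_down_merge(laterals):
    """Merge lateral features from coarsest to finest.

    `laterals` is ordered from highest resolution to lowest, like the
    inputs to the FPN. The result keeps the same ordering.
    """
    merged = [laterals[-1]]  # the coarsest level is used as-is
    for lateral in reversed(laterals[:-1]):
        # Upsample the previous (coarser) result to the current resolution and add it.
        top_down = upsample_nearest(merged[0], len(lateral))
        merged.insert(0, [a + b for a, b in zip(lateral, top_down)])
    return merged


levels = [[1, 1, 1, 1], [10, 20], [100]]
print(top_down_merge(levels))
```

Each finer level ends up carrying information from every coarser level, which is why `last_inner` is updated on every loop iteration rather than recomputed from the backbone output.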

class LastLevelMaxPool(torch.nn.Module):
    """
    Applies a max_pool2d on top of the last feature map
    """

    def forward(self, x, names):
        # type: (List[Tensor], List[str]) -> Tuple[List[Tensor], List[str]]
        names.append("pool")
        x.append(F.max_pool2d(x[-1], 1, 2, 0))
        return x, names

class BackboneWithFPN(nn.Module):
    """
    Adds an FPN on top of a model.

    Internally, it uses the IntermediateLayerGetter defined above (the same idea as
    torchvision.models._utils.IntermediateLayerGetter) to
    extract a submodel that returns the feature maps specified in return_layers.
    The same limitations of IntermediateLayerGetter apply here.

    Arguments:
        backbone (nn.Module)
        return_layers (Dict[name, new_name]): a dict containing the names
            of the modules for which the activations will be returned as
            the key of the dict, and the value of the dict is the name
            of the returned activation (which the user can specify).
        in_channels_list (List[int]): number of channels for each feature map
            that is returned, in the order they are present in the OrderedDict
        out_channels (int): number of channels in the FPN.

    Attributes:
        out_channels (int): the number of channels in the FPN
    """

    def __init__(self, backbone, return_layers, in_channels_list, out_channels):
        """
        :param backbone: feature-extraction network
        :param return_layers: layers whose outputs are returned
        :param in_channels_list: input channel counts, one per returned layer
        :param out_channels: output channel count
        """
        super(BackboneWithFPN, self).__init__()

        # Wrap the backbone so that it returns an ordered dict of feature maps.
        self.body = IntermediateLayerGetter(backbone, return_layers=return_layers)
        self.fpn = FeaturePyramidNetwork(
            in_channels_list=in_channels_list,
            out_channels=out_channels,
            extra_blocks=LastLevelMaxPool(),
        )
        # super(BackboneWithFPN, self).__init__(OrderedDict(
        #     [("body", body), ("fpn", fpn)]))
        self.out_channels = out_channels

    def forward(self, x):
        x = self.body(x)
        x = self.fpn(x)
        return x

def resnet50_fpn_backbone():
    # FrozenBatchNorm2d behaves like BatchNorm2d, but its parameters cannot be updated.
    # norm_layer=misc.FrozenBatchNorm2d
    resnet_backbone = ResNet(Bottleneck, [3, 4, 6, 3],
                             include_top=False)

    # freeze layers
    # Freeze layer1 and all lower-level weights before it (basic, general-purpose features).
    for name, parameter in resnet_backbone.named_parameters():
        if 'layer2' not in name and 'layer3' not in name and 'layer4' not in name:
            # Frozen weights do not take part in training.
            parameter.requires_grad_(False)

    # Map backbone layer names to the names used for the returned feature maps.
    return_layers = {'layer1': '0', 'layer2': '1', 'layer3': '2', 'layer4': '3'}

    # in_channel is the channel count of layer4's output feature map = 2048
    in_channels_stage2 = resnet_backbone.in_channel // 8
    in_channels_list = [
        in_channels_stage2,      # layer1 out_channel=256
        in_channels_stage2 * 2,  # layer2 out_channel=512
        in_channels_stage2 * 4,  # layer3 out_channel=1024
        in_channels_stage2 * 8,  # layer4 out_channel=2048
    ]
    out_channels = 256
    return BackboneWithFPN(resnet_backbone, return_layers, in_channels_list, out_channels)
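
The channel list in `resnet50_fpn_backbone` follows from the stage widths and `Bottleneck.expansion`. The arithmetic can be checked with a short sketch; the constant names below are introduced only for this example and mirror the values used above.

```python
# Values copied from the ResNet-50 configuration above.
EXPANSION = 4                       # Bottleneck.expansion
STAGE_WIDTHS = [64, 128, 256, 512]  # `channel` passed to _make_layer for layer1..layer4

# Each stage outputs channel * expansion feature channels.
stage_outputs = [width * EXPANSION for width in STAGE_WIDTHS]

# After layer4 is built, ResNet.in_channel holds the last stage's output width.
final_in_channel = stage_outputs[-1]
in_channels_stage2 = final_in_channel // 8
in_channels_list = [in_channels_stage2 * m for m in (1, 2, 4, 8)]

print(in_channels_list)  # [256, 512, 1024, 2048]
```

Deriving the list from `in_channel // 8` gives the same numbers as multiplying each stage width by the expansion factor.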

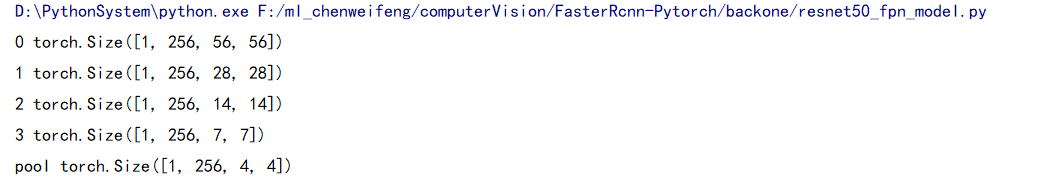

if __name__ == '__main__':
    net = resnet50_fpn_backbone()
    x = torch.randn(1, 3, 224, 224)
    for key, value in net(x).items():
        print(key, value.shape)
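
The spatial sizes that the script prints for a 224x224 input can be worked out by hand with the standard convolution/pooling output-size formula. The sketch below does that arithmetic in plain Python. The helper names `conv_out` and `fpn_output_sizes` are hypothetical, and the kernel, stride and padding values are read off the layers defined above.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a conv or pooling layer along one spatial axis (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def fpn_output_sizes(size):
    """Spatial size of each feature map returned by the ResNet-50 + FPN backbone."""
    size = conv_out(size, kernel=7, stride=2, padding=3)  # stem conv1
    size = conv_out(size, kernel=3, stride=2, padding=1)  # maxpool
    sizes = {"0": size}                                   # layer1 keeps the resolution
    for name in ("1", "2", "3"):                          # layer2..layer4 halve it
        size = conv_out(size, kernel=3, stride=2, padding=1)
        sizes[name] = size
    # LastLevelMaxPool: max_pool2d with kernel 1, stride 2, padding 0
    sizes["pool"] = conv_out(size, kernel=1, stride=2, padding=0)
    return sizes


print(fpn_output_sizes(224))
```

Every level has `out_channels = 256` channels after the FPN, so only the spatial size changes from one level to the next.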