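Introduction

Mem0 is a memory layer that lets AI applications store and retrieve information from past conversations. This tutorial shows how to integrate Mem0 into a LangChain application to build a travel-advisor AI with memory.

Environment setup

First, install the required dependencies: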

```bash
pip install langchain langchain-openai openai mem0ai
```

Basic configuration

Next, set up the basic configuration:

```python
from openai import OpenAI
from mem0 import Memory
from mem0.configs.base import MemoryConfig
from mem0.embeddings.configs import EmbedderConfig
from mem0.llms.configs import LlmConfig

# Centralized configuration
API_KEY = "your-api-key"
BASE_URL = "your-base-url"

# Configure Mem0: the LLM and the embedder both use an OpenAI-compatible endpoint
config = MemoryConfig(
    llm=LlmConfig(
        provider="openai",
        config={
            "model": "qwen-turbo",
            "api_key": API_KEY,
            "openai_base_url": BASE_URL,
        },
    ),
    embedder=EmbedderConfig(
        provider="openai",
        config={
            "embedding_dims": 1536,
            "model": "text-embedding-v2",
            "api_key": API_KEY,
            "openai_base_url": BASE_URL,
        },
    ),
)

mem0 = Memory(config=config)
```

Core components

1. Prompt template design

We use LangChain's ChatPromptTemplate to build the conversation template:

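```python
from langchain_core.messages import SystemMessage
from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder

prompt = ChatPromptTemplate.from_messages([
    SystemMessage(content="""You are a helpful travel agent AI..."""),
    MessagesPlaceholder(variable_name="context"),
    # A ("human", ...) tuple is templated; a HumanMessage instance would keep "{input}" literal.
    ("human", "{input}"),
])
```

2. Context retrieval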

The retrieve_context function retrieves relevant memories from Mem0:

```python
from typing import Dict, List

def retrieve_context(query: str, user_id: str) -> List[Dict]:
    # Search Mem0 for memories related to the query, scoped to this user
    memories = mem0.search(query, user_id=user_id)
    serialized_memories = ' '.join([mem["memory"] for mem in memories["results"]])
    return [
        {
            "role": "system",
            "content": f"Relevant information: {serialized_memories}"
        },
        {
            "role": "user",
            "content": query
        }
    ]
```

3. Response generation

The generate_response function produces the reply through a LangChain chain:

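```python
def generate_response(input: str, context: List[Dict]) -> str:
    # Pipe the prompt into the model, then invoke the chain with the template variables
    chain = prompt | llm
    response = chain.invoke({
        "context": context,
        "input": input
    })
    return response.content
```

4. Memory storage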

The save_interaction function saves the conversation to Mem0:

```python
def save_interaction(user_id: str, user_input: str, assistant_response: str):
    # Store the user/assistant exchange so later searches can recall it
    interaction = [
        {"role": "user", "content": user_input},
        {"role": "assistant", "content": assistant_response}
    ]
    mem0.add(interaction, user_id=user_id)
```

Workflow

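- Memory retrieval: when the user sends a message, the system calls Mem0's search method to retrieve relevant past conversations.
- Context integration: the retrieved memories are merged into the prompt template so the AI can take the conversation history into account.
- Response generation: a LangChain chain produces the reply.
- Memory storage: the new exchange is stored in Mem0 for future use.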

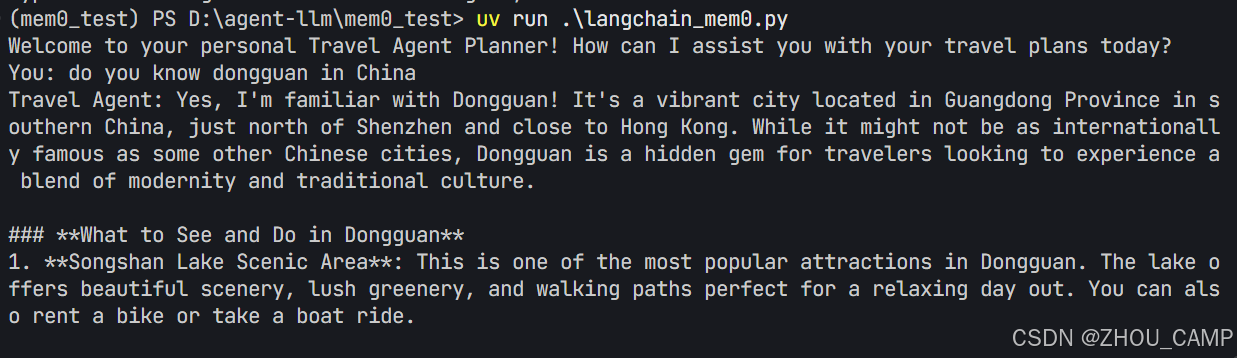
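The four workflow steps can be sketched without any external service. The following is a minimal illustration, not Mem0's implementation: `InMemoryStore`, `run_turn`, and the keyword-overlap search are hypothetical stand-ins chosen so the flow can run offline, and `llm` is assumed to be any callable that maps a prompt string to a reply.

```python
from typing import Callable, Dict, List


class InMemoryStore:
    """Hypothetical stand-in for Mem0: keeps plain strings per user."""

    def __init__(self) -> None:
        self._data: Dict[str, List[str]] = {}

    def search(self, query: str, user_id: str) -> List[str]:
        # Naive keyword overlap; Mem0 itself searches by embedding similarity.
        words = set(query.lower().split())
        return [m for m in self._data.get(user_id, [])
                if words & set(m.lower().split())]

    def add(self, text: str, user_id: str) -> None:
        self._data.setdefault(user_id, []).append(text)


def run_turn(store: InMemoryStore, llm: Callable[[str], str],
             user_input: str, user_id: str) -> str:
    # 1. Memory retrieval
    memories = store.search(user_input, user_id)
    # 2. Context integration
    prompt = f"Relevant information: {' '.join(memories)}\nUser: {user_input}"
    # 3. Response generation
    reply = llm(prompt)
    # 4. Memory storage
    store.add(f"{user_input} (assistant replied: {reply})", user_id)
    return reply
```

Replacing `InMemoryStore` with the `mem0` object and `llm` with the LangChain chain gives roughly the `chat_turn` function in the complete code.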

Usage example

```python
if __name__ == "__main__":
    print("Welcome to your personal Travel Agent Planner!")
    user_id = "john"
    while True:
        user_input = input("You: ")
        # Typing quit, exit, or bye ends the session
        if user_input.lower() in ['quit', 'exit', 'bye']:
            break
        response = chat_turn(user_input, user_id)
        print("Travel Agent:", response)
```

Key features

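- User isolation: user_id keeps each user's data separate
- Semantic search: Mem0 uses vector embeddings to search by semantic similarity
- Context awareness: the AI can understand and use information from past conversations
- Easy to extend: integrates readily into existing LangChain applications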
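To make the first two features concrete, here is a small sketch of per-user filtering combined with similarity ranking. It is an illustration under simplifying assumptions, not how Mem0 is implemented: `embed` uses word counts in place of a learned embedding model, and `records`, `embed`, `cosine`, and `search` are hypothetical names.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real embedder returns dense vectors."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(records: List[Dict[str, str]], query: str, user_id: str,
           top_k: int = 2) -> List[Tuple[float, str]]:
    q = embed(query)
    # User isolation: only this user's records are ever scored.
    scored = [(cosine(q, embed(r["memory"])), r["memory"])
              for r in records if r["user_id"] == user_id]
    # Drop records with no similarity, then rank the rest, best first.
    scored = [s for s in scored if s[0] > 0]
    return sorted(scored, reverse=True)[:top_k]


records = [
    {"user_id": "john", "memory": "prefers window seats on long flights"},
    {"user_id": "john", "memory": "allergic to shellfish"},
    {"user_id": "mary", "memory": "prefers aisle seats on short flights"},
]

for score, memory in search(records, "which seats on flights", "john"):
    print(f"{score:.2f}  {memory}")
```

Because the filter on `user_id` runs before scoring, a query made for one user cannot surface another user's memories, however similar the text.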

Complete code and results

```python
from typing import Dict, List

from langchain_core.messages import SystemMessage
from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
from langchain_openai import ChatOpenAI
from mem0 import Memory
from mem0.configs.base import MemoryConfig
from mem0.embeddings.configs import EmbedderConfig
from mem0.llms.configs import LlmConfig
from openai import OpenAI

# Centralized configuration
API_KEY = "your api key"
BASE_URL = "https://dashscope.aliyuncs.com/compatible-mode/v1"

# OpenAI client configuration (not referenced by the functions below)
openai_client = OpenAI(
    api_key=API_KEY,
    base_url=BASE_URL,
)

# LangChain LLM configuration
llm = ChatOpenAI(
    temperature=0,
    openai_api_key=API_KEY,
    openai_api_base=BASE_URL,
    model="qwen-turbo"
)

# Mem0 configuration
config = MemoryConfig(
    llm=LlmConfig(
        provider="openai",
        config={
            "model": "qwen-turbo",
            "api_key": API_KEY,
            "openai_base_url": BASE_URL,
        },
    ),
    embedder=EmbedderConfig(
        provider="openai",
        config={
            "embedding_dims": 1536,
            "model": "text-embedding-v2",
            "api_key": API_KEY,
            "openai_base_url": BASE_URL,
        },
    ),
)

mem0 = Memory(config=config)

prompt = ChatPromptTemplate.from_messages([
    SystemMessage(content="""You are a helpful travel agent AI. Use the provided context to personalize your responses and remember user preferences and past interactions.
Provide travel recommendations, itinerary suggestions, and answer questions about destinations.
If you don't have specific information, you can make general suggestions based on common travel knowledge."""),
    MessagesPlaceholder(variable_name="context"),
    # A ("human", ...) tuple is templated; a HumanMessage instance would keep "{input}" literal.
    ("human", "{input}"),
])


def retrieve_context(query: str, user_id: str) -> List[Dict]:
    """Retrieve relevant context from Mem0"""
    memories = mem0.search(query, user_id=user_id)
    serialized_memories = ' '.join([mem["memory"] for mem in memories["results"]])
    context = [
        {
            "role": "system",
            "content": f"Relevant information: {serialized_memories}"
        },
        {
            "role": "user",
            "content": query
        }
    ]
    return context


def generate_response(input: str, context: List[Dict]) -> str:
    """Generate a response using the language model"""
    chain = prompt | llm
    response = chain.invoke({
        "context": context,
        "input": input
    })
    return response.content


def save_interaction(user_id: str, user_input: str, assistant_response: str):
    """Save the interaction to Mem0"""
    interaction = [
        {
            "role": "user",
            "content": user_input
        },
        {
            "role": "assistant",
            "content": assistant_response
        }
    ]
    mem0.add(interaction, user_id=user_id)


def chat_turn(user_input: str, user_id: str) -> str:
    # Retrieve context
    context = retrieve_context(user_input, user_id)
    # Generate response
    response = generate_response(user_input, context)
    # Save interaction
    save_interaction(user_id, user_input, response)
    return response


if __name__ == "__main__":
    print("Welcome to your personal Travel Agent Planner! How can I assist you with your travel plans today?")
    user_id = "john"
    while True:
        user_input = input("You: ")
        if user_input.lower() in ['quit', 'exit', 'bye']:
            print("Travel Agent: Thank you for using our travel planning service. Have a great trip!")
            break
        response = chat_turn(user_input, user_id)
        print(f"Travel Agent: {response}")
```
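The retrieval code in this tutorial reads `memories["results"]` directly. As a defensive variant, the sketch below accepts either a dict with a `results` key or a bare list, on the assumption that the return shape of `mem0.search` may differ between Mem0 versions (worth verifying against the installed release). `extract_memories` is a hypothetical helper, not part of Mem0.

```python
from typing import Any, List


def extract_memories(search_result: Any) -> List[str]:
    """Return memory strings from a {"results": [...]} dict or a bare list."""
    if isinstance(search_result, dict):
        items = search_result.get("results", [])
    else:
        # Assumed alternative shape: a plain list of memory dicts (or None).
        items = search_result or []
    # Skip entries that do not carry a "memory" field instead of raising.
    return [item["memory"] for item in items
            if isinstance(item, dict) and "memory" in item]
```

Inside `retrieve_context`, this would replace the list comprehension: `' '.join(extract_memories(memories))`.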