import torch

import torchvision

from torch import nn

from PIL import Image

import matplotlib.pyplot as plt

def preprocess(img, image_shape):
    transforms = torchvision.transforms.Compose([
        torchvision.transforms.Resize(image_shape),
        torchvision.transforms.ToTensor(),
        torchvision.transforms.Normalize(mean=rgb_mean, std=rgb_std)])
    return transforms(img).unsqueeze(0)

def postprocess(img):
    img = img[0].to(rgb_std.device)
    img = torch.clamp(img.permute(1, 2, 0) * rgb_std + rgb_mean, 0, 1)
    return torchvision.transforms.ToPILImage()(img.permute(2, 0, 1))

def compute_loss(X, contents_Y_hat, styles_Y_hat, contents_Y, styles_Y_gram):
    # Compute the content loss, style loss, and total variation loss separately
    contents_l = [content_loss(Y_hat, Y) * content_weight for Y_hat, Y in zip(
        contents_Y_hat, contents_Y)]
    styles_l = [style_loss(Y_hat, Y) * style_weight for Y_hat, Y in zip(
        styles_Y_hat, styles_Y_gram)]
    tv_l = tv_loss(X) * tv_weight
    # Sum all the losses. Note that 10 * styles_l repeats the list ten times,
    # so every style term is counted 10x in the total.
    l = sum(10 * styles_l + contents_l + [tv_l])
    return contents_l, styles_l, tv_l, l

############################################################################################################

def content_loss(Y_hat, Y):
    # Detach the target from the dynamically built gradient graph:
    # it is a fixed value, not a variable.
    return torch.square(Y_hat - Y.detach()).mean()

def gram(X):
    num_channels, n = X.shape[1], X.numel() // X.shape[1]
    X = X.reshape((num_channels, n))
    return torch.matmul(X, X.T) / (num_channels * n)
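
# --- Illustrative sketch, not part of the original script ---
# gram() flattens each channel into a row vector and takes all pairwise dot
# products, scaled by (num_channels * n). The pure-Python helper below
# (gram_by_hand is a hypothetical name) repeats that arithmetic on nested
# lists so the result can be checked by hand. It assumes a batch of one image.
def gram_by_hand(rows):
    """rows: one flattened list of activations per channel."""
    c, n = len(rows), len(rows[0])
    return [[sum(a * b for a, b in zip(ri, rj)) / (c * n) for rj in rows]
            for ri in rows]

# Two channels with four activations each:
# gram_by_hand([[0, 1, 2, 3], [4, 5, 6, 7]]) -> [[1.75, 4.75], [4.75, 15.75]]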

def style_loss(Y_hat, gram_Y):
    return torch.square(gram(Y_hat) - gram_Y.detach()).mean()

def tv_loss(Y_hat):
    return 0.5 * (torch.abs(Y_hat[:, :, 1:, :] - Y_hat[:, :, :-1, :]).mean() +
                  torch.abs(Y_hat[:, :, :, 1:] - Y_hat[:, :, :, :-1]).mean())
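
# --- Illustrative sketch, not part of the original script ---
# tv_loss() averages the absolute differences between vertically adjacent
# pixels and between horizontally adjacent pixels, then halves the sum.
# The pure-Python helper below (tv_by_hand is a hypothetical name) does the
# same for a single channel given as a list of rows. It assumes a grid of at
# least 2 x 2 values.
def tv_by_hand(grid):
    """grid: list of equal-length rows (one channel of one image)."""
    vert = [abs(grid[i + 1][j] - grid[i][j])
            for i in range(len(grid) - 1) for j in range(len(grid[0]))]
    horiz = [abs(row[j + 1] - row[j])
             for row in grid for j in range(len(row) - 1)]
    return 0.5 * (sum(vert) / len(vert) + sum(horiz) / len(horiz))

# tv_by_hand([[0, 1], [3, 5]]) -> 2.5   (vertical mean 3.5, horizontal mean 1.5)
# A constant image has no neighbor differences, so its loss is 0.0.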

############################################################################################################

class SynthesizedImage(nn.Module):
    def __init__(self, img_shape, **kwargs):
        super(SynthesizedImage, self).__init__(**kwargs)
        self.weight = nn.Parameter(torch.rand(*img_shape))

    def forward(self):
        return self.weight

def get_inits(X, device, lr, styles_Y):
    gen_img = SynthesizedImage(X.shape).to(device)
    gen_img.weight.data.copy_(X.data)
    trainer = torch.optim.Adam(gen_img.parameters(), lr=lr)
    styles_Y_gram = [gram(Y) for Y in styles_Y]
    return gen_img(), styles_Y_gram, trainer

############################################################################################################

def train(X, contents_Y, styles_Y, device, lr, num_epochs, lr_decay_epoch):

X, styles_Y_gram, trainer = get_inits(X, device, lr, styles_Y)

scheduler = torch.optim.lr_scheduler.StepLR(trainer, lr_decay_epoch, 0.8)

content_loss_list = []

style_loss_list = []

tv_loss_list = []

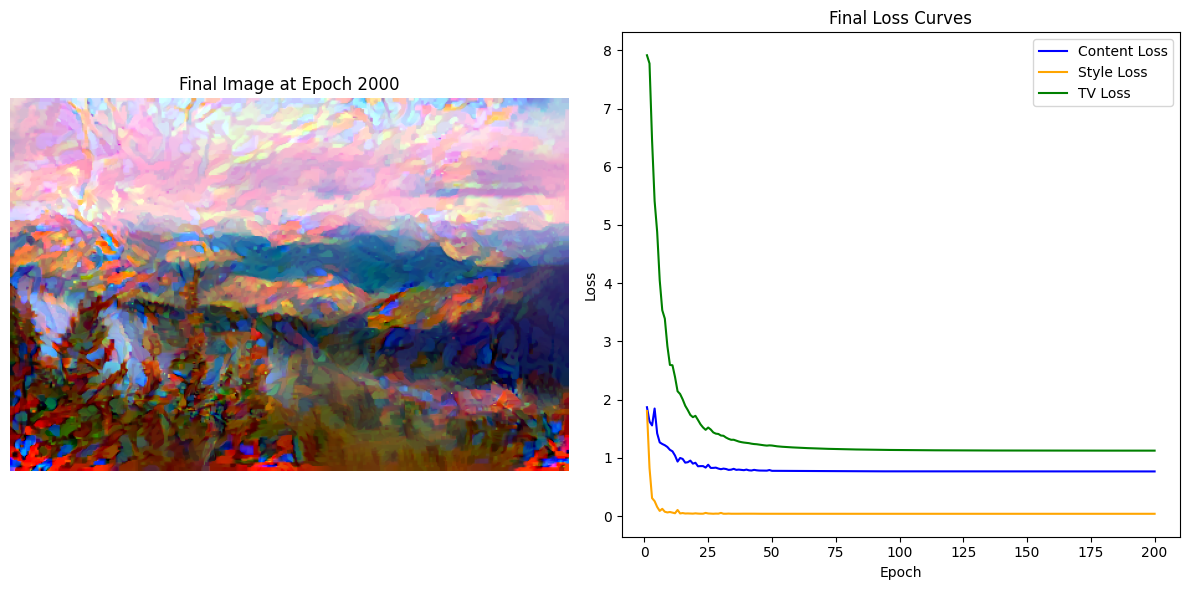

fig, (ax_img, ax_loss) = plt.subplots(1, 2, figsize=(12, 6))

for epoch in range(num_epochs):

trainer.zero_grad()

contents_Y_hat, styles_Y_hat = extract_features(X, content_layers, style_layers)

contents_l, styles_l, tv_l, l = compute_loss(X, contents_Y_hat, styles_Y_hat, contents_Y, styles_Y_gram)

l.backward()

trainer.step()

scheduler.step()

if (epoch + 1) % 10 == 0:

content_loss_list.append(float(sum(contents_l)))

style_loss_list.append(float(sum(styles_l)))

tv_loss_list.append(float(tv_l))

ax_img.clear()

ax_img.imshow(postprocess(X)) # 显示生成的图像

ax_img.axis('off') # 关闭坐标轴

ax_img.set_title(f'Final Image at Epoch {num_epochs}')

# 显示损失曲线

ax_loss.clear() # 清空损失曲线

ax_loss.plot(range(1, len(content_loss_list) + 1), content_loss_list, label='Content Loss', color='blue')

ax_loss.plot(range(1, len(style_loss_list) + 1), style_loss_list, label='Style Loss', color='orange')

ax_loss.plot(range(1, len(tv_loss_list) + 1), tv_loss_list, label='TV Loss', color='green')

ax_loss.set_xlabel('Epoch')

ax_loss.set_ylabel('Loss')

ax_loss.legend()

ax_loss.set_title('Final Loss Curves')

plt.tight_layout()

plt.show()

return X
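
# --- Illustrative sketch, not part of the original script ---
# StepLR(trainer, lr_decay_epoch, 0.8) multiplies the learning rate by 0.8
# once every lr_decay_epoch calls to scheduler.step(). The helper below
# (step_lr_at is a hypothetical name) evaluates the equivalent closed form
# base_lr * gamma ** (epoch // step_size). Its defaults assume the values
# passed to train(...) at the bottom of this script: lr=0.3, lr_decay_epoch=50.
def step_lr_at(epoch, base_lr=0.3, step_size=50, gamma=0.8):
    """Learning rate in effect after `epoch` scheduler steps."""
    return base_lr * gamma ** (epoch // step_size)

# Under those assumed settings the rate stays at 0.3 for the first 50 epochs,
# drops to about 0.24 for the next 50, and after 2000 epochs (40 decays) has
# shrunk to roughly 4e-5, so late updates to the image are very small.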

def extract_features(X, content_layers, style_layers):
    contents = []
    styles = []
    for i in range(len(net)):
        X = net[i](X)
        if i in style_layers:
            styles.append(X)
        if i in content_layers:
            contents.append(X)
    return contents, styles

def get_contents(image_shape, device):
    content_X = preprocess(content_img, image_shape).to(device)
    contents_Y, _ = extract_features(content_X, content_layers, style_layers)
    return content_X, contents_Y

def get_styles(image_shape, device):
    style_X = preprocess(style_img, image_shape).to(device)
    _, styles_Y = extract_features(style_X, content_layers, style_layers)
    return style_X, styles_Y

############################################################################################################

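# ImageNet channel statistics used to normalize inputs for VGG
rgb_mean = torch.tensor([0.485, 0.456, 0.406])
rgb_std = torch.tensor([0.229, 0.224, 0.225])
# torchvision >= 0.13 selects pretrained weights with `weights=`;
# on older versions use vgg19(pretrained=True) instead.
pretrained_net = torchvision.models.vgg19(
    weights=torchvision.models.VGG19_Weights.IMAGENET1K_V1)
# Pick intermediate layers to serve as the style layers and the content layer
style_layers, content_layers = [0, 5, 10, 19, 28], [25]
# Keep only the layers up to the deepest one that is actually needed
net = nn.Sequential(*[pretrained_net.features[i] for i in range(max(content_layers + style_layers) + 1)])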

############################################################################################################

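content_weight, style_weight, tv_weight = 1, 1e3, 10
image_shape = (300, 450)
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
net = net.to(device)
# The original snippet never defines content_img and style_img.
# The file names below are placeholders (assumption): point them at your own images.
content_img = Image.open('content.jpg').convert('RGB')
style_img = Image.open('style.jpg').convert('RGB')
content_X, contents_Y = get_contents(image_shape, device)
_, styles_Y = get_styles(image_shape, device)
output = train(content_X, contents_Y, styles_Y, device, 0.3, 2000, 50)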

############################################################################################################