[Abstract]

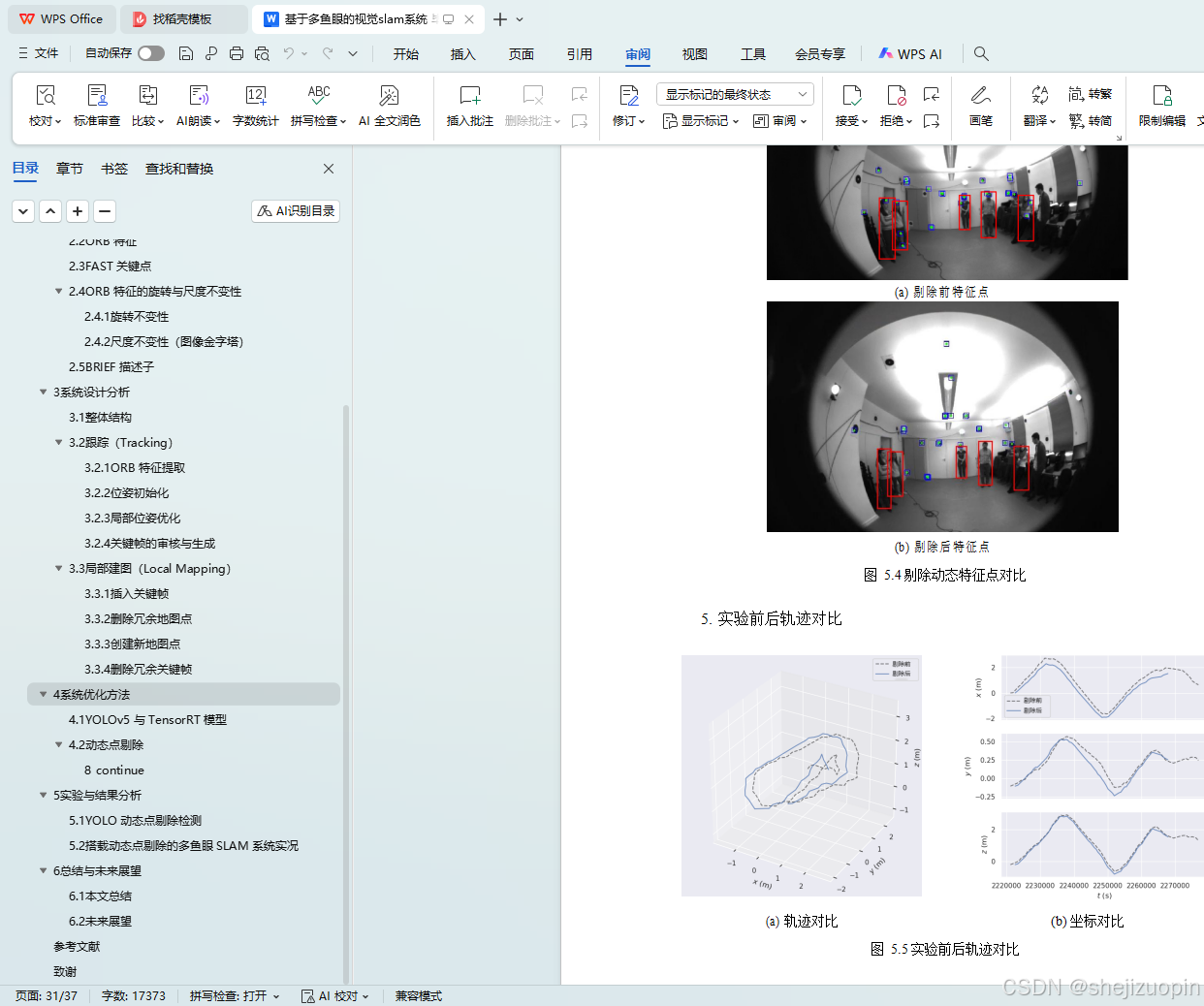

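Simultaneous Localization and Mapping (SLAM) is currently the mainstream technique for autonomous localization of intelligent agents. It continuously acquires information about the environment and estimates the system's pose in real time, yielding a reasonably accurate environment map and motion trajectory. With advances in computer vision and robotics, visual SLAM has been widely applied in autonomous navigation, intelligent transportation, and other fields. However, a SLAM system built from multiple fisheye cameras is strongly affected by dynamic surroundings, and its feature point extraction and matching still leave considerable room for improvement. This thesis adds a YOLO object detection model that screens for dynamic objects to an existing multi-fisheye visual SLAM system. While the fisheye cameras run simultaneously, dynamic points are removed from the images captured by each camera: points on people who keep moving are deleted, so that the feature points the system extracts come from the static environment as far as possible. This speeds up feature point matching and in turn improves the stability of the system. Experiments verify the localization and mapping performance of the system in an indoor environment; the feature point screening for each camera's images is displayed in real time, and the resulting trajectory is compared with the original trajectory. The results show that the system performs well. Judging from the current state of research, multi-fisheye visual SLAM systems can still be improved in many respects, for example by using the GPU to accelerate feature point extraction and matching or by adopting a stricter keyframe selection strategy. These will be the main directions for future optimization of this system.

Keywords: visual SLAM system, multi-fisheye camera system, YOLO object detection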

ABSTRACT

Simultaneous Localization and Mapping (SLAM) is the mainstream technology for autonomous positioning of intelligent agents. It continuously acquires environmental information and estimates the pose of the system in real time, producing reasonably accurate environment maps and motion trajectories. With the development of computer vision and robotics, visual SLAM has been widely used in autonomous navigation, intelligent transportation, and other fields. However, a SLAM system composed of multiple fisheye cameras is strongly affected by dynamic surroundings, and there is still much room for improvement in feature point extraction and matching. In this thesis, a YOLO object detection model for screening dynamic objects is added to an existing multi-fisheye camera visual SLAM system. While the fisheye cameras operate simultaneously, dynamic points are removed from the images captured by each camera: points on constantly moving people are deleted, so that the feature points extracted by the system come from the static environment as far as possible, thereby improving the accuracy and robustness of the system. The localization and mapping performance of the system in an indoor environment is verified by experiments. The feature point screening for each camera's images is displayed in real time, and the resulting trajectory is compared with the original trajectory. The results show that the system performs well. Judging from the current state of research, visual SLAM systems based on multiple fisheye cameras can still be improved in many respects, such as using the GPU to accelerate feature point extraction and matching and adopting stricter keyframe selection strategies. These will be the main directions for future optimization of the system.

Keywords: visual SLAM system, multi-fisheye camera system, YOLO object detection
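The dynamic point removal described in the abstract can be illustrated with a minimal sketch. It assumes that the detector reports each person as an axis-aligned box in pixel coordinates and that feature points are plain (x, y) pairs; the names `remove_dynamic_points` and `remove_per_camera` are hypothetical and are not taken from the system itself, whose actual implementation may differ.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]               # (x, y) pixel coordinates of a feature point
Box = Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max) of a detected person


def inside(point: Point, box: Box) -> bool:
    """Return True if the point lies within the box, borders included."""
    x, y = point
    x_min, y_min, x_max, y_max = box
    return x_min <= x <= x_max and y_min <= y <= y_max


def remove_dynamic_points(keypoints: Sequence[Point],
                          person_boxes: Sequence[Box]) -> List[Point]:
    """Keep only the feature points that fall outside every person box.

    Assumption: any point inside a person box is treated as dynamic.
    """
    return [p for p in keypoints
            if not any(inside(p, b) for b in person_boxes)]


def remove_per_camera(keypoints_per_camera: Sequence[Sequence[Point]],
                      boxes_per_camera: Sequence[Sequence[Box]]) -> List[List[Point]]:
    """Apply the same filter independently to each camera's image."""
    return [remove_dynamic_points(kps, boxes)
            for kps, boxes in zip(keypoints_per_camera, boxes_per_camera)]


if __name__ == "__main__":
    kps = [(10.0, 10.0), (50.0, 60.0), (200.0, 120.0)]
    boxes = [(40.0, 40.0, 100.0, 100.0)]
    # The middle point lies inside the person box and is dropped.
    print(remove_dynamic_points(kps, boxes))
```

Filtering by box is deliberately coarse: static background points that happen to fall inside a person box are discarded as well, which trades a few usable features for a simpler test.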

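Contents

1 Introduction

1.1 Background and Significance

1.2 Research Status at Home and Abroad and Related Work

1.3 Thesis Structure and Chapter Organization

2 Background Knowledge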

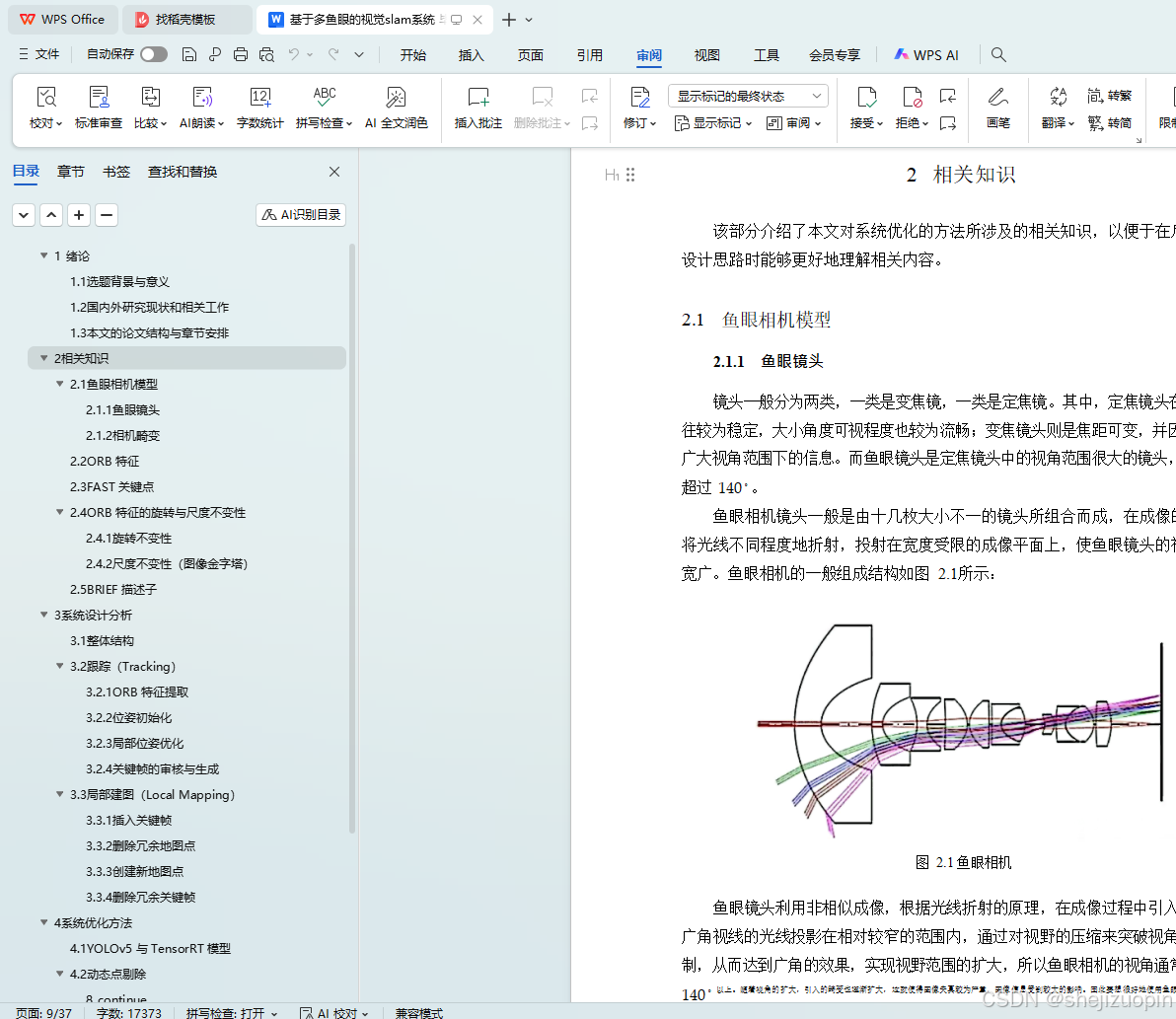

2.1 Fisheye Camera Model

2.2 ORB Features

2.3 FAST Keypoints

2.4 Rotation and Scale Invariance of ORB Features

2.5 BRIEF Descriptor

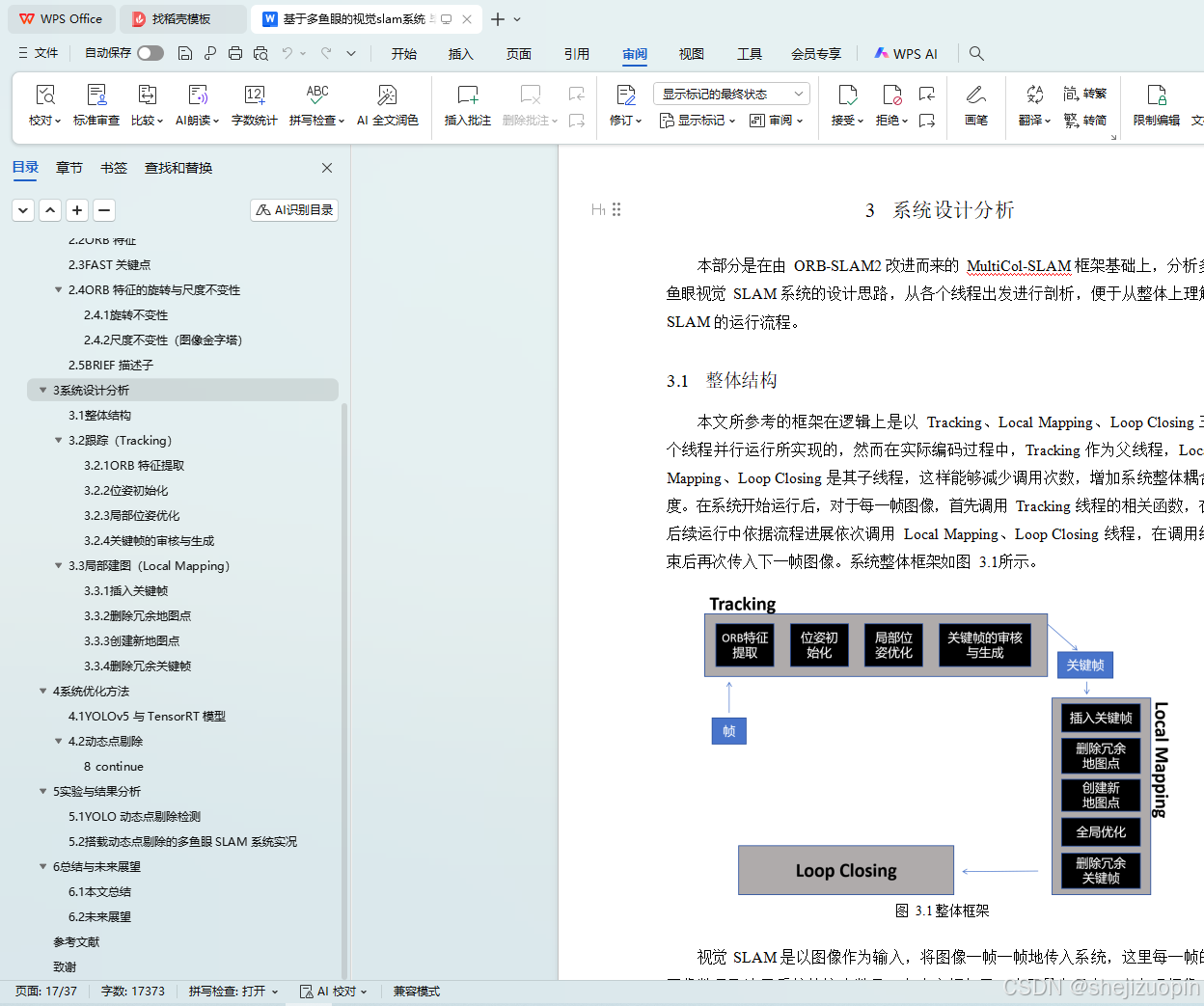

3 System Design and Analysis

3.1 Overall Structure

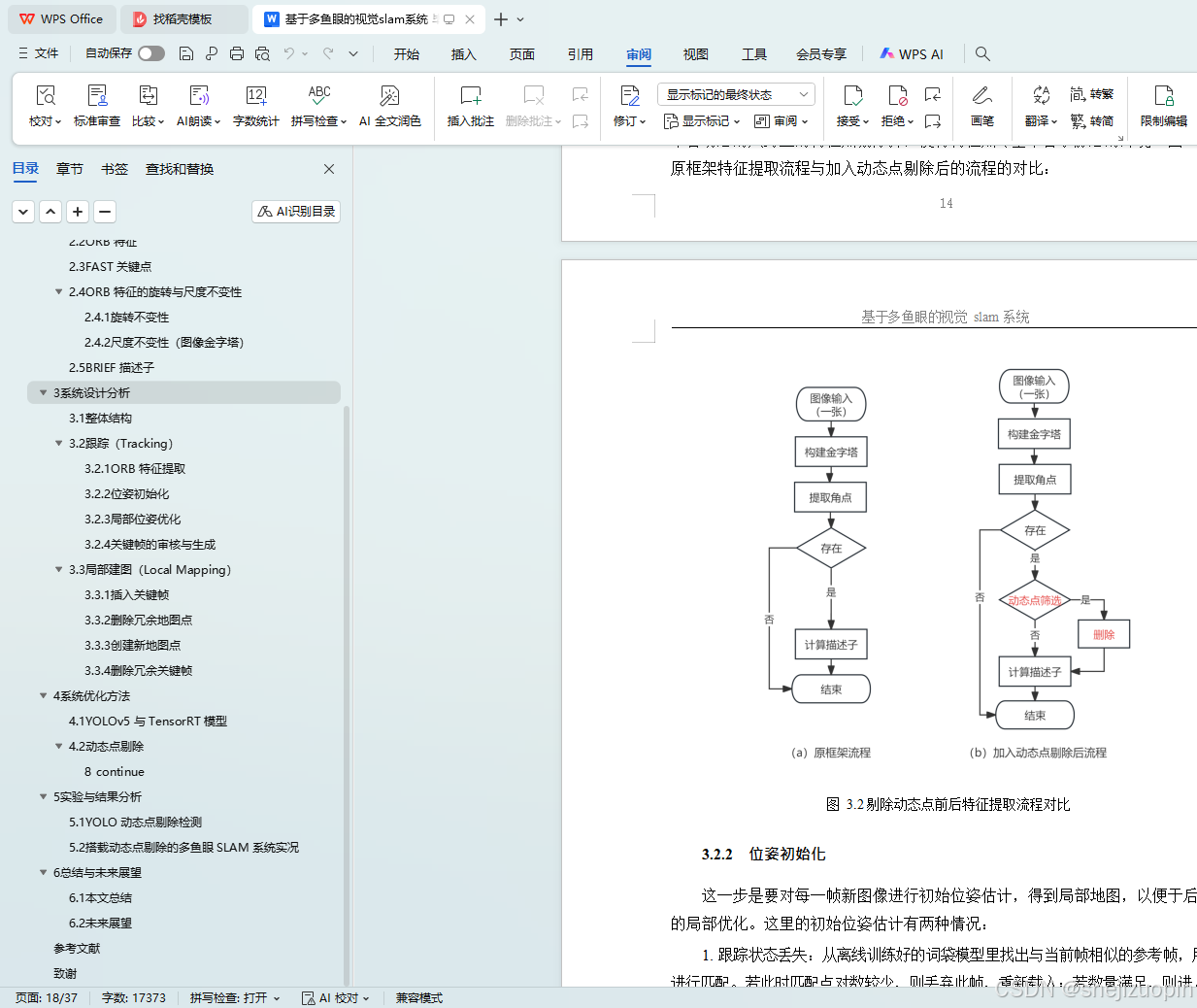

3.2 Tracking

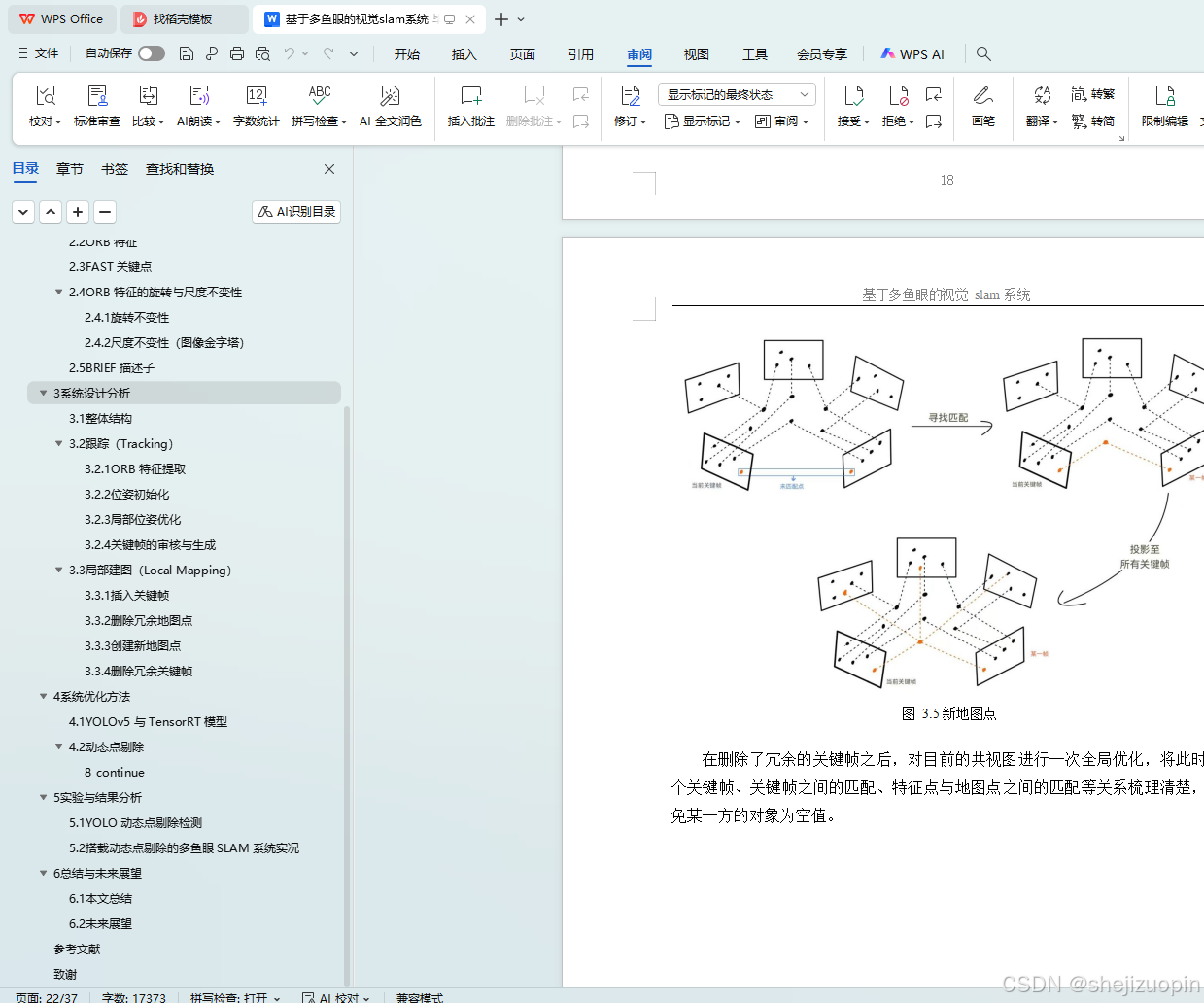

3.3 Local Mapping

4 System Optimization Methods

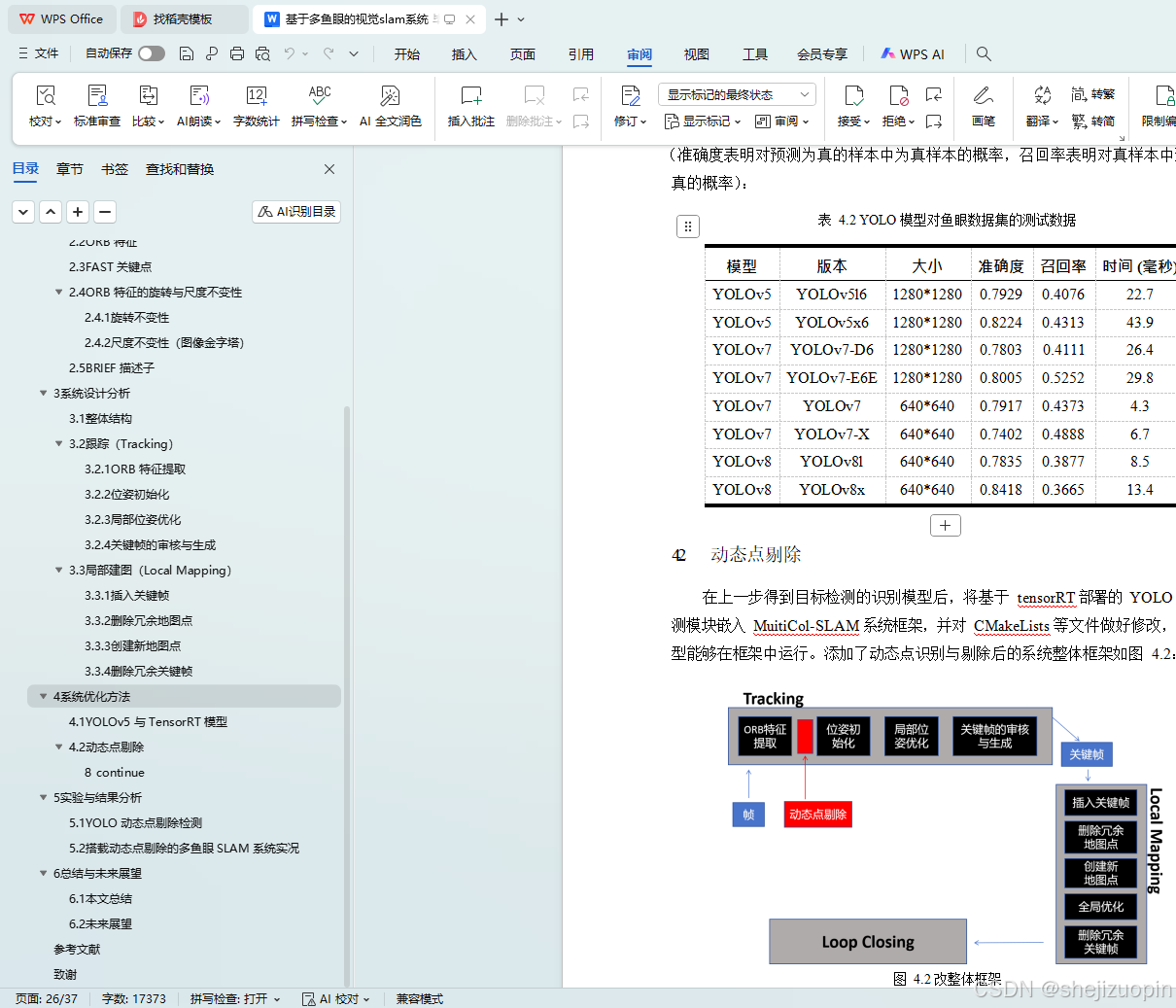

4.1 YOLOv5 and the TensorRT Model

4.2 Dynamic Point Removal

5 Experiments and Result Analysis

5.1 YOLO Dynamic Point Removal Detection

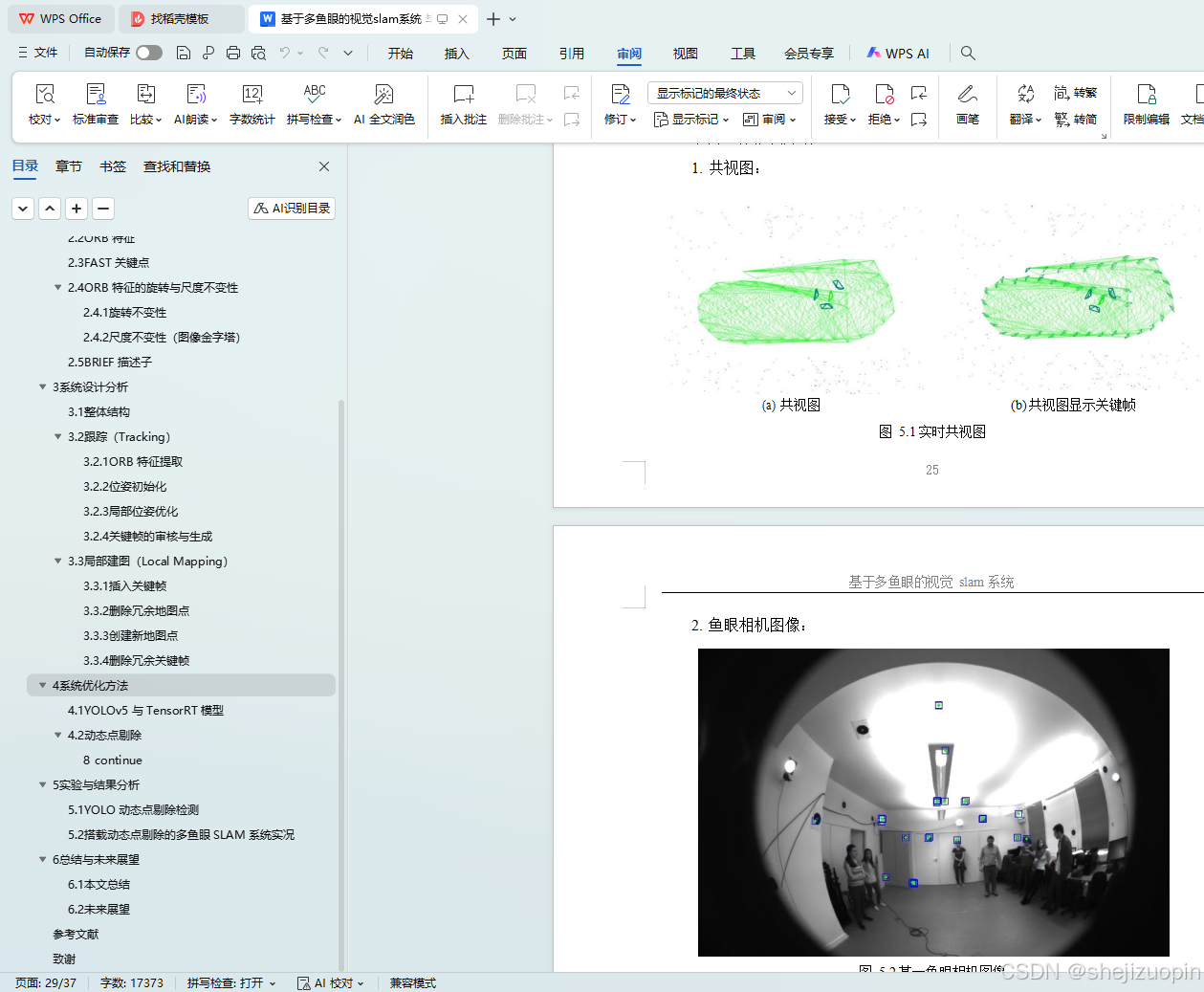

5.2 The Multi-Fisheye SLAM System with Dynamic Point Removal in Operation

6 Conclusion and Future Work

6.1 Summary

6.2 Future Work

References

Acknowledgements