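Abstract: This article introduces word embedding in TensorFlow, focusing on how to implement the Word2vec model. Word embedding maps discrete words to continuous vectors and is an important step in natural language processing. Using the skip-gram model, the code shows how to generate training pairs, build an embedding layer, compute the NCE loss, and visualize the results in TensorBoard. The experiment trains an embedding model on digit words and prints the words most similar to "one" together with their cosine similarities. The walkthrough covers data preprocessing, model construction, training, and analysis of the results, and is meant as a practical reference for understanding word embedding.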

TensorFlow - Word Embedding

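Word embedding is the mapping of discrete objects, such as words, to vectors of real numbers. It is an important step in preparing input data for machine learning. TensorFlow provides a set of standard functions that convert discrete input objects into vectors a model can be trained on.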

Example word embeddings look like this:

blue: (0.01359, 0.00075997, 0.24608, ..., -0.2524, 1.0048, 0.06259)

blues: (0.01396, 0.11887, -0.48963, ..., 0.033483, -0.10007, 0.1158)

orange: (-0.24776, -0.12359, 0.20986, ..., 0.079717, 0.23865, -0.014213)

oranges: (-0.35609, 0.21854, 0.080944, ..., -0.35413, 0.38511, -0.070976)
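To make the mapping concrete, here is a minimal sketch of an embedding as a lookup table, written in plain Python. The vocabulary, the dimension of 4, and the random initial values are assumptions chosen for illustration; they are not the vectors listed above.

```python
import random

# Hypothetical toy vocabulary; the words and the dimension are illustrative only.
vocab = ["blue", "blues", "orange", "oranges"]
word_to_index = {word: i for i, word in enumerate(vocab)}
embedding_dim = 4

# One row of real numbers per word, randomly initialised between -1 and 1.
rng = random.Random(0)
embedding_table = [
    [rng.uniform(-1.0, 1.0) for _ in range(embedding_dim)]
    for _ in vocab
]

def embed(words):
    """Map a list of words to their embedding vectors by row lookup."""
    return [embedding_table[word_to_index[w]] for w in words]

vectors = embed(["orange", "blue"])
print(len(vectors), len(vectors[0]))
```

Training does not change this lookup mechanism; it only adjusts the numbers stored in the rows so that related words end up with similar vectors.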

Word2vec Model

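Word2vec is one of the most widely used approaches to unsupervised word embedding. It is trained here with skip-grams: given an input word, the model learns to predict the words that appear in its context.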
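As a rough illustration of what skip-gram training data looks like, the sketch below turns a token list into (target, context) pairs. The function name `skip_gram_pairs` and the `window` parameter are assumptions made for this example. Unlike the listing later in this section, which only uses the middle word of each three-word sentence as the target, this version also emits pairs for words at the edges.

```python
def skip_gram_pairs(tokens, window=1):
    """Return (target, context) pairs for each token and its neighbours within `window`."""
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((target, tokens[j]))
    return pairs

pairs = skip_gram_pairs(["one", "seven", "three"], window=1)
print(pairs)
# prints [('one', 'seven'), ('seven', 'one'), ('seven', 'three'), ('three', 'seven')]
```

Each pair is one training example: the first element is fed to the model and the second is the word it should learn to predict.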

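TensorFlow offers several ways to implement this model, with increasing levels of sophistication and optimization, including multithreading and higher-level abstractions.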

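```python
import os
import math
import numpy as np
import tensorflow as tf  # TensorFlow 1.x API (tf.placeholder, tf.Session, tf.contrib)
from tensorflow.contrib.tensorboard.plugins import projector

batch_size = 64          # training pairs per step
embedding_dimension = 5  # length of each word vector
negative_samples = 8     # noise words sampled per batch for the NCE loss
LOG_DIR = "logs/word2vec_intro"
```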

```python
digit_to_word_map = {
    1: "One", 2: "Two", 3: "Three", 4: "Four", 5: "Five",
    6: "Six", 7: "Seven", 8: "Eight", 9: "Nine"}
sentences = []

# Generate two kinds of sentences: sequences of odd digits and sequences of even digits
for i in range(10000):
    rand_odd_ints = np.random.choice(range(1, 10, 2), 3)
    sentences.append(" ".join([digit_to_word_map[r] for r in rand_odd_ints]))
    rand_even_ints = np.random.choice(range(2, 10, 2), 3)
    sentences.append(" ".join([digit_to_word_map[r] for r in rand_even_ints]))
```

```python
# Map each word to an integer index
word2index_map = {}
index = 0
for sent in sentences:
    for word in sent.lower().split():
        if word not in word2index_map:
            word2index_map[word] = index
            index += 1

# Reverse mapping, from index back to word
index2word_map = {index: word for word, index in word2index_map.items()}
vocabulary_size = len(index2word_map)
```

```python
# Generate skip-gram pairs of the form [target word, context word]
skip_gram_pairs = []
for sent in sentences:
    tokenized_sent = sent.lower().split()
    for i in range(1, len(tokenized_sent) - 1):
        # [[left neighbour, right neighbour], target word]
        word_context_pair = [[word2index_map[tokenized_sent[i - 1]],
                              word2index_map[tokenized_sent[i + 1]]],
                             word2index_map[tokenized_sent[i]]]
        skip_gram_pairs.append([word_context_pair[1], word_context_pair[0][0]])
        skip_gram_pairs.append([word_context_pair[1], word_context_pair[0][1]])
```

```python
def get_skipgram_batch(batch_size):
    """Return a random batch of target indices x and context labels y."""
    instance_indices = list(range(len(skip_gram_pairs)))
    np.random.shuffle(instance_indices)
    batch = instance_indices[:batch_size]
    x = [skip_gram_pairs[i][0] for i in batch]
    y = [[skip_gram_pairs[i][1]] for i in batch]  # shape [batch_size, 1]
    return x, y

# Inspect a sample batch
x_batch, y_batch = get_skipgram_batch(8)
print(x_batch)
print(y_batch)
print([index2word_map[word] for word in x_batch])
print([index2word_map[word[0]] for word in y_batch])
```
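The batch function above shuffles the full index list on every call and then keeps only the first few entries. A more compact variant, sketched here with the standard library only, draws the needed indices directly. The name `sample_batch` and the toy pairs are assumptions for illustration, not part of the original code.

```python
import random

def sample_batch(pairs, batch_size, rng=random):
    """Draw `batch_size` distinct [target, context] pairs uniformly at random."""
    chosen = rng.sample(range(len(pairs)), batch_size)
    x = [pairs[i][0] for i in chosen]    # target word indices, shape [batch_size]
    y = [[pairs[i][1]] for i in chosen]  # context word indices, shape [batch_size, 1]
    return x, y

# Hypothetical toy pairs of [target index, context index].
toy_pairs = [[0, 1], [0, 2], [3, 4], [3, 5], [6, 7]]
x, y = sample_batch(toy_pairs, batch_size=3, rng=random.Random(0))
print(x, y)
```

The labels are wrapped in single-element lists because `tf.nn.nce_loss` expects labels of shape `[batch_size, num_true]`, with one true context word per example here.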

```python
# Placeholders for the input words and their context-word labels
train_inputs = tf.placeholder(tf.int32, shape=[batch_size])
train_labels = tf.placeholder(tf.int32, shape=[batch_size, 1])

# Note from the original: the embedding lookup table is currently implemented on CPU only
with tf.name_scope("embeddings"):
    embeddings = tf.Variable(
        tf.random_uniform([vocabulary_size, embedding_dimension], -1.0, 1.0),
        name='embedding')
    # This is essentially a lookup table: one row per word index
    embed = tf.nn.embedding_lookup(embeddings, train_inputs)
```

```python
# Variables for the noise-contrastive estimation (NCE) loss
nce_weights = tf.Variable(
    tf.truncated_normal([vocabulary_size, embedding_dimension],
                        stddev=1.0 / math.sqrt(embedding_dimension)))
nce_biases = tf.Variable(tf.zeros([vocabulary_size]))

# Average NCE loss over the batch
loss = tf.reduce_mean(
    tf.nn.nce_loss(weights=nce_weights, biases=nce_biases, inputs=embed,
                   labels=train_labels, num_sampled=negative_samples,
                   num_classes=vocabulary_size))

# Log the loss to TensorBoard
tf.summary.scalar("NCE_loss", loss)
```
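To give a feel for what a sampled loss of this kind measures, the following plain-Python sketch computes a simplified negative-sampling loss for a single training pair. It is an assumption-laden illustration, not what `tf.nn.nce_loss` computes exactly: the TensorFlow op also corrects each score using the sampling probability of the word, which is omitted here. All names and numbers are hypothetical.

```python
import math

def log_sigmoid(z):
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def negative_sampling_loss(embed, true_w, true_b, noise_ws, noise_bs):
    """Loss for one input embedding, its true context word, and sampled noise words."""
    # Reward a high score for the true context word.
    loss = -log_sigmoid(dot(embed, true_w) + true_b)
    # Penalize high scores for each sampled noise word.
    for w, b in zip(noise_ws, noise_bs):
        loss -= log_sigmoid(-(dot(embed, w) + b))
    return loss

# Hypothetical 2-D example with two noise words.
e = [0.5, -0.2]
w_true = [1.0, 0.0]
noise_ws = [[-1.0, 0.5], [0.0, 1.0]]
noise_bs = [0.0, 0.0]
print(negative_sampling_loss(e, w_true, 0.0, noise_ws, noise_bs))
```

Only the true word and a handful of noise words are scored per example, which is the reason sampled losses are cheaper than a full softmax over the vocabulary.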

```python
# Learning rate decay
global_step = tf.Variable(0, trainable=False)
learningRate = tf.train.exponential_decay(learning_rate=0.1,
                                          global_step=global_step,
                                          decay_steps=1000,
                                          decay_rate=0.95,
                                          staircase=True)

# Training step; global_step is passed so that it is incremented on every update,
# otherwise the decay schedule never advances
train_step = tf.train.GradientDescentOptimizer(learningRate).minimize(
    loss, global_step=global_step)
merged = tf.summary.merge_all()
```
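The schedule configured above follows the formula documented for `tf.train.exponential_decay`: the base rate multiplied by `decay_rate` raised to `global_step / decay_steps`, with integer division when `staircase` is true. The plain-Python function below is a sketch of that formula for checking values by hand; it is not TensorFlow code.

```python
def exponential_decay(learning_rate, global_step, decay_steps, decay_rate, staircase=False):
    """Decayed learning rate at a given step."""
    if staircase:
        exponent = global_step // decay_steps  # rate drops in discrete jumps
    else:
        exponent = global_step / decay_steps   # rate decays continuously
    return learning_rate * decay_rate ** exponent

for step in (0, 999, 1000, 2500):
    print(step, exponential_decay(0.1, step, 1000, 0.95, staircase=True))
```

With these settings the rate stays at 0.1 for steps 0 through 999 and first drops at step 1000, so a 1000-step run trains at a constant rate. The schedule also depends on `global_step` actually being incremented during training, for example by passing it to `minimize`.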

```python
with tf.Session() as sess:
    train_writer = tf.summary.FileWriter(LOG_DIR, tf.get_default_graph())
    saver = tf.train.Saver()

    # Write the metadata file that labels each embedding row
    with open(os.path.join(LOG_DIR, 'metadata.tsv'), "w") as metadata:
        metadata.write('Name\tClass\n')
        for k, v in index2word_map.items():
            metadata.write('%s\t%d\n' % (v, k))

    # Configure the TensorBoard embedding projector
    config = projector.ProjectorConfig()
    embedding = config.embeddings.add()
    embedding.tensor_name = embeddings.name
    # Link the tensor to its metadata file
    embedding.metadata_path = os.path.join(LOG_DIR, 'metadata.tsv')
    projector.visualize_embeddings(train_writer, config)

    # Initialize all global variables
    tf.global_variables_initializer().run()

    # Training loop
    for step in range(1000):
        x_batch, y_batch = get_skipgram_batch(batch_size)
        summary, _ = sess.run([merged, train_step],
                              feed_dict={train_inputs: x_batch,
                                         train_labels: y_batch})
        train_writer.add_summary(summary, step)

        # Every 100 steps, save a checkpoint and print the loss
        if step % 100 == 0:
            saver.save(sess, os.path.join(LOG_DIR, "w2v_model.ckpt"), step)
            loss_value = sess.run(loss,
                                  feed_dict={train_inputs: x_batch,
                                             train_labels: y_batch})
            print("Loss at %d: %.5f" % (step, loss_value))

    # Normalize the embeddings to unit length before using them
    # (older TensorFlow 1.x releases call this argument keep_dims)
    norm = tf.sqrt(tf.reduce_sum(tf.square(embeddings), 1, keepdims=True))
    normalized_embeddings = embeddings / norm
    normalized_embeddings_matrix = sess.run(normalized_embeddings)
```

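```python
# Embedding vector of the reference word "one"
ref_word = normalized_embeddings_matrix[word2index_map["one"]]

# The rows are unit length, so the dot product is the cosine similarity
cosine_dists = np.dot(normalized_embeddings_matrix, ref_word)

# Sort by similarity in descending order and drop the first entry (the word itself)
ff = np.argsort(cosine_dists)[::-1][1:10]

# Print each word followed by its similarity to "one"
for f in ff:
    print(index2word_map[f])
    print(cosine_dists[f])
```

Output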

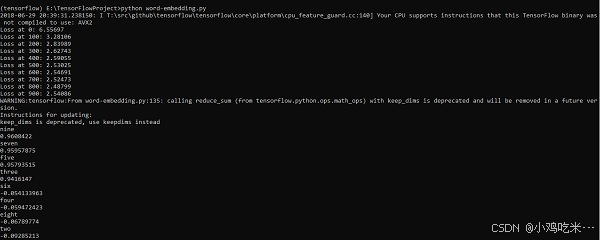

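Running the code above produces output along the following lines. The words most similar to "one", in descending order of similarity:

seven, nine, five, three, six, four, eight, two

The odd digits rank ahead of the even ones, which is consistent with the training data: "one" only ever appears in sentences made of odd digits.

TensorFlow may also log a notice that the CPU supports AVX2 instructions that the installed TensorFlow binary was not compiled to use. The loss printed every 100 steps:

Loss at 0: 6.55697

Loss at 100: 3.28106

Loss at 200: 2.83989

Loss at 300: 2.62743

Loss at 400: 2.59055

Loss at 500: 2.57025

Loss at 600: 2.54691

Loss at 700: 2.52473

Loss at 800: 2.48799

Loss at 900: 2.54086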
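The similarity ranking at the end of the listing can also be tried in isolation. The sketch below normalizes each vector to unit length and ranks words by their dot product with a reference word, which equals the cosine similarity. The 2-D vectors are hypothetical and are not taken from the trained model, and `most_similar` is a name chosen for this example.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def most_similar(embeddings, ref_word):
    """Rank every other word by cosine similarity to `ref_word`, most similar first."""
    unit = {w: normalize(v) for w, v in embeddings.items()}
    ref = unit[ref_word]
    scores = {w: sum(a * b for a, b in zip(u, ref))
              for w, u in unit.items() if w != ref_word}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical 2-D vectors, not taken from the trained model.
toy = {"one": [1.0, 0.0], "three": [0.9, 0.1], "two": [0.0, 1.0], "four": [-1.0, 0.0]}
ranking = most_similar(toy, "one")
for word, score in ranking:
    print(word, round(score, 3))
```

This version excludes the reference word by name, whereas the listing drops the first entry of the sorted result on the assumption that a word is always most similar to itself.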