https://github.com/MiniMax-AI/Mini-Agent

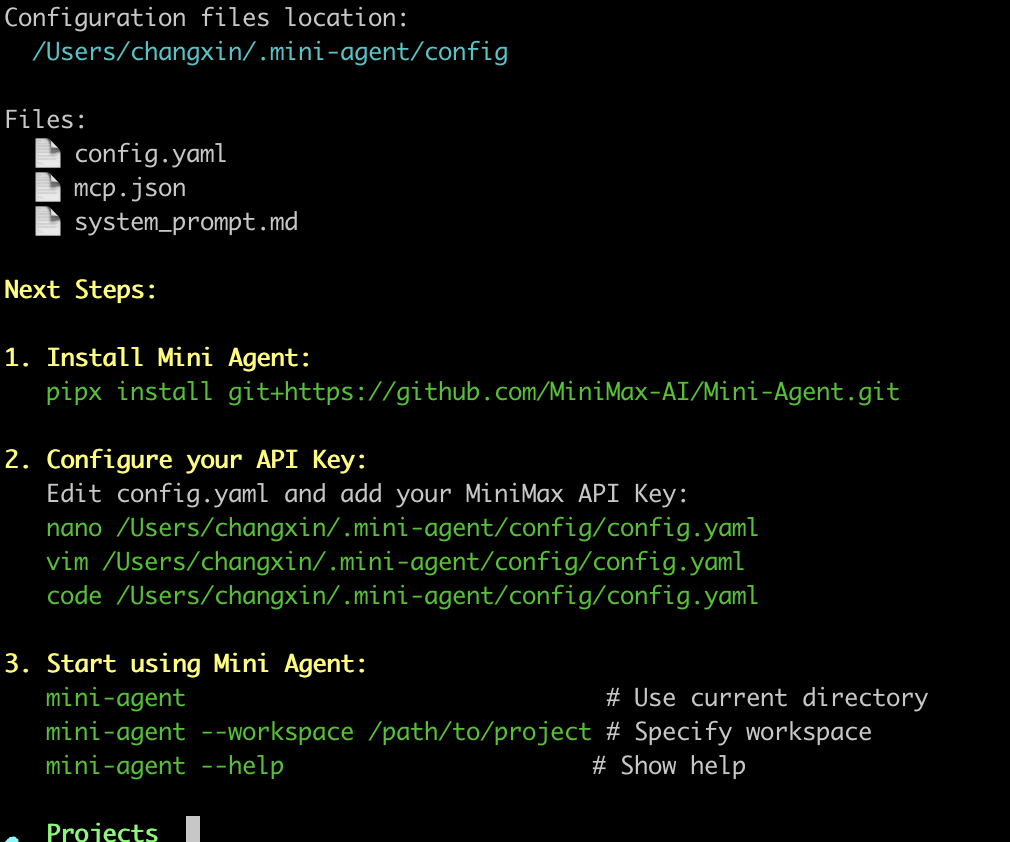

Following the Quick Start guide in the Mini-Agent README, first install the agent.

🚀 Quick Start Mode (Recommended for Beginners)

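Next, try running the `mini-agent` command. On the first attempt it could not reach the API, even though I had confirmed that the key was configured correctly. Once that was resolved, the agent ran tasks normally and produced a clear analysis of the Mini-Agent codebase itself.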

☁ Mini-Agent [main] ls

CODE_OF_CONDUCT.md MANIFEST.in mini_agent

CODE_OF_CONDUCT_CN.md README.md pyproject.toml

CONTRIBUTING.md README_CN.md scripts

CONTRIBUTING_CN.md docs tests

LICENSE examples uv.lock

☁ Mini-Agent [main] mini-agent

✅ LLM retry mechanism enabled (max 3 retries)

✅ Loaded Bash Output tool

✅ Loaded Bash Kill tool

Loading Claude Skills...

✅ Discovered 15 Claude Skills

✅ Loaded Skill tool (get_skill)

Loading MCP tools...

MCP timeouts: connect=10.0s, execute=60.0s, sse_read=120.0s

Skipping disabled server: minimax_search

Skipping disabled server: memory

Total MCP tools loaded: 0

⚠️ No available MCP tools found

✅ Loaded Bash tool (cwd: /Users/ch/Projects/Mini-Agent)

✅ Loaded file operation tools (workspace: /Users/ch/Projects/Mini-Agent)

✅ Loaded session note tool

✅ Loaded system prompt (from: /Users/ch/Projects/Mini-Agent/mini_agent/config/system_prompt.md)

✅ Injected 15 skills metadata into system prompt

╔══════════════════════════════════════════════════════════╗

║ 🤖 Mini Agent - Multi-turn Interactive Session ║

╚══════════════════════════════════════════════════════════╝

┌──────────────────────────────────────────────────────────┐

│ Session Info │

├──────────────────────────────────────────────────────────┤

│ Model: MiniMax-M2.5 │

│ Workspace: /Users/ch/Projects/Mini-Agent │

│ Message History: 1 messages │

│ Available Tools: 8 tools │

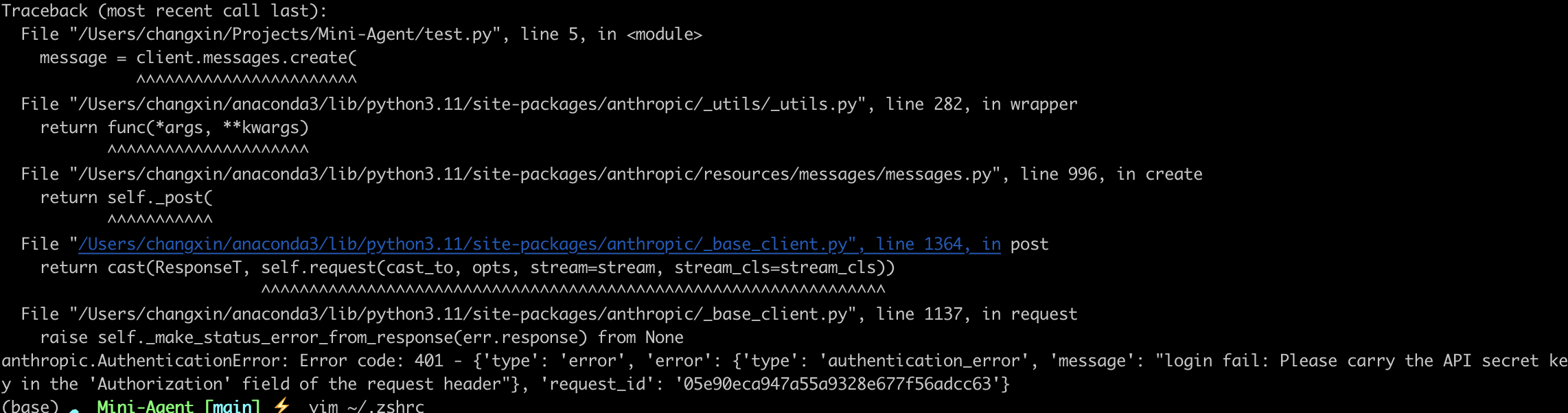

└──────────────────────────────────────────────────────────┘参考API的使用链接,确认了基本上无法使用的原因是因为https://platform.minimaxi.com/docs/api-reference/text-anthropic-api

"没有充钱"

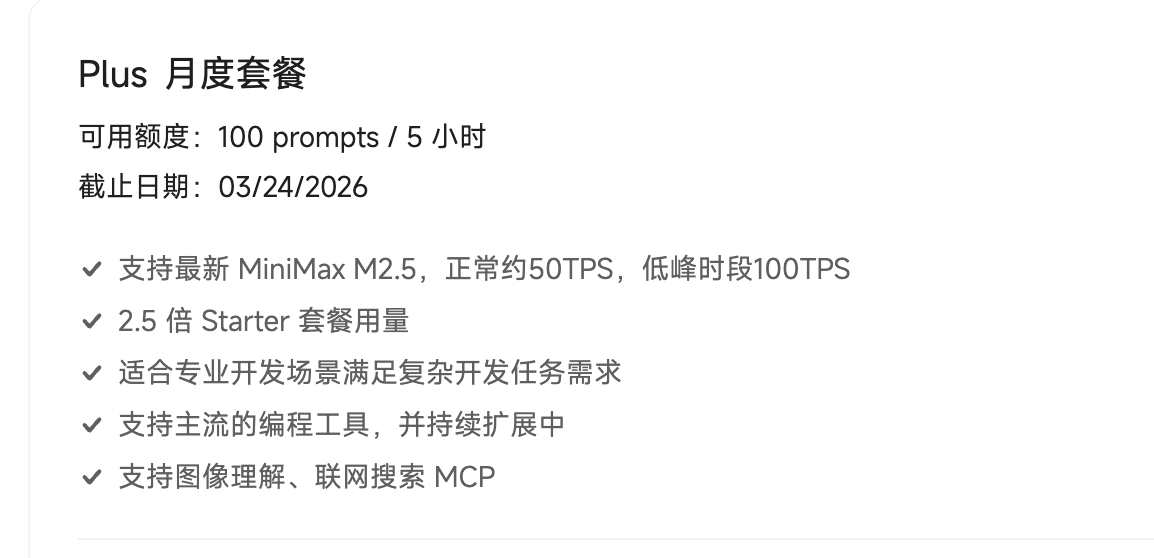

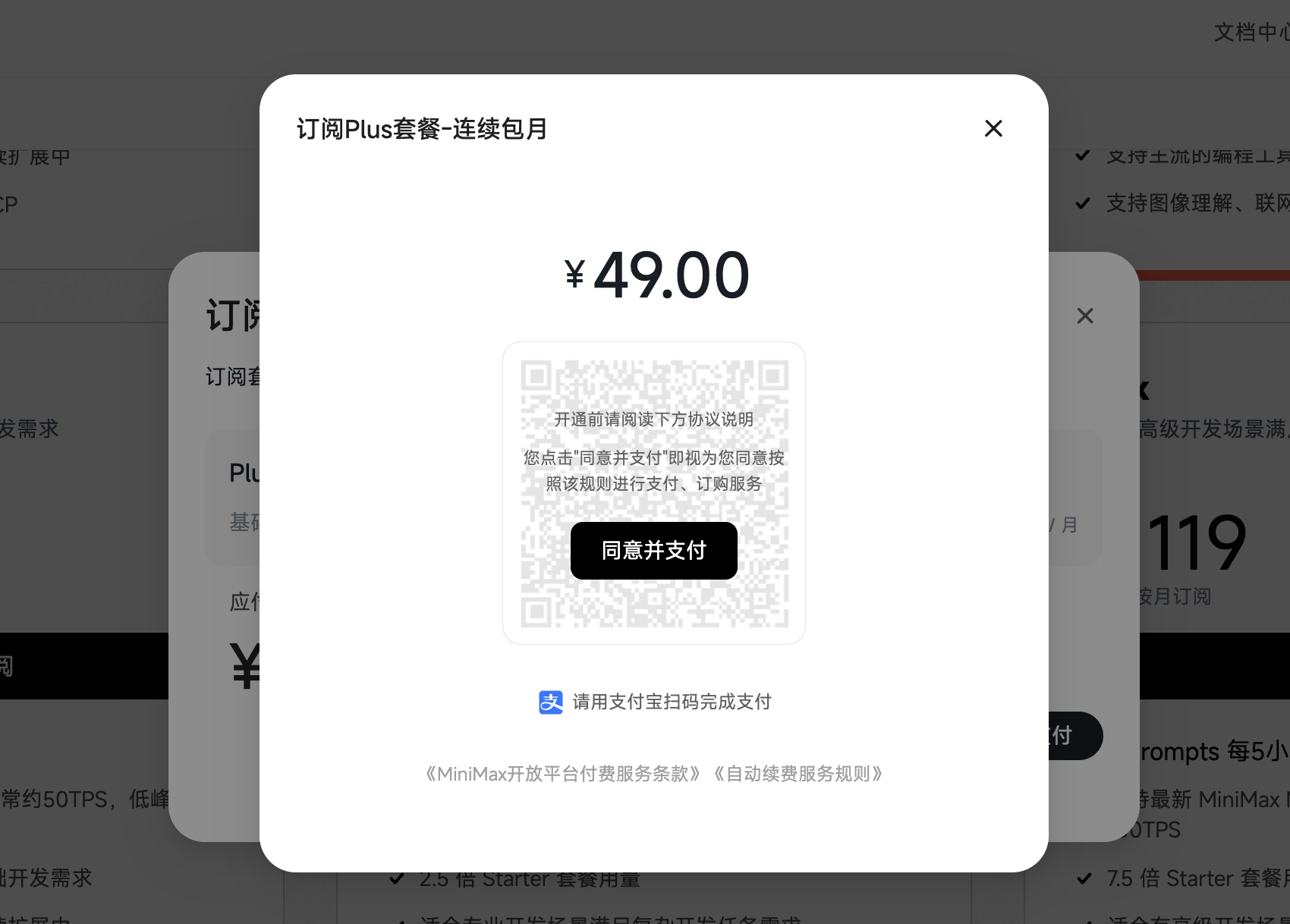

I subscribed to a Plus monthly plan, after which the agent started responding:

Agent › Thinking... (Esc to cancel)

📝 Log file: /Users/changxin/.mini-agent/log/agent_run_20260222_002838.log

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 1/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

The user has sent a simple greeting. I should respond in a friendly and helpful manner, introducing myself and offering assistance.

🤖 Assistant:

Hello! I'm Mini-Agent, an AI assistant powered by MiniMax. I'm here to help you with a wide range of tasks.

I have access to various tools that allow me to:

- **Read, write, and edit files** - I can work with files in your workspace

- **Execute bash commands** - Run terminal commands, manage git, install packages, etc.

- **Use specialized skills** - Including PDF manipulation, spreadsheet work, presentations, document editing, and more

How can I help you today? Feel free to ask me to:

- Analyze or modify files in your project

- Run development commands

- Create documents, presentations, or spreadsheets

- Execute any other tasks within my capabilities

What would you like to work on?

⏱️ Step 1 completed in 3.92s (total: 3.92s)

────────────────────────────────────────────────────────────

You › show me how this code base is implemented

Agent › Thinking... (Esc to cancel)

📝 Log file: /Users/changxin/.mini-agent/log/agent_run_20260222_002921.log

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 1/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

The user wants me to explore and explain how this codebase is implemented. I should first understand the structure of the project, then examine the key files to understand the architecture and implementation.

Let me start by:

1. Looking at the directory structure

2. Finding key configuration files (package.json, requirements.txt, etc.)

3. Understanding the main entry points and architecture

I'll start by exploring the workspace to understand what kind of project this is.

🤖 Assistant:

I'll explore this codebase to understand its structure and implementation. Let me start by examining the project structure and key files.

🔧 Tool Call: bash

Arguments:

{

"command": "ls -la"

}

✓ Result: total 640

drwxr-xr-x@ 21 changxin staff 672 Feb 22 00:13 .

drwxr-xr-x@ 10 changxin staff 320 Feb 21 23:47 ..

drwxr-xr-x@ 12 changxin staff 384 Feb 21 23:45 .git

-rw-r--r--@ 1 changxin staff 545 Feb 21 23:45 .gitignore

-rw-r--r--@ 1 changxin staff 106 Feb 21 23:45 .gitmodul...

⏱️ Step 1 completed in 4.69s (total: 4.69s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 2/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Good, this is a Python project (I can see pyproject.toml). Let me examine the key files to understand the project structure:

1. pyproject.toml - for project configuration

2. README.md - for project overview

3. The mini_agent directory - for the main source code

4. The tests directory - for tests

Let me explore these to understand the implementation.

🔧 Tool Call: read_file

Arguments:

{

"path": "pyproject.toml"

}

✓ Result: 1|[project]

2|name = "mini-agent"

3|version = "0.1.0"

4|description = "Minimal single agent demo with basic file tools and MCP support"

5|readme = "README.md"

6|requires-python = ">=3.10"

7|authors = [

8| {name = "Mini Agent Team"}

9|]

10|license =...

⏱️ Step 2 completed in 1.90s (total: 6.59s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 3/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me explore the main source code directory to understand the structure better.

🔧 Tool Call: bash

Arguments:

{

"command": "find mini_agent -type f -name \"*.py\" | head -50"

}

✓ Result: mini_agent/tools/skill_tool.py

mini_agent/tools/mcp_loader.py

mini_agent/tools/bash_tool.py

mini_agent/tools/__init__.py

mini_agent/tools/file_tools.py

mini_agent/tools/note_tool.py

mini_agent/tools/skill_loader.py

mini_agent/tools/base.py

mini_agent/llm/__init__.py

mini_agent/llm/llm_wrapper.py

min...

⏱️ Step 3 completed in 1.65s (total: 8.23s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 4/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now I have a good overview. Let me examine the core files to understand the architecture:

1. mini_agent/agent.py - the main agent

2. mini_agent/cli.py - CLI entry point

3. mini_agent/tools/base.py - base tool class

4. mini_agent/tools/file_tools.py - file tools

5. mini_agent/tools/bash_tool.py - bash tool

6. mini_agent/llm/ - LLM clients

7. mini_agent/config.py - configuration

8. mini_agent/schema/schema.py - data schemas

Let me read these core files to understand the implementation.

🔧 Tool Call: bash

Arguments:

{

"command": "tree mini_agent -I '__pycache__|*.pyc' -L 3"

}

✗ Error: Command failed with exit code 127

/bin/sh: tree: command not found

⏱️ Step 4 completed in 2.77s (total: 11.01s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 5/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

tree is not available, let me use find instead.

🔧 Tool Call: bash

Arguments:

{

"command": "find mini_agent -type d | grep -v __pycache__ | sort"

}

✓ Result: mini_agent

mini_agent/acp

mini_agent/config

mini_agent/llm

mini_agent/schema

mini_agent/skills

mini_agent/skills/.claude-plugin

mini_agent/skills/algorithmic-art

mini_agent/skills/algorithmic-art/templates

mini_agent/skills/artifacts-builder

mini_agent/skills/artifacts-builder/scripts

mini_agent/ski...

⏱️ Step 5 completed in 6.85s (total: 17.86s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 6/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now I have a good understanding of the structure. Let me read the core files to understand how the codebase is implemented. I'll start with the main entry points and core components.

🤖 Assistant:

Now let me examine the core files to understand the implementation:

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/__init__.py"

}

✓ Result: 1|"""Mini Agent - Minimal single agent with basic tools and MCP support."""

2|

3|from .agent import Agent

4|from .llm import LLMClient

5|from .schema import FunctionCall, LLMProvider, LLMResponse, Message, ToolCall

6|

7|__version__ = "0.1.0"

8|

9|__all__ ...

⏱️ Step 6 completed in 1.62s (total: 19.48s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 7/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me read the agent.py file to understand the core agent implementation.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/agent.py"

}

✓ Result: 1|"""Core Agent implementation."""

2|

3|import asyncio

4|import json

5|from pathlib import Path

6|from time import perf_counter

7|from typing import Optional

8|

9|import tiktoken

10|

11|from .llm import LLMClient

12|from .logger import AgentLo...

⏱️ Step 7 completed in 1.34s (total: 20.82s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 8/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me read the tools base class to understand how tools are implemented.

🤖 Assistant:

Now let me examine the tools system:

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/tools/base.py"

}

✓ Result: 1|"""Base tool classes."""

2|

3|from typing import Any

4|

5|from pydantic import BaseModel

6|

7|

8|class ToolResult(BaseModel):

9| """Tool execution result."""

10|

11| success: bool

12| content: str = ""

13| error: str | None =...

⏱️ Step 8 completed in 2.19s (total: 23.00s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 9/100 │

╰──────────────────────────────────────────────────────────╯

⏹️ Esc pressed, cancelling...

🧠 Thinking:

Now let me read the file tools to understand how they are implemented.

Cleaned up 1 incomplete message(s)

⚠️ Task cancelled by user.

────────────────────────────────────────────────────────────

You › continue the previous task

Agent › Thinking... (Esc to cancel)

📝 Log file: /Users/changxin/.mini-agent/log/agent_run_20260222_003122.log

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 1/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

The user wants me to continue exploring the codebase implementation. I was in the middle of examining the code structure. Let me continue by looking at more key files to understand the full implementation.

I should look at:

1. The LLM client implementation

2. The tools implementations

3. The schema definitions

4. The CLI entry point

Let me continue exploring.

🤖 Assistant:

I'll continue exploring the codebase implementation. Let me examine more key components:

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/llm/__init__.py"

}

✓ Result: 1|"""LLM clients package supporting both Anthropic and OpenAI protocols."""

2|

3|from .anthropic_client import AnthropicClient

4|from .base import LLMClientBase

5|from .llm_wrapper import LLMClient

6|from .openai_client import OpenAIClient

7|

8|__all__ = ["LLM...

⏱️ Step 1 completed in 4.62s (total: 4.62s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 2/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Let me continue examining the LLM wrapper and the clients to understand how they work.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/llm/llm_wrapper.py"

}

✓ Result: 1|"""LLM client wrapper that supports multiple providers.

2|

3|This module provides a unified interface for different LLM providers

4|(Anthropic and OpenAI) through a single LLMClient class.

5|"""

6|

7|import logging

8|

9|from ..retry import RetryConfig

...

⏱️ Step 2 completed in 2.16s (total: 6.77s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 3/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Let me continue exploring the schema, the tools implementations, and the CLI to get a complete picture of the implementation.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/schema/schema.py"

}

✓ Result: 1|from enum import Enum

2|from typing import Any

3|

4|from pydantic import BaseModel

5|

6|

7|class LLMProvider(str, Enum):

8| """LLM provider types."""

9|

10| ANTHROPIC = "anthropic"

11| OPENAI = "openai"

12|

13|

14|class Func...

⏱️ Step 3 completed in 2.66s (total: 9.43s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 4/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Let me also look at the tools implementations to see how they are built.

🤖 Assistant:

Now let me examine the built-in tools:

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/tools/__init__.py"

}

✓ Result: 1|"""Tools module."""

2|

3|from .base import Tool, ToolResult

4|from .bash_tool import BashTool

5|from .file_tools import EditTool, ReadTool, WriteTool

6|from .note_tool import RecallNoteTool, SessionNoteTool

7|

8|__all__ = [

9| "Tool",

10| "Too...

⏱️ Step 4 completed in 1.23s (total: 10.66s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 5/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Let me examine one of the tool implementations and also the CLI.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/tools/file_tools.py"

}

✓ Result: 1|"""File operation tools."""

2|

3|from pathlib import Path

4|from typing import Any

5|

6|import tiktoken

7|

8|from .base import Tool, ToolResult

9|

10|

11|def truncate_text_by_tokens(

12| text: str,

13| max_tokens: int,

14|) -> ...

⏱️ Step 5 completed in 1.86s (total: 12.53s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 6/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Let me also examine the CLI to understand the entry point, and also look at the bash tool.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/cli.py"

}

✓ Result: 1|"""

2|Mini Agent - Interactive Runtime Example

3|

4|Usage:

5| mini-agent [--workspace DIR] [--task TASK]

6|

7|Examples:

8| mini-agent # Use current directory as workspace (interactive mode)

9| mini-agent --workspace...

⏱️ Step 6 completed in 9.26s (total: 21.78s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 7/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me check the config to understand the configuration structure, and also look at the skill system.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/config.py"

}

✓ Result: 1|"""Configuration management module

2|

3|Provides unified configuration loading and management functionality

4|"""

5|

6|from pathlib import Path

7|

8|import yaml

9|from pydantic import BaseModel, Field

10|

11|

12|class RetryConfig(BaseModel):...

⏱️ Step 7 completed in 4.27s (total: 26.05s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 8/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me look at one of the LLM clients to understand how they work, and also the skill tool to understand the skill system.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/llm/anthropic_client.py"

}

✓ Result: 1|"""Anthropic LLM client implementation."""

2|

3|import logging

4|from typing import Any

5|

6|import anthropic

7|

8|from ..retry import RetryConfig, async_retry

9|from ..schema import FunctionCall, LLMResponse, Message, TokenUsage, ToolCall

10|from ....

⏱️ Step 8 completed in 4.68s (total: 30.73s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 9/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now let me also look at the skill tool to understand how skills are loaded and used.

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/tools/skill_tool.py"

}

✓ Result: 1|"""

2|Skill Tool - Tool for Agent to load Skills on-demand

3|

4|Implements Progressive Disclosure (Level 2): Load full skill content when needed

5|"""

6|

7|from typing import Any, Dict, List, Optional

8|

9|from .base import Tool, ToolResult

10|from ...

⏱️ Step 9 completed in 3.14s (total: 33.87s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 10/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now I have a good understanding of the codebase. Let me also quickly check the skill loader to understand the progressive disclosure pattern, and then I'll provide a comprehensive summary of the codebase implementation.

🤖 Assistant:

Now let me check the skill loader to understand the skill loading mechanism:

🔧 Tool Call: read_file

Arguments:

{

"path": "mini_agent/tools/skill_loader.py"

}

✓ Result: 1|"""

2|Skill Loader - Load Claude Skills

3|

4|Supports loading skills from SKILL.md files and providing them to Agent

5|"""

6|

7|import re

8|from dataclasses import dataclass

9|from pathlib import Path

10|from typing import Dict, List, Optional

1...

⏱️ Step 10 completed in 2.29s (total: 36.16s)

╭──────────────────────────────────────────────────────────╮

│ 💭 Step 11/100 │

╰──────────────────────────────────────────────────────────╯

🧠 Thinking:

Now I have a comprehensive understanding of the codebase. Let me provide a detailed summary of how this codebase is implemented.

🤖 Assistant:

Now I have a comprehensive understanding of this codebase. Let me provide you with a detailed explanation of how it's implemented:

---

## Mini-Agent Codebase Implementation Overview

### 1. **Project Structure**

```

mini_agent/

├── __init__.py # Package exports

├── agent.py # Core Agent implementation

├── cli.py # Command-line interface

├── config.py # Configuration management

├── logger.py # Logging utilities

├── retry.py # Retry logic

├── llm/ # LLM client implementations

│ ├── __init__.py

│ ├── base.py # Base LLM client

│ ├── llm_wrapper.py # Unified LLM client wrapper

│ ├── anthropic_client.py # Anthropic/MiniMax protocol

│ └── openai_client.py # OpenAI protocol

├── schema/ # Data models

│ └── schema.py # Pydantic models (Message, ToolCall, etc.)

├── tools/ # Tool implementations

│ ├── base.py # Base Tool class

│ ├── file_tools.py # Read/Write/Edit files

│ ├── bash_tool.py # Bash command execution

│ ├── note_tool.py # Session memory

│ ├── skill_tool.py # Skill loading tool

│ ├── skill_loader.py # Skill discovery & loading

│ └── mcp_loader.py # MCP protocol support

├── skills/ # Built-in skills (submodule)

│ ├── document-skills/ # PDF, PPTX, DOCX, XLSX skills

│ ├── algorithmic-art # p5.js art generation

│ ├── canvas-design # Visual design

│ ├── webapp-testing # Playwright testing

│ └── ... # Many more skills

└── config/ # Configuration files

```

---

### 2. **Core Components**

#### **2.1 Agent (`mini_agent/agent.py`)**

The `Agent` class is the heart of the system:

- **Initialization**: Takes LLM client, system prompt, tools, max_steps, workspace_dir, token_limit

- **Message History**: Maintains conversation with system/user/assistant/tool messages

- **Run Loop**: Executes steps up to max_steps, calling LLM and executing tools

- **Token Management**: Uses tiktoken to estimate tokens and triggers summarization when exceeding limit

- **Cancellation Support**: Can be cancelled via Esc key or external event

```text

# Key flow:

1. Add user message

2. Check token limit → summarize if needed

3. Call LLM with tools

4. Execute tool calls if any

5. Repeat until no tools called or max_steps

```
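
The five steps above can be sketched as a small loop. This is a minimal illustration and not the repository's `Agent` class: `ScriptedLLM`, `LLMReply`, `run_agent`, and the plain-dict message format are all hypothetical stand-ins, and the token check in step 2 is left out.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    arguments: dict


@dataclass
class LLMReply:
    content: str
    tool_calls: list = field(default_factory=list)


class ScriptedLLM:
    """Hypothetical stand-in for an LLM client: replays canned replies."""

    def __init__(self, replies):
        self._replies = list(replies)

    async def generate(self, messages):
        return self._replies.pop(0)


async def run_agent(llm, tools, user_text, max_steps=5):
    messages = [{"role": "user", "content": user_text}]  # 1. add user message
    for _ in range(max_steps):
        # 2. token check / summarization omitted in this sketch
        reply = await llm.generate(messages)  # 3. call the LLM
        messages.append({"role": "assistant", "content": reply.content})
        if not reply.tool_calls:  # 5. stop once no tools are requested
            return reply.content, messages
        for call in reply.tool_calls:  # 4. execute each tool call
            result = tools[call.name](**call.arguments)
            messages.append({"role": "tool", "content": str(result)})
    return "max steps reached", messages


llm = ScriptedLLM([
    LLMReply("Let me add those.", [ToolCall("add", {"a": 2, "b": 3})]),
    LLMReply("The sum is 5."),
])
tools = {"add": lambda a, b: a + b}
final, history = asyncio.run(run_agent(llm, tools, "What is 2 + 3?"))
```

The loop ends on the first reply with no tool calls, which is why a plain greeting in the session above finished in a single step.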

#### **2.2 LLM Clients (`mini_agent/llm/`)**

- **LLMClient (wrapper)**: Unified interface supporting both Anthropic and OpenAI protocols

- **AnthropicClient**: Uses official Anthropic SDK with extended thinking support

- **OpenAIClient**: OpenAI-compatible API support

- **Retry Logic**: Configurable exponential backoff retry
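
To make the retry bullet concrete, here is one way an exponential-backoff decorator for async calls could look. The transcript only shows that `mini_agent/retry.py` exports `RetryConfig` and `async_retry`; the signature, parameter names, and defaults below are assumptions chosen for illustration.

```python
import asyncio
import functools


def async_retry(max_retries=3, base_delay=0.01, factor=2.0):
    """Retry an async function, multiplying the delay by `factor` after each failure.

    Hypothetical signature; the real decorator in the repository may differ.
    """

    def decorator(func):
        @functools.wraps(func)
        async def wrapper(*args, **kwargs):
            delay = base_delay
            for attempt in range(max_retries + 1):
                try:
                    return await func(*args, **kwargs)
                except Exception:
                    if attempt == max_retries:
                        raise  # retries exhausted: surface the last error
                    await asyncio.sleep(delay)
                    delay *= factor

        return wrapper

    return decorator


calls = []


@async_retry(max_retries=3)
async def flaky():
    # Fails twice, then succeeds, to exercise the retry path.
    calls.append(1)
    if len(calls) < 3:
        raise ConnectionError("transient failure")
    return "ok"


result = asyncio.run(flaky())
```

A production version would normally retry only on specific exception types (timeouts, rate limits) instead of every `Exception`.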

#### **2.3 Tools System (`mini_agent/tools/`)**

- **Base Tool**: Abstract class with name, description, parameters, execute()

- **File Tools**: ReadTool, WriteTool, EditTool - workspace-aware file operations

- **BashTool**: Execute shell commands with output streaming

- **Note Tools**: SessionNoteTool, RecallNoteTool - persistent memory

- **SkillTool**: On-demand skill loading via `get_skill`

- **MCP Tools**: Load external MCP servers
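
The tool abstraction described above could be sketched roughly as follows. The transcript shows `ToolResult` as a pydantic model with `success`, `content`, and `error` fields; this sketch uses a standard-library dataclass instead so it runs without dependencies, and `WordCountTool` is a made-up example that does not exist in Mini-Agent.

```python
import asyncio
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class ToolResult:
    success: bool
    content: str = ""
    # The default is an assumption; the transcript truncates this line.
    error: Optional[str] = None


class Tool(ABC):
    """Abstract tool exposing name, description, parameters, and execute()."""

    @property
    @abstractmethod
    def name(self) -> str: ...

    @property
    @abstractmethod
    def description(self) -> str: ...

    @property
    @abstractmethod
    def parameters(self) -> dict: ...

    @abstractmethod
    async def execute(self, **kwargs: Any) -> ToolResult: ...


class WordCountTool(Tool):
    """Hypothetical example tool, not part of Mini-Agent."""

    name = "word_count"
    description = "Count whitespace-separated words in a string."
    parameters = {
        "type": "object",
        "properties": {"text": {"type": "string"}},
        "required": ["text"],
    }

    async def execute(self, **kwargs: Any) -> ToolResult:
        text = kwargs.get("text")
        if not isinstance(text, str):
            return ToolResult(success=False, error="'text' must be a string")
        return ToolResult(success=True, content=str(len(text.split())))


ok = asyncio.run(WordCountTool().execute(text="read write edit"))
bad = asyncio.run(WordCountTool().execute(text=42))
```

Returning a failed `ToolResult` instead of raising lets the agent loop feed the error text back to the model, as happened with the failed `tree` command earlier in the session.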

---

### 3. **Skill System (Progressive Disclosure)**

The skill system implements **3 levels of progressive disclosure**:

| Level | What Agent Sees | When |

|-------|-----------------|------|

| **Level 1** | Skill names + descriptions | In system prompt |

| **Level 2** | Full skill content | When `get_skill` called |

| **Level 3+** | Resources/scripts with absolute paths | When skill content references them |

Skills are defined in `SKILL.md` files with YAML frontmatter:

```yaml

---

name: xlsx

description: Comprehensive spreadsheet manipulation...

---

# Skill content here

```
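
As a rough illustration of Level 1 versus Level 2, the sketch below splits a `SKILL.md` string into frontmatter metadata and body. It handles only flat `key: value` pairs with a regular expression and is not the repository's `skill_loader.py`; `parse_skill`, `level1_summary`, and the sample text are hypothetical.

```python
import re

# Frontmatter is the block between the first two '---' lines.
FRONTMATTER = re.compile(r"\A---\s*\n(.*?)\n---\s*\n(.*)\Z", re.DOTALL)


def parse_skill(text):
    """Split a SKILL.md string into (metadata dict, body)."""
    match = FRONTMATTER.match(text)
    if match is None:
        raise ValueError("missing YAML frontmatter")
    meta = {}
    for line in match.group(1).splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    return meta, match.group(2).strip()


def level1_summary(skills):
    """Level 1: only names and descriptions go into the system prompt."""
    return "\n".join(f"- {m['name']}: {m['description']}" for m, _ in skills)


SAMPLE = """---
name: xlsx
description: Spreadsheet manipulation (sample text)
---
# Skill content here
"""

meta, body = parse_skill(SAMPLE)
summary = level1_summary([(meta, body)])
```

Here `summary` is what the model would see up front, while `body` would only be returned when the skill is requested by name.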

---

### 4. **Configuration (`mini_agent/config.py`)**

Configuration is loaded from `config.yaml` with priority:

1. `mini_agent/config/config.yaml` (development)

2. `~/.mini-agent/config/config.yaml` (user)

3. `<package>/config/config.yaml` (installed)

Config sections:

- **LLM**: api_key, api_base, model, provider, retry

- **Agent**: max_steps, workspace_dir, system_prompt_path

- **Tools**: enable_file_tools, enable_bash, enable_skills, enable_mcp, etc.
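
A priority lookup like the one listed above usually reduces to "return the first candidate that exists". The sketch below shows that pattern with temporary directories standing in for the real locations; `find_config` is a hypothetical helper, not a function from `config.py`.

```python
import tempfile
from pathlib import Path


def find_config(candidates):
    """Return the first candidate path that exists as a file, else None."""
    for path in candidates:
        path = Path(path)
        if path.is_file():
            return path
    return None


with tempfile.TemporaryDirectory() as tmp:
    # Stand-ins for the development and user locations.
    dev = Path(tmp) / "dev" / "config.yaml"
    user = Path(tmp) / "user" / "config.yaml"
    user.parent.mkdir(parents=True)
    user.write_text("max_steps: 100\n")  # only the user config exists

    found = find_config([dev, user])
    found_name = found.parent.name

missing = find_config([])
```

Because the development path is checked first, a config file inside a checked-out repository would shadow the one in the home directory.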

---

### 5. **CLI (`mini_agent/cli.py`)**

Entry point provides:

- **Interactive Mode**: Multi-turn conversation with prompt_toolkit

- **Non-Interactive Mode**: Execute single task with `--task` flag

- **Commands**: `/help`, `/clear`, `/history`, `/stats`, `/log`, `/exit`

- **Keyboard Shortcuts**: Esc to cancel, Ctrl+C to exit, etc.
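
The two modes can be illustrated with `argparse`. The `--workspace` and `--task` flags come from the usage string in `cli.py` shown earlier; the defaults, help strings, and the `mode_for` helper are assumptions made for this sketch.

```python
import argparse


def build_parser():
    parser = argparse.ArgumentParser(prog="mini-agent")
    # Default value is an assumption for this sketch.
    parser.add_argument("--workspace", default=".", help="workspace directory")
    parser.add_argument("--task", default=None, help="run one task and exit")
    return parser


def mode_for(argv):
    """Pick the mode: a --task value means a single non-interactive run."""
    args = build_parser().parse_args(argv)
    return "non-interactive" if args.task else "interactive"


interactive = mode_for([])
single_shot = mode_for(["--task", "list files"])
```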

---

### 6. **Key Features**

| Feature | Implementation |

|---------|----------------|

| **Multi-turn Conversation** | Message history with role tracking |

| **Tool Calling** | LLM generates tool calls, Agent executes them |

| **Token Management** | tiktoken-based estimation + summarization |

| **Cancellation** | asyncio.Event + Esc key listener thread |

| **Retry Logic** | Exponential backoff decorator |

| **MCP Support** | Load external tools via MCP protocol |

| **Logging** | Detailed request/response/tool logs |
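
The cancellation row can be sketched with a shared `asyncio.Event` that the step loop checks between steps. This is an assumption about the general pattern, not the repository's code: `run_steps` and `press_esc` are hypothetical, and a timer replaces the real Esc key listener thread.

```python
import asyncio


async def run_steps(cancel_event, max_steps=100):
    """Run up to max_steps, stopping early once cancel_event is set."""
    completed = 0
    for _ in range(max_steps):
        if cancel_event.is_set():
            return completed, "cancelled"
        await asyncio.sleep(0.01)  # stand-in for one LLM call plus tool work
        completed += 1
    return completed, "finished"


async def demo():
    cancel_event = asyncio.Event()

    async def press_esc():
        # Simulates the user pressing Esc shortly after the task starts.
        await asyncio.sleep(0.025)
        cancel_event.set()

    outcome, _ = await asyncio.gather(run_steps(cancel_event), press_esc())
    return outcome


completed, status = asyncio.run(demo())
```

Checking the event only between steps means an in-flight step still finishes, which matches the "Cleaned up 1 incomplete message(s)" line printed when the earlier run was cancelled.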

---

### 7. **Data Flow**

```

User Input

↓

Agent.add_user_message()

↓

Agent.run() [Loop]

├── Check token limit → _summarize_messages()

├── llm.generate() → LLMClient

│ └── AnthropicClient/OpenAIClient

├── Parse response (content, thinking, tool_calls)

└── For each tool_call:

└── tool.execute() → ToolResult

↓

Return final response

```

---

### 8. **Built-in Skills**

The project includes many specialized skills:

- **document-skills**: pdf, pptx, docx, xlsx - Document manipulation

- **algorithmic-art**: p5.js generative art

- **canvas-design**: Visual design creation

- **webapp-testing**: Playwright browser testing

- **internal-comms**: Internal communication templates

- **theme-factory**: Theming for artifacts

- **mcp-builder**: MCP server creation

- And more...

---

This is a well-architected AI agent framework that combines:

1. **LLM integration** with multiple providers

2. **Tool system** with extensibility

3. **Skill system** with progressive disclosure

4. **MCP support** for external tools

5. **User-friendly CLI** with interactive mode

⏱️ Step 11 completed in 59.77s (total: 95.93s)