LLMs之Pretrained:《Training Language Models via Neural Cellular Automata》翻译与解读

导读 :这篇论文证明了"合成结构"也能教会模型通用能力:作者用 NCA 生成可控的非语言合成数据,先让模型学习更纯粹的规则与依赖,再接入自然语言预训练,结果不仅提升语言建模效率,还能迁移到数学、代码和推理任务;其中最重要的启示是,合成数据的价值不在于替代语义本身,而在于为模型提供更高质量、可定制的计算结构。

>> 背景痛点:

● 高质量自然语言数据正在逼近瓶颈:论文指出,大模型预训练高度依赖自然语言,但高质量文本是有限资源,而且获取与清洗成本高。

● 自然语言自带偏置,且知识与推理纠缠:作者认为,自然语言数据不仅包含人类偏见,还把事实知识、推理过程与表达形式混杂在一起,不利于把"学到的能力"拆分出来研究。

● 传统合成数据往往不够"像真实世界":既有算法合成数据在语言领域较少见,且很多方法生成的分布过窄、同质性过强,难以在匹配 token 预算下超过自然语言训练。

● 仅靠规模堆数据并不一定最优:论文强调,合成数据的有效性不只是"更多",而是取决于数据生成器的结构性质与复杂度是否匹配目标域。

>> 具体的解决方案:

● 用神经元胞自动机(NCA)生成非语言合成数据:作者提出用 NCA 这种可参数化、可控、可大规模生成的动力系统,作为 LLM 的"预预训练"数据来源。

● 采用"合成先行、自然语言随后"的两阶段框架:先在 NCA 动态上做 pre-pre-training,再进行标准自然语言 pre-training,从而让模型先学到更通用的计算原语,再去吸收语义。

● 显式控制 NCA 复杂度,做面向任务的分布设计:论文提出可通过 gzip 可压缩性、状态空间大小等方式调节 NCA 复杂度,以便针对 web text、math、code 等不同下游域进行匹配。

● 把 NCA 视作"学习计算规律"的训练底座:作者的核心假设是,LLM 重要能力来自结构而非语义本身,因此让模型预测 NCA 轨迹,可以迫使其学习长程依赖、局部规则和潜在计算过程。

>> 核心思路步骤:

● 构造具有丰富时空结构的 NCA 轨迹:NCA 通过神经网络参数化更新规则,可生成长程时空模式,并呈现类似自然语言的重尾、Zipf 风格统计特征。

● 按复杂度区间采样训练数据:作者不是简单随机取样,而是根据复杂度带筛选 NCA 轨迹,比较不同压缩率/复杂度对应的迁移效果。

● 先做 NCA 预预训练,再转入自然语言预训练:模型先用 next-token prediction 学习 NCA 序列,再进入 OpenWebText、OpenWebMath、CodeParrot 等自然语言语料的常规预训练。

● 用困惑度、收敛速度和下游 pass@ 评估迁移:论文主要用验证 perplexity、达到最终 perplexity 所需 token 数、以及 GSM8K/HumanEval/BigBench-Lite 的 pass 准确率来衡量效果。

● 分析"什么在驱动迁移":作者通过重置部分权重来判断哪些模块携带迁移信号,并进一步研究数据复杂度与下游任务的匹配关系。

>> 优势:

● 样本效率高:仅用 164M NCA tokens,论文就报告了下游语言建模性能最高提升约 6%,并且加速收敛最高可达 1.6 倍。

● 甚至能超过更多自然语言数据的预预训练:令人意外的是,NCA 预预训练在部分设置下,甚至优于使用 1.6B tokens 的自然语言 C4 预预训练,且计算量还更高。

● 收益可迁移到推理任务:论文报告,这种收益不仅体现在困惑度上,也能转移到 GSM8K、HumanEval、BigBench-Lite 等推理/代码基准。

● 训练更快、更稳定:在多个模型规模和多种语料上,NCA 预预训练都能持续优于 scratch、Dyck 与 C4 基线,并表现出更快的收敛。

● 可做领域定制化设计:因为 NCA 的复杂度可调,作者认为它给了训练分布一个新旋钮,可以按目标域的计算特征去定制合成数据。

>> 结论和观点(侧重经验与建议):

● 迁移成立的关键不是"像不像语言",而是"像不像可学习的结构":作者认为,模型之所以能从 NCA 迁移到语言,是因为 NCA 提供了更纯粹的规则归纳信号,而不是依赖语义内容本身。

● 注意力层是最可迁移的组件:实验显示,重置 attention 权重会带来最大退化,说明 attention 更像通用的依赖追踪与隐式规则推断载体。

● MLP 更偏向存储域特定统计:论文发现,MLP 和 LayerNorm 的迁移效果更依赖源域与目标域是否对齐;当两者差异较大时,保留这些参数甚至可能干扰学习。

● 最优复杂度是"任务依赖"的,不是一刀切:OpenWebText 更偏好更复杂、低可压缩的 NCA 规则,而 CodeParrot 则更适合中等复杂度;这说明合成数据需要按目标域调参,而不是统一使用同一分布。

● 不是数据越多越好,而是匹配得越好越有效:论文明确指出,迁移效果并不随 NCA 数据量单调增长,过高复杂度或不匹配的分布也可能让收益 plateau。

● NCA 更像是"预预训练底座",不是语义学习的终点:作者把这一框架定义为通往"全合成预训练"的早期原型,但也承认最终语义获取仍可能需要有限且精选的自然语言数据。

● 当前仍有边界与开放问题:论文指出,NCA 目前更适合作为 pre-pre-training 信号;要成为自然语言预训练的完整替代,还需要解决更大 alphabet、不同复杂度区间下的性能平台期问题。

目录

[《Training Language Models via Neural Cellular Automata》翻译与解读](#《Training Language Models via Neural Cellular Automata》翻译与解读)

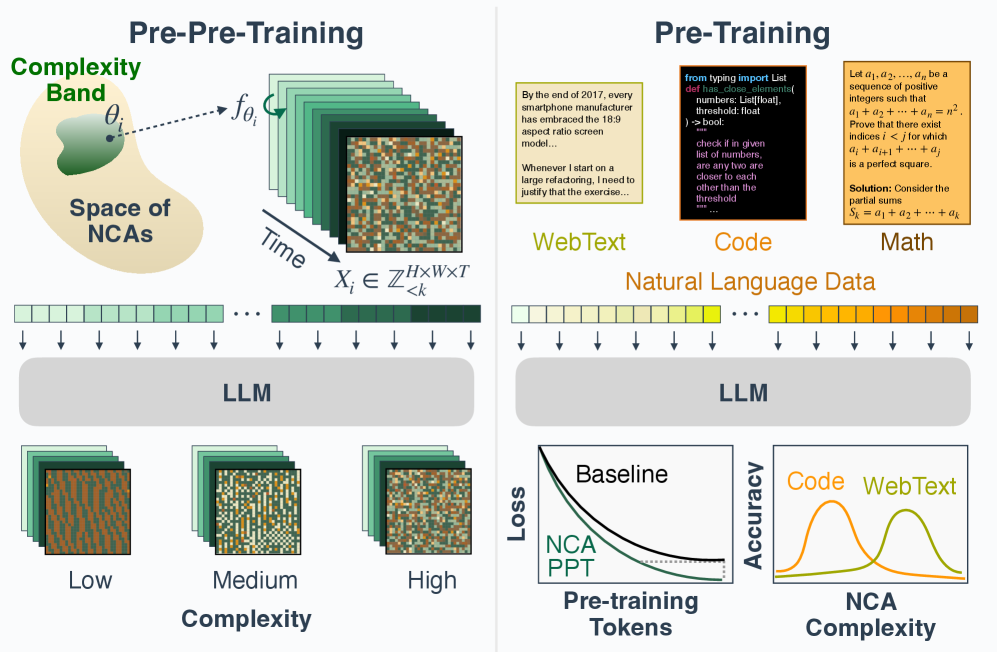

[Figure 1:Overview of NCA Pre-pre-training to Language Pre-training. We pre-pre-train a transformer with next-token prediction on the dynamics of neural cellular automata (NCA) sampled from selected complexity regions. We then conduct standard pre-training on natural language corpora. NCA pre-pre-training improves both validation perplexity and convergence speed on language pre-training. Interestingly, the optimal NCA distribution varies by downstream domain.图 1:NCA 预预训练到语言预训练的概述。我们先在从选定复杂度区域采样的神经元细胞自动机(NCA)动态上对一个变压器进行下一个标记预测的预预训练。然后在自然语言语料库上进行标准预训练。NCA 预预训练提高了语言预训练的验证困惑度和收敛速度。有趣的是,最优的 NCA 分布因下游领域而异。](#Figure 1:Overview of NCA Pre-pre-training to Language Pre-training. We pre-pre-train a transformer with next-token prediction on the dynamics of neural cellular automata (NCA) sampled from selected complexity regions. We then conduct standard pre-training on natural language corpora. NCA pre-pre-training improves both validation perplexity and convergence speed on language pre-training. Interestingly, the optimal NCA distribution varies by downstream domain.图 1:NCA 预预训练到语言预训练的概述。我们先在从选定复杂度区域采样的神经元细胞自动机(NCA)动态上对一个变压器进行下一个标记预测的预预训练。然后在自然语言语料库上进行标准预训练。NCA 预预训练提高了语言预训练的验证困惑度和收敛速度。有趣的是,最优的 NCA 分布因下游领域而异。)

[6 Discussion](#6 Discussion)

[Why should we expect transfer?为何我们应期待迁移?](#Why should we expect transfer?为何我们应期待迁移?)

[Why is 160M tokens of automata better than 1.6B tokens of text?为什么 1.6 亿个自动机标记比 160 亿个文本标记更好?](#Why is 160M tokens of automata better than 1.6B tokens of text?为什么 1.6 亿个自动机标记比 160 亿个文本标记更好?)

[Limitations and open problems局限性和开放性问题](#Limitations and open problems局限性和开放性问题)

《Training Language Models via Neural Cellular Automata》翻译与解读

|------------|--------------------------------------------------------------------------------------------------------------|

| 地址 | 论文地址:https://arxiv.org/abs/2603.10055 |

| 时间 | 2026年03月09日 |

| 作者 | 麻省理工学院(MIT)、Improbable AI实验室(Improbable AI Lab) |

Abstract

|-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Pre-training is crucial for large language models (LLMs), as it is when most representations and capabilities are acquired. However, natural language pre-training has problems: high-quality text is finite, it contains human biases, and it entangles knowledge with reasoning. This raises a fundamental question: is natural language the only path to intelligence? We propose using neural cellular automata (NCA) to generate synthetic, non-linguistic data for pre-pre-training LLMs--training on synthetic-then-natural language. NCA data exhibits rich spatiotemporal structure and statistics resembling natural language while being controllable and cheap to generate at scale. We find that pre-pre-training on only 164M NCA tokens improves downstream language modeling by up to 6% and accelerates convergence by up to 1.6x. Surprisingly, this even outperforms pre-pre-training on 1.6B tokens of natural language from Common Crawl with more compute. These gains also transfer to reasoning benchmarks, including GSM8K, HumanEval, and BigBench-Lite. Investigating what drives transfer, we find that attention layers are the most transferable, and that optimal NCA complexity varies by domain: code benefits from simpler dynamics, while math and web text favor more complex ones. These results enable systematic tuning of the synthetic distribution to target domains. More broadly, our work opens a path toward more efficient models with fully synthetic pre-training. Website: https://hanseungwook.github.io/blog/nca-pre-pre-training/ Code: https://github.com/danihyunlee/nca-pre-pretraining | 对于大型语言模型(LLM)来说,预训练至关重要,因为大多数表示和能力都是在这一阶段获得的。然而,自然语言预训练存在一些问题:高质量文本有限,包含人类偏见,并且将知识与推理混杂在一起。这引发了一个根本性的问题 :自然语言是通向智能的唯一途径吗?我们提出使用神经元细胞自动机(NCA) 生成合成的非语言数据,用于大型语言模型的预预训练------先在合成数据上训练,然后再在自然语言上训练。NCA 数据具有丰富的时空结构和类似于自然语言的统计特性,同时可控且易于大规模生成。我们发现,仅在 1.64 亿个 NCA 令牌上进行预预训练,就能使下游语言建模性能提高多达 6%,并使收敛速度加快多达 1.6 倍。令人惊讶的是,这甚至优于在 16 亿个来自 Common Crawl 的自然语言令牌上进行预预训练,尽管后者使用了更多的计算资源。这些改进也转移到了推理基准测试中,包括 GSM8K、HumanEval 和 BigBench-Lite。在探究驱动迁移的因素时,我们发现注意力层的迁移性最强,并且最优的 NCA 复杂度因领域而异:代码受益于更简单的动态机制,而数学和网络文本则倾向于更复杂的机制。这些结果使得针对目标领域对合成分布进行系统性调整成为可能。更广泛地说,我们的工作为实现具有完全合成预训练的更高效模型开辟了一条道路。 网站:https://hanseungwook.github.io/blog/nca-pre-pre-training/ 代码:https://github.com/danihyunlee/nca-pre-pretraining |

Figure 1:Overview of NCA Pre-pre-training to Language Pre-training. We pre-pre-train a transformer with next-token prediction on the dynamics of neural cellular automata (NCA) sampled from selected complexity regions. We then conduct standard pre-training on natural language corpora. NCA pre-pre-training improves both validation perplexity and convergence speed on language pre-training. Interestingly, the optimal NCA distribution varies by downstream domain.图 1:NCA 预预训练到语言预训练的概述。我们先在从选定复杂度区域采样的神经元细胞自动机(NCA)动态上对一个变压器进行下一个标记预测的预预训练。然后在自然语言语料库上进行标准预训练。NCA 预预训练提高了语言预训练的验证困惑度和收敛速度。有趣的是,最优的 NCA 分布因下游领域而异。

1、Introduction

|---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

| Scale has transformed neural networks, enabling emergent abilities like reasoning (Jaech et al., 2024; Jiang, 2023; Austin et al., 2021) and in-context learning (Brown et al., 2020; Wei et al., 2022; Zhao et al., 2024) in large language models (LLMs). However, neural scaling laws predict that continued improvements require exponentially more data (Kaplan et al., 2020), which is nearing exhaustion by 2028 (Villalobos et al., 2022). Furthermore, natural language inherits many undesirable human biases and needs tedious data curation and cleaning before it is used for training foundation models (Han et al., 2025a; An et al., 2024). This raises a fundamental question: Is natural language the only path to learning useful representations? In this paper, we explore an alternative path to using synthetic data from cellular automata. Our core hypothesis is that the emergence of reasoning and other abilities in LLMs relies on the underlying structure of natural language, rather than its semantics. Text is a lossy record of human cognition and the world it describes, containing diverse kinds of structure, from reasoning traces to procedural instructions (Ribeiro et al., 2023; Ruis et al., 2024; Cheng et al., 2025; Delétang et al., 2024). Next-token prediction on such data pressures models to internalize the latent computational processes that support coherent continuations, fostering key capabilities of intelligence (Delétang et al., 2023; Jiang, 2023). | 规模改变了神经网络,使大型语言模型(LLMs)具备了诸如推理(Jaech 等人,2024 年;Jiang,2023 年;Austin 等人,2021 年)和上下文学习(Brown 等人,2020 年;Wei 等人,2022 年;Zhao 等人,2024 年)等新能力。然而,神经网络规模法则预测,持续改进需要呈指数级增长的数据(Kaplan 等人,2020 年),而到 2028 年,这种数据将接近枯竭(Villalobos 等人,2022 年)。此外,自然语言继承了许多不良的人类偏见,在用于训练基础模型之前,需要进行繁琐的数据整理和清理(Han 等人,2025a;An 等人,2024 年)。这引发了一个根本性的问题:自然语言是学习有用表示的唯一途径吗?在本文中,我们探索了一条使用元胞自动机合成数据的替代路径。 我们的核心假设是,LLMs 中推理和其他能力的出现依赖于自然语言的底层结构,而非其语义。文本是对人类认知及其所描述的世界的一种有损记录,包含多种结构,从推理痕迹到程序指令(Ribeiro 等人,2023 年;Ruis 等人,2024 年;Cheng 等人,2025 年;Delétang 等人,2024 年)。在这样的数据上进行下一个标记预测,迫使模型内化支持连贯延续的潜在计算过程,从而培养出智能的关键能力(Delétang 等人,2023 年;Jiang,2023 年)。 |

| If the key ingredient is exposure to various structures rather than language semantics, then richly structured non-linguistic data could also be effective for teaching models to reason. To investigate this hypothesis, we employ algorithmically generated synthetic data from neural cellular automata (NCA) (Mordvintsev et al., 2020) as a synthetic training substrate. NCA generalize systems like Conway's Game of Life (Gardner, 1970) by replacing fixed dynamics rules with neural networks and can be used to generate diverse data distributions with spatially local rules. This produces long-range spatio-temporal patterns (see Figure 1) of arbitrary sizes that exhibit heavy-tailed, Zipfian token distributions (see Figure 8 in Appendix A) reminiscent of natural data. Crucially, we propose a method to explicitly control the complexity of NCA, enabling systematic tuning of the synthetic data distribution for optimal transfer to downstream domains. Prior work on synthetic pre-training has explored approaches like generating random strings with a recurrent network (Bloem, 2025) and simple algorithmic tasks (Wu et al., 2022; Shinnick et al., 2025a), but they have yet to match or outperform language training under matched token budgets. We hypothesize this is because such synthetic distributions are narrow and homogeneous, lacking certain key properties that characterize natural language. NCAs address this gap. The parametric structure of NCA yields diverse dynamics and allows systematic control over complexity. This enables us to ask not only whether synthetic data can transfer, but what structural properties make it effective. | 如果关键因素是接触各种结构而非语言语义,那么结构丰富的非语言数据也可能有效地教会模型进行推理。为了探究这一假设,我们采用神经元细胞自动机(NCA)(Mordvintsev 等人,2020 年)生成的算法合成数据作为合成训练基质。NCA 通过用神经网络取代固定的动态规则,对诸如康威生命游戏(Gardner,1970 年)之类的系统进行了推广,并且能够生成具有局部空间规则的多种数据分布。这会产生任意大小的长程时空模式(见图 1),其标记分布呈现出重尾、齐普夫分布(见附录 A 中的图 8),与自然数据类似。关键的是,我们提出了一种方法来明确控制 NCA 的复杂性,从而能够系统地调整合成数据的分布,以实现向下游领域的最佳迁移。此前关于合成预训练的工作探索了诸如使用循环网络生成随机字符串(Bloem,2025)以及简单的算法任务(Wu 等人,2022;Shinnick 等人,2025a)等方法,但它们尚未在匹配的标记预算下达到或超越语言训练的效果。我们推测这是因为这些合成分布狭窄且同质,缺乏某些表征自然语言的关键特性。NCA 解决了这一差距。NCA 可以弥补这一空白。NCA 的参数化结构产生了多样的动态变化,并允许对复杂性进行系统控制。这使我们不仅能探究合成数据是否可以迁移,还能探究是什么样的结构特性使其有效。 |

| We adopt a pre-pre-training framework: an initial phase of training on NCA dynamics that precedes standard pre-training on natural language (Hu et al., 2025b). Our ultimate vision is to pre-train entirely on clean synthetic data, followed by fine-tuning on a limited and curated corpora of natural language to acquire semantics (Han et al., 2025a). The pre-pre-training framework serves as an early prototype of this paradigm, allowing us to measure how computational primitives learned from synthetic NCA transfer to language tasks. Our contributions are as follows: 1. A synthetic pre-pre-training substrate that transfers to language and reasoning. We propose neural cellular automata (NCA) as a fully algorithmic, non-linguistic data source for pre-pre-training. NCA pre-pre-training improves downstream language modeling by up to 6% and converges up to 1.6× faster across web text, math, and code. These perplexity gains transfer to reasoning across benchmarks including GSM8K, HumanEval, and BigBench-Lite. Surprisingly, it outperforms pre-pre-training on natural language (C4), even with more data and compute. 2. Synthetic pre-training enables domain-targeted data design. We find that the optimal NCA complexity regime varies by downstream task: code benefits from lower-complexity rules while math and web text benefit from higher-complexity ones. NCAs' parametric structure offers a new lever for efficient training: tuning the complexity of training distributions to match the computational character of target domains. 3. Attention captures the most transferable priors. The attention layers capture the most useful computational primitives, accounting for the majority of the transfer gains. Attention appears to be a universal carrier of transferable capabilities such as long-range dependency tracking and in-context learning, whereas MLPs encode more domain-specific knowledge--making MLP transfer conditional on alignment between the synthetic and target domains. | 我们采用了一种预预训练框架:在标准的自然语言预训练之前,先对 NCA 动态进行初始训练(Hu 等人,2025b)。我们的最终愿景是完全在干净的合成数据上进行预训练,然后在有限且经过精心挑选的自然语言语料库上进行微调以获取语义(Han 等人,2025a)。预预训练框架是这一范式的早期原型,使我们能够衡量从合成 NCA 学习到的计算原语如何迁移到语言任务中。我们的贡献如下: 1. 一种可迁移到语言和推理的合成预预训练基质。我们提出神经细胞自动机(NCA)作为预预训练的完全算法化、非语言的数据源。NCA 预预训练可将下游语言建模性能提高多达 6%,并且在网页文本、数学和代码方面收敛速度提高多达 1.6 倍。这些困惑度的提升在包括 GSM8K、HumanEval 和 BigBench-Lite 在内的多个基准测试中的推理任务上都有所体现。令人惊讶的是,它甚至在使用更多数据和计算资源的情况下,也超过了对自然语言(C4)进行预训练的效果。 2. 合成预训练能够实现针对特定领域的数据设计。我们发现,最优的 NCA 复杂度范围因下游任务而异:代码任务受益于较低复杂度的规则,而数学和网络文本任务则受益于较高复杂度的规则。NCA 的参数化结构为高效训练提供了一个新的杠杆:通过调整训练分布的复杂度来匹配目标领域的计算特性。3.注意力机制捕捉到了最具迁移性的先验知识。注意力层捕获了最有用的计算原语,占据了大部分迁移收益。注意力机制似乎是诸如长程依赖追踪和上下文学习等可迁移能力的通用载体,而多层感知机(MLP)则编码了更多特定领域的知识------这使得 MLP 的迁移依赖于合成域和目标域之间的对齐。 |

6 Discussion

Why should we expect transfer?为何我们应期待迁移?