I. Configure the software repository and install docker-ce

# Set up the software repository using the Alibaba Cloud mirror

[root@docker-node1 ~]# cat > /etc/yum.repos.d/docker.repo << EOF

[docker]

name = docker

baseurl = https://mirrors.aliyun.com/docker-ce/linux/rhel/9.6/x86_64/stable/

gpgcheck = 0

EOF

[root@docker-node1 ~]# dnf makecache

正在更新 Subscription Management 软件仓库。

无法读取客户身份

本系统尚未在权利服务器中注册。可使用 "rhc" 或 "subscription-manager" 进行注册。

docker 7.3 kB/s | 46 kB 00:06

AppStream 3.1 MB/s | 3.2 kB 00:00

BaseOS 2.7 MB/s | 2.7 kB 00:00

元数据缓存已建立。

[root@docker-node1 ~]# dnf search docker

正在更新 Subscription Management 软件仓库。

无法读取客户身份

本系统尚未在权利服务器中注册。可使用 "rhc" 或 "subscription-manager" 进行注册。

上次元数据过期检查:0:00:13 前,执行于 2026年03月14日 星期六 14时55分07秒。

==================================== 名称 和 概况 匹配:docker =====================================

docker-buildx-plugin.x86_64 : Docker Buildx plugin for the Docker CLI

docker-ce-rootless-extras.x86_64 : Rootless support for Docker

docker-compose-plugin.x86_64 : Docker Compose plugin for the Docker CLI

docker-model-plugin.x86_64 : Docker Model Runner plugin for the Docker CLI

pcp-pmda-docker.x86_64 : Performance Co-Pilot (PCP) metrics from the Docker daemon

podman-docker.noarch : Emulate Docker CLI using podman

======================================== 名称 匹配:docker =========================================

docker-ce.x86_64 : The open-source application container engine

docker-ce-cli.x86_64 : The open-source application container engine

[root@docker-node1 ~]# dnf install docker-ce -y

[root@docker-node1 ~]# vim /lib/systemd/system/docker.service

# Enable iptables support by appending --iptables=true to the ExecStart line:

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --iptables=true

[root@docker-node1 ~]# echo br_netfilter > /etc/modules-load.d/docker_mod.conf

[root@docker-node1 ~]# modprobe -a br_netfilter

[root@docker-node1 ~]# cat > /etc/sysctl.d/docker.conf <<EOF

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

[root@docker-node1 ~]# sysctl --system

[root@docker-node1 ~]# systemctl enable --now docker

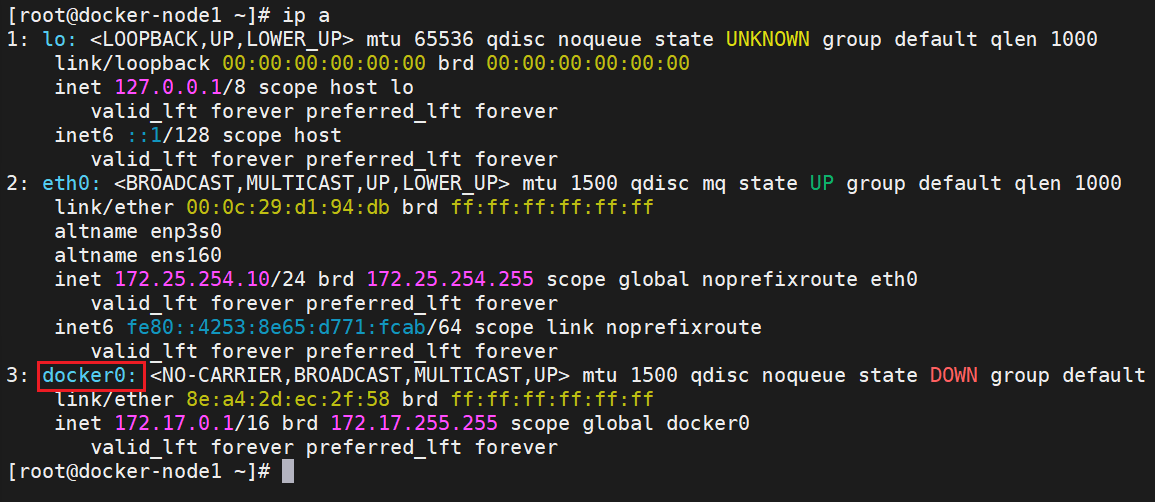

II. Configure a Docker registry mirror

[root@docker-node1 ~]# cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://docker.1ms.run"]

}

EOF

[root@docker-node1 ~]# systemctl restart docker

[root@docker-node1 ~]# docker info

Registry Mirrors:

https://docker.1ms.run/

III. Common Docker commands

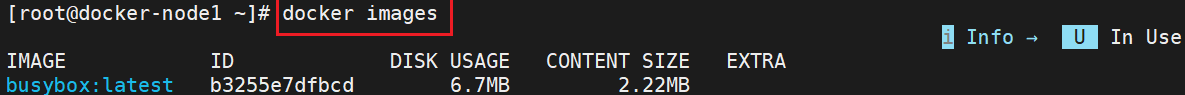

1.镜像查看

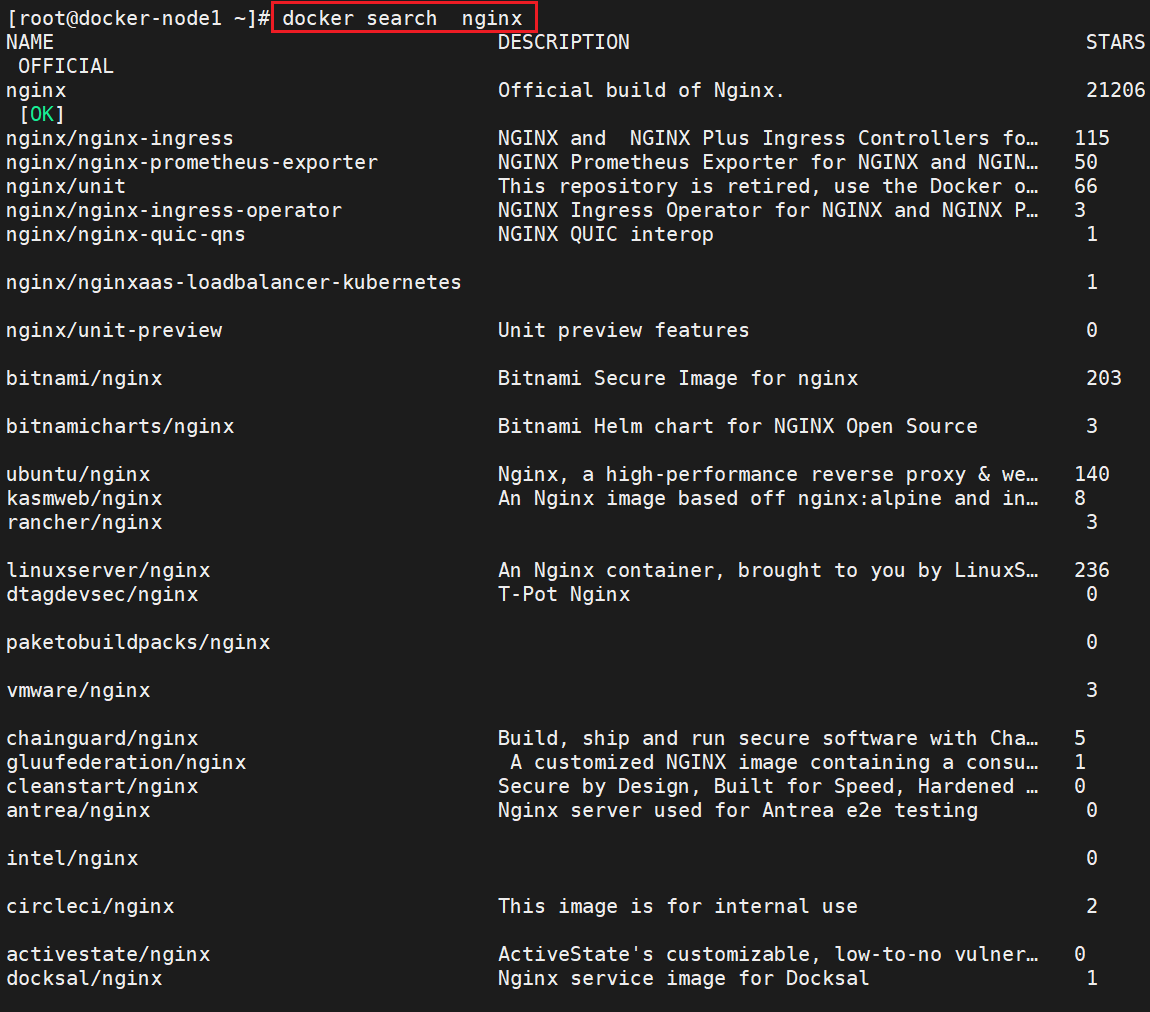

2.搜索镜像

docker search

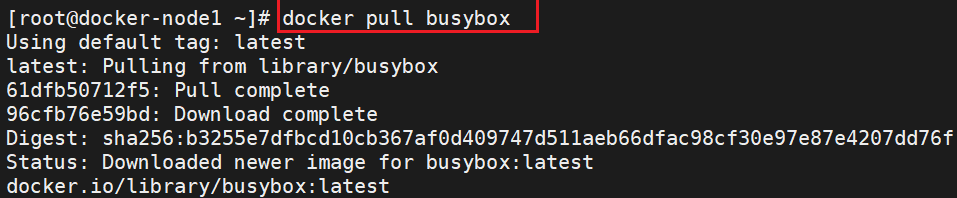

3.下载镜像

docker pull

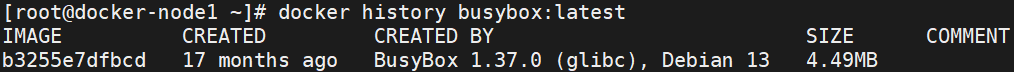

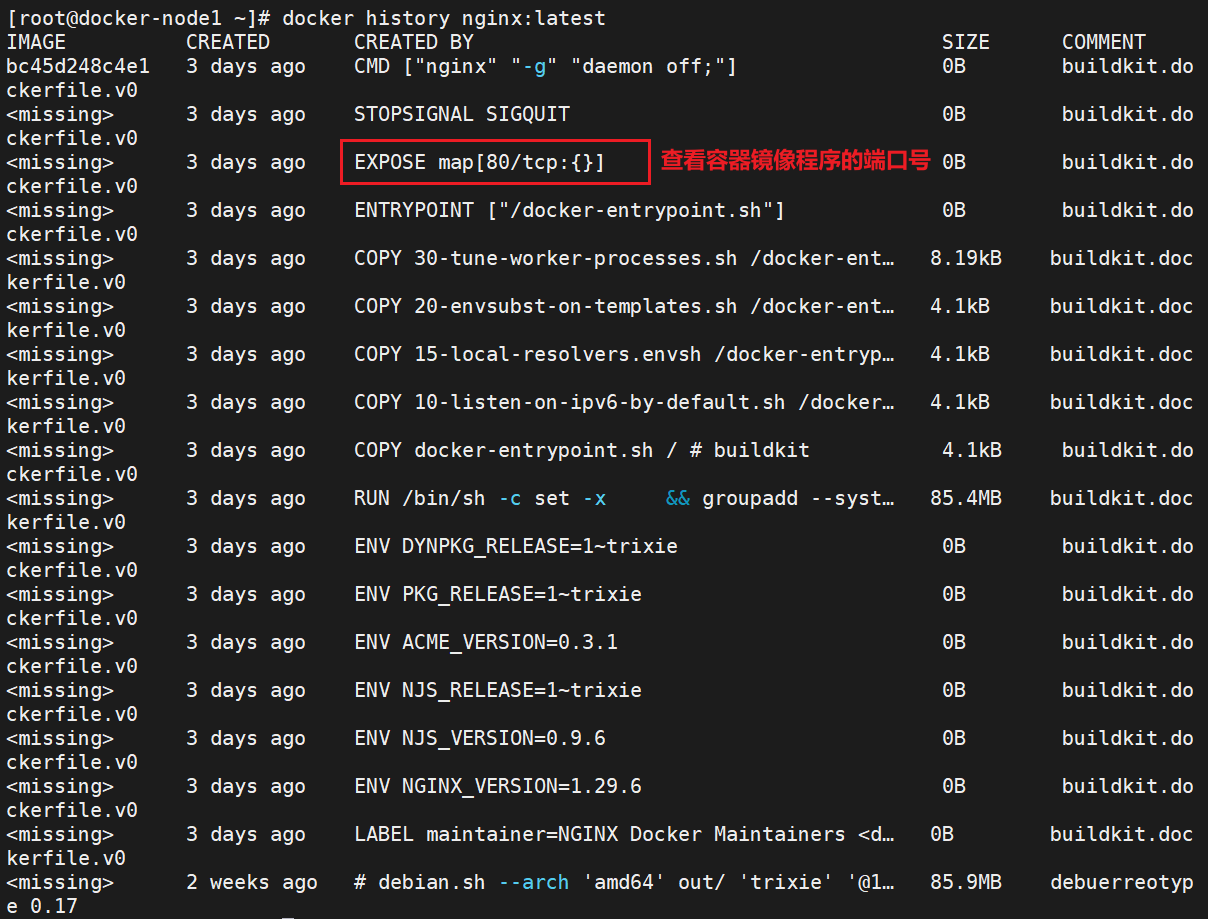

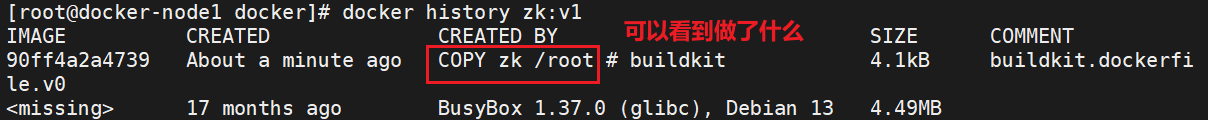

4.查看镜像提交历史

docker history

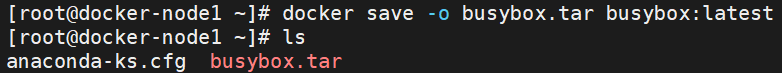

5.导出镜像

docker save

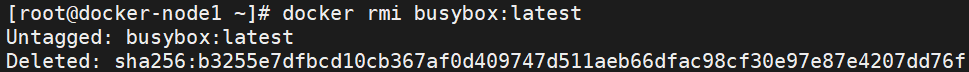

6.删除镜像

docker rmi

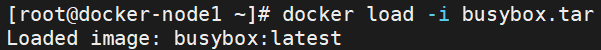

7.导入镜像

docker load

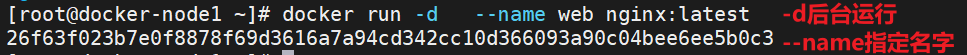

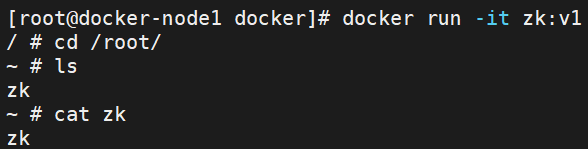

8.运行镜像

docker run

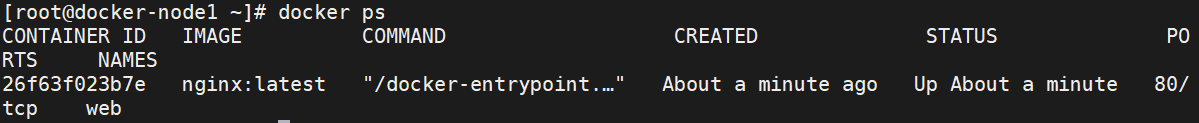

9.查看运行容器

docker ps

10.查看所有容器

docker ps -a

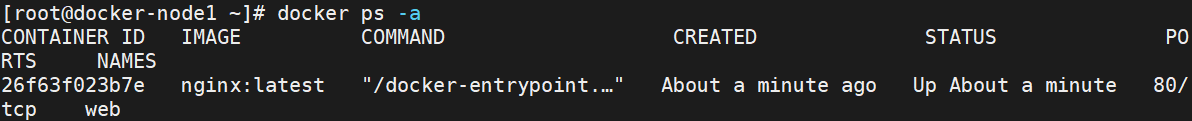

11.交互模式运行容器

交互运行容器默认退出后会停止

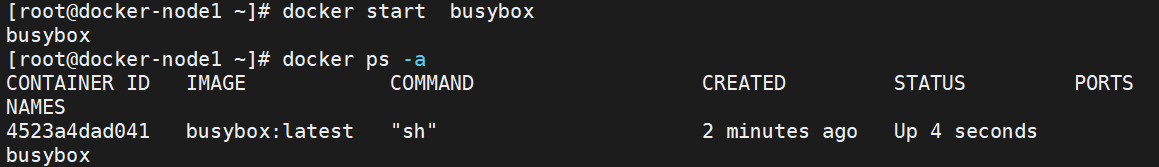

12.运行停止的容器

13.退出交互容器不对其停止

[root@docker-node1 ~]# docker attach busybox

/ # [Ctrl]+[P], then [Ctrl]+[Q] # detach key sequence

[root@docker-node1 ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

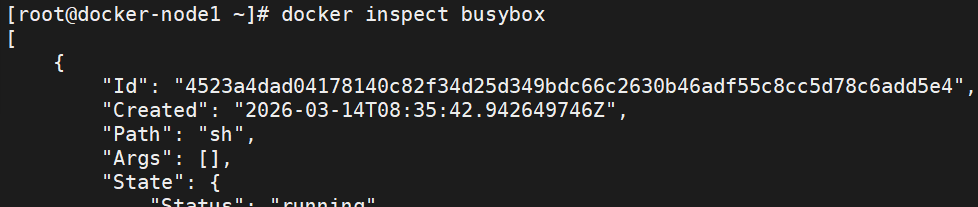

4523a4dad041 busybox:latest "sh" 4 minutes ago Up About a minute busybox14.查看容器信息

docker inspect

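15. Controlling containers

[root@Docker-node1 ~]# docker stop busybox # stop the container

[root@Docker-node1 ~]# docker kill busybox # kill the container; a signal can be specified

[root@Docker-node1 ~]# docker start busybox # start a stopped container

# Run a command inside a running container

[root@docker-node1 ~]# docker exec busybox touch /root/haha # non-interactive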

[root@docker-node1 ~]# docker exec busybox ls /root

file1

file2

haha

[root@docker-node1 ~]# docker exec -it web /bin/bash # interactive

root@f3e369725fab:/#

# Removing containers

[root@docker-node1 ~]# docker rm -f busybox

busybox

[root@docker-node1 ~]# docker stop web

web

[root@docker-node1 ~]# docker rm web

web

# Committing a container's changes to a new image

[root@docker-node1 ~]# docker run -it --name test busybox:latest

/ # touch /root/file

/ # ls /root/

file

Press Ctrl+P, then Ctrl+Q to leave the container while it keeps running

[root@docker-node1 ~]# docker commit -m "add file" test busybox-file:latest

sha256:31a32089d241d025a5a54f144f15319cc6fb55be1b41d049f8905a472d5a028e

[root@docker-node1 ~]# docker images

i Info → U In Use

IMAGE ID DISK USAGE CONTENT SIZE EXTRA

busybox-file:latest 31a32089d241 6.71MB 2.21MB

# Copying files between the host and a container

[root@docker-node1 ~]# docker run -it --name test busybox-file:latest

[root@docker-node1 ~]# docker cp test:/root/file /mnt

Successfully copied 1.54kB to /mnt

[root@docker-node1 ~]# ls /mnt/

file hgfs

[root@docker-node1 ~]# docker cp /etc/passwd test:/root/

Successfully copied 3.07kB to test:/root/

[root@docker-node1 ~]# docker exec test ls /root

file

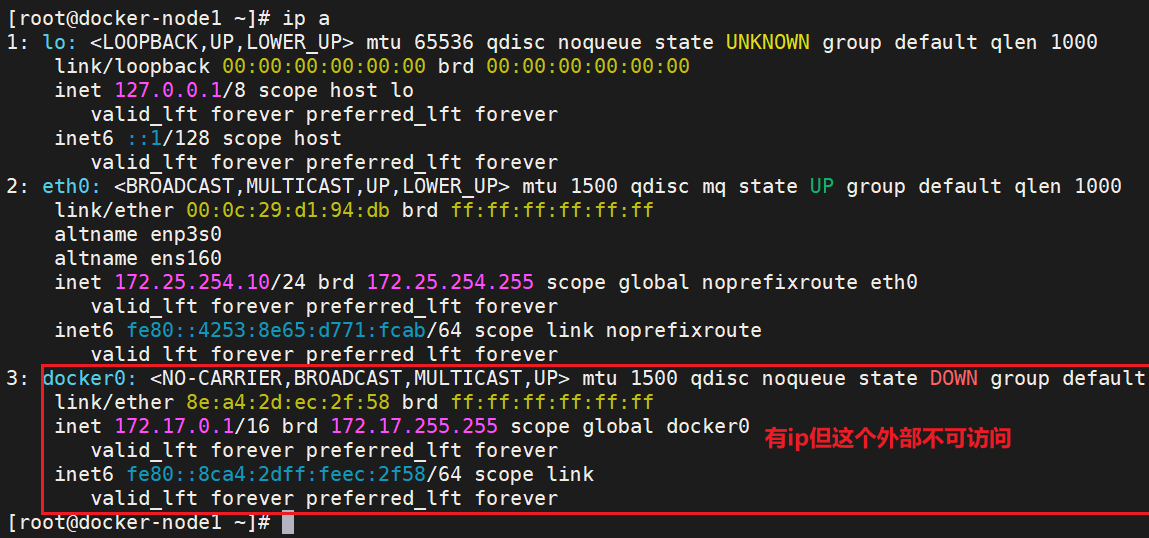

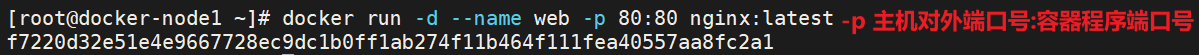

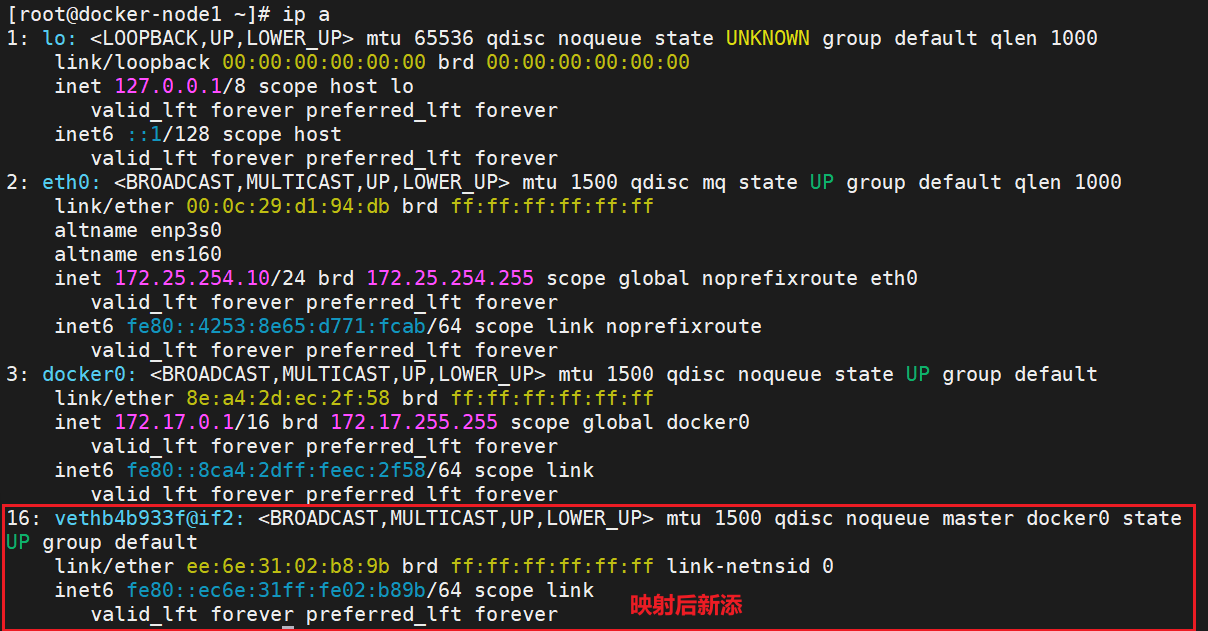

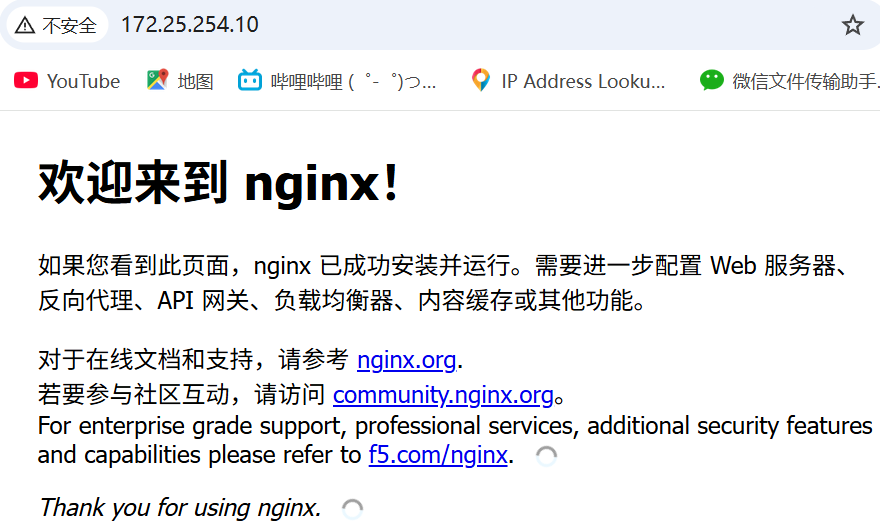

passwd四、端口映射

外部浏览器可以访问到

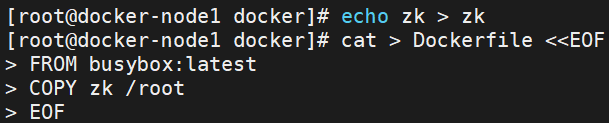

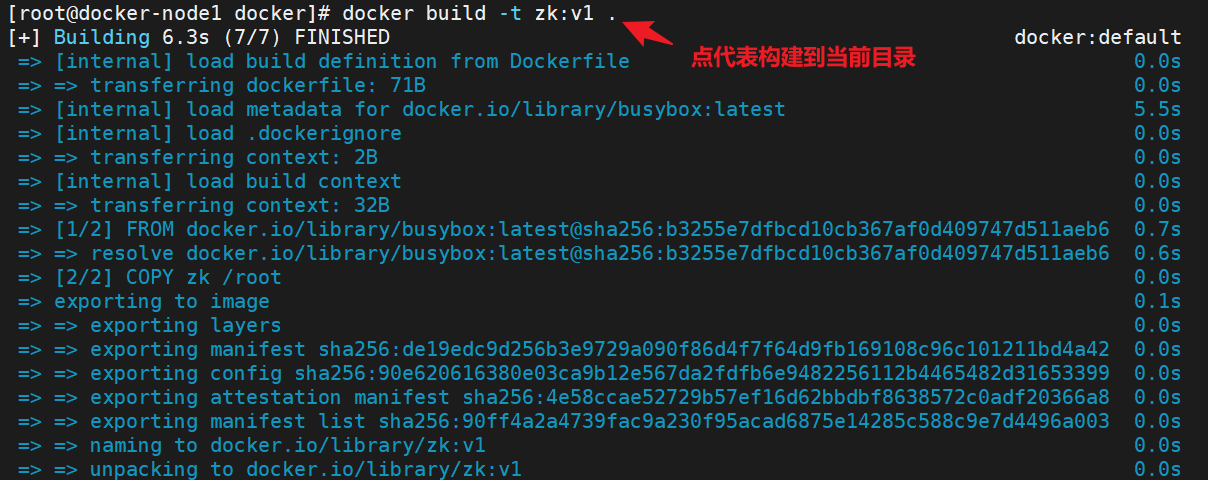

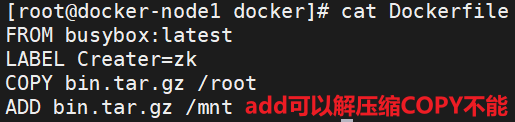

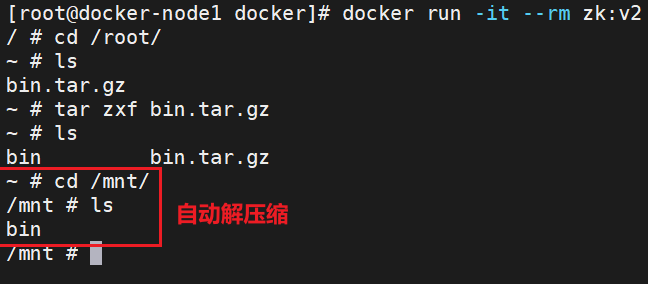

V. Building images

# Create the build directory

# Write the build rule file (Dockerfile)

# Build the image

# Inspect the result
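The four steps above can be sketched as shell functions. This is a minimal sketch, not the exact commands from the screenshots: the function names, the directory, the tag and the file `/root/leefile` are all hypothetical placeholders.

```shell
# Steps 1-2: create the build directory and write the rule file (Dockerfile) into it.
# The Dockerfile contents are illustrative only.
prepare_build_dir() {
    local dir="$1"
    mkdir -p "$dir"
    cat > "$dir/Dockerfile" <<'EOF'
FROM busybox:latest
LABEL Creater=lee
RUN touch /root/leefile
EOF
}

# Step 3: build an image from that directory (needs a running Docker daemon).
build_image() {
    local tag="$1" dir="$2"
    docker build -t "$tag" "$dir"
}

# Step 4: list the image and show the layers the build produced.
show_image() {
    local tag="$1"
    docker images "$tag"
    docker history "$tag"
}
```

For example, `prepare_build_dir /root/docker && build_image lee:v1 /root/docker && show_image lee:v1` would run all four steps in order.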

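Common docker run options:

-d: run the container in the background (detached mode).

--name: give the container a name (easier to manage).

-p <host port>:<container port>: port mapping (publishes the container port on the host port).

-v <host directory>:<container directory>: mount a volume (mounts the host directory into the container).

--rm: remove the container automatically once it stops (handy for testing).

Interactive use: add -it to enter the container and look around inside it

Build instructions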

add

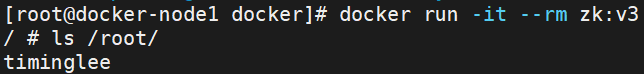

ENV

>>

ENV NAME=timinglee

RUN ["/bin/sh","-c", "touch /root/$NAME" ]

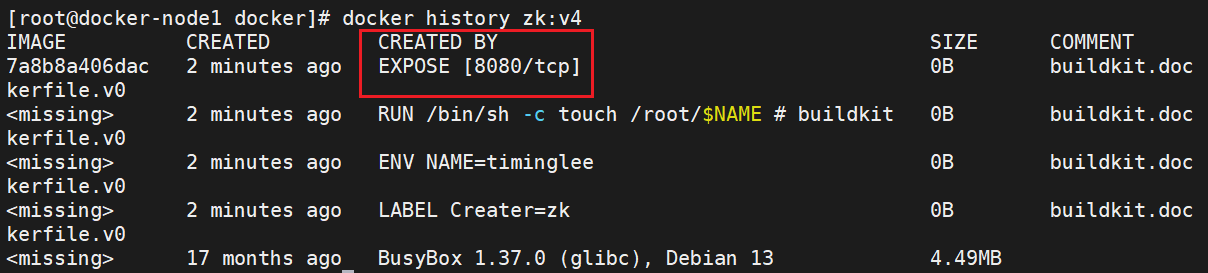

EXPOSE

>>

EXPOSE 8080

#VOLUEM

FROM busybox:latest

LABEL Creater=lee

ENV NAME=timinglee

EXPOSE 8080

VOLUME "/mnt"

RUN ["/bin/sh","-c", "touch /root/$NAME"]

# Test

[root@docker-node1 docker]# docker run -it --name test --rm lee:v6

[root@docker-node1 ~]# docker inspect test | grep -i mounts -A10

"Mounts": [

{

"Type": "volume",

"Name": "951e0ad881eda84a037614657b89cae88adac7c600ac03cd9505c067cee04741",

"Source": "/var/lib/docker/volumes/951e0ad881eda84a037614657b89cae88adac7c600ac03cd9505c067cee04741/_data",

"Destination": "/mnt",

"Driver": "local",

"Mode": "",

"RW": true,

"Propagation": ""

}

[root@docker-node1 ~]# cd "/var/lib/docker/volumes/951e0ad881eda84a037614657b89cae88adac7c600ac03cd9505c067cee04741/_data"

[root@docker-node1 _data]# touch lee{1..5}

# Inside the container

/ # ls /mnt/

lee1 lee2 lee3 lee4 lee5

#WORKDIR

FROM busybox:latest

LABEL Creater=lee

ENV NAME=timinglee

EXPOSE 8080

VOLUME "/mnt"

RUN ["/bin/sh","-c", "touch /root/$NAME"]

WORKDIR "/mnt"

[root@docker-node1 docker]# docker run -it --name test --rm lee:v7

/mnt #

#CMD

#ENV CMD

FROM busybox

MAINTAINER lee@timinglee.org

ENV NAME lee

#CMD echo $NAME

#CMD ["/bin/echo", "$NAME"]

CMD ["/bin/sh", "-c", "/bin/echo $NAME"]

[root@Docker-node1 docker]# docker run -it --rm --name test example:v3

lee

[root@docker-node1 docker]# docker run -it --name test --rm lee:v8 echo haha

haha

#ENTRYPOINT

FROM busybox

MAINTAINER lee@timinglee.org

ENV NAME lee

ENTRYPOINT echo $NAME

[root@Docker-node1 docker]# docker run -it --rm --name test example:v3 sh

lee

[root@docker-node1 docker]# docker run -it --name test --rm lee:v8

timinglee

[root@docker-node1 docker]# docker run -it --name test --rm lee:v8 echo haha

timinglee
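The transcripts above show that arguments given to docker run replace CMD, while a shell-form ENTRYPOINT ignores them. A common way to combine the two is an exec-form ENTRYPOINT with CMD supplying default arguments. The sketch below only writes such a Dockerfile; the function name, the directory and the tag `demo:entry` are hypothetical.

```shell
# Write a Dockerfile in which ENTRYPOINT is the fixed command
# and CMD holds default arguments that docker run can override.
write_entrypoint_demo() {
    local dir="$1"
    mkdir -p "$dir"
    cat > "$dir/Dockerfile" <<'EOF'
FROM busybox
# Exec form: arguments from "docker run IMAGE args..." are appended here.
ENTRYPOINT ["/bin/echo", "hello"]
# Used only when docker run passes no arguments.
CMD ["lee"]
EOF
}
```

After `write_entrypoint_demo /root/entry` and `docker build -t demo:entry /root/entry`, running `docker run --rm demo:entry` prints `hello lee`, and `docker run --rm demo:entry haha` prints `hello haha`.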

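docker images | awk '/\<zk\>/{system("docker rmi "$1)}' # remove the built images in bulk

docker container prune # remove all stopped containers

Image optimization

Optimization strategies

- Choose the slimmest base image

- Reduce the number of image layers

- Clean up intermediate build artifacts

Method 1. Reduce image layers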

[root@server1 docker]# vim Dockerfile

FROM centos:7 AS build

ADD nginx-1.23.3.tar.gz /mnt

WORKDIR /mnt/nginx-1.23.3

RUN yum install -y gcc make pcre-devel openssl-devel && sed -i 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --with-http_ssl_module --with-http_stub_status_module && make && make install && cd .. && rm -fr nginx-1.23.3 && yum clean all

EXPOSE 80

VOLUME ["/usr/local/nginx/html"]

CMD ["/usr/local/nginx/sbin/nginx", "-g", "daemon off;"]

[root@server1 docker]# docker build -t webserver:v2 .

[root@server1 docker]# docker images webserver

REPOSITORY TAG IMAGE ID CREATED SIZE

webserver v2 caf0f80f2332 4 seconds ago 317MB

webserver v1 bfd6774cc216 About an hour ago 494MB

Method 2. Multi-stage build

[root@server1 docker]# vim Dockerfile

FROM centos:7 AS build

ADD nginx-1.23.3.tar.gz /mnt

WORKDIR /mnt/nginx-1.23.3

RUN yum install -y gcc make pcre-devel openssl-devel && sed -i 's/CFLAGS="$CFLAGS -g"/#CFLAGS="$CFLAGS -g"/g' auto/cc/gcc && ./configure --with-http_ssl_module --with-http_stub_status_module && make && make install && cd .. && rm -fr nginx-1.23.3 && yum clean all

FROM centos:7

COPY --from=build /usr/local/nginx /usr/local/nginx

EXPOSE 80

VOLUME ["/usr/local/nginx/html"]

CMD ["/usr/local/nginx/sbin/nginx", "-g", "daemon off;"]

[root@server1 docker]# docker build -t webserver:v3 .

[root@server1 docker]# docker images webserver

REPOSITORY TAG IMAGE ID CREATED SIZE

webserver v3 1ac964f2cefe 29 seconds ago 205MB

webserver v2 caf0f80f2332 3 minutes ago 317MB

webserver v1 bfd6774cc216 About an hour ago 494MB

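# Running in the foreground ties up the shell; use -d to run in the background

docker run -d --name my-nginx -p 80:80 webserver:v3

Method 3. Use the slimmest base image

Use the distroless images provided by Google

Project page:

https://github.com/GoogleContainerTools/distroless

Pull the image:

docker pull gcr.io/distroless/base

Building on the distroless image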

[root@server1 ~]# mkdir new

[root@server1 ~]# cd new/

[root@server1 new]# vim Dockerfile

FROM nginx:1.23 AS base

# https://en.wikipedia.org/wiki/List_of_tz_database_time_zones

ARG TIME_ZONE

RUN mkdir -p /opt/var/cache/nginx && \

cp -a --parents /usr/lib/nginx /opt && \

cp -a --parents /usr/share/nginx /opt && \

cp -a --parents /var/log/nginx /opt && \

cp -aL --parents /var/run /opt && \

cp -a --parents /etc/nginx /opt && \

cp -a --parents /etc/passwd /opt && \

cp -a --parents /etc/group /opt && \

cp -a --parents /usr/sbin/nginx /opt && \

cp -a --parents /usr/sbin/nginx-debug /opt && \

cp -a --parents /lib/x86_64-linux-gnu/ld-* /opt && \

cp -a --parents /usr/lib/x86_64-linux-gnu/libpcre* /opt && \

cp -a --parents /lib/x86_64-linux-gnu/libz.so.* /opt && \

cp -a --parents /lib/x86_64-linux-gnu/libc* /opt && \

cp -a --parents /lib/x86_64-linux-gnu/libdl* /opt && \

cp -a --parents /lib/x86_64-linux-gnu/libpthread* /opt && \

cp -a --parents /lib/x86_64-linux-gnu/libcrypt* /opt && \

cp -a --parents /usr/lib/x86_64-linux-gnu/libssl.so.* /opt && \

cp -a --parents /usr/lib/x86_64-linux-gnu/libcrypto.so.* /opt && \

cp /usr/share/zoneinfo/${TIME_ZONE:-ROC} /opt/etc/localtime

FROM gcr.io/distroless/base-debian11

COPY --from=base /opt /

EXPOSE 80 443

ENTRYPOINT ["nginx", "-g", "daemon off;"]

[root@server1 new]# docker build -t webserver:v4 .

[root@server1 new]# docker images webserver

REPOSITORY TAG IMAGE ID CREATED SIZE

webserver v4 c0c4e1d49f3d 4 seconds ago 34MB

webserver v3 1ac964f2cefe 12 minutes ago 205MB

webserver v2 caf0f80f2332 15 minutes ago 317MB

webserver v1 bfd6774cc216 About an hour ago 494MB

VI. Setting up a private Docker registry

Setting up a simple Registry

[root@docker ~]# docker pull registry

Or: docker load -i registry.tar

Starting the Registry

[root@docker-node1 docker]# docker run -d -p 5000:5000 --restart=always --name registry registry

5e67b13aa1797d449dc639ded6572dacaa1078917625d51bd9b03227eedb72aa

[root@docker-node1 docker]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5e67b13aa179 registry "/entrypoint.sh /etc..." 8 seconds ago Up 7 seconds 0.0.0.0:5000->5000/tcp, [::]:5000->5000/tcp registry

Uploading an image to the registry

# Tag the image to be uploaded

[root@docker ~]# docker tag busybox:latest 172.25.254.10:5000/busybox:latest

# docker push uses HTTPS by default, but no certificate files have been set up for HTTPS yet, so the push fails

[root@docker ~]# docker push 172.25.254.10:5000/busybox:latest

The push refers to repository [172.25.254.10:5000/busybox]

Get "https://172.25.254.10:5000/v2/": dial tcp 172.25.254.10:5000: connect: connection refused

# Allow unencrypted (HTTP) access to the registry

[root@docker ~]# vim /etc/docker/daemon.json

{

"insecure-registries" : ["172.25.254.10:5000"]

}

[root@docker ~]# systemctl restart docker
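Because /etc/docker/daemon.json is a single JSON object, a file that contains only insecure-registries would drop the registry-mirrors entry configured earlier. A minimal sketch that writes both keys together follows. The function name is hypothetical, and the registry address is written as host:port with no scheme, the form used in the Docker documentation.

```shell
# Write a daemon.json holding both the mirror and the insecure registry.
# The path argument is optional so the function can be tried on a scratch file first.
write_daemon_json() {
    local path="${1:-/etc/docker/daemon.json}"
    cat > "$path" <<'EOF'
{
  "registry-mirrors": ["https://docker.1ms.run"],
  "insecure-registries": ["172.25.254.10:5000"]
}
EOF
}
```

After `write_daemon_json` and `systemctl restart docker`, `docker info` should list the address under both "Registry Mirrors" and "Insecure Registries".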

# Push the image

[root@docker ~]# docker push 172.25.254.10:5000/busybox:latest

The push refers to repository [172.25.254.10:5000/busybox]

d51af96cf93e: Pushed

latest: digest: sha256:28e01ab32c9dbcbaae96cf0d5b472f22e231d9e603811857b295e61197e40a9b size: 527

# Check that the image was uploaded

[root@docker ~]# curl 172.25.254.10:5000/v2/_catalog

{"repositories":["busybox"]}

Adding encrypted transport (TLS) to the Registry

# Generate the private key and certificate; -addext sets the subject alternative name (SAN)

mkdir certs

[root@docker ~]# openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout certs/timinglee.org.key \

-addext "subjectAltName = DNS:reg.timinglee.org" \

-x509 -days 365 -out certs/timinglee.org.crt

You are about to be asked to enter information that will be incorporated

into your certificate request.

What you are about to enter is what is called a Distinguished Name or a DN.

There are quite a few fields but you can leave some blank

For some fields there will be a default value,

If you enter '.', the field will be left blank.

-----

Country Name (2 letter code) [XX]:CN

State or Province Name (full name) []:Shaanxi

Locality Name (eg, city) [Default City]:Xi'an

Organization Name (eg, company) [Default Company Ltd]:timinglee

Organizational Unit Name (eg, section) []:docker

Common Name (eg, your name or your server's hostname) []:reg.timinglee.org

Email Address []:admin@timinglee.org

# View the certificate details

[root@docker-node1 ~]# openssl x509 -in certs/timinglee.org.crt -noout -text

# Start the registry with TLS enabled

[root@docker ~]# docker run -d -p 443:443 --restart=always --name registry \

-v /opt/registry:/var/lib/registry \

-v /root/certs:/certs \

-e REGISTRY_HTTP_ADDR=0.0.0.0:443 \

-e REGISTRY_HTTP_TLS_CERTIFICATE=/certs/timinglee.org.crt \

-e REGISTRY_HTTP_TLS_KEY=/certs/timinglee.org.key registry

Test:

[root@docker-node1 docker]# docker push reg.timinglee.org/busybox:latest

Error response from daemon: Get "https://reg.timinglee.org/v2/": tls: failed to verify certificate: x509: certificate signed by unknown authority

# The push fails because the Docker client does not yet trust the registry's self-signed certificate

# Install the certificate on the client

mkdir -p /etc/docker/certs.d/reg.timinglee.org

cp /root/certs/timinglee.org.crt /etc/docker/certs.d/reg.timinglee.org/ca.crt

systemctl restart docker

[root@docker-node1 ~]# docker push 172.25.254.10:5000/busybox:latest

The push refers to repository [172.25.254.10:5000/busybox]

61dfb50712f5: Layer already exists

latest: digest: sha256:70ce0a747f09cd7c09c2d6eaeab69d60adb0398f569296e8c0e844599388ebd6 size: 610

i Info → Not all multiplatform-content is present and only the available single-platform image was pushed

sha256:b3255e7dfbcd10cb367af0d409747d511aeb66dfac98cf30e97e87e4207dd76f -> sha256:70ce0a747f09cd7c09c2d6eaeab69d60adb0398f569296e8c0e844599388ebd6

[root@docker-node1 ~]# curl -k https://172.25.254.10:5000/v2/_catalog

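{"repositories":["busybox"]}

Adding login authentication to the registry

# Install the package that provides the htpasswd tool

[root@docker docker]# dnf install httpd-tools -y

# Create the authentication file

[root@docker ~]# mkdir auth

[root@docker ~]# htpasswd -Bc auth/htpasswd timinglee # -B selects bcrypt hashing; without it htpasswd defaults to MD5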

New password:

Re-type new password:

Adding password for user timinglee

# Start the registry container with authentication enabled

[root@docker ~]# docker run -d -p 443:443 --restart=always --name registry \

-v /opt/registry:/var/lib/registry \

-v /root/certs:/certs \

-e REGISTRY_HTTP_ADDR=0.0.0.0:443 \

-e REGISTRY_HTTP_TLS_CERTIFICATE=/certs/timinglee.org.crt \

-e REGISTRY_HTTP_TLS_KEY=/certs/timinglee.org.key \

-v /root/auth:/auth \

-e "REGISTRY_AUTH=htpasswd" \

-e "REGISTRY_AUTH_HTPASSWD_REALM=Registry Realm" \

-e REGISTRY_AUTH_HTPASSWD_PATH=/auth/htpasswd \

registry

[root@docker-node1 ~]# curl -k https://docker-node1/v2/_catalog -u timinglee:123456

{"repositories":["busybox"]}

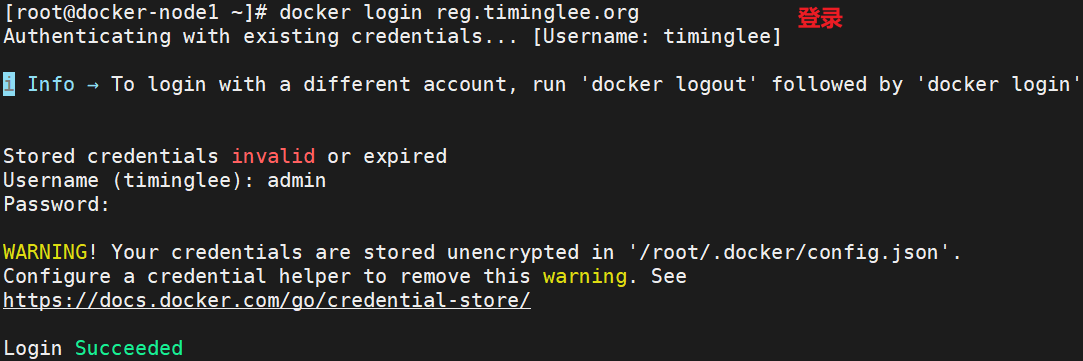

[root@docker-node1 ~]# echo "172.25.254.10 reg.timinglee.org" >> /etc/hosts

[root@docker-node1 ~]# docker login reg.timinglee.org

Username: timinglee

Password:

WARNING! Your credentials are stored unencrypted in '/root/.docker/config.json'.

Configure a credential helper to remove this warning. See

https://docs.docker.com/go/credential-store/

Login Succeeded

Once authentication is enabled, you must log in to the registry before you can push images

# Pushing an image without logging in

[root@docker ~]# docker push reg.timinglee.org/busybox

Using default tag: latest

The push refers to repository [reg.timinglee.org/busybox]

d51af96cf93e: Preparing

no basic auth credentials

#未登陆情况下也不能下载

[root@docker-node2 ~]# docker pull reg.timinglee.org/busybox

Using default tag: latest

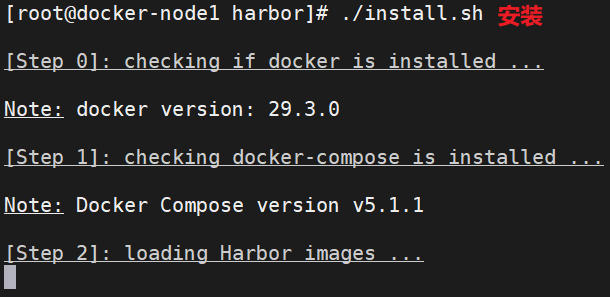

Error response from daemon: Head "https://reg.timinglee.org/v2/busybox/manifests/latest": no basic auth credentials建企业级私有仓库harbor

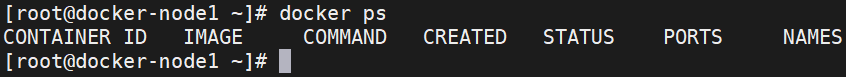

先把运行的容器清理

新版本docker不兼容,换老版本

dnf remove docker-ce -y

dnf install docker-ce-3:28.5.2-1.el9 -y

systemctl enable --now docker

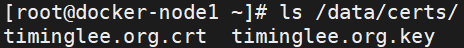

# Copy the certificates generated earlier

mkdir -p /data

cp -rp certs/ /data/

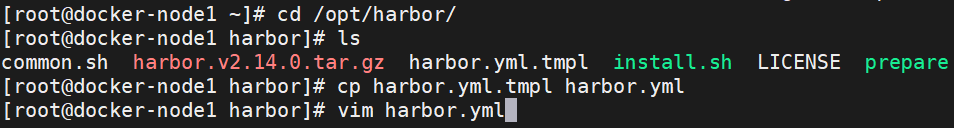

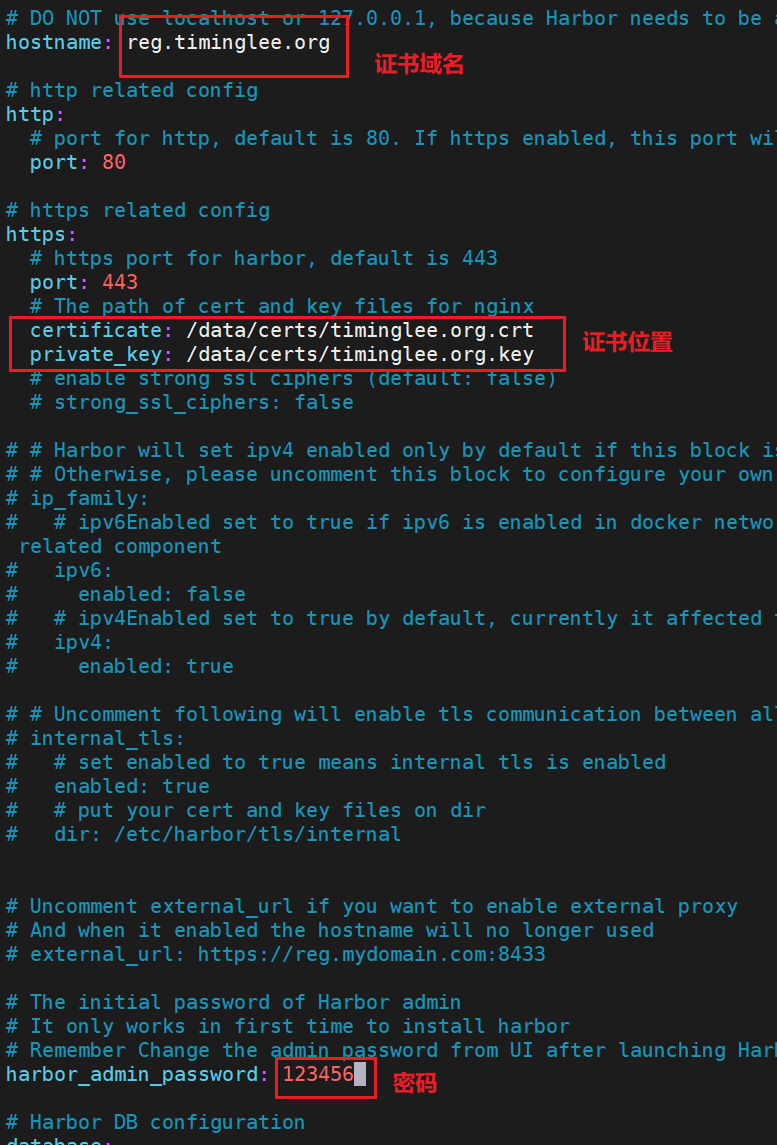

Extract the Harbor package into /opt/

Copy the configuration template and edit it

# Managing the Harbor containers

[root@docker harbor]# docker compose stop

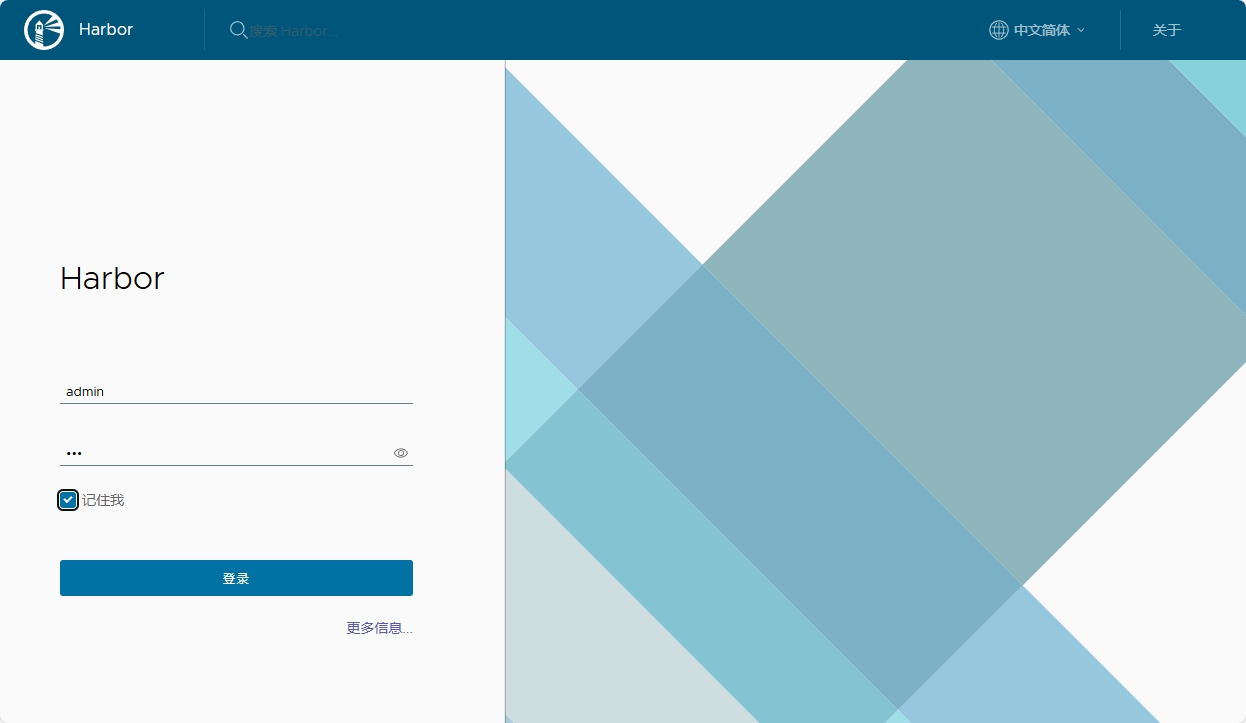

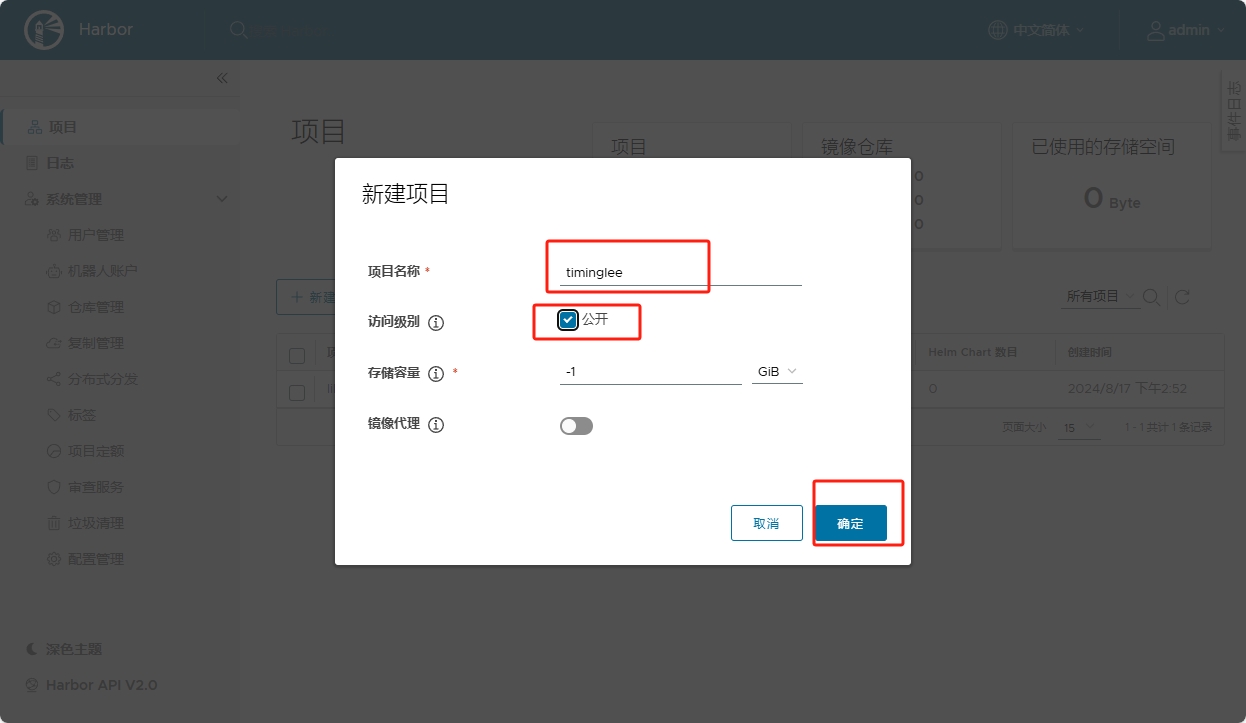

[root@docker harbor]# docker compose up -d1.登陆

2.建立仓库项目

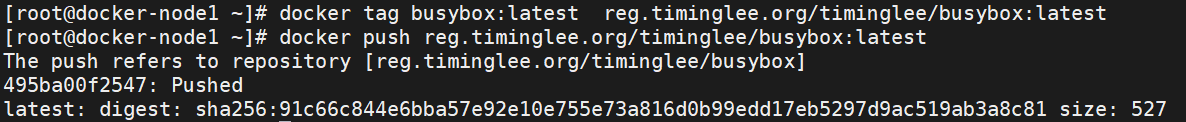

上传镜像

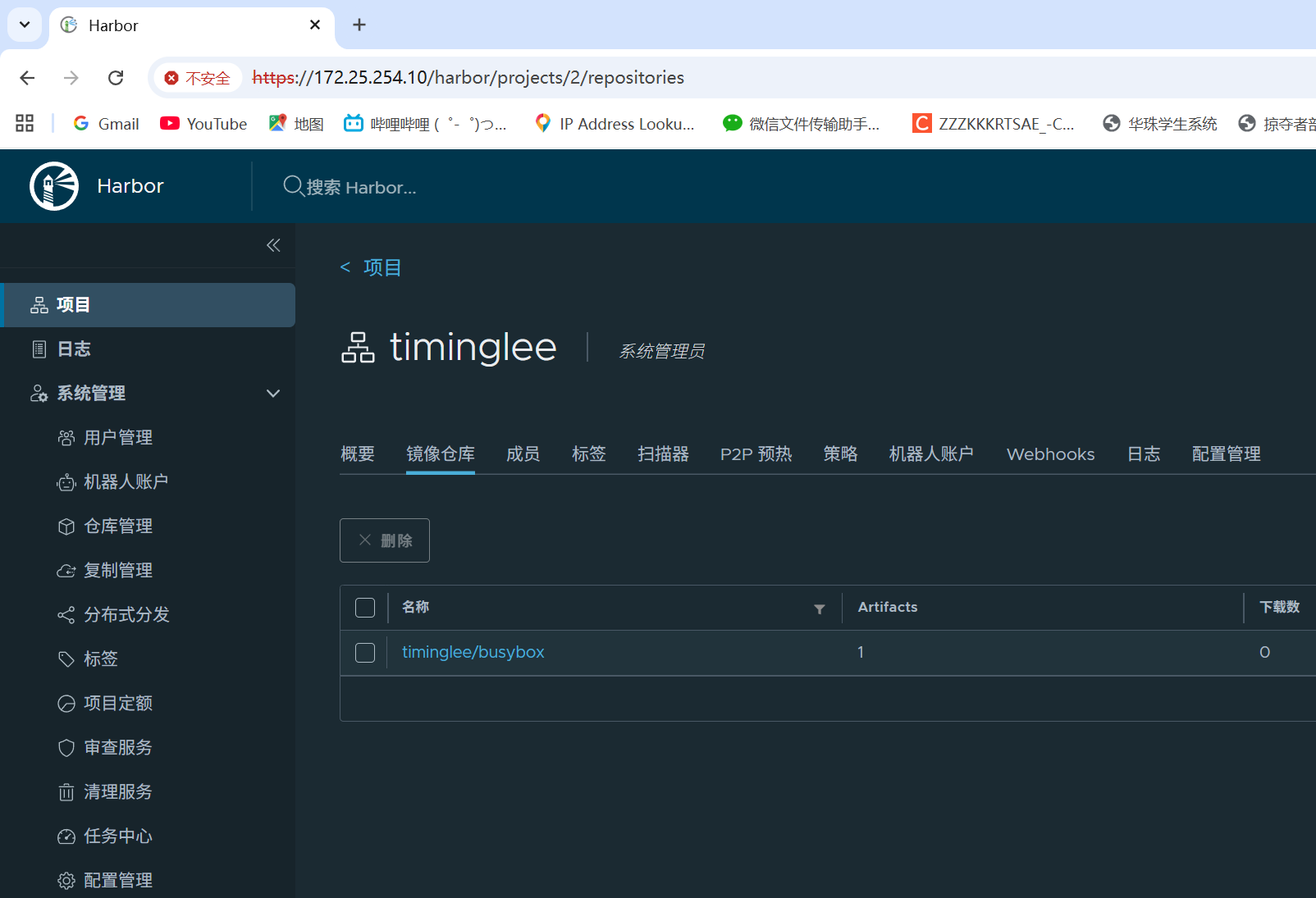

查看上传的镜像

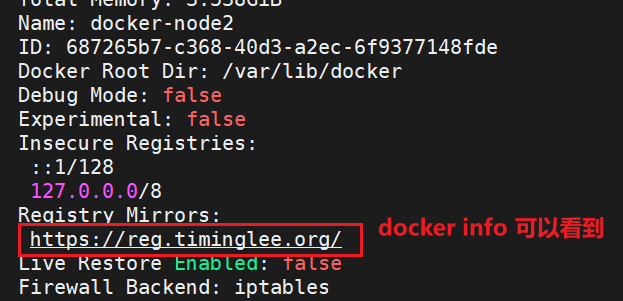

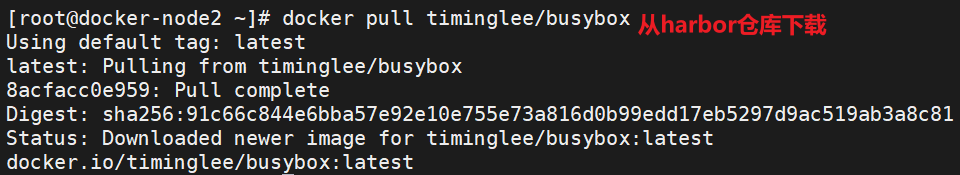

其他主机拉取harbor中的镜像

#配置证书

[root@docker-node2 ~]# mkdir -p /etc/docker/certs.d/reg.timinglee.org

[root@docker-node1 ~]# scp /root/certs/timinglee.org.crt 172.25.254.20:/etc/docker/certs.d/reg.timinglee.org/ca.crt

# Point the registry mirror at the Harbor server

[root@docker-node2 docker]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://reg.timinglee.org"]

}

# Configure name resolution

[root@docker-node2 docker]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.20 docker-node2

172.25.254.10 reg.timinglee.org

[root@docker-node2 docker]# systemctl restart docker

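VII. Docker networking

Custom bridge networks

The Docker engine assigns container IPs in start order: whichever container starts first takes the next free address, so a container's address can change between runs

Reaching other containers by IP is therefore unreliable; containers normally address each other by container name, which is stable

Docker's default bridge network does not resolve container names, whereas user-defined networks have an embedded DNS server, so create a custom bridge network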

[root@docker-node1 ~]# docker network create my_net1

[root@docker-node1 ~]# docker run -d --network my_net1 --name web nginx:1.23

[root@docker-node1 ~]# docker run -it --network my_net1 --name test busybox

/ # ping web

PING web (172.19.0.2): 56 data bytes

64 bytes from 172.19.0.2: seq=0 ttl=64 time=0.163 ms

64 bytes from 172.19.0.2: seq=1 ttl=64 time=0.228 ms

64 bytes from 172.19.0.2: seq=2 ttl=64 time=0.173 ms

Connecting different custom networks

[root@docker-node1 ~]# docker network create my_net2

[root@docker-node1 ~]# docker run -d --name web1 --network my_net1 nginx:1.23

1ade478eadfe9a7b39f525e11902ffa6f7493367138512bf744aa4736986f73f

[root@docker-node1 ~]# docker run -it --name test --network my_net2 busybox:latest

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 22:52:DD:1B:E0:3A

inet addr:172.20.0.2 Bcast:172.20.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:11 errors:0 dropped:0 overruns:0 frame:0

TX packets:3 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:1042 (1.0 KiB) TX bytes:126 (126.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # ping 172.19.0.2

PING 172.19.0.2 (172.19.0.2): 56 data bytes

(no reply: the ping fails)

# Connect the test container to my_net1; it gains a second interface, eth1

[root@docker-node1 ~]# docker network connect my_net1 test

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 22:52:DD:1B:E0:3A

inet addr:172.20.0.2 Bcast:172.20.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:19 errors:0 dropped:0 overruns:0 frame:0

TX packets:79 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:1546 (1.5 KiB) TX bytes:7462 (7.2 KiB)

eth1 Link encap:Ethernet HWaddr EA:52:69:48:43:B7

inet addr:172.19.0.3 Bcast:172.19.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:9 errors:0 dropped:0 overruns:0 frame:0

TX packets:3 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:726 (726.0 B) TX bytes:126 (126.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # ping 172.19.0.2

PING 172.19.0.2 (172.19.0.2): 56 data bytes

64 bytes from 172.19.0.2: seq=0 ttl=64 time=0.259 ms

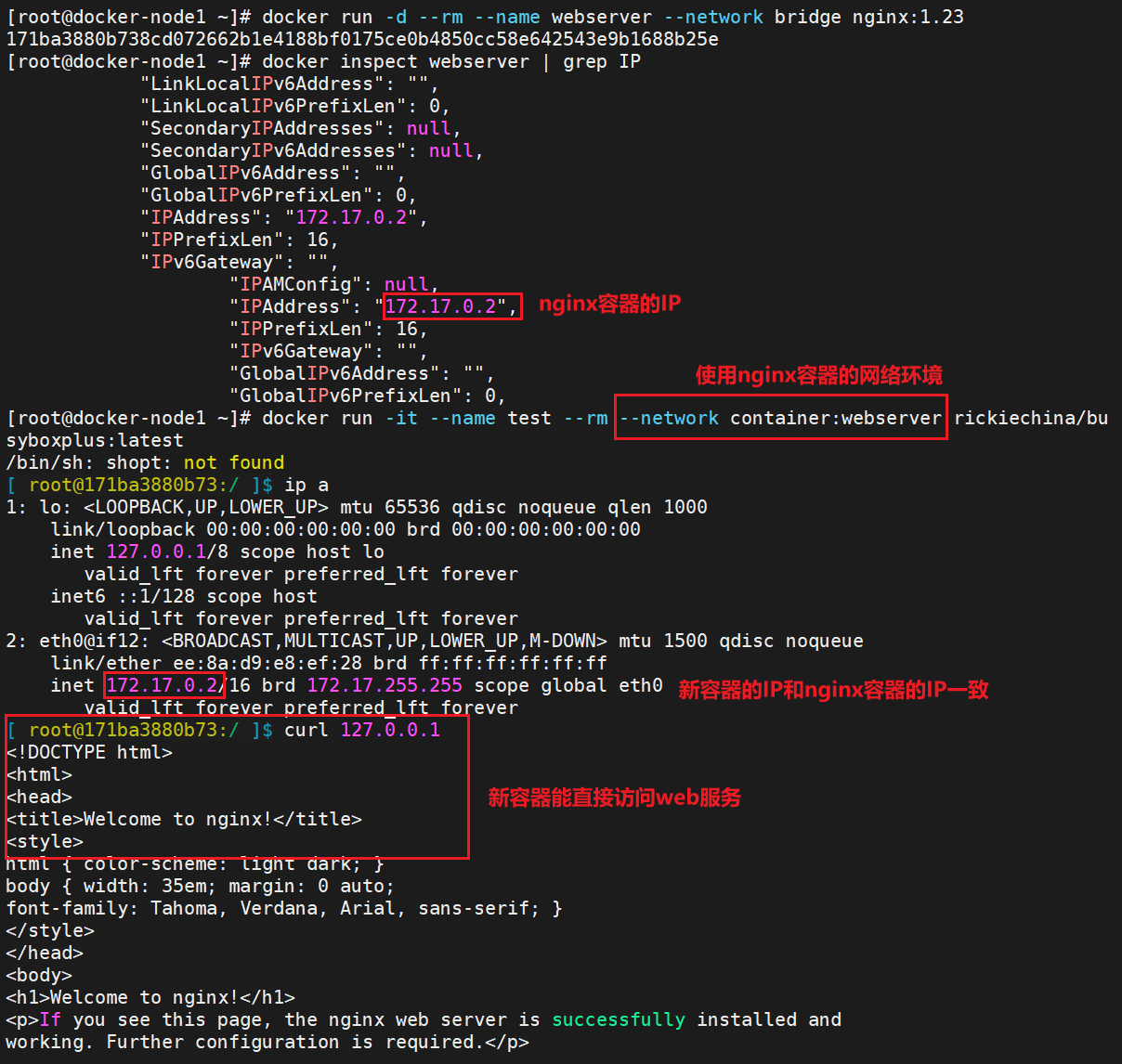

64 bytes from 172.19.0.2: seq=1 ttl=64 time=0.102 msjoined容器网络

Joined容器一种较为特别的网络模式,•在容器创建时使用**--network container:容器名**指定。

处于这个模式下的 Docker 容器会共享一个网络栈,这样两个容器之间可以使用localhost高效快速通信。

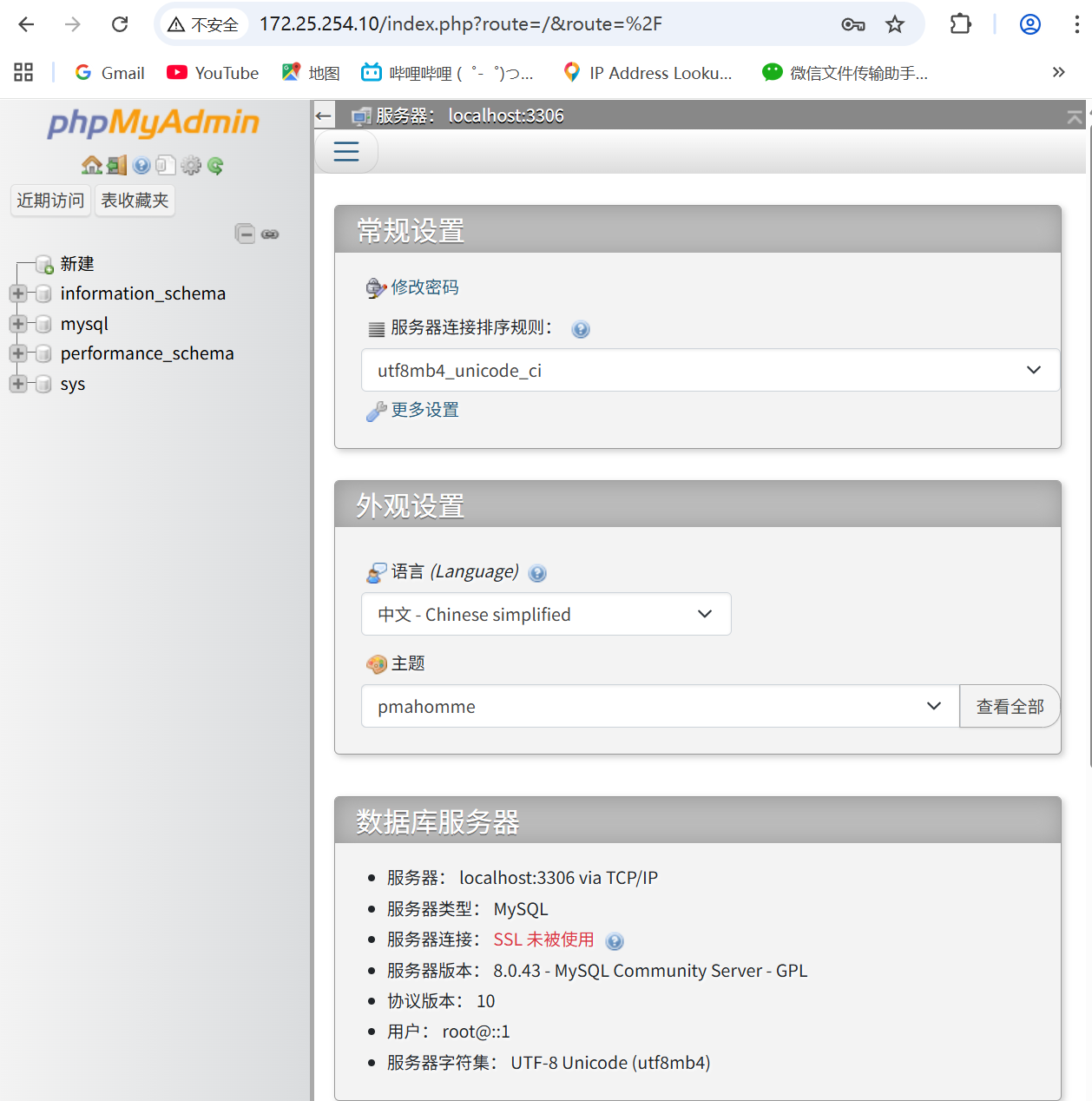

joined网络企业实战

#导入镜像

[root@docker-node1 ~]# docker load -i phpmyadmin-latest.tar.gz

[root@docker-node1 ~]# docker load -i mysql-8.0.tar

# Run the containers

[root@docker-node1 ~]# docker run -d --name phpmyadmin -e PMA_ARBITRARY=1 -p 80:80 phpmyadmin:latest

[root@docker-node1 ~]# docker run -d --name mysql -e MYSQL_ROOT_PASSWORD='lee' --network container:phpmyadmin mysql:8.0

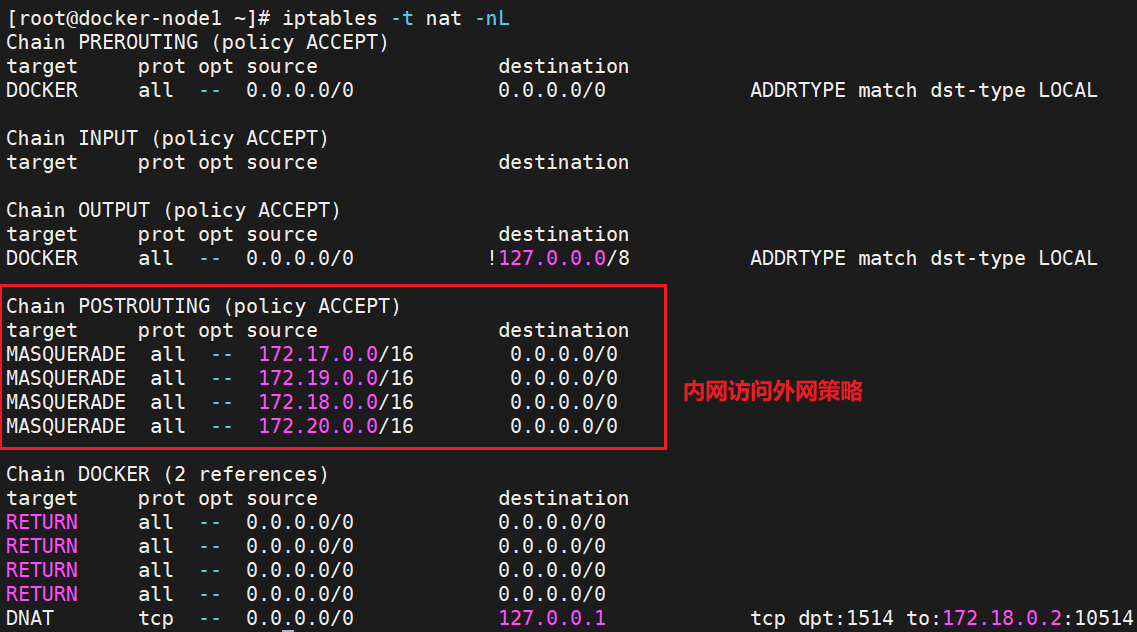

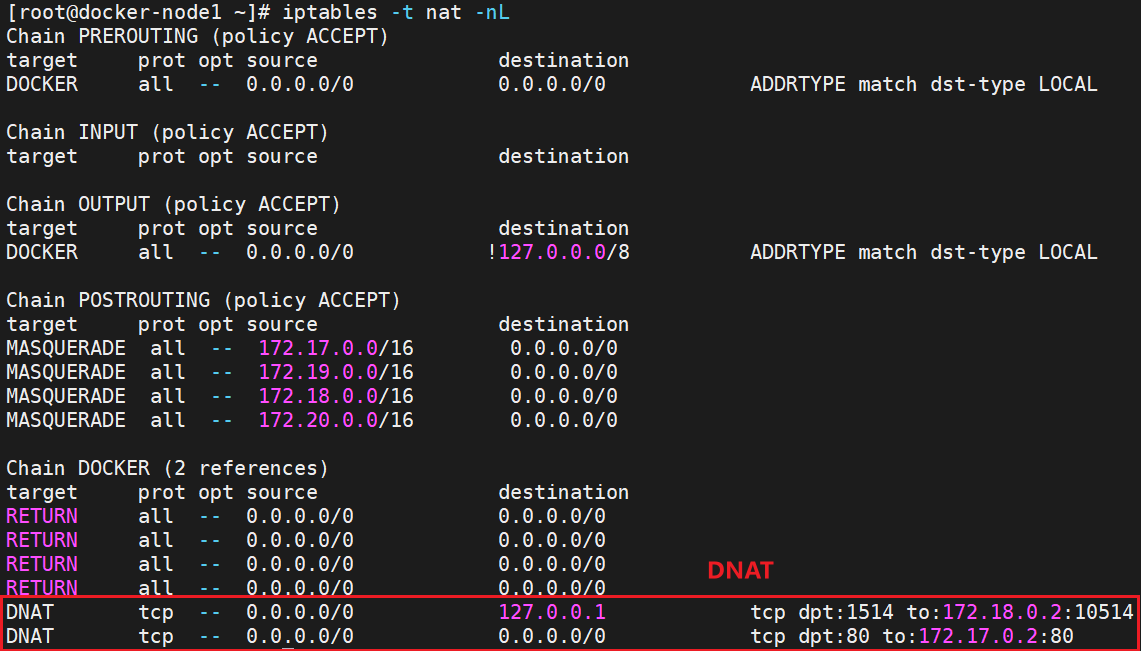

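Traffic between containers and external networks

| Property | PREROUTING chain | POSTROUTING chain |
|---|---|---|
| Processing stage | As the packet enters the system, before the routing decision | As the packet is about to leave the system, after the routing decision |
| Main use | Destination address translation (DNAT) | Source address translation (SNAT) |
| What is modified | The packet's destination IP address/port | The packet's source IP address/port |
| Scope | All traffic entering the host (whether destined for the host or forwarded) | All traffic leaving the host (whether locally generated or forwarded) |
| Typical applications | Port mapping, load balancing, forwarding public traffic to internal servers | Shared Internet access for an internal network, hiding internal IP addresses, source masquerading |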

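Container access to external networks

- On RHEL 7, Docker adds an address masquerading rule through iptables so that containers can reach external networks

- On releases after RHEL 7, the masquerading rule is added through nftables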

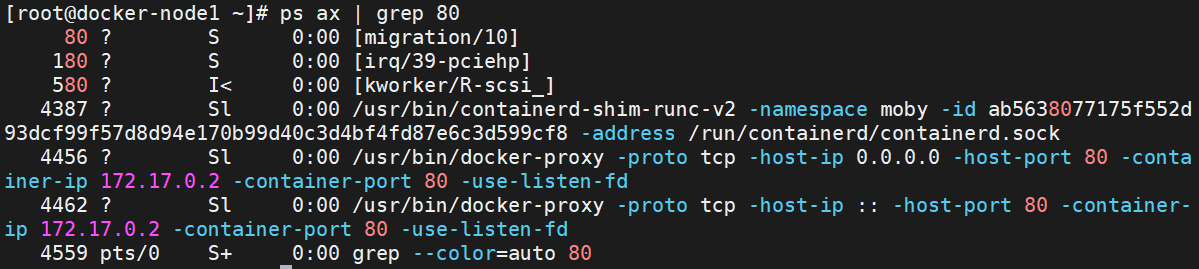

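External access to Docker containers

① docker-proxy relays incoming packets to the container

docker run -d --name webserver -p 80:80 nginx:1.23

② A DNAT (destination address translation) rule forwards incoming traffic to the container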

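Docker cross-host communication

Cross-host communication with macvlan networks

The macvlan driver

- A NIC virtualization technology provided by the Linux kernel.

- Needs no Linux bridge and uses the physical interface directly, so performance is very good

- Container interfaces attach directly to the host NIC; no NAT or port mapping is needed.

- A macvlan network takes exclusive use of the host NIC, but VLAN sub-interfaces allow several macvlan networks on one NIC

- VLANs divide a physical layer-2 network into up to 4094 logical networks that are isolated from each other; VLAN IDs range from 1 to 4094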

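Isolation and connectivity between macvlan networks

- macvlan networks are isolated at layer 2, so containers on different macvlan networks cannot communicate

- macvlan networks can be connected at layer 3 through a gateway

- Docker itself imposes no restrictions; manage them as you would traditional VLAN networks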
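The VLAN sub-interface approach mentioned above can be sketched as follows. When the parent name contains a dot (for example eth1.10), Docker treats it as an 802.1Q sub-interface of eth1 and creates it if needed. The function name, VLAN IDs, subnets and network names are hypothetical, and the `DOCKER` variable is only there so the command can be dry-run with `DOCKER=echo`.

```shell
# Allow a dry run by overriding the docker binary, e.g. DOCKER=echo.
DOCKER="${DOCKER:-docker}"

# Create one macvlan network per VLAN ID on the same physical parent NIC.
# Arguments: parent NIC, VLAN ID, subnet, gateway, network name.
create_vlan_macvlan() {
    local parent="$1" vlan_id="$2" subnet="$3" gateway="$4" name="$5"
    "$DOCKER" network create -d macvlan \
        --subnet "$subnet" --gateway "$gateway" \
        -o parent="${parent}.${vlan_id}" "$name"
}
```

For instance, `create_vlan_macvlan eth1 10 172.16.10.0/24 172.16.10.1 vlan10` and `create_vlan_macvlan eth1 20 172.16.20.0/24 172.16.20.1 vlan20` would give two mutually isolated macvlan networks on one NIC.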

The setup is as follows:

# On both hosts, add a host-only NIC and enable promiscuous mode on it:

[root@docker-node1~2 ~]# ip link set eth1 promisc on

[root@docker-node1~2 ~]# ip link set up eth1

[root@docker-node1~2 ~]# ifconfig eth1

eth1: flags=4419<UP,BROADCAST,RUNNING,PROMISC,MULTICAST> mtu 1500

ether 00:0c:29:d1:94:e5 txqueuelen 1000 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

# Create the same Docker macvlan network on both hosts

[root@docker-node1~2 ~]# docker network create \

-d macvlan \

--subnet 1.1.1.0/24 \

--gateway 1.1.1.1 \

-o parent=eth1 lee

# Test whether containers on different hosts can communicate

[root@docker-node1 ~]# docker run -it --name busybox --rm --network lee --ip 1.1.1.100 busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

9: eth0@if3: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 8a:5f:00:39:70:0e brd ff:ff:ff:ff:ff:ff

inet 1.1.1.100/24 brd 1.1.1.255 scope global eth0

valid_lft forever preferred_lft forever

/ #

[root@docker-node2 ~]# docker run -it --name busybox --rm --network lee --ip 1.1.1.200 busybox

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

5: eth0@if3: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether da:94:b4:d9:b6:0f brd ff:ff:ff:ff:ff:ff

inet 1.1.1.200/24 brd 1.1.1.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ping 1.1.1.100 # from the container on node2, ping the container running on node1

PING 1.1.1.100 (1.1.1.100): 56 data bytes

64 bytes from 1.1.1.100: seq=0 ttl=64 time=1.304 ms

64 bytes from 1.1.1.100: seq=1 ttl=64 time=1.196 ms

64 bytes from 1.1.1.100: seq=2 ttl=64 time=1.066 ms

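64 bytes from 1.1.1.100: seq=3 ttl=64 time=1.423 ms

| Network mode | Characteristics |
|---|---|
| Bridge | Default mode, uses NAT |
| Host | Shares the host's network stack |
| Macvlan | Own MAC/IP, attached directly to the physical network |
| Overlay | Cross-host communication |
| None | No networking |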

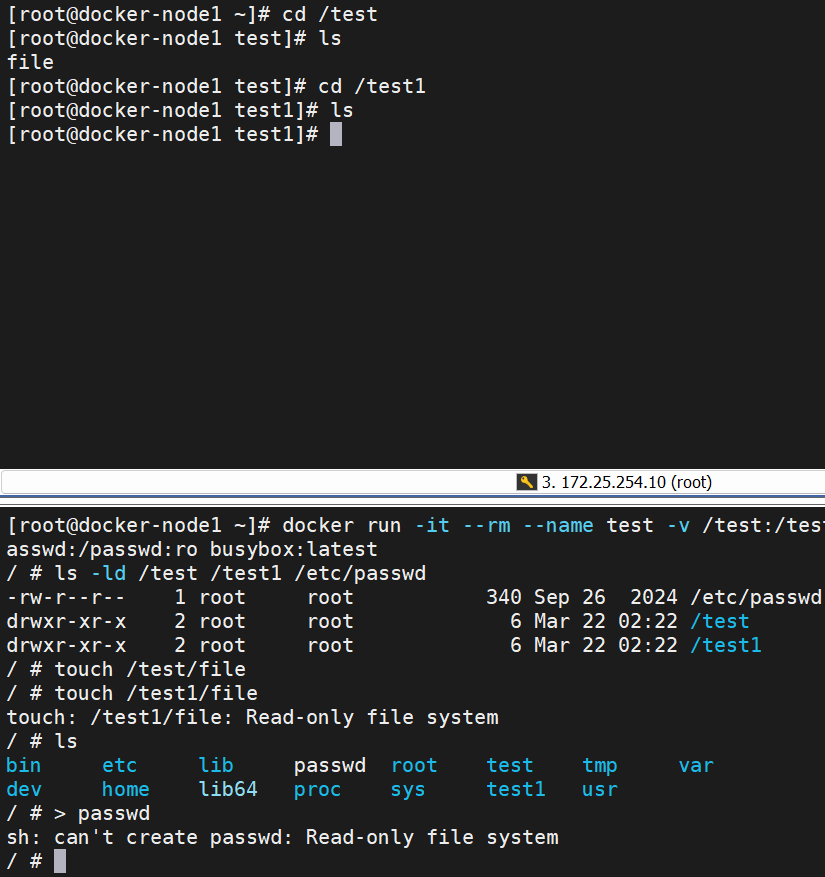

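VIII. Docker data volumes

bind mount volumes

- Mounts a directory or file from the host into the container.

- Use the -v option to give the paths, in the form <host path>:<container path>

- If a path given to -v does not exist, it is created automatically at mount time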

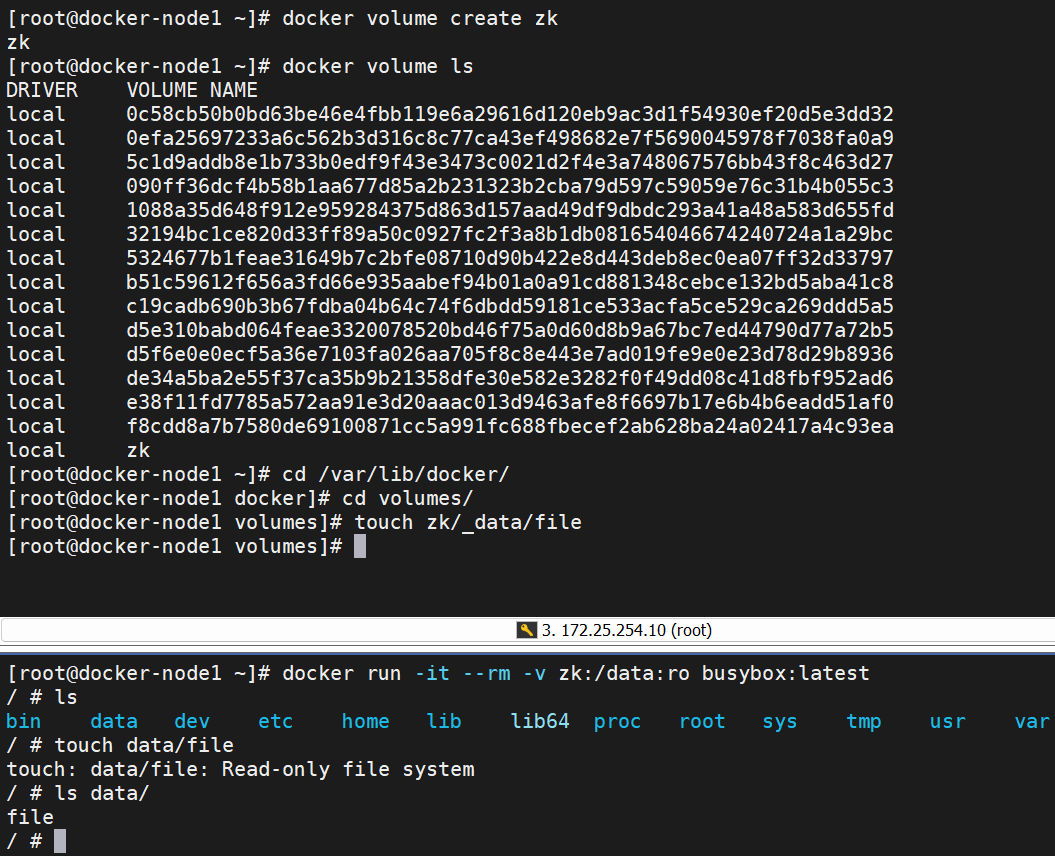

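docker managed volumes

- A bind mount must name a path on the host file system, which limits portability

- A docker managed volume needs no mount source; Docker creates the volume directory for the container automatically

- By default, volume directories are created under /var/lib/docker/volumes

- If the mount point is an existing directory in the container, its existing data is copied into the volume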
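The difference between the two volume types can be sketched with the commands below. This is an illustration under assumptions: the container names web-bind and web-vol, the function names, and the nginx document root as mount point are not from the original notes, and `DOCKER` exists only so the commands can be dry-run with `DOCKER=echo`.

```shell
# Allow a dry run by overriding the docker binary, e.g. DOCKER=echo.
DOCKER="${DOCKER:-docker}"

# Bind mount: the host path is given explicitly on the left of the colon.
run_with_bind_mount() {
    local host_dir="$1"
    "$DOCKER" run -d --name web-bind -v "$host_dir":/usr/share/nginx/html nginx:1.23
}

# Managed volume: only a volume name is given; Docker creates the volume
# (by default under /var/lib/docker/volumes) if it does not exist yet.
run_with_managed_volume() {
    local volume="$1"
    "$DOCKER" run -d --name web-vol -v "$volume":/usr/share/nginx/html nginx:1.23
}

# Print the host directory that backs a managed volume.
volume_path() {
    "$DOCKER" volume inspect -f '{{ .Mountpoint }}' "$1"
}
```

With a managed volume, `volume_path zk` shows where the data lives on the host; with a bind mount that location is whatever path was passed in.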

删除

#清理未使用的 Docker 数据卷

[root@docker-node1 volumes]# docker volume prune

WARNING! This will remove anonymous local volumes not used by at least one container.

Are you sure you want to continue? [y/N] y

#手动建立的需要手动删除

[root@docker-node1 volumes]# docker volume rm zk

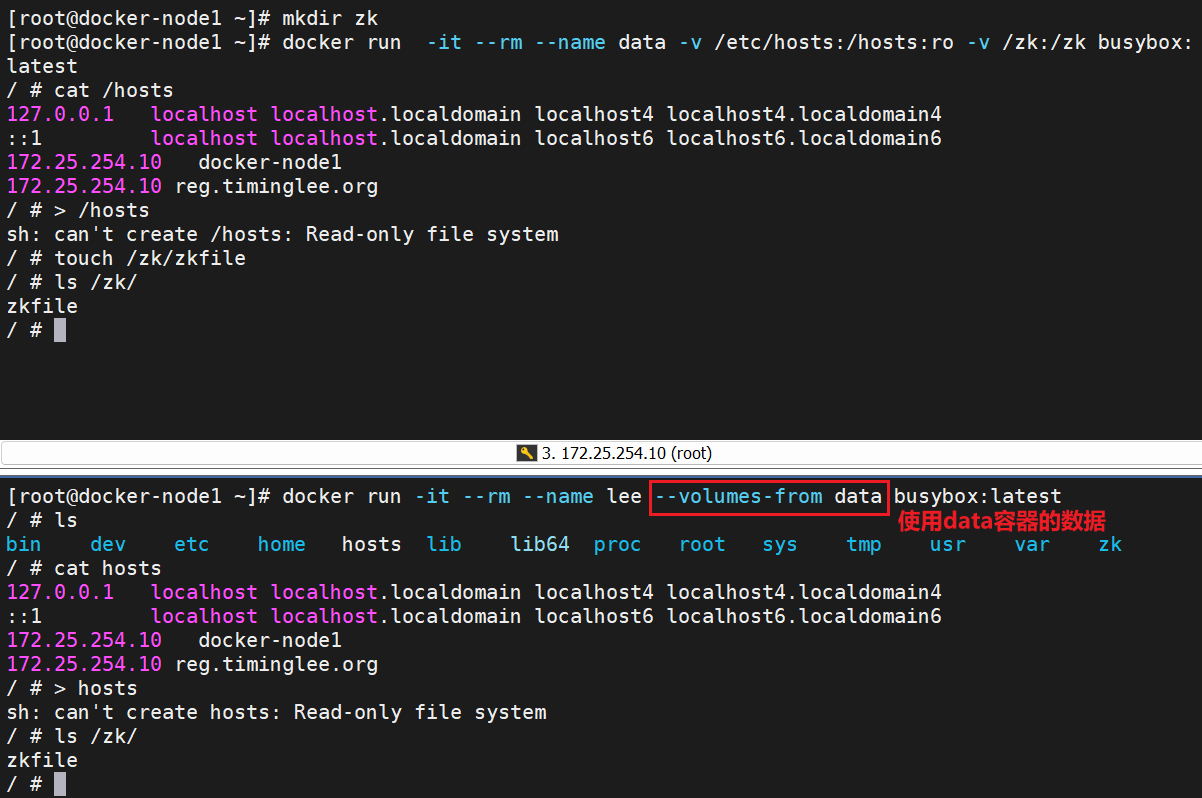

zk数据卷容器

数据卷容器(Data Volume Container)是 Docker 中一种特殊的容器,主要用于方便地在多个容器之间共享数据卷

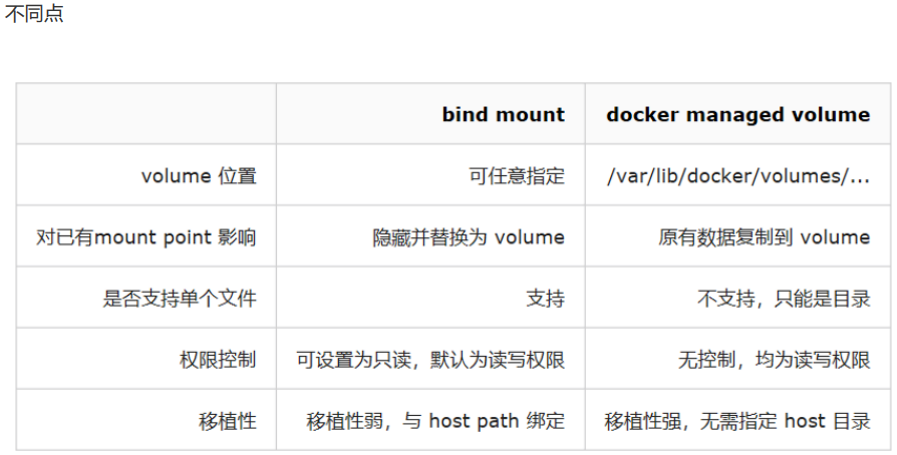

bind mount 数据卷和docker managed 数据卷的对比

相同点: 两者都是 host 文件系统中的某个路径

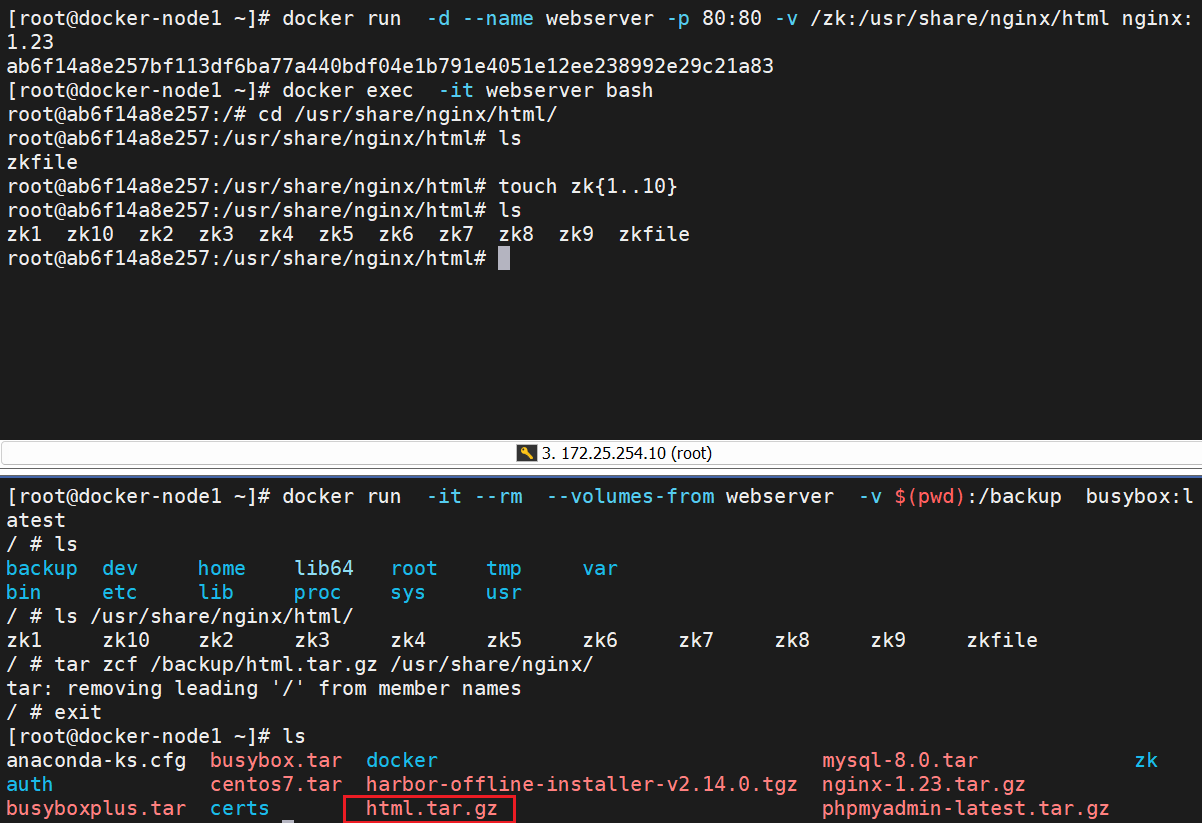

备份与迁移数据卷

备份数据卷

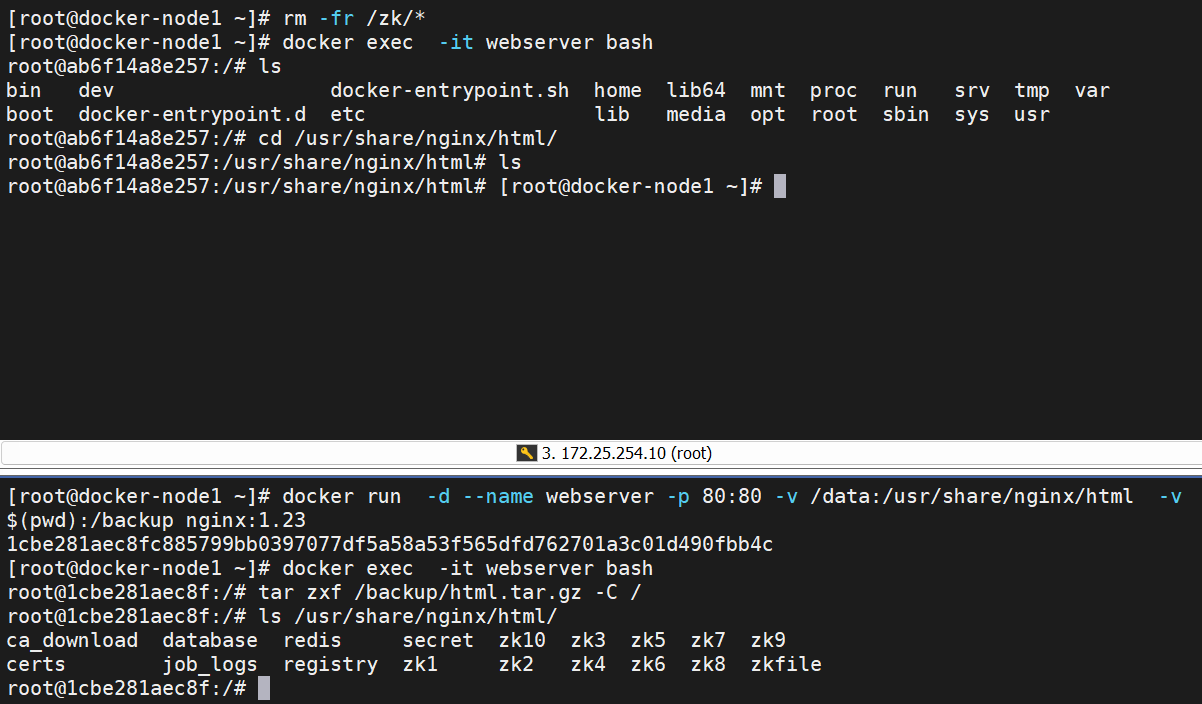

数据恢复
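A common way to back up and restore a volume is to mount it into a throwaway container together with a host directory and run tar there. The sketch below shows that pattern; it is not necessarily the exact procedure in the screenshots, and the function names, mount points and archive name are hypothetical. `DOCKER` exists only so the commands can be dry-run with `DOCKER=echo`.

```shell
# Allow a dry run by overriding the docker binary, e.g. DOCKER=echo.
DOCKER="${DOCKER:-docker}"

# Archive the contents of a volume into <backup_dir>/<archive> on the host.
backup_volume() {
    local volume="$1" backup_dir="$2" archive="$3"
    "$DOCKER" run --rm \
        -v "$volume":/data:ro \
        -v "$backup_dir":/backup \
        busybox tar czf "/backup/$archive" -C /data .
}

# Unpack <backup_dir>/<archive> into a (possibly new) volume.
restore_volume() {
    local volume="$1" backup_dir="$2" archive="$3"
    "$DOCKER" run --rm \
        -v "$volume":/data \
        -v "$backup_dir":/backup:ro \
        busybox tar xzf "/backup/$archive" -C /data
}
```

For migration, copy the archive to the other host and call `restore_volume` there with the same archive name.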

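IX. Docker security hardening

Changing the system cgroup version (not required in production)

# RHEL 9 uses cgroup v2 by default, but Docker's resource limits are harder to observe under cgroup v2, so cgroup v1 is recommended for these experiments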

[root@docker-node1 ~]# mount -t cgroup

[root@docker-node1 ~]# mount -t cgroup2

cgroup2 on /sys/fs/cgroup type cgroup2 (rw,nosuid,nodev,noexec,relatime,nsdelegate,memory_recursiveprot)

[root@docker-node1 ~]# grubby --update-kernel=/boot/vmlinuz-$(uname -r) \

> --args="systemd.unified_cgroup_hierarchy=0 systemd.legacy_systemd_cgroup_controller"

[root@docker-node1 ~]# reboot

[root@docker-node1 ~]# mount -t cgroup # check whether the system switched to cgroup v1

cgroup on /sys/fs/cgroup/systemd type cgroup (rw,nosuid,nodev,noexec,relatime,xattr,release_agent=/usr/lib/systemd/systemd-cgroups-agent,name=systemd)

cgroup on /sys/fs/cgroup/perf_event type cgroup (rw,nosuid,nodev,noexec,relatime,perf_event)

cgroup on /sys/fs/cgroup/cpuset type cgroup (rw,nosuid,nodev,noexec,relatime,cpuset)

cgroup on /sys/fs/cgroup/freezer type cgroup (rw,nosuid,nodev,noexec,relatime,freezer)

cgroup on /sys/fs/cgroup/misc type cgroup (rw,nosuid,nodev,noexec,relatime,misc)

cgroup on /sys/fs/cgroup/pids type cgroup (rw,nosuid,nodev,noexec,relatime,pids)

cgroup on /sys/fs/cgroup/net_cls,net_prio type cgroup (rw,nosuid,nodev,noexec,relatime,net_cls,net_prio)

cgroup on /sys/fs/cgroup/blkio type cgroup (rw,nosuid,nodev,noexec,relatime,blkio)

cgroup on /sys/fs/cgroup/hugetlb type cgroup (rw,nosuid,nodev,noexec,relatime,hugetlb)

cgroup on /sys/fs/cgroup/cpu,cpuacct type cgroup (rw,nosuid,nodev,noexec,relatime,cpu,cpuacct)

cgroup on /sys/fs/cgroup/rdma type cgroup (rw,nosuid,nodev,noexec,relatime,rdma)

cgroup on /sys/fs/cgroup/devices type cgroup (rw,nosuid,nodev,noexec,relatime,devices)

cgroup on /sys/fs/cgroup/memory type cgroup (rw,nosuid,nodev,noexec,relatime,memory)

Docker resource limits

1. CPU usage

[root@docker-node1 ~]# docker load -i ubuntu-latest.tar.gz

[root@docker-node1 ~]# docker run -it --rm ubuntu:latest

root@8505c1c08cc5:/# dd if=/dev/zero of=/dev/null & # copy continuously to load the CPU

[root@docker-node1 ~]# top # the CPU is almost fully used

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

3449 root 20 0 2736 1408 1408 R 100.0 0.1 0:20.45 dd

# Limiting CPU resources
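The --cpu-period and --cpu-quota flags in the next command set a CFS scheduling window in microseconds and the CPU time the container may use within each window, so the limit as a percentage of one core is quota / period × 100. A small sketch of that arithmetic follows; the function name is hypothetical.

```shell
# Percentage of a single CPU core allowed by a CFS period/quota pair.
# Both values are in microseconds; integer arithmetic is enough here.
cpu_limit_percent() {
    local period="$1" quota="$2"
    echo $(( quota * 100 / period ))
}

cpu_limit_percent 100000 20000   # prints 20
```

The same 20% limit can also be written as `--cpus 0.2`, which Docker translates into this period/quota pair.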

[root@docker-node1 ~]# docker run -it --rm --name test --cpu-period 100000 --cpu-quota 20000 ubuntu

root@a46d556cf8e2:/# dd if=/dev/zero of=/dev/null &

[root@docker-node1 ~]# top # CPU usage stays at about 20%

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

3553 root 20 0 2736 1408 1408 R 19.9 0.1 0:07.45 dd

2. CPU priority

# Contention for CPU only happens when no CPU is idle, so to make the effect visible we take one CPU core offline

[root@docker ~]# echo 0 > /sys/devices/system/cpu/cpu1/online

[root@docker ~]# cat /proc/cpuinfo

processor : 0

vendor_id : GenuineIntel

cpu family : 6

model : 58

model name : Intel(R) Core(TM) i7-3770K CPU @ 3.50GHz

stepping : 9

microcode : 0x21

cpu MHz : 3901.000

cache size : 8192 KB

physical id : 0

siblings : 1

core id : 0

cpu cores : 1 ## one CPU core

apicid : 0

initial apicid : 0

fpu : yes

fpu_exception : yes

cpuid level : 13

wp : yes

flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ss ht syscall nx rdtscp lm constant_tsc arch_perfmon nopl xtopology tsc_reliable nonstop_tsc cpuid tsc_known_freq pni pclmulqdq ssse3 cx16 pcid sse4_1 sse4_2 x2apic popcnt tsc_deadline_timer aes xsave avx f16c rdrand hypervisor lahf_lm pti ssbd ibrs ibpb stibp fsgsbase tsc_adjust smep arat md_clear flush_l1d arch_capabilities

bugs : cpu_meltdown spectre_v1 spectre_v2 spec_store_bypass l1tf mds swapgs itlb_multihit srbds mmio_unknown

bogomips : 7802.00

clflush size : 64

cache_alignment : 64

address sizes : 45 bits physical, 48 bits virtual

power management:

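# If a CPU priority is set when the container starts, the value is stored in this file

[root@docker ~]# cat /sys/fs/cgroup/cpu/docker/<ID of the container to inspect>/cpu.shares

# Start a container with the limit applied

[root@docker ~]# docker run -it --rm --cpu-shares 100 ubuntu # set the CPU share weight; the default is 1024, and a larger value means a higher priority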

root@dc066aa1a1f0:/# dd if=/dev/zero of=/dev/null &

[1] 8

root@dc066aa1a1f0:/# top

top - 12:16:56 up 1 day, 2:22, 0 user, load average: 1.20, 0.37, 0.20

Tasks: 3 total, 2 running, 1 sleeping, 0 stopped, 0 zombie

%Cpu(s): 37.3 us, 61.4 sy, 0.0 ni, 0.0 id, 0.0 wa, 1.0 hi, 0.3 si, 0.0 st

MiB Mem : 3627.1 total, 502.5 free, 954.5 used, 2471.7 buff/cache

MiB Swap: 2063.0 total, 2062.3 free, 0.7 used. 2672.6 avail Mem

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

8 root 20 0 2736 1536 1536 R 3.6 0.0 0:16.74 dd # CPU is limited

1 root 20 0 4588 3968 3456 S 0.0 0.1 0:00.03 bash

9 root 20 0 8856 5248 3200 R 0.0 0.1 0:00.00 top

# Start another container without a CPU priority setting

root@17f8c9d66fde:/# dd if=/dev/zero of=/dev/null &

[1] 8

root@17f8c9d66fde:/# top

top - 12:17:55 up 1 day, 2:23, 0 user, load average: 1.84, 0.70, 0.32

Tasks: 3 total, 2 running, 1 sleeping, 0 stopped, 0 zombie

%Cpu(s): 36.2 us, 62.1 sy, 0.0 ni, 0.0 id, 0.0 wa, 1.3 hi, 0.3 si, 0.0 st

MiB Mem : 3627.1 total, 502.3 free, 954.6 used, 2471.7 buff/cache

MiB Swap: 2063.0 total, 2062.3 free, 0.7 used. 2672.5 avail Mem

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

8 root 20 0 2736 1408 1408 R 94.0 0.0 1:09.34 dd # CPU is not limited

1 root 20 0 4588 3968 3456 S 0.0 0.1 0:00.02 bash

9 root 20 0 8848 5248 3200 R 0.0 0.1 0:00.01 top

Limiting memory usage

[root@docker-node1 cpu]# docker run -d --name test --memory 200M --memory-swap 200M nginx:1.23

74989eeb8e1363cc79536736aad29c32310f1e5c4de14496e18a391bdea21599

[root@docker-node1 ~]# rpm -ivh libcgroup-0.41-19.el8.x86_64.rpm

[root@docker-node1 ~]# rpm -ivh libcgroup-tools-0.41-19.el8.x86_64.rpm

[root@docker-node1 ~]# ll /sys/fs/cgroup/memory/docker/74989eeb8e1363cc79536736aad29c32310f1e5c4de14496e18a391bdea21599/ # the cgroup directory is named after the container ID

[root@docker-node1 ~]# cgexec -g memory:docker/74989eeb8e1363cc79536736aad29c32310f1e5c4de14496e18a391bdea21599 dd if=/dev/zero of=/dev/shm/bigfile bs=1M count=120

记录了120+0 的读入

记录了120+0 的写出

125829120字节(126 MB,120 MiB)已复制,0.0389638 s,3.2 GB/s

[root@docker-node1 ~]# cgexec -g memory:docker/74989eeb8e1363cc79536736aad29c32310f1e5c4de14496e18a391bdea21599 dd if=/dev/zero of=/dev/shm/bigfile bs=1M count=200

已杀死 # killed: the memory limit was hit

Limiting disk I/O

[root@docker-node1 ~]# docker run -it --name test --rm ubuntu:latest

root@13382a4ecb9b:/# dd if=/dev/zero of=/bigfile bs=1M count=1000

1000+0 records in

1000+0 records out

1048576000 bytes (1.0 GB, 1000 MiB) copied, 4.74269 s, 221 MB/s

# Limit the write rate

[root@docker-node1 ~]# docker run -it --name test --device-write-bps /dev/sda:30M --rm ubuntu:latest

root@77ca9257045d:/# dd if=/dev/zero of=/bigfile bs=1M count=100 oflag=direct

100+0 records in

100+0 records out

104857600 bytes (105 MB, 100 MiB) copied, 3.34336 s, 31.4 MB/s

Container privileges

# By default, even the root user inside a container cannot change certain system settings, such as the network

[root@docker-node1 ~]# docker run -it --name test --rm busybox:latest

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0@if21: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 1a:c0:4d:a4:20:a4 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

/ # ip a a 1.2.3.4/24 eth0@if41

ip: either "local" is duplicate, or "eth0@if41" is garbage

/ # fdisk -l

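/ # exit

This is because many of the resources a container uses are shared with the real host; if containers were allowed to modify these critical resources, system stability would suffer badly

Some workloads, however, need to control resources that are off-limits by default. The answer is to grant the container privileges

# A container started with --privileged gets permissions close to those of root on the host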

[root@docker-node1 ~]# docker run -it --rm --privileged busybox:latest

/ # fdisk -l

Disk /dev/sda: 100 GB, 107374182400 bytes, 209715200 sectors

411206 cylinders, 255 heads, 2 sectors/track

Units: sectors of 1 * 512 = 512 bytes

Device Boot StartCHS EndCHS StartLBA EndLBA Sectors Size Id Type

/dev/sda1 * 4,4,1 1023,254,2 2048 2099199 2097152 1024M 83 Linux

/dev/sda2 1023,254,2 1023,254,2 2099200 10307583 8208384 4008M 82 Linux swap

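/dev/sda3 1023,254,2 1023,254,2 10307584 209715199 199407616 95.0G 83 Linux

--privileged=true grants very broad permissions, close to those of the host. To prevent abuse, restrict the container to only the permissions it needs. Docker provides a capability whitelist for this: use --cap-add to add the required capabilities

capabilities manual: http://man7.org/linux/man-pages/man7/capabilities.7.html

# Enable specific whitelisted capabilities

# Enable only the network administration capability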

[root@docker-node1 ~]# docker run -it --rm --cap-add CAP_NET_ADMIN busybox:latest

/ # fdisk -l

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 12:C5:18:E8:4D:AE

inet addr:172.17.0.2 Bcast:172.17.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:9 errors:0 dropped:0 overruns:0 frame:0

TX packets:3 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:806 (806.0 B) TX bytes:126 (126.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # ip a a 1.2.3.4/24 dev eth0

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0@if25: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue

link/ether 12:c5:18:e8:4d:ae brd ff:ff:ff:ff:ff:ff

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet 1.2.3.4/24 scope global eth0

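valid_lft forever preferred_lft forever

X. Docker Compose, a container orchestration tool

- up: create and start services

- stop: stop services but keep their state

- start: start services that were stopped

- down: tear down the project completely

- restart: restart services

- logs: view service logs

[root@docker-node1 ~]# mkdir /root/timinglee/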

[root@docker-node1 ~]# cd /root/timinglee/

[root@docker-node1 timinglee]# cat docker-compose.yml

services:
  web:
    image: nginx:1.23
    ports:
      - "80:80"
    volumes:
      - lee:/data
  dbserver:
    image: mysql:8.0
    environment:
      MYSQL_ROOT_PASSWORD: lee
volumes:
  lee:

[root@docker-node1 timinglee]# docker compose up # runs in the foreground and occupies the terminal

[root@docker-node1 timinglee]# docker compose down

[root@docker-node1 timinglee]# docker compose ps

NAME IMAGE COMMAND SERVICE CREATED STATUS PORTS

[root@docker-node1 ~]# docker compose -f /root/timinglee/docker-compose.yml up -d

[+] up 3/3

✔ Network timinglee_default Created 0.0s

✔ Container timinglee-dbserver-1 Started 0.2s

✔ Container timinglee-web-1 Started

[root@docker-node1 timinglee]# docker compose stop

[root@docker-node1 timinglee]# docker compose start

[root@docker-node1 timinglee]# docker compose down

[+] down 3/3

✔ Container timinglee-dbserver-1 Removed 0.7s

✔ Container timinglee-web-1 Removed 0.1s

✔ Network timinglee_default Removed

[root@docker-node1 timinglee]# docker compose restart

[+] restart 0/1

[root@docker-node1 timinglee]# docker compose logs web