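I. Project Overview

Project name: Enterprise-grade distributed e-commerce platform (flash sales, product management, orders, payments, user center)

Core goals: high availability (99.99%), high concurrency, horizontal scaling, containerized deployment, no single point of failure

Target scenarios: small and medium-sized e-commerce companies and internet service platforms; designed for concurrency in the tens of thousands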

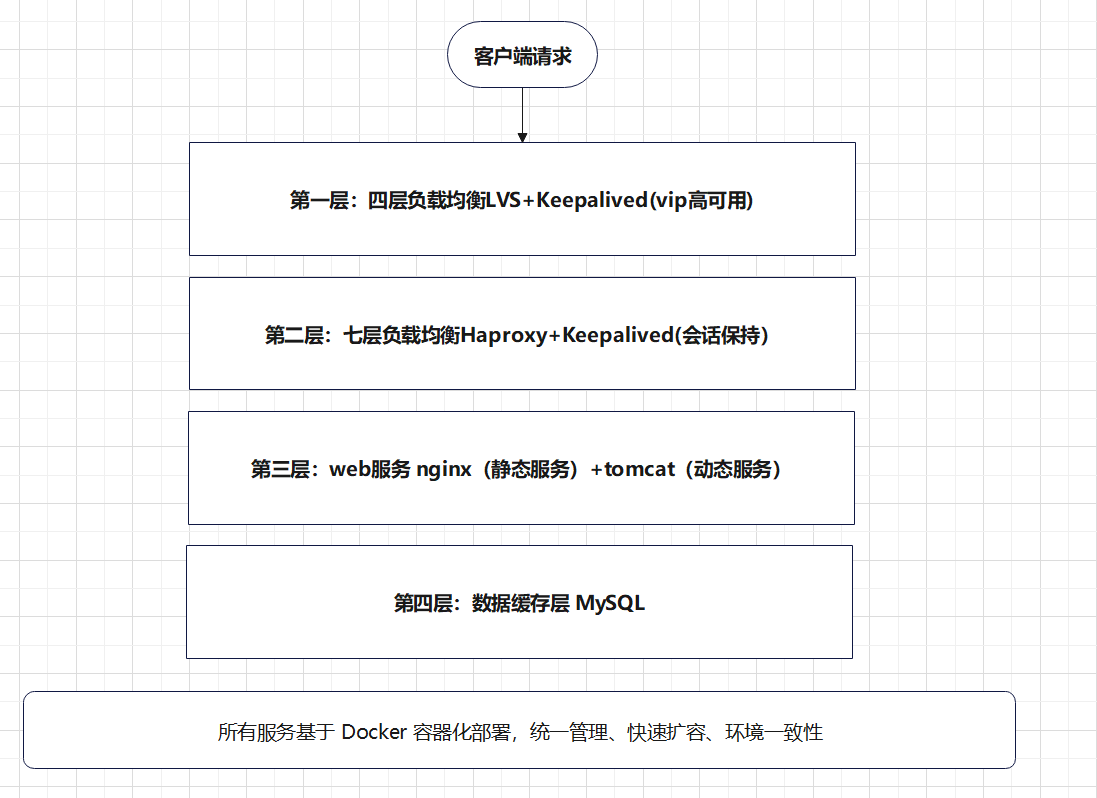

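II. Overall Architecture (standard four-tier enterprise architecture)

Key advantages of the architecture:

- No single point of failure: LVS, HAProxy and MySQL all run as primary/standby pairs
- Static/dynamic separation: Nginx serves static resources, Tomcat focuses on business logic
- Containerized operations: Docker deploys, scales and migrates services with a single command
- Read/write splitting: the MySQL primary/replica setup improves database performance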

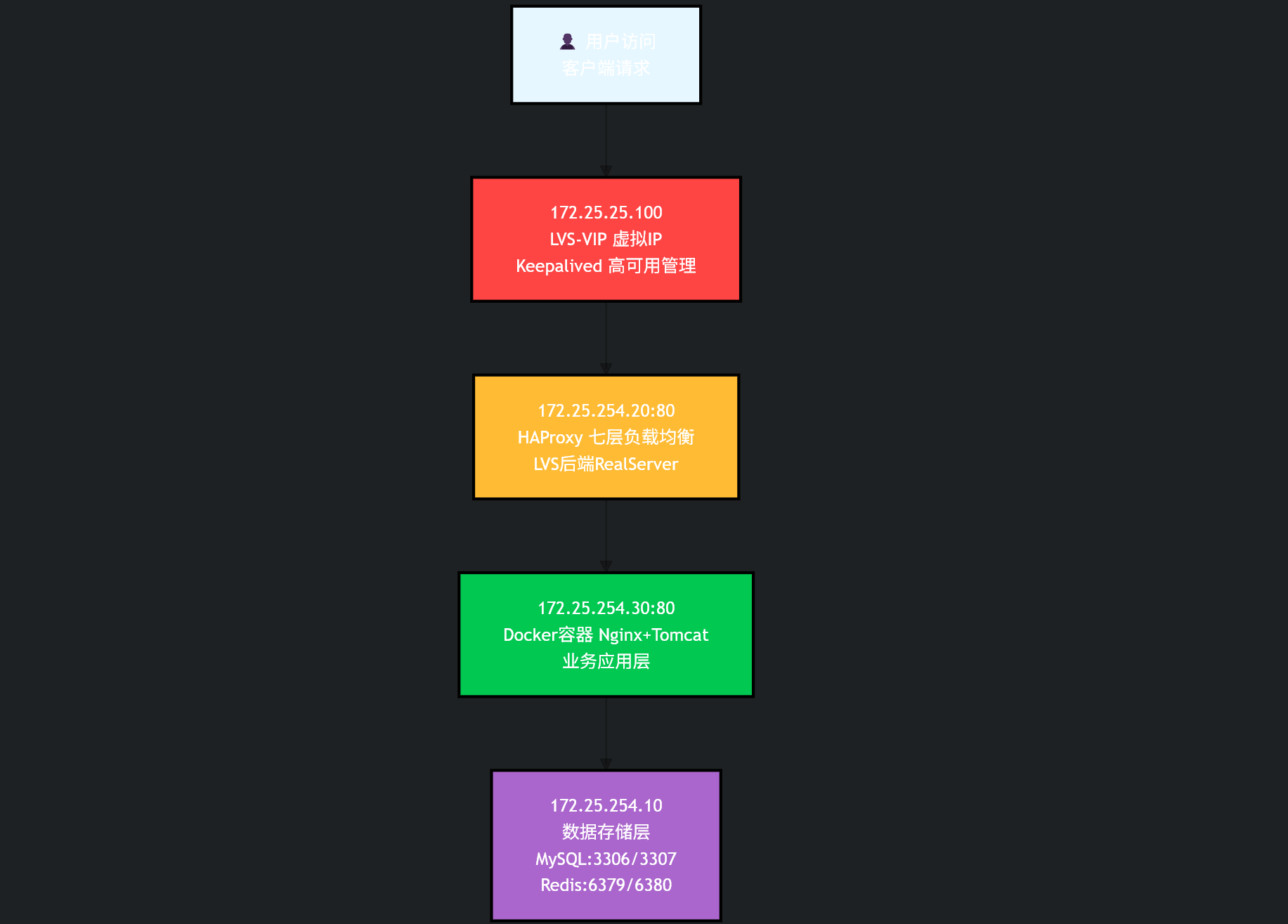

Network architecture design

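III. Technology Stack Roles

| Technology | Core role |
|---|---|
| LVS | Layer-4 load balancing; can carry millions of connections with the highest raw performance |
| Keepalived | VIP failover (VRRP) for high availability of all load balancers / databases |
| HAProxy | Layer-7 load balancing; supports HTTP/HTTPS and session persistence |
| Nginx | Static resource server, reverse proxy, SSL termination |
| Tomcat | Java backend service container (core business logic) |
| Redis | Distributed cache, distributed locks, flash-sale queue, shared sessions |
| MySQL | Relational database; primary/replica replication + read/write splitting |
| Docker | Container packaging, standardized deployment, service orchestration |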

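IV. Module Breakdown

- Frontend module

  Home page, product details, shopping cart, orders, payment, user center

  Static resources (HTML/CSS/JS/images) are served directly by Nginx

- Core backend services (running on Tomcat)

  1. User service: registration, login, token authentication
  2. Product service: create/read/update/delete products, categories, search
  3. Cart service: carts stored in Redis for high performance
  4. Order service: order creation, status management, duplicate-submission prevention
  5. Flash-sale service: Redis rate limiting + distributed lock, the high-concurrency core
  6. Payment service: third-party payment integration (simulated)

- Data services

  MySQL: persists user, order and product data

  Redis: caches hot products, flash-sale stock, sessions and shopping carts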

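V. High-Availability Deployment Plan

- Load balancing layer (highly available)

  2 × LVS + Keepalived: one primary and one standby sharing a VIP; if the primary fails, the VIP moves to the standby automatically

  2 × HAProxy + Keepalived: receive traffic from LVS, forward it at layer 7 and health-check the backend services

  Role: a single traffic entry point that removes failed nodes automatically

- Web application layer

  A Docker cluster of multiple Nginx + Tomcat nodes

  Nginx reverse-proxies Tomcat with static/dynamic separation; scale horizontally by adding Docker containers

- Cache layer

  Redis primary/replica + Sentinel (highly available)

  Caches hot products, flash-sale stock and user sessions; guards against cache penetration, cache breakdown and cache avalanche

- Database layer

  MySQL primary/replica replication + read/write splitting

  Primary: writes (orders, payments)

  Replica: reads (products, user information)

  Scheduled backups keep the data safe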

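VI. Core Features

- Highly available flash-sale system

  Redis stock pre-decrement + distributed lock; rate limiting, anti-abuse and overselling prevention; asynchronous order processing for high concurrency

- Complete e-commerce flow

  Register → log in → browse products → add to cart → place order → pay → check order

- Distributed session sharing

  All Tomcat sessions are stored in Redis, so users stay logged in when the load balancer switches them to another node

- Service health checks & automatic removal of failed nodes

  HAProxy checks Nginx/Tomcat automatically; failed services are taken out of rotation without affecting users

- One-command containerized deployment

  Every service is packaged as a Docker image, and one command starts the whole environment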
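The flash-sale idea above (check the remaining stock and decrement it as one atomic step, so concurrent buyers cannot oversell) can be tried locally without Redis. The sketch below is an analogue under stated assumptions, not the platform's Redis implementation: a file stands in for the stock key and flock(1) from util-linux stands in for the distributed lock; try_buy and simulate_flash_sale are hypothetical names.

```bash
#!/usr/bin/env bash
# Local analogue of "pre-decrement stock under a lock" (NOT the Redis version):
# a file holds the remaining stock and flock(1) serialises check-then-decrement.

# try_buy STOCK_FILE LOCK_FILE: exit 0 if one unit was reserved, 1 if sold out
try_buy() {
    local stock_file=$1 lock_file=$2
    (
        flock -x 9                          # wait for the exclusive lock on fd 9
        stock=$(<"$stock_file")
        if (( stock > 0 )); then
            echo $(( stock - 1 )) > "$stock_file"
            exit 0                          # reserved; the order would be created asynchronously
        fi
        exit 1                              # sold out: reject before touching the database
    ) 9>"$lock_file"                        # the lock is released when the subshell exits
}

# simulate_flash_sale STOCK BUYERS: start BUYERS concurrent attempts, print how many succeeded
simulate_flash_sale() {
    local stock=$1 buyers=$2 dir ok=0 i
    dir=$(mktemp -d)
    echo "$stock" > "$dir/stock"
    for (( i = 0; i < buyers; i++ )); do
        ( try_buy "$dir/stock" "$dir/lock" && echo ok >> "$dir/results" ) &
    done
    wait                                    # let every buyer finish
    if [[ -f $dir/results ]]; then
        ok=$(( $(wc -l < "$dir/results") ))
    fi
    echo "$ok"
    rm -rf "$dir"
}

# Example: simulate_flash_sale 5 20   -> prints 5 (never more than the stock)
```

In Redis the same check-and-decrement is usually made atomic with a Lua script, or with DECR plus a compensating INCR when the result goes negative, so the counter itself needs no separate lock.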

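VII. Server Plan (standard minimal enterprise deployment)

8 servers in total:

- LVS primary node
- LVS standby node
- HAProxy primary node
- HAProxy standby node
- Nginx + Tomcat cluster node 1
- Nginx + Tomcat cluster node 2
- MySQL primary/replica server
- Redis high-availability server

A learning environment can be reduced to 3 servers: LVS + HAProxy combined, the web tier combined, and database + cache combined.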

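VIII. Deployment Steps (hands-on)

Because of lab constraints, everything runs on 3 virtual machines: LVS + HAProxy combined, the web tier combined, database + cache combined.

1. Environment setup:

1) Disable the firewall and SELinux

bash

systemctl stop firewalld && systemctl disable firewalld
setenforce 0    # switch to permissive mode now (lasts until reboot)
sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config    # disable permanently from the next boot

2) Install Docker from the Aliyun software repository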

Configure the repository:

bash

[root@mysql ~]# cat > /etc/yum.repos.d/docker1.repo << EOF

> [docker1]

> name = docker1

> baseurl = https://mirrors.aliyun.com/docker-ce/linux/rhel/9.6/x86_64/stable/

> gpgcheck = 0

> EOF

Install:

bash

[root@mysql ~]# dnf install docker-ce -y

Installed:

containerd.io-2.2.2-1.el9.x86_64 docker-buildx-plugin-0.31.1-1.el9.x86_64

docker-ce-3:29.3.0-1.el9.x86_64 docker-ce-cli-1:29.3.0-1.el9.x86_64

docker-ce-rootless-extras-29.3.0-1.el9.x86_64 docker-compose-plugin-5.1.1-1.el9.x86_64

Complete!

[root@mysql ~]#

Tune Docker: kernel networking optimization and start on boot

1️⃣ Edit the Docker systemd unit file

bash

[root@mysql ~]# vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --iptables=true

--iptables=true lets Docker manage iptables rules automatically (it is also dockerd's default).

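2️⃣ Load the bridge netfilter kernel module

bash

[root@mysql ~]# echo br_netfilter > /etc/modules-load.d/docker_mod.conf
[root@mysql ~]# modprobe -a br_netfilter

- br_netfilter is the Linux bridge netfilter module: it lets traffic crossing a Linux bridge (such as docker0) pass through iptables
- It must be loaded: the net.bridge.bridge-nf-call-* parameters set in the next step only exist once the module is loaded
- Writing it to /etc/modules-load.d/ loads it automatically at boot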

3️⃣ Configure the kernel network parameters

bash

[root@mysql ~]# vim /etc/sysctl.d/docker.conf

[root@mysql ~]# cat /etc/sysctl.d/docker.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

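net.ipv4.ip_forward = 1

- bridge-nf-call-iptables = 1: bridged traffic goes through iptables, so container port mappings take effect
- net.ipv4.ip_forward = 1: enables IP forwarding, which containers need to reach external networks and other subnets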
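After writing /etc/sysctl.d/docker.conf, the file can be checked before it is applied. check_sysctl_conf is a hypothetical helper (a sketch, not part of any tool) that succeeds only when all three keys above are set to 1:

```bash
#!/usr/bin/env bash
# check_sysctl_conf FILE: succeed only if FILE sets all three Docker networking
# keys to 1; blanks around '=' are ignored, as sysctl itself ignores them.
check_sysctl_conf() {
    local file=$1 key missing=0
    for key in net.bridge.bridge-nf-call-iptables \
               net.bridge.bridge-nf-call-ip6tables \
               net.ipv4.ip_forward; do
        # awk compares the key literally after stripping blanks on both sides of '='
        if ! awk -F= -v k="$key" '
                { name = $1; val = $2; gsub(/[ \t]/, "", name); gsub(/[ \t]/, "", val) }
                name == k && val == "1" { found = 1 }
                END { exit !found }' "$file"; then
            echo "missing or not set to 1: $key" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Usage: check_sysctl_conf /etc/sysctl.d/docker.conf && sysctl --system
```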

4️⃣ Apply the kernel parameters immediately

bash

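[root@mysql ~]# sysctl --system

5️⃣ Reload systemd (the unit file was edited), then enable Docker at boot and start it now

bash

[root@mysql ~]# systemctl daemon-reload
[root@mysql ~]# systemctl enable --now docker
Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /usr/lib/systemd/system/docker.service.

The same steps as a single script: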

bash

[root@mysql ~]# vim docker_optimize.sh

#!/bin/bash
# One-shot kernel & network tuning script for Docker hosts
# Configures: br_netfilter, ip_forward, iptables management, start on boot

# 1. Load the kernel module now and at boot
echo "===== Loading the bridge kernel module ====="
echo br_netfilter > /etc/modules-load.d/docker_mod.conf
modprobe -a br_netfilter

# 2. Configure the kernel network parameters
echo "===== Configuring kernel forwarding parameters ====="
cat > /etc/sysctl.d/docker.conf <<EOF
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF

# 3. Apply the parameters immediately
sysctl --system

# 4. Make sure Docker manages iptables
echo "===== Tuning the Docker start parameters ====="
sed -i 's|^ExecStart=.*|ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --iptables=true|' /lib/systemd/system/docker.service

# 5. Reload systemd and restart Docker so the changes take effect
systemctl daemon-reload
systemctl enable --now docker
systemctl restart docker

echo "===== Docker tuning complete! ✅ ====="
docker -v
echo "Kernel forwarding status:"
sysctl net.ipv4.ip_forward

3) Pulling the images

1. Edit /etc/docker/daemon.json so Docker uses registry mirrors in mainland China

bash

[root@mysql ~]# vim /etc/docker/daemon.json

{

"registry-mirrors": [

"https://docker.mirrors.ustc.edu.cn",

"https://hub-mirror.c.163.com",

"https://mirror.baidubce.com",

"https://docker.m.daocloud.io"

]

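}

2. Then restart Docker

bash

[root@mysql ~]# systemctl daemon-reload
[root@mysql ~]# systemctl restart docker

3. Pull the required images; if a pull fails, retry it (public mirrors come and go, so remove any that no longer respond). redis:6.2 is shown here; tomcat:9, nginx:1.26 and mysql:8.0 are pulled the same way.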

bash

[root@mysql ~]# docker pull redis:6.2

6.2: Pulling from library/redis

3fd7bd98ca65: Pull complete

6db0909c4473: Pull complete

a98ade17d30a: Pull complete

d6bbd0ad0c69: Pull complete

2eaae5f5db7a: Pull complete

ff40afe00f2c: Pull complete

5061fdecd88b: Pull complete

4f4fb700ef54: Pull complete

ba976514744f: Download complete

d1201f450c52: Download complete

Digest: sha256:83a75a9107fae42b4407232299be484b2367c402376511d178672feb9cc8eb24

Status: Downloaded newer image for redis:6.2

docker.io/library/redis:6.2

[root@mysql ~]#

4. Save the images as tar archives so they can be copied to the other machines
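With several images to ship (redis:6.2, tomcat:9, nginx:1.26 and mysql:8.0 in this guide), a loop keeps the archive names consistent with the name-tag.tar pattern used below. image_tar_name and save_images are hypothetical helpers; save_images needs Docker and is only defined here, not run:

```bash
#!/usr/bin/env bash
# image_tar_name IMAGE[:TAG]: archive name in this guide's style, e.g. redis:6.2 -> redis-6.2.tar
image_tar_name() {
    local ref=${1##*/}          # drop any registry/namespace prefix such as docker.io/library/
    echo "${ref/:/-}.tar"       # name:tag -> name-tag.tar
}

# save_images IMAGE...: one "docker save" per image (needs Docker; not executed here)
save_images() {
    local img
    for img in "$@"; do
        docker save -o "$(image_tar_name "$img")" "$img"
    done
}

# Usage on the machine that pulled the images:
#   save_images redis:6.2 tomcat:9 nginx:1.26 mysql:8.0
#   scp nginx-1.26.tar tomcat-9.tar root@172.25.254.30:~   # then "docker load -i" on the target
```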

bash

[root@mysql ~]# docker save -o redis-6.2.tar redis:6.2

[root@mysql ~]# docker save -o tomcat-9.tar tomcat:9

[root@mysql ~]# ll

total 976352
-rw-------. 1 root root 1000 Jan 14 18:32 anaconda-ks.cfg
-rw-r--r-- 1 root root 1031 Mar 24 10:10 docker_optimize.sh
-rw-r--r-- 1 root root 798737408 Mar 24 16:24 mysql-8.0.tar
-rw------- 1 root root 42622976 Mar 27 02:26 redis-6.2.tar
-rw------- 1 root root 158410240 Mar 27 02:36 tomcat-9.tar

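[root@mysql ~]#

4) IP plan

(172.25.254.0/24 is the internal segment. The plan reserved 192.168.1.0/24 for external VIPs, but in this single-NIC lab the VIPs live in 172.25.254.0/24, as the Keepalived configuration below shows.)

MySQL primary/replica, Redis primary/replica + Sentinel: 172.25.254.10

LVS + Keepalived, HAProxy + Keepalived: 172.25.254.20

Nginx + Tomcat Docker cluster: 172.25.254.30

VIP plan:

172.25.254.100 (LVS virtual IP), 172.25.254.200 (HAProxy virtual IP)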
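Keepalived adds each VIP as a secondary address on eth0 (172.25.254.20/24), so in this single-NIC lab each VIP should fall inside that subnet to be reachable from the other hosts without extra routes. A quick check in pure bash; ip_to_int and ip_in_cidr are hypothetical helpers:

```bash
#!/usr/bin/env bash
# ip_to_int A.B.C.D: print the address as a 32-bit integer
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# ip_in_cidr IP NET/PREFIX: exit 0 if IP lies inside the network
ip_in_cidr() {
    local ip net prefix mask
    ip=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    prefix=${2#*/}
    # build the netmask, e.g. /24 -> 0xFFFFFF00
    mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    (( (ip & mask) == (net & mask) ))
}

# Usage: ip_in_cidr 172.25.254.100 172.25.254.0/24 && echo "VIP is on the eth0 subnet"
```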

2. Database service: deploy MySQL primary/replica replication + read/write splitting

1) Load the MySQL image

bash

[root@mysql ~]# ll

total 780028
-rw-------. 1 root root 1000 Jan 14 18:32 anaconda-ks.cfg
-rw-r--r-- 1 root root 1031 Mar 24 10:10 docker_optimize.sh
-rw-r--r-- 1 root root 798737408 Mar 24 16:24 mysql-8.0.tar

[root@mysql ~]# docker load -i mysql-8.0.tar

Loaded image: mysql:8.0

[root@mysql ~]# docker images

i Info → U In Use

IMAGE ID DISK USAGE CONTENT SIZE EXTRA

mysql:8.0 bb3c7d314bcc 1.62GB 799MB

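[root@mysql ~]#

2) Create the data directories (note: the compose file in the next step mounts ./master-data and ./slave-data, which Docker creates on first start, so the master and slave directories made here stay empty)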

bash

[root@mysql ~]# mkdir -p /data/mysql/master /data/mysql/slave

[root@mysql ~]# cd /data/mysql/

[root@mysql mysql]# ll

total 0
drwxr-xr-x 2 root root 6 Mar 24 16:22 master
drwxr-xr-x 2 root root 6 Mar 24 16:22 slave
[root@mysql mysql]#

3) Create docker-compose.yml in the mysql directory (easier to manage)

bash

[root@mysql ~]# cd /data/mysql/

[root@mysql mysql]# vim docker-compose.yml

[root@mysql mysql]# cat docker-compose.yml

services:
  mysql-master:
    image: mysql:8.0
    container_name: mysql-master
    ports:
      - "172.25.254.10:3306:3306"
    environment:
      MYSQL_ROOT_PASSWORD: root123
      MYSQL_ROOT_HOST: '%'
      TZ: Asia/Shanghai
    volumes:
      - ./master-data:/var/lib/mysql
      - /etc/localtime:/etc/localtime:ro
    # list form: each argument is a separate item, the most reliable way
    command:
      - --server-id=1
      - --gtid-mode=ON
      - --enforce-gtid-consistency=ON
      - --bind-address=0.0.0.0
      - --character-set-server=utf8mb4
      - --collation-server=utf8mb4_unicode_ci
      - --default-authentication-plugin=mysql_native_password
    networks:
      - mysql-net
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "mysqladmin", "-uroot", "-proot123", "ping"]
      interval: 10s
      timeout: 5s
      retries: 3

  mysql-slave:
    image: mysql:8.0
    container_name: mysql-slave
    ports:
      - "172.25.254.10:3307:3306"
    environment:
      MYSQL_ROOT_PASSWORD: root123
      MYSQL_ROOT_HOST: '%'
      TZ: Asia/Shanghai
    volumes:
      - ./slave-data:/var/lib/mysql
      - /etc/localtime:/etc/localtime:ro
    command:
      - --server-id=2
      - --gtid-mode=ON
      - --enforce-gtid-consistency=ON
      - --bind-address=0.0.0.0
      - --character-set-server=utf8mb4
      - --collation-server=utf8mb4_unicode_ci
      - --default-authentication-plugin=mysql_native_password
    networks:
      - mysql-net
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "mysqladmin", "-uroot", "-proot123", "ping"]
      interval: 10s
      timeout: 5s
      retries: 3

networks:
  mysql-net:
    driver: bridge

[root@mysql mysql]#

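Notes:

- --server-id=1: gives each MySQL instance a unique ID. Every server in a replication topology needs a different number: 1 on the primary, 2 on the replica.
- --gtid-mode=ON: enables GTID-based replication (GTID = global transaction identifier), so the replica can find its position automatically (MASTER_AUTO_POSITION=1 below).
- --enforce-gtid-consistency=ON: only allows statements that can be logged safely with GTIDs and rejects those that could make the replica diverge from the primary.
- networks: mysql-net: Compose creates a dedicated bridge network, and containers on it can reach each other by container name, which is why the replica can use MASTER_HOST='mysql-master'.

Validate the configuration: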

bash

[root@mysql mysql]# docker compose config

name: mysql

services:

mysql-master:

command:

- --server-id=1

- --gtid-mode=ON

- --enforce-gtid-consistency=ON

- --bind-address=0.0.0.0

- --character-set-server=utf8mb4

- --collation-server=utf8mb4_unicode_ci

- --default-authentication-plugin=mysql_native_password

container_name: mysql-master

environment:

MYSQL_ROOT_HOST: '%'

MYSQL_ROOT_PASSWORD: root123

TZ: Asia/Shanghai

healthcheck:

test:

- CMD

- mysqladmin

- -uroot

- -proot123

- ping

timeout: 5s

interval: 10s

retries: 3

image: mysql:8.0

networks:

mysql-net: null

ports:

- mode: ingress

host_ip: 172.25.254.10

target: 3306

published: "3306"

protocol: tcp

restart: unless-stopped

volumes:

- type: bind

source: /data/mysql/master-data

target: /var/lib/mysql

bind: {}

- type: bind

source: /etc/localtime

target: /etc/localtime

read_only: true

bind: {}

mysql-slave:

command:

- --server-id=2

- --gtid-mode=ON

- --enforce-gtid-consistency=ON

- --bind-address=0.0.0.0

- --character-set-server=utf8mb4

- --collation-server=utf8mb4_unicode_ci

- --default-authentication-plugin=mysql_native_password

container_name: mysql-slave

environment:

MYSQL_ROOT_HOST: '%'

MYSQL_ROOT_PASSWORD: root123

TZ: Asia/Shanghai

healthcheck:

test:

- CMD

- mysqladmin

- -uroot

- -proot123

- ping

timeout: 5s

interval: 10s

retries: 3

image: mysql:8.0

networks:

mysql-net: null

ports:

- mode: ingress

host_ip: 172.25.254.10

target: 3306

published: "3307"

protocol: tcp

restart: unless-stopped

volumes:

- type: bind

source: /data/mysql/slave-data

target: /var/lib/mysql

bind: {}

- type: bind

source: /etc/localtime

target: /etc/localtime

read_only: true

bind: {}

networks:

mysql-net:

name: mysql_mysql-net

driver: bridge

[root@mysql mysql]#

Start the containers:

bash

[root@mysql mysql]# docker compose up -d

[+] up 3/3

✔ Network mysql_default Created 0.0s

✔ Container mysql-slave Started 0.5s

✔ Container mysql-master Started 0.4s

[root@mysql mysql]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

802041c93246 mysql:8.0 "docker-entrypoint.s..." 10 seconds ago Up 10 seconds 0.0.0.0:3306->3306/tcp, [::]:3306->3306/tcp, 33060/tcp mysql-master

c99430680c8a mysql:8.0 "docker-entrypoint.s..." 10 seconds ago Up 10 seconds 33060/tcp, 0.0.0.0:3307->3306/tcp, [::]:3307->3306/tcp mysql-slave

[root@mysql mysql]#

4) Configure replication

bash

# Create the replication account on the primary

[root@mysql mysql]# docker exec mysql-master mysql -uroot -proot123 -e "CREATE USER 'repl'@'%' IDENTIFIED BY 'repl123'; GRANT REPLICATION SLAVE ON *.* TO 'repl'@'%'; FLUSH PRIVILEGES;"

mysql: [Warning] Using a password on the command line interface can be insecure.

# Point the replica at the primary (GTID auto-positioning)

[root@mysql mysql]# docker exec mysql-slave mysql -uroot -proot123 -e "

CHANGE MASTER TO

MASTER_HOST='mysql-master',

MASTER_USER='repl',

MASTER_PASSWORD='repl123',

MASTER_AUTO_POSITION=1,

GET_MASTER_PUBLIC_KEY=1;

START SLAVE;

"

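GET_MASTER_PUBLIC_KEY=1

MySQL 8.0's default authentication plugin, caching_sha2_password, requires the replica to fetch the primary's RSA public key when the replication connection is not encrypted. Because this compose file sets mysql_native_password as the default plugin, the repl account does not strictly need it, but the option does no harm.

5) Check that replication is running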
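To use this check in scripts or cron jobs rather than by eye, the two thread flags can be tested automatically. replication_ok is a hypothetical helper that reads SHOW SLAVE STATUS\G output on stdin and succeeds only when both Slave_IO_Running and Slave_SQL_Running are Yes:

```bash
#!/usr/bin/env bash
# replication_ok: read "SHOW SLAVE STATUS\G" output on stdin and succeed only
# when both the I/O thread and the SQL thread report "Yes".
replication_ok() {
    awk -F': ' '
        $1 ~ /Slave_IO_Running$/  { io  = $2 }   # the $ anchor skips Slave_SQL_Running_State
        $1 ~ /Slave_SQL_Running$/ { sql = $2 }
        END { exit !(io == "Yes" && sql == "Yes") }'
}

# Usage with the containers from this guide:
#   docker exec mysql-slave mysql -uroot -proot123 -e "SHOW SLAVE STATUS\G" 2>/dev/null \
#       | replication_ok && echo "replication OK" || echo "replication BROKEN"
```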

bash

[root@mysql mysql]# ss -tlnp | grep 3306

LISTEN 0 4096 172.25.254.10:3306 0.0.0.0:* users:(("docker-proxy",pid=2261,fd=8))

[root@mysql mysql]# docker exec mysql-slave mysql -uroot -proot123 -e "show slave status\G" | grep Running

mysql: [Warning] Using a password on the command line interface can be insecure.

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Slave_SQL_Running_State: Replica has read all relay log; waiting for more updates

[root@mysql mysql]# docker exec mysql-slave mysql -uroot -proot123 -e "show slave status\G" | grep -E "(Running|Master)"

mysql: [Warning] Using a password on the command line interface can be insecure.

Master_Host: mysql-master

Master_User: repl

Master_Port: 3306

Master_Log_File: binlog.000003

Read_Master_Log_Pos: 197

Relay_Master_Log_File: binlog.000003

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Exec_Master_Log_Pos: 197

Master_SSL_Allowed: No

Master_SSL_CA_File:

Master_SSL_CA_Path:

Master_SSL_Cert:

Master_SSL_Cipher:

Master_SSL_Key:

Seconds_Behind_Master: 0

Master_SSL_Verify_Server_Cert: No

Master_Server_Id: 1

Master_UUID: f0a30015-275e-11f1-9ed9-46c3e1178669

Master_Info_File: mysql.slave_master_info

Slave_SQL_Running_State: Replica has read all relay log; waiting for more updates

Master_Retry_Count: 86400

Master_Bind:

Master_SSL_Crl:

Master_SSL_Crlpath:

Master_TLS_Version:

Master_public_key_path:

6) Read/write splitting test
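The test below only shows that a write on the primary appears on the replica; the splitting itself happens in the application (or a proxy), which sends writes to port 3306 and reads to port 3307. A minimal sketch of that routing rule, assuming the host ports from the compose file above; mysql_port_for_sql and run_sql are hypothetical names:

```bash
#!/usr/bin/env bash
# Hypothetical routing rule for read/write splitting: reads go to the replica
# (host port 3307), everything else to the primary (host port 3306).
MASTER_PORT=3306
SLAVE_PORT=3307

# mysql_port_for_sql SQL: print the port a statement should be sent to
mysql_port_for_sql() {
    local verb
    read -r verb _ <<< "$1"               # first word of the statement
    case ${verb^^} in                     # case-insensitive (bash 4+)
        SELECT|SHOW|DESCRIBE|EXPLAIN) echo "$SLAVE_PORT" ;;
        *)                            echo "$MASTER_PORT" ;;
    esac
}

# run_sql SQL: send SQL to the chosen instance on 172.25.254.10 (needs a mysql client)
run_sql() {
    mysql -h 172.25.254.10 -P "$(mysql_port_for_sql "$1")" -uroot -proot123 -e "$1"
}

# Usage: run_sql "INSERT INTO shop.user VALUES (1)"   # -> primary, port 3306
#        run_sql "SELECT * FROM shop.user"           # -> replica, port 3307
```

SELECT ... FOR UPDATE, and reads that must see a write committed a moment earlier, should still go to the primary, because the replica can lag behind it (Seconds_Behind_Master above).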

bash

[root@mysql ~]# docker exec mysql-master mysql -uroot -proot123 -e "create database shop; use shop; create table user(id int);"

mysql: [Warning] Using a password on the command line interface can be insecure.

[root@mysql ~]# docker exec mysql-slave mysql -uroot -proot123 -e "use shop; show tables;"

mysql: [Warning] Using a password on the command line interface can be insecure.

Tables_in_shop

user

[root@mysql ~]#

3. Cache service: deploy Redis primary/replica + Sentinel

1. Create the directories

bash

[root@mysql ~]# mkdir -p /data/redis/{master,slave,sentinel1,sentinel2,sentinel3}

cd /data/redis

[root@mysql redis]# ll

total 0
drwxr-xr-x 2 root root 6 Mar 27 14:40 master
drwxr-xr-x 2 root root 6 Mar 27 14:40 sentinel1
drwxr-xr-x 2 root root 6 Mar 27 14:40 sentinel2
drwxr-xr-x 2 root root 6 Mar 27 14:40 sentinel3
drwxr-xr-x 2 root root 6 Mar 27 14:40 slave
[root@mysql redis]#

2. Write docker-compose.yml

bash

[root@mysql redis]# vim docker-compose.yml

[root@mysql redis]# cat docker-compose.yml

services:
  redis-master:
    image: redis:6.2
    container_name: redis-master
    ports:
      - "172.25.254.10:6379:6379"   # bind to the internal IP
    volumes:
      - ./master:/data
    command: redis-server --appendonly yes --requirepass redis123 --masterauth redis123 --bind 0.0.0.0

  redis-slave:
    image: redis:6.2
    container_name: redis-slave
    ports:
      - "172.25.254.10:6380:6379"
    volumes:
      - ./slave:/data
    command: redis-server --appendonly yes --slaveof 172.25.254.10 6379 --requirepass redis123 --masterauth redis123 --bind 0.0.0.0

  sentinel1:
    image: redis:6.2
    container_name: sentinel1
    ports:
      - "172.25.254.10:26379:26379"
    volumes:
      - ./sentinel1:/data
    command: redis-sentinel /data/sentinel.conf

  sentinel2:
    image: redis:6.2
    container_name: sentinel2
    ports:
      - "172.25.254.10:26380:26379"
    volumes:
      - ./sentinel2:/data
    command: redis-sentinel /data/sentinel.conf

  sentinel3:
    image: redis:6.2
    container_name: sentinel3
    ports:
      - "172.25.254.10:26381:26379"
    volumes:
      - ./sentinel3:/data
    command: redis-sentinel /data/sentinel.conf

3. Generate the Sentinel configuration (monitoring the primary at 172.25.254.10:6379)
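The configuration below uses quorum 2 with three sentinels. The quorum is the number of sentinels that must agree the primary is unreachable before it is marked objectively down (ODOWN); the failover itself also needs a sentinel leader elected by a majority of the sentinels, or by quorum votes if the quorum is larger. A small sketch of that arithmetic; majority and check_sentinel_plan are hypothetical helpers:

```bash
#!/usr/bin/env bash
# majority N: smallest count that is more than half of N sentinels
majority() {
    echo $(( $1 / 2 + 1 ))
}

# check_sentinel_plan SENTINELS QUORUM: explain what the chosen quorum implies
check_sentinel_plan() {
    local n=$1 quorum=$2 need
    if (( quorum > n )); then
        echo "quorum $quorum > $n sentinels: the primary can never be marked ODOWN" >&2
        return 1
    fi
    need=$(majority "$n")
    if (( quorum > need )); then
        need=$quorum                      # leader election needs max(majority, quorum) votes
    fi
    echo "ODOWN needs $quorum agreeing sentinels; the failover leader needs $need of $n votes"
}

# Example: check_sentinel_plan 3 2
#   -> ODOWN needs 2 agreeing sentinels; the failover leader needs 2 of 3 votes
```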

bash

[root@mysql redis]# for i in 1 2 3; do

dir="/data/redis/sentinel$i"

mkdir -p $dir

cat > $dir/sentinel.conf << EOF

port 26379

dir /tmp

sentinel monitor mymaster 172.25.254.10 6379 2

sentinel down-after-milliseconds mymaster 5000

sentinel parallel-syncs mymaster 1

sentinel failover-timeout mymaster 10000

sentinel auth-pass mymaster redis123

bind 0.0.0.0

EOF

done

4. Start and check

bash

[root@mysql redis]# docker compose up -d

[+] up 6/6

✔ Network redis_default Created 0.0s

✔ Container redis-slave Started 0.6s

✔ Container sentinel2 Started 0.6s

✔ Container sentinel1 Started 0.5s

✔ Container sentinel3 Started 0.6s

✔ Container redis-master Started 0.5s

[root@mysql redis]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ec4c7357aab8 redis:6.2 "docker-entrypoint.s..." 15 seconds ago Up 14 seconds 6379/tcp, 172.25.254.10:26379->26379/tcp sentinel1

d8de6a4e6e52 redis:6.2 "docker-entrypoint.s..." 15 seconds ago Up 14 seconds 6379/tcp, 172.25.254.10:26380->26379/tcp sentinel2

8465647e60f5 redis:6.2 "docker-entrypoint.s..." 15 seconds ago Up 14 seconds 172.25.254.10:6379->6379/tcp redis-master

f1f8b30d791c redis:6.2 "docker-entrypoint.s..." 15 seconds ago Up 14 seconds 6379/tcp, 172.25.254.10:26381->26379/tcp sentinel3

b6eed000e3ba redis:6.2 "docker-entrypoint.s..." 15 seconds ago Up 14 seconds 172.25.254.10:6380->6379/tcp redis-slave

c7c4603b81cf mysql:8.0 "docker-entrypoint.s..." 2 hours ago Up 2 hours (healthy) 172.25.254.10:3306->3306/tcp, 33060/tcp mysql-master

fd581481637b mysql:8.0 "docker-entrypoint.s..." 2 hours ago Up 2 hours (healthy) 33060/tcp, 172.25.254.10:3307->3306/tcp mysql-slave

[root@mysql redis]#

5. Verify
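INFO output is a list of field:value lines ending in \r\n, so single values are easy to extract for scripted checks. redis_info_field is a hypothetical helper; the usage comment assumes the container name and password from the compose file above:

```bash
#!/usr/bin/env bash
# redis_info_field FIELD: print FIELD's value from Redis INFO output read on stdin.
# INFO lines end in \r\n, so carriage returns are stripped first; exit 1 if absent.
redis_info_field() {
    tr -d '\r' | awk -F: -v f="$1" '$1 == f { print $2; found = 1 } END { exit !found }'
}

# Usage:
#   docker exec redis-master redis-cli -a redis123 --no-auth-warning info replication \
#       | redis_info_field connected_slaves        # expect 1
```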

Redis replication check

bash

[root@mysql redis]# docker exec redis-master redis-cli -a redis123 info replication

Warning: Using a password with '-a' or '-u' option on the command line interface may not be safe.

# Replication

role:master

connected_slaves:1

slave0:ip=172.20.0.1,port=6379,state=online,offset=14183,lag=1

master_failover_state:no-failover

master_replid:736a6ec6338f36540290a0f80d488f771dc9f32b

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:14183

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:14183

[root@mysql redis]#

Sentinel checks

- Check that the sentinels are monitoring the primary

bash

[root@mysql redis]# docker exec sentinel1 redis-cli -p 26379 sentinel master mymaster

name

mymaster

ip

172.25.254.10

port

6379

runid

e3e783802269b22fae05e52b7bc3d3b3c66c7c67

flags

master

link-pending-commands

0

link-refcount

1

last-ping-sent

0

last-ok-ping-reply

738

last-ping-reply

738

down-after-milliseconds

5000

info-refresh

9686

role-reported

master

role-reported-time

382133

config-epoch

0

num-slaves

1

num-other-sentinels

2

quorum

2

failover-timeout

10000

parallel-syncs

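1

- Check the sentinel cluster: sentinel sentinels lists the other sentinels, so sentinel1 should report 2 entries (3 sentinels in total)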

bash

[root@mysql redis]# docker exec sentinel1 redis-cli -p 26379 sentinel sentinels mymaster

name

800f044f099138f1486b6bc7efdc2f39176c8df4

ip

172.20.0.6

port

26379

runid

800f044f099138f1486b6bc7efdc2f39176c8df4

flags

sentinel

link-pending-commands

0

link-refcount

1

last-ping-sent

0

last-ok-ping-reply

244

last-ping-reply

244

down-after-milliseconds

5000

last-hello-message

141

voted-leader

?

voted-leader-epoch

0

name

39a3b88a6d52990dd275870ac3cd6e926337bc1e

ip

172.20.0.4

port

26379

runid

39a3b88a6d52990dd275870ac3cd6e926337bc1e

flags

sentinel

link-pending-commands

0

link-refcount

1

last-ping-sent

0

last-ok-ping-reply

143

last-ping-reply

143

down-after-milliseconds

5000

last-hello-message

1985

voted-leader

?

voted-leader-epoch

0

[root@mysql redis]#

- Show the address of the current primary

bash

[root@mysql redis]# docker exec sentinel1 redis-cli -p 26379 SENTINEL get-master-addr-by-name mymaster

172.25.254.10

6379

- Check the sentinel log (confirm there are no errors)
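Sentinel logs +sdown when it considers an instance down and -sdown when the instance recovers, so an instance with a +sdown and no later -sdown is still unreachable; the last line of the log below is such an event for the replica. sentinel_unresolved_sdown is a hypothetical helper that reads log lines on stdin and reports those instances:

```bash
#!/usr/bin/env bash
# sentinel_unresolved_sdown: read sentinel log lines on stdin, print every instance
# whose latest sdown event is "+sdown", and exit 1 if there is at least one.
sentinel_unresolved_sdown() {
    awk '
        {
            for (i = 1; i <= NF; i++)
                if ($i == "+sdown" || $i == "-sdown") break
            if (i > NF) next                          # not an sdown event
            key = ""                                  # instance description after the event
            for (j = i + 1; j <= NF; j++) key = key " " $j
            if ($i == "+sdown") down[key] = 1
            else delete down[key]
        }
        END {
            bad = 0
            for (k in down) { print "still down:" k; bad = 1 }
            exit bad
        }'
}

# Usage: docker logs sentinel1 2>&1 | sentinel_unresolved_sdown && echo "no unresolved sdown"
```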

bash

[root@mysql redis]# docker logs sentinel1 | tail -20

1:X 27 Mar 2026 07:05:03.399 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

1:X 27 Mar 2026 07:05:03.399 # Redis version=6.2.21, bits=64, commit=00000000, modified=0, pid=1, just started

1:X 27 Mar 2026 07:05:03.399 # Configuration loaded

1:X 27 Mar 2026 07:05:03.399 * Increased maximum number of open files to 10032 (it was originally set to 1024).

1:X 27 Mar 2026 07:05:03.399 * monotonic clock: POSIX clock_gettime

1:X 27 Mar 2026 07:05:03.400 * Running mode=sentinel, port=26379.

1:X 27 Mar 2026 07:05:03.404 # Sentinel ID is 6466c4f8e7d5974029b6e8469da0eebcbaeff1d3

1:X 27 Mar 2026 07:05:03.404 # +monitor master mymaster 172.25.254.10 6379 quorum 2

1:X 27 Mar 2026 07:05:04.451 * +slave slave 172.20.0.1:6379 172.20.0.1 6379 @ mymaster 172.25.254.10 6379

1:X 27 Mar 2026 07:05:05.519 * +sentinel sentinel 39a3b88a6d52990dd275870ac3cd6e926337bc1e 172.20.0.4 26379 @ mymaster 172.25.254.10 6379

1:X 27 Mar 2026 07:05:05.559 * +sentinel sentinel 800f044f099138f1486b6bc7efdc2f39176c8df4 172.20.0.6 26379 @ mymaster 172.25.254.10 6379

1:X 27 Mar 2026 07:05:09.487 # +sdown slave 172.20.0.1:6379 172.20.0.1 6379 @ mymaster 172.25.254.10 6379

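[root@mysql redis]#

Note: the last log line (+sdown slave 172.20.0.1:6379) means the sentinels cannot reach the replica. The replica connects to the primary through the published port 172.25.254.10:6379, so the primary records it under the Docker bridge gateway address 172.20.0.1 and its internal port 6379 (see slave0 in the INFO output above), an address the sentinels cannot use. Adding --replica-announce-ip 172.25.254.10 --replica-announce-port 6380 to the redis-slave command makes the replica advertise an address the sentinels can reach.

4. High-availability load balancing: deploy LVS + Keepalived → HAProxy + Keepalived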

1. Environment setup

bash

[root@haproxy ~]# dnf install ipvsadm keepalived -y

Load the LVS kernel modules:

bash

[root@haproxy ~]# modprobe ip_vs          # LVS core module
modprobe ip_vs_rr       # round-robin scheduler
modprobe ip_vs_wrr      # weighted round-robin scheduler
modprobe ip_vs_sh       # source-hash scheduler (session persistence)
modprobe nf_conntrack   # connection tracking, so LVS forwarding works correctly

Load them permanently (at boot):

bash

[root@haproxy ~]# vim /etc/modules-load.d/ipvs.conf

[root@haproxy ~]# cat /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack

Configure the kernel parameters:

bash

[root@haproxy ~]# vim /etc/sysctl.conf

[root@haproxy ~]# cat /etc/sysctl.conf

# sysctl settings are defined through files in

# /usr/lib/sysctl.d/, /run/sysctl.d/, and /etc/sysctl.d/.

#

# Vendors settings live in /usr/lib/sysctl.d/.

# To override a whole file, create a new file with the same in

# /etc/sysctl.d/ and put new settings there. To override

# only specific settings, add a file with a lexically later

# name in /etc/sysctl.d/ and put new settings there.

#

# For more information, see sysctl.conf(5) and sysctl.d(5).

# LVS DR mode: avoid ARP conflicts so the VIP is not announced from the wrong interface

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

net.ipv4.conf.default.arp_ignore = 1

net.ipv4.conf.default.arp_announce = 2

# Enable IP forwarding: the core switch for LVS/HAProxy traffic forwarding

net.ipv4.ip_forward = 1

2. Configure Keepalived (LVS layer, VIP: 172.25.254.100)
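The architecture calls for a primary/standby pair, but this lab runs only the MASTER node. On a standby node the same keepalived.conf is normally reused with state BACKUP, a lower priority and a different router_id, while virtual_router_id and auth_pass stay identical so that both nodes join the same VRRP group. make_backup_conf is a hypothetical helper that derives such a file with sed; the default priority 90 is an assumption, any value below the master's 100 works:

```bash
#!/usr/bin/env bash
# make_backup_conf SRC DST [PRIORITY]: derive the BACKUP node's keepalived.conf
# from the MASTER file: "state MASTER" -> "state BACKUP", every "priority N" gets
# the lower value, and router_id is renamed. virtual_router_id is left untouched.
make_backup_conf() {
    local src=$1 dst=$2 prio=${3:-90}
    sed -E \
        -e 's/^([[:space:]]*)state[[:space:]]+MASTER/\1state BACKUP/' \
        -e "s/^([[:space:]]*)priority[[:space:]]+[0-9]+/\1priority ${prio}/" \
        -e 's/^([[:space:]]*)router_id[[:space:]]+.*/\1router_id LVS_BACKUP/' \
        "$src" > "$dst"
}

# Usage on the standby host, after copying the master's file over:
#   make_backup_conf keepalived.conf.master /etc/keepalived/keepalived.conf 90
```

A standby HAProxy node also needs net.ipv4.ip_nonlocal_bind = 1 before HAProxy can bind VIP addresses that the node does not currently hold.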

bash

[root@haproxy ~]# cp /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

Set interface to your actual NIC name (eth0 here, as ip a shows):

bash

[root@haproxy ~]# vim /etc/keepalived/keepalived.conf

[root@haproxy ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:c1:24:43 brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 172.25.254.20/24 brd 172.25.254.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fec1:2443/64 scope link noprefixroute

valid_lft forever preferred_lft forever

[root@haproxy ~]# cat /etc/keepalived/keepalived.conf

global_defs {

router_id LVS_MASTER

script_user root

enable_script_security

}

# LVS VIP

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.25.254.100/24 dev eth0 label eth0:vip1

}

}

# HAProxy VIP

vrrp_instance VI_2 {

state MASTER

interface eth0

virtual_router_id 52

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 2222

}

virtual_ipaddress {

172.25.254.200/24 dev eth0 label eth0:vip2

}

}

# LVS virtual server (forwards to the local host, 127.0.0.1)

virtual_server 172.25.254.100 80 {

delay_loop 6

lb_algo wrr

lb_kind DR

protocol TCP

persistence_timeout 50

real_server 127.0.0.1 80 {

weight 1

TCP_CHECK {

connect_timeout 3

}

}

}

3. Configure HAProxy (layer-7 load balancing)

1. Install and edit the configuration

bash

[root@haproxy ~]# dnf install haproxy -y

# Back up the default configuration

[root@haproxy ~]# cp /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak

Edit the configuration:
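Adding a web node to the backend only takes another server line with the same health-check parameters (check every 2000 ms, mark up after 2 successes, mark down after 3 failures). backend_server_lines is a hypothetical helper that prints these lines; 172.25.254.31 in the usage example is an assumed second web node, not part of this lab:

```bash
#!/usr/bin/env bash
# backend_server_lines IP...: print one HAProxy "server" line per web node,
# numbered web1, web2, ... with this guide's health-check settings.
backend_server_lines() {
    local i=1 ip
    for ip in "$@"; do
        printf '    server web%d %s:80 check inter 2000 rise 2 fall 3 weight 5\n' "$i" "$ip"
        i=$(( i + 1 ))
    done
}

# Usage: backend_server_lines 172.25.254.30 172.25.254.31
#   then paste the output under "backend web_servers" and reload HAProxy
```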

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

[root@haproxy ~]# cat /etc/haproxy/haproxy.cfg

global

log /dev/log local0

maxconn 4096

user haproxy

group haproxy

daemon

defaults

log global

mode http

option httplog

option dontlognull

timeout connect 5000

timeout client 50000

timeout server 50000

# Statistics page

listen stats

bind 172.25.254.200:8080

stats enable

stats uri /stats

stats auth admin:admin123

# Frontend entry point

frontend http_front

bind 172.25.254.100:80

bind 172.25.254.200:80

default_backend web_servers

# Backend web cluster (points to 172.25.254.30)

backend web_servers

balance roundrobin

option httpchk GET /health

server web1 172.25.254.30:80 check inter 2000 rise 2 fall 3 weight 5

Create HAProxy's working directory (logs go to syslog through log /dev/log local0):

bash

[root@haproxy ~]# mkdir -p /var/lib/haproxy

4. LVS DR mode: bind the VIP to the loopback interface lo (so the host accepts packets that LVS forwards to the VIP)

bash

[root@haproxy ~]# ifconfig lo:0 172.25.254.100 netmask 255.255.255.255 up

[root@haproxy ~]# ip route add 172.25.254.100 dev lo:0 2>/dev/null || true

[root@haproxy ~]# cat >> /etc/sysctl.conf << 'EOF'
# LVS DR mode: ARP suppression
net.ipv4.conf.all.arp_ignore = 1
net.ipv4.conf.all.arp_announce = 2
net.ipv4.conf.lo.arp_ignore = 1
net.ipv4.conf.lo.arp_announce = 2
net.ipv4.conf.eth0.arp_ignore = 1
net.ipv4.conf.eth0.arp_announce = 2
EOF

[root@haproxy ~]#

Reload all kernel parameters from /etc/sysctl.conf:

bash

[root@haproxy ~]# sysctl -p 2>/dev/null

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

net.ipv4.conf.default.arp_ignore = 1

net.ipv4.conf.default.arp_announce = 2

net.ipv4.ip_forward = 1

net.ipv4.conf.all.arp_ignore = 1

net.ipv4.conf.all.arp_announce = 2

net.ipv4.conf.lo.arp_ignore = 1

net.ipv4.conf.lo.arp_announce = 2

net.ipv4.conf.eth0.arp_ignore = 1

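net.ipv4.conf.eth0.arp_announce = 2

Set the lo and eth0 ARP parameters at runtime (sysctl -p above has already applied them, so this step only confirms the values):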

bash

[root@haproxy ~]# sysctl -w net.ipv4.conf.lo.arp_ignore=1 2>/dev/null

net.ipv4.conf.lo.arp_ignore = 1

[root@haproxy ~]# sysctl -w net.ipv4.conf.lo.arp_announce=2 2>/dev/null

net.ipv4.conf.lo.arp_announce = 2

[root@haproxy ~]# sysctl -w net.ipv4.conf.eth0.arp_ignore=1 2>/dev/null

net.ipv4.conf.eth0.arp_ignore = 1

[root@haproxy ~]# sysctl -w net.ipv4.conf.eth0.arp_announce=2 2>/dev/null

net.ipv4.conf.eth0.arp_announce = 2

[root@haproxy ~]#

5. Start and check

bash

[root@haproxy ~]# systemctl enable --now keepalived

[root@haproxy ~]# systemctl enable --now haproxy

Broadcast message from systemd-journald@haproxy (Fri 2026-03-27 19:22:31 CST):

haproxy[30607]: backend web_servers has no server available!

(This error is expected: the web tier has not been deployed yet.)

bash

[root@haproxy ~]#ip addr | grep 172.25.254

inet 172.25.254.100/32 scope global lo:0

inet 172.25.254.20/24 brd 172.25.254.255 scope global noprefixroute eth0

ipvsadm -Ln 2>/dev/null || echo "ipvsadm not installed or nothing to show"

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.254.100:80 wrr persistent 50

-> 172.25.254.20:80 Route 1 0 0

systemctl status haproxy --no-pager | head -5

● haproxy.service - HAProxy Load Balancer

Loaded: loaded (/usr/lib/systemd/system/haproxy.service; enabled; preset: disabled)

Active: active (running) since Fri 2026-03-27 19:22:31 CST; 24min ago

Process: 30603 ExecStartPre=/usr/sbin/haproxy -f $CONFIG -f $CFGDIR -c -q $OPTIONS (code=exited, status=0/SUCCESS)

Main PID: 30605 (haproxy)

[root@haproxy ~]#

[root@haproxy ~]# systemctl start keepalived

[root@haproxy ~]# ip addr | grep 172.25.254

inet 172.25.254.100/32 scope global lo:0

inet 172.25.254.20/24 brd 172.25.254.255 scope global noprefixroute eth0

inet 172.25.254.100/24 scope global secondary eth0:vip1

inet 172.25.254.200/24 scope global secondary eth0:vip2

[root@haproxy ~]#

5. Web cluster: deploy the Nginx + Tomcat cluster with Docker

1. Create the working directories and load the images

bash

[root@nginx ~]# mkdir -p /data/web/{nginx,apps}

[root@nginx ~]# cd /data/web/

[root@nginx web]# ll

total 0
drwxr-xr-x 2 root root 6 Mar 27 19:59 apps
drwxr-xr-x 4 root root 32 Mar 24 17:41 nginx

[root@nginx web]# mkdir -p nginx/conf.d

[root@nginx web]# mkdir -p nginx/html/static

[root@nginx web]#

cd

[root@nginx ~]# ll

total 228120
-rw-------. 1 root root 1000 Jan 14 18:32 anaconda-ks.cfg
-rw-r--r-- 1 root root 75176448 Mar 27 20:08 nginx-1.26.tar
-rw-r--r-- 1 root root 158410240 Mar 27 20:09 tomcat-9.tar
[root@nginx ~]# docker load -i nginx-1.26.tar
Loaded image: nginx:1.26
[root@nginx ~]# docker load -i tomcat-9.tar
Loaded image: tomcat:9

2. Create the Nginx configuration file

bash

[root@nginx web]# vim nginx/nginx.conf

[root@nginx web]# cat /data/web/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

keepalive_timeout 65;

server {

listen 80;

server_name localhost;

root /usr/share/nginx/html;

index index.html;

# Health check endpoint (polled by HAProxy)

location /health {

access_log off;

return 200 "healthy\n";

}

# Home page

location / {

root /usr/share/nginx/html;

try_files $uri $uri/ /index.html;

}

}

}

3. Create the static page

bash

[root@nginx web]# vim nginx/html/index.html

[root@nginx web]# cat nginx/html/index.html

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8">

<title>Enterprise Distributed E-commerce Platform</title>

<style>

body { font-family: Arial, sans-serif; text-align: center; margin-top: 50px; background: #f5f5f5; }

.container { background: white; padding: 40px; border-radius: 10px; box-shadow: 0 2px 10px rgba(0,0,0,0.1); display: inline-block; }

.status { color: #28a745; font-weight: bold; font-size: 24px; }

.info { color: #666; margin-top: 20px; }

.ip { font-family: monospace; background: #f8f9fa; padding: 5px 10px; border-radius: 4px; }

</style>

</head>

<body>

<div class="container">

<h1>🏢 Enterprise Distributed E-commerce Platform</h1>

<p class="status">● System running normally</p>

<div class="info">

<p>Web node: <span class="ip">172.25.254.30</span></p>

<p>Load balancer entry: <span class="ip">172.25.254.100</span></p>

<p>Data node: <span class="ip">172.25.254.10</span></p>

</div>

</div>

</body>

</html>

4. Write docker-compose.yml

bash

[root@nginx web]# vim docker-compose.yml

[root@nginx web]# cat docker-compose.yml

services:
  nginx:
    image: nginx:1.26
    container_name: nginx
    ports:
      - "80:80"   # publish on host port 80 so HAProxy (172.25.254.20) can reach 172.25.254.30:80
    volumes:
      - ./nginx/nginx.conf:/etc/nginx/nginx.conf:ro
      - ./nginx/html:/usr/share/nginx/html:ro
    depends_on:
      - tomcat
    networks:
      - web-net
    restart: unless-stopped

  tomcat:
    image: tomcat:9
    container_name: tomcat
    environment:
      - JAVA_OPTS=-server -Xms1g -Xmx2g -XX:+UseG1GC -Djava.security.egd=file:/dev/./urandom
      - TZ=Asia/Shanghai
    volumes:
      - ./apps:/usr/local/tomcat/webapps
    ports:
      - "127.0.0.1:8080:8080"
    networks:
      - web-net
    extra_hosts:
      - "mysql-master:172.25.254.10"
      - "mysql-slave:172.25.254.10"
      - "redis-master:172.25.254.10"
    restart: unless-stopped

networks:
  web-net:
    driver: bridge

5. Start and test

bash

[root@nginx ~]# cd /data/web/

[root@nginx web]# ll

total 4
drwxr-xr-x 2 root root 6 Mar 27 19:59 apps
-rw-r--r-- 1 root root 822 Mar 27 20:07 docker-compose.yml
drwxr-xr-x 4 root root 50 Mar 27 20:00 nginx

[root@nginx web]# docker compose up -d

[+] up 3/3

✔ Network web_web-net Created 0.0s

✔ Container tomcat Started 0.2s

✔ Container nginx Started 0.3s

[root@nginx web]#

bash

[root@nginx web]# curl -s http://172.25.254.30/health

healthy

[root@nginx web]# curl http://172.25.254.30

(the response is the index.html page from step 3)

6. Access test

bash

[root@haproxy ~]#

[root@haproxy ~]# ipvsadm -Ln    # the LVS DR rule should look like this

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.254.100:80 wrr persistent 50

-> 127.0.0.1:80 Route 1 0 0

# If the rule is missing, add it manually:

ipvsadm -A -t 172.25.254.100:80 -s wrr -p 50

ipvsadm -a -t 172.25.254.100:80 -r 127.0.0.1:80 -g -w 1

[root@haproxy ~]# curl http://172.25.254.100

(the response is the same index.html page, now reached through the VIP 172.25.254.100)

[root@haproxy ~]#

Opening http://172.25.254.100 in a browser shows the same page.

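IX. Project Highlights

- A complete enterprise-grade architecture, designed as a production-ready solution rather than a demo
- End-to-end high availability with no single point of failure (in the full 8-server layout)
- A flash-sale system that tackles the core high-concurrency problems
- Containerized deployment in line with modern operations practice
- Read/write splitting + caching, expected to improve performance by 10x or more (not benchmarked in this lab)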