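iFLYTEK virtual human official site (login page): https://passport.xfyun.cn/login

1. Obtain the key virtual-human credentials

Interface service ID: xxxxxxxxxxxxx

APPID: xxx

APIKey: xxxx

APISecret: xxxxx

Avatar ID: xxxxx

Vcn: xxxx

Background: xxxxxxxxxxxxxxx (the background key obtained after uploading an image resource)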

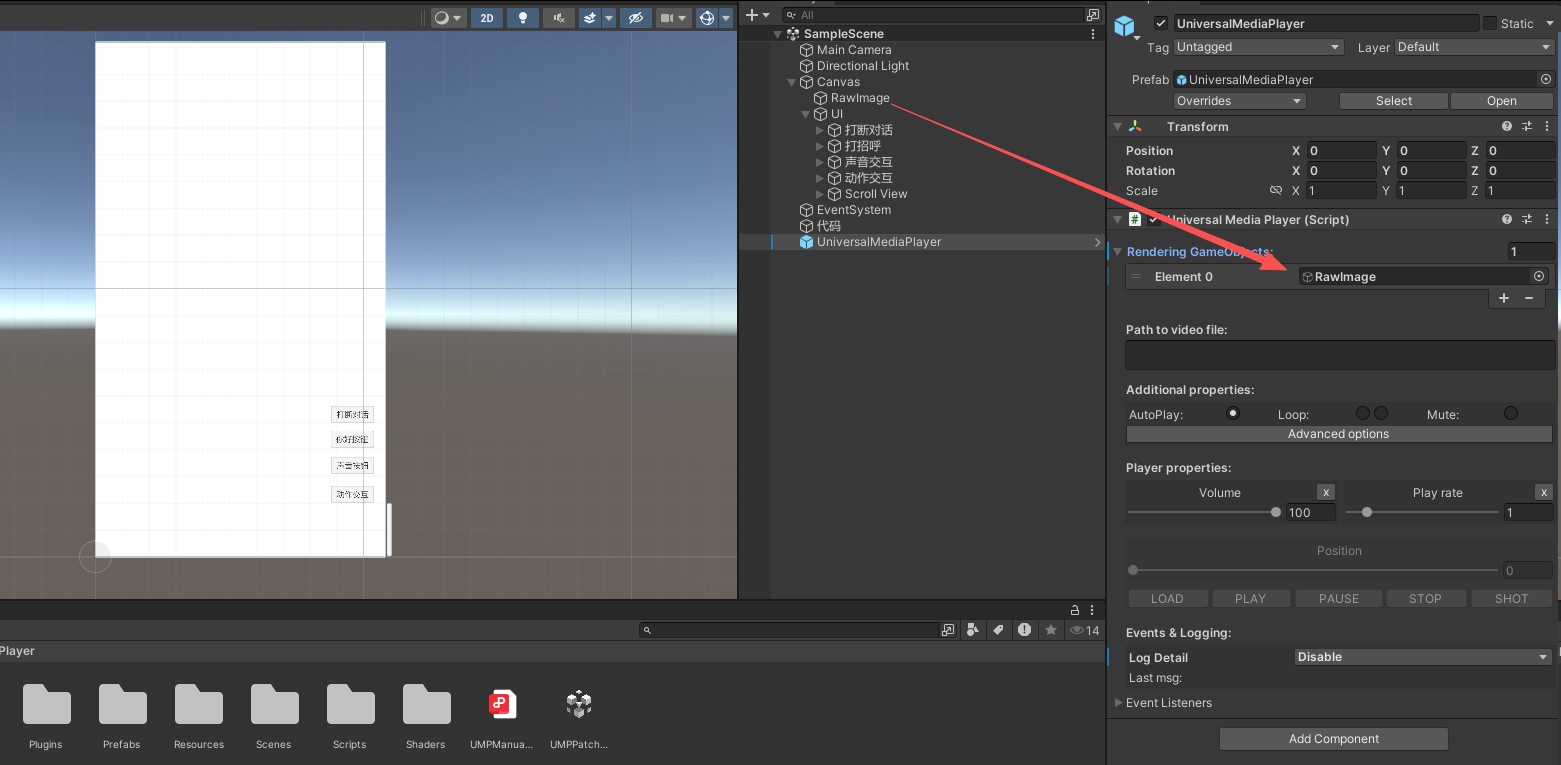
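Since a connection only succeeds when every value above is filled in, it can help to verify them before connecting. The following is a minimal sketch, assuming the values are supplied through environment variables; the class `AvatarCredentials` and the `XF_*` variable names are hypothetical and not part of the original project, which hard-codes the values in `AvatarMain`.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper (not part of the original project): checks that the
// credentials listed above have all been provided.
public static class AvatarCredentials
{
    // Hypothetical variable names chosen for this sketch.
    public static readonly string[] RequiredKeys =
    {
        "XF_SCENE_ID", "XF_APP_ID", "XF_API_KEY", "XF_API_SECRET",
        "XF_AVATAR_ID", "XF_VCN", "XF_BACKGROUND"
    };

    // Returns the names of the keys whose value is missing or blank.
    public static List<string> FindMissing(Func<string, string> lookup)
    {
        var missing = new List<string>();
        foreach (string key in RequiredKeys)
        {
            if (string.IsNullOrWhiteSpace(lookup(key)))
            {
                missing.Add(key);
            }
        }
        return missing;
    }

    public static void Main()
    {
        List<string> missing = FindMissing(Environment.GetEnvironmentVariable);
        Console.WriteLine(missing.Count == 0
            ? "All credentials present."
            : "Missing: " + string.Join(", ", missing));
    }
}
```

Passing the lookup as a delegate keeps the check independent of where the values actually come from (environment, a config file, or Unity inspector fields).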

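2. Import the UMP Pro Win Mac Linux WebGL 2.0.3 plugin to play the returned RTMP live stream (you have to source the plugin yourself; it can be found on Taobao)

Add the UniversalMediaPlayer prefab to the scene, then add a RawImage.

3. Required code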

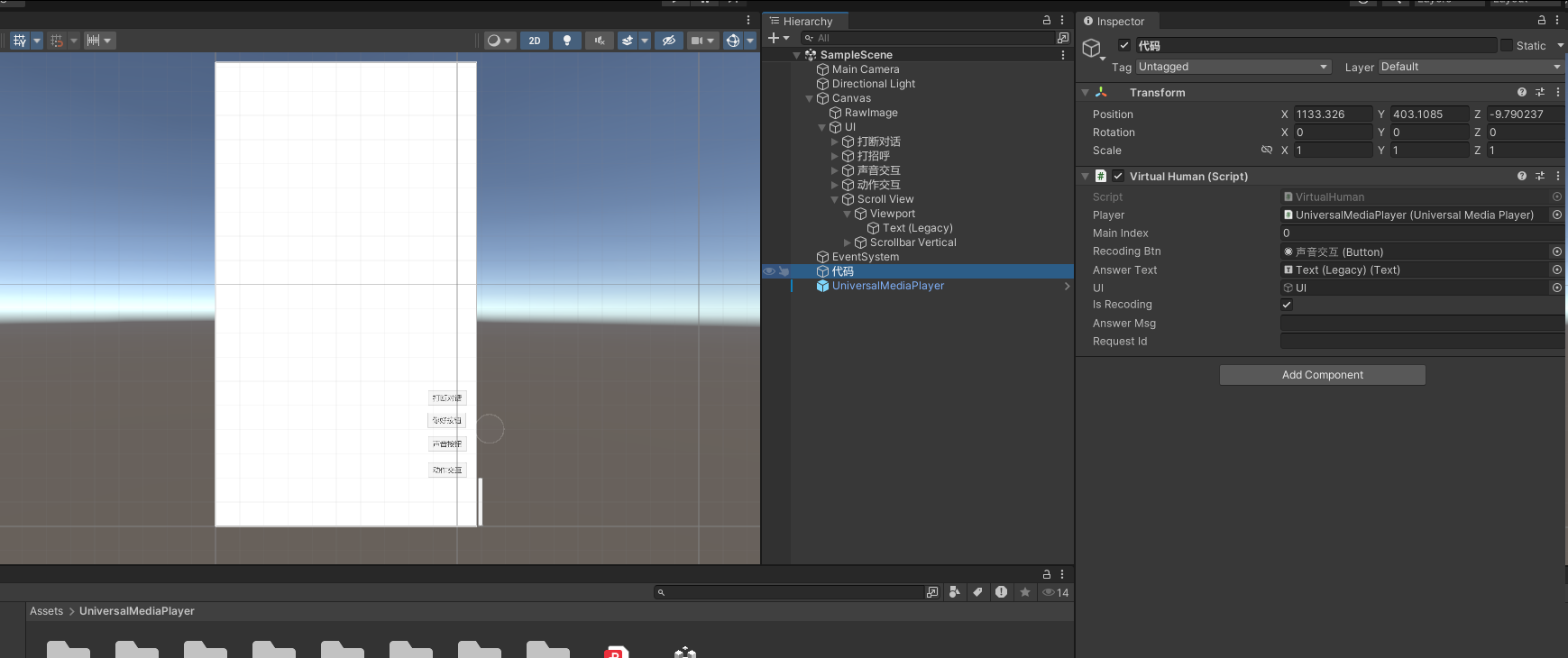

1) Add the VirtualHuman script to the scene. This is the only script that has to be added to the scene.
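The script that follows receives its data on background threads (the WebSocket callbacks) and applies it on Unity's main thread by polling a state flag in `Update()`. The same hand-off can be sketched with a queue of actions, shown here in plain C# so it runs outside Unity. `MainThreadQueue` is a hypothetical helper, not something the original project contains.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

// Hypothetical helper: background threads enqueue work, one owning thread drains it.
public sealed class MainThreadQueue
{
    private readonly ConcurrentQueue<Action> pending = new ConcurrentQueue<Action>();

    // Safe to call from any thread.
    public void Post(Action action) => pending.Enqueue(action);

    // Call from the owning thread (in Unity: from Update()).
    // Returns how many actions were executed.
    public int Drain()
    {
        int ran = 0;
        while (pending.TryDequeue(out Action action))
        {
            action();
            ran++;
        }
        return ran;
    }
}

public static class MainThreadQueueDemo
{
    public static void Main()
    {
        var queue = new MainThreadQueue();
        string answer = "";

        // Simulate two answer fragments arriving on a worker thread.
        Task.Run(() =>
        {
            queue.Post(() => answer += "Hello, ");
            queue.Post(() => answer += "world");
        }).Wait();

        queue.Drain();
        Console.WriteLine(answer); // prints "Hello, world"
    }
}
```

Compared with a single integer flag, a queue does not lose an update when two callbacks arrive between two frames.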


using AudioProcess;
using AvatarDemo;
using Newtonsoft.Json.Linq;
using System;
using System.Collections;
using System.Collections.Generic;
using System.IO;
using System.Threading.Tasks;
using UMP;
using UnityEngine;
using UnityEngine.UI;

public class VirtualHuman : MonoBehaviour

{

/// <summary>

/// Media player that renders the live stream.

/// </summary>

public UniversalMediaPlayer player;

/// <summary>

/// State code polled in Update(): 0 = idle, 1 = a stream URL has arrived, 2 = the answer text changed.

/// </summary>

public int MainIndex = 0;

/// <summary>

/// Voice interaction button.

/// </summary>

public Button RecodingBtn;

public Text answerText;

public GameObject UI;

/// <summary>

/// True while idle (the next click starts recording); false while a recording is in progress.

/// </summary>

public bool isRecoding = true;

/// <summary>

/// Accumulated answer text.

/// </summary>

public string answerMsg;

public string requestId;

AudioRecorder recorder = new AudioRecorder();

private Coroutine recordingLive;

// State for real-time voice interaction

private Queue<byte[]> audioDataQueue = new Queue<byte[]>();

private bool isFirstAudioChunk = true;

private object queueLock = new object();

    public void Start()
    {
        UI.SetActive(false);
        answerMsg = "";
        MainIndex = 0;
        try
        {
            // Listen for the application-quit event
            Application.quitting += OnApplicationQuitting;
            // Subscribe to AvatarMain's static events
            AvatarMain.OnStreamUrlReceived += OnStreamUrlReceived;
            AvatarMain.OnAnswerReceived += OnAnswerReceived;
            // Run the connection logic on a background thread
            Task.Run(async () =>
            {
                try
                {
                    await AvatarMain.RunAsync();
                }
                catch (Exception e)
                {
                    Debug.LogError("Error in AvatarMain.RunAsync(): " + e.ToString());
                }
            });
        }
        catch (Exception e)
        {
            Debug.LogError("Error in VirtualHuman.Start(): " + e.ToString());
        }
    }

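    // Stream URL handed over from the WebSocket thread; applied in Update().
    private volatile string pendingStreamUrl;

    public void Update()
    {
        if (MainIndex == 1)
        {
            // Start playing the live stream. The player is configured here because
            // Unity components are generally not safe to touch from background threads.
            MainIndex = 0;
            player.Path = pendingStreamUrl;
            player.Play();
            //ChangeAction(1);
            UI.SetActive(true);
        }
        else if (MainIndex == 2)
        {
            // Reset the flag before reading the text so that an answer arriving
            // in between is picked up on the next frame instead of being lost.
            MainIndex = 0;
            answerText.text = answerMsg;
        }
    }

    /// <summary>
    /// Called when the live-stream URL arrives (on a background thread).
    /// </summary>
    /// <param name="streamUrl">URL of the live stream.</param>
    private void OnStreamUrlReceived(string streamUrl)
    {
        // The URL could also be shown in the UI or passed to other components here
        Debug.Log("Stream URL received: " + streamUrl);
        // Store the URL first, then raise the flag that Update() polls
        pendingStreamUrl = streamUrl;
        MainIndex = 1;
    }

    /// <summary>
    /// Called when a fragment of answer text arrives (on a background thread).
    /// </summary>
    /// <param name="answer">Answer text fragment.</param>
    private void OnAnswerReceived(string answer)
    {
        // Append first, then raise the flag, so Update() never displays stale text
        answerMsg = answerMsg + answer;
        MainIndex = 2;
    }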

    /// <summary>
    /// Sends the initial greeting.
    /// </summary>
    public void SayHellow(string names)
    {
        answerMsg = "";
        MainIndex = 0;
        // Text interaction: the literal below means "Hello, I am <names>"
        string greeting = "你好,我是" + names;
        JObject request = AvatarMain.BuildTextinteractRequest(greeting);
        if (AvatarMain.avatarWsUtilInstance != null)
        {
            AvatarMain.avatarWsUtilInstance.SendWebSocket(request);
        }
    }

    /// <summary>
    /// Interrupts the current dialogue.
    /// </summary>
    public void StopRequest()
    {
        answerMsg = "";
        MainIndex = 2;
        JObject request = AvatarMain.BuildResetRequest();
        if (AvatarMain.avatarWsUtilInstance != null)
        {
            AvatarMain.avatarWsUtilInstance.SendWebSocket(request);
        }
    }

    /// <summary>
    /// Switches the avatar to the action stored at the given index of AvatarMain.actionId.
    /// </summary>
    public void ChangeAction(int index)
    {
        // For example, a greeting gesture
        JObject actionChange = AvatarMain.BuildCmdRequest(AvatarMain.actionId[index]);
        if (AvatarMain.avatarWsUtilInstance != null)
        {
            AvatarMain.avatarWsUtilInstance.SendWebSocket(actionChange);
        }
    }

    /// <summary>
    /// Single-turn interaction: click handler for the record button.
    /// </summary>
    public void OnRecordingButtonClick()
    {
        if (isRecoding)
        {
            // Start recording
            Debug.Log("Recording started");
            recorder.onRecording += OnRecording;
            recorder.StartRecording();
            isRecoding = false;
            // Interrupt whatever the avatar is currently saying
            StopRequest();
        }
        else
        {
            // Stop recording
            Debug.Log("Recording stopped");
            recorder.StopRecording();
            recorder.onRecording -= OnRecording;
            isRecoding = true;
        }
    }

    /// <summary>
    /// Single-turn interaction: handles the recorded audio.
    /// </summary>
    /// <param name="data">Recorded audio data.</param>
    private void OnRecording(byte[] data)
    {
        answerMsg = "";
        MainIndex = 2;
        Debug.Log("Recorded data length: " + data.Length);
        // Upload on a background thread
        Task.Run(async () =>
        {
            try
            {
                await AvatarMain.RunAudio(data);
            }
            catch (Exception e)
            {
                Debug.LogError("Error in AvatarMain.RunAudio(): " + e.ToString());
            }
        });
    }

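    /// <summary>
    /// Click handler for the real-time interaction button.
    /// First click: start real-time voice interaction.
    /// Next click: end real-time voice interaction.
    /// </summary>
    public void OnRecordingLiveButtonClick()
    {
        if (isRecoding)
        {
            Debug.Log("Real-time voice interaction started");
            // Reset state
            isFirstAudioChunk = true;
            lock (queueLock)
            {
                audioDataQueue.Clear();
            }
            // Generate a new request ID
            requestId = Guid.NewGuid().ToString();
            Debug.Log("requestId: " + requestId);
            // Start recording (the recording callback is registered inside the coroutine)
            recorder.StartRecording();
            isRecoding = false;
            // Start the coroutine that uploads audio once per second
            Debug.Log("Starting StartRecorder coroutine");
            recordingLive = StartCoroutine(StartRecorder(requestId));
        }
        else
        {
            Debug.Log("Real-time voice interaction ended");
            recorder.StopRecording();
            isRecoding = true;
            // Stop the coroutine
            if (recordingLive != null)
            {
                StopCoroutine(recordingLive);
                recordingLive = null;
            }
            // StopCoroutine ends the coroutine before it reaches its own cleanup,
            // so the recording callback has to be removed here.
            recorder.onRecording -= OnRecordinglive;
            // Send the final frame (status=2)
            Task.Run(async () =>
            {
                try
                {
                    await AvatarMain.RunAudioLive(2, requestId, new byte[0]);
                    Debug.Log("Final frame of the real-time interaction sent");
                }
                catch (Exception e)
                {
                    Debug.LogError("Error sending final audio frame: " + e.ToString());
                }
            });
        }
    }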

    /// <summary>
    /// Real-time recording coroutine: records for one second at a time and uploads each slice.
    /// </summary>
    IEnumerator StartRecorder(string requestId)
    {
        Debug.Log("StartRecorder coroutine started, requestId: " + requestId);
        // Register the recording callback
        recorder.onRecording += OnRecordinglive;
        while (isRecoding == false)
        {
            Debug.Log("StartRecorder coroutine running, isRecoding: " + isRecoding);
            // Record for one second
            recorder.StartRecording();
            yield return new WaitForSeconds(1);
            recorder.StopRecording();

            // Merge everything the callback queued during that second
            byte[] audioData = null;
            lock (queueLock)
            {
                if (audioDataQueue.Count > 0)
                {
                    using (MemoryStream ms = new MemoryStream())
                    {
                        while (audioDataQueue.Count > 0)
                        {
                            byte[] chunk = audioDataQueue.Dequeue();
                            ms.Write(chunk, 0, chunk.Length);
                        }
                        audioData = ms.ToArray();
                    }
                }
            }

            // Upload the slice if anything was recorded
            if (audioData != null && audioData.Length > 0)
            {
                // status is 0 for the first slice of a session and 1 afterwards
                int status;
                if (isFirstAudioChunk)
                {
                    status = 0;
                    isFirstAudioChunk = false;
                }
                else
                {
                    status = 1;
                }
                // Upload on a background thread
                Task.Run(async () =>
                {
                    try
                    {
                        await AvatarMain.RunAudioLive(status, requestId, audioData);
                    }
                    catch (Exception e)
                    {
                        Debug.LogError("Error uploading audio: " + e.ToString());
                    }
                });
            }
            else
            {
                Debug.Log("No audio data was recorded");
            }
        }
        // Remove the recording callback
        recorder.onRecording -= OnRecordinglive;
        Debug.Log("StartRecorder coroutine finished");
    }

    /// <summary>
    /// Real-time recording callback: appends the data to the queue.
    /// </summary>
    private void OnRecordinglive(byte[] data)
    {
        if (data != null && data.Length > 0)
        {
            lock (queueLock)
            {
                audioDataQueue.Enqueue(data);
            }
        }
    }

    // Set once the session has been shut down, so quitting and destruction do not both send a stop request.
    private bool shutdownDone;

    private void OnApplicationQuitting()
    {
        Shutdown("OnApplicationQuitting");
    }

    private void OnDestroy()
    {
        // Unsubscribe so the static events do not keep calling into a destroyed component
        Application.quitting -= OnApplicationQuitting;
        AvatarMain.OnStreamUrlReceived -= OnStreamUrlReceived;
        AvatarMain.OnAnswerReceived -= OnAnswerReceived;
        Shutdown("OnDestroy");
    }

    /// <summary>
    /// Stops the ping timer, sends the stop request and closes the WebSocket.
    /// </summary>
    private void Shutdown(string caller)
    {
        if (shutdownDone)
        {
            return;
        }
        shutdownDone = true;
        try
        {
            // Stop the ping timer
            if (AvatarMain.pingTimer != null)
            {
                AvatarMain.pingTimer.Dispose();
                AvatarMain.pingTimer = null;
                Debug.Log(caller + ": ping timer stopped");
            }
            // Send the stop request
            if (AvatarMain.avatarWsUtilInstance != null)
            {
                var stopRequest = AvatarMain.BuildStopRequest();
                AvatarMain.avatarWsUtilInstance.SendWebSocket(stopRequest);
                Debug.Log(caller + ": stop request sent");
                // Close the WebSocket connection
                AvatarMain.avatarWsUtilInstance.CloseWebSocket();
            }
            else
            {
                Debug.LogWarning(caller + ": avatarWsUtilInstance is null, cannot send stop request");
            }
        }
        catch (Exception e)
        {
            Debug.LogError("Error in VirtualHuman." + caller + "(): " + e.ToString());
        }
    }

}

2) Code for connecting to the iFLYTEK endpoint: AuthUtil and AvatarWsUtil


using System;

using System.Collections.Generic;

using System.Linq;

using System.Net;

using System.Net.Http;

using System.Security.Cryptography;

using System.Text;

using UnityEngine;

namespace AvatarDemo

{

public class AuthUtil

{

public static string AssembleRequestUrl(string requestUrl, string apiKey, string apiSecret)

{

return AssembleRequestUrl(requestUrl, apiKey, apiSecret, "GET");

}

        private static string AssembleRequestUrl(string requestUrl, string apiKey, string apiSecret, string method)
        {
            // Convert the WebSocket URL for signing: ws becomes http, wss becomes https
            string httpRequestUrl = requestUrl.Replace("ws://", "http://").Replace("wss://", "https://");
            try
            {
                Uri url = new Uri(httpRequestUrl);
                // Current UTC time in RFC 1123 format
                string date = DateTime.UtcNow.ToString("r");
                string host = url.Host;
                // Build the string to sign: host, date and request line
                StringBuilder builder = new StringBuilder("host: ").Append(host).Append("\n").
                    Append("date: ").Append(date).Append("\n").
                    Append(method).Append(" " + url.PathAndQuery + " HTTP/1.1");
                // Compute the HMAC-SHA256 signature
                using (HMACSHA256 mac = new HMACSHA256(Encoding.UTF8.GetBytes(apiSecret)))
                {
                    byte[] digest = mac.ComputeHash(Encoding.UTF8.GetBytes(builder.ToString()));
                    string sha = Convert.ToBase64String(digest);
                    // Build the authorization value, Base64-encode it and append it to the request URL
                    string authorization = string.Format("hmac username=\"{0}\", algorithm=\"{1}\", headers=\"{2}\", signature=\"{3}\"", apiKey, "hmac-sha256", "host date request-line", sha);
                    string authBase = Convert.ToBase64String(Encoding.UTF8.GetBytes(authorization));
                    return string.Format("{0}?authorization={1}&host={2}&date={3}", requestUrl, WebUtility.UrlEncode(authBase), WebUtility.UrlEncode(host), WebUtility.UrlEncode(date));
                }
            }
            catch (Exception e)
            {
                // Keep the original exception as InnerException for diagnosis
                throw new Exception("assemble requestUrl error:" + e.Message, e);
            }
        }

        /// <summary>
        /// Computes the headers required for a signed request (HTTP interface).
        /// </summary>
        /// <param name="requestUrl">Request URL, e.g. 'http://rest-api.xfyun.cn/v2/iat'.</param>
        /// <param name="apiKey">API key.</param>
        /// <param name="apiSecret">API secret.</param>
        /// <param name="method">Request method: POST, GET, PATCH, DELETE, etc.</param>
        /// <param name="body">HTTP request body.</param>
        /// <returns>All headers that must be set when calling the API.</returns>
        public static Dictionary<string, string> AssembleRequestHeader(string requestUrl, string apiKey, string apiSecret, string method, byte[] body)
        {
            try
            {
                Uri url = new Uri(requestUrl);
                // Current UTC time in RFC 1123 format
                string date = DateTime.UtcNow.ToString("r");
                // Digest of the body (SHA-256)
                using (SHA256 sha256Hash = SHA256.Create())
                {
                    byte[] bytes = sha256Hash.ComputeHash(body ?? Array.Empty<byte>());
                    string digest = "SHA256=" + Convert.ToBase64String(bytes);
                    string host = url.Host;
                    // Uri.Port returns the scheme's default port when none is given,
                    // so only append the port when the URL specifies a non-default one.
                    if (!url.IsDefaultPort)
                    {
                        host = host + ":" + url.Port;
                    }
                    string path = url.PathAndQuery;
                    if (string.IsNullOrEmpty(path))
                    {
                        path = "/";
                    }
                    // Build the string to sign
                    StringBuilder builder = new StringBuilder().
                        Append("host: ").Append(host).Append("\n").
                        Append("date: ").Append(date).Append("\n").
                        Append(method).Append(" " + path + " HTTP/1.1").Append("\n").
                        Append("digest: " + digest);
                    Debug.Log("builder:" + builder);
                    // Compute the HMAC-SHA256 signature
                    using (HMACSHA256 mac = new HMACSHA256(Encoding.UTF8.GetBytes(apiSecret)))
                    {
                        byte[] signatureBytes = mac.ComputeHash(Encoding.UTF8.GetBytes(builder.ToString()));
                        string sha = Convert.ToBase64String(signatureBytes);
                        // Build the headers
                        string authorization = string.Format("hmac-auth api_key=\"{0}\", algorithm=\"{1}\", headers=\"{2}\", signature=\"{3}\"", apiKey, "hmac-sha256", "host date request-line digest", sha);
                        Dictionary<string, string> header = new Dictionary<string, string>();
                        header.Add("authorization", authorization);
                        header.Add("host", host);
                        header.Add("date", date);
                        header.Add("digest", digest);
                        return header;
                    }
                }
            }
            catch (Exception e)
            {
                // Keep the original exception as InnerException for diagnosis
                throw new Exception("assemble requestHeader error:" + e.Message, e);
            }
        }

}

}
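To make the signing steps in `AuthUtil.AssembleRequestUrl` easier to follow, here is a minimal standalone sketch of the same idea with the clock passed in, so the result is reproducible. The class name `SignedUrlSketch`, the host `example.com`, and the key and secret values are placeholders invented for this illustration; the string layout mirrors the listing above.

```csharp
using System;
using System.Net;
using System.Security.Cryptography;
using System.Text;

public static class SignedUrlSketch
{
    // Builds the three-line string that gets signed: host, date, request line.
    public static string BuildSignatureOrigin(Uri httpUrl, string date, string method)
    {
        return "host: " + httpUrl.Host + "\n" +
               "date: " + date + "\n" +
               method + " " + httpUrl.PathAndQuery + " HTTP/1.1";
    }

    // Signs the string with HMAC-SHA256 and appends authorization, host and date
    // to the WebSocket URL as query parameters.
    public static string Sign(string wsUrl, string apiKey, string apiSecret, DateTime utcNow)
    {
        var httpUrl = new Uri(wsUrl.Replace("wss://", "https://").Replace("ws://", "http://"));
        string date = utcNow.ToString("r"); // RFC 1123 format
        string origin = BuildSignatureOrigin(httpUrl, date, "GET");

        string signature;
        using (var mac = new HMACSHA256(Encoding.UTF8.GetBytes(apiSecret)))
        {
            signature = Convert.ToBase64String(mac.ComputeHash(Encoding.UTF8.GetBytes(origin)));
        }

        string authorization =
            $"hmac username=\"{apiKey}\", algorithm=\"hmac-sha256\", " +
            $"headers=\"host date request-line\", signature=\"{signature}\"";
        string authBase = Convert.ToBase64String(Encoding.UTF8.GetBytes(authorization));

        return wsUrl +
               "?authorization=" + WebUtility.UrlEncode(authBase) +
               "&host=" + WebUtility.UrlEncode(httpUrl.Host) +
               "&date=" + WebUtility.UrlEncode(date);
    }

    public static void Main()
    {
        // Placeholder endpoint and credentials, for illustration only.
        var fixedTime = new DateTime(2024, 1, 2, 3, 4, 5, DateTimeKind.Utc);
        Console.WriteLine(Sign("wss://example.com/v1/interact", "demo-key", "demo-secret", fixedTime));
    }
}
```

Because the date is part of the signed string, a signed URL is only meaningful for the moment it was generated, which is presumably why the listing re-signs on every connection.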


using System;

using System.Collections.Generic;

using System.Net.WebSockets;

using System.Text;

using System.Threading;

using System.Threading.Tasks;

using Newtonsoft.Json;

using Newtonsoft.Json.Linq;

using UnityEngine;

namespace AvatarDemo

{

public class AvatarWsUtil

{

// Delegate for receiving the live-stream URL

public delegate void StreamUrlReceivedHandler(string streamUrl);

// Event raised when the live-stream URL arrives

public event StreamUrlReceivedHandler OnStreamUrlReceived;

// Delegate for receiving answer text

public delegate void AnswerReceivedHandler(string answer);

// Event raised when answer text arrives

public event AnswerReceivedHandler OnAnswerReceived;

public ClientWebSocket webSocket;

private readonly AtomicBoolean isConnected = new AtomicBoolean(false);

// CountdownEvent (System.Threading) starts from an initial count, e.g. new CountdownEvent(5) to wait for five parallel downloads. Each task calls Signal() when it finishes, and a thread blocked in Wait() is released once all five signals have arrived.

private CountdownEvent countDownLatch;

private CountdownEvent connect;

public JObject jsonObject;

public static string vmr_status = "0";

public AvatarWsUtil()

{

}

public AvatarWsUtil(string requestUrl)

{

connect = new CountdownEvent(1);

Task.Run(async () => await ConnectAsync(requestUrl));

}

        /// <summary>
        /// Connects asynchronously, then runs the receive loop.
        /// </summary>
        /// <param name="requestUrl">Signed WebSocket URL.</param>
        private async Task ConnectAsync(string requestUrl)
        {
            webSocket = new ClientWebSocket();
            try
            {
                await webSocket.ConnectAsync(new Uri(requestUrl), CancellationToken.None);
                Debug.Log("WebSocket opened");
                isConnected.Value = true;
                connect.Signal();
                await ReceiveMessagesAsync();
            }
            catch (Exception ex)
            {
                Debug.LogError("WebSocket connection failed: " + ex.Message);
                // Release any thread blocked in StartJObject() so that a failed
                // connection does not leave it waiting forever.
                if (!connect.IsSet)
                {
                    connect.Signal();
                }
            }
        }

        /// <summary>
        /// Receive loop: reads messages until the socket closes and dispatches them.
        /// </summary>
        private async Task ReceiveMessagesAsync()
        {
            var buffer = new byte[1024 * 4];
            try
            {
                while (webSocket.State == WebSocketState.Open)
                {
                    WebSocketReceiveResult result;
                    // A message larger than the buffer arrives in several parts,
                    // so accumulate until EndOfMessage before parsing.
                    using (var message = new System.IO.MemoryStream())
                    {
                        do
                        {
                            result = await webSocket.ReceiveAsync(new ArraySegment<byte>(buffer), CancellationToken.None);
                            message.Write(buffer, 0, result.Count);
                        } while (!result.EndOfMessage && result.MessageType != WebSocketMessageType.Close);

                        if (result.MessageType == WebSocketMessageType.Text)
                        {
                            string text = Encoding.UTF8.GetString(message.ToArray());
                            if (!HandleTextMessage(text))
                            {
                                return;
                            }
                        }
                    }

                    if (result.MessageType == WebSocketMessageType.Close)
                    {
                        try
                        {
                            await webSocket.CloseAsync(WebSocketCloseStatus.NormalClosure, string.Empty, CancellationToken.None);
                        }
                        catch (Exception ex)
                        {
                            Debug.LogWarning("Error closing WebSocket: " + ex.Message);
                        }
                        isConnected.Value = false;
                        SignalLatch();
                    }
                }
            }
            catch (WebSocketException ex)
            {
                // Typically raised when the remote server closes the connection
                if (ex.Message.Contains("closed the WebSocket connection"))
                {
                    Debug.Log("WebSocket connection closed by remote server");
                }
                else
                {
                    Debug.LogWarning("WebSocket error: " + ex.Message);
                }
                isConnected.Value = false;
                SignalLatch();
            }
            catch (Exception ex)
            {
                Debug.LogWarning("Unexpected error in ReceiveMessagesAsync: " + ex.Message);
                isConnected.Value = false;
                SignalLatch();
            }
        }

        /// <summary>
        /// Handles one text message. Returns false when the server reported an error and the session was closed.
        /// </summary>
        private bool HandleTextMessage(string text)
        {
            Debug.Log("onMessage: " + text);
            JObject message = JObject.Parse(text);
            // Protocol header
            int code = (int)message["header"]["code"];
            if (code != 0)
            {
                OnEvent(1002, (string)message["header"]["message"], "server closed");
                return false;
            }
            // Payload, keyed by service alias
            JObject payload = message["payload"] as JObject;
            if (payload == null)
            {
                return true;
            }
            if (payload["avatar"] is JObject avatar)
            {
                HandleAvatarEvent(avatar, text);
            }
            else if (payload["nlp"] is JObject nlp)
            {
                // Answer text
                string answer = (string)nlp["answer"]?["text"];
                if (answer != null)
                {
                    Debug.Log("Answer text: " + answer);
                    OnAnswerReceived?.Invoke(answer);
                }
            }
            else if (payload["asr"] is JObject asr)
            {
                // Speech recognition text (the original checked the misspelled key "ars", so this branch never ran)
                string recognized = (string)asr["text"];
                if (recognized != null)
                {
                    Debug.Log("Recognized text: " + recognized);
                }
            }
            return true;
        }

        // Dispatches avatar events by their event_type field.
        private void HandleAvatarEvent(JObject avatar, string text)
        {
            string eventType = (string)avatar["event_type"];
            if (eventType == null)
            {
                return;
            }
            Debug.Log("eventType: " + eventType);
            switch (eventType)
            {
                case "stream_info":
                    Debug.Log("Stream info received: " + text);
                    string streamUrl = (string)avatar["stream_url"];
                    if (streamUrl != null)
                    {
                        Debug.Log("Stream URL received, start succeeded: " + streamUrl);
                        OnStreamUrlReceived?.Invoke(streamUrl);
                        // Release the caller waiting on the start latch so it can begin the heartbeat
                        SignalLatch();
                    }
                    break;
                case "stream_start":   // first-frame callback
                case "pong":           // heartbeat reply
                case "tts_duration":   // TTS duration
                case "driver_status":  // driver status
                case "action_status":  // action status (spelled "atcion_status" in the original)
                case "reset":          // interruption
                case "audit_result":   // audit result
                case "cmd":            // action command
                case "stop":           // session end
                default:
                    break;
            }
        }

        // Signals the pending latch, if there is one and it has not been signalled yet.
        private void SignalLatch()
        {
            CountdownEvent latch = countDownLatch;
            if (latch != null && latch.CurrentCount > 0)
            {
                try
                {
                    latch.Signal();
                }
                catch (Exception ex)
                {
                    Debug.LogWarning("Error signaling countDownLatch: " + ex.Message);
                }
            }
        }

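        public void StartJObject(JObject request, CountdownEvent countDownLatch)
        {
            this.countDownLatch = countDownLatch;
            // Block until the connection attempt has finished
            connect.Wait();
            if (!isConnected.Value)
            {
                // No connection: release the caller instead of letting it wait for a reply that cannot arrive
                if (!countDownLatch.IsSet)
                {
                    countDownLatch.Signal();
                }
                return;
            }
            SendWebSocket(request);
        }

        public void StartJObject(JObject request)
        {
            // Block until the connection attempt has finished
            connect.Wait();
            SendWebSocket(request);
        }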

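        // ClientWebSocket supports only one outstanding send at a time. This lock serializes
        // senders such as the ping timer and the audio upload tasks.
        private readonly SemaphoreSlim sendLock = new SemaphoreSlim(1, 1);

        /// <summary>
        /// Sends a request as a single text message.
        /// </summary>
        /// <param name="request">Request to send.</param>
        public void SendWebSocket(JObject request)
        {
            if (isConnected.Value && webSocket.State == WebSocketState.Open)
            {
                byte[] buffer = Encoding.UTF8.GetBytes(request.ToString());
                _ = SendSerializedAsync(buffer);
                Debug.Log("Request sent: " + JsonConvert.SerializeObject(request));
            }
            else
            {
                Debug.LogWarning("WebSocket not connected, cannot send message");
            }
        }

        // Waits for any send in progress, then sends the buffer; failures are logged rather than thrown.
        private async Task SendSerializedAsync(byte[] buffer)
        {
            await sendLock.WaitAsync().ConfigureAwait(false);
            try
            {
                await webSocket.SendAsync(new ArraySegment<byte>(buffer), WebSocketMessageType.Text, true, CancellationToken.None).ConfigureAwait(false);
            }
            catch (Exception ex)
            {
                Debug.LogWarning("WebSocket send failed: " + ex.Message);
            }
            finally
            {
                sendLock.Release();
            }
        }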

private void OnEvent(int code, string reason, string eventName)

{

Debug.Log("session " + eventName + " . code:" + code + ", reason:" + reason);

isConnected.Value = false;

// 只在countDownLatch不为null且计数大于0时才尝试Signal()

if (countDownLatch != null && countDownLatch.CurrentCount > 0)

{

try

{

countDownLatch.Signal();

}

catch (Exception ex)

{

Debug.LogWarning("Error signaling countDownLatch: " + ex.Message);

}

}

try

{

webSocket.CloseAsync((WebSocketCloseStatus)code, reason, CancellationToken.None);

}

catch (Exception ex)

{

Debug.LogError(eventName + " error." + ex.Message);

}

}

/// <summary>

/// Closes the connection.

/// </summary>

public void CloseWebSocket()

{

if (webSocket != null && webSocket.State == WebSocketState.Open)

{

webSocket.CloseAsync(WebSocketCloseStatus.NormalClosure, "", CancellationToken.None);

}

}

/// <summary>

/// Atomic boolean: thread-safe reads and writes via Interlocked.

/// </summary>

public class AtomicBoolean

{

private int _value;

public AtomicBoolean(bool initialValue = false)

{

_value = initialValue ? 1 : 0;

}

public bool Value

{

get => Interlocked.CompareExchange(ref _value, 0, 0) == 1;

set => Interlocked.Exchange(ref _value, value ? 1 : 0);

}

public bool Get() => Value;

public void Set(bool newValue) => Value = newValue;

}

}

}

3) Configuration of the key credentials and the integration code: AvatarMain
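The audio upload methods in the AvatarMain listing all follow one framing rule, stated in their comments: within one utterance the `status` values run 0, 1, 1, ..., 1, 2. The sketch below isolates that rule in plain C#. `AudioFraming` is a hypothetical helper written for this illustration, and it leaves the final frame's payload empty, whereas the listing sends a short Base64 block of zero bytes with the last frame.

```csharp
using System;
using System.Collections.Generic;

public static class AudioFraming
{
    // Splits PCM bytes into frames of at most chunkSize bytes and tags each frame:
    // status 0 for the first frame, 1 for the following ones.
    // A final empty frame with status 2 marks the end of the utterance.
    public static List<(int Status, byte[] Data)> Frame(byte[] pcm, int chunkSize)
    {
        if (pcm == null) throw new ArgumentNullException(nameof(pcm));
        if (chunkSize <= 0) throw new ArgumentOutOfRangeException(nameof(chunkSize));

        var frames = new List<(int Status, byte[] Data)>();
        int status = 0;
        for (int offset = 0; offset < pcm.Length; offset += chunkSize)
        {
            int size = Math.Min(chunkSize, pcm.Length - offset);
            var chunk = new byte[size];
            Array.Copy(pcm, offset, chunk, 0, size);
            frames.Add((status, chunk));
            status = 1; // only the first frame carries status 0
        }
        frames.Add((2, Array.Empty<byte>()));
        return frames;
    }

    public static void Main()
    {
        // One second of 16 kHz, 16-bit, mono audio is 32000 bytes,
        // which does not fit into a single 30 KB (30720-byte) chunk.
        var frames = Frame(new byte[32000], 30 * 1024);
        foreach (var frame in frames)
        {
            Console.WriteLine(frame.Status + ": " + frame.Data.Length + " bytes");
        }
        // prints "0: 30720 bytes", "1: 1280 bytes", "2: 0 bytes"
    }
}
```

The example size is chosen deliberately: a one-second slice already spans two chunks, so the status has to advance inside a single upload call as well as between calls.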


using Newtonsoft.Json.Linq;
using System;
using System.Collections.Generic;
using System.IO;
using System.Threading;
using System.Threading.Tasks;
using UnityEngine;

namespace AvatarDemo

{

public class AvatarMain : MonoBehaviour

{

public static string avatarUrl = "wss://avatar.cn-huadong-1.xf-yun.com/v1/interact";// endpoint URL, no need to change

public static string apiKey = "xxxxxxxxxxxxxx"; // from the interaction platform, Interface Service page

public static string apiSecret = "xxxxxxxxxxxxxx"; // from the interaction platform, Interface Service page

public static string appId = "xxxxxxxxxxxxxx"; // from the interaction platform, Interface Service page

public static string avatarId = "xxxxxxxxxxxxxx"; // from the interaction platform, Interface Service > Avatar List

public static string scene_id = "xxxxxxxxxxxxxx";// the interface service ID, from the interaction platform, Interface Service page

public static string TTE = "UTF8"; // for minority languages the value must be UNICODE

// Voice parameter. In the console, go to My Apps > Speech Synthesis and add a trial or purchased voice; its parameter value is shown once added. Using a voice that has not been added returns error 11200.

public static string VCN = "x4_yezi";// from the interaction platform, Interface Service > Voice List

public static string TEXT = "欢迎来到讯飞开放平台";

/// <summary>

/// Background (res_key of the uploaded image).

/// </summary>

public static string beijingData = "xxxxxxxxxxxxxx";

public static AvatarWsUtil avatarWsUtilInstance;

public static System.Threading.Timer pingTimer;

public static event AvatarWsUtil.StreamUrlReceivedHandler OnStreamUrlReceived;

public static event AvatarWsUtil.AnswerReceivedHandler OnAnswerReceived;

public static string requestId;// unique ID of a single request

public static List<string> actionId = new List<string>() { "xxxxxxxxxxxxxx"};

public static AvatarWsUtil avatarWsUtil;

public static void MainMethod()

{

Task.Run(async () => await RunAsync());

}

        /// <summary>
        /// Connects to the platform, sends the start request and starts the heartbeat.
        /// </summary>
        public static async Task RunAsync()
        {
            try
            {
                string requestUrl = AuthUtil.AssembleRequestUrl(avatarUrl, apiKey, apiSecret);
                // Always create a new instance so that the freshly signed URL is used
                avatarWsUtilInstance = new AvatarWsUtil(requestUrl);
                // Forward the instance events to the static events
                avatarWsUtilInstance.OnStreamUrlReceived += (streamUrl) =>
                {
                    OnStreamUrlReceived?.Invoke(streamUrl);
                };
                avatarWsUtilInstance.OnAnswerReceived += (msg) =>
                {
                    OnAnswerReceived?.Invoke(msg);
                };
                avatarWsUtil = avatarWsUtilInstance;
                // Send the start frame. The signed URL is not logged because it carries the authorization data.
                Debug.Log("Sending start request");
                CountdownEvent countDownLatch = new CountdownEvent(1);
                try
                {
                    avatarWsUtil.StartJObject(BuildStartRequest(), countDownLatch);
                }
                catch (Exception e)
                {
                    Debug.LogError("Error in avatarWsUtil.StartJObject(): " + e.ToString());
                }
                countDownLatch.Wait();
                // After start, send a ping every 5 seconds to keep the WebSocket connection alive
                pingTimer = new Timer(_ =>
                {
                    try
                    {
                        avatarWsUtil.SendWebSocket(BuildPingRequest());
                    }
                    catch (Exception e)
                    {
                        Debug.LogError("Error sending ping: " + e.ToString());
                    }
                }, null, 0, 5000);
            }
            catch (Exception ex)
            {
                Debug.LogError("Error in AvatarMain.RunAsync(): " + ex.ToString());
            }
            await Task.Delay(10);
        }

        /// <summary>
        /// Reads a local audio file and uploads it for single-turn interaction.
        /// </summary>
        /// <param name="fildPath">Path of the audio file.</param>
        public static async Task RunAudio(string fildPath)
        {
            // Frames are sent as audio_interact requests (see BuildAudioInteractRequest).
            // Within one utterance the status values run 0-1-1-...-1-2: 0 on the first frame, 1 in between, 2 on the last.
            // Do not make the chunks too small, otherwise playback feels choppy.
            // Task.Delay(100) paces the upload; an interval of 40-100 ms works best.
            string audioPath = fildPath;
            if (File.Exists(audioPath))
            {
                using (FileStream inputStream = new FileStream(audioPath, FileMode.Open, FileAccess.Read))
                {
                    byte[] bytes = new byte[1024 * 30];
                    int len = 0;
                    int status = 0;
                    string requestId = Guid.NewGuid().ToString();
                    while ((len = inputStream.Read(bytes, 0, bytes.Length)) != 0)
                    {
                        await Task.Delay(100);
                        byte[] data = new byte[len];
                        Array.Copy(bytes, 0, data, 0, len);
                        Debug.Log("status=" + status);
                        string audioData = Convert.ToBase64String(data);
                        avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(0, requestId, status, audioData));
                        status = 1;
                    }

await Task.Delay(100);

status = 2;

Debug.Log("status=" + status);

//补充最后一个status=2的尾帧。

avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(0, requestId, status, "AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA=="));

Debug.Log("音频上传完事!");

}

}

else

{

Debug.LogWarning("Audio file not found: " + audioPath);

}

}

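/// <summary>

/// Uploads recorded audio for single-turn interaction.

/// </summary>

/// <param name="data">Recorded audio data.</param>

/// <returns></returns>

public static async Task RunAudio(byte[] data)

{

// Frames are sent as audio_interact requests (see BuildAudioInteractRequest).

// Within one utterance the status values run 0-1-1-...-1-2: 0 on the first frame, 1 in between, 2 on the last.

// Do not make the chunks too small, otherwise playback feels choppy.

// Task.Delay(100) paces the upload; an interval of 40-100 ms works best.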

if (data == null || data.Length == 0)

{

Debug.LogWarning("Audio data is null or empty");

return;

}

int bufferSize = 1024 * 30; // 30KB per chunk

int len = 0;

int status = 0;

string requestId = Guid.NewGuid().ToString();

try

{

while (len < data.Length)

{

int chunkSize = Math.Min(bufferSize, data.Length - len);

if (chunkSize <= 0)

{

break;

}

byte[] chunk = new byte[chunkSize];

try

{

Array.Copy(data, len, chunk, 0, chunkSize);

}

catch (Exception ex)

{

Debug.LogError("Error copying audio data: " + ex.ToString());

Debug.LogError($"Data length: {data.Length}, Current position: {len}, Chunk size: {chunkSize}");

break;

}

await Task.Delay(100);

string audioData = Convert.ToBase64String(chunk);

Debug.Log("status=" + status);

avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(0,requestId, status, audioData));

len += chunkSize;

status = 1;

}

await Task.Delay(100);

status = 2;

Debug.Log("status=" + status);

// 补充最后一个status=2的尾帧

avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(0,requestId, status, "AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA=="));

Debug.Log("音频上传完事!");

}

catch (Exception ex)

{

Debug.LogError("Error in RunAudio: " + ex.ToString());

}

}

        /// <summary>
        /// Real-time voice interaction: uploads one slice of audio.
        /// </summary>
        /// <param name="status">Frame status: 0 for the first frame, 1 in between, 2 for the last.</param>
        /// <param name="requestId">Session ID.</param>
        /// <param name="data">Audio data.</param>
        public static async Task RunAudioLive(int status, string requestId, byte[] data)
        {
            // Frames are sent as full-duplex audio_interact requests (see BuildAudioInteractRequest).
            // Within one utterance the status values run 0-1-1-...-1-2: 0 on the first frame, 1 in between, 2 on the last.
            // Do not make the chunks too small, otherwise playback feels choppy.
            // Task.Delay(100) paces the upload; an interval of 40-100 ms works best.
            int bufferSize = 1024 * 30; // 30 KB per chunk
            int len = 0;
            try
            {
                if (status != 2)
                {
                    if (data == null || data.Length == 0)
                    {
                        Debug.LogWarning("Audio data is null or empty");
                        return;
                    }
                    while (len < data.Length)
                    {
                        int chunkSize = Math.Min(bufferSize, data.Length - len);
                        byte[] chunk = new byte[chunkSize];
                        Array.Copy(data, len, chunk, 0, chunkSize);
                        await Task.Delay(100);
                        string audioData = Convert.ToBase64String(chunk);
                        Debug.Log("status=" + status);
                        avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(1, requestId, status, audioData));
                        len += chunkSize;
                        // A slice larger than one chunk is split into several frames;
                        // only the very first frame of an utterance may carry status 0.
                        status = 1;
                    }
                }

else

{

await Task.Delay(100);

Debug.Log("status=" + status);

// 补充最后一个status=2的尾帧

avatarWsUtil.SendWebSocket(BuildAudioInteractRequest(1, requestId, status, "AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA=="));

Debug.Log("音频上传完事!");

}

}

catch (Exception ex)

{

Debug.LogError("Error in RunAudioLive: " + ex.ToString());

}

}

        /// <summary>
        /// Builds the start request.
        /// </summary>
        /// <returns>The start request.</returns>
        public static JObject BuildStartRequest()
        {
            JObject header = new JObject()
            {
                {"app_id", appId},
                {"ctrl", "start"}, // control command
                {"request_id", Guid.NewGuid().ToString()},
                {"scene_id", scene_id} // the "interface service ID" from the interaction platform's Interface Service page
            };
            JObject parameter = new JObject()
            {
                {
                    "avatar", new JObject()
                    {
                        {"avatar_id", avatarId}, // (required) authorized avatar resource ID, from Interface Service > Avatar List
                        {"width", 1080}, // video resolution: width
                        {"height", 1920}, // video resolution: height
                        {
                            "stream", new JObject()
                            {
                                {"protocol", "rtmp"}, // (required) video protocol: rtmp, xrtc, webrtc or flv; currently only xrtc supports a transparent background, together with alpha=1
                                {"fps", 25}, // (optional) frame rate, range 0-25; higher is smoother, the default 25 is fine
                                {"bitrate", 5000}, // (optional) video bitrate; higher is sharper but demands more of the network
                                {"alpha", 0} // (optional) transparent background, requires protocol=xrtc; 0 off, 1 on
                            }
                        }
                    }
                },
                {
                    "tts", new JObject()
                    {
                        {"speed", 50}, // speech rate: [0,100], default 50
                        {"vcn", VCN} // (required) authorized voice resource ID, from Interface Service > Voice List
                    }
                },
                {
                    "subtitle", new JObject() // note: subtitles are sent from the cloud, so the client cannot tell which character is being spoken, nor pause or resume
                    {
                        {"subtitle", 0}, // 0 off, 1 on
                        {"font_color", "#FF0000"}, // font color
                        {"font_size", 10}, // font size, range 1-10
                        {"position_x", 0}, // horizontal offset; must be sent together with width and height
                        {"position_y", 0}, // vertical offset; must be sent together with width and height
                        {"font_name", "mainTitle"}, // font; currently available:
                                                    // 'Sanji.Suxian.Simple', 'Honglei.Runninghand.Sim', 'Hunyuan.Gothic.Bold',
                                                    // 'Huayuan.Gothic.Regular', 'mainTitle'
                        {"width", 100}, // subtitle width
                        {"height", 100} // subtitle height
                    }
                }
            };
            JObject payload = new JObject()
            {
                {
                    "background", new JObject()
                    {
                        {"data", beijingData} // res_key of the image, obtained by uploading it under Asset Management > Background on the interaction platform
                    }
                }
            };
            JObject result = new JObject();
            result["header"] = header;
            result["parameter"] = parameter;
            result["payload"] = payload;
            return result;
        }

/// <summary>

/// Builds a text-driver request.

/// </summary>

/// <param name="text"></param>

/// <returns></returns>

public static JObject BuildTextRequest(string text)

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "text_driver"},

{"request_id", Guid.NewGuid().ToString()}

};

JObject parameter = new JObject()

{

{

"avatar_dispatch", new JObject()

{

{"interactive_mode", 0}

}

},

{

"tts", new JObject()

{

{"vcn", VCN}, // synthesis voice

{"speed", 50},

{"pitch", 50},

{"volume", 50}

}

},

{

"air", new JObject()

{

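{"air", 1}, // automatic actions, 0 off / 1 on; they only take effect when the interaction reaches the large model

// The Spark large model inserts actions according to context, and the avatar must support actions

{"add_nonsemantic", 1} // non-directional actions, 0 off / 1 on (takes effect together with nlp=true); the avatar performs gestures without a specific intent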

}

}

};

JObject payload = new JObject()

{

{

"text", new JObject()

{

{"content", text}

}

}

};

JObject result = new JObject();

result["header"] = header;

result["parameter"] = parameter;

result["payload"] = payload;

return result;

}

/// <summary>

/// Builds a heartbeat (keep-alive) request.

/// </summary>

/// <returns></returns>

public static JObject BuildPingRequest()

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "ping"},

{"request_id", Guid.NewGuid().ToString()}

};

JObject result = new JObject();

result["header"] = header;

return result;

}

/// <summary>

/// Builds a text-interaction request.

/// </summary>

/// <param name="text"></param>

/// <returns></returns>

public static JObject BuildTextinteractRequest(string text)

{

requestId = Guid.NewGuid().ToString();

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "text_interact"},

{"request_id", requestId}

};

JObject parameter = new JObject()

{

{

"tts", new JObject()

{

{"vcn", VCN},

{"speed", 50},

{"pitch", 50},

{

"audio", new JObject()

{

{"sample_rate", 16000}

}

}

}

},

{

"air", new JObject()

{

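{"air", 1}, // automatic actions, 0 off / 1 on; they only take effect when the interaction reaches the large model

// The Spark large model inserts actions according to context, and the avatar must support actions

{"add_nonsemantic", 1} // non-directional actions, 0 off / 1 on (takes effect together with nlp=true); the avatar performs gestures without a specific intent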

}

}

};

JObject payload = new JObject()

{

{

"text", new JObject()

{

{"content", text}

}

}

};

JObject result = new JObject();

result["header"] = header;

result["parameter"] = parameter;

result["payload"] = payload;

return result;

}

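/// <summary>

/// Builds an audio-driver request.

/// </summary>

/// <param name="request_id">ID of this interaction.</param>

/// <param name="status">Frame status: 0 for the first frame, 1 in between, 2 for the last.</param>

/// <param name="str">Base64-encoded audio.</param>

/// <returns></returns>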

public static JObject BuildAudioRequest(string request_id, int status, string str)

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "audio_driver"},

{"request_id",request_id}

};

JObject parameter = new JObject()

{

{

"avatar_dispatch", new JObject()

{

{"audio_mode", 0}

}

}

};

JObject payload = new JObject()

{

{

"audio", new JObject()

{

{"encoding", "raw"},

{"sample_rate", 16000},

{"channels", 1},

{"bit_depth", 16}, // Audio sample bit depth

{"status", status}, // Data status

{"seq", 1}, // Data sequence number

{"audio", str},// Base64-encoded audio

{"frame_size", 0} // Frame size

}

}

};

JObject result = new JObject();

result["header"] = header;

result["parameter"] = parameter;

result["payload"] = payload;

return result;

}

/// <summary>

/// Audio interaction protocol.

/// </summary>

/// <param name="full_duplex">Full-duplex flag.</param>

/// <param name="request_id">Interaction round ID.</param>

/// <param name="status">Audio status: 0 starts, 1 continues, 2 ends.</param>

/// <param name="str">Base64-encoded audio.</param>

/// <returns></returns>

public static JObject BuildAudioInteractRequest(int full_duplex, string request_id, int status, string str)

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "audio_interact"},

{"request_id", request_id}

};

JObject parameter = new JObject()

{

{

"asr", new JObject()

{

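{"full_duplex", full_duplex},// Full duplex (real-time interaction): interaction proceeds without pausing the voice input.

// Without full duplex, interaction starts only after the voice input finishes with status=2 (single-turn interaction).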

}

}

};

JObject payload = new JObject()

{

{

"audio", new JObject()

{

{"encoding", "raw"},

{"sample_rate", 16000},

{"channels", 1},

{"bit_depth", 16},

{"status", status}, // Within one audio stream the status values run 0-1-1-1-...-1-1-1-2: 0 starts, 1 continues, 2 ends.

{"seq", 1},

{"audio", str},// Base64-encoded audio

{"frame_size", 0}

}

}

};

JObject result = new JObject();

result["header"] = header;

result["parameter"] = parameter;

result["payload"] = payload;

return result;

}

/// <summary>

/// Switches the avatar's action.

/// </summary>

/// <param name="dongzuo">Action to trigger.</param>

/// <returns></returns>

public static JObject BuildCmdRequest(string dongzuo)

{

requestId = Guid.NewGuid().ToString();

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "cmd"},

{"request_id", requestId}

};

JObject payload = new JObject()

{

{

"cmd_text", new JObject()

{

{

"avatar", new JObject()

{

{"type", "action"},

{"value", dongzuo},

{"tb", 0 }// Trigger the action immediately

}

}

}

}

};

JObject result = new JObject();

result["header"] = header;

result["payload"] = payload;

return result;

}

/// <summary>

/// Reset (interrupt) protocol.

/// </summary>

/// <returns></returns>

public static JObject BuildResetRequest()

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "reset"},

{"request_id", Guid.NewGuid().ToString()}

};

JObject result = new JObject();

result["header"] = header;

return result;

}

/// <summary>

/// Stop protocol.

/// </summary>

/// <returns></returns>

public static JObject BuildStopRequest()

{

JObject header = new JObject()

{

{"app_id", appId},

{"ctrl", "stop"},

{"request_id", Guid.NewGuid().ToString()}

};

JObject result = new JObject();

result["header"] = header;

return result;

}

}

}

4) Utility code required for recording audio: AudioRecorder and LoadAudio

cs

using System;

using System.Collections;

using System.Collections.Generic;

using System.IO;

using System.Text;

using UnityEngine;

using UnityEngine.Networking;

namespace AudioProcess

{

public delegate void RecordingDelegate(byte[] data);

public delegate void ShowProblem(string msg);

public class AudioRecorder

{

AudioClip clip;

readonly int frequency = 16000;

readonly int maxsec = 10;

DateTime start_time;

public RecordingDelegate onRecording;

public ShowProblem onShow;

/// <summary>

/// Starts recording.

/// </summary>

/// <param name="index"></param>

public void StartRecording(int index = 0)

{

if (index >= Microphone.devices.Length)

{

onShow?.Invoke("Device not found");

#if UNITY_EDITOR

Debug.LogError("Device not found");

#endif

return;

}

clip = Microphone.Start(Microphone.devices[index], false, maxsec, frequency);

start_time = DateTime.Now;

}

/// <summary>

/// Stops recording.

/// </summary>

/// <param name="index"></param>

public void StopRecording(int index = 0)

{

if (index >= Microphone.devices.Length)

{

onShow?.Invoke("Device not found");

#if UNITY_EDITOR

Debug.LogError("Device not found");

#endif

return;

}

string device = Microphone.devices[index];

if (!Microphone.IsRecording(device))

{

onShow?.Invoke("Device is not recording, or the recording timed out");

#if UNITY_EDITOR

Debug.LogError("Device is not recording, or the recording timed out");

#endif

return;

}

Microphone.End(device);

using (MemoryStream stream = new MemoryStream())

{

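double sec = (DateTime.Now - start_time).TotalSeconds;

if (sec < 0.02f) return;

// Cap the sample count at what the clip actually holds.

int dataLength = Math.Min((int)(sec * frequency), clip.samples);

// Write the 44-byte WAV header first so it does not overwrite the audio samples.

WriteHeader(stream, clip, dataLength);

ClipToStream(stream, clip, dataLength);

// ToArray returns only the bytes written; GetBuffer would also include unused capacity.

byte[] data = stream.ToArray();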

onRecording?.Invoke(data);

}

}

public void ClipToStream(MemoryStream stream, AudioClip clip, int len)

{

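//Debug.Log("Length: " + len);

float[] samples = new float[len];

clip.GetData(samples, 0);

for (int i = 0; i < len; i++)

{

// Clamp to [-1, 1], then scale to a signed 16-bit sample.

byte[] sample = BitConverter.GetBytes((short)(Mathf.Clamp(samples[i], -1f, 1f) * 0x7FFF));

stream.Write(sample, 0, sample.Length);

}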

}

private void WriteHeader(MemoryStream stream, AudioClip clip, int dataLength)

{

int hz = clip.frequency;

int channels = clip.channels;

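// Data size in bytes (2 bytes per sample)

int dataSize = dataLength * 2;

// Total file size: 44-byte header plus the sample data, so that fileSize - 8 is the RIFF chunk size

int fileSize = 44 + dataSize;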

stream.Seek(0, SeekOrigin.Begin);

byte[] riff = Encoding.UTF8.GetBytes("RIFF");

stream.Write(riff, 0, 4);

byte[] chunkSize = BitConverter.GetBytes(fileSize - 8);

stream.Write(chunkSize, 0, 4);

byte[] wave = Encoding.UTF8.GetBytes("WAVE");

stream.Write(wave, 0, 4);

byte[] fmt = Encoding.UTF8.GetBytes("fmt ");

stream.Write(fmt, 0, 4);

byte[] subChunk1 = BitConverter.GetBytes(16);

stream.Write(subChunk1, 0, 4);

ushort one = 1;

byte[] audioFormat = BitConverter.GetBytes(one);

stream.Write(audioFormat, 0, 2);

byte[] numChannels = BitConverter.GetBytes(channels);

stream.Write(numChannels, 0, 2);

byte[] sampleRate = BitConverter.GetBytes(hz);

stream.Write(sampleRate, 0, 4);

byte[] byteRate = BitConverter.GetBytes(hz * channels * 2);

stream.Write(byteRate, 0, 4);

ushort blockAlign = (ushort)(channels * 2);

stream.Write(BitConverter.GetBytes(blockAlign), 0, 2);

ushort bps = 16;

byte[] bitsPerSample = BitConverter.GetBytes(bps);

stream.Write(bitsPerSample, 0, 2);

byte[] datastring = Encoding.UTF8.GetBytes("data");

stream.Write(datastring, 0, 4);

byte[] subChunk2 = BitConverter.GetBytes(dataSize);

stream.Write(subChunk2, 0, 4);

}

}

}
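The WAV layout that `WriteHeader` produces can be checked outside Unity. The following is a minimal sketch in plain C#, assuming 16-bit PCM input; the class name `WavSketch` and the method `Wrap` are hypothetical and are not part of the project code. It writes the 44-byte header first and then appends the samples.

```csharp
using System;
using System.IO;
using System.Text;

// Hypothetical helper for illustration only; not part of the original project.
public static class WavSketch
{
    // Wraps 16-bit PCM bytes in a canonical 44-byte RIFF/WAVE header.
    public static byte[] Wrap(byte[] pcm, int sampleRate, short channels)
    {
        if (pcm == null) throw new ArgumentNullException(nameof(pcm));
        const short bitsPerSample = 16;
        short blockAlign = (short)(channels * bitsPerSample / 8);

        using (var stream = new MemoryStream(44 + pcm.Length))
        using (var writer = new BinaryWriter(stream, Encoding.ASCII))
        {
            writer.Write(Encoding.ASCII.GetBytes("RIFF"));
            writer.Write(36 + pcm.Length);          // RIFF chunk size: file size minus 8
            writer.Write(Encoding.ASCII.GetBytes("WAVE"));
            writer.Write(Encoding.ASCII.GetBytes("fmt "));
            writer.Write(16);                       // fmt chunk size for PCM
            writer.Write((short)1);                 // audio format 1 = PCM
            writer.Write(channels);
            writer.Write(sampleRate);
            writer.Write(sampleRate * blockAlign);  // byte rate
            writer.Write(blockAlign);
            writer.Write(bitsPerSample);
            writer.Write(Encoding.ASCII.GetBytes("data"));
            writer.Write(pcm.Length);               // data chunk size
            writer.Write(pcm);                      // samples follow the header
            writer.Flush();
            return stream.ToArray();                // only the bytes actually written
        }
    }
}
```

For example, `WavSketch.Wrap(pcmBytes, 16000, 1)` would match the 16 kHz mono settings used by the recorder, where `pcmBytes` stands for whatever 16-bit sample buffer you already have.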

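The comments in `BuildAudioInteractRequest` describe the `status` sequence for one audio stream as 0-1-1-...-1-2. One way to produce that sequence from a recorded byte array is sketched below in plain C#. `AudioChunkSketch`, `Frame` and the 1280-byte frame size (40 ms of 16 kHz, 16-bit mono audio) are assumptions for illustration. Tagging a single-frame recording with status 2 is also an assumption; check the service documentation for that case.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only; not part of the original project.
public static class AudioChunkSketch
{
    // One frame of audio plus the status value to send with it.
    public readonly struct Frame
    {
        public readonly int Status;     // 0 = first, 1 = middle, 2 = last
        public readonly string Base64;  // Base64-encoded audio bytes

        public Frame(int status, string base64)
        {
            Status = status;
            Base64 = base64;
        }
    }

    // Splits audio into frames of at most frameSize bytes and tags them 0-1-...-1-2.
    // Assumption: if everything fits in one frame, that frame is tagged 2.
    public static List<Frame> Split(byte[] audio, int frameSize = 1280)
    {
        if (audio == null) throw new ArgumentNullException(nameof(audio));
        if (frameSize <= 0) throw new ArgumentOutOfRangeException(nameof(frameSize));

        var frames = new List<Frame>();
        for (int offset = 0; offset < audio.Length; offset += frameSize)
        {
            int count = Math.Min(frameSize, audio.Length - offset);
            bool isFirst = offset == 0;
            bool isLast = offset + count >= audio.Length;
            int status = isLast ? 2 : (isFirst ? 0 : 1);
            frames.Add(new Frame(status, Convert.ToBase64String(audio, offset, count)));
        }
        return frames;
    }
}
```

Each resulting frame could then be passed to `BuildAudioInteractRequest(full_duplex, request_id, frame.Status, frame.Base64)`, reusing the same `request_id` for the whole stream.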
cs

using System;

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.Networking;

namespace AudioProcess

{

public class LoadAudio : MonoBehaviour

{

public static LoadAudio _instance;

private void Awake()

{

if (_instance == null)

{

_instance = this;

}

else

{

Destroy(this.gameObject);

}

}

// Start is called before the first frame update

void Start()

{

}

/// <summary>

/// Loads an audio clip from an external URL.

/// </summary>

/// <param name="url"></param>

/// <param name="getClip"></param>

public void loadAudioClip(string url, GetAudio getClip, GetAudioError audioError)

{

StartCoroutine(GetAudioClip(url, getClip, audioError));

}

/// <summary>

/// Downloads the audio clip.

/// </summary>

/// <param name="url"></param>

/// <returns></returns>

private IEnumerator GetAudioClip(string url, GetAudio getClip, GetAudioError audioError)

{

using (var uwr = UnityWebRequestMultimedia.GetAudioClip(url, AudioType.WAV))

{

yield return uwr.SendWebRequest();

if (uwr.result == UnityWebRequest.Result.Success)

{

AudioClip clip = DownloadHandlerAudioClip.GetContent(uwr);

getClip?.Invoke(clip);

Debug.Log("Audio downloaded");

}

else

{

audioError?.Invoke(uwr.error);

Debug.LogError(uwr.error);

yield break;

}

}

}

/// <summary>

/// Converts an AudioClip to a byte[] of raw 32-bit float samples.

/// </summary>

/// <param name="clip"></param>

/// <returns></returns>

public byte[] ConvertAudioClipToByteArray(AudioClip clip)

{

float[] samples = new float[clip.samples * clip.channels];

clip.GetData(samples, 0);

// Copy the samples into a byte array

byte[] bytes = new byte[samples.Length * 4]; // each float sample occupies 4 bytes

Buffer.BlockCopy(samples, 0, bytes, 0, bytes.Length);

return bytes;

}

}

public delegate void GetAudio(AudioClip clip);

public delegate void GetAudioError(string msg);

}
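Note that `ConvertAudioClipToByteArray` returns 32-bit float bytes, whereas the audio payloads built earlier declare `bit_depth` 16. If those bytes were to be sent to the service, a conversion step would probably be needed. The following is a minimal sketch in plain C#; `Pcm16Sketch` and `FromFloats` are hypothetical names, and the sample range of [-1, 1] is an assumption that matches what `AudioClip.GetData` normally returns.

```csharp
using System;

// Hypothetical helper for illustration only; not part of the original project.
public static class Pcm16Sketch
{
    // Converts float samples in [-1, 1] to little-endian 16-bit PCM bytes.
    public static byte[] FromFloats(float[] samples)
    {
        if (samples == null) throw new ArgumentNullException(nameof(samples));

        byte[] bytes = new byte[samples.Length * 2];
        for (int i = 0; i < samples.Length; i++)
        {
            // Clamp first so out-of-range input cannot overflow the short.
            float clamped = Math.Max(-1f, Math.Min(1f, samples[i]));
            short value = (short)Math.Round(clamped * short.MaxValue);

            bytes[i * 2] = (byte)(value & 0xFF);            // low byte
            bytes[i * 2 + 1] = (byte)((value >> 8) & 0xFF); // high byte
        }
        return bytes;
    }
}
```

The output is half the size of the float buffer and can be Base64-encoded with `Convert.ToBase64String` before being placed in the `audio` field.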