RAG and Agent Performance Tuning: 5. Dynamic Chunking Strategies and Overlap to Improve RAG Recall

Gitee repository: https://gitee.com/agiforgagaplus/OptiRAGAgent


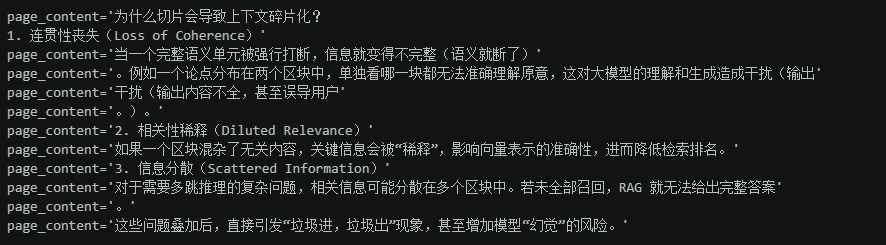

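Why fragmentation is a problem

- Loss of coherence: the meaning is cut off mid-thought, which hurts the LLM's understanding

- Diluted relevance: key information is diluted, so the chunk ranks lower in retrieval

- Scattered information: the facts needed for multi-step reasoning are split across chunks, so answers are incomplete

- Result: garbage in, garbage out, with a higher risk of hallucination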

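Strategy: dynamic chunking + overlap

Dynamic chunking: avoiding fragmentation at the source

Definition: intelligent, adaptive splitting that picks split points based on semantics, structure, and topic

Types:

- Content-aware

  - Semantic splitting: detect semantic breakpoints

  - Topic splitting: split by topic

  - Advantage: fewer cuts through the middle of an idea, better coherence

- Structure-aware

  - Layout-aware chunking: use the structural elements of PDF and HTML

  - Document-specific splitting: target a particular format, such as Markdown

  - Advantage: preserves the document structure

- Advanced adaptive

  - Agentic chunking: an LLM decides the chunk boundaries

  - Advantage: more flexible and intelligent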

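Overlap: a buffer for non-dynamic strategies

Core idea: adjacent chunks keep some repeated content, forming a sliding window

Effects:

- Preserves local semantics

- Mitigates information loss at chunk boundaries

- Improves recall

Recommendation: set the overlap to 10% to 20% of the chunk size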
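The sliding window can be sketched in a few lines of plain Python. This is a minimal illustration that assumes character-based lengths; the function name `chunk_with_overlap` and the `overlap_ratio` parameter are made up for this example and do not come from any library.

```python
def chunk_with_overlap(text: str, chunk_size: int, overlap_ratio: float = 0.15) -> list[str]:
    """Split text into fixed-size chunks whose neighbours share some characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap_ratio < 1:
        raise ValueError("overlap_ratio must be in [0, 1)")
    overlap = int(chunk_size * overlap_ratio)  # characters repeated between neighbours
    step = chunk_size - overlap                # how far the window slides each time
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the window has already reached the end of the text
    return chunks


sample = "abcdefghijklmnopqrstuvwxyz"
chunks = chunk_with_overlap(sample, chunk_size=10, overlap_ratio=0.2)
for chunk in chunks:
    print(chunk)
```

With a 20% ratio and a chunk size of 10, each chunk repeats the last two characters of the previous one, so a sentence cut at a boundary still appears partly in both neighbours.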

Combined effect

Dynamic chunking reduces fragmentation; overlap supplements it and reinforces contextual completeness

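Best practices

- Structured documents: prefer structure-aware chunking

- Plain text: semantic chunking is recommended (for example, LlamaIndex's SemanticSplitterNodeParser)

- Worth trying: the parent-child mode

- Overlap: enable it when needed and keep it within 10% to 20%

- Continuous optimization: build an evaluation system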

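Fixed-size chunking, the recursive method, and their improvements

Main problems

- Broken context: mechanically truncating text destroys its meaning

- Damaged semantic integrity: sentences inside a chunk are incomplete, which hurts matching precision and the quality of the LLM's answers

Improvement strategies

- Add overlap: adjacent chunks keep repeated content to ensure continuity

- Smart truncation: split at punctuation or at the end of a paragraph whenever possible

Tool in practice: LangChain's RecursiveCharacterTextSplitter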

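```python
# Newer LangChain releases also expose this class from the langchain_text_splitters package
from langchain.text_splitter import RecursiveCharacterTextSplitter

text_splitter = RecursiveCharacterTextSplitter(
    chunk_size=50,                # maximum chunk length, measured by length_function
    chunk_overlap=5,              # characters shared by adjacent chunks (10% here)
    length_function=len,
    separators=["\n", "。", ""],  # tried in priority order; "。" is the Chinese full stop
)

text = """..."""  # the text to process

texts = text_splitter.create_documents([text])
for doc in texts:
    print(doc)
```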

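Core advantage: it splits the document recursively with a prioritized list of separators, keeping the meaning intact as far as possible

How it works:

- Initial split: split the text with the first separator (for example, into paragraphs)

- Length check: if a piece is longer than chunk_size, split it with the next separator

- Recursion: keep trying the remaining separators in order until the length limit is met

- Merge: if adjacent small pieces still fit within chunk_size when combined, merge them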
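The four steps above can be sketched in plain Python. This is a simplified illustration of the idea, not LangChain's actual implementation: it ignores overlap and regex separators, and the function name `recursive_split` is an assumption made for this example.

```python
def recursive_split(text: str, separators: list[str], chunk_size: int) -> list[str]:
    """Split text so that every piece is at most chunk_size characters long."""
    if len(text) <= chunk_size:
        return [text] if text else []
    if not separators or separators[0] == "":
        # No usable separator left: fall back to a hard cut every chunk_size characters.
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

    sep, rest = separators[0], separators[1:]
    if sep not in text:
        return recursive_split(text, rest, chunk_size)

    # Initial split: cut on the current separator, keeping it attached to the piece before it.
    parts = text.split(sep)
    pieces = [part + sep for part in parts[:-1]] + [parts[-1]]

    chunks: list[str] = []
    current = ""
    for piece in pieces:
        if not piece:
            continue
        if len(piece) > chunk_size:
            # Length check failed: flush what we have, then recurse with the next separators.
            if current:
                chunks.append(current)
                current = ""
            chunks.extend(recursive_split(piece, rest, chunk_size))
        elif len(current) + len(piece) <= chunk_size:
            current += piece  # merge adjacent small pieces while they still fit
        else:
            chunks.append(current)
            current = piece
    if current:
        chunks.append(current)
    return chunks


text = "First paragraph. It has two sentences.\n\nSecond paragraph is short.\n\nThird."
chunks = recursive_split(text, separators=["\n\n", ". ", " "], chunk_size=30)
for chunk in chunks:
    print(repr(chunk))
```

Because separators stay attached to the piece before them, joining the chunks reproduces the input exactly, which makes the sketch easy to check.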

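Recommendations for choosing a splitting strategy

Choose by content type:

- Logically tight content: keep paragraphs intact as far as possible

- Semantically independent content: splitting by sentence is fine

Take the embedding model's characteristics into account:

- The model handles long text poorly: shorten the chunks accordingly

- The model is good at short text: split more finely, but keep the key context

Mind the LLM's input limit: control chunk length so the prompt does not exceed the model's maximum input

Keep experimenting and optimizing: there is no universal best practice, so test and build an evaluation system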

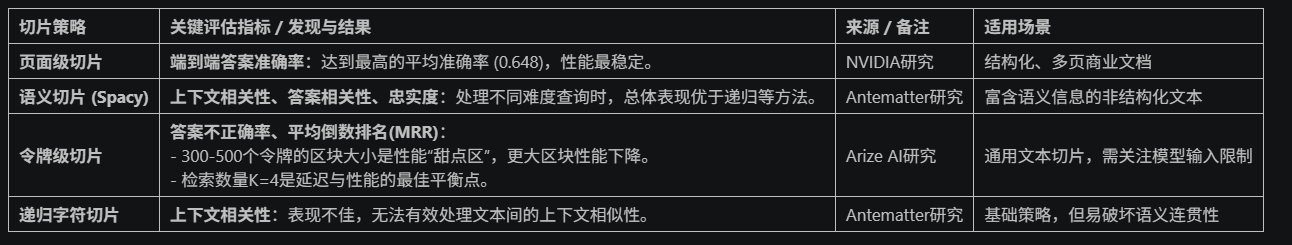

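Quantified impact of chunking strategy on RAG metrics

There is no universally best chunking strategy; the best choice depends on document type and query complexity

Trend: advanced RAG systems need dynamic chunking routing, choosing a strategy from document features (for example, a layout parser for PDF, a semantic splitter for TXT, a code splitter for PY)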
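A router of this kind can be as simple as a lookup from file extension to splitter. The sketch below is illustrative only: the three splitter functions are crude stand-ins written for this example (a real system would plug in a layout parser, a semantic splitter, and a code splitter), and it assumes the text has already been extracted from the file.

```python
import re
from pathlib import Path


def split_paragraphs(text: str) -> list[str]:
    """Stand-in for a layout-aware parser: one chunk per blank-line-separated block."""
    return [block.strip() for block in text.split("\n\n") if block.strip()]


def split_sentences(text: str) -> list[str]:
    """Stand-in for a semantic splitter: one chunk per sentence."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def split_python_defs(text: str) -> list[str]:
    """Stand-in for a code splitter: start a new chunk at each top-level def or class."""
    chunks, current = [], []
    for line in text.splitlines():
        if line.startswith(("def ", "class ")) and current:
            chunks.append("\n".join(current))
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current))
    return chunks


# Hypothetical routing table: file extension -> chunking strategy.
ROUTES = {
    ".pdf": split_paragraphs,
    ".html": split_paragraphs,
    ".txt": split_sentences,
    ".py": split_python_defs,
}


def route_and_split(filename: str, text: str) -> list[str]:
    """Pick a splitter from the file extension, defaulting to sentence splitting."""
    splitter = ROUTES.get(Path(filename).suffix.lower(), split_sentences)
    return splitter(text)


print(route_and_split("notes.txt", "One. Two! Three?"))
print(route_and_split("module.py", "import os\ndef a():\n    pass\ndef b():\n    pass"))
```

Keeping the routing table as data makes it easy to add a new document type without touching the dispatch logic.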

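The role and cost of overlap

Core role:

- Keeps references resolvable: local semantic continuity is maintained at chunk boundaries

- Improves retrieval accuracy: strengthens the links between information in neighboring chunks, raising recall and match quality

Costs and challenges:

- Higher storage cost: the vector database grows and index construction takes longer

- Higher compute cost: a larger index adds load on vector search and lengthens query latency

- Redundant information: heavily overlapping chunks waste the LLM's context window

- Recommendation: set the overlap to 10% to 20% of chunk_size and tune it by experiment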
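The storage cost can be estimated with simple arithmetic: with a sliding window, each extra chunk contributes only `chunk_size - overlap` new characters. The sketch below uses illustrative numbers chosen for this example (a 100,000-character document and 500-character chunks), not measurements.

```python
import math


def chunk_count(doc_len: int, chunk_size: int, overlap: int) -> int:
    """Number of sliding-window chunks needed to cover doc_len characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    if doc_len <= chunk_size:
        return 1
    step = chunk_size - overlap  # new characters contributed by each extra chunk
    return 1 + math.ceil((doc_len - chunk_size) / step)


doc_len, chunk_size = 100_000, 500  # illustrative numbers only
baseline = chunk_count(doc_len, chunk_size, overlap=0)
for ratio in (0.0, 0.1, 0.2, 0.5):
    n = chunk_count(doc_len, chunk_size, overlap=round(chunk_size * ratio))
    print(f"overlap {ratio:.0%}: {n} chunks, {n / baseline:.2f}x the vectors")
```

In this example a 10% to 20% overlap adds roughly 12% to 25% more vectors, while a 50% overlap about doubles the index, which is one reason to keep the ratio modest.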

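Dynamic chunking: LlamaIndex's SemanticSplitterNodeParser

Fixed-size chunking fragments context in a RAG system, which hurts both retrieval and generation quality. To address this, LlamaIndex provides SemanticSplitterNodeParser, a tool that splits text based on semantic understanding

Core mechanism

Its core idea: split dynamically at the points where the meaning of the text changes, rather than relying on fixed character counts or syntactic structure

Workflow:

- Sentence-level split: divide the document into sentences

- Group building: combine several consecutive sentences into a sentence group (controlled by buffer_size)

- Embedding generation: use the specified embedding model to produce a vector for each sentence group

- Semantic similarity: use cosine distance to measure the difference between the vectors of adjacent sentence groups

- Dynamic split decision: when the semantic difference exceeds the threshold (set by breakpoint_percentile_threshold), insert a split point there

This keeps each chunk semantically coherent and its information complete, which benefits downstream retrieval and generation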
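To make the workflow concrete, here is a self-contained sketch of percentile-based breakpoint detection. It is not LlamaIndex's code: the word-count `embed` function is a toy stand-in for a real embedding model, and the names `semantic_chunks`, `window`, and `threshold_pct` are assumptions made for this example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine_distance(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return 1.0 - (dot / norm if norm else 0.0)


def percentile(values: list[float], pct: float) -> float:
    """Percentile with linear interpolation between the two nearest ranks."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * pct / 100
    low, high = math.floor(rank), math.ceil(rank)
    return ordered[low] + (ordered[high] - ordered[low]) * (rank - low)


def semantic_chunks(sentences: list[str], window: int = 0, threshold_pct: float = 95) -> list[str]:
    """Split where the distance between adjacent sentence groups exceeds the percentile threshold."""
    if len(sentences) < 2:
        return [" ".join(sentences)] if sentences else []
    # Group each sentence with `window` neighbours on each side (0 = sentence by sentence).
    groups = [" ".join(sentences[max(0, i - window): i + window + 1]) for i in range(len(sentences))]
    vectors = [embed(group) for group in groups]
    distances = [cosine_distance(vectors[i], vectors[i + 1]) for i in range(len(vectors) - 1)]
    threshold = percentile(distances, threshold_pct)

    chunks, current = [], [sentences[0]]
    for i, distance in enumerate(distances):
        if distance > threshold:  # large semantic jump: close the current chunk here
            chunks.append(" ".join(current))
            current = []
        current.append(sentences[i + 1])
    chunks.append(" ".join(current))
    return chunks


sentences = [
    "AI models read medical images.",
    "AI models also speed up medical drug discovery.",
    "Mobile payments changed consumer payments.",
    "Blockchain reshapes cross-border payments.",
]
chunks = semantic_chunks(sentences, window=0, threshold_pct=90)
for chunk in chunks:
    print(chunk)
```

In this toy example the two healthcare sentences share words, as do the two payment sentences, so the only distance above the 90th percentile is the jump between the topics and the text splits into two chunks there.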

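Core parameters

embed_model (BaseEmbedding, required):

- The embedding model used to produce semantic vectors. It is the basis of the semantic comparison, and its quality directly determines chunking quality.

buffer_size (integer, default: 1):

- The number of sentences grouped together when evaluating semantic similarity.

- 1 compares sentence by sentence; a value above 1 treats several sentences as one unit, which takes wider context into account.

breakpoint_percentile_threshold (integer, default: 95):

- The percentile of cosine distance used as the threshold for a split point.

- Sensitivity control: a lower value (such as 80) is more sensitive to semantic change and produces more, smaller chunks; a higher value (such as 98) requires a very pronounced change before splitting and produces fewer, larger chunks.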

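```python
import os

from llama_index.core.node_parser import SemanticSplitterNodeParser
from llama_index.core.schema import Document
from llama_index.embeddings.openai import OpenAIEmbedding

# The OpenAI embedding model reads the API key from the environment
if not os.getenv("OPENAI_API_KEY"):
    raise RuntimeError("Set the OPENAI_API_KEY environment variable first")

# Sample text covering more than one topic
multi_theme_text = (
    "Artificial intelligence (AI) is transforming the healthcare industry. By analyzing medical images, "
    "AI algorithms can diagnose diseases such as cancer earlier and more accurately than human radiologists. "
    "AI also plays a key role in drug discovery: it can predict how effective a compound will be, "
    "which greatly shortens the time it takes to bring a new drug to market. "
    "Changing the subject, let's talk about financial technology (FinTech). Mobile payments have gone "
    "mainstream worldwide, and digital wallets and contactless payments have changed how people spend. "
    "Blockchain technology offers decentralized solutions for cross-border payments and asset tokenization, "
    "and could reshape the underlying architecture of the entire financial system."
)

# Build the document object
document = Document(text=multi_theme_text)

# Initialize the embedding model
embed_model = OpenAIEmbedding()

# Initialize the semantic splitter
splitter = SemanticSplitterNodeParser(
    buffer_size=1,
    breakpoint_percentile_threshold=90,
    embed_model=embed_model,
)

# Run the split
nodes = splitter.get_nodes_from_documents([document])

# Print the result
print(f"Semantic splitting produced {len(nodes)} nodes:")
for i, node in enumerate(nodes):
    print(f"--- Node {i + 1} ---")
    print(node.get_content())
    print("-" * 20)
```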

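Practical advice

1. Choosing the embedding model

- General text: OpenAI's `text-embedding-ada-002`.

- Specialized domains: a domain-pretrained model (such as `BioBERT`) or a fine-tuned model.

- Note: embedding quality directly affects chunking quality.

2. Setting buffer_size

- buffer_size=1: sentence-by-sentence comparison, suited to text with clear semantic boundaries.

- buffer_size>1: suited to long passages whose meaning shifts gradually.

3. Tuning split sensitivity

- High sensitivity (low threshold, such as 80): for fine-grained splitting, for example multi-hop question answering.

- Low sensitivity (high threshold, such as 98): for keeping whole paragraphs together.

SemanticSplitterNodeParser is the core of semantic chunking in LlamaIndex; it uses semantic understanding to avoid breaking context.

Recommended approach

- Prefer a high-quality embedding model adapted to the domain.

- Set `buffer_size` and `breakpoint_percentile_threshold` sensibly.

- Use visualization tools to guide tuning.

Effect: semantics-driven dynamic chunking can raise a RAG system's recall and generation quality, especially on complex text.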

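Beyond sentences: atomic retrieval with propositions

Traditional chunking struggles to give RAG both high precision and complete context. Proposition-based atomic retrieval addresses this:

- It embodies the small-to-big idea: retrieve at fine granularity to raise precision, then bring in the parent chunk to supply complete context

- Goal: improve retrieval precision and generation quality together

What is a proposition?

Definition: an atomic unit of text that carries one fact

Characteristics:

- Indivisible: it cannot be broken into smaller semantic units

- Self-contained: it explains a fact or concept on its own, without the surrounding context

- Natural language: it is concise and clear and needs no extra information

Role: high-precision propositions serve as the retrieval units; after a hit, the system traces back to the original parent chunk and gives the LLM the full context, so the generated answer is accurate and complete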

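TopicNodeParser: an LLM-driven regrouper for topic consistency

What it does:

- Uses an LLM to decompose paragraphs into propositions

- Regroups the propositions by topic consistency into new semantic chunks

Parameter: similarity_method selects whether an LLM or an embedding model judges topic relatedness

Use case: scenarios that demand very high retrieval precision and can afford high compute cost

Advantage: captures semantic boundaries precisely and analyzes complex text in depth

Cost: it depends on LLM inference, which is expensive, so it suits offline processing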

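DenseXRetrievalPack: an out-of-the-box proposition solution

Core flow

- Automatically extracts propositions for the knowledge-base nodes

- Builds a retriever dedicated to propositions

- Returns the original parent chunks related to the matched propositions for generation

Advantage: proposition-based retrieval can be deployed quickly, with no hand-written prompts or training

Architecture: LLM proposition extraction + vector index construction + recursive retrieval

Implementation details of proposition extraction

- Both LlamaIndex and LangChain have implementations.

- LlamaIndex generates propositions with the prompt provided in the Dense X Retrieval paper.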

```python
import json

from llama_index.core.prompts import PromptTemplate
from llama_index.llms.openai import OpenAI

# Few-shot prompt for proposition extraction
PROPOSITIONS_PROMPT = PromptTemplate(
    """Decompose the "Content" into clear and simple propositions, ensuring they are interpretable out of
context.
- Split compound sentence into simple sentences. Maintain the original phrasing from the input
whenever possible.
- For any named entity that is accompanied by additional descriptive information, separate this
information into its own distinct proposition.
- Decontextualize the proposition by adding necessary modifier to nouns or entire sentences
and replacing pronouns (e.g., "it", "he", "she", "they", "this", "that") with the full name of the
entities they refer to.
- Present the results as a list of strings, formatted in JSON.

Input: Title: Ēostre. Section: Theories and interpretations, Connection to Easter Hares. Content:
The earliest evidence for the Easter Hare (Osterhase) was recorded in south-west Germany in
1678 by the professor of medicine Georg Franck von Franckenau, but it remained unknown in
other parts of Germany until the 18th century. Scholar Richard Sermon writes that "hares were
frequently seen in gardens in spring, and thus may have served as a convenient explanation for the
origin of the colored eggs hidden there for children. Alternatively, there is a European tradition
that hares laid eggs, since a hare's scratch or form and a lapwing's nest look very similar, and
both occur on grassland and are first seen in the spring. In the nineteenth century the influence
of Easter cards, toys, and books was to make the Easter Hare/Rabbit popular throughout Europe.
German immigrants then exported the custom to Britain and America where it evolved into the
Easter Bunny."
Output: [ "The earliest evidence for the Easter Hare was recorded in south-west Germany in
1678 by Georg Franck von Franckenau.", "Georg Franck von Franckenau was a professor of
medicine.", "The evidence for the Easter Hare remained unknown in other parts of Germany until
the 18th century.", "Richard Sermon was a scholar.", "Richard Sermon writes a hypothesis about
the possible explanation for the connection between hares and the tradition during Easter", "Hares
were frequently seen in gardens in spring.", "Hares may have served as a convenient explanation
for the origin of the colored eggs hidden in gardens for children.", "There is a European tradition
that hares laid eggs.", "A hare's scratch or form and a lapwing's nest look very similar.", "Both
hares and lapwing's nests occur on grassland and are first seen in the spring.", "In the nineteenth
century the influence of Easter cards, toys, and books was to make the Easter Hare/Rabbit popular
throughout Europe.", "German immigrants exported the custom of the Easter Hare/Rabbit to
Britain and America.", "The custom of the Easter Hare/Rabbit evolved into the Easter Bunny in
Britain and America." ]

Input: {node_text}
Output:"""
)


def safe_json_loads(text: str) -> list:
    """Parse the LLM response as JSON, tolerating a surrounding code fence."""
    # Strip leading/trailing whitespace and invisible characters
    text = text.strip()
    # If the response is wrapped in a ```json code fence, remove the fence markers
    if text.startswith("```json"):
        text = text[7:]
    if text.endswith("```"):
        text = text[:-3]
    # Strip whitespace again after removing the fence
    text = text.strip()
    try:
        return json.loads(text)
    except json.JSONDecodeError as e:
        print(f"JSONDecodeError at position {e.pos}: {e}")
        return []


def extract_propositions(text: str, llm: OpenAI) -> list:
    """Use the LLM to extract the propositions contained in the text."""
    # Build the full prompt
    prompt = PROPOSITIONS_PROMPT.format(node_text=text)
    # Call the LLM
    response = llm.complete(prompt).text.strip()
    # Parse the response into a JSON list (empty list if parsing fails)
    propositions = safe_json_loads(response)
    if not propositions:
        print("No propositions parsed; raw response:", response)
    return propositions


# Initialize the LLM
llm = OpenAI(model="gpt-4o", temperature=0.1, max_tokens=750)

# Sample text for testing extract_propositions
test_text = "The Eiffel Tower is located in Paris and was built in 1889."

# Call the function and print the result
propositions = extract_propositions(test_text, llm)
print("Extracted propositions:")
print(propositions)
```

```python
import os

import nest_asyncio
from llama_index.core.llama_pack import download_llama_pack
from llama_index.core.readers import SimpleDirectoryReader

# Apply nest_asyncio to support the pack's asynchronous calls
nest_asyncio.apply()

os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_KEY"

# First run: download DenseXRetrievalPack
DenseXRetrievalPack = download_llama_pack("DenseXRetrievalPack", "./dense_pack")

# If you have already downloaded DenseXRetrievalPack, you can import it directly:
# from llama_index.packs.dense_x_retrieval import DenseXRetrievalPack

# Load documents
dir_path = "/Users/wilson/rag50_test"
documents = SimpleDirectoryReader(dir_path).load_data()

# Use the LLM to extract propositions from every document/node
dense_pack = DenseXRetrievalPack(documents)

response = dense_pack.run("In what year was the Eiffel Tower built?")
print(response)
```


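How it works internally

The core logic of DenseXRetrievalPack follows the small-to-big retrieval idea:

Step 1: base chunking (nodes)

- Uses SentenceSplitter to divide the documents into base text chunks (nodes), the units from which propositions are extracted.

Step 2: proposition extraction (sub-nodes)

- Calls the LLM with the predefined prompt to extract the propositions of each node asynchronously, turning them into sub-nodes.

- Keeps the mapping from each sub-node to its original node.

Step 3: building a mixed index

- Builds one vector index (VectorStoreIndex) over the original nodes together with the sub-nodes.

- Supports proposition-level retrieval (high precision) and chunk-level generation (complete context).

Step 4: recursive retrieval

- Retrieves with RecursiveRetriever:

  - Sub-propositions are searched first, for high-precision matching.

  - On a hit, the retriever traces back to the parent chunk, to keep the information complete.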
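The trace-back step can be illustrated without any library. The sketch below is a simplification under stated assumptions: it indexes only the propositions (the pack also indexes the original nodes), it scores with word overlap instead of vector similarity, and the names `SubNode`, `score`, and `retrieve_parents` are invented for this example.

```python
import re
from dataclasses import dataclass


@dataclass
class SubNode:
    text: str       # one proposition
    parent_id: str  # id of the chunk the proposition was extracted from


def tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def score(query: str, text: str) -> float:
    """Toy relevance: Jaccard overlap of word sets (a stand-in for vector similarity)."""
    q, t = tokens(query), tokens(text)
    return len(q & t) / len(q | t) if q | t else 0.0


def retrieve_parents(query: str, sub_nodes: list[SubNode], parents: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank propositions, then return their parent chunks without duplicates."""
    ranked = sorted(sub_nodes, key=lambda node: score(query, node.text), reverse=True)
    seen, results = set(), []
    for node in ranked[:top_k]:
        if node.parent_id not in seen:  # several hits may share one parent
            seen.add(node.parent_id)
            results.append(parents[node.parent_id])
    return results


parents = {
    "p1": "The Eiffel Tower is located in Paris. It was built in 1889.",
    "p2": "The Louvre is a museum in Paris. It houses the Mona Lisa.",
}
sub_nodes = [
    SubNode("The Eiffel Tower is located in Paris.", "p1"),
    SubNode("The Eiffel Tower was built in 1889.", "p1"),
    SubNode("The Louvre is a museum in Paris.", "p2"),
    SubNode("The Louvre houses the Mona Lisa.", "p2"),
]
result = retrieve_parents("When was the Eiffel Tower built?", sub_nodes, parents)
print(result)
```

Matching happens against the short, pronoun-free propositions, but the LLM receives the whole parent chunk, which is the point of the small-to-big design.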

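The value of proposition-based retrieval

Proposition-based retrieval is a frontier direction in RAG

- Breaks a traditional limit: the smallest unit is no longer a paragraph or a sentence

- Dual improvement: fine-grained splitting plus a context-enrichment mechanism raises retrieval precision and generation quality together

- Where it fits: especially knowledge-intensive tasks such as multi-hop question answering, legal document analysis, and scientific literature retrieval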

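Summary

Start from structure: prefer structure-aware strategies

Scenario: documents with a well-defined format

Approach: RAGFlow templates, LlamaIndex's HTMLNodeParser/TableNodeParser

Advantage: preserves the original meaning and structure and improves retrieval quality

For plain text, move toward semantics: use semantics-driven chunking

Scenario: unstructured text

Approach: LlamaIndex's SemanticSplitterNodeParser

Advantage: better contextual coherence and more precise retrieval of complex meaning

Embrace the small-to-big architecture: explore hierarchical retrieval

Scenario: tasks that need both precision and completeness (multi-hop question answering)

Approach: Dify's parent-child mode, LlamaIndex's SentenceWindowNodeParser, proposition-based retrieval

Advantage: small chunks for precision, large chunks for completeness; avoids depending on overlap

Treat overlap as a tactical supplement: use it in moderation rather than relying on it

Scenario: an auxiliary measure for fixed-size or recursive chunking

Approach: a 10%-20% overlap ratio, avoiding redundancy

Advantage: adds context under a simple strategy at a controllable cost

Build an evaluation system: validate and iterate continuously

Scenario: any RAG application, at launch and in operation

Approach: define metrics (recall, accuracy), run A/B tests, incorporate human feedback

Advantage: avoids blind choices, keeps improving, adapts to changing needs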
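As a starting point for such an evaluation, recall@k can be computed per query and averaged per strategy. The sketch below uses made-up chunk ids and relevance labels purely for illustration; in practice each chunking strategy produces its own chunks, so relevance has to be labeled per strategy.

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the relevant chunks that appear in the top-k retrieved results."""
    if not relevant_ids:
        return 0.0
    hits = len(set(retrieved_ids[:k]) & relevant_ids)
    return hits / len(relevant_ids)


def mean_recall(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved, relevant) pairs, one pair per query."""
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)


# Hypothetical retrieval results for two chunking strategies on the same two queries.
strategy_a = [(["c1", "c7", "c3"], {"c1", "c3"}), (["c9", "c2", "c4"], {"c5"})]
strategy_b = [(["c1", "c3", "c8"], {"c1", "c3"}), (["c5", "c2", "c4"], {"c5"})]

print("strategy A recall@3:", mean_recall(strategy_a, k=3))
print("strategy B recall@3:", mean_recall(strategy_b, k=3))
```

Running the same query set against each candidate strategy gives a simple A/B comparison that can later be complemented with answer-quality metrics and human feedback.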

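What chunking provides: high-quality data, the ammunition for advanced techniques such as hybrid retrieval, reranking, query transformation, and multi-stage retrieval

Goal: ensure the output of the chunking strategy lays a solid foundation for the whole RAG system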