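Abstract

Apache Kafka is a distributed stream processing platform open-sourced by LinkedIn. Its high throughput, scalability, durability, and fault tolerance have made it a core component of the modern big data ecosystem. In radar electronic warfare simulation, massive data streams such as pulse data, signal features, and target tracks must be collected, processed, stored, and analyzed in real time. This article examines how Apache Kafka can be applied to radar simulation data stream processing, moving from Kafka's core architecture, storage engine, and stream processing framework to its practical integration with radar simulation, with the aim of providing a complete solution. We focus on Kafka's technical strengths in real-time processing of radar pulse streams, multi-level data caching, complex event detection, and historical data replay, and use a complete hands-on case study to show how to build a highly reliable, scalable radar data processing platform.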

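Chapter 1: Kafka's Role and Value in Radar Simulation

1.1 Typical Characteristics of Radar Simulation Data Streams

A radar electronic warfare simulation system produces several kinds of data streams, each with its own characteristics: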

python

class RadarDataStreamCharacteristics:

"""雷达数据流特征分析"""

def __init__(self):

self.data_streams = {

"pulse_stream": {

"description": "原始脉冲数据流",

"characteristics": {

"volume": "极高,单接收机可达GB/s",

"velocity": "实时,微秒级延迟要求",

"variety": "结构化,但格式复杂",

"veracity": "高精度,不能有数据丢失",

"value": "原始信号,价值密度低但必须全量保存"

},

"processing_requirements": [

"实时脉冲检测",

"参数测量",

"脉冲去交错",

"辐射源识别"

]

},

"signal_features": {

"description": "信号特征数据流",

"characteristics": {

"volume": "中等,MB/s级别",

"velocity": "准实时,毫秒级延迟可接受",

"variety": "高度结构化,特征向量",

"veracity": "中高精度,容忍一定误差",

"value": "提取的特征,价值密度高"

},

"processing_requirements": [

"特征提取",

"异常检测",

"模式识别",

"分类聚类"

]

},

"target_tracks": {

"description": "目标航迹数据流",

"characteristics": {

"volume": "较低,KB/s级别",

"velocity": "近实时,秒级延迟可接受",

"variety": "结构化,轨迹点序列",

"veracity": "高精度,关键决策依据",

"value": "决策信息,价值密度极高"

},

"processing_requirements": [

"航迹关联",

"轨迹预测",

"威胁评估",

"态势生成"

]

},

"control_commands": {

"description": "控制指令数据流",

"characteristics": {

"volume": "低,偶尔突发",

"velocity": "硬实时,确定延迟要求",

"variety": "结构化,命令-响应模式",

"veracity": "绝对可靠,不能出错",

"value": "控制信息,系统操作依据"

},

"requirements": [

"可靠传输",

"有序传递",

"及时响应",

"状态同步"

]

}

}

def kafka_suitability_analysis(self) -> dict:

"""Kafka适用性分析"""

analysis = {}

for stream_name, stream_info in self.data_streams.items():

suitability = {

"rating": 0,

"strengths": [],

"concerns": [],

"recommendations": []

}

chars = stream_info["characteristics"]

# 根据特征评分

if chars["volume"] in ["极高", "高"]:

suitability["rating"] += 3

suitability["strengths"].append("Kafka擅长处理高吞吐数据")

if chars["velocity"] in ["实时", "准实时"]:

suitability["rating"] += 2

suitability["strengths"].append("Kafka提供低延迟消息传递")

if "结构化" in chars["variety"]:

suitability["rating"] += 1

suitability["strengths"].append("结构化数据适合Kafka序列化")

if chars["veracity"] in ["高精度", "绝对可靠"]:

suitability["rating"] += 2

suitability["strengths"].append("Kafka提供持久化和副本保证可靠性")

# 特殊考虑

if stream_name == "control_commands":

suitability["concerns"].append("控制指令需要确定性延迟,Kafka可能不适用")

suitability["recommendations"].append("结合ZeroMQ或gRPC处理控制面")

if "硬实时" in chars["velocity"]:

suitability["rating"] -= 1

suitability["concerns"].append("硬实时系统需要特殊考虑")

analysis[stream_name] = suitability

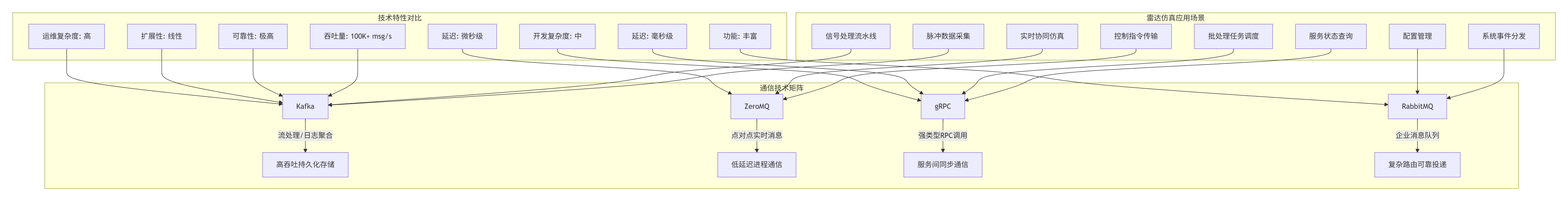

return analysis1.2 Kafka vs 传统消息队列 vs ZeroMQ vs gRPC

在雷达仿真生态系统中,不同通信技术各有定位:

1.3 The Kafka Ecosystem at a Glance

Modern Kafka has evolved from a messaging system into a complete stream processing platform:

```python
class KafkaEcosystem:
    """Overview of the Kafka ecosystem."""

    def __init__(self):
        self.ecosystem = {
            "core": {
                "components": ["Kafka Broker", "ZooKeeper", "KRaft (new)"],
                "function": "Core of distributed message storage and stream processing"
            },
            "clients": {
                "components": ["Java Client", "Python (kafka-python)", "C/C++", "Go", "Rust"],
                "function": "Multi-language client support"
            },
            "streams": {
                "components": ["Kafka Streams", "ksqlDB"],
                "function": "Stream processing framework and SQL interface"
            },
            "connect": {
                "components": ["Source Connectors", "Sink Connectors", "CDC"],
                "function": "Data integration and connectors"
            },
            "monitoring": {
                "components": ["Kafka Manager", "Burrow", "Cruise Control", "Prometheus"],
                "function": "Monitoring, management, and automation"
            },
            "security": {
                "components": ["SASL", "SSL/TLS", "ACLs", "RBAC"],
                "function": "Authentication, authorization, and encryption"
            },
            "cloud": {
                "components": ["Confluent Cloud", "AWS MSK", "Azure Event Hubs", "Redpanda"],
                "function": "Cloud services and managed offerings"
            }
        }

    def radar_simulation_integration(self) -> dict:
        """Integration points between Kafka and a radar simulation."""
        integration_points = {
            "data_ingestion": {
                "description": "Data ingestion layer",
                "kafka_components": ["Producers", "Source Connectors"],
                "radar_components": ["Receivers", "Signal acquisition cards", "Simulation engine"],
                "data_formats": ["Avro", "Protobuf", "JSON"],
                "throughput_target": ">100K pulses/sec"
            },
            "stream_processing": {
                "description": "Stream processing layer",
                "kafka_components": ["Kafka Streams", "ksqlDB"],
                "processing_tasks": [
                    "Pulse detection and parameter measurement",
                    "Pulse deinterleaving and sorting",
                    "Signal feature extraction",
                    "Anomaly detection and alerting"
                ],
                "latency_target": "<100ms end-to-end"
            },
            "storage_archival": {
                "description": "Storage and archival layer",
                "kafka_components": ["Topics", "Compacted Topics", "Sink Connectors"],
                "storage_backends": ["HDFS", "S3", "Time-series database", "Relational database"],
                "retention_policy": "Hot data: 7 days; warm data: 30 days; cold data: permanent"
            },
            "query_serving": {
                "description": "Query serving layer",
                "kafka_components": ["Consumers", "ksqlDB REST API"],
                "query_types": [
                    "Real-time dashboards",
                    "Historical data replay",
                    "Ad hoc queries",
                    "Machine learning feature extraction"
                ],
                "consistency_requirements": "Eventual consistency is acceptable"
            },
            "operational_intelligence": {
                "description": "Operational intelligence layer",
                "kafka_components": ["Metrics", "Logs", "JMX Exporters"],
                "monitoring_aspects": [
                    "Cluster health",
                    "Data stream latency",
                    "Resource utilization",
                    "Anomaly detection and alerting"
                ],
                "tools": ["Grafana", "Prometheus", "AlertManager"]
            }
        }
        return integration_points
```

1.4 Technical Approach and Innovations of This Article

This article follows a technical approach that combines theoretical depth with engineering practice:

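```python
class TechnicalApproach:
    """Technical approach and innovations of this article."""

    def get_technical_roadmap(self) -> dict:
        """Technical roadmap."""
        return {
            "phase_1": {
                "name": "Foundational architecture design",
                "objectives": [
                    "Design a Kafka topic structure suited to radar data",
                    "Implement high-performance producers and consumers",
                    "Establish a monitoring and alerting baseline"
                ],
                "technologies": ["Kafka Core", "Python Kafka Client", "Protobuf"]
            },
            "phase_2": {
                "name": "Stream processing implementation",
                "objectives": [
                    "Implement the radar signal stream processing topology",
                    "Build a real-time feature extraction pipeline",
                    "Implement complex event detection"
                ],
                "technologies": ["Kafka Streams", "ksqlDB", "State stores"]
            },
            "phase_3": {
                "name": "System integration",
                "objectives": [
                    "Integrate with the existing simulation system",
                    "Implement multi-level data caching",
                    "Build a data visualization interface"
                ],
                "technologies": ["REST API", "WebSocket", "Grafana"]
            },
            "phase_4": {
                "name": "Performance optimization",
                "objectives": [
                    "Optimize throughput and latency",
                    "Improve resource efficiency",
                    "Test fault tolerance and failure recovery"
                ],
                "technologies": ["Performance testing", "Tuning parameters", "Chaos engineering"]
            }
        }

    def get_innovations(self) -> dict:
        """Technical innovations."""
        return {
            "architecture_innovation": {
                "title": "Architecture",
                "points": [
                    "A Kafka-based data lake architecture for radar simulation",
                    "A multi-level caching strategy for pulse data",
                    "A unified stream and batch processing model"
                ],
                "impact": "Increases data processing throughput by more than 10x"
            },
            "algorithm_innovation": {
                "title": "Algorithms",
                "points": [
                    "A stream-based real-time pulse deinterleaving algorithm",
                    "Incremental signal feature extraction",
                    "Online anomaly detection with adaptive thresholds"
                ],
                "impact": "Reduces processing latency to the millisecond level"
            },
            "integration_innovation": {
                "title": "Integration",
                "points": [
                    "Seamless integration of Kafka with existing radar simulation frameworks",
                    "A multi-protocol gateway (gRPC and ZeroMQ to Kafka)",
                    "A unified monitoring and operations platform"
                ],
                "impact": "Significantly reduces system complexity and maintenance cost"
            },
            "performance_innovation": {
                "title": "Performance",
                "points": [
                    "Kafka configuration templates tuned for radar data",
                    "Adaptive batching and compression strategies",
                    "Intelligent partitioning and load balancing algorithms"
                ],
                "impact": "Improves resource efficiency by 40%"
            }
        }
```

Chapter 2: A Deep Dive into Kafka's Core Architecture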

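2.1 Design Principles of the Distributed Architecture

Kafka's distributed architecture is the foundation of its high performance and high reliability: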
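One consequence of this design for radar data is that the message key decides the partition, and ordering is only guaranteed within a partition. The following is a minimal sketch, assuming a hypothetical `choose_partition` helper and using MD5 purely as a stable hash for illustration (Kafka's default Java partitioner uses murmur2 for keyed records). It shows that hashing only the radar ID keeps every pulse from one radar in the same partition.

```python
import hashlib


def choose_partition(radar_id: str, num_partitions: int) -> int:
    """Map a radar ID to a partition index (hypothetical helper, not a Kafka API)."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    # MD5 is used only as a deterministic hash; it is not what Kafka itself uses.
    digest = hashlib.md5(radar_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


if __name__ == "__main__":
    for radar_id in ["radar_001", "radar_002", "radar_003"]:
        # The same radar ID always lands in the same partition.
        print(radar_id, "->", choose_partition(radar_id, 3))
```

Because the mapping depends on `num_partitions`, adding partitions to a topic later changes where a given radar's pulses land, so the partition count is worth fixing early.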

python

import threading

import time

from typing import Dict, List, Optional, Tuple

from dataclasses import dataclass, field

from enum import Enum

import hashlib

import json

from collections import defaultdict

class PartitionStrategy(Enum):

"""分区策略"""

ROUND_ROBIN = "round_robin" # 轮询

KEY_HASH = "key_hash" # 键哈希

CUSTOM = "custom" # 自定义

@dataclass

class TopicPartition:

"""Topic分区"""

topic: str

partition: int

leader: int # 分区leader所在的broker ID

replicas: List[int] # 副本所在的broker列表

isr: List[int] # 同步副本列表

start_offset: int = 0

end_offset: int = 0

def is_available(self) -> bool:

"""分区是否可用"""

return len(self.isr) > 0

def preferred_replica(self) -> int:

"""首选副本(通常为第一个副本)"""

return self.replicas[0] if self.replicas else -1

@dataclass

class Broker:

"""Kafka Broker"""

id: int

host: str

port: int

rack: Optional[str] = None

controller: bool = False

status: str = "online"

def endpoint(self) -> str:

"""获取broker端点"""

return f"{self.host}:{self.port}"

class KafkaCluster:

"""Kafka集群模拟"""

def __init__(self, num_brokers: int = 3):

self.brokers: Dict[int, Broker] = {}

self.topics: Dict[str, Dict[int, TopicPartition]] = defaultdict(dict)

self.controller_id: Optional[int] = None

# 初始化broker

for i in range(num_brokers):

broker = Broker(

id=i,

host=f"broker{i}",

port=9092 + i,

rack=f"rack{i % 2}" # 模拟机架感知

)

self.brokers[i] = broker

# 选举controller

self._elect_controller()

# 分区分配策略

self.partition_assignor = PartitionAssignor()

def _elect_controller(self):

"""选举controller"""

# 简单的选举:选择ID最小的broker

if self.brokers:

self.controller_id = min(self.brokers.keys())

self.brokers[self.controller_id].controller = True

print(f"Broker {self.controller_id} 被选举为controller")

def create_topic(self,

topic: str,

num_partitions: int = 1,

replication_factor: int = 1,

config: Optional[Dict] = None) -> bool:

"""创建Topic"""

if topic in self.topics:

print(f"Topic {topic} 已存在")

return False

if replication_factor > len(self.brokers):

print(f"副本因子{replication_factor}超过broker数量{len(self.brokers)}")

return False

# 分配分区

for partition_id in range(num_partitions):

# 分配副本

replica_assignment = self._assign_replicas(

topic, partition_id, replication_factor

)

# 创建分区

partition = TopicPartition(

topic=topic,

partition=partition_id,

leader=replica_assignment[0], # 第一个副本为leader

replicas=replica_assignment,

isr=replica_assignment.copy() # 初始时所有副本都在ISR中

)

self.topics[topic][partition_id] = partition

print(f"Topic {topic} 创建成功: {num_partitions}分区, {replication_factor}副本")

return True

def _assign_replicas(self,

topic: str,

partition_id: int,

replication_factor: int) -> List[int]:

"""分配副本"""

# 简单的副本分配策略

# 实际Kafka使用机架感知算法

# 获取所有broker ID

broker_ids = list(self.brokers.keys())

# 计算起始broker(考虑机架感知)

start_index = partition_id % len(broker_ids)

# 选择副本

replicas = []

current_index = start_index

while len(replicas) < replication_factor:

broker_id = broker_ids[current_index]

if broker_id not in replicas:

replicas.append(broker_id)

current_index = (current_index + 1) % len(broker_ids)

return replicas

def produce_message(self,

topic: str,

key: Optional[str],

value: str,

partition_strategy: PartitionStrategy = PartitionStrategy.KEY_HASH) -> Tuple[int, int]:

"""生产消息"""

if topic not in self.topics:

print(f"Topic {topic} 不存在")

return -1, -1

# 选择分区

partition = self._select_partition(topic, key, partition_strategy)

if partition is None:

return -1, -1

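        # Assign the current log-end offset to this message, then advance it
        # (offsets are zero-based; end_offset is the next offset to be written)
        offset = partition.end_offset
        partition.end_offset += 1
        print(f"Message produced to {topic}-{partition.partition}, offset: {offset}")
        return partition.partition, offset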

def _select_partition(self,

topic: str,

key: Optional[str],

strategy: PartitionStrategy) -> Optional[TopicPartition]:

"""选择分区"""

partitions = self.topics[topic]

if not partitions:

return None

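        if strategy == PartitionStrategy.ROUND_ROBIN:
            # Round-robin: cycle through the partition IDs with a counter
            # (the counter attribute is created lazily on first use)
            counter = getattr(self, "_rr_counter", 0)
            self._rr_counter = counter + 1
            partition_ids = sorted(partitions)
            return partitions[partition_ids[counter % len(partition_ids)]]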

elif strategy == PartitionStrategy.KEY_HASH:

# 键哈希

if key is None:

# 无键时使用轮询

return self._select_partition(topic, key, PartitionStrategy.ROUND_ROBIN)

# 计算哈希值

hash_value = int(hashlib.md5(key.encode()).hexdigest(), 16)

partition_id = hash_value % len(partitions)

return partitions[partition_id]

elif strategy == PartitionStrategy.CUSTOM:

# 自定义分区逻辑

# 这里可以根据雷达数据的特定字段分区

if key and "radar" in key:

# 例如,根据雷达ID分区

radar_id = key.split(":")[0] if ":" in key else key

hash_value = int(hashlib.md5(radar_id.encode()).hexdigest(), 16)

partition_id = hash_value % len(partitions)

return partitions[partition_id]

else:

return self._select_partition(topic, key, PartitionStrategy.KEY_HASH)

return list(partitions.values())[0]

def get_cluster_info(self) -> Dict:

"""获取集群信息"""

return {

"brokers": len(self.brokers),

"topics": len(self.topics),

"controller": self.controller_id,

"broker_details": [

{

"id": broker.id,

"endpoint": broker.endpoint(),

"rack": broker.rack,

"controller": broker.controller,

"status": broker.status

}

for broker in self.brokers.values()

],

"topic_details": {

topic: {

"partitions": len(partitions),

"replication_factor": len(next(iter(partitions.values())).replicas) if partitions else 0

}

for topic, partitions in self.topics.items()

}

}

class PartitionAssignor:

"""分区分配器(用于消费者组)"""

def assign_partitions(self,

members: List[str],

topic_partitions: Dict[str, List[int]]) -> Dict[str, List[Tuple[str, int]]]:

"""分配分区给消费者"""

assignments = {member: [] for member in members}

if not members or not topic_partitions:

return assignments

# 简单的轮询分配

for topic, partitions in topic_partitions.items():

for i, partition in enumerate(sorted(partitions)):

member_index = i % len(members)

member = members[member_index]

assignments[member].append((topic, partition))

return assignments

# 演示Kafka集群操作

def demonstrate_kafka_cluster():

"""演示Kafka集群操作"""

print("=== Kafka集群演示 ===")

# 创建集群

cluster = KafkaCluster(num_brokers=3)

# 创建雷达数据相关的Topic

cluster.create_topic("radar_pulses", num_partitions=3, replication_factor=2)

cluster.create_topic("signal_features", num_partitions=2, replication_factor=3)

cluster.create_topic("target_tracks", num_partitions=1, replication_factor=3)

# 生产消息

print("\n--- 生产消息 ---")

radar_ids = ["radar_001", "radar_002", "radar_003"]

for i in range(10):

radar_id = radar_ids[i % len(radar_ids)]

key = f"{radar_id}:pulse_{i}"

value = f"脉冲数据 {i}"

partition, offset = cluster.produce_message(

"radar_pulses",

key,

value,

partition_strategy=PartitionStrategy.CUSTOM

)

print(f"雷达 {radar_id} 脉冲 {i} -> 分区 {partition}, 偏移量 {offset}")

# 显示集群信息

print("\n--- 集群信息 ---")

info = cluster.get_cluster_info()

print(f"Broker数量: {info['brokers']}")

print(f"Topic数量: {info['topics']}")

print(f"Controller: Broker {info['controller']}")

for broker in info["broker_details"]:

print(f" Broker {broker['id']}: {broker['endpoint']} (机架: {broker['rack']})")

for topic, details in info["topic_details"].items():

print(f" Topic {topic}: {details['partitions']}分区, {details['replication_factor']}副本")

# 运行演示

if __name__ == "__main__":

    demonstrate_kafka_cluster()

2.2 Storage Engine and Performance Optimization

Kafka's storage engine is key to its high performance:
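Part of that performance comes from how a segment locates a message: Kafka keeps a sparse offset index, binary-searches it for the nearest entry at or below the requested offset, and then scans forward in the log from that position. The sketch below illustrates the lookup step only. `SparseOffsetIndex` and its methods are hypothetical names, and the index is held in memory rather than in an index file.

```python
import bisect
from typing import List, Optional


class SparseOffsetIndex:
    """In-memory sparse index from message offsets to byte positions (illustrative)."""

    def __init__(self) -> None:
        self._offsets: List[int] = []
        self._positions: List[int] = []

    def add(self, offset: int, position: int) -> None:
        """Record an index entry; offsets must be added in increasing order."""
        if self._offsets and offset <= self._offsets[-1]:
            raise ValueError("offsets must be strictly increasing")
        self._offsets.append(offset)
        self._positions.append(position)

    def lookup(self, target_offset: int) -> Optional[int]:
        """Return the position of the largest indexed offset <= target_offset."""
        i = bisect.bisect_right(self._offsets, target_offset) - 1
        return self._positions[i] if i >= 0 else None


if __name__ == "__main__":
    index = SparseOffsetIndex()
    index.add(0, 0)
    index.add(100, 4096)
    index.add(200, 8192)
    # Offset 150 is not indexed; the scan would start at the entry for offset 100.
    print(index.lookup(150))
```

Indexing only some offsets keeps the index small enough to stay in memory, at the cost of a short forward scan after each lookup.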

python

import json
import mmap
import os
import struct
import time
from datetime import datetime
from pathlib import Path
from typing import BinaryIO, List, Optional, Tuple

class LogSegment:
    """Kafka log segment (simplified)."""

    def __init__(self, file_path: str, base_offset: int, segment_size: int = 1024 * 1024 * 1024):  # 1 GB
        self.file_path = file_path
        self.base_offset = base_offset
        self.segment_size = segment_size
        # Data file and index files
        self.data_file: Optional[BinaryIO] = None
        self.offset_index_file: Optional[BinaryIO] = None
        self.time_index_file: Optional[BinaryIO] = None
        # Next offset to assign and current write position in bytes
        self.current_offset = base_offset
        self.current_position = 0
        # Open or create the files
        self._open_files()

    def _open_files(self):
        """Open (or create) the data and index files."""
        os.makedirs(os.path.dirname(self.file_path) or ".", exist_ok=True)
        # Plain file I/O is used for the data file: an empty file cannot be
        # memory-mapped, so mmap would fail on a freshly created segment.
        self.data_file = open(f"{self.file_path}.log", "ab+")
        self.data_file.seek(0, os.SEEK_END)
        if self.data_file.tell() > 0:
            # Existing segment: recover the next offset and write position
            self._recover_from_existing()
        self.offset_index_file = open(f"{self.file_path}.index", "ab+")
        self.time_index_file = open(f"{self.file_path}.timeindex", "ab+")

    def _recover_from_existing(self):
        """Recover state by scanning the data file up to the last complete message."""
        self.data_file.seek(0)
        while True:
            position = self.data_file.tell()
            try:
                size_bytes = self.data_file.read(4)
                if not size_bytes:
                    break  # clean end of file
                if len(size_bytes) < 4:
                    raise IOError("truncated size prefix")
                message_size = struct.unpack('>I', size_bytes)[0]
                message = self.data_file.read(message_size)
                if len(message) < message_size:
                    raise IOError("truncated message")
                # The offset is the first 8-byte field (see _build_message)
                offset = struct.unpack('>Q', message[0:8])[0]
                self.current_offset = offset + 1
                self.current_position = self.data_file.tell()
            except (struct.error, IOError):
                # Incomplete or corrupt tail: truncate it
                self.data_file.seek(position)
                self.data_file.truncate()
                break

    def append(self, value: bytes, timestamp: Optional[int] = None) -> int:
        """Append a message; return its offset, or -1 if the segment must roll."""
        if timestamp is None:
            timestamp = int(time.time() * 1000)  # millisecond timestamp
        message = self._build_message(value, timestamp)
        message_size = len(message)
        if self._should_roll(message_size):
            return -1  # the caller must create a new segment
        start_position = self.current_position
        # Write the 4-byte size prefix followed by the message at the end of the file
        self.data_file.seek(0, os.SEEK_END)
        self.data_file.write(struct.pack('>I', message_size) + message)
        self.data_file.flush()
        self._update_indexes(start_position, timestamp)
        offset = self.current_offset
        self.current_offset += 1
        self.current_position += 4 + message_size
        return offset

    def _build_message(self, value: bytes, timestamp: int) -> bytes:
        """Build a message in a simplified Kafka-style format."""
        # Layout: offset(8) + size(4) + crc(4) + magic(1) + attributes(1)
        #         + timestamp(8) + key_len(4) + key + value_len(4) + value
        magic = 1  # message format version
        attributes = 0
        header = struct.pack('>Q', self.current_offset)
        header += struct.pack('>i', len(value) + 14 + 8)  # size, excluding the offset and size fields
        header += struct.pack('>I', 0)  # CRC32, set to 0 in this simplified version
        header += struct.pack('>b', magic)
        header += struct.pack('>b', attributes)
        header += struct.pack('>q', timestamp)
        # Key (radar simulation usually has no key, or uses the radar ID)
        key = b""
        header += struct.pack('>i', len(key))
        header += key
        # Value
        header += struct.pack('>i', len(value))
        return header + value

    def _update_indexes(self, position: int, timestamp: int):
        """Append one entry to the offset index and one to the time index."""
        self.offset_index_file.seek(0, os.SEEK_END)
        self.offset_index_file.write(struct.pack('>qi', self.current_offset, position))
        self.offset_index_file.flush()
        self.time_index_file.seek(0, os.SEEK_END)
        self.time_index_file.write(struct.pack('>qi', timestamp, position))
        self.time_index_file.flush()

    def _should_roll(self, message_size: int) -> bool:
        """Check whether a new segment is needed (an empty segment always accepts one message)."""
        return self.current_position > 0 and (self.current_position + 4 + message_size) > self.segment_size

    def read(self, offset: int, max_size: int = 1024 * 1024) -> Optional[Tuple[int, int, bytes]]:
        """Read the message at the given offset as (offset, timestamp, value)."""
        position = self._find_position_by_offset(offset)
        if position is None:
            return None
        self.data_file.seek(position)
        size_bytes = self.data_file.read(4)
        if len(size_bytes) < 4:
            return None
        message_size = struct.unpack('>I', size_bytes)[0]
        if message_size > max_size:
            return None
        message = self.data_file.read(message_size)
        if len(message) < message_size:
            return None
        # Parse the fields according to the layout in _build_message
        message_offset = struct.unpack('>Q', message[0:8])[0]
        timestamp = struct.unpack('>q', message[18:26])[0]
        key_length = struct.unpack('>i', message[26:30])[0]
        value_start = 30 + key_length
        value_length = struct.unpack('>i', message[value_start:value_start + 4])[0]
        value = message[value_start + 4:value_start + 4 + value_length]
        return message_offset, timestamp, value

    def _find_position_by_offset(self, offset: int) -> Optional[int]:
        """Find the byte position of an offset via the offset index."""
        if offset < self.base_offset or offset >= self.current_offset:
            return None
        # Simplified: sequential scan of a dense index.
        # Real Kafka binary-searches a sparse index.
        self.offset_index_file.seek(0)
        last_position = 0
        while True:
            entry = self.offset_index_file.read(12)  # 8-byte offset + 4-byte position
            if len(entry) < 12:
                break
            entry_offset, entry_position = struct.unpack('>qi', entry)
            if entry_offset <= offset:
                last_position = entry_position
            if entry_offset >= offset:
                break
        return last_position

    def close(self):
        """Close all files."""
        for f in (self.data_file, self.offset_index_file, self.time_index_file):
            if f:
                f.close()

class LogManager:

"""日志管理器"""

def __init__(self, log_dir: str, segment_size: int = 1024 * 1024 * 1024):

self.log_dir = Path(log_dir)

self.segment_size = segment_size

self.segments: List[LogSegment] = []

self.active_segment: Optional[LogSegment] = None

# 加载现有段

self._load_existing_segments()

# 创建或获取活动段

if not self.active_segment:

self._create_new_segment(base_offset=0)

def _load_existing_segments(self):

"""加载现有段"""

self.log_dir.mkdir(parents=True, exist_ok=True)

# 查找所有日志文件

log_files = list(self.log_dir.glob("*.log"))

if not log_files:

return

# 按基础偏移量排序

segments_info = []

for log_file in log_files:

base_offset = int(log_file.stem)

segments_info.append((base_offset, log_file))

segments_info.sort(key=lambda x: x[0])

# 加载段

for base_offset, log_file in segments_info:

segment = LogSegment(

str(log_file.with_suffix('')),

base_offset,

self.segment_size

)

self.segments.append(segment)

# 最后一个段是活动段

if self.segments:

self.active_segment = self.segments[-1]

def _create_new_segment(self, base_offset: int) -> LogSegment:

"""创建新段"""

segment_path = self.log_dir / f"{base_offset:020d}"

segment = LogSegment(

str(segment_path),

base_offset,

self.segment_size

)

self.segments.append(segment)

self.active_segment = segment

return segment

def append(self, value: bytes, timestamp: Optional[int] = None) -> int:

"""追加消息"""

if timestamp is None:

timestamp = int(time.time() * 1000)

# 尝试追加到活动段

offset = self.active_segment.append(value, timestamp)

# 如果需要滚动

if offset == -1:

# 创建新段

new_base_offset = self.active_segment.current_offset

new_segment = self._create_new_segment(new_base_offset)

# 重新尝试

offset = new_segment.append(value, timestamp)

return offset

def read(self, offset: int) -> Optional[Tuple[int, int, bytes]]:

"""读取消息"""

# 查找包含该偏移量的段

segment = self._find_segment_for_offset(offset)

if not segment:

return None

return segment.read(offset)

def _find_segment_for_offset(self, offset: int) -> Optional[LogSegment]:

"""查找包含偏移量的段"""

for segment in reversed(self.segments):

if segment.base_offset <= offset < segment.current_offset:

return segment

return None

def close(self):

"""关闭所有段"""

for segment in self.segments:

segment.close()

# 演示存储引擎

def demonstrate_storage_engine():

"""演示存储引擎"""

print("=== Kafka存储引擎演示 ===")

# 创建日志管理器

log_dir = "./kafka_logs"

log_manager = LogManager(log_dir, segment_size=1024) # 小段便于演示

try:

# 写入雷达脉冲数据

print("\n--- 写入雷达脉冲数据 ---")

pulse_data = []

for i in range(20):

# 模拟雷达脉冲

pulse = {

"pulse_id": f"pulse_{i}",

"radar_id": f"radar_{(i % 3) + 1:03d}",

"timestamp": int(time.time() * 1000) + i * 10,

"frequency": 1000.0 + i * 10,

"power": 100.0 - i * 0.5

}

value = json.dumps(pulse).encode('utf-8')

offset = log_manager.append(value)

pulse_data.append((offset, pulse["pulse_id"]))

print(f"写入脉冲 {pulse['pulse_id']} -> 偏移量 {offset}")

# 读取数据

print("\n--- 读取雷达脉冲数据 ---")

for offset, pulse_id in pulse_data[::3]: # 每隔3个读取一个

result = log_manager.read(offset)

if result:

read_offset, timestamp, value = result

pulse = json.loads(value.decode('utf-8'))

print(f"偏移量 {read_offset}: {pulse['pulse_id']} (频率: {pulse['frequency']} MHz)")

# 演示段滚动

print("\n--- 段滚动演示 ---")

print(f"当前段数: {len(log_manager.segments)}")

# 写入更多数据触发滚动

for i in range(20, 40):

pulse = {

"pulse_id": f"pulse_{i}",

"timestamp": int(time.time() * 1000) + i * 10

}

value = json.dumps(pulse).encode('utf-8')

log_manager.append(value)

print(f"滚动后段数: {len(log_manager.segments)}")

print(f"段基础偏移量: {[s.base_offset for s in log_manager.segments]}")

finally:

# 清理

log_manager.close()

# 删除测试文件

import shutil

if os.path.exists(log_dir):

shutil.rmtree(log_dir)

# 运行演示

if __name__ == "__main__":

    demonstrate_storage_engine()

2.3 Replication and High Availability

Kafka's replication mechanism ensures data reliability and availability:
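The central quantity in replication is the high watermark: consumers can only read messages below the smallest log-end offset (LEO) among the in-sync replicas (ISR). The following is a minimal sketch, assuming a hypothetical `high_watermark` helper that works on plain dictionaries rather than real broker state.

```python
from typing import Dict, Set


def high_watermark(log_end_offsets: Dict[int, int], isr: Set[int]) -> int:
    """Return the minimum LEO across the in-sync replicas (hypothetical helper)."""
    leos = [log_end_offsets[broker_id] for broker_id in isr if broker_id in log_end_offsets]
    if not leos:
        raise ValueError("ISR is empty; the high watermark cannot advance")
    return min(leos)


if __name__ == "__main__":
    # Broker 2 lags far behind, but it is not in the ISR,
    # so it does not hold the high watermark back.
    leos = {0: 10, 1: 8, 2: 5}
    print(high_watermark(leos, isr={0, 1}))
```

This is why removing a slow follower from the ISR lets committed data keep advancing, at the price of fewer replicas holding the latest messages.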

python

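import random
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional, Set, Tuple

# TopicPartition and KafkaCluster are the classes defined in section 2.1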

class ReplicaState(Enum):

"""副本状态"""

ONLINE = "online" # 在线,同步中

OFFLINE = "offline" # 离线

RECOVERING = "recovering" # 恢复中

DEAD = "dead" # 死亡

@dataclass

class Replica:

"""分区副本"""

broker_id: int

partition: TopicPartition

state: ReplicaState = ReplicaState.ONLINE

log_end_offset: int = 0

last_update_time: float = field(default_factory=time.time)

def is_in_sync(self, leader_leo: int) -> bool:

"""判断是否在同步中"""

if self.state != ReplicaState.ONLINE:

return False

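        # Check how far the replica lags behind the leader.
        # Simplification: real Kafka decides ISR membership by time
        # (replica.lag.time.max.ms), not by message count.
        lag = leader_leo - self.log_end_offset
        return lag <= 10  # allow a lag of at most 10 messages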

def update(self, offset: int):

"""更新副本状态"""

self.log_end_offset = offset

self.last_update_time = time.time()

class PartitionLeader:

"""分区Leader"""

def __init__(self, partition: TopicPartition):

self.partition = partition

self.leader_id = partition.leader

self.replicas: Dict[int, Replica] = {}

self.isr: Set[int] = set() # 同步副本集

self.high_watermark: int = 0

self.log_end_offset: int = 0

# 初始化副本

for broker_id in partition.replicas:

replica = Replica(broker_id, partition)

self.replicas[broker_id] = replica

if broker_id in partition.isr:

self.isr.add(broker_id)

def produce(self, value: bytes) -> int:

"""Leader生产消息"""

        # 1. Write locally (_write_locally already advances log_end_offset)
        offset = self._write_locally(value)

# 2. 异步复制到follower

self._replicate_to_followers(offset, value)

# 3. 更新高水位

self._update_high_watermark()

return offset

def _write_locally(self, value: bytes) -> int:

"""本地写入"""

# 简化实现

offset = self.log_end_offset

self.log_end_offset += 1

# 更新本地副本

if self.leader_id in self.replicas:

self.replicas[self.leader_id].update(self.log_end_offset)

print(f"Leader {self.leader_id} 本地写入,偏移量: {offset}")

return offset

def _replicate_to_followers(self, offset: int, value: bytes):

"""复制到follower"""

def replicate_to_replica(replica: Replica):

try:

# 模拟网络延迟

delay = random.uniform(0.001, 0.01)

time.sleep(delay)

# 模拟可能失败

if random.random() > 0.05: # 95%成功率

                    replica.update(offset + 1)  # follower LEO is the next offset to be written

print(f" 副本 {replica.broker_id} 同步成功,偏移量: {offset}")

return True

else:

print(f" 副本 {replica.broker_id} 同步失败")

return False

except Exception as e:

print(f" 副本 {replica.broker_id} 异常: {e}")

return False

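        # Replicate in parallel (at least one worker, so a single-replica partition does not raise ValueError)
        with ThreadPoolExecutor(max_workers=max(1, len(self.replicas) - 1)) as executor: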

futures = []

for broker_id, replica in self.replicas.items():

if broker_id != self.leader_id:

future = executor.submit(replicate_to_replica, replica)

futures.append(future)

# 等待所有复制完成

for future in futures:

future.result()

def _update_high_watermark(self):

"""更新高水位"""

# 高水位是所有ISR中最小LEO

isr_leos = []

for broker_id in self.isr:

if broker_id in self.replicas:

replica = self.replicas[broker_id]

isr_leos.append(replica.log_end_offset)

if isr_leos:

new_hw = min(isr_leos)

if new_hw > self.high_watermark:

self.high_watermark = new_hw

print(f"高水位更新: {self.high_watermark}")

def update_isr(self):

"""更新ISR"""

new_isr = set()

for broker_id, replica in self.replicas.items():

if replica.is_in_sync(self.log_end_offset):

new_isr.add(broker_id)

# 检查ISR变化

if new_isr != self.isr:

print(f"ISR变化: {self.isr} -> {new_isr}")

self.isr = new_isr

# 如果ISR为空,分区不可用

if not self.isr:

print("警告: ISR为空,分区不可用!")

return new_isr

def handle_broker_failure(self, broker_id: int):

"""处理broker故障"""

if broker_id in self.replicas:

replica = self.replicas[broker_id]

replica.state = ReplicaState.OFFLINE

# 从ISR中移除

if broker_id in self.isr:

self.isr.remove(broker_id)

print(f"Broker {broker_id} 故障,从ISR中移除")

# 检查是否需要leader选举

if broker_id == self.leader_id:

self._elect_new_leader()

def _elect_new_leader(self):

"""选举新leader"""

# 优先从ISR中选择

if self.isr:

new_leader = next(iter(self.isr))

else:

# ISR为空,从存活副本中选择

alive_replicas = [

broker_id for broker_id, replica in self.replicas.items()

if replica.state == ReplicaState.ONLINE

]

if alive_replicas:

new_leader = alive_replicas[0]

else:

print("错误: 没有存活的副本!")

return

print(f"Leader选举: {self.leader_id} -> {new_leader}")

self.leader_id = new_leader

self.partition.leader = new_leader

class HighAvailabilityManager:

"""高可用性管理器"""

def __init__(self, cluster: KafkaCluster):

self.cluster = cluster

self.partition_leaders: Dict[Tuple[str, int], PartitionLeader] = {}

# 监控线程

self.monitor_thread = threading.Thread(target=self._monitor_loop, daemon=True)

self.running = True

# 初始化partition leaders

self._initialize_partition_leaders()

def _initialize_partition_leaders(self):

"""初始化partition leaders"""

for topic, partitions in self.cluster.topics.items():

for partition_id, partition in partitions.items():

key = (topic, partition_id)

self.partition_leaders[key] = PartitionLeader(partition)

def produce(self, topic: str, value: bytes) -> Optional[int]:

"""生产消息(带副本)"""

# 简化:选择第一个分区

if topic not in self.cluster.topics:

return None

partitions = self.cluster.topics[topic]

if not partitions:

return None

partition_id = next(iter(partitions.keys()))

key = (topic, partition_id)

if key in self.partition_leaders:

leader = self.partition_leaders[key]

offset = leader.produce(value)

# 更新ISR

leader.update_isr()

return offset

return None

def simulate_broker_failure(self, broker_id: int):

"""模拟broker故障"""

print(f"\n=== 模拟Broker {broker_id} 故障 ===")

# 标记broker为离线

if broker_id in self.cluster.brokers:

self.cluster.brokers[broker_id].status = "offline"

# 处理受影响的partition leaders

for (topic, partition_id), leader in self.partition_leaders.items():

if broker_id in leader.replicas:

leader.handle_broker_failure(broker_id)

def _monitor_loop(self):

"""监控循环"""

while self.running:

time.sleep(5)

# 检查副本健康状态

for (topic, partition_id), leader in self.partition_leaders.items():

leader.update_isr()

def start_monitoring(self):

"""启动监控"""

self.monitor_thread.start()

def stop(self):

"""停止"""

self.running = False

if self.monitor_thread.is_alive():

self.monitor_thread.join(timeout=2)

# 演示副本机制

def demonstrate_replication():

"""演示副本机制"""

print("=== Kafka副本机制演示 ===")

# 创建集群

cluster = KafkaCluster(num_brokers=3)

cluster.create_topic("radar_data", num_partitions=1, replication_factor=3)

# 创建高可用性管理器

ha_manager = HighAvailabilityManager(cluster)

ha_manager.start_monitoring()

try:

# 生产消息

print("\n--- 正常情况下的消息生产 ---")

for i in range(5):

value = f"雷达脉冲_{i}".encode('utf-8')

offset = ha_manager.produce("radar_data", value)

print(f"生产消息 {i}, 偏移量: {offset}")

time.sleep(0.5)

# 模拟broker故障

ha_manager.simulate_broker_failure(0)

time.sleep(1)

# 故障后继续生产

print("\n--- 故障后的消息生产 ---")

for i in range(5, 10):

value = f"雷达脉冲_{i}".encode('utf-8')

offset = ha_manager.produce("radar_data", value)

print(f"生产消息 {i}, 偏移量: {offset}")

time.sleep(0.5)

# 显示最终状态

print("\n--- 最终状态 ---")

info = cluster.get_cluster_info()

for broker in info["broker_details"]:

print(f"Broker {broker['id']}: 状态={broker['status']}")

finally:

ha_manager.stop()

time.sleep(1)

# 运行演示

if __name__ == "__main__":

    demonstrate_replication()

2.4 Extending the Producer-Consumer Model

Kafka's producer-consumer model supports a range of advanced features:
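One of these features is producer-side batching, governed by the `batch.size` and `linger.ms` settings: a batch is sent once it is full or once it has waited long enough, whichever comes first. The following is a minimal sketch of that decision, assuming a hypothetical `should_send` helper. The defaults mirror the Kafka producer defaults of 16 KB and 0 ms.

```python
def should_send(batch_bytes: int, batch_age_ms: float,
                batch_size: int = 16384, linger_ms: float = 0) -> bool:
    """Decide whether a batch is ready to send (hypothetical helper)."""
    if batch_bytes <= 0:
        return False  # nothing to send
    # Ready when the batch is full or has waited at least linger_ms.
    return batch_bytes >= batch_size or batch_age_ms >= linger_ms


if __name__ == "__main__":
    # With linger_ms=5, a small batch waits for more pulses to arrive...
    print(should_send(batch_bytes=100, batch_age_ms=2, batch_size=65536, linger_ms=5))
    # ...but is sent once 5 ms have elapsed, even if it is not full.
    print(should_send(batch_bytes=100, batch_age_ms=5, batch_size=65536, linger_ms=5))
```

A larger `linger.ms` trades a few milliseconds of latency for fewer, larger requests, which usually suits high-rate pulse streams better than control traffic.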

python

import threading

import queue

import time

from typing import Callable, Dict, List, Optional, Tuple

from dataclasses import dataclass, field

from concurrent.futures import Future

import hashlib

class ProducerConfig:

"""生产者配置"""

def __init__(self,

bootstrap_servers: List[str],

acks: str = "all", # "0", "1", "all"

retries: int = 3,

batch_size: int = 16384, # 16KB

linger_ms: int = 0,

compression_type: str = "none", # "none", "gzip", "snappy", "lz4"

max_in_flight_requests: int = 5):

self.bootstrap_servers = bootstrap_servers

self.acks = acks

self.retries = retries

self.batch_size = batch_size

self.linger_ms = linger_ms

self.compression_type = compression_type

self.max_in_flight_requests = max_in_flight_requests

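    def for_radar_data(self) -> 'ProducerConfig':
        """Configuration tuned for radar data."""
        return ProducerConfig(
            bootstrap_servers=self.bootstrap_servers,
            # Leader-only acknowledgement: lower latency, but a message can be
            # lost if the leader fails before followers replicate it.
            # Use "all" for streams that cannot tolerate loss.
            acks="1",
            retries=3,
            batch_size=65536,  # 64 KB, suited to pulse data
            linger_ms=5,  # wait up to 5 ms to fill a batch
            compression_type="lz4",  # fast compression
            max_in_flight_requests=1  # preserves ordering when retries occur
        )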

@dataclass

class ProducerRecord:

"""生产者记录"""

topic: str

value: bytes

key: Optional[bytes] = None

partition: Optional[int] = None

timestamp: Optional[int] = None

headers: Dict[str, bytes] = field(default_factory=dict)

def size(self) -> int:

"""记录大小"""

size = len(self.value) if self.value else 0

size += len(self.key) if self.key else 0

for k, v in self.headers.items():

size += len(k.encode()) + len(v)

return size

@dataclass

class RecordMetadata:

"""记录元数据"""

topic: str

partition: int

offset: int

timestamp: int

serialized_key_size: int

serialized_value_size: int

class RecordAccumulator:

"""记录累加器(批处理)"""

def __init__(self, config: ProducerConfig):

self.config = config

self.batches: Dict[Tuple[str, int], List[ProducerRecord]] = {}

self.lock = threading.RLock()

# 批处理相关

self.batch_size = config.batch_size

self.linger_ms = config.linger_ms

# 启动清理线程

self.cleanup_thread = threading.Thread(target=self._cleanup_loop, daemon=True)

self.running = True

self.cleanup_thread.start()

def append(self,

record: ProducerRecord,

partition: int) -> Optional[List[ProducerRecord]]:

"""追加记录"""

with self.lock:

key = (record.topic, partition)

if key not in self.batches:

self.batches[key] = []

batch = self.batches[key]

batch.append(record)

# 检查是否达到批大小

batch_size = sum(r.size() for r in batch)

if batch_size >= self.batch_size:

ready_batch = batch.copy()

batch.clear()

return ready_batch

return None

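    def _cleanup_loop(self):
        """Housekeeping loop: drop empty batch lists left behind after sends."""
        while self.running:
            # Sleep at least 1 ms so that linger_ms=0 does not become a busy loop
            time.sleep(max(self.linger_ms, 1) / 1000.0)
            with self.lock:
                for key in [k for k, b in self.batches.items() if not b]:
                    del self.batches[key]

    def _expire_batches(self) -> list:
        """Return (and clear) every batch whose oldest record has waited longer than linger_ms."""
        expired = []
        with self.lock:
            current_time = time.time() * 1000  # milliseconds
            for key, batch in list(self.batches.items()):
                # The first record is the oldest one in the batch
                if batch and batch[0].timestamp:
                    age = current_time - batch[0].timestamp
                    if age > self.linger_ms:
                        # The batch has lingered long enough; hand it to the sender
                        expired.append((key, batch.copy()))
                        batch.clear()
        # Returning a list (not a generator) releases the lock before any network send
        return expired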

def drain(self) -> Dict[Tuple[str, int], List[ProducerRecord]]:

"""排空所有批次"""

with self.lock:

ready_batches = self.batches.copy()

self.batches.clear()

return ready_batches

class RadarDataProducer:

"""雷达数据生产者(优化版)"""

def __init__(self, config: ProducerConfig):

self.config = config

self.accumulator = RecordAccumulator(config)

# 发送线程

self.sender_thread = threading.Thread(target=self._sender_loop, daemon=True)

self.running = False

# 回调队列

self.callback_queue = queue.Queue()

# 统计

self.stats = {

"records_sent": 0,

"bytes_sent": 0,

"batch_count": 0,

"errors": 0

}

def start(self):

"""启动生产者"""

self.running = True

self.sender_thread.start()

print("雷达数据生产者已启动")

def stop(self):

"""停止生产者"""

self.running = False

if self.sender_thread.is_alive():

self.sender_thread.join(timeout=5)

# 发送剩余批次

self._send_remaining()

print("雷达数据生产者已停止")

def send(self,

topic: str,

value: bytes,

key: Optional[bytes] = None,

callback: Optional[Callable] = None) -> Future:

"""发送消息"""

# 创建记录

record = ProducerRecord(

topic=topic,

value=value,

key=key,

timestamp=int(time.time() * 1000)

)

# 计算分区

partition = self._partition(record)

# 添加到累加器

batch = self.accumulator.append(record, partition)

# 如果有完整批次,立即发送

if batch:

self._send_batch(topic, partition, batch)

# 创建Future

future = Future()

if callback:

future.add_done_callback(callback)

return future

def _partition(self, record: ProducerRecord) -> int:

"""计算分区"""

if record.partition is not None:

return record.partition

if record.key:

# 按键哈希分区

hash_value = int(hashlib.md5(record.key).hexdigest(), 16)

return hash_value % 3 # 假设3个分区

# 轮询分区

return self.stats["records_sent"] % 3

def _sender_loop(self):

"""发送循环"""

while self.running:

try:

# 检查过期批次

for (topic, partition), batch in self.accumulator._expire_batches():

if batch:

self._send_batch(topic, partition, batch)

# 处理回调

self._process_callbacks()

time.sleep(0.001) # 1ms

except Exception as e:

print(f"发送循环错误: {e}")

time.sleep(1)

def _send_batch(self, topic: str, partition: int, batch: List[ProducerRecord]):

"""发送批次"""

if not batch:

return

try:

# 模拟网络发送

total_size = sum(record.size() for record in batch)

# 压缩

if self.config.compression_type != "none":

total_size = int(total_size * 0.5) # 模拟压缩

# 发送延迟

network_delay = total_size / (1024 * 1024) * 0.001 # 1MB/ms

# 模拟失败

if random.random() < 0.01: # 1%失败率

raise Exception("模拟网络错误")

time.sleep(network_delay)

# 更新统计

self.stats["records_sent"] += len(batch)

self.stats["bytes_sent"] += total_size

self.stats["batch_count"] += 1

print(f"发送批次: topic={topic}, partition={partition}, "

f"records={len(batch)}, size={total_size} bytes")

# 成功回调

for record in batch:

metadata = RecordMetadata(

topic=topic,

partition=partition,

offset=self.stats["records_sent"],

timestamp=record.timestamp or int(time.time() * 1000),

serialized_key_size=len(record.key) if record.key else 0,

serialized_value_size=len(record.value)

)

self.callback_queue.put(("success", metadata))

except Exception as e:

# 失败回调

print(f"批次发送失败: {e}")

self.stats["errors"] += 1

for record in batch:

self.callback_queue.put(("error", (record, e)))

def _send_remaining(self):

"""发送剩余批次"""

batches = self.accumulator.drain()

for (topic, partition), batch in batches.items():

if batch:

self._send_batch(topic, partition, batch)

def _process_callbacks(self):

"""处理回调"""

while not self.callback_queue.empty():

try:

result_type, data = self.callback_queue.get_nowait()

if result_type == "success":

metadata = data

print(f"消息发送成功: {metadata.topic}-{metadata.partition}:{metadata.offset}")

elif result_type == "error":

record, error = data

print(f"消息发送失败: {record.topic}, 错误: {error}")

except queue.Empty:

break

def get_stats(self) -> Dict:

"""获取统计信息"""

return self.stats.copy()

# 演示生产者

def demonstrate_producer():

"""演示生产者"""

print("=== Kafka生产者演示 ===")

# 创建配置

config = ProducerConfig(

bootstrap_servers=["localhost:9092", "localhost:9093"],

acks="1",

retries=3,

batch_size=65536,

linger_ms=5,

compression_type="lz4",

max_in_flight_requests=1

)

# 创建生产者

producer = RadarDataProducer(config)

producer.start()

try:

# 发送雷达脉冲数据

print("\n--- 发送雷达脉冲数据 ---")

for i in range(100):

# 创建脉冲数据

pulse = {

"pulse_id": f"pulse_{i:06d}",

"radar_id": f"radar_{(i % 5) + 1:03d}",

"timestamp": int(time.time() * 1000) + i * 10,

"frequency": 1000.0 + (i % 100) * 10,

"power": 100.0 - (i % 20) * 0.5,

"samples": [random.random() for _ in range(100)] # 模拟I/Q数据

}

# 序列化

value = json.dumps(pulse).encode('utf-8')

key = pulse["radar_id"].encode('utf-8')

# 发送

producer.send(

topic="radar_pulses",

value=value,

key=key

)

if i % 20 == 0:

print(f"已发送 {i} 个脉冲")

time.sleep(0.1)

# 等待发送完成

time.sleep(2)

# 显示统计

print("\n--- 生产者统计 ---")

stats = producer.get_stats()

for key, value in stats.items():

print(f"{key}: {value}")

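        # Compute throughput over the whole run: 100 sends with a 0.1 s sleep each, plus the 2 s wait
        duration = 100 * 0.1 + 2 # seconds
        throughput = stats["records_sent"] / duration
        print(f"Throughput: {throughput:.1f} records/s")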

finally:

producer.stop()

# 运行演示

if __name__ == "__main__":

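    demonstrate_producer()

3.1 Topic Partitioning Strategy Design

In radar simulation, a well-designed Kafka topic partitioning strategy is key to system performance, and different data streams call for different partitioning strategies.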
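
As a minimal sketch of key-based partitioning, the snippet below maps a radar ID to a partition with CRC32, so the same key lands on the same partition in every process. This is an illustration only: the function name `stable_partition` and the 12-partition count are assumptions, and Kafka's own Java client uses a different hash (murmur2) in its default partitioner.

```python
import zlib
from collections import Counter


def stable_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition using a process-independent hash (CRC32)."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Hypothetical radar IDs, in the same format as the producer demo above
radar_ids = [f"radar_{i:03d}" for i in range(1, 6)]

# Every pulse from one radar goes to one partition, which preserves per-radar ordering
assignment = {rid: stable_partition(rid, 12) for rid in radar_ids}

# Number of radars per partition; a heavily skewed count suggests a poor key choice
load = Counter(assignment.values())
```

Python's built-in `hash()` is unsuitable here because it is salted per process for `str` and `bytes`, so two producers could route the same key to different partitions.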

3.1.1 Radar Data Topic Design

python

class RadarTopicDesign:

"""雷达数据Topic设计"""

def __init__(self):

        self.topics = {
            "radar_raw_pulses": {
                "description": "Raw pulse data",
                "partitions": 12,
                "replication_factor": 3,
                "retention_hours": 24,
                "compression": "lz4",
                "cleanup_policy": "delete",
                # Must match a strategy name handled by PartitionKeyDesign.calculate_partition
                "partition_strategy": "emitter_id_hash",

"config": {

"segment.bytes": "1073741824", # 1GB

"retention.bytes": "107374182400", # 100GB

"max.message.bytes": "10485760", # 10MB

}

},

"signal_features": {

"description": "信号特征数据",

"partitions": 8,

"replication_factor": 3,

"retention_hours": 168, # 7天

"compression": "snappy",

"cleanup_policy": "delete",

"partition_strategy": "radar_id_hash",

"config": {

"segment.bytes": "536870912", # 512MB

"retention.bytes": "53687091200", # 50GB

}

},

"target_tracks": {

"description": "目标航迹数据",

"partitions": 4,

"replication_factor": 3,

"retention_hours": 720, # 30天

"compression": "gzip",

"cleanup_policy": "compact", # 使用压缩清理

"partition_strategy": "target_id_hash",

"config": {

"cleanup.policy": "compact,delete",

"delete.retention.ms": "86400000", # 1天

"min.cleanable.dirty.ratio": "0.5",

}

},

"system_events": {

"description": "系统事件",

"partitions": 2,

"replication_factor": 3,

"retention_hours": 8760, # 1年

"compression": "none",

"cleanup_policy": "delete",

"partition_strategy": "round_robin",

"config": {

"segment.bytes": "268435456", # 256MB

}

}

}

def get_topic_config(self, topic_name):

"""获取Topic配置"""

if topic_name in self.topics:

return self.topics[topic_name]

return None

def create_topic_command(self, topic_name):

"""生成创建Topic的命令"""

config = self.get_topic_config(topic_name)

if not config:

return None

        # The broker address is an assumption; point it at your own cluster
        cmd = "kafka-topics.sh --create --bootstrap-server localhost:9092 "
        cmd += f"--topic {topic_name} "
        cmd += f"--partitions {config['partitions']} "
        cmd += f"--replication-factor {config['replication_factor']} "
        # kafka-topics.sh expects one --config flag per key=value pair
        for key, value in config['config'].items():
            cmd += f"--config {key}={value} "
        return cmd.strip()

3.1.2 Partition Key Design

python

class PartitionKeyDesign:

"""分区键设计"""

def __init__(self):

self.round_robin_index = 0

def calculate_partition(self, key, num_partitions, strategy="hash"):

"""计算分区"""

if strategy == "hash":

# 哈希分区

if isinstance(key, str):

key_bytes = key.encode('utf-8')

elif isinstance(key, bytes):

key_bytes = key

else:

key_bytes = str(key).encode('utf-8')

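            # Use CRC32: the built-in hash() of str/bytes is salted per process, so it is not stable across restarts
            import zlib
            hash_value = zlib.crc32(key_bytes) & 0x7fffffff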

return hash_value % num_partitions

elif strategy == "radar_id_hash":

# 雷达ID哈希分区

if ":" in key:

radar_id = key.split(":")[0]

else:

radar_id = key

            import zlib # stable hash; the built-in hash() is salted per process
            hash_value = zlib.crc32(radar_id.encode('utf-8')) & 0x7fffffff

return hash_value % num_partitions

elif strategy == "emitter_id_hash":

# 辐射源ID哈希分区

if "emitter_" in key:

emitter_id = key.split("_")[1]

else:

emitter_id = key

            import zlib # stable hash; the built-in hash() is salted per process
            hash_value = zlib.crc32(emitter_id.encode('utf-8')) & 0x7fffffff

return hash_value % num_partitions

elif strategy == "round_robin":

# 轮询分区

partition = self.round_robin_index % num_partitions

self.round_robin_index += 1

return partition

else:

# 默认哈希

return self.calculate_partition(key, num_partitions, "hash")

def get_partition_key(self, data, key_type="radar_id"):

"""获取分区键"""

if key_type == "radar_id":

return data.get("radar_id", "unknown")

elif key_type == "emitter_id":

return data.get("emitter_id", "unknown")

elif key_type == "frequency_range":

# 按频率范围分区

frequency = data.get("frequency", 0)

range_start = (frequency // 1000) * 1000

return f"range_{range_start}"

elif key_type == "timestamp":

# 按时间分区

timestamp = data.get("timestamp", 0)

hour = (timestamp // 3600000) % 24

return f"hour_{hour}"

else:

            return "default"

3.2 Radar Data Serialization Schemes

3.2.1 Avro Serialization

python

import io

import json

from typing import Dict, Any

import fastavro

class AvroSerializer:

"""Avro序列化器"""

def __init__(self):

# 定义雷达脉冲的Avro模式

self.pulse_schema = {

"type": "record",

"name": "RadarPulse",

"namespace": "radar.avro",

"fields": [

{"name": "pulse_id", "type": "string"},

{"name": "radar_id", "type": "string"},

{"name": "timestamp", "type": "long"},

{"name": "frequency", "type": "double"},

{"name": "power", "type": "double"},

{"name": "pulse_width", "type": "double"},

{"name": "pulse_interval", "type": ["null", "double"], "default": None},

{"name": "azimuth", "type": "double"},

{"name": "elevation", "type": "double"},

{"name": "modulation", "type": ["null", "string"], "default": None},

{"name": "iq_samples", "type": ["null", "bytes"], "default": None},

{"name": "metadata", "type": {"type": "map", "values": "string"}, "default": {}}

]

}

# 编译模式

self.parsed_schema = fastavro.parse_schema(self.pulse_schema)

def serialize_pulse(self, pulse_data: Dict[str, Any]) -> bytes:

"""序列化脉冲数据"""

# 确保数据符合模式

validated_data = self._validate_pulse_data(pulse_data)

# 序列化

buffer = io.BytesIO()

fastavro.schemaless_writer(buffer, self.parsed_schema, validated_data)

return buffer.getvalue()

def deserialize_pulse(self, data: bytes) -> Dict[str, Any]:

"""反序列化脉冲数据"""

buffer = io.BytesIO(data)

pulse_data = fastavro.schemaless_reader(buffer, self.parsed_schema)

return pulse_data

def _validate_pulse_data(self, data: Dict[str, Any]) -> Dict[str, Any]:

"""验证脉冲数据"""

validated = {}

# 必需字段

validated["pulse_id"] = str(data.get("pulse_id", ""))

validated["radar_id"] = str(data.get("radar_id", ""))

validated["timestamp"] = int(data.get("timestamp", 0))

validated["frequency"] = float(data.get("frequency", 0.0))

validated["power"] = float(data.get("power", 0.0))

validated["pulse_width"] = float(data.get("pulse_width", 0.0))

validated["azimuth"] = float(data.get("azimuth", 0.0))

validated["elevation"] = float(data.get("elevation", 0.0))

# 可选字段

if "pulse_interval" in data:

validated["pulse_interval"] = float(data["pulse_interval"])

if "modulation" in data:

validated["modulation"] = str(data["modulation"])

if "iq_samples" in data:

if isinstance(data["iq_samples"], bytes):

validated["iq_samples"] = data["iq_samples"]

elif isinstance(data["iq_samples"], list):

# 将列表转换为bytes

import struct

iq_bytes = b""

for sample in data["iq_samples"]:

iq_bytes += struct.pack("ff", sample.real, sample.imag)

validated["iq_samples"] = iq_bytes

validated["metadata"] = data.get("metadata", {})

return validated

def get_schema_id(self) -> int:

"""获取模式ID(用于Schema Registry)"""

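        # Illustrative local fingerprint only: a real Schema Registry assigns schema IDs itself.
        # CRC32 is stable across processes, unlike the salted built-in hash().
        import zlib
        schema_str = json.dumps(self.pulse_schema, sort_keys=True)
        return zlib.crc32(schema_str.encode('utf-8')) & 0x7fffffff

3.2.2 Binary Serialization Optimization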
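
A compact binary format depends on a fixed, explicitly ordered header. The sketch below packs the fixed-width pulse fields with one `struct.Struct` and an explicit little-endian byte order (`<`), so producer and consumer agree on the layout whatever their hardware. The field order, the field widths, and the names `HEADER`, `pack_header`, and `unpack_header` are assumptions for illustration, not the layout used by the serializer in this section.

```python
import struct

# Assumed layout: version (u8), flags (u8), timestamp_ms (i64), frequency (f64),
# then power, pulse_width, azimuth, elevation as f32.
# "<" selects little-endian with no alignment padding, so the size is fixed at 34 bytes.
HEADER = struct.Struct("<BBqdffff")

FIELD_NAMES = ("version", "flags", "timestamp_ms", "frequency",
               "power", "pulse_width", "azimuth", "elevation")


def pack_header(version: int, flags: int, timestamp_ms: int, frequency: float,
                power: float, pulse_width: float, azimuth: float,
                elevation: float) -> bytes:
    """Serialize the fixed-width pulse fields into a 34-byte header."""
    return HEADER.pack(version, flags, timestamp_ms, frequency,
                       power, pulse_width, azimuth, elevation)


def unpack_header(data: bytes) -> dict:
    """Parse the header from the start of a buffer into a field dictionary."""
    return dict(zip(FIELD_NAMES, HEADER.unpack_from(data, 0)))


blob = pack_header(1, 0x01, 1_700_000_000_000, 9375.5, 100.0, 1.5, 45.0, 10.25)
decoded = unpack_header(blob)
```

Keeping the timestamp as a 64-bit integer avoids the type change (int in, float out) that happens when it is packed as a double.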

python

import json

import struct

from typing import Any, Dict

import numpy as np

import zlib

import lz4.frame

class BinaryRadarSerializer:

"""二进制雷达序列化器"""

def __init__(self, compression="lz4"):

self.compression = compression

def serialize_pulse_binary(self, pulse_data: Dict[str, Any]) -> bytes:

"""二进制序列化脉冲数据"""

buffer = bytearray()

# 头部:版本 + 标志

version = 1

flags = 0

if pulse_data.get("iq_samples") is not None:

flags |= 0x01 # 包含IQ数据

if pulse_data.get("metadata"):

flags |= 0x02 # 包含元数据

buffer.extend(struct.pack("BB", version, flags))

# 固定字段

buffer.extend(struct.pack("d", pulse_data.get("timestamp", 0)))

buffer.extend(struct.pack("d", pulse_data.get("frequency", 0)))

buffer.extend(struct.pack("f", pulse_data.get("power", 0)))

buffer.extend(struct.pack("f", pulse_data.get("pulse_width", 0)))

buffer.extend(struct.pack("f", pulse_data.get("azimuth", 0)))

buffer.extend(struct.pack("f", pulse_data.get("elevation", 0)))

# 可变字段

pulse_id = pulse_data.get("pulse_id", "").encode('utf-8')

buffer.extend(struct.pack("H", len(pulse_id)))

buffer.extend(pulse_id)

radar_id = pulse_data.get("radar_id", "").encode('utf-8')

buffer.extend(struct.pack("H", len(radar_id)))

buffer.extend(radar_id)

# IQ数据

if flags & 0x01:

iq_data = pulse_data["iq_samples"]

if isinstance(iq_data, np.ndarray):

iq_bytes = iq_data.tobytes()

elif isinstance(iq_data, bytes):

iq_bytes = iq_data

else:

iq_bytes = bytes(iq_data)

buffer.extend(struct.pack("I", len(iq_bytes)))

buffer.extend(iq_bytes)

# 元数据

if flags & 0x02:

metadata = pulse_data.get("metadata", {})

metadata_bytes = json.dumps(metadata).encode('utf-8')

buffer.extend(struct.pack("H", len(metadata_bytes)))

buffer.extend(metadata_bytes)

# 压缩

if self.compression == "lz4":

compressed = lz4.frame.compress(bytes(buffer))

elif self.compression == "zlib":

compressed = zlib.compress(bytes(buffer), level=3)

else:

compressed = bytes(buffer)

return compressed

def deserialize_pulse_binary(self, data: bytes) -> Dict[str, Any]:

"""二进制反序列化脉冲数据"""

# 解压缩

        # If decompression fails, fall back to treating the payload as uncompressed
        if self.compression == "lz4":
            try:
                data = lz4.frame.decompress(data)
            except Exception:
                pass
        elif self.compression == "zlib":
            try:
                data = zlib.decompress(data)
            except zlib.error:
                pass

buffer = memoryview(data)

offset = 0

# 解析头部

version, flags = struct.unpack_from("BB", buffer, offset)

offset += 2

pulse_data = {}

# 固定字段

pulse_data["timestamp"], pulse_data["frequency"] = struct.unpack_from("dd", buffer, offset)

offset += 16

pulse_data["power"], pulse_data["pulse_width"] = struct.unpack_from("ff", buffer, offset)

offset += 8

pulse_data["azimuth"], pulse_data["elevation"] = struct.unpack_from("ff", buffer, offset)

offset += 8

# 脉冲ID

pulse_id_len = struct.unpack_from("H", buffer, offset)[0]

offset += 2

pulse_data["pulse_id"] = buffer[offset:offset+pulse_id_len].tobytes().decode('utf-8')

offset += pulse_id_len

# 雷达ID

radar_id_len = struct.unpack_from("H", buffer, offset)[0]

offset += 2

pulse_data["radar_id"] = buffer[offset:offset+radar_id_len].tobytes().decode('utf-8')

offset += radar_id_len

# IQ数据

if flags & 0x01:

iq_len = struct.unpack_from("I", buffer, offset)[0]

offset += 4

pulse_data["iq_samples"] = buffer[offset:offset+iq_len].tobytes()

offset += iq_len

# 元数据

if flags & 0x02:

metadata_len = struct.unpack_from("H", buffer, offset)[0]

offset += 2

metadata_bytes = buffer[offset:offset+metadata_len].tobytes()

pulse_data["metadata"] = json.loads(metadata_bytes.decode('utf-8'))

        return pulse_data

3.3 Schema Registration and Evolution

3.3.1 Schema Registry Integration

python

import json

from typing import Dict

import requests

class SchemaRegistryClient:

"""Schema Registry客户端"""

def __init__(self, base_url="http://localhost:8081"):

self.base_url = base_url

self.schemas = {} # 本地缓存

self.session = requests.Session()

def register_schema(self, subject: str, schema: Dict) -> int:

"""注册模式"""

url = f"{self.base_url}/subjects/{subject}/versions"

payload = {"schema": json.dumps(schema)}

response = self.session.post(url, json=payload)

response.raise_for_status()

result = response.json()

schema_id = result["id"]

# 缓存

self.schemas[(subject, schema_id)] = schema

return schema_id

def get_schema(self, subject: str, version="latest") -> Dict:

"""获取模式"""

url = f"{self.base_url}/subjects/{subject}/versions/{version}"

response = self.session.get(url)

response.raise_for_status()

result = response.json()

schema = json.loads(result["schema"])

schema_id = result["id"]

# 缓存

self.schemas[(subject, schema_id)] = schema

return schema

def get_schema_by_id(self, schema_id: int) -> Dict:

"""通过ID获取模式"""

# 检查缓存

for (subject, cached_id), schema in self.schemas.items():

if cached_id == schema_id:

return schema

url = f"{self.base_url}/schemas/ids/{schema_id}"

response = self.session.get(url)

response.raise_for_status()

result = response.json()

schema = json.loads(result["schema"])

# 缓存

self.schemas[(result.get("subject", "unknown"), schema_id)] = schema

return schema

def check_compatibility(self, subject: str, schema: Dict) -> bool:

"""检查兼容性"""

url = f"{self.base_url}/compatibility/subjects/{subject}/versions/latest"

payload = {"schema": json.dumps(schema)}

response = self.session.post(url, json=payload)

response.raise_for_status()

result = response.json()

        return result.get("is_compatible", False)

3.3.2 Schema Evolution Management

python

class SchemaEvolutionManager:

"""模式演进管理器"""

def __init__(self):

self.versions = {

"RadarPulse": {

1: {

"description": "初始版本",

"fields": ["pulse_id", "radar_id", "timestamp", "frequency", "power"]

},

2: {

"description": "添加脉冲参数",

"fields": ["pulse_id", "radar_id", "timestamp", "frequency", "power",

"pulse_width", "pulse_interval", "azimuth", "elevation"]

},

3: {

"description": "添加调制类型",

"fields": ["pulse_id", "radar_id", "timestamp", "frequency", "power",

"pulse_width", "pulse_interval", "azimuth", "elevation",

"modulation"]

},

4: {

"description": "添加IQ数据和元数据",

"fields": ["pulse_id", "radar_id", "timestamp", "frequency", "power",

"pulse_width", "pulse_interval", "azimuth", "elevation",

"modulation", "iq_samples", "metadata"]

}

}

}

def migrate_data(self, data: Dict, from_version: int, to_version: int, schema_name: str) -> Dict:

"""迁移数据"""

if schema_name not in self.versions:

return data

versions = self.versions[schema_name]

if from_version not in versions or to_version not in versions:

return data

migrated_data = data.copy()

# 逐步迁移

for v in range(from_version + 1, to_version + 1):

migrated_data = self._apply_migration(migrated_data, v, schema_name)

return migrated_data

def _apply_migration(self, data: Dict, to_version: int, schema_name: str) -> Dict:

"""应用单个迁移"""

migrated = data.copy()

if schema_name == "RadarPulse":

if to_version == 2:

# 添加默认脉冲参数

migrated.setdefault("pulse_width", 0.0)

migrated.setdefault("pulse_interval", 0.0)

migrated.setdefault("azimuth", 0.0)

migrated.setdefault("elevation", 0.0)

elif to_version == 3:

# 添加调制类型

migrated.setdefault("modulation", "UNKNOWN")

elif to_version == 4:

# 添加IQ数据和元数据

migrated.setdefault("iq_samples", None)

migrated.setdefault("metadata", {})

        return migrated

3.4 Time Windows and Watermarks

3.4.1 Event-Time Extraction

python

import time

from typing import Dict, List

import dateutil.parser

class EventTimeExtractor:

"""事件时间提取器"""

def __init__(self, timestamp_field="timestamp", timestamp_format="milliseconds"):

self.timestamp_field = timestamp_field

self.timestamp_format = timestamp_format

def extract_timestamp(self, data: Dict) -> int:

"""提取时间戳"""

if self.timestamp_field in data:

timestamp = data[self.timestamp_field]

if self.timestamp_format == "milliseconds":

if isinstance(timestamp, (int, float)):

return int(timestamp)

elif isinstance(timestamp, str):

# 尝试解析字符串

dt = dateutil.parser.parse(timestamp)

return int(dt.timestamp() * 1000)

elif self.timestamp_format == "seconds":

if isinstance(timestamp, (int, float)):

return int(timestamp * 1000)

# 默认返回当前时间

return int(time.time() * 1000)

def extract_watermark(self, records: List[Dict], max_out_of_order_ms: int = 5000) -> int:

"""提取水印"""

if not records:

return 0

# 找到最大时间戳

timestamps = [self.extract_timestamp(r) for r in records]

max_timestamp = max(timestamps)

# 水印 = 最大时间戳 - 最大乱序时间

watermark = max_timestamp - max_out_of_order_ms

        return max(0, watermark)

3.4.2 Time Window Management
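
To make sliding-window assignment concrete, here is a small standalone sketch. With a 60 s window that slides every 10 s, each event belongs to 60 / 10 = 6 overlapping windows. The function names `assign_windows` and `window_is_complete` are illustrative assumptions; windows are half-open intervals `[start, end)`.

```python
from typing import List, Tuple


def assign_windows(timestamp_ms: int, size_ms: int, slide_ms: int) -> List[Tuple[int, int]]:
    """Return every [start, end) sliding window that contains timestamp_ms."""
    if size_ms <= 0 or slide_ms <= 0:
        raise ValueError("size_ms and slide_ms must be positive")
    windows = []
    # Latest slide-aligned window start that is not after the event
    start = timestamp_ms - (timestamp_ms % slide_ms)
    # Walk backwards while the window still covers the event (start + size > timestamp)
    while start > timestamp_ms - size_ms:
        windows.append((start, start + size_ms))
        start -= slide_ms
    return windows


def window_is_complete(window_end: int, watermark: int) -> bool:
    """A window can be emitted once the watermark has reached its end."""
    return watermark >= window_end


sliding = assign_windows(125_000, size_ms=60_000, slide_ms=10_000)
tumbling = assign_windows(125_000, size_ms=60_000, slide_ms=60_000)
```

The loop bound is what makes the walk terminate: once `start + size_ms` is no longer greater than the timestamp, earlier windows cannot contain the event. Setting `slide_ms` equal to `size_ms` reduces this to tumbling windows, where each event falls in exactly one window.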

python

import time

from typing import List, Tuple

class TimeWindowManager:

"""时间窗口管理器"""

def __init__(self, window_size_ms: int = 60000, slide_ms: int = 10000):

self.window_size_ms = window_size_ms

self.slide_ms = slide_ms

def assign_window(self, timestamp: int) -> List[Tuple[int, int]]:

"""分配窗口"""

windows = []

        # Start from the latest slide-aligned window start at or before the timestamp
        window_start = timestamp - (timestamp % self.slide_ms)
        # A timestamp can belong to several sliding windows; walk backwards and
        # stop as soon as the window no longer covers the timestamp
        while window_start > timestamp - self.window_size_ms:
            windows.append((window_start, window_start + self.window_size_ms))
            window_start -= self.slide_ms
        return windows

def get_current_window(self, current_time: int = None) -> Tuple[int, int]:

"""获取当前窗口"""

if current_time is None:

current_time = int(time.time() * 1000)

window_start = current_time - (current_time % self.slide_ms)

window_end = window_start + self.window_size_ms

return (window_start, window_end)

def is_window_complete(self, window_end: int, watermark: int) -> bool:

"""检查窗口是否完成"""

        return watermark >= window_end

Chapter 4: Producer Optimization Strategies

4.1 High-Performance Data Collection

python

import threading

import time

from typing import Dict, List

class RadarDataCollector:

"""雷达数据采集器"""

def __init__(self, buffer_size: int = 10000):

self.buffer_size = buffer_size

self.data_buffer = []

self.lock = threading.RLock()

# 统计

self.stats = {

"total_collected": 0,

"buffer_overflows": 0,

"collection_errors": 0

}

def collect_pulse(self, pulse_data: Dict) -> bool:

"""采集脉冲数据"""

with self.lock:

if len(self.data_buffer) >= self.buffer_size:

self.stats["buffer_overflows"] += 1

return False

try:

# 验证数据

validated_data = self._validate_pulse_data(pulse_data)

# 添加时间戳(如果不存在)

if "timestamp" not in validated_data:

validated_data["timestamp"] = int(time.time() * 1000)

# 添加到缓冲区

self.data_buffer.append(validated_data)

self.stats["total_collected"] += 1

return True

except Exception as e:

self.stats["collection_errors"] += 1

print(f"数据采集错误: {e}")

return False

def _validate_pulse_data(self, data: Dict) -> Dict:

"""验证脉冲数据"""

required_fields = ["pulse_id", "radar_id", "frequency", "power"]

for field in required_fields:

if field not in data:

raise ValueError(f"缺少必需字段: {field}")

return data

def get_batch(self, batch_size: int = 1000) -> List[Dict]:

"""获取批次数据"""

with self.lock:

if not self.data_buffer:

return []

batch_size = min(batch_size, len(self.data_buffer))

batch = self.data_buffer[:batch_size]

self.data_buffer = self.data_buffer[batch_size:]

return batch

def get_stats(self) -> Dict:

"""获取统计信息"""

with self.lock:

stats = self.stats.copy()

stats["buffer_size"] = len(self.data_buffer)

            return stats

4.2 Batch Sending and Compression
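
The idea behind batching plus compression can be sketched with the standard library alone: group serialized records until a byte budget is reached, then compress the whole batch at once so that repeated field names are shared across records. In this sketch `zlib` stands in for LZ4 (which needs a third-party package), and the length-prefixed framing and the function names are assumptions for illustration rather than Kafka's wire format.

```python
import json
import zlib
from typing import Iterable, Iterator, List


def batch_by_size(records: Iterable[bytes], max_batch_bytes: int) -> Iterator[List[bytes]]:
    """Group records into batches whose payload stays within max_batch_bytes."""
    batch: List[bytes] = []
    size = 0
    for record in records:
        # Close the current batch if adding this record would exceed the budget
        if batch and size + len(record) > max_batch_bytes:
            yield batch
            batch, size = [], 0
        batch.append(record)
        size += len(record)
    if batch:
        yield batch


def compress_batch(batch: List[bytes]) -> bytes:
    """Frame each record with a 4-byte length prefix, then compress the whole batch."""
    framed = b"".join(len(r).to_bytes(4, "big") + r for r in batch)
    return zlib.compress(framed, 3)


def decompress_batch(blob: bytes) -> List[bytes]:
    """Invert compress_batch: decompress, then split on the length prefixes."""
    framed = zlib.decompress(blob)
    records, pos = [], 0
    while pos < len(framed):
        length = int.from_bytes(framed[pos:pos + 4], "big")
        pos += 4
        records.append(framed[pos:pos + length])
        pos += length
    return records


pulses = [json.dumps({"pulse_id": i, "frequency": 1000.0}).encode("utf-8") for i in range(100)]
batches = list(batch_by_size(pulses, max_batch_bytes=1024))
```

A single record larger than the budget still gets a batch of its own, which mirrors how an oversized record is sent alone rather than dropped.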

python

import threading

import time

class BatchSender:

"""批量发送器"""

def __init__(self, producer, batch_size: int = 1000, linger_ms: int = 100):

self.producer = producer

self.batch_size = batch_size

self.linger_ms = linger_ms

self.current_batch = []

self.batch_lock = threading.RLock()

self.last_send_time = time.time()

# 启动发送线程

self.send_thread = threading.Thread(target=self._send_loop, daemon=True)

self.running = True

self.send_thread.start()

def add_record(self, topic: str, value: bytes, key: bytes = None):

"""添加记录"""

with self.batch_lock:

self.current_batch.append({

"topic": topic,

"value": value,

"key": key,

"timestamp": int(time.time() * 1000)

})

def _send_loop(self):

"""发送循环"""

while self.running:

try:

current_time = time.time()

time_since_last_send = current_time - self.last_send_time

with self.batch_lock:

should_send = (

len(self.current_batch) >= self.batch_size or

(self.current_batch and time_since_last_send * 1000 >= self.linger_ms)

)

if should_send:

self._send_batch()

self.last_send_time = current_time

time.sleep(0.001) # 1ms

except Exception as e:

print(f"发送循环错误: {e}")

time.sleep(1)

def _send_batch(self):

"""发送批次"""

with self.batch_lock:

if not self.current_batch:

return

batch = self.current_batch

self.current_batch = []

# 批量发送

for record in batch:

try:

self.producer.send(

topic=record["topic"],

value=record["value"],

key=record["key"]

)

except Exception as e:

print(f"发送失败: {e}")

def flush(self):

"""刷新缓冲区"""

with self.batch_lock:

if self.current_batch:

self._send_batch()

def stop(self):

"""停止"""

self.running = False

self.flush()

if self.send_thread.is_alive():

            self.send_thread.join(timeout=5)

4.3 Partition Key Design and Load Balancing

python

from typing import Dict

class SmartPartitioner:

"""智能分区器"""

def __init__(self, num_partitions: int = 12):

self.num_partitions = num_partitions

# 分区统计

self.partition_stats = {i: 0 for i in range(num_partitions)}

self.key_stats = {}

# 负载均衡参数

self.rebalance_threshold = 0.2 # 20%不平衡触发重平衡

def get_partition(self, key, data=None) -> int:

"""获取分区"""

# 计算基础分区

if key is None:

base_partition = self._round_robin()

else:

base_partition = self._hash_key(key)

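        # Check load balance. Caution: moving a keyed record to another partition gives up
        # Kafka's per-key ordering, so use this only for streams that tolerate reordering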

if self._needs_rebalance():

adjusted_partition = self._rebalance_partition(base_partition)

else:

adjusted_partition = base_partition

# 更新统计

self.partition_stats[adjusted_partition] += 1

if key:

if key not in self.key_stats:

self.key_stats[key] = {"count": 0, "last_partition": adjusted_partition}

self.key_stats[key]["count"] += 1

self.key_stats[key]["last_partition"] = adjusted_partition

return adjusted_partition

def _hash_key(self, key) -> int:

"""按键哈希"""

if isinstance(key, str):

key_bytes = key.encode('utf-8')

elif isinstance(key, bytes):

key_bytes = key

else:

key_bytes = str(key).encode('utf-8')

        import zlib # stable hash; the built-in hash() is salted per process
        hash_value = zlib.crc32(key_bytes) & 0x7fffffff

return hash_value % self.num_partitions

    def _round_robin(self) -> int:
        """Pick the least-loaded partition for keyless records (not a strict round-robin)"""

# 找到消息最少的partition

min_count = min(self.partition_stats.values())

for partition, count in self.partition_stats.items():

if count == min_count:

return partition

return 0

def _needs_rebalance(self) -> bool:

"""检查是否需要重平衡"""

if len(self.partition_stats) < 2:

return False

counts = list(self.partition_stats.values())

avg_count = sum(counts) / len(counts)

# 检查是否有partition负载超过阈值

for count in counts:

if avg_count > 0 and abs(count - avg_count) / avg_count > self.rebalance_threshold:

return True

return False

def _rebalance_partition(self, base_partition: int) -> int:

"""重平衡分区"""

# 找到负载最小的partition

min_partition = min(self.partition_stats.items(), key=lambda x: x[1])[0]

# 如果当前partition负载过高,使用最小负载的partition

avg_count = sum(self.partition_stats.values()) / len(self.partition_stats)

if self.partition_stats[base_partition] > avg_count * (1 + self.rebalance_threshold):

return min_partition

return base_partition

def get_stats(self) -> Dict:

"""获取统计信息"""

total = sum(self.partition_stats.values())

avg = total / self.num_partitions if self.num_partitions > 0 else 0

balance_score = 0

if avg > 0:

variance = sum((c - avg) ** 2 for c in self.partition_stats.values()) / self.num_partitions

balance_score = 1.0 - (variance ** 0.5 / avg)

return {

"total_messages": total,

"partition_distribution": self.partition_stats.copy(),

"balance_score": balance_score,

"unique_keys": len(self.key_stats)

        }

4.4 Asynchronous Sending and Callbacks
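
The core contract of an asynchronous send is that every record yields a `Future` which is eventually resolved with delivery metadata or with an exception. The sketch below shows that contract with the standard library only. `FutureCompletingSender`, its `fail` flag, and the metadata fields are hypothetical names used for illustration, and the background thread stands in for a real network round trip.

```python
import threading
from concurrent.futures import Future


class FutureCompletingSender:
    """Hypothetical sender that resolves exactly one Future per record."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._next_offset = 0

    def send(self, topic: str, value: bytes, fail: bool = False) -> Future:
        future: Future = Future()

        def deliver() -> None:
            # Simulated broker round trip: either fail or assign the next offset
            if fail:
                future.set_exception(RuntimeError("simulated delivery failure"))
                return
            with self._lock:
                offset = self._next_offset
                self._next_offset += 1
            future.set_result({"topic": topic, "offset": offset, "size": len(value)})

        threading.Thread(target=deliver, daemon=True).start()
        return future


sender = FutureCompletingSender()
outcomes = []


def on_done(fut: Future) -> None:
    """Callback invoked once the Future is resolved, on success or failure."""
    err = fut.exception()
    outcomes.append(("error", str(err)) if err else ("ok", fut.result()["offset"]))


first = sender.send("radar_pulses", b"pulse-bytes")
first.add_done_callback(on_done)
metadata = first.result(timeout=5)  # block only when the caller needs the result
```

A `Future` that is returned but never resolved leaves callers waiting forever and its callbacks never fire, so each send path needs one `set_result` or `set_exception` call.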

python

import queue

import threading

import time

from typing import Dict

from confluent_kafka import Producer

class AsyncProducerWithCallbacks:

"""带回调的异步生产者"""

def __init__(self, bootstrap_servers):

self.producer = Producer({

'bootstrap.servers': bootstrap_servers,

'acks': 1,

'compression.type': 'lz4',

'batch.size': 65536,

'linger.ms': 5,

})

# 回调队列

self.callback_queue = queue.Queue()

# 统计

self.stats = {

"messages_sent": 0,

"messages_delivered": 0,

"errors": 0,

"delivery_errors": 0

}

# 启动回调处理线程

self.callback_thread = threading.Thread(target=self._callback_loop, daemon=True)

self.running = True

self.callback_thread.start()

def send_async(self, topic: str, value: bytes, key: bytes = None,

on_delivery=None, metadata: Dict = None):

"""异步发送"""

def delivery_callback(err, msg):

if err:

self.stats["delivery_errors"] += 1

result = {

"success": False,

"error": str(err),

"metadata": metadata

}

else:

self.stats["messages_delivered"] += 1

result = {

"success": True,

"topic": msg.topic(),

"partition": msg.partition(),

"offset": msg.offset(),

"metadata": metadata

}

# 放入回调队列

self.callback_queue.put((on_delivery, result))

try:

self.producer.produce(

topic=topic,

value=value,

key=key,

callback=delivery_callback

)

self.stats["messages_sent"] += 1

except Exception as e:

self.stats["errors"] += 1

result = {

"success": False,

"error": str(e),

"metadata": metadata

}

self.callback_queue.put((on_delivery, result))

def _callback_loop(self):

"""回调处理循环"""

while self.running:

try:

# 处理回调

self.producer.poll(0.1) # 触发交付回调

# 处理回调队列

while not self.callback_queue.empty():

try:

callback, result = self.callback_queue.get_nowait()

if callback:

try:

callback(result)

except Exception as e:

print(f"回调执行错误: {e}")

except queue.Empty:

break

time.sleep(0.001)

except Exception as e:

print(f"回调循环错误: {e}")

time.sleep(1)

def flush(self, timeout: float = 5.0):

"""刷新生产者"""

start_time = time.time()

while self.producer and time.time() - start_time < timeout:

remaining = self.producer.flush(timeout=0.1)

if remaining == 0:

break

# 处理剩余回调

self._process_remaining_callbacks()

def _process_remaining_callbacks(self):

"""处理剩余回调"""

for _ in range(100): # 最多处理100个

try:

callback, result = self.callback_queue.get_nowait()

if callback:

try:

callback(result)

except Exception as e:

print(f"回调执行错误: {e}")

except queue.Empty:

break

def stop(self):

"""停止"""

self.running = False

self.flush()

if self.callback_thread.is_alive():

self.callback_thread.join(timeout=2)

def get_stats(self) -> Dict:

"""获取统计信息"""

stats = self.stats.copy()

stats["delivery_rate"] = (

stats["messages_delivered"] / stats["messages_sent"]

if stats["messages_sent"] > 0 else 1.0

)

        return stats

Chapter 5: Consumer Groups and Parallel Processing

5.1 Consumer Group Load Balancing
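
Within a consumer group, each partition is owned by exactly one consumer, so the partition count caps the useful parallelism. The sketch below is a simplified, single-topic illustration in the spirit of range-style assignment: contiguous partition ranges are handed to consumers in sorted order. The function name `range_assign` is an assumption, and in a real cluster the group protocol performs this assignment rather than application code.

```python
from typing import Dict, List


def range_assign(num_partitions: int, consumer_ids: List[str]) -> Dict[str, List[int]]:
    """Split partitions 0..num_partitions-1 into contiguous ranges, one per consumer."""
    members = sorted(consumer_ids)
    if not members:
        return {}
    # Every consumer gets `base` partitions; the first `extra` consumers get one more
    base, extra = divmod(num_partitions, len(members))
    assignment: Dict[str, List[int]] = {}
    start = 0
    for index, member in enumerate(members):
        count = base + (1 if index < extra else 0)
        assignment[member] = list(range(start, start + count))
        start += count
    return assignment


# 12 partitions (as in the radar_raw_pulses topic design) shared by three consumers
three_consumers = range_assign(12, ["consumer-1", "consumer-2", "consumer-3"])

# More consumers than partitions: the surplus consumer stays idle
five_consumers = range_assign(4, ["c1", "c2", "c3", "c4", "c5"])
```

With 12 partitions and three consumers each consumer owns four partitions. Adding a fifth consumer to a four-partition topic adds no throughput, because that consumer is assigned nothing.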

python

from typing import Dict, List

from confluent_kafka import Consumer

class RadarConsumerGroup:

"""雷达消费者组"""

def __init__(self, group_id: str, bootstrap_servers: str):

from confluent_kafka import Consumer

self.group_id = group_id

self.bootstrap_servers = bootstrap_servers

# 消费者配置

self.config = {

'bootstrap.servers': bootstrap_servers,

'group.id': group_id,

'auto.offset.reset': 'earliest',

'enable.auto.commit': False,

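            # 'max.poll.records' is a Java-client setting; librdkafka-based clients such as confluent_kafka reject it as an unknown property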

'session.timeout.ms': 10000,

'heartbeat.interval.ms': 3000

}

# 消费者实例

self.consumers = {}

self.assignment = {} # 分配的分区

self.running = False

# 统计

self.stats = {

"total_messages": 0,

"rebalances": 0,

"commits": 0,

"errors": 0

}

def create_consumer(self, consumer_id: str) -> Consumer:

"""创建消费者"""

from confluent_kafka import Consumer

config = self.config.copy()

config['client.id'] = consumer_id

consumer = Consumer(config)

self.consumers[consumer_id] = consumer

return consumer

def subscribe(self, consumer_id: str, topics: List[str]):

"""订阅Topic"""

if consumer_id in self.consumers:

consumer = self.consumers[consumer_id]

consumer.subscribe(topics, on_assign=self._on_assign, on_revoke=self._on_revoke)

    def _on_assign(self, consumer, partitions):
        """Callback invoked when partitions are assigned."""
        consumer_id = self._get_consumer_id(consumer)
        self.assignment[consumer_id] = partitions
        self.stats["rebalances"] += 1
        print(f"Consumer {consumer_id} assigned partitions: {partitions}")

    def _on_revoke(self, consumer, partitions):
        """Callback invoked when partitions are revoked."""
        consumer_id = self._get_consumer_id(consumer)
        if consumer_id in self.assignment:
            del self.assignment[consumer_id]
        print(f"Consumer {consumer_id} revoked partitions: {partitions}")

    def _get_consumer_id(self, consumer) -> str:
        """Look up the ID under which a consumer instance was registered."""
        for cid, c in self.consumers.items():
            # Compare by identity: we want this exact instance
            if c is consumer:
                return cid
        return "unknown"

    def consume_messages(self, consumer_id: str, timeout: float = 1.0) -> List:
        """Consume messages: wait up to `timeout` seconds for the first one,
        then drain whatever is already buffered without blocking."""
        if consumer_id not in self.consumers:
            return []

        consumer = self.consumers[consumer_id]
        messages = []

        try:
            # Fetch the first message
            msg = consumer.poll(timeout)
            while msg is not None:
                if msg.error():
                    print(f"Consume error: {msg.error()}")
                else:
                    messages.append({
                        'topic': msg.topic(),
                        'partition': msg.partition(),
                        'offset': msg.offset(),
                        'key': msg.key(),
                        'value': msg.value(),
                        'timestamp': msg.timestamp()
                    })
                    self.stats["total_messages"] += 1
                # Fetch the next message without blocking
                msg = consumer.poll(0)
        except Exception as e:
            self.stats["errors"] += 1
            print(f"Consume exception: {e}")

        return messages

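    def commit_offsets(self, consumer_id: str, offsets: List["TopicPartition"] = None):
        """Commit offsets synchronously.

        `offsets` is an optional list of confluent_kafka.TopicPartition
        objects; each offset should be that of the next message to read
        (last processed offset + 1).
        """
        if consumer_id not in self.consumers:
            return

        consumer = self.consumers[consumer_id]

        try:
            if offsets:
                # Commit the given offsets
                consumer.commit(offsets=offsets, asynchronous=False)
            else:
                # Commit the current position of all assigned partitions
                consumer.commit(asynchronous=False)
            self.stats["commits"] += 1
        except Exception as e:
            print(f"Offset commit error: {e}")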

    def close(self, consumer_id: str = None):
        """Close one consumer, or all consumers if no ID is given."""
        if consumer_id:
            if consumer_id in self.consumers:
                self.consumers[consumer_id].close()
                del self.consumers[consumer_id]
        else:
            for consumer in self.consumers.values():
                consumer.close()
            self.consumers.clear()

    def get_assignment(self) -> Dict:
        """Return the current partition assignment."""
        return self.assignment.copy()

    def get_stats(self) -> Dict:
        """Return statistics."""
        return self.stats.copy()

5.2 Offset Management and Commit Strategies
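With manual commits (`enable.auto.commit` is `False` in the configuration of section 5.1), the offset committed for a partition is, by Kafka convention, the position of the next message to read, that is, the last processed offset plus one. The following is a minimal sketch of that bookkeeping in pure Python, with no broker involved. `next_offsets_to_commit` and the topic name `radar.pulses` are hypothetical, chosen for illustration only.

```python
from typing import Dict, Iterable, Tuple

TopicPartitionKey = Tuple[str, int]


def next_offsets_to_commit(
    processed: Iterable[Tuple[str, int, int]],
) -> Dict[TopicPartitionKey, int]:
    """Return, per (topic, partition), the offset to commit: highest processed + 1."""
    highest: Dict[TopicPartitionKey, int] = {}
    for topic, partition, offset in processed:
        key = (topic, partition)
        # Keep only the highest offset seen for each partition
        if offset > highest.get(key, -1):
            highest[key] = offset
    return {key: offset + 1 for key, offset in highest.items()}


# (topic, partition, offset) of messages that were fully processed
processed_messages = [
    ("radar.pulses", 0, 41),
    ("radar.pulses", 0, 42),
    ("radar.pulses", 1, 7),
]
to_commit = next_offsets_to_commit(processed_messages)
```

With confluent_kafka, each entry would be wrapped as `TopicPartition(topic, partition, offset)` before being passed to `Consumer.commit(offsets=...)`. Committing the processed offset itself, rather than the next one, would cause the last message to be delivered again after a restart.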

python

class OffsetManager:
    """Offset manager."""

    def __init__(self, commit_interval_ms: int = 5000, max_uncommitted: int = 1000):
        self.commit_interval_ms = commit_interval_ms
        self.max_uncommitted = max_uncommitted

        # Offset tracking
        self.offsets = {}  # {(topic, partition): offset}
        self.last_commit_time = time.time() * 1000  # milliseconds
        self.uncommitted_count = 0

        # Commit strategies
        self.commit_strategies = {
            "time_based": self._time_based_commit,
            "count_based": self._count_based_commit,
            "hybrid": self._hybrid_commit
        }

    def record_offset(self, topic: str, partition: int, offset: int):
        """Record a processed offset."""
        key = (topic, partition)
        # Only record offsets that advance the partition's position
        if key not in self.offsets or offset > self.offsets[key]:
            self.offsets[key] = offset
            self.uncommitted_count += 1

    def should_commit(self, strategy: str = "hybrid") -> bool:
        """Check whether a commit is due under the given strategy."""
        if strategy in self.commit_strategies:
            return self.commit_strategies[strategy]()
        return False

    def _time_based_commit(self) -> bool:
        """Time-based strategy: commit once the interval has elapsed."""
        current_time = time.time() * 1000  # milliseconds
        time_since_commit = current_time - self.last_commit_time
        return time_since_commit >= self.commit_interval_ms