可变分辨率(令牌预算)

所有 Gemma 4 模型都支持可变分辨率,这意味着可以处理不同分辨率的图片。此外,您还可以决定是以较高分辨率还是较低分辨率处理指定图片。例如,如果您要执行对象检测,可能需要以更高的分辨率处理图片。例如,视频理解可以采用较低的分辨率来处理每个帧,从而加快推理速度。从本质上讲,这是推理速度与图像表示准确性之间的权衡。

此选择由 token 预算控制,该预算表示为给定图片生成的视觉 token(也称为视觉 token 嵌入)数量上限。

用户可以选择 70、140、280、560 或 1120 个令牌的预算规模。系统会根据预算调整输入的大小。如果您的预算较高(例如 1120 个令牌),则图片可以保持较高的分辨率,因此需要处理的图像块也会更多。如果您的预算较低(例如 70 个令牌),则需要缩小图片尺寸,这样需要处理的补丁数量就会减少。如果预算较高(因此 token 数量较多),您可以捕获的信息会比预算较低时多得多。

此预算决定了图片调整的大小。假设您的预算为 280 个令牌,那么补丁数量上限为 9 x 280 = 2,520。为什么要乘以 9?这是因为在下一步中,系统会通过对每个 3x3 相邻区块的补丁求平均值,将其合并为单个嵌入。生成的嵌入是视觉词元嵌入。视觉令牌嵌入越多,从图片中提取的信息就越精细。

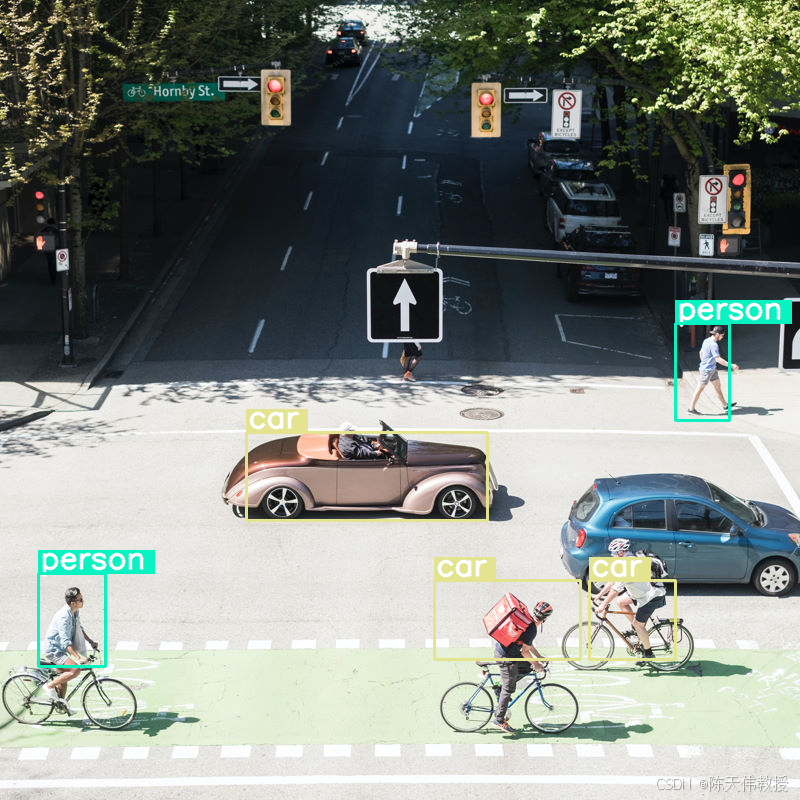

我们来探索一下,如果我们对图片执行对象检测并将预算大小设置为非常低的值 (70),会发生什么情况:

import numpy as np

from PIL import Image

import requests, cv2, re, json

from transformers import GenerationConfig

config = GenerationConfig.from_pretrained(MODEL_ID)

config.max_new_tokens = 512

gen_kwargs = dict(generation_config=config)

img_url = "https://huggingface.co/datasets/Xenova/transformers.js-docs/resolve/main/city-streets.jpg"

input_image = Image.open(requests.get(img_url, stream=True).raw)

def draw_bounding_box(image, coordinates, label, label_colors, width, height):

"""Draw a bounding box based on input image and coordinates"""

y1, x1, y2, x2 = [int(c) / 1024 for c in coordinates]

y1, x1, y2, x2 = round(y1 * height), round(x1 * width), round(y2 * height), round(x2 * width)

color = label_colors.setdefault(label, np.random.randint(0, 256, (3,)).tolist())

text_size = cv2.getTextSize(label, cv2.FONT_HERSHEY_SIMPLEX, 1, 3)[0]

cv2.rectangle(image, (x1, y1 - text_size[1]), (x1 + text_size[0] + 8, y1), color, -1)

cv2.putText(image, label, (x1 + 2, y1 - 5), cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 255, 255), 2, cv2.LINE_AA)

cv2.rectangle(image, (x1, y1), (x2, y2), color, 2)

return image

def draw_results(text_content):

"""Based on an input image, draw bounding boxes and labels"""

# Extract JSON

match = re.search(r'```json\s+(.*?)\s+```', text_content, re.DOTALL)

if not match:

print("No JSON code block found.")

return None

# Extract data

data_list = json.loads(match.group(1))

output_img = np.array(input_image)

label_colors = {}

w, h = input_image.size

# Draw bounding boxes

for item in data_list:

output_img = draw_bounding_box(output_img, item["box_2d"], item["label"], label_colors, w, h)

return Image.fromarray(output_img)

# Detect person, card, and traffic light

messages = [

{

"role": "user", "content": [

{"type": "image", "url": img_url},

{"type": "text", "text": "detect person and car, output only ```json"}

]

}

]

# Run pipeline and set token budget to 70

vqa_pipe.image_processor.max_soft_tokens = 70

output = vqa_pipe(messages, return_full_text=False, generate_kwargs=gen_kwargs)

print(output[0]['generated_text'])

draw_results(output[0]['generated_text'])```json

[

{"box_2d": [413, 864, 537, 933], "label": "person"},

{"box_2d": [553, 315, 666, 623], "label": "car"},

{"box_2d": [743, 754, 843, 864], "label": "car"},

{"box_2d": [743, 556, 843, 743], "label": "car"},

{"box_2d": [733, 49, 853, 135], "label": "person"}

]

效果还不错,但很明显,图片经过了相当程度的压缩,因为系统并未检测到所有车辆和人员。提高令牌预算应该可以解决此问题!

比较令牌预算

让我们来探索一下增加预算规模后会发生什么情况!预算越大,生成的软 token 和处理的软 token 就越多。这应该有助于改进对象检测。

import matplotlib.pyplot as plt

def count_tokens(processor, tokens):

input_ids = tokens['input_ids'][0] # Get input IDs from the tokenizer output

img_counting = []

img_count = 0

aud_counting = []

aud_count = 0

for x in input_ids: # Iterate over the token list

# Use tokenizer.decode() to convert tokens back to words

word = processor.decode([x]) # No need to convert to JAX array for decoding

if x == processor.tokenizer.image_token_id:

img_count = img_count + 1

elif x == processor.tokenizer.audio_token_id:

aud_count = aud_count + 1

elif x == processor.tokenizer.eoi_token_id:

img_counting.append(img_count)

img_count = 0

elif x == processor.tokenizer.eoa_token_id:

aud_counting.append(aud_count)

aud_count = 0

for item in img_counting:

print(f"# of Image Tokens: {item}")

for item in aud_counting:

print(f"# of Audio Tokens: {item}")

input_image.resize((2000, 2000))

# Detect person and car

messages = [

{

"role": "user", "content": [

{"type": "image", "url": img_url},

{"type": "text", "text": "detect person and car, output only ```json"}

]

}

]

# Run for different budget sizes

budget_sizes = [70, 140, 280, 560]

# 1120 won't fit on T4, but works on L4 or highger

#budget_sizes = [70, 140, 280, 560, 1120]

results = {}

for budget in budget_sizes:

print(f"Budget Size: {budget}")

vqa_pipe.image_processor.max_soft_tokens = budget

inputs = vqa_pipe.processor.apply_chat_template(messages, tokenize=True, return_dict=True, return_tensors="pt")

count_tokens(vqa_pipe.processor, inputs)

output = vqa_pipe(messages, return_full_text=False, generate_kwargs=gen_kwargs)

result_text = output[0]['generated_text']

print(output[0]['generated_text'])

result_image = draw_results(result_text)

if result_image:

results[budget] = result_image

# Display side-by-side

fig, axes = plt.subplots(1, len(results), figsize=(5 * len(results), 6))

if len(results) == 1:

axes = [axes]

for ax, (budget, img) in zip(axes, results.items()):

ax.imshow(img)

ax.set_title(f"max_soft_tokens = {budget}", fontsize=14, fontweight='bold')

ax.axis('off')

plt.tight_layout()

plt.show()Budget Size: 70

# of Image Tokens: 64

```json

[

{"box_2d": [731, 57, 873, 132], "label": "person"},

{"box_2d": [556, 314, 675, 618], "label": "car"},

{"box_2d": [736, 754, 843, 864], "label": "car"},

{"box_2d": [756, 553, 935, 736], "label": "person"}

]

```

Budget Size: 140

# of Image Tokens: 121

```json

[

{"box_2d": [736, 734, 809, 836], "label": "car"},

{"box_2d": [745, 556, 919, 715], "label": "person"},

{"box_2d": [748, 0, 906, 166], "label": "person"},

{"box_2d": [541, 322, 647, 626], "label": "car"},

{"box_2d": [413, 874, 513, 924], "label": "person"}

]

```

Budget Size: 280

# of Image Tokens: 256

```json

[

{"box_2d": [403, 876, 511, 924], "label": "person"},

{"box_2d": [532, 313, 652, 623], "label": "car"},

{"box_2d": [735, 732, 817, 828], "label": "car"},

{"box_2d": [742, 554, 912, 662], "label": "person"},

{"box_2d": [760, 15, 899, 163], "label": "person"},

{"box_2d": [768, 554, 912, 724], "label": "person"}

]

```

Budget Size: 560

# of Image Tokens: 529

```json

[

{"box_2d": [741, 0, 910, 135], "label": "person"},

{"box_2d": [547, 254, 650, 624], "label": "car"},

{"box_2d": [773, 526, 912, 666], "label": "person"},

{"box_2d": [601, 707, 742, 1000], "label": "car"},

{"box_2d": [411, 873, 515, 931], "label": "person"},

{"box_2d": [765, 700, 851, 874], "label": "person"}

]

```总结与后续步骤

在本指南中,您学习了如何使用 Gemma 4 模型来执行图片理解任务。这些示例涵盖了以下方面:从图片生成文本、使用提示模板进行视觉 QA、同时处理多张图片、光学字符识别 (OCR)、使用边界框进行对象检测,以及使用令牌预算管理可变分辨率。