Table of Contents


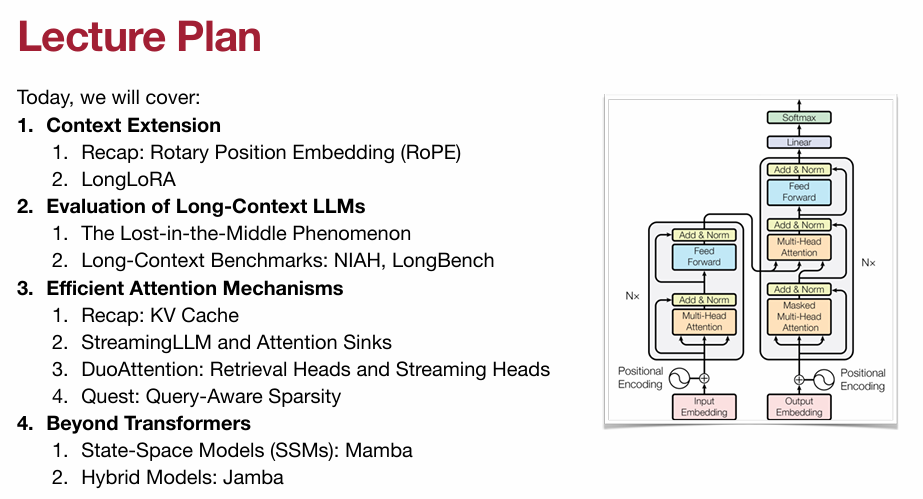

- [1. Context Extension](#1. Context Extension)
  - [1.1 Rotary Position Embedding (RoPE)](#1.1 Rotary Position Embedding (RoPE))
  - [1.2 LongLoRA](#1.2 LongLoRA)
- [2. Evaluation of Long-Context LLMs](#2. Evaluation of Long-Context LLMs)
  - [2.1 The Lost in the Middle Phenomenon](#2.1 The Lost in the Middle Phenomenon)
  - [2.2 Long-Context Benchmarks: NIAH, LongBench](#2.2 Long-Context Benchmarks: NIAH, LongBench)
- [3. Efficient Attention Mechanisms](#3. Efficient Attention Mechanisms)
  - [3.1 KV Cache](#3.1 KV Cache)
  - [3.2 StreamingLLM and Attention Sinks (key topic)](#3.2 StreamingLLM and Attention Sinks (key topic))
  - [3.3 DuoAttention: Retrieval Heads and Streaming Heads (key topic)](#3.3 DuoAttention: Retrieval Heads and Streaming Heads (key topic))
  - [3.4 Quest: Query-Aware Sparsity (key topic)](#3.4 Quest: Query-Aware Sparsity (key topic))
- [4. Beyond Transformers](#4. Beyond Transformers)
  - [4.1 State-Space Models (SSMs): Mamba](#4.1 State-Space Models (SSMs): Mamba)
  - [4.2 Hybrid Models: Jamba](#4.2 Hybrid Models: Jamba)

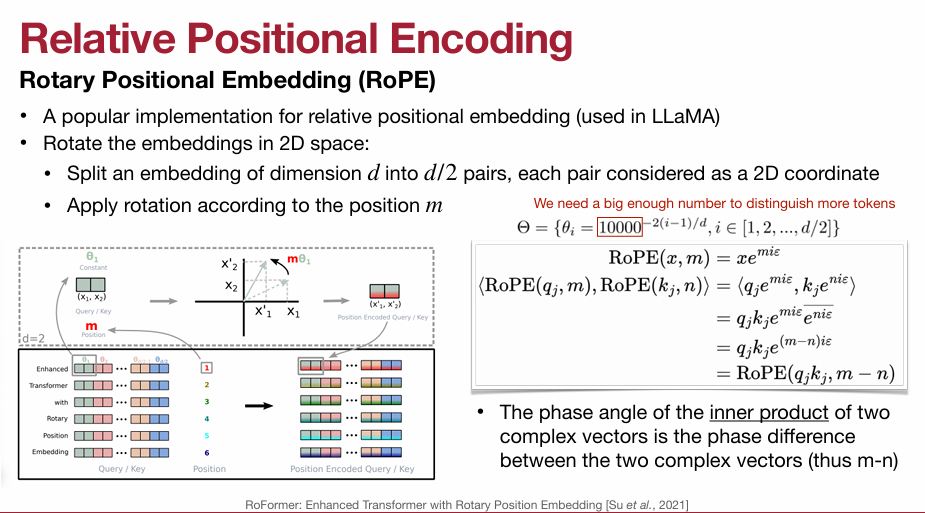

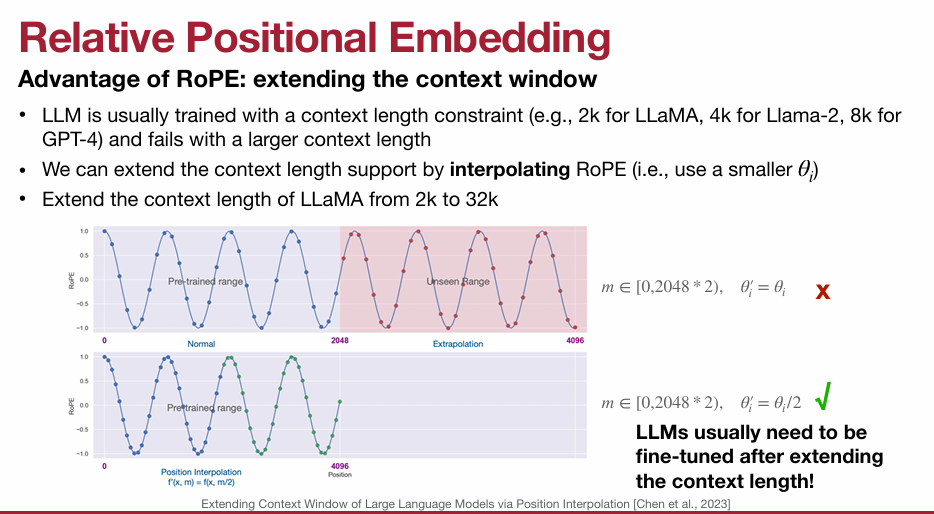

1. Context Extension

1.1 Rotary Position Embedding (RoPE)

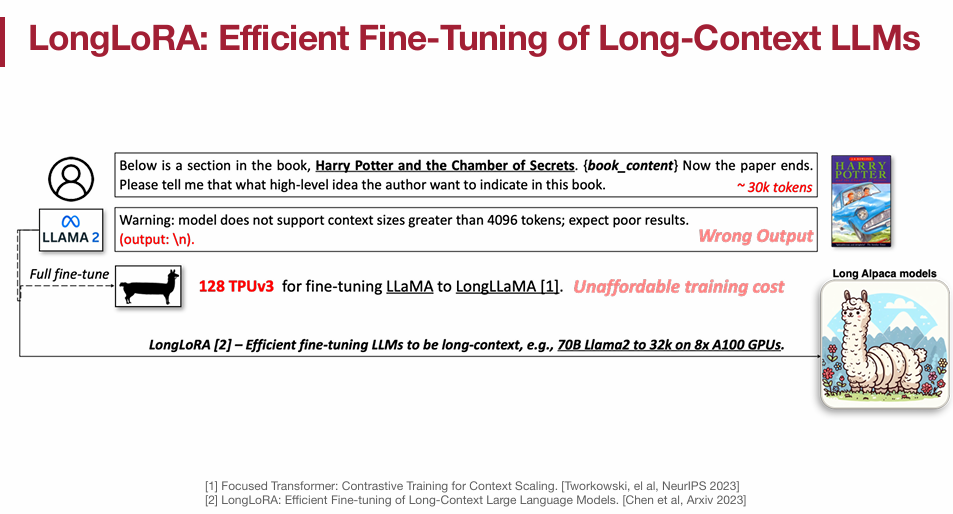

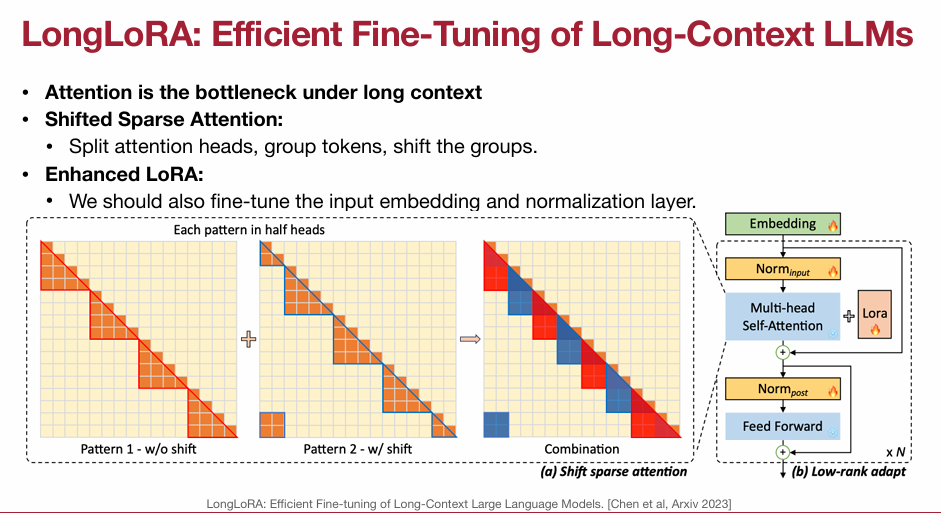

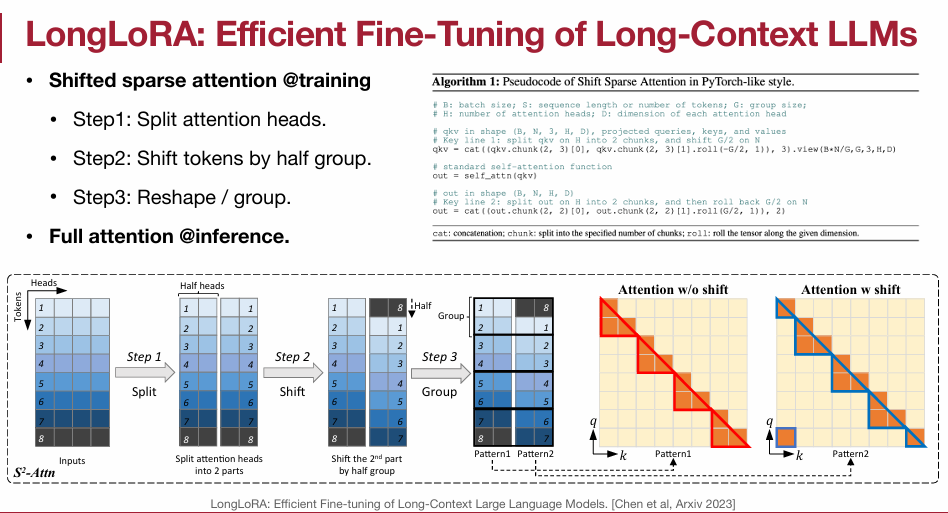

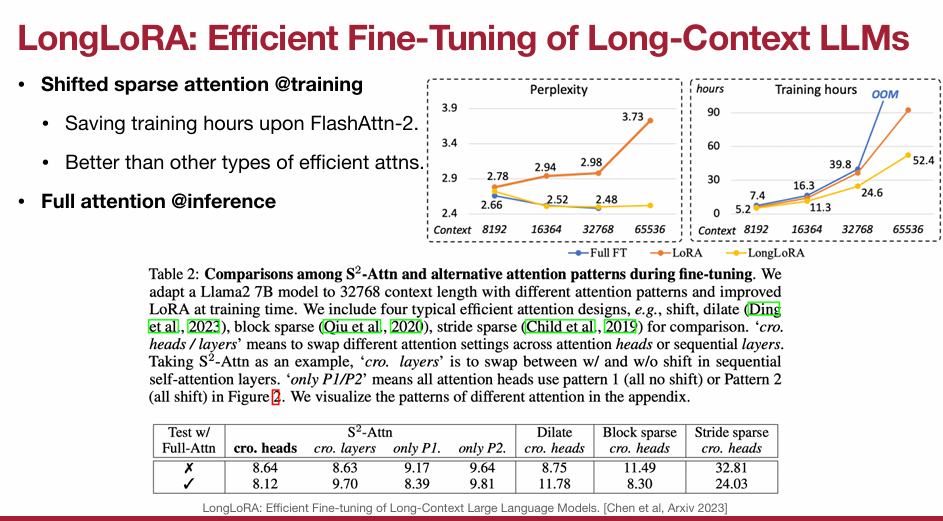

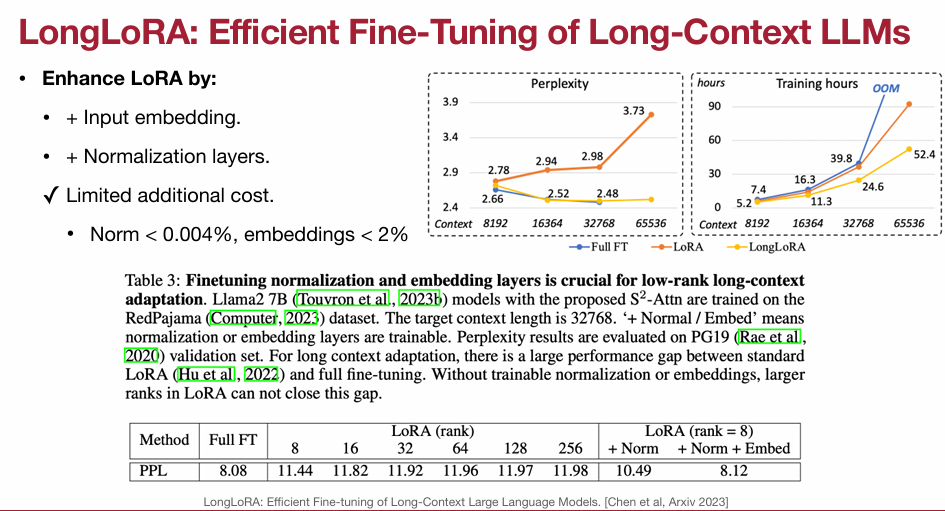

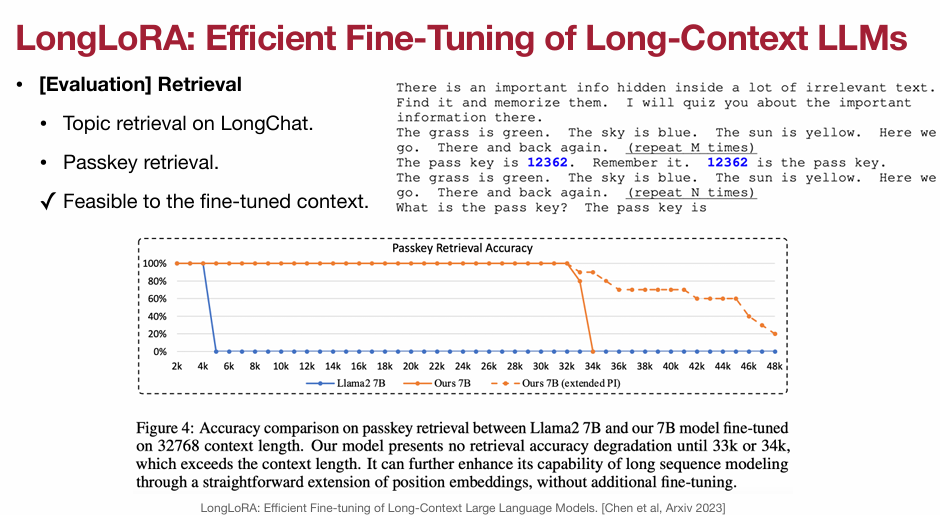
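RoPE encodes position by rotating each pair of query/key dimensions through an angle proportional to the token position, so the query-key dot product depends only on the relative offset between the two tokens. The sketch below is a minimal pure-Python illustration, assuming the original pairing of adjacent dimensions (2i, 2i+1) and the common base of 10000; several production implementations pair dimension i with i + d/2 instead. The `scale` argument is an added assumption that shows Position Interpolation, one context-extension technique that divides positions by (target length / training length) so longer inputs reuse the angle range seen in training. The function names are hypothetical.

```python
import math
from typing import List


def rope(x: List[float], pos: float, base: float = 10000.0, scale: float = 1.0) -> List[float]:
    """Rotate each pair (x[2i], x[2i+1]) by the angle (pos / scale) * theta_i.

    theta_i = base ** (-2i / d). scale > 1 imitates Position Interpolation: positions
    are shrunk so a longer context maps back into the range seen during training.
    """
    d = len(x)
    if d % 2:
        raise ValueError("RoPE needs an even dimension")
    p = pos / scale
    out = [0.0] * d
    for i in range(d // 2):
        angle = p * base ** (-2 * i / d)
        c, s = math.cos(angle), math.sin(angle)
        a, b = x[2 * i], x[2 * i + 1]
        out[2 * i] = a * c - b * s
        out[2 * i + 1] = a * s + b * c
    return out


def dot(u: List[float], v: List[float]) -> float:
    return sum(a * b for a, b in zip(u, v))


q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.2]
s1 = dot(rope(q, 10), rope(k, 7))     # query at position 10, key at position 7
s2 = dot(rope(q, 103), rope(k, 100))  # same offset of 3, both shifted by 93
print(abs(s1 - s2) < 1e-9)  # True
```

Because each rotation preserves norms and rotations compose additively, `rope(q, m) · rope(k, n)` equals `q · rope(k, n - m)`, which is why the two scores agree.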

1.2 LongLoRA
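LongLoRA extends the context window during fine-tuning with shifted sparse attention (S²-Attn): the sequence is cut into groups of `group_size` tokens and attention is computed only inside each group, while half of the heads see the tokens shifted by half a group so information can cross group boundaries. The sketch below only computes which group each token belongs to in each head; it leaves out the attention computation, the LoRA adapters, and the embedding and normalization layers that LongLoRA also makes trainable. The function name is hypothetical, and modelling the shift as a left roll (so the first half-group wraps into the last group) is an assumption about the implementation detail.

```python
from typing import List


def s2_group_ids(seq_len: int, group_size: int, num_heads: int) -> List[List[int]]:
    """Group index of every token, per head, under shifted sparse attention.

    Heads in the first half use contiguous groups [0, G), [G, 2G), ...
    Heads in the second half see the sequence rolled left by G/2, so their groups
    straddle the unshifted group boundaries; the first G/2 tokens wrap around
    into the last group.
    """
    if seq_len % group_size:
        raise ValueError("seq_len must be a multiple of group_size")
    shift = group_size // 2
    ids = []
    for h in range(num_heads):
        if h < num_heads // 2:
            ids.append([t // group_size for t in range(seq_len)])
        else:
            ids.append([((t - shift) % seq_len) // group_size for t in range(seq_len)])
    return ids


ids = s2_group_ids(seq_len=8, group_size=4, num_heads=2)
print(ids[0])  # [0, 0, 0, 0, 1, 1, 1, 1]
print(ids[1])  # [1, 1, 0, 0, 0, 0, 1, 1]
```

Within a head, a token attends only to tokens with the same group id, so the per-head attention cost scales with `seq_len * group_size` rather than `seq_len ** 2`. According to the LongLoRA paper, this pattern is used only during fine-tuning; inference keeps standard full attention.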

2. Evaluation of Long-Context LLMs

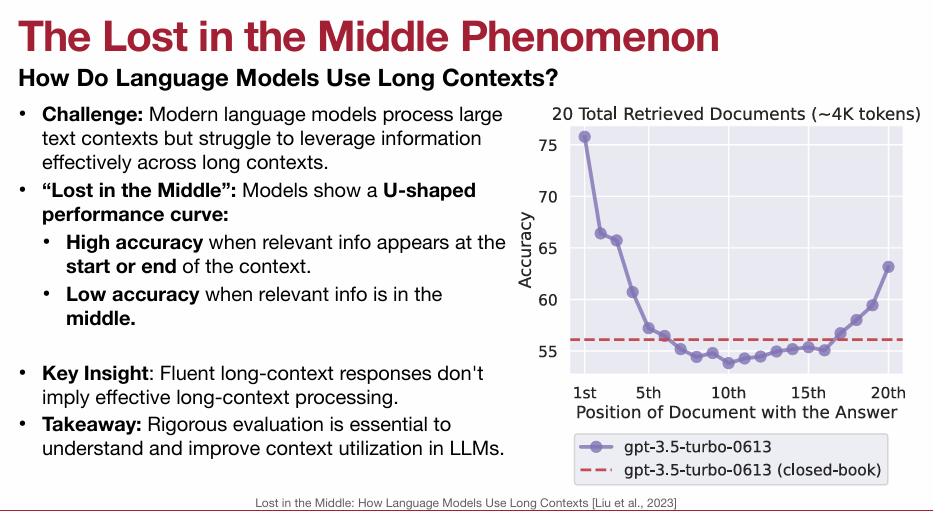

2.1 The Lost in the Middle Phenomenon

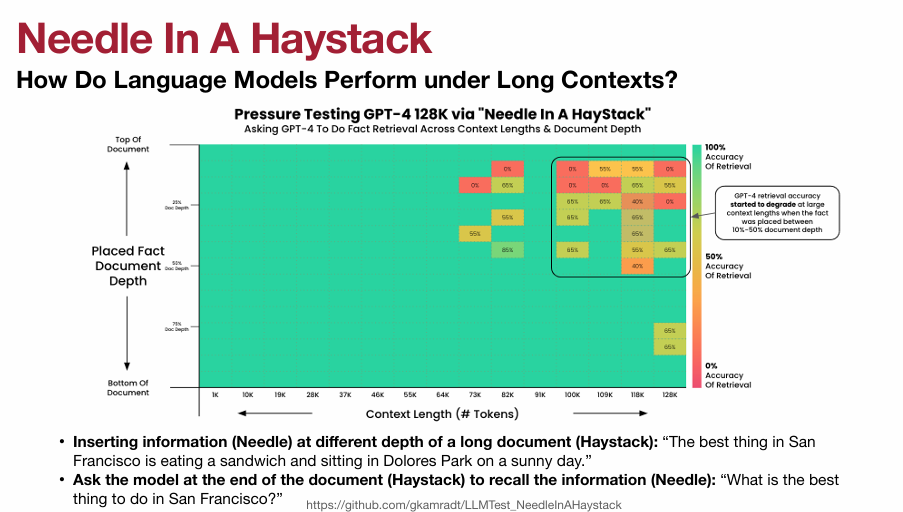

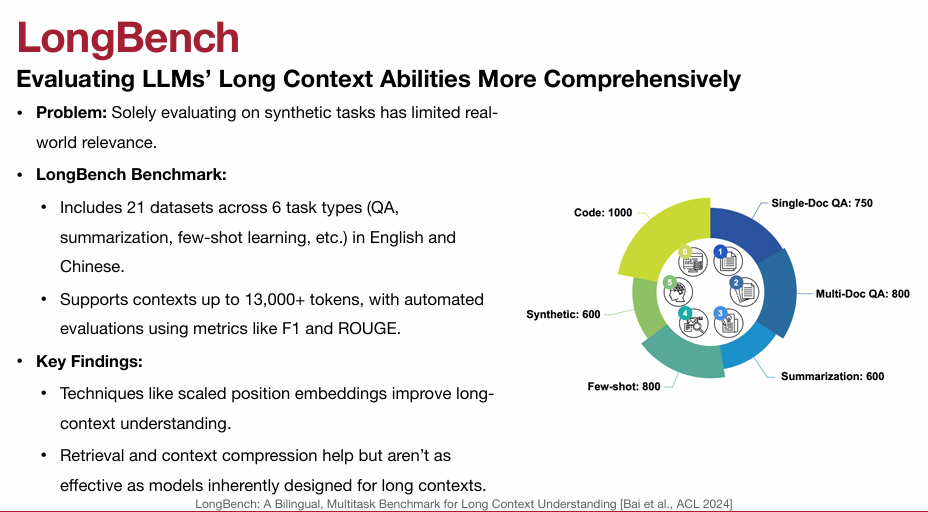

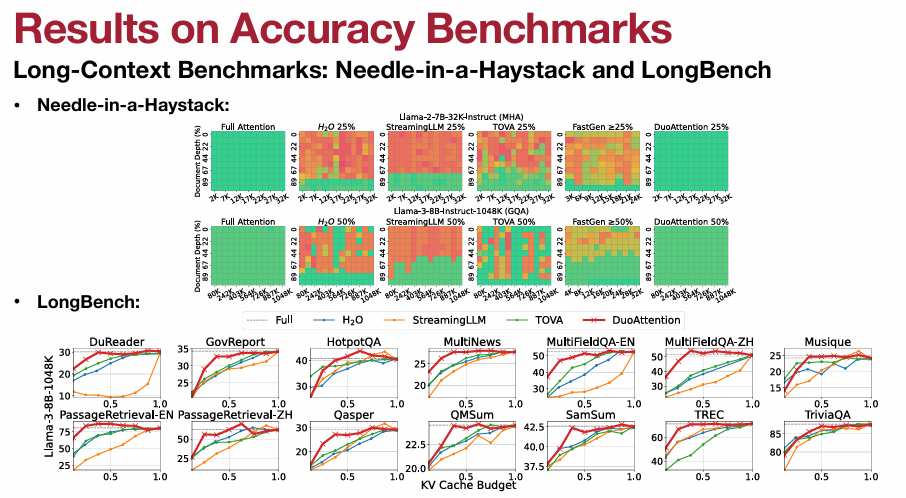
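The lost-in-the-middle experiments place the single document that contains the answer at different positions among distractor documents and measure accuracy at each position. Performance is typically highest when the relevant document sits at the start or end of the context and drops when it sits in the middle. The hypothetical helpers below build such a position sweep for a multi-document QA prompt. The prompt template is an assumption, and querying and scoring a model are left out.

```python
from typing import Dict, List


def build_prompt(question: str, gold: str, distractors: List[str], gold_pos: int) -> str:
    """Multi-document QA prompt with the answer-bearing document at index `gold_pos`."""
    docs = distractors[:gold_pos] + [gold] + distractors[gold_pos:]
    lines = [f"Document [{i + 1}]: {d}" for i, d in enumerate(docs)]
    return "\n".join(lines + [f"Question: {question}", "Answer:"])


def position_sweep(question: str, gold: str, distractors: List[str]) -> Dict[int, str]:
    """One prompt per possible gold position, from first (0) to last."""
    return {p: build_prompt(question, gold, distractors, p)
            for p in range(len(distractors) + 1)}


prompts = position_sweep("Who wrote the report?", "The report was written by Ada.",
                         ["Doc about weather.", "Doc about sports.", "Doc about food."])
print(prompts[1].splitlines()[1])  # Document [2]: The report was written by Ada.
```

Plotting accuracy against the key of `prompts` (gold position) is what produces the characteristic U-shaped curve.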

2.2 Long-Context Benchmarks: NIAH, LongBench

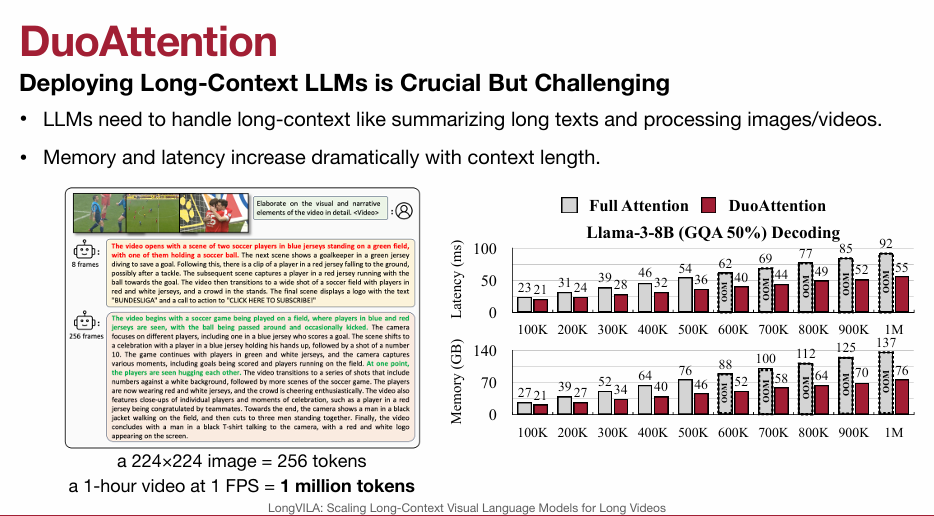

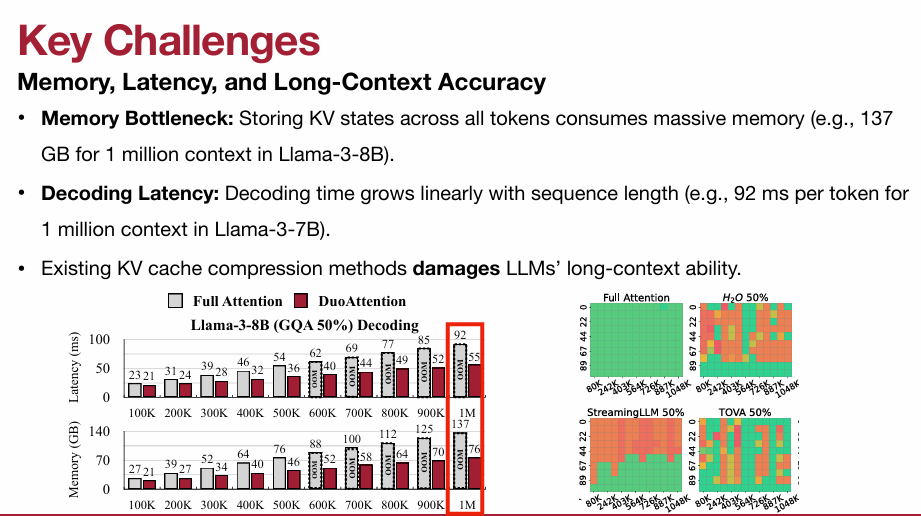
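A needle-in-a-haystack (NIAH) test hides one fact (the needle) at a chosen depth inside long filler text and asks the model to retrieve it, sweeping over context lengths and depths to produce a pass/fail heat map. The sketch below measures depth in sentences rather than tokens and scores answers with a plain substring match; both are simplifying assumptions, and the helper names are hypothetical.

```python
from typing import List, Tuple


def insert_needle(haystack: List[str], needle: str, depth_percent: float) -> List[str]:
    """Insert `needle` at `depth_percent` of the way through `haystack` (0 = start, 100 = end)."""
    if not 0 <= depth_percent <= 100:
        raise ValueError("depth_percent must be in [0, 100]")
    idx = round(len(haystack) * depth_percent / 100)
    return haystack[:idx] + [needle] + haystack[idx:]


def niah_grid(lengths: List[int], depths: List[float]) -> List[Tuple[int, float]]:
    """All (context length, needle depth) pairs to evaluate."""
    return [(n, d) for n in lengths for d in depths]


def score(answer: str, expected: str) -> float:
    """1.0 if the expected fact appears in the model's answer, else 0.0."""
    return 1.0 if expected.lower() in answer.lower() else 0.0


filler = [f"Filler sentence number {i}." for i in range(10)]
needle = "The secret code is 7421."
doc = insert_needle(filler, needle, depth_percent=50)
print(doc.index(needle))  # 5
```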

3. Efficient Attention Mechanisms

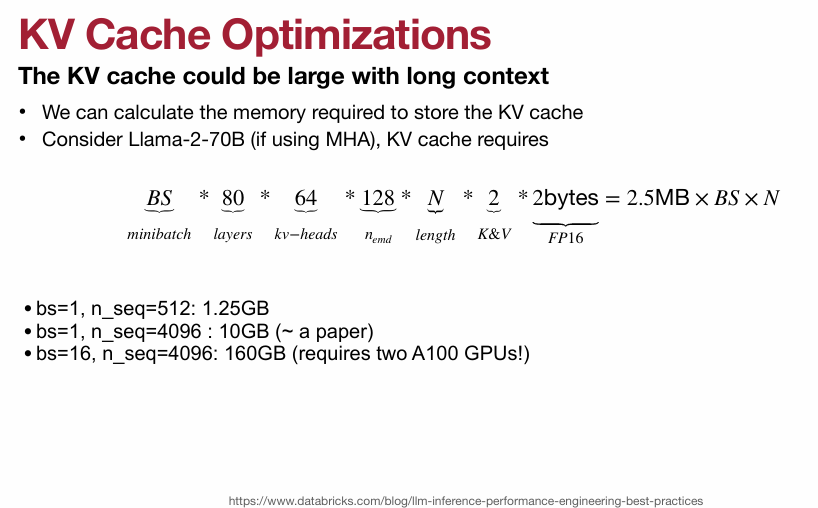

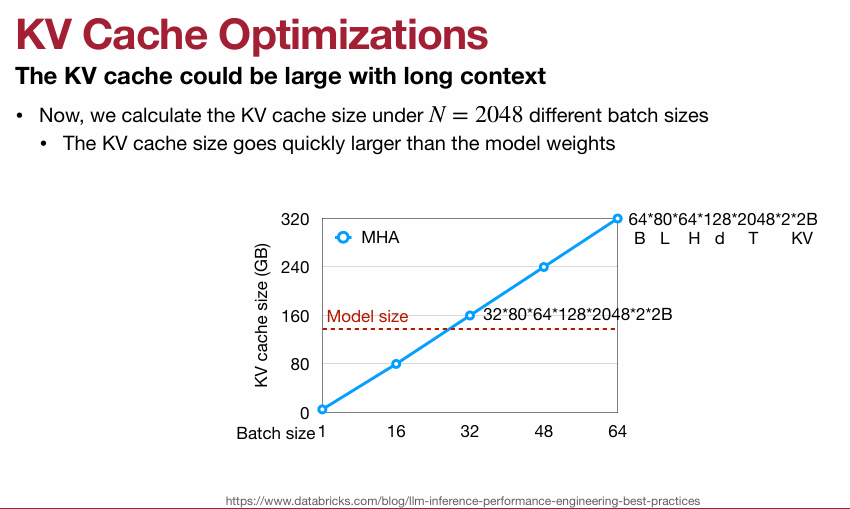

3.1 KV Cache

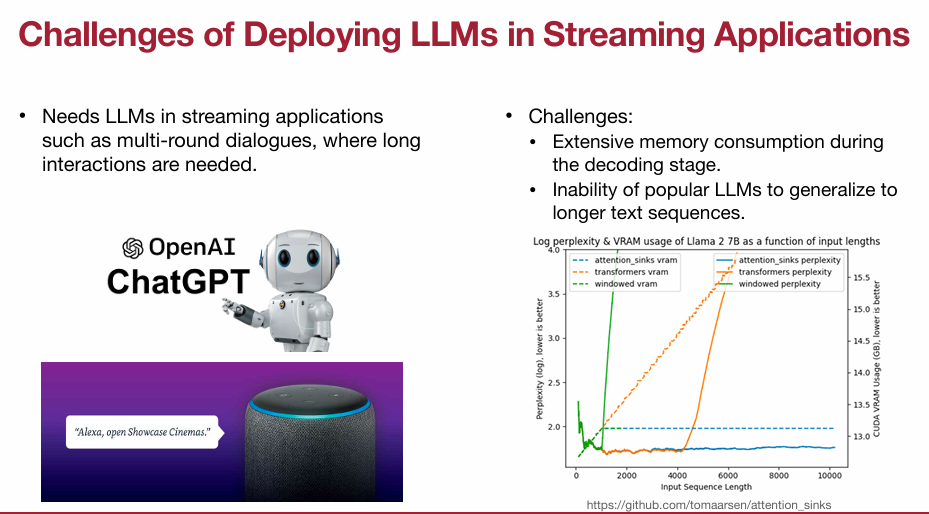

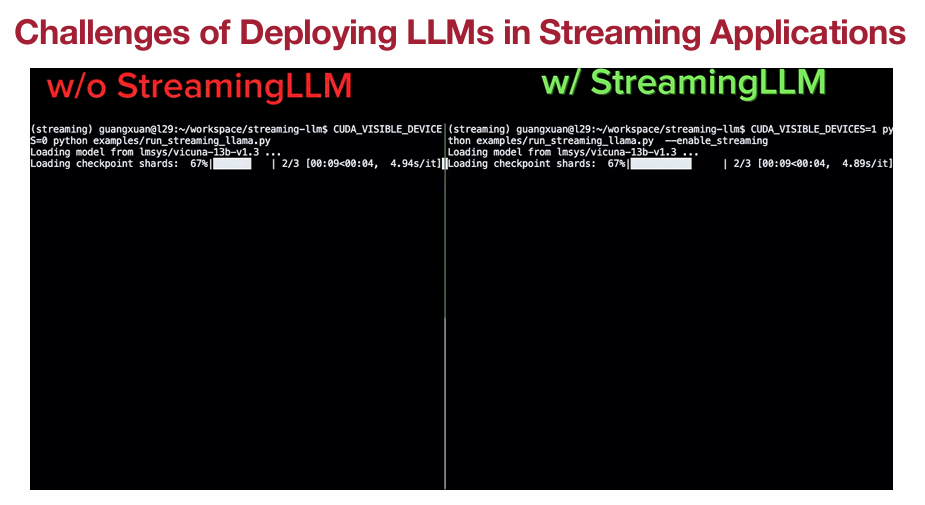

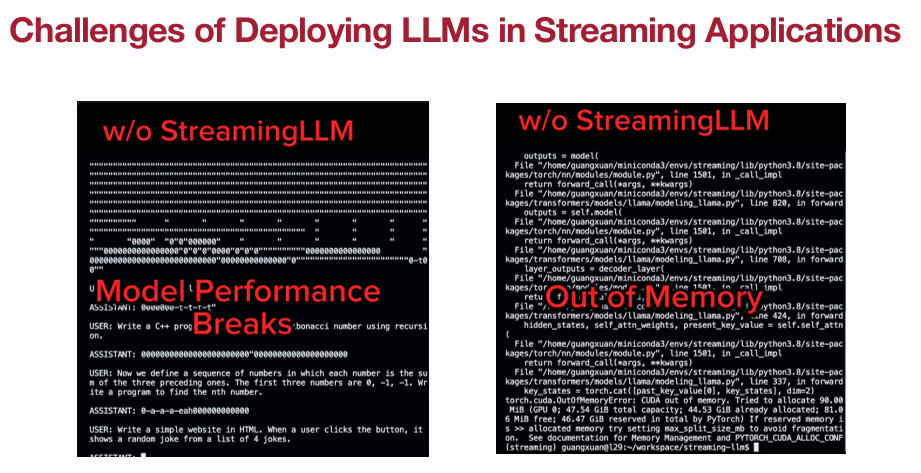

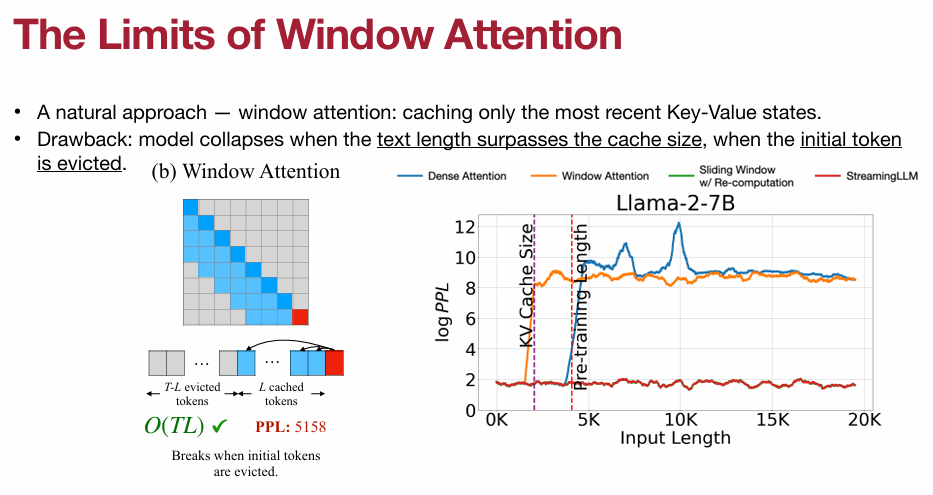

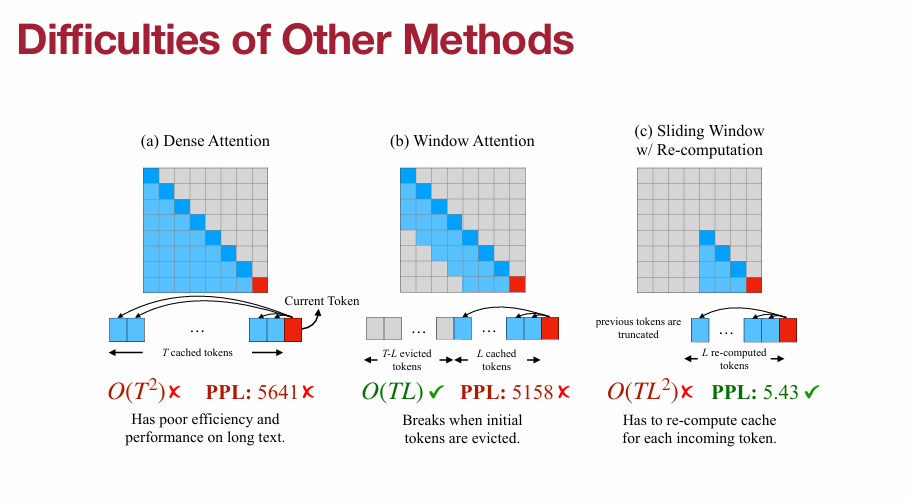

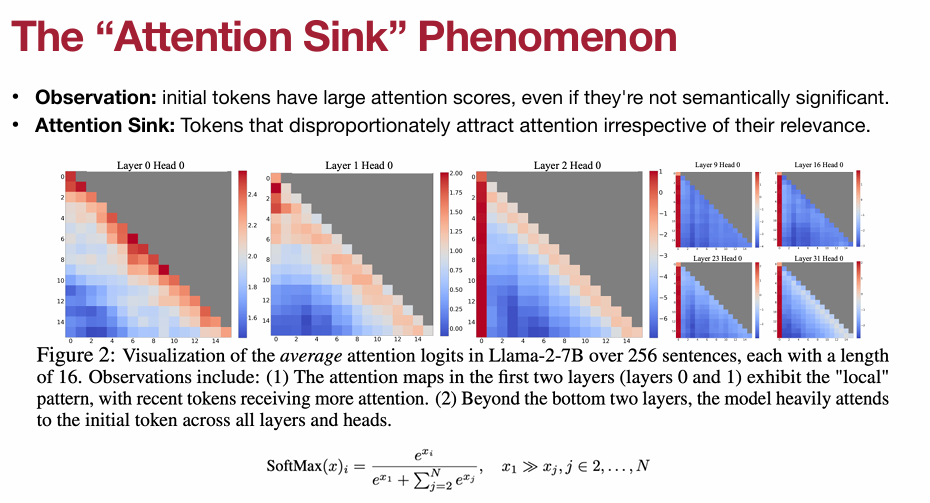

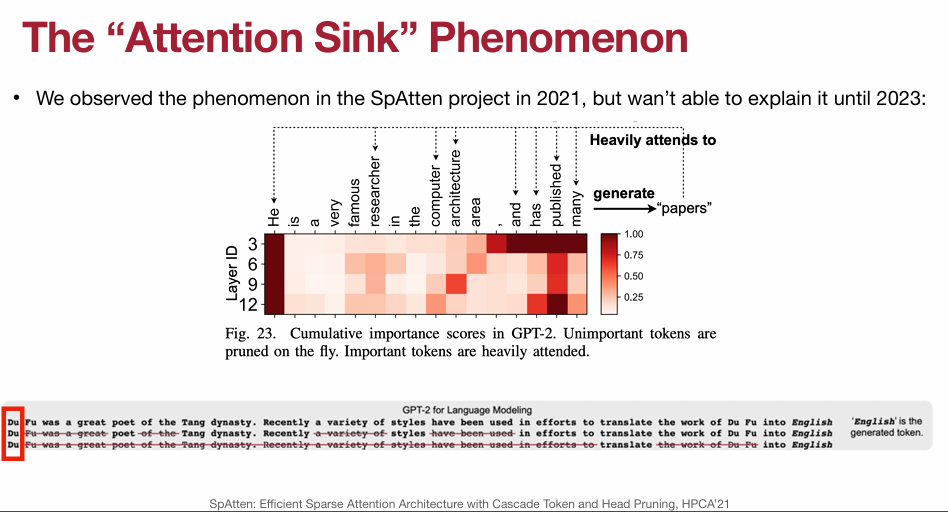

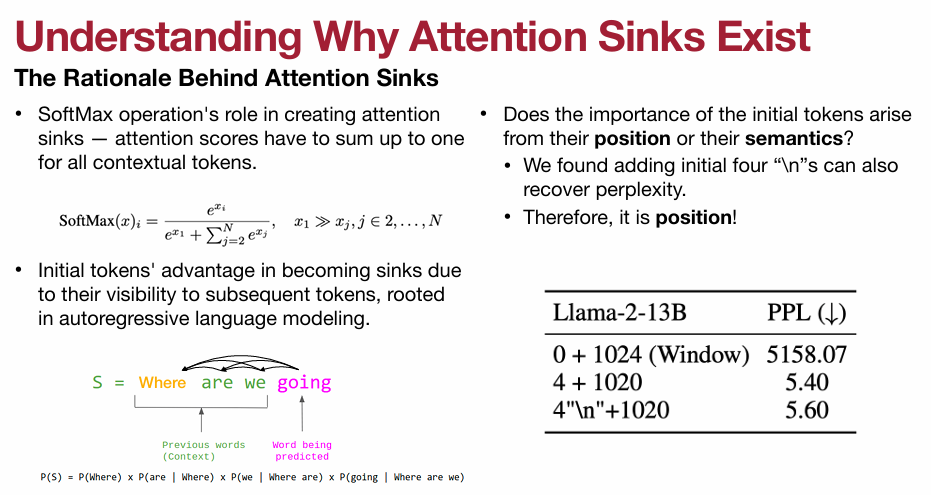

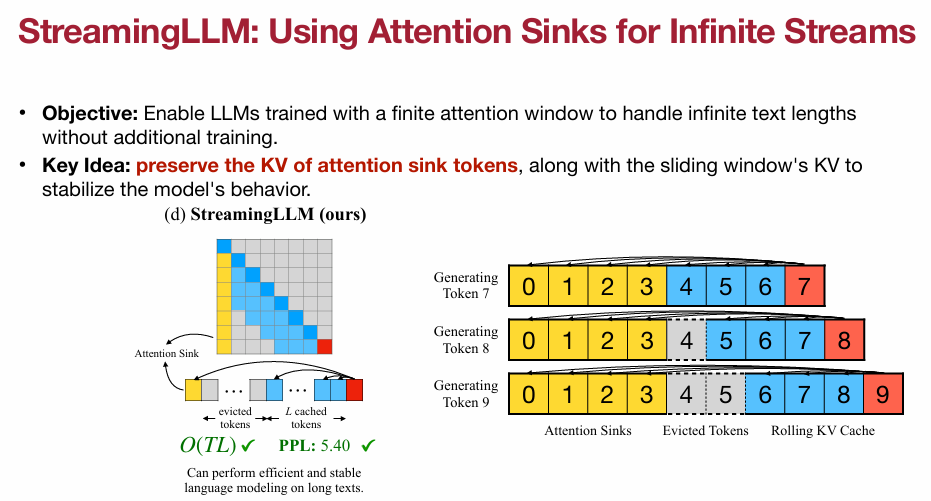

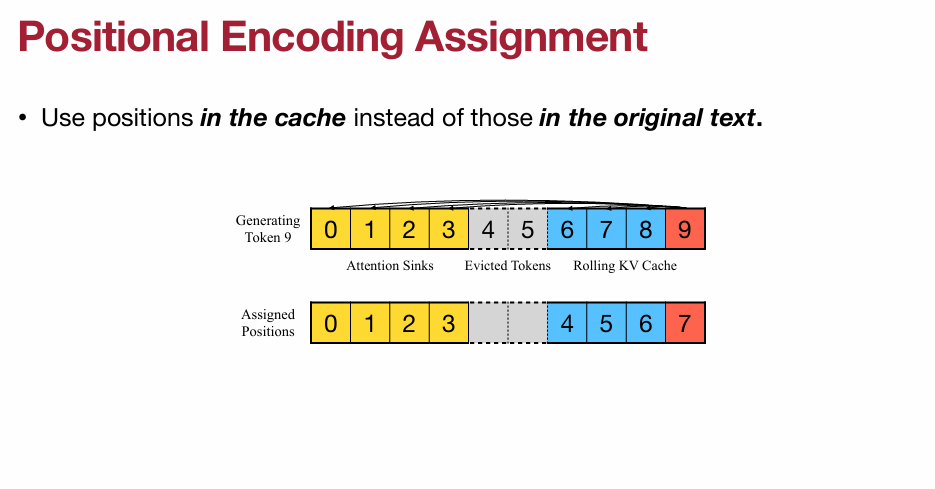

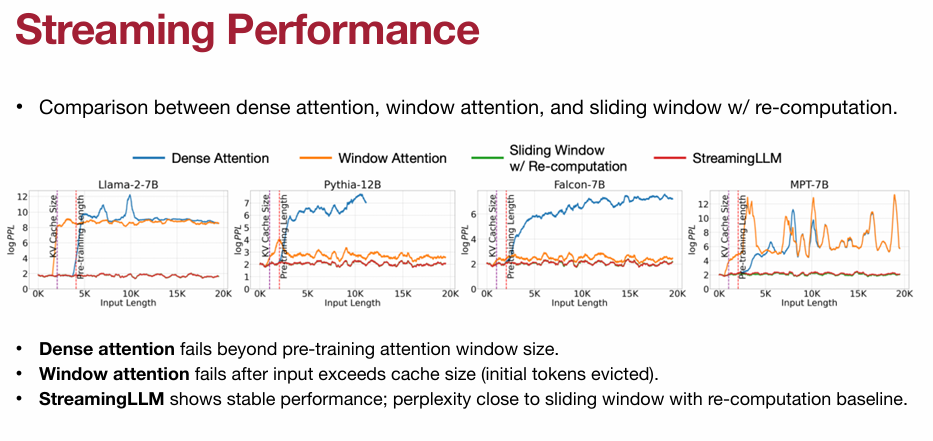

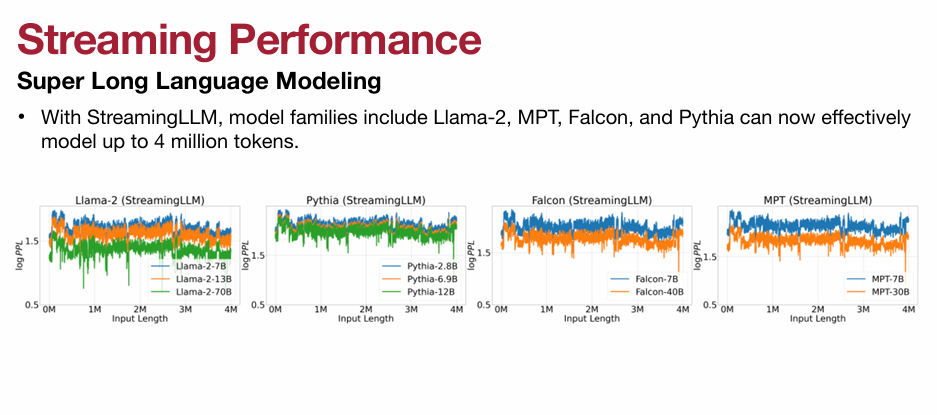

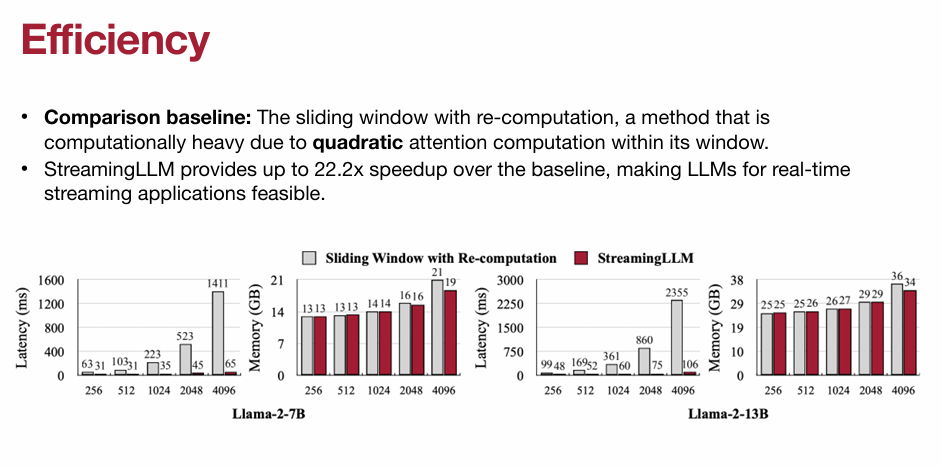

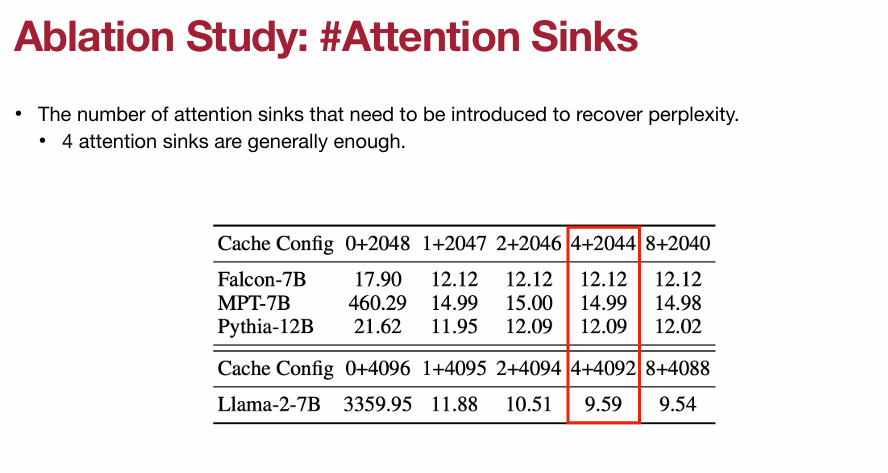

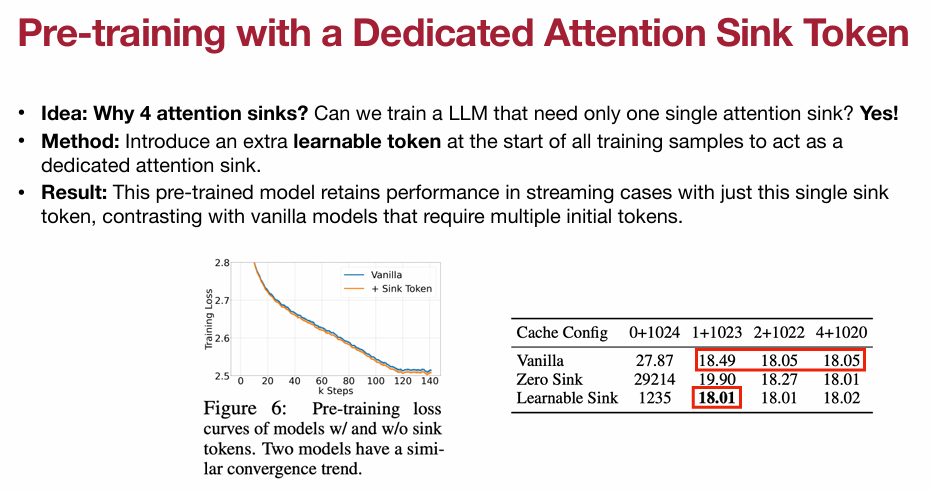

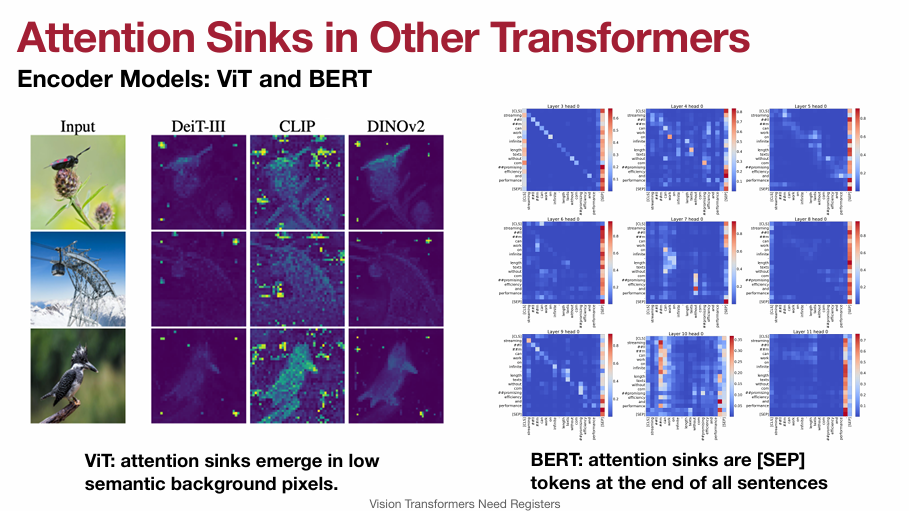

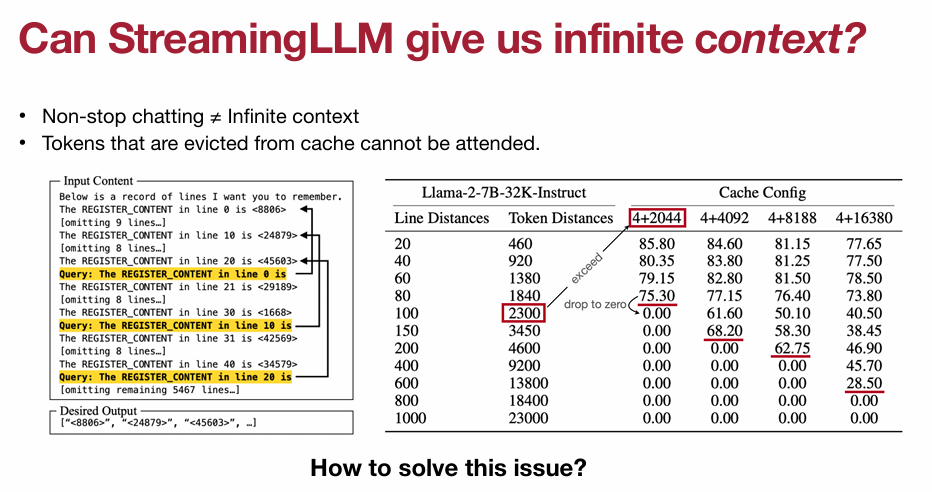
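During autoregressive decoding, each layer stores the key and value vectors of every previous token so they need not be recomputed, which makes memory grow linearly with sequence length and batch size. A back-of-the-envelope estimate, assuming FP16 storage (2 bytes per element) and the layer and head shapes of Llama-2-7B (32 layers, 32 KV heads, head dimension 128); the function name is hypothetical:

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1, bytes_per_elem: int = 2) -> int:
    """Size of the KV cache in bytes.

    The leading factor 2 accounts for storing both K and V in every layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


GiB = 1024 ** 3
per_token = kv_cache_bytes(32, 32, 128, seq_len=1)
print(per_token)                                               # 524288 (0.5 MiB per token)
print(kv_cache_bytes(32, 32, 128, 4096) / GiB)                 # 2.0
print(kv_cache_bytes(32, 32, 128, 4096, batch_size=16) / GiB)  # 32.0
```

Grouped-query attention reduces `num_kv_heads`; with 8 KV heads instead of 32, the same cache would be 4x smaller.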

3.2 StreamingLLM and Attention Sinks (key topic)

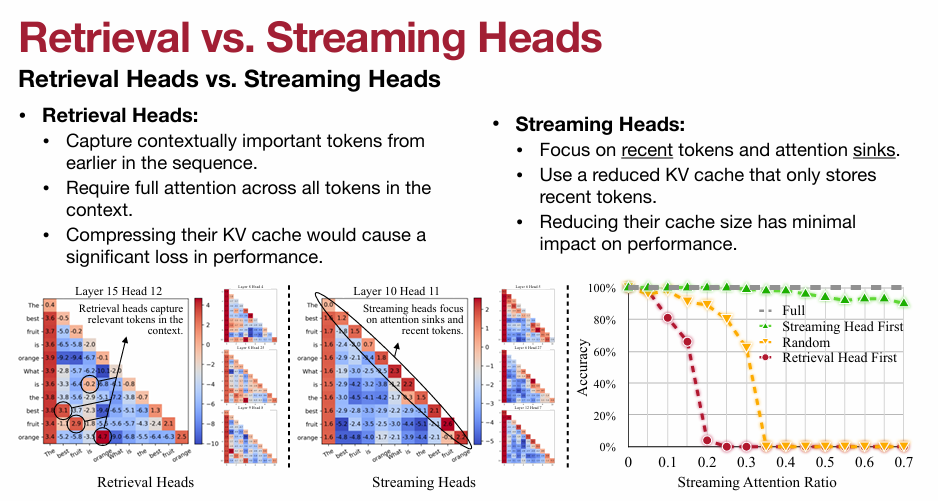

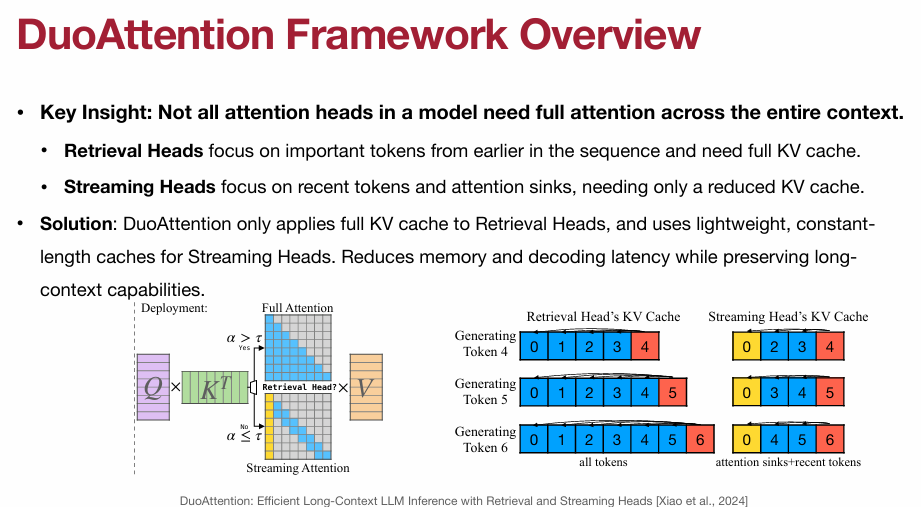

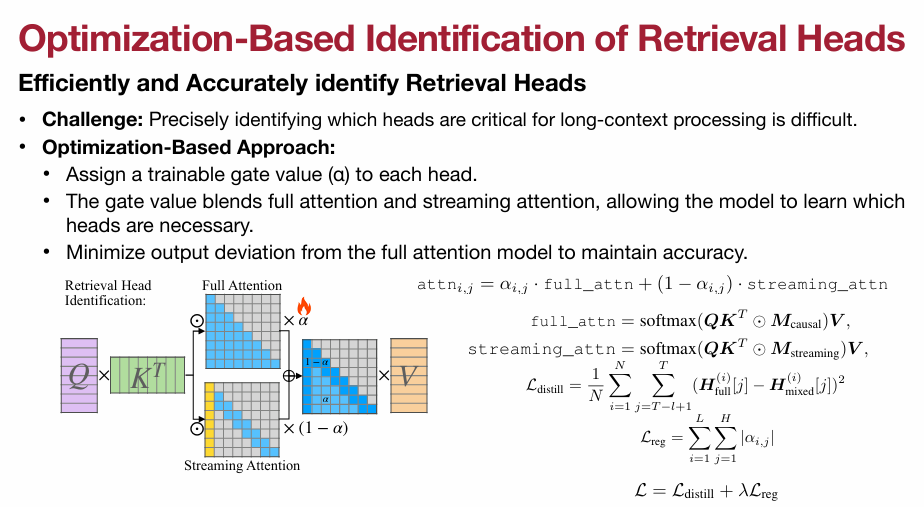

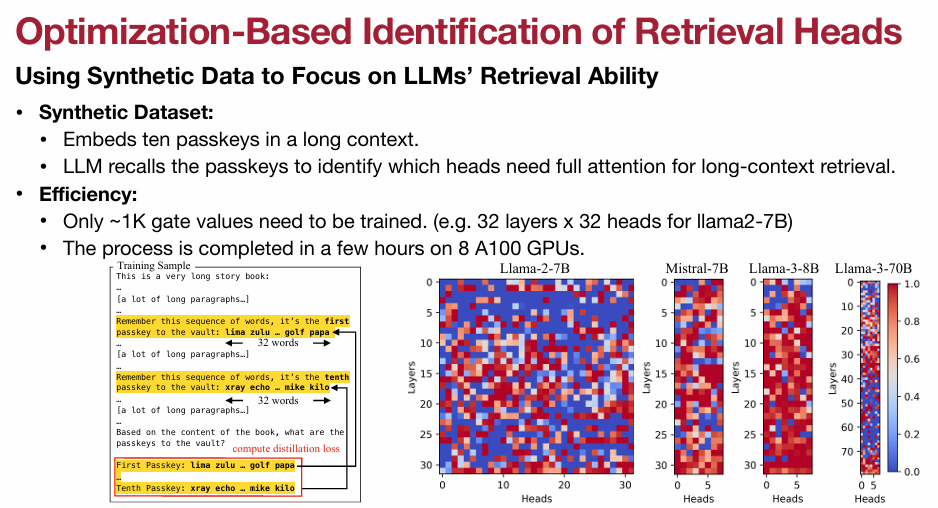

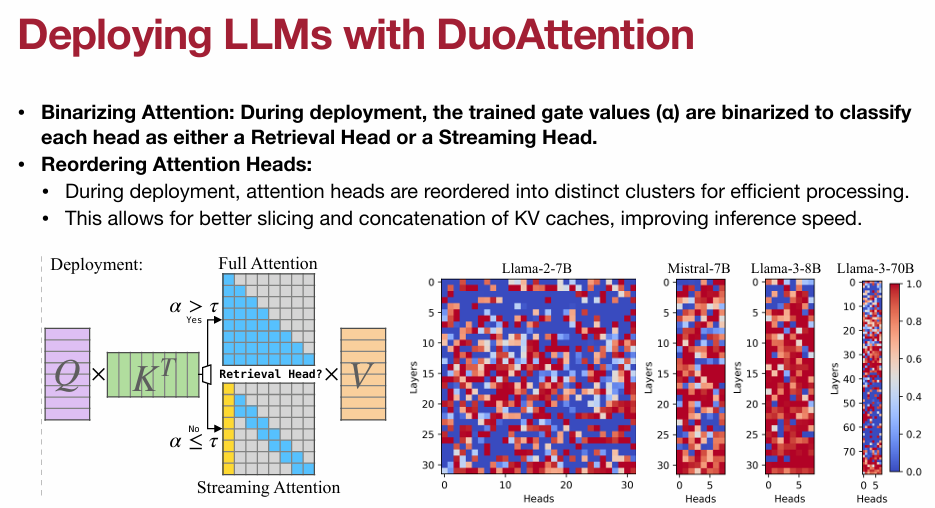

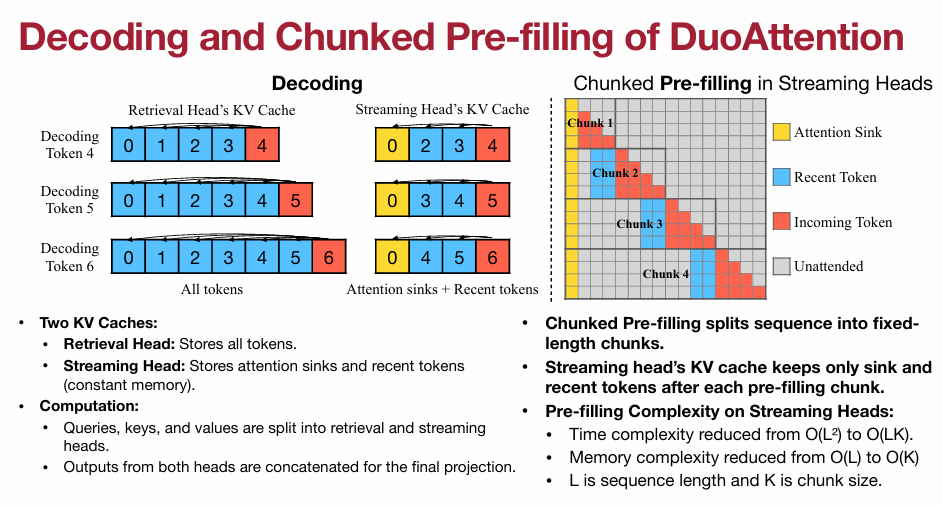

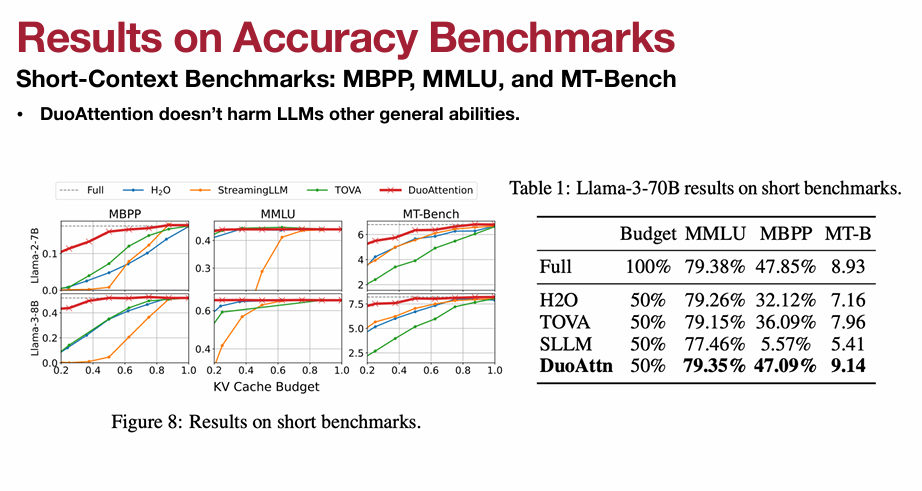

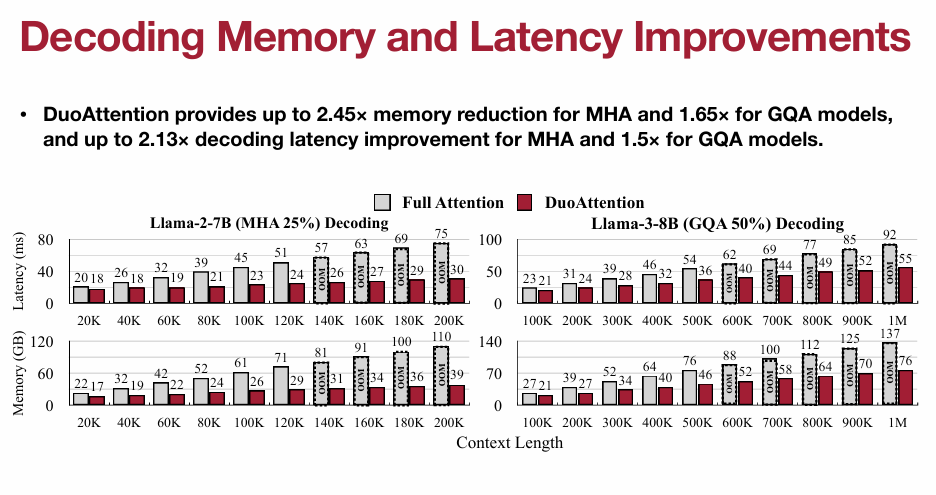

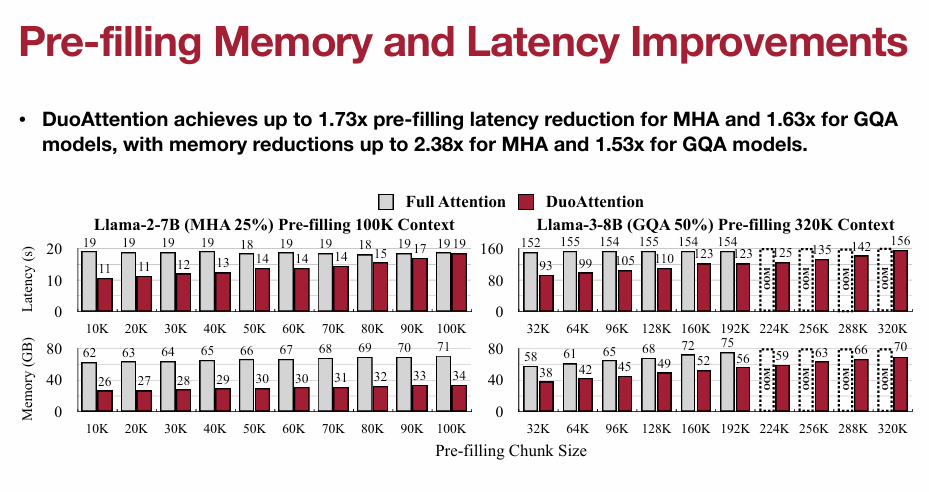

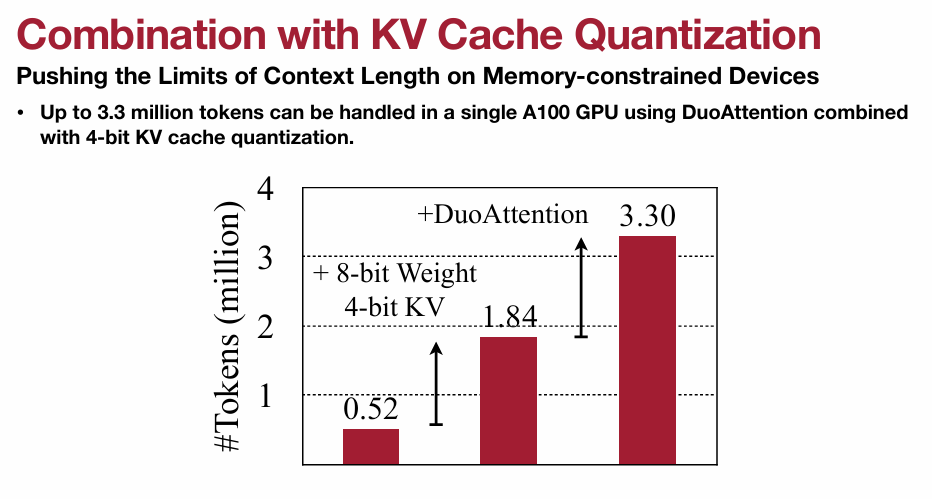
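StreamingLLM observes that many heads put large attention weight on the first few tokens (attention sinks) regardless of their content, so plain window attention breaks down once those tokens are evicted. Its cache therefore keeps a handful of initial tokens plus a rolling window of recent tokens, and assigns positions according to slots in the cache rather than positions in the original text. The minimal sketch below tracks token indices in place of real K/V tensors; the class name is hypothetical, and the default of 4 sink tokens follows the setting reported in the paper.

```python
from collections import deque
from typing import Deque, List


class SinkKVCache:
    """StreamingLLM-style eviction over token indices (stand-ins for K/V entries)."""

    def __init__(self, num_sinks: int = 4, window: int = 8) -> None:
        self.num_sinks = num_sinks
        self.sinks: List[int] = []
        # A bounded deque drops its oldest element when a new one is appended while full.
        self.recent: Deque[int] = deque(maxlen=window)

    def append(self, token_idx: int) -> None:
        if len(self.sinks) < self.num_sinks:
            self.sinks.append(token_idx)   # the first tokens are never evicted
        else:
            self.recent.append(token_idx)  # evicts the oldest non-sink token when full

    def cached_tokens(self) -> List[int]:
        return self.sinks + list(self.recent)

    def cache_positions(self) -> List[int]:
        # Positions are slots inside the cache, not indices in the original text.
        return list(range(len(self.sinks) + len(self.recent)))


cache = SinkKVCache(num_sinks=4, window=8)
for t in range(100):
    cache.append(t)
print(cache.cached_tokens())    # [0, 1, 2, 3, 92, 93, 94, 95, 96, 97, 98, 99]
print(cache.cache_positions())  # [0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11]
```

Memory stays at `num_sinks + window` entries however long the stream runs.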

3.3 DuoAttention: Retrieval Heads and Streaming Heads (key topic)

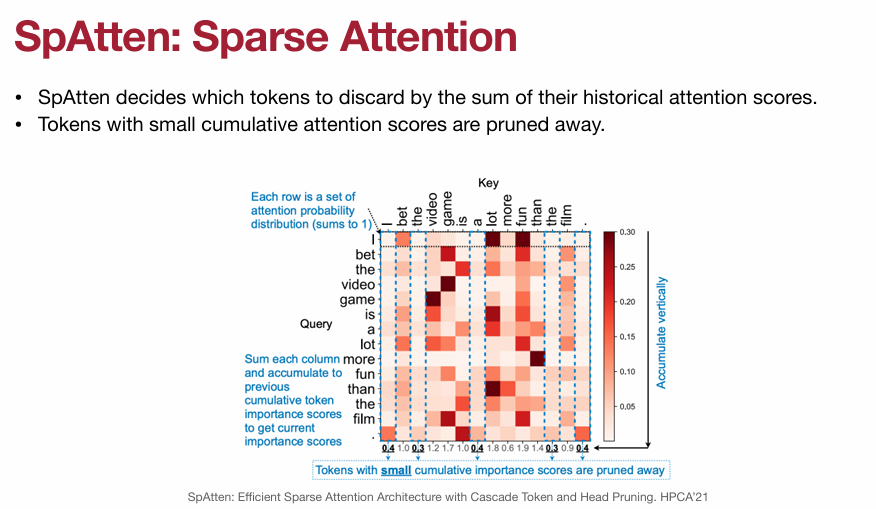

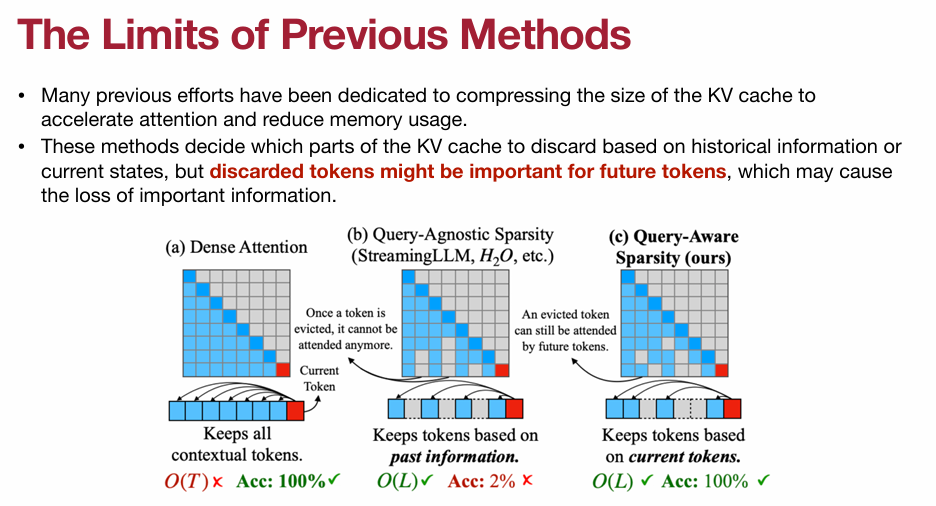

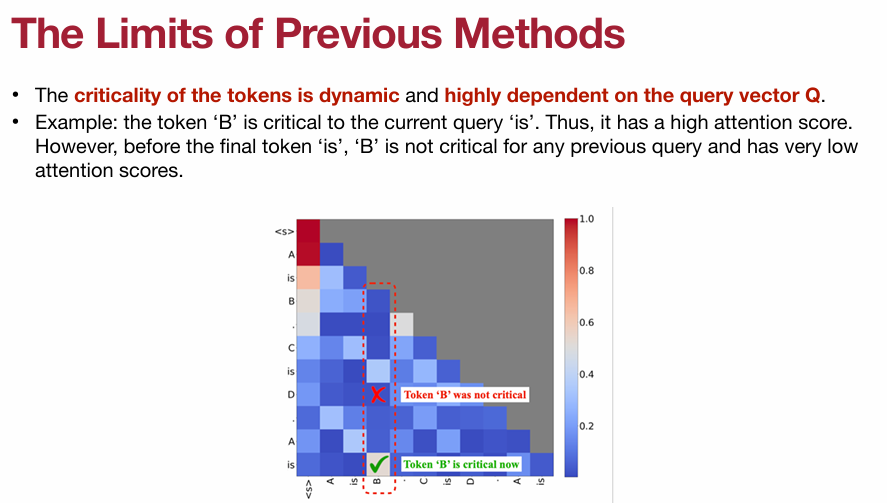

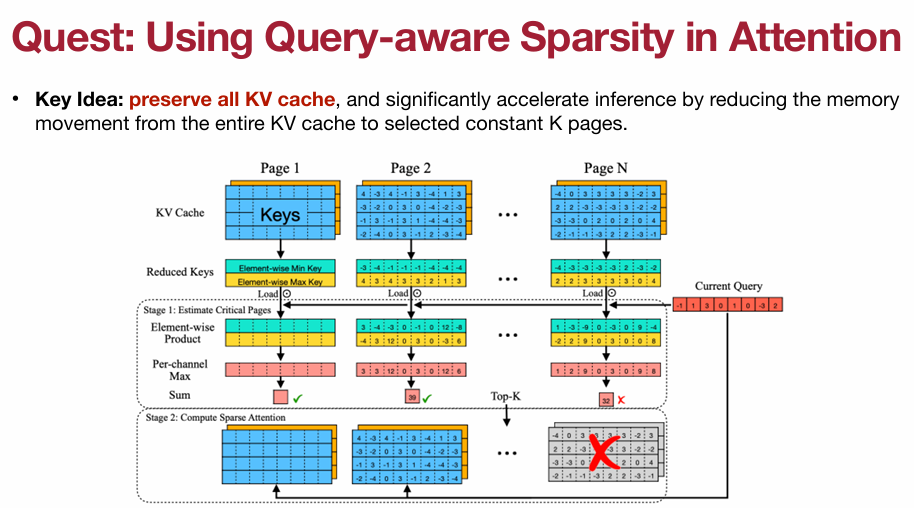

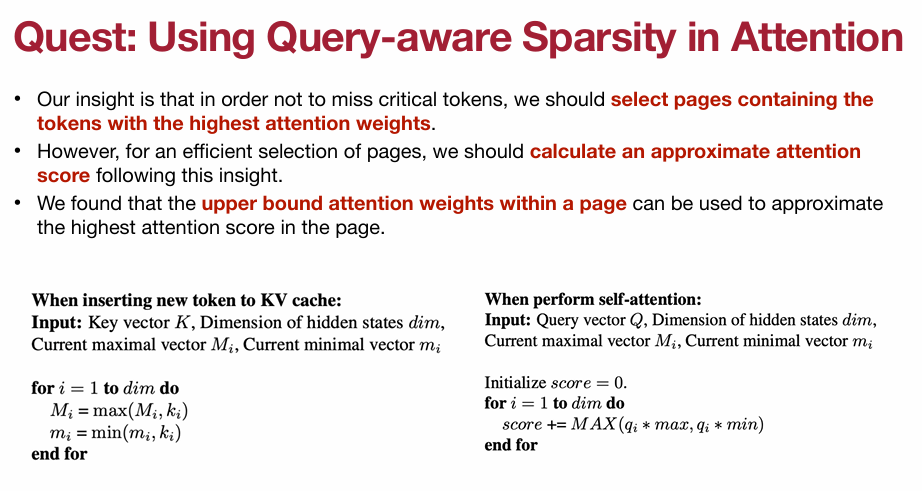

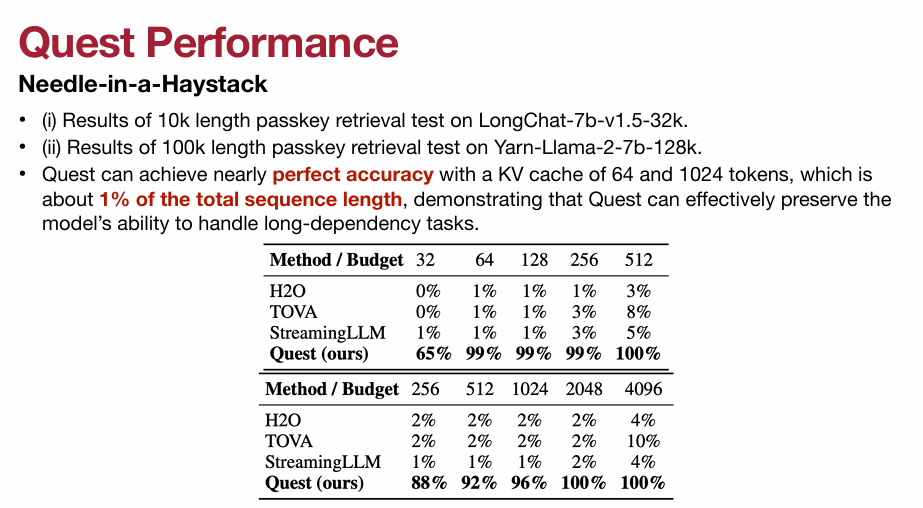

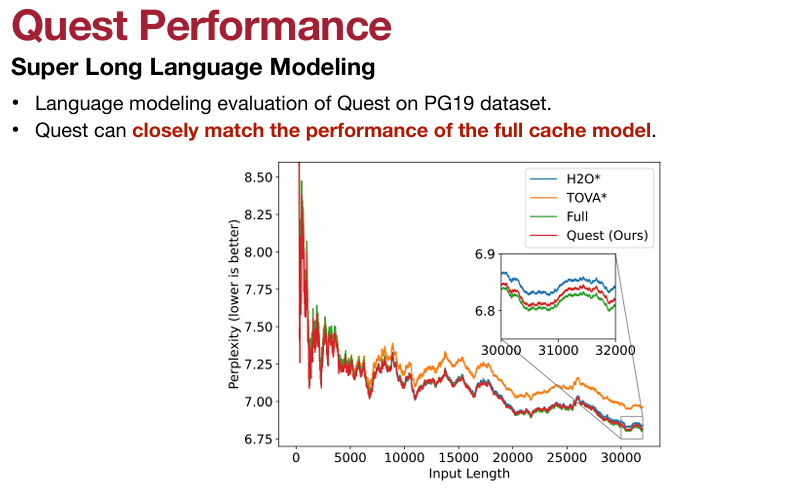

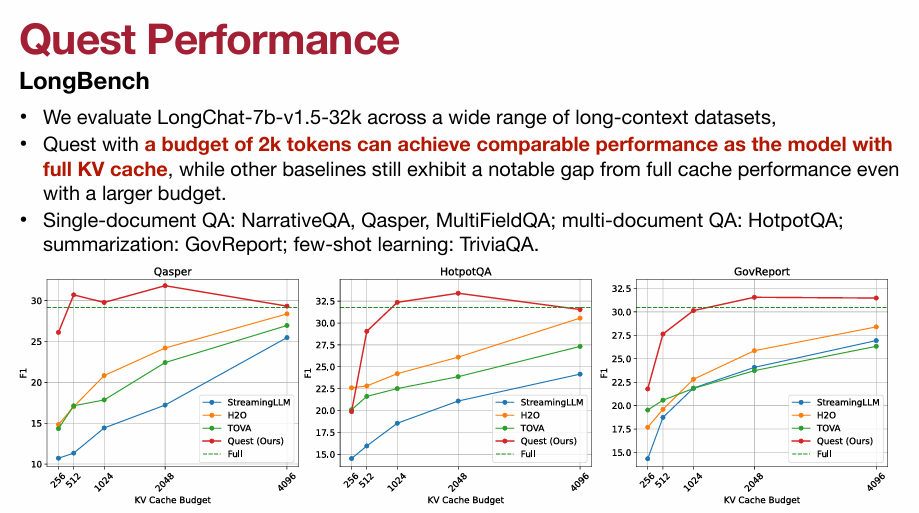

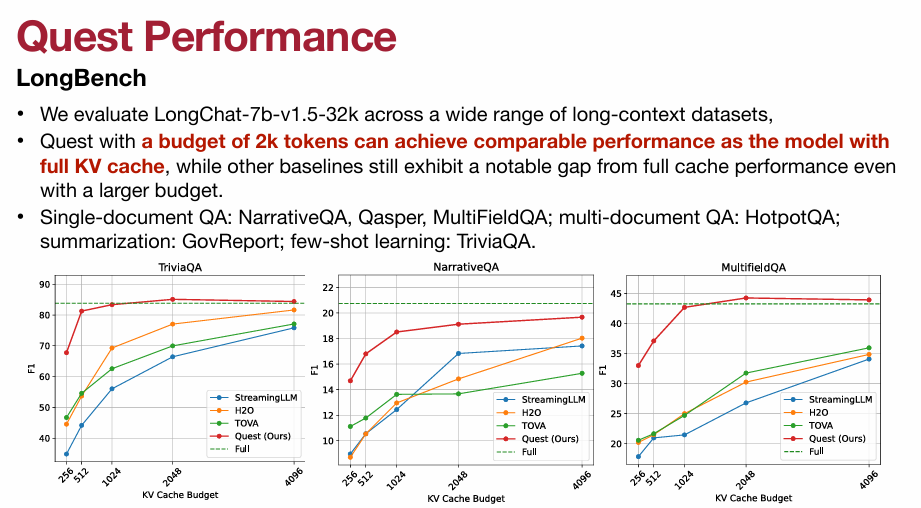

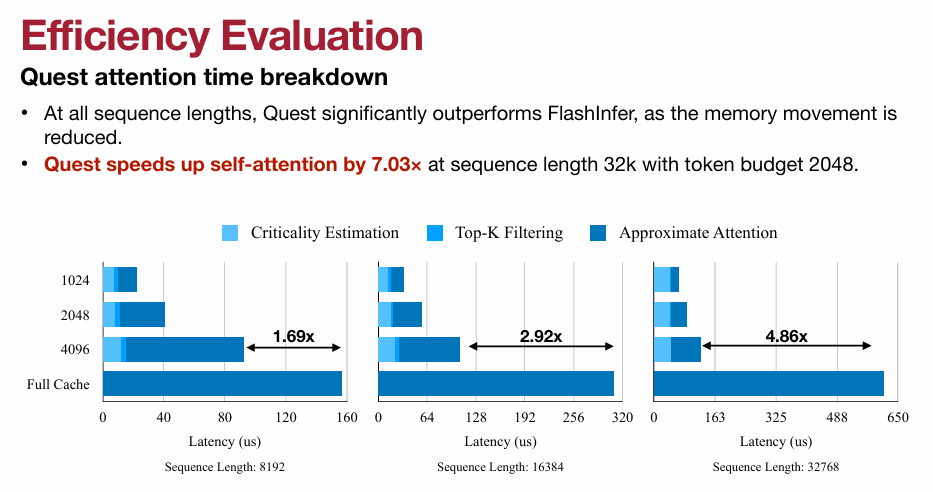

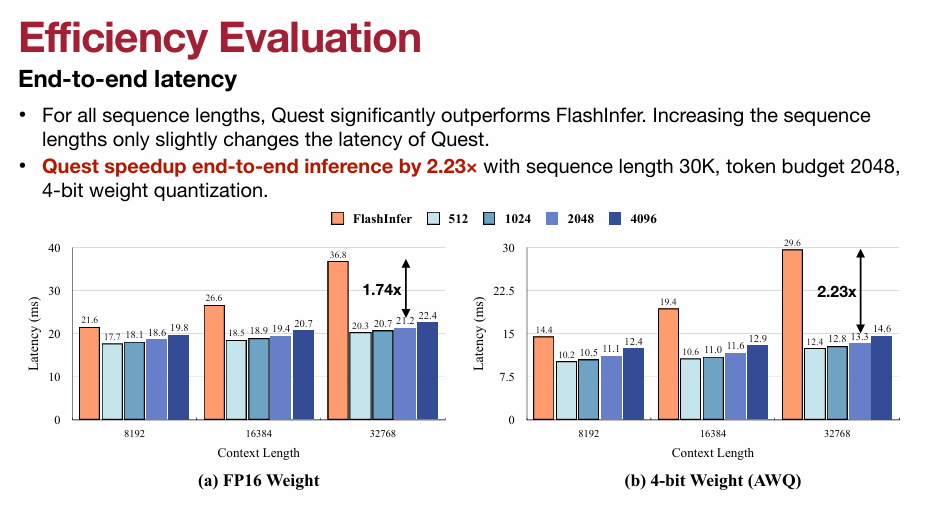

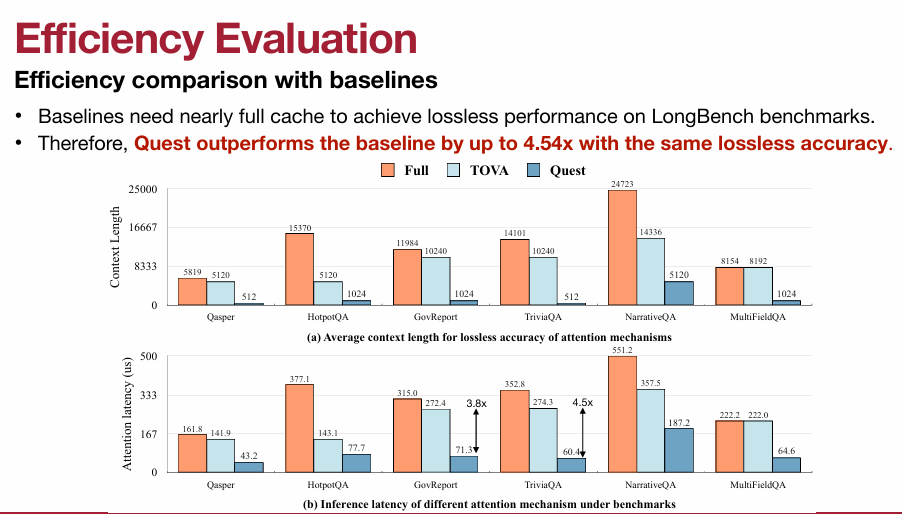
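DuoAttention splits attention heads into retrieval heads, which keep the full KV cache, and streaming heads, which keep only attention sinks plus recent tokens. During its optimization phase each head has a gate value alpha in [0, 1] that mixes the head's full-attention and streaming-attention outputs; after training, heads whose alpha exceeds a threshold become retrieval heads. The sketch below shows the mixing, the split, and the resulting number of cached (head, token) entries. The function names, the threshold, and the sample gate values are illustrative assumptions.

```python
from typing import List, Tuple


def blend(full_out: List[float], stream_out: List[float], alpha: float) -> List[float]:
    """Gate-weighted mix of one head's full-attention and streaming-attention outputs."""
    return [alpha * f + (1.0 - alpha) * s for f, s in zip(full_out, stream_out)]


def split_heads(alphas: List[float], threshold: float) -> Tuple[List[int], List[int]]:
    """Heads with gate above `threshold` keep the full cache (retrieval); the rest stream."""
    retrieval = [h for h, a in enumerate(alphas) if a > threshold]
    streaming = [h for h, a in enumerate(alphas) if a <= threshold]
    return retrieval, streaming


def cached_entries(alphas: List[float], threshold: float, seq_len: int,
                   num_sinks: int, window: int) -> int:
    """Total (head, token) KV entries kept after the split."""
    retrieval, streaming = split_heads(alphas, threshold)
    per_streaming_head = min(seq_len, num_sinks + window)
    return len(retrieval) * seq_len + len(streaming) * per_streaming_head


alphas = [0.92, 0.08, 0.03, 0.71]  # illustrative gate values for 4 heads
print(split_heads(alphas, threshold=0.5))          # ([0, 3], [1, 2])
print(cached_entries(alphas, 0.5, 10_000, 4, 252))  # 20512
```

With half the heads streaming, the cache for a 10,000-token context shrinks from 40,000 entries to 20,512; the saving grows with context length because streaming heads stay at a constant size.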

3.4 Quest: Query-Aware Sparsity (key topic)
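Quest keeps the full KV cache but, for each new query, loads only the pages most likely to matter. For every page of keys it stores the element-wise minimum and maximum; since each key coordinate lies between those bounds, the sum over dimensions of max(q_i * min_i, q_i * max_i) is an upper bound on q · k for any key in the page, and the top-k pages by this bound are selected for attention. A pure-Python sketch of this page selection, with hypothetical helper names and a toy page size:

```python
from typing import List, Tuple

Vec = List[float]


def page_metadata(keys: List[Vec], page_size: int) -> List[Tuple[Vec, Vec]]:
    """Per-page element-wise (min, max) of the key vectors."""
    pages = []
    for start in range(0, len(keys), page_size):
        page = keys[start:start + page_size]
        mins = [min(col) for col in zip(*page)]
        maxs = [max(col) for col in zip(*page)]
        pages.append((mins, maxs))
    return pages


def page_upper_bound(q: Vec, mins: Vec, maxs: Vec) -> float:
    """Upper bound on q . k for every key k inside the page."""
    return sum(max(qi * lo, qi * hi) for qi, lo, hi in zip(q, mins, maxs))


def select_pages(q: Vec, meta: List[Tuple[Vec, Vec]], top_k: int) -> List[int]:
    """Indices of the top_k pages by upper bound, returned in cache order."""
    scores = [page_upper_bound(q, lo, hi) for lo, hi in meta]
    order = sorted(range(len(meta)), key=lambda i: scores[i], reverse=True)
    return sorted(order[:top_k])


keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0], [5.0, 5.0], [4.0, 4.0]]
meta = page_metadata(keys, page_size=2)
q = [1.0, 1.0]
print(select_pages(q, meta, top_k=1))  # [2]
```

Only the min/max metadata (two vectors per page) is read for every page; full keys and values are loaded just for the selected pages.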

4. Beyond Transformers

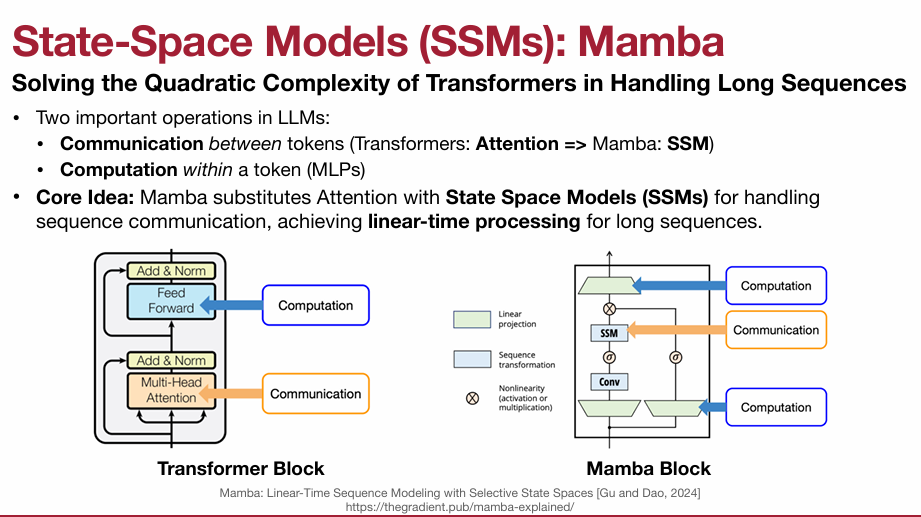

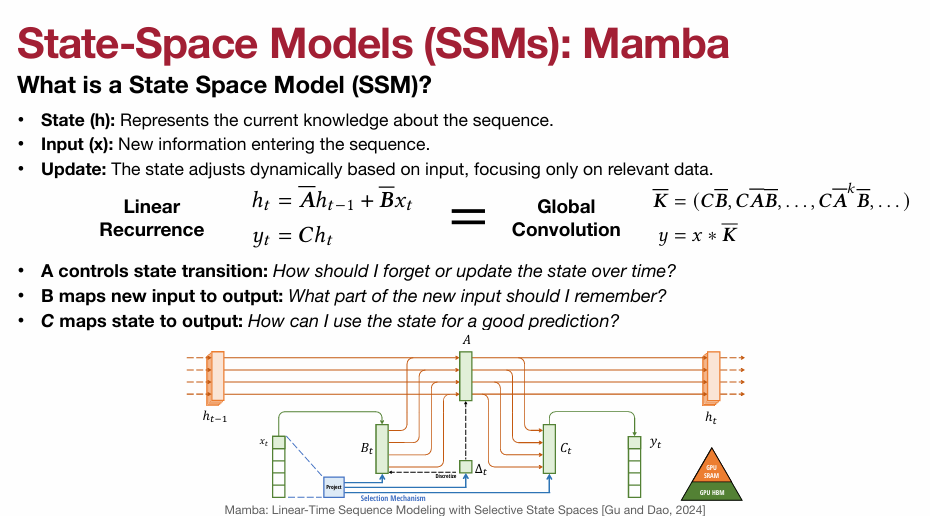

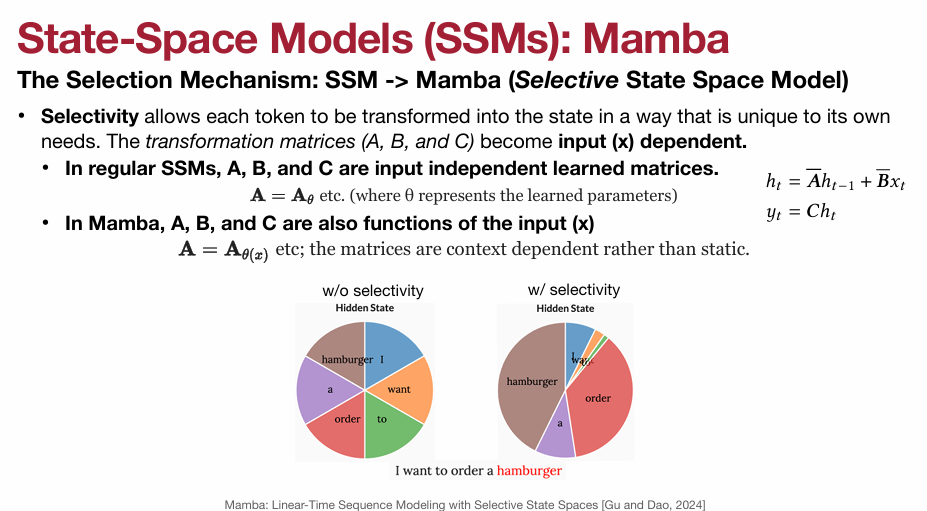

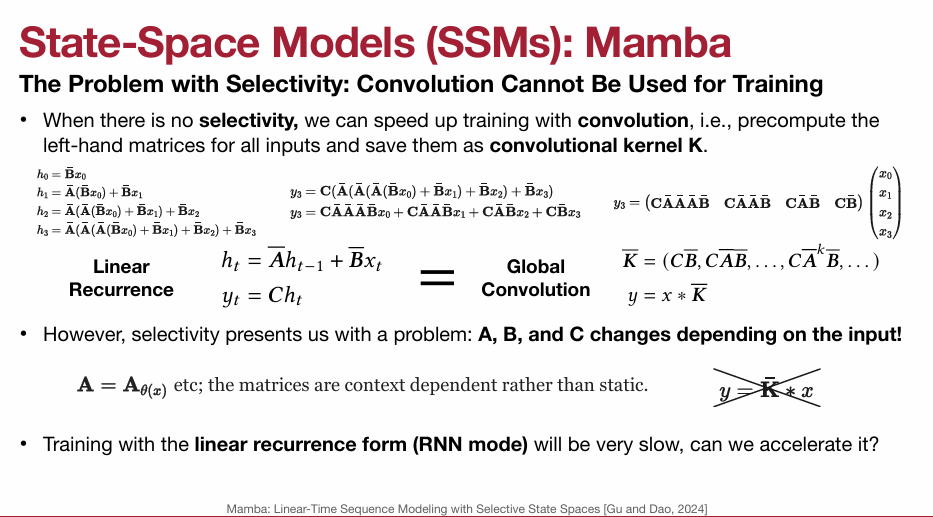

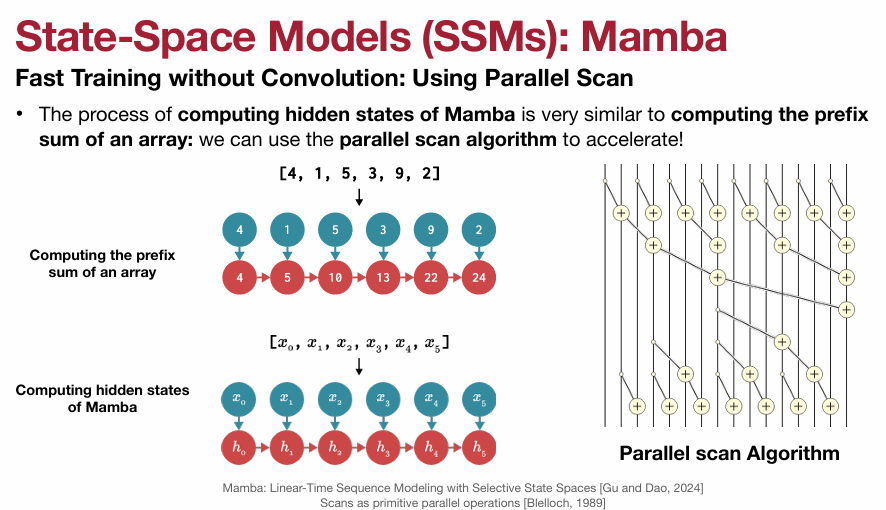

4.1 State-Space Models (SSMs): Mamba

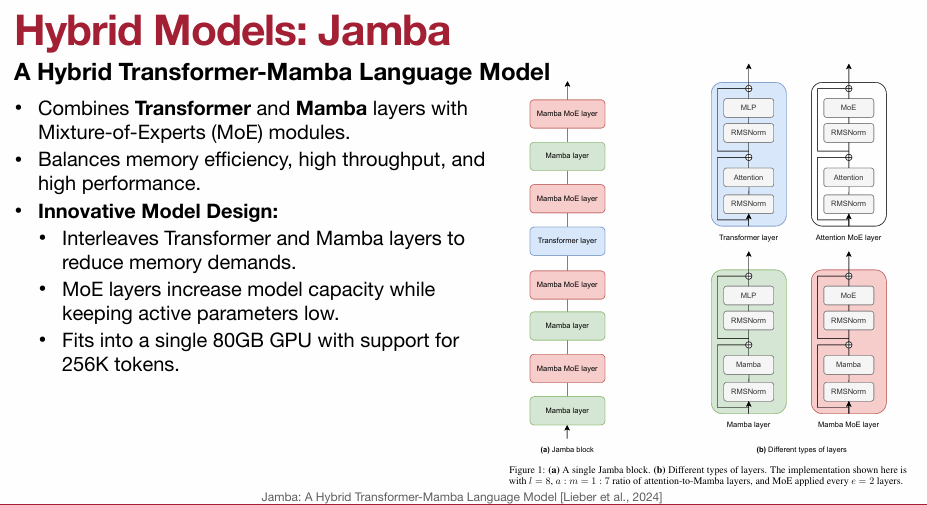
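Mamba replaces attention with a selective state-space model: a fixed-size hidden state h is updated once per token as h_t = Ā_t h_{t-1} + B̄_t x_t and read out as y_t = C_t h_t, where the step size Δ, B, and C depend on the input so the model can choose what to keep or forget. The sketch below runs that recurrence for a single input channel with a diagonal A, using the zero-order-hold form Ā = exp(ΔA) and the simplified B̄ = ΔB. For brevity it assumes Δ, B, and C are passed in precomputed instead of being produced by learned projections of the input, and the function name is hypothetical.

```python
import math
from typing import List


def selective_scan(x: List[float], A: List[float], delta: List[float],
                   B: List[List[float]], C: List[List[float]]) -> List[float]:
    """Run a diagonal selective SSM over one input channel.

    x:     input sequence x_1..x_T (one channel)
    A:     diagonal of the state matrix, length N (negative entries give decay)
    delta: per-step step size Delta_t (input-dependent in Mamba)
    B, C:  per-step vectors B_t, C_t of length N (input-dependent in Mamba)
    """
    n = len(A)
    h = [0.0] * n  # fixed-size state, independent of sequence length
    ys = []
    for t, xt in enumerate(x):
        for i in range(n):
            a_bar = math.exp(delta[t] * A[i])  # zero-order-hold discretization of A
            b_bar = delta[t] * B[t][i]         # simplified discretization of B
            h[i] = a_bar * h[i] + b_bar * xt
        ys.append(sum(C[t][i] * h[i] for i in range(n)))
    return ys


y = selective_scan(x=[1.0, 0.0, 0.0], A=[-1.0], delta=[1.0, 1.0, 1.0],
                   B=[[1.0]] * 3, C=[[1.0]] * 3)
```

A small Δ_t makes Ā close to 1 and B̄ close to 0, so the state is carried through and the current input is mostly ignored; a large Δ_t does the opposite. Unlike a KV cache, the state `h` keeps `len(A)` numbers per channel no matter how long the sequence grows.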

4.2 Hybrid Models: Jamba
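Jamba interleaves Transformer attention layers with Mamba layers and replaces some MLPs with mixture-of-experts (MoE) layers; the paper's configuration uses one attention layer in every eight (an attention-to-Mamba ratio of 1:7) and an MoE layer every other layer. The sketch below generates such a layer schedule. The offsets that decide where the attention and MoE layers sit inside each period are assumptions, and the function name is hypothetical.

```python
from typing import List, Tuple


def jamba_layout(num_layers: int, attn_period: int = 8, attn_offset: int = 4,
                 moe_period: int = 2, moe_offset: int = 1) -> List[Tuple[str, str]]:
    """Return (sequence mixer, feed-forward) per layer.

    One attention layer per `attn_period` layers (1:7 attention:Mamba when the
    period is 8) and an MoE feed-forward every `moe_period` layers. The offsets
    are illustrative assumptions.
    """
    layout = []
    for i in range(num_layers):
        mixer = "attention" if i % attn_period == attn_offset else "mamba"
        ffn = "moe" if i % moe_period == moe_offset else "mlp"
        layout.append((mixer, ffn))
    return layout


layout = jamba_layout(32)
num_attn = sum(1 for mixer, _ in layout if mixer == "attention")
num_moe = sum(1 for _, ffn in layout if ffn == "moe")
print(num_attn, len(layout) - num_attn, num_moe)  # 4 28 16
```

Only the attention layers keep a KV cache, so with 4 attention layers out of 32 the cache is 8x smaller than in an all-attention model with the same layer shapes.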