C++ AI Multi-Model Chat System (Part 3): Integrating and Implementing Doubao, Kimi, and Qwen

- Preface
- 1. Integrating and Implementing Doubao
  - 1.1 Differences from DeepSeek
  - 1.2 Code Implementation
  - 1.3 Complete Doubao Code
- 2. Integrating and Implementing Kimi
  - 2.1 Differences from DeepSeek
  - 2.2 Code Implementation
  - 2.3 Complete Kimi Code
- 3. Integrating and Implementing Qwen
  - 3.1 Complete Qwen Code
- 4. Looking Back After Integrating Three Models
  - 4.1 Code Reuse
  - 4.2 Architectural Shortcomings

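Preface

In the previous post we integrated DeepSeek and walked the full path from API documentation to working code.

But getting one model running is only the start. The real test of our architecture is this: how much code has to change to plug in a different model?

In this post we integrate three models in one go: ByteDance's Doubao, Moonshot's Kimi, and Alibaba Cloud's Tongyi Qianwen (Qwen). What they share is an OpenAI-style HTTP API; where they differ is in endpoint paths, API key setup, model IDs, and streaming response fields.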

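If our LLMProvider abstraction is sound, integrating a new model takes only three steps:

- Work out the other side's API format
- Write a subclass that inherits from LLMProvider
- Add one registration line in RegisterBuiltinProviders

The upper layers, LLMManager and ChatSDK, should not need any changes.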
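The three steps can be shown in miniature. The block below is a simplified illustration, not the project's actual code: the LLMProvider here is a trimmed-down stand-in with a single-string SendMessage, and ProviderRegistry and EchoProvider are hypothetical names introduced only for this example. It assumes that registration boils down to mapping a model ID to a factory.

```cpp
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical, trimmed-down stand-in for the series' LLMProvider interface.
class LLMProvider {
public:
    virtual ~LLMProvider() = default;
    virtual std::string GetModelId() const = 0;
    virtual std::string SendMessage(const std::string& prompt) = 0;
};

// Step 2: a new model is just another subclass.
class EchoProvider : public LLMProvider {
public:
    std::string GetModelId() const override { return "echo"; }
    std::string SendMessage(const std::string& prompt) override {
        return "echo: " + prompt;
    }
};

// Hypothetical registry; the real LLMManager may be organised differently.
class ProviderRegistry {
public:
    using Factory = std::function<std::unique_ptr<LLMProvider>()>;

    void Register(const std::string& id, Factory factory) {
        m_factories[id] = std::move(factory);
    }

    // Returns nullptr when no provider is registered under this id.
    std::unique_ptr<LLMProvider> Create(const std::string& id) const {
        auto it = m_factories.find(id);
        if (it == m_factories.end()) {
            return nullptr;
        }
        return it->second();
    }

private:
    std::map<std::string, Factory> m_factories;
};

// Step 3: one registration line per model.
inline void RegisterBuiltinProviders(ProviderRegistry& registry) {
    registry.Register("echo", [] { return std::make_unique<EchoProvider>(); });
}
```

With this shape, callers ask the registry for a model by ID and only ever see the LLMProvider interface, which is why the upper layers can stay untouched when a model is added.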

Once all three are in, we will step back and compare them.

1. Integrating and Implementing Doubao

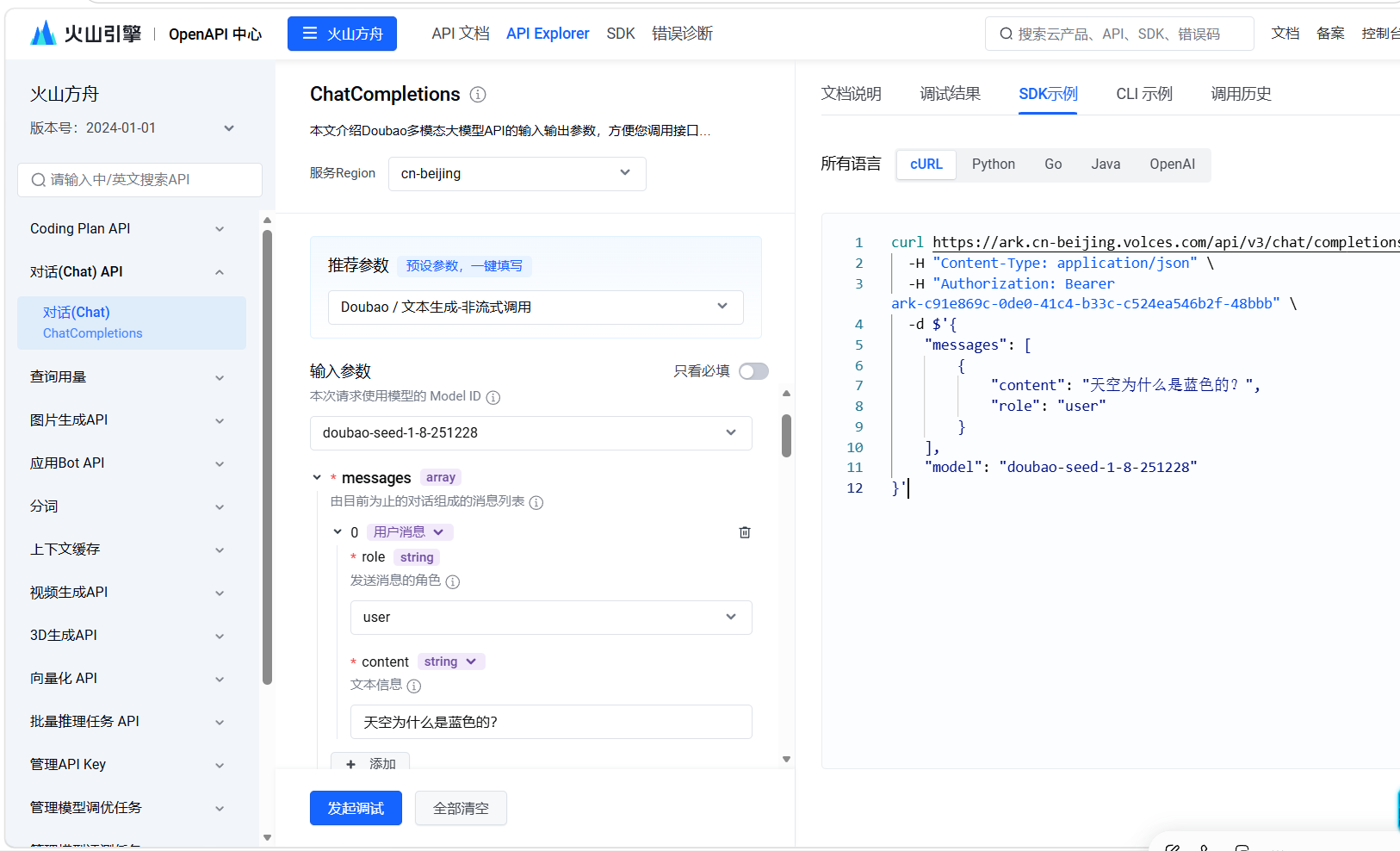

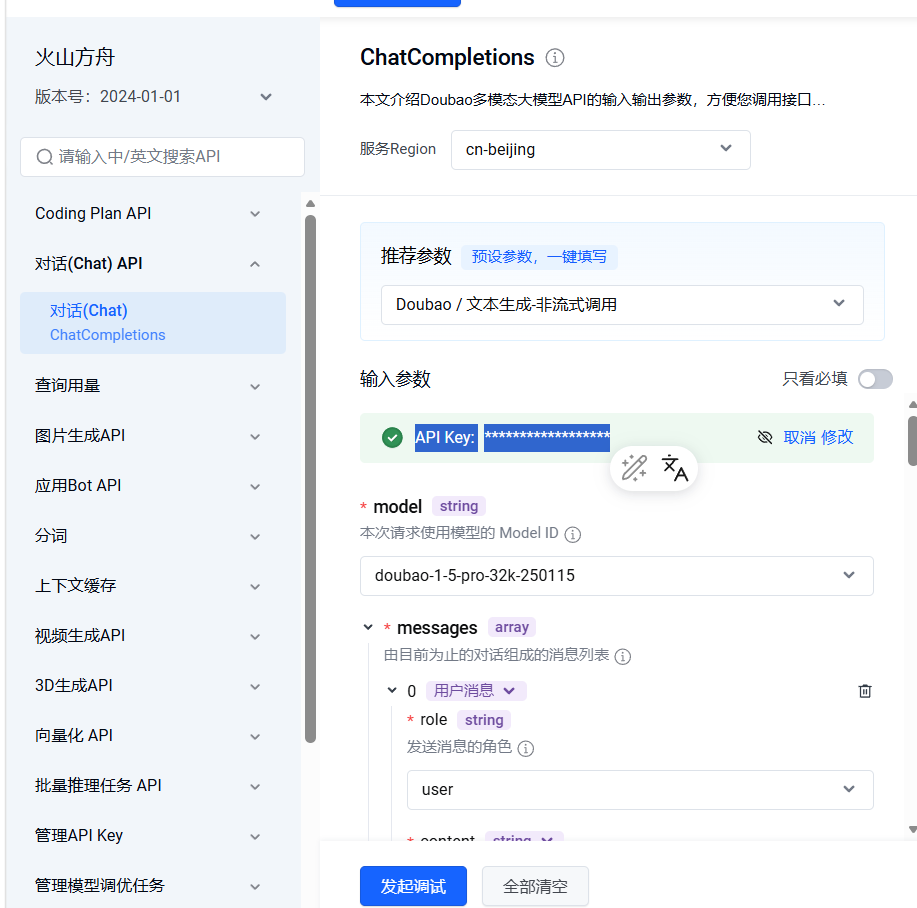

https://api.volcengine.com/api-explorer

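Doubao is the large model from Volcano Engine, ByteDance's cloud platform. The Volcano Engine API documentation gives us the key facts:

bash

Base URL: https://ark.cn-beijing.volces.com

Path: /api/v3/chat/completions

Authentication: Bearer Token (create the API Key in the Volcano Engine console)

Model ID: doubao-seed-1-8-251228 (inference endpoint ID, visible once created)

Doubao's API is largely compatible with the OpenAI format, with one key difference: the path is /api/v3/chat/completions, not /v1/chat/completions. Reusing DeepSeek's path as-is returns a 404.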

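1.1 Differences from DeepSeek

Compared with DeepSeek, two things need separate handling for Doubao:

(1) The endpoint path is different

DeepSeek uses /v1/chat/completions; Doubao uses /api/v3/chat/completions. So the URL-building logic cannot be hard-coded; each Provider has to maintain its own path.

(2) The model ID is not a fixed string

Doubao's model ID is a dynamic ID generated when you create an inference endpoint in the Volcano Engine console (for example doubao-seed-1-8-251228), unlike DeepSeek, where hard-coding deepseek-v4-flash is enough. The model field our code sends in the request body therefore has to match the ID the API expects exactly. Note that in the complete code in 1.3, GetModelName() returns the short name "doubao" and the real ID is written directly into the request body.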
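Because the ID depends on the endpoint each user creates, one option is to read it from the same modelConfig map that InitModel already receives. This is a minimal sketch under an assumption: the "model" key is hypothetical (the original code only reads api_key and endpoint), and ResolveModelId is an illustrative helper name.

```cpp
#include <map>
#include <string>

// Returns modelConfig["model"] when present and non-empty, otherwise the fallback.
// The "model" key is an assumption made for this sketch; the original InitModel
// only reads "api_key" and "endpoint".
inline std::string ResolveModelId(
    const std::map<std::string, std::string>& modelConfig,
    const std::string& fallback) {
    auto it = modelConfig.find("model");
    if (it != modelConfig.end() && !it->second.empty()) {
        return it->second;
    }
    return fallback;
}
```

A provider could call ResolveModelId(modelConfig, "doubao-seed-1-8-251228") once during initialization and store the result, so a different endpoint ID would not require recompiling.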

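1.2 Code Implementation

The header has exactly the same structure as DeepSeek's, so we focus on the points in the implementation file that are worth noting.

Initialization: almost identical to DeepSeek, except that the default endpoint becomes the Volcano Engine address:

cpp

m_endpoint = "https://ark.cn-beijing.volces.com";

Non-streaming request: the core difference is a single line, the path passed to Post:

cpp

auto resp = client.Post("/api/v3/chat/completions", headers, reqStr, "application/json"); // Doubao uses the v3 path

Everything else (building the JSON, sending the POST, parsing choices[0].message.content) is identical to DeepSeek.

Streaming request: the SSE format matches DeepSeek's, so the parsing logic can be reused; only req.path changes to the same v3 path.

So the real work in integrating Doubao comes down to two things: finding the correct endpoint path and obtaining a usable model ID. The remaining 90% or so of the code is reused as-is.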

1.3 Complete Doubao Code

Header file DoubaoProvider.h

cpp

#pragma once

#include "../core/LLMProvider.h"

#include "../core/common.h"

namespace ai_chat_sdk {

class DoubaoProvider : public LLMProvider {

public:

void InitModel(const std::map<std::string, std::string>& modelConfig) override;

bool IsAvailable() const override;

std::string GetModelName() const override;

std::string GetModelDesc() const override;

virtual std::string GetModelId() const override;

std::string SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) override;

std::string SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) override;

};

}

Implementation file DoubaoProvider.cpp

cpp

#define CPPHTTPLIB_OPENSSL_SUPPORT

#include "../include/model/DoubaoProvider.h"

#include "../include/util/myLog.h"

#include "../3rdparty/httplib/httplib.h"

#include "../include/core/common.h"

#include <jsoncpp/json/json.h>

#include <sstream>

#include <map>

namespace ai_chat_sdk {

void DoubaoProvider::InitModel(const std::map<std::string, std::string>& modelConfig) {

auto apiKeyIter = modelConfig.find("api_key");

if (apiKeyIter == modelConfig.end()) {

LOG_ERR("Doubao initialization failed: api_key not found");

m_isAvailable = false;

return;

}

m_apiKey = apiKeyIter->second;

auto endpointIter = modelConfig.find("endpoint");

if (endpointIter == modelConfig.end()) {

m_endpoint = "https://ark.cn-beijing.volces.com";

LOG_INFO("Using the default Doubao endpoint: {}", m_endpoint);

} else {

m_endpoint = endpointIter->second;

}

m_isAvailable = true;

LOG_INFO("Doubao initialized. Endpoint: {}", m_endpoint);

}

bool DoubaoProvider::IsAvailable() const { return m_isAvailable; }

std::string DoubaoProvider::GetModelName() const { return "doubao"; }

std::string DoubaoProvider::GetModelId() const { return "doubao"; }

std::string DoubaoProvider::GetModelDesc() const {

return "ByteDance Doubao Seed-1.8 multimodal model; supports image understanding and text chat";

}

// Non-streaming request

std::string DoubaoProvider::SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) {

if (!IsAvailable()) { return ""; }

double temperature = 0.7;

int maxTokens = 2048;

if(requestParam.count("temperature")) temperature = std::stod(requestParam.at("temperature"));

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

std::string text;

for(const auto& item : msg.contents) {

if(item.type == "input_text") text += item.text;

}

msgObj["content"] = text;

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = "doubao-seed-1-8-251228";

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(60, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"}

};

// [Difference from DeepSeek] Doubao's path is /api/v3/chat/completions

auto resp = client.Post("/api/v3/chat/completions", headers, reqStr, "application/json");

if (!resp) return "Request failed: could not connect to the server";

if (resp->status != 200) return "Request failed: check the API key, model, and network";

Json::Value respBody;

std::istringstream respStream(resp->body);

Json::CharReaderBuilder reader;

std::string parseErr;

if (Json::parseFromStream(reader, respStream, &respBody, &parseErr)) {

if (respBody.isMember("choices") && !respBody["choices"].empty()) {

return respBody["choices"][0]["message"]["content"].asString();

}

}

return "Failed to parse the AI response";

}

// Streaming request

std::string DoubaoProvider::SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) {

if(!IsAvailable()) { callback("", true); return ""; }

double temperature = 0.7;

int maxTokens = 2048;

if(requestParam.count("temperature")) temperature = std::stod(requestParam.at("temperature"));

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

std::string text;

for(const auto& item : msg.contents) {

if(item.type == "input_text") text += item.text;

}

msgObj["content"] = text;

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = "doubao-seed-1-8-251228";

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

reqBody["stream"] = true;

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(300, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"},

{"Accept", "text/event-stream"}

};

std::string buffer;

std::string fullResponse;

bool streamFinish = false;

httplib::Request req;

req.method = "POST";

req.path = "/api/v3/chat/completions"; // Doubao's path

req.headers = headers;

req.body = reqStr;

req.response_handler = [&](const httplib::Response& res) {

return res.status == 200;

};

req.content_receiver = [&](const char* data, size_t length, size_t, size_t) {

buffer.append(data, length);

size_t pos = 0;

while((pos = buffer.find("\n\n")) != std::string::npos) {

std::string chunk = buffer.substr(0, pos);

buffer.erase(0, pos + 2);

if(chunk.empty() || chunk[0] == ':') continue;

if(chunk.compare(0, 6, "data: ") == 0) {

std::string json_str = chunk.substr(6);

if(json_str == "[DONE]") {

callback("", true);

streamFinish = true;

return true;

}

Json::Value modelDataJson;

std::istringstream respStream(json_str);

std::string parseErr;

if(Json::parseFromStream(Json::CharReaderBuilder(), respStream, &modelDataJson, &parseErr)) {

if (!modelDataJson["choices"].empty()) {

std::string content = modelDataJson["choices"][0]["delta"]["content"].asString();

if (!content.empty()) {

fullResponse += content;

callback(content, false);

}

}

}

}

}

return true;

};

client.send(req);

if(!streamFinish) callback("", true);

return fullResponse;

}

}

2. Integrating and Implementing Kimi

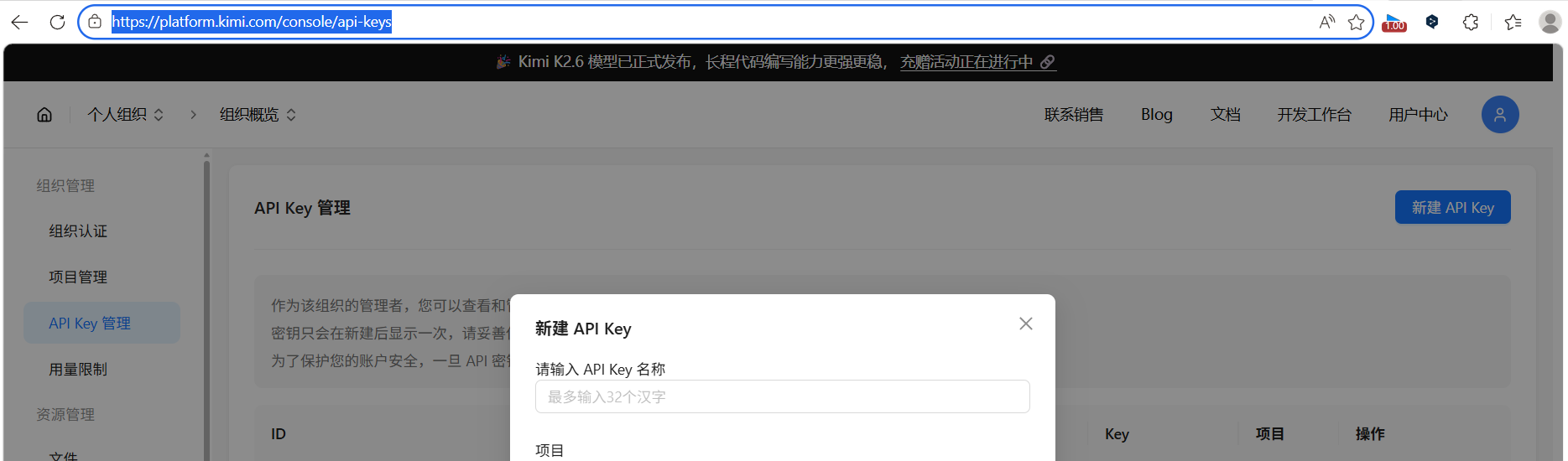

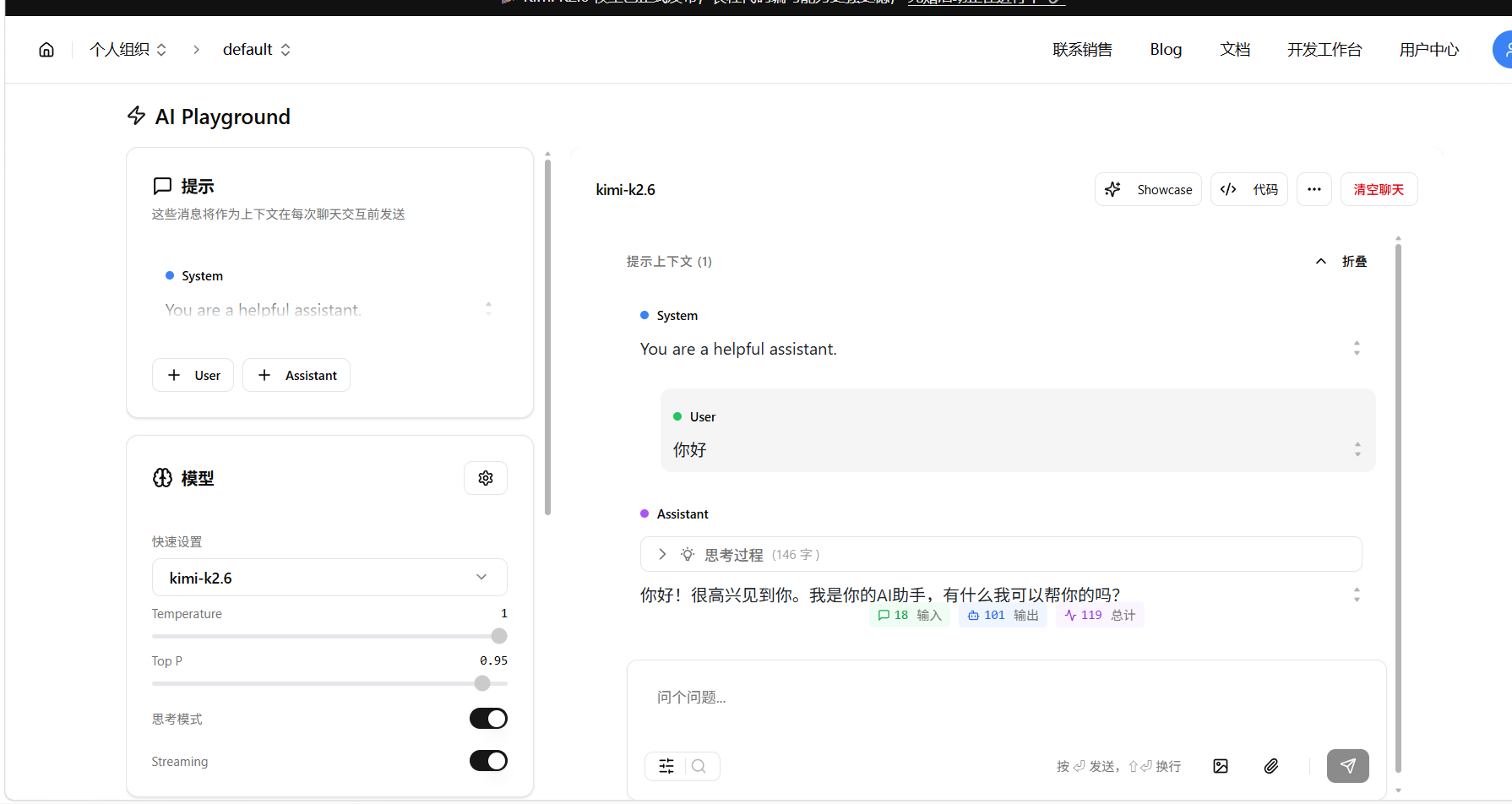

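Kimi is the large model from Moonshot AI, known for its very long context window (256K). Start from the Kimi open platform: https://platform.kimi.com/

First, apply for an API key:

https://platform.kimi.com/console/api-keys

bash

Base URL: https://api.moonshot.cn/v1

Path: /chat/completions

Authentication: Bearer Token

Model ID: moonshot-v1-8k

Kimi's API is fully compatible with the OpenAI format, and the interface structure is almost identical to DeepSeek's. It has one extra feature: a reasoning_content field that carries the model's thinking process.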

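2.1 Differences from DeepSeek

Two things need special handling for Kimi:

(1) The API address carries a path prefix

The official address is https://api.moonshot.cn/v1; note the trailing /v1. httplib's Client constructor only takes the scheme, host, and optional port, so a URL with a path cannot be passed in directly; if you do, the request fails (we hit a 404).

The fix is to split the URL into host and path prefix during initialization:

cpp

// Split https://api.moonshot.cn/v1 into:

m_endpoint = "https://api.moonshot.cn"; // passed to httplib's Client

m_path_prefix = "/v1"; // prepended when building the request path

// Final request path = m_path_prefix + "/chat/completions"

// = "/v1/chat/completions"

The same split is needed for Qwen, as we will see later.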
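The split can also live in one small helper instead of being repeated per provider. The sketch below is an illustration rather than the project's code: SplitBaseUrl and BaseUrlParts are hypothetical names. Compared with the inline version in the complete code, it also keeps an http:// scheme if one is given and strips trailing slashes, so a configured endpoint such as ".../v1/" would not produce a double slash in the final path.

```cpp
#include <cstddef>
#include <string>

struct BaseUrlParts {
    std::string origin;       // scheme + host (+ port), e.g. "https://api.moonshot.cn"
    std::string path_prefix;  // e.g. "/v1", or "" when the URL has no path
};

// Hypothetical helper, not part of the original providers.
inline BaseUrlParts SplitBaseUrl(const std::string& url) {
    std::string scheme = "https://";  // assumed default when the URL has no scheme
    std::string rest = url;
    const std::size_t scheme_end = url.find("://");
    if (scheme_end != std::string::npos) {
        scheme = url.substr(0, scheme_end + 3);
        rest = url.substr(scheme_end + 3);
    }

    BaseUrlParts parts;
    const std::size_t slash = rest.find('/');
    if (slash == std::string::npos) {
        parts.origin = scheme + rest;  // no path at all
        return parts;
    }
    parts.origin = scheme + rest.substr(0, slash);
    parts.path_prefix = rest.substr(slash);
    // Drop trailing slashes so prefix + "/chat/completions" never contains "//".
    while (!parts.path_prefix.empty() && parts.path_prefix.back() == '/') {
        parts.path_prefix.pop_back();
    }
    return parts;
}
```

The origin would be handed to httplib::Client and the prefix prepended to "/chat/completions", exactly as m_endpoint and m_path_prefix are used in the providers.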

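(2) Multimodal content and the thinking process in streaming responses

In Kimi's streaming response, the delta object may contain both of these fields:

- content: the final answer text
- reasoning_content: the model's thinking process (when deep thinking is enabled)

The handling has to check which field is present:

cpp

if (delta.isMember("reasoning_content")) {

content = delta["reasoning_content"].asString(); // the thinking process comes first

} else if (delta.isMember("content")) {

content = delta["content"].asString(); // then the final answer

}

This is the first model in the series whose streaming handler has to tell reasoning_content and content apart. Our DeepSeek and Doubao providers only read the content field.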
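One consequence of the if / else if form is that thinking text and answer text travel through the same callback and end up in the same accumulated string. If a caller wants to show them separately, a possible variation is to route each field to its own sink. The sketch below is an assumption-laden illustration, not the project's design: Delta is a simplified stand-in for the parsed Json::Value, and StreamSinks and DispatchDelta are hypothetical names. It requires C++17 for std::optional.

```cpp
#include <functional>
#include <optional>
#include <string>

// Simplified stand-in for one parsed `delta` object; the real code reads a Json::Value.
struct Delta {
    std::optional<std::string> reasoning_content;
    std::optional<std::string> content;
};

// Hypothetical pair of callbacks: one for thinking text, one for answer text.
struct StreamSinks {
    std::function<void(const std::string&)> on_reasoning;
    std::function<void(const std::string&)> on_answer;
};

// Forwards each non-empty field to its own sink and returns only the answer
// text, so the caller can accumulate a full response without thinking text.
inline std::string DispatchDelta(const Delta& delta, const StreamSinks& sinks) {
    if (delta.reasoning_content && !delta.reasoning_content->empty() &&
        sinks.on_reasoning) {
        sinks.on_reasoning(*delta.reasoning_content);
    }
    if (delta.content && !delta.content->empty()) {
        if (sinks.on_answer) {
            sinks.on_answer(*delta.content);
        }
        return *delta.content;
    }
    return "";
}
```

Whether to merge or separate the two streams is a product decision; the providers in this post merge them, which keeps the callback signature identical across all models.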

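2.2 Code Implementation

The header shows one difference from DeepSeek: an extra m_path_prefix member:

cpp

protected:

std::string m_path_prefix; // path prefix; works around the 404 when httplib is given a base_url with a path

Both the Kimi and Qwen Providers have this member, because both API addresses carry a path prefix. It trades a little space for generality: not every Provider needs the field, but those that do have somewhere to store it.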

2.3 Complete Kimi Code

Header file KimiProvider.h

cpp

#pragma once

#include "../core/LLMProvider.h"

#include "../core/common.h"

namespace ai_chat_sdk {

class KimiProvider : public LLMProvider {

public:

void InitModel(const std::map<std::string, std::string>& modelConfig) override;

bool IsAvailable() const override;

virtual std::string GetModelId() const override;

std::string GetModelName() const override;

std::string GetModelDesc() const override;

std::string SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) override;

std::string SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) override;

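protected:

// m_isAvailable, m_apiKey and m_endpoint are inherited from LLMProvider

// (DoubaoProvider and QwenProvider use them without declaring them), so they

// are not redeclared here; redeclaring them would shadow the base-class members.

std::string m_path_prefix; // [Key] works around the 404 for a base_url that carries a path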

};

}

Implementation file KimiProvider.cpp

cpp

#define CPPHTTPLIB_OPENSSL_SUPPORT

#include "../include/model/KimiProvider.h"

#include "../include/util/myLog.h"

#include "../3rdparty/httplib/httplib.h"

#include "../include/core/common.h"

#include <jsoncpp/json/json.h>

#include <sstream>

#include <map>

#define KIMI_ENABLE_THINKING false

namespace ai_chat_sdk {

void KimiProvider::InitModel(const std::map<std::string, std::string>& modelConfig) {

auto apiKeyIter = modelConfig.find("api_key");

if (apiKeyIter == modelConfig.end()) {

LOG_ERR("Kimi initialization failed: api_key not found");

m_isAvailable = false;

return;

}

m_apiKey = apiKeyIter->second;

auto endpointIter = modelConfig.find("endpoint");

if (endpointIter == modelConfig.end()) {

m_endpoint = "https://api.moonshot.cn/v1";

} else {

m_endpoint = endpointIter->second;

}

// [Key] Parse base_url and split it into host and path prefix

// "https://api.moonshot.cn/v1" -> host = "https://api.moonshot.cn", path = "/v1"

std::string full_url = m_endpoint;

if (full_url.substr(0, 8) == "https://") full_url = full_url.substr(8);

size_t slash_pos = full_url.find('/');

if (slash_pos != std::string::npos) {

m_endpoint = "https://" + full_url.substr(0, slash_pos);

m_path_prefix = full_url.substr(slash_pos);

} else {

m_path_prefix = "";

}

m_isAvailable = true;

LOG_INFO("Kimi initialized. Host: {}, path prefix: {}", m_endpoint, m_path_prefix);

}

bool KimiProvider::IsAvailable() const { return m_isAvailable; }

std::string KimiProvider::GetModelId() const { return "kimi"; }

std::string KimiProvider::GetModelName() const { return "moonshot-v1-8k"; }

std::string KimiProvider::GetModelDesc() const {

return "Moonshot Kimi K2.6; supports 256K long context, multimodal understanding, and deep thinking";

}

// Non-streaming request

std::string KimiProvider::SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) {

if (!IsAvailable()) return "";

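int maxTokens = 32768; // NOTE: larger than the 8K context of moonshot-v1-8k; lower it or use a longer-context model if the API rejects the request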

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

double temperature = KIMI_ENABLE_THINKING ? 1.0 : 0.6;

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

if(msg.contents.size() == 1 && msg.contents[0].type == "input_text") {

msgObj["content"] = msg.contents[0].text;

} else {

Json::Value contentArray;

for(const auto& item : msg.contents) {

Json::Value itemObj;

if(item.type == "input_text") {

itemObj["type"] = "text";

itemObj["text"] = item.text;

} else if(item.type == "input_image") {

itemObj["type"] = "image_url";

itemObj["image_url"]["url"] = item.image_url;

}

contentArray.append(itemObj);

}

msgObj["content"] = contentArray;

}

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = GetModelName();

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

reqBody["thinking"]["type"] = KIMI_ENABLE_THINKING ? "enabled" : "disabled";

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(120, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"}

};

// [Key] Build the full path with m_path_prefix

auto resp = client.Post((m_path_prefix + "/chat/completions").c_str(), headers, reqStr, "application/json");

if (!resp) return "Request failed: could not connect to the server";

if (resp->status != 200) return "Request failed: check the API key, model, and network";

Json::Value respBody;

std::istringstream respStream(resp->body);

std::string parseErr;

if (Json::parseFromStream(Json::CharReaderBuilder(), respStream, &respBody, &parseErr)) {

if (respBody.isMember("choices") && !respBody["choices"].empty()) {

return respBody["choices"][0]["message"]["content"].asString();

}

}

return "Failed to parse the AI response";

}

// Streaming request

std::string KimiProvider::SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) {

if(!IsAvailable()) { callback("", true); return ""; }

int maxTokens = 32768; // NOTE: see the non-streaming request; this exceeds the 8K context of moonshot-v1-8k

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

double temperature = KIMI_ENABLE_THINKING ? 1.0 : 0.6;

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

if(msg.contents.size() == 1 && msg.contents[0].type == "input_text") {

msgObj["content"] = msg.contents[0].text;

} else {

Json::Value contentArray;

for(const auto& item : msg.contents) {

Json::Value itemObj;

if(item.type == "input_text") {

itemObj["type"] = "text";

itemObj["text"] = item.text;

} else if(item.type == "input_image") {

itemObj["type"] = "image_url";

itemObj["image_url"]["url"] = item.image_url;

}

contentArray.append(itemObj);

}

msgObj["content"] = contentArray;

}

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = GetModelName();

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

reqBody["stream"] = true;

reqBody["thinking"]["type"] = KIMI_ENABLE_THINKING ? "enabled" : "disabled";

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(300, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"},

{"Accept", "text/event-stream"}

};

std::string buffer;

std::string fullResponse;

bool streamFinish = false;

httplib::Request req;

req.method = "POST";

req.path = m_path_prefix + "/chat/completions";

req.headers = headers;

req.body = reqStr;

req.response_handler = [&](const httplib::Response& res) {

return res.status == 200;

};

req.content_receiver = [&](const char* data, size_t length, size_t, size_t) {

buffer.append(data, length);

size_t pos = 0;

while((pos = buffer.find("\n\n")) != std::string::npos) {

std::string chunk = buffer.substr(0, pos);

buffer.erase(0, pos + 2);

if(chunk.empty() || chunk[0] == ':') continue;

if(chunk.compare(0, 6, "data: ") == 0) {

std::string json_str = chunk.substr(6);

if(json_str == "[DONE]") {

callback("", true);

streamFinish = true;

return true;

}

Json::Value modelDataJson;

std::istringstream respStream(json_str);

std::string parseErr;

if(Json::parseFromStream(Json::CharReaderBuilder(), respStream, &modelDataJson, &parseErr)) {

if (!modelDataJson["choices"].empty()) {

const Json::Value& delta = modelDataJson["choices"][0]["delta"];

std::string content;

// [Kimi-specific] Check for the thinking process first

if(delta.isMember("reasoning_content") && !delta["reasoning_content"].isNull()) {

content = delta["reasoning_content"].asString();

} else if(delta.isMember("content") && !delta["content"].isNull()) {

content = delta["content"].asString();

}

if (!content.empty()) {

fullResponse += content;

callback(content, false);

}

}

}

}

}

return true;

};

client.send(req);

if(!streamFinish) callback("", true);

return fullResponse;

}

}

3. Integrating and Implementing Qwen

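Next, go to the official site of Qwen's API open platform:

https://www.aliyun.com/product/bailian

As before, obtain an API key and note the request format and configuration:

Base URL: https://dashscope.aliyuncs.com/compatible-mode/v1

Path: /chat/completions

Authentication: Bearer Token (Alibaba Cloud API Key)

Model ID: qwen3.6-plus

The streaming response also supports the thinking process: Qwen's streaming delta has the same reasoning_content and content fields, and the handling is identical to Kimi's.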

3.1 Complete Qwen Code

Header file QwenProvider.h

cpp

#pragma once

#include "../core/LLMProvider.h"

#include "../core/common.h"

namespace ai_chat_sdk {

class QwenProvider : public LLMProvider {

public:

void InitModel(const std::map<std::string, std::string>& modelConfig) override;

bool IsAvailable() const override;

virtual std::string GetModelId() const override;

std::string GetModelName() const override;

std::string GetModelDesc() const override;

std::string SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) override;

std::string SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) override;

protected:

std::string m_path_prefix; // [Same as Kimi] works around httplib's path-prefix problem

};

}

Implementation file QwenProvider.cpp

cpp

#define CPPHTTPLIB_OPENSSL_SUPPORT

#include "../include/model/QwenProvider.h"

#include "../include/util/myLog.h"

#include "../3rdparty/httplib/httplib.h"

#include "../include/core/common.h"

#include <jsoncpp/json/json.h>

#include <sstream>

#include <map>

#define QWEN_ENABLE_THINKING false

namespace ai_chat_sdk {

void QwenProvider::InitModel(const std::map<std::string, std::string>& modelConfig) {

auto apiKeyIter = modelConfig.find("api_key");

if (apiKeyIter == modelConfig.end()) {

LOG_ERR("Qwen initialization failed: api_key not found");

m_isAvailable = false;

return;

}

m_apiKey = apiKeyIter->second;

auto endpointIter = modelConfig.find("endpoint");

if (endpointIter == modelConfig.end()) {

m_endpoint = "https://dashscope.aliyuncs.com/compatible-mode/v1";

} else {

m_endpoint = endpointIter->second;

}

// [Same as Kimi] Split into host and path prefix

std::string full_url = m_endpoint;

if(full_url.substr(0,8) == "https://") full_url = full_url.substr(8);

size_t slash_pos = full_url.find('/');

if (slash_pos != std::string::npos) {

m_endpoint = "https://" + full_url.substr(0, slash_pos);

m_path_prefix = full_url.substr(slash_pos);

} else {

m_path_prefix = "";

}

m_isAvailable = true;

LOG_INFO("Qwen initialized. Host: {}, path prefix: {}", m_endpoint, m_path_prefix);

}

bool QwenProvider::IsAvailable() const { return m_isAvailable; }

std::string QwenProvider::GetModelId() const { return "qwen"; }

std::string QwenProvider::GetModelName() const { return "qwen3.6-plus"; }

std::string QwenProvider::GetModelDesc() const {

return "Alibaba Cloud Tongyi Qianwen 3.6-Plus; supports deep thinking, strong Chinese-language ability";

}

// Non-streaming request

std::string QwenProvider::SendMessage(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam) {

if (!IsAvailable()) return "";

double temperature = 0.7;

int maxTokens = 2048;

if(requestParam.count("temperature")) temperature = std::stod(requestParam.at("temperature"));

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

std::string textContent;

for(const auto& item : msg.contents) {

if(item.type == "input_text") textContent += item.text;

}

msgObj["content"] = textContent;

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = GetModelName();

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

reqBody["enable_thinking"] = QWEN_ENABLE_THINKING; // [Qwen-specific] thinking switch

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(60, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"}

};

auto resp = client.Post((m_path_prefix + "/chat/completions").c_str(), headers, reqStr, "application/json");

if (!resp) return "Request failed: could not connect to the server";

if (resp->status != 200) return "Request failed: check the API key, model, and network";

Json::Value respBody;

std::istringstream respStream(resp->body);

std::string parseErr;

if (Json::parseFromStream(Json::CharReaderBuilder(), respStream, &respBody, &parseErr)) {

if (respBody.isMember("choices") && !respBody["choices"].empty()) {

return respBody["choices"][0]["message"]["content"].asString();

}

}

return "Failed to parse the AI response";

}

// Streaming request

std::string QwenProvider::SendMessageStream(

const std::vector<Message>& messages,

const std::map<std::string, std::string>& requestParam,

const std::function<void(const std::string&, bool)>& callback) {

if(!IsAvailable()) { callback("", true); return ""; }

double temperature = 0.7;

int maxTokens = 2048;

if(requestParam.count("temperature")) temperature = std::stod(requestParam.at("temperature"));

if(requestParam.count("maxTokens")) maxTokens = std::stoi(requestParam.at("maxTokens"));

Json::Value messagesArray;

for(const auto& msg : messages) {

Json::Value msgObj;

msgObj["role"] = msg.role;

std::string textContent;

for(const auto& item : msg.contents) {

if(item.type == "input_text") textContent += item.text;

}

msgObj["content"] = textContent;

messagesArray.append(msgObj);

}

Json::Value reqBody;

reqBody["model"] = GetModelName();

reqBody["messages"] = messagesArray;

reqBody["temperature"] = temperature;

reqBody["max_tokens"] = maxTokens;

reqBody["stream"] = true;

reqBody["enable_thinking"] = QWEN_ENABLE_THINKING;

Json::StreamWriterBuilder writer;

writer["indentation"] = "";

std::string reqStr = Json::writeString(writer, reqBody);

httplib::Client client(m_endpoint.c_str());

client.set_connection_timeout(30, 0);

client.set_read_timeout(300, 0);

httplib::Headers headers = {

{"Authorization", "Bearer " + m_apiKey},

{"Content-Type", "application/json"},

{"Accept", "text/event-stream"}

};

std::string buffer;

std::string fullResponse;

bool streamFinish = false;

httplib::Request req;

req.method = "POST";

req.path = m_path_prefix + "/chat/completions";

req.headers = headers;

req.body = reqStr;

req.response_handler = [&](const httplib::Response& res) {

return res.status == 200;

};

req.content_receiver = [&](const char* data, size_t length, size_t, size_t) {

buffer.append(data, length);

size_t pos = 0;

while((pos = buffer.find("\n\n")) != std::string::npos) {

std::string chunk = buffer.substr(0, pos);

buffer.erase(0, pos + 2);

if(chunk.empty() || chunk[0] == ':') continue;

if(chunk.compare(0, 6, "data: ") == 0) {

std::string json_str = chunk.substr(6);

if(json_str == "[DONE]") {

callback("", true);

streamFinish = true;

return true;

}

Json::Value modelDataJson;

std::istringstream respStream(json_str);

std::string parseErr;

if(Json::parseFromStream(Json::CharReaderBuilder(), respStream, &modelDataJson, &parseErr)) {

if (!modelDataJson["choices"].empty()) {

const Json::Value& delta = modelDataJson["choices"][0]["delta"];

std::string content;

// [Same as Kimi] Handle both the thinking process and the final answer

if(delta.isMember("reasoning_content") && !delta["reasoning_content"].isNull()) {

content = delta["reasoning_content"].asString();

} else if(delta.isMember("content") && !delta["content"].isNull()) {

content = delta["content"].asString();

}

if (!content.empty()) {

fullResponse += content;

callback(content, false);

}

}

}

}

}

return true;

};

client.send(req);

if(!streamFinish) callback("", true);

return fullResponse;

}

}

4. Looking Back After Integrating Three Models

At this point we have integrated four cloud-hosted large models: DeepSeek, Doubao, Kimi, and Qwen.

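4.1 Code Reuse

Looking back over the code, quite a few problems stand out.

Across the four Provider implementations, the following logic is essentially the same:

- Availability check (IsAvailable())
- Extracting parameters from requestParam (temperature, maxTokens)
- Building the JSON (the messagesArray construction)
- HTTP status check (comparison against 200)
- Parsing streaming SSE events (splitting on \n\n, filtering on the data: prefix, detecting [DONE])
- JSON deserialization (JsonCpp's parseFromStream)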
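Of the items above, the SSE handling is the easiest to lift out, because it does not depend on any provider-specific state. The sketch below shows the same steps the three content_receiver lambdas perform (buffer, split on a blank line, skip comments, strip the data: prefix, stop at [DONE]) as a standalone class. SseBuffer is a hypothetical name introduced for this illustration; it is not part of the project's code, and like the originals it assumes events are separated by "\n\n".

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Accumulates raw bytes and yields the payload of each complete "data: " event.
class SseBuffer {
public:
    // Appends one network chunk and returns the payloads of all events that
    // became complete. The "[DONE]" sentinel is not returned; it sets Done().
    std::vector<std::string> Feed(const char* data, std::size_t length) {
        m_buffer.append(data, length);
        std::vector<std::string> payloads;
        std::size_t pos = 0;
        while (!m_done && (pos = m_buffer.find("\n\n")) != std::string::npos) {
            std::string event = m_buffer.substr(0, pos);
            m_buffer.erase(0, pos + 2);
            if (event.empty() || event[0] == ':') continue;    // blank or comment
            if (event.compare(0, 6, "data: ") != 0) continue;  // other fields ignored
            std::string payload = event.substr(6);
            if (payload == "[DONE]") {
                m_done = true;
                break;
            }
            payloads.push_back(std::move(payload));
        }
        return payloads;
    }

    bool Done() const { return m_done; }

private:
    std::string m_buffer;  // bytes of an event that has not finished arriving
    bool m_done = false;
};
```

Inside a content_receiver, each returned payload would then be parsed as JSON and its delta forwarded to the callback; an event split across two network chunks stays in the buffer until its terminating blank line arrives.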

The differences are concentrated in just a few places:

| Model | Request path | Model ID | Special fields |
|---|---|---|---|
| DeepSeek | /v1/chat/completions | deepseek-chat | None |
| Doubao | /api/v3/chat/completions | doubao-seed-1-8-251228 | None |
| Kimi | /v1/chat/completions | moonshot-v1-8k | reasoning_content, thinking |
| Qwen | /compatible-mode/v1/chat/completions | qwen3.6-plus | reasoning_content, enable_thinking |

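4.2 Architectural Shortcomings

Looking back now:

- Cost of integrating a new model: one Provider subclass, about 200 lines of code. Roughly 150 of those are copy-paste (JSON building, HTTP sending, stream parsing); only about 50 need real adaptation (path, model name, response field differences).
- Changes to upper-layer code: zero changes to LLMManager and ChatSDK, because the upper layers depend only on the LLMProvider interface and do not care whether DeepSeek or Kimi sits underneath.
- Extensibility: adding a model takes two coding steps: write a subclass and add one line to the registration function. This is the open-closed principle in practice: open for extension, closed for modification.

The four Providers clearly contain a lot of duplicated code. Ideally, since they all speak the OpenAI-compatible format, we would introduce an OpenAICompatibleProvider base class, pull the shared logic into it, and have each model override only the configuration that differs.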
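To make that idea concrete, here is one possible shape for such a base class. It is a rough sketch, not the refactoring the series will eventually present: only the path-building logic is shown, the hook names (Host, PathPrefix, ModelName, HasReasoningContent) and the three subclass names are hypothetical, and the hosts, prefixes, and model IDs are the ones listed earlier in this post.

```cpp
#include <string>

// Hypothetical base class; the names are illustrative, not from the series' code.
class OpenAICompatibleProvider {
public:
    virtual ~OpenAICompatibleProvider() = default;

    // Shared logic is written once. Only the path is shown here; JSON building,
    // the HTTP call, and SSE parsing would sit alongside it.
    std::string RequestPath() const { return PathPrefix() + "/chat/completions"; }

    virtual std::string Host() const = 0;        // what httplib::Client receives
    virtual std::string PathPrefix() const = 0;  // "/v1", "/api/v3", ...
    virtual std::string ModelName() const = 0;   // value of the "model" field
    // Whether the streaming delta may carry reasoning_content.
    virtual bool HasReasoningContent() const { return false; }
};

class DoubaoCompat : public OpenAICompatibleProvider {
public:
    std::string Host() const override { return "https://ark.cn-beijing.volces.com"; }
    std::string PathPrefix() const override { return "/api/v3"; }
    std::string ModelName() const override { return "doubao-seed-1-8-251228"; }
};

class KimiCompat : public OpenAICompatibleProvider {
public:
    std::string Host() const override { return "https://api.moonshot.cn"; }
    std::string PathPrefix() const override { return "/v1"; }
    std::string ModelName() const override { return "moonshot-v1-8k"; }
    bool HasReasoningContent() const override { return true; }
};

class QwenCompat : public OpenAICompatibleProvider {
public:
    std::string Host() const override { return "https://dashscope.aliyuncs.com"; }
    std::string PathPrefix() const override { return "/compatible-mode/v1"; }
    std::string ModelName() const override { return "qwen3.6-plus"; }
    bool HasReasoningContent() const override { return true; }
};
```

In this form each model shrinks to a handful of one-line overrides, which matches the estimate above that only a small part of every Provider is genuinely model-specific.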

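This refactoring should indeed be done, but not yet:

- The differences between the four models are still shifting (Doubao's v3 path, Kimi's thinking process; ERNIE Bot (文心一言) and other models differ in many further ways), and we will later add local models via Ollama plus assorted tuning
- For now we keep each Provider independent, so that while debugging we can see at a glance which stage a problem is in
- Once the model count exceeds 10 and the duplicated code becomes hard to maintain, that will be the right time to refactor

We get things running first; later posts will cover in detail how to optimize and refactor the code.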

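My personal homepage, where you are welcome to read my other posts:

https://blog.csdn.net/2402_83322742?spm=1011.2415.3001.5343

My C++ AI multi-model chat system project column; feedback on shortcomings is welcome:

https://blog.csdn.net/2402_83322742/category_13159665.html?spm=1001.2014.3001.5482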