DeepSeek Version Update

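When I saw that DeepSeek had been updated to V4, I upgraded right away. DeepSeek V4 enables thinking mode by default, and while thinking mode is on, tool calls require reasoning_content to be passed back; otherwise the API returns an error. After studying the official documentation (https://api-docs.deepseek.com/zh-cn/guides/thinking_mode) and the source code, I found the following solution.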

Solution: disable thinking mode

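Once thinking mode is disabled, the model no longer returns reasoning_content, so there is nothing to pass back and the error goes away. However, all V4 models enable thinking mode by default, and it cannot be turned off the way it could with V3, by switching to the chat model. Instead, the source code has to be modified to add a parameter that switches thinking mode on or off.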
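
To make the goal concrete, here is a minimal sketch of the request body that the changes below are meant to produce. It assumes only the {"thinking": {"type": "disabled"}} shape used later in this post; the class name ThinkingBodySketch, its helper methods, and the model name are placeholders for illustration, and the JSON is assembled by hand so that no JSON library is needed.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustrative only: builds the JSON body by hand so the example needs no JSON library.
class ThinkingBodySketch {

    // Minimal escaping: handles backslashes and double quotes only.
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    // Renders a flat String-to-String map as a JSON object, keeping insertion order.
    static String toJson(Map<String, String> map) {
        StringBuilder sb = new StringBuilder("{");
        boolean first = true;
        for (Map.Entry<String, String> e : map.entrySet()) {
            if (!first) {
                sb.append(",");
            }
            sb.append(quote(e.getKey())).append(":").append(quote(e.getValue()));
            first = false;
        }
        return sb.append("}").toString();
    }

    // model and userMessage are placeholders supplied by the caller.
    static String buildBody(String model, String userMessage) {
        Map<String, String> thinking = new LinkedHashMap<>();
        thinking.put("type", "disabled"); // the thinking-mode switch
        return "{"
                + "\"model\":" + quote(model) + ","
                + "\"messages\":[{\"role\":\"user\",\"content\":" + quote(userMessage) + "}],"
                + "\"thinking\":" + toJson(thinking)
                + "}";
    }

    public static void main(String[] args) {
        // Prints the JSON body with the extra "thinking" object at the end.
        System.out.println(buildBody("your-model-name", "Hello"));
    }
}
```

The point is that thinking is an extra top-level field next to model and messages, which is why the request class needs a new property rather than a change to an existing one.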

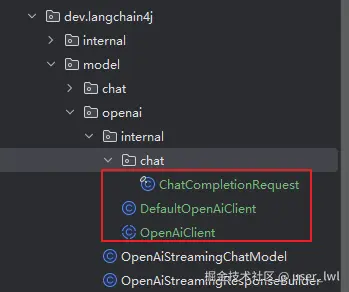

1. Copy the following source code to override the original source

2. Modify ChatCompletionRequest.java

Add the thinking parameter

```java
/**
 * Extra request-body parameter that carries the DeepSeek thinking-mode configuration.
 * Serialized as: {"thinking": {"type": "disabled"}}
 */
@JsonProperty
private final Map<String, String> thinking;
```

```java
public Map<String, String> thinking() {
    return thinking;
}
```

Modify the constructor

```java
public ChatCompletionRequest(Builder builder) {
    this.model = builder.model;
    this.messages = builder.messages;
    this.temperature = builder.temperature;
    this.topP = builder.topP;
    this.n = builder.n;
    this.stream = builder.stream;
    this.streamOptions = builder.streamOptions;
    this.stop = builder.stop;
    this.maxTokens = builder.maxTokens;
    this.maxCompletionTokens = builder.maxCompletionTokens;
    this.presencePenalty = builder.presencePenalty;
    this.frequencyPenalty = builder.frequencyPenalty;
    this.logitBias = builder.logitBias;
    this.user = builder.user;
    this.responseFormat = builder.responseFormat;
    this.seed = builder.seed;
    this.tools = builder.tools;
    this.toolChoice = builder.toolChoice;
    this.parallelToolCalls = builder.parallelToolCalls;
    this.store = builder.store;
    this.metadata = builder.metadata;
    this.reasoningEffort = builder.reasoningEffort;
    this.serviceTier = builder.serviceTier;
    // New: wrap in a read-only view; null means "not set".
    // Needs: import static java.util.Collections.unmodifiableMap;
    this.thinking = builder.thinking != null ? unmodifiableMap(builder.thinking) : null;
    this.functions = builder.functions;
    this.functionCall = builder.functionCall;
}
```

Modify equalTo, hashCode and toString

```java
private boolean equalTo(ChatCompletionRequest another) {
    return Objects.equals(model, another.model)
            && Objects.equals(messages, another.messages)
            && Objects.equals(temperature, another.temperature)
            && Objects.equals(topP, another.topP)
            && Objects.equals(n, another.n)
            && Objects.equals(stream, another.stream)
            && Objects.equals(streamOptions, another.streamOptions)
            && Objects.equals(stop, another.stop)
            && Objects.equals(maxTokens, another.maxTokens)
            && Objects.equals(maxCompletionTokens, another.maxCompletionTokens)
            && Objects.equals(presencePenalty, another.presencePenalty)
            && Objects.equals(frequencyPenalty, another.frequencyPenalty)
            && Objects.equals(logitBias, another.logitBias)
            && Objects.equals(user, another.user)
            && Objects.equals(responseFormat, another.responseFormat)
            && Objects.equals(seed, another.seed)
            && Objects.equals(tools, another.tools)
            && Objects.equals(toolChoice, another.toolChoice)
            && Objects.equals(parallelToolCalls, another.parallelToolCalls)
            && Objects.equals(store, another.store)
            && Objects.equals(metadata, another.metadata)
            && Objects.equals(reasoningEffort, another.reasoningEffort)
            && Objects.equals(serviceTier, another.serviceTier)
            && Objects.equals(thinking, another.thinking) // new
            && Objects.equals(functions, another.functions)
            && Objects.equals(functionCall, another.functionCall);
}

@Override
public int hashCode() {
    int h = 5381;
    h += (h << 5) + Objects.hashCode(model);
    h += (h << 5) + Objects.hashCode(messages);
    h += (h << 5) + Objects.hashCode(temperature);
    h += (h << 5) + Objects.hashCode(topP);
    h += (h << 5) + Objects.hashCode(n);
    h += (h << 5) + Objects.hashCode(stream);
    h += (h << 5) + Objects.hashCode(streamOptions);
    h += (h << 5) + Objects.hashCode(stop);
    h += (h << 5) + Objects.hashCode(maxTokens);
    h += (h << 5) + Objects.hashCode(maxCompletionTokens);
    h += (h << 5) + Objects.hashCode(presencePenalty);
    h += (h << 5) + Objects.hashCode(frequencyPenalty);
    h += (h << 5) + Objects.hashCode(logitBias);
    h += (h << 5) + Objects.hashCode(user);
    h += (h << 5) + Objects.hashCode(responseFormat);
    h += (h << 5) + Objects.hashCode(seed);
    h += (h << 5) + Objects.hashCode(tools);
    h += (h << 5) + Objects.hashCode(toolChoice);
    h += (h << 5) + Objects.hashCode(parallelToolCalls);
    h += (h << 5) + Objects.hashCode(store);
    h += (h << 5) + Objects.hashCode(metadata);
    h += (h << 5) + Objects.hashCode(reasoningEffort);
    h += (h << 5) + Objects.hashCode(serviceTier);
    h += (h << 5) + Objects.hashCode(thinking); // new
    h += (h << 5) + Objects.hashCode(functions);
    h += (h << 5) + Objects.hashCode(functionCall);
    return h;
}

@Override
public String toString() {
    return "ChatCompletionRequest{"
            + "model=" + model
            + ", messages=" + messages
            + ", temperature=" + temperature
            + ", topP=" + topP
            + ", n=" + n
            + ", stream=" + stream
            + ", streamOptions=" + streamOptions
            + ", stop=" + stop
            + ", maxTokens=" + maxTokens
            + ", maxCompletionTokens=" + maxCompletionTokens
            + ", presencePenalty=" + presencePenalty
            + ", frequencyPenalty=" + frequencyPenalty
            + ", logitBias=" + logitBias
            + ", user=" + user
            + ", responseFormat=" + responseFormat
            + ", seed=" + seed
            + ", tools=" + tools
            + ", toolChoice=" + toolChoice
            + ", parallelToolCalls=" + parallelToolCalls
            + ", store=" + store
            + ", metadata=" + metadata
            + ", reasoningEffort=" + reasoningEffort
            + ", serviceTier=" + serviceTier
            + ", thinking=" + thinking // new
            + ", functions=" + functions
            + ", functionCall=" + functionCall
            + "}";
}
```

Modify the Builder

```java
private Map<String, String> thinking;
```

```java
public Builder from(ChatCompletionRequest instance) {
    model(instance.model);
    messages(instance.messages);
    temperature(instance.temperature);
    topP(instance.topP);
    n(instance.n);
    stream(instance.stream);
    streamOptions(instance.streamOptions);
    stop(instance.stop);
    maxTokens(instance.maxTokens);
    maxCompletionTokens(instance.maxCompletionTokens);
    presencePenalty(instance.presencePenalty);
    frequencyPenalty(instance.frequencyPenalty);
    logitBias(instance.logitBias);
    user(instance.user);
    responseFormat(instance.responseFormat);
    seed(instance.seed);
    tools(instance.tools);
    toolChoice(instance.toolChoice);
    parallelToolCalls(instance.parallelToolCalls);
    store(instance.store);
    metadata(instance.metadata);
    reasoningEffort(instance.reasoningEffort);
    serviceTier(instance.serviceTier);
    thinking(instance.thinking); // new: copy the thinking configuration as well
    functions(instance.functions);
    functionCall(instance.functionCall);
    return this;
}
```

```java
public Builder thinking(Map<String, String> thinking) {
    this.thinking = thinking;
    return this;
}
```

3. Modify DefaultOpenAiClient.java

Change the chatCompletion method as follows so that it adds the parameter that disables thinking mode

```java
// Needs: import java.util.HashMap; import java.util.Map;
@Override
public SyncOrAsyncOrStreaming<ChatCompletionResponse> chatCompletion(ChatCompletionRequest request) {
    // Build the thinking map.
    // Thinking mode is disabled so that the model does not return the reasoning_content field.
    Map<String, String> thinking = new HashMap<>();
    thinking.put("type", "disabled");

    // Build the non-streaming request with thinking set (thinking mode off)
    ChatCompletionRequest requestWithExtraBody = ChatCompletionRequest.builder()
            .from(request)
            .thinking(thinking)
            .stream(false)
            .build();

    HttpRequest httpRequest = HttpRequest.builder()
            .method(POST)
            .url(baseUrl, "chat/completions")
            .addHeader("Content-Type", "application/json")
            .addHeaders(defaultHeaders)
            .body(Json.toJson(requestWithExtraBody))
            .build();

    // The streaming request gets the same thinking setting
    ChatCompletionRequest streamingRequestWithExtraBody = ChatCompletionRequest.builder()
            .from(request)
            .thinking(thinking)
            .stream(true)
            .build();

    HttpRequest streamingHttpRequest = HttpRequest.builder()
            .method(POST)
            .url(baseUrl, "chat/completions")
            .addHeader("Content-Type", "application/json")
            .addHeaders(defaultHeaders)
            .body(Json.toJson(streamingRequestWithExtraBody))
            .build();

    return new RequestExecutor<>(httpClient, httpRequest, streamingHttpRequest, ChatCompletionResponse.class);
}
```
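
Note that the method above forces thinking mode off for every request, even if the caller set thinking on the request. If some calls should keep thinking mode, one possible variation is to fall back to disabled only when the request carries no thinking value. The sketch below shows just that decision. ThinkingDefaults and resolve are hypothetical names that are not part of the original source, and the "enabled" value in the demo is an assumption that should be checked against the DeepSeek documentation.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper, not part of the original source.
class ThinkingDefaults {

    // Returns a read-only copy of the caller's thinking map when one is present,
    // otherwise the default {"type": "disabled"}.
    static Map<String, String> resolve(Map<String, String> requested) {
        if (requested != null && !requested.isEmpty()) {
            return Collections.unmodifiableMap(new HashMap<>(requested));
        }
        Map<String, String> disabled = new HashMap<>();
        disabled.put("type", "disabled");
        return Collections.unmodifiableMap(disabled);
    }

    public static void main(String[] args) {
        // No thinking value on the request: falls back to disabled.
        System.out.println(resolve(null));

        // Caller asked for thinking explicitly ("enabled" is an assumed value).
        Map<String, String> enabled = new HashMap<>();
        enabled.put("type", "enabled");
        System.out.println(resolve(enabled));
    }
}
```

With such a helper, the two builder chains in chatCompletion could call .thinking(ThinkingDefaults.resolve(request.thinking())) instead of .thinking(thinking), using the thinking() getter added in step 2. Keep in mind that a request with thinking enabled will again need reasoning_content passed back during tool calls.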