bash

#!/bin/bash

#IT_BEGIN

#IT_TYPE=3

#IT SYSTEM_GPU_DISCOVER|discovery.gpuInfo[disc]

# Prototype metrics

#IT_RULE SYSTEM_GPU_UUID|gpuUuid[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_NAME|gpuName[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_MODEL|gpuModel[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_DRIVER|gpuDriver[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_MEMORY_TOTAL|gpuMemoryTotal[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_MEMORY_USED|gpuMemoryUsed[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_MEMORY_FREE|gpuMemoryFree[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_TEMP|gpuTemperature[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_UTIL|gpuUtilization[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_MEMORY_UTIL|gpuMemoryUtilization[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_POWER|gpuPower[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_POWER_LIMIT|gpuPowerLimit[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_FAN_SPEED|gpuFanSpeed[{#GPUINDEX}]

#IT_RULE SYSTEM_IP_ADDRESS|IpAddress[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_PROCESS_PID|gpuProcessPid[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_PROCESS_TYPE|gpuProcessType[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_PROCESS_NAME|gpuProcessName[{#GPUINDEX}]

#IT_RULE SYSTEM_GPU_PROCESS_MEM|gpuProcessMemoryUsage[{#GPUINDEX}]

#IT_END

# Check whether nvidia-smi is installed

check_nvidia_smi() {

if ! command -v nvidia-smi &> /dev/null; then

echo "ERROR: nvidia-smi not found. Please install NVIDIA drivers first." >&2

exit 1

fi

}

# Get the server's IP addresses

get_ip_addresses() {

local ip_list=""

# Method 1: use the ip command (recommended on modern Linux systems)

if command -v ip &> /dev/null; then

# Get all IPv4 addresses, excluding the loopback address

ip_list=$(ip -4 addr show 2>/dev/null | grep -E "inet\s" | grep -v "127.0.0.1" | awk '{print $2}' | cut -d'/' -f1 | sort -u | tr '\n' ',' 2>/dev/null || echo "")

# Method 2: use ifconfig (legacy)

elif command -v ifconfig &> /dev/null; then

ip_list=$(ifconfig 2>/dev/null | grep -E "inet\s" | grep -v "127.0.0.1" | awk '{print $2}' | tr '\n' ',' 2>/dev/null || echo "")

# Method 3: use the hostname command

elif command -v hostname &> /dev/null; then

# Get all IP addresses associated with the host

hostname_ip=$(hostname -I 2>/dev/null)

if [ $? -eq 0 ] && [ -n "$hostname_ip" ]; then

# Filter out IPv6 and loopback addresses

ip_list=$(echo "$hostname_ip" | tr ' ' '\n' | grep -E "^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$" | grep -v "^127\." | tr '\n' ',' 2>/dev/null || echo "")

fi

fi

# Strip the trailing comma

ip_list="${ip_list%,}"

# Return "N/A" if no IP address was found

if [ -z "$ip_list" ]; then

echo "N/A"

else

echo "$ip_list"

fi

}

# Get the process type (C: compute, G: graphics, X: mixed, U: unknown)

get_process_type() {

local pid=$1

local pname=$2

if [ -z "$pid" ] || [ "$pid" = "" ]; then

echo "U"

return

fi

# Check whether the process is a graphics process

if echo "$pname" | grep -qi "xorg\|Xorg\|X11\|gnome-shell\|kwin\|dwm\|compiz\|kde\|mate\|xfce\|cinnamon" 2>/dev/null; then

echo "G"

return

fi

# Check whether the process uses CUDA (compute process)

if [ -d "/proc/$pid" ]; then

# Method 1: check the shared libraries loaded by the process

if lsof -p "$pid" 2>/dev/null | grep -q "libcuda\.so\|libnvidia\|libcudart\.so" 2>/dev/null; then

echo "C"

return

fi

# Method 2: check the process command-line arguments

if ps -p "$pid" -o args= 2>/dev/null | grep -q -e "--cuda\|cuda\|cudnn\|tensorrt\|tensorflow\|pytorch\|torch" 2>/dev/null; then

echo "C"

return

fi

# Method 3: check the process environment variables

if cat "/proc/$pid/environ" 2>/dev/null | tr '\0' '\n' | grep -qi "CUDA\|NVIDIA" 2>/dev/null; then

echo "C"

return

fi

fi

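# Get the process type from nvidia-smi pmon (supported by newer nvidia-smi versions)

# pmon columns are: gpu index, pid, type, ... so match the PID in column 2

local nvidia_type=$(nvidia-smi pmon -s u -c 1 2>/dev/null | awk -v pid="$pid" '$2 == pid {print $3; exit}' 2>/dev/null)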

if [ -n "$nvidia_type" ]; then

case "$nvidia_type" in

C) echo "C" ;;

G) echo "G" ;;

X) echo "X" ;;

*) echo "U" ;;

esac

return

fi

# Default to unknown

echo "U"

}

# Discovery mode: list all GPUs

if [ "$1" = "disc" ]; then

check_nvidia_smi

# Get the GPU count

gpu_count=$(nvidia-smi --query-gpu=count --format=csv,noheader 2>/dev/null | head -n 1)

if [ -z "$gpu_count" ] || [ "$gpu_count" = "0" ]; then

echo "WARNING: No NVIDIA GPU detected" >&2

exit 0

fi

# Output the GPU indexes

for ((i=0; i<gpu_count; i++)); do

echo "{#GPUINDEX}=$i"

done

exit 0

fi

check_nvidia_smi

shname=$(basename "$0")

ATTR="_X(g=$shname,p=cmdb,t=script,f=0)"

# Get the GPU count

gpu_count=$(nvidia-smi --query-gpu=count --format=csv,noheader 2>/dev/null | head -n 1)

if [ -z "$gpu_count" ] || [ "$gpu_count" = "0" ]; then

echo "WARNING: No NVIDIA GPU detected" >&2

echo "COL_DETAIL_START:"

echo "COL_DETAIL_END:"

exit 0

fi

# Get basic information for all GPUs

gpu_info_raw=$(nvidia-smi --query-gpu=index,uuid,name,driver_version,memory.total,memory.used,memory.free,temperature.gpu,utilization.gpu,utilization.memory,power.draw,power.limit,fan.speed --format=csv,noheader 2>/dev/null)

if [ $? -ne 0 ] || [ -z "$gpu_info_raw" ]; then

echo "ERROR: Failed to get GPU info" >&2

echo "COL_DETAIL_START:"

echo "COL_DETAIL_END:"

exit 1

fi

# Get process information for all GPUs

process_info_raw=$(nvidia-smi --query-compute-apps=pid,process_name,gpu_uuid,used_memory --format=csv,noheader 2>/dev/null)

# Get graphics/compute process information for all GPUs (via nvidia-smi pmon)

# This command reports the process type: C=compute, G=graphics, X=mixed, U=unknown

gpu_process_type_raw=$(timeout 2 nvidia-smi pmon -s u -c 1 2>/dev/null | grep -v "^\s*#" | grep -v "^\s*$" 2>/dev/null || echo "")

# Get the IP addresses

IP_ADDRESS=$(get_ip_addresses)

# Start of multi-metric output

echo "COL_DETAIL_START:"

# Output the IP address metric

echo "IpAddress[$ATTR]|+|$IP_ADDRESS"

# Process each GPU

while IFS= read -r line || [ -n "$line" ]; do

# Parse the CSV-formatted basic GPU data

IFS=',' read -r -a gpu_data <<< "$line"

GPUINDEX=$(echo "${gpu_data[0]}" | xargs 2>/dev/null)

UUID=$(echo "${gpu_data[1]}" | xargs 2>/dev/null)

GPU_NAME=$(echo "${gpu_data[2]}" | xargs 2>/dev/null)

DRIVER=$(echo "${gpu_data[3]}" | xargs 2>/dev/null)

MEMORY_TOTAL=$(echo "${gpu_data[4]}" | sed 's/ MiB//' | xargs 2>/dev/null)

MEMORY_USED=$(echo "${gpu_data[5]}" | sed 's/ MiB//' | xargs 2>/dev/null)

MEMORY_FREE=$(echo "${gpu_data[6]}" | sed 's/ MiB//' | xargs 2>/dev/null)

TEMP=$(echo "${gpu_data[7]}" | xargs 2>/dev/null)

GPU_UTIL=$(echo "${gpu_data[8]}" | sed 's/ %//' | xargs 2>/dev/null)

MEMORY_UTIL=$(echo "${gpu_data[9]}" | sed 's/ %//' | xargs 2>/dev/null)

POWER=$(echo "${gpu_data[10]}" | sed 's/ W//' | xargs 2>/dev/null)

POWER_LIMIT=$(echo "${gpu_data[11]}" | sed 's/ W//' | xargs 2>/dev/null)

FAN_SPEED=$(echo "${gpu_data[12]}" | sed 's/ %//' | xargs 2>/dev/null)

# Derive the model from the GPU name

GPU_MODEL="$GPU_NAME"

# Output basic GPU metrics

# GPU unique identifier

echo "gpuUuid[$ATTR,$GPUINDEX]|+|$UUID"

# GPU name

echo "gpuName[$ATTR,$GPUINDEX]|+|$GPU_NAME"

# GPU model

echo "gpuModel[$ATTR,$GPUINDEX]|+|$GPU_MODEL"

# Driver version

echo "gpuDriver[$ATTR,$GPUINDEX]|+|$DRIVER"

# Total memory, in MiB (as reported by nvidia-smi)

echo "gpuMemoryTotal[$ATTR,$GPUINDEX]|+|$MEMORY_TOTAL"

# Used memory, in MiB

echo "gpuMemoryUsed[$ATTR,$GPUINDEX]|+|$MEMORY_USED"

# Free memory, in MiB

echo "gpuMemoryFree[$ATTR,$GPUINDEX]|+|$MEMORY_FREE"

# GPU temperature, in degrees Celsius

echo "gpuTemperature[$ATTR,$GPUINDEX]|+|$TEMP"

# GPU utilization, percent

echo "gpuUtilization[$ATTR,$GPUINDEX]|+|$GPU_UTIL"

# Memory utilization, percent

echo "gpuMemoryUtilization[$ATTR,$GPUINDEX]|+|$MEMORY_UTIL"

# Current power draw, in watts

echo "gpuPower[$ATTR,$GPUINDEX]|+|$POWER"

# Power limit, in watts

echo "gpuPowerLimit[$ATTR,$GPUINDEX]|+|$POWER_LIMIT"

# Fan speed, percent

echo "gpuFanSpeed[$ATTR,$GPUINDEX]|+|$FAN_SPEED"

# Initialize the process variables to empty

PROCESS_PID=""

PROCESS_TYPE="U" # default: unknown

PROCESS_NAME=""

PROCESS_MEM=""

# Find the current GPU's process in the process information

if [ -n "$process_info_raw" ]; then

while IFS= read -r proc_line || [ -n "$proc_line" ]; do

IFS=',' read -r pid p_name proc_uuid p_mem <<< "$proc_line"

proc_uuid_clean=$(echo "$proc_uuid" | xargs 2>/dev/null)

# If the process's GPU UUID matches the current GPU's UUID

if [ "$proc_uuid_clean" = "$UUID" ]; then

PROCESS_PID=$(echo "$pid" | xargs 2>/dev/null)

PROCESS_NAME=$(echo "$p_name" | xargs 2>/dev/null)

PROCESS_MEM=$(echo "$p_mem" | sed 's/ MiB//' | xargs 2>/dev/null)

# Try to get the process type from nvidia-smi pmon

if [ -n "$gpu_process_type_raw" ] && [ -n "$PROCESS_PID" ]; then

# Look up the process type for this PID (pmon columns: gpu index, pid, type, ...)

process_type_line=$(echo "$gpu_process_type_raw" | awk -v pid="$PROCESS_PID" '$2 == pid' 2>/dev/null | head -n1)

if [ -n "$process_type_line" ]; then

ptype=$(echo "$process_type_line" | awk '{print $3}' 2>/dev/null)

case "$ptype" in

C) PROCESS_TYPE="C" ;;

G) PROCESS_TYPE="G" ;;

X) PROCESS_TYPE="X" ;;

*) PROCESS_TYPE=$(get_process_type "$PROCESS_PID" "$PROCESS_NAME") ;;

esac

else

# Not found in the pmon output; determine the type by other means

PROCESS_TYPE=$(get_process_type "$PROCESS_PID" "$PROCESS_NAME")

fi

else

# No pmon information available; determine the type by other means

PROCESS_TYPE=$(get_process_type "$PROCESS_PID" "$PROCESS_NAME")

fi

# Only take the first matching process

break

fi

done <<< "$process_info_raw"

fi

# Output GPU process metrics

# GPU process ID

echo "gpuProcessPid[$ATTR,$GPUINDEX]|+|$PROCESS_PID"

# GPU process type (C=compute, G=graphics, X=mixed, U=unknown)

echo "gpuProcessType[$ATTR,$GPUINDEX]|+|$PROCESS_TYPE"

# GPU process name

echo "gpuProcessName[$ATTR,$GPUINDEX]|+|$PROCESS_NAME"

# Memory used by the process, in MiB

echo "gpuProcessMemoryUsage[$ATTR,$GPUINDEX]|+|$PROCESS_MEM"

done <<< "$gpu_info_raw"

# 多指标输出结束

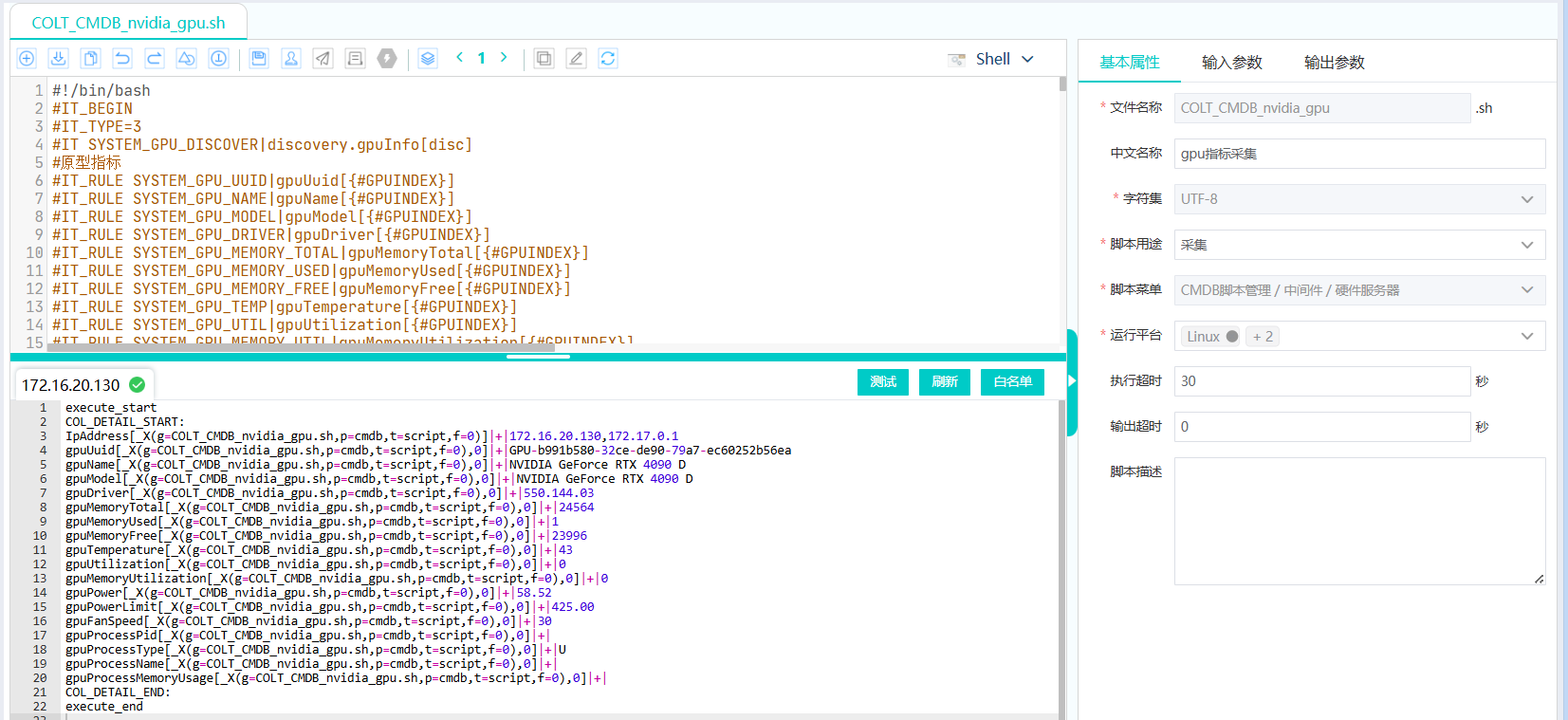

echo "COL_DETAIL_END:"自测在130服务器执行结果如下:

bash

[root@localhost ~]# sh COLT_CMDB_nvidia_gpu_20260508.sh

COL_DETAIL_START:

IpAddress[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0)]|+|172.16.20.130,172.17.0.1

gpuUuid[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|GPU-b991b580-32ce-de90-79a7-ec60252b56ea

gpuName[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|NVIDIA GeForce RTX 4090 D

gpuModel[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|NVIDIA GeForce RTX 4090 D

gpuDriver[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|550.144.03

gpuMemoryTotal[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|24564

gpuMemoryUsed[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|12653

gpuMemoryFree[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|11343

gpuTemperature[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|33

gpuUtilization[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|0

gpuMemoryUtilization[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|0

gpuPower[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|13.42

gpuPowerLimit[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|425.00

gpuFanSpeed[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|32

gpuProcessPid[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|49063

gpuProcessType[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|C

gpuProcessName[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|/usr/local/bin/ollama

gpuProcessMemoryUsage[_X(g=COLT_CMDB_nvidia_gpu_20260508.sh,p=cmdb,t=script,f=0),0]|+|0

COL_DETAIL_END:
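
Each metric line in the output above has the shape `name[ATTR,index]|+|value`, and the `IpAddress` line has no index. A minimal sketch of how a consumer could split such a line is given below. The helper name `parse_metric_line` and the tab-separated output are assumptions for illustration only; they are not part of the script or of the collection platform.

```shell
#!/bin/bash
# Hypothetical helper (not part of the original script): split one metric
# line of the form  name[ATTR,index]|+|value  into three tab-separated
# fields: name, index, value. The index field is empty when the line has
# no numeric index (as with IpAddress).
parse_metric_line() {
    local line=$1
    local key=${line%%'|+|'*}     # text before the first "|+|"
    local value=${line#*'|+|'}    # text after the first "|+|"
    local name=${key%%\[*}        # metric name before the first "["
    local index=${key##*,}        # text after the last comma, e.g. "0]"
    index=${index%\]}             # drop the closing bracket
    # Keep the index only if it consists solely of digits.
    case $index in
        ''|*[!0-9]*) index="" ;;
    esac
    printf '%s\t%s\t%s\n' "$name" "$index" "$value"
}
```

For example, piping the script output through `grep -F '|+|'` and calling `parse_metric_line` on each line would yield one `name<TAB>index<TAB>value` row per metric, which is easier to load into a spreadsheet or compare between runs.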