1. Install NFS on every node

yum install -y nfs-utils

2. Create the shared directory (on the master node)

mkdir -p /opt/nfs/k8s

3. Write the NFS export configuration (in /etc/exports on the master node)

/opt/nfs/k8s *(rw,no_root_squash)

# * opens this directory to all IPs; rw grants read-write access
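Re-running the setup should not duplicate the export entry. The following is a minimal sketch of an idempotent append; `add_export` is a hypothetical helper name, not part of nfs-utils, and it assumes the exports file is the standard /etc/exports.

```shell
#!/bin/sh
# add_export (hypothetical helper): append an entry to an exports file
# only if that exact line is not already present.
add_export() {
    exports_file=$1   # normally /etc/exports
    entry=$2          # e.g. '/opt/nfs/k8s *(rw,no_root_squash)'
    touch "$exports_file"
    # -x matches the whole line, -F treats the entry as a fixed string
    # (it contains '*', which would otherwise be a regex metacharacter).
    if ! grep -qxF -- "$entry" "$exports_file"; then
        printf '%s\n' "$entry" >> "$exports_file"
    fi
}

# On the master node, as root, the call would look like:
#   add_export /etc/exports '/opt/nfs/k8s *(rw,no_root_squash)'
#   exportfs -ra    # re-read /etc/exports without restarting the service
```

Calling the helper twice with the same entry leaves a single line in the file.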

4. Start NFS

systemctl enable nfs-server # start at boot

systemctl restart nfs-server # start (or restart) the service

5. Test NFS

showmount -e <master-node-ip>

This lists the NFS exports. Use the machine's internal IP.
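The check above can also be scripted. This is a rough sketch that reads `showmount -e` output on stdin and reports whether a given path is exported; `has_export` is a hypothetical helper, and it assumes the usual output layout of one header line followed by one `<path> <clients>` line per export.

```shell
#!/bin/sh
# has_export (hypothetical helper): succeed if the path given as $1
# appears as an exported directory in `showmount -e` output on stdin.
has_export() {
    want=$1
    # Skip the "Export list for ..." header (line 1), then compare the
    # first field of each remaining line with the wanted path.
    awk -v want="$want" '
        NR > 1 && $1 == want { found = 1 }
        END { exit found ? 0 : 1 }
    '
}

# Usage, with the master node's internal IP in place of the placeholder:
#   showmount -e <master-node-ip> | has_export /opt/nfs/k8s && echo "export visible"
```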

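6. Create the related YAML files

Note: starting with k8s 1.20, SelfLink is no longer supported by default, a change made for performance and to unify how the apiserver is called. Older provisioner images depend on SelfLink, so with them PVCs cannot be provisioned automatically. Use an image that does not require SelfLink, such as the nfs-subdir-external-provisioner image in deploy.yaml.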
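To decide whether this note applies to a cluster, the version string can be compared against 1.20. The sketch below is only an illustration: `is_k8s_1_20_or_later` is a hypothetical helper, and it assumes a version string of the form `v<major>.<minor>.<patch>`.

```shell
#!/bin/sh
# is_k8s_1_20_or_later (hypothetical helper): succeed if the version
# string in $1 (e.g. "v1.22.3") is 1.20 or newer.
is_k8s_1_20_or_later() {
    version=${1#v}            # strip the leading "v"
    major=${version%%.*}      # text before the first dot
    rest=${version#*.}        # text after the first dot
    minor=${rest%%.*}         # text before the next dot
    minor=${minor%%[!0-9]*}   # drop suffixes such as "+" in "20+"
    [ "$major" -gt 1 ] || { [ "$major" -eq 1 ] && [ "$minor" -ge 20 ]; }
}

# Usage (reads the kubelet version of the first node, which normally
# tracks the cluster version but is not the apiserver version itself):
#   v=$(kubectl get nodes -o jsonpath='{.items[0].status.nodeInfo.kubeletVersion}')
#   is_k8s_1_20_or_later "$v" && echo "use a provisioner image that does not need SelfLink"
```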

class.yaml

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: managed-nfs-storage
    provisioner: fuseim.pri/ifs # or choose another name; must match the deployment's env PROVISIONER_NAME
    parameters:
      archiveOnDelete: "true"

deploy.yaml

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nfs-client-provisioner
    ---
    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: nfs-client-provisioner
    spec:
      replicas: 1
      strategy:
        type: Recreate
      selector:
        matchLabels:
          app: nfs-client-provisioner
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: registry.cn-beijing.aliyuncs.com/pylixm/nfs-subdir-external-provisioner:v4.0.0
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: fuseim.pri/ifs
                - name: NFS_SERVER
                  value: 192.168.2.74 # master node IP
                - name: NFS_PATH
                  value: /opt/nfs/k8s # must match the directory exported in step 3
          volumes:
            - name: nfs-client-root
              nfs:
                server: 192.168.2.74 # master node IP
                path: /opt/nfs/k8s # must match the directory exported in step 3

rbac.yaml

bash

kind: ServiceAccount

apiVersion: v1

metadata:

name: nfs-client-provisioner

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: nfs-client-provisioner-runner

rules:

- apiGroups: [""]

resources: ["persistentvolumes"]

verbs: ["get", "list", "watch", "create", "delete"]

- apiGroups: [""]

resources: ["persistentvolumeclaims"]

verbs: ["get", "list", "watch", "update"]

- apiGroups: ["storage.k8s.io"]

resources: ["storageclasses"]

verbs: ["get", "list", "watch"]

- apiGroups: [""]

resources: ["events"]

verbs: ["create", "update", "patch"]

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: run-nfs-client-provisioner

subjects:

- kind: ServiceAccount

name: nfs-client-provisioner

namespace: default

roleRef:

kind: ClusterRole

name: nfs-client-provisioner-runner

apiGroup: rbac.authorization.k8s.io

---

kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: leader-locking-nfs-client-provisioner

rules:

- apiGroups: [""]

resources: ["endpoints"]

verbs: ["get", "list", "watch", "create", "update", "patch"]

---

kind: RoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: leader-locking-nfs-client-provisioner

subjects:

- kind: ServiceAccount

name: nfs-client-provisioner

# replace with namespace where provisioner is deployed

namespace: default

roleRef:

kind: Role

name: leader-locking-nfs-client-provisioner

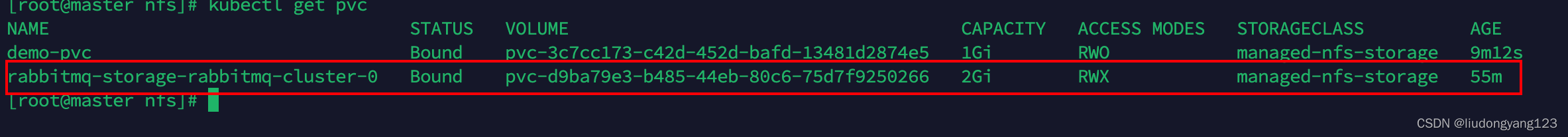

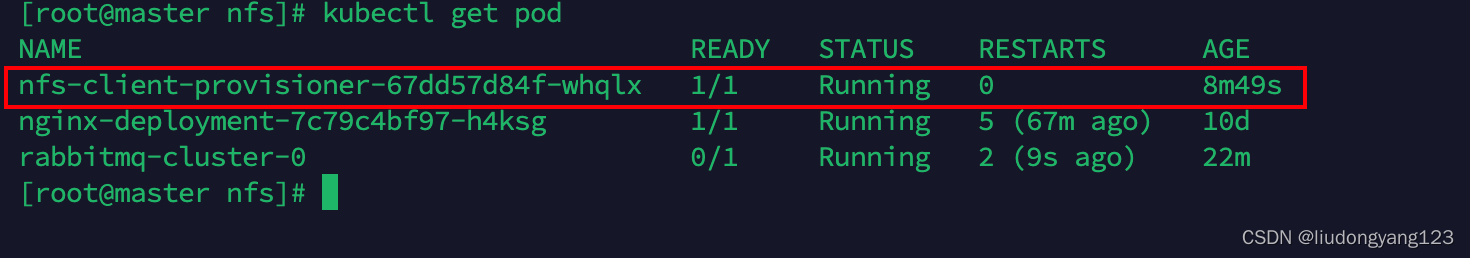

apiGroup: rbac.authorization.k8s.io7.查看对应的pod

kubectl get pod
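The provisioner pod only shows up once the three manifests from step 6 have been applied. The following is a minimal sketch of that step, assuming the files are named rbac.yaml, class.yaml and deploy.yaml and sit in the current directory; `deploy_provisioner` and the `KUBECTL` override are illustrative names, and the 120s timeout is an arbitrary choice.

```shell
#!/bin/sh
# Allow the kubectl binary to be overridden (for example in tests).
KUBECTL=${KUBECTL:-kubectl}

# deploy_provisioner (hypothetical helper): apply the manifests from
# step 6 in order, then wait for the Deployment to finish rolling out.
deploy_provisioner() {
    for f in rbac.yaml class.yaml deploy.yaml; do
        "$KUBECTL" apply -f "$f" || return 1
    done
    "$KUBECTL" rollout status deployment/nfs-client-provisioner --timeout=120s
}

# Usage:
#   deploy_provisioner && kubectl get pod
```

Applying rbac.yaml first means the ServiceAccount and its permissions exist before the Deployment starts.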

8. Create pvc.yaml to test

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: demo-pvc
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi
      storageClassName: managed-nfs-storage

9. Check the PVC

kubectl get pvc
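If provisioning works, the PVC should reach the Bound phase. A small sketch of that check is shown below; `pvc_is_bound` and the `KUBECTL` override are hypothetical names, and the claim name demo-pvc comes from pvc.yaml above.

```shell
#!/bin/sh
# Allow the kubectl binary to be overridden (for example in tests).
KUBECTL=${KUBECTL:-kubectl}

# pvc_is_bound (hypothetical helper): succeed only if the named PVC
# reports phase "Bound".
pvc_is_bound() {
    name=$1
    phase=$("$KUBECTL" get pvc "$name" -o jsonpath='{.status.phase}') || return 1
    [ "$phase" = "Bound" ]
}

# Usage:
#   kubectl apply -f pvc.yaml
#   pvc_is_bound demo-pvc && echo "demo-pvc is bound"
```

A claim that stays in Pending usually points at the provisioner pod, so its logs are the first place to look.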