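- 🍨 This post is a study log for the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

Reference:

LANGUAGE MODELING WITH NN.TRANSFORMER AND TORCHTEXT

Task: feed the model a custom passage of English text and have it make predictions.

1. Define the model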

python

from tempfile import TemporaryDirectory
from typing import Optional, Tuple
import math
import os

import torch
from torch import nn, Tensor
from torch.nn import TransformerEncoder, TransformerEncoderLayer


class TransformerModel(nn.Module):

    def __init__(self, ntoken: int, d_model: int, nhead: int, d_hid: int,
                 nlayers: int, dropout: float = 0.5):
        super().__init__()
        self.pos_encoder = PositionalEncoding(d_model, dropout)
        # Define a single encoder layer
        encoder_layers = TransformerEncoderLayer(d_model, nhead, d_hid, dropout)
        # Stack the layers; PyTorch packages the Transformer encoder, so it can be used directly
        self.transformer_encoder = TransformerEncoder(encoder_layers, nlayers)
        self.embedding = nn.Embedding(ntoken, d_model)
        self.d_model = d_model
        self.linear = nn.Linear(d_model, ntoken)
        self.init_weights()

    # Initialize the weights
    def init_weights(self) -> None:
        initrange = 0.1
        self.embedding.weight.data.uniform_(-initrange, initrange)
        self.linear.bias.data.zero_()
        self.linear.weight.data.uniform_(-initrange, initrange)

    def forward(self, src: Tensor, src_mask: Optional[Tensor] = None) -> Tensor:
        """
        Arguments:
            src: Tensor of shape [seq_len, batch_size]
            src_mask: Tensor of shape [seq_len, seq_len]

        Returns:
            Output Tensor of shape [seq_len, batch_size, ntoken]
        """
        # Scale the embeddings by sqrt(d_model) before adding positional information
        src = self.embedding(src) * math.sqrt(self.d_model)
        src = self.pos_encoder(src)
        output = self.transformer_encoder(src, src_mask)
        output = self.linear(output)
        return output
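The `forward` method accepts an optional `src_mask` of shape `[seq_len, seq_len]`. For language modeling this is normally a causal mask, which stops position i from attending to later positions; the reference tutorial uses one. The sketch below is a plain-Python illustration of what such a mask contains. It is not part of the original post, and `causal_mask` is a hypothetical helper name. In PyTorch the same pattern can be built with `torch.triu(torch.full((sz, sz), float('-inf')), diagonal=1)`.

```python
NEG_INF = float("-inf")


def causal_mask(sz):
    """Hypothetical helper: sz x sz additive attention mask as nested lists.

    Entry [i][j] is 0.0 when position i may attend to position j (j <= i)
    and -inf when j lies in the future (j > i).
    """
    return [[0.0 if j <= i else NEG_INF for j in range(sz)] for i in range(sz)]


mask = causal_mask(4)
for row in mask:
    print(row)
```

Passing a tensor with this pattern as `src_mask` keeps each position from seeing the token it is asked to predict. Calling `model(data)` with no mask lets every position attend to the whole sequence.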

python

class PositionalEncoding(nn.Module):

    def __init__(self, d_model: int, dropout: float = 0.1, max_len: int = 5000):
        super().__init__()
        self.dropout = nn.Dropout(p=dropout)
        # Position indices, shape [max_len, 1]
        position = torch.arange(max_len).unsqueeze(1)
        # Divisor term of the positional encoding
        div_term = torch.exp(torch.arange(0, d_model, 2) * (-math.log(10000.0) / d_model))
        # Allocate the positional-encoding tensor
        pe = torch.zeros(max_len, 1, d_model)
        # Sine fills the even-indexed dimensions
        pe[:, 0, 0::2] = torch.sin(position * div_term)
        # Cosine fills the odd-indexed dimensions
        pe[:, 0, 1::2] = torch.cos(position * div_term)
        self.register_buffer('pe', pe)

    def forward(self, x: Tensor) -> Tensor:
        """
        Arguments:
            x: Tensor of shape [seq_len, batch_size, embedding_dim]
        """
        # Add the positional encoding to the input tensor
        x = x + self.pe[:x.size(0)]
        # Apply dropout
        return self.dropout(x)

2. Load the dataset

This experiment uses torchtext to load the WikiText-2 dataset. Before running it, install the following packages:

- pip install portalocker

- pip install torchdata
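Because torchtext and torchdata releases are generally paired with specific torch releases, it can help to record what is installed before loading the dataset. The snippet below is a minimal sketch using only the standard library. `installed_versions` is a hypothetical helper name, and the package list is an assumption based on the packages this post mentions.

```python
from importlib import metadata


def installed_versions(packages):
    """Map each package name to its installed version string, or None if absent."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


report = installed_versions(["torch", "torchtext", "torchdata", "portalocker"])
for name, version in report.items():
    print(f"{name:12s} {version or 'not installed'}")
```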

python

import torchtext
from torch.utils.data import dataset
from torchtext.datasets.wikitext2 import WikiText2
from torchtext.data.utils import get_tokenizer
from torchtext.vocab import build_vocab_from_iterator

# Load the WikiText2 training split from torchtext
train_iter = WikiText2(split='train')
# Get the basic English tokenizer
tokenizer = get_tokenizer('basic_english')
# Build the vocabulary from the iterator
vocab = build_vocab_from_iterator(map(tokenizer, train_iter), specials=['<unk>'])
# Map out-of-vocabulary tokens to '<unk>'
vocab.set_default_index(vocab['<unk>'])


def data_process(raw_text_iter: dataset.IterableDataset) -> Tensor:
    """Convert raw text into a flat tensor of token indices."""
    data = [torch.tensor(vocab(tokenizer(item)),
                         dtype=torch.long) for item in raw_text_iter]
    # Drop empty lines before concatenating
    return torch.cat(tuple(filter(lambda t: t.numel() > 0, data)))


# train_iter was consumed while building the vocabulary, so create the iterators again
train_iter, val_iter, test_iter = WikiText2()
# Process the training, validation, and test data
train_data = data_process(train_iter)
val_data = data_process(val_iter)
test_data = data_process(test_iter)

# Use the GPU if a CUDA device is available, otherwise the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')


def batchify(data: Tensor, bsz: int) -> Tensor:
    """Split the data into bsz separate sequences, dropping any extra
    elements that do not fit evenly.

    Arguments:
        data: Tensor of shape ``[N]``
        bsz: int, batch size

    Returns:
        Tensor of shape [N // bsz, bsz]
    """
    seq_len = data.size(0) // bsz
    data = data[:seq_len * bsz]
    data = data.view(bsz, seq_len).t().contiguous()
    return data.to(device)


# Set the training and evaluation batch sizes
batch_size = 20
eval_batch_size = 10

# Batchify the training, validation, and test data
train_data = batchify(train_data, batch_size)  # shape [seq_len, batch_size]
val_data = batchify(val_data, eval_batch_size)
test_data = batchify(test_data, eval_batch_size)
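To make the layout produced by `batchify` concrete, here is a small list-based sketch of the same trim, reshape, and transpose steps. It uses the 26 letters of the alphabet as toy tokens. `batchify_list` is a hypothetical stand-in for illustration and does not touch tensors or devices.

```python
import string


def batchify_list(data, bsz):
    """List-based analogue of batchify: returns seq_len rows of bsz items each."""
    seq_len = len(data) // bsz
    data = data[:seq_len * bsz]  # drop the leftover tail that does not fit
    # Cut the flat sequence into bsz contiguous columns of length seq_len
    columns = [data[k * seq_len:(k + 1) * seq_len] for k in range(bsz)]
    # Transpose so that each row is one time step across all columns
    return [list(row) for row in zip(*columns)]


letters = list(string.ascii_uppercase)  # 26 toy "tokens"
batches = batchify_list(letters, 4)
for row in batches:
    print(" ".join(row))
```

With 26 tokens and `bsz = 4`, each column holds 6 consecutive letters and the last two letters are dropped. Each column is then treated as an independent sequence by the model.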

python

bptt = 35


# Fetch one batch of data
def get_batch(source: Tensor, i: int) -> Tuple[Tensor, Tensor]:
    """
    Arguments:
        source: Tensor of shape ``[full_seq_len, batch_size]``
        i: int, starting row of the current batch

    Returns:
        tuple (data, target), where
        - data has shape [seq_len, batch_size]
        - target has shape [seq_len * batch_size]
    """
    # Sequence length of this batch: at most bptt, and never past the end of source
    seq_len = min(bptt, len(source) - 1 - i)
    # data starts at row i and spans seq_len rows
    data = source[i:i + seq_len]
    # target starts at row i + 1, spans seq_len rows, and is flattened to 1-D
    target = source[i + 1:i + 1 + seq_len].reshape(-1)
    return data, target

3. Initialize the model instance

python

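ntokens = len(vocab)  # vocabulary size
emsize = 200          # embedding dimension
d_hid = 200           # dimension of the feed-forward network in each encoder layer
nlayers = 2           # number of encoder layers
nhead = 2             # number of attention heads
dropout = 0.2         # dropout probability

# Create the Transformer model
model = TransformerModel(ntokens,
                         emsize,
                         nhead,
                         d_hid,
                         nlayers,
                         dropout).to(device)

4. Train the model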

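Training combines CrossEntropyLoss with the SGD (stochastic gradient descent) optimizer. During training, torch.nn.utils.clip_grad_norm_ is used to guard against exploding gradients.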
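The idea behind clipping gradients by norm can be shown with plain numbers: compute the overall L2 norm of the gradients and, if it exceeds the limit, scale every gradient by the same factor. The sketch below illustrates that idea and is not PyTorch's implementation. `clip_by_global_norm` is a hypothetical name, and the small `eps` term is an assumed safeguard against division by zero.

```python
import math


def clip_by_global_norm(grads, max_norm, eps=1e-6):
    """Scale a flat list of gradient values so their L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    total_norm = math.sqrt(sum(g * g for g in grads))
    # Never scale up: the factor is capped at 1.0
    scale = min(1.0, max_norm / (total_norm + eps))
    return [g * scale for g in grads], total_norm


grads = [3.0, 4.0]  # toy gradient with L2 norm 5.0
clipped, norm_before = clip_by_global_norm(grads, max_norm=0.5)
norm_after = math.sqrt(sum(g * g for g in clipped))
print(norm_before, norm_after)
```

The direction of the gradient is preserved and only its length shrinks. Gradients whose norm is already below the limit pass through unchanged.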

python

import time

criterion = nn.CrossEntropyLoss()  # cross-entropy loss
lr = 5.0                           # initial learning rate
optimizer = torch.optim.SGD(model.parameters(), lr=lr)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, 1.0, gamma=0.95)


def train(model: nn.Module) -> None:
    model.train()  # switch to training mode
    total_loss = 0.
    log_interval = 200  # log every 200 batches
    start_time = time.time()

    num_batches = len(train_data) // bptt
    for batch, i in enumerate(range(0, train_data.size(0) - 1, bptt)):
        data, targets = get_batch(train_data, i)
        output = model(data)
        output_flat = output.view(-1, ntokens)
        loss = criterion(output_flat, targets)  # compute the loss

        optimizer.zero_grad()
        loss.backward()
        # Clip the gradient norm to 0.5 to guard against exploding gradients
        torch.nn.utils.clip_grad_norm_(model.parameters(), 0.5)
        optimizer.step()

        total_loss += loss.item()
        if batch % log_interval == 0 and batch > 0:
            lr = scheduler.get_last_lr()[0]
            ms_per_batch = (time.time() - start_time) * 1000 / log_interval
            cur_loss = total_loss / log_interval
            ppl = math.exp(cur_loss)
            # `epoch` is the global loop variable set by the training loop
            print(f'| epoch{epoch:3d} | {batch:5d} / {num_batches:5d} batches |'
                  f'lr{lr:02.2f} | ms/batch {ms_per_batch:5.2f} |'
                  f'loss {cur_loss:5.2f}|ppl{ppl:8.2f}')
            total_loss = 0
            start_time = time.time()


def evaluate(model: nn.Module, eval_data: Tensor) -> float:
    model.eval()  # switch to evaluation mode
    total_loss = 0.
    with torch.no_grad():
        for i in range(0, eval_data.size(0) - 1, bptt):
            data, targets = get_batch(eval_data, i)
            seq_len = data.size(0)
            output = model(data)
            output_flat = output.view(-1, ntokens)
            # Weight each chunk's mean loss by its sequence length
            total_loss += seq_len * criterion(output_flat, targets).item()
    return total_loss / (len(eval_data) - 1)
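`evaluate` weights each chunk's mean loss by its sequence length, because the final chunk is usually shorter than `bptt`. Perplexity is then the exponential of that averaged loss. The sketch below works through the arithmetic with made-up numbers that are not results from this experiment. `average_loss` is a hypothetical helper name.

```python
import math


def average_loss(chunk_losses, chunk_lengths):
    """Length-weighted mean of per-chunk mean losses."""
    total = sum(loss * n for loss, n in zip(chunk_losses, chunk_lengths))
    return total / sum(chunk_lengths)


# Toy numbers: two full chunks of 35 steps and a short tail of 10 steps
losses = [6.0, 5.5, 5.0]
lengths = [35, 35, 10]

mean_loss = average_loss(losses, lengths)
perplexity = math.exp(mean_loss)
print(f"loss {mean_loss:.4f} | ppl {perplexity:.2f}")
```

In `evaluate` the chunk lengths sum to `len(eval_data) - 1`, which is why that value is the divisor there. An unweighted mean of the three losses would give the short tail as much influence as a full chunk.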

python

best_val_loss = float('inf')

epochs = 1

with TemporaryDirectory() as tempdir:

best_model_params_path = os.path.join(tempdir, "best_model_params.pt")

for epoch in range(1, epochs + 1):

epoch_start_time = time.time()

train(model)

val_loss = evaluate(model, val_data)

val_ppl = math_exp(val_loss)

elapsed = time.time() - epoch_start_time

#打印当前epoch的信息,包括耗时、验证损失和困惑度

print('-' * 89)

print(f'|end of epoch {epoch:3d} | time:{elapsed: 5.2f}s |'

f'valid loss {val_loss:5.2f} | valid ppl {val_ppl: 8.2f}')

print('-' * 89)

if val_loss < best_val_loss:

best_val_loss = val_loss

torch.save(model.state_dict(), best_model_params_path)

scheduler.step() #更新学习率

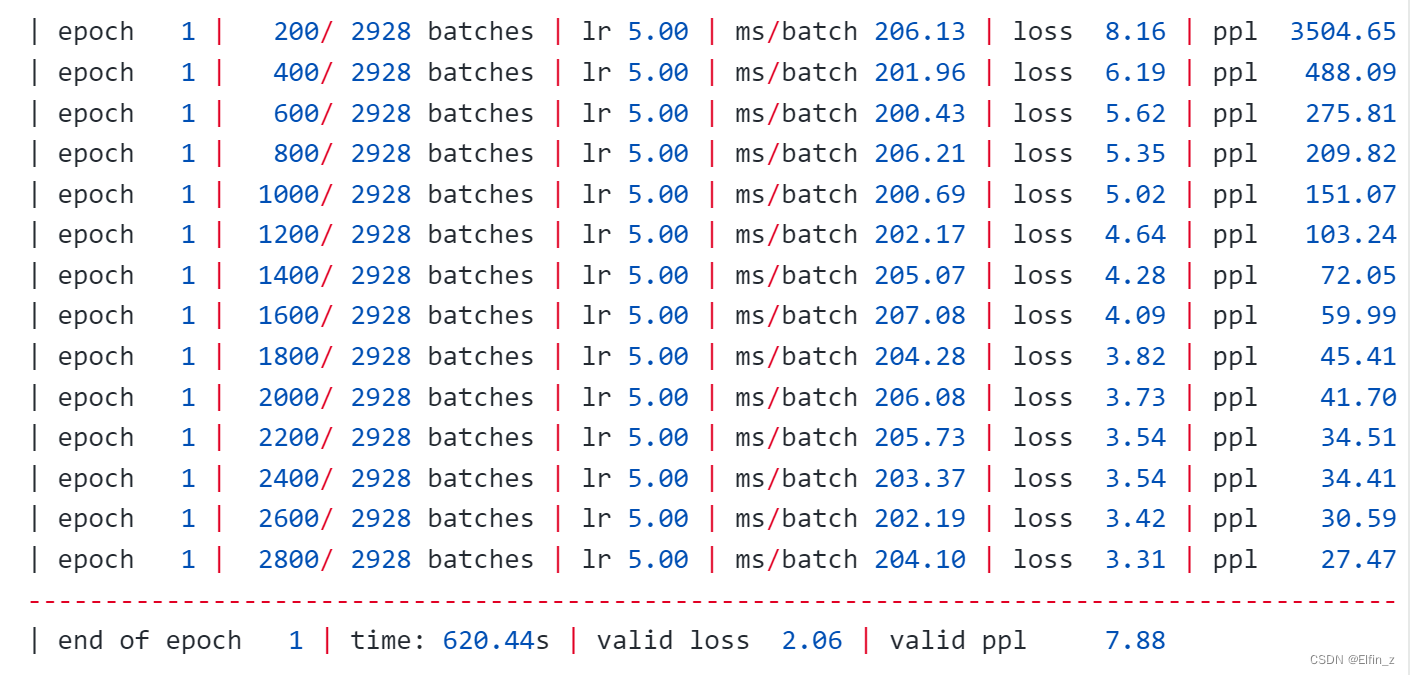

model.load_state_dict(torch.load(best_model_params_path))代码输出:

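5. Summary

When loading the dataset, pay attention to the version dependencies between packages. Also, pair the optimizer with complementary techniques (here, a learning-rate scheduler and gradient clipping) to improve optimization.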
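As a concrete view of the optimizer setup used in this post, the training code pairs SGD (initial learning rate 5.0) with a `StepLR` schedule (step size 1, decay factor 0.95), so the learning rate shrinks by 5% after every epoch. The sketch below reproduces that decay rule in plain Python. `step_lr` is a hypothetical helper for illustration, not the PyTorch scheduler itself.

```python
def step_lr(initial_lr, gamma, step_size, epoch):
    """Learning rate after `epoch` scheduler steps under a StepLR-style decay rule."""
    return initial_lr * gamma ** (epoch // step_size)


# Learning rates for the first four epochs with the settings used in this post
schedule = [step_lr(5.0, 0.95, 1, epoch) for epoch in range(4)]
print([round(lr, 4) for lr in schedule])
```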