Contents

[Deploy the Docker engine](#deploy-the-docker-engine)

[Deploy the etcd cluster](#deploy-the-etcd-cluster)

[Deploy the Master01 components](#deploy-the-master01-components)

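etcd stores all of the Kubernetes cluster's configuration data and state, including resource objects, cluster configuration and metadata, which makes it one of the most important components in k8s. Because it has to store a lot of data, it is usually deployed on dedicated machines. In this lab the hardware is limited, so etcd is installed on the master and node machines instead.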

Environment preparation

IP addresses assigned to each host:

master1 172.16.233.101

node1 172.16.233.103

node2 172.16.233.104

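Initialize the operating system

Disable the firewall:

```bash
systemctl stop firewalld
systemctl disable firewalld
```

Flush the existing iptables rules:

```bash
iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X
```

Disable SELinux for the current boot:

```bash
setenforce 0
```

Disable SELinux permanently (takes effect after a reboot):

```bash
sed -i 's/enforcing/disabled/' /etc/selinux/config
```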
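The sed above replaces the first occurrence of "enforcing" on every line of /etc/selinux/config, so the explanatory comments in that file get rewritten as well. The sketch below is a narrower variant. It assumes the file uses the usual `SELINUX=<mode>` key and rewrites only that key. The function name `disable_selinux_config` is hypothetical. It takes the file path as an argument so you can try it on a copy first.

```bash
#!/bin/bash
# Hypothetical helper: set the SELinux mode key to "disabled" in the given
# config file (default /etc/selinux/config), leaving comments and SELINUXTYPE alone.
disable_selinux_config() {
  local file=${1:-/etc/selinux/config}
  # ^SELINUX= does not match SELINUXTYPE=, because "=" must follow "SELINUX" directly
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}
```

To confirm the result, run `grep '^SELINUX=' /etc/selinux/config` after calling it as root.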

Disable swap now, and permanently by commenting out the swap entry in /etc/fstab:

```bash
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab
```
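The fstab sed above comments out every line that mentions "swap" anywhere. Running it twice therefore adds a second `#`, and it also catches unrelated lines that merely contain the word. The sketch below is a narrower alternative. It touches only uncommented lines that have `swap` as a whitespace-separated field. The helper name is hypothetical, and the sketch assumes GNU sed (`-E`, `-i`).

```bash
#!/bin/bash
# Hypothetical helper: comment out active swap entries in an fstab-format file.
# Only lines that are not already commented and contain "swap" as a
# whitespace-delimited field are changed.
comment_swap_entries() {
  local file=${1:-/etc/fstab}
  sed -E -i '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$file"
}
```

Because already-commented lines are skipped, running it more than once leaves the file unchanged.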

Add the hosts entries on the master:

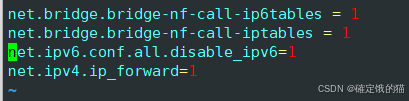

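Adjust the kernel parameters on all three machines by adding the following to a sysctl configuration file (for example /etc/sysctl.d/k8s.conf):

```
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv6.conf.all.disable_ipv6=1
net.ipv4.ip_forward=1
```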

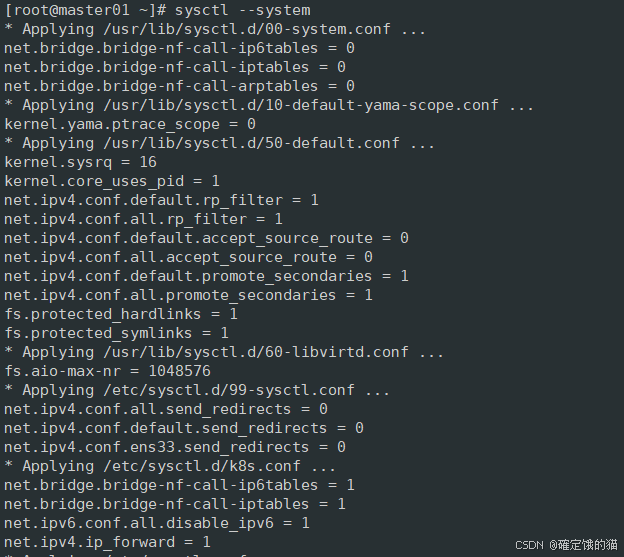

Reload all kernel parameter configuration files on the system and apply them:

```bash
sysctl --system
```

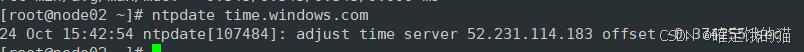
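The four parameters above are normally kept in a drop-in file under /etc/sysctl.d/, which `sysctl --system` reads. The sketch below writes them to a path you pass in. The helper name and the default path /etc/sysctl.d/k8s.conf are assumptions, not taken from the screenshots.

```bash
#!/bin/bash
# Hypothetical helper: write the kernel parameters used in this guide to a
# sysctl drop-in file. The default path is an assumption; any *.conf file
# under /etc/sysctl.d/ is read by "sysctl --system".
write_k8s_sysctl() {
  local file=${1:-/etc/sysctl.d/k8s.conf}
  cat > "$file" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv6.conf.all.disable_ipv6=1
net.ipv4.ip_forward=1
EOF
}
```

The `net.bridge.*` keys exist only while the `br_netfilter` kernel module is loaded. On a real host, run `modprobe br_netfilter` as root before `write_k8s_sysctl && sysctl --system`, otherwise sysctl reports those two keys as missing.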

Time synchronization

```bash
yum install -y ntpdate
ntpdate time.windows.com
```

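Deploy the Docker engine

Install the Docker engine on the node machines:

```bash
yum install -y yum-utils device-mapper-persistent-data lvm2 epel-release
```

If epel-release fails to install, you can add an epel.repo file manually. If you don't have one, feel free to message me.

Next, add the Docker CE yum repository configuration to the system:

```bash
yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
```

Then install Docker:

```bash
yum install -y docker-ce docker-ce-cli containerd.io
```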

Configure a registry mirror in /etc/docker/daemon.json. Here we use a Huawei Cloud mirror:

```bash
vim /etc/docker/daemon.json
```

```json
{
  "registry-mirrors": ["https://0a40cefd360026b40f39c00627fa6f20.mirror.swr.myhuaweicloud.com"]
}
```

Save and exit, then reload the systemd configuration and restart Docker:

```bash
systemctl daemon-reload
systemctl restart docker.service
```

Pull an image to check that Docker is working:

```bash
docker pull redis
```
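In daemon.json, `registry-mirrors` must be a JSON array of strings. If the value is a bare string, or the file is not valid JSON, dockerd refuses to start. The sketch below generates the file from a list of mirror URLs so the array syntax cannot be mistyped. The helper `write_daemon_json` is hypothetical, and it assumes plain URLs that need no JSON escaping.

```bash
#!/bin/bash
# Hypothetical helper: write a daemon.json whose "registry-mirrors" value is a
# JSON array built from the remaining arguments. No JSON escaping is done, so
# the URLs must not contain quotes or backslashes.
write_daemon_json() {
  local out=$1; shift
  local items="" mirror
  for mirror in "$@"; do
    # ${items:+, } adds a separator only before the second and later elements
    items+="${items:+, }\"${mirror}\""
  done
  printf '{\n  "registry-mirrors": [%s]\n}\n' "$items" > "$out"
}
```

For example, `write_daemon_json /etc/docker/daemon.json https://0a40cefd360026b40f39c00627fa6f20.mirror.swr.myhuaweicloud.com`, followed by the daemon-reload and restart shown above.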

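Deploy the etcd cluster

In production, etcd is generally deployed as a cluster. etcd elects its leader by majority vote, so a cluster should have an odd number of members, at least 3.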
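The odd-number rule follows from the majority quorum. An n-member cluster needs floor(n/2)+1 members to agree, so it tolerates floor((n-1)/2) failures. The small sketch below just does that arithmetic. The function names are illustrative only.

```bash
#!/bin/bash
# Majority quorum for an n-member etcd (Raft) cluster, and how many members
# can fail while a quorum still remains.
etcd_quorum()          { echo $(( $1 / 2 + 1 )); }
etcd_fault_tolerance() { echo $(( ($1 - 1) / 2 )); }

# Print a small table for cluster sizes 1..6.
for n in 1 2 3 4 5 6; do
  printf '%d members: quorum %d, tolerates %d failure(s)\n' \
    "$n" "$(etcd_quorum "$n")" "$(etcd_fault_tolerance "$n")"
done
```

The table shows that 3 and 4 members both tolerate a single failure, and so do 5 and 6 with two failures. Adding a member to reach an even count raises the quorum size without improving fault tolerance.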

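Prepare the certificate-signing environment

CFSSL is an open-source PKI/TLS toolkit developed by Cloudflare for generating, signing and verifying certificates. It is mainly used to automate certificate management, especially for generating and managing TLS certificates in distributed systems such as Kubernetes.

CFSSL generates certificates from configuration files. Before self-signing, you therefore need to write JSON configuration files in a format it understands. CFSSL also provides command-line helpers for generating these files.

Here CFSSL issues the TLS certificates for etcd. It can sign three types of certificate:

client certificate: presented by a client when it connects to a server, so that the server can verify the client's identity, e.g. kube-apiserver accessing etcd;

server certificate: presented by a server when a client connects to it, so that the client can verify the server's identity, e.g. etcd serving requests;

peer certificate: used when members connect to each other, e.g. authentication and communication between etcd nodes. In this guide all three roles use the same set of certificates.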

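Perform the following on the master01 node:

```bash
wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/local/bin/cfssl
wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/local/bin/cfssljson
wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/local/bin/cfssl-certinfo
```

After the downloads finish, make the files executable:

```bash
chmod +x /usr/local/bin/cfssl*
```

Notes:

cfssl: the command-line tool for issuing certificates

cfssljson: turns the JSON output of cfssl into certificate and key files

cfssl-certinfo: displays certificate information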

Next, generate the etcd certificates.

Create a working directory:

```bash
mkdir /opt/k8s
cd /opt/k8s
```

Create the etcd.sh file:

```bash
vim etcd.sh
```

```bash
#!/bin/bash
# Usage: ./etcd.sh <name> <this-node-ip> <other-members>
# e.g.:  ./etcd.sh etcd01 172.16.233.101 etcd02=https://172.16.233.103:2380,etcd03=https://172.16.233.104:2380

ETCD_NAME=$1
ETCD_IP=$2
ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

# Create the etcd configuration file /opt/etcd/cfg/etcd
cat > $WORK_DIR/cfg/etcd <<EOF
#[Member]
ETCD_NAME="${ETCD_NAME}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_NAME}=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF

# Create the systemd unit. In an unquoted heredoc a trailing "\" joins the
# next line, so ExecStart is written to the unit file as a single line.
cat > /usr/lib/systemd/system/etcd.service <<EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=${WORK_DIR}/cfg/etcd
ExecStart=${WORK_DIR}/bin/etcd \
--cert-file=${WORK_DIR}/ssl/server.pem \
--key-file=${WORK_DIR}/ssl/server-key.pem \
--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
--peer-cert-file=${WORK_DIR}/ssl/server.pem \
--peer-key-file=${WORK_DIR}/ssl/server-key.pem \
--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem \
--logger=zap \
--enable-v2
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable etcd
systemctl restart etcd
```

Save and exit.

Create the etcd-cert.sh file:

```bash
vim etcd-cert.sh
```

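```bash
#!/bin/bash
# CA signing configuration: the "www" profile is valid for 87600h (about 10 years)
cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

# CA certificate signing request
cat > ca-csr.json <<EOF
{
  "CN": "etcd",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF

# Generate the CA certificate and key (ca.pem, ca-key.pem)
cfssl gencert -initca ca-csr.json | cfssljson -bare ca

# etcd server certificate request; "hosts" must list the IPs of all etcd nodes
cat > server-csr.json <<EOF
{
  "CN": "etcd",
  "hosts": [
    "172.16.233.101",
    "172.16.233.103",
    "172.16.233.104"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing"
    }
  ]
}
EOF

# Sign the server certificate with the CA (server.pem, server-key.pem)
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
```

Save and exit.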

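Then make both scripts executable:

```bash
chmod +x etcd-cert.sh etcd.sh
```

Create a directory for generating the CA certificate and the etcd server certificate and private key, and move etcd-cert.sh into it:

```bash
mkdir /opt/k8s/etcd-cert
mv etcd-cert.sh etcd-cert/
cd /opt/k8s/etcd-cert/
```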

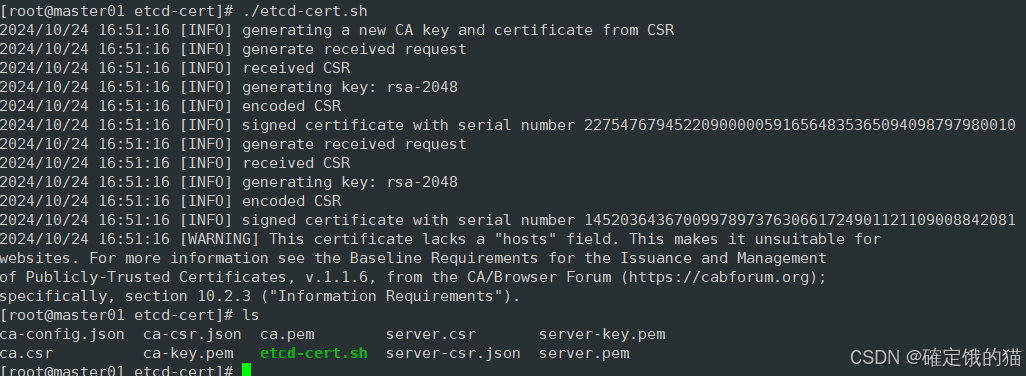

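Run etcd-cert.sh and list the generated files:

```bash
./etcd-cert.sh
ls
```

The certificate files are in place, so we can move on to the next step.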

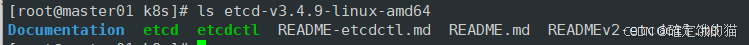

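Upload etcd-v3.4.9-linux-amd64.tar.gz to the /opt/k8s directory. It can be downloaded from:

https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

Extract it and check the contents:

```bash
cd /opt/k8s
tar zxvf etcd-v3.4.9-linux-amd64.tar.gz
ls
```

Create the directories for the etcd configuration file, binaries and certificates, then copy the files into them:

```bash
mkdir -p /opt/etcd/{cfg,bin,ssl}
cd /opt/k8s/etcd-v3.4.9-linux-amd64/
mv etcd etcdctl /opt/etcd/bin/
cp /opt/k8s/etcd-cert/*.pem /opt/etcd/ssl/
```

Go back to /opt/k8s and run etcd.sh:

```bash
cd /opt/k8s
./etcd.sh etcd01 172.16.233.101 etcd02=https://172.16.233.103:2380,etcd03=https://172.16.233.104:2380
```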

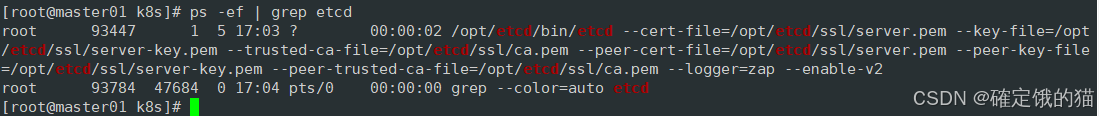

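After you run etcd.sh the terminal appears to hang. etcd.service is `Type=notify`, so `systemctl restart etcd` waits until etcd reports that it is ready. The first member cannot become ready until the other members of the initial cluster start. The command returns once the other nodes start etcd, so ignore it for now and open another terminal to check that the etcd process is running:

```bash
ps -ef | grep etcd
```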

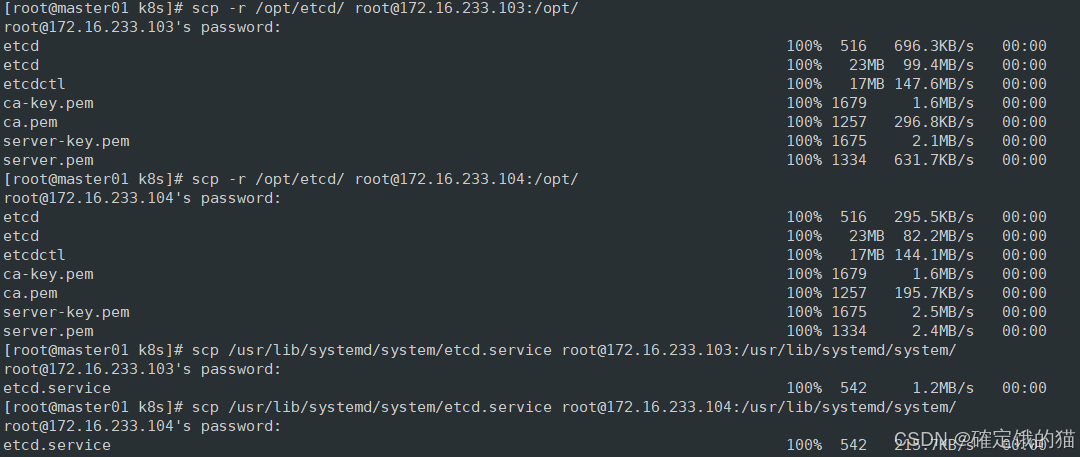
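The third argument passed to etcd.sh above lists the other members as comma-separated `name=https://ip:2380` pairs, and it is easy to mistype. The sketch below builds that string from `name=ip` pairs. The helper name is hypothetical, and port 2380 is the peer port used in etcd.sh.

```bash
#!/bin/bash
# Hypothetical helper: turn name=ip pairs into the comma-separated
# name=https://ip:2380 list that etcd.sh expects as its third argument.
build_initial_cluster() {
  local out="" pair name ip
  for pair in "$@"; do
    name=${pair%%=*}   # text before the first "="
    ip=${pair#*=}      # text after the first "="
    out+="${out:+,}${name}=https://${ip}:2380"
  done
  echo "$out"
}
```

Usage, equivalent to the command above: `./etcd.sh etcd01 172.16.233.101 "$(build_initial_cluster etcd02=172.16.233.103 etcd03=172.16.233.104)"`.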

Copy the etcd certificates, binaries and systemd unit file to the other two etcd nodes:

```bash
scp -r /opt/etcd/ root@172.16.233.103:/opt/
scp -r /opt/etcd/ root@172.16.233.104:/opt/
scp /usr/lib/systemd/system/etcd.service root@172.16.233.103:/usr/lib/systemd/system/
scp /usr/lib/systemd/system/etcd.service root@172.16.233.104:/usr/lib/systemd/system/
```

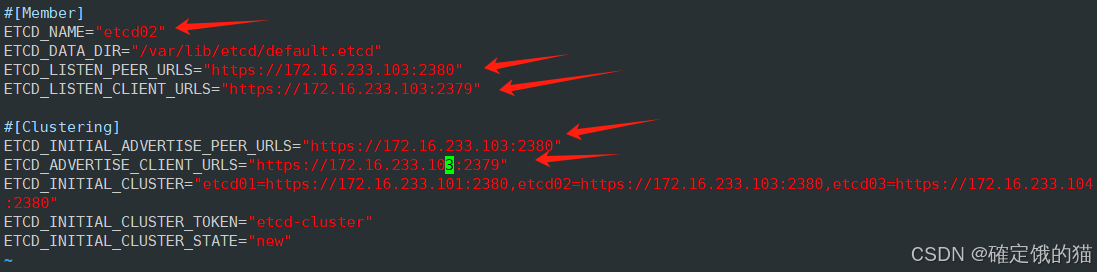

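When that is done, switch to the node01 node and edit the copied configuration:

```bash
vim /opt/etcd/cfg/etcd
```

Edit five lines. Set ETCD_NAME to "etcd02", and set the IP address in the four listen/advertise URL lines to node01's IP (172.16.233.103). Leave ETCD_INITIAL_CLUSTER unchanged, because it already lists all three members.

Start the etcd service:

```bash
systemctl start etcd
systemctl enable etcd
```

Then do the same on the node02 node:

```bash
vim /opt/etcd/cfg/etcd
```

Set ETCD_NAME to "etcd03" and change the IP address on the same four URL lines to node02's IP (172.16.233.104). Then start etcd:

```bash
systemctl start etcd
systemctl enable etcd
```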

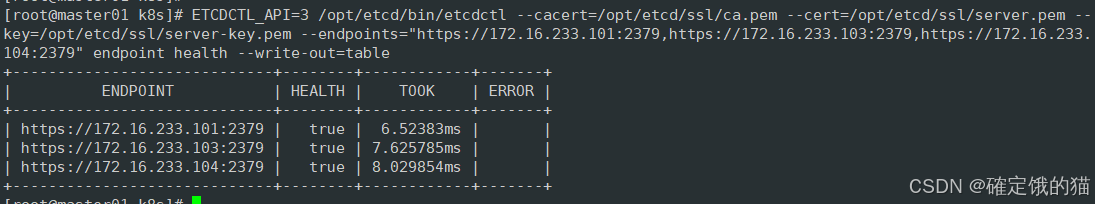
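The manual edits on node01 and node02 above can also be scripted. The sketch below rewrites ETCD_NAME and the IP in the ETCD_LISTEN_*, ETCD_INITIAL_ADVERTISE_* and ETCD_ADVERTISE_* lines. It deliberately leaves ETCD_INITIAL_CLUSTER alone. The function name `localize_etcd_cfg` is hypothetical, and the sketch assumes GNU sed and the file layout produced by etcd.sh.

```bash
#!/bin/bash
# Hypothetical helper: adapt the etcd config copied from master01 to this node.
# Usage: localize_etcd_cfg <file> <member-name> <node-ip>
# Only ETCD_NAME and the four listen/advertise URL lines are changed;
# ETCD_INITIAL_CLUSTER still lists every member. Requires GNU sed (-E, -i).
localize_etcd_cfg() {
  local file=$1 name=$2 ip=$3
  sed -E -i \
    -e "s/^ETCD_NAME=.*/ETCD_NAME=\"${name}\"/" \
    -e "/^ETCD_(LISTEN|INITIAL_ADVERTISE|ADVERTISE)_/ s#https://[0-9.]+:#https://${ip}:#" \
    "$file"
}
```

For example, on node01 you would run `localize_etcd_cfg /opt/etcd/cfg/etcd etcd02 172.16.233.103` before `systemctl start etcd`.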

On the master node, check the health of the cluster:

```bash
ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.233.101:2379,https://172.16.233.103:2379,https://172.16.233.104:2379" endpoint health --write-out=table
```

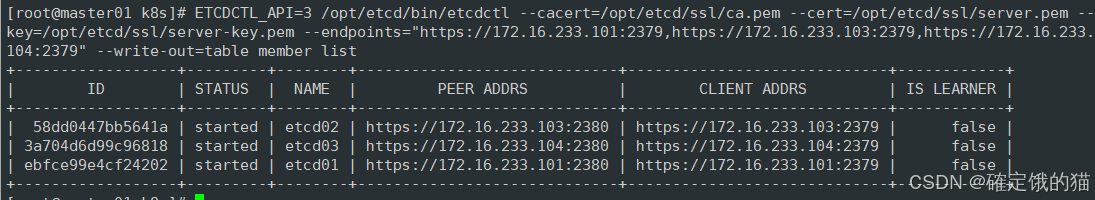

List the cluster members:

```bash
ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.233.101:2379,https://172.16.233.103:2379,https://172.16.233.104:2379" --write-out=table member list
```

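Deploy the Master01 components

Continue on the master node.

CA certificate and private keys

Upload master.zip (containing admin.sh, apiserver.sh, controller-manager.sh and scheduler.sh) to the /opt/k8s directory, then create k8s-cert.sh:

```bash
cd /opt/k8s
vim k8s-cert.sh
```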

```bash
#!/bin/bash
# CA signing configuration with a "kubernetes" profile valid for 87600h (about 10 years)
cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "kubernetes": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json <<EOF
{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

# Generate the Kubernetes CA (ca.pem, ca-key.pem)
cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

# Add every IP that may act as an apiserver to "hosts", including the VIP that keepalived will use later
cat > apiserver-csr.json <<EOF
{
  "CN": "kubernetes",
  "hosts": [
    "10.0.0.1",
    "127.0.0.1",
    "172.16.233.101",
    "172.16.233.102",
    "172.16.233.99",
    "172.16.233.105",
    "172.16.233.106",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

# Sign the apiserver certificate (apiserver.pem, apiserver-key.pem)
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes apiserver-csr.json | cfssljson -bare apiserver

#-----------------------
# Certificate and key that kubectl uses to connect to the cluster, with admin privileges
cat > admin-csr.json <<EOF
{
  "CN": "admin",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

cat > kube-proxy-csr.json <<EOF
{
  "CN": "system:kube-proxy",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy
```

Save and exit.
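The apiserver certificate's hosts list includes 10.0.0.1 because the built-in `kubernetes` Service in the `default` namespace is assigned the first address of the service CIDR. apiserver.sh sets that CIDR with `--service-cluster-ip-range=10.0.0.0/24`. Pods reach the apiserver through that ClusterIP, so the certificate must be valid for it. The sketch below computes that address for any IPv4 CIDR, which helps if you change the range. The function name is illustrative, and it assumes dotted-decimal input without leading zeros.

```bash
#!/bin/bash
# Compute the first usable address of an IPv4 CIDR, i.e. the ClusterIP that the
# "kubernetes" Service receives from --service-cluster-ip-range.
# IPv4 only, dotted decimal without leading zeros; uses bash 64-bit arithmetic.
first_service_ip() {
  local ip=${1%/*} prefix=${1#*/}
  local a b c d n mask
  IFS=. read -r a b c d <<< "$ip"
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  n=$(( (n & mask) + 1 ))   # network address + 1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

For the range used here, `first_service_ip 10.0.0.0/24` prints 10.0.0.1. With the common kubeadm default `10.96.0.0/12` it prints 10.96.0.1 instead, and that address would then have to appear in the hosts list.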

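Extract master.zip and make the scripts executable:

```bash
unzip master.zip
chmod +x *.sh
```

Create the Kubernetes working directories:

```bash
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
```

Create a directory for generating the CA certificate and the component certificates and private keys, and move k8s-cert.sh into it:

```bash
mkdir /opt/k8s/k8s-cert
mv /opt/k8s/k8s-cert.sh /opt/k8s/k8s-cert
cd /opt/k8s/k8s-cert/
```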

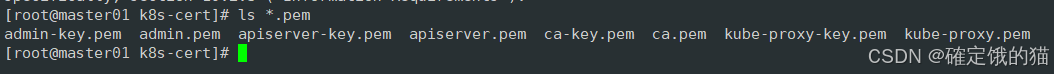

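Run k8s-cert.sh to generate the CA certificate and the certificates and private keys for the related components:

```bash
./k8s-cert.sh
ls *.pem
```

Copy the CA certificate and the apiserver certificate and private key into the ssl subdirectory of the Kubernetes working directory:

```bash
cp ca*.pem api*.pem /opt/kubernetes/ssl
```

Upload kubernetes-server-linux-amd64.tar.gz to the /opt/k8s/ directory and extract it.

Download: see CHANGELOG-1.20.md on the release-1.20 branch of the kubernetes/kubernetes repository on GitHub.

```bash
cd /opt/k8s
tar zxvf kubernetes-server-linux-amd64.tar.gz
cd /opt/k8s/kubernetes/server/bin
```

Copy the key master component binaries into the bin subdirectory of the Kubernetes working directory:

```bash
cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/
```

Create symbolic links so that the commands are on the PATH:

```bash
ln -s /opt/kubernetes/bin/* /usr/local/bin/
```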

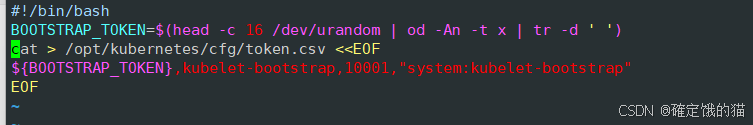

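Create the bootstrap token authentication file. The apiserver reads it at startup, which effectively creates this user in the cluster. You can then grant the user permissions with RBAC.

```bash
cd /opt/k8s/
vim token.sh
```

```bash
#!/bin/bash
# Generate a random 32-character hex token and write it in the static token
# file format: token,user,uid,"group"
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
cat > /opt/kubernetes/cfg/token.csv <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF
```

```bash
chmod +x token.sh
./token.sh
```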

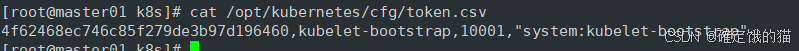

Check the generated file:

```bash
cat /opt/kubernetes/cfg/token.csv
```
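token.csv follows the Kubernetes static token file format: one `token,user,uid,"group"` entry per line. token.sh above writes its 16 random bytes as 32 hex characters. The sketch below is a variant that takes the output path as an argument, plus a check you can run against the generated file. Both function names are hypothetical.

```bash
#!/bin/bash
# Hypothetical variant of token.sh that writes to a given path, plus a format check.
gen_token_csv() {
  local out=${1:-/opt/kubernetes/cfg/token.csv} token
  # 16 random bytes -> od prints four zero-padded 8-digit hex words -> 32 hex characters
  token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token" > "$out"
}

# Succeeds only if the file's single line matches the expected format exactly.
check_token_csv() {
  grep -Eqx '[0-9a-f]{32},kubelet-bootstrap,10001,"system:kubelet-bootstrap"' "$1"
}
```

For example, run `check_token_csv /opt/kubernetes/cfg/token.csv && echo ok` after token.sh.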

apiserver

```bash
cd /opt/k8s
vim apiserver.sh
```

```bash
#!/bin/bash
# Usage: ./apiserver.sh <master-ip> <etcd-endpoints>
MASTER_ADDRESS=$1
ETCD_SERVERS=$2

# kube-apiserver startup options. "\\" leaves a literal "\" in the generated
# file, so the option list continues across lines. --tls-cert-file serves the
# apiserver certificate generated by k8s-cert.sh, and --token-auth-file loads
# the token.csv created above.
cat >/opt/kubernetes/cfg/kube-apiserver <<EOF
KUBE_APISERVER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--etcd-servers=${ETCD_SERVERS} \\
--bind-address=${MASTER_ADDRESS} \\
--secure-port=6443 \\
--advertise-address=${MASTER_ADDRESS} \\
--allow-privileged=true \\
--service-cluster-ip-range=10.0.0.0/24 \\
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
--authorization-mode=RBAC,Node \\
--token-auth-file=/opt/kubernetes/cfg/token.csv \\
--tls-cert-file=/opt/kubernetes/ssl/apiserver.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/apiserver-key.pem \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--service-account-issuer=api \\
--service-account-signing-key-file=/opt/kubernetes/ssl/apiserver-key.pem \\
--etcd-cafile=/opt/etcd/ssl/ca.pem \\
--etcd-certfile=/opt/etcd/ssl/server.pem \\
--etcd-keyfile=/opt/etcd/ssl/server-key.pem \\
--requestheader-allowed-names=kubernetes \\
--requestheader-extra-headers-prefix=X-Remote-Extra- \\
--requestheader-group-headers=X-Remote-Group \\
--requestheader-username-headers=X-Remote-User \\
--enable-aggregator-routing=true \\
--audit-log-maxage=30 \\
--audit-log-maxbackup=3 \\
--audit-log-maxsize=100 \\
--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"
EOF

# systemd unit; "\$" keeps $KUBE_APISERVER_OPTS literal so systemd expands it
cat >/usr/lib/systemd/system/kube-apiserver.service <<EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-apiserver
systemctl restart kube-apiserver
```

```bash
chmod +x apiserver.sh
```

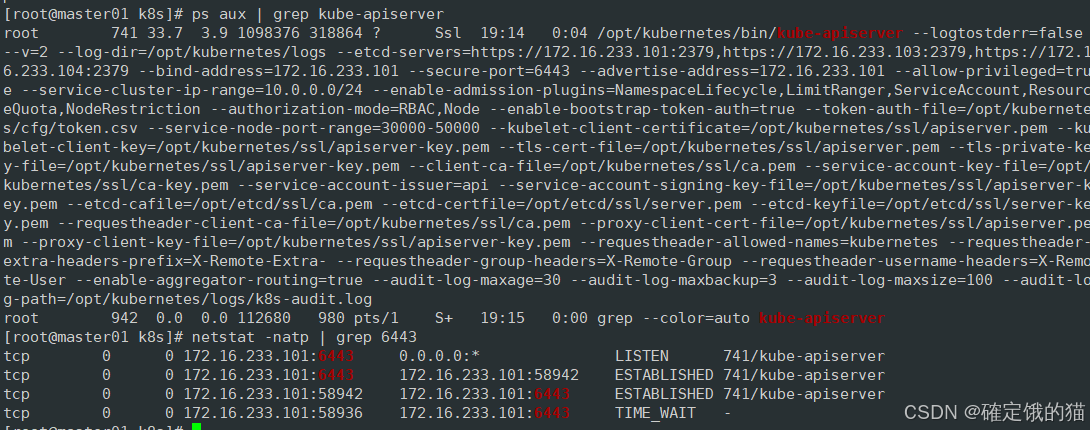

With the binaries, token and certificates in place, start the apiserver service:

```bash
./apiserver.sh 172.16.233.101 https://172.16.233.101:2379,https://172.16.233.103:2379,https://172.16.233.104:2379
```

Check whether the apiserver started successfully:

```bash
ps aux | grep kube-apiserver
netstat -natp | grep 6443
```
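A service such as kube-apiserver (or etcd earlier) can take a few seconds to come up, so a ps/netstat check made immediately after starting it may give a false negative. The sketch below is a generic retry helper for such checks. The name `wait_for` and its argument order are hypothetical.

```bash
#!/bin/bash
# Hypothetical retry helper: run a command up to <attempts> times, sleeping
# <delay> seconds between tries. Returns 0 on the first success, 1 otherwise.
# Usage: wait_for <attempts> <delay> <command> [args...]
wait_for() {
  local attempts=$1 delay=$2 i
  shift 2
  for (( i = 1; i <= attempts; i++ )); do
    if "$@"; then
      return 0
    fi
    if (( i < attempts )); then
      sleep "$delay"
    fi
  done
  return 1
}
```

For example, `wait_for 30 2 systemctl is-active --quiet kube-apiserver` keeps checking for up to about a minute before giving up.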

scheduler

```bash
vim scheduler.sh
```

```bash
#!/bin/bash
## Create the kube-scheduler startup options file
MASTER_ADDRESS=$1

cat >/opt/kubernetes/cfg/kube-scheduler <<EOF
KUBE_SCHEDULER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect=true \\
--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--bind-address=127.0.0.1"
EOF

## Generate the kube-scheduler certificate
cd /opt/k8s/k8s-cert/

# Create the certificate signing request
cat > kube-scheduler-csr.json << EOF
{
  "CN": "system:kube-scheduler",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

# Generate the certificate (kube-scheduler.pem, kube-scheduler-key.pem)
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

# Generate the kubeconfig file
KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"
KUBE_APISERVER="https://172.16.233.101:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-scheduler \
  --client-certificate=./kube-scheduler.pem \
  --client-key=./kube-scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-scheduler \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

## Create the kube-scheduler.service unit file
cat >/usr/lib/systemd/system/kube-scheduler.service <<EOF
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl restart kube-scheduler
```

```bash
chmod +x scheduler.sh
./scheduler.sh
```

controller-manager

```bash
vim controller-manager.sh
```

```bash
#!/bin/bash
## Create the kube-controller-manager startup options file
MASTER_ADDRESS=$1

cat >/opt/kubernetes/cfg/kube-controller-manager <<EOF
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect=true \\
--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--bind-address=127.0.0.1 \\
--allocate-node-cidrs=true \\
--cluster-cidr=10.244.0.0/16 \\
--service-cluster-ip-range=10.0.0.0/24 \\
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--cluster-signing-duration=87600h0m0s"
EOF

## Generate the kube-controller-manager certificate
cd /opt/k8s/k8s-cert/

# Create the certificate signing request
cat > kube-controller-manager-csr.json << EOF
{
  "CN": "system:kube-controller-manager",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

# Generate the certificate (kube-controller-manager.pem, kube-controller-manager-key.pem)
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

# Generate the kubeconfig file
KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"
KUBE_APISERVER="https://172.16.233.101:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-controller-manager \
  --client-certificate=./kube-controller-manager.pem \
  --client-key=./kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-controller-manager \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

## Create the kube-controller-manager.service unit file
cat >/usr/lib/systemd/system/kube-controller-manager.service <<EOF
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-controller-manager
systemctl restart kube-controller-manager
```

```bash
chmod +x controller-manager.sh
./controller-manager.sh
```

admin

```bash
vim admin.sh
```

```bash
#!/bin/bash
# Build /root/.kube/config for kubectl using the admin certificate
mkdir -p /root/.kube
KUBE_CONFIG="/root/.kube/config"
KUBE_APISERVER="https://172.16.233.101:6443"

cd /opt/k8s/k8s-cert/

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials cluster-admin \
  --client-certificate=./admin.pem \
  --client-key=./admin-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=cluster-admin \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```

```bash
chmod +x admin.sh
./admin.sh
```

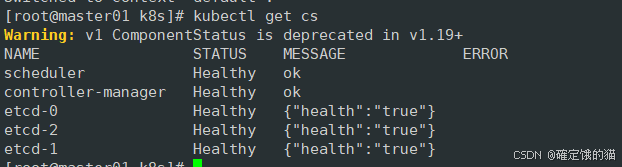
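scheduler.sh, controller-manager.sh and admin.sh repeat the same four `kubectl config` calls. Only the user name, the client certificate and key, and the output path differ. The sketch below factors those calls into one function. `make_kubeconfig` is hypothetical, and its default apiserver URL simply mirrors the KUBE_APISERVER value used in those scripts.

```bash
#!/bin/bash
# Hypothetical helper: build a kubeconfig with the same four kubectl config
# calls used in scheduler.sh, controller-manager.sh and admin.sh.
# Usage: make_kubeconfig <user> <client-cert> <client-key> <kubeconfig-path> [apiserver-url]
make_kubeconfig() {
  local user=$1 cert=$2 key=$3 kubeconfig=$4
  local server=${5:-https://172.16.233.101:6443}
  local ca=/opt/kubernetes/ssl/ca.pem

  # Stop at the first failing step and return its exit status
  kubectl config set-cluster kubernetes \
    --certificate-authority="$ca" --embed-certs=true \
    --server="$server" --kubeconfig="$kubeconfig" || return
  kubectl config set-credentials "$user" \
    --client-certificate="$cert" --client-key="$key" \
    --embed-certs=true --kubeconfig="$kubeconfig" || return
  kubectl config set-context default \
    --cluster=kubernetes --user="$user" --kubeconfig="$kubeconfig" || return
  kubectl config use-context default --kubeconfig="$kubeconfig"
}
```

For example, run `cd /opt/k8s/k8s-cert && make_kubeconfig kube-scheduler ./kube-scheduler.pem ./kube-scheduler-key.pem /opt/kubernetes/cfg/kube-scheduler.kubeconfig`. This reproduces the kubeconfig steps of scheduler.sh.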

Check the status of the cluster components with kubectl:

```bash
kubectl get cs
```
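`kubectl get cs` prints a table with NAME, STATUS, MESSAGE and ERROR columns. The ComponentStatus API behind it is deprecated since v1.19, so kubectl also prints a warning on stderr. The sketch below turns that table into a pass/fail check for scripts. The function name is hypothetical, and it assumes STATUS is the second whitespace-separated column.

```bash
#!/bin/bash
# Hypothetical check: read "kubectl get cs" output on stdin and succeed only if
# there is at least one component row and every STATUS column says Healthy.
all_components_healthy() {
  awk 'NR > 1 { rows++; if ($2 != "Healthy") bad = 1 }
       END    { exit (bad || rows == 0) }'
}
```

For example: `kubectl get cs 2>/dev/null | all_components_healthy && echo "control plane OK"`.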