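Table of Contents

- [Biclustering](#biclustering)
- A demo of the Spectral Biclustering algorithm
- [A demo of the Spectral Co-Clustering algorithm](#a-demo-of-the-spectral-co-clustering-algorithm)
- Biclustering documents with the Spectral Co-clustering algorithm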

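Biclustering

Examples concerning biclustering techniques.

A demo of the Spectral Biclustering algorithm

A demo of the Spectral Co-Clustering algorithm

Biclustering documents with the Spectral Co-clustering algorithm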

Note: Go to the end to download the full example code, or run this example in your browser via JupyterLite or Binder.

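A demo of the Spectral Biclustering algorithm

This example demonstrates how to generate a checkerboard dataset and bicluster it using the SpectralBiclustering algorithm. The spectral biclustering algorithm is specifically designed to cluster data by considering the rows (samples) and the columns (features) of a matrix simultaneously. It aims to identify patterns not only between samples but also within subsets of samples, which allows it to detect localized structure in the data. This makes spectral biclustering particularly well suited to datasets where the order or arrangement of features is fixed, such as images, time series, or genomes.

The data is generated, then shuffled and passed to the spectral biclustering algorithm. The rows and columns of the shuffled matrix are then rearranged to plot the biclusters that were found.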

```python
# Authors: The scikit-learn developers
# SPDX-License-Identifier: BSD-3-Clause
```

Generate sample data

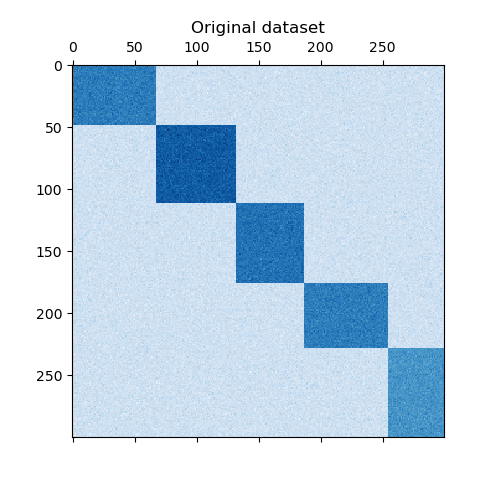

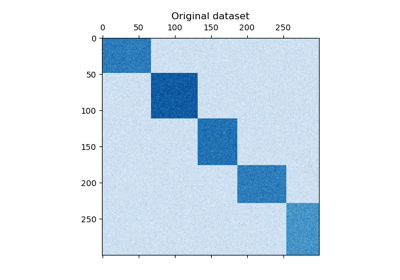

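We generate the sample data using the make_checkerboard function. The color of each pixel within shape=(300, 300) represents a value drawn from a uniform distribution. Noise is added from a normal distribution, and the value passed as noise is its standard deviation.

As you can see, the data is distributed over 12 cluster cells, which are relatively easy to tell apart.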
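To make the structure of such data concrete, here is a minimal NumPy sketch of a checkerboard matrix. It is not make_checkerboard's implementation. The function name toy_checkerboard, the equally sized and unshuffled blocks, and the minval/maxval values are assumptions made for illustration. Each (row cluster, column cluster) cell gets one value from a uniform distribution, and Gaussian noise whose standard deviation is noise is added on top.

```python
import numpy as np


def toy_checkerboard(shape=(12, 9), n_clusters=(4, 3), noise=1.0,
                     minval=10.0, maxval=100.0, seed=0):
    """Checkerboard matrix with equally sized, unshuffled blocks (illustrative only)."""
    rng = np.random.default_rng(seed)
    n_rows, n_cols = shape
    n_row_clusters, n_col_clusters = n_clusters
    # Assumes each dimension of shape is divisible by its number of clusters
    row_labels = np.repeat(np.arange(n_row_clusters), n_rows // n_row_clusters)
    col_labels = np.repeat(np.arange(n_col_clusters), n_cols // n_col_clusters)
    # One uniform value per (row cluster, column cluster) cell
    cell_values = rng.uniform(minval, maxval, size=(n_row_clusters, n_col_clusters))
    data = cell_values[row_labels[:, None], col_labels[None, :]]
    # Gaussian noise; `noise` is its standard deviation
    return data + rng.normal(scale=noise, size=data.shape), row_labels, col_labels


# Without noise, a 4 x 3 checkerboard has exactly 12 distinct values
toy, toy_rows, toy_cols = toy_checkerboard(noise=0.0)
print(len(np.unique(toy)))
```

Setting noise to a larger value blurs the cell boundaries. The noise=10 used below plays the same role for the 300 x 300 dataset.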

```python
from matplotlib import pyplot as plt

from sklearn.datasets import make_checkerboard

n_clusters = (4, 3)
data, rows, columns = make_checkerboard(
    shape=(300, 300), n_clusters=n_clusters, noise=10, shuffle=False, random_state=42
)

plt.matshow(data, cmap=plt.cm.Blues)
plt.title("Original dataset")
plt.show()
```

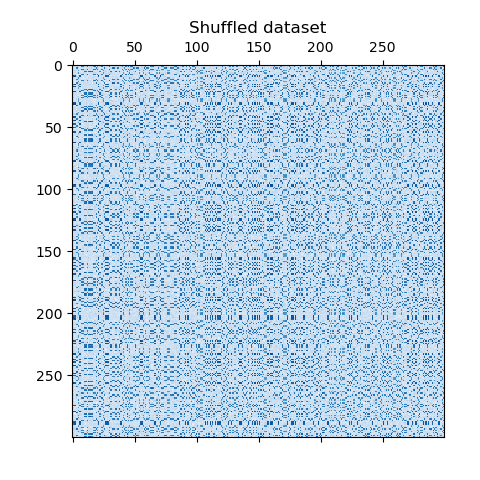

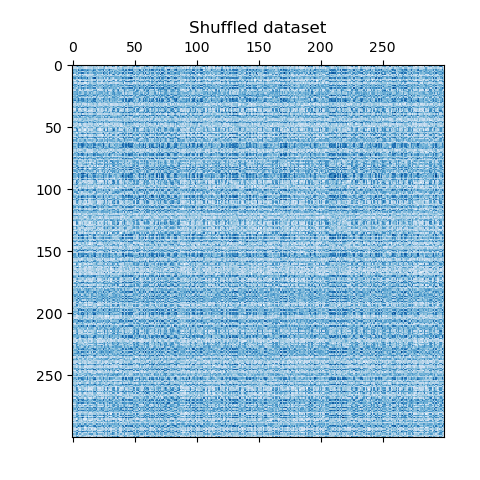

We shuffle the data. The goal is to reconstruct it afterwards using SpectralBiclustering.

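```python
import numpy as np

# Create lists of shuffled row and column indices
rng = np.random.RandomState(0)
row_idx_shuffled = rng.permutation(data.shape[0])
col_idx_shuffled = rng.permutation(data.shape[1])
```

We redefine the shuffled data and plot it. We observe that the structure of the original data matrix has been lost.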

```python
data = data[row_idx_shuffled][:, col_idx_shuffled]

plt.matshow(data, cmap=plt.cm.Blues)
plt.title("Shuffled dataset")
plt.show()
```
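Shuffling only applies a row permutation and a column permutation, so nothing is lost. The inverse permutation (np.argsort of the permutation) restores the original matrix. The ground-truth bicluster indicators must be permuted in the same way. The consensus-score code in the next step does this with rows[:, row_idx_shuffled]. The toy matrix and indicator arrays below are hypothetical.

```python
import numpy as np

rng = np.random.RandomState(0)
original = np.arange(20).reshape(4, 5)
row_perm = rng.permutation(original.shape[0])
col_perm = rng.permutation(original.shape[1])
shuffled = original[row_perm][:, col_perm]

# np.argsort of a permutation is its inverse, so this undoes the shuffle
restored = shuffled[np.argsort(row_perm)][:, np.argsort(col_perm)]
print(np.array_equal(restored, original))  # True

# Indicators have one row per bicluster and one column per sample,
# so they are permuted along axis 1
row_indicator = np.array([[True, True, False, False],
                          [False, False, True, True]])
shuffled_indicator = row_indicator[:, row_perm]
```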

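Fitting SpectralBiclustering

We fit the model and compare the obtained clusters with the ground truth. Note that when creating the model we specify the same number of clusters that we used to create the dataset (n_clusters = (4, 3)), which helps to obtain a good result.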
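The model below uses method="log". As we understand it, this option applies the log-interaction normalization from Kluger et al.'s spectral biclustering before the SVD. The steps are: take the log of the data, subtract the row means and column means, then add back the overall mean. What remains is the row/column interaction that forms the checkerboard. This is a minimal sketch that assumes strictly positive input; scikit-learn may preprocess the matrix differently, and the function name is hypothetical.

```python
import numpy as np


def log_normalize_sketch(X):
    """Log-interaction normalization; assumes every entry of X is > 0."""
    L = np.log(X)
    row_avg = L.mean(axis=1, keepdims=True)
    col_avg = L.mean(axis=0, keepdims=True)
    return L - row_avg - col_avg + L.mean()


# A matrix that is purely "row effect x column effect" has no interaction left
K_rank_one = log_normalize_sketch(np.outer([1.0, 2.0, 3.0], [4.0, 5.0]))

# For any positive matrix, the result has zero row and column means
rng = np.random.RandomState(0)
K_random = log_normalize_sketch(rng.uniform(1, 10, size=(5, 4)))
```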

```python
from sklearn.cluster import SpectralBiclustering
from sklearn.metrics import consensus_score

model = SpectralBiclustering(n_clusters=n_clusters, method="log", random_state=0)
model.fit(data)

# Compute the similarity of two sets of biclusters
score = consensus_score(
    model.biclusters_, (rows[:, row_idx_shuffled], columns[:, col_idx_shuffled])
)
print(f"consensus score: {score:.1f}")
```

```shell
consensus score: 1.0
```

The score lies between 0 and 1, where 1 corresponds to a perfect match. It measures the quality of the biclustering.
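consensus_score compares two sets of biclusters. scikit-learn's user guide describes three steps. First, compute the Jaccard index between every pair of biclusters. Next, find the best one-to-one assignment (the library uses the Hungarian algorithm). Finally, divide the summed similarity by the size of the larger set. The sketch below follows that recipe but brute-forces the assignment with itertools, so it only suits a handful of biclusters. The function names are hypothetical.

```python
from itertools import permutations

import numpy as np


def bicluster_jaccard(a_rows, a_cols, b_rows, b_cols):
    """Jaccard index of two biclusters given as boolean row/column masks."""
    inter = np.logical_and(a_rows, b_rows).sum() * np.logical_and(a_cols, b_cols).sum()
    a_size = a_rows.sum() * a_cols.sum()
    b_size = b_rows.sum() * b_cols.sum()
    return inter / (a_size + b_size - inter)


def consensus_score_sketch(a, b):
    """Best one-to-one matching of biclusters, divided by the larger set size.

    `a` and `b` are (rows, columns) tuples of boolean indicator arrays with
    one row per bicluster. The matching is brute-forced, so keep sets small.
    """
    a_rows, a_cols = a
    b_rows, b_cols = b
    n_a, n_b = len(a_rows), len(b_rows)
    sim = np.array([[bicluster_jaccard(a_rows[i], a_cols[i], b_rows[j], b_cols[j])
                     for j in range(n_b)] for i in range(n_a)])
    if n_a <= n_b:
        best = max(sum(sim[i, p[i]] for i in range(n_a))
                   for p in permutations(range(n_b), n_a))
    else:
        best = max(sum(sim[p[j], j] for j in range(n_b))
                   for p in permutations(range(n_a), n_b))
    return best / max(n_a, n_b)


rows_a = np.array([[1, 1, 1, 0, 0, 0], [0, 0, 0, 1, 1, 1]], dtype=bool)
cols_a = np.array([[1, 1, 0, 0], [0, 0, 1, 1]], dtype=bool)
# The same biclusters listed in the opposite order still match perfectly
perfect = consensus_score_sketch((rows_a, cols_a), (rows_a[::-1], cols_a[::-1]))
# One bicluster vs. a smaller one inside it: Jaccard = 2 / (4 + 2 - 2) = 0.5
partial = consensus_score_sketch(
    (np.array([[1, 1, 0, 0]], dtype=bool), np.array([[1, 1]], dtype=bool)),
    (np.array([[1, 0, 0, 0]], dtype=bool), np.array([[1, 1]], dtype=bool)),
)
```

The score depends only on which rows and columns are grouped together, not on the order or numbering of the biclusters. That is why a shuffled-then-refitted model can still reach 1.0.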

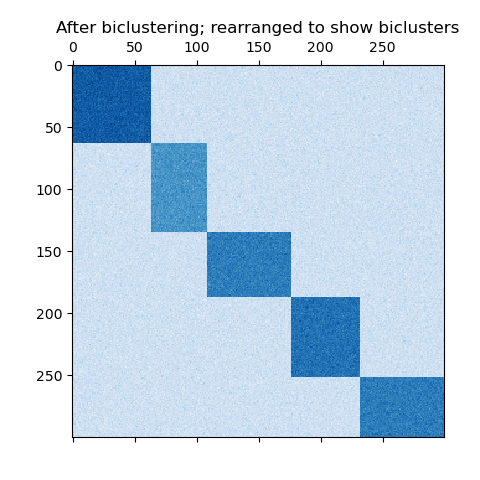

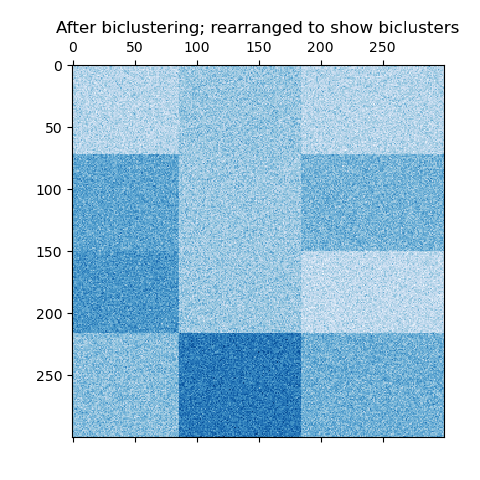

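Plotting results

Now we rearrange the data in ascending order of the row and column labels assigned by the SpectralBiclustering model and plot it again. The row_labels_ range from 0 to 3 and the column_labels_ from 0 to 2, i.e. the model found 4 row clusters and 3 column clusters.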

```python
# First reorder the rows, then the columns.
reordered_rows = data[np.argsort(model.row_labels_)]
reordered_data = reordered_rows[:, np.argsort(model.column_labels_)]

plt.matshow(reordered_data, cmap=plt.cm.Blues)
plt.title("After biclustering; rearranged to show biclusters")
plt.show()
```
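The reordering relies only on np.argsort: sorting a label vector yields the row (or column) indices grouped by cluster. Here is a toy illustration with made-up labels. kind="stable" keeps the original order inside each cluster. The default sort used above may order rows within a cluster differently, which does not change the block structure.

```python
import numpy as np

# Hypothetical labels for a 6 x 4 matrix with 3 row clusters and 2 column clusters
row_labels = np.array([2, 0, 1, 0, 2, 1])
col_labels = np.array([1, 0, 1, 0])
toy = np.arange(24).reshape(6, 4)

# argsort of the labels lists the row (column) indices grouped by cluster
row_order = np.argsort(row_labels, kind="stable")
col_order = np.argsort(col_labels, kind="stable")
reordered = toy[row_order][:, col_order]
print(row_labels[row_order])  # [0 0 1 1 2 2]
```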

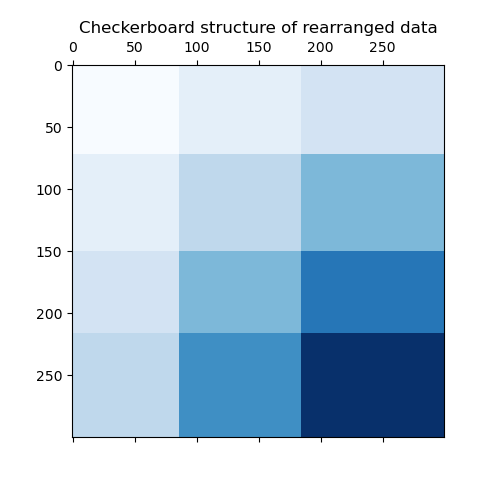

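As a last step, we want to show the relationship between the row and column labels assigned by the model. We therefore create a grid with numpy.outer from the sorted row_labels_ and column_labels_, adding 1 to each so that the labels start at 1 instead of 0. Otherwise every cell involving label 0 would be 0 in the product.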

```python
plt.matshow(
    np.outer(np.sort(model.row_labels_) + 1, np.sort(model.column_labels_) + 1),
    cmap=plt.cm.Blues,
)
plt.title("Checkerboard structure of rearranged data")
plt.show()
```

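The outer product of the row and column label vectors gives a representation of the checkerboard structure, with the combinations of row and column labels shown as shades of blue. The product is not unique for every combination (for example 2 × 3 = 3 × 2), so a few different combinations share the same shade.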
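The collisions are easy to check for 4 row clusters and 3 column clusters. The second grid is one collision-free alternative that assigns a unique code to each (row label, column label) pair. It is our own assumption and not part of the original example.

```python
import numpy as np

n_row_clusters, n_col_clusters = 4, 3
row_ids = np.arange(n_row_clusters) + 1  # labels shifted to start at 1
col_ids = np.arange(n_col_clusters) + 1
grid = np.outer(row_ids, col_ids)
print(grid)
# [[ 1  2  3]
#  [ 2  4  6]
#  [ 3  6  9]
#  [ 4  8 12]]

# 12 label combinations but only 8 distinct products
n_distinct = len(np.unique(grid))

# Alternative (assumption): row_label * n_col_clusters + col_label is unique per cell
codes = np.add.outer((row_ids - 1) * n_col_clusters, col_ids - 1)
```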

Total running time of the script: (0 minutes 0.534 seconds)

- Launch binder: https://mybinder.org/v2/gh/scikit-learn/scikit-learn/1.6.X?urlpath=lab/tree/notebooks/auto_examples/bicluster/plot_spectral_biclustering.ipynb
- Launch JupyterLite: https://scikit-learn.org/stable/lite/lab/index.html?path=auto_examples/bicluster/plot_spectral_biclustering.ipynb
- Download Jupyter notebook: plot_spectral_biclustering.ipynb: https://scikit-learn.org/stable/_downloads//6b00e458f3e282f1cc421f077b2fcad1/plot_spectral_biclustering.ipynb
- Download Python source code: plot_spectral_biclustering.py: https://scikit-learn.org/stable/_downloads//ac19db97f4bbd077ccffef2736ed5f3d/plot_spectral_biclustering.py
- Download zip: plot_spectral_biclustering.zip: https://scikit-learn.org/stable/_downloads//f66c3a9f3631c05c52d31172371922ab/plot_spectral_biclustering.zip

A demo of the Spectral Co-Clustering algorithm

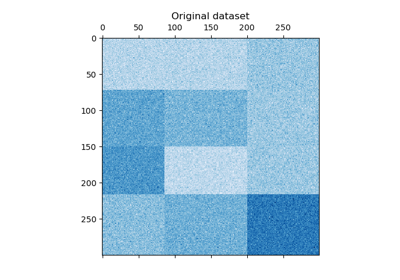

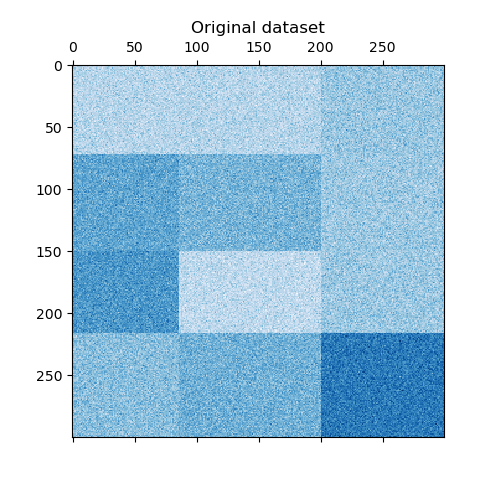

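This example demonstrates how to generate a dataset and bicluster it using the Spectral Co-Clustering algorithm.

The dataset is generated with the make_biclusters function, which creates a matrix of small values and implants biclusters with large values. The rows and columns are then shuffled and passed to the Spectral Co-Clustering algorithm. Rearranging the shuffled matrix so that the biclusters become contiguous shows how accurately the algorithm found them.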
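SpectralCoclustering implements Dhillon's spectral co-clustering algorithm. Roughly, it works in three steps:

1. Normalize the matrix as A_n = D1^{-1/2} A D2^{-1/2}, where D1 and D2 hold the row and column sums.
2. Take the ceil(log2 k) singular vectors that follow the first one, and rescale them by D1^{-1/2} and D2^{-1/2}.
3. Cluster rows and columns together with k-means.

The sketch below covers only the two-cluster case. As a simplification, it splits on the sign of the second singular vectors instead of running k-means. The function name and the toy matrix are assumptions. A weak constant background keeps the matrix connected, so the second singular pair is well defined.

```python
import numpy as np


def spectral_cocluster_two_way(A):
    """Two-way co-clustering of a positive matrix (simplified Dhillon sketch)."""
    d1 = A.sum(axis=1)  # row sums (diagonal of D1)
    d2 = A.sum(axis=0)  # column sums (diagonal of D2)
    # A_n = D1^{-1/2} A D2^{-1/2}
    A_n = A / np.sqrt(d1)[:, None] / np.sqrt(d2)[None, :]
    U, _, Vt = np.linalg.svd(A_n)
    # Skip the trivial first singular pair; rescale the second by D^{-1/2}
    z_rows = U[:, 1] / np.sqrt(d1)
    z_cols = Vt[1, :] / np.sqrt(d2)
    # Simplification: split at 0 instead of running k-means on z
    return (z_rows > 0).astype(int), (z_cols > 0).astype(int)


true_rows = np.array([0] * 4 + [1] * 6)
true_cols = np.array([0] * 3 + [1] * 5)
# Background of 0.1 everywhere, plus 1.0 where row and column groups match
A = 0.1 + (true_rows[:, None] == true_cols[None, :]).astype(float)

# Shuffle rows and columns, as in the example
rng = np.random.RandomState(0)
row_perm = rng.permutation(A.shape[0])
col_perm = rng.permutation(A.shape[1])
A_shuffled = A[row_perm][:, col_perm]

row_pred, col_pred = spectral_cocluster_two_way(A_shuffled)
print(row_pred, true_rows[row_perm])
```

Rows and columns share one embedding, so a row group and the column group it is dense in receive the same label. This is what makes the result a set of co-clusters rather than two independent clusterings.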

```shell
consensus score: 1.000
```

```python
# Authors: The scikit-learn developers
# SPDX-License-Identifier: BSD-3-Clause

import numpy as np
from matplotlib import pyplot as plt

from sklearn.cluster import SpectralCoclustering
from sklearn.datasets import make_biclusters
from sklearn.metrics import consensus_score

data, rows, columns = make_biclusters(
    shape=(300, 300), n_clusters=5, noise=5, shuffle=False, random_state=0
)

plt.matshow(data, cmap=plt.cm.Blues)
plt.title("Original dataset")

# Shuffle the clusters
rng = np.random.RandomState(0)
row_idx = rng.permutation(data.shape[0])
col_idx = rng.permutation(data.shape[1])
data = data[row_idx][:, col_idx]

plt.matshow(data, cmap=plt.cm.Blues)
plt.title("Shuffled dataset")

model = SpectralCoclustering(n_clusters=5, random_state=0)
model.fit(data)
score = consensus_score(model.biclusters_, (rows[:, row_idx], columns[:, col_idx]))

print("consensus score: {:.3f}".format(score))

# Reorder rows and columns by their assigned cluster labels
fit_data = data[np.argsort(model.row_labels_)]
fit_data = fit_data[:, np.argsort(model.column_labels_)]

plt.matshow(fit_data, cmap=plt.cm.Blues)
plt.title("After biclustering; rearranged to show biclusters")

plt.show()
```

Total running time of the script: (0 minutes 0.347 seconds)

- Launch binder: https://mybinder.org/v2/gh/scikit-learn/scikit-learn/1.6.X?urlpath=lab/tree/notebooks/auto_examples/bicluster/plot_spectral_coclustering.ipynb
- Launch JupyterLite: https://scikit-learn.org/stable/lite/lab/index.html?path=auto_examples/bicluster/plot_spectral_coclustering.ipynb
- Download Jupyter notebook: plot_spectral_coclustering.ipynb: https://scikit-learn.org/stable/_downloads//ee8e74bb66ae2967f890e19f28090b37/plot_spectral_coclustering.ipynb
- Download Python source code: plot_spectral_coclustering.py: https://scikit-learn.org/stable/_downloads//bc8849a7cb8ea7a8dc7237431b95a1cc/plot_spectral_coclustering.py
- Download zip: plot_spectral_coclustering.zip: https://scikit-learn.org/stable/_downloads//3019a99f3f124514f9a0650bcb33fa27/plot_spectral_coclustering.zip

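Biclustering documents with the Spectral Co-clustering algorithm

This example demonstrates the Spectral Co-clustering algorithm on the twenty newsgroups dataset. The "comp.os.ms-windows.misc" category is excluded because it contains many posts that contain nothing but data.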

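The TF-IDF vectorized posts form a word frequency matrix, which is then biclustered with Dhillon's Spectral Co-Clustering algorithm. The resulting document-word biclusters indicate subsets of words that are used more often in the corresponding subsets of documents.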
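The matrix passed to the co-clustering step is a TF-IDF matrix. TfidfVectorizer's defaults are smooth_idf=True, norm="l2", and raw term counts as tf. With these, scikit-learn documents the weighting as idf(t) = ln((1 + n) / (1 + df(t))) + 1, followed by L2 normalization of each document row. Below is a dense NumPy sketch of that formula alone. It ignores tokenization, stop words, min_df, and the sparse storage the real vectorizer uses; the function name is hypothetical.

```python
import numpy as np


def tfidf_sketch(counts):
    """Smoothed-idf TF-IDF with L2-normalized rows (documents x terms)."""
    counts = np.asarray(counts, dtype=float)
    n_docs = counts.shape[0]
    df = (counts > 0).sum(axis=0)  # number of documents containing each term
    idf = np.log((1 + n_docs) / (1 + df)) + 1
    weighted = counts * idf
    # Assumes no all-zero document rows, otherwise the norm would be 0
    return weighted / np.linalg.norm(weighted, axis=1, keepdims=True), idf


counts = np.array([[1, 1, 0], [1, 0, 1], [2, 0, 0]])
tfidf, idf = tfidf_sketch(counts)
print(idf)  # term 0 appears in every document, so its idf is exactly 1
```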

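For each of the five best biclusters, the three most common document categories and the ten most important words are printed. The best biclusters are those with the lowest normalized cut. The most important words are determined by comparing each word's summed weight inside and outside the bicluster.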
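Both scoring rules can be checked on a tiny dense matrix. The sketch mirrors bicluster_ncut and the word-scoring lines of the example below. The normalized cut is the weight leaving the bicluster (bicluster rows × other columns plus other rows × bicluster columns) divided by the weight inside it, so lower is better. A word's score is its total weight in the bicluster's documents minus its total weight in all other documents. The function names and the toy matrix are hypothetical.

```python
import numpy as np


def bicluster_ncut_sketch(X, rows, cols):
    """Normalized cut of the bicluster given by boolean masks `rows` and `cols`."""
    weight = X[np.ix_(rows, cols)].sum()
    cut = X[np.ix_(~rows, cols)].sum() + X[np.ix_(rows, ~cols)].sum()
    return cut / weight


def word_scores_sketch(X, rows, cols):
    """Per-word weight inside the bicluster's documents minus weight outside them."""
    return X[np.ix_(rows, cols)].sum(axis=0) - X[np.ix_(~rows, cols)].sum(axis=0)


# Toy document-term matrix: 4 documents x 4 words
X = np.array([[3, 1, 0, 0],
              [2, 2, 0, 1],
              [0, 0, 4, 1],
              [1, 0, 2, 3]], dtype=float)
rows = np.array([True, True, False, False])  # documents 0 and 1
cols = np.array([True, True, False, False])  # words 0 and 1

print(bicluster_ncut_sketch(X, rows, cols))  # 0.25: cut of 2 over weight of 8
print(word_scores_sketch(X, rows, cols))     # [4. 3.]
```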

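For comparison, the documents are also clustered with MiniBatchKMeans. The document clusters derived from the biclusters achieve a better V-measure than the clusters found by MiniBatchKMeans.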
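V-measure is the harmonic mean of two entropy-based scores. Homogeneity checks that each cluster contains members of a single class. Completeness checks that all members of a class land in the same cluster. Below is a minimal NumPy sketch for the default beta = 1. The function names are hypothetical, and the edge-case convention (a score of 1 when the corresponding entropy is 0) is our assumption of the usual definition. With beta = 1 the measure is symmetric in its two arguments.

```python
import numpy as np


def entropy(labels):
    """Shannon entropy (natural log) of a label vector."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return -np.sum(p * np.log(p))


def conditional_entropy(a, b):
    """H(a | b): entropy of `a` within each group of `b`, weighted by group size."""
    a, b = np.asarray(a), np.asarray(b)
    return sum((b == v).mean() * entropy(a[b == v]) for v in np.unique(b))


def v_measure_sketch(labels_true, labels_pred):
    h_c, h_k = entropy(labels_true), entropy(labels_pred)
    homogeneity = 1.0 if h_c == 0 else 1 - conditional_entropy(labels_true, labels_pred) / h_c
    completeness = 1.0 if h_k == 0 else 1 - conditional_entropy(labels_pred, labels_true) / h_k
    if homogeneity + completeness == 0:
        return 0.0
    return 2 * homogeneity * completeness / (homogeneity + completeness)


print(round(v_measure_sketch([0, 0, 1, 1], [1, 1, 0, 0]), 6))  # 1.0: same partition, renamed
print(round(v_measure_sketch([0, 0, 1, 1], [0, 0, 1, 2]), 6))  # 0.8: class 1 split in two
```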

```shell
Vectorizing...
Coclustering...
Done in 1.20s. V-measure: 0.4415
MiniBatchKMeans...
Done in 2.28s. V-measure: 0.3015

Best biclusters:
----------------
bicluster 0 : 8 documents, 6 words
categories   : 100% talk.politics.mideast
words        : cosmo, angmar, alfalfa, alphalpha, proline, benson

bicluster 1 : 1948 documents, 4325 words
categories   : 23% talk.politics.guns, 18% talk.politics.misc, 17% sci.med
words        : gun, guns, geb, banks, gordon, clinton, pitt, cdt, surrender, veal

bicluster 2 : 1259 documents, 3534 words
categories   : 27% soc.religion.christian, 25% talk.politics.mideast, 25% alt.atheism
words        : god, jesus, christians, kent, sin, objective, belief, christ, faith, moral

bicluster 3 : 775 documents, 1623 words
categories   : 30% comp.windows.x, 25% comp.sys.ibm.pc.hardware, 20% comp.graphics
words        : scsi, nada, ide, vga, esdi, isa, kth, s3, vlb, bmug

bicluster 4 : 2180 documents, 2802 words
categories   : 18% comp.sys.mac.hardware, 16% sci.electronics, 16% comp.sys.ibm.pc.hardware
words        : voltage, shipping, circuit, receiver, processing, scope, mpce, analog, kolstad, umass
```

```python
# Authors: The scikit-learn developers
# SPDX-License-Identifier: BSD-3-Clause

import sys
from collections import Counter
from time import time

import numpy as np

from sklearn.cluster import MiniBatchKMeans, SpectralCoclustering
from sklearn.datasets import fetch_20newsgroups
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.cluster import v_measure_score


def number_normalizer(tokens):
    """Map all numeric tokens to a placeholder.

    For many applications, tokens that begin with a number are not directly
    useful, but the fact that such a token exists can be relevant. By applying
    this form of dimensionality reduction, some methods may perform better.
    """
    return ("#NUMBER" if token[0].isdigit() else token for token in tokens)


class NumberNormalizingVectorizer(TfidfVectorizer):
    def build_tokenizer(self):
        tokenize = super().build_tokenizer()
        return lambda doc: list(number_normalizer(tokenize(doc)))


# Exclude 'comp.os.ms-windows.misc'
categories = [
    "alt.atheism",
    "comp.graphics",
    "comp.sys.ibm.pc.hardware",
    "comp.sys.mac.hardware",
    "comp.windows.x",
    "misc.forsale",
    "rec.autos",
    "rec.motorcycles",
    "rec.sport.baseball",
    "rec.sport.hockey",
    "sci.crypt",
    "sci.electronics",
    "sci.med",
    "sci.space",
    "soc.religion.christian",
    "talk.politics.guns",
    "talk.politics.mideast",
    "talk.politics.misc",
    "talk.religion.misc",
]
newsgroups = fetch_20newsgroups(categories=categories)
y_true = newsgroups.target

vectorizer = NumberNormalizingVectorizer(stop_words="english", min_df=5)
cocluster = SpectralCoclustering(
    n_clusters=len(categories), svd_method="arpack", random_state=0
)
kmeans = MiniBatchKMeans(
    n_clusters=len(categories), batch_size=20000, random_state=0, n_init=3
)

print("Vectorizing...")
X = vectorizer.fit_transform(newsgroups.data)

print("Coclustering...")
start_time = time()
cocluster.fit(X)
y_cocluster = cocluster.row_labels_
print(
    f"Done in {time() - start_time:.2f}s. "
    f"V-measure: {v_measure_score(y_true, y_cocluster):.4f}"
)

print("MiniBatchKMeans...")
start_time = time()
y_kmeans = kmeans.fit_predict(X)
print(
    f"Done in {time() - start_time:.2f}s. "
    f"V-measure: {v_measure_score(y_true, y_kmeans):.4f}"
)

feature_names = vectorizer.get_feature_names_out()
document_names = list(newsgroups.target_names[i] for i in newsgroups.target)


def bicluster_ncut(i):
    rows, cols = cocluster.get_indices(i)
    # get_indices returns index arrays, so test for emptiness by length
    if len(rows) == 0 or len(cols) == 0:
        return sys.float_info.max
    row_complement = np.nonzero(np.logical_not(cocluster.rows_[i]))[0]
    col_complement = np.nonzero(np.logical_not(cocluster.columns_[i]))[0]
    # Note: the following is identical to X[rows[:, np.newaxis], cols].sum()
    # but faster in scipy <= 0.16
    weight = X[rows][:, cols].sum()
    cut = X[row_complement][:, cols].sum() + X[rows][:, col_complement].sum()
    return cut / weight


bicluster_ncuts = list(bicluster_ncut(i) for i in range(len(newsgroups.target_names)))
best_idx = np.argsort(bicluster_ncuts)[:5]

print()
print("Best biclusters:")
print("----------------")
for idx, cluster in enumerate(best_idx):
    n_rows, n_cols = cocluster.get_shape(cluster)
    cluster_docs, cluster_words = cocluster.get_indices(cluster)
    if not len(cluster_docs) or not len(cluster_words):
        continue

    # categories
    counter = Counter(document_names[doc] for doc in cluster_docs)
    cat_string = ", ".join(
        f"{(c / n_rows * 100):.0f}% {name}" for name, c in counter.most_common(3)
    )

    # words
    out_of_cluster_docs = cocluster.row_labels_ != cluster
    out_of_cluster_docs = np.where(out_of_cluster_docs)[0]
    word_col = X[:, cluster_words]
    word_scores = np.array(
        word_col[cluster_docs, :].sum(axis=0)
        - word_col[out_of_cluster_docs, :].sum(axis=0)
    )
    word_scores = word_scores.ravel()
    important_words = list(
        feature_names[cluster_words[i]] for i in word_scores.argsort()[:-11:-1]
    )

    print(f"bicluster {idx} : {n_rows} documents, {n_cols} words")
    print(f"categories   : {cat_string}")
    print(f"words        : {', '.join(important_words)}\n")
```

Total running time of the script: (0 minutes 15.176 seconds)

- Launch binder: https://mybinder.org/v2/gh/scikit-learn/scikit-learn/1.6.X?urlpath=lab/tree/notebooks/auto_examples/bicluster/plot_bicluster_newsgroups.ipynb
- Launch JupyterLite: https://scikit-learn.org/stable/lite/lab/index.html?path=auto_examples/bicluster/plot_bicluster_newsgroups.ipynb
- Download Jupyter notebook: plot_bicluster_newsgroups.ipynb: https://scikit-learn.org/stable/_downloads//3f7191b01d0103d1886c959ed7687c4d/plot_bicluster_newsgroups.ipynb
- Download Python source code: plot_bicluster_newsgroups.py: https://scikit-learn.org/stable/_downloads//e68419b513284db108081422c73a5667/plot_bicluster_newsgroups.py
- Download zip: plot_bicluster_newsgroups.zip: https://scikit-learn.org/stable/_downloads//ccd24a43e08ccb154430edf7f927cfc7/plot_bicluster_newsgroups.zip