DAY 44 Pretrained Models

Review of key points:

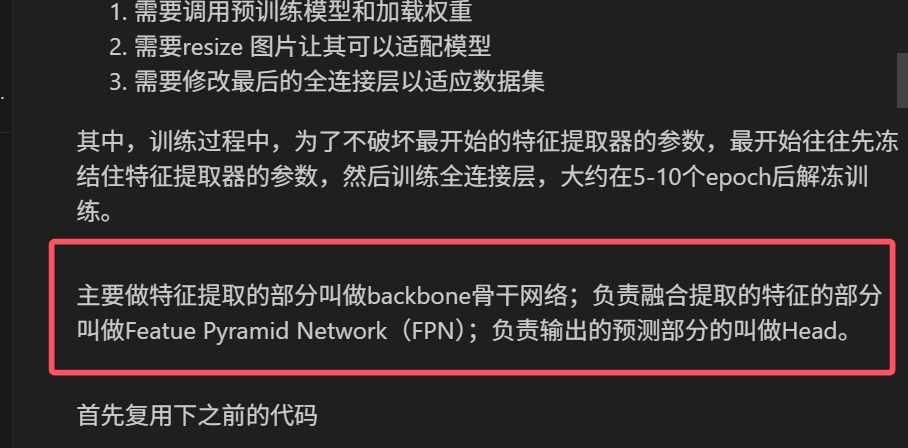

- The concept of pretraining

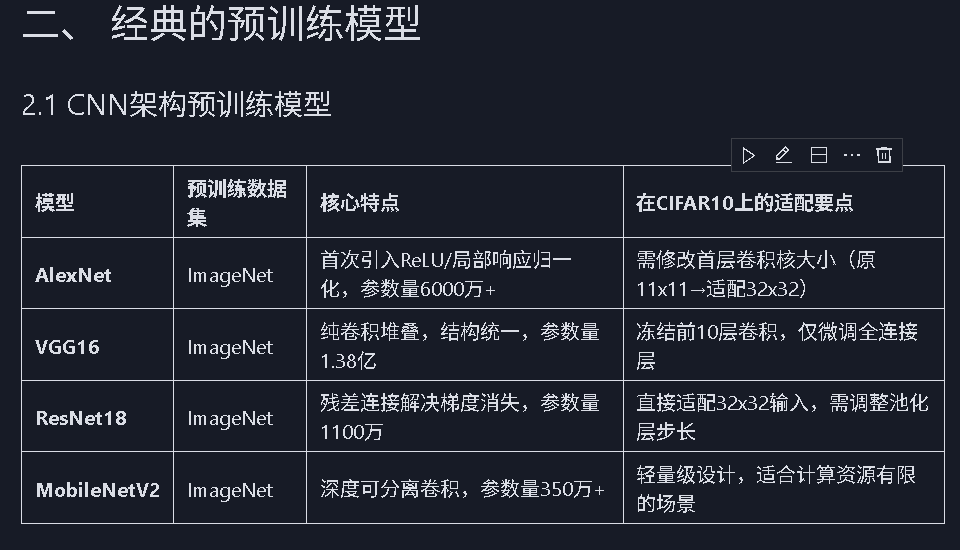

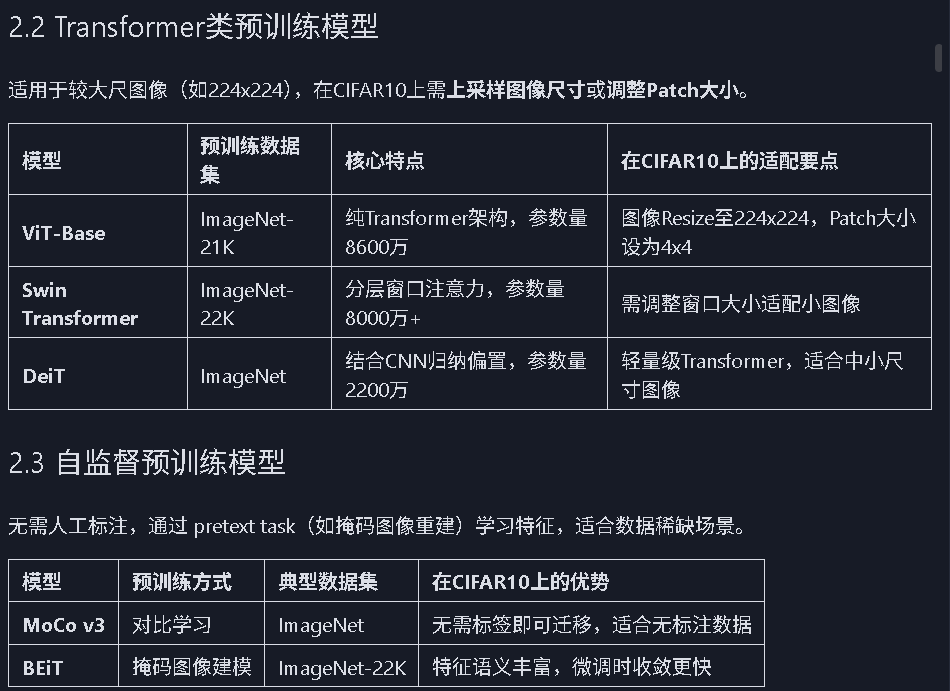

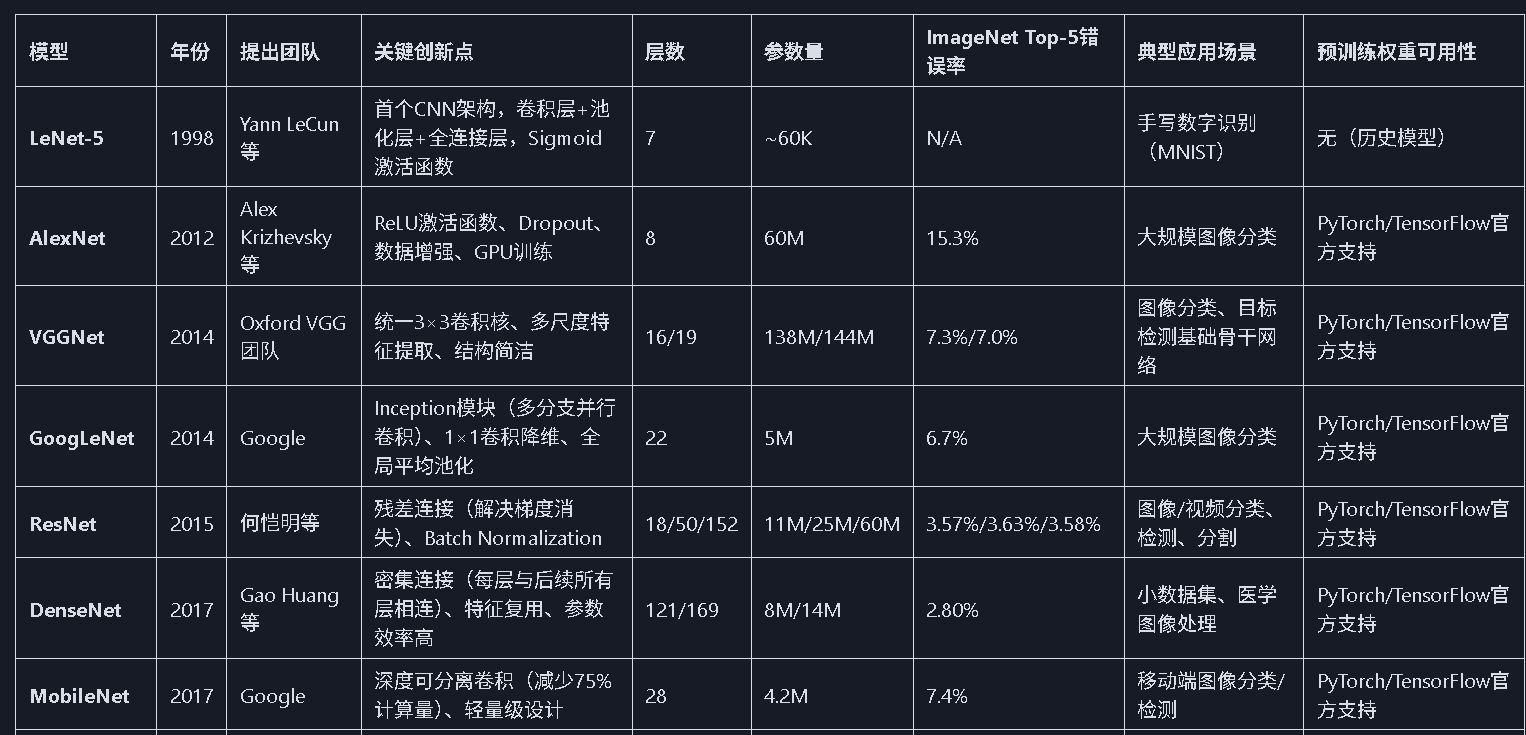

- Common pretrained classification models

- The history of pretrained image models

- Pretraining strategies

- Pretraining in practice: ResNet18 code

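Homework:

- Try other pretrained models on CIFAR-10 and compare them to observe the differences. Where possible, pick models that differ from the ones other people choose.

- Ctrl+click into resnet (jump to its definition in your IDE) and see what the residual actually is.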
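For the second exercise: the repeated unit in torchvision's ResNet18 is a `BasicBlock`. Its output is the convolution branch F(x) added to the block's own input x (the shortcut), followed by a final ReLU. The code below is a simplified re-implementation for illustration and is not torchvision's source. The class name `SimpleBasicBlock` is hypothetical. The shortcut is the identity when shapes match, otherwise a 1x1 convolution with batch norm, which mirrors the idea behind torchvision's `downsample`.

```python
import torch
import torch.nn as nn


class SimpleBasicBlock(nn.Module):
    """Simplified ResNet basic block: out = ReLU(F(x) + shortcut(x))."""

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()
        # Residual branch F(x): two 3x3 convolutions, each followed by batch norm
        self.conv1 = nn.Conv2d(in_channels, out_channels, 3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.conv2 = nn.Conv2d(out_channels, out_channels, 3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        # Shortcut: identity when shapes match, otherwise a 1x1 conv to match them
        if stride != 1 or in_channels != out_channels:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels, 1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels),
            )
        else:
            self.shortcut = nn.Identity()

    def forward(self, x):
        identity = self.shortcut(x)
        out = self.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        # The residual connection: add the (possibly projected) input back on
        return self.relu(out + identity)


block = SimpleBasicBlock(64, 64)
x = torch.randn(2, 64, 8, 8)
print(block(x).shape)
```

If the last BN weight (`bn2.weight`) is set to zero, the branch outputs zeros and the block reduces to `ReLU(x)`. In other words, a residual block can start out as (almost) an identity mapping and only has to learn a correction on top of it. torchvision offers this initialisation as `resnet18(zero_init_residual=True)`.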

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import datasets, transforms
from torch.utils.data import DataLoader
import matplotlib.pyplot as plt

# Font that can render CJK characters (only needed for Chinese plot labels)
plt.rcParams["font.family"] = ["SimHei"]
plt.rcParams["axes.unicode_minus"] = False  # keep minus signs rendering correctly with this font

# Use the GPU if one is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(f"Using device: {device}")
```

```python
# 1. Data preprocessing (augmentation for the training set, normalization only for the test set)
train_transform = transforms.Compose([
    transforms.RandomCrop(32, padding=4),
    transforms.RandomHorizontalFlip(),
    transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1),
    transforms.RandomRotation(15),
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

test_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

# 2. Load the CIFAR-10 dataset
train_dataset = datasets.CIFAR10(
    root='./data',
    train=True,
    download=True,
    transform=train_transform
)

test_dataset = datasets.CIFAR10(
    root='./data',
    train=False,
    transform=test_transform
)

# 3. Create the data loaders (batch_size can be adjusted)
batch_size = 64
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)
```
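The `Normalize` constants above are per-channel mean and standard deviation values that are commonly copied into CIFAR-10 examples. To check or recompute such constants for your own data, here is a minimal sketch. It assumes the dataset returns un-normalized tensors (only `ToTensor()` applied), and `channel_stats` is a hypothetical helper name. It computes the population mean and std over every pixel of every image.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def channel_stats(dataset, batch_size=1000):
    """Per-channel mean and std over all pixels of all images in the dataset."""
    loader = DataLoader(dataset, batch_size=batch_size, shuffle=False)
    total = 0
    sum_ = None
    sum_sq = None
    for images, _ in loader:
        # images has shape (N, C, H, W); reduce over batch, height and width
        images = images.double()
        if sum_ is None:
            sum_ = torch.zeros(images.size(1), dtype=torch.float64)
            sum_sq = torch.zeros(images.size(1), dtype=torch.float64)
        sum_ += images.sum(dim=(0, 2, 3))
        sum_sq += (images ** 2).sum(dim=(0, 2, 3))
        total += images.size(0) * images.size(2) * images.size(3)
    mean = sum_ / total
    std = (sum_sq / total - mean ** 2).sqrt()  # population std: sqrt(E[x^2] - E[x]^2)
    return mean, std


# Synthetic check; for CIFAR-10 you would instead pass e.g.
# datasets.CIFAR10('./data', train=True, download=True, transform=transforms.ToTensor())
images = torch.rand(100, 3, 4, 4)
mean, std = channel_stats(TensorDataset(images, torch.zeros(100)), batch_size=32)
print(mean, std)
```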

```python
# 4. Training function (supports a learning-rate scheduler)
def train(model, train_loader, test_loader, criterion, optimizer, scheduler, device, epochs):
    train_loss_history = []
    test_loss_history = []
    train_acc_history = []
    test_acc_history = []
    all_iter_losses = []
    iter_indices = []

    for epoch in range(epochs):
        # Switch back to training mode every epoch: the evaluation phase below calls
        # model.eval(), which would otherwise stay in effect for all later epochs
        model.train()
        running_loss = 0.0
        correct_train = 0
        total_train = 0

        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)
            optimizer.zero_grad()
            output = model(data)
            loss = criterion(output, target)
            loss.backward()
            optimizer.step()

            # Record the per-iteration loss
            iter_loss = loss.item()
            all_iter_losses.append(iter_loss)
            iter_indices.append(epoch * len(train_loader) + batch_idx + 1)

            # Accumulate training metrics
            running_loss += iter_loss
            _, predicted = output.max(1)
            total_train += target.size(0)
            correct_train += predicted.eq(target).sum().item()

            # Print progress every 100 batches
            if (batch_idx + 1) % 100 == 0:
                print(f"Epoch {epoch+1}/{epochs} | Batch {batch_idx+1}/{len(train_loader)} "
                      f"| batch loss: {iter_loss:.4f}")

        # Epoch-level training metrics
        epoch_train_loss = running_loss / len(train_loader)
        epoch_train_acc = 100. * correct_train / total_train

        # Evaluation phase
        model.eval()
        correct_test = 0
        total_test = 0
        test_loss = 0.0
        with torch.no_grad():
            for data, target in test_loader:
                data, target = data.to(device), target.to(device)
                output = model(data)
                test_loss += criterion(output, target).item()
                _, predicted = output.max(1)
                total_test += target.size(0)
                correct_test += predicted.eq(target).sum().item()

        epoch_test_loss = test_loss / len(test_loader)
        epoch_test_acc = 100. * correct_test / total_test

        # Record history
        train_loss_history.append(epoch_train_loss)
        test_loss_history.append(epoch_test_loss)
        train_acc_history.append(epoch_train_acc)
        test_acc_history.append(epoch_test_acc)

        # Step the scheduler (assumes ReduceLROnPlateau, which takes the monitored metric)
        if scheduler is not None:
            scheduler.step(epoch_test_loss)

        # Print the epoch summary
        print(f"Epoch {epoch+1} done | train loss: {epoch_train_loss:.4f} "
              f"| train acc: {epoch_train_acc:.2f}% | test acc: {epoch_test_acc:.2f}%")

    # Plot the loss and accuracy curves
    plot_iter_losses(all_iter_losses, iter_indices)
    plot_epoch_metrics(train_acc_history, test_acc_history, train_loss_history, test_loss_history)

    return epoch_test_acc  # final test accuracy
```

```python
# 5. Plot the per-iteration loss curve
def plot_iter_losses(losses, indices):
    plt.figure(figsize=(10, 4))
    plt.plot(indices, losses, 'b-', alpha=0.7)
    plt.xlabel('Iteration (batch index)')
    plt.ylabel('Loss')
    plt.title('Per-iteration training loss')
    plt.grid(True)
    plt.show()

# 6. Plot epoch-level metrics
def plot_epoch_metrics(train_acc, test_acc, train_loss, test_loss):
    epochs = range(1, len(train_acc) + 1)
    plt.figure(figsize=(12, 5))

    # Accuracy curves
    plt.subplot(1, 2, 1)
    plt.plot(epochs, train_acc, 'b-', label='Train accuracy')
    plt.plot(epochs, test_acc, 'r-', label='Test accuracy')
    plt.xlabel('Epoch')
    plt.ylabel('Accuracy (%)')
    plt.title('Accuracy per epoch')
    plt.legend()
    plt.grid(True)

    # Loss curves
    plt.subplot(1, 2, 2)
    plt.plot(epochs, train_loss, 'b-', label='Train loss')
    plt.plot(epochs, test_loss, 'r-', label='Test loss')
    plt.xlabel('Epoch')
    plt.ylabel('Loss')
    plt.title('Loss per epoch')
    plt.legend()
    plt.grid(True)

    plt.tight_layout()
    plt.show()
```

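```python
# Import ResNet18 and its weight enum (the weights API exists in torchvision >= 0.13)
from torchvision.models import resnet18, ResNet18_Weights

# Build ResNet18, optionally loading ImageNet-pretrained weights
def create_resnet18(pretrained=True, num_classes=10):
    # Older code used resnet18(pretrained=True); that argument is deprecated in favour of weights=
    weights = ResNet18_Weights.DEFAULT if pretrained else None
    model = resnet18(weights=weights)

    # Replace the final fully connected layer to output the 10 CIFAR-10 classes
    in_features = model.fc.in_features
    model.fc = nn.Linear(in_features, num_classes)

    # Move the model to the selected device (CPU/GPU)
    model = model.to(device)
    return model
```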

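```python
# Create ResNet18 with ImageNet weights but without fine-tuning.
# Note: the new fc layer is randomly initialized, so this prediction is essentially random;
# the cell only demonstrates the inference pipeline.
model = create_resnet18(pretrained=True, num_classes=10)
model.eval()  # inference mode

# Predict a single test image (example)
from torchvision import utils

# Take one batch from the test set
dataiter = iter(test_loader)
images, labels = next(dataiter)  # recent PyTorch DataLoader iterators have no .next() method
images = images[:1].to(device)   # keep only the first image

# Forward pass
with torch.no_grad():
    outputs = model(images)
    _, predicted = torch.max(outputs, 1)

# Show the image with its predicted and true class index
plt.imshow(utils.make_grid(images.cpu(), normalize=True).permute(1, 2, 0))
plt.title(f"Predicted class: {predicted.item()} (true: {labels[0].item()})")
plt.axis('off')
plt.show()
```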
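The demo above titles the image with a class index only. Also, `make_grid(..., normalize=True)` rescales by the image's own min/max rather than undoing `Normalize`. Here is a minimal sketch of both alternatives. torchvision's CIFAR-10 dataset objects expose the label names as `test_dataset.classes`, and the normalization can be inverted with the same mean/std constants. `denormalize` is a hypothetical helper name.

```python
import torch

CIFAR10_MEAN = (0.4914, 0.4822, 0.4465)
CIFAR10_STD = (0.2023, 0.1994, 0.2010)


def denormalize(img, mean=CIFAR10_MEAN, std=CIFAR10_STD):
    """Invert transforms.Normalize for a (C, H, W) tensor: x = x_norm * std + mean."""
    mean = torch.tensor(mean, dtype=img.dtype, device=img.device).view(-1, 1, 1)
    std = torch.tensor(std, dtype=img.dtype, device=img.device).view(-1, 1, 1)
    return (img * std + mean).clamp(0, 1)  # clamp to the valid [0, 1] display range

# Usage with the variables from the cell above:
# class_names = test_dataset.classes
# plt.imshow(denormalize(images[0].cpu()).permute(1, 2, 0))
# plt.title(f"Predicted: {class_names[predicted.item()]} / true: {class_names[labels[0].item()]}")
```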

```python
import os
import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import datasets, transforms, models
from torch.utils.data import DataLoader
import matplotlib.pyplot as plt

# Font that can render CJK characters (only needed for Chinese plot labels)
plt.rcParams["font.family"] = ["SimHei"]
plt.rcParams["axes.unicode_minus"] = False  # keep minus signs rendering correctly with this font

# Use the GPU if one is available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(f"Using device: {device}")
```

```python
# 1. Data preprocessing (augmentation for the training set, normalization only for the test set)
train_transform = transforms.Compose([
    transforms.RandomCrop(32, padding=4),
    transforms.RandomHorizontalFlip(),
    transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1),
    transforms.RandomRotation(15),
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

test_transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

# 2. Load the CIFAR-10 dataset
train_dataset = datasets.CIFAR10(
    root='./data',
    train=True,
    download=True,
    transform=train_transform
)

test_dataset = datasets.CIFAR10(
    root='./data',
    train=False,
    transform=test_transform
)

# 3. Create the data loaders
batch_size = 64
train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)
```

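```python
# 4. Define the ResNet18 model
def create_resnet18(pretrained=True, num_classes=10):
    # weights= replaces the deprecated pretrained=True argument (torchvision >= 0.13)
    weights = models.ResNet18_Weights.DEFAULT if pretrained else None
    model = models.resnet18(weights=weights)
    # Replace the final fully connected layer
    in_features = model.fc.in_features
    model.fc = nn.Linear(in_features, num_classes)
    return model.to(device)
```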

```python
# 5. Freeze / unfreeze model layers
def freeze_model(model, freeze=True):
    """Freeze or unfreeze the backbone (every parameter outside the final fc layer)."""
    for name, param in model.named_parameters():
        if 'fc' not in name:
            param.requires_grad = not freeze

    # Report the freezing state
    frozen_params = sum(p.numel() for p in model.parameters() if not p.requires_grad)
    total_params = sum(p.numel() for p in model.parameters())
    if freeze:
        print(f"Froze the convolutional backbone ({frozen_params}/{total_params} parameters frozen)")
    else:
        print(f"Unfroze all parameters ({total_params}/{total_params} parameters trainable)")
    return model
```

# 6. 训练函数(支持阶段式训练)

def train_with_freeze_schedule(model, train_loader, test_loader, criterion, optimizer, scheduler, device, epochs, freeze_epochs=5):

"""

前freeze_epochs轮冻结卷积层,之后解冻所有层进行训练

"""

train_loss_history = []

test_loss_history = []

train_acc_history = []

test_acc_history = []

all_iter_losses = []

iter_indices = []

# 初始冻结卷积层

if freeze_epochs > 0:

model = freeze_model(model, freeze=True)

for epoch in range(epochs):

# 解冻控制:在指定轮次后解冻所有层

if epoch == freeze_epochs:

model = freeze_model(model, freeze=False)

# 解冻后调整优化器(可选)

optimizer.param_groups[0]['lr'] = 1e-4 # 降低学习率防止过拟合

model.train() # 设置为训练模式

running_loss = 0.0

correct_train = 0

total_train = 0

for batch_idx, (data, target) in enumerate(train_loader):

data, target = data.to(device), target.to(device)

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

loss.backward()

optimizer.step()

# 记录Iteration损失

iter_loss = loss.item()

all_iter_losses.append(iter_loss)

iter_indices.append(epoch * len(train_loader) + batch_idx + 1)

# 统计训练指标

running_loss += iter_loss

_, predicted = output.max(1)

total_train += target.size(0)

correct_train += predicted.eq(target).sum().item()

# 每100批次打印进度

if (batch_idx + 1) % 100 == 0:

print(f"Epoch {epoch+1}/{epochs} | Batch {batch_idx+1}/{len(train_loader)} "

f"| 单Batch损失: {iter_loss:.4f}")

# 计算 epoch 级指标

epoch_train_loss = running_loss / len(train_loader)

epoch_train_acc = 100. * correct_train / total_train

# 测试阶段

model.eval()

correct_test = 0

total_test = 0

test_loss = 0.0

with torch.no_grad():

for data, target in test_loader:

data, target = data.to(device), target.to(device)

output = model(data)

test_loss += criterion(output, target).item()

_, predicted = output.max(1)

total_test += target.size(0)

correct_test += predicted.eq(target).sum().item()

epoch_test_loss = test_loss / len(test_loader)

epoch_test_acc = 100. * correct_test / total_test

# 记录历史数据

train_loss_history.append(epoch_train_loss)

test_loss_history.append(epoch_test_loss)

train_acc_history.append(epoch_train_acc)

test_acc_history.append(epoch_test_acc)

# 更新学习率调度器

if scheduler is not None:

scheduler.step(epoch_test_loss)

# 打印 epoch 结果

print(f"Epoch {epoch+1} 完成 | 训练损失: {epoch_train_loss:.4f} "

f"| 训练准确率: {epoch_train_acc:.2f}% | 测试准确率: {epoch_test_acc:.2f}%")

# 绘制损失和准确率曲线

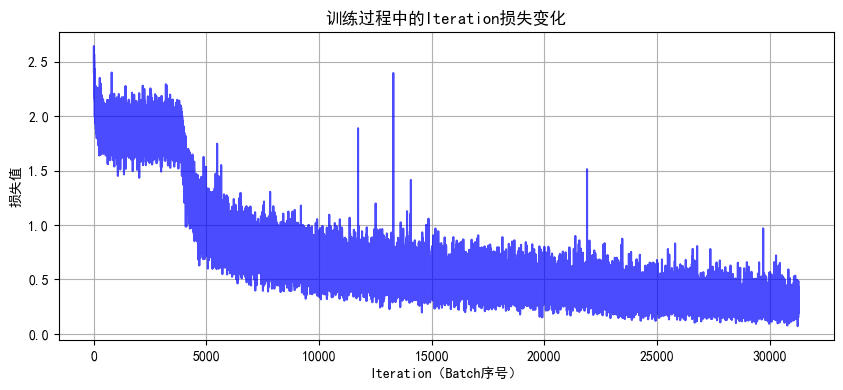

plot_iter_losses(all_iter_losses, iter_indices)

plot_epoch_metrics(train_acc_history, test_acc_history, train_loss_history, test_loss_history)

return epoch_test_acc # 返回最终测试准确率
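The loop above calls `scheduler.step(epoch_test_loss)` once per epoch. You can check in isolation how `ReduceLROnPlateau(mode='min', factor=0.5, patience=2)`, the configuration used in `main`, responds. In this sketch a dummy parameter stands in for the model, and a constant loss of 1.0 (an assumption for illustration) plays the role of a test loss that never improves. The first value becomes the best loss seen so far. The rate is then halved each time more than `patience` consecutive epochs pass without improvement.

```python
import torch
import torch.optim as optim

# A single dummy parameter stands in for the model
param = torch.nn.Parameter(torch.zeros(1))
optimizer = optim.Adam([param], lr=1e-3)
scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode="min", factor=0.5, patience=2)

lrs = []
for epoch_test_loss in [1.0] * 7:
    scheduler.step(epoch_test_loss)  # called once per epoch, as in the training loop
    lrs.append(optimizer.param_groups[0]["lr"])

print(lrs)  # → [0.001, 0.001, 0.001, 0.0005, 0.0005, 0.0005, 0.00025]
```

Because the scheduler reads and writes `optimizer.param_groups` directly, the manual reset to `1e-4` at the unfreeze epoch simply becomes the starting point for any later halvings.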

# 7. 绘制Iteration损失曲线

def plot_iter_losses(losses, indices):

plt.figure(figsize=(10, 4))

plt.plot(indices, losses, 'b-', alpha=0.7)

plt.xlabel('Iteration(Batch序号)')

plt.ylabel('损失值')

plt.title('训练过程中的Iteration损失变化')

plt.grid(True)

plt.show()

# 8. 绘制Epoch级指标曲线

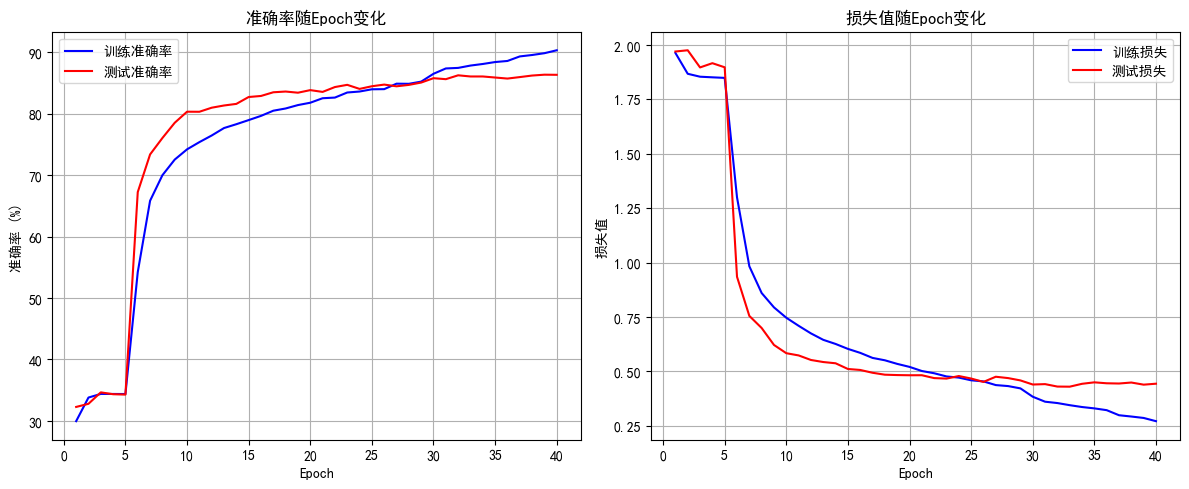

def plot_epoch_metrics(train_acc, test_acc, train_loss, test_loss):

epochs = range(1, len(train_acc) + 1)

plt.figure(figsize=(12, 5))

# 准确率曲线

plt.subplot(1, 2, 1)

plt.plot(epochs, train_acc, 'b-', label='训练准确率')

plt.plot(epochs, test_acc, 'r-', label='测试准确率')

plt.xlabel('Epoch')

plt.ylabel('准确率 (%)')

plt.title('准确率随Epoch变化')

plt.legend()

plt.grid(True)

# 损失曲线

plt.subplot(1, 2, 2)

plt.plot(epochs, train_loss, 'b-', label='训练损失')

plt.plot(epochs, test_loss, 'r-', label='测试损失')

plt.xlabel('Epoch')

plt.ylabel('损失值')

plt.title('损失值随Epoch变化')

plt.legend()

plt.grid(True)

plt.tight_layout()

plt.show()

```python
# Main function: train the model
def main():
    # Hyperparameters
    epochs = 40           # total number of training epochs
    freeze_epochs = 5     # epochs during which the convolutional backbone stays frozen
    learning_rate = 1e-3  # initial learning rate
    weight_decay = 1e-4   # weight decay

    # Create ResNet18 with pretrained weights
    model = create_resnet18(pretrained=True, num_classes=10)

    # Optimizer and loss function
    optimizer = optim.Adam(model.parameters(), lr=learning_rate, weight_decay=weight_decay)
    criterion = nn.CrossEntropyLoss()

    # LR scheduler: halve the LR once the test loss has not improved for more than 2 epochs.
    # (verbose=True is deprecated in recent PyTorch; the training loop prints the LR instead.)
    scheduler = optim.lr_scheduler.ReduceLROnPlateau(
        optimizer, mode='min', factor=0.5, patience=2
    )

    # Train (backbone frozen for the first 5 epochs, then unfrozen)
    final_accuracy = train_with_freeze_schedule(
        model=model,
        train_loader=train_loader,
        test_loader=test_loader,
        criterion=criterion,
        optimizer=optimizer,
        scheduler=scheduler,
        device=device,
        epochs=epochs,
        freeze_epochs=freeze_epochs
    )
    print(f"Training finished! Final test accuracy: {final_accuracy:.2f}%")

    # # Save the model
    # torch.save(model.state_dict(), 'resnet18_cifar10_finetuned.pth')
    # print("Model saved to: resnet18_cifar10_finetuned.pth")

if __name__ == "__main__":
    main()
```

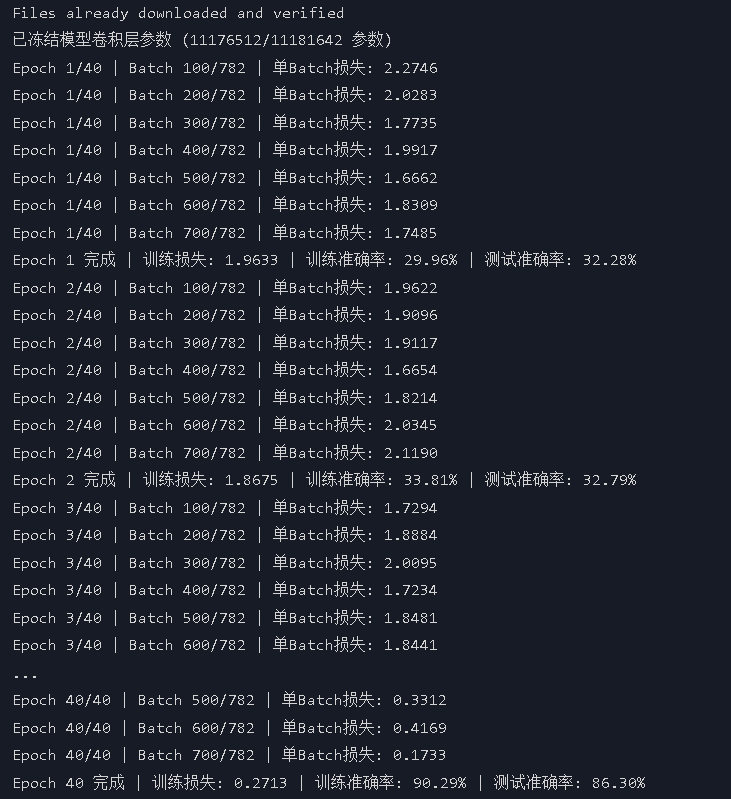

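A few clear observations:

- After unfreezing, just a few epochs are enough to reach what the CNN from the earlier lessons achieved after 20 epochs of training. This is the advantage of pretraining.

- The training set uses data augmentation such as RandomCrop (random cropping), RandomHorizontalFlip (random horizontal flipping) and ColorJitter (color jitter), so the images the model sees during training carry more "noise" and distortion. A car image, for example, may be cropped to a partial view with altered colors, which makes it harder to learn from. The test images are standard and unaugmented, so they are comparatively easy to predict. Training accuracy can therefore temporarily fall below test accuracy, especially early in training, when augmentation has the most impact. The gap narrows as the model adapts to the augmented data. In this code the training accuracy is also averaged over the whole epoch while the weights are still changing, whereas the test accuracy is measured once at the end of the epoch.

- After convergence, the accuracy exceeds the 80% reached by the non-pretrained model, a substantial improvement.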
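One way to check the augmentation explanation directly is to load the training images with `test_transform` (no augmentation), measure accuracy on them, and compare it with the test accuracy of the same weights. The `evaluate_accuracy` helper below is a hypothetical name. It repeats the evaluation loop from the training function, and the CIFAR-10 usage is shown as comments because it assumes a trained `model` is in scope.

```python
import torch


def evaluate_accuracy(model, loader, device):
    """Accuracy (%) of model on loader, computed in eval mode without gradients."""
    model.eval()
    correct, total = 0, 0
    with torch.no_grad():
        for data, target in loader:
            data, target = data.to(device), target.to(device)
            predicted = model(data).argmax(dim=1)
            correct += (predicted == target).sum().item()
            total += target.size(0)
    return 100.0 * correct / total

# Usage after training (training images with the test-time transform, i.e. no augmentation):
# train_eval_dataset = datasets.CIFAR10(root='./data', train=True, transform=test_transform)
# train_eval_loader = DataLoader(train_eval_dataset, batch_size=64, shuffle=False)
# print(evaluate_accuracy(model, train_eval_loader, device),
#       evaluate_accuracy(model, test_loader, device))
```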