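1. Audio framework

Dynamic PCM (DPCM) lets an ALSA PCM device digitally route its PCM audio to various digital endpoints while the PCM stream is running. For example, PCM0 can route digital audio to I2S DAI0, I2S DAI1, or PDM DAI2. This is useful for system-on-chip DSP drivers that expose several ALSA PCMs and can route to several DAIs.

DPCM runtime routing is determined by ALSA mixer settings, in the same way that analog signals are routed in an ASoC codec driver. DPCM uses a DAPM graph that represents the DSP's internal audio paths, and uses the mixer settings to determine which path each ALSA PCM uses.

DPCM reuses all existing component codec, platform, and DAI drivers without any modification.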

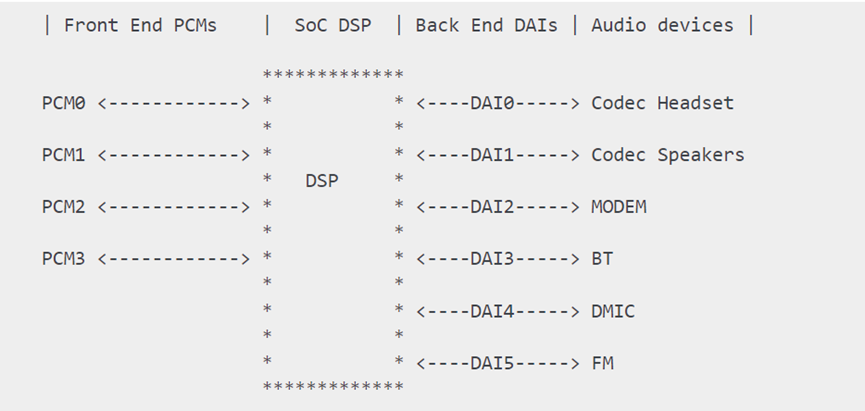

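Take a phone audio system built around an SoC DSP as an example:

The diagram shows a simple smartphone audio subsystem. It supports Bluetooth, FM digital radio, speakers, a headset jack, digital microphones, and a cellular modem. The sound card exposes 4 DSP front-end (FE) ALSA PCM devices and supports 6 back-end (BE) DAIs. Each FE PCM can digitally route audio data to any BE DAI, and an FE PCM can also route audio to more than one BE DAI.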
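The "any FE to any BE, possibly several at once" relationship can be pictured as a small routing matrix. The sketch below is only an illustration of that idea: `route_matrix`, `route_set()`, and `route_is_set()` are hypothetical names, and the real DPCM core tracks connections through the DAPM graph rather than a bitmask.

```c
#include <stdbool.h>
#include <stdint.h>

#define NUM_FE 4   /* front-end PCMs in the example sound card */
#define NUM_BE 6   /* back-end DAIs in the example sound card */

/* One bitmask per FE PCM; bit n set means "routed to BE DAI n". */
struct route_matrix {
    uint8_t fe_to_be[NUM_FE];
};

/* Connect or disconnect one FE/BE pair; returns false on a bad index. */
static bool route_set(struct route_matrix *m, int fe, int be, bool on)
{
    if (fe < 0 || fe >= NUM_FE || be < 0 || be >= NUM_BE)
        return false;
    if (on)
        m->fe_to_be[fe] |= (uint8_t)(1u << be);
    else
        m->fe_to_be[fe] &= (uint8_t)~(1u << be);
    return true;
}

/* Report whether the given FE PCM is currently routed to the given BE DAI. */
static bool route_is_set(const struct route_matrix *m, int fe, int be)
{
    if (fe < 0 || fe >= NUM_FE || be < 0 || be >= NUM_BE)
        return false;
    return ((m->fe_to_be[fe] >> be) & 1u) != 0;
}
```

Setting bits 1 and 2 for FE 0 models PCM0 feeding two BE DAIs at the same time; clearing one bit leaves the other route in place.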

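A few concepts appear here:

- Front End PCMs: the audio front ends. Each front end corresponds to one PCM device.

- Back End DAIs: the audio back ends. Each back end corresponds to one DAI interface, and one FE PCM can connect to one or more BE DAIs.

- Audio Device: headset, speaker, earpiece, mic, bt, modem, and so on. Different devices may sit on different DAI interfaces or share one (in the diagram above, Speaker and Earpiece both connect to DAI1).

- SoC DSP: within the scope of this article it implements the routing function, connecting FE PCMs to BE DAIs, for example PCM0 to DAI1.

Take the MSM8996 as an example. For the detailed schematic, check the official website; for a company project, Qualcomm grants project access through which you can obtain materials such as schematics, user guides, and other development documentation.

An FE corresponds to a PCM device and can be thought of as a logical concept, whereas a BE corresponds to concrete hardware.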

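The FEs on the MSM8996 are:

- deep_buffer
- low_latency
- multi_channel
- compress_offload
- audio_record
- usb_audio
- a2dp_audio
- voice_call

The BEs mainly correspond to the I2S interfaces, some wired to the internal codec and some reserved for an external codec:

- SLIM_BUS
- Aux_PCM
- Primary_MI2S
- Secondary_MI2S
- Tertiary_MI2S
- Quaternary_MI2S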

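Two concepts matter most in Qualcomm's HAL layer: usecase and device.

A usecase is an audio application scenario and corresponds to an FE. Common usecases include:

- low_latency: low-latency playback such as key clicks, touch sounds, and game background sound
- deep_buffer: playback that tolerates latency, such as music and video
- compress_offload: playback of sources in formats such as mp3, flac, and aac. These sources are not decoded in software; the data is sent straight to the hardware decoder (aDSP), which does the decoding
- record: ordinary recording
- record_low_latency: low-latency recording
- voice_call: voice calls
- voip_call: VoIP calls

A device is an audio endpoint, either an output endpoint (such as speaker, headphone, earpiece) or an input endpoint (such as headset-mic, builtin-mic). Qualcomm's HAL extends the set of audio devices. The speaker, for example, is split into:

- SND_DEVICE_OUT_SPEAKER: the ordinary loudspeaker device
- SND_DEVICE_OUT_SPEAKER_PROTECTED: the loudspeaker device with protection
- SND_DEVICE_OUT_VOICE_SPEAKER: the ordinary hands-free device for calls
- SND_DEVICE_OUT_VOICE_SPEAKER_PROTECTED: the hands-free device for calls with protection

The audio DSP can roughly be equated with the codec. On most Qualcomm platforms the codec has two parts: a digital codec on the MSM and an analog codec on the PMIC.

In Qualcomm's HAL, each device connects to exactly one BE DAI, while one BE DAI can serve several devices. Once the device is known, the BE DAI is therefore known as well.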
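Because the device-to-BE-DAI relation is many-to-one, it can be modelled as a plain lookup table. The following is a minimal sketch under stated assumptions: the `demo_device` enum and `demo_get_be_dai()` are hypothetical names, not the HAL's `snd_device_t` or any real HAL function, and the device/DAI pairs are illustrative and differ between boards.

```c
#include <stddef.h>

/* Hypothetical device ids for illustration; not the HAL's snd_device_t. */
enum demo_device {
    DEMO_DEV_SPEAKER,
    DEMO_DEV_EARPIECE,
    DEMO_DEV_HEADPHONES,
    DEMO_DEV_MAX
};

/* Each device maps to exactly one BE DAI; several devices may share one.
 * The entries follow the order of enum demo_device. */
static const char *const demo_be_dai_table[DEMO_DEV_MAX] = {
    "QUAT_MI2S_RX",   /* speaker */
    "QUAT_MI2S_RX",   /* earpiece shares the speaker's DAI */
    "TERT_MI2S_RX",   /* headphones */
};

/* Return the BE DAI name for a device, or NULL for an unknown device. */
static const char *demo_get_be_dai(int dev)
{
    if (dev < 0 || dev >= DEMO_DEV_MAX)
        return NULL;
    return demo_be_dai_table[dev];
}
```

The lookup only works in one direction: a device yields its DAI, but a DAI name alone does not identify which of its devices is in use.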

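2. Audio path

As the framework above shows, setting up an audio path means opening the FE PCM, the BE DAI, and the device, and chaining the three together.

FE_PCMs <=> BE_DAIs <=> Devices

(1) Opening the PCM

Opening a PCM stream means opening an audio stream, and each audio stream corresponds to one usecase. The stream-opening flow was covered in an earlier chapter; here we look at how the usecase leads to the matching FE PCM.

start_output_stream() makes this call:

```cpp
out->pcm_device_id = platform_get_pcm_device_id(out->usecase, PCM_PLAYBACK);
```

It looks up the pcm_id for the usecase, then calls select_devices() to choose the device for that usecase.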
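The usecase-to-PCM lookup can be pictured as a table indexed by usecase and stream direction. This is a sketch only: the article does not show how `platform_get_pcm_device_id()` is implemented, so the table layout, the `demo_*` names, and the id values below are all assumptions made for illustration.

```c
/* Hypothetical usecase ids and stream directions, for illustration only. */
enum demo_usecase {
    DEMO_UC_DEEP_BUFFER,
    DEMO_UC_LOW_LATENCY,
    DEMO_UC_RECORD,
    DEMO_UC_MAX
};

enum demo_dir { DEMO_PLAYBACK = 0, DEMO_CAPTURE = 1 };

/* Rows are usecases, columns are {playback id, capture id}.
 * -1 marks an unsupported direction. The id values are made up. */
static const int demo_pcm_table[DEMO_UC_MAX][2] = {
    {  0, -1 },   /* deep buffer: playback only */
    {  9, -1 },   /* low latency: playback only */
    { -1,  0 },   /* record: capture only */
};

/* Return the PCM device id, or -1 if the usecase/direction pair is invalid. */
static int demo_get_pcm_device_id(int usecase, int dir)
{
    if (usecase < 0 || usecase >= DEMO_UC_MAX)
        return -1;
    if (dir != DEMO_PLAYBACK && dir != DEMO_CAPTURE)
        return -1;
    return demo_pcm_table[usecase][dir];
}
```

A negative result plays the same role as the `out->pcm_device_id < 0` check in start_output_stream(): the caller treats it as "no FE PCM for this usecase" and bails out.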

The relevant part of start_output_stream():

```cpp
int start_output_stream(struct stream_out *out)
{
    int ret = 0;
    struct audio_usecase *uc_info;
    struct audio_device *adev = out->dev;
    bool a2dp_combo = false;

    ......

    /* Map the usecase to its FE PCM device id. */
    out->pcm_device_id = platform_get_pcm_device_id(out->usecase, PCM_PLAYBACK);
    if (out->pcm_device_id < 0) {
        ALOGE("%s: Invalid PCM device id(%d) for the usecase(%d)",
              __func__, out->pcm_device_id, out->usecase);
        ret = -EINVAL;
        goto error_config;
    }

    /* Record the usecase; the sound devices are filled in by select_devices(). */
    uc_info = (struct audio_usecase *)calloc(1, sizeof(struct audio_usecase));
    uc_info->id = out->usecase;
    uc_info->type = PCM_PLAYBACK;
    uc_info->stream.out = out;
    uc_info->devices = out->devices;
    uc_info->in_snd_device = SND_DEVICE_NONE;
    uc_info->out_snd_device = SND_DEVICE_NONE;

    ......

    if ((out->devices & AUDIO_DEVICE_OUT_ALL_A2DP) &&
        (!audio_extn_a2dp_is_ready())) {
        if (!a2dp_combo) {
            check_a2dp_restore_l(adev, out, false);
        } else {
            /* A2DP is not ready: route to the speaker only, then restore
               the original device mask. */
            audio_devices_t dev = out->devices;
            if (dev & AUDIO_DEVICE_OUT_SPEAKER_SAFE)
                out->devices = AUDIO_DEVICE_OUT_SPEAKER_SAFE;
            else
                out->devices = AUDIO_DEVICE_OUT_SPEAKER;
            select_devices(adev, out->usecase);
            out->devices = dev;
        }
    } else {
        select_devices(adev, out->usecase);
    }

    ......
}
```

The voice-call scenario is different, because it is not a conventional audio stream. On entering a call, the mode is first set to AUDIO_MODE_IN_CALL, then the audio device is passed in as routing=$device, and out_set_parameters() is called. If that function detects voice-call mode, it opens the voice-call FE PCM.

```cpp
static int out_set_parameters(struct audio_stream *stream, const char *kvpairs)
{
    ......

    if (new_dev != AUDIO_DEVICE_NONE) {
        bool same_dev = out->devices == new_dev;
        out->devices = new_dev;

        if (output_drives_call(adev, out)) {
            if (!voice_is_call_state_active(adev)) {
                /* No call is active yet: start one if we are in call mode. */
                if (adev->mode == AUDIO_MODE_IN_CALL) {
                    adev->current_call_output = out;
                    ret = voice_start_call(adev);
                }
            } else {
                /* A call is already active: only re-route its devices. */
                adev->current_call_output = out;
                voice_update_devices_for_all_voice_usecases(adev);
            }
        }
    }

    ......
}

int voice_start_call(struct audio_device *adev)
{
    int ret = 0;

    adev->voice.in_call = true;

    voice_set_mic_mute(adev, adev->voice.mic_mute);

    ret = voice_extn_start_call(adev);
    if (ret == -ENOSYS) {
        /* No voice extension available: fall back to the plain voice-call usecase. */
        ret = voice_start_usecase(adev, USECASE_VOICE_CALL);
    }

    return ret;
}
```

(2) Route selection

Qualcomm's HAL has a key configuration file, mixer_paths.xml, which mainly defines the audio paths and the codec routing. For example, the usecase-related entries look like this:

```xml
<path name="deep-buffer-playback speaker">
    <ctl name="QUAT_MI2S_RX Audio Mixer MultiMedia1" value="1" />
</path>

<path name="deep-buffer-playback headphones">
    <ctl name="TERT_MI2S_RX Audio Mixer MultiMedia1" value="1" />
</path>

<path name="deep-buffer-playback earphones">
    <ctl name="QUAT_MI2S_RX Audio Mixer MultiMedia1" value="1" />
</path>

<path name="low-latency-playback speaker">
    <ctl name="QUAT_MI2S_RX Audio Mixer MultiMedia5" value="1" />
</path>

<path name="low-latency-playback headphones">
    <ctl name="TERT_MI2S_RX Audio Mixer MultiMedia5" value="1" />
</path>

<path name="low-latency-playback earphones">
    <ctl name="QUAT_MI2S_RX Audio Mixer MultiMedia5" value="1" />
</path>
```

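These paths are the routes that connect a usecase to a device. For example, "deep-buffer-playback speaker" is the route between the deep-buffer-playback FE PCM and the speaker device. Enabling "deep-buffer-playback speaker" connects the deep-buffer-playback FE PCM to the speaker device; disabling it breaks that connection.

As noted earlier, a device connects to exactly one BE DAI, so fixing the device also fixes the BE DAI. Every one of these routes therefore implies a BE DAI connection: the FE PCM does not reach the device directly. It connects to the BE DAI first, and the BE DAI connects to the device. This helps in reading routing controls, which describe the connection between an FE PCM and a BE DAI. A playback routing control is generally named "BE_DAI Audio Mixer FE_PCM", and a capture routing control is generally named "FE_PCM Audio Mixer BE_DAI".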

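Take the path deep-buffer-playback speaker as an example:

```xml
<ctl name="QUAT_MI2S_RX Audio Mixer MultiMedia1" value="1" />
```

- MultiMedia1: the FE PCM for the deep_buffer usecase
- QUAT_MI2S_RX: the BE DAI that the speaker device is connected to
- Audio Mixer: denotes the DSP routing function
- value: 1 means connect, 0 means disconnect

This control means: connect the MultiMedia1 PCM to the QUAT_MI2S_RX DAI (the fourth I2S interface). It says nothing about the connection between the QUAT_MI2S_RX DAI and the speaker device, because no routing control is needed between BE DAIs and devices. As stressed above, a device connects to exactly one BE DAI, so fixing the device also fixes the BE DAI.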
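The naming convention for routing controls can be sketched as a small helper that assembles the control name from its two parts. `build_route_ctl_name()` is a hypothetical function written for this illustration, and since the article describes the pattern as the usual one rather than a guarantee, real control names should always be checked against the target's mixer_paths.xml.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdio.h>

/*
 * Build a routing control name following the usual pattern:
 *   playback: "<BE_DAI> Audio Mixer <FE_PCM>"
 *   capture:  "<FE_PCM> Audio Mixer <BE_DAI>"
 * Returns 0 on success, -1 if the name did not fit in buf.
 */
static int build_route_ctl_name(char *buf, size_t len,
                                const char *fe_pcm, const char *be_dai,
                                bool playback)
{
    int n;

    if (playback)
        n = snprintf(buf, len, "%s Audio Mixer %s", be_dai, fe_pcm);
    else
        n = snprintf(buf, len, "%s Audio Mixer %s", fe_pcm, be_dai);

    return (n < 0 || (size_t)n >= len) ? -1 : 0;
}
```

Called with "MultiMedia1", "QUAT_MI2S_RX", and `playback = true`, it produces the control name used in the example above, "QUAT_MI2S_RX Audio Mixer MultiMedia1".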

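Toggling a routing control does more than connect or disconnect the FE PCM and the BE DAI; it also enables or disables the BE DAI.

The functions that switch these routes are enable_audio_route() and disable_audio_route(). The names are apt: they connect or disconnect FE PCMs and BE DAIs.

The flow is simple. Joining the usecase name and the device's backend name (looked up in backend_table) with a space gives the route's path name, which is then passed to audio_route_apply_and_update_path() to set up the route: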

```cpp
const char * const use_case_table[AUDIO_USECASE_MAX] = {
    [USECASE_AUDIO_PLAYBACK_DEEP_BUFFER] = "deep-buffer-playback",
    [USECASE_AUDIO_PLAYBACK_LOW_LATENCY] = "low-latency-playback",
    [USECASE_AUDIO_PLAYBACK_WITH_HAPTICS] = "audio-with-haptics-playback",
    [USECASE_AUDIO_PLAYBACK_HIFI] = "hifi-playback",
    [USECASE_AUDIO_PLAYBACK_OFFLOAD] = "compress-offload-playback",
    [USECASE_AUDIO_PLAYBACK_TTS] = "audio-tts-playback",
    [USECASE_AUDIO_PLAYBACK_ULL] = "audio-ull-playback",
    [USECASE_AUDIO_PLAYBACK_MMAP] = "mmap-playback",
    ......
};

    /* Excerpt from inside a function whose opening is not shown. */

    // TBD - do these go to the platform-info.xml file.
    // will help in avoiding strdups here
    backend_table[SND_DEVICE_IN_BT_SCO_MIC] = strdup("bt-sco");
    backend_table[SND_DEVICE_IN_BT_SCO_MIC_WB] = strdup("bt-sco-wb");
    backend_table[SND_DEVICE_IN_BT_SCO_MIC_NREC] = strdup("bt-sco");
    backend_table[SND_DEVICE_IN_BT_SCO_MIC_WB_NREC] = strdup("bt-sco-wb");
    backend_table[SND_DEVICE_OUT_BT_SCO] = strdup("bt-sco");
    backend_table[SND_DEVICE_OUT_BT_SCO_WB] = strdup("bt-sco-wb");
    backend_table[SND_DEVICE_OUT_BT_A2DP] = strdup("bt-a2dp");
    backend_table[SND_DEVICE_OUT_SPEAKER_AND_BT_A2DP] = strdup("speaker-and-bt-a2dp");
    backend_table[SND_DEVICE_OUT_SPEAKER_SAFE_AND_BT_A2DP] =
        strdup("speaker-safe-and-bt-a2dp");
    ......
}
```

```cpp
void platform_add_backend_name(void *platform, char *mixer_path,
                               snd_device_t snd_device)
{
    struct platform_data *my_data = (struct platform_data *)platform;

    if ((snd_device < SND_DEVICE_MIN) || (snd_device >= SND_DEVICE_MAX)) {
        ALOGE("%s: Invalid snd_device = %d", __func__, snd_device);
        return;
    }

    /* Append " <backend>" to the usecase name when the device has a backend suffix. */
    const char * suffix = backend_table[snd_device];

    if (suffix != NULL) {
        strlcat(mixer_path, " ", MIXER_PATH_MAX_LENGTH);
        strlcat(mixer_path, suffix, MIXER_PATH_MAX_LENGTH);
    }
}
```

```cpp
int enable_audio_route(struct audio_device *adev,
                       struct audio_usecase *usecase)
{
    snd_device_t snd_device;
    char mixer_path[MIXER_PATH_MAX_LENGTH];

    if (usecase == NULL)
        return -EINVAL;

    ALOGV("%s: enter: usecase(%d)", __func__, usecase->id);

    audio_extn_sound_trigger_update_stream_status(usecase, ST_EVENT_STREAM_BUSY);

    if (usecase->type == PCM_CAPTURE) {
        struct stream_in *in = usecase->stream.in;
        struct audio_usecase *uinfo;
        snd_device = usecase->in_snd_device;

        if (in) {
            if (in->enable_aec || in->enable_ec_port) {
                /* Pick the output device to use as the echo reference. */
                audio_devices_t out_device = AUDIO_DEVICE_OUT_SPEAKER;
                struct listnode *node;
                struct audio_usecase *voip_usecase = get_usecase_from_list(adev,
                                                         USECASE_AUDIO_PLAYBACK_VOIP);
                if (voip_usecase) {
                    out_device = voip_usecase->stream.out->devices;
                } else if (adev->primary_output &&
                           !adev->primary_output->standby) {
                    out_device = adev->primary_output->devices;
                } else {
                    list_for_each(node, &adev->usecase_list) {
                        uinfo = node_to_item(node, struct audio_usecase, list);
                        if (uinfo->type != PCM_CAPTURE) {
                            out_device = uinfo->stream.out->devices;
                            break;
                        }
                    }
                }
                platform_set_echo_reference(adev, true, out_device);
                in->ec_opened = true;
            }
        }
    } else
        snd_device = usecase->out_snd_device;

    audio_extn_utils_send_app_type_cfg(adev, usecase);
    audio_extn_ma_set_device(usecase);
    audio_extn_utils_send_audio_calibration(adev, usecase);

    // we shouldn't truncate mixer_path
    ALOGW_IF(strlcpy(mixer_path, use_case_table[usecase->id], sizeof(mixer_path))
            >= sizeof(mixer_path), "%s: truncation on mixer path", __func__);
    // this also appends to mixer_path
    platform_add_backend_name(adev->platform, mixer_path, snd_device);
    ALOGD("%s: usecase(%d) apply and update mixer path: %s", __func__, usecase->id, mixer_path);
    audio_route_apply_and_update_path(adev->audio_route, mixer_path);

    ALOGV("%s: exit", __func__);
    return 0;
}
```

(3) Opening the device

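In the Android audio framework layer, an audio device only represents an input or output endpoint; the framework does not care which components (widgets) sit between the BE DAI and the endpoint. Anyone working on the low-level side, however, must know exactly which components the path crosses on its way from the BE DAI to the endpoint. To find out, consult Qualcomm's schematics, which include a page with the detailed codec schematic and routing.

For sound to come out of the speaker endpoint, the AIF1, DAC1, and SPKOUT components must be switched on and chained together, so that audio data can follow the path AIF1 > DAC1 > SPKOUT > SPEAKER all the way to the speaker.

The speaker path in mixer_paths.xml looks like this:

```xml
<path name="speaker">
    <ctl name="SPKL DAC1 Switch" value="1" />
    <ctl name="DAC1L AIF1RX1 Switch" value="1" />
    <ctl name="DAC1R AIF1RX2 Switch" value="1" />
</path>
```

These device paths are applied by enable_snd_device() and removed by disable_snd_device():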

```cpp
int enable_snd_device(struct audio_device *adev,
                      snd_device_t snd_device)
{
    int i, num_devices = 0;
    snd_device_t new_snd_devices[2];
    int ret_val = -EINVAL;

    if (snd_device < SND_DEVICE_MIN ||
        snd_device >= SND_DEVICE_MAX) {
        ALOGE("%s: Invalid sound device %d", __func__, snd_device);
        goto on_error;
    }

    platform_send_audio_calibration(adev->platform, snd_device);

    /* Already open: only bump the reference count. */
    if (adev->snd_dev_ref_cnt[snd_device] >= 1) {
        ALOGV("%s: snd_device(%d: %s) is already active",
              __func__, snd_device, platform_get_snd_device_name(snd_device));
        goto on_success;
    }

    /* due to the possibility of calibration overwrite between listen
       and audio, notify sound trigger hal before audio calibration is sent */
    audio_extn_sound_trigger_update_device_status(snd_device,
                                                  ST_EVENT_SND_DEVICE_BUSY);

    if (audio_extn_spkr_prot_is_enabled())
        audio_extn_spkr_prot_calib_cancel(adev);

    audio_extn_dsm_feedback_enable(adev, snd_device, true);

    if ((snd_device == SND_DEVICE_OUT_SPEAKER ||
         snd_device == SND_DEVICE_OUT_SPEAKER_SAFE ||
         snd_device == SND_DEVICE_OUT_SPEAKER_REVERSE ||
         snd_device == SND_DEVICE_OUT_VOICE_SPEAKER) &&
        audio_extn_spkr_prot_is_enabled()) {
        /* Protected speaker: hand over to the speaker-protection extension. */
        if (platform_get_snd_device_acdb_id(snd_device) < 0) {
            goto on_error;
        }
        if (audio_extn_spkr_prot_start_processing(snd_device)) {
            ALOGE("%s: spkr_start_processing failed", __func__);
            goto on_error;
        }
    } else if (platform_can_split_snd_device(snd_device,
                                             &num_devices,
                                             new_snd_devices) == 0) {
        /* Combo device: enable each component device separately. */
        for (i = 0; i < num_devices; i++) {
            enable_snd_device(adev, new_snd_devices[i]);
        }
        platform_set_speaker_gain_in_combo(adev, snd_device, true);
    } else {
        char device_name[DEVICE_NAME_MAX_SIZE] = {0};
        if (platform_get_snd_device_name_extn(adev->platform, snd_device, device_name) < 0 ) {
            ALOGE(" %s: Invalid sound device returned", __func__);
            goto on_error;
        }

        ALOGD("%s: snd_device(%d: %s)", __func__, snd_device, device_name);

        if (is_a2dp_device(snd_device) &&
            (audio_extn_a2dp_start_playback() < 0)) {
            ALOGE("%s: failed to configure A2DP control path", __func__);
            goto on_error;
        }

        /* Apply the device's mixer path, e.g. "speaker". */
        audio_route_apply_and_update_path(adev->audio_route, device_name);
    }
on_success:
    adev->snd_dev_ref_cnt[snd_device]++;
    ret_val = 0;
on_error:
    return ret_val;
}
```

A few concepts in this code deserve attention:

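(1) Device reference counting

Each device has its own reference count, snd_dev_ref_cnt. enable_snd_device() increments it; if the count is already 1 or more on entry, the device is already open and is not opened again. disable_snd_device() decrements it; when the count reaches 0, no usecase needs the device any more, so the device is closed.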
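The reference-counting rule can be reduced to a few lines. The sketch below is a simplified model, not the HAL code: `refcnt_state`, `refcnt_enable()`, and `refcnt_disable()` are hypothetical names, and the two counters merely stand in for applying and resetting the device's mixer path.

```c
#define REFCNT_DEVICE_MAX 8   /* arbitrary size for this illustration */

struct refcnt_state {
    int ref_cnt[REFCNT_DEVICE_MAX];
    int hw_opens;    /* stand-in for "apply the device mixer path" */
    int hw_closes;   /* stand-in for "reset the device mixer path" */
};

/* Open the device on the first request only; always count the user. */
static int refcnt_enable(struct refcnt_state *s, int dev)
{
    if (dev < 0 || dev >= REFCNT_DEVICE_MAX)
        return -1;
    if (s->ref_cnt[dev] == 0)
        s->hw_opens++;
    s->ref_cnt[dev]++;
    return 0;
}

/* Drop one user; close the device when the last user is gone. */
static int refcnt_disable(struct refcnt_state *s, int dev)
{
    if (dev < 0 || dev >= REFCNT_DEVICE_MAX || s->ref_cnt[dev] <= 0)
        return -1;
    s->ref_cnt[dev]--;
    if (s->ref_cnt[dev] == 0)
        s->hw_closes++;
    return 0;
}
```

Two usecases sharing the speaker lead to two enable calls but a single hardware open, and the hardware close happens only on the second disable.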

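(2) Protected devices

Functions with the "audio_extn_spkr_prot" prefix deal with protected loudspeaker devices. These differ from other devices: although they are output devices, they usually also need a PCM_IN opened as I/V feedback, and the protection algorithm only works properly when that I/V feedback is available.

(3) Splitting multi-output devices

For example, SND_DEVICE_OUT_SPEAKER_AND_HEADPHONES is split into SND_DEVICE_OUT_SPEAKER + SND_DEVICE_OUT_HEADPHONES, and then speaker and headphones are opened one by one. Why split a multi-output device into "loudspeaker + other device"? Loudspeakers on current smartphones are usually protected and need to run a speaker-protection algorithm, while another device such as a Bluetooth headset may need to run the aptX algorithm. Without the split, only one acdb id can be sent down, so the speaker-protection algorithm and the aptX algorithm cannot both be brought up. One rule applies when splitting: if the devices are all connected to the same BE DAI, no split is needed.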

```cpp
int platform_can_split_snd_device(snd_device_t snd_device,
                                  int *num_devices,
                                  snd_device_t *new_snd_devices)
{
    int ret = -EINVAL;

    if (NULL == num_devices || NULL == new_snd_devices) {
        ALOGE("%s: NULL pointer ..", __func__);
        return -EINVAL;
    }

    /*
     * If wired headset/headphones/line devices share the same backend
     * with speaker/earpiece this routine returns -EINVAL.
     */
    if (snd_device == SND_DEVICE_OUT_SPEAKER_AND_HEADPHONES &&
        !platform_check_backends_match(SND_DEVICE_OUT_SPEAKER, SND_DEVICE_OUT_HEADPHONES)) {
        *num_devices = 2;
        new_snd_devices[0] = SND_DEVICE_OUT_SPEAKER;
        new_snd_devices[1] = SND_DEVICE_OUT_HEADPHONES;
        ret = 0;
    } else if (snd_device == SND_DEVICE_OUT_SPEAKER_AND_LINE &&
               !platform_check_backends_match(SND_DEVICE_OUT_SPEAKER, SND_DEVICE_OUT_LINE)) {
        *num_devices = 2;
        new_snd_devices[0] = SND_DEVICE_OUT_SPEAKER;
        new_snd_devices[1] = SND_DEVICE_OUT_LINE;
        ret = 0;
    }
    return ret;
}
```
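The split rule depends on platform_check_backends_match(), whose body is not shown in this article. The sketch below only illustrates the decision it feeds: `split_should_split()` is a hypothetical helper, and comparing backend names with `strcmp()` is an assumption about how two devices might be judged to share a BE DAI, not the HAL's actual logic.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/*
 * Decide whether a two-device combo should be split.
 * be_a and be_b are the BE DAI names of the two component devices.
 * Same backend -> keep the combo whole; different backends -> split.
 * A missing name is treated as "cannot decide, do not split".
 */
static bool split_should_split(const char *be_a, const char *be_b)
{
    if (be_a == NULL || be_b == NULL)
        return false;
    return strcmp(be_a, be_b) != 0;
}
```

With a speaker on "QUAT_MI2S_RX" and headphones on "TERT_MI2S_RX" the combo is split and each device is enabled on its own; if both sat on the same DAI, the combo device would be enabled as a single unit.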