Comprehensive Case Study (Using Logstash to Process Collected Logs)

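One prerequisite up front: your logs must be under control. Under control. Under control. Otherwise collecting them will make you question your life, especially in production, so work closely with the developers. Ha ha!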

Requirements

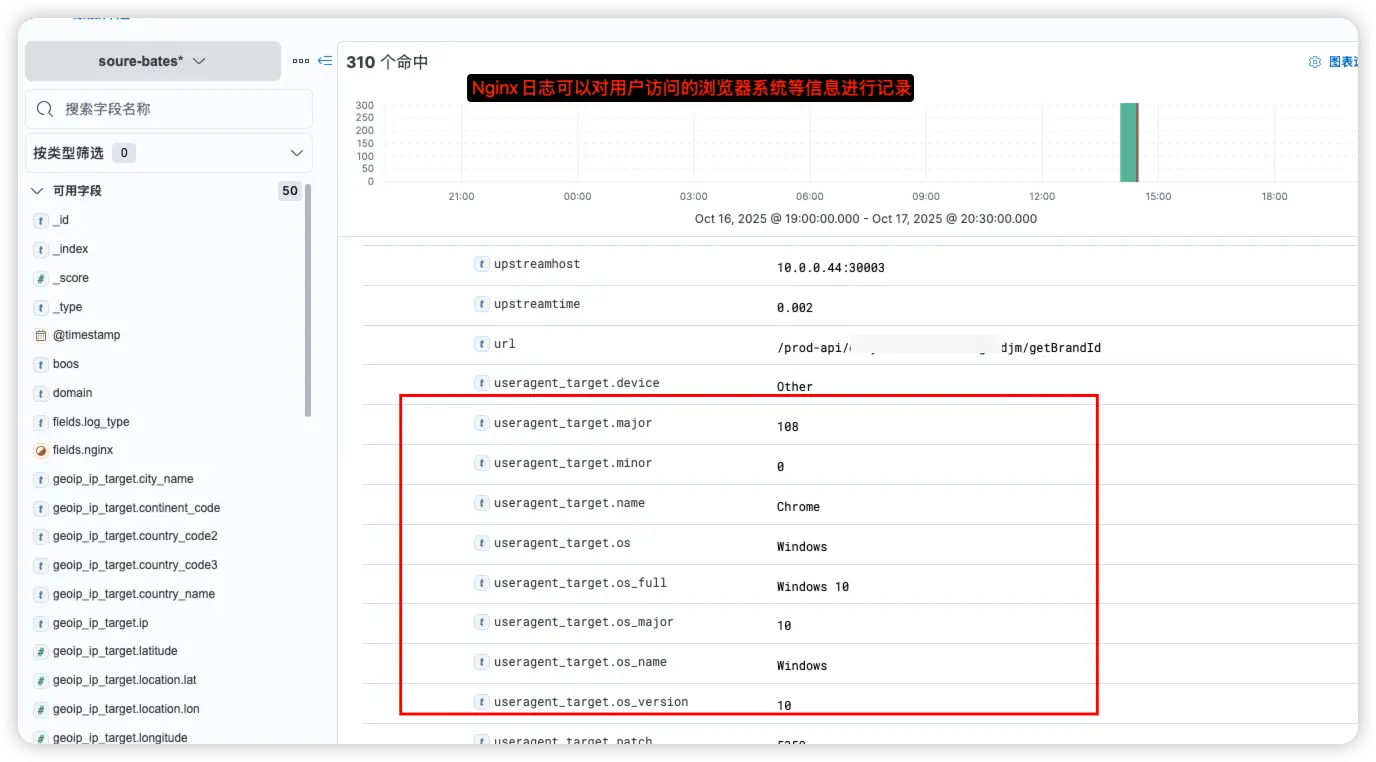

1. Analyze the Nginx access.log: client device, client IP address, IP geolocation, and PV, UV and IP statistics.

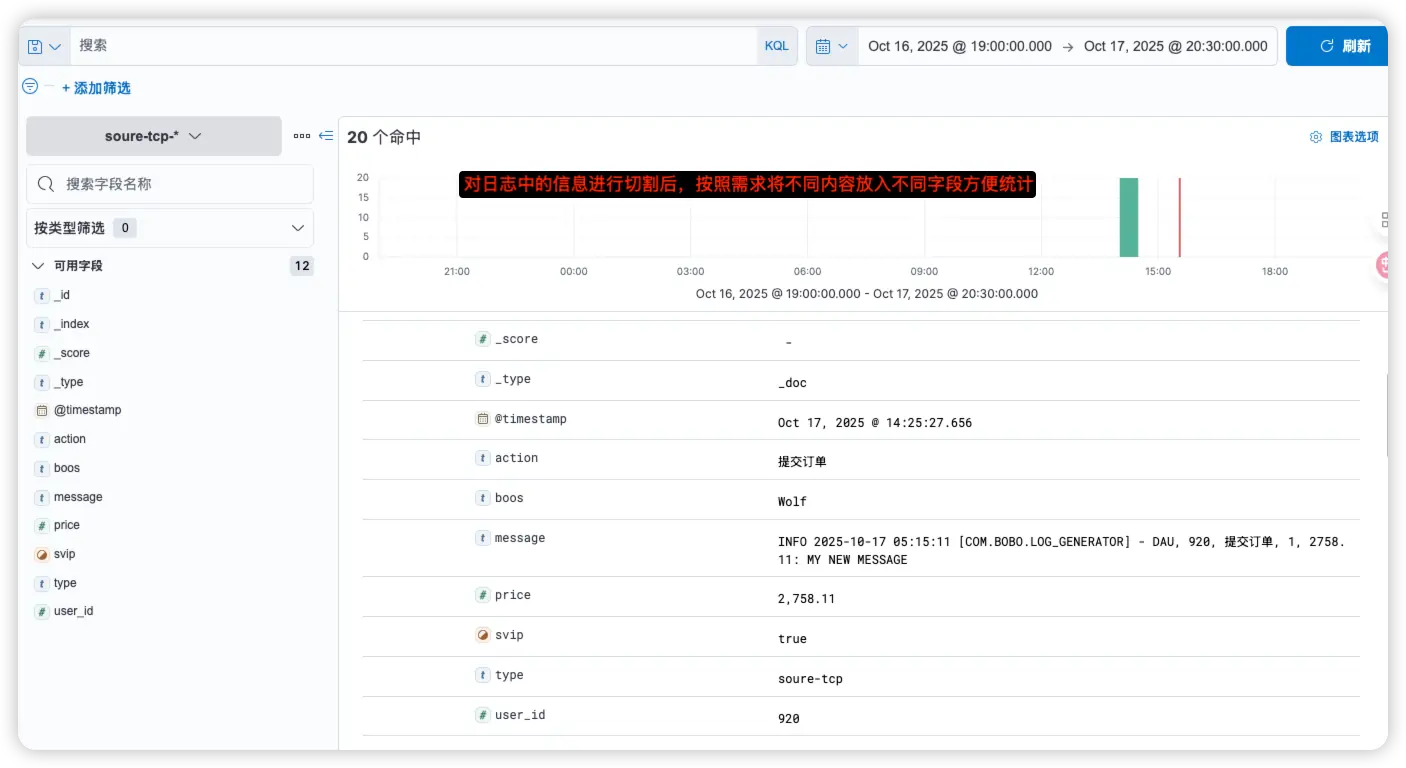

2. Analyze app.log: the number of SVIP users, their distribution, prices, and so on.

- Beats port: 7777
- TCP port: 8888
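
The two ports correspond to the `beats` and `tcp` inputs in the Logstash configuration, and each input sets a `type` on its events. The output section then routes on that `type`, and anything else falls through to a `soure-other-*` index. One detail is worth knowing: the `%{+yyyy.MM.dd}` date in the index name is formatted from the event's `@timestamp`, which Logstash keeps in UTC. On servers in UTC+8, an event stamped before 08:00 local time therefore lands in the previous day's index. The Python sketch below models that routing. `index_for` is a hypothetical helper, not part of Logstash, and `soure-redis` is only used as an example of an unmatched type.

```python
from datetime import datetime, timedelta, timezone


def index_for(event_type: str, timestamp: datetime) -> str:
    """Hypothetical model of the output conditionals plus the %{+yyyy.MM.dd} suffix."""
    if event_type == "soure-bates":
        prefix = "soure-bates"
    elif event_type == "soure-tcp":
        prefix = "soure-tcp"
    else:
        prefix = "soure-other"  # the final else branch
    # The index date comes from @timestamp, which is kept in UTC
    day = timestamp.astimezone(timezone.utc).strftime("%Y.%m.%d")
    return f"{prefix}-{day}"


utc_plus_8 = timezone(timedelta(hours=8))
ts = datetime(2025, 10, 17, 5, 14, 17, tzinfo=utc_plus_8)
print(index_for("soure-tcp", ts))    # soure-tcp-2025.10.16
print(index_for("soure-redis", ts))  # soure-other-2025.10.16
```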

1. Logstash configuration
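
Before the configuration itself, one Logstash behavior is worth knowing because it affects the `else` branch. According to the mutate filter documentation, the operations inside a single `mutate` block run in a fixed order (coerce, rename, update, replace, convert, gsub, uppercase, capitalize, lowercase, strip, split, join, merge, copy), not in the order they are written. Common options such as `add_field` and `remove_field` are applied only after those operations. The Python sketch below is a simplified model of that order, not Logstash code. It implements only the operations used here plus `%{field}` and `%{[field][N]}` references, and applies them to one `app.log` line to show what a single block containing split, add_field, strip, copy, rename, replace and uppercase actually produces.

```python
import re

# Simplified model of one Logstash mutate block (not Logstash source code).
# Only the operations used in this article are modeled, in their documented
# relative order; the add_field common option is applied after all of them.
ORDER = ["rename", "replace", "uppercase", "strip", "split", "copy"]


def sprintf(event, template):
    """Resolve %{field} and %{[field][N]} references against the event."""
    def resolve(match):
        ref = match.group(1)
        indexed = re.fullmatch(r"\[(\w+)\]\[(\d+)\]", ref)
        if indexed:
            return str(event[indexed.group(1)][int(indexed.group(2))])
        return str(event[ref])
    return re.sub(r"%\{([^}]+)\}", resolve, template)


def run_mutate_block(event, block):
    for op in ORDER:
        if op not in block:
            continue
        arg = block[op]
        if op == "rename":
            for src, dst in arg.items():
                if src in event:  # missing source field: nothing happens
                    event[dst] = event.pop(src)
        elif op == "replace":
            for field, template in arg.items():
                event[field] = sprintf(event, template)
        elif op == "uppercase":
            for field in arg:
                if field in event:
                    event[field] = event[field].upper()
        elif op == "strip":
            for field in arg:
                if field in event:
                    event[field] = event[field].strip()
        elif op == "split":
            for field, sep in arg.items():
                if field in event:
                    event[field] = event[field].split(sep)
        elif op == "copy":
            for src, dst in arg.items():
                if src in event:
                    event[dst] = event[src]
    # add_field runs last, after every operation above
    for field, template in block.get("add_field", {}).items():
        event[field] = sprintf(event, template)
    return event


block = {
    "split": {"message": "|"},
    "add_field": {
        "user_id": "%{[message][1]}",
        "action": "%{[message][2]}",
        "svip": "%{[message][3]}",
        "price": "%{[message][4]}",
    },
    "strip": ["svip"],
    "copy": {"price": "price_wolf"},
    "rename": {"svip": "supsvip"},
    "replace": {"message": "%{message}: My new Message"},
    "uppercase": ["message"],
}

line = "INFO 2025-10-17 05:14:17 [com.bobo.log_generator] - DAU|7218|提交订单|1|2439.51"
result = run_mutate_block({"message": line}, block)
print(result["price"])          # 2439.51: MY NEW MESSAGE
print("supsvip" in result)      # False: rename ran before svip existed
print("price_wolf" in result)   # False: copy ran before price existed
```

In this model, replace and uppercase act on `message` before it is split, so the suffix ends up in the last segment (`price`). Steps that must run in sequence belong in separate `mutate` blocks.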

```ini
root@ubuntu2204test99:~/elkf/logstash/pipeline# cat beats-tcp-redis-logstatsh-es.conf
input {
  beats {
    type => "soure-bates"
    port => 7777
  }
  tcp {
    type => "soure-tcp"
    port => 8888
  }
  #redis {
  #  type => "soure-redis"
  #  data_type => "list"
  #  db => 5
  #  host => "192.168.1.43"
  #  port => "6379"
  #  password => "123456"
  #  key => "filebeat-log"
  #}
}

filter {
  mutate {
    add_field => {
      "boos" => "Wolf"
    }
  }

  if [type] == "soure-bates" {
    mutate {
      remove_field => ["agent", "host", "@version", "ecs", "tags", "input", "log"]
    }
    geoip {
      source => "remote_ip"
      #fields => ["city_name", "country_name", "ip"]
      target => "geoip_ip_target"
    }
    useragent {
      source => "http_user_agent"
      target => "useragent_target"
    }
  } else {
    mutate {
      # Note: inside one mutate block, Logstash does not run these operations in
      # the order written. It applies rename, replace, uppercase, strip, split and
      # copy in that fixed order, then add_field/remove_field. So rename, strip and
      # copy run before svip/price exist and do nothing, and replace/uppercase change
      # message before it is split. Use separate mutate blocks for sequential steps.
      remove_field => ["port", "host", "@version"]
      # Split the given field on the given separator
      split => {
        "message" => "|"
      }
      # Add fields whose values reference the segments produced by the split
      add_field => {
        "user_id" => "%{[message][1]}"
        "action" => "%{[message][2]}"
        "svip" => "%{[message][3]}"
        "price" => "%{[message][4]}"
      }
      # Strip whitespace from both ends of the field
      strip => ["svip"]
      # Copy the price field into price_wolf
      copy => {
        "price" => "price_wolf"
      }
      # Rename the field
      rename => {
        "svip" => "supsvip"
      }
      # Replace the field content
      replace => { "message" => "%{message}: My new Message" }
      # Convert all letters of the given field to uppercase
      uppercase => [ "message" ]
    }
    # Convert the given fields to the corresponding data types
    mutate {
      convert => {
        "user_id" => "integer"
        "svip" => "boolean"
        "price" => "float"
      }
    }
  }
}

output {
  stdout {}
  if [type] == "soure-bates" {
    elasticsearch {
      hosts => ["192.168.1.99:9201","192.168.1.99:9202","192.168.1.99:9203"]
      user => "elastic"
      password => "123456"
      index => "soure-bates-%{+yyyy.MM.dd}"
    }
  } else if [type] == "soure-tcp" {
    elasticsearch {
      hosts => ["192.168.1.99:9201","192.168.1.99:9202","192.168.1.99:9203"]
      user => "elastic"
      password => "123456"
      index => "soure-tcp-%{+yyyy.MM.dd}"
    }
  } else {
    elasticsearch {
      hosts => ["192.168.1.99:9201","192.168.1.99:9202","192.168.1.99:9203"]
      user => "elastic"
      password => "123456"
      index => "soure-other-%{+yyyy.MM.dd}"
    }
  }
}
```

2. Filebeat configuration

2.1 Collecting Nginx JSON logs
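
In the Filebeat configuration, `json.keys_under_root: true` is what lets the Logstash `geoip` and `useragent` filters reference `remote_ip` and `http_user_agent` as top-level fields. Per the Filebeat documentation, the decoded JSON is otherwise placed under a `json` key. Likewise, with `fields_under_root: false` the custom `fields` stay nested under `fields`. The Python sketch below is a simplified model of where those keys land. It is not Filebeat code, `build_event` is a hypothetical name, real events carry more metadata (`@timestamp`, `agent`, `log`, ...), and the sample line is a shortened version of the reference log in section 2.3.

```python
import json


def build_event(line, keys_under_root, custom_fields, fields_under_root):
    """Simplified model of where Filebeat puts decoded JSON keys and custom fields."""
    decoded = json.loads(line)
    event = {}
    if keys_under_root:
        event.update(decoded)          # keys copied to the top level
    else:
        event["json"] = decoded        # default: nested under "json"
    if fields_under_root:
        event.update(custom_fields)
    else:
        event["fields"] = dict(custom_fields)  # nested under "fields"
    return event


line = '{"remote_ip":"221.8.152.37","status":"200","http_user_agent":"Mozilla/5.0"}'
fields = {"nginx": True, "log_type": "json"}

flat = build_event(line, True, fields, False)
nested = build_event(line, False, fields, False)
print(flat["remote_ip"])            # 221.8.152.37
print(nested["json"]["remote_ip"])  # 221.8.152.37
print(flat["fields"]["log_type"])   # json
```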

```yaml
# Nginx log monitoring
root@ubuntu2204test99:/usr/local/filebeat-7.17.24# cat filebeat-nginxlog-json-logstatsh.yml
filebeat.inputs:
- type: log
  enabled: true
  tags: ["nginxjson-log"]
  json.keys_under_root: true  # Decode JSON log lines and put their keys at the top level; non-JSON lines cause many errors
  paths:
    - /root/nginx_log/access_json_nginx.log
  fields:
    nginx: true
    log_type: json
  fields_under_root: false
output.logstash:
  hosts: ["192.168.1.99:7777"]

# Test startup command
root@ubuntu2204test99:/usr/local/filebeat-7.17.24# ./filebeat -e -c filebeat-nginxlog-json-logstatsh.yml --path.data /tmp/filebeat001
```

2.2 Collecting development logs
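
The `tcp` input on port 8888 turns each newline-terminated line into one event (this relies on the tcp input's default `line` codec). If `nc` is not installed, a rough Python equivalent of `cat /tmp/app.log | nc 192.168.1.99 8888` is sketched below. The function names `frame_lines` and `send_lines` are hypothetical; the host, port and file path are the ones used in this article.

```python
import socket
from typing import Iterable


def frame_lines(lines: Iterable[str]) -> bytes:
    """Encode lines as UTF-8, one newline-terminated line per event."""
    return b"".join(line.rstrip("\n").encode("utf-8") + b"\n" for line in lines)


def send_lines(host: str, port: int, lines: Iterable[str]) -> None:
    """Send the framed lines over a single TCP connection, like `cat file | nc`."""
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(frame_lines(lines))
        sock.shutdown(socket.SHUT_WR)  # tell the server no more data is coming


# Usage (hypothetical, with this article's host, port and file):
#   with open("/tmp/app.log", encoding="utf-8") as f:
#       send_lines("192.168.1.99", 8888, f)
```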

```shell
# Use nc to send the log to Logstash
root@ubuntu2204test99:~/log-python# cat /tmp/app.log | nc 192.168.1.99 8888
```

2.3 Nginx log reference format
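
Requirement 1 asks for PV, UV and IP statistics. Once the fields are in Elasticsearch these are normally computed with Kibana aggregations, but the definitions can be sketched in Python on lines in the format shown below. Assumptions (not from the original setup): PV counts every request line, IP counts distinct `remote_ip` values, and UV, which normally needs a visitor ID such as a cookie that this log format does not contain, is approximated by distinct (`remote_ip`, `http_user_agent`) pairs. The first two sample lines are shortened versions of the reference log; the third is a hypothetical repeat request from the same client.

```python
import json


def traffic_stats(lines):
    """PV / UV / IP from Nginx JSON access-log lines (UV is an approximation)."""
    pv = 0
    ips = set()
    visitors = set()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        entry = json.loads(line)
        pv += 1
        ips.add(entry["remote_ip"])
        # Assumption: a visitor is identified by IP + user agent
        visitors.add((entry["remote_ip"], entry["http_user_agent"]))
    return {"pv": pv, "uv": len(visitors), "ip": len(ips)}


sample = [
    '{"remote_ip":"221.8.152.37","url":"/prod-api/easy-test/goodjm/getBrandId","http_user_agent":"JM_PC/12.13.0.0"}',
    '{"remote_ip":"140.255.68.184","url":"/prod-api/easy-test/taobaoProduct/search","http_user_agent":"JM_PC/12.13.0.0"}',
    # Hypothetical extra request from the first client
    '{"remote_ip":"221.8.152.37","url":"/prod-api/easy-test/taobaoProduct/search","http_user_agent":"JM_PC/12.13.0.0"}',
]
print(traffic_stats(sample))  # {'pv': 3, 'uv': 2, 'ip': 2}
```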

```shell
root@ubuntu2204test99:~# cat nginx_log/access_json_nginx.log
{"timestamp":"2025-10-11T15:00:28.603+08:00","server_ip":"10.0.0.17","remote_ip":"221.8.152.37","xff":"-","remote_user":"-","domain":"www.testserv.com","url":"/prod-api/easy-test/goodjm/getBrandId","referer":"https://www.testserv.com/","upstreamtime":"0.002","responsetime":"0.003","request_method":"POST","status":"200","response_length":"505","request_length":"109","protocol":"HTTP/2.0","upstreamhost":"10.0.0.44:30003","http_user_agent":"Mozilla/5.0 (Windows NT 10.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/108.0.5359.125 Safari/537.36 JM_PC/12.13.0.0 Language/zh_CN jmpc;jdlog;windows;12.13.0.0;(Windows 10 Version 2004); JMPCHLM"}
{"timestamp":"2025-10-11T15:00:28.779+08:00","server_ip":"10.0.0.17","remote_ip":"140.255.68.184","xff":"-","remote_user":"-","domain":"www.testserv.com","url":"/prod-api/easy-test/taobaoProduct/search","referer":"https://www.testserv.com/","upstreamtime":"2.738","responsetime":"2.738","request_method":"POST","status":"200","response_length":"10496","request_length":"1285","protocol":"HTTP/2.0","upstreamhost":"10.0.0.14:30003","http_user_agent":"Mozilla/5.0 (Windows NT 10.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/108.0.5359.125 Safari/537.36 JM_PC/12.13.0.0 Language/zh_CN jmpc;jdlog;windows;12.13.0.0;(Windows 10 Version 22H2); JMPCHLM"}
```

2.4 Development logs
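
Each line below ends in `DAU|user_id|action|svip|price`, which is why the Logstash `split` on `|` yields `user_id`, `action`, `svip` and `price` at indexes 1 to 4. For requirement 2, the sketch below parses such lines the same way and computes the number of distinct SVIP users, the action distribution among SVIP users, and their average price. It assumes that `svip` value `1` means SVIP (consistent with the pipeline's boolean conversion) and reads "distribution" as the action breakdown; both are interpretations, and `parse_app_line` / `svip_stats` are hypothetical names. In practice these numbers would come from Kibana aggregations on the indexed fields.

```python
from collections import Counter


def parse_app_line(line):
    """Split on '|' like the Logstash pipeline; indexes 1-4 hold the business fields."""
    parts = line.strip().split("|")
    return {
        "user_id": int(parts[1]),
        "action": parts[2],
        # Assumption: "1" marks an SVIP user and "0" a regular user
        "svip": parts[3].strip() == "1",
        "price": float(parts[4]),
    }


def svip_stats(lines):
    """Distinct SVIP users, their action distribution and their average price."""
    records = [parse_app_line(line) for line in lines if line.strip()]
    svip = [r for r in records if r["svip"]]
    avg = sum(r["price"] for r in svip) / len(svip) if svip else 0.0
    return {
        "svip_users": len({r["user_id"] for r in svip}),
        "svip_actions": Counter(r["action"] for r in svip),
        "svip_avg_price": avg,
    }


# Three lines taken from the app.log sample
sample = [
    "INFO 2025-10-17 05:14:17 [com.bobo.log_generator] - DAU|7218|提交订单|1|2439.51",
    "INFO 2025-10-17 05:14:22 [com.bobo.log_generator] - DAU|7207|评论产品|1|1578.42",
    "INFO 2025-10-17 05:14:26 [com.bobo.log_generator] - DAU|5095|空购物车|0|1920.26",
]
stats = svip_stats(sample)
print(stats["svip_users"])  # 2
print(stats["svip_actions"])
print(stats["svip_avg_price"])
```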

```ini
root@ubuntu2204test99:~# cat /tmp/app.log
INFO 2025-10-17 05:14:17 [com.bobo.log_generator] - DAU|7218|提交订单|1|2439.51
INFO 2025-10-17 05:14:22 [com.bobo.log_generator] - DAU|7207|评论产品|1|1578.42
INFO 2025-10-17 05:14:23 [com.bobo.log_generator] - DAU|9652|提交订单|1|2486.18
INFO 2025-10-17 05:14:26 [com.bobo.log_generator] - DAU|5095|空购物车|0|1920.26
INFO 2025-10-17 05:14:29 [com.bobo.log_generator] - DAU|3757|加入购物车|1|1600.62
INFO 2025-10-17 05:14:32 [com.bobo.log_generator] - DAU|2265|使用优惠券|1|2967.05
INFO 2025-10-17 05:14:36 [com.bobo.log_generator] - DAU|3640|评论产品|0|2932.49
INFO 2025-10-17 05:14:39 [com.bobo.log_generator] - DAU|1270|提交订单|1|2780.55
INFO 2025-10-17 05:14:40 [com.bobo.log_generator] - DAU|2128|加入购物车|0|2317.06
INFO 2025-10-17 05:14:44 [com.bobo.log_generator] - DAU|6283|评论产品|1|2737.0
INFO 2025-10-17 05:14:47 [com.bobo.log_generator] - DAU|156|浏览产品|0|1697.01
INFO 2025-10-17 05:14:51 [com.bobo.log_generator] - DAU|2926|使用优惠券|1|1629.04
INFO 2025-10-17 05:14:52 [com.bobo.log_generator] - DAU|8780|提交订单|1|2448.92
INFO 2025-10-17 05:14:56 [com.bobo.log_generator] - DAU|8391|领取优惠券|0|2676.02
INFO 2025-10-17 05:14:59 [com.bobo.log_generator] - DAU|6675|使用优惠券|0|2807.36
INFO 2025-10-17 05:15:02 [com.bobo.log_generator] - DAU|2248|领取优惠券|1|2715.31
INFO 2025-10-17 05:15:03 [com.bobo.log_generator] - DAU|1007|使用优惠券|1|2759.94
INFO 2025-10-17 05:15:06 [com.bobo.log_generator] - DAU|7130|加入购物车|0|2787.82
INFO 2025-10-17 05:15:07 [com.bobo.log_generator] - DAU|6850|评论产品|1|1650.43
INFO 2025-10-17 05:15:11 [com.bobo.log_generator] - DAU|920|提交订单|1|2758.11
```

3. Screenshots after collection