Chapter 1 The Inner Arts of Containerization: Tuning the JVM and the Linux Kernel

1.1 The Fatal Memory-Management Trap and How to Defuse It

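On bare-metal deployments the JVM has the server's memory to itself, and its memory management is built on sensing physical memory directly. In a container the picture changes fundamentally. A JVM without container support (before JDK 10 and JDK 8u191) treats the host's memory as "system memory" rather than the container's memory limit. It then sizes the heap from the wrong figure, and the container ends up OOMKilled (terminated by the kernel for exceeding its memory limit). Newer JVMs read the cgroup limit by default, but a heap setting that leaves no room for non-heap memory leads to the same failure.

Key problem analysis:

When the container memory limit in Kubernetes is set to 4GB, a JVM that is not container-aware still detects the host's total memory (perhaps 64GB or more) and derives its default heap size from it. The heap can then grow beyond the container limit, at which point the Linux kernel's OOM Killer forcibly terminates the container.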
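A quick way to check what the JVM actually sees is to print its own view from inside the container. The sketch below is illustrative only: the class name `ContainerView` is hypothetical, and it relies solely on the standard `Runtime` API.

```java
// Hypothetical helper (not from the article): reports the JVM's own view of memory and CPU.
final class ContainerView {

    /** Maximum heap the JVM will try to use, in bytes. */
    static long maxHeapBytes() {
        return Runtime.getRuntime().maxMemory();
    }

    /** Number of CPUs the JVM believes it may use. */
    static int visibleCpus() {
        return Runtime.getRuntime().availableProcessors();
    }

    static String describe() {
        return String.format("max heap = %d MiB, visible CPUs = %d",
                maxHeapBytes() / (1024L * 1024L), visibleCpus());
    }

    public static void main(String[] args) {
        // Run once on the host and once inside the container, then compare the two lines.
        System.out.println(describe());
    }
}
```

If the numbers printed inside a 4GB container reflect the host rather than the limit, the JVM is not honoring the cgroup settings.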

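Comparing the approaches:

dockerfile

# Anti-pattern: heap pinned to the full container limit
FROM openjdk:17
CMD ["java", "-Xmx4g", "-jar", "app.jar"]
# Problem: with a 4GB container limit, -Xmx4g leaves no room for metaspace,
# thread stacks, or direct buffers, so the container can still be OOMKilled

# Recommended: size the heap relative to the container limit
FROM openjdk:17-jdk-slim
# -XX:+UseContainerSupport       container support (on by default since JDK 10 / 8u191)
# -XX:MaxRAMPercentage=75.0      maximum heap = 75% of container memory
# -XX:InitialRAMPercentage=50.0  initial heap = 50% of container memory
CMD ["java", "-XX:+UseContainerSupport", "-XX:MaxRAMPercentage=75.0", "-XX:InitialRAMPercentage=50.0", "-jar", "app.jar"]

The golden formula for memory allocation: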

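The following split is recommended on the basis of extensive production experience:

Heap = container memory limit × 75% (JVM heap)

Metaspace = 256MB (a fixed cap that avoids the instability of dynamic growth; it is counted inside the off-heap share, since the three percentages already add up to 100%)

Off-heap = container memory limit × 15% (metaspace, network buffers, thread stacks, etc.)

System reserve = container memory limit × 10% (operating system and monitoring components)

This allocation keeps the JVM safely within the container limit while leaving enough room for system components.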
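The split above can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming the 75/15/10 ratios and the 256MB metaspace cap from the text; the class and method names are hypothetical.

```java
// Hypothetical calculator for the 75/15/10 split described above.
final class MemoryBudget {
    static final long MIB = 1024L * 1024L;
    static final long METASPACE_BYTES = 256L * MIB; // fixed metaspace cap from the text

    static long heapBytes(long limitBytes) {
        return limitBytes * 75 / 100;
    }

    static long offHeapBytes(long limitBytes) {
        return limitBytes * 15 / 100;
    }

    /** Whatever is left after heap and off-heap, so the three parts always sum to the limit. */
    static long reservedBytes(long limitBytes) {
        return limitBytes - heapBytes(limitBytes) - offHeapBytes(limitBytes);
    }

    /** Off-heap room left for buffers and thread stacks once metaspace is taken out. */
    static long offHeapAfterMetaspace(long limitBytes) {
        return offHeapBytes(limitBytes) - METASPACE_BYTES;
    }

    public static void main(String[] args) {
        long limit = 4096L * MIB; // the 4GB container from the example
        System.out.printf("heap=%d MiB, off-heap=%d MiB (after metaspace: %d MiB), reserve=%d MiB%n",
                heapBytes(limit) / MIB, offHeapBytes(limit) / MIB,
                offHeapAfterMetaspace(limit) / MIB, reservedBytes(limit) / MIB);
    }
}
```

A negative result from `offHeapAfterMetaspace` signals that the container is too small for a 256MB metaspace under these ratios.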

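1.2 CPU Tuning: Underlying Principles and Practice

Kubernetes allocates CPU at fine granularity through the CPU Request and Limit mechanism. A JVM that is not container-aware, however, sizes its garbage-collection and JIT-compiler thread pools from the host's CPU core count, which causes severe resource contention and CPU throttling inside the container.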
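To see why the CPU count matters, the sketch below reproduces the commonly documented HotSpot defaults for GC thread counts. These formulas are an assumption brought in for illustration (they are not stated in this article and can vary by JVM version and collector); the class name is hypothetical.

```java
// Hypothetical illustration of commonly documented HotSpot defaults (assumed, version-dependent).
final class GcThreadDefaults {

    /** Default parallel GC threads: all CPUs up to 8, then 5/8 of each additional CPU. */
    static int parallelGcThreads(int cpus) {
        return cpus <= 8 ? cpus : 8 + (cpus - 8) * 5 / 8;
    }

    /** Default concurrent GC threads for G1: roughly a quarter of the parallel threads, at least 1. */
    static int concGcThreads(int parallelThreads) {
        return Math.max((parallelThreads + 2) / 4, 1);
    }

    public static void main(String[] args) {
        for (int cpus : new int[] {2, 64}) {
            int parallel = parallelGcThreads(cpus);
            System.out.printf("cpus=%d -> ParallelGCThreads=%d, ConcGCThreads=%d%n",
                    cpus, parallel, concGcThreads(parallel));
        }
    }
}
```

Under these assumptions a JVM that sees a 64-core host starts far more GC threads than a 2-CPU limit can serve, which is exactly the contention described above.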

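The impact of CPU throttling:

When the processes in a container use more CPU than the configured Limit within a scheduling period, Linux cgroups throttle them and their threads are paused until the next period. For a Java application this lengthens GC pauses and delays JIT compilation, which shows up as higher request latency and lower throughput.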

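Optimization strategies in detail:

bash

# GC and JIT thread tuning based on the CPU limit
# (CPU_LIMIT is assumed to hold the container's CPU limit in whole cores)
CONC_GC_THREADS=$(( CPU_LIMIT / 4 > 0 ? CPU_LIMIT / 4 : 1 ))
JAVA_OPTS="-XX:ParallelGCThreads=${CPU_LIMIT}"               # parallel GC threads = CPU limit
JAVA_OPTS="$JAVA_OPTS -XX:ConcGCThreads=${CONC_GC_THREADS}"  # concurrent GC threads = 1/4 of the limit, at least 1
JAVA_OPTS="$JAVA_OPTS -XX:CICompilerCount=${CPU_LIMIT}"      # JIT compiler threads = CPU limit (tiered compilation needs at least 2)

# CPU throttling check and automatic remediation
# Reads the cgroup v2 counters from inside the Pod; the path differs on cgroup v1
stats=$(kubectl exec deploy/myapp -- cat /sys/fs/cgroup/cpu.stat)
nr_periods=$(echo "$stats" | awk '$1 == "nr_periods" {print $2}')
nr_throttled=$(echo "$stats" | awk '$1 == "nr_throttled" {print $2}')
if [[ "${nr_periods:-0}" -gt 0 && "${nr_throttled:-0}" -gt 0 ]]; then
  echo "CPU throttling detected; the current CPU limit may be too low"
  echo "Analyzing the throttling pattern..."
  # Throttle ratio = throttled periods / elapsed periods
  throttle_ratio=$(echo "scale=4; $nr_throttled / $nr_periods" | bc -l)
  if [[ $(echo "$throttle_ratio > 0.3" | bc -l) -eq 1 ]]; then
    echo "Throttle ratio above 30%, raising the CPU limit"
    kubectl set resources deployment myapp -c myapp --limits=cpu=2
  fi
fi

In-depth tuning recommendations: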

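Set an appropriate CPU Request: base it on the application's average load so that normal operation has guaranteed resources

Configure the CPU Limit sensibly: for latency-sensitive applications set Limit = Request (Guaranteed QoS) so that CPU capacity is predictable

Monitor CPU throttling: check the container_cpu_cfs_throttled_seconds_total metric regularly

Use Burstable QoS: for workloads with traffic bursts, set Limit > Request where appropriate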
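Throttling can also be measured from inside the application. The sketch below parses the text of a cgroup `cpu.stat` file and computes the share of scheduling periods that were throttled. It assumes the `nr_periods` and `nr_throttled` counters are present; the class name and the sample input are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical parser for the "key value" lines of a cgroup cpu.stat file.
final class CpuThrottle {

    static Map<String, Long> parse(String cpuStat) {
        Map<String, Long> counters = new HashMap<>();
        for (String line : cpuStat.split("\n")) {
            String[] parts = line.trim().split("\\s+");
            if (parts.length == 2) {
                counters.put(parts[0], Long.parseLong(parts[1]));
            }
        }
        return counters;
    }

    /** Fraction of scheduling periods in which the container was throttled (0.0 to 1.0). */
    static double throttleRatio(String cpuStat) {
        Map<String, Long> counters = parse(cpuStat);
        long periods = counters.getOrDefault("nr_periods", 0L);
        long throttled = counters.getOrDefault("nr_throttled", 0L);
        return periods == 0 ? 0.0 : (double) throttled / periods;
    }

    public static void main(String[] args) {
        // Made-up sample; in a container the text would come from /sys/fs/cgroup/cpu.stat.
        String sample = "usage_usec 1000\nnr_periods 200\nnr_throttled 80\nthrottled_usec 500\n";
        System.out.println("throttle ratio = " + throttleRatio(sample));
    }
}
```

A ratio that stays above the chosen threshold over several samples is a stronger signal than a single reading, because the counters are cumulative since container start.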

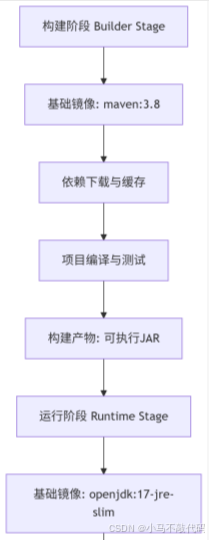

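Chapter 2 The Image-Building Manual: Multi-Stage Builds and Security Hardening

2.1 Multi-Stage Builds: Underlying Principles and Practice

Multi-stage builds are not only a Docker best practice but also an important safeguard for security and efficiency. Separating the build environment from the runtime environment significantly shrinks the image, removes the security risk of shipping build dependencies, and speeds up deployment.

Core advantages of multi-stage builds:

Security: the runtime image contains only the required runtime dependencies, which reduces the attack surface

Efficiency: the final image is typically 50-70% smaller, which speeds up image pulls and deployment

Maintainability: build and runtime stages are cleanly separated, which simplifies troubleshooting and optimization

Build pipeline architecture:

Complete Dockerfile with annotations: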

java

# ==================== 构建阶段 ====================

FROM maven:3.8.6-openjdk-17 AS builder

# 设置工作目录

WORKDIR /app

# 单独复制pom文件,利用Docker层缓存

# 这样只有当pom.xml变化时才会重新下载依赖

COPY pom.xml .

# 下载所有依赖到本地仓库

# -B 批处理模式,-Ddependency-check.skip=true 跳过依赖检查

RUN mvn dependency:go-offline -B -Ddependency-check.skip=true

# 复制源代码

COPY src ./src

# 编译打包,跳过测试以加速构建

RUN mvn package -DskipTests -Dmaven.test.skip=true

# ==================== 运行阶段 ====================

FROM openjdk:17-jre-slim

# 安全加固:创建非root用户和组

RUN groupadd -r spring && useradd -r -g spring spring

# 切换到非root用户

USER spring

# 设置工作目录并确保用户有权限

WORKDIR /app

RUN chown spring:spring /app

# 从构建阶段复制编译好的JAR文件

COPY --from=builder --chown=spring:spring /app/target/*.jar app.jar

# 健康检查配置

# --interval=30s 每30秒检查一次

# --timeout=3s 超时时间3秒

# --start-period=120s 容器启动后120秒开始检查

# --retries=3 失败3次才标记为不健康

HEALTHCHECK --interval=30s --timeout=3s --start-period=120s --retries=3 \

CMD curl -f http://localhost:8080/actuator/health || exit 1

# 环境变量配置

ENV JAVA_OPTS="-XX:+UseContainerSupport -XX:MaxRAMPercentage=75.0"

ENV SPRING_PROFILES_ACTIVE="prod"

# 启动命令

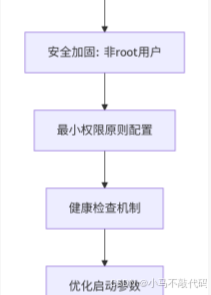

ENTRYPOINT ["sh", "-c", "java ${JAVA_OPTS} -jar /app/app.jar"]2.2 安全加固的七重防护体系

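Container security is a multi-layered, multi-dimensional defense. The seven key protections are:

Run as non-root: limits the impact of container-escape attacks; an attacker who obtains a shell is still restricted

Read-only file system: blocks malicious file writes and protects system integrity

Least privilege: drop unneeded Linux capabilities such as NET_RAW and SYS_ADMIN

Image signature verification: verify image provenance with tools such as cosign to guard against supply-chain attacks

Vulnerability scanning: integrate scanners such as Trivy and Grype to catch known vulnerabilities early

Seccomp profiles: restrict system calls to reduce the kernel attack surface

AppArmor policies: mandatory access control that constrains process behavior

A hardened Dockerfile: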

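dockerfile

# Hardened version
# The openjdk repository publishes no 17-jre tags; 17-jdk-slim is the closest slim variant
FROM openjdk:17-jdk-slim

# 1. Create a non-root user and group
RUN addgroup --system --gid 1000 appgroup && \
    adduser --system --uid 1000 --ingroup appgroup appuser

# 2. Prepare for a read-only root file system: only the required directories are writable
RUN mkdir -p /app && chown appuser:appgroup /app
# The temporary directory stays writable
VOLUME /tmp

# 3. Dangerous capabilities are dropped at runtime (capabilities.drop in the
#    Kubernetes securityContext), not inside the image

# 4. Switch user
USER appuser

# 5. Copy the application file with the correct ownership
COPY --chown=appuser:appgroup app.jar /app/app.jar

# 6. Health check (requires curl in the image)
HEALTHCHECK --interval=30s --timeout=3s \
    CMD curl -f http://localhost:8080/actuator/health || exit 1

# 7. The read-only root file system is enforced at runtime:
#    docker run --read-only, or securityContext.readOnlyRootFilesystem=true in Kubernetes

# Startup command
CMD ["java", "-jar", "/app/app.jar"]

Security context configuration (Kubernetes YAML):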

yaml

securityContext:
  runAsNonRoot: true
  runAsUser: 1000
  runAsGroup: 1000
  readOnlyRootFilesystem: true
  allowPrivilegeEscalation: false
  capabilities:
    drop:
    - ALL
  seccompProfile:
    type: RuntimeDefault

Chapter 3 The Life-and-Death Gate of Health Checks: Probe Configuration in Depth

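3.1 Balancing the Three Probes

Kubernetes provides three kinds of health probe, each with its own purpose and configuration concerns. Configuring them correctly is key to application stability.

Responsibilities and differences:

Startup Probe: checks whether the application has finished starting; runs only during startup

Readiness Probe: checks whether the application is ready to receive traffic; controls whether the Pod is listed in Service endpoints

Liveness Probe: checks whether the application is still running properly; a failure restarts the container

Recommended production configuration: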

yaml

apiVersion: apps/v1
kind: Deployment
spec:
  template:
    spec:
      containers:
      - name: springboot-app
        # Liveness probe: is the application alive?
        livenessProbe:
          httpGet:
            path: /actuator/health/liveness
            port: 8080
          initialDelaySeconds: 90   # important: wait for the application to start fully
          periodSeconds: 15
          timeoutSeconds: 3
          failureThreshold: 3
          successThreshold: 1
        # Readiness probe: is the application ready for traffic?
        readinessProbe:
          httpGet:
            path: /actuator/health/readiness
            port: 8080
          initialDelaySeconds: 30
          periodSeconds: 5
          timeoutSeconds: 1
          failureThreshold: 3
          successThreshold: 1
        # Startup probe: has the application finished starting?
        startupProbe:
          httpGet:
            path: /actuator/health/readiness
            port: 8080
          initialDelaySeconds: 0
          periodSeconds: 5
          timeoutSeconds: 1
          failureThreshold: 30   # allows up to 150 seconds of startup time
          successThreshold: 1

Configuration notes:

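initialDelaySeconds: must be long enough for the application to have really finished starting; when a startupProbe is configured, the other probes wait until it succeeds

timeoutSeconds: should exceed the health endpoint's expected response time, otherwise slow but healthy responses are counted as failures

failureThreshold: set according to business tolerance; avoid making it too sensitive

periodSeconds: the check frequency; trades detection speed against resource consumption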
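These parameters combine into two numbers worth checking before deployment: how long the application is allowed to take to start, and roughly how long a failure can go unnoticed. The sketch below shows the arithmetic under simplifying assumptions (probe timeouts and scheduling jitter are ignored); the class and method names are hypothetical.

```java
// Hypothetical calculator for probe timing budgets (ignores probe timeouts and jitter).
final class ProbeBudget {

    /** Longest startup time a startup probe tolerates before the container is restarted. */
    static int startupBudgetSeconds(int initialDelaySeconds, int periodSeconds, int failureThreshold) {
        return initialDelaySeconds + periodSeconds * failureThreshold;
    }

    /** Approximate time until a probe marks a running container as failed. */
    static int detectionSeconds(int periodSeconds, int failureThreshold) {
        return periodSeconds * failureThreshold;
    }

    public static void main(String[] args) {
        // Values taken from the recommended configuration above.
        System.out.println("startup budget: " + startupBudgetSeconds(0, 5, 30) + "s");
        System.out.println("liveness detection: " + detectionSeconds(15, 3) + "s");
        System.out.println("readiness detection: " + detectionSeconds(5, 3) + "s");
    }
}
```

If the measured cold-start time of the service approaches the startup budget, raising `failureThreshold` on the startup probe is usually safer than lengthening `initialDelaySeconds` on the liveness probe.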

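3.2 Advanced Custom Health Indicators

Spring Boot Actuator provides a powerful health-indicator mechanism, and we can build health checks tailored to business needs.

Database health check implementation: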

java

import java.sql.Connection;
import javax.sql.DataSource;
import org.springframework.boot.actuate.health.Health;
import org.springframework.boot.actuate.health.HealthIndicator;
import org.springframework.stereotype.Component;

@Component
public class DatabaseHealthIndicator implements HealthIndicator {

    private static final int TIMEOUT = 5; // validation timeout in seconds

    private final DataSource dataSource;

    public DatabaseHealthIndicator(DataSource dataSource) {
        this.dataSource = dataSource;
    }

    @Override
    public Health health() {
        try (Connection conn = dataSource.getConnection()) {
            // Check whether the database connection is valid
            if (conn.isValid(TIMEOUT)) {
                return Health.up()
                        .withDetail("database", "available")
                        .withDetail("validation_timeout", TIMEOUT + "s")
                        .build();
            } else {
                return Health.down()
                        .withDetail("database", "connection_invalid")
                        .build();
            }
        } catch (Exception e) {
            return Health.down(e)
                    .withDetail("database", "unavailable")
                    .withDetail("error", e.getMessage())
                    .build();
        }
    }
}

java

import org.springframework.boot.actuate.health.Health;
import org.springframework.boot.actuate.health.HealthIndicator;
import org.springframework.cloud.client.circuitbreaker.CircuitBreaker;
import org.springframework.cloud.client.circuitbreaker.CircuitBreakerFactory;
import org.springframework.http.ResponseEntity;
import org.springframework.stereotype.Component;
import org.springframework.web.client.RestTemplate;

// Health check for an external service dependency
@Component
public class ExternalServiceHealthIndicator implements HealthIndicator {

    private final RestTemplate restTemplate;
    private final CircuitBreaker circuitBreaker;

    public ExternalServiceHealthIndicator(RestTemplate restTemplate,
                                          CircuitBreakerFactory circuitBreakerFactory) {
        this.restTemplate = restTemplate;
        this.circuitBreaker = circuitBreakerFactory.create("external-service");
    }

    @Override
    public Health health() {
        return circuitBreaker.run(() -> {
            ResponseEntity<String> response = restTemplate.getForEntity(
                    "https://external-service/health", String.class);
            if (response.getStatusCode().is2xxSuccessful()) {
                return Health.up()
                        .withDetail("external_service", "available")
                        .build();
            } else {
                return Health.down()
                        .withDetail("external_service", "unhealthy")
                        .withDetail("status_code", response.getStatusCode().value())
                        .build();
            }
        }, throwable -> Health.down()
                .withDetail("external_service", "timeout_or_error")
                .withDetail("error", throwable.getMessage())
                .build());
    }
}

Readiness state event listener:

java

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.boot.availability.AvailabilityChangeEvent;
import org.springframework.boot.availability.ReadinessState;
import org.springframework.context.event.EventListener;
import org.springframework.stereotype.Component;

@Component
public class ReadinessStateChecker {

    private static final Logger log = LoggerFactory.getLogger(ReadinessStateChecker.class);

    // Spring Boot publishes AvailabilityChangeEvent<ReadinessState> whenever readiness changes
    @EventListener
    public void onReadinessChange(AvailabilityChangeEvent<ReadinessState> event) {
        if (event.getState() == ReadinessState.ACCEPTING_TRAFFIC) {
            log.info("Application is ready and starts receiving traffic");
            // Trigger follow-up initialization here
        } else {
            log.warn("Application is not ready and stops receiving traffic");
            // Perform cleanup or pause work here
        }
    }
}

Chapter 4 The Tai Chi of Elastic Scaling: The Ultimate Guide to HPA and VPA

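4.1 Horizontal Pod Autoscaling (HPA) in Depth

Horizontal Pod Autoscaling (HPA) is the most important elastic-scaling mechanism in Kubernetes: it adjusts the number of Pod replicas automatically from monitored metrics. Understanding how HPA works and how best to configure it is essential for building highly available Spring Boot applications.

How HPA works

HPA scales automatically through the following steps:

Metric collection: Metrics Server or Prometheus Adapter collects Pod metrics

Metric calculation: the HPA controller computes the ratio of the current metric value to the target value

Decision: the desired replica count is computed from the ratio and the current replica count

Replica adjustment: the replica count is changed through the Deployment controller

The HPA algorithm:

text

desired replicas = ceil[current replicas × (current metric value / target metric value)]

Production-grade HPA configuration in detail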
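Before reading the full configuration, it can help to run the scaling formula on a few numbers. The sketch below applies it with clamping to the replica range; the 10% tolerance band is an assumption based on the controller's documented default, and the class name is hypothetical.

```java
// Hypothetical calculator for the HPA replica formula, clamped to [min, max].
final class HpaMath {
    static final double TOLERANCE = 0.1; // assumed default tolerance of the HPA controller

    static int desiredReplicas(int current, double currentMetric, double targetMetric,
                               int minReplicas, int maxReplicas) {
        double ratio = currentMetric / targetMetric;
        if (Math.abs(ratio - 1.0) <= TOLERANCE) {
            return current; // close enough to the target: no scaling
        }
        int desired = (int) Math.ceil(current * ratio);
        return Math.max(minReplicas, Math.min(maxReplicas, desired));
    }

    public static void main(String[] args) {
        // 4 replicas at 90% CPU against a 70% target, with the 3..50 range used in this chapter
        System.out.println(desiredReplicas(4, 90, 70, 3, 50));
    }
}
```

When several metrics are configured, the controller evaluates each one and uses the largest resulting replica count, so the same function would be applied per metric.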

yaml

apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: order-service-hpa
  namespace: production
spec:
  # Scale target
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: order-service
  # Replica range
  minReplicas: 3
  maxReplicas: 50
  # Metrics
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 70   # target CPU utilization 70%
  - type: Resource
    resource:
      name: memory
      target:
        type: Utilization
        averageUtilization: 80   # target memory utilization 80%
  - type: Pods
    pods:
      metric:
        name: http_requests_per_second   # custom QPS metric
      target:
        type: AverageValue
        averageValue: 1000   # 1000 requests per second per Pod
  - type: Object
    object:
      metric:
        name: kafka_lag   # Kafka consumer lag metric
      describedObject:
        apiVersion: kafka.apache.org/v1
        kind: KafkaTopic
        name: order-events
      target:
        type: Value
        value: 10000   # maximum allowed lag: 10000 messages
  # Scaling behavior (the behavior field was added in Kubernetes 1.18; autoscaling/v2 is GA from 1.23)
  behavior:
    scaleUp:
      stabilizationWindowSeconds: 60   # 60-second scale-up stabilization window
      policies:
      - type: Pods
        value: 5            # add at most 5 Pods
        periodSeconds: 60   # within any 60-second window
      - type: Percent
        value: 100          # or grow by at most 100%
        periodSeconds: 60
    scaleDown:
      stabilizationWindowSeconds: 300   # 300-second scale-down window (more conservative)
      policies:
      - type: Pods
        value: 2            # remove at most 2 Pods
        periodSeconds: 60
      - type: Percent
        value: 10           # or shrink by at most 10%
        periodSeconds: 60

Custom metrics adapter configuration

For HPA to use custom metrics (such as QPS or business metrics), Prometheus Adapter must be deployed:

yaml

# Excerpt: selector, labels, and image are omitted
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-adapter
  namespace: monitoring
spec:
  template:
    spec:
      containers:
      - name: prometheus-adapter
        args:
        - --secure-port=6443
        - --cert-dir=/tmp
        - --logtostderr=true
        - --prometheus-url=http://prometheus:9090
        - --metrics-relist-interval=1m
        - --v=6
        - --config=/etc/adapter/config.yaml

Custom metric rule configuration:

yaml

rules:
- seriesQuery: 'http_requests_total{namespace!="",pod!=""}'
  seriesFilters: []
  resources:
    overrides:
      namespace: {resource: "namespace"}
      pod: {resource: "pod"}
  name:
    matches: "http_requests_total"
    as: "http_requests_per_second"
  metricsQuery: 'sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)'

4.2 Advanced Vertical Pod Autoscaler (VPA) Configuration

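The Vertical Pod Autoscaler (VPA) automatically adjusts a Pod's CPU and memory requests and limits, optimizing them from the application's actual resource-usage patterns.

VPA architecture components

VPA consists of three core components:

VPA Recommender: analyzes historical resource usage and produces recommended values

VPA Updater: evicts Pods whose resources deviate from the recommendation so that they are recreated with updated requests and limits

VPA Admission Controller: intercepts Pod creation requests and injects the recommended resource values

Production VPA configuration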

yaml

apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: order-service-vpa
  namespace: production
spec:
  targetRef:
    apiVersion: "apps/v1"
    kind: Deployment
    name: order-service
  # Update policy
  updatePolicy:
    updateMode: "Auto"        # Auto: update Pod resources automatically
    # updateMode: "Initial"   # Initial: set resources only at Pod creation
    # updateMode: "Off"       # Off: recommend only, never update
  # Resource policy
  resourcePolicy:
    containerPolicies:
    - containerName: "*"      # applies to all containers
      minAllowed:
        cpu: "100m"
        memory: "256Mi"
      maxAllowed:
        cpu: "4"
        memory: "8Gi"
      controlledResources: ["cpu", "memory"]
      controlledValues: "RequestsAndLimits"   # control both requests and limits
# Recommendation (written by the Recommender into status, not set by hand)
# status:
#   recommendation:
#     containerRecommendations:
#     - containerName: order-service
#       target:
#         cpu: "500m"
#         memory: "1024Mi"
#       lowerBound:
#         cpu: "300m"
#         memory: "512Mi"
#       upperBound:
#         cpu: "1"
#         memory: "2048Mi"
#       uncappedTarget:
#         cpu: "500m"
#         memory: "1024Mi"

Using VPA and HPA together

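VPA and HPA can work together, with the following caveats:

Avoid resource conflicts: VPA adjusts resource allocation and HPA adjusts the replica count, so the two should not both act on the same CPU or memory metric

Coordinate update policies: run VPA in Initial (or Off) mode and let HPA handle ongoing scaling

Align metrics: keep resource metrics and business metrics consistent

Example of a coordinated configuration: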

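yaml

# VPA and HPA are coordinated through their own specs; neither is switched on by Pod annotations

# VerticalPodAutoscaler (excerpt): apply recommendations only when Pods are created
updatePolicy:
  updateMode: "Initial"

# HorizontalPodAutoscaler (excerpt): scale on a custom metric rather than CPU or memory
metrics:
- type: Pods
  pods:
    metric:
      name: http_requests_per_second
    target:
      type: AverageValue
      averageValue: 1000

Chapter 5 The Great Shift of Configuration Management: The Ultimate Guide to ConfigMap and Secrets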

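5.1 Advanced Patterns for Dynamic Configuration Updates

In a microservice architecture, configuration management is key to keeping applications flexible and maintainable. Kubernetes provides two mechanisms, ConfigMap and Secret, but using them correctly requires a solid understanding of how they behave.

ConfigMap hot-update strategy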
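One common hot-update strategy is to stamp a checksum of the configuration onto the Pod template, so that any change in content produces a new template and therefore a rolling restart. The sketch below shows only the checksum step, using SHA-256 from the Java standard library (`HexFormat` needs Java 17 or later); the class name and the sample content are hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HexFormat;

// Hypothetical helper: computes the kind of checksum used in a checksum/config annotation.
final class ConfigChecksum {

    static String sha256Hex(String content) {
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            byte[] hash = digest.digest(content.getBytes(StandardCharsets.UTF_8));
            return HexFormat.of().formatHex(hash);
        } catch (NoSuchAlgorithmException e) {
            // SHA-256 is required to be available on every Java platform
            throw new IllegalStateException(e);
        }
    }

    /** True when the two configuration texts would yield different annotations. */
    static boolean changed(String oldContent, String newContent) {
        return !sha256Hex(oldContent).equals(sha256Hex(newContent));
    }

    public static void main(String[] args) {
        String before = "server:\n  port: 8080\n";
        String after = "server:\n  port: 9090\n";
        System.out.println("checksum/config: " + sha256Hex(after));
        System.out.println("restart needed: " + changed(before, after));
    }
}
```

The annotation only triggers a restart when it sits on the Deployment's Pod template; an annotation on the ConfigMap itself does not restart anything.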

yaml

# ConfigMap definition
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
  namespace: production
  # A checksum annotation that triggers a Pod restart belongs on the Deployment's
  # Pod template, not on the ConfigMap itself (Helm):
  #   checksum/config: {{ include (print $.Template.BasePath "/configmap.yaml") . | sha256sum }}
data:
  application.yaml: |
    server:
      port: 8080
      tomcat:
        max-threads: 200
        min-spare-threads: 10
    spring:
      datasource:
        url: jdbc:mysql://mysql-production:3306/order_db
        username: order_user
        hikari:
          maximum-pool-size: 20
          minimum-idle: 5
          connection-timeout: 30000
      redis:
        host: redis-production
        port: 6379
        timeout: 2000
        lettuce:
          pool:
            max-active: 20
            max-idle: 10
            min-idle: 5
      config:
        import: optional:configserver:http://config-server:8888
      cloud:
        kubernetes:
          config:
            sources:
            - name: app-config
          reload:
            enabled: true
            mode: polling
            period: 30000
            strategy: restart_context
    # Business-specific configuration
    order:
      service:
        timeout: 5000
        max-retries: 3
        circuit-breaker:
          failure-rate-threshold: 50
          slow-call-rate-threshold: 100
          wait-duration-in-open-state: 10000
          permitted-number-of-calls-in-half-open-state: 10
          sliding-window-type: count_based
          sliding-window-size: 100

Configuration hot-reload listener implementation

java

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.cloud.context.environment.EnvironmentChangeEvent;
import org.springframework.cloud.context.scope.refresh.RefreshScopeRefreshedEvent;
import org.springframework.context.ApplicationEvent;
import org.springframework.context.ApplicationEventPublisher;
import org.springframework.context.event.EventListener;
import org.springframework.core.env.ConfigurableEnvironment;
import org.springframework.stereotype.Component;

@Component
public class ConfigChangeListener {

    private static final Logger log = LoggerFactory.getLogger(ConfigChangeListener.class);

    private final ApplicationEventPublisher eventPublisher;
    private final ConfigurableEnvironment environment;

    public ConfigChangeListener(ApplicationEventPublisher eventPublisher,
                                ConfigurableEnvironment environment) {
        this.eventPublisher = eventPublisher;
        this.environment = environment;
    }

    @EventListener
    public void onConfigUpdate(EnvironmentChangeEvent event) {
        log.info("Configuration changed, affected keys: {}", event.getKeys());
        event.getKeys().forEach(key -> {
            switch (key) {
                case "spring.datasource.url":
                    log.warn("Database URL changed, a reconnect is required");
                    eventPublisher.publishEvent(new DataSourceReconnectEvent(this));
                    break;
                case "server.tomcat.max-threads":
                    log.info("Tomcat max threads changed to: {}",
                            environment.getProperty("server.tomcat.max-threads"));
                    break;
                case "order.service.circuit-breaker.failure-rate-threshold":
                    log.info("Circuit breaker failure-rate threshold changed to: {}",
                            environment.getProperty("order.service.circuit-breaker.failure-rate-threshold"));
                    // Reconfigure the circuit breaker
                    reconfigureCircuitBreaker();
                    break;
                default:
                    log.debug("Configuration key {} was updated", key);
            }
        });
    }

    // Published by Spring Cloud after refresh-scoped beans have been rebuilt
    @EventListener
    public void onRefreshScopeRefreshed(RefreshScopeRefreshedEvent event) {
        log.info("Configuration reloaded (refresh scope: {})", event.getName());
        // Publish the application's own configuration-reload event
        eventPublisher.publishEvent(new ConfigReloadEvent(this));
    }

    private void reconfigureCircuitBreaker() {
        // Reconfigure the circuit breaker
        log.info("Reconfiguring circuit breaker settings");
    }
}

// Custom configuration-reload event (each public class goes in its own file)
public class ConfigReloadEvent extends ApplicationEvent {
    public ConfigReloadEvent(Object source) {
        super(source);
    }
}

// Data source reconnect event
public class DataSourceReconnectEvent extends ApplicationEvent {
    public DataSourceReconnectEvent(Object source) {
        super(source);
    }
}

5.2 Best Practices for Secure Secrets Management

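Secrets management is an important part of container security and calls for a multi-layered strategy.

External Secrets integration

yaml

# External secret store configuration (using External Secrets Operator)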

apiVersion: external-secrets.io/v1beta1

kind: ClusterSecretStore

metadata:

name: vault-backend

spec:

provider:

vault:

server: "https://vault.example.com"

path: "secret"

version: "v2"

auth:

tokenSecretRef:

name: "vault-token"

key: "token"

---

apiVersion: external-secrets.io/v1beta1

kind: ExternalSecret

metadata:

name: database-secret

namespace: production

spec:

refreshInterval: "1h"

secretStoreRef:

name: vault-backend

kind: ClusterSecretStore

target:

name: database-credentials

creationPolicy: Owner

data:

- secretKey: username

remoteRef:

key: /secret/data/production/database

property: username

- secretKey: password

remoteRef:

key: /secret/data/production/database

property: password

- secretKey: connection-string

remoteRef:

key: /secret/data/production/database

property: connection-string

Secrets encryption and rotation strategy

yaml

# Secrets encryption (using Sealed Secrets or SOPS)

apiVersion: bitnami.com/v1alpha1

kind: SealedSecret

metadata:

name: encrypted-secret

namespace: production

spec:

encryptedData:

username: AgBy3i4OJSWK+PiTySYZZA9rO43cGDEq...

password: AwD3eRTYgG5q4EeR+TyuUJYI0tDk2VZ...

template:

metadata:

name: encrypted-secret

namespace: production

type: Opaque

---

# Automated Secrets rotation (an Argo CD Application that keeps the secrets repository in sync)

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: secrets-rotation

spec:

source:

repoURL: 'https://github.com/company/secrets-repo.git'

path: 'production/secrets'

targetRevision: HEAD

destination:

server: 'https://kubernetes.default.svc'

namespace: 'production'

syncPolicy:

automated:

prune: true

selfHeal: true

syncOptions:

- CreateNamespace=true

ignoreDifferences:

- group: apps

kind: Deployment

jqPathExpressions:

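- .spec.template.spec.containers[]?.env[]? | select(.valueFrom.secretKeyRef.name == "database-credentials")

Chapter 6 The All-Seeing Eye of Monitoring and Diagnostics: Full-Spectrum Observability in Practice

6.1 Building a Comprehensive Metrics System

A complete observability stack collects and analyzes metrics along several dimensions.

Micrometer monitoring configuration

yaml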

# application-monitoring.yaml

management:

endpoints:

web:

exposure:

include: health,info,metrics,prometheus,loggers,env

base-path: /actuator

path-mapping:

prometheus: metrics/prometheus

endpoint:

health:

show-details: when_authorized

show-components: when_authorized

probes:

enabled: true

metrics:

enabled: true

prometheus:

enabled: true

metrics:

export:

prometheus:

enabled: true

step: 1m

descriptions: true

metrics:

enabled: true

distribution:

percentiles:

- 0.5

- 0.75

- 0.95

- 0.99

percentiles-histogram:

http.server.requests: true

jvm.gc.pause: true

process.cpu.usage: true

tags:

application: ${spring.application.name}

namespace: ${KUBERNETES_NAMESPACE:default}

pod: ${HOSTNAME}

instance: ${spring.cloud.client.ip-address}:${server.port}

web:

server:

request:

autotime:

enabled: true

metric-name: http.server.requests

binders:

jvm:

enabled: true

process:

enabled: true

filesystem:

enabled: true

uptime:

enabled: true

enable:

jvm: true

http: true

logback: true

process: true

system: true

hystrix: false # disable Hystrix when Resilience4j is used

Custom business metrics implementation

java

import io.micrometer.core.instrument.Counter;
import io.micrometer.core.instrument.DistributionSummary;
import io.micrometer.core.instrument.Gauge;
import io.micrometer.core.instrument.MeterRegistry;
import io.micrometer.core.instrument.Timer;
import java.time.Duration;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.TimeUnit;
import lombok.AllArgsConstructor;
import lombok.Data;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Component;

// Order is the application's own domain class
@Component
public class OrderMetrics {

    private static final Logger log = LoggerFactory.getLogger(OrderMetrics.class);

    private final MeterRegistry meterRegistry;
    private final Timer orderProcessingTimer;
    private final Counter successfulOrdersCounter;
    private final Counter failedOrdersCounter;
    private final DistributionSummary orderAmountSummary;
    private final Gauge inventoryLevelGauge;
    private final Map<String, Double> inventoryLevels = new ConcurrentHashMap<>();

    public OrderMetrics(MeterRegistry meterRegistry) {
        this.meterRegistry = meterRegistry;

        // Order processing timer
        this.orderProcessingTimer = Timer.builder("order.processing.time")
                .description("Order processing time distribution")
                .publishPercentiles(0.5, 0.95, 0.99)
                .publishPercentileHistogram(true)
                .serviceLevelObjectives(
                        Duration.ofMillis(100),
                        Duration.ofMillis(500),
                        Duration.ofMillis(1000),
                        Duration.ofMillis(2000))
                .register(meterRegistry);

        // Success and failure counters
        this.successfulOrdersCounter = Counter.builder("order.success.count")
                .description("Number of successful orders")
                .tag("status", "success")
                .register(meterRegistry);
        this.failedOrdersCounter = Counter.builder("order.failure.count")
                .description("Number of failed orders")
                .tag("status", "failure")
                .register(meterRegistry);

        // Order amount distribution
        this.orderAmountSummary = DistributionSummary.builder("order.amount.summary")
                .description("Order amount distribution")
                .baseUnit("USD")
                .publishPercentiles(0.5, 0.95, 0.99)
                .register(meterRegistry);

        // Inventory level gauge
        this.inventoryLevelGauge = Gauge.builder("inventory.level", inventoryLevels,
                        map -> map.values().stream().mapToDouble(Double::doubleValue).average().orElse(0))
                .description("Average inventory level")
                .baseUnit("items")
                .register(meterRegistry);
    }

    /** Starts timing an order. */
    public Timer.Sample startOrderProcessing() {
        return Timer.start(meterRegistry);
    }

    /** Records the outcome of an order. */
    public void recordOrderCompletion(Timer.Sample sample, Order order, boolean success) {
        // Sample.stop returns nanoseconds; convert before logging
        long durationMs = TimeUnit.NANOSECONDS.toMillis(sample.stop(orderProcessingTimer));
        if (success) {
            successfulOrdersCounter.increment();
            orderAmountSummary.record(order.getAmount().doubleValue());
            log.debug("Order processed, duration: {}ms, amount: {}", durationMs, order.getAmount());
        } else {
            failedOrdersCounter.increment();
            log.warn("Order processing failed, duration: {}ms", durationMs);
        }
    }

    /** Updates the inventory metric. */
    public void updateInventoryLevel(String productId, double level) {
        inventoryLevels.put(productId, level);
        log.debug("Updated inventory level of product {}: {}", productId, level);
    }

    /** Returns a snapshot of the current metrics. */
    public MetricsSnapshot getCurrentSnapshot() {
        return new MetricsSnapshot(
                (long) successfulOrdersCounter.count(), // Counter.count() returns a double
                (long) failedOrdersCounter.count(),
                orderProcessingTimer.totalTime(TimeUnit.MILLISECONDS),
                orderProcessingTimer.count(),
                orderAmountSummary.totalAmount(),
                orderAmountSummary.count(),
                inventoryLevelGauge.value());
    }

    @Data
    @AllArgsConstructor
    public static class MetricsSnapshot {
        private long successfulOrders;
        private long failedOrders;
        private double totalProcessingTimeMs;
        private long totalOrdersProcessed;
        private double totalOrderAmount;
        private long orderAmountSamples;
        private double averageInventoryLevel;

        public double getSuccessRate() {
            return totalOrdersProcessed > 0
                    ? (double) successfulOrders / totalOrdersProcessed * 100 : 0;
        }

        public double getAverageOrderAmount() {
            return orderAmountSamples > 0
                    ? totalOrderAmount / orderAmountSamples : 0;
        }

        public double getAverageProcessingTime() {
            return totalOrdersProcessed > 0
                    ? totalProcessingTimeMs / totalOrdersProcessed : 0;
        }
    }
}

6.2 Distributed Tracing and Log Aggregation

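OpenTelemetry distributed tracing configuration

yaml

# OpenTelemetry auto-configuration
# management.tracing.* applies to Spring Boot 3 (Micrometer Tracing);
# spring.sleuth.* applies to Spring Boot 2.x with Spring Cloud Sleuth.
# Keep only the set that matches your version.
management:
  tracing:
    sampling:
      probability: 1.0   # 0.1 is recommended in production
    propagation:
      type: w3c
    enabled: true
spring:
  sleuth:
    enabled: true
    otel:
      config:
        trace-id-ratio-based: 1.0
      exporter:
        otlp:
          endpoint: http://jaeger-collector:4317
    sampler:
      probability: 1.0

Log collection and processing architecture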

yaml

# Fluent Bit log collection configuration

apiVersion: v1

kind: ConfigMap

metadata:

name: fluent-bit-config

namespace: logging

data:

fluent-bit.conf: |

[SERVICE]

Flush 5

Log_Level info

Daemon off

Parsers_File parsers.conf

HTTP_Server On

HTTP_Listen 0.0.0.0

HTTP_Port 2020

[INPUT]

Name tail

Path /var/log/containers/*.log

Parser docker

Tag kube.*

Refresh_Interval 5

Mem_Buf_Limit 5MB

Skip_Long_Lines On

[FILTER]

Name kubernetes

Match kube.*

Kube_URL https://kubernetes.default.svc:443

Kube_CA_File /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

Kube_Token_File /var/run/secrets/kubernetes.io/serviceaccount/token

Kube_Tag_Prefix kube.var.log.containers.

Merge_Log On

Merge_Log_Key data

Keep_Log Off

K8S-Logging.Parser On

K8S-Logging.Exclude Off

[OUTPUT]

Name es

Match *

Host elasticsearch

Port 9200

Logstash_Format On

Logstash_Prefix fluent-bit

Replace_Dots On

Retry_Limit False

Type flb_type

Time_Key @timestamp

Include_Tag_Key On

Tag_Key tag

[OUTPUT]

Name kafka

Match *

Brokers kafka:9092

Topics logs

Timestamp_Key @timestamp

Timestamp_Format iso8601

Retry_Limit False

Chapter 7 Hands-On: Containerized Configuration for a Million-QPS Spring Boot Application

7.1 Complete Production-Grade Deployment Configuration

yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: order-service
  namespace: production
  labels:
    app: order-service
    version: v2.1.0
    environment: production
spec:
  replicas: 10
  revisionHistoryLimit: 10
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 10%
  selector:
    matchLabels:
      app: order-service
  template:
    metadata:
      labels:
        app: order-service
        version: v2.1.0
        environment: production
      annotations:
        # Monitoring annotations
        prometheus.io/scrape: "true"
        prometheus.io/port: "8080"
        prometheus.io/path: "/actuator/prometheus"
        # Tracing annotation
        instrumentation.opentelemetry.io/inject-java: "true"
        # Configuration checksum (Helm template)
        checksum/config: {{ include "configmap-checksum" . }}
    spec:
      # Affinity scheduling
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: app
                  operator: In
                  values:
                  - order-service
              topologyKey: kubernetes.io/hostname
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 1
            preference:
              matchExpressions:
              - key: node-type
                operator: In
                values:
                - high-cpu
                - high-memory
      # Priority
      priorityClassName: high-priority
      # Containers
      containers:
      - name: order-service
        image: registry.example.com/order-service:v2.1.0
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 8080
          name: http
          protocol: TCP
        - containerPort: 9090
          name: jmx
          protocol: TCP
        # Resource requests and limits
        resources:
          requests:
            cpu: "1000m"
            memory: "2Gi"
            ephemeral-storage: "10Gi"
          limits:
            cpu: "2000m"
            memory: "4Gi"
            ephemeral-storage: "20Gi"
        # Environment variables
        env:
        - name: JAVA_OPTS
          value: "-XX:+UseContainerSupport -XX:MaxRAMPercentage=75.0 -XX:+UseG1GC -XX:MaxGCPauseMillis=200 -Xlog:gc*:file=/opt/logs/gc.log:time,level,tags:filecount=5,filesize=10M"
        - name: SPRING_PROFILES_ACTIVE
          value: "prod,kubernetes"
        - name: MANAGEMENT_ENDPOINTS_WEB_EXPOSURE_INCLUDE
          value: "health,info,metrics,prometheus"
        - name: MANAGEMENT_ENDPOINT_HEALTH_SHOW_DETAILS
          value: "always"
        - name: LOGGING_LEVEL_COM_EXAMPLE_ORDER
          value: "INFO"
        - name: LOGGING_FILE_NAME
          value: "/opt/logs/application.log"
        # Readiness probe
        readinessProbe:
          httpGet:
            path: /actuator/health/readiness
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 30
          periodSeconds: 5
          timeoutSeconds: 3
          successThreshold: 1
          failureThreshold: 3
        # Liveness probe
        livenessProbe:
          httpGet:
            path: /actuator/health/liveness
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 120
          periodSeconds: 15
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 3
        # Startup probe
        startupProbe:
          httpGet:
            path: /actuator/health/readiness
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 0
          periodSeconds: 10
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 30
        # Security context
        securityContext:
          allowPrivilegeEscalation: false
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
          runAsGroup: 1000
          capabilities:
            drop:
            - ALL
          seccompProfile:
            type: RuntimeDefault
        # Volume mounts
        volumeMounts:
        - name: config-volume
          mountPath: /app/config
          readOnly: true
        - name: logs-volume
          mountPath: /opt/logs
        - name: tmp-volume
          mountPath: /tmp
        # Lifecycle hook
        lifecycle:
          preStop:
            exec:
              # Wait for endpoint removal to propagate; Kubernetes then sends SIGTERM.
              # The shutdown actuator endpoint is not exposed above, so it is not called here.
              command:
              - sh
              - -c
              - "sleep 30"
      # Init containers
      initContainers:
      - name: config-init
        image: busybox:1.35
        command: ['sh', '-c', 'echo "Initializing configuration..." && sleep 5']
        volumeMounts:
        - name: config-volume
          mountPath: /app/config
      # Volumes
      volumes:
      - name: config-volume
        configMap:
          name: app-config
          items:
          - key: application.yaml
            path: application.yaml
      - name: logs-volume
        emptyDir: {}
      - name: tmp-volume
        emptyDir: {}
      # Security context (Pod level)
      securityContext:
        fsGroup: 1000
        runAsUser: 1000
        runAsGroup: 1000
        seccompProfile:
          type: RuntimeDefault
      # Other settings
      terminationGracePeriodSeconds: 60
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      serviceAccountName: order-service

7.2 Service Exposure and Network Policies

yaml

# Service definition

apiVersion: v1

kind: Service

metadata:

name: order-service

namespace: production

annotations:

# Load balancer annotations

service.beta.kubernetes.io/aws-load-balancer-type: "nlb"

service.beta.kubernetes.io/aws-load-balancer-internal: "false"

service.beta.kubernetes.io/aws-load-balancer-cross-zone-load-balancing-enabled: "true"

# Monitoring annotations

prometheus.io/scrape: "true"

prometheus.io/port: "8080"

spec:

selector:

app: order-service

type: LoadBalancer

ports:

- name: http

port: 80

targetPort: 8080

protocol: TCP

- name: metrics

port: 9090

targetPort: 9090

protocol: TCP

sessionAffinity: ClientIP

sessionAffinityConfig:

clientIP:

timeoutSeconds: 10800

---

# Ingress definition

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: order-service-ingress

namespace: production

annotations:

# Nginx settings

nginx.ingress.kubernetes.io/affinity: "cookie"

nginx.ingress.kubernetes.io/session-cookie-name: "order-service-session"

nginx.ingress.kubernetes.io/session-cookie-expires: "172800"

nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"

nginx.ingress.kubernetes.io/ssl-redirect: "true"

nginx.ingress.kubernetes.io/force-ssl-redirect: "true"

nginx.ingress.kubernetes.io/proxy-body-size: "20m"

nginx.ingress.kubernetes.io/proxy-connect-timeout: "30"

nginx.ingress.kubernetes.io/proxy-read-timeout: "120"

nginx.ingress.kubernetes.io/proxy-send-timeout: "120"

# Monitoring settings

nginx.ingress.kubernetes.io/enable-opentracing: "true"

nginx.ingress.kubernetes.io/configuration-snippet: |

more_set_headers "X-Request-ID: $req_id";

spec:

ingressClassName: nginx

tls:

- hosts:

- orders.example.com

secretName: orders-tls-secret

rules:

- host: orders.example.com

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: order-service

port:

number: 80

---

# Network policy

apiVersion: networking.k8s.io/v1

kind: NetworkPolicy

metadata:

name: order-service-network-policy

namespace: production

spec:

podSelector:

matchLabels:

app: order-service

policyTypes:

- Ingress

- Egress

ingress:

- from:

- namespaceSelector:

matchLabels:

name: ingress-nginx

- podSelector:

matchLabels:

app: api-gateway

ports:

- protocol: TCP

port: 8080

egress:

- to:

- namespaceSelector:

matchLabels:

name: database

ports:

- protocol: TCP

port: 3306

- to:

- namespaceSelector:

matchLabels:

name: cache

ports:

- protocol: TCP

port: 6379

- to:

- namespaceSelector:

matchLabels:

name: kafka

ports:

- protocol: TCP

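port: 9092

Conclusion

Through this in-depth walkthrough, we have covered:

Deep tuning of the JVM in containers: how memory and CPU behave under containerization

Secure image-building practices: multi-stage builds and security hardening

Fine-grained health checks: the three-probe mechanism and custom health indicators

Intelligent elastic scaling: HPA and VPA working together

Advanced configuration management: dynamic updates and secure secrets handling

A full observability stack: a complete approach to monitoring, tracing, and logging

Production-grade deployment templates: complete configurations validated at million-QPS scale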