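zookeeper: Apache Hadoop Ecosystem Component Deployment Notes - ZooKeeper

hadoop: Apache Hadoop Ecosystem Component Deployment Notes - Hadoop

hive: Apache Hadoop Ecosystem Component Deployment Notes - Hive

hbase: Apache Hadoop Ecosystem Component Deployment Notes - HBase

impala: Apache Hadoop Ecosystem Component Deployment Notes - Impala

spark: Apache Hadoop Ecosystem Component Deployment Notes - Spark

sqoop: Apache Hadoop Ecosystem Component Deployment Notes - Sqoop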

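Download: https://kafka.apache.org/downloads

Documentation: https://kafka.apache.org/documentation/#java

Note: Kafka 4.1.0 no longer depends on ZooKeeper, so it can be used as a standalone component. It does require a newer Java version: adding `export JAVA_HOME=/opt/module/jdk-17.0.12` to the Kafka startup script is enough, and because the setting lives only in that script, the other components are not affected.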
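One way to keep the newer JDK scoped to Kafka, as the note describes, is a small wrapper that exports `JAVA_HOME` only for the process that launches the broker. This is a minimal sketch: the function name `with_kafka_java` and the override variable `KAFKA_JAVA_HOME` are hypothetical, and the default JDK path is the one from the note.

```shell
# Hypothetical helper: run a command with JAVA_HOME pointing at the JDK 17
# install from the note, without changing the calling shell's environment.
# KAFKA_JAVA_HOME is an assumed, optional override for the JDK location.
with_kafka_java() {
  (
    # The subshell keeps both exports local to this one invocation.
    export JAVA_HOME="${KAFKA_JAVA_HOME:-/opt/module/jdk-17.0.12}"
    export PATH="$JAVA_HOME/bin:$PATH"
    "$@"
  )
}

# Usage (not executed here):
#   with_kafka_java bin/kafka-server-start.sh config/server.properties
```

Because the export happens inside a subshell, other Hadoop components started from the same session keep whatever `JAVA_HOME` they already use.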

Step 1: Upload, extract, and distribute

    tar -xf kafka_2.13-4.1.0.tgz -C /opt/apache/
    scp -rp kafka_2.13-4.1.0/ 192.168.242.231:/opt/apache/
    scp -rp kafka_2.13-4.1.0/ 192.168.242.232:/opt/apache/

Step 2: Modify the configuration
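The three per-node files in this step differ only in `node.id` and `advertised.listeners`. A minimal sketch of printing those two lines for a given node, assuming a hypothetical helper named `render_node_overrides`; the host names and port 9092 are the ones used in this article.

```shell
# Hypothetical helper: print the two settings that differ between nodes.
# $1 = node id (1, 2, or 3), $2 = fully qualified host name of that node.
render_node_overrides() {
  node_id="$1"
  host="$2"
  printf 'node.id=%s\n' "$node_id"
  printf 'advertised.listeners=PLAINTEXT://%s:9092\n' "$host"
}

# Usage (not executed here): print the overrides for each node in turn.
#   render_node_overrides 1 apache230.hadoop.com
#   render_node_overrides 2 apache231.hadoop.com
#   render_node_overrides 3 apache232.hadoop.com
```

Everything else, including `controller.quorum.voters`, is identical on all three nodes, so a shared base file plus these two lines is enough to reproduce each configuration.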

A. Configuration for apache230.hadoop.com

    process.roles=broker,controller
    node.id=1
    #controller.quorum.bootstrap.servers=apache230.hadoop.com:9093,apache231.hadoop.com:9093,apache232.hadoop.com:9093
    controller.quorum.voters=1@apache230.hadoop.com:9093,2@apache231.hadoop.com:9093,3@apache232.hadoop.com:9093

    ############################# Socket Server Settings #############################
    advertised.listeners=PLAINTEXT://apache230.hadoop.com:9092
    controller.listener.names=CONTROLLER
    listener.security.protocol.map=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL
    num.network.threads=3
    num.io.threads=8
    socket.send.buffer.bytes=102400
    socket.receive.buffer.bytes=102400
    socket.request.max.bytes=104857600

    ############################# Log Basics #############################
    log.dirs=/opt/apache/kafka_2.13-4.1.0/kraft-combined-logs_1
    num.partitions=1
    num.recovery.threads.per.data.dir=1

    ############################# Internal Topic Settings #############################
    offsets.topic.replication.factor=1
    share.coordinator.state.topic.replication.factor=1
    share.coordinator.state.topic.min.isr=1
    transaction.state.log.replication.factor=1
    transaction.state.log.min.isr=1

    log.retention.hours=168
    log.segment.bytes=1073741824
    log.retention.check.interval.ms=300000

B. Configuration for apache231.hadoop.com

    process.roles=broker,controller
    node.id=2
    #controller.quorum.bootstrap.servers=apache230.hadoop.com:9093,apache231.hadoop.com:9093,apache232.hadoop.com:9093
    controller.quorum.voters=1@apache230.hadoop.com:9093,2@apache231.hadoop.com:9093,3@apache232.hadoop.com:9093

    ############################# Socket Server Settings #############################
    advertised.listeners=PLAINTEXT://apache231.hadoop.com:9092
    controller.listener.names=CONTROLLER
    listener.security.protocol.map=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL
    num.network.threads=3
    num.io.threads=8
    socket.send.buffer.bytes=102400
    socket.receive.buffer.bytes=102400
    socket.request.max.bytes=104857600

    ############################# Log Basics #############################
    log.dirs=/opt/apache/kafka_2.13-4.1.0/kraft-combined-logs_1
    num.partitions=1
    num.recovery.threads.per.data.dir=1

    ############################# Internal Topic Settings #############################
    offsets.topic.replication.factor=1
    share.coordinator.state.topic.replication.factor=1
    share.coordinator.state.topic.min.isr=1
    transaction.state.log.replication.factor=1
    transaction.state.log.min.isr=1

    log.retention.hours=168
    log.segment.bytes=1073741824
    log.retention.check.interval.ms=300000

C. Configuration for apache232.hadoop.com

    process.roles=broker,controller
    node.id=3
    #controller.quorum.bootstrap.servers=apache230.hadoop.com:9093,apache231.hadoop.com:9093,apache232.hadoop.com:9093
    controller.quorum.voters=1@apache230.hadoop.com:9093,2@apache231.hadoop.com:9093,3@apache232.hadoop.com:9093

    ############################# Socket Server Settings #############################
    advertised.listeners=PLAINTEXT://apache232.hadoop.com:9092
    controller.listener.names=CONTROLLER
    listener.security.protocol.map=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL
    num.network.threads=3
    num.io.threads=8
    socket.send.buffer.bytes=102400
    socket.receive.buffer.bytes=102400
    socket.request.max.bytes=104857600

    ############################# Log Basics #############################
    log.dirs=/opt/apache/kafka_2.13-4.1.0/kraft-combined-logs_1
    num.partitions=1
    num.recovery.threads.per.data.dir=1

    ############################# Internal Topic Settings #############################
    offsets.topic.replication.factor=1
    share.coordinator.state.topic.replication.factor=1
    share.coordinator.state.topic.min.isr=1
    transaction.state.log.replication.factor=1
    transaction.state.log.min.isr=1

    log.retention.hours=168
    log.segment.bytes=1073741824
    log.retention.check.interval.ms=300000

Step 3: Generate a UUID and format the Kafka storage

    [root@apache230 kafka_2.13-4.1.0]# ./bin/kafka-storage.sh random-uuid
    2025-10-10 09:54:31,580 INFO utils.Log4jControllerRegistration$: Registered `kafka:type=kafka.Log4jController` MBean
    iDUiq4ziSGC65Y38y9fOvA
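The `random-uuid` output above mixes a log line with the ID itself, which gets in the way when scripting this step. A minimal sketch of pulling out only the ID, assuming a hypothetical helper named `extract_cluster_id`; it relies on the ID being a line of exactly 22 URL-safe base64 characters, as in the output shown.

```shell
# Hypothetical helper: read `kafka-storage.sh random-uuid` output on stdin and
# print only the cluster ID, skipping any log lines printed around it.
extract_cluster_id() {
  # Keep lines made of exactly 22 URL-safe base64 characters; take the last one.
  grep -E '^[A-Za-z0-9_-]{22}$' | tail -n 1
}

# Usage (not executed here):
#   CLUSTER_ID=$(./bin/kafka-storage.sh random-uuid | extract_cluster_id)
#   bin/kafka-storage.sh format --cluster-id "$CLUSTER_ID" --config config/server.properties
```

Generate the ID once and reuse the same value on every node; running `random-uuid` separately on each host would produce three different cluster IDs.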

Run the format command on all three nodes, using the same cluster ID:

    bin/kafka-storage.sh format --cluster-id iDUiq4ziSGC65Y38y9fOvA --config config/server.properties

Step 4: Start Kafka

    bin/kafka-server-start.sh config/server.properties

Step 5: Verify Kafka operations
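Before running the checks in this step, it can help to confirm that each broker's client port is reachable from the machine you are testing on. A minimal sketch, assuming a hypothetical helper named `port_open` that relies on bash's `/dev/tcp` feature and the coreutils `timeout` command; port 9092 is the one from `advertised.listeners` in this article.

```shell
# Hypothetical helper: succeed if a TCP connection to host:port can be opened
# within 2 seconds. $1 = host, $2 = port.
port_open() {
  # Inside `bash -c`, the two trailing arguments become $0 (host) and $1 (port).
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Usage (not executed here):
#   for h in apache230.hadoop.com apache231.hadoop.com apache232.hadoop.com; do
#     if port_open "$h" 9092; then echo "$h: 9092 reachable"; else echo "$h: 9092 NOT reachable"; fi
#   done
```

An open port only shows that the listener is up; the topic commands that follow are still the real functional check.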

    [root@apache232 kafka_2.13-4.1.0]# bin/kafka-topics.sh --create --topic quickstart-events --bootstrap-server apache232.hadoop.com:9092
    Created topic quickstart-events.

    [root@apache232 kafka_2.13-4.1.0]# bin/kafka-topics.sh --describe --topic quickstart-events --bootstrap-server apache232.hadoop.com:9092
    Topic: quickstart-events TopicId: Y3um9focTcmQkzRd5q8SaA PartitionCount: 1 ReplicationFactor: 1 Configs: min.insync.replicas=1,segment.bytes=1073741824
        Topic: quickstart-events Partition: 0 Leader: 2 Replicas: 2 Isr: 2 Elr: LastKnownElr:

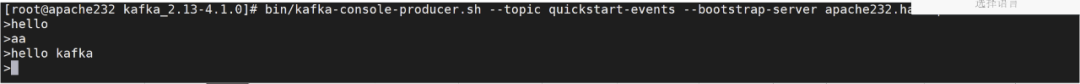
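In the describe output, `Leader: 2` means the broker with `node.id=2` (apache231.hadoop.com) leads partition 0, and `ReplicationFactor: 1` means only that broker holds the data. A minimal sketch of extracting the partition-to-leader mapping from that output, assuming a hypothetical helper named `partition_leaders`:

```shell
# Hypothetical helper: read `kafka-topics.sh --describe` output on stdin and
# print "<partition> <leader>" for every per-partition line.
partition_leaders() {
  awk '{
    part = ""; leader = ""
    # Scan label/value pairs; the topic summary line has no "Partition:" label.
    for (i = 1; i < NF; i++) {
      if ($i == "Partition:") part = $(i + 1)
      if ($i == "Leader:") leader = $(i + 1)
    }
    if (part != "" && leader != "") print part, leader
  }'
}

# Usage (not executed here):
#   bin/kafka-topics.sh --describe --topic quickstart-events \
#     --bootstrap-server apache232.hadoop.com:9092 | partition_leaders
```

For the output shown in this article, the helper prints `0 2`.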

    # Producer
    bin/kafka-console-producer.sh --topic quickstart-events --bootstrap-server apache232.hadoop.com:9092

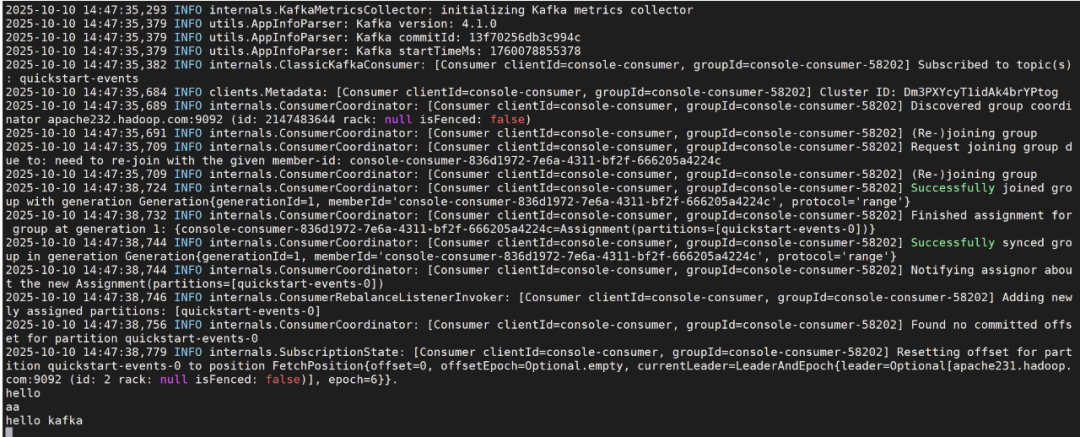

    # Consumer
    bin/kafka-console-consumer.sh --topic quickstart-events --from-beginning --bootstrap-server apache232.hadoop.com:9092

Consumer screenshot