Deploying Highly Available Kubernetes 1.28 on Ubuntu 22.04, Step by Step (Part 1): Multiple Masters + Keepalived + Nginx Load Balancing

Contents

- I. Architecture Overview

- II. Environment Preparation

- III. Deploying the Kubernetes Cluster with kubeadm

  - 1. Install common dependencies (every server)

  - 2. Basic configuration (192.168.24.31~34)

  - 3. Install containerd (192.168.24.31~34)

  - 4. Install kubelet, kubeadm, and kubectl (192.168.24.31~34)

  - 5. Install and configure Nginx (192.168.24.35~36)

  - 6. Install and configure Keepalived (192.168.24.35~36)

  - 7. Initialize the cluster (192.168.24.31)

  - 8. Install the network plugin

- Summary

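As business scale grows and stability requirements rise, a single-master Kubernetes cluster can no longer meet the high-availability needs of a production environment. If the only master node fails, the API becomes unavailable and scheduling stops, which seriously affects the business running on the cluster.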

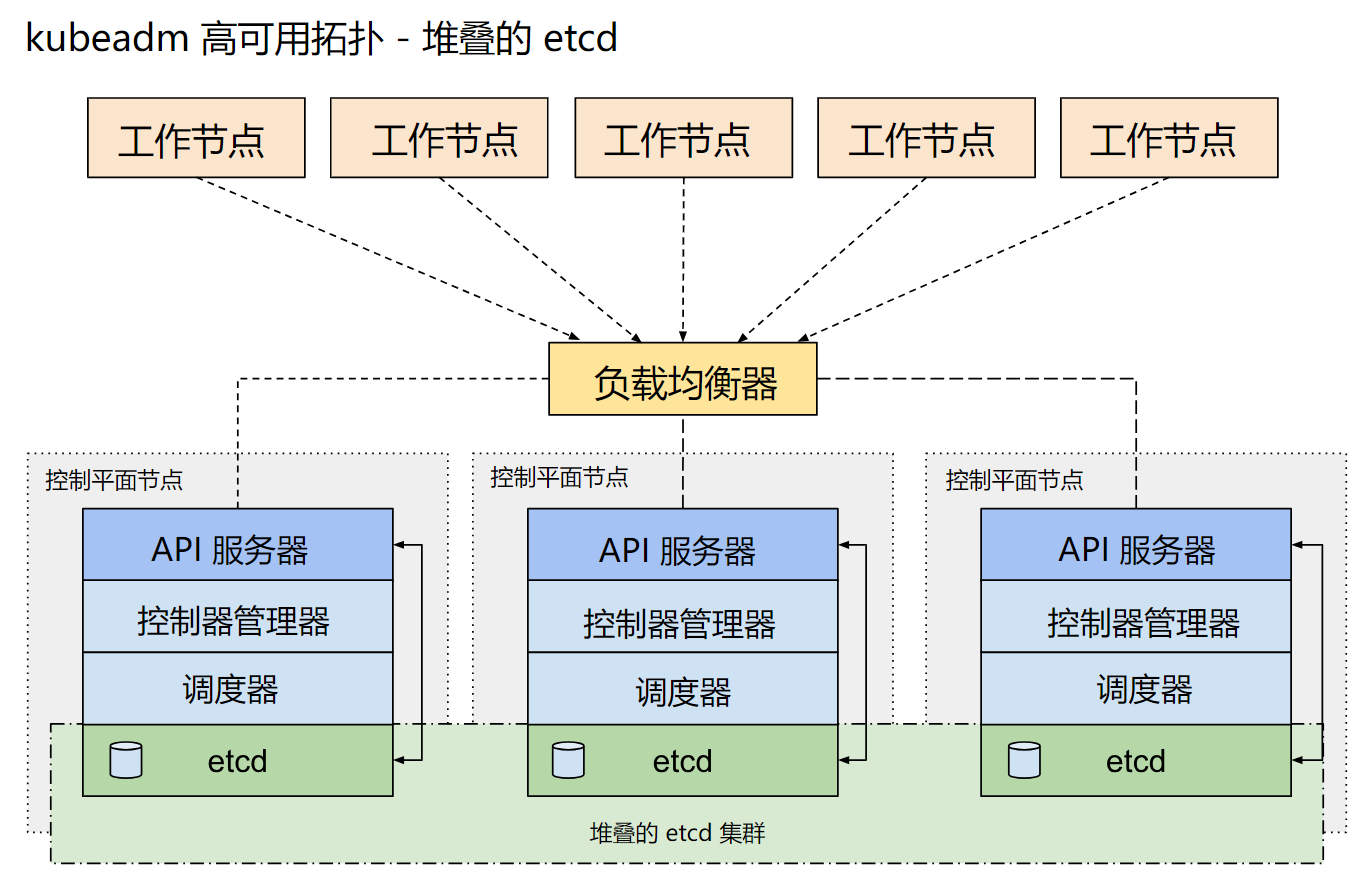

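The Kubernetes project recommends building a highly available control plane from multiple masters plus an etcd cluster. With several masters, however, giving kube-apiserver a single, stable access endpoint becomes the key problem of the deployment.

In production practice, a common solution is Keepalived + Nginx:

- Keepalived provides a floating VIP, which keeps the access endpoint itself highly available

- Nginx acts as a layer-4 or layer-7 load balancer and distributes requests to kube-apiserver on the backend master nodes (this article uses layer-4 TCP proxying through the stream module)

- Several master nodes run kube-apiserver / controller-manager / scheduler at the same time and, together with the etcd cluster, make the whole control plane highly available

Based on Kubernetes 1.28, this article walks through a high-availability deployment that can be put into production, built from:

- A multi-master + multi-etcd architecture

- Keepalived providing the VIP

- Nginx load balancing kube-apiserver

The result is a stable, reliable, and scalable highly available Kubernetes cluster that lays a solid foundation for later containerized deployments and cluster operations.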

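I. Architecture Overview

This setup uses the classic high-availability layout of three masters plus two load-balancer nodes:

- Highly available control plane: several master nodes run the kube-apiserver, controller-manager, and scheduler components at the same time

- Highly available etcd: each master node runs one etcd instance, forming a three-member etcd cluster (which tolerates the loss of one member)

- Highly available access endpoint: Keepalived provides the VIP, and Nginx accepts connections on the VIP and balances them across the backend master nodes

Whether a single master, a single etcd member, or a single load-balancer node fails, the cluster fails over automatically and the Kubernetes API stays available.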

II. Environment Preparation

| Server role | Hostname | IP address | Main components |
|---|---|---|---|
| master-01 + etcd-01 | k8s-master-1 | 192.168.24.31 | kube-apiserver, kube-controller-manager, kube-scheduler, kube-proxy, etcd |
| master-02 + etcd-02 | k8s-master-2 | 192.168.24.32 | kube-apiserver, kube-controller-manager, kube-scheduler, kube-proxy, etcd |
| master-03 + etcd-03 | k8s-master-3 | 192.168.24.33 | kube-apiserver, kube-controller-manager, kube-scheduler, kube-proxy, etcd |
| node-01 | k8s-node-1 | 192.168.24.34 | kubelet, kube-proxy (resources are limited, so this demo uses a single worker node) |
| nginx + keepalived-01 | keepalived-1 | 192.168.24.35 | nginx, keepalived (primary) |
| nginx + keepalived-02 | keepalived-2 | 192.168.24.36 | nginx, keepalived (backup) |

The virtual IP (VIP) used in the later steps is 192.168.24.30.

III. Deploying the Kubernetes Cluster with kubeadm

1. Install common dependencies (every server)

bash

apt install -y iproute2 net-tools ntpdate tcpdump telnet traceroute nfs-kernel-server nfs-common lrzsz tree openssl libssl-dev libpcre3 libpcre3-dev zlib1g-dev gcc openssh-server iotop unzip zip vim

2. Basic configuration (192.168.24.31~34)

bash

# Set the hostname (on each server, run only the line that matches it)
hostnamectl set-hostname k8s-master-1   # on 192.168.24.31
hostnamectl set-hostname k8s-master-2   # on 192.168.24.32
hostnamectl set-hostname k8s-master-3   # on 192.168.24.33
hostnamectl set-hostname k8s-node-1     # on 192.168.24.34

# Add the cluster hosts to /etc/hosts
cat >> /etc/hosts << EOF
192.168.24.31 k8s-master-1
192.168.24.32 k8s-master-2
192.168.24.33 k8s-master-3
192.168.24.34 k8s-node-1
EOF

# Turn swap off now and remove it from /etc/fstab so that it stays off after a reboot
swapoff -a
sed -i '/swap/d' /etc/fstab

# Load the required kernel modules now and on every boot
cat <<EOF | tee /etc/modules-load.d/containerd.conf
overlay
br_netfilter
EOF
modprobe overlay
modprobe br_netfilter

# Set the required sysctl parameters and apply them
cat <<EOF | tee /etc/sysctl.d/kubernetes.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
net.ipv4.ip_nonlocal_bind=1
EOF
sysctl --system

3. Install containerd (192.168.24.31~34)
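Before installing the container runtime, it can be worth confirming that the settings from the previous step actually took effect. The following is only a minimal sketch and not part of the original procedure: the function names `sysctl_conf_value` and `preflight` are made up for illustration, and the checks assume the file path /etc/sysctl.d/kubernetes.conf used above.

```shell
#!/usr/bin/env bash

# Hypothetical helper: print the value configured for a key in a sysctl-style file.
# Accepts both "key = value" and "key=value"; prints nothing if the key is absent.
sysctl_conf_value() {
  local file="$1" key="$2"
  awk -F'=' -v k="$key" '
    /^[[:space:]]*#/ { next }            # skip comment lines
    {
      name = $1; val = $2
      gsub(/[[:space:]]/, "", name)
      gsub(/[[:space:]]/, "", val)
      if (name == k) found = val         # the last occurrence wins
    }
    END { if (found != "") print found }
  ' "$file"
}

# Hypothetical preflight: returns non-zero if any check fails.
preflight() {
  local rc=0 key want have
  # /proc/swaps contains only its header line when no swap is active.
  if [ "$(wc -l < /proc/swaps)" -gt 1 ]; then
    echo "FAIL: swap is still active"; rc=1
  fi
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    want="$(sysctl_conf_value /etc/sysctl.d/kubernetes.conf "$key")"
    # The net.bridge.* keys only exist once br_netfilter is loaded,
    # so an empty live value also points at a missing module.
    have="$(sysctl -n "$key" 2>/dev/null)"
    if [ "$want" != "$have" ]; then
      echo "FAIL: $key is '${have}', expected '${want}'"; rc=1
    fi
  done
  return "$rc"
}
```

Running `preflight && echo "node is ready for containerd"` on each of 192.168.24.31~34 gives a quick yes/no answer before moving on.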

bash

# Install containerd (dockershim was removed in Kubernetes 1.24, so Docker is no longer used as the container runtime)
apt update
apt install -y containerd

# Generate the default configuration file
mkdir -p /etc/containerd
containerd config default | tee /etc/containerd/config.toml

# Switch to the systemd cgroup driver and point the sandbox (pause) image at the Aliyun mirror
sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml
# Match whatever default pause tag this containerd version ships with
sed -i 's#sandbox_image = ".*"#sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"#' /etc/containerd/config.toml

# Restart containerd and enable it at boot
systemctl restart containerd
systemctl enable containerd
containerd --version

4. Install kubelet, kubeadm, and kubectl (192.168.24.31~34)
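Before installing the Kubernetes packages, it may help to confirm that the two containerd edits really landed, because `sed` exits successfully even when its pattern matched nothing. The sketch below uses two illustrative helper names (`uses_systemd_cgroup`, `sandbox_image_of`) that are not part of containerd or of the original article.

```shell
#!/usr/bin/env bash

# Hypothetical helper: succeed only if the config enables the systemd cgroup driver.
uses_systemd_cgroup() {
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1"
}

# Hypothetical helper: print the sandbox (pause) image configured in a config.toml.
sandbox_image_of() {
  sed -n 's/^[[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*"\(.*\)"[[:space:]]*$/\1/p' "$1" \
    | head -n 1
}
```

For example, `uses_systemd_cgroup /etc/containerd/config.toml && sandbox_image_of /etc/containerd/config.toml` should print the Aliyun pause image; if it prints a `registry.k8s.io` image instead, the second `sed` did not match the default shipped by your containerd version.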

bash

# Add the GPG key of the Aliyun Kubernetes mirror
mkdir -p /etc/apt/keyrings
curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/deb/Release.key \
  | gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

# Add the Aliyun Kubernetes apt repository
cat <<EOF | tee /etc/apt/sources.list.d/kubernetes.list
deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/deb/ /
EOF

# Update the package index and install
apt update
apt install -y kubelet kubeadm kubectl

# Enable kubelet at boot and start it
systemctl enable kubelet --now
systemctl status kubelet   # the state is auto-restart, which is normal before kubeadm init/join has run

5. Install and configure Nginx (192.168.24.35~36)

bash

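# Install nginx

# Install the prerequisites
sudo apt install -y curl gnupg2 ca-certificates lsb-release ubuntu-keyring

# Import the official nginx signing key so that apt can verify the authenticity of the packages
curl https://nginx.org/keys/nginx_signing.key | gpg --dearmor \
  | sudo tee /usr/share/keyrings/nginx-archive-keyring.gpg >/dev/null

# Set up the apt repository. Run only ONE of the two commands below:
# both write to the same file, so the second overwrites the first.

# Option A: stable nginx packages
echo "deb [signed-by=/usr/share/keyrings/nginx-archive-keyring.gpg] \
http://nginx.org/packages/ubuntu `lsb_release -cs` nginx" \
  | sudo tee /etc/apt/sources.list.d/nginx.list

# Option B: mainline nginx packages
echo "deb [signed-by=/usr/share/keyrings/nginx-archive-keyring.gpg] \
http://nginx.org/packages/mainline/ubuntu `lsb_release -cs` nginx" \
  | sudo tee /etc/apt/sources.list.d/nginx.list

# Pin the repository so that packages from nginx.org are preferred over the distribution's
echo -e "Package: *\nPin: origin nginx.org\nPin: release o=nginx\nPin-Priority: 900\n" \
  | sudo tee /etc/apt/preferences.d/99nginx

# Update the package index and install nginx
apt update
apt install -y nginx

# Configure nginx: keep the http block and add a stream block next to it, at the top level of the file
vim /etc/nginx/nginx.conf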

http {
    include       /etc/nginx/mime.types;
    default_type  application/octet-stream;

    log_format  main  '$remote_addr - $remote_user [$time_local] "$request" '
                      '$status $body_bytes_sent "$http_referer" '
                      '"$http_user_agent" "$http_x_forwarded_for"';

    access_log  /var/log/nginx/access.log  main;

    sendfile        on;
    #tcp_nopush     on;

    keepalive_timeout  65;

    #gzip  on;

    include /etc/nginx/conf.d/*.conf;
}

stream {
    upstream kube_apiserver {
        server 192.168.24.31:6443;
        server 192.168.24.32:6443;
        server 192.168.24.33:6443;
    }

    server {
        listen 6443;
        proxy_pass kube_apiserver;
    }
}

# 启动nginx

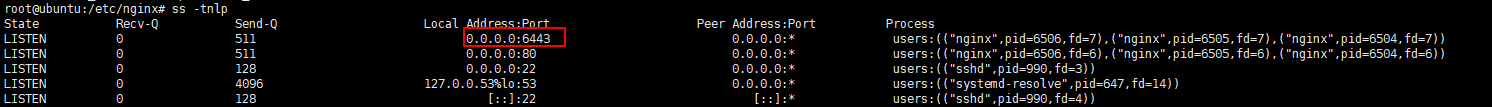

nginx -t && nginx

# 验证nginx端口是否启动

ss -tnlp
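The stream block above relies on nginx's default passive health checking for upstream servers. As a hedged sketch, the small generator below prints an equivalent block with those parameters written out explicitly, which can be handy when the list of masters changes. `gen_stream_block` is a hypothetical helper name, and the values `max_fails=3`, `fail_timeout=30s`, and `proxy_connect_timeout 2s` are assumptions to tune for your environment, not settings from the original article.

```shell
#!/usr/bin/env bash

# Hypothetical generator: print an nginx "stream" block for the given apiserver IPs.
# max_fails / fail_timeout control nginx's passive health checking of each backend.
gen_stream_block() {
  local port=6443 ip
  echo "stream {"
  echo "    upstream kube_apiserver {"
  for ip in "$@"; do
    echo "        server ${ip}:${port} max_fails=3 fail_timeout=30s;"
  done
  echo "    }"
  echo ""
  echo "    server {"
  echo "        listen ${port};"
  echo "        proxy_connect_timeout 2s;"   # give up quickly on a dead master
  echo "        proxy_pass kube_apiserver;"
  echo "    }"
  echo "}"
}
```

Calling `gen_stream_block 192.168.24.31 192.168.24.32 192.168.24.33` prints a block that can replace the `stream { ... }` section of /etc/nginx/nginx.conf; run `nginx -t` afterwards to validate it.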

6. Install and configure Keepalived (192.168.24.35~36)

bash

# Install keepalived
apt install -y keepalived

# Configure keepalived on 192.168.24.35 (primary)
vim /etc/keepalived/keepalived.conf

vrrp_instance VI_1 {
    state MASTER              # primary node
    interface ens160          # change to the name of your own network interface
    virtual_router_id 51
    priority 100              # node priority
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1234
    }
    virtual_ipaddress {
        192.168.24.30         # the VIP
    }
}

# Configure keepalived on 192.168.24.36 (backup)
vim /etc/keepalived/keepalived.conf

vrrp_instance VI_1 {
    state BACKUP              # backup node
    interface ens160
    virtual_router_id 51
    priority 90
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1234
    }
    virtual_ipaddress {
        192.168.24.30
    }
}

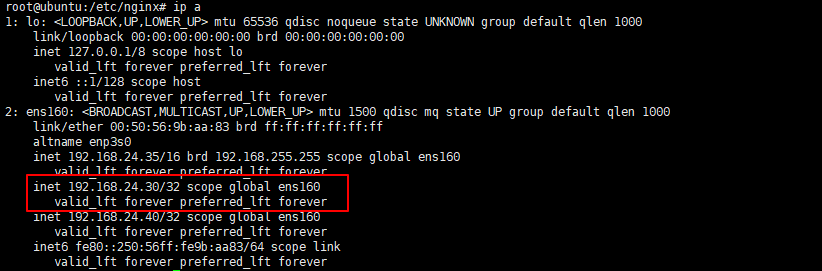

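# Start keepalived and enable it at boot
systemctl enable keepalived --now

# Check that the VIP is active (192.168.24.30 should appear on the primary node's interface)
ip a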
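With the configuration above, the VIP moves only when keepalived itself or the whole host goes down. If nginx stops while keepalived keeps running, the VIP stays on a node that can no longer proxy traffic. keepalived's `vrrp_script` and `track_script` directives can cover that case. The following is a sketch under stated assumptions: the script path /etc/keepalived/check_nginx.sh, the function names, and the `interval`/`fall`/`rise` values are illustrative and do not come from the original article.

```shell
#!/usr/bin/env bash
# Hypothetical check script, e.g. saved as /etc/keepalived/check_nginx.sh

# Succeed if the `ss -tnl` output on stdin shows a listener on the given TCP port.
has_listener() {
  local port="$1"
  awk -v p="$port" '
    $1 == "LISTEN" {
      n = split($4, parts, ":")      # column 4 is the local address; the port follows the last ":"
      if (parts[n] == p) found = 1
    }
    END { exit (found ? 0 : 1) }
  '
}

# keepalived evaluates the exit status: 0 means healthy, non-zero means failed.
check_nginx() {
  ss -tnl | has_listener 6443
}

# Wiring it into keepalived.conf on both nodes (sketch):
#   vrrp_script chk_nginx {
#       script "/etc/keepalived/check_nginx.sh"
#       interval 2        # run every 2 seconds
#       fall 2            # 2 consecutive failures mark the node as faulty
#       rise 2            # 2 consecutive successes mark it healthy again
#   }
#   vrrp_instance VI_1 {
#       ...
#       track_script {
#           chk_nginx
#       }
#   }
```

When saved as a standalone script, a final line calling `check_nginx` is needed so that the script's exit status reflects the check. A simple way to try it out is to stop nginx on keepalived-1 and watch `ip a` on keepalived-2 until 192.168.24.30 appears there.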

7. Initialize the cluster (192.168.24.31)

bash

# Initialize the cluster, using VIP:6443 as the control-plane endpoint
kubeadm init --control-plane-endpoint "192.168.24.30:6443" --upload-certs \
  --pod-network-cidr=10.244.0.0/16 \
  --kubernetes-version=1.28.15 \
  --image-repository=registry.aliyuncs.com/google_containers

# Join the other master nodes to the cluster (use the command printed at the end of the initialization; it looks like this)
kubeadm join 192.168.24.30:6443 --token odmvxr.yvxk22il5749xkdc \
  --discovery-token-ca-cert-hash sha256:40c0f9fdb7f426a18a5d90e30bb65de4d6115d2dab27c78b20194e7424c5afdc \
  --control-plane \
  --certificate-key 382d1d4669d7149422b6a6aace780e3d38965c5450888403b8d0b3bc871434f3

# Join the worker node to the cluster (use the command printed at the end of the initialization; it looks like this)
kubeadm join 192.168.24.30:6443 \
  --token odmvxr.yvxk22il5749xkdc \
  --discovery-token-ca-cert-hash sha256:40c0f9fdb7f426a18a5d90e30bb65de4d6115d2dab27c78b20194e7424c5afdc

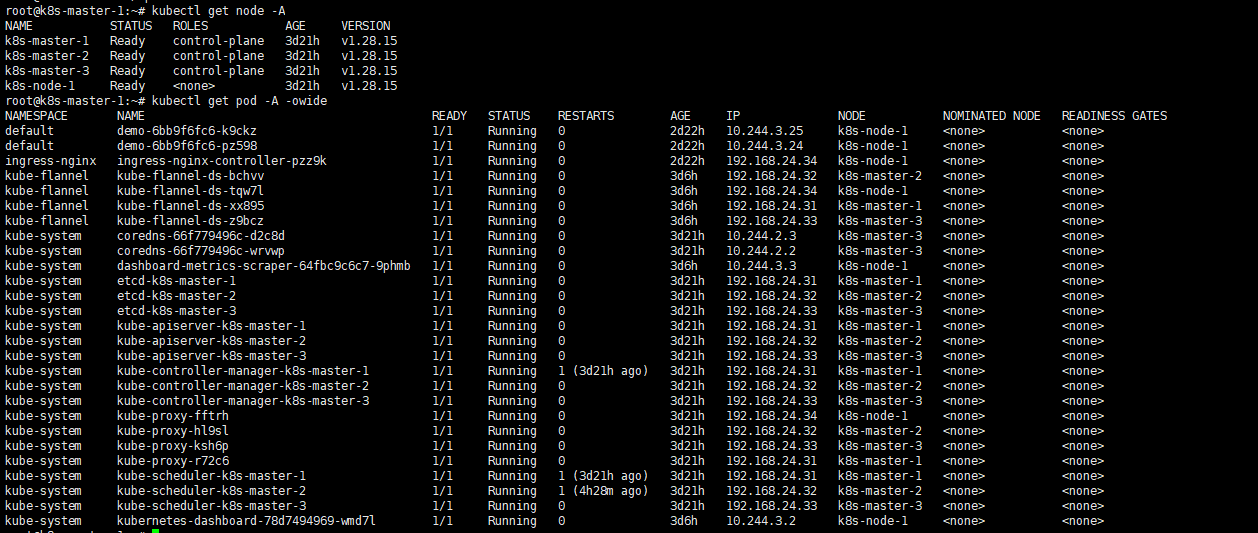
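Two practical details around the join step are easy to miss. First, `kubectl` needs a kubeconfig before it can talk to the cluster; `kubeadm init` prints the steps for this. Second, the bootstrap token expires after 24 hours by default and the uploaded certificates after 2 hours, so the printed join commands stop working if the other nodes are added later. The sketch below shows one way to handle both; the function names are hypothetical, and the `kubeadm` subcommands are the standard ones for regenerating the token and certificate key.

```shell
#!/usr/bin/env bash

# Make kubectl usable for the current user on a control-plane node
# (the same steps that kubeadm init prints on success).
setup_kubeconfig() {
  mkdir -p "$HOME/.kube"
  sudo cp -i /etc/kubernetes/admin.conf "$HOME/.kube/config"
  sudo chown "$(id -u):$(id -g)" "$HOME/.kube/config"
}

# Hypothetical helper: turn a worker join command into a control-plane join command.
to_control_plane_join() {
  local worker_cmd="$1" cert_key="$2"
  printf '%s --control-plane --certificate-key %s\n' "$worker_cmd" "$cert_key"
}

# Regenerate both pieces on an existing control-plane node and print the combined command.
print_control_plane_join() {
  local worker_cmd cert_key
  # Creates a fresh bootstrap token and prints a ready-to-use worker join command.
  worker_cmd="$(kubeadm token create --print-join-command)"
  # Re-uploads the control-plane certificates; the new key is the last line of the output.
  cert_key="$(kubeadm init phase upload-certs --upload-certs | tail -n 1)"
  to_control_plane_join "$worker_cmd" "$cert_key"
}
```

On k8s-master-1, `setup_kubeconfig` is run once after `kubeadm init`; `print_control_plane_join` can be run whenever another master has to join after the original credentials have expired.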

# Verify the cluster (if nodes show NotReady, the network plugin has not been installed yet; see the next step)

root@k8s-master-1:~# kubectl get node -A
NAME           STATUS   ROLES           AGE     VERSION
k8s-master-1   Ready    control-plane   3d21h   v1.28.15
k8s-master-2   Ready    control-plane   3d21h   v1.28.15
k8s-master-3   Ready    control-plane   3d21h   v1.28.15
k8s-node-1     Ready    <none>          3d21h   v1.28.15

root@k8s-master-1:~# kubectl get pod -A -owide
NAMESPACE      NAME                                        READY  STATUS   RESTARTS       AGE    IP             NODE          NOMINATED NODE  READINESS GATES
default        demo-6bb9f6fc6-k9ckz                        1/1    Running  0              2d22h  10.244.3.25    k8s-node-1    <none>          <none>
default        demo-6bb9f6fc6-pz598                        1/1    Running  0              2d22h  10.244.3.24    k8s-node-1    <none>          <none>
ingress-nginx  ingress-nginx-controller-pzz9k              1/1    Running  0              2d22h  192.168.24.34  k8s-node-1    <none>          <none>
kube-flannel   kube-flannel-ds-bchvv                       1/1    Running  0              3d6h   192.168.24.32  k8s-master-2  <none>          <none>
kube-flannel   kube-flannel-ds-tqw7l                       1/1    Running  0              3d6h   192.168.24.34  k8s-node-1    <none>          <none>
kube-flannel   kube-flannel-ds-xx895                       1/1    Running  0              3d6h   192.168.24.31  k8s-master-1  <none>          <none>
kube-flannel   kube-flannel-ds-z9bcz                       1/1    Running  0              3d6h   192.168.24.33  k8s-master-3  <none>          <none>
kube-system    coredns-66f779496c-d2c8d                    1/1    Running  0              3d21h  10.244.2.3     k8s-master-3  <none>          <none>
kube-system    coredns-66f779496c-wrvwp                    1/1    Running  0              3d21h  10.244.2.2     k8s-master-3  <none>          <none>
kube-system    dashboard-metrics-scraper-64fbc9c6c7-9phmb  1/1    Running  0              3d6h   10.244.3.3     k8s-node-1    <none>          <none>
kube-system    etcd-k8s-master-1                           1/1    Running  0              3d21h  192.168.24.31  k8s-master-1  <none>          <none>
kube-system    etcd-k8s-master-2                           1/1    Running  0              3d21h  192.168.24.32  k8s-master-2  <none>          <none>
kube-system    etcd-k8s-master-3                           1/1    Running  0              3d21h  192.168.24.33  k8s-master-3  <none>          <none>
kube-system    kube-apiserver-k8s-master-1                 1/1    Running  0              3d21h  192.168.24.31  k8s-master-1  <none>          <none>
kube-system    kube-apiserver-k8s-master-2                 1/1    Running  0              3d21h  192.168.24.32  k8s-master-2  <none>          <none>
kube-system    kube-apiserver-k8s-master-3                 1/1    Running  0              3d21h  192.168.24.33  k8s-master-3  <none>          <none>
kube-system    kube-controller-manager-k8s-master-1        1/1    Running  1 (3d21h ago)  3d21h  192.168.24.31  k8s-master-1  <none>          <none>
kube-system    kube-controller-manager-k8s-master-2        1/1    Running  0              3d21h  192.168.24.32  k8s-master-2  <none>          <none>
kube-system    kube-controller-manager-k8s-master-3        1/1    Running  0              3d21h  192.168.24.33  k8s-master-3  <none>          <none>
kube-system    kube-proxy-fftrh                            1/1    Running  0              3d21h  192.168.24.34  k8s-node-1    <none>          <none>
kube-system    kube-proxy-hl9sl                            1/1    Running  0              3d21h  192.168.24.32  k8s-master-2  <none>          <none>
kube-system    kube-proxy-ksh6p                            1/1    Running  0              3d21h  192.168.24.33  k8s-master-3  <none>          <none>
kube-system    kube-proxy-r72c6                            1/1    Running  0              3d21h  192.168.24.31  k8s-master-1  <none>          <none>
kube-system    kube-scheduler-k8s-master-1                 1/1    Running  1 (3d21h ago)  3d21h  192.168.24.31  k8s-master-1  <none>          <none>
kube-system    kube-scheduler-k8s-master-2                 1/1    Running  1 (4h28m ago)  3d21h  192.168.24.32  k8s-master-2  <none>          <none>
kube-system    kube-scheduler-k8s-master-3                 1/1    Running  0              3d21h  192.168.24.33  k8s-master-3  <none>          <none>
kube-system    kubernetes-dashboard-78d7494969-wmd7l       1/1    Running  0              3d6h   10.244.3.2     k8s-node-1    <none>          <none>
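Beyond listing nodes and pods, the high-availability path itself can be exercised by probing the API server through the VIP and directly on each master. The sketch below assumes that unauthenticated access to `/readyz` is still allowed, which is kubeadm's default; the function names are hypothetical, and `-k` skips TLS verification because only reachability is being tested.

```shell
#!/usr/bin/env bash

# Hypothetical helper: list the readiness endpoints to probe, VIP first, then each master.
apiserver_endpoints() {
  local host
  for host in 192.168.24.30 192.168.24.31 192.168.24.32 192.168.24.33; do
    echo "https://${host}:6443/readyz"
  done
}

# Probe every endpoint and print the response body, or "unreachable" if curl fails.
probe_apiservers() {
  local url body
  apiserver_endpoints | while read -r url; do
    body="$(curl -ks --max-time 3 "$url")" || body="unreachable"
    echo "${url} -> ${body}"
  done
}
```

Running `probe_apiservers` once as a baseline, then again after stopping keepalived on keepalived-1 or shutting down one master, shows whether the VIP endpoint keeps answering while an individual backend is down.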

8. Install the network plugin

bash

# Flannel is used as the example here
mkdir -p /root/kubernetes/cni
vim /root/kubernetes/cni/kube-flannel.yaml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "EnableNFTables": false,
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: hub.hwj.com/k8s/flannel-cni-plugin:v1.4.1-flannel1
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: hub.hwj.com/k8s/flannel:v0.25.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: hub.hwj.com/k8s/flannel:v0.25.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

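# Deploy the Flannel pods
# Wait patiently for the pods to start; after that, kubectl get node shows the nodes as Ready
cd /root/kubernetes/cni && kubectl apply -f .

# If the image pull fails with an x509 error: the manifest above pulls from my own Harbor registry, which uses a self-signed certificate.
# Replace the images with ones that suit your own environment.

# Troubleshooting commands
kubectl get pod -n kube-flannel
kubectl describe pod -n kube-flannel kube-flannel-ds-xxxxx
systemctl status containerd

# Fix: copy the Harbor server's CA certificate to each Kubernetes server, update the trust store, and restart containerd
scp -rp root@harbor:/data/cert/ca.crt k8s:/usr/local/share/ca-certificates/harbor-ca.crt
sudo update-ca-certificates   # update the trust store
ls -l /etc/ssl/certs/harbor-ca.pem
systemctl restart containerd

Summary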

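🚀 Based on Ubuntu 22.04 and Kubernetes 1.28, this article walked through a complete high-availability Kubernetes deployment that can be put into production. With a multi-master + Keepalived + Nginx architecture, it builds a stable and reliable highly available control plane.

The next article builds on this cluster and introduces Ingress, taking another step toward a production-grade Kubernetes setup.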