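I: Flink CDC

1: What is CDC

Terminology: CDC stands for Change Data Capture.

How it works: CDC captures database change events (inserts, updates, deletes) and records them in the order they occur, which makes it possible to keep another system in sync.

Use cases: widely used in data warehousing, real-time analytics, and data synchronization.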
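The idea of recording changes in order and replaying them can be sketched in plain Java. This is only a toy model: the `ChangeEvent` class, the `Op` enum, and the sample rows are made up for illustration and are not part of any CDC library.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class ChangeReplay {

    /** Kind of change, mirroring insert / update / delete. */
    enum Op { INSERT, UPDATE, DELETE }

    /** One captured change: operation, primary key, and the new value (null for deletes). */
    static final class ChangeEvent {
        final Op op;
        final int id;
        final String name;

        ChangeEvent(Op op, int id, String name) {
            this.op = op;
            this.id = id;
            this.name = name;
        }
    }

    /** Applies events in capture order to a replica keyed by primary key. */
    static Map<Integer, String> replay(List<ChangeEvent> events) {
        Map<Integer, String> replica = new LinkedHashMap<>();
        for (ChangeEvent e : events) {
            if (e.op == Op.DELETE) {
                replica.remove(e.id);
            } else {
                replica.put(e.id, e.name); // insert and update both act as an upsert
            }
        }
        return replica;
    }

    /** Hypothetical sample log: two inserts, one update, one delete. */
    static Map<Integer, String> demo() {
        List<ChangeEvent> log = new ArrayList<>();
        log.add(new ChangeEvent(Op.INSERT, 1001, "zhangsan"));
        log.add(new ChangeEvent(Op.INSERT, 1002, "lisi"));
        log.add(new ChangeEvent(Op.UPDATE, 1001, "zhangsan2"));
        log.add(new ChangeEvent(Op.DELETE, 1002, null));
        return replay(log);
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints {1001=zhangsan2}
    }
}
```

Because the events are applied in the order they were captured, the replica ends in the same state as the source table. Applying them out of order (for example the delete before the insert) would not.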

2: Types of CDC

| | Query-based CDC | Binlog-based CDC |
|------------|-------------------------|-------------------------------------------|
| Open-source tools | Sqoop, Kafka JDBC Source | Canal (open-sourced by Alibaba), Maxwell, Debezium (embedded in Flink CDC) |
| Execution mode | Batch | Streaming |
| Captures every data change | No | Yes |
| Latency | High | Low |
| Adds load to the database | Yes | No |

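Binlog-based CDC is a natural fit for MySQL. Flink CDC embeds Debezium and reads the binlog directly, which makes it a very good option for MySQL transactional (OLTP) databases.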
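Why a query-based approach cannot capture every change can be shown with a small simulation. The `Table` class below is a made-up stand-in for a database table with an `updated_at`-style column and a change log. It is an assumption for illustration, not how Sqoop or the binlog actually work internally.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class PollingVsLog {

    /** A row value plus a logical "updated_at" timestamp. */
    static final class Row {
        final String value;
        final long updatedAt;

        Row(String value, long updatedAt) {
            this.value = value;
            this.updatedAt = updatedAt;
        }
    }

    /** Toy table that also appends every write to a change log, loosely like a binlog. */
    static final class Table {
        final Map<Integer, Row> rows = new TreeMap<>();
        final List<String> changeLog = new ArrayList<>();
        long clock = 0;

        void upsert(int id, String value) {
            String op = rows.containsKey(id) ? "u" : "c";
            rows.put(id, new Row(value, ++clock));
            changeLog.add(op + ":" + id + "=" + value);
        }

        void delete(int id) {
            rows.remove(id);
            changeLog.add("d:" + id);
        }

        /** Query-based capture: roughly SELECT ... WHERE updated_at > since. */
        List<String> poll(long since) {
            List<String> out = new ArrayList<>();
            for (Map.Entry<Integer, Row> e : rows.entrySet()) {
                if (e.getValue().updatedAt > since) {
                    out.add(e.getKey() + "=" + e.getValue().value);
                }
            }
            return out;
        }
    }

    /** Four writes happen between two polls. */
    static Table sample() {
        Table t = new Table();
        t.upsert(1, "a");
        t.upsert(1, "b");
        t.upsert(2, "x");
        t.delete(2);
        return t;
    }

    public static void main(String[] args) {
        Table t = sample();
        System.out.println("polled: " + t.poll(0));   // prints polled: [1=b]
        System.out.println("logged: " + t.changeLog); // prints logged: [c:1=a, u:1=b, c:2=x, d:2]
    }
}
```

The poll sees only the final state of row 1. It misses the intermediate value `a` and never learns that row 2 existed, while the log keeps all four changes. Each poll also scans the table, which is the extra database load listed in the comparison.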

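Why do we still need Flink CDC when Canal and Maxwell already exist?

Canal and Maxwell focus on capturing and forwarding changes, whereas Flink CDC is a connector that combines capture with real-time computation. It simplifies the architecture, supports exactly-once semantics, unifies full and incremental reads, and allows complex stream processing. This makes it a good fit for real-time data warehouses, data lakes, and similar workloads, which is why it has become a mainstream choice.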

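3: Flink CDC

The Flink community developed the flink-cdc-connectors component, a source component that can read both the full existing data and the incremental change data directly from databases such as MySQL and PostgreSQL.

It is open source: https://github.com/ververica/flink-cdc-connectors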
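Reading "full data, then incremental changes" can be pictured with the simplified model below. It assumes a single snapshot taken at a known log position. The real connector's snapshot algorithm is more elaborate, and the `read` method and sample values here are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class SnapshotThenIncremental {

    /**
     * Emits existing rows as "r" (read) records, then every log entry
     * from the snapshot position onward.
     */
    static List<String> read(Map<Integer, String> snapshotRows, List<String> log, int snapshotPosition) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<Integer, String> row : snapshotRows.entrySet()) {
            out.add("r:" + row.getKey() + "=" + row.getValue());
        }
        for (int pos = snapshotPosition; pos < log.size(); pos++) {
            out.add(log.get(pos));
        }
        return out;
    }

    static List<String> demo() {
        List<String> log = new ArrayList<>();
        log.add("c:1001=zhangsan");            // already reflected in the snapshot

        Map<Integer, String> snapshot = new TreeMap<>();
        snapshot.put(1001, "zhangsan");
        int snapshotPosition = log.size();     // log position at snapshot time

        log.add("c:1002=lisi");                // changes made after the snapshot
        log.add("u:1002=lisi2");
        return read(snapshot, log, snapshotPosition);
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints [r:1001=zhangsan, c:1002=lisi, u:1002=lisi2]
    }
}
```

Remembering the log position at snapshot time is what lets the incremental phase continue without skipping or repeating changes.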

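II: Flink CDC hands-on

Comparison: understand where each of the two APIs fits and how they differ in performance.

Practice focus: implement the job with both the DataStream API and SQL.

Production considerations: learn to choose the API that suits the actual business requirements.

1: Using the DataStream API

1: Create a Maven project

Nothing special here; any standard Maven project will do.

2: Add the Maven dependencies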

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.example</groupId>
    <artifactId>flink-cdc</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <flink.version>1.17.1</flink.version>
        <scala.binary.version>2.12</scala.binary.version>
    </properties>

    <dependencies>
        <!-- Flink core dependencies -->
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-table-planner_${scala.binary.version}</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients</artifactId>
            <!-- The version must match the other Flink dependencies above -->
            <version>${flink.version}</version>
            <!-- Keep the scope commented out for local runs; enable provided when packaging for a cluster -->
            <!-- <scope>provided</scope> -->
        </dependency>

        <!-- MySQL CDC connector -->
        <dependency>
            <groupId>com.ververica</groupId>
            <artifactId>flink-connector-mysql-cdc</artifactId>
            <version>2.4.0</version>
        </dependency>

        <!-- MySQL JDBC driver -->
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>8.0.33</version>
        </dependency>

        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>1.2.75</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.8.1</version>
                <configuration>
                    <source>8</source>
                    <target>8</target>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>

3: Write the code

package com.dashu;

import com.ververica.cdc.connectors.mysql.MySqlSource;
import com.ververica.cdc.connectors.mysql.table.StartupOptions;
import com.ververica.cdc.debezium.DebeziumSourceFunction;
import com.ververica.cdc.debezium.StringDebeziumDeserializationSchema;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

public class FlinkCDC1 {

    public static void main(String[] args) throws Exception {
        // Get the Flink execution environment
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        // Build the SourceFunction with Flink CDC
        DebeziumSourceFunction<String> sourceFunction = MySqlSource.<String>builder()
                .hostname("192.168.67.133")
                .port(3306)
                .username("root")
                .password("root")
                // Varargs: several databases can be monitored at once
                .databaseList("cdc_test")
                // Varargs: several tables can be monitored at once.
                // Each table must be written as database.table.
                .tableList("cdc_test.user_info")
                // Deserializer; shown here for demonstration, a custom one is normally used later
                .deserializer(new StringDebeziumDeserializationSchema())
                // Startup mode: read the existing data first, then follow the binlog
                .startupOptions(StartupOptions.initial())
                .build();

        DataStreamSource<String> dataStreamSource = env.addSource(sourceFunction);

        // The stream is now available: it can be processed further and written
        // to another database or to a message queue.

        // Print the records
        dataStreamSource.print();

        // Start the job
        env.execute("FlinkCDC");
    }
}

4: Enable the MySQL binlog

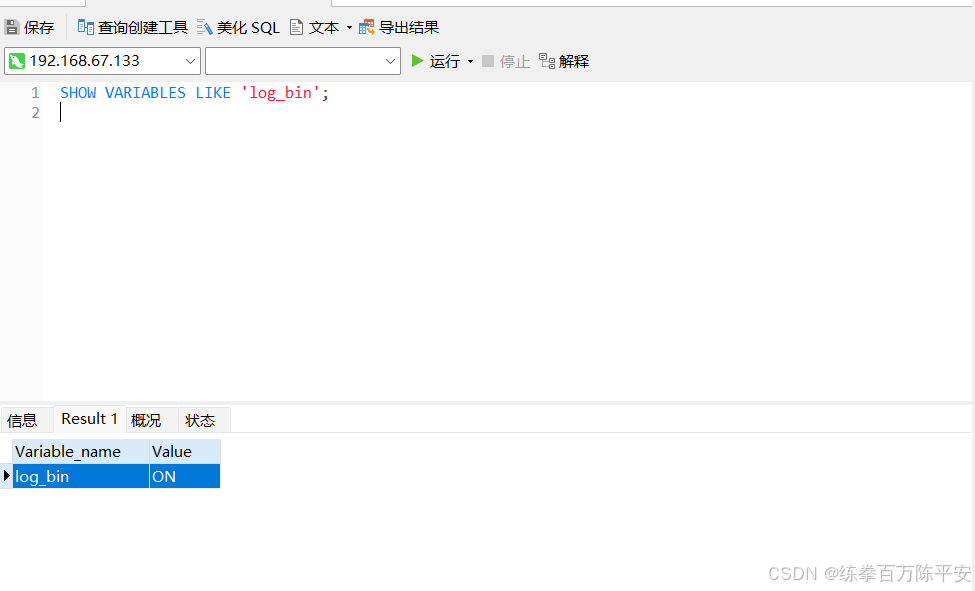

1: MySQL 8 enables the binlog by default, and it covers all schemas

2: The MySQL configuration file is /etc/my.cnf (open it with vim /etc/my.cnf)

3: List the binlog files

[root@localhost ~]# cd /var/lib/mysql
[root@localhost mysql]# ls | grep binlog
binlog.000018
binlog.000019
binlog.000020
binlog.000021
binlog.000022
binlog.000023
binlog.000024
binlog.index

[root@localhost mysql]# ls -l -h -t

total 93M

-rw-r-----. 1 mysql mysql 192K Feb 5 09:46 #ib_16384_0.dblwr

-rw-r-----. 1 mysql mysql 16M Feb 5 09:46 undo_002

-rw-r-----. 1 mysql mysql 12M Feb 5 09:45 ibdata1

-rw-r-----. 1 mysql mysql 16M Feb 5 09:45 undo_001

-rw-r-----. 1 mysql mysql 1.1K Feb 5 09:45 binlog.000024

-rw-r-----. 1 mysql mysql 28M Feb 5 09:45 mysql.ibd

drwxr-x---. 2 mysql mysql 27 Feb 5 09:44 cdc_test

-rw-r-----. 1 mysql mysql 12M Feb 5 09:30 ibtmp1

srwxrwxrwx. 1 mysql mysql 0 Feb 5 09:30 mysql.sock

-rw-------. 1 mysql mysql 5 Feb 5 09:30 mysql.sock.lock

-rw-r-----. 1 mysql mysql 112 Feb 5 09:30 binlog.index

-rw-r-----. 1 mysql mysql 14K Feb 5 09:30 binlog.000023

drwxr-x---. 2 mysql mysql 4.0K Feb 5 09:30 #innodb_redo

drwxr-x---. 2 mysql mysql 187 Feb 5 09:30 #innodb_temp

drwxr-x---. 2 mysql mysql 4.0K Feb 4 03:16 ems

drwxr-x---. 2 mysql mysql 6 Feb 4 03:09 nacos_config

-rw-r-----. 1 mysql mysql 3.5K Feb 4 02:06 ib_buffer_pool

-rw-r-----. 1 mysql mysql 180 Feb 4 02:06 binlog.000022

-rw-r-----. 1 mysql mysql 157 Feb 4 02:06 binlog.000021

-rw-r-----. 1 mysql mysql 496 Feb 3 06:34 binlog.000020

-rw-r-----. 1 mysql mysql 1.5K Jan 12 07:26 binlog.000019

-rw-r-----. 1 mysql mysql 157 Jan 7 09:38 binlog.000018

drwxr-x---. 2 mysql mysql 28 Nov 26 09:56 sys

drwxr-x---. 2 mysql mysql 143 Nov 26 09:55 mysql

-rw-------. 1 mysql mysql 1.7K Nov 26 09:55 private_key.pem

-rw-r--r--. 1 mysql mysql 452 Nov 26 09:55 public_key.pem

-rw-r--r--. 1 mysql mysql 1.1K Nov 26 09:55 client-cert.pem

-rw-------. 1 mysql mysql 1.7K Nov 26 09:55 client-key.pem

-rw-r--r--. 1 mysql mysql 1.1K Nov 26 09:55 server-cert.pem

-rw-------. 1 mysql mysql 1.7K Nov 26 09:55 server-key.pem

-rw-r--r--. 1 mysql mysql 1.1K Nov 26 09:55 ca.pem

-rw-------. 1 mysql mysql 1.7K Nov 26 09:55 ca-key.pem

drwxr-x---. 2 mysql mysql 8.0K Nov 26 09:55 performance_schema

-rw-r-----. 1 mysql mysql 8.2M Nov 26 09:55 #ib_16384_1.dblwr

-rw-r-----. 1 mysql mysql 56 Nov 26 09:55 auto.cnf
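Having binlog files on disk is necessary but not the whole story: row-based CDC tools such as Debezium generally expect `binlog_format=ROW` and `binlog_row_image=FULL`. The sketch below checks a my.cnf-style text for explicit settings that would conflict with that. The `BinlogConfigCheck` class and its messages are hypothetical, and settings that are absent are assumed to keep the server defaults.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

public class BinlogConfigCheck {

    /** Parses "key = value" lines; section headers, comments and blank lines are skipped. */
    static Map<String, String> parse(String cnf) {
        Map<String, String> settings = new HashMap<>();
        for (String raw : cnf.split("\n")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#") || line.startsWith(";") || line.startsWith("[")) {
                continue;
            }
            String[] kv = line.split("=", 2);
            // MySQL accepts both dashes and underscores in option names
            String key = kv[0].trim().toLowerCase(Locale.ROOT).replace('-', '_');
            String value = kv.length > 1 ? kv[1].trim() : "";
            settings.put(key, value);
        }
        return settings;
    }

    /** Returns human-readable problems; an empty list means no conflicting setting was found. */
    static List<String> problems(String cnf) {
        Map<String, String> s = parse(cnf);
        List<String> problems = new ArrayList<>();
        if (s.containsKey("skip_log_bin") || s.containsKey("disable_log_bin")) {
            problems.add("binary logging is disabled");
        }
        String format = s.get("binlog_format");
        if (format != null && !format.equalsIgnoreCase("ROW")) {
            problems.add("binlog_format should be ROW but is " + format);
        }
        String image = s.get("binlog_row_image");
        if (image != null && !image.equalsIgnoreCase("FULL")) {
            problems.add("binlog_row_image should be FULL but is " + image);
        }
        return problems;
    }

    public static void main(String[] args) {
        String cnf = "[mysqld]\nserver-id = 1\nbinlog-format = STATEMENT\n";
        System.out.println(problems(cnf)); // prints [binlog_format should be ROW but is STATEMENT]
    }
}
```

On a running server, the same values can be inspected with `SHOW VARIABLES LIKE 'binlog%'`, which reflects the effective settings rather than just the file contents.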

5: Verify the result

D:\soft\JDK\JDK18\bin\java.exe "-javaagent:D:\soft\IntelliJ IDEA 2023.2.8\lib\idea_rt.jar=45242:D:\soft\IntelliJ IDEA 2023.2.8\bin" -Dfile.encoding=UTF-8 -classpath [classpath omitted] com.dashu.FlinkCDC1

SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".

SLF4J: Defaulting to no-operation (NOP) logger implementation

SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.

log4j:WARN No appenders could be found for logger (org.apache.flink.shaded.netty4.io.netty.util.internal.logging.InternalLoggerFactory).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

SLF4J: Failed to load class "org.slf4j.impl.StaticMDCBinder".

SLF4J: Defaulting to no-operation MDCAdapter implementation.

SLF4J: See http://www.slf4j.org/codes.html#no_static_mdc_binder for further details.

二月 06, 2026 8:24:29 上午 com.github.shyiko.mysql.binlog.BinaryLogClient connect

信息: Connected to 192.168.67.133:3306 at binlog.000024/1095 (sid:5805, cid:25)

SourceRecord{sourcePartition={server=mysql_binlog_source}, sourceOffset={ts_sec=1770337470, file=binlog.000024, pos=1095}} ConnectRecord{topic='mysql_binlog_source.cdc_test.user_info', kafkaPartition=null, key=Struct{id=1001}, keySchema=Schema{mysql_binlog_source.cdc_test.user_info.Key:STRUCT}, value=Struct{after=Struct{id=1001,name=张三,sex=male},source=Struct{version=1.9.7.Final,connector=mysql,name=mysql_binlog_source,ts_ms=1770337470000,snapshot=last,db=cdc_test,table=user_info,server_id=0,file=binlog.000024,pos=1095,row=0},op=r,ts_ms=1770337469306}, valueSchema=Schema{mysql_binlog_source.cdc_test.user_info.Envelope:STRUCT}, timestamp=null, headers=ConnectHeaders(headers=)}

6: Observe data changes

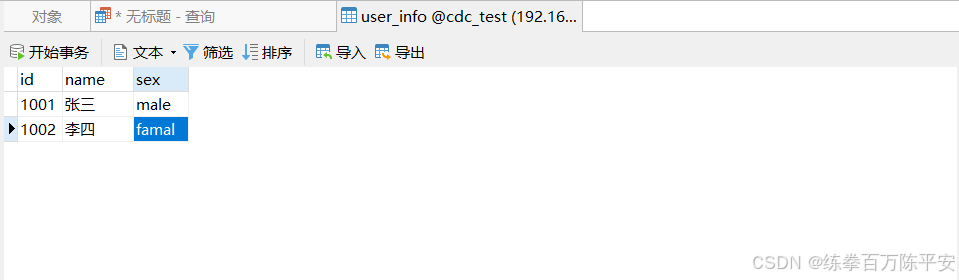

First, manually insert a row into the database:

The console picks up the change right away:

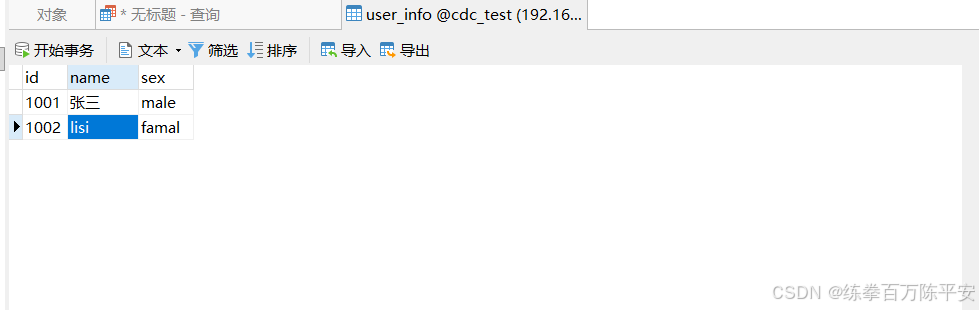

SourceRecord{sourcePartition={server=mysql_binlog_source}, sourceOffset={transaction_id=null, ts_sec=1770338014, file=binlog.000024, pos=1174, row=1, server_id=1, event=2}} ConnectRecord{topic='mysql_binlog_source.cdc_test.user_info', kafkaPartition=null, key=Struct{id=1002}, keySchema=Schema{mysql_binlog_source.cdc_test.user_info.Key:STRUCT}, value=Struct{after=Struct{id=1002,name=李四,sex=famal},source=Struct{version=1.9.7.Final,connector=mysql,name=mysql_binlog_source,ts_ms=1770338014000,db=cdc_test,table=user_info,server_id=1,file=binlog.000024,pos=1322,row=0,thread=26},op=c,ts_ms=1770338013346}, valueSchema=Schema{mysql_binlog_source.cdc_test.user_info.Envelope:STRUCT}, timestamp=null, headers=ConnectHeaders(headers=)}

Next, change the name 李四 to lisi:

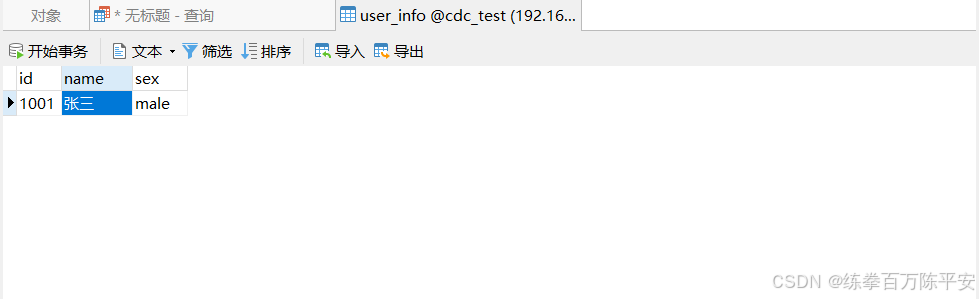

SourceRecord{sourcePartition={server=mysql_binlog_source}, sourceOffset={transaction_id=null, ts_sec=1770338180, file=binlog.000024, pos=1489, row=1, server_id=1, event=2}} ConnectRecord{topic='mysql_binlog_source.cdc_test.user_info', kafkaPartition=null, key=Struct{id=1002}, keySchema=Schema{mysql_binlog_source.cdc_test.user_info.Key:STRUCT}, value=Struct{before=Struct{id=1002,name=李四,sex=famal},after=Struct{id=1002,name=lisi,sex=famal},source=Struct{version=1.9.7.Final,connector=mysql,name=mysql_binlog_source,ts_ms=1770338180000,db=cdc_test,table=user_info,server_id=1,file=binlog.000024,pos=1646,row=0,thread=26},op=u,ts_ms=1770338178961}, valueSchema=Schema{mysql_binlog_source.cdc_test.user_info.Envelope:STRUCT}, timestamp=null, headers=ConnectHeaders(headers=)}

Then delete a row:

SourceRecord{sourcePartition={server=mysql_binlog_source}, sourceOffset={transaction_id=null, ts_sec=1770338337, file=binlog.000024, pos=1834, row=1, server_id=1, event=2}} ConnectRecord{topic='mysql_binlog_source.cdc_test.user_info', kafkaPartition=null, key=Struct{id=1002}, keySchema=Schema{mysql_binlog_source.cdc_test.user_info.Key:STRUCT}, value=Struct{before=Struct{id=1002,name=lisi,sex=famal},source=Struct{version=1.9.7.Final,connector=mysql,name=mysql_binlog_source,ts_ms=1770338337000,db=cdc_test,table=user_info,server_id=1,file=binlog.000024,pos=1982,row=0,thread=26},op=d,ts_ms=1770338335944}, valueSchema=Schema{mysql_binlog_source.cdc_test.user_info.Envelope:STRUCT}, timestamp=null, headers=ConnectHeaders(headers=)}

At this point we have used Flink CDC with the DataStream API to monitor changes to MySQL data.
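Each record printed by the job carries an `op` field: `r` for a snapshot read, `c` for an insert, `u` for an update, and `d` for a delete. As a rough illustration, the helper below pulls that code out of a printed record string. The `OpCodes` class and its regular expression are assumptions made for this sketch. A real job would use a custom deserializer that reads the field from the structured record rather than parsing text.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class OpCodes {

    // Matches the top-level ",op=x" that follows the source struct in the printed record.
    private static final Pattern OP = Pattern.compile("[,{]op=([a-z])");

    /** Maps the single-letter op code to a readable name. */
    static String describe(String op) {
        if (op == null) {
            return "unknown";
        }
        switch (op) {
            case "r": return "read (snapshot)";
            case "c": return "create (insert)";
            case "u": return "update";
            case "d": return "delete";
            default:  return "unknown";
        }
    }

    /** Extracts the op code from a printed record, or null when none is found. */
    static String extractOp(String record) {
        Matcher m = OP.matcher(record);
        return m.find() ? m.group(1) : null;
    }

    public static void main(String[] args) {
        // Shortened version of the delete record shown in the console output
        String record = "value=Struct{before=Struct{id=1002,name=lisi},"
                + "source=Struct{db=cdc_test,table=user_info},op=d,ts_ms=1770338335944}";
        System.out.println(describe(extractOp(record))); // prints delete
    }
}
```

Note how the shape of the record follows the operation: inserts and snapshot reads carry only `after`, deletes carry only `before`, and updates carry both.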

In production, checkpointing is normally enabled. If the job fails, it resumes from the latest checkpoint, so recovery does not re-read the data from the beginning.
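The effect of resuming from a checkpoint can be modeled without Flink. In the toy loop below, a checkpoint stores the read offset together with the running count, and a simulated crash restores both. The class, the crash position, and the checkpoint interval are all invented for illustration, and this is not how Flink implements checkpoints internally.

```java
public class CheckpointResume {

    /** A checkpoint captures the source offset and the operator state at the same moment. */
    static final class Checkpoint {
        final int offset;
        final int count;

        Checkpoint(int offset, int count) {
            this.offset = offset;
            this.count = count;
        }
    }

    /**
     * Counts 'totalEvents' events, checkpointing every 3 events and crashing once
     * just before event 4. Returns {final count, number of events read}.
     */
    static int[] run(int totalEvents) {
        int offset = 0;
        int count = 0;
        int reads = 0;
        Checkpoint last = new Checkpoint(0, 0);
        boolean failedOnce = false;

        while (offset < totalEvents) {
            if (!failedOnce && offset == 4) {
                failedOnce = true;
                offset = last.offset; // resume from the checkpointed offset, not from 0
                count = last.count;   // state is rolled back to the same point
                continue;
            }
            reads++;
            count++;                  // "process" the event
            offset++;
            if (offset % 3 == 0) {
                last = new Checkpoint(offset, count);
            }
        }
        return new int[] {count, reads};
    }

    public static void main(String[] args) {
        int[] result = run(6);
        System.out.println("count=" + result[0] + ", reads=" + result[1]); // prints count=6, reads=7
    }
}
```

One event is read twice after the crash, yet the final count is still 6 because offset and state were restored together. A longer checkpoint interval means more events are replayed after a failure.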

7: Enable checkpointing

package com.dashu;

import com.ververica.cdc.connectors.mysql.MySqlSource;
import com.ververica.cdc.connectors.mysql.table.StartupOptions;
import com.ververica.cdc.debezium.DebeziumSourceFunction;
import com.ververica.cdc.debezium.StringDebeziumDeserializationSchema;
import org.apache.flink.streaming.api.CheckpointingMode;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

public class FlinkCDC1 {

    public static void main(String[] args) throws Exception {
        // Get the Flink execution environment
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        // Enable checkpointing every 5 seconds for this demo;
        // in production the interval is usually on the order of minutes.
        env.enableCheckpointing(5000);
        // Checkpoint timeout in milliseconds
        env.getCheckpointConfig().setCheckpointTimeout(10000);
        // Use exactly-once checkpointing mode
        env.getCheckpointConfig().setCheckpointingMode(CheckpointingMode.EXACTLY_ONCE);
        // Allow only one checkpoint in flight at a time
        env.getCheckpointConfig().setMaxConcurrentCheckpoints(1);

        // A state backend on HDFS is skipped here because HDFS is not installed.
        // env.setStateBackend(new FsStateBackend("hdfs://..."));

        // Build the SourceFunction with Flink CDC
        DebeziumSourceFunction<String> sourceFunction = MySqlSource.<String>builder()
                .hostname("192.168.67.133")
                .port(3306)
                .username("root")
                .password("root")
                // Varargs: several databases can be monitored at once
                .databaseList("cdc_test")
                // Varargs: several tables can be monitored at once.
                // Each table must be written as database.table.
                .tableList("cdc_test.user_info")
                // Deserializer; shown here for demonstration, a custom one is normally used later
                .deserializer(new StringDebeziumDeserializationSchema())
                // Startup mode: read the existing data first, then follow the binlog
                .startupOptions(StartupOptions.initial())
                .build();

        DataStreamSource<String> dataStreamSource = env.addSource(sourceFunction);

        // The stream is now available: it can be processed further and written
        // to another database or to a message queue.

        // Print the records
        dataStreamSource.print();

        // Start the job
        env.execute("FlinkCDC");
    }
}