卷积神经网络(CNN)最经典、最基础的组成方式是:

输入 → 【卷积层 + 激活函数 + 池化层】 × 多次重复 → 分类 / 回归输出

卷积层+池化层是卷积神经网络的核心骨架。

关于卷积层与池化层的原理、过程、参数说明参考文章:PyTorch_conda-CSDN博客中的卷积层与池化层部分。

池化层(也叫下采样层)的本质是对卷积层输出的特征图做 "降维压缩" ,用固定大小的窗口(如 2×2)在特征图上滑动,只保留窗口内最关键的信息(最大值 / 平均值),丢弃冗余细节。之所以在每个卷积层后面加一个池化层,主要原因如下:

- **降低特征图尺寸,解决 "计算爆炸" 问题:**卷积层的作用是提取特征,但会输出高维度的特征图(比如卷积后是 224×224×64),如果直接把这样的特征图传给下一层卷积 / 全连接层会使计算量会呈指数级增长。

- **扩大感受野,捕捉更全局的特征:**感受野是指神经网络中某个神经元能 "感知到" 的原始输入图像的区域(感受野越大,神经元能捕捉的全局特征越多)。卷积层的感受野有限(比如 3×3 卷积核的感受野只有 3×3),而池化层能不增加卷积核参数的前提下,扩大后续层的感受野。比如第一层卷积为3X3卷积核,接一层2X2池化层,第二层卷积的感受野就可以扩大到3×3×2=6×6

- 增强平移不变性,让模型更鲁棒: 平移不变性是指物体在图像中稍微移动一点位置(比如猫的脸右移 1 像素),模型依然能识别出这是猫。卷积层对像素位置很敏感 ------ 哪怕物体只移 1 像素,卷积输出的特征图像素值都会变;而池化层能 "忽略这种微小位移"。比如卷积后特征图的一个 2×2 窗口值为

[[5, 3], [2, 4]],最大池化取 5,如果物体平移 1 像素,窗口值变成[[3, 5], [4, 2]],最大池化还是取 5,核心特征(最大值)没丢,模型依然能识别。这是 CNN 能应对 "物体位置不固定" 的关键,也是相比传统图像识别算法的核心优势之一。

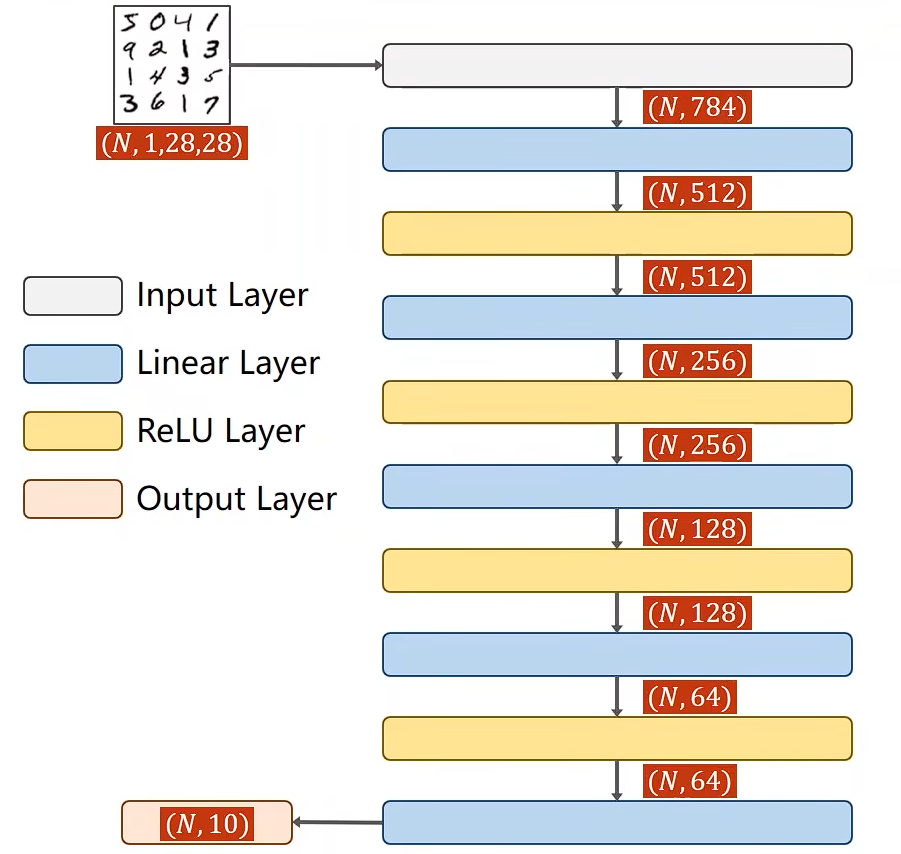

在文章PyTorch实现多分类-CSDN博客我们通过如下全连接层实现了识别MINST数据集的训练过程:

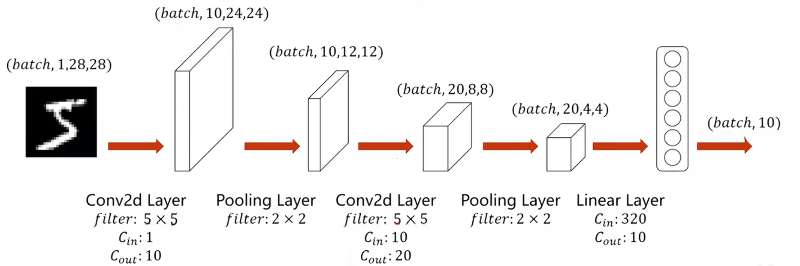

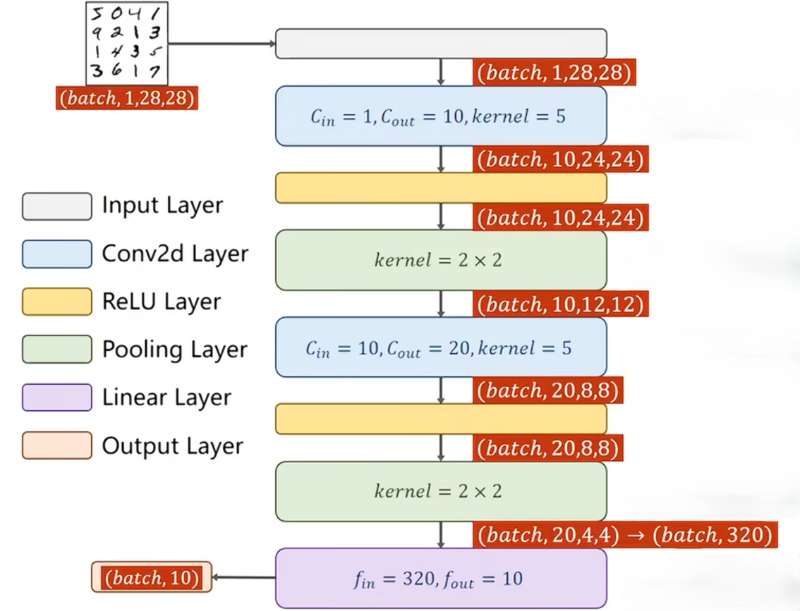

但是全连接层的每个神经元强行把全图所有像素连在一起,把相距很远的像素也强行关联。这会使图像丢失空间信息,像素的上下左右、相邻关系、边缘、形状全部消失,只剩一串无关系的数值。使用卷积神经网络可使错误率进一步降低,下面继续结合MINST数据集构建 "两个卷积层+两个池化层+一个全连接层"的神经网络结构进行多分类任务:

完整代码实例

与PyTorch实现多分类-CSDN博客唯一不同在于换了神经网络层,即*class Net(torch.nn.Module)*中的神经网络结构不同:

python

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# prepare dataset

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

train_dataset = datasets.MNIST(root='../dataset/mnist/',

train=True,

download=True,

transform=transform)

train_loader = DataLoader(train_dataset,

shuffle=True,

batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist/',

train=False,

download=True,

transform=transform)

test_loader = DataLoader(test_dataset,

shuffle=False,

batch_size=batch_size)

# design model using class

class Net(torch.nn.Module):

def __init__(self):

super().__init__()

self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)

self.pooling = torch.nn.MaxPool2d(2)

self.fc = torch.nn.Linear(320, 10)

def forward(self, x):

# flatten data from (n,1,28,28) to (n, 784)

batch_size = x.size(0)

x = F.relu(self.pooling(self.conv1(x)))

x = F.relu(self.pooling(self.conv2(x)))

x = x.view(batch_size, -1)

x = self.fc(x)

return x

model = Net()

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# training cycle forward, backward, update

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %d %% ' % (100*correct/total))

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

test()可以将训练过程放到GPU上训练:

- *device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model.to(device):*将模型迁移到GPU - *inputs, target = inputs.to(device), target.to(device):*将张量计算也迁移到GPU

python

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# prepare dataset

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

train_dataset = datasets.MNIST(root='../dataset/mnist/',

train=True,

download=True,

transform=transform)

train_loader = DataLoader(train_dataset,

shuffle=True,

batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist/',

train=False,

download=True,

transform=transform)

test_loader = DataLoader(test_dataset,

shuffle=False,

batch_size=batch_size)

# design model using class

class Net(torch.nn.Module):

def __init__(self):

super().__init__()

self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)

self.pooling = torch.nn.MaxPool2d(2)

self.fc = torch.nn.Linear(320, 10)

def forward(self, x):

# flatten data from (n,1,28,28) to (n, 784)

batch_size = x.size(0)

x = F.relu(self.pooling(self.conv1(x)))

x = F.relu(self.pooling(self.conv2(x)))

x = x.view(batch_size, -1)

# print("x.shape",x.shape)

x = self.fc(x)

return x

model = Net()

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model.to(device)

# construct loss and optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

# training cycle forward, backward, update

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

inputs, target = inputs.to(device), target.to(device)

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))

running_loss = 0.0

def test():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

images, labels = images.to(device), labels.to(device)

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print('accuracy on test set: %d %% ' % (100*correct/total))

return correct/total

if __name__ == '__main__':

for epoch in range(10):

train(epoch)

test()运行结果如下所示: